Extending the Adapted PageRank Algorithm Centrality to Multiplex Networks with Data Using the PageRank Two-Layer Approach

Abstract

1. Introduction

1.1. Literature Review

1.2. Main Contribution

1.3. Structure of the Paper

2. Methodology

2.1. The Adapted PageRank Algorithm (APA) Model

- It is nonnegative.

- It is stochastic by columns.

- The largest eigenvalue of P is 1.
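The three properties listed above can be checked numerically on a small example. The following is a minimal sketch, assuming P is obtained by dividing each column of an adjacency matrix by its column sum; the 4-node graph and the helper names `column_stochastic` and `dominant_eigenpair` are illustrative and not taken from the paper.

```python
def column_stochastic(A):
    """Divide every column of A by its sum (hypothetical helper)."""
    n = len(A)
    col_sums = [sum(A[i][j] for i in range(n)) for j in range(n)]
    if any(s == 0 for s in col_sums):
        raise ValueError("every column must have a nonzero sum")
    return [[A[i][j] / col_sums[j] for j in range(n)] for i in range(n)]


def dominant_eigenpair(P, iterations=500):
    """Power iteration: returns (eigenvalue estimate, eigenvector with unit sum)."""
    n = len(P)
    x = [1.0 / n] * n
    eigenvalue = 0.0
    for _ in range(iterations):
        y = [sum(P[i][j] * x[j] for j in range(n)) for i in range(n)]
        eigenvalue = sum(y)  # x sums to 1, so sum(y) is the growth factor
        x = [value / eigenvalue for value in y]
    return eigenvalue, x


# Illustrative graph: A[i][j] = 1 if there is a link from node j to node i.
A = [
    [0, 1, 1, 0],
    [1, 0, 0, 1],
    [1, 1, 0, 1],
    [0, 0, 1, 0],
]
n = len(A)
P = column_stochastic(A)

is_nonnegative = all(entry >= 0 for row in P for entry in row)
column_sums = [sum(P[i][j] for i in range(n)) for j in range(n)]
eigenvalue, x = dominant_eigenpair(P)
# Residual of P x = eigenvalue * x, to confirm x really is an eigenvector.
residual = max(
    abs(sum(P[i][j] * x[j] for j in range(n)) - eigenvalue * x[i])
    for i in range(n)
)
print(is_nonnegative, column_sums, eigenvalue, residual)
```

Because every column of P sums to 1, no eigenvalue can exceed 1 in modulus, and the power iteration settles on the eigenvalue 1 with a nonnegative eigenvector.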

| Algorithm 1: (Adapted PageRank algorithm (APA)). Let G be a primary graph representing a network with n nodes. |

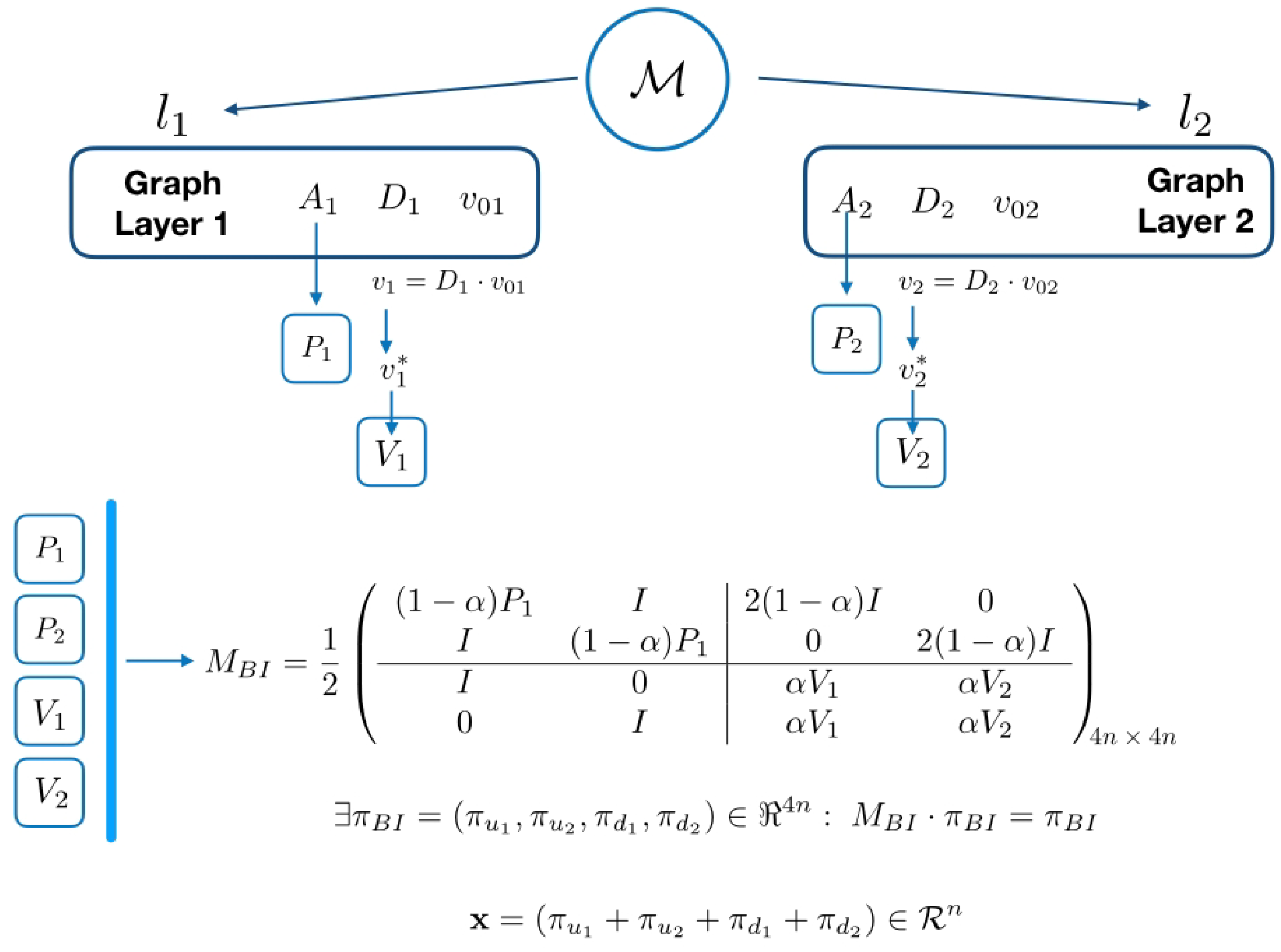

2.2. The Biplex Approach for Classic PageRank

- Physical layer: the network G itself.

- Teleportation layer: an all-to-all network whose weights are given by the personalization vector.
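One way to read the two layers above is as a random walker that follows a link of the physical layer with probability `alpha` and otherwise moves on the teleportation layer. The sketch below works under that assumption: it reproduces classic PageRank and is not claimed to be the exact block formulation of the biplex approach. The damping value 0.85, the 3-node graph, and the function names are illustrative assumptions.

```python
def normalize_columns(A):
    """Divide every column by its sum so that each column adds up to 1."""
    n = len(A)
    sums = [sum(A[i][j] for i in range(n)) for j in range(n)]
    return [[A[i][j] / sums[j] for j in range(n)] for i in range(n)]


def two_layer_pagerank(A, v, alpha=0.85, tol=1e-12, max_iter=1000):
    """Classic PageRank written as a walk on two layers (illustrative sketch)."""
    n = len(A)
    # Physical layer: the network G itself.
    physical = normalize_columns(A)
    # Teleportation layer: all-to-all network; every link into node i has weight v[i].
    teleport = normalize_columns([[v[i] for _ in range(n)] for i in range(n)])
    x = [1.0 / n] * n
    for _ in range(max_iter):
        new_x = [
            alpha * sum(physical[i][j] * x[j] for j in range(n))
            + (1.0 - alpha) * sum(teleport[i][j] * x[j] for j in range(n))
            for i in range(n)
        ]
        if sum(abs(a - b) for a, b in zip(new_x, x)) < tol:
            return new_x
        x = new_x
    return x


# Illustrative graph: A[i][j] = 1 if there is a link from node j to node i.
A = [
    [0, 0, 1],
    [1, 0, 0],
    [1, 1, 0],
]
v = [1.0 / 3, 1.0 / 3, 1.0 / 3]  # uniform personalization vector (assumption)
ranks = two_layer_pagerank(A, v)
print(ranks)
```

With a uniform personalization vector the teleportation layer treats all nodes alike; a non-uniform `v` biases the walk toward the nodes with larger weights.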

2.3. Constructing the APABI Centrality by Applying the Two-Layer Approach

| Algorithm 2: (Adapted PageRank algorithm biplex (APABI)). Consider a biplex network with n nodes, given by its two layers and their adjacency matrices. |

2.4. A Note about the Computational Cost

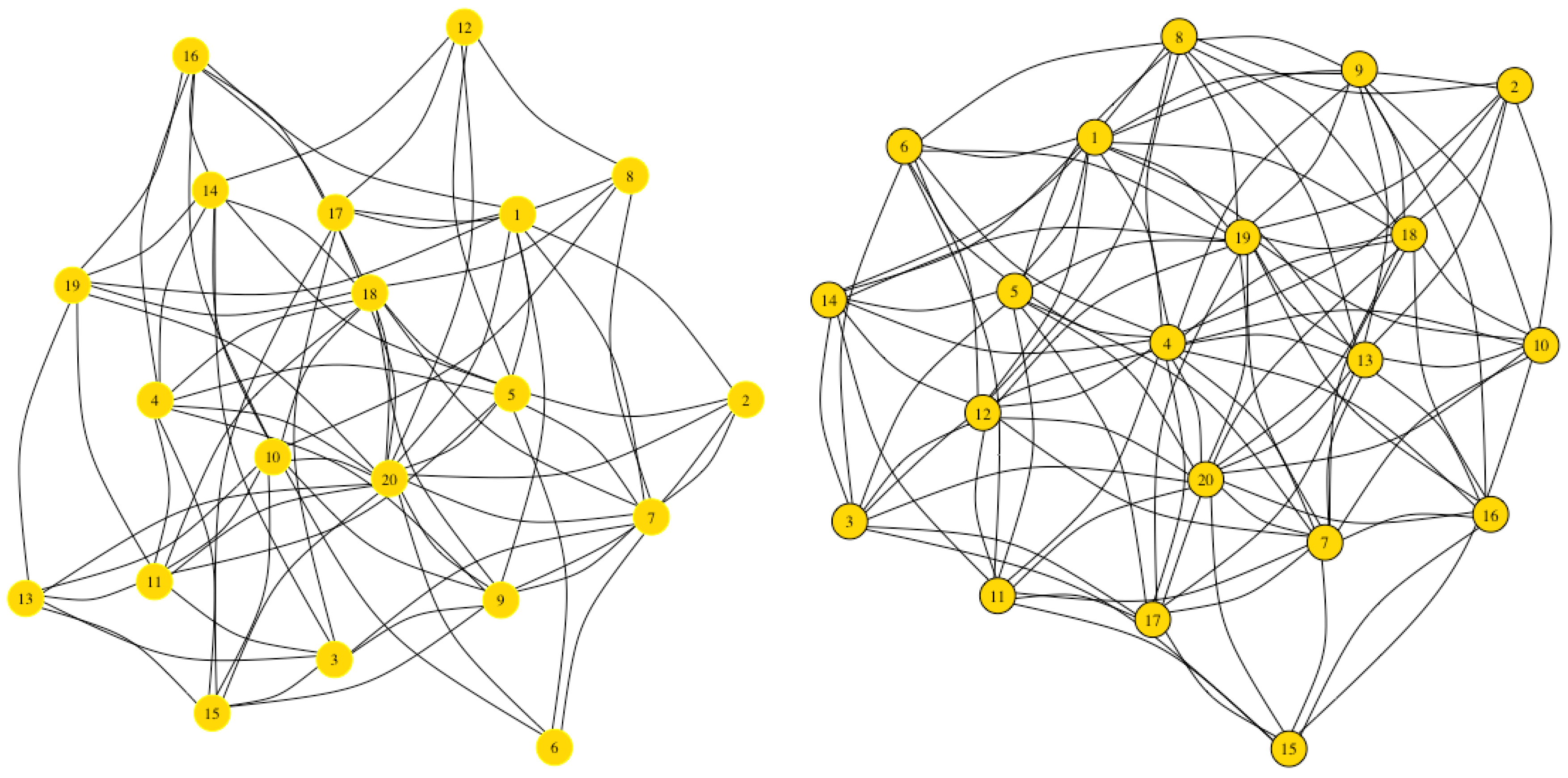

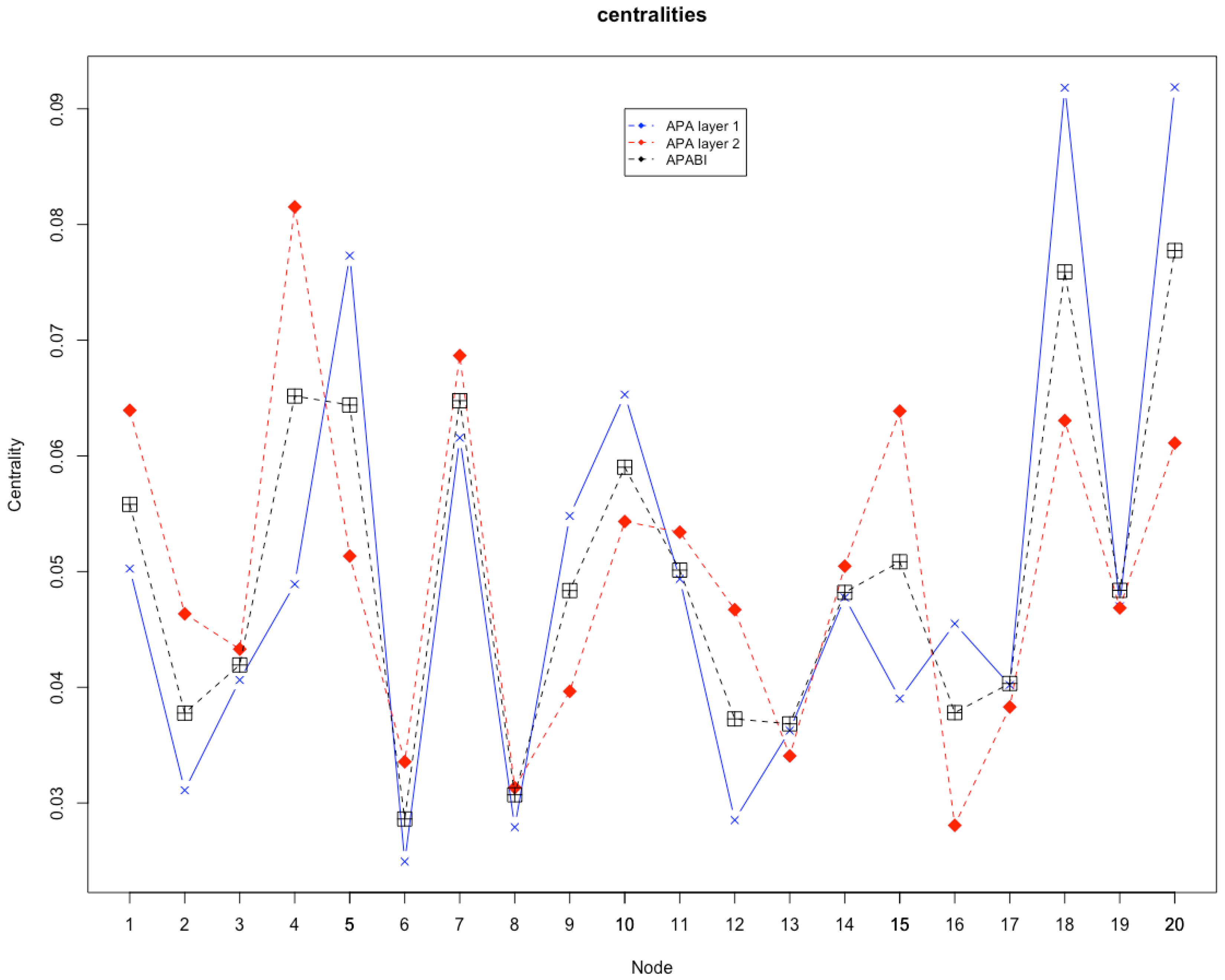

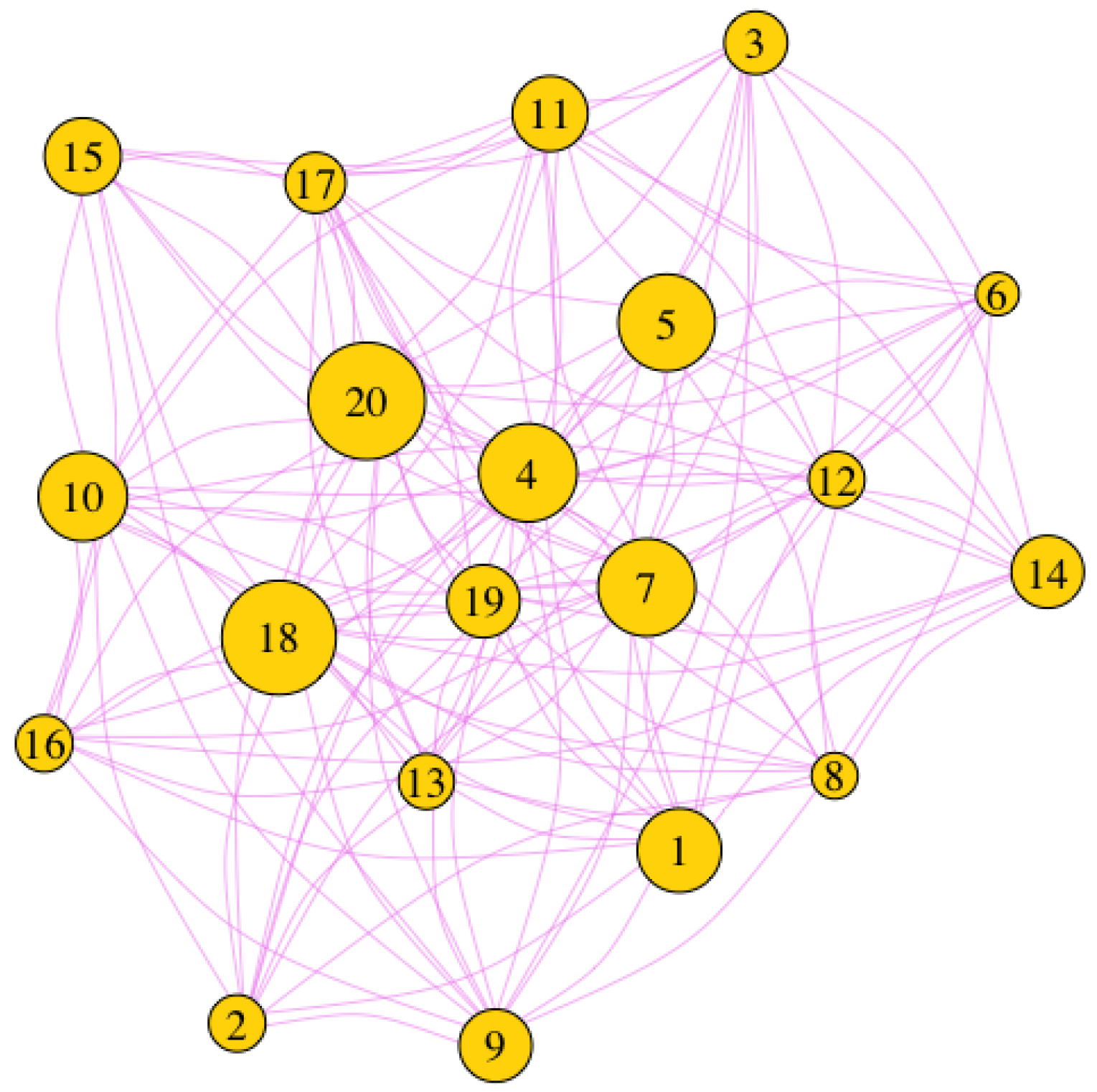

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| APA | Adapted PageRank algorithm |
| APABI | Adapted PageRank algorithm biplex |

| Node | Social Networks Links | Messages | Game Links | Games |
|---|---|---|---|---|
| 1 | 15 | 33 | | |
| 2 | 9 | 26 | | |
| 3 | 12 | 18 | | |
| 4 | 19 | 32 | | |
| 5 | 28 | 20 | | |
| 6 | 7 | 12 | | |
| 7 | 20 | 32 | | |
| 8 | 7 | 6 | | |
| 9 | 16 | 18 | | |
| 10 | 21 | 25 | | |
| 11 | 14 | 24 | | |
| 12 | 8 | 18 | | |
| 13 | 11 | 6 | | |
| 14 | 13 | 26 | | |
| 15 | 11 | 38 | | |
| 16 | 14 | 6 | | |
| 17 | 12 | 12 | | |
| 18 | 35 | 30 | | |
| 19 | 15 | 8 | | |
| 20 | 27 | 25 | | |

| Node | APA (first layer): Centrality | APA (first layer): Ranking | APA (second layer): Centrality | APA (second layer): Ranking | APABI: Centrality | APABI: Ranking |
|---|---|---|---|---|---|---|
| 1 | 0.05025 | 7 | 0.06394 | 3 | 0.05581 | 7 |
| 2 | 0.03110 | 17 | 0.04635 | 13 | 0.03777 | 16 |
| 3 | 0.04063 | 13 | 0.04330 | 14 | 0.04193 | 13 |
| 4 | 0.04891 | 9 | 0.08152 | 1 | 0.06517 | 3 |
| 5 | 0.07731 | 3 | 0.05134 | 9 | 0.06440 | 5 |
| 6 | 0.02494 | 20 | 0.03356 | 18 | 0.02862 | 20 |
| 7 | 0.06157 | 5 | 0.06867 | 2 | 0.06477 | 4 |
| 8 | 0.02791 | 19 | 0.03133 | 19 | 0.03071 | 19 |
| 9 | 0.05481 | 6 | 0.03965 | 15 | 0.04836 | 11 |
| 10 | 0.06530 | 4 | 0.05433 | 7 | 0.05902 | 6 |
| 11 | 0.04936 | 8 | 0.05341 | 8 | 0.05013 | 9 |
| 12 | 0.02852 | 18 | 0.04671 | 12 | 0.03727 | 17 |
| 13 | 0.03626 | 16 | 0.03407 | 17 | 0.03684 | 18 |
| 14 | 0.04776 | 10 | 0.05047 | 10 | 0.04820 | 12 |
| 15 | 0.03902 | 15 | 0.06387 | 4 | 0.05085 | 8 |
| 16 | 0.04550 | 12 | 0.02807 | 20 | 0.03781 | 15 |
| 17 | 0.04020 | 14 | 0.03830 | 16 | 0.04033 | 14 |
| 18 | 0.09182 | 2 | 0.06305 | 5 | 0.07590 | 2 |
| 19 | 0.04700 | 11 | 0.04686 | 11 | 0.04838 | 10 |
| 20 | 0.09186 | 1 | 0.06111 | 6 | 0.07775 | 1 |
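
The ranking columns in the table above follow from the centrality columns by sorting the nodes in decreasing order of centrality. As a small sketch of that step, the snippet below recomputes the APABI ranking from the APABI centralities copied from the table and compares it with the reported ranking; the helper name `ranking_from_scores` is hypothetical.

```python
# APABI centralities for nodes 1..20, copied from the table above.
apabi = [
    0.05581, 0.03777, 0.04193, 0.06517, 0.06440,
    0.02862, 0.06477, 0.03071, 0.04836, 0.05902,
    0.05013, 0.03727, 0.03684, 0.04820, 0.05085,
    0.03781, 0.04033, 0.07590, 0.04838, 0.07775,
]
# APABI ranking for nodes 1..20, as reported in the same table.
reported_ranking = [7, 16, 13, 3, 5, 20, 4, 19, 11, 6,
                    9, 17, 18, 12, 8, 15, 14, 2, 10, 1]


def ranking_from_scores(scores):
    """Return the rank of each node (1 = highest score); assumes no ties."""
    order = sorted(range(len(scores)), key=lambda k: scores[k], reverse=True)
    ranks = [0] * len(scores)
    for position, node in enumerate(order, start=1):
        ranks[node] = position
    return ranks


computed_ranking = ranking_from_scores(apabi)
total = sum(apabi)  # the centralities should add up to about 1
print(computed_ranking == reported_ranking, round(total, 5))
```

The same check can be repeated for the two single-layer APA columns by swapping in their values.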

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Agryzkov, T.; Curado, M.; Pedroche, F.; Tortosa, L.; Vicent, J.F. Extending the Adapted PageRank Algorithm Centrality to Multiplex Networks with Data Using the PageRank Two-Layer Approach. Symmetry 2019, 11, 284. https://doi.org/10.3390/sym11020284
