A Fast Approach for Generating Efficient Parsers on FPGAs

Abstract

:1. Introduction

- Heavy resource cost and long pipeline stages are used, such as taking more than 250 nanoseconds to process one packet and using more than 10% Flip Flops (FFs) and Look Up Tables (LUTs) in the FPGAs.

- All the packets, including the payload, go through the parser, which wastes a lot of cycles to transport the payloads and reduces the packet parsing performance.

- Parsers are not thoroughly pipelined, and multiple cycles are used to process a packet in one pipeline stage, which stalls the pipeline and reduces the performance.

- We design a hardware architecture in a pipeline fashion for the parser. Templates for the components in the hardware architecture are created and thoroughly tested.

- We employ an approach to map the P4 programs to the proposed hardware architecture, in which the network programmers of nonhardware knowledge focus on the high-level software.

- We demonstrate a fast compiler to implement the P4 program to FPGA targets.

2. Background and Related Work

2.1. Introduction to FPGAs

2.2. P4 Language

- Protocol Headers: Each of these structures defines the header of a specific protocol, and includes the fields’ names and lengths, as well as the order among all fields.

- Parser: This is a state machine that describes the transition among all supported protocol headers, which indicates the method of parsing one protocol header after another. For example, an IPv4 header can be confirmed and located by extracting the next type from the Ethernet header.

- Table: The first part of the “Match-action” implementation. It stores the mechanism for performing packet processing and defines how the extracted header fields are used for matching, including exact match, longest prefix match, or ternary match. For example, a destination IP address is used to find out which port the packet should be sent to.

- Action: The other part of “Match-action” implementation is built based on a series of predefined simple basic operations that are independent of the protocols. Complex customized actions are built with them and can be used in this part as well.

- Control flow: It is a simple imperative program that describes the flow of control, and it controls the process order within the “Match-action” architecture.

2.3. Packet Parser Solutions

3. Hardware Architecture of the Parser

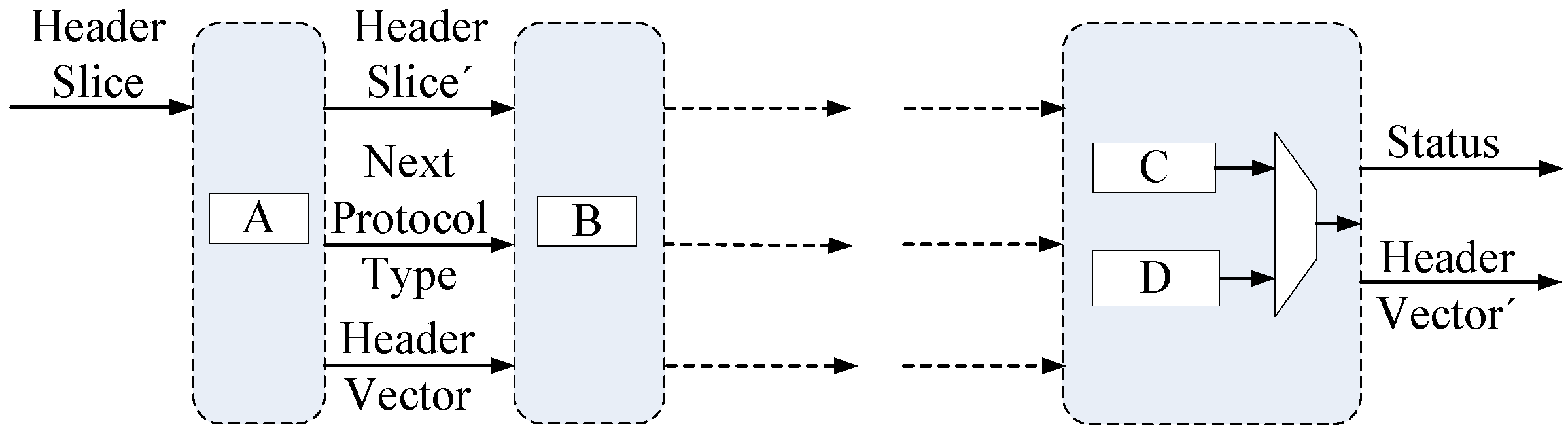

3.1. Microarchitecture

3.2. Main Input and Output

3.2.1. Header Slice

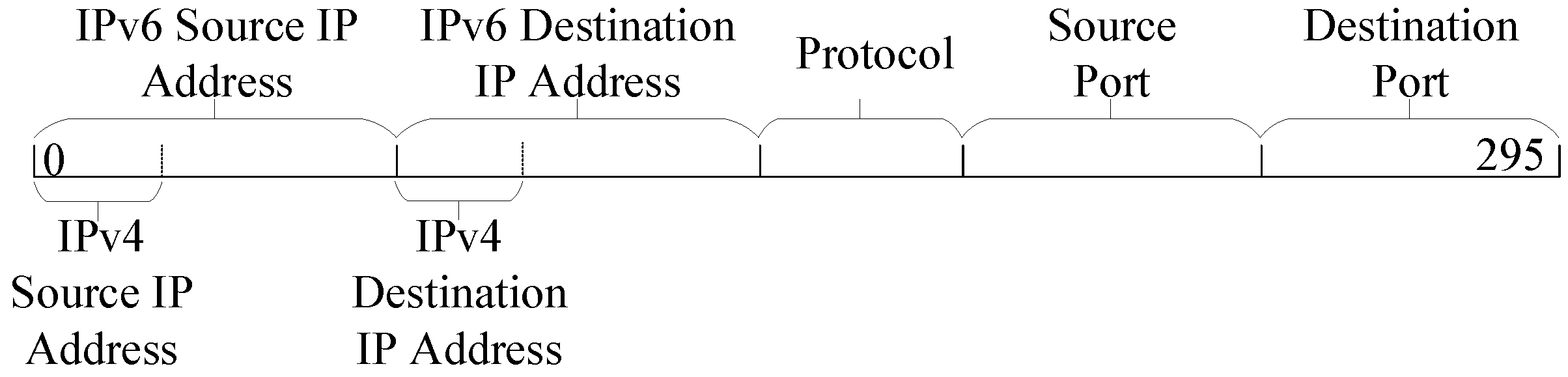

3.2.2. Header Vector

3.3. Processing Module Scheduling

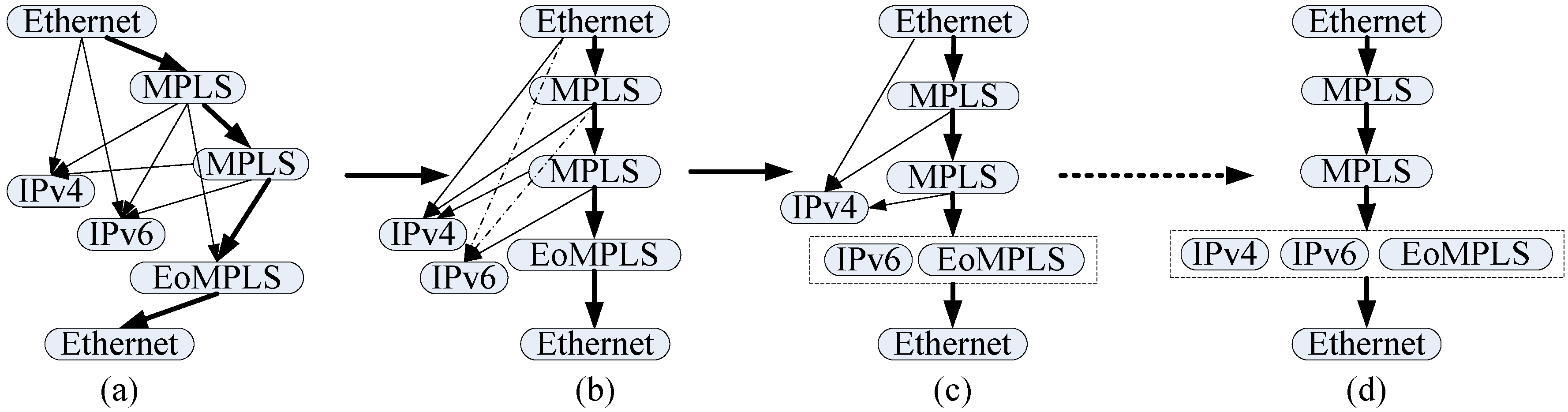

3.3.1. Potential Conflicts

3.3.2. Pipeline Scheduling

- Find one of the longest paths in the parse graph. There is only one longest path among these five nodes, namely, “Ethernet → MPLS → MPLS → EoMPLS → Ethernet”, and it is marked by the thick arrow in Figure 3a.

- Choose any one of the rest of the nontrunk nodes and identify all of its parents in the trunk. Then, reserve the dependency relationship with the last node in the trunk and delete the other dependencies. For example, if “IPv6” is selected, the reserved dependency should be that between “IPv6” and the second “MPLS” in the trunk, as shown in Figure 3b. The dependencies marked by dotted arrows will be deleted. This means that the packet cannot be directly transferred from the “Ethernet” stage and the first “MPLS” stage to the “IPv6” stage.

- Merge this chosen node with its brother in the trunk to form a new node, and further update the dependencies of its children. This process is shown in Figure 3c. After doing this, the packet can only flow in the trunk. We assume that there is a packet header which has only two protocol headers “Ethernet → IPv6” inputted, “Ethernet” will be processed at the first cycle; but the “IPv6” header will be processed in the fourth cycle.

- Go through the rest of non-trunk nodes one by one and add them to the trunk by repeating steps 2 and 3. The schedule is deemed completed when all nodes are in the trunk. Figure 3d shows the final result.

4. Hardware Architecture of the Processing Module

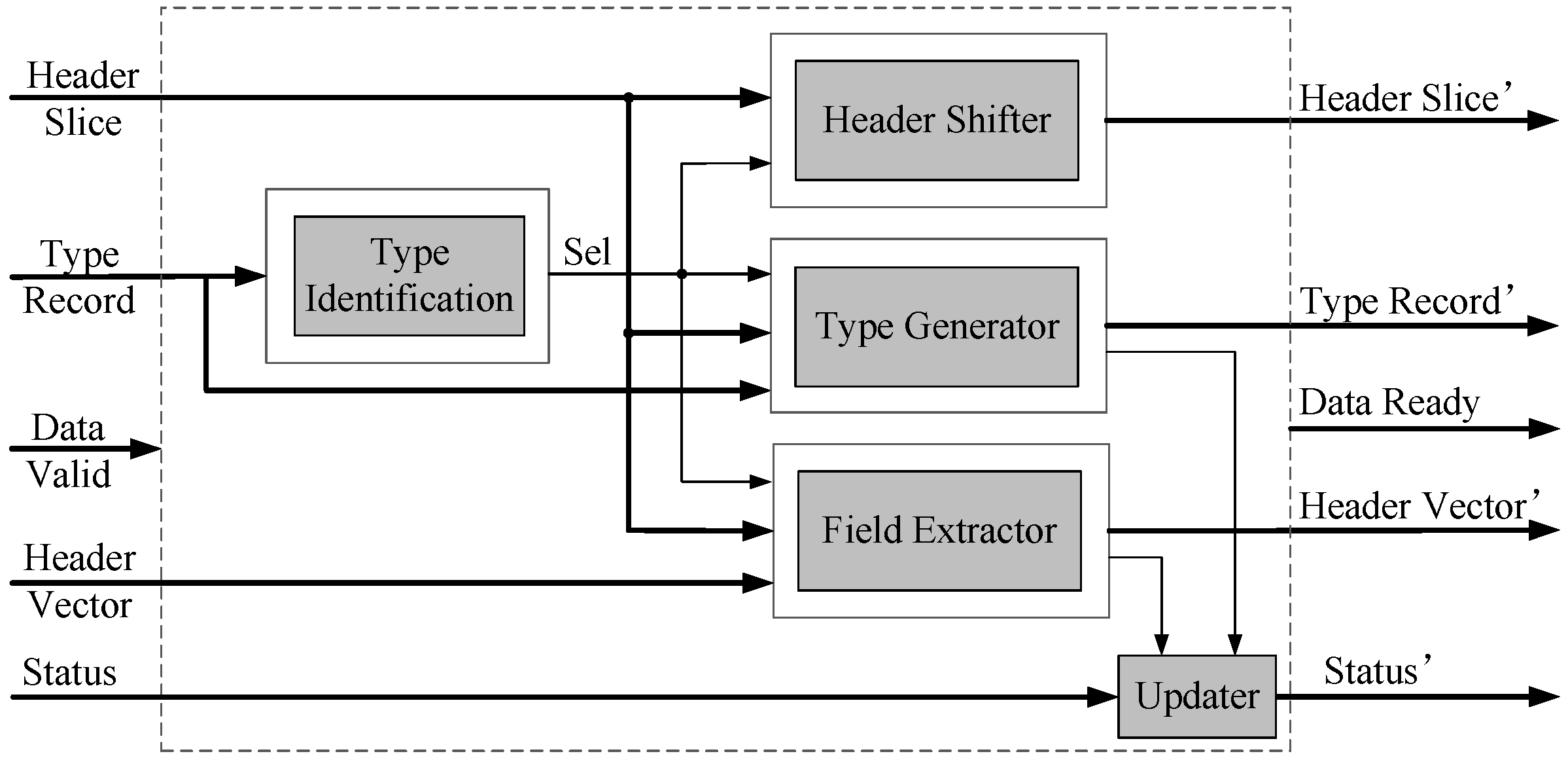

4.1. Microarchitecture

4.2. Function of Type Identification

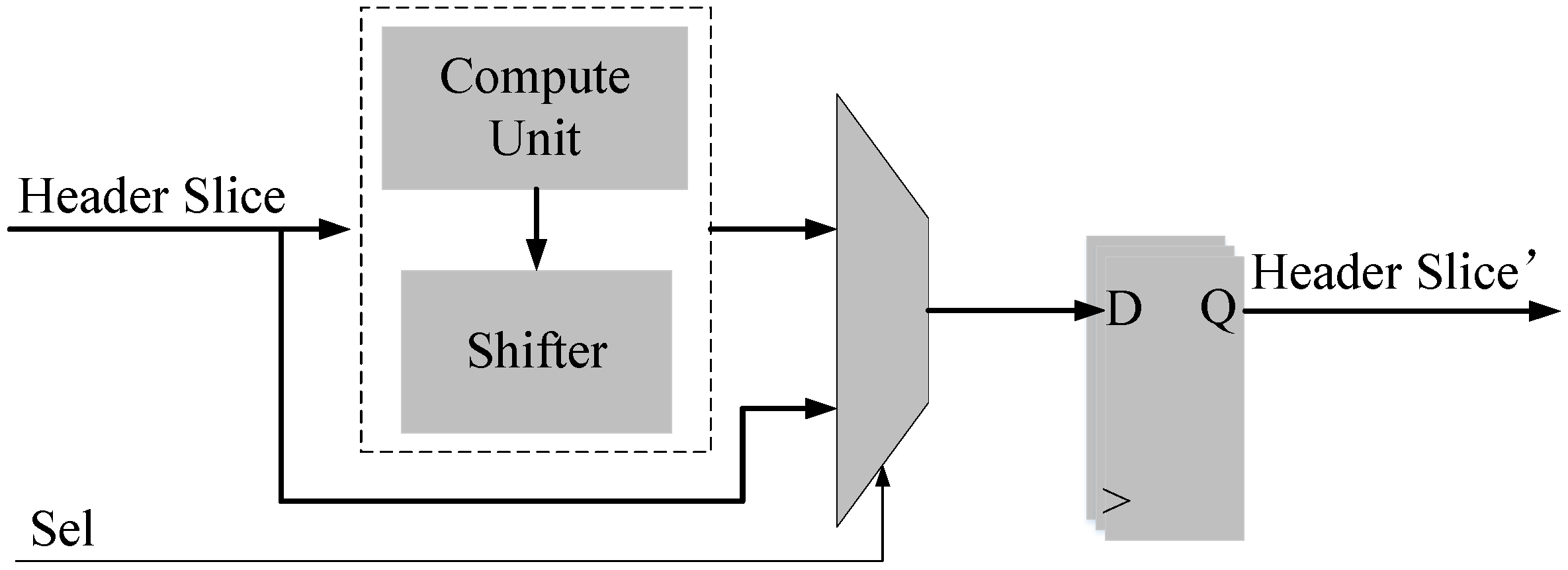

4.3. Function of Header Shifter

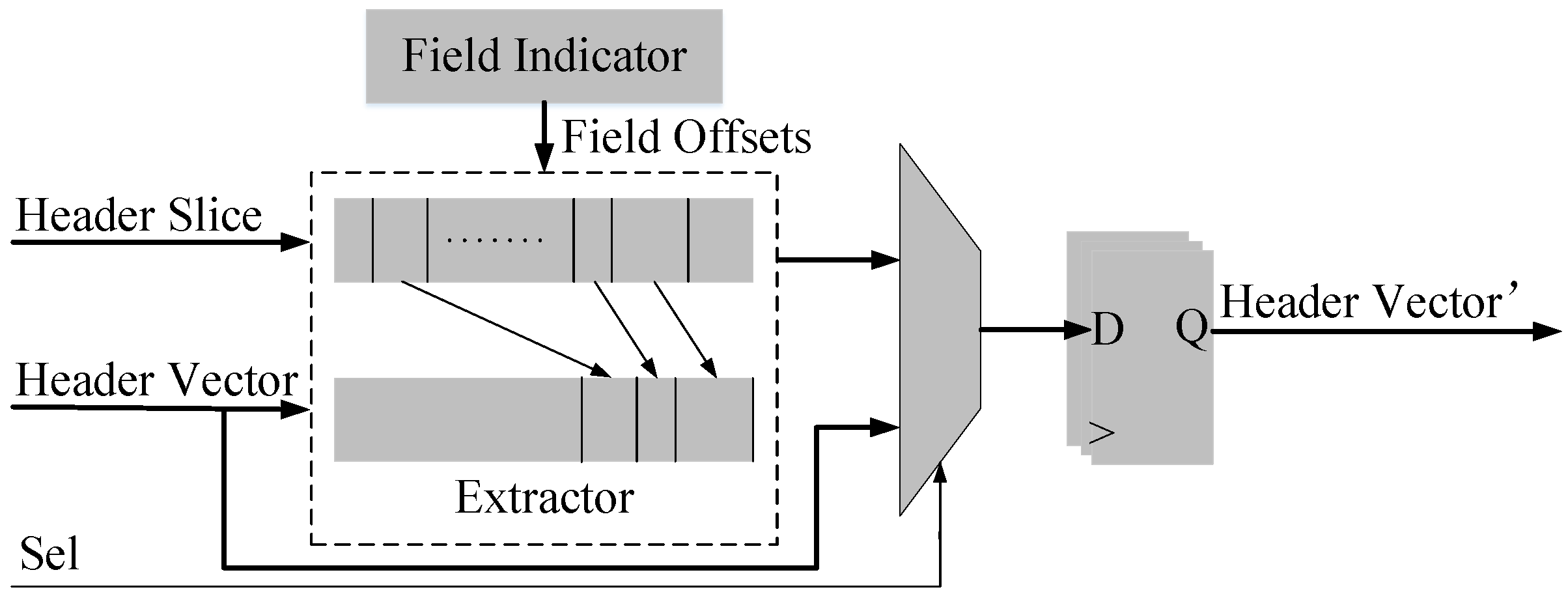

4.4. Function of Field Extraction

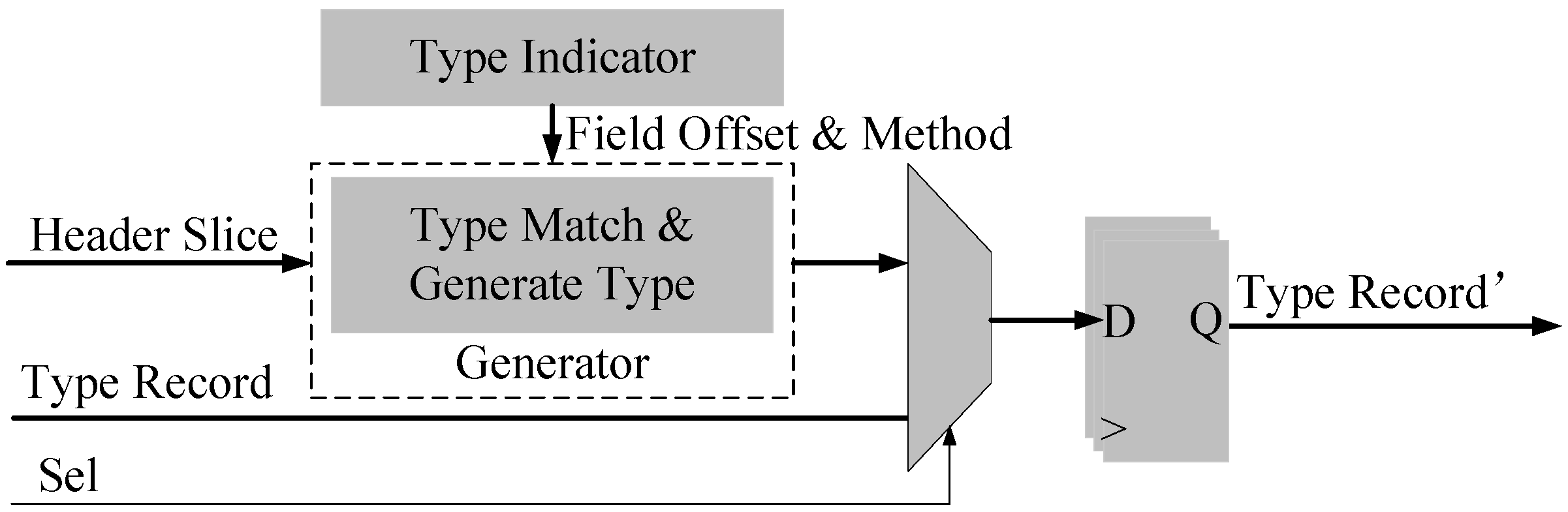

4.5. Function of Type Generation

4.6. Other Accessorial Circuits

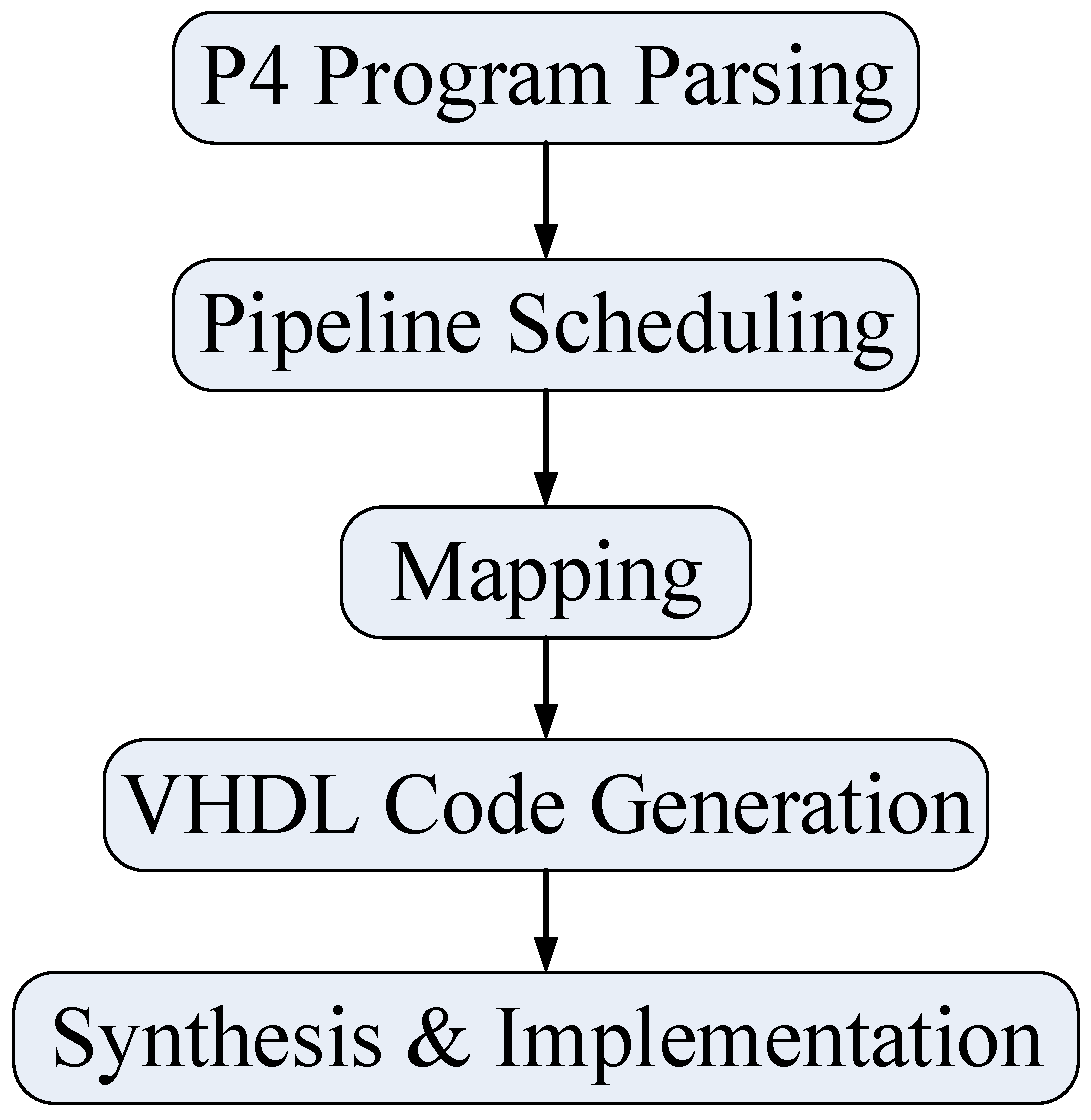

5. Compilation

5.1. Template Design

5.2. Compiling Process

6. Evaluations

6.1. Dynamic Shifter Evaluation

6.2. Parser Performance Evaluations

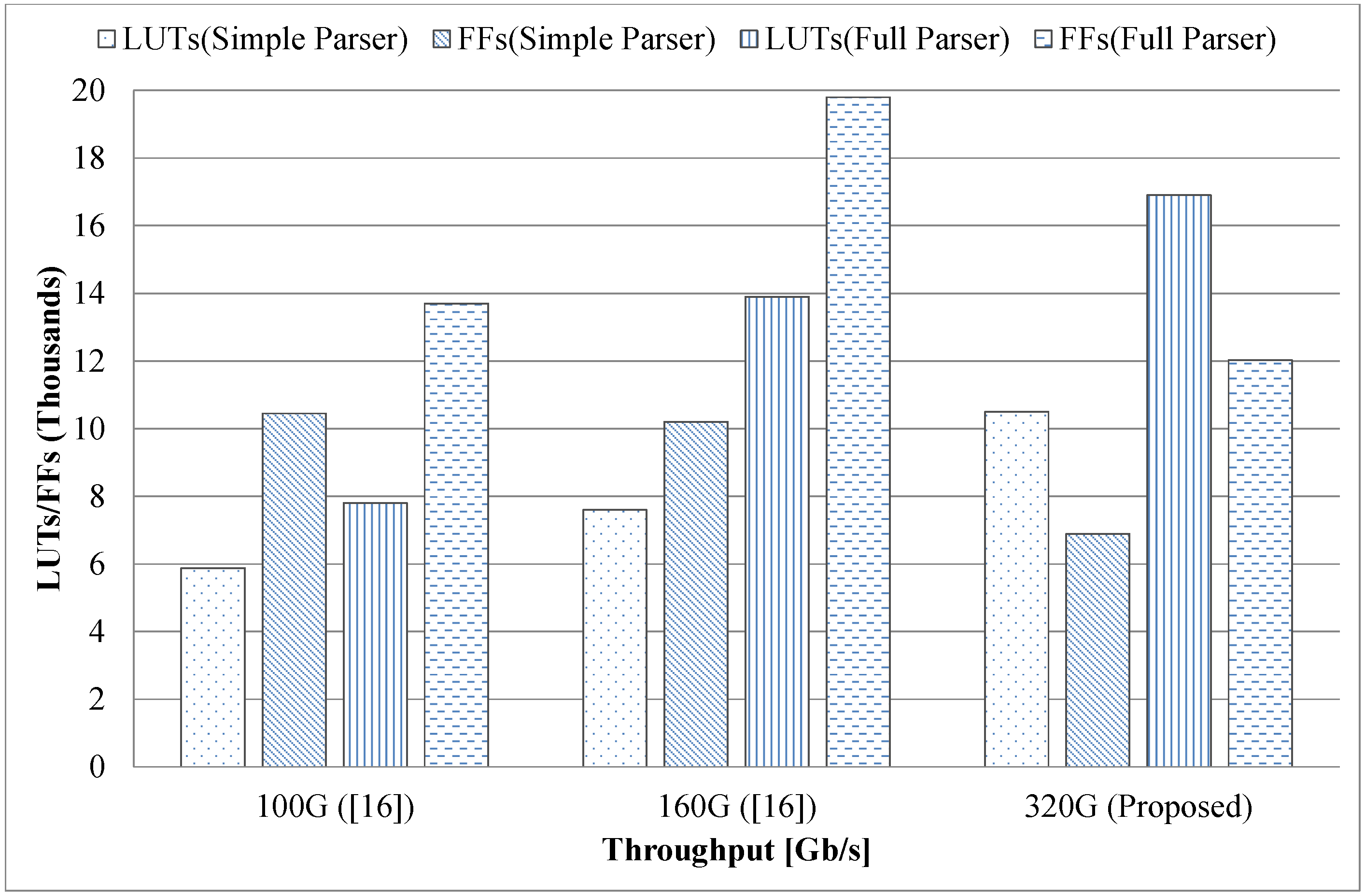

- Simple parser: Ethernet, IPv4/IPv6 (with 2 extensions), UDP, TCP, and ICMP/ICMPv6;

- Full parser: Same as the simple parser but also includes MPLS (with two nested headers) and VLAN (inner and outer).

7. Conclusions

- A hardware structure is configured to different parsers of varying performances.

- The pipeline of the parser is scheduled by the compiler to avoid conflicts and stalling—the fully pipelined parser processes one packet per cycle.

- Prebuilt function templates are used to generate the final VHDL code. The templates are all well-designed and have been thoroughly tested.

Author Contributions

Funding

Conflicts of Interest

References

- Switches for Every Network. Available online: https://www.cisco.com/c/en/us/products/switches/index.html (accessed on 15 December 2018).

- Network Switches. Available online: https://e.huawei.com/en/products/enterprise-networking/switches (accessed on 15 December 2018).

- Morgan, J. Intel® IXP2XXX Network Processor Architecture Overview. 2004. Available online: https://www.slideserve.com/bikita/intel-ixp2xxx-network-processor-architecture-overview (accessed on 5 May 2019).

- Networks, B. Tofino: World’s Fastest P4-Programmable Ethernet Switch ASICs. Available online: https://barefootnetworks.com/products/brief-tofino/ (accessed on 2 December 2018).

- Kohler, E.; Morris, R.; Chen, B.; Jannotti, J.; Kaashoek, M.F. The Click Modular Router. ACM Trans. Comput. Syst. 2000, 18, 263–297. [Google Scholar] [CrossRef]

- Lovato, J. Data Plane Development Kit (DPDK) Further Accelerates Packet Processing Workloads, Issues Most Robust Platform Release to Date. Available online: https://www.dpdk.org/announcements/2018/06/21/data-plane-development-kit-dpdk-further-accelerates-packet-processing-workloads-issues-most-robust-platform-release-to-date/ (accessed on 10 December 2018).

- Benzekki, K.; El Fergougui, A.; Elbelrhiti Elalaoui, A. Software-defined networking (SDN): A survey. Secur. Commun. Netw. 2016, 9, 5803–5833. [Google Scholar] [CrossRef]

- McKeown, N.; Anderson, T.; Balakrishnan, H.; Parulkar, G.; Peterson, L.; Rexford, J.; Shenker, S.; Turner, J. OpenFlow: Enabling Innovation in Campus Networks. SIGCOMM Comput. Commun. Rev. 2008, 38, 69–74. [Google Scholar] [CrossRef]

- Kreutz, D.; Ramos, F.M.; Verissimo, P. Towards Secure and Dependable Software-defined Networks. In Proceedings of the Second ACM SIGCOMM Workshop on Hot Topics in Software Defined Networking, Hong Kong, China, 16 August 2013; pp. 55–60. [Google Scholar] [CrossRef]

- Wan, T.; Abdou, A.; van Oorschot, P.C. A Framework and Comparative Analysis of Control Plane Security of SDN and Conventional Networks. arXiv 2017, arXiv:1703.06992. [Google Scholar]

- Bosshart, P.; Daly, D.; Gibb, G.; Izzard, M.; McKeown, N.; Rexford, J.; Schlesinger, C.; Talayco, D.; Vahdat, A.; Varghese, G.; et al. P4: Programming Protocol-independent Packet Processors. SIGCOMM Comput. Commun. Rev. 2014, 44, 87–95. [Google Scholar] [CrossRef]

- Li, B.; Tan, K.; Luo, L.L.; Peng, Y.; Luo, R.; Xu, N.; Xiong, Y.; Cheng, P.; Chen, E. ClickNP: Highly Flexible and High Performance Network Processing with Reconfigurable Hardware. In Proceedings of the 2016 ACM SIGCOMM Conference, Florianopolis, Brazil, 22–26 August 2016; pp. 1–14. [Google Scholar] [CrossRef]

- P4 Language. Available online: https://github.com/p4lang/ (accessed on 5 October 2018).

- Singh, S.; Greaves, D.J. Kiwi: Synthesis of FPGA Circuits from Parallel Programs. In Proceedings of the 2008 16th International Symposium on Field-Programmable Custom Computing Machines, Palo Alto, CA, USA, 14–15 April 2008; pp. 3–12. [Google Scholar] [CrossRef]

- Benácek, P.; Pu, V.; Kubátová, H. P4-to-VHDL: Automatic Generation of 100 Gbps Packet Parsers. In Proceedings of the 2016 IEEE 24th Annual International Symposium on Field-Programmable Custom Computing Machines (FCCM), Washington, DC, USA, 1–3 May 2016; pp. 148–155. [Google Scholar] [CrossRef]

- Santiago da Silva, J.; Boyer, F.R.; Langlois, J.P. P4-Compatible High-Level Synthesis of Low Latency 100 Gb/s Streaming Packet Parsers in FPGAs. In Proceedings of the 2018 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays, Monterey, CA, USA, 25–27 February 2018; pp. 147–152. [Google Scholar] [CrossRef]

- Attig, M.; Brebner, G. 400 Gb/s Programmable Packet Parsing on a Single FPGA. In Proceedings of the 2011 ACM/IEEE Seventh Symposium on Architectures for Networking and Communications Systems, Brooklyn, NY, USA, 3–4 October 2011; pp. 12–23. [Google Scholar] [CrossRef]

- Gibb, G.; Varghese, G.; Horowitz, M.; McKeown, N. Design Principles for Packet Parsers. In Proceedings of the Architectures for Networking and Communications Systems, San Jose, CA, USA, 21–22 October 2013; pp. 13–24. [Google Scholar] [CrossRef]

- Bosshart, P.; Gibb, G.; Kim, H.S.; Varghese, G.; McKeown, N.; Izzard, M.; Mujica, F.; Horowitz, M. Forwarding Metamorphosis: Fast Programmable Match-action Processing in Hardware for SDN. SIGCOMM Comput. Commun. Rev. 2013, 43, 99–110. [Google Scholar] [CrossRef]

- Pus, V.; Kekely, L.; Korenek, J. Low-latency Modular Packet Header Parser for FPGA. In Proceedings of the Eighth ACM/IEEE Symposium on Architectures for Networking and Communications Systems, Austin, TX, USA, 29–30 October 2012; pp. 77–78. [Google Scholar] [CrossRef]

- Cabal, J.; Benáček, P.; Kekely, L.; Kekely, M.; Puš, V.; Kořenek, J. Configurable FPGA packet parser for terabit networks with guaranteed wire-speed throughput. In Proceedings of the 2018 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays, Monterey, CA, USA, 25–27 February 2018; pp. 249–258. [Google Scholar] [CrossRef]

- Yazdinejad, A.; Bohlooli, A.; Jamshidi, K. P4 to SDNet: Automatic Generation of an Efficient Protocol-Independent Packet Parser on Reconfigurable Hardware. In Proceedings of the 8th International Conference on Computer and Knowledge Engineering (ICCKE 2018), Mashhad, Iran, 25–26 October 2018; pp. 159–164. [Google Scholar] [CrossRef]

| Packet Header | Clock Number | |||

|---|---|---|---|---|

| 1 | 2 | 3 | 4 | |

| Ethernet→MPLS→IPv4 | Ethernet | MPLS | IPv4 | |

| Ethernet→IPv4 | Ethernet | Stall | IPv4 | |

| Ethernet→ | Stall | Ethernet | ||

| Type | Generics | Description |

|---|---|---|

| Port | Header width, header vector width, protocol length | Decide the ports’ width, as well as the primitives’ width—selector, comparator, and so on. |

| Functionality | Addresses, extract indicators | Decide what will be done to the header slice |

| Control | Protocol code, module code, optional module position | Related to the supported protocols |

| Type | LUTs | FFs | Clock Rate (MHz) |

|---|---|---|---|

| Fixed | 0 | 545 | 714.3 |

| Proposed | 1639 | 865 | 533.6 |

| Fully Dynamic | 5068 | 1025 | 306.6 |

| Parameters | Extract Type | Simple Parser | Full Parser | Notes |

|---|---|---|---|---|

| Pipeline Stage Number | 5 Tuple & All Fields | 5 | 7 | After the pipeline scheduling, it indicates how many cycles for parsing a packet |

| Header Slice Length | 5 Tuple & All Fields | 1072 | 1136 | Calculated by the supported longest packet header |

| Header Vector Length | 5 Tuple | 296 | 296 | Calculated by the lengths of all extracted fields |

| All Fields | 1072 | 1136 | ||

| Error Types | 5 Tuple & All Fields | Unrecognized, IPv4 Valid, TCP Valid | ||

| Individual Modules | 5 Tuple & All Fields | Such as protocol header length, type code, field indicators, positions, and so on, they vary due to different protocols. | ||

| Work | Performance | Resources | Extracted Fields | |||||

|---|---|---|---|---|---|---|---|---|

| Data Bus [bits] | Frequency | Throughput | Latency | LUTs | FFs | Slice Logic (LUTs + FFs) | ||

| Simple Parser | ||||||||

| Golden [15] | 512 | 195.3 | 100 | 15 | N/A | N/A | 5000 | TCP/IP 5-tuple |

| [15] | 512 | 195.3 | 100 | 29 | N/A | N/A | 12,000 | TCP/IP 5-tuple |

| [16] | 320 | 312.5 | 100 | 19.2 | 4270 | 6163 | 10,433 | TCP/IP 5-tuple |

| [16] | 320 | 312.5 | 100 | 19.2 | 5888 | 10,448 | 16,336 | All fields |

| Proposed | 1072 | 346 | 370 | 14.45 | 4884 | 3135 | 8019 | TCP/IP 5-tuple |

| Proposed | 1072 | 334.4 | 358 | 14.55 | 10,495 | 6886 | 17,381 | All fields |

| Full Parser | ||||||||

| Golden [15] | 512 | 195.3 | 100 | 27 | N/A | N/A | 8000 | TCP/IP 5-tuple |

| [15] | 512 | 195.3 | 100 | 46.1 | 10,103 | 5537 | 15,640 | TCP/IP 5-tuple |

| [16] | 320 | 312.5 | 100 | 25.6 | 6046 | 8900 | 14,946 | TCP/IP 5-tuple |

| [16] | 320 | 312.5 | 100 | 25.6 | 7831 | 13,671 | 21,502 | All fields |

| Proposed | 1136 | 320.5 | 364 | 21.84 | 9515 | 6930 | 16,445 | TCP/IP 5-tuple |

| Proposed | 1136 | 279.3 | 317 | 25.06 | 16,888 | 12,033 | 28,921 | All fields |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Cao, Z.; Zhang, H.; Li, J.; Wen, M.; Zhang, C. A Fast Approach for Generating Efficient Parsers on FPGAs. Symmetry 2019, 11, 1265. https://doi.org/10.3390/sym11101265

Cao Z, Zhang H, Li J, Wen M, Zhang C. A Fast Approach for Generating Efficient Parsers on FPGAs. Symmetry. 2019; 11(10):1265. https://doi.org/10.3390/sym11101265

Chicago/Turabian StyleCao, Zhuang, Huiguo Zhang, Junnan Li, Mei Wen, and Chunyuan Zhang. 2019. "A Fast Approach for Generating Efficient Parsers on FPGAs" Symmetry 11, no. 10: 1265. https://doi.org/10.3390/sym11101265

APA StyleCao, Z., Zhang, H., Li, J., Wen, M., & Zhang, C. (2019). A Fast Approach for Generating Efficient Parsers on FPGAs. Symmetry, 11(10), 1265. https://doi.org/10.3390/sym11101265