1. Introduction

Implicit curves have many applications because of their favorable properties. Rendering implicit curves and surfaces is therefore an important topic in computer graphics [1], and it usually relies on one of four techniques: (1) representation conversion; (2) curve tracking; (3) space subdivision; and (4) symbolic computation. By replacing the Euclidean distance test with approximate distance tests, a practical rendering algorithm for rasterizing algebraic curves is proposed in [2]. Based on the idea that field functions can be combined through both their values and their gradients, a set of binary composition operators is developed in [3] to tackle four major problems in constructive modeling. As a powerful tool for implicit shape modeling, a new type of bivariate spline function is applied in [4]; it can be created, with any required degree of smoothness, from any given set of 2D polygons that divides the 2D plane. Furthermore, the spline basis functions created by the proposed procedure are piecewise polynomials and are explicit in analytical form.

Beyond rendering, implicit curves also play an important role in other areas of computer graphics. To facilitate applications, it is important to compute the intersection of parametric and algebraic curves. Elimination theory and a matrix determinant expression of the resultant of the intersection equations are used in [5]. Some researchers transform the intersection problem into that of computing the eigenvalues and eigenvectors of a numeric matrix. In a similar spirit, combining marching methods with the algebraic formulation yields an efficient algorithm to compute the intersection of algebraic and NURBS surfaces in [6]. For a degenerate intersection of two quadric surfaces, which arises frequently in geometric and solid modeling, a simple method is proposed in [7] to determine the conic types without actually computing the intersection and to enumerate all possible conic types. M. Aizenshtein et al. [8] present a solver that robustly solves well-constrained transcendental systems and that applies to curve-curve and curve-surface intersections, ray-trap and geometric constraint problems.

To improve implicit modeling, many techniques have been developed to compute the distance between a point and an implicit curve or surface. To compute the bounded Hausdorff distance between two real space algebraic curves, a theoretical result in [9] reduces the bound of the Hausdorff distance of algebraic curves from the spatial to the planar case. Ron [10] discusses and analyzes formulas for the curvature of implicit planar curves, the curvature and torsion of implicit space curves, and the mean and Gaussian curvature of implicit surfaces, as well as curvature formulas in higher dimensions. Using parametric approximation of an implicit curve or surface, Thomas et al. [11] introduce a relatively small number of low-degree curve segments or surface patches to approximate an implicit curve or surface accurately, and further construct monoid curves and surfaces after eliminating the undesirable singularities and branches normally associated with implicit representation. Slightly differently from [11], Eva et al. [12] use the support function representation to identify and approximate monotonous segments of algebraic curves. Anderson et al. [13] present an efficient and robust algorithm to compute the foot points for planar implicit curves.

Contribution: An integrated hybrid second order algorithm is presented for orthogonal projection onto planar implicit curves. The algorithm can converge for any test point p, for planar implicit curves of any order with or without singular points, and for any distance between the test point and the planar implicit curve. It consists of two parts: the hybrid second order algorithm and the initial iterative value estimation algorithm.

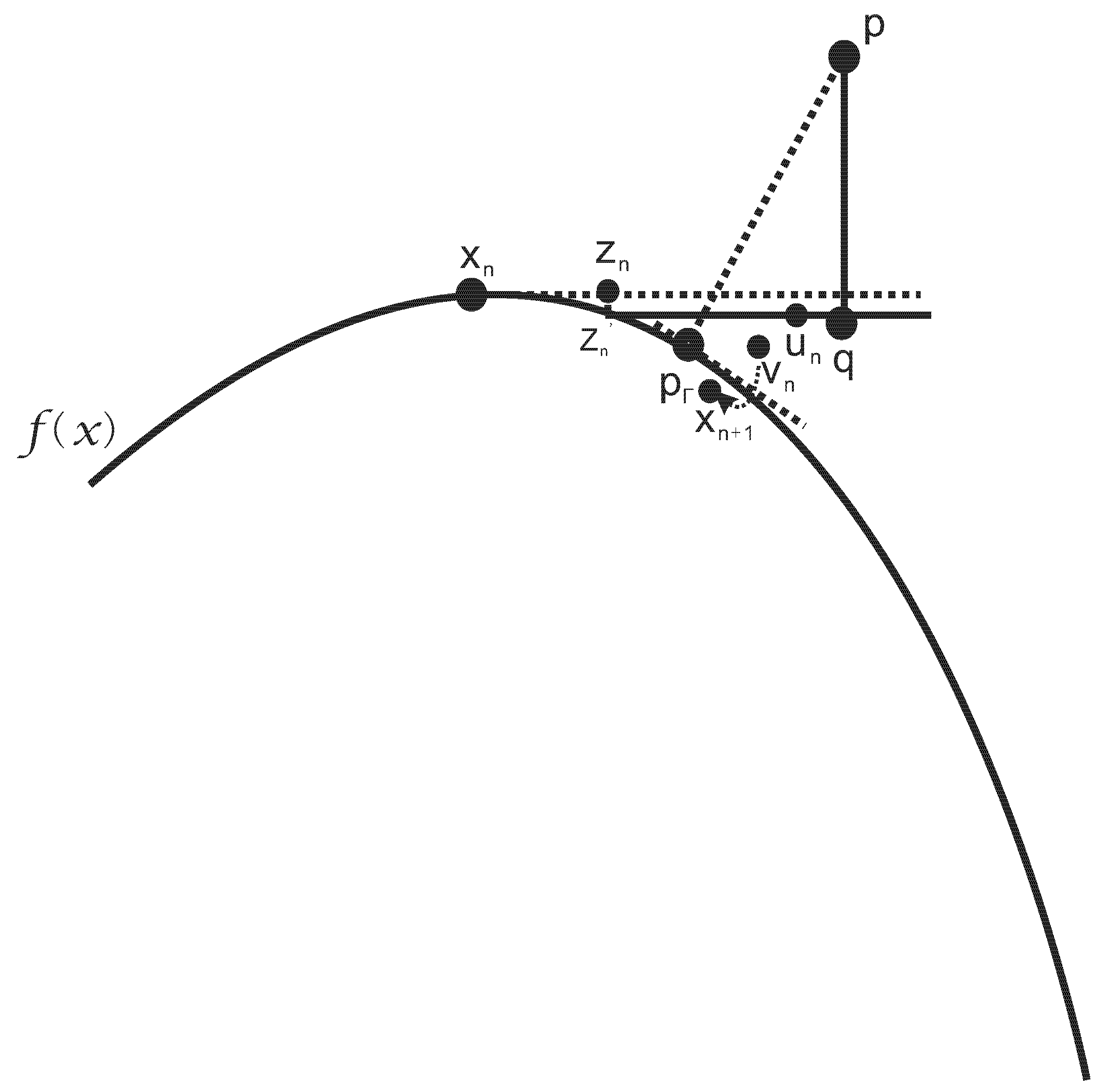

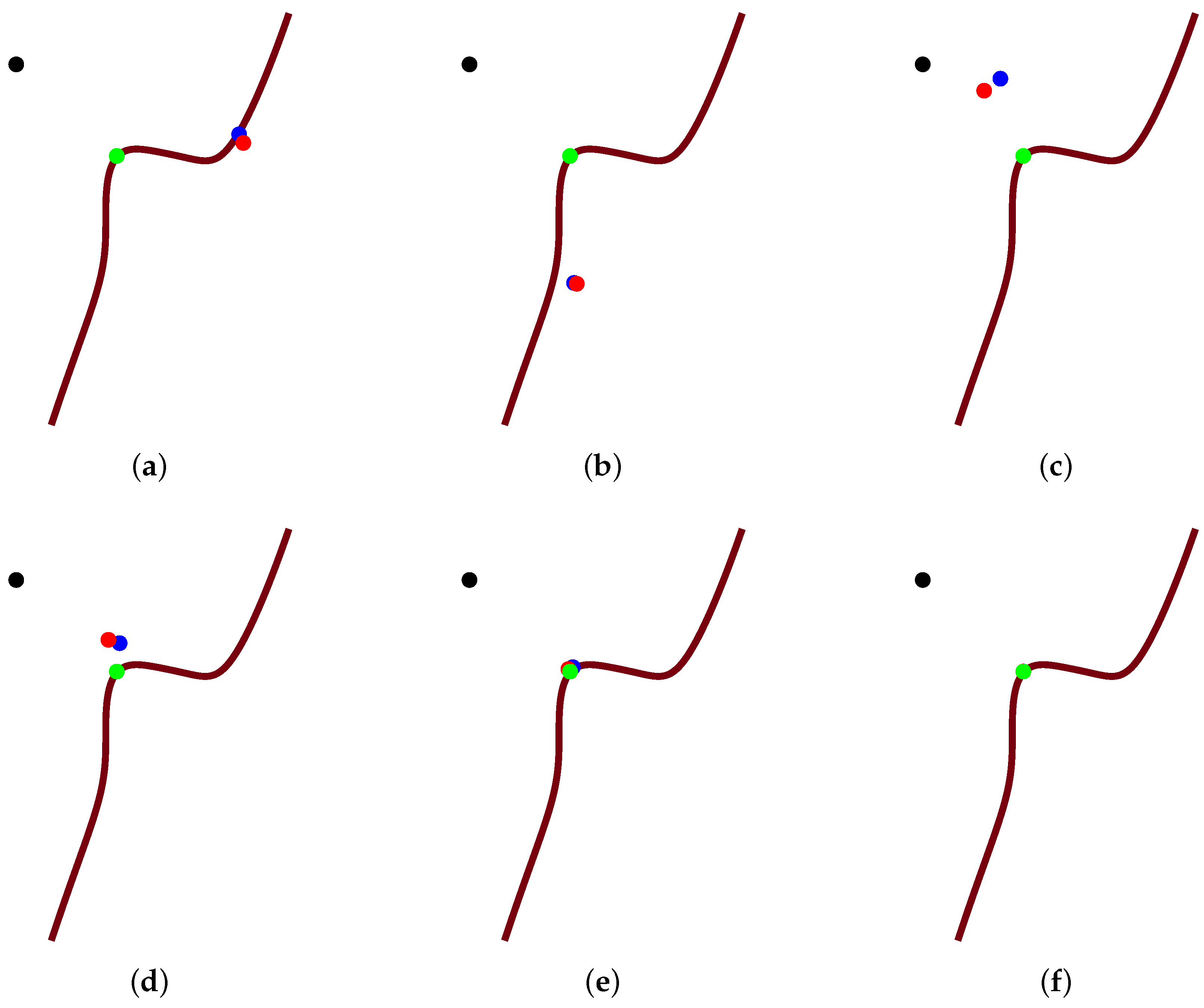

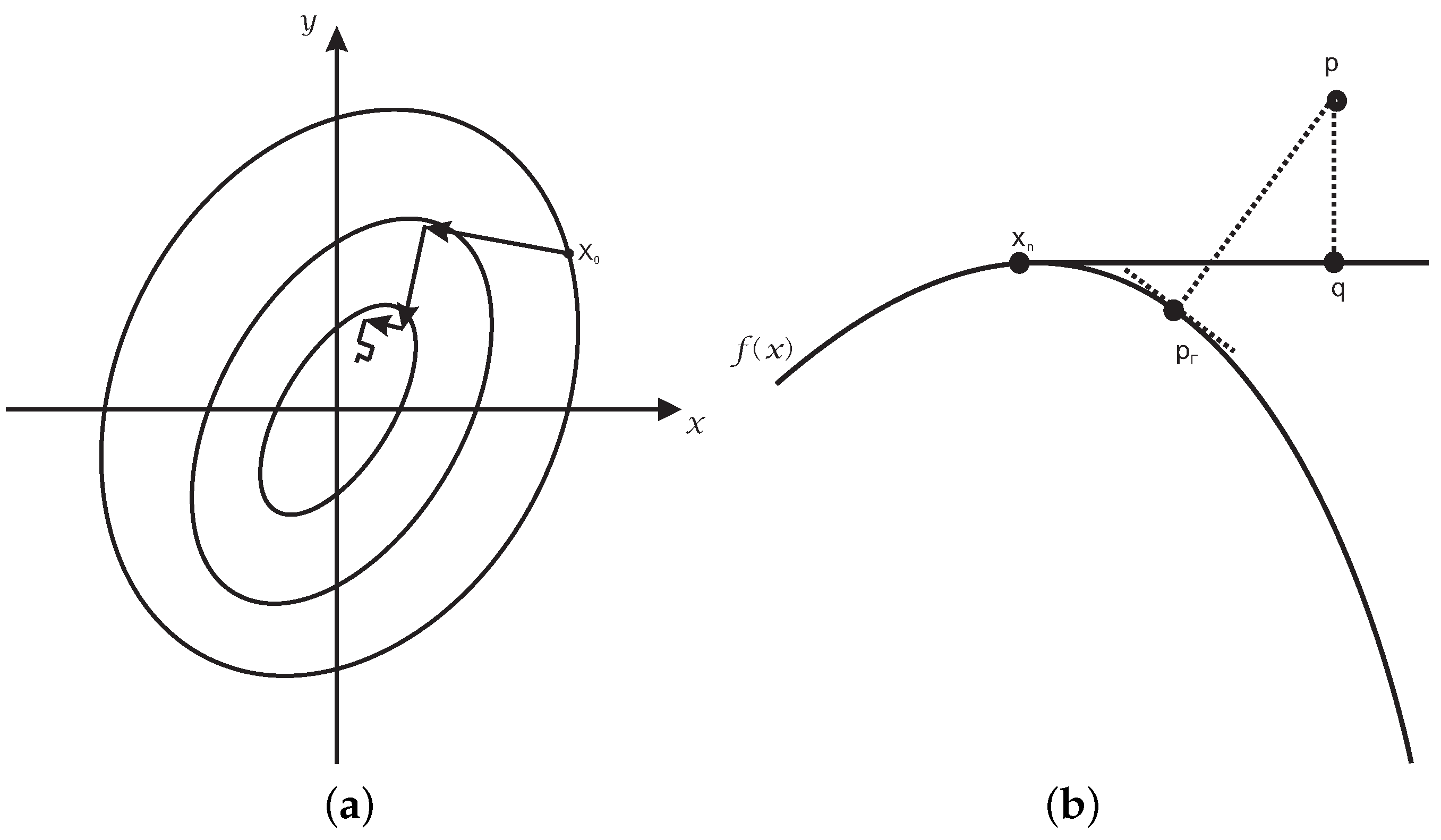

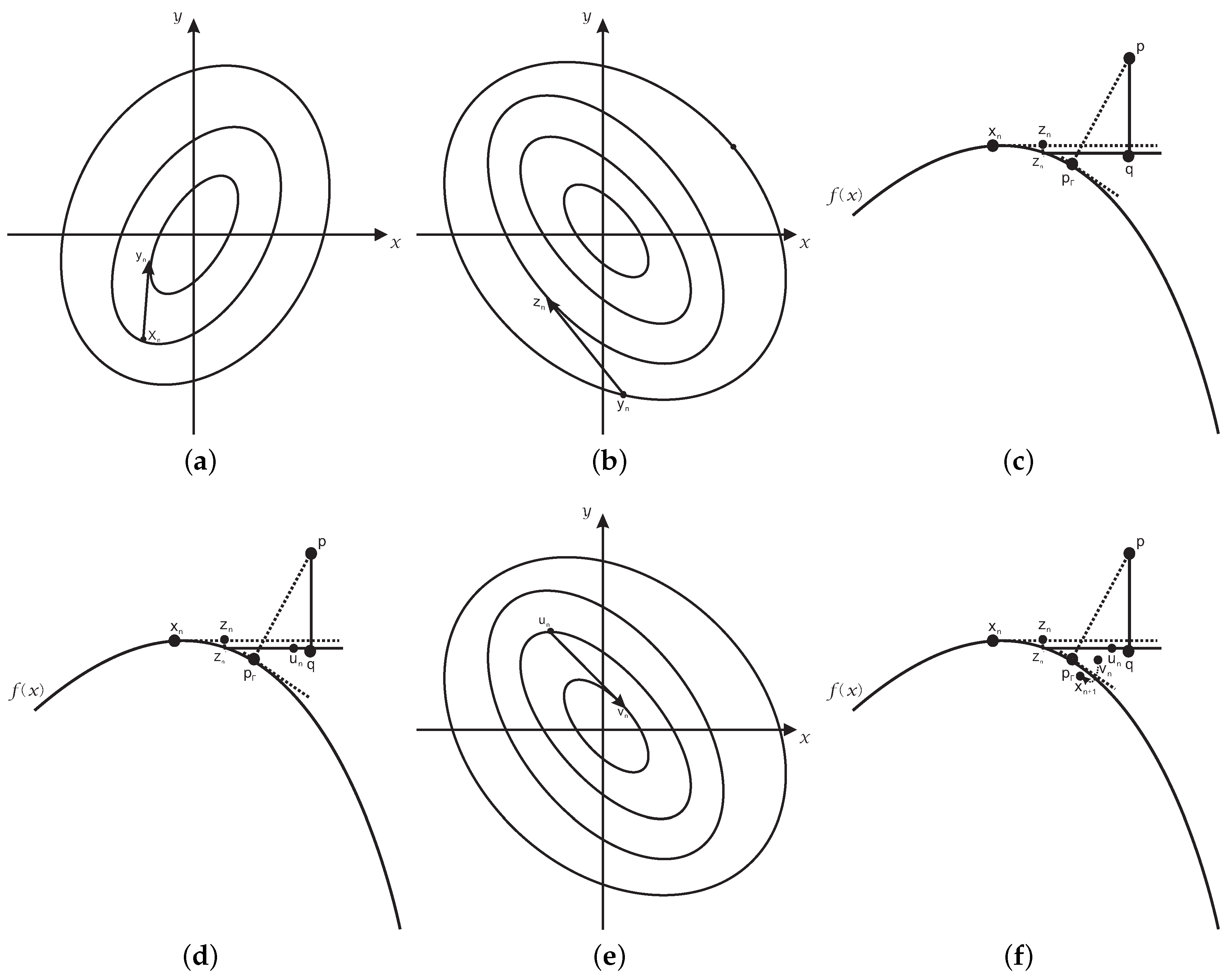

The hybrid second order algorithm fuses three basic ideas: (1) the tangent line orthogonal iteration method with one correction; (2) the steepest descent method, which forces the iteration point to fall on the planar implicit curve as closely as possible; and (3) the Newton–Raphson iterative method, which accelerates the iteration.

The hybrid second order algorithm is composed of six steps. The first step uses the steepest descent method of Newton’s iterative method to force the initial iterative value to lie on the planar implicit curve; this step is not associated with the test point p. In the second step, Newton’s iterative method employs the relationship determined by the test point p to accelerate the iteration process. The third step finds the orthogonal projection point q of the test point p on the tangent line that goes through the initial iterative point. The fourth step computes the linear orthogonal increment value. The fifth step uses the same relationship as the second step once more to accelerate the iteration process. The final step applies a correction to the iterative values produced by the fourth and fifth steps.
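As a rough illustration of two of the ingredients described above, the following Python sketch pulls a point onto an implicit curve with gradient-direction Newton steps (in the spirit of the first step) and then projects a test point onto the tangent line at that point (in the spirit of the third step). It is not the iterative Formula (21): the ellipse, the test point, the initial value and all function names are hypothetical choices made only for this example.

```python
# Hypothetical curve for illustration only: the ellipse x^2/4 + y^2 - 1 = 0.
def f(x, y):
    return x * x / 4.0 + y * y - 1.0


def grad_f(x, y):
    return (x / 2.0, 2.0 * y)


def pull_onto_curve(x, y, tol=1e-14, max_iter=50):
    """Move (x, y) along the gradient direction until |f| <= tol.

    Each update is (x, y) <- (x, y) - f * grad / |grad|^2, i.e. a Newton
    step taken along the steepest descent direction. The test point p is
    not used here.
    """
    for _ in range(max_iter):
        value = f(x, y)
        if abs(value) <= tol:
            break
        gx, gy = grad_f(x, y)
        g2 = gx * gx + gy * gy
        if g2 == 0.0:  # vanishing gradient (e.g. a singular point): stop
            break
        x -= value * gx / g2
        y -= value * gy / g2
    return x, y


def tangent_foot(p, x, y):
    """Orthogonal projection q of p onto the tangent line of the curve at (x, y)."""
    gx, gy = grad_f(x, y)
    tx, ty = -gy, gx  # tangent direction, perpendicular to the gradient
    s = ((p[0] - x) * tx + (p[1] - y) * ty) / (tx * tx + ty * ty)
    return (x + s * tx, y + s * ty)


p = (3.0, 2.0)                      # hypothetical test point
x0, y0 = pull_onto_curve(2.5, 1.5)  # hypothetical initial iterative value
q = tangent_foot(p, x0, y0)
print("residual on curve:", abs(f(x0, y0)))
print("foot point on tangent line:", q)
```

The foot point q generally lies off the curve, which is why a method of this kind needs a further pull-back or correction before the next iteration.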

One problem with the hybrid second order algorithm is that it appears to diverge if the test point p lies particularly far away from the planar implicit curve. However, it has been found that when the initial iterative point is close to the orthogonal projection point, the algorithm converges no matter how far away the test point p is from the planar implicit curve. An algorithm, named the initial iterative value estimation algorithm, is therefore proposed to drive the initial iterative value toward the orthogonal projection point as much as possible. Accordingly, the hybrid second order algorithm combined with the initial iterative value estimation algorithm is named the integrated hybrid second order algorithm.

The rest of this paper is organized as follows. Section 2 presents related work for orthogonal projection onto the planar implicit curve. Section 3 presents the integrated hybrid second order algorithm for orthogonal projection onto the planar implicit curve. In Section 4, the convergence analysis for the integrated hybrid second order algorithm is described. The experimental results, including the evaluation of performance data, are given in Section 5. Finally, Section 6 and Section 7 conclude the paper.

4. Convergence Analysis

In this section, the convergence analysis for the integrated hybrid second order algorithm is presented. Proofs indicate the convergence order of the algorithm is up to two, and Algorithm 3 is independent of the initial value.

Theorem 1. Given an implicit function that can be parameterized, the convergence order of the iterative Formula (21) is up to two.

Proof. Without loss of generality, assume that the parametric representation of the planar implicit curve is . Suppose that parameter is the orthogonal projection point of test point onto the parametric curve . ☐

The first part will derive that the order of convergence of the first step for the iterative Formula (21) is up to two. It is not difficult to know the iteration equation in the corresponding Newton’s second order parameterized iterative method, i.e., the first step for the iterative Formula (21):

Taylor expansion around

generates:

where

and

. Thus, it is easy to have:

From (22)–(24), the error iteration can be expressed as,

where

.

The second part will prove that the order of convergence of the second step for the iterative Formula (21) is two. It is easy to get the corresponding parameterized iterative equation for Newton’s second-order iterative method, essentially the second step for the iterative Formula (21),

where:

Using Taylor expansion around

, it is easy to get:

where

and

. Thus, it is easy to get:

According to Formula (26)–(29), after Taylor expansion and simplifying, the error relationship can be expressed as follows,

where

. Because the fifth step is completely equal to the second step of the iterative Formula (21) and outputs from Newton’s iterative method are closely related with test point p, the order of convergence for the fifth step of the iterative Formula (21) is also two.
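The second-order behaviour of a Newton-type step on a parameterized curve can be observed numerically. The sketch below assumes one standard form of such a step, namely Newton's method applied to g(t) = ⟨p − c(t), c'(t)⟩ = 0; the parabola c(t) = (t, t²), the test point and the starting parameter are hypothetical, and the code is not claimed to coincide with the parameterized equation used in the proof.

```python
# Hypothetical parametric curve c(t) = (t, t^2) with its first two derivatives.
def c(t):
    return (t, t * t)


def dc(t):
    return (1.0, 2.0 * t)


def ddc(t):
    return (0.0, 2.0)


def newton_foot_parameter(p, t, tol=1e-15, max_iter=20):
    """Newton iteration on g(t) = <p - c(t), c'(t)> = 0.

    Returns the final parameter and the list of all iterates.
    """
    history = [t]
    for _ in range(max_iter):
        cx, cy = c(t)
        dx, dy = dc(t)
        ddx, ddy = ddc(t)
        g = (p[0] - cx) * dx + (p[1] - cy) * dy
        # g'(t) = -<c', c'> + <p - c, c''>
        dg = -(dx * dx + dy * dy) + (p[0] - cx) * ddx + (p[1] - cy) * ddy
        step = g / dg
        t -= step
        history.append(t)
        if abs(step) <= tol:
            break
    return t, history


p = (1.0, 0.5)               # hypothetical test point
exact = 0.5 ** (1.0 / 3.0)   # for this curve and p, g(t) = 1 - 2 t^3
t_star, history = newton_foot_parameter(p, 0.5)
for k, t_k in enumerate(history):
    print(k, abs(t_k - exact))
```

For this particular curve and test point the foot parameter is known in closed form, so the printed errors can be inspected directly; once the iterates are close to the solution, each error is roughly proportional to the square of the previous one.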

The third part will derive that the order of convergence of the third step and fourth step for the iterative Formula (21) is one. According to the first order method for orthogonal projection onto the parametric curve [32,39,40], the footpoint

of the parameterized iterative equation of the third step of the iterative Formula (21) can be expressed in the following way,

From the iterative Equation (31) and combining with the fourth step of the iterative Formula (21), it is easy to have:

where

denotes the scalar product of vectors

. Let

, and repeat the procedure (32) until

is less than a given tolerance

. Because parameter

is the orthogonal projection point of test point

onto the parametric curve

, it is not difficult to verify,

Because the footpoint q is the intersection of the tangent line of the parametric curve

at

and the perpendicular line

determined by the test point p, the equation of the tangent line of the parametric curve

at

is:

At the same time, the vector of the line segment connected by the test point p and the point

is:

The vector (35) and the tangent vector

of the tangent line (34) are mutually orthogonal, so the parameter value

of the tangent line (34) is:

Substituting (36) into (34) and simplifying, it is not difficult to get the footpoint q =

,

Substituting (37) into (32) and simplifying, it is easy to obtain,

From (33) and combined with (38), using Taylor expansion by the symbolic computation software Maple 18, it is easy to get:

Simplifying (30), it is easy to obtain:

where the symbol

denotes the coefficient in the first order error

of the right-hand side of Formula (40). The result shows that the third step and the fourth step of the iterative Formula (21) are first-order convergent. According to the iterative Formula (21), combined with the three error iteration relationships (25), (30) and (40), the convergence order of each iterative formula is not more than two. Then, the iterative error relationship of the iterative Formula (21) can be expressed as follows:

To sum up, the convergence order of the iterative Formula (21) is up to two.
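The first-order behaviour discussed in the third part of the proof can also be checked numerically. The following sketch implements the tangent-line foot point update for a parametric curve as described in the text: the foot point q of p on the tangent line at c(t) gives the increment Δt = ⟨q − c(t), c'(t)⟩ / ⟨c'(t), c'(t)⟩. The parabola, the test point and the starting parameter are hypothetical and serve only as an example.

```python
# Hypothetical parametric curve c(t) = (t, t^2) and its derivative.
def c(t):
    return (t, t * t)


def dc(t):
    return (1.0, 2.0 * t)


def first_order_step(p, t):
    """One tangent-line foot point update of the parameter t."""
    cx, cy = c(t)
    dx, dy = dc(t)
    # q = c(t) + dt * c'(t) is the foot point of p on the tangent line,
    # so dt = <p - c(t), c'(t)> / <c'(t), c'(t)>.
    dt = ((p[0] - cx) * dx + (p[1] - cy) * dy) / (dx * dx + dy * dy)
    return t + dt


p = (1.0, 0.5)                # hypothetical test point
t_exact = 0.5 ** (1.0 / 3.0)  # exact foot parameter for this curve and p
t = 0.5                       # hypothetical initial parameter
errors = []
for _ in range(8):
    t = first_order_step(p, t)
    errors.append(abs(t - t_exact))

ratios = [errors[k + 1] / errors[k] for k in range(len(errors) - 1)]
print(ratios)
```

The successive error ratios settle near a constant strictly between zero and one, which is the signature of linear (first-order) convergence; for a quadratically convergent step the ratios would instead tend to zero.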

Theorem 2. In terms of convergence, the hybrid second order algorithm (Algorithm 1) is a compromise between the local and global methods.

Proof. The third step and fourth step of the iterative Formula (21) of Algorithm 1 are equivalent to the foot point algorithm for implicit curves in [32]. The work in [14] has explained that, in terms of convergence, the foot point algorithm for the implicit curve proposed in [14] is a compromise between the local and global methods. Then, Algorithm 1 is also a compromise between the local and global methods. Namely, if a test point is close to the foot point of the planar implicit curve, the convergence of Algorithm 1 is independent of the initial iterative value, and if not, the convergence of Algorithm 1 is dependent on the initial iterative value. The sixth step in Algorithm 1 promotes the robustness. However, the third step, the fourth step and the sixth step in Algorithm 1 still constitute a compromise between the local and global methods. Certainly, the first step (steepest descent method) of Algorithm 1 can make the iterative point fall on the planar implicit curve and improves its robustness. The second step and the fifth step constitute the classical Newton’s iterative method to accelerate convergence and improve robustness in some way. The steepest descent method of the first step and Newton’s iterative method of the second step and the fifth step make Algorithm 1 more robust and efficient, but they cannot change the fact that Algorithm 1 is a compromise between the local and global methods. To sum up, Algorithm 1 is a compromise between the local and global methods. ☐

Theorem 3. The convergence of the integrated hybrid second order algorithm (Algorithm 3) is independent of the initial iterative value.

Proof. The integrated hybrid second order algorithm (Algorithm 3) is composed of two sub-algorithms (Algorithm 1 and Algorithm 2). From Theorem 2, Algorithm 1 is a compromise between the local and global methods. Whether or not the test point p is very far away from the planar implicit curve, Algorithm 1 can converge if the initial iterative value lies close to the orthogonal projection point. In any case, Algorithm 2 moves the initial iterative value of Algorithm 1 sufficiently close to the orthogonal projection point to ensure the convergence of Algorithm 1. In this way, Algorithm 3 can converge for any initial iterative value. Therefore, the convergence of the integrated hybrid second order algorithm (Algorithm 3) is independent of the initial value. ☐

5. Results of the Comparison

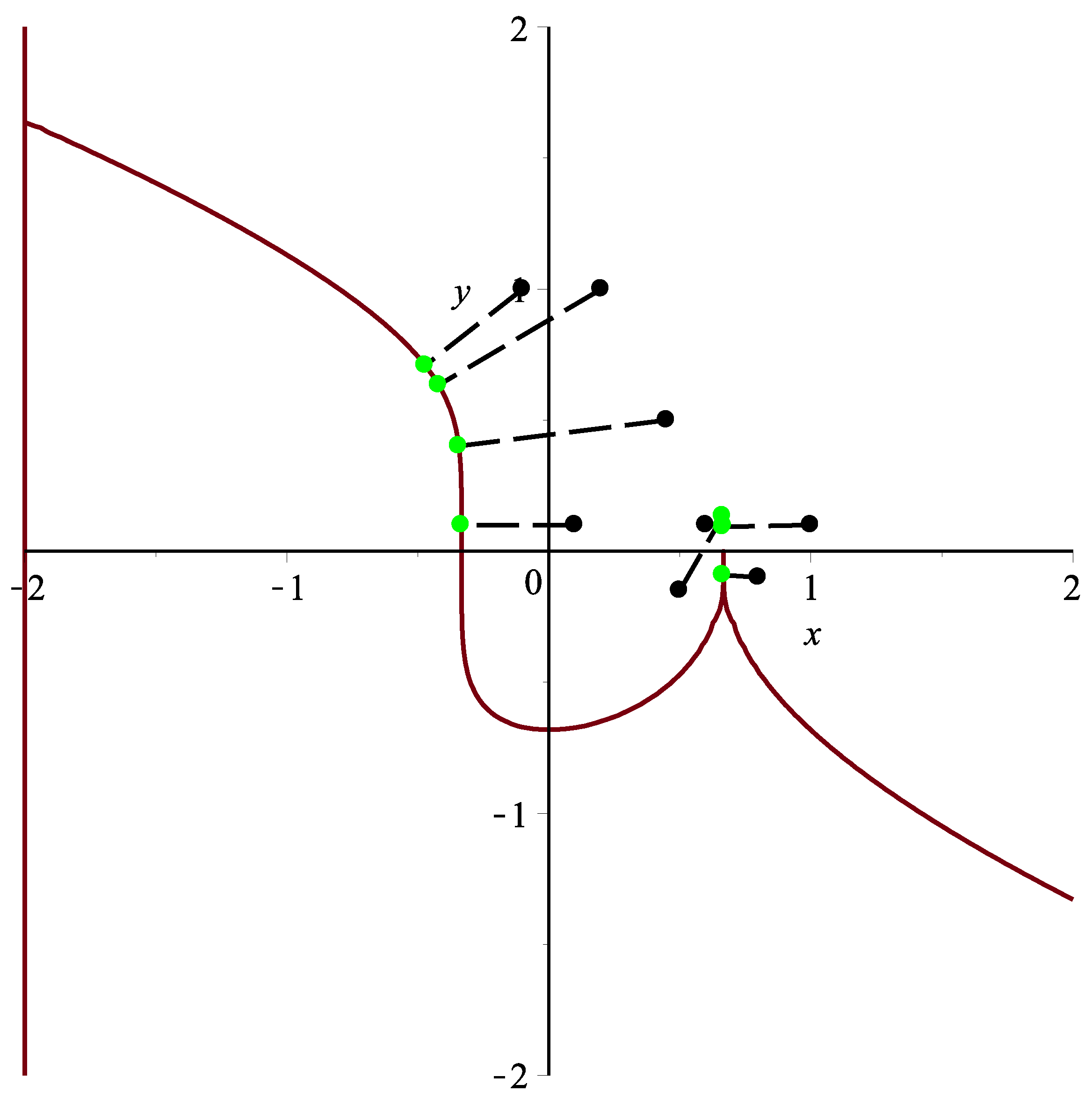

Example 1. ([14]) Assume a planar implicit curve . One thousand six hundred test points from the square are taken. The integrated hybrid second order algorithm (Algorithm 3) can orthogonally project all 1600 points onto the planar implicit curve Γ. It satisfies the relationships and . Test points are selected in two steps:

(1) Uniformly divide planar square of the planar implicit curve into sub-regions , where

(2) Randomly select a test point in each sub-region and then an initial iterative value in its vicinity.

The same procedure to select/sample test points applies for other examples below.
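The two-step selection procedure can be sketched as follows. The code assumes that the square is cut into an m-by-m grid of equal cells (m = 40 gives the 1600 test points of Example 1) and that "in its vicinity" means a small box around the test point; the bounds of the square, the box radius and the function names are hypothetical, since the extract does not state them.

```python
import random


def sample_test_points(x_min, x_max, y_min, y_max, m, rng):
    """Return one random point in each cell of an m-by-m uniform grid."""
    dx = (x_max - x_min) / m
    dy = (y_max - y_min) / m
    points = []
    for i in range(m):
        for j in range(m):
            x = x_min + (i + rng.random()) * dx
            y = y_min + (j + rng.random()) * dy
            points.append((x, y))
    return points


def initial_value_near(point, radius, rng):
    """Pick an initial iterative value in a small box around a test point."""
    return (point[0] + rng.uniform(-radius, radius),
            point[1] + rng.uniform(-radius, radius))


rng = random.Random(0)  # fixed seed so that the sample is reproducible
tests = sample_test_points(-2.0, 2.0, -2.0, 2.0, 40, rng)  # hypothetical square
starts = [initial_value_near(pt, 0.2, rng) for pt in tests]
print(len(tests), tests[0], starts[0])
```

Sampling one point per cell spreads the test points over the whole square, which plain uniform sampling does not guarantee for a finite sample.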

One test point in the first case is specified. Using Algorithm 3, the corresponding orthogonal projection point is (−0.47144354751227009, 0.70879213227958752), and the initial iterative values are (−0.1,0.8), (−0.1,0.9), (−0.1,1.1), (−0.1,1.2), (−0.2,0.8), (−0.2,0.9), (−0.2,1.1) and (−0.2,1.2), respectively. Each initial iterative value iterates 12 times, yielding 12 different iteration times in nanoseconds. In Table 3, the average running times of Algorithm 3 for the eight different initial iterative values are 1,099,243, 582,078, 525,942, 490,537, 392,090, 364,817, 369,739 and 367,654 nanoseconds, respectively. The overall average running time is 524,013 nanoseconds, while the overall average running time of the circle shrinking algorithm in [14] is 8.9 ms under the same initial iteration condition.

The iterative error analysis for the test point under the same condition is presented in Table 4, with the initial iterative points in the first row. The distance function is used to compute the error values in the rows other than the first one, and the other examples below apply the same distance function criterion. The left column in Table 4 denotes the corresponding number of iterations, which is the same for Tables 8–15.

Another test point in the second case is specified. Using Algorithm 3, the corresponding orthogonal projection point is (−0.42011639143389254, 0.63408011508207950), and the initial iterative values are (0.3,0.9), (0.3,1.2), (0.4,0.9), (0.3,0.7), (0.1,0.8), (0.1,0.6), (0.4,1.1) and (0.4,1.3), respectively. Each initial iterative value iterates 10 times, yielding 10 different iteration times in nanoseconds. In Table 5, the average running times of Algorithm 3 for the eight different initial iterative values are 1,152,664, 844,250, 525,540, 1,106,098, 1,280,232, 1,406,429, 516,779 and 752,429 nanoseconds, respectively. The overall average running time is 948,053 nanoseconds, while the overall average running time of the circle shrinking algorithm in [14] is 12.6 ms under the same initial iteration condition.

The third test point

in the third case is specified. Using Algorithm 3, the corresponding orthogonal projection point is

=

,

, and the initial iterative values

are (0.1,0.2), (0.1,0.3), (0.1,0.4), (0.2,0.2), (0.2,0.3), (0.3,0.2), (0.3,0.3) and (0.3,0.4), respectively. Each initial iterative value iterates 12 times, yielding 12 different iteration times in nanoseconds. In Table 6, the average running times of Algorithm 3 for the eight different initial iterative values are 183,515, 680,338, 704,694, 192,564, 601,235, 161,127, 713,697 and 1,034,443 nanoseconds, respectively. The overall average running time is 533,952 nanoseconds, while the overall average running time of the circle shrinking algorithm in [14] is 9.4 ms under the same initial iteration condition.

To sum up, Algorithm 3 is faster than the circle shrinking algorithm in [

14] (see

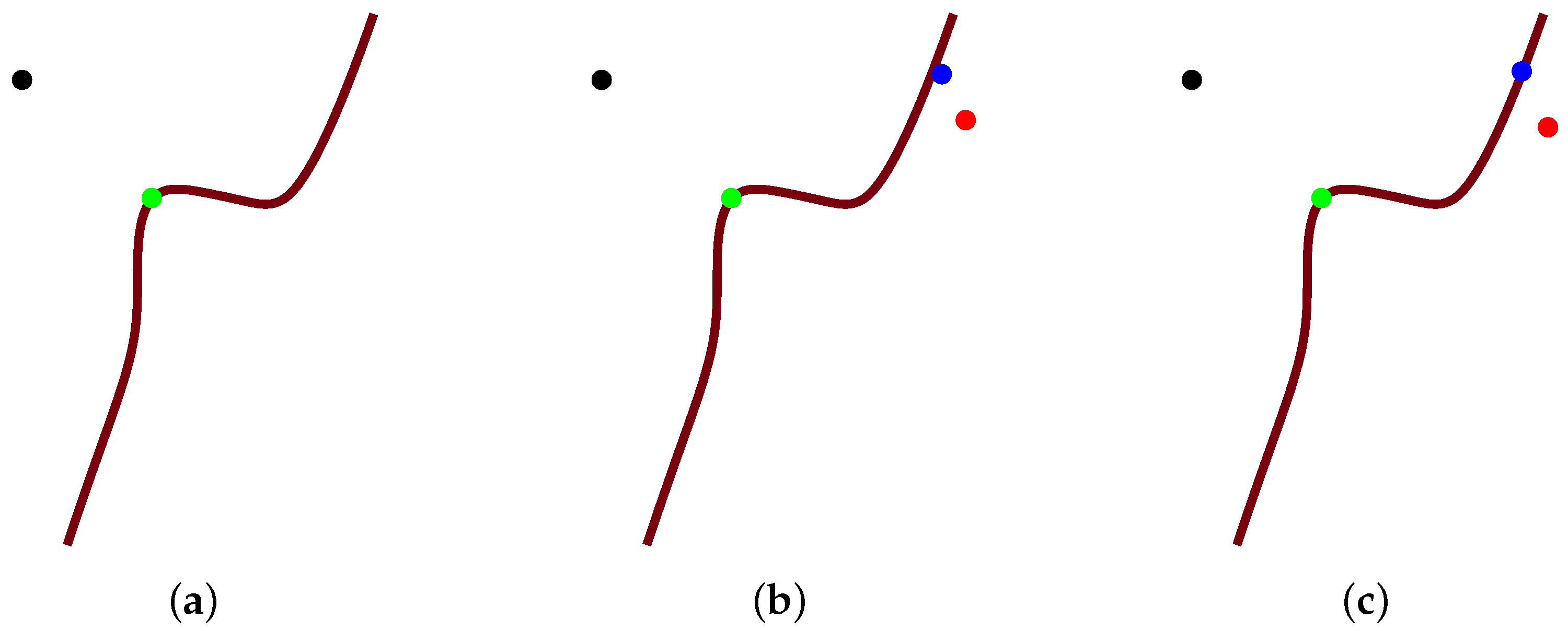

Figure 8).
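The size of the gap in Example 1 can be read off the reported figures. The short script below recomputes the overall averages from the eight per-start averages quoted for each case and divides the reported circle shrinking times by them; it uses only numbers stated in the text, and the dictionary keys and variable names are illustrative.

```python
# Average running times (ns) of Algorithm 3 quoted for the three cases of Example 1.
alg3_ns = {
    "case 1": [1099243, 582078, 525942, 490537, 392090, 364817, 369739, 367654],
    "case 2": [1152664, 844250, 525540, 1106098, 1280232, 1406429, 516779, 752429],
    "case 3": [183515, 680338, 704694, 192564, 601235, 161127, 713697, 1034443],
}
# Overall averages quoted for the circle shrinking algorithm of [14], in ms.
circle_ms = {"case 1": 8.9, "case 2": 12.6, "case 3": 9.4}

means = {}
speedup = {}
for case, times in alg3_ns.items():
    means[case] = sum(times) / len(times)
    speedup[case] = circle_ms[case] * 1e6 / means[case]  # ms -> ns before dividing
    print(f"{case}: mean = {means[case]:,.1f} ns, speedup = {speedup[case]:.1f}x")
```

The recomputed means agree with the reported overall averages after rounding, and the resulting ratios are roughly 13 to 18 across the three cases.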

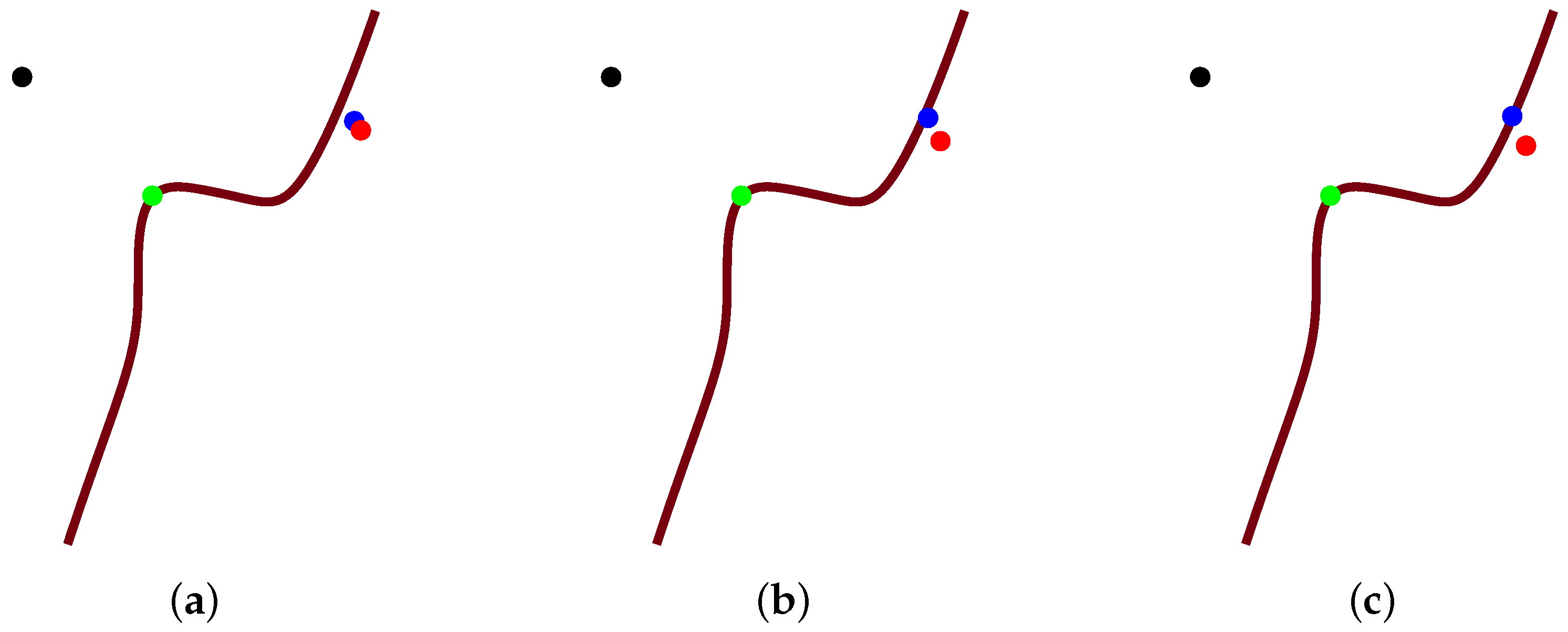

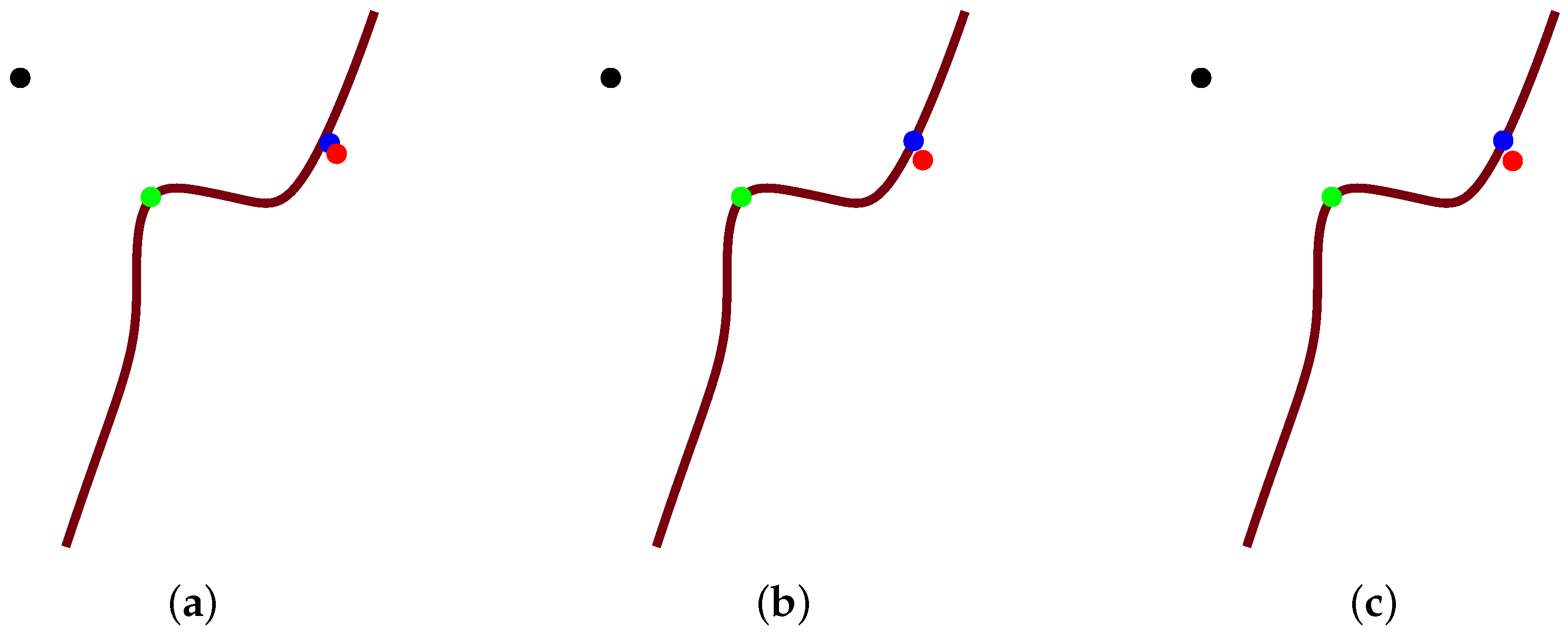

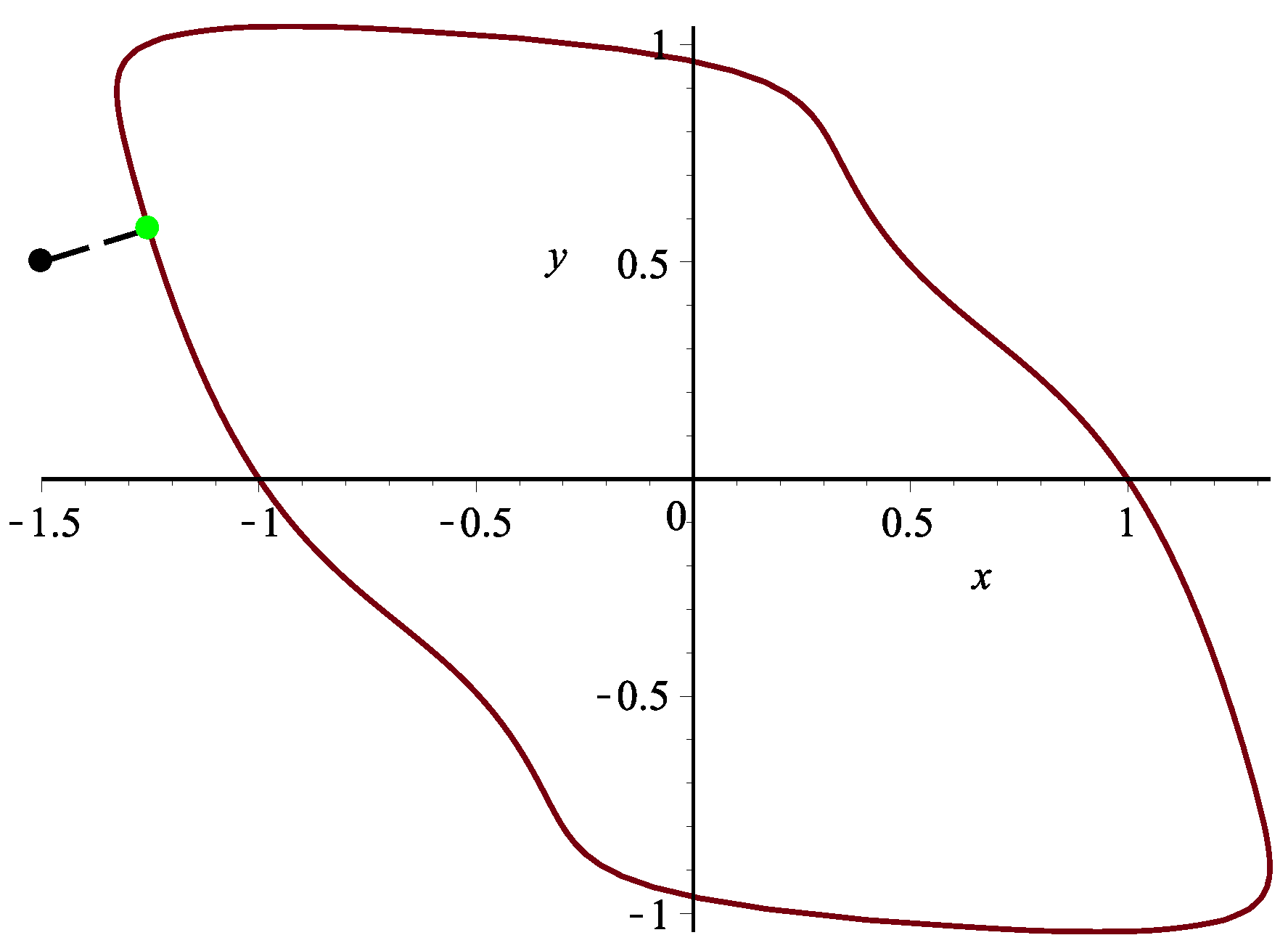

Example 2. Assume a planar implicit curve . Nine hundred test points from square are taken. Algorithm 3 can correctly orthogonally project all 900 points onto the planar implicit curve Γ. It satisfies the relationships and . One test point in this case is specified. Using Algorithm 3, the corresponding orthogonal projection point is (−1.2539379406252056281, 0.57568037362837924613), and the initial iterative values are (−1.4,0.6), (−1.3,0.7), (−1.2,0.6), (−1.6,0.4), (−1.4,0.7), (−1.4,0.3), (−1.3,0.6) and (−1.2,0.8), respectively. Each initial iterative value iterates 10 times, yielding 10 different iteration times in nanoseconds. In Table 7, the average running times of Algorithm 3 for the eight different initial iterative values are 4,487,449, 4,202,203, 4,555,396, 4,533,326, 4,304,781, 4,163,107, 4,268,792 and 4,378,470 nanoseconds, respectively. The overall average running time is 4,361,691 nanoseconds (see Figure 9). The iterative error analysis for the test point p = (−1.5,0.5) under the same condition is presented in Table 8 with initial iterative points in the first row.

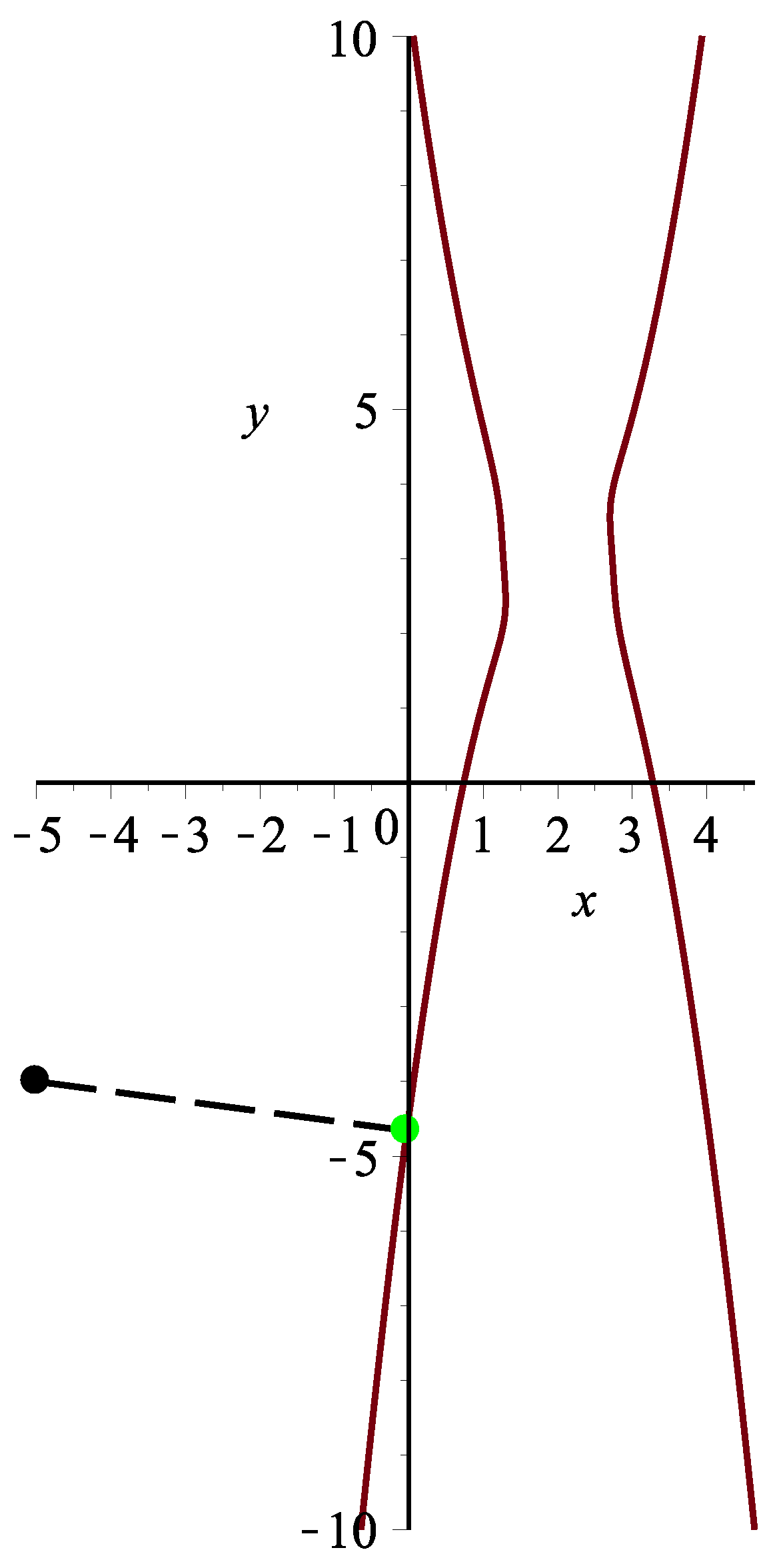

Example 3. Assume a planar implicit curve . Three thousand six hundred points from square are taken. Algorithm 3 can orthogonally project all 3600 points onto the planar implicit curve Γ. It satisfies the relationships and . One test point in this case is specified. Using Algorithm 3, the corresponding orthogonal projection point is (−0.027593939033081903,−4.6597845115690539), and the initial iterative values are (−12,−7), (−3,−5), (−5,−4), (−6.6,−9.9), (−2,−7), (−11,−6), (−5.6,−2.3) and (−4.3,−5.7), respectively. Each initial iterative value iterates 10 times, yielding 10 different iteration times in nanoseconds. In Table 9, the average running times of Algorithm 3 for the eight different initial iterative values are 299,569, 267,569, 290,719, 139,263, 125,962, 149,431, 289,643 and 124,885 nanoseconds, respectively. The overall average running time is 210,880 nanoseconds (see Figure 10). The iterative error analysis for the test point under the same condition is presented in Table 10 with initial iterative points in the first row.

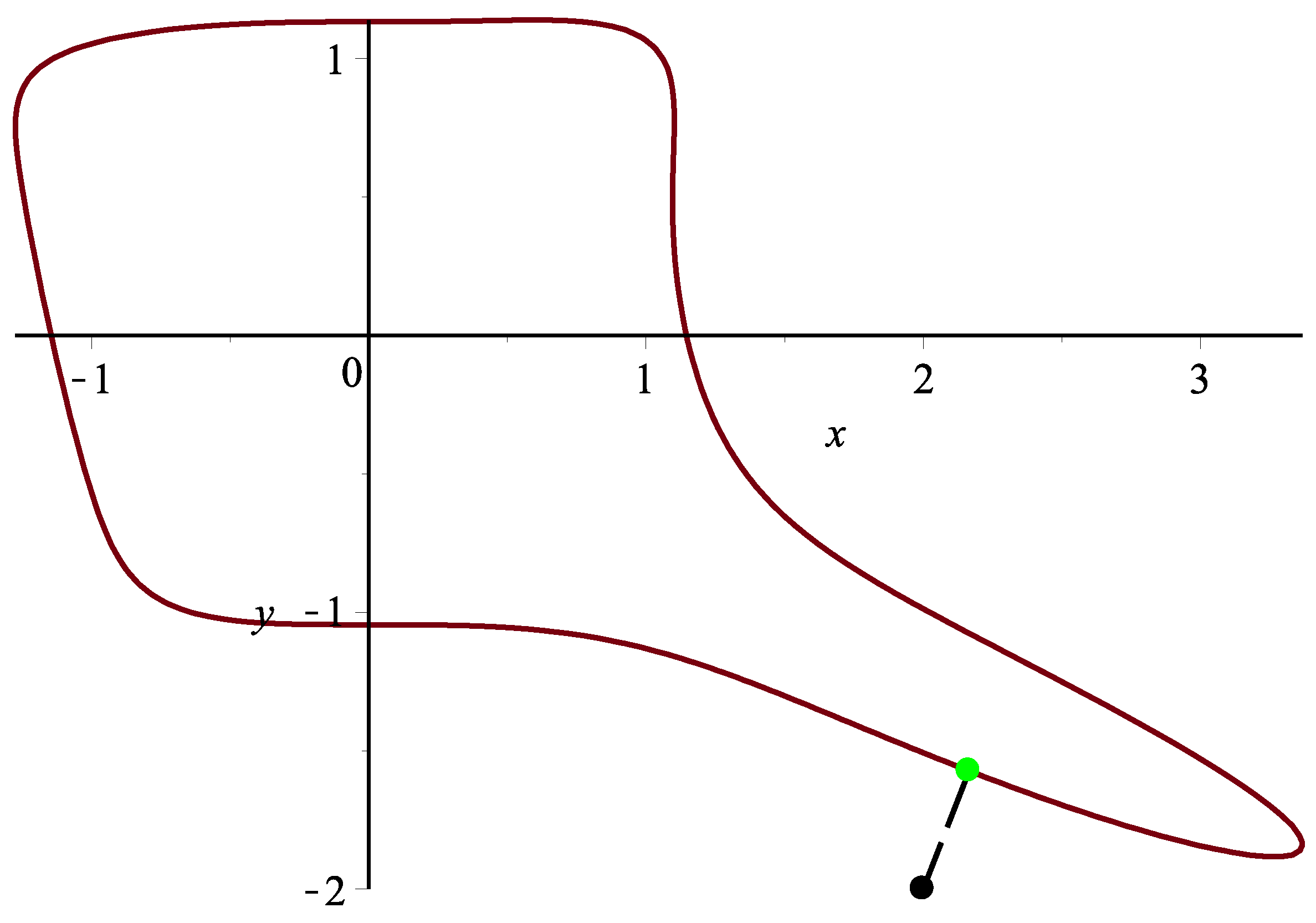

Example 4. Assume a planar implicit curve . Two thousand one hundred test points from the square are taken. Algorithm 3 can orthogonally project all 2100 points onto the planar implicit curve Γ. It satisfies the relationships and . One test point in this case is specified. Using Algorithm 3, the corresponding orthogonal projection point is (2.1654788271485294, −1.5734131236664724), and the initial iterative values are (2.2,−2.1), (2.3,−1.9), (2.4,−1.8), (2.1,−2.3), (2.4,−1.6), (2.3,−1), (1.6,−2.5) and (2.6,−2.5), respectively. Each initial iterative value iterates 10 times, yielding 10 different iteration times in nanoseconds. In Table 11, the average running times of Algorithm 3 for the eight different initial iterative values are 403,539, 442,631, 395,384, 253,156, 241,510, 193,592, 174,340 and 187,362 nanoseconds, respectively. The overall average running time is 286,439 nanoseconds (see Figure 11). The iterative error analysis for the test point under the same condition is presented in Table 12 with initial iterative points in the first row.

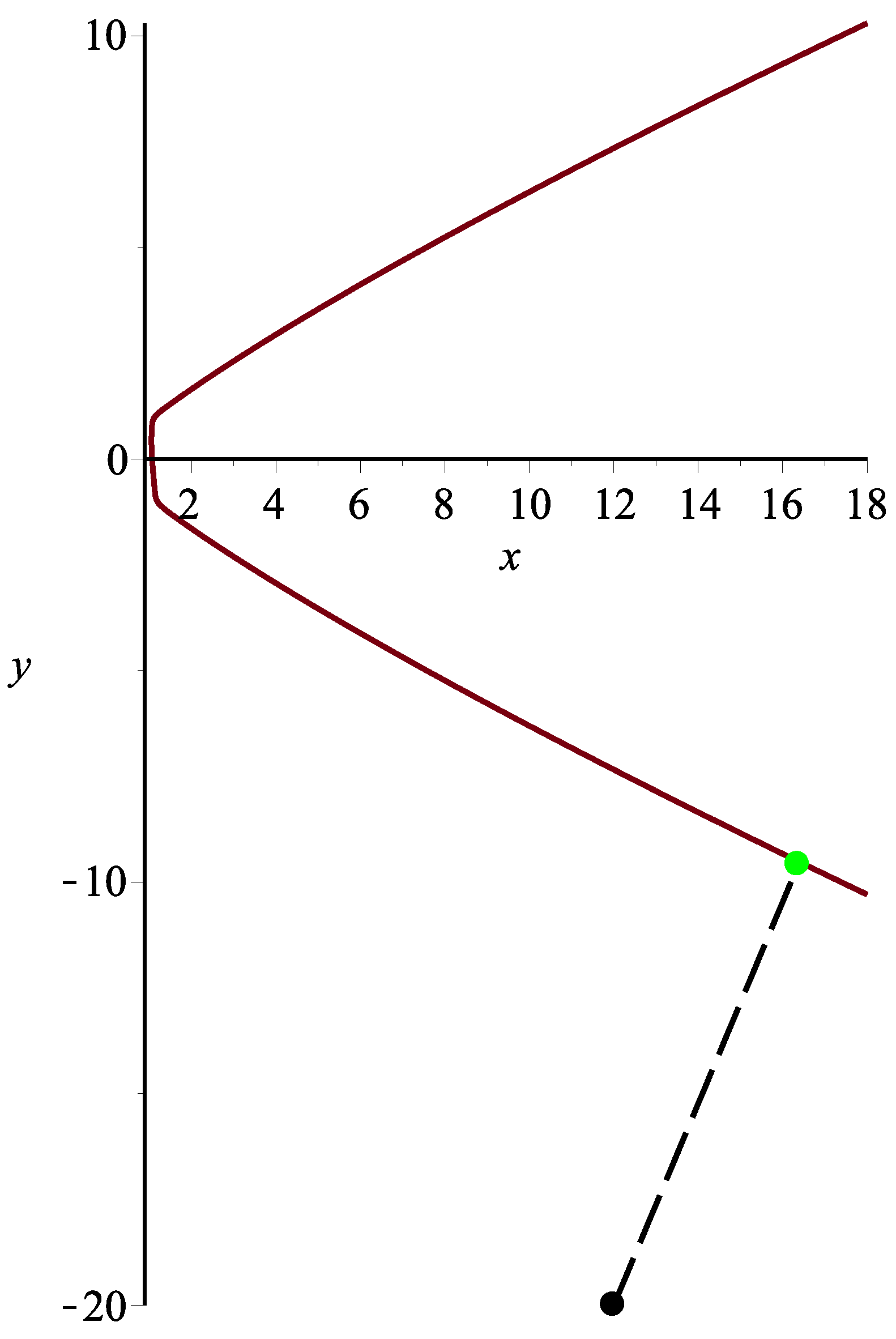

Example 5. Assume a planar implicit curve . Two thousand four hundred test points from the square are taken. Algorithm 3 can orthogonally project all 2400 points onto the planar implicit curve Γ. It satisfies the relationships and .

One test point in this case is specified. Using Algorithm 3, the corresponding orthogonal projection point is (16.9221067487652, −9.77831982969495), and the initial iterative values are (12,−20), (3,−5), (5,−4), (66,−99), (14,−21), (11,−6), (56,−23) and (13,−7), respectively. Each initial iterative value iterates 10 times, yielding 10 different iteration times in nanoseconds. In Table 13, the average running times of Algorithm 3 for the eight different initial iterative values are 285,449, 447,036, 405,726, 451,383, 228,491, 208,624, 410,489 and 224,141 nanoseconds, respectively. The overall average running time is 332,667 nanoseconds (see Figure 12).

The iterative error analysis for the test point under the same condition is presented in Table 14 with initial iterative points in the first row.

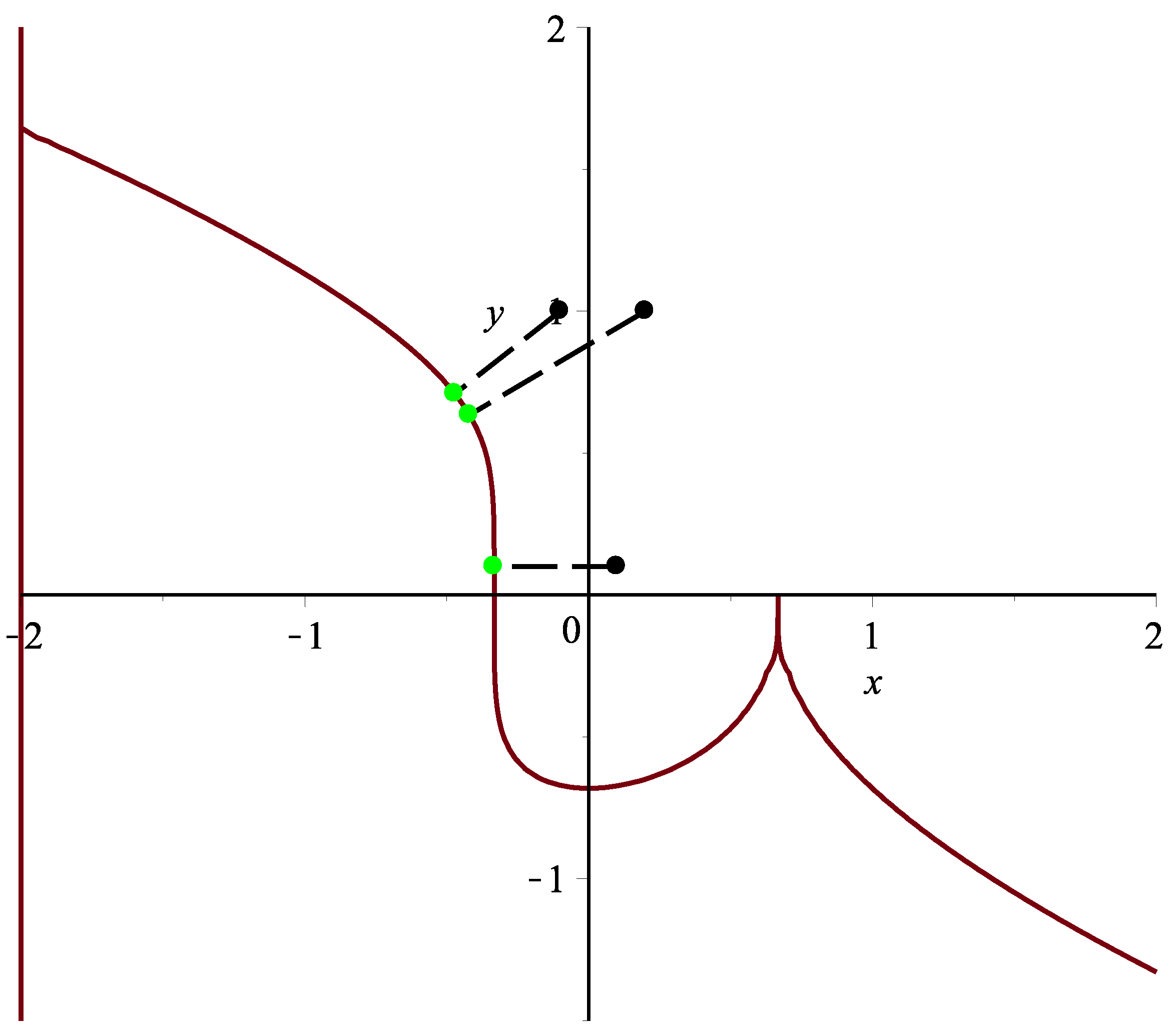

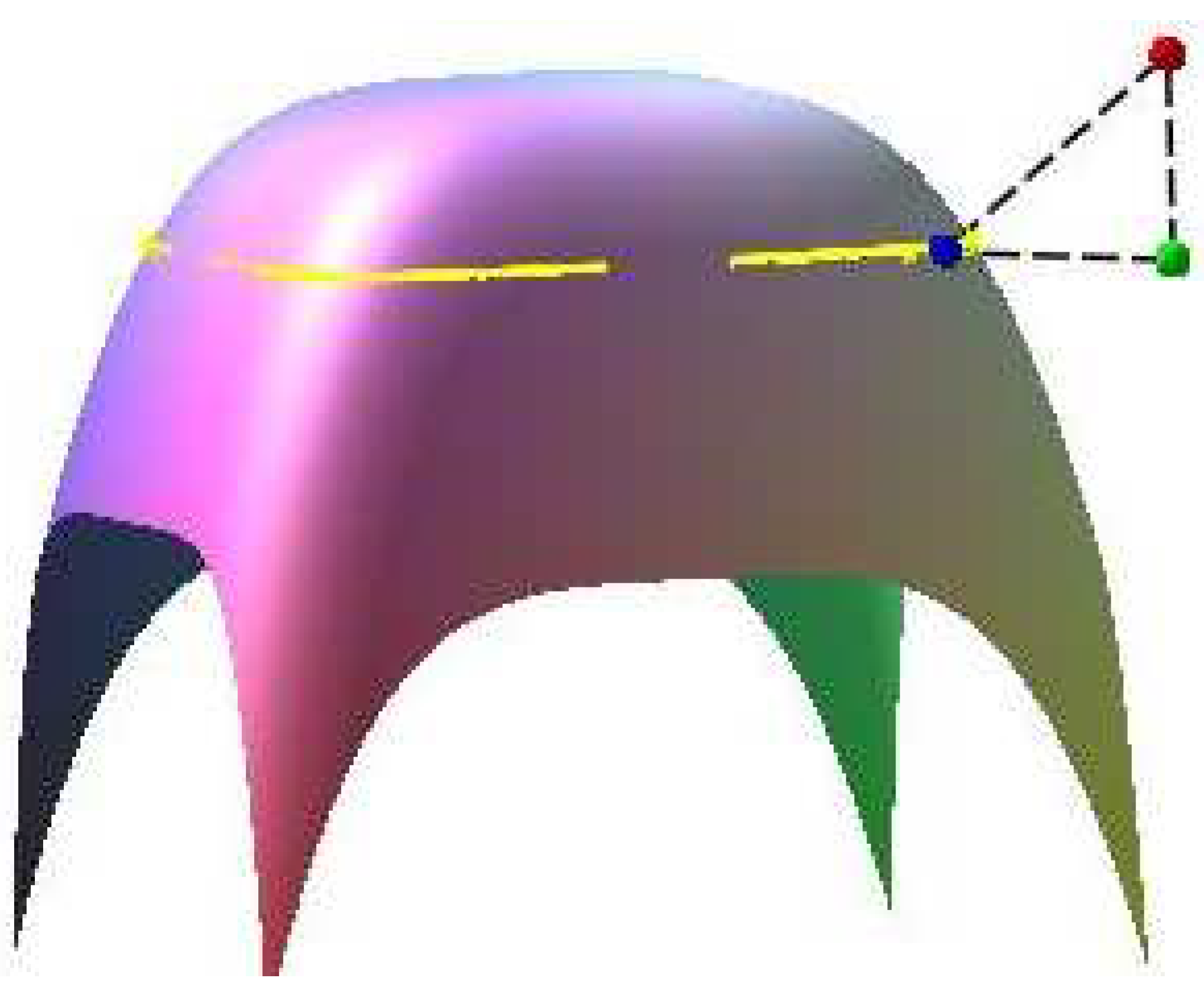

Example 6. Assume a planar implicit curve . One spatial test point in this case is specified and orthogonally projected onto plane , so the planar test point will be . Using Algorithm 3, the corresponding orthogonal projection point on plane is , and it satisfies the two relationships and . In the iterative error Table 15, the six points (1,1), (1.5,1.5), (−1,1), (1,−1), (1.5,1), (1,1.5) in the first row are the initial iterative points of Algorithm 3. In Figure 13, the red, green and blue points are the spatial test point, the planar test point and their common corresponding orthogonal projection point, respectively. Assume surface with two free variables x and y. The yellow curve is planar implicit curve .

Remark 4. In the 22 tables, all computations were done by using g++ in the Fedora Linux 8 environment. The iterative termination criteria for Algorithm 1 and Algorithm 2 are and , respectively. Examples 1–6 are computed using a personal computer with Intel i7-4700 3.2-GHz CPU and 4.0 GB memory.

In Examples 2–6, if the degree of every planar implicit curve is more than five, it is difficult to get the intersection between the line segment determined by test point p and

and the planar implicit curve by using the circle shrinking algorithm in [14]. Therefore, the running time comparison with the algorithm in [14] was not done for these examples. For a similar reason, the running time comparison with the circle double-and-bisect algorithm in [36] was not done either, because it is difficult to solve the intersection between the circle and the planar implicit curve. In addition, many methods (Newton’s method, the geometrically-motivated method [31,32], the osculating circle algorithm [33], the Bézier clipping method [25,26,27], etc.) cannot guarantee complete convergence for Examples 2–5, and the running time comparison for the methods in [25,26,27,31,32,33] has not been done yet. From Table 2 in [36], the circle shrinking algorithm in [14] is faster than the existing methods, while Algorithm 3 is faster than the circle shrinking algorithm in [14] in our Example 1. Hence, Algorithm 3 is faster than the existing methods. Furthermore, Algorithm 3 is more robust and efficient than the existing methods.

In addition, Algorithm 3 needs less total average running time when the test point p is close to the planar implicit curve, the initial iterative point is close to the test point p, the planar implicit curve has a lower degree and fewer terms, and the required precision of the iteration is lower. Otherwise, Algorithm 3 needs more time.

Remark 5. Algorithm 3 essentially makes an orthogonal projection of test point onto a planar implicit curve . For the multiple orthogonal points situation, the basic idea of the authors’ approach is as follows:

(1) Divide a planar region of planar implicit curve into sub-regions , where .

(2) Randomly select an initial iterative value in each sub-region.

(3) Using Algorithm 3 with each initial iterative value, do the iteration. Let us assume that the corresponding orthogonal projection points are , respectively.

(4) Compute the local minimum distances , where .

(5) Compute the global minimum distance .

To find as many solutions as possible, a larger value of m is taken.
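The five steps above can be sketched as a multi-start loop. The code assumes an m-by-m grid of sub-regions and takes the local projector as a parameter; because Algorithm 3 is not reproduced here, the demo plugs in a toy stand-in for the unit circle, whose two orthogonal points for a test point p are known to be ±p/|p|. The region bounds, the test point and all names are hypothetical.

```python
import math
import random


def multi_start_projection(p, project, bounds, m, rng):
    """Steps (1)-(5): one random start per sub-region, then the global minimum."""
    x_min, x_max, y_min, y_max = bounds
    dx = (x_max - x_min) / m
    dy = (y_max - y_min) / m
    candidates = []
    for i in range(m):                                   # step (1): m x m sub-regions
        for j in range(m):
            start = (x_min + (i + rng.random()) * dx,    # step (2): random start
                     y_min + (j + rng.random()) * dy)
            q = project(p, start)                        # step (3): local projection
            candidates.append((math.dist(p, q), q))      # step (4): local distance
    return min(candidates)                               # step (5): global minimum


def toy_project(p, start):
    """Stand-in for a local projector on the unit circle (demo only).

    Returns whichever of the two orthogonal points +p/|p| and -p/|p|
    lies closer to the starting value.
    """
    r = math.hypot(p[0], p[1])
    near = (p[0] / r, p[1] / r)
    far = (-near[0], -near[1])
    return near if math.dist(start, near) <= math.dist(start, far) else far


p = (0.3, 0.4)  # hypothetical test point inside the unit circle
d_min, q_min = multi_start_projection(
    p, toy_project, (-2.0, 2.0, -2.0, 2.0), 4, random.Random(1))
print(d_min, q_min)
```

Starts that lie on the far side of the circle return the farther orthogonal point, so only the final minimum over all sub-regions picks out the nearest one; a larger m makes it more likely that every local solution is visited.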

Remark 6. In Example 1, for the test points (−0.1,1.0), (0.2,1.0), (0.1,0.1), (0.45,0.5), by using Algorithm 3, the corresponding orthogonal projection points are , , , , respectively (see Figure 14 and Table 16). In addition to the six test examples, many other examples have also been tested. According to these results, if test point p is close to the planar implicit curve , then for different initial iterative values , which are also close to the corresponding orthogonal projection point , Algorithm 3 converges to the corresponding orthogonal projection point ; namely, the test point p and its corresponding orthogonal projection point satisfy the inequality relationships (42). This illustrates that the convergence of Algorithm 3 is independent of the initial value and that Algorithm 3 is efficient. In sum, the algorithm can meet the top two of the ten challenges proposed by Professor Les A. Piegl [41] in terms of robustness and efficiency.

Remark 7. From the authors’ six test examples, Algorithm 3 is robust and efficient. If test point p is very far away from the planar implicit curve and the degree of the planar implicit curve is very high, Algorithm 3 also converges. However, inequality relationships (42) could not be satisfied simultaneously. In addition, if the planar implicit curve contains singular points, Algorithm 3 only works for test point p in a suitable position. Namely, for any initial iterative point , test point p can be orthogonally projected onto the planar implicit curve, but with a larger distance than the minimum distance between the test point and the orthogonal projection point, where is the singular point. For example, for the test points (1.0,0.01), (0.6,0.1), (0.5,−0.15), (0.8,−0.1), Algorithm 3 gives the corresponding orthogonal projection points as , , , , respectively. However, the actual corresponding orthogonal projection point of the four test points is (see Figure 14 and Table 16).

Remark 8. This remark is added to numerically validate the convergence order of two, thanks to the reviewers’ insightful comments, which corrected the previous wrong calculation of the convergence order. The iterative error ratios for the test point p = in Example 1 are presented in Table 17 with initial iterative points in the first row. The formula is used to compute the error ratios for each iteration in the rows other than the first one, which is the same for Tables 18–22. From the six tables, once again combined with the order of convergence formula , it is not difficult to find that the order of convergence for each example is approximately between one and two, which verifies Theorem 1. The convergence formula ρ comes from [42], i.e., .
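A numerical check of this kind can be sketched in a few lines. The formula for ρ is not legible in this extract, so the code below assumes the commonly used computational order of convergence, ρ ≈ ln(e_{n+1}/e_n) / ln(e_n/e_{n−1}), where e_n is the error at iteration n; the error sequence in the demo comes from Newton's method for √2 rather than from the paper's tables, and the function name is illustrative.

```python
import math


def estimated_order(e_prev, e_curr, e_next):
    """Computational order of convergence from three consecutive errors."""
    return math.log(e_next / e_curr) / math.log(e_curr / e_prev)


# Demo on a sequence with known behaviour: Newton's method for sqrt(2).
root = math.sqrt(2.0)
x = 1.5
errors = [abs(x - root)]
for _ in range(3):
    x = 0.5 * (x + 2.0 / x)
    errors.append(abs(x - root))

rho = estimated_order(errors[1], errors[2], errors[3])
print(rho)
```

Applied to a column of a table of iteration errors, the same function gives one estimate per interior row; the estimate is only meaningful while the errors are still well above machine precision.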