1. Introduction

Vision-based road detection is an important and challenging task in advanced driver assistance systems [1]. Vision data provide abundant information about the driving scene [2], support explicit route planning, and allow precise obstacle detection and road profile estimation. Vision-based systems therefore have great potential in complex road detection scenes [3].

In recent years, road detection has been studied extensively as a key part of automated driving systems, and vision-based road detection in particular [4,5,6,7]. Kong et al. [8] applied vanishing point detection to road detection: a Gabor filter was used to obtain the texture orientation of each pixel, and the authors proposed a vanishing point estimation algorithm based on an adaptive soft voting scheme together with a road region segmentation algorithm constrained by the vanishing point. Munajat et al. [9] proposed a road detection algorithm based on RGB histogram filtering and a boundary classifier. The horizontal projection histogram of the RGB image was used to obtain the approximate road area, cubic spline interpolation was used to obtain the road boundary, and the two were combined to obtain the road detection result. Somawirata et al. [10] proposed a road detection algorithm that combined color space and cluster connecting. The color space was used to obtain the initial road area, and wrongly detected road areas were removed by cluster connecting to achieve accurate road detection. Lu et al. [11] proposed a layer-based road detection method, which used a multipopulation genetic algorithm to detect the road vanishing point, fused the vanishing point with a clustering strategy at the superpixel level to select road seed points, used the GrowCut algorithm to obtain the initial road contour, and corrected the contour with a conditional random field model to obtain accurate road detection results. Wang et al. [12] proposed a road detection algorithm based on parallel edge features, together with a fast rough road segmentation method based on edge connection, and combined the parallel edges with road location information to achieve road detection. Yang et al. [13] proposed a road detection algorithm that combined image edge and region features, using a custom difference operator and a region growing method to obtain the edge and region features of the image, respectively. However, the above studies did not fully consider the effects of illumination changes and shadows on road detection results. In addition, they lack road datasets containing shaded roads for effective verification. As a result, the applicability of their detection results is limited.

With the rapid development of deep learning and the maturing of its application fields [14,15], road detection methods based on deep learning have emerged, and they perform well on shaded road surfaces [16,17]. Geng et al. [18] proposed a road detection algorithm combining a convolutional neural network (CNN) and a Markov random field (MRF): simple linear iterative clustering (SLIC) segments the original image into a superpixel map, a CNN is trained to learn road features automatically and obtain road regions, and the MRF optimizes the road detection results. Han et al. [19] proposed semi-supervised and weakly supervised road detection algorithms based on generative adversarial networks (GANs). For semi-supervised road detection, GANs were trained directly on unlabeled data to prevent overfitting. For weakly supervised road detection, the road shape was used as an additional constraint for conditional GANs. Unfortunately, most deep learning-based approaches have long execution times and high hardware requirements, and only a few of them are suitable for real-time applications [20]. Deep learning-based road detection is therefore difficult to deploy in practical applications with limited hardware. This raises the question of how to effectively remove the effects of illumination changes and shadows on road detection and obtain more accurate detection results.

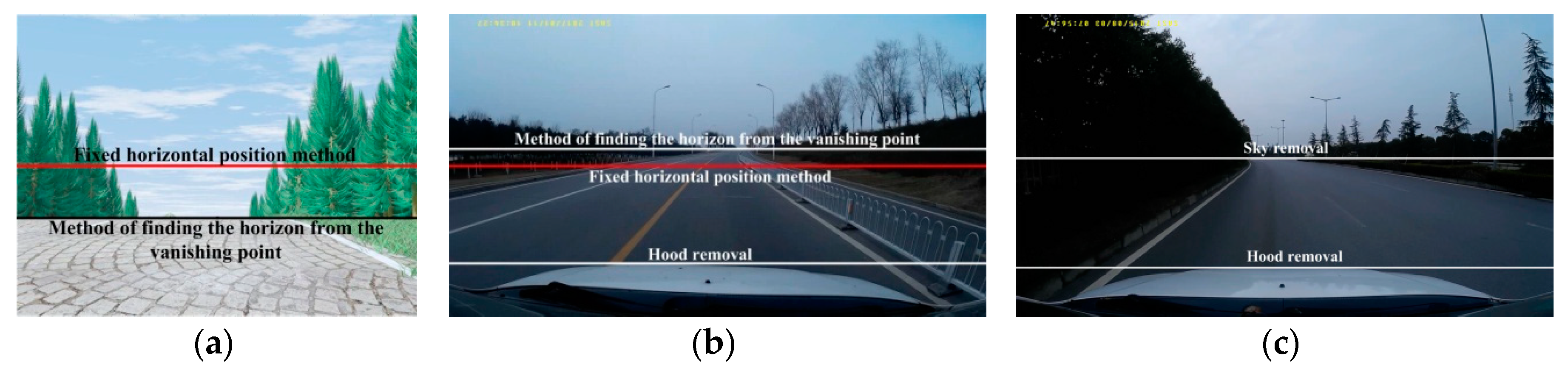

Based on the above, Alvarez et al. [

21] introduced the illumination invariance theory into road detection. Alvarez et al. [

21] and Frémont et al. [

22] systematically analyzed the application of illumination invariance theory to road detection (II-RD). Du et al. [

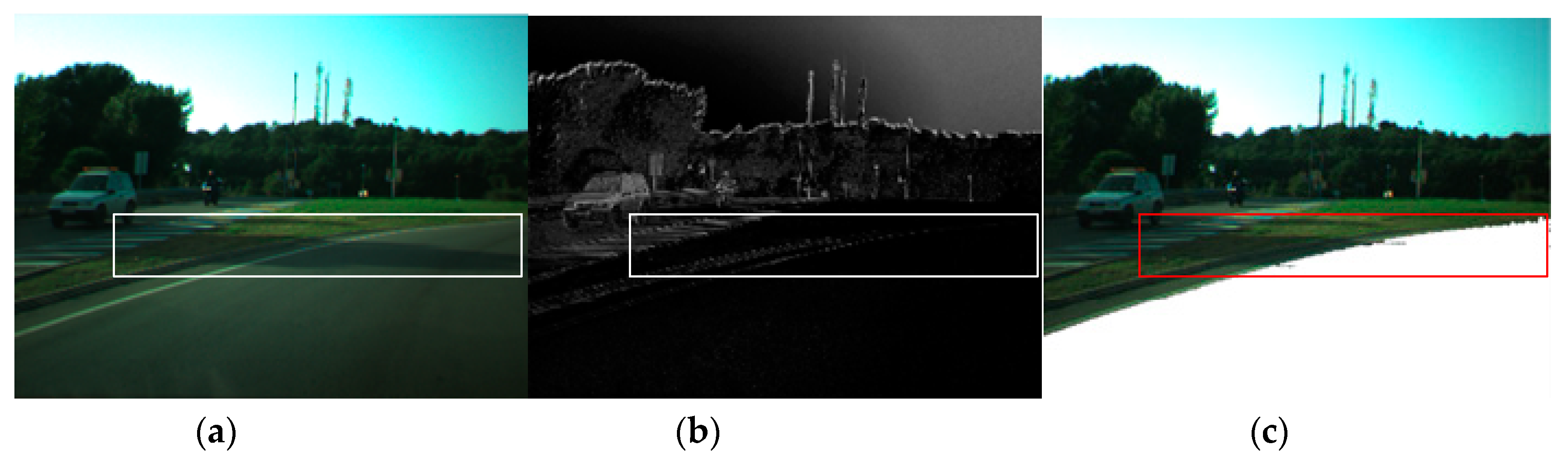

23] proposed a modified illumination invariant road detection method, which combines illumination invariance and random sampling to realize road detection (MII-RD). the above methods not only can effectively eliminate the influence of illumination changes and shadows on road detection results, but was also not limited by a long execution time and expensive hardware requirements. However, the methods of II-RD and MII-RD simply removed the sky area when obtaining the camera axis calibration angle. The remaining area was mixed with a large number of non-road areas, such as vehicles and vegetation, and the vehicle had large bumps and jitters during driving, which caused obvious errors in the obtained angle, and the road detection results were inaccurate. At the same time, II-RD fixedly sampled nine blocks with 10 × 10 pixel sizes in the safe distance area before the vehicle. This fixed position sampling method lacked the randomness of sample selection. When the fixed position was covered with different degrees of traffic markings, manhole covers, spills, cracks, pits, overhauls, ruts, etc. caused by road defects, the sampling of road samples were invalid, and the road detection results had more error. While MII-RD used a random sampling method to collect road sample sets, however, taking the previous frame detection area of the frame as a reference, a frame detection failure was likely to cause continuous detection errors, and the calculation efficiency was low.

Therefore, in this paper, we propose an online road detection method for shaded traffic images that uses a learning-based illumination-independent image (OLII-RD). We manually label road blocks and non-road blocks in an image sequence, and use a multi-feature fusion method to extract the color information matrix and the gray level co-occurrence matrix feature vector of the road and non-road blocks in order to train a road block SVM classifier. A cascaded Hough transform parameterized in a parallel coordinate system is used to acquire a road region of interest, and blocks of 30 × 30 pixels are randomly extracted from this region. We use the SVM classifier to select five road blocks and combine them, convert the RGB space of the combined road block into the geometric mean log chromaticity space, determine the camera axis calibration angle for each frame according to Shannon entropy, and then calculate the illumination-independent image of each frame. Road sample points are extracted by random sampling in the safe distance area in front of the vehicle, and a road confidence interval classifier is established. The final road detection result is obtained with the road confidence interval classifier.

In brief, this paper has three main contributions:

- (1) It proposes a learning-based illumination invariance method, which greatly reduces the interference of shadows in road images and yields more accurate and robust road detection results.

- (2) It proposes an online road detection method, which avoids the partial detection of offline approaches, performs fine-grained detection on every shaded frame of the road images, and yields more effective and robust results.

- (3) Compared with the advanced II-RD and MII-RD methods on common public image sets, the proposed method yields more precise and robust detection results. It achieves similarly effective results on our own images and datasets.

The remainder of this paper is organized as follows. Section 2 describes the theoretical model of the illumination-independent image. Section 3 introduces the online learning-based method for accurately determining the theta angle. Section 4 details the steps of the proposed road detection algorithm. Section 5 describes the experiments, including datasets, evaluation criteria, compared methods, results, and discussion. Section 6 summarizes the paper and outlines directions for future research.

2. Illumination-Independent Image

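The traditional optical sensor model states that the value recorded by an image acquisition device is the integral of the product of the spectral power distribution of the light source, the surface reflectance of the object, and the spectral sensitivity of the sensor.

The spectral power distribution is represented by $E(\lambda)$, the surface reflection function is represented by $S(\lambda)$, and the camera sensitivity function is represented by $Q_k(\lambda)$. Then, the RGB color image has three channel values:

$$R_k = \sigma \int E(\lambda)\, S(\lambda)\, Q_k(\lambda)\, d\lambda, \quad k = R, G, B \tag{1}$$

The $\sigma$ in the formula is the Lambertian shading [24].

Suppose the camera sensitivity function, $Q_k(\lambda)$, is a Dirac $\delta$ function [25], i.e., $Q_k(\lambda) = q_k\, \delta(\lambda - \lambda_k)$. Then, Formula (1) becomes:

$$R_k = \sigma\, E(\lambda_k)\, S(\lambda_k)\, q_k \tag{2}$$

Suppose the illumination approximates the Planckian rule [24], i.e.:

$$E(\lambda, T) = I\, c_1\, \lambda^{-5}\, e^{-\frac{c_2}{T\lambda}} \tag{3}$$

where $c_1$ and $c_2$ are constants, $T$ is the illumination color temperature, and $I$ is the total illumination intensity.

The three RGB channel values, $R_k$ ($k = R, G, B$ or $k = 1, 2, 3$), can then be obtained by substituting Equation (3) into Equation (2):

$$R_k = \sigma\, I\, c_1\, \lambda_k^{-5}\, e^{-\frac{c_2}{T\lambda_k}}\, S(\lambda_k)\, q_k \tag{4}$$

Let $C_{ref}$ be the geometric mean of the three channels, $R_k$, of the RGB:

$$C_{ref} = \sqrt[3]{R_1 R_2 R_3} \tag{5}$$

Then, the chromaticity is:

$$c_k = \frac{R_k}{C_{ref}} \tag{6}$$

After taking the logarithm on both sides:

$$\rho_k = \log c_k = \log\frac{s_k}{s_M} + \frac{e_k - e_M}{T} \tag{7}$$

where $s_k = c_1 \lambda_k^{-5} S(\lambda_k) q_k$, $s_M = \sqrt[3]{s_1 s_2 s_3}$, $e_k = -c_2/\lambda_k$, and $e_M = (e_1 + e_2 + e_3)/3$. The shading, $\sigma$, and the intensity, $I$, cancel in the ratio of Equation (6).

In the geometric mean log chromaticity space of Equation (7), $\rho = (\rho_1, \rho_2, \rho_3)^T$ and $u = \frac{1}{\sqrt{3}}(1, 1, 1)^T$ are orthogonal (i.e., $\rho \cdot u = 0$), so the three-dimensional $\rho$ space can be converted into a two-dimensional $\chi$ space using a coordinate system transformation:

$$\chi = U\rho \tag{8}$$

where $\chi$ is a two-dimensional column vector, $U = [v_1, v_2]^T$, $v_1 = \left(\frac{1}{\sqrt{2}}, -\frac{1}{\sqrt{2}}, 0\right)^T$, $v_2 = \left(\frac{1}{\sqrt{6}}, \frac{1}{\sqrt{6}}, -\frac{2}{\sqrt{6}}\right)^T$.

Thus, the illumination-independent image can be obtained from Equation (9):

$$I_\theta = \chi_1 \cos\theta + \chi_2 \sin\theta \tag{9}$$

where $\theta$ is the camera axis calibration angle.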
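The chain from RGB values to the illumination-independent image can be sketched in a few lines. The following is a minimal, standard-library sketch rather than the authors' implementation: the function names, the `eps` guard against `log(0)`, and the particular orthonormal basis `U` are assumptions made for illustration (any orthonormal pair spanning the plane orthogonal to (1, 1, 1) would serve).

```python
import math

# Assumed orthonormal basis of the plane orthogonal to u = (1, 1, 1) / sqrt(3).
U = (
    (1 / math.sqrt(2), -1 / math.sqrt(2), 0.0),
    (1 / math.sqrt(6), 1 / math.sqrt(6), -2 / math.sqrt(6)),
)


def log_chromaticity(r, g, b, eps=1e-6):
    """Map one RGB pixel to 2-D geometric-mean log-chromaticity coordinates."""
    # Clamp to eps so that the logarithm is defined for black pixels.
    r, g, b = (max(float(c), eps) for c in (r, g, b))
    geo_mean = (r * g * b) ** (1.0 / 3.0)
    # Log chromaticities; their sum is zero, so they lie in a plane.
    rho = [math.log(c / geo_mean) for c in (r, g, b)]
    # Project the 3-D vector rho onto the 2-D plane spanned by the rows of U.
    return tuple(sum(row[k] * rho[k] for k in range(3)) for row in U)


def invariant_value(r, g, b, theta_deg):
    """Project the 2-D log-chromaticity point onto the direction theta."""
    chi1, chi2 = log_chromaticity(r, g, b)
    t = math.radians(theta_deg)
    return chi1 * math.cos(t) + chi2 * math.sin(t)


def invariant_image(rgb_image, theta_deg):
    """Apply invariant_value to every pixel of a nested list of RGB tuples."""
    return [[invariant_value(*px, theta_deg) for px in row] for row in rgb_image]
```

Because the chromaticity divides each channel by the geometric mean, scaling all three channels by the same factor (a pure intensity change) leaves the output unchanged, for example `invariant_image([[(40, 80, 120), (20, 40, 60)]], 45)` gives two equal values.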

3. Learning-Based Online Calibration

Ideally, the

direction is an inherent parameter of the camera ranging from 1 to 180. It is the presented result on the basis of these three assumptions: a Planckian light, Lambertian surface, and narrow-band sensor [

24]. However, there are many limits while using the assumptions because most image sensors are not compatible with a narrow band. Additionally, the

value of each image frame is unstable because the camera in the car might be shaking while the car is moving on different road conditions. Therefore, it is necessary to confirm the value of

of each image frame online to make sure that each frame of the image is as close as possible to its optimal state.

In this paper, a learning-based method is proposed to determine the

of each frame image online. The algorithm flow chart is shown in

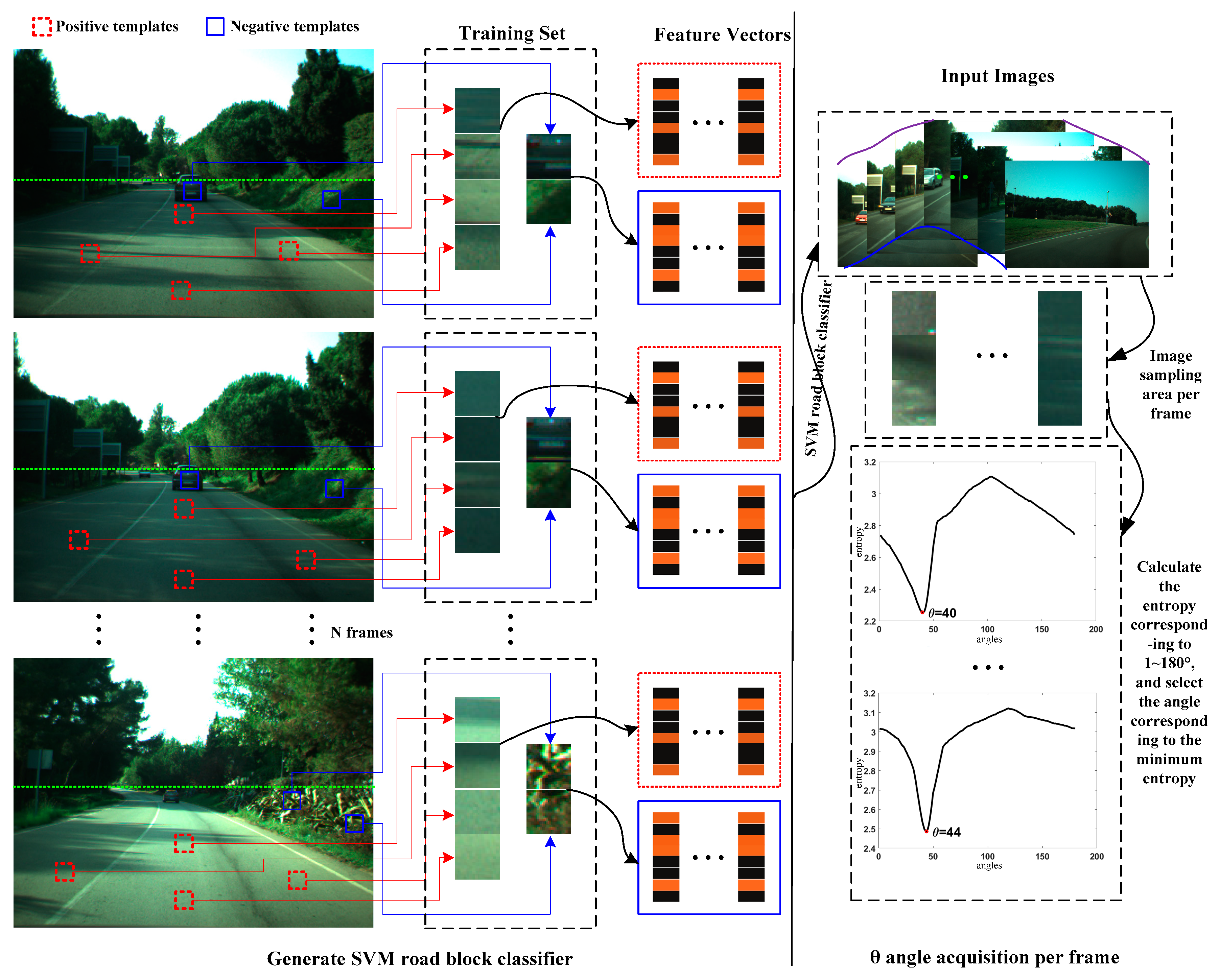

Figure 1.

3.1. Road Block Feature Extraction with Multi-Feature Fusion

The training image sequence, N, should be large enough to cover different illumination environments, weather conditions, and traffic scenes. We select several blocks from each image frame to obtain road blocks and non-road blocks, which serve as the positive and negative samples, respectively. The multi-feature fusion method proposed in this paper distinguishes road blocks from non-road blocks by using the feature vectors of the color information matrix and the gray level co-occurrence matrix.

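Color information is concentrated mainly in the low-order moments. The color distribution of an image can therefore be represented effectively by extracting color moments (the color information matrix), including the color distance and the first-, second-, and third-order moments.

For the color distance, $C_i$ and $C_j$ represent pixel $i$ and pixel $j$, and $R$, $G$, and $B$ correspond to the three channels.

First moment:

$$\mu_i = \frac{1}{N}\sum_{j=1}^{N} p_{ij}$$

Second moment:

$$\sigma_i = \left(\frac{1}{N}\sum_{j=1}^{N}\left(p_{ij} - \mu_i\right)^2\right)^{1/2}$$

Third moment:

$$s_i = \left(\frac{1}{N}\sum_{j=1}^{N}\left(p_{ij} - \mu_i\right)^3\right)^{1/3}$$

where $p_{ij}$ is the probability of occurrence of a pixel whose gradation is $j$ in the $i$th color channel component of the color image, and $N$ represents the number of pixels.

The color information matrix features of the image are formed by collecting these quantities over the three color channels.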
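As an illustration of the color features described above, the sketch below computes the first three moments of each RGB channel for a block of pixels. It assumes the common formulation in which the moments are taken over the per-pixel channel values; the function names are hypothetical, and the Euclidean form of `color_distance` is an assumption, since the exact distance used in the paper is not recoverable from the text.

```python
import math


def color_distance(ci, cj):
    """Euclidean distance between two RGB pixels (assumed form)."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(ci, cj)))


def color_moments(pixels):
    """Return [mean, second, third] moments for R, G, and B (9 values).

    pixels: list of (R, G, B) tuples taken from one image block.
    """
    n = len(pixels)
    features = []
    for channel in range(3):
        values = [p[channel] for p in pixels]
        mean = sum(values) / n
        # Second moment: square root of the mean squared deviation.
        second = (sum((v - mean) ** 2 for v in values) / n) ** 0.5
        # Third moment: signed cube root of the mean cubed deviation.
        third_raw = sum((v - mean) ** 3 for v in values) / n
        third = math.copysign(abs(third_raw) ** (1.0 / 3.0), third_raw)
        features.extend([mean, second, third])
    return features
```

The signed cube root keeps the third moment real when the distribution is skewed toward low values, which a plain fractional power of a negative number would not.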

Besides color, texture is also an important part of characterizing objects. The gray level co-occurrence matrix (GLCM) is used to obtain feature descriptors, such as the contrast, energy, entropy, and homogeneity, to describe object texture [26].

- (1) Contrast: A measure of the amount of local variation in the image, which reflects the sharpness of the image and the depth of the texture grooves. The deeper the texture grooves, the larger the contrast and the clearer the image; conversely, the smaller the contrast, the shallower the grooves and the more blurred the image:

$$Con = \sum_i \sum_j (i - j)^2\, p(i, j)$$

where $i$ and $j$ represent horizontal and vertical coordinates, and $p(i, j)$ refers to the normalized gray level co-occurrence matrix.

- (2) Energy: A measure of the stability of the grayscale changes in the image texture, which reflects the uniformity of the image distribution and the coarseness of the texture. A high energy value indicates that the current texture changes in a more stable way:

$$Asm = \sum_i \sum_j p(i, j)^2$$

- (3) Entropy: A measure of the randomness of the information contained in an image. It shows the complexity of the gray level distribution of the image. The larger the entropy value, the more complicated the image:

$$Ent = -\sum_i \sum_j p(i, j) \log p(i, j)$$

- (4) Homogeneity: A measure of the similarity of the gray levels of the image in the row or column direction, reflecting the local gray correlation. The larger the value, the stronger the correlation:

$$Cor = \frac{\sum_i \sum_j (i - \mu_x)(j - \mu_y)\, p(i, j)}{\sigma_x \sigma_y}$$

where $\mu_x$ and $\mu_y$ represent the means of the horizontal and vertical coordinates, respectively, and $\sigma_x$ and $\sigma_y$ represent the corresponding standard deviations.

The gray level co-occurrence matrix features of the image are formed by collecting these four descriptors into one feature vector.
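The four texture descriptors can be computed directly from a normalized co-occurrence matrix. The sketch below is illustrative only: the function names, the single pixel offset `(dx, dy)`, and the use of base-2 logarithms are assumptions, and the fourth descriptor is implemented in the correlation form that the description (means and spreads of the row and column coordinates) suggests.

```python
import math


def glcm(gray, levels, dx=1, dy=0):
    """Normalized gray level co-occurrence matrix for the offset (dx, dy).

    gray: 2-D nested list of integer gray levels in range(levels).
    """
    counts = [[0] * levels for _ in range(levels)]
    height, width = len(gray), len(gray[0])
    total = 0
    for y in range(height):
        for x in range(width):
            y2, x2 = y + dy, x + dx
            if 0 <= y2 < height and 0 <= x2 < width:
                counts[gray[y][x]][gray[y2][x2]] += 1
                total += 1
    if total == 0:
        raise ValueError("image too small for the requested offset")
    return [[c / total for c in row] for row in counts]


def glcm_features(p):
    """Contrast, energy, entropy, and correlation of a normalized GLCM."""
    n = len(p)
    cells = [(i, j, p[i][j]) for i in range(n) for j in range(n)]
    contrast = sum((i - j) ** 2 * v for i, j, v in cells)
    energy = sum(v * v for _, _, v in cells)
    entropy = -sum(v * math.log2(v) for _, _, v in cells if v > 0)
    mu_i = sum(i * v for i, _, v in cells)
    mu_j = sum(j * v for _, j, v in cells)
    sd_i = math.sqrt(sum((i - mu_i) ** 2 * v for i, _, v in cells))
    sd_j = math.sqrt(sum((j - mu_j) ** 2 * v for _, j, v in cells))
    if sd_i > 0 and sd_j > 0:
        covariance = sum((i - mu_i) * (j - mu_j) * v for i, j, v in cells)
        correlation = covariance / (sd_i * sd_j)
    else:
        correlation = 0.0  # undefined for a constant block; reported as 0 here
    return {"contrast": contrast, "energy": energy,
            "entropy": entropy, "correlation": correlation}
```

For a block made of two uniform horizontal stripes, such as `[[0, 0], [1, 1]]`, horizontal neighbors always share a gray level, so the contrast is 0 and the correlation is 1.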

3.2. SVM Road Block Classifier

SVM was proposed by Cortes and Vapnik in 1995. It has particular advantages for small-sample, nonlinear, and high-dimensional pattern recognition problems, generalizes well, and compares favorably with many other machine learning methods [27]. SVM uses a separating hyperplane, which is linear in the feature space induced by a kernel function, to solve nonlinear classification problems. The optimization problem for obtaining the optimal classification surface is as follows:

$$\max_{\alpha} \sum_{i=1}^{n} \alpha_i - \frac{1}{2}\sum_{i=1}^{n}\sum_{j=1}^{n} \alpha_i \alpha_j y_i y_j K(x_i, x_j), \quad \text{s.t.} \quad \sum_{i=1}^{n} \alpha_i y_i = 0, \quad 0 \le \alpha_i \le c$$

In the formula, $x$ is the sample, $n$ is the number of samples, $y$ is the category label, $\alpha$ is the Lagrange coefficient of the optimization, $c$ is the penalty parameter, and $K$ is the kernel function, which satisfies the Mercer condition.

The SVM discriminant function is:

$$f(x) = \mathrm{sgn}\left(\sum_{i=1}^{n} \alpha_i y_i K(x_i, x) + b\right)$$

When SVM is used for classification, the key lies in the choice of the kernel function, $K$. The optimization of the kernel parameters is the main factor affecting the classification performance [28]. The form of the kernel function and the values of its parameters determine the type and complexity of the classifier. At present, the most commonly used kernel functions are the linear, polynomial, radial basis function (RBF), and sigmoid kernels. We select the RBF kernel based on experimental data and experience, and the expression form is:

$$f(x) = \mathrm{sgn}\left(\sum_{i=1}^{n_{sv}} w_i \exp\left(-\gamma \left\| x_i - x \right\|^2\right) + b\right)$$

In the formula, $b$ is the bias term, $\gamma$ is the parameter, g, of the kernel function, $n_{sv}$ is the number of support vectors, $w_i$ is the coefficient of the support vector, $x_i$ is the support vector, and $x$ is the sample to be predicted.

The experimental data for the SVM form a matrix whose rows are feature vectors. In the experiment, the matrix is normalized first, and then Libsvm [29] is used to perform the classification. In this paper, we use the method of Section 3.1 to extract the color information matrix and the gray level co-occurrence matrix of the road blocks and non-road blocks as the feature vectors for SVM training, and generate the SVM road block classifier.
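To make the discriminant function concrete, the sketch below evaluates an RBF-kernel decision function from a given set of support vectors. It does not call Libsvm and it does not train anything: the support vectors, coefficients, bias, and `gamma` are assumed to come from a previously trained model, the function names are hypothetical, and min-max scaling is only one plausible reading of "normalized".

```python
import math


def min_max_normalize(rows):
    """Scale each feature column to [0, 1] (assumed normalization scheme)."""
    columns = list(zip(*rows))
    lows = [min(c) for c in columns]
    highs = [max(c) for c in columns]
    return [
        [(v - lo) / (hi - lo) if hi > lo else 0.0
         for v, lo, hi in zip(row, lows, highs)]
        for row in rows
    ]


def rbf_kernel(a, b, gamma):
    """K(a, b) = exp(-gamma * ||a - b||^2)."""
    return math.exp(-gamma * sum((x - y) ** 2 for x, y in zip(a, b)))


def svm_decision(x, support_vectors, coefficients, bias, gamma):
    """Weighted kernel sum plus bias; coefficients[i] stands for alpha_i * y_i."""
    return sum(
        w * rbf_kernel(sv, x, gamma)
        for sv, w in zip(support_vectors, coefficients)
    ) + bias


def classify(x, support_vectors, coefficients, bias, gamma):
    """Return +1 (road block) or -1 (non-road block)."""
    value = svm_decision(x, support_vectors, coefficients, bias, gamma)
    return 1 if value >= 0 else -1
```

With one positive support vector at the origin and one negative support vector at (1, 1), a query near the origin is labeled +1 and a query near (1, 1) is labeled -1, because the kernel weight decays with squared distance.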

3.3. Minimum Entropy Solution

In order to obtain the $\theta$ of the road block, the Shannon entropy [24] is introduced in this paper:

$$\eta(\theta) = -\sum_i p_i(\theta) \log p_i(\theta)$$

where $\eta$ is the entropy value and $p_i$ is the probability that a gray value in the gray histogram of $I_\theta$ falls within the $i$th bin. The bin width of the gray histogram is determined by Scott's rule [24]:

$$bin\_width = 3.5\, \mathrm{std}(I_\theta)\, N^{-1/3}$$

where $N$ is the size of the image.

According to the definition of entropy, the more bins the gray values are spread over, the smaller each $p_i$ is and the larger the entropy value is. Therefore, the angle corresponding to the minimum entropy is taken as the angle, $\theta$, of the frame image.
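The minimum entropy search can be sketched as a scan over candidate angles. The code below is a simplified illustration under stated assumptions: it takes already computed two-dimensional log-chromaticity points as input, scans integer angles from 1° to 180°, uses the standard form of Scott's rule (3.5 times the standard deviation times N to the power of -1/3), and uses base-2 logarithms. The function names are hypothetical.

```python
import math


def scott_bin_width(values):
    """Histogram bin width by Scott's rule: 3.5 * std * N^(-1/3)."""
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    return 3.5 * std * n ** (-1.0 / 3.0)


def shannon_entropy(values):
    """Entropy (in bits) of the histogram of values with Scott bin width."""
    width = scott_bin_width(values)
    if width == 0:
        return 0.0  # all values identical: a single occupied bin
    low = min(values)
    counts = {}
    for v in values:
        index = int((v - low) / width)
        counts[index] = counts.get(index, 0) + 1
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def min_entropy_angle(chi_points, angles=range(1, 181)):
    """Return (angle in degrees, entropy) of the lowest-entropy projection."""
    best_angle, best_entropy = None, float("inf")
    for angle in angles:
        t = math.radians(angle)
        projected = [x * math.cos(t) + y * math.sin(t) for x, y in chi_points]
        entropy = shannon_entropy(projected)
        if entropy < best_entropy:
            best_angle, best_entropy = angle, entropy
    return best_angle, best_entropy
```

As a sanity check, two parallel point sets that stretch along the horizontal axis (two surfaces under varying illumination) collapse into two tight clusters when projected close to 90°, so the scan returns an angle near 90° with an entropy of 1 bit. Several neighboring angles can tie, in which case the first one scanned is returned.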

5. Experimental Results

In this section, we use several experiments to evaluate the performance of the proposed road detection system. We test several video sequences captured from moving vehicles on city streets and suburban roads under different illumination conditions, weather conditions, and traffic scenes. To further evaluate the performance of our system, we also compare our method with two state-of-the-art methods, II-RD [21] and MII-RD [23]. We chose these two methods for the following reasons. II-RD [21] introduced illumination invariance theory into road detection and systematically analyzed its application; as the first systematic analysis of this kind, it is an important baseline. MII-RD [23] proposed a modified illumination invariant road detection method and is among the most recent results, so it represents the current state of the approach. Our method is also based on illumination invariance theory, so comparing it with II-RD [21] and MII-RD [23], which are the methods most closely related to the theory and application of illumination invariance, best reflects the advantages of the proposed method.

5.1. The Experiment of the CVC Public Dataset

The experimental image set uses the public dataset, CVC [21], which contains a large number of road video image sequences. The sequences were acquired by an onboard camera based on the Sony ICX084 sensor. The camera is equipped with a microlens with a focal length of 6 mm, and the frame acquisition rate is 15 fps.

The experiment uses three different image sequences from this dataset. The first sequence, SS, contains 350 frames of road images from different scenes and is used for road block feature extraction and SVM road block classifier training. The second, the dry road sequence (DS), includes 450 images taken on sunny days with pronounced shadows. The third, the rainy road sequence (RS), consists of 250 images of wet roads after rain. The resolution of the three image sequences is 640 × 480, and each pixel is 8 bits. The DS and RS sequences show dry and wet road pavements of various forms in complicated scenes, with vehicles driving on the road.

We compared our method with II-RD [21] and MII-RD [23] on this dataset.

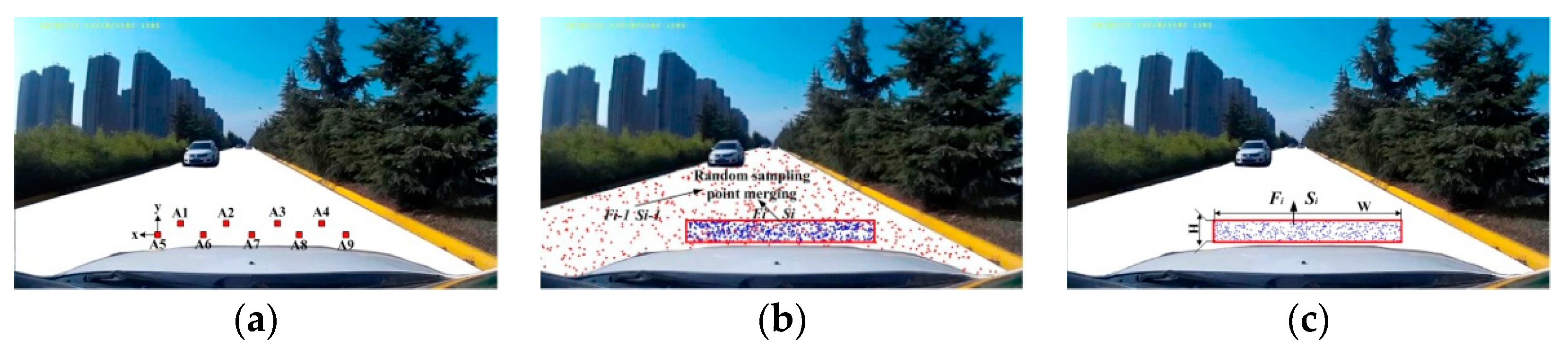

5.1.1. Sampling Method Experiment

For II-RD, nine road blocks of fixed position and fixed size (10 × 10) are collected as sampling points in the safe distance area in front of the vehicle. For the current frame, $F_i$, MII-RD defines an area, $S_i$, of 250 × 30 pixels, randomly selects 500 sampling points from $S_i$, and randomly selects a further 400 sampling points from the road detection result, $S_{i-1}$, of the previous frame, $F_{i-1}$, for a total of 900 sampling points. In this paper, by contrast, we directly select all 900 sampling points from $S_i$.
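The sampling step of the proposed method can be illustrated with a short sketch that draws 900 distinct pixel positions from a 250 × 30 area. The function name, the top-left corner arguments, the `seed` parameter, and the choice to sample without replacement are assumptions made for illustration; the text only states the area size and the number of points.

```python
import random


def sample_points(x0, y0, width=250, height=30, count=900, seed=None):
    """Draw `count` distinct pixel positions from the area with top-left (x0, y0)."""
    rng = random.Random(seed)
    cells = [(x0 + dx, y0 + dy) for dy in range(height) for dx in range(width)]
    # random.sample draws without replacement, so no position repeats.
    return rng.sample(cells, count)
```

The area holds 7500 pixels, so 900 samples cover 12% of it, and every position has the same chance of being selected, unlike the nine fixed 10 × 10 blocks of II-RD.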

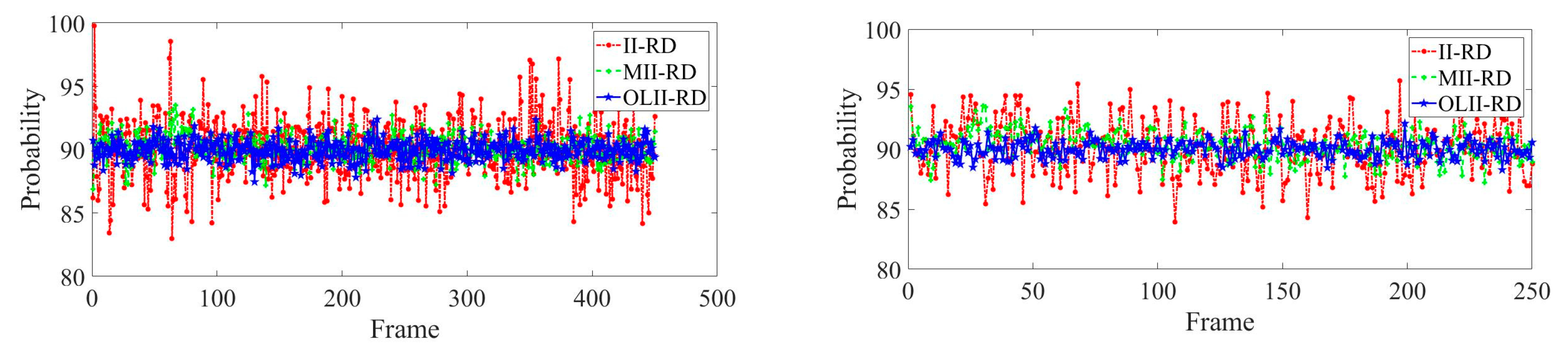

The data collected by II-RD, MII-RD, and OLII-RD are each fitted with a normal distribution, as shown in Figure 5. The sampling error of II-RD, MII-RD, and OLII-RD is shown in Table 1.

It can be seen from Figure 5 and Table 1 that the mean value of our sampling method is closer to 90%, and that its sampling standard deviation is significantly lower than those of II-RD and MII-RD. For II-RD, sampling at fixed positions in the safe distance area in front of the vehicle lacks randomness in sample selection, so it easily samples traffic markings, manhole covers, spills, and road defects such as cracks, pits, and ruts. This invalidates the road samples and leads to a large error in the road detection result. MII-RD samples jointly from the previous and current frames, so its sampling distribution is wide and the probability of collecting non-road areas increases. If detection fails in one frame, the error easily propagates. The sampling method proposed in this paper avoids the limitations of fixed-position sampling, greatly reduces non-road interference, and gives better and more robust results.

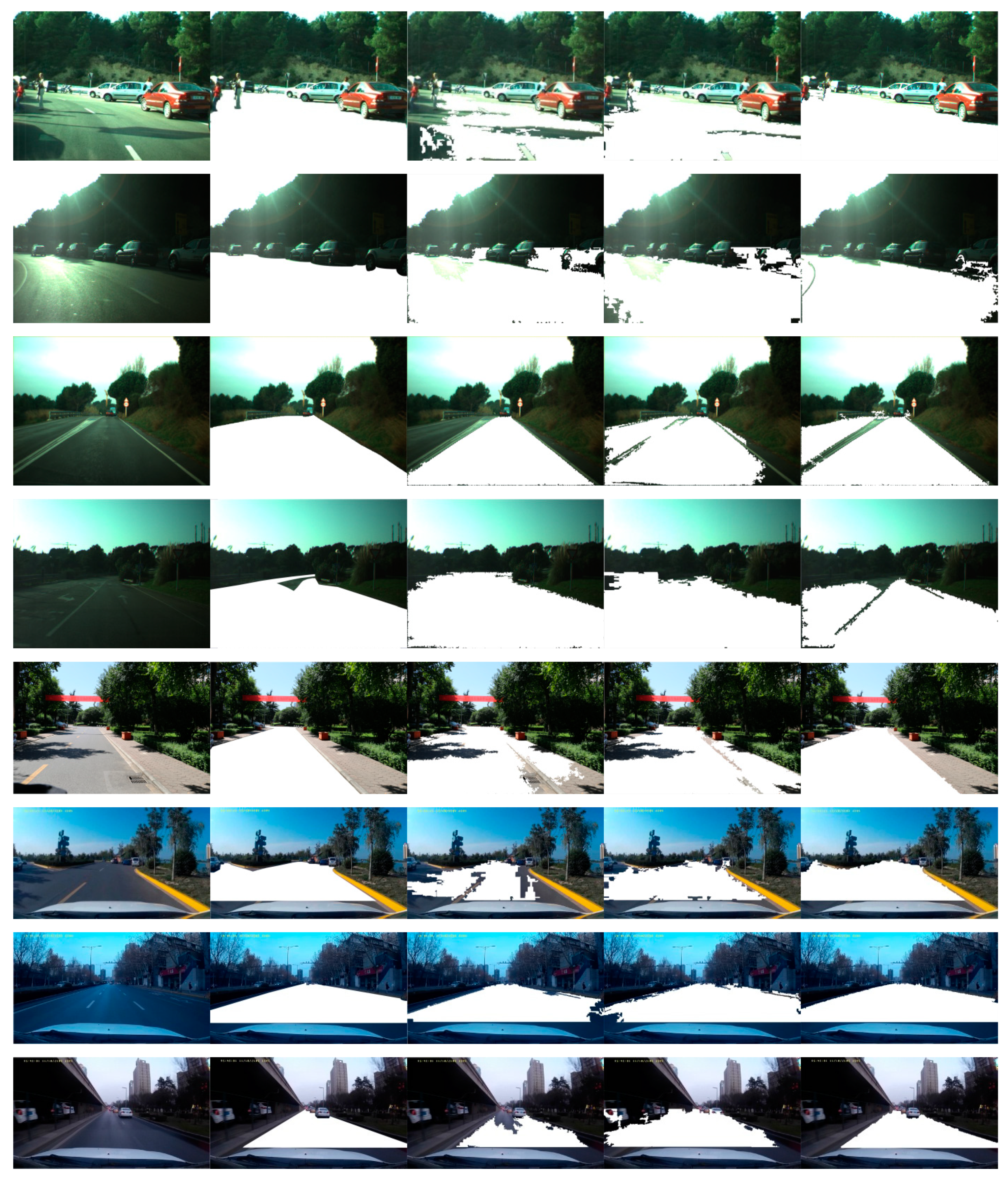

5.1.2. Qualitative Road Detection Result

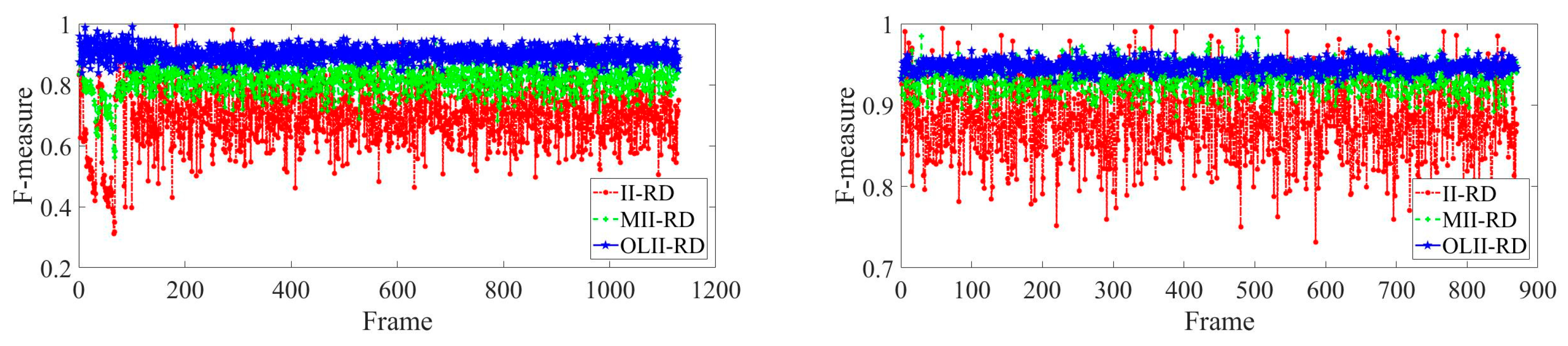

We systematically test the CVC public dataset. Figure 6 shows some typical detection results for the DS and RS video sequences. Rows 1 to 3 show detection results with many vehicles on the road; rows 4 to 6 show results under obvious sunlight and road shadows; rows 7 to 9 show results for irregular road lines and complex scenes. Each row shows the original image, the ground truth map, and the II-RD, MII-RD, and OLII-RD detection results.

5.1.3. Result Evaluation Index

This paper uses the precision, recall, and comprehensive evaluation index, F, to quantitatively evaluate the road detection results [31].

The definitions are as follows: ① precision, P; ② recall, R; ③ comprehensive evaluation index, F. Let $G$ be the real area of the road and $D$ be the detected road surface. Then $P = |G \cap D| / |D|$ and $R = |G \cap D| / |G|$. P and R are complementary, and F is the weighted harmonic mean of P and R, so it reconciles and comprehensively reflects both the precision, P, and the recall, R. The closer F is to 1, the better the road detection. Because we use random sampling, the P, R, and F values fluctuate slightly between runs, and this fluctuation is quantified by its standard deviation. The comparison of the performance indexes of the three methods is shown in Table 2. The curve of the comprehensive evaluation index, F, for each frame of the three methods is shown in Figure 7.
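The three indexes can be computed from a ground truth mask and a detection mask as sketched below. This is an illustrative sketch: the function name is hypothetical, and the weight `beta` of the harmonic mean is an assumption, because the weighting used in the paper is not recoverable from the text. With the default `beta=1.0`, F is the ordinary harmonic mean of P and R.

```python
def evaluate(ground_truth, detected, beta=1.0):
    """Return (precision, recall, F) for two binary masks of equal size.

    ground_truth, detected: 2-D nested lists of 0/1 (1 marks a road pixel).
    """
    tp = fp = fn = 0
    for gt_row, det_row in zip(ground_truth, detected):
        for g, d in zip(gt_row, det_row):
            if d and g:
                tp += 1  # road pixel detected as road
            elif d and not g:
                fp += 1  # non-road pixel detected as road
            elif g and not d:
                fn += 1  # road pixel that was missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denominator = beta ** 2 * precision + recall
    f_value = (1 + beta ** 2) * precision * recall / denominator if denominator else 0.0
    return precision, recall, f_value
```

A detection that misses large parts of the road keeps a high precision but a low recall, while a detection that spills onto the roadside does the opposite, which is why a single combined index is convenient for comparing methods frame by frame.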

5.2. The Experiment of the Self-Built Video Sequence

To further illustrate the robustness of our detection system, we also test it on road datasets that we recorded ourselves from our own car, and again compare our method with II-RD and MII-RD on these self-built datasets.

The test video sequences form a dataset of real roads in China recorded with a driving recorder, named visual road recognition (VRR). VRR was shot with a SAST F620 driving recorder with a 170° wide-angle lens and a frame rate of 30 frames per second. The recorder is mounted on top of the car, about 1.5 m above the road surface. The original resolution is 1920 × 1080. For the experiments, one frame is selected from every five frames of the video, and each selected frame is downsampled to a resolution of 640 × 360.

Two of the video sequences, Sequence1 and Sequence2 (hereinafter referred to as S1 and S2), are selected for the test experiments. S1 was collected on the Liuxue section of the Weihe River in Xi’an, Shaanxi Province. The video lasts 3 min 9 s and contains 1130 effective frames. The road is a two-way, two-lane road; the scene is intensely illuminated, the shadow contrast is obvious, and vehicles pass frequently. S2 was collected on the Mingguang section of Weiyang District, Xi’an City, Shaanxi Province. The video lasts 2 min 25 s and contains 870 effective frames. The scene is dark, and the road surface resembles its surroundings.
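The stated frame counts can be checked against the recording parameters with a small calculation. The helper names below are hypothetical; the only inputs are the figures given in the text (30 frames per second, one frame kept out of every five, 1920 × 1080 reduced to 640 × 360).

```python
def sampled_frame_count(duration_s, fps=30, step=5):
    """Number of frames kept when every `step`-th frame of the video is used."""
    return (duration_s * fps) // step


def downsample_factors(source, target):
    """Horizontal and vertical reduction factors between two resolutions."""
    return source[0] / target[0], source[1] / target[1]
```

For S1, 3 min 9 s is 189 s, which gives `sampled_frame_count(189)` = 1134, close to the 1130 effective frames reported. For S2, 2 min 25 s is 145 s, which gives exactly 870. The downsampling reduces both axes by the same factor of 3, so the aspect ratio is preserved.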

5.2.1. Qualitative Road Detection Result

We systematically test the self-built VRR dataset. Figure 8 shows some typical detection results for the two video sequences, S1 and S2, where each row compares the results of the three detection methods on the same image frame.

5.2.2. Result Evaluation Index

The road detection results are quantitatively evaluated using the same evaluation indicators as in Section 5.1.3, and the precision, recall, and F index of the detection results are calculated. The comparison of the performance of the three methods is shown in Table 3. The F index curve of the three methods for each image frame is shown in Figure 9.

In addition to the two video sequences (S1 and S2) tested above, the remaining five video sequences were also tested. Video sequences 3 and 4 were captured in a suburban environment, video sequence 5 on a school road, and video sequences 6 and 7 in an urban road environment. We save the detection results for each frame and, after the entire process, calculate the precision, recall, and comprehensive evaluation index, F. Table 4 lists the details of the datasets and detection results. These results indicate the overall accuracy and stability of our system.

5.3. The Experiment of the Strong Sunlight and Low Contrast Condition

Illumination change is a challenge in both the illumination-independent image acquisition stage and the road detection stage. To improve the robustness of road block selection, more road samples under different lighting conditions are added to the training set described in Section 3.1. We select 600 frames from the datasets, most of which were taken under strong sunlight or at very low contrast. Some typical results are shown in Figure 10, where each row shows the results of the three methods on the same scene. The frames in scenes 1 and 2 show severe reflections under strong sunlight. The frames in scenes 3 and 4 were taken when the sunlight was very low in the evening. The frames in scenes 5 and 6 are road scenes taken under direct sunlight at noon. The frames in scenes 7 and 8 are road scenes taken at very low contrast in the evening.

5.4. Detection Results Analysis and Discussion

Systematic detection experiments were carried out for II-RD, MII-RD, and OLII-RD on the CVC dataset and the self-built video sequences. Qualitatively, the road detection results of II-RD and MII-RD have large errors, whereas the results of OLII-RD are more accurate and robust. The results of II-RD are usually incomplete, with large areas of road pavement missing, which lowers its recall. The results of MII-RD tend to include non-road areas on both sides of the road, which causes large detection errors. Quantitatively, OLII-RD is better than II-RD and MII-RD in precision, P, recall, R, comprehensive evaluation index, F, and stability; its comprehensive evaluation index, F, exceeds 90%. Furthermore, under strong sunlight and low contrast conditions, our method still obtains better and more stable results.

Therefore, the proposed method achieves good road detection results in different lighting environments, weather conditions, and traffic scenarios, and it also transfers well between datasets.

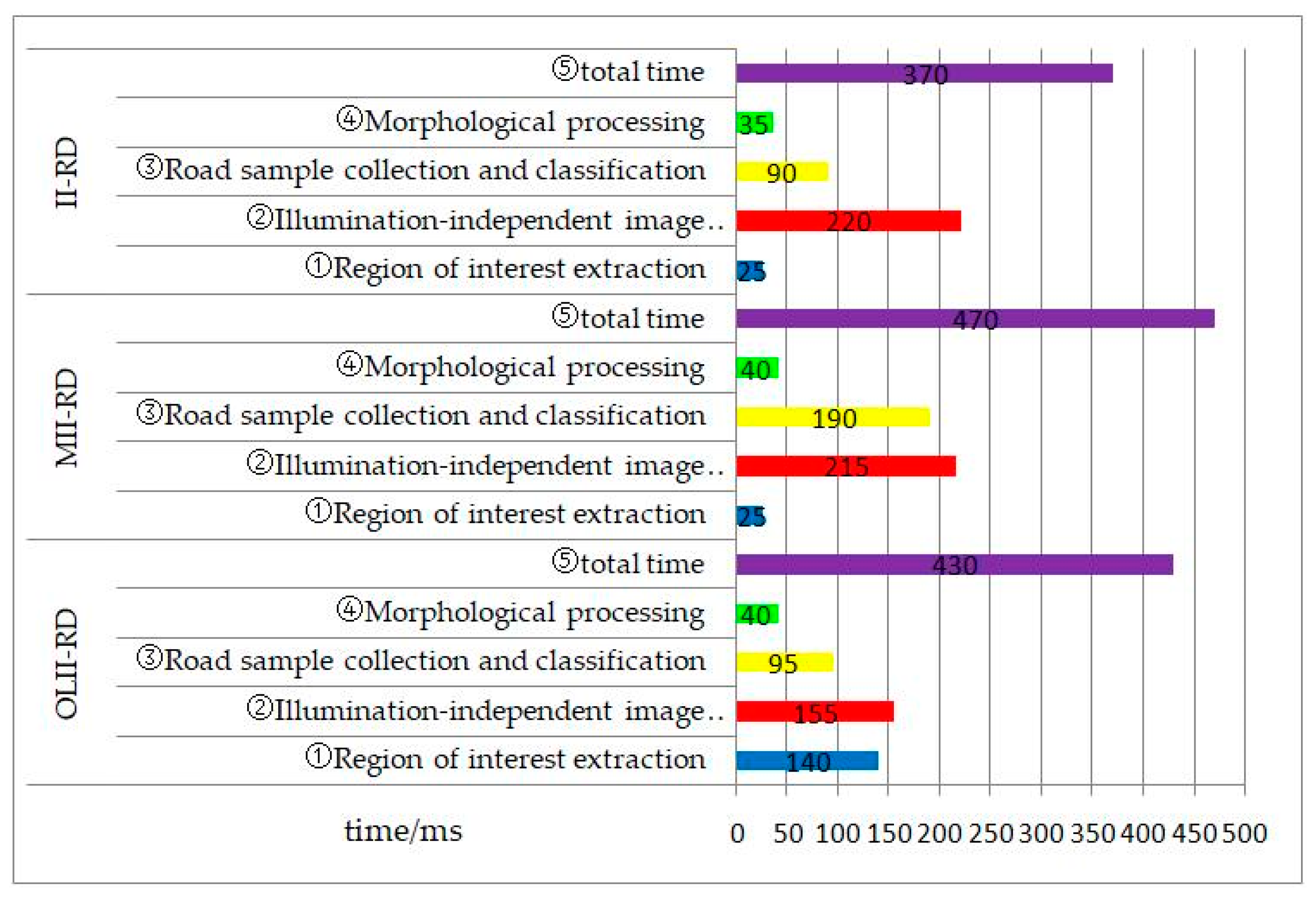

5.5. Time Validity Analysis

In this section, the computation time of II-RD, MII-RD, and OLII-RD is measured at each stage. The experimental platform is a PC with the Windows 10 64-bit operating system, an Intel Core i5-4570 CPU at 3.2 GHz, and 8 GB of memory, and the experimental environment is MATLAB 2017b. For the time validity analysis, 100 frames are randomly extracted from the public dataset and the self-built dataset. Because the two datasets have different resolutions, we crop and resample the image frames to a uniform 640 × 480.

The road detection method in this paper can be summarized as two processes: offline training and online detection. The offline training process consists mainly of road block feature extraction and the establishment of the SVM road block classifier; its time consumption is not included in the comparison. The online detection process can be summarized in four stages: ① region of interest extraction, ② illumination-independent image acquisition, ③ road sample collection and classification, and ④ morphological processing.

All three methods are processed to a uniform standard, and our current implementation is non-optimized MATLAB code. Figure 11 shows the average processing time of each of the three methods on each of the two datasets. II-RD and MII-RD each remove the upper third of the image, whereas OLII-RD uses the horizon determination algorithm to remove the area above the horizon. It can be seen from the figure that MII-RD has the longest total processing time, about 470 ms, with a relatively long road sample collection time. II-RD has the shortest total time, about 370 ms. The total time of OLII-RD is about 430 ms, which lies in between. Because OLII-RD acquires the $\theta$ angle online, it spends more time than II-RD and MII-RD in the region of interest extraction stage. However, the obtained road region of interest is more concentrated and contains relatively few pixels, which saves time in the subsequent illumination-independent image acquisition stage.

The experimental results show that the average processing speed of our detection method is about 2.5 fps. Based on our experience in similar applications, we expect that a C++ implementation of this code could run in less than 70 ms per frame. In practical applications, frames can be processed at a fixed interval, so that specific real-time requirements can be met without affecting the final results.
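The relation between per-frame processing time, throughput, and the frame interval needed to keep pace with a camera can be made explicit with a short calculation. The helper names are hypothetical, and the frame interval rule (process one frame, then skip frames until the processing has finished) is an assumed policy, not one described in the text.

```python
import math


def throughput_fps(ms_per_frame):
    """Frames processed per second for a given per-frame processing time."""
    return 1000.0 / ms_per_frame


def frame_stride(ms_per_frame, camera_fps):
    """Smallest stride k such that processing every k-th frame keeps up."""
    return max(1, math.ceil(ms_per_frame * camera_fps / 1000.0))
```

At about 430 ms per frame, `throughput_fps(430)` is roughly 2.3 fps, in line with the reported figure of about 2.5 fps. To keep pace with the 15 fps CVC camera at that speed, `frame_stride(430, 15)` suggests processing every 7th frame, while a 70 ms implementation would only need every 2nd frame.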

6. Conclusions

Road detection is a key step in realizing automated driving, and plays an important role in the rapid development of assisted and unmanned driving technology. In this paper, an online, purely vision-based road detection method using a learning-based illumination-independent image on shadowed traffic images was proposed to reduce the effects of illumination changes and shadows on road detection results. We proposed a learning-based illumination invariance method, which greatly reduces the interference of shadows in road images. We proposed an online road detection method, which avoids the partial detection of offline approaches, performs fine-grained detection on every shadowed road image, and obtains more effective and robust results. The effectiveness of the proposed method was tested on multiple image sequences from the CVC and self-built datasets, covering different traffic environments, road types, vehicle distributions, and road scenarios. The comprehensive evaluation index, F, was about 93% on average, which demonstrates the effectiveness of the proposed method. In addition, with an optimized implementation or frame skipping, the detection process can meet real-time requirements. In the future, we plan to realize joint detection and classification of multiple traffic objects, such as people, vehicles, and roads.