Efficacy of Flushing and Chlorination in Removing Microorganisms from a Pilot Drinking Water Distribution System

Abstract

1. Introduction

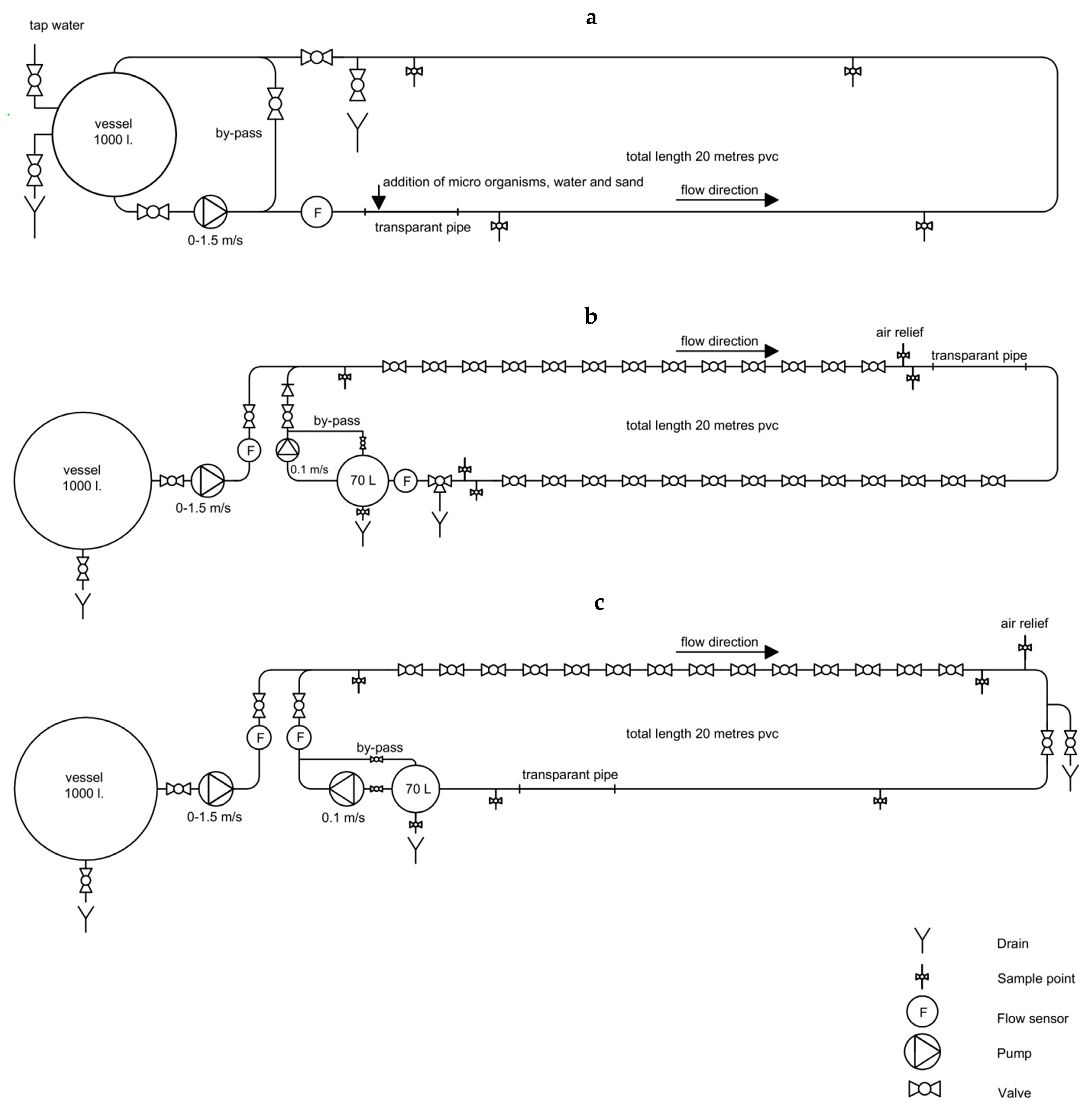

2. Materials and Methods

3. Results and Discussion

3.1. Growth or Decay of Microorganisms in Pipe-Loop System

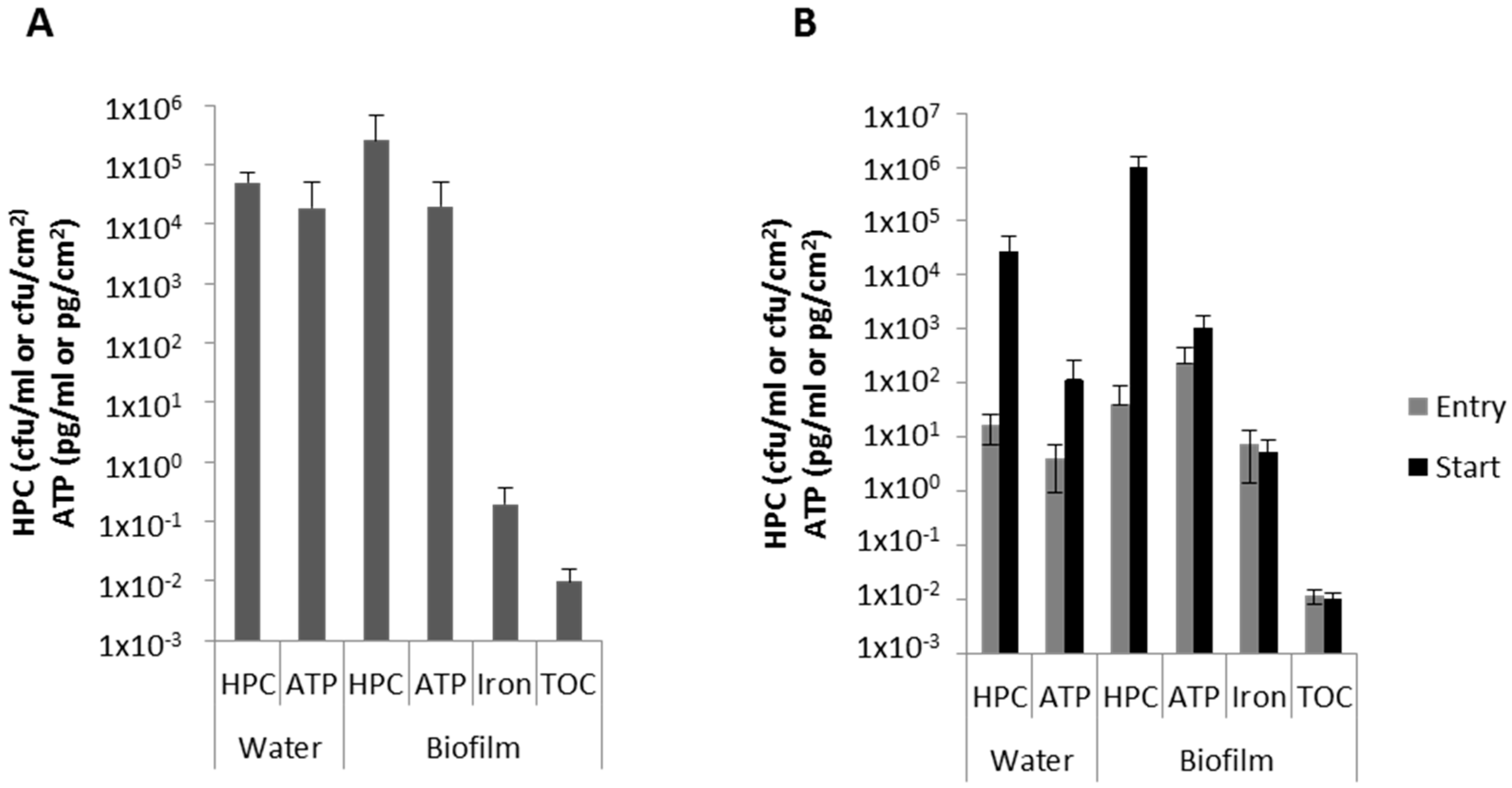

3.2. Biofilm Characteristics

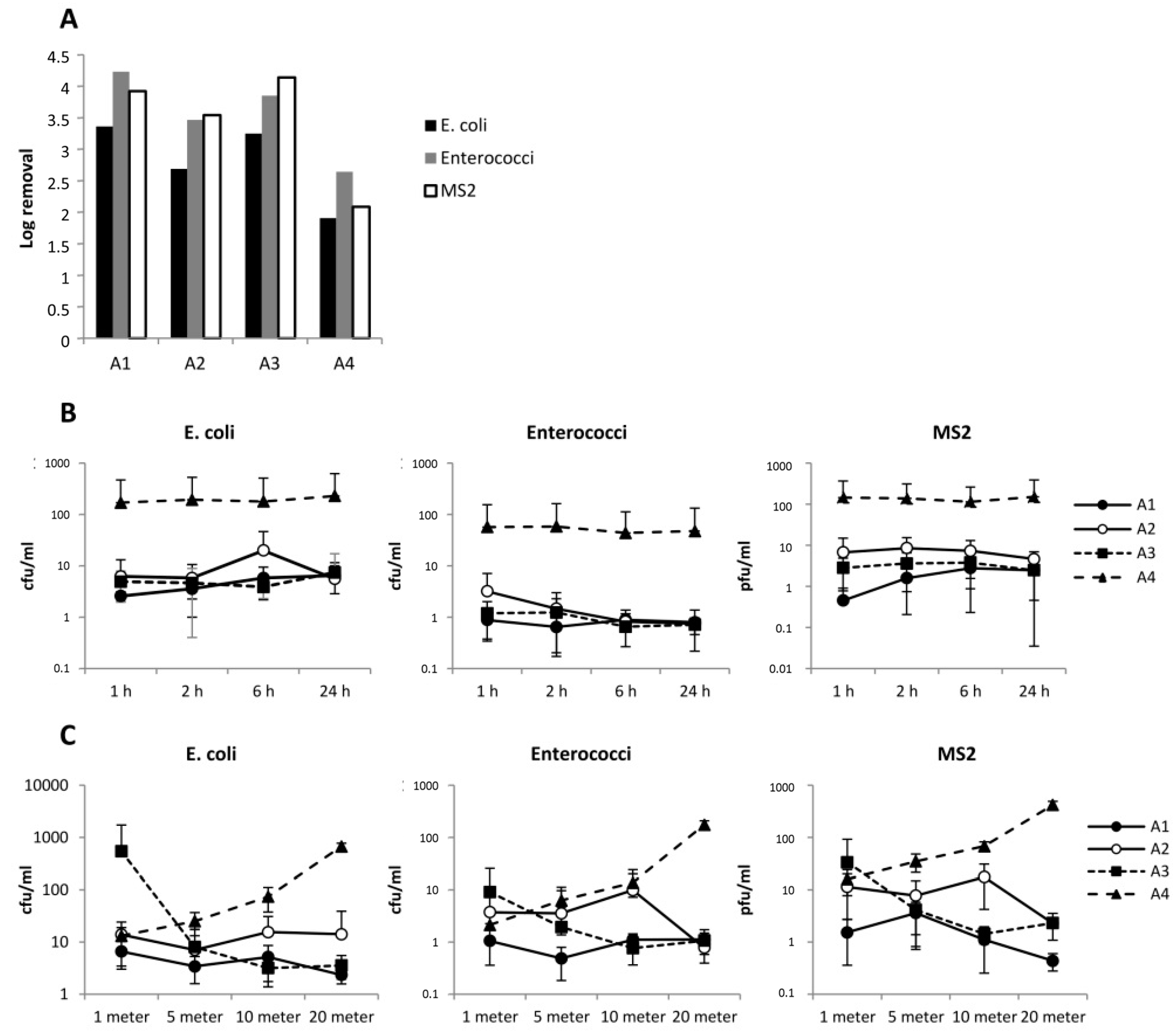

3.3. Flushing with 1.27 m/s

3.4. Role of Waiting Time between Flushing and Sampling

3.5. Attachment of Microorganisms to the Biofilm

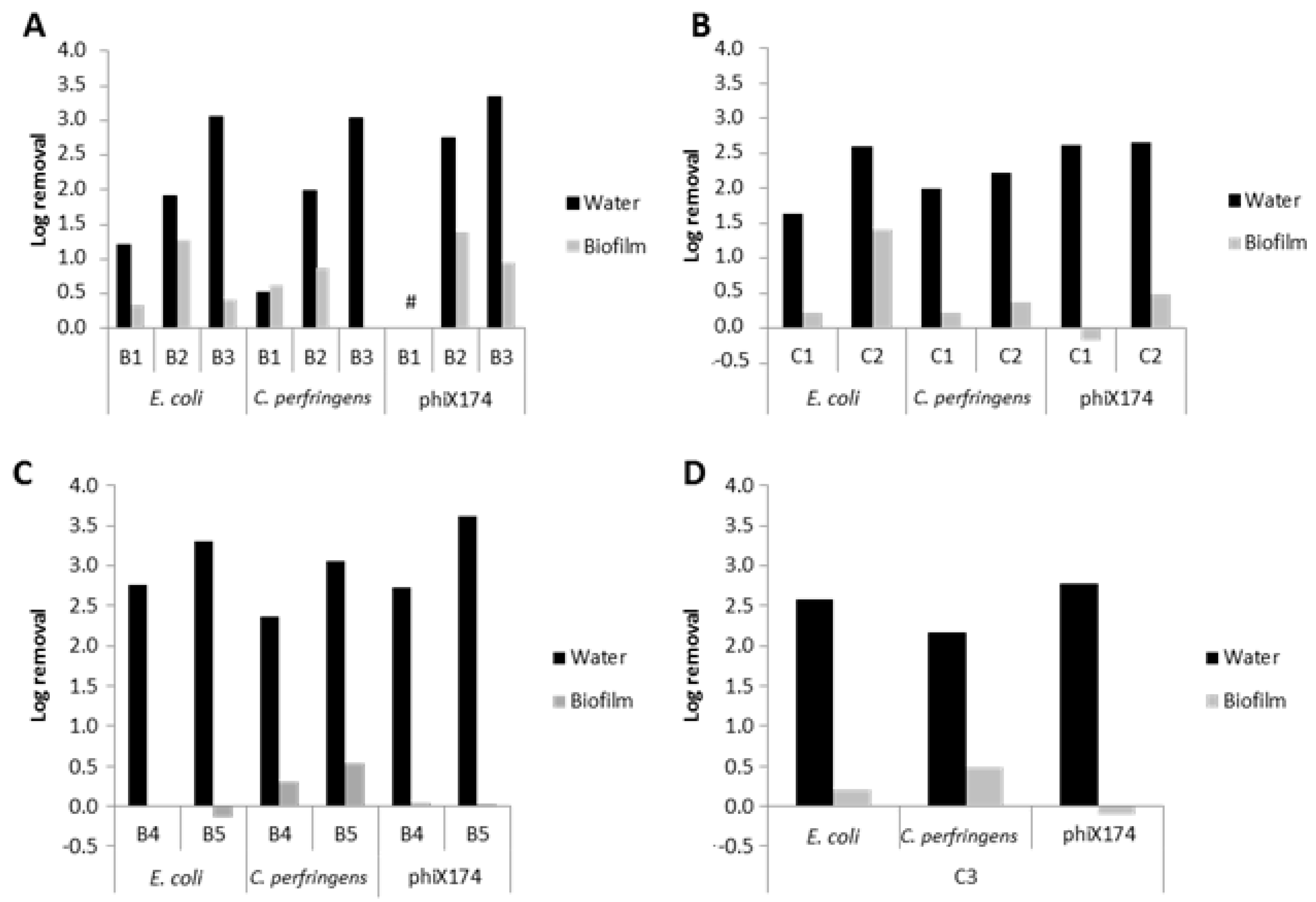

3.6. Normal Flushing with 1–1.5 m/s

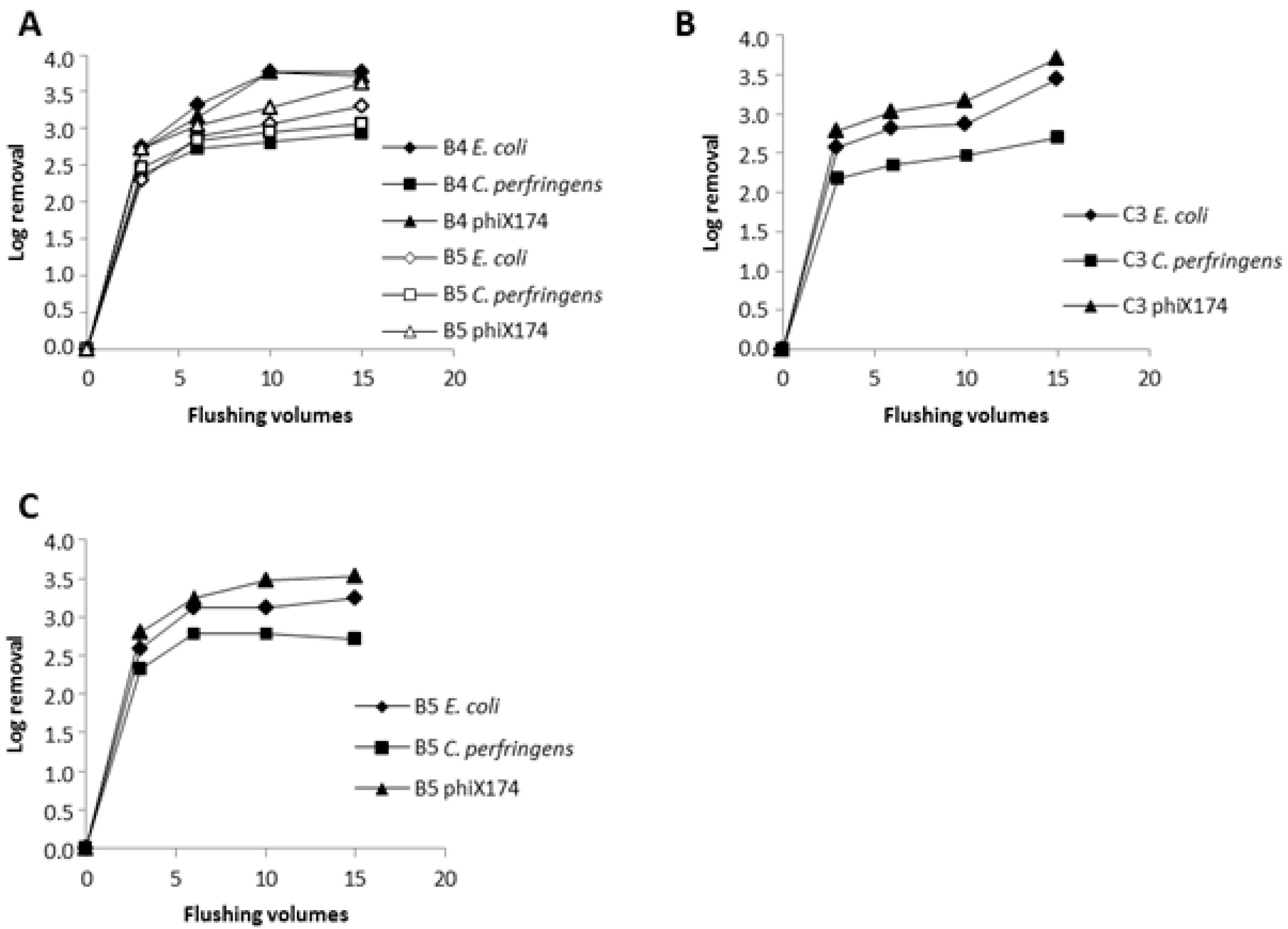

3.7. Slow Flushing with 0.3 m/s

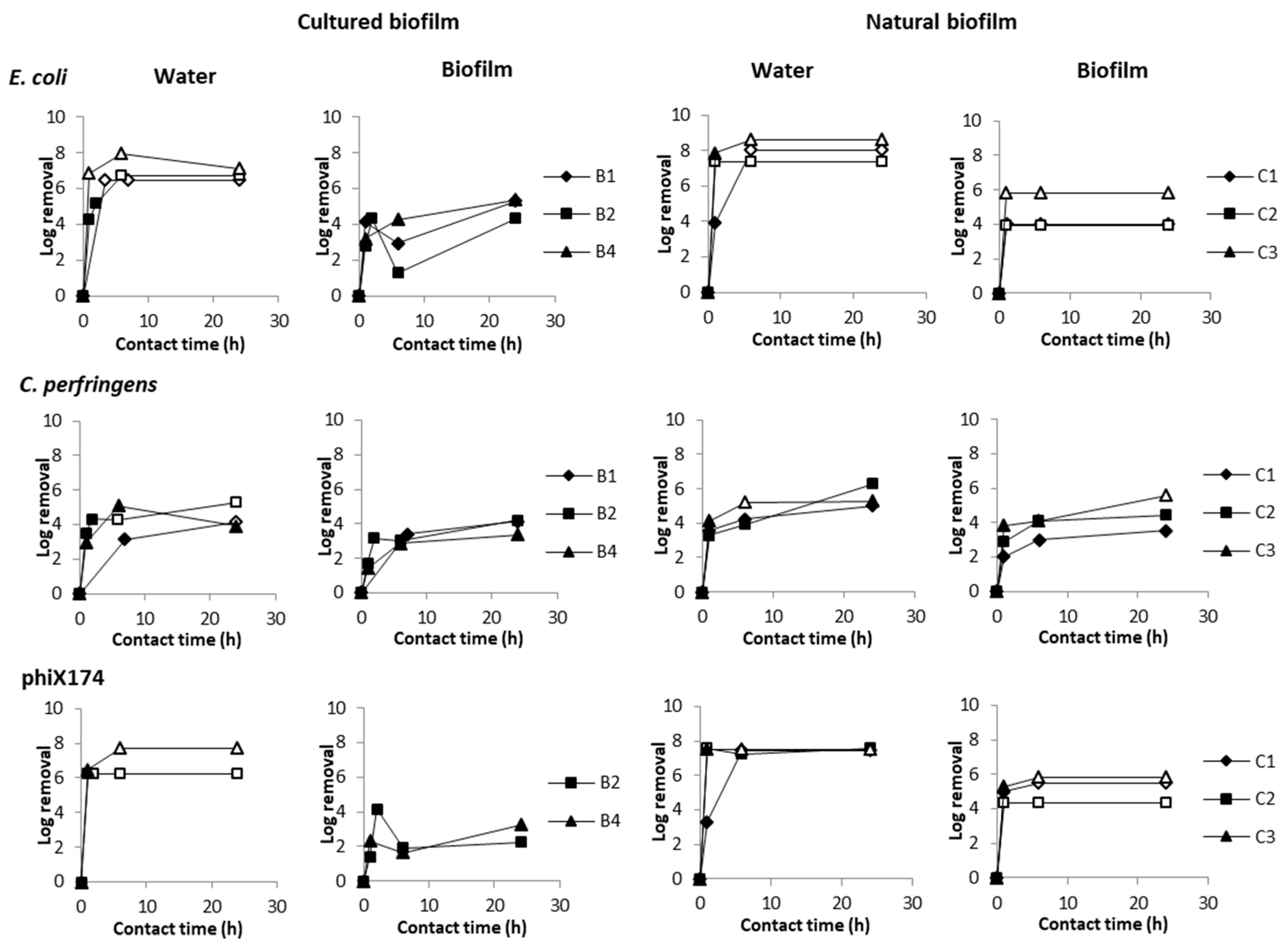

3.8. Chlorine Disinfection

3.9. Application to Real-Life Situation

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| Experiment | Contamination | E. coli (total cfu) | Enterococci (total cfu) | MS2 (total pfu) | Flushing |
|---|---|---|---|---|---|
| A1 | Water | 1.2 × 10¹⁰ | 8.1 × 10⁸ | 1.3 × 10¹¹ | 1.27 m/s, 2.5 vol |
| A2 | Water | 5.3 × 10⁹ | 4.0 × 10⁸ | 9.7 × 10¹⁰ | 1.27 m/s, 2.5 vol |
| A3 | Sand and Water | 1.1 × 10¹⁰ | 2.6 × 10⁸ | 1.7 × 10¹¹ | 1.27 m/s, 2.5 vol |
| A4 | Water | 2.6 × 10¹⁰ | 7.4 × 10⁸ | 1.0 × 10¹¹ | 0.45 m/s, 0.9 vol |

| Experiment | E. coli (total cfu) | E. coli (cfu/mL) | Clostridium D10 (total cfu) | Clostridium D10 (cfu/mL) | phiX174 (total pfu) | phiX174 (pfu/mL) | Flushing | Free Chlorine |
|---|---|---|---|---|---|---|---|---|
| B1 | 7.5 × 10¹⁰ | 9.4 × 10⁵ | 2.6 × 10⁸ | 3.3 × 10³ | - | - | 1.5 m/s, 3 vol | 10 mg/L, 0–24 h |
| B2 | 6.3 × 10¹⁰ | 5.7 × 10⁵ | 8.4 × 10⁸ | 7.6 × 10³ | 1.0 × 10¹¹ | 9.1 × 10⁵ | 1.5 m/s, 3 vol | 10.5 mg/L, 0–24 h |
| B3 | 7.9 × 10¹⁰ | 7.2 × 10⁵ | 2.0 × 10⁹ | 1.8 × 10⁴ | 5.4 × 10¹⁰ | 5.0 × 10⁵ | 1.0 m/s, 3 vol | - |
| B4 | 7.5 × 10¹¹ | 6.8 × 10⁶ | 3.4 × 10⁸ | 3.1 × 10³ | 9.4 × 10¹⁰ | 8.5 × 10⁵ | 0.3 m/s, 3–6–10–15 vol | 10 mg/L, 0–24 h |
| B5 | 5.2 × 10⁸ | 5.7 × 10¹⁰ | 1.5 × 10⁷ | 1.7 × 10⁹ | 4.0 × 10⁸ | 4.4 × 10¹⁰ | 0.3 m/s, 3–6–10–15 vol; 1.5 m/s, 3–6–10–15 vol | - |

| Experiment | E. coli (total cfu) | E. coli (cfu/mL) | C. perfringens (total cfu) | C. perfringens (cfu/mL) | phiX174 (total pfu) | phiX174 (pfu/mL) | Flushing | Free Chlorine |
|---|---|---|---|---|---|---|---|---|
| C1 | 5.0 × 10¹⁰ | 4.5 × 10⁵ | 1.0 × 10⁹ | 9.2 × 10⁹ | 6.7 × 10¹⁰ | 6.1 × 10⁵ | 1.5 m/s, 3 vol | 10 mg/L, 0–24 h |
| C2 | 7.5 × 10¹⁰ | 5.8 × 10⁵ | 3.2 × 10⁹ | 2.9 × 10⁴ | 4.0 × 10¹⁰ | 3.7 × 10⁵ | 1.5 m/s, 3 vol | 10 mg/L, 0–24 h |
| C3 | 1.0 × 10¹¹ | 9.5 × 10⁵ | 3.0 × 10⁹ | 2.7 × 10⁴ | 4.7 × 10¹⁰ | 4.3 × 10⁵ | 0.3 m/s, 3–6–10–15 vol | 10 mg/L, 0–24 h |

| Biofilm type | Experiment | E. coli, water (%) | E. coli, biofilm (%) | C. perfringens, water (%) | C. perfringens, biofilm (%) | phiX174, water (%) | phiX174, biofilm (%) |
|---|---|---|---|---|---|---|---|
| Cultured biofilm | B1 | 99.7 | 0.3 | 27.0 | 73.0 | - | - |
| Cultured biofilm | B2 | 87.5 | 12.5 | 72.6 | 27.4 | 99.3 | 0.7 |
| Cultured biofilm | B3 | 86.3 | 13.7 | 76.2 | 23.8 | 99.7 | 0.3 |
| Cultured biofilm | B4 | 93.7 | 6.3 | 28.2 | 71.8 | 98.7 | 1.3 |
| Cultured biofilm | B5 | 99.2 | 0.8 | 84.4 | 15.6 | 99.9 | 0.1 |
| Cultured biofilm | Average ± SD | 93.3 ± 6.3 | 6.7 ± 6.3 | 57.7 ± 27.8 | 42.3 ± 27.8 | 99.4 ± 0.5 | 0.6 ± 0.5 |
| Natural biofilm | C1 | 94.4 | 5.6 | 45.0 | 55.0 | 99.3 | 0.7 |
| Natural biofilm | C2 | 99.1 | 0.9 | 91.8 | 8.2 | 99.8 | 0.2 |
| Natural biofilm | C3 | 96.5 | 3.5 | 54.0 | 46.0 | 98.8 | 1.2 |
| Natural biofilm | Average ± SD | 96.7 ± 2.4 | 3.3 ± 2.4 | 63.6 ± 24.9 | 36.4 ± 24.9 | 99.3 ± 0.5 | 0.7 ± 0.5 |
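The Average ± SD rows in the table above can be checked from the per-experiment values. The following is a minimal sketch, assuming the reported SD is the sample standard deviation (n − 1 denominator); that assumption is not stated in the table, but it appears to reproduce the E. coli water-phase entries. The variable and function names are illustrative only.

```python
from statistics import mean, stdev

# E. coli percentages recovered in the water phase, copied from the table above.
water_pct = {
    "Cultured biofilm": [99.7, 87.5, 86.3, 93.7, 99.2],  # experiments B1 to B5
    "Natural biofilm": [94.4, 99.1, 96.5],               # experiments C1 to C3
}

def summarize(values):
    """Return (mean, sample SD), each rounded to one decimal place."""
    return round(mean(values), 1), round(stdev(values), 1)

# Assumption: SD uses the n - 1 denominator (statistics.stdev).
summary = {label: summarize(vals) for label, vals in water_pct.items()}

for label, (avg, sd) in summary.items():
    print(f"{label}: {avg} ± {sd}")
```

The same helper can be applied to the other columns; because each water/biofilm pair sums to 100, the two columns of a pair share the same SD.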

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

van Bel, N.; Hornstra, L.M.; van der Veen, A.; Medema, G. Efficacy of Flushing and Chlorination in Removing Microorganisms from a Pilot Drinking Water Distribution System. Water 2019, 11, 903. https://doi.org/10.3390/w11050903