Object-Based Convolutional Neural Networks for Cloud and Snow Detection in High-Resolution Multispectral Imagers

Abstract

1. Introduction

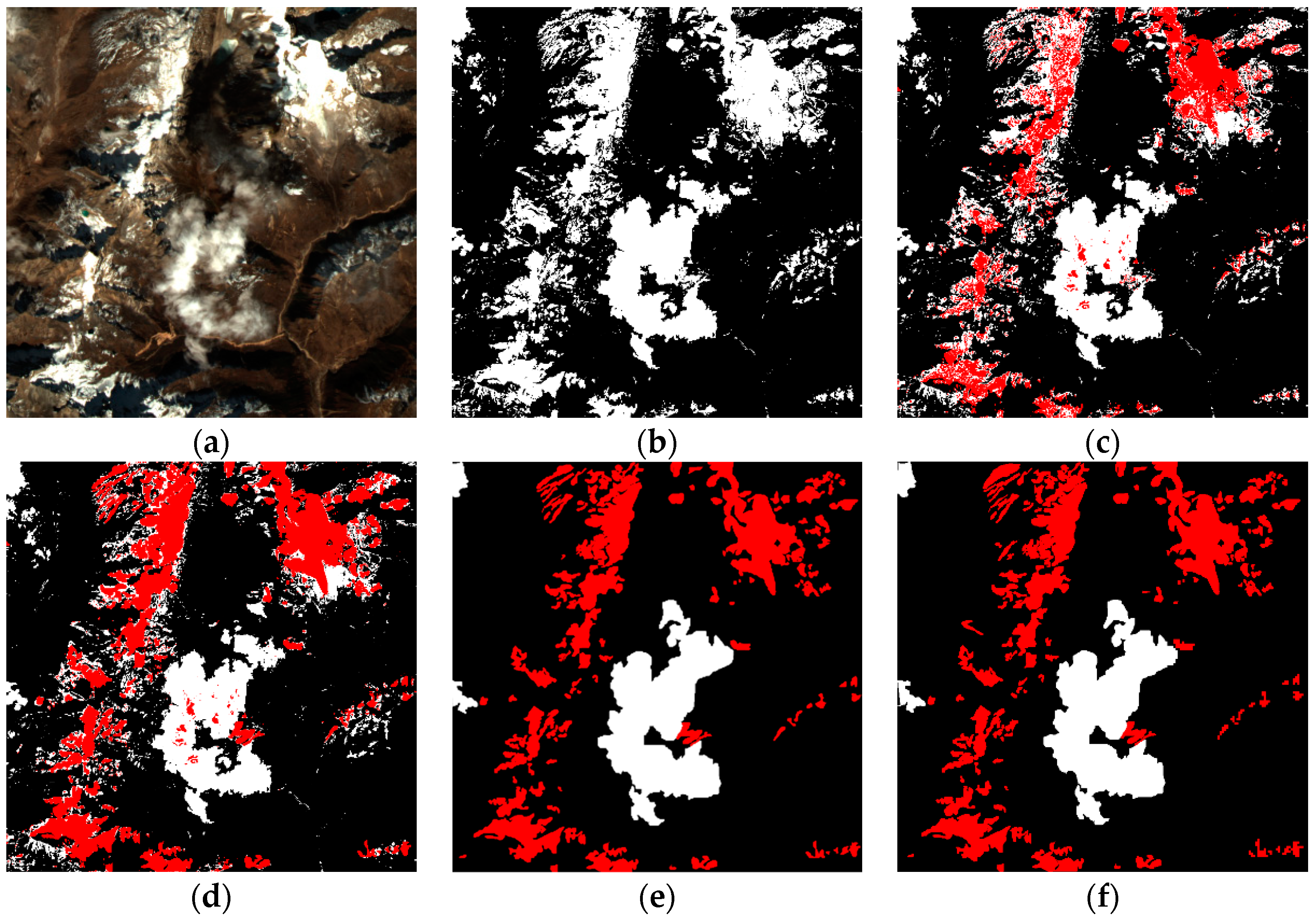

- (1) A new CNN structure is proposed to classify cloud and snow at the object level; it can learn multi-scale semantic features of cloud and snow from high-resolution multispectral imagery.

- (2) To make better use of information from all bands and to suppress “salt-and-pepper” noise, we propose a new SLIC algorithm that generates superpixels at the preprocessing stage.

- (3) To reduce feature loss in the pooling process, we present a self-adaptive pooling method based on maximum pooling and average pooling.
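Contribution (3) combines maximum pooling and average pooling. The following is a minimal sketch of that idea, not the authors' formulation: it blends the two pooled values with a fixed weight. The function name `blended_pool`, the weight `mu`, and the constant-weight rule are assumptions made for illustration; the rule by which the paper adapts the weight is not given in this section.

```python
def blended_pool(feature_map, size=2, mu=0.5):
    """Pool non-overlapping size x size windows of a 2D feature map.

    Each output value is mu * max(window) + (1 - mu) * mean(window).
    `mu` is a hypothetical fixed weight: mu = 1 gives plain max pooling,
    mu = 0 gives plain average pooling.
    """
    rows, cols = len(feature_map), len(feature_map[0])
    pooled = []
    for r in range(0, rows - size + 1, size):
        out_row = []
        for c in range(0, cols - size + 1, size):
            # Collect the values that fall inside the current window.
            window = [feature_map[r + i][c + j]
                      for i in range(size) for j in range(size)]
            w_max = max(window)
            w_avg = sum(window) / len(window)
            out_row.append(mu * w_max + (1.0 - mu) * w_avg)
        pooled.append(out_row)
    return pooled


# Hypothetical 4 x 4 feature map used only to exercise the function.
example_map = [
    [1, 2, 5, 6],
    [3, 4, 7, 8],
    [0, 0, 1, 1],
    [0, 4, 1, 1],
]
print(blended_pool(example_map, size=2, mu=0.5))
```

A window dominated by one strong response (such as the lower-left window here) is where the two pooling rules differ most, which is the case a blended rule is meant to handle.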

2. Methods for Cloud and Snow Detection in High-Resolution Remote Sensing Images

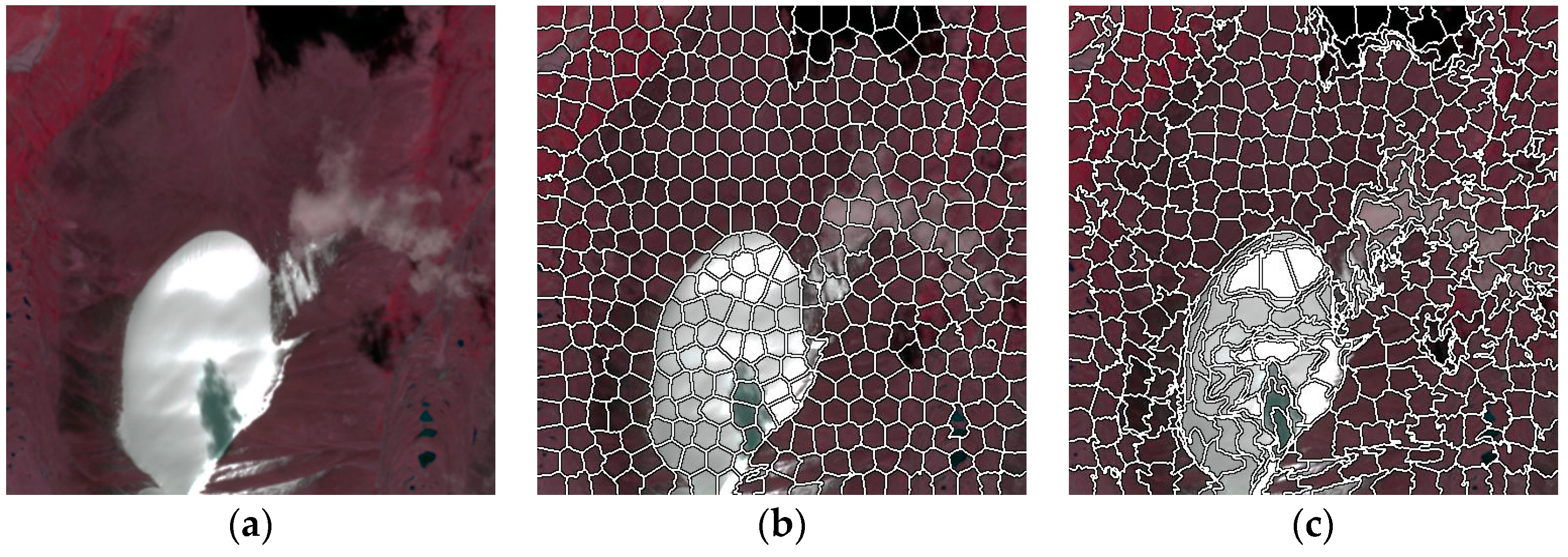

2.1. Preprocessing of Superpixels

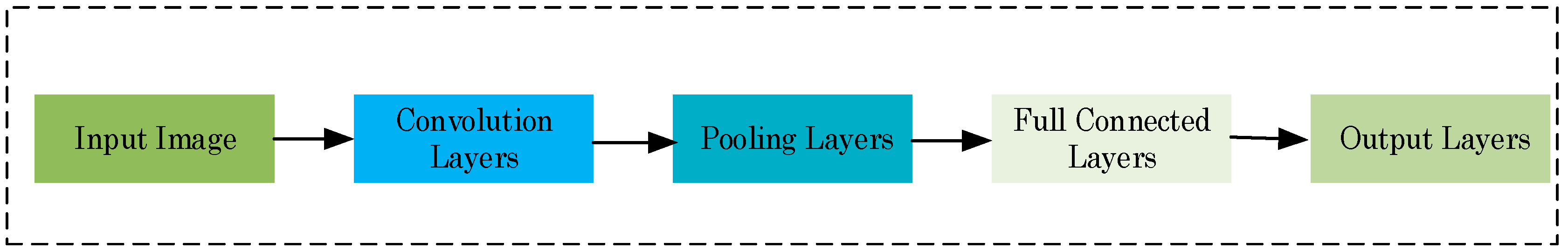

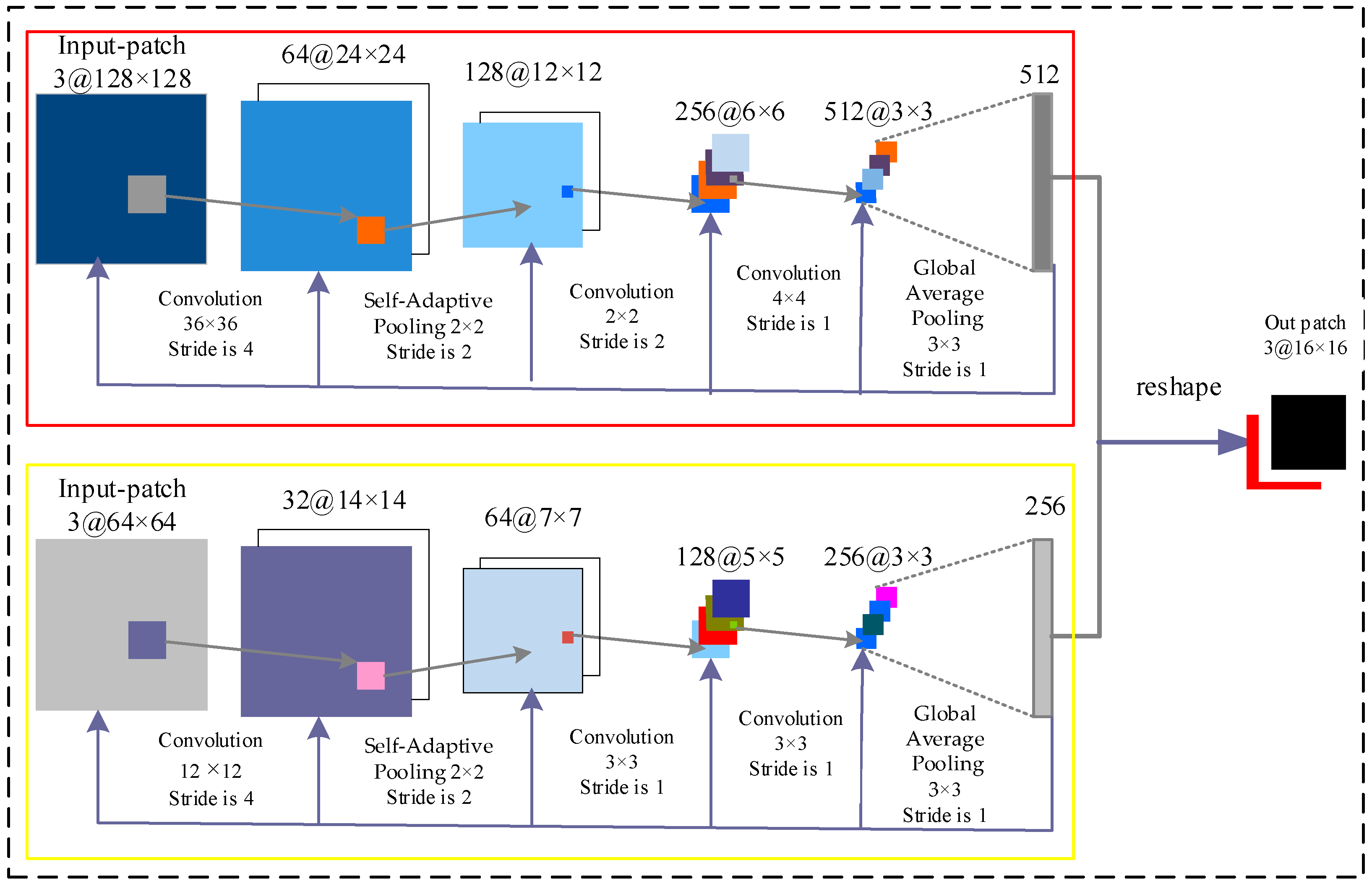
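The superpixel preprocessing builds on SLIC, which assigns each pixel to the nearest cluster center under a combined spectral and spatial distance. The sketch below shows the standard SLIC distance with the spectral term computed over all bands rather than over three color channels. That extension, the function names, and the default compactness value are assumptions for illustration; the modified SLIC proposed in the paper may differ.

```python
import math


def grid_interval(n_pixels, n_superpixels):
    """Approximate spacing S between initial cluster centers."""
    return math.sqrt(n_pixels / n_superpixels)


def slic_distance(pixel, center, interval, compactness=10.0):
    """Combined distance D = sqrt(d_spec^2 + (d_sp / S)^2 * m^2).

    `pixel` and `center` are (band_values, (row, col)) tuples.
    d_spec is the Euclidean distance over all bands (an assumption here),
    d_sp is the Euclidean distance in image coordinates,
    S is `interval` and m is `compactness`.
    """
    bands_p, (row_p, col_p) = pixel
    bands_c, (row_c, col_c) = center
    d_spec = math.sqrt(sum((a - b) ** 2 for a, b in zip(bands_p, bands_c)))
    d_sp = math.hypot(row_p - row_c, col_p - col_c)
    return math.sqrt(d_spec ** 2 + (d_sp / interval) ** 2 * compactness ** 2)


def nearest_center(pixel, centers, interval, compactness=10.0):
    """Index of the cluster center with the smallest combined distance."""
    distances = [slic_distance(pixel, c, interval, compactness) for c in centers]
    return distances.index(min(distances))


# Hypothetical four-band pixel and two candidate centers.
S = grid_interval(n_pixels=10000, n_superpixels=100)
pixel = ((0.2, 0.3, 0.4, 0.5), (12, 12))
centers = [((0.2, 0.3, 0.4, 0.5), (40, 40)), ((0.3, 0.3, 0.4, 0.5), (10, 10))]
print(nearest_center(pixel, centers, S))
```

A larger compactness value weights spatial proximity more heavily and yields more regular superpixels; a smaller value lets superpixels follow spectral boundaries more closely.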

2.2. CNN Structure

2.3. Accuracy Assessment
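The result tables in this article report overall accuracy (OA) and the Kappa coefficient. As a minimal sketch, both can be computed from a confusion matrix using their conventional definitions: OA is the fraction of correctly classified samples, and Kappa corrects that agreement for the agreement expected by chance. The confusion matrix values and function names below are hypothetical and are not taken from the paper's experiments.

```python
def overall_accuracy(confusion):
    """Fraction of samples on the diagonal of a square confusion matrix."""
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[i][i] for i in range(len(confusion)))
    return correct / total


def kappa_coefficient(confusion):
    """Cohen's Kappa: (p_o - p_e) / (1 - p_e).

    p_o is the observed agreement (overall accuracy) and p_e is the
    agreement expected by chance from the row and column totals.
    """
    n = len(confusion)
    total = sum(sum(row) for row in confusion)
    p_o = overall_accuracy(confusion)
    row_totals = [sum(confusion[i]) for i in range(n)]
    col_totals = [sum(confusion[i][j] for i in range(n)) for j in range(n)]
    p_e = sum(row_totals[k] * col_totals[k] for k in range(n)) / (total ** 2)
    return (p_o - p_e) / (1.0 - p_e)


# Hypothetical two-class matrix: rows are reference labels,
# columns are predicted labels (e.g. cloud vs. non-cloud).
matrix = [
    [45, 5],
    [10, 40],
]
print(f"OA = {overall_accuracy(matrix):.2%}")
print(f"Kappa = {kappa_coefficient(matrix):.2%}")
```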

3. Experiment and Analysis

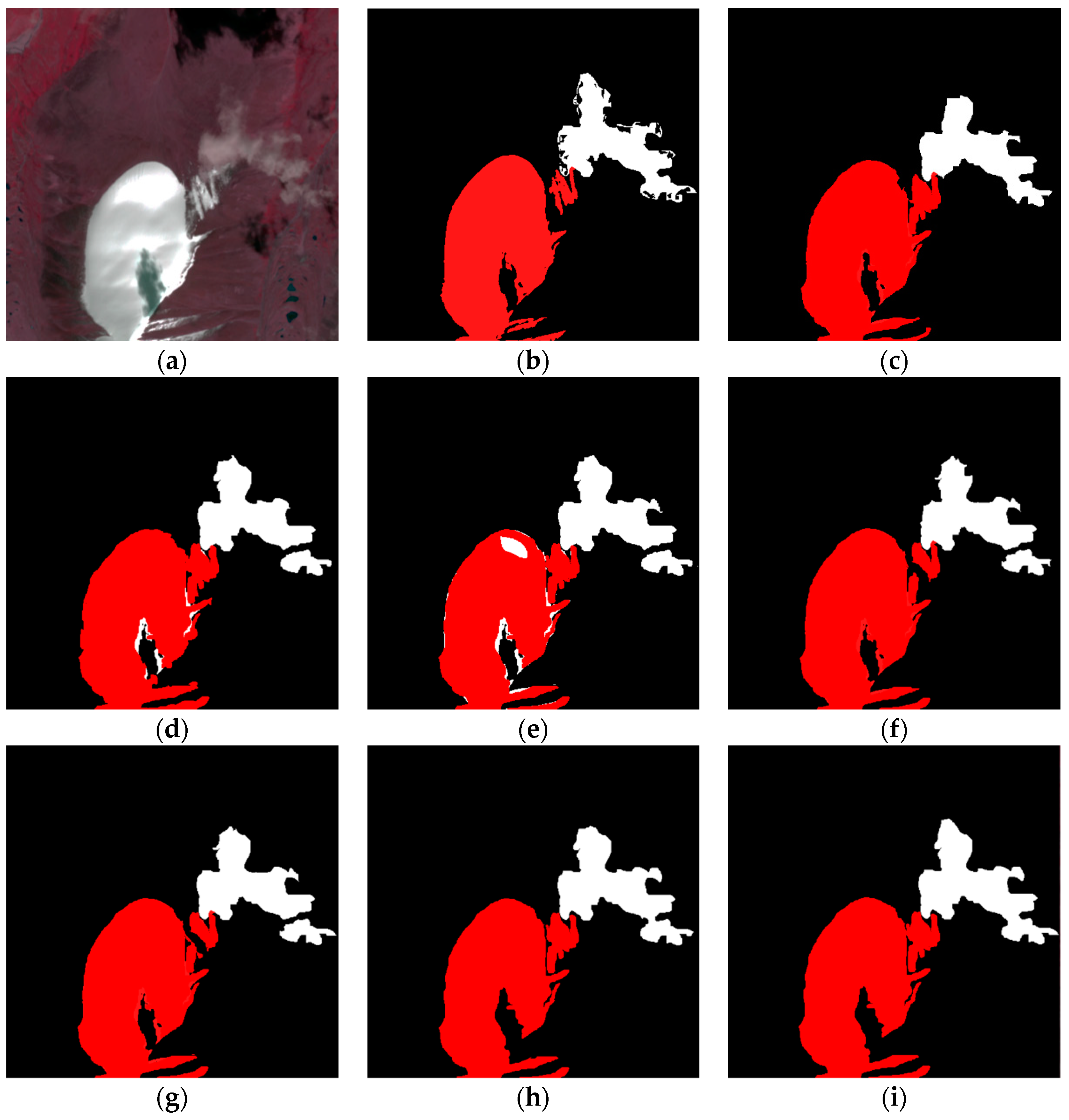

3.1. Performance of Improved Superpixel Method and Different CNN Architectures

3.2. Comparison with Other Cloud and Snow Detection Algorithms

4. Conclusions

Author Contributions

Funding

Conflicts of Interest

| Method | Cloud OA (%) | Cloud Kappa (%) | Snow OA (%) | Snow Kappa (%) |
|---|---|---|---|---|
| Our double-branch CNNs + pixel | 93.46 | 89.17 | 94.36 | 89.51 |
| Our double-branch CNNs + SLIC | 95.31 | 90.14 | 96.81 | 90.51 |
| CNNs (input branch size of 128 × 128) | 92.29 | 88.94 | 90.97 | 88.37 |
| CNNs (input branch size of 64 × 64) | 90.38 | 88.97 | 88.19 | 88.47 |
| double-branch CNNs + average pooling | 95.71 | 89.81 | 95.96 | 90.01 |
| Our double-branch CNNs + max pooling | 96.14 | 91.27 | 96.91 | 91.52 |
| Our proposed framework | 98.36 | 92.64 | 99.17 | 93.27 |

| Method | Cloud OA (%) | Cloud Kappa (%) | Snow OA (%) | Snow Kappa (%) |
|---|---|---|---|---|
| ENVI threshold method | 68.34 | 59.74 | / | / |
| ANN | 76.69 | 69.17 | 71.94 | 68.35 |
| SVM | 79.19 | 70.39 | 80.39 | 70.94 |
| Our proposed framework | 99.16 | 92.64 | 98.17 | 92.97 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Wang, L.; Chen, Y.; Tang, L.; Fan, R.; Yao, Y. Object-Based Convolutional Neural Networks for Cloud and Snow Detection in High-Resolution Multispectral Imagers. Water 2018, 10, 1666. https://doi.org/10.3390/w10111666
