1. Introduction

Owing to their high nutritional value and unique flavor, cherries have emerged as a key contributor to the global fruit economy. However, most cherry cultivars exhibit gametophytic self-incompatibility, requiring cross-pollination to ensure successful fruit set [1,2]. Under natural conditions, pollination efficiency is often limited by factors such as asynchronous blossoming, incompatibility among cultivars, and insufficient activity of pollinators (e.g., bees or wind). These challenges are exacerbated under abnormal climate conditions or ecological imbalance, frequently resulting in reduced fruit set, lower yield, and compromised fruit quality [3]. Although traditional manual pollination can improve fruit set to some extent, it is labor-intensive, inefficient, and costly, making it inadequate for the demands of modern orchards seeking efficient and precise pollination. With ongoing advances in artificial intelligence, computer vision, and agricultural robotics, intelligent pollination systems have become a promising direction for achieving smart, adaptive pollination in fruit orchards. The last few years have witnessed considerable strides in the creation of intelligent pollination equipment for various horticultural applications. For example, Masuda et al. [4] developed a tomato pollination robot equipped with a flexible robotic arm and airflow mechanism, achieving a pollination success rate of 92%. Li Guo [5] designed a kiwifruit pollination system that integrates visual recognition with a dual-phase (gas-liquid) spray module, achieving an accuracy of 91.56%. To address the challenges posed by the compact canopy, dense branching, and uneven floral distribution of cherry trees, Liu Ping et al. [6] developed a self-propelled pollination robot integrating autonomous navigation, adaptive spray booms, and a pollen control system, enabling precise operation across various cherry tree architectures. Bu Kunting et al. [7] proposed an intelligent pollination system based on coordinated multi-arm manipulators that combines multi-target visual recognition with synchronized control strategies, significantly enhancing operational efficiency and environmental adaptability.

In intelligent pollination systems, blossom detection represents a pivotal step towards realizing automated localization and precise pollination, where both detection accuracy and processing speed critically influence the efficiency of pollination operations. The YOLO family of object detection algorithms, distinguished by its end-to-end architecture and rapid inference capability, has been extensively applied to agricultural blossom detection tasks, demonstrating remarkable performance. For instance, Bai et al. [8] incorporated the Swin transformer and GS-ELAN (GSConv and ELAN) modules into YOLOv7, thereby achieving high-precision detection of strawberry blossoms. Li et al. [9] developed a kiwifruit blossom and bud detection model utilizing YOLOv4, achieving an mAP of 97.61%, thereby fulfilling real-time detection requirements. Shang Yuying et al. [10] optimized the YOLOv5s architecture to achieve high-precision detection of apple blossoms under natural conditions, with an mAP of 97.2%. Shilei Lyu et al. [11] achieved lychee flower detection through YOLO-HPFD multi-teacher feature distillation, with an mAP of 94.21%. Xu et al. [12] improved tomato blossom detection accuracy to 91.87% by integrating attention-enhanced neural networks with multi-angle image feature fusion. Additionally, Wang et al. [13] employed the YOLOv8 model for detecting chili pepper blossoms. Ren et al. [14] proposed the FPG-YOLO model for the precise recognition of pear blossoms. These studies provide technical support for computer vision-based blossom detection, laying the groundwork for practical deployment of intelligent pollination systems and advancing the development of smart orchards.

However, object detection alone is insufficient to ensure effective pollination. In addition to precise detection, key spraying parameters, such as the distance between the nozzle and the blossom, spraying duration, and liquid flow rate, must be accurately controlled to increase pollen deposition and reduce resource waste. Therefore, the effective integration of blossom detection models with spray parameter optimization is a key approach to improving both the efficiency and intelligence of cherry pollination. In response to these challenges, this work introduces an enhanced YOLO-based architecture, the SMD-YOLO model, which incorporates the ScConvC3k2 module, the MSCA (multi-scale channel attention) mechanism, and the DualConv module to enhance detection accuracy while keeping the model lightweight, making it suitable for embedded deployment in complex orchard environments. A cherry spray pollination test platform was designed and constructed, and a quadratic orthogonal rotational combination experimental design was used to optimize three critical parameters (spray distance, spray duration, and liquid flow rate) and derive the optimal pollination parameter combination. This study offers theoretical foundations and technical support for intelligent cherry pollination and presents a feasible solution for refined management in smart orchards.

2. Materials and Methods

2.1. Cherry Blossom Dataset Acquisition and Processing

In this study, a cherry blossom dataset was collected from the Northern Fruit Tree Nursery Base in Qixia, Shandong Province, and the Tianping Lake Demonstration Base of the Shandong Institute of Pomology. The images were captured during late February and early April 2025, respectively. The dataset encompassed cherry blossom images acquired under diverse environmental conditions. All images were captured using an IQOO 12 smartphone (IQOO Communications Technology Co., Ltd., Dongguan, China) with an original resolution of 3072 × 3072 pixels. After screening the raw images, a total of 2001 images meeting the experimental criteria were selected. To enhance training efficiency and model performance, a fixed input dimension of 640 × 640 pixels was applied to all images to ensure uniformity.

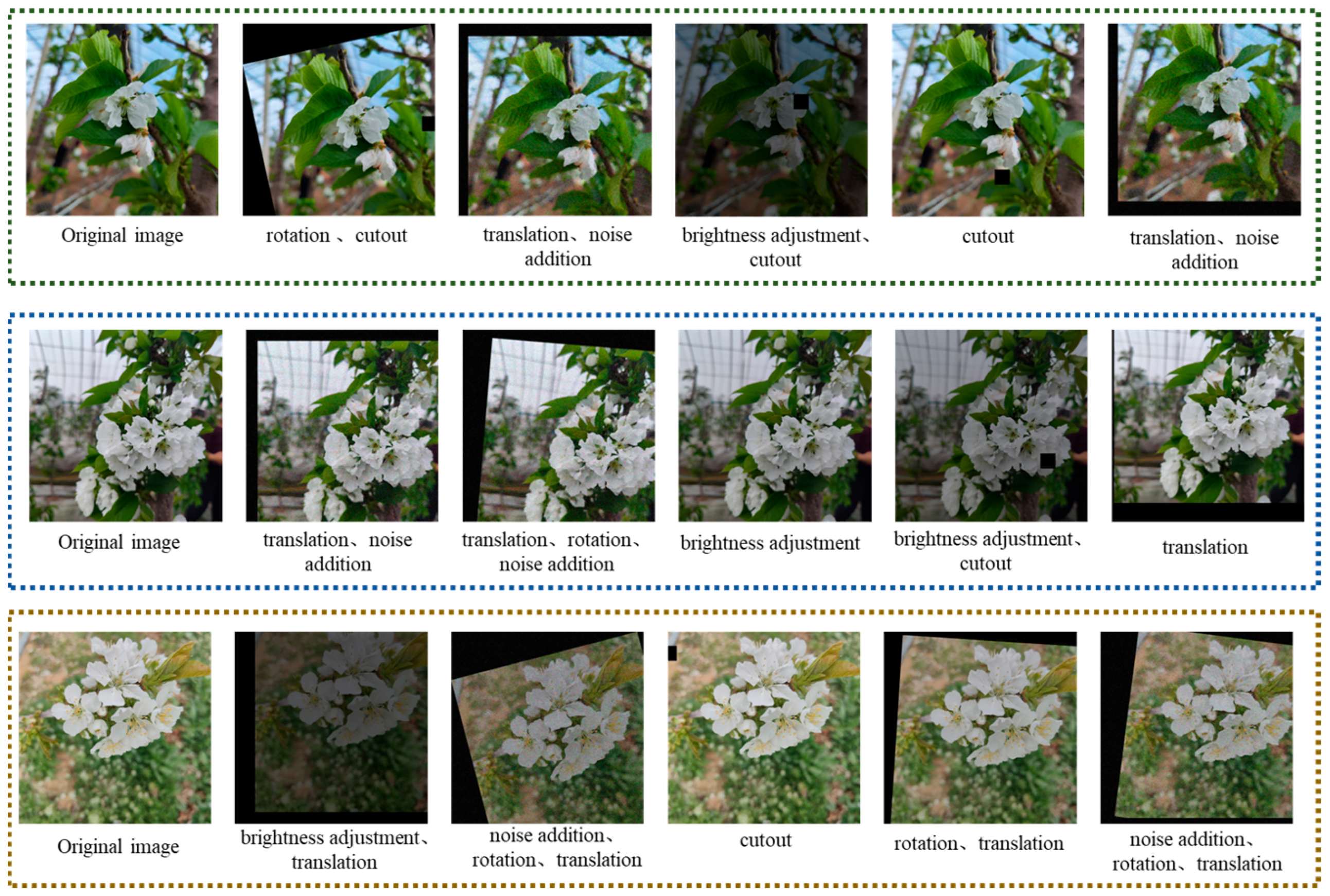

All dataset images were manually annotated using the LabelImg (Version: 1.8.6) tool prior to model training. Following annotation, augmentation techniques were employed to improve the model’s ability to generalize. The dataset was augmented using five techniques: noise addition, brightness adjustment, cutout, rotation, and translation. Each original image underwent augmentation five times using a random combination of one to three techniques. Together with the 2001 original images, this yielded a dataset of 12,006 images (2001 × 5 = 10,005 augmented images plus the originals). To facilitate model training and evaluation, the dataset was partitioned at an 8:1:1 ratio into training (9606 images), validation (1200 images), and test (1200 images) sets. Examples of the augmented images are presented in Figure 1.
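The augmentation and partition bookkeeping described above can be checked with a short sketch. The helper names, the random seed, and the rounding rule (floor the validation and test shares, give the remainder to training) are assumptions for illustration; the text does not state how the 8:1:1 split was rounded.

```python
import random

# The five augmentation techniques named in the text.
TECHNIQUES = ["noise", "brightness", "cutout", "rotation", "translation"]


def sample_augmentation_plan(rng, copies=5, min_ops=1, max_ops=3):
    """Return `copies` random combinations of one to three techniques (hypothetical helper)."""
    plan = []
    for _ in range(copies):
        n_ops = rng.randint(min_ops, max_ops)       # inclusive bounds
        plan.append(rng.sample(TECHNIQUES, n_ops))  # no technique repeated within one copy
    return plan


def split_sizes(total, val_share=0.1, test_share=0.1):
    """Assumed rounding rule: floor validation and test, remainder goes to training."""
    n_val = int(total * val_share)
    n_test = int(total * test_share)
    return total - n_val - n_test, n_val, n_test


originals = 2001
total_images = originals * (1 + 5)  # each original plus five augmented copies
plan = sample_augmentation_plan(random.Random(0))
print(total_images, split_sizes(total_images), plan[0])
```

Under this rounding rule the split sizes agree with the 9606/1200/1200 partition reported above.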

2.2. Selection of Cherry Pollination Methods

Under facility conditions, traditional pollination methods that rely on natural agents (such as bees) face numerous challenges. In enclosed environments like greenhouses or plastic tunnels, limited space and fluctuating temperature and humidity hinder the activity and maintenance of pollinating insects. Their introduction is not only costly and management-intensive but also leads to unstable pollination outcomes. Consequently, artificial and mechanical pollination have emerged as more controllable and reliable alternatives for pollination in facility-based agriculture.

Current mechanical pollination methods mainly include dry and liquid pollination. Dry pollination simulates the natural dispersion of pollen and is characterized by simple operation and minimal physical disturbance. However, in mechanical applications, it often suffers from uneven pollen distribution, electrostatic interference, weak adhesion, and significant pollen loss, which hinder precise operation and efficient resource utilization. In contrast, liquid pollination offers superior mechanical compatibility and operational controllability. By suspending pollen in a liquid carrier and delivering it via a spraying system, key parameters such as spray distance and flow rate can be precisely regulated, thereby improving spray uniformity and application consistency. Taking into account the application stability, automation adaptability, and functional integration ability of both methods, liquid pollination was chosen in this study.

2.3. Cherry Pollination Test Platform

To assess how critical operating parameters influence the efficiency of cherry spray pollination, a cherry blossom spray pollination test platform was designed and constructed, as illustrated in

Figure 2. The platform primarily consisted of an LS-1116 self-priming pump (maximum head: 50 m, Shuyang County Guangruju E-Commerce Co., Ltd., Ningbo City, China), a YYC-2S relay (Shenzhen Yueyu Electronic Technology Co., Ltd., Shenzhen, China), a flow meter, a 1.0 mm diameter nozzle, a throttle valve, a custom-designed dual-axis rotating gimbal, and an adjustable test bench with a spray distance range of 1–30 cm. Water-sensitive paper was used to simulate the stigma region of cherry blossoms and assess droplet distribution via ImageJ (Version: 1.8.0) analysis of liquid coverage, and an electronic balance with 0.01 g precision was used to measure liquid deposition. The control system was based on an STM32 microcontroller platform, with custom-developed software that enabled real-time adjustment of spray parameters. To ensure consistency in spray characteristics and experimental reproducibility, key performance metrics of the spray unit were systematically measured. The working spray pressure of the selected nozzle, within the operational flow rate and throttle adjustment range, was found to be 0.25–0.45 MPa, corresponding to droplet sizes ranging from 120 to 190 μm. The overall system demonstrated good operability, stability, and repeatability, facilitating effective comparative experiments under various spray parameter combinations. It provides a robust and dependable experimental foundation for optimizing cherry pollination parameters and evaluating spray performance.

2.4. SMD-YOLO Model

The SMD-YOLO model is an optimized version of the YOLOv11 model [

15], designed to enhance cherry blossom detection performance in complex agricultural environments. By integrating the ScConv and C3k2 modules, a novel ScConvC3k2 module was designed as the core of the neck, enhancing feature extraction under complex backgrounds and improving detection accuracy. The MSCA mechanism was introduced at the 17th layer to dynamically adjust channel-wise weights in the feature map, thus improving multi-scale feature fusion and enhancing the model’s sensitivity to small objects and edge features. Furthermore, the DualConv module was employed to replace all standard convolutional layers except for the input layer (layer 0), boosting the extraction of texture and edge features while reducing the parameters to achieve a more lightweight model design. As a result, the SMD-YOLO model maintains the efficiency of YOLOv11 while offering improved robustness and real-time performance for small-object detection in complex agricultural settings, rendering it appropriate for use in embedded systems with limited computational resources. The structural configuration of SMD-YOLO is presented in

Figure 3.

The SMD-YOLO model is primarily composed of three parts: backbone, neck, and head. The operational process is as follows. The input image is sequentially processed by the backbone through a convolutional layer (Conv), three sets of DualConv and C3k2 modules, followed by the SPPF and C2PSA modules (layers 0–10), enabling progressive extraction and compression of image features. The neck component sequentially incorporates two sets of Upsample, Concat, and ScConvC3k2 modules, followed by an MSCA module, and then two additional sets of DualConv, Concat, and ScConvC3k2 modules (layers 11–23), to achieve multi-scale feature fusion and enhance key feature representation through attention mechanisms. Finally, the head employs three detection heads at different spatial resolutions to identify targets of varying sizes. The entire model achieves efficient detection and accurate localization of cherry blossom targets in images through an end-to-end processing pipeline.

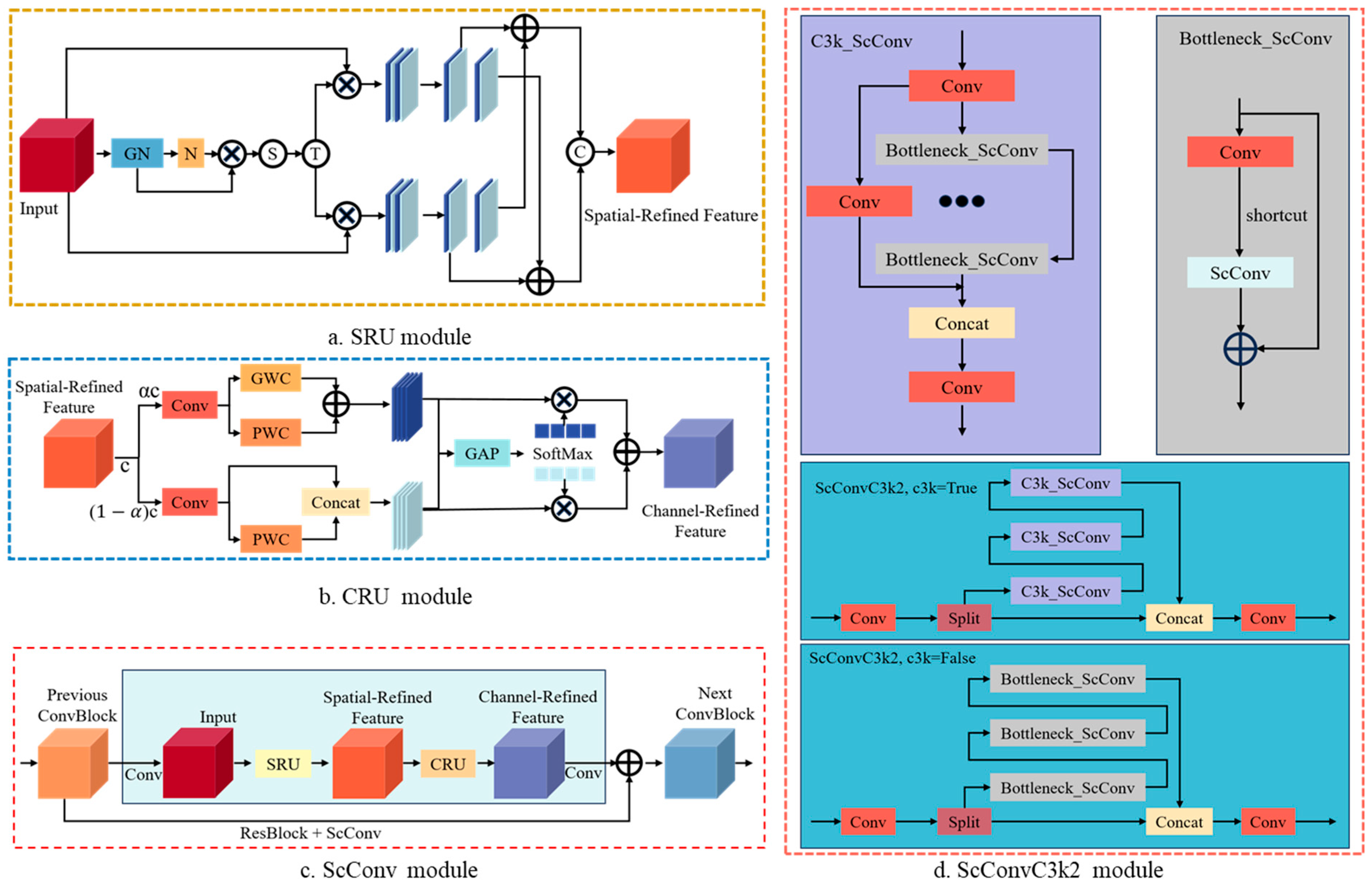

2.4.1. ScConvC3k2 Module

ScConvC3k2 is an efficient and lightweight neural network module that integrates the C3k2 module with the ScConv [16] (spatial and channel reconstruction convolution) module, achieving robust feature extraction capabilities with low computational cost. The ScConv module consists of the spatial reconstruction unit (SRU) and the channel reconstruction unit (CRU). Specifically, the SRU enhances spatial information through a gating mechanism and channel rearrangement, thereby improving the model’s sensitivity to spatial position and boundary details. The CRU separates the input feature channels into high-frequency and low-frequency branches, employing pointwise convolution (PWC) and groupwise convolution (GWC) to augment feature representation. Channel importance weights are also introduced to enhance feature extraction efficiency and preserve normalization stability [17]. The ScConvC3k2 module adopts a branched architecture where input channels are divided, processed independently, and subsequently concatenated for output. The main body consists of two 1 × 1 convolutional layers and a multi-branch bottleneck module. In the Bottleneck_ScConv sub-module, ScConv replaces traditional convolution and is integrated with standard convolution to jointly model spatial and channel information. The main hyperparameter settings of the module are as follows: the number of channels is set to 64; the number of groups in the group convolution within ScConv is 4; the channel partition ratio is 0.5; and the activation function is uniformly set to SiLU (sigmoid-weighted linear unit). The module supports flexible control over whether to activate the ScConv branch through the C3k parameter, allowing adaptation to different computational resource scenarios. The ScConvC3k2 module enhances semantic modeling while effectively controlling parameter count and inference time, rendering it well adapted to the constraints of embedded edge hardware.

In the cherry blossom detection task, the ScConvC3k2 module exhibits substantial performance advantages. The SRU enhances the extraction of floral edge contours and spatial distribution patterns, contributing to more precise feature representation. The CRU enhances the robustness of fine-grained petal feature representation and occluded object detection by fusing and compressing multifrequency information. The bottleneck_ScConv sub-module reduces model size while retaining strong semantic modeling capability, thereby enabling high-precision and low-latency object detection on resource-constrained edge devices. The ScConvC3k2 module provides robust architectural support for real-time cherry blossom detection in agricultural environments, demonstrating exceptional performance in balancing model efficiency and detection accuracy. A schematic of the module architecture is presented in

Figure 4.

2.4.2. MSCA Module

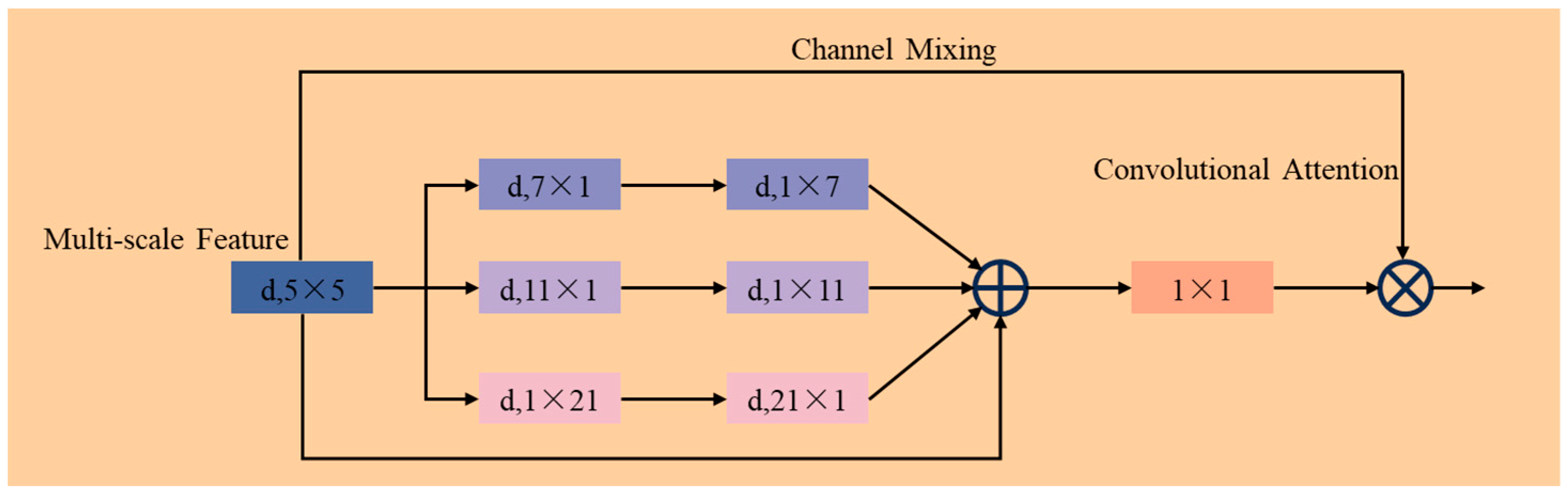

The MSCA (multi-scale channel attention) module [18] is an efficient feature enhancement mechanism that integrates multi-scale receptive fields and channel attention, effectively improving the model’s capability to represent critical objects amid intricate scenes. The mechanism comprises three components. First, local spatial information is aggregated through a depth-wise convolution to construct a foundational feature representation. Second, multiple depth-wise strip convolution branches are designed to extract contextual semantic features in parallel with varying receptive fields, enabling multi-scale information representation. Finally, a 1 × 1 convolution is employed to model the dependencies between channels, generating adaptive channel attention weights that reweight the feature maps. In its architectural design, the MSCA module incorporates multiple depth-wise separable convolution kernels of varying sizes, including 5 × 5, 1 × 7, 7 × 1, 1 × 11, 11 × 1, 1 × 21, and 21 × 1, to capture semantic information across multiple directions and scales. All convolution operations use a dilation rate of 1 to maintain computational efficiency. MSCA dynamically modulates the input feature maps via channel-wise weighting, enhancing key region representations and suppressing background interference, thereby improving overall detection accuracy [19]. Its multi-scale structure enables the model to effectively adapt to objects of varying sizes and shapes, while the channel attention enhances global perception. Compared with traditional self-attention mechanisms, MSCA maintains strong semantic modeling capability while reducing computational complexity, making it suitable for embedded deployment in lightweight detection networks. The structure of the MSCA module is illustrated in Figure 5.
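The computational benefit of the strip kernels listed above can be illustrated by counting weights. The sketch below compares the depth-wise kernels named in the text with hypothetical full k × k depth-wise kernels covering the same extents. The channel count of 64 is an illustrative assumption, not a value reported for the MSCA layer, and biases are ignored.

```python
# Kernel shapes of the depth-wise branches listed in the text.
MSCA_KERNELS = [(5, 5), (1, 7), (7, 1), (1, 11), (11, 1), (1, 21), (21, 1)]


def depthwise_weights(kernels, channels):
    """Weights of depth-wise convolutions: one k_h x k_w filter per channel (bias ignored)."""
    return sum(kh * kw for kh, kw in kernels) * channels


def square_equivalent_weights(channels, sizes=(7, 11, 21)):
    """Hypothetical alternative: the 5 x 5 kernel plus full k x k depth-wise kernels."""
    return (5 * 5 + sum(k * k for k in sizes)) * channels


channels = 64  # illustrative assumption, not taken from the paper's MSCA layer
strip = depthwise_weights(MSCA_KERNELS, channels)
square = square_equivalent_weights(channels)
pointwise = channels * channels  # the 1 x 1 convolution that models channel dependencies
print(strip, square, pointwise)
```

Each pair of 1 × k and k × 1 strips costs 2k weights per channel instead of k², which is where most of the saving comes from for the 21-wide branch.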

In this study, the MSCA module was integrated into the 17th layer of the YOLO architecture to enhance the model’s ability to perceive cherry blossom features under small-scale, dense overlap, and complex background conditions. The MSCA module effectively captures boundary and semantic variations of blossoms at different scales through its multi-scale receptive field convolution branches, enhancing the model’s robustness under varying shooting angles and lighting conditions. Simultaneously, the channel attention mechanism amplifies the response intensity of key regions, effectively suppressing background noise and improving the detection accuracy of small and occluded floral targets.

2.4.3. DualConv Module

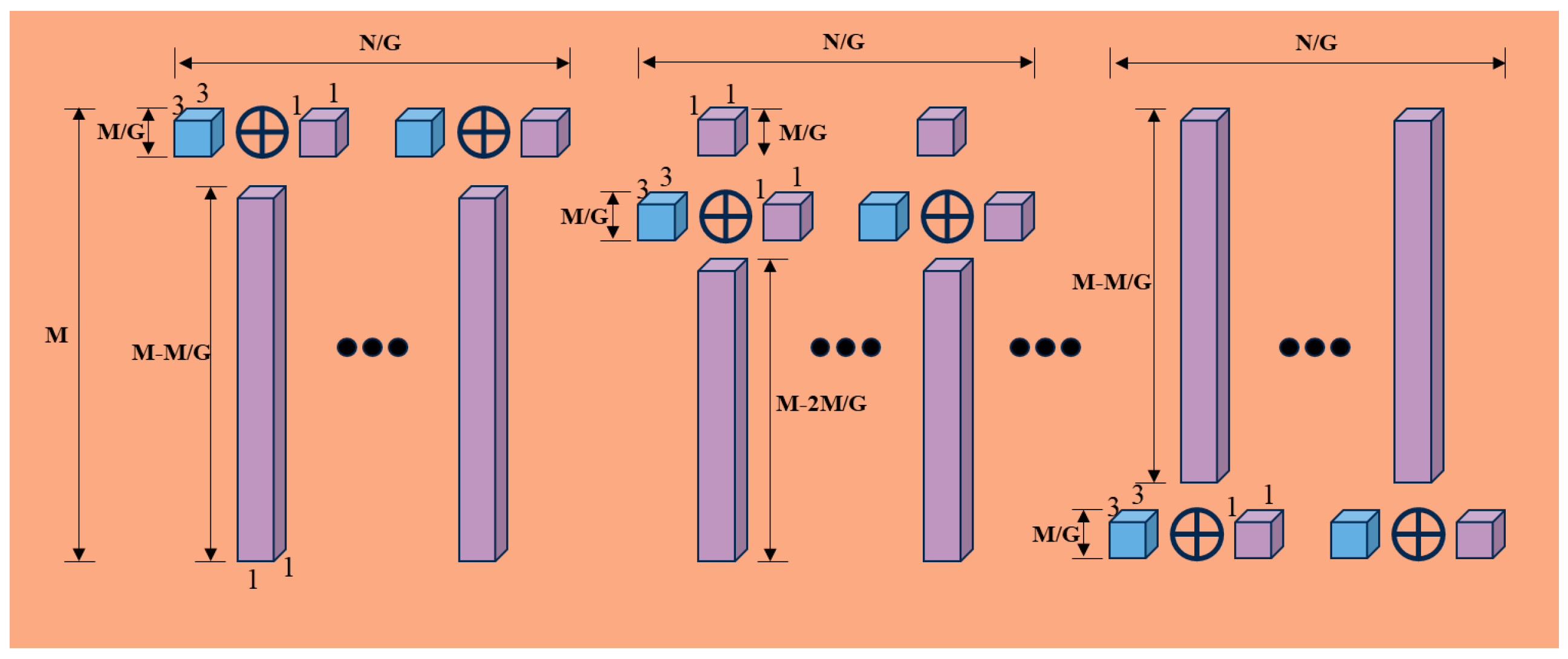

The DualConv module [

20] is a structurally efficient and computationally lightweight convolutional module that reduces model complexity while maintaining or even enhancing feature extraction capability. The module adopts a dual-branch parallel design: one branch utilizes a 3 × 3 group convolution to capture local spatial details, while the other employs a 1 × 1 pointwise convolution to strengthen interchannel information exchange. After the outputs from both branches are fused, they are further processed using normalization and a nonlinear activation function to enable effective modeling of multi-scale information [

21]. By introducing a grouped convolution strategy, the DualConv module reduces the parameters and computational overhead, avoids channel information fragmentation, and enhances the continuity of feature expression. Compared with traditional convolutional structures, this module offers improved computational efficiency along with structural flexibility and scalability, making it particularly well suited for resource-constrained edge computing scenarios. The network structure of the DualConv module is illustrated in

Figure 6.
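The parameter saving from the dual-branch design can be made concrete with a weight count. This is a minimal sketch: the layer width of 64 channels and the group count of 4 are assumptions chosen for illustration (the text does not state the DualConv group count), and biases and normalization parameters are ignored.

```python
def standard_conv_params(c_in, c_out, k=3):
    """Weights of a dense k x k convolution (bias ignored)."""
    return c_in * c_out * k * k


def dualconv_params(c_in, c_out, groups, k=3):
    """Weights of a k x k group convolution plus a parallel 1 x 1 pointwise convolution."""
    if c_in % groups or c_out % groups:
        raise ValueError("channel counts must be divisible by groups")
    group_branch = (c_in // groups) * c_out * k * k
    pointwise_branch = c_in * c_out
    return group_branch + pointwise_branch


c_in = c_out = 64  # illustrative layer width (assumption)
groups = 4         # assumed group count; not stated for DualConv in the text
dense = standard_conv_params(c_in, c_out)
dual = dualconv_params(c_in, c_out, groups)
print(dense, dual, round(dual / dense, 3))
```

With these assumed settings the dual-branch layer holds roughly a third of the weights of a dense 3 × 3 convolution, and the pointwise branch still connects every input channel to every output channel.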

In the cherry blossom detection task, the DualConv module’s fine-grained sensitivity to texture and edge features enables it to accurately capture floral morphology in complex environments, such as petal contours and stamen structures, thereby improving detection accuracy. Furthermore, the DualConv module exhibits strong multi-scale feature fusion capabilities, enhancing the model’s robustness under varying lighting conditions, shooting angles, and background interference, thus ensuring stable performance in complex scenarios. Moreover, the module’s lightweight design significantly reduces computational burden, making it particularly suitable for deployment on mobile robots, field data acquisition devices, and other resource-constrained platforms. It provides a feasible technical solution for efficient and low-power object recognition systems in smart agriculture.

2.5. Model Test Setup and Evaluation Criteria

Model training and testing in this study were conducted on a Linux operating system (Ubuntu 22.04). The hardware configuration consisted of an Intel Xeon Platinum 8352V (Intel Corporation, Santa Clara, CA, USA) processor, an Nvidia GeForce RTX 4090 GPU (NVIDIA Corporation, Santa Clara, CA, USA), and 24 GB of RAM. The experiments were implemented using Python 3.10 and trained with the PyTorch 2.1.0 deep learning framework. The study used CUDA 12.1 as the GPU acceleration library to enhance training efficiency. The model training hyperparameter settings are shown in Table A1.

To comprehensively evaluate the model’s performance in cherry blossom detection, this study employed precision (P), recall (R), mean average precision (mAP), model size, parameters, computational complexity, and detection speed (FPS) as the primary evaluation metrics [22]. These metrics collectively assessed the model’s overall performance in terms of detection accuracy, resource consumption, and inference efficiency, ensuring the practical relevance of the experimental results.
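The standard definitions of these metrics, written with the symbols defined below, are assumed to be the following. The integral form of AP and the expression of T in seconds are conventional choices rather than details confirmed by the text.

```latex
\begin{align}
P   &= \frac{TP}{TP + FP} \times 100\% \\
R   &= \frac{TP}{TP + FN} \times 100\% \\
AP  &= \int_{0}^{1} P(R)\,\mathrm{d}R \\
mAP &= \frac{1}{K}\sum_{i=1}^{K} AP_{i} \\
FPS &= \frac{1}{T}
\end{align}
```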

Here, P denotes precision (%); R denotes recall (%); AP refers to average precision (%); and mAP indicates the mean average precision across categories (%). TP denotes the number of true positives, i.e., correctly identified cherry blossoms; FP denotes the number of false positives, i.e., incorrectly identified cherry blossoms; FN denotes the number of false negatives, i.e., missed detections of cherry blossoms; and TN denotes the number of true negatives, i.e., correctly identified background objects. K represents the total number of cherry blossom categories present in the dataset, T denotes the inference time per image, and FPS (frames per second) quantifies the number of images processed per second.

2.6. Test Bench Pollination Test Design

2.6.1. Selection of Test Factors

To analyze the effects of different spray parameters on cherry blossom pollination performance, this study selected spray distance, spray duration, and liquid flow rate as key variables for a controlled spray pollination experiment [23,24]. These three factors can all influence pollen liquid deposition and coverage uniformity during pollination. Therefore, optimizing spray parameters is essential for improving overall pollination efficiency.

(1) Spray Distance

Spray distance refers to the physical gap between the nozzle outlet and the target blossom, which directly influences the uniformity of liquid distribution and the effectiveness of pollination. Too short a spray distance may result in excessive local accumulation of liquid, reducing coverage and increasing pollen liquid waste. Conversely, an overly long distance may result in droplet dispersion in the air, reducing effective deposition on the target surface. This experiment employed five spray distance levels—1.3, 2.0, 3.0, 4.0, and 4.7 cm—to investigate their effects on pollination performance.

(2) Spray Time

Spray time refers to the duration during which the nozzle continuously releases pollen liquid in the experiment. A short spray time may result in insufficient pollen release, making effective pollination difficult to achieve. Excessive spray time may lead to the overaccumulation of pollen liquid, reduced adhesion efficiency, and resource waste. To determine an optimal spray time, this experiment set five spray time levels—0.7, 1.0, 1.5, 2.0, and 2.3 s—to analyze their effects on pollination performance.

(3) Liquid Flow Rate

Liquid flow rate refers to the volume of pollen liquid discharged from the nozzle per unit time. It influences droplet size, kinetic energy, and distribution density on the target surface. High flow rates may result in larger droplets and wider spray coverage, but reduced deposition in the target area. Low flow rates may lead to insufficient droplet momentum and increased drift, thereby reducing spray penetration and overall coverage, which negatively impacts pollination efficiency. Five liquid flow rate levels were set (229, 270, 330, 390, and 431 mL/min) to evaluate spray uniformity and pollination performance under varying flow conditions.
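Because flow rate is given in mL/min and spray time in seconds, the nominal volume released per spray event follows from a unit conversion. The sketch below assumes a constant flow for the whole spray time (no valve ramp-up or shut-off lag), which is an idealization, and the function name is illustrative.

```python
def nominal_spray_volume_ml(flow_ml_per_min, spray_time_s):
    """Volume released in one spray event, assuming constant flow over the whole duration."""
    if flow_ml_per_min < 0 or spray_time_s < 0:
        raise ValueError("flow rate and spray time must be non-negative")
    return flow_ml_per_min * spray_time_s / 60.0


# Factor levels listed in the text.
FLOW_LEVELS_ML_MIN = [229, 270, 330, 390, 431]
TIME_LEVELS_S = [0.7, 1.0, 1.5, 2.0, 2.3]

lowest = nominal_spray_volume_ml(FLOW_LEVELS_ML_MIN[0], TIME_LEVELS_S[0])
center = nominal_spray_volume_ml(FLOW_LEVELS_ML_MIN[2], TIME_LEVELS_S[2])
highest = nominal_spray_volume_ml(FLOW_LEVELS_ML_MIN[-1], TIME_LEVELS_S[-1])
print(round(lowest, 2), round(center, 2), round(highest, 2))
```

The measured deposition on the target is expected to be lower than this nominal volume, since part of the spray misses or runs off the target surface.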

2.6.2. Quadratic Orthogonal Rotational Combination Test

To investigate the effects of three key parameters (spray distance, spray time, and liquid flow rate) on the pollination performance of cherry blossoms and to further optimize spraying conditions, this study employed a quadratic orthogonal rotational combination experimental design. The selection of experimental factors and their levels was based on the results of preliminary single-factor experiments. Details are provided in Table 1. In this design, the three coded factor variables represent spray distance (cm), spray time (s), and liquid flow rate (mL/min), respectively, and the two response variables correspond to liquid deposition (g) and liquid coverage rate (%), respectively. These indicators were used to comprehensively evaluate the effectiveness of spray-based pollination. A multifactor interaction analysis was performed to identify the primary and secondary effects of each parameter on pollination performance, providing data support for parameter optimization.
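The five levels listed for each factor are consistent with a rotatable central composite layout for three factors, in which the axial (star) points sit at a coded distance of (2^3)^(1/4) ≈ 1.682 from the center. The sketch below decodes the coded levels into physical values. The center values and step sizes are inferred from the listed levels, not quoted from Table 1, and the names are illustrative.

```python
K_FACTORS = 3
ALPHA = (2 ** K_FACTORS) ** 0.25  # axial distance for a rotatable design, about 1.682

# (center value, step per coded unit, decimals used when reporting); inferred assumptions.
FACTORS = {
    "spray_distance_cm": (3.0, 1.0, 1),
    "spray_time_s": (1.5, 0.5, 1),
    "flow_rate_ml_min": (330.0, 60.0, 0),
}

CODED_LEVELS = [-ALPHA, -1.0, 0.0, 1.0, ALPHA]


def decode(center, step, coded):
    """Map a coded level to its physical value: x = center + step * coded."""
    return center + step * coded


def factor_levels(name):
    center, step, ndigits = FACTORS[name]
    return [round(decode(center, step, c), ndigits) for c in CODED_LEVELS]


for name in FACTORS:
    print(name, factor_levels(name))
```

Rounded to the reporting precision, the decoded values match the levels given in Section 2.6.1 for all three factors.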

3. Results

3.1. SMD-YOLO Cherry Blossom Detection Model Results

3.1.1. Comparison Experiments at Different Positions of the ScConvC3k2 Module

To evaluate the effectiveness and adaptability of the proposed ScConvC3k2 module within the network architecture, the module was integrated into both the backbone and neck, resulting in four distinct structural configurations for comparative experiments. The evaluation results of the detection performance for each configuration are presented in Table 2.

As shown in Table 2, the ScConvC3k2 module consistently improved performance across different configurations, indicating strong generalizability and feature fusion capability. Notably, the configuration with ScConvC3k2 in the neck (C3k2–ScConvC3k2) achieved the best detection performance, with a precision of 84.8%, recall of 85.2%, and mAP of 92.2%. Compared to the baseline configuration (C3k2–C3k2), this structure showed improvements of 1.0, 0.6, and 0.7 percentage points in precision, recall, and mAP, respectively. In comparison with the ScConvC3k2–C3k2 and ScConvC3k2–ScConvC3k2 configurations, it also demonstrated varying degrees of improvement in precision, recall, and mAP.

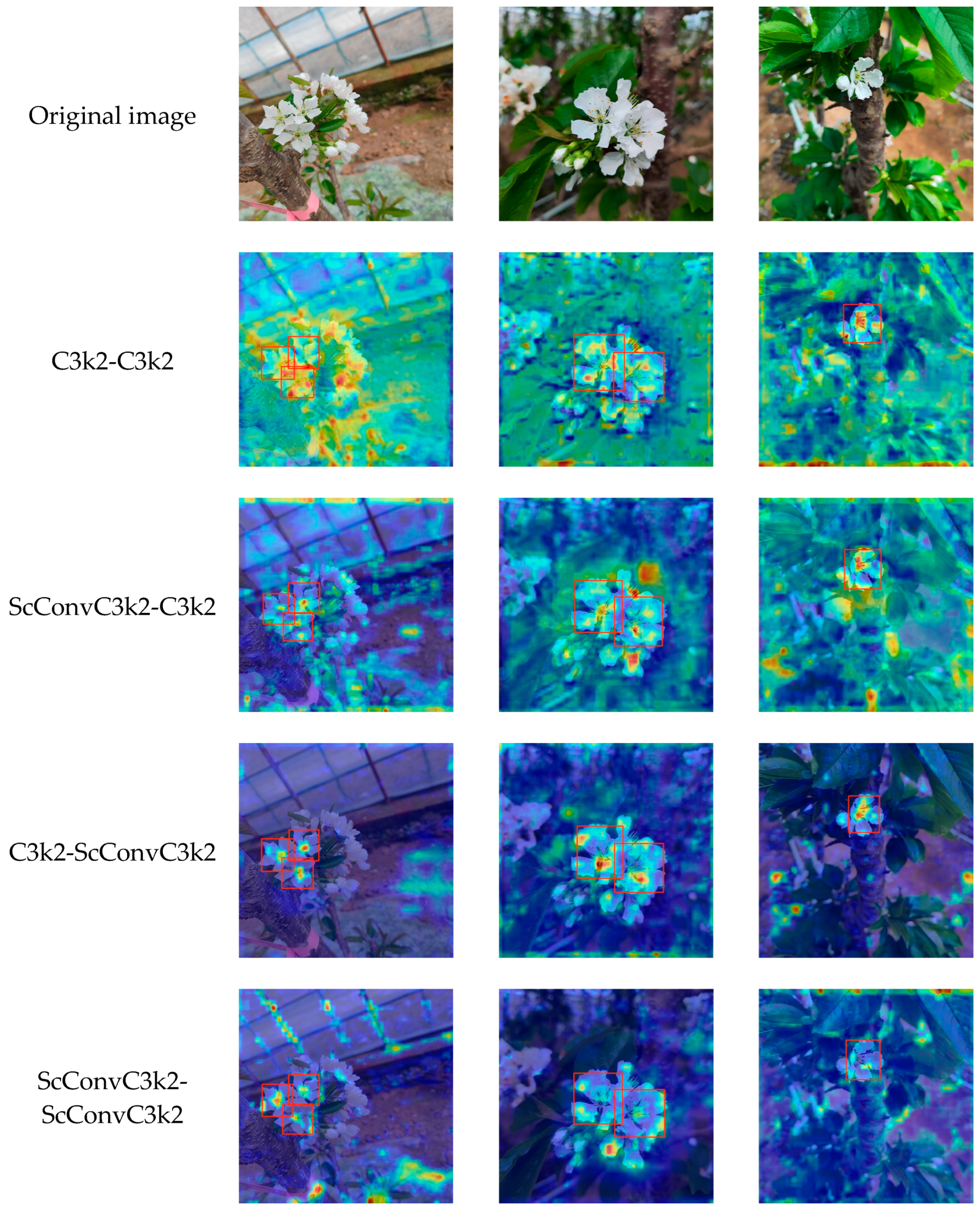

To further validate the effectiveness of the ScConvC3k2 module at different network positions, Grad-CAM was employed to visualize the feature maps of detection models with various structural combinations, as shown in

Figure 7. The C3k2-C3k2 combination exhibits dispersed activation regions, blurred target boundaries, and significant background interference. When ScConvC3k2 is introduced into the backbone (ScConvC3k2-C3k2), target perception becomes slightly more concentrated and edge enhancement is observed; however, redundant activations remain. This indicates that its representational capacity in the shallow feature stage is limited, mainly due to the smaller receptive field and longer gradient propagation paths at this level, which hinder sufficient global information integration. As a result, the advantages of SRU in reweighting spatial distribution features and CRU in fusing multifrequency fine-grained information cannot be fully exploited. In contrast, introducing ScConvC3k2 in the neck (C3k2-ScConvC3k2) leads to more focused activation regions, precise localization, and reduced background interference. This suggests that during the feature fusion stage, the larger receptive field and shorter gradient flow paths enable ScConvC3k2 to more effectively capture key target-region features, allowing SRU to enhance edge contours and CRU to improve the robustness of fine-grained petal feature representation. Although applying ScConvC3k2 in both the backbone and neck (ScConvC3k2-ScConvC3k2) further increases the overall activation intensity, some samples exhibit overly large activated regions around the target, implying that stacking the module may introduce redundant information and compromise discriminative accuracy.

As shown in Table 2 and Figure 7, integration of the ScConvC3k2 module into the neck best leverages its multi-scale feature fusion advantages. Therefore, the C3k2–ScConvC3k2 configuration was selected as the improved model architecture, designated as the YOLOv11-S model for subsequent experimental studies.

3.1.2. Comparative Experiments on Attention Mechanisms

To evaluate the effectiveness of the proposed MSCA mechanism in object detection, we conducted comparative experiments by integrating different attention modules—CA [

25], EMA [

26], and GAM [

27]—into the same position of the YOLOV11-S model. All models were trained and tested under identical conditions. The results are summarized in

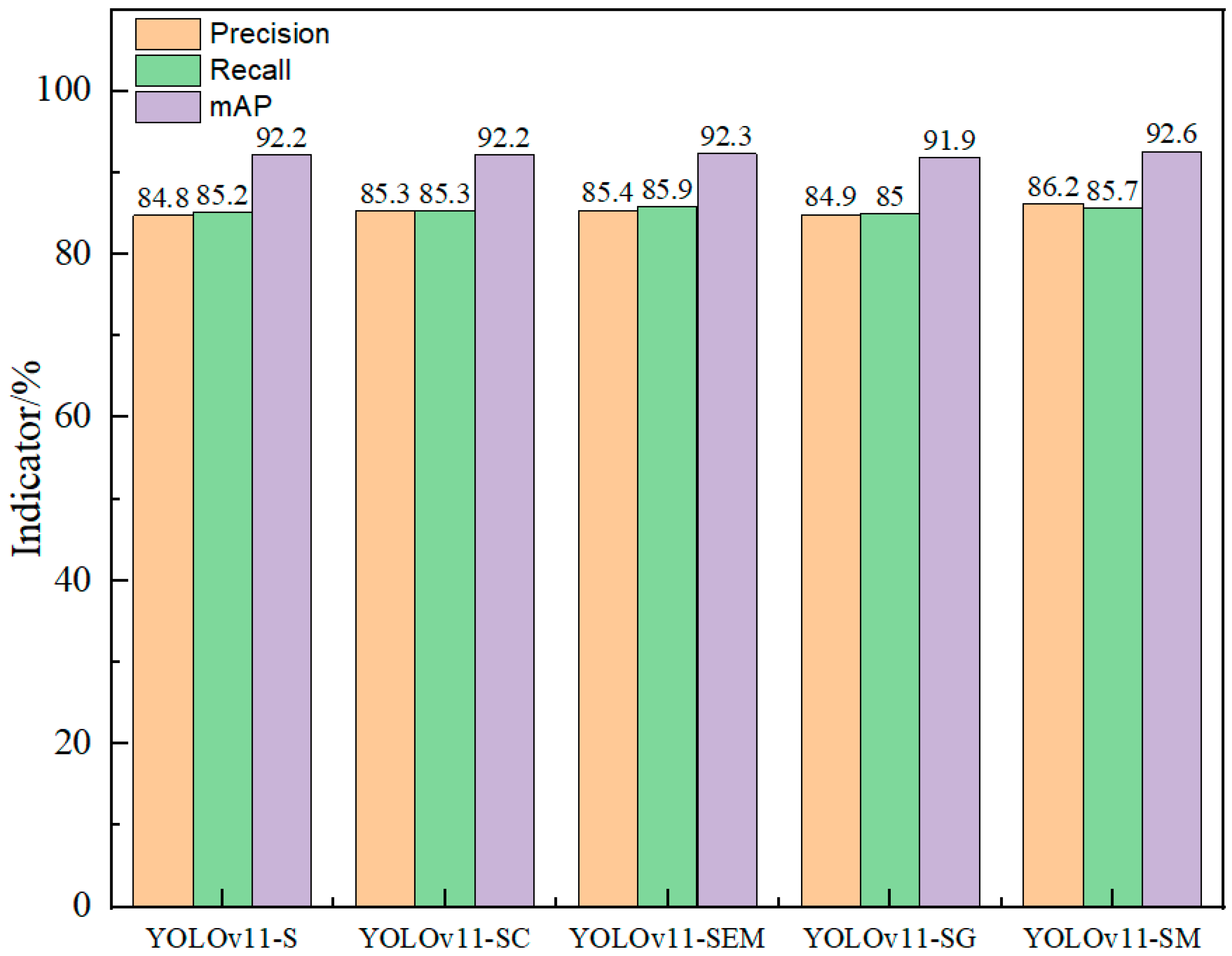

Figure 8.

As shown in Figure 8, the YOLOv11-SM model incorporating the MSCA module achieved the highest precision and mAP among the compared models, with precision, recall, and mAP reaching 86.2%, 85.7%, and 92.6%, respectively. Compared to the baseline YOLOv11-S model without attention mechanisms, YOLOv11-SM improved precision, recall, and mAP by 1.4, 0.5, and 0.4 percentage points, respectively. When compared with YOLOv11-SC and YOLOv11-SG, the precision of YOLOv11-SM increased by 0.9 and 1.3 percentage points, recall by 0.4 and 0.7 percentage points, and mAP by 0.4 and 0.7 percentage points, respectively. Although its recall was slightly lower than that of the YOLOv11-SEM model, YOLOv11-SM still achieved 0.8 and 0.3 percentage-point improvements in precision and mAP, respectively. These results indicate that the MSCA mechanism effectively enhances the model’s feature representation capabilities, leading to superior accuracy and stability in cherry blossom detection.

3.1.3. Comparison of Conv Modules

To validate the performance advantages of the DualConv module used in this study for object detection tasks, we replaced the same module in the YOLOv11-SM model with LDConv [28], RFAConv [29], SAConv [30], and SPDConv [31] modules and compared their performance with that of the DualConv module employed in this study. The experimental results are presented in Table 3.

As shown in Table 3, the SMD-YOLO model utilizing the DualConv module achieved the highest recall and the best overall balance across the three metrics, with precision of 87.6%, recall of 86.1%, and mAP of 93.1%. Compared to the baseline YOLOv11-SM model, SMD-YOLO achieved increases of 1.4, 0.4, and 0.5 percentage points in precision, recall, and mAP, respectively. When compared with the SML-YOLO and SMR-YOLO models, SMD-YOLO demonstrated precision improvements of 1.0 and 2.3 percentage points, recall improvements of 1.6 and 0.8 percentage points, and mAP improvements of 0.2 and 0.3 percentage points, respectively. Although the precision of SMD-YOLO is slightly lower than that of the SMA-YOLO model, it achieved higher recall and mAP by 1.2 and 0.3 percentage points, respectively. Compared to the SMP-YOLO model, SMD-YOLO yielded a 1.2 percentage-point improvement in precision and a 0.4 percentage-point improvement in recall, despite a slight decrease in mAP. These results demonstrate that the DualConv module enhances the accuracy and stability of the detection model in the cherry blossom identification task, providing a more robust structural foundation for future deployment.

3.1.4. Ablation Experiment

To further evaluate the effectiveness of the individual modules integrated into the SMD-YOLO model, ablation experiments were conducted using YOLOv11 as the baseline on a custom cherry blossom dataset. The impact of the ScConvC3k2, MSCA, and DualConv modules on detection performance was analyzed. In Table 4, “√” indicates the inclusion of a module, while “×” denotes its absence. S, M, and D represent the ScConvC3k2, MSCA, and DualConv modules, respectively.

As shown in Table 4, the proposed SMD-YOLO model achieved improvements of 3.8, 1.5, and 1.6 percentage points in precision, recall, and mAP, respectively, compared to the baseline YOLOv11. Additionally, SMD-YOLO outperformed all other models across all evaluation metrics, demonstrating robust detection performance.

The addition of each individual module led to varying degrees of performance improvement. Compared to the original YOLOv11 model, YOLOv11-S improved precision, recall, and mAP by 1.0, 0.6, and 0.7 percentage points, respectively. YOLOv11-M achieved increases of 0.7, 0.9, and 0.9 percentage points in precision, recall, and mAP, respectively. The YOLOv11-D model achieved improvements of 0.2, 0.4, and 0.4 percentage points in precision, recall, and mAP, respectively. These results indicate that incorporating ScConvC3k2, MSCA, or DualConv individually can effectively enhance detection performance. Models with two combined modules generally exhibited cumulative performance gains. For example, YOLOv11-SM showed consistent improvements over YOLOv11, YOLOv11-S, and YOLOv11-M across all three metrics. Similarly, YOLOv11-SD outperformed YOLOv11, YOLOv11-S, and YOLOv11-D in precision, recall, and mAP. YOLOv11-MD showed gains over YOLOv11, YOLOv11-M, and YOLOv11-D in precision and mAP, although its recall (84.3%) was lower than that of all three. These findings support the effectiveness and rationality of the SMD-YOLO model, which combines all three modules. It not only achieves the best accuracy but also offers advantages in structural simplicity and computational efficiency, making it particularly suitable for deployment in resource-constrained agricultural environments.
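Pairwise claims about an ablation table are easy to verify mechanically. The sketch below uses the values in Table 4 and lists every metric on which a two-module model fails to exceed the baseline or one of its single-module components. The helper name `regressions` and the data layout are hypothetical, chosen only for illustration.

```python
# Precision, recall and mAP (%) copied from Table 4.
TABLE_4 = {
    "YOLOv11": (83.8, 84.6, 91.5),
    "YOLOv11-S": (84.8, 85.2, 92.2),
    "YOLOv11-M": (84.5, 85.5, 92.4),
    "YOLOv11-D": (84.0, 85.0, 91.9),
    "YOLOv11-SM": (86.2, 85.7, 92.6),
    "YOLOv11-SD": (85.9, 85.5, 92.6),
    "YOLOv11-MD": (85.0, 84.3, 92.8),
    "SMD-YOLO": (87.6, 86.1, 93.1),
}
METRICS = ("precision", "recall", "mAP")


def regressions(model, references, table=TABLE_4):
    """List (reference, metric) pairs where `model` does not exceed the reference."""
    return [
        (ref, metric)
        for ref in references
        for metric, ours, theirs in zip(METRICS, table[model], table[ref])
        if ours <= theirs
    ]


if __name__ == "__main__":
    checks = {
        "YOLOv11-SM": ["YOLOv11", "YOLOv11-S", "YOLOv11-M"],
        "YOLOv11-SD": ["YOLOv11", "YOLOv11-S", "YOLOv11-D"],
        "YOLOv11-MD": ["YOLOv11", "YOLOv11-M", "YOLOv11-D"],
    }
    for model, refs in checks.items():
        print(model, regressions(model, refs) or "improves on every reference")
```

With the Table 4 values, the only entries flagged are the recall of YOLOv11-MD against each of its three references.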

Based on the comparative and ablation experiments presented in Section 3.1.1, Section 3.1.2, Section 3.1.3, and Section 3.1.4, it is evident that the ScConvC3k2 module, MSCA mechanism, and DualConv structure each contribute effectively to improving the detection performance of the model. The ScConvC3k2 module demonstrates consistent performance across different network positions, with the best results achieved when it is integrated into the neck (C3k2-ScConvC3k2 configuration). Compared to the backbone, the neck stage benefits from a larger receptive field and shorter gradient propagation paths, which facilitate more effective feature fusion and target representation. Grad-CAM visualizations further support this observation, showing more focused activation regions and sharper object boundaries when the module is applied in the neck, confirming its structural suitability. The MSCA mechanism also outperforms other mainstream attention modules in terms of detection accuracy and robustness, significantly enhancing feature representation and model stability. The DualConv module demonstrated superior detection performance in the convolution replacement experiments, effectively enhancing the modeling of local spatial features. The final SMD-YOLO model achieved the best overall performance across all evaluation metrics, demonstrating excellent detection capability and structural efficiency, making it well suited for lightweight deployment in resource-constrained agricultural scenarios.

3.1.5. Comparative Experiments of Different Models

To comprehensively evaluate the performance advantages of the SMD-YOLO model in cherry blossom object detection tasks, comparison experiments were conducted with the Faster R-CNN [32], YOLOv5 [33], YOLOv7 [34], YOLOv8 [35], YOLOv9 [36], YOLOv10 [37], YOLOv11, and YOLOv12 [38] models. All models were trained and tested under identical experimental conditions using a custom-built dataset. The comparison results are summarized in Table 5.

As shown in Table 5, the proposed SMD-YOLO model achieved precision of 87.6%, recall of 86.1%, and mAP of 93.1%, with a model size of 4765 KB, $2.28 \times 10^{6}$ parameters, a computational cost of 5.8 GFLOPs, and an inference speed of 75.76 FPS. Although SMD-YOLO consumed slightly more memory, parameters, and computation than YOLOv5, it improved precision, recall, and mAP by 5.6, 5.6, and 2.5 percentage points, respectively, demonstrating superior detection performance. Compared to the Faster R-CNN model, the SMD-YOLO model outperformed it in all metrics. Compared to YOLOv7, YOLOv8, YOLOv9, YOLOv10, YOLOv11, and YOLOv12, the SMD-YOLO model achieved precision improvements of 5.2, 4.7, 6.1, 2.8, 3.8, and 5.1 percentage points, respectively; recall improvements of 0.6, 1.2, 3.0, 3.5, 1.5, and 2.1 percentage points; and mAP improvements of 1.8, 2.9, 0.8, 2.6, 1.6, and 2.1 percentage points, respectively. In terms of resource consumption, SMD-YOLO also demonstrated superior efficiency relative to these six models, with model size reduced by 60.29, 13.54, 20.37, 15.40, 11.15, and 11.94%, respectively; parameter counts reduced by 62.06, 15.07, 12.88, 15.39, 11.71, and 10.83%; and computational cost decreased by 55.38, 14.71, 45.79, 29.27, 7.94, and 7.94%, respectively. In terms of detection speed, although the SMD-YOLO model is slower than the YOLOv11 model (75.76 vs. 79.75 FPS), the decrease is small, and its detection speed exceeds that of all other compared models. The SMD-YOLO model therefore still meets the real-time requirements of agricultural applications. By maintaining its lightweight characteristics while improving accuracy and resource efficiency, SMD-YOLO achieves a favorable balance among performance, size, and speed, making it particularly well suited for deployment on resource-constrained edge devices and smart agriculture platforms.
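The relative reductions in resource consumption follow from Table 5 as (other − SMD-YOLO) / other. The sketch below recomputes them from the table values; the function names are illustrative assumptions. Because the parameter counts in Table 5 are rounded to two decimals, the parameter reductions recomputed this way can differ from the quoted figures by a few tenths of a percent (for example, about 11.63% rather than 11.71% for YOLOv11), presumably because the quoted figures were derived from unrounded counts.

```python
# Model size (KB), parameters (millions) and GFLOPs copied from Table 5.
TABLE_5 = {
    "YOLOv7": (12001, 6.01, 13.0),
    "YOLOv8": (5511, 2.68, 6.8),
    "YOLOv9": (5984, 2.62, 10.7),
    "YOLOv10": (5631, 2.69, 8.2),
    "YOLOv11": (5363, 2.58, 6.3),
    "YOLOv12": (5411, 2.56, 6.3),
}
SMD_YOLO = (4765, 2.28, 5.8)


def relative_reduction(other, ours):
    """Percentage by which `ours` is smaller than `other`."""
    return 100.0 * (other - ours) / other


def reductions(table=TABLE_5, ours=SMD_YOLO):
    """Per-model reductions in (size, parameters, GFLOPs), rounded to 2 decimals."""
    return {
        name: tuple(round(relative_reduction(o, s), 2) for o, s in zip(values, ours))
        for name, values in table.items()
    }


def latency_ms(fps):
    """Mean per-frame latency implied by a throughput in frames per second."""
    return 1000.0 / fps


if __name__ == "__main__":
    for name, (size, params, flops) in reductions().items():
        print(f"{name:8s} size -{size}%  params -{params}%  GFLOPs -{flops}%")
    print(f"SMD-YOLO latency: {latency_ms(75.76):.1f} ms per frame")
```

At 75.76 FPS the implied mean latency is roughly 13.2 ms per frame.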

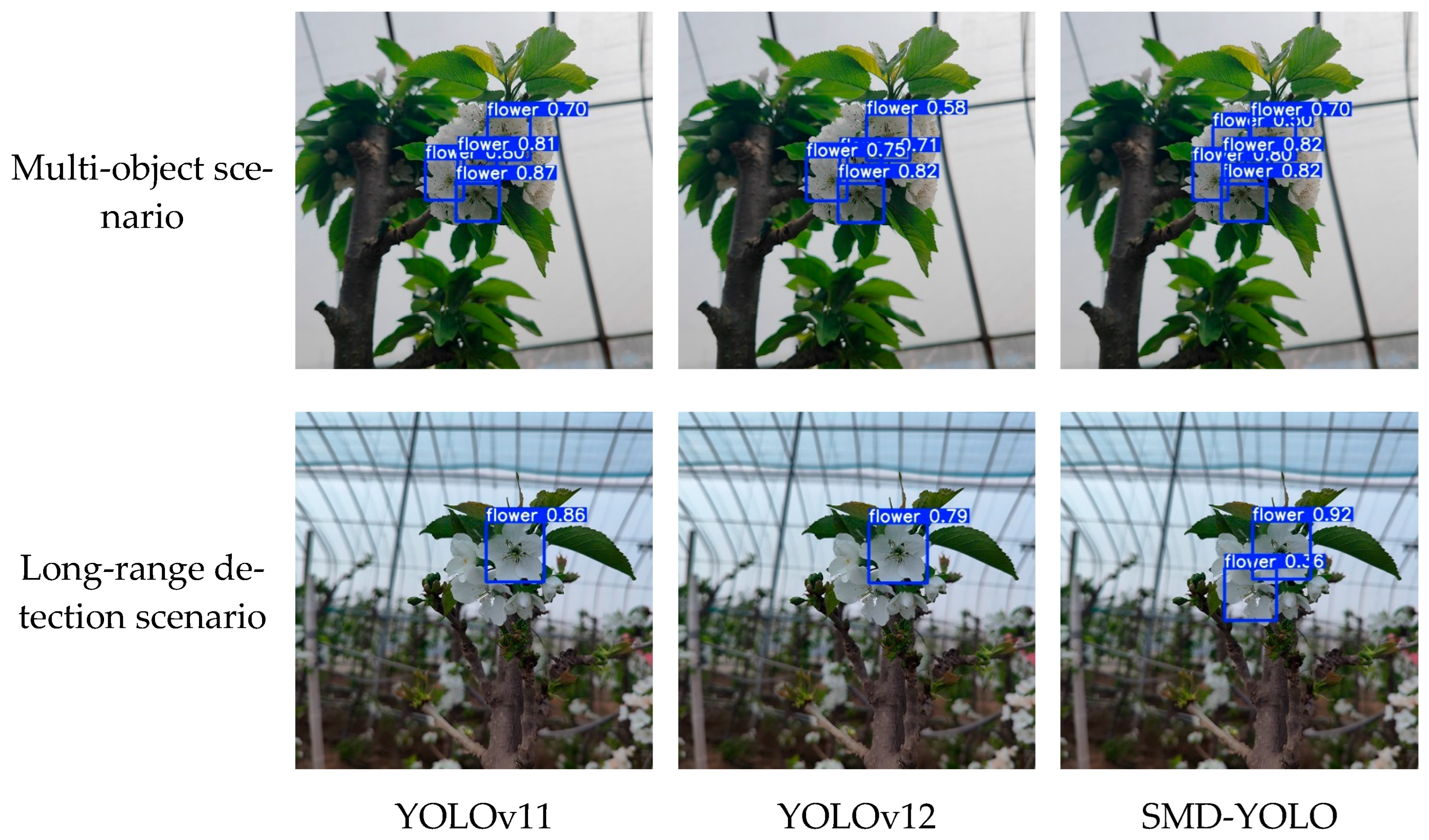

3.1.6. Comparative Visualization and Performance Analysis

To further validate the performance of the proposed SMD-YOLO model in real-world detection scenarios, we compared it with YOLOv11 and YOLOv12 on cherry blossom images of varying complexity. The detection results are illustrated in Figure 9. On single-object cherry blossom images, the SMD-YOLO model accurately identified the target regions with precise bounding boxes and high confidence scores, demonstrating strong detection performance. In contrast, while YOLOv11 and YOLOv12 were also able to detect the targets, their bounding boxes often exhibited lower confidence scores. On multi-object cherry blossom images, SMD-YOLO demonstrated superior target separation and spatial feature extraction capabilities, reliably detecting the majority of objects with minimal missed detections. In dense regions, YOLOv11 and YOLOv12 showed noticeable missed detections, resulting in a decline in overall performance. On images with long-distance targets and background interference, SMD-YOLO maintained high detection accuracy for small, distant objects and effectively suppressed background noise. In comparison, YOLOv11 and YOLOv12 suffered from reduced accuracy due to background interference, with a more significant decline in detection performance. The visualization of detection results provides direct evidence that the SMD-YOLO model performs better across these complex real-world detection scenarios. In particular, it exhibits higher precision, stability, and robustness under challenging agricultural conditions, such as dense object distribution, long-range detection, and cluttered backgrounds.

3.2. Pollination Experiment Results

To investigate the effects of spray distance, spray duration, and liquid flow rate on the pollination efficiency of cherry blossoms, a quadratic orthogonal rotational combination experiment was designed. Liquid deposition (g) and coverage (%) were selected as response variables to comprehensively evaluate spray precision and pollination efficiency. Ideally, the pollination liquid should be maximally deposited in the pistil region, while coverage should remain below 100% to prevent excessive droplet accumulation and resource waste. Greater deposition is not necessarily better. Instead, the pollen liquid should be deposited precisely and uniformly in the target area. The experimental design and measured data are presented in Table 6.
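The ±1.68 levels listed in Table 1 match the axial distance of a rotatable central composite layout for three factors, $(2^3)^{1/4} \approx 1.682$. As a rough illustration, the sketch below generates such a layout in coded units and maps it to physical units using the center values and steps read off Table 1. The number of center replicates (`n_center`) and all function names are assumptions made for this example; the run list actually used in the study is the one in Table 6.

```python
from itertools import product

# Center value and step per coded unit for each factor, read off Table 1.
FACTORS = {
    "spray_distance_cm": (3.0, 1.0),
    "spray_time_s": (1.5, 0.5),
    "flow_rate_ml_min": (330.0, 60.0),
}


def rotatable_alpha(k):
    """Axial distance of a rotatable central composite design with k factors."""
    return (2 ** k) ** 0.25


def coded_design(k, n_center):
    """Factorial, axial and center points in coded units.

    n_center (number of center replicates) is an assumption supplied by the caller.
    """
    alpha = rotatable_alpha(k)
    factorial = [tuple(float(v) for v in p) for p in product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for value in (-alpha, alpha):
            point = [0.0] * k
            point[i] = value
            axial.append(tuple(point))
    center = [(0.0,) * k for _ in range(n_center)]
    return factorial + axial + center


def to_actual(coded_point):
    """Map a coded point to physical units: actual = center + coded * step."""
    return tuple(
        center + c * step
        for c, (center, step) in zip(coded_point, FACTORS.values())
    )


if __name__ == "__main__":
    for run in coded_design(3, n_center=6):  # replicate count is an assumption
        distance, time_s, flow = to_actual(run)
        print(f"{distance:4.1f} cm  {time_s:3.1f} s  {flow:5.0f} mL/min")
```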

The experimental data presented in Table 6 were analyzed using Design-Expert 13.0 software, yielding second-order regression models for liquid deposition and coverage as functions of the three factors, where $X_1$ represents the spray distance (cm), $X_2$ represents the spray time (s), and $X_3$ represents the liquid flow rate (mL/min).
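For orientation, a second-order response-surface model in three factors generally takes the form shown below. This is the generic textbook form only: $Y$ stands for either response (liquid deposition or coverage), $X_1$, $X_2$, and $X_3$ denote spray distance, spray time, and liquid flow rate, the $\beta$ terms are placeholder coefficients, and $\varepsilon$ is the residual error. No fitted values from the study are implied.

$$
Y = \beta_0 + \sum_{i=1}^{3} \beta_i X_i + \sum_{i=1}^{3} \beta_{ii} X_i^{2} + \sum_{1 \le i < j \le 3} \beta_{ij} X_i X_j + \varepsilon
$$

The linear terms capture main effects, the squared terms capture curvature, and the cross terms capture two-factor interactions, giving ten coefficients per response.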

Analysis of variance (ANOVA) for the regression models (Table 7) indicated that both the liquid deposition and coverage models were highly significant overall (p < 0.0001). Moreover, the lack-of-fit p-values for both models were greater than 0.05, indicating no significant lack of fit. This suggests good agreement with the experimental data and supports the models' fitting performance and practical applicability. Regarding the factors influencing liquid deposition, spray duration ($X_2$) was the most significant (p < 0.0001), followed by liquid flow rate ($X_3$) (p = 0.0022), while spray distance ($X_1$) had no significant effect. For coverage, all three main factors ($X_1$, $X_2$, and $X_3$) showed highly significant effects (p < 0.001), with spray duration and flow rate contributing most prominently. Moreover, two of the quadratic terms had highly significant effects (p < 0.001) on both response variables, indicating the presence of strong nonlinear effects within the system.

To determine the optimal parameter combination for maximizing cherry blossom pollination quality, the regression models were solved using the optimization module in Design-Expert 13.0. The optimal combination was found to be: spray distance of 3.4 cm, spray duration of 1.9 s, and liquid flow rate of 339 mL/min. Under these conditions, the predicted deposition and coverage were 0.18 g and 97.25%, respectively. These results provide theoretical support for cherry blossom spray pollination and are also applicable to the design of efficient spray strategies and resource utilization within intelligent agricultural platforms.
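One quick sanity check on an optimum from a response-surface model is whether it lies inside the region that was actually tested. The sketch below converts the reported optimum to coded units using the center values and steps from Table 1 and compares each coordinate with the ±1.68 axial limit. The names used here are illustrative assumptions.

```python
# Center value and step per coded unit for each factor, read off Table 1.
LEVELS = {
    "spray_distance_cm": (3.0, 1.0),
    "spray_time_s": (1.5, 0.5),
    "flow_rate_ml_min": (330.0, 60.0),
}
AXIAL_LIMIT = 1.68  # largest coded level listed in Table 1

# Optimum reported in the text.
OPTIMUM = {
    "spray_distance_cm": 3.4,
    "spray_time_s": 1.9,
    "flow_rate_ml_min": 339.0,
}


def to_coded(name, actual):
    """Convert a physical factor value to coded units."""
    center, step = LEVELS[name]
    return (actual - center) / step


def inside_design_region(point, limit=AXIAL_LIMIT):
    """True if every factor lies within the coded range explored by the design."""
    return all(abs(to_coded(name, value)) <= limit for name, value in point.items())


if __name__ == "__main__":
    for name, value in OPTIMUM.items():
        print(f"{name}: coded level {to_coded(name, value):.2f}")
    print("inside tested region:", inside_design_region(OPTIMUM))
```

The reported optimum maps to coded levels of about 0.40, 0.80, and 0.15, all well within the tested range, so the predicted deposition and coverage are interpolations rather than extrapolations.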

4. Discussion

Compared with traditional methods, the SMD-YOLO model demonstrates significant advantages in detection accuracy, robustness, and response speed. Conventional object detection approaches typically rely on manually designed features, making them susceptible to variations in lighting, occlusion, and viewing angles, thereby limiting their adaptability to complex natural environments [39]. In contrast, SMD-YOLO benefits from end-to-end learning capabilities and stronger generalization performance, enabling it to adapt reliably to diverse scenarios. When evaluated against several existing floral detection models, SMD-YOLO exhibits superior performance. Specifically, compared to the VM-YOLO model for strawberry flower detection, SMD-YOLO achieves improvements of 0.5, 23.8, and 21.7 percentage points in precision, recall, and mAP, respectively [40]. Compared to the LT-YOLO model for apple flower detection, it improves by 4.4, 3.9, and 5.6 percentage points in the same metrics [41]. Relative to the lychee flower detection model based on a polyphyletic loss function, it shows a 5.2 percentage-point increase in mAP [42]. When compared to the Light-FC-YOLO model, SMD-YOLO yields gains of 3.5 and 5.3 percentage points in recall and mAP, respectively [43]. Although its recall is 0.2 percentage points lower than that of the YOLOv5s-SE7 model for cucumber flower detection, it still achieves higher precision and mAP by 2.1 and 2.6 percentage points, respectively [44]. While SMD-YOLO demonstrates clear performance advantages over other floral detection models, it still faces challenges under extreme conditions such as drastic lighting changes, severe occlusions, or high degrees of flower overlap, which may result in missed or false detections. Furthermore, although the model has been structurally optimized for lightweight deployment, it may still encounter computational limitations on ultra-low-power or highly constrained embedded devices. Future research may focus on applying techniques such as model pruning, quantization, and knowledge distillation to further compress and optimize the model, thereby enhancing its applicability in resource-constrained environments [45,46,47].

The application of the SMD-YOLO model in intelligent agricultural platforms demonstrates broad development potential, particularly in resource-constrained operational environments. By achieving coordinated optimization of detection performance, model size, and computational speed at the architectural level, SMD-YOLO not only operates efficiently on edge devices but also meets the real-time requirements of agricultural production. In real field conditions, the model can reliably handle various complex detection scenarios, including single-object, densely clustered objects, distant viewpoints, and strong background interference, exhibiting excellent generalization ability and detection robustness. The spraying pollination control system built on this model can significantly enhance pollination accuracy and efficiency, offering an effective alternative to manual pollination in traditional agriculture. The deployment of the SMD-YOLO model provides an intelligent sensing foundation for optimized allocation of agricultural resources, enabling functions such as precision spray path planning, target tracking, and variable-rate control, thereby further improving resource utilization efficiency.

To investigate the effects of spraying parameters on cherry blossom pollination, a dedicated spraying-pollination test platform was developed in this study. This platform integrates an adjustable spraying system, an STM32-based control module, and a dual-measurement system consisting of water-sensitive paper and an electronic balance to quantify both coverage and deposition. It enables precise control and quantitative assessment of key spraying parameters, such as spray distance, duration, and liquid flow rate. Under controlled indoor conditions, the platform demonstrates good repeatability and operational stability. However, certain limitations remain. While water-sensitive paper serves as a surrogate for floral stigmas and offers basic simulation capability, it cannot accurately replicate the three-dimensional morphology and biological adhesion characteristics of actual pistils, potentially leading to discrepancies between deposition metrics and true pollination efficacy. Moreover, the experiments were conducted in static indoor environments without accounting for field variables such as wind speed and humidity, which may significantly influence droplet behavior. Future improvements should enhance the platform’s environmental adaptability and biological fidelity to better simulate field conditions, thereby enabling a more comprehensive, multidimensional evaluation of spray-based pollination performance.

5. Conclusions

This study proposed the SMD-YOLO model for detecting and localizing cherry blossoms during pollination. The model achieved precision of 87.6%, recall of 86.1%, and mAP of 93.1% with a model size of 4765 KB, $2.28 \times 10^{6}$ parameters, computational cost of 5.8 GFLOPs, and an inference speed of 75.76 FPS. Compared to the YOLOv11 model, precision, recall, and mAP increased by 3.8, 1.5, and 1.6 percentage points, while model size, parameter count, and computation were reduced by 11.15%, 11.71%, and 7.94%, respectively. In addition, SMD-YOLO achieved a favorable balance between detection accuracy and model compactness compared to other models, effectively meeting the real-time and precision requirements of intelligent mechanical cherry pollination.

To address the optimization of key parameters in intelligent mechanical cherry pollination, a dedicated spray pollination test platform was designed and constructed. A quadratic orthogonal rotational combination design was employed to systematically evaluate the effects of spray distance, duration, and liquid flow rate on pollination performance. The optimal parameter combination was determined as a spray distance of 3.4 cm, spray duration of 1.9 s, and liquid flow rate of 339 mL/min. Under these conditions, the deposition and coverage reached 0.18 g and 97.25%, respectively, achieving efficient and precise spray-based pollination control. This provides both theoretical and technical support for precision pollination operations during the cherry blossom period.

In the future, the SMD-YOLO model can be extended to a variety of fruit tree management tasks. It is applicable not only to floral target detection and thinning assistance during the flowering period in cherry orchards but also holds potential for transferability to flowering-stage detection and pollination operations in other crops such as apples, pears, and strawberries. When integrated with intelligent agricultural machinery and IoT platforms, the model can serve as a core visual recognition module within smart orchard management systems, supporting unmanned operations, refined management, and environmentally sustainable resource utilization. It exhibits strong generalizability, scalability, and developmental potential.

Author Contributions

Conceptualization, L.R. and Y.D.; investigation, Y.D. and Y.L.; resources, L.R.; data curation, A.G.; writing—original draft preparation, Y.D.; writing—review and editing, L.R. and Y.D.; visualization, W.M. and X.H.; supervision, L.R.; project administration, L.R. and Y.S.; funding acquisition, Y.S. All authors have read and agreed to the published version of the manuscript.

Funding

We would like to acknowledge the financial support from the Key R&D Program of Shandong Province, China (2024TZXD045, 2024TZXD038) and the Innovation Team Fund for Fruit Industry of Modern Agricultural Technology System in Shandong Province (SDAIT-06-12).

Data Availability Statement

Dataset available on request from the authors.

Conflicts of Interest

The authors declare no conflicts of interest.

Appendix A

Table A1. Model training hyperparameter settings.

| Hyperparameter | Setting |
|---|---|
| Number of training epochs | 200 |
| Batch size | 32 |
| Optimizer | SGD |
| Learning rate schedule | 0.01 |
| Workers | 8 |
| Data augmentation policy | Mosaic augmentation, random flipping, affine transformation |

References

1. Piri, S.; Kiani, E.; Sedaghathoor, S. Study on fruitset and pollen-compatibility status in sweet cherry (Prunus avium L.) cultivars. Erwerbs-Obstbau 2022, 64, 165–170.
2. Eeraerts, M.; Smagghe, G.; Meeus, I. Pollinator diversity, floral resources and semi-natural habitat, instead of honey bees and intensive agriculture, enhance pollination service to sweet cherry. Agric. Ecosyst. Environ. 2019, 284, 106586.
3. Osterman, J.; Mateos-Fierro, Z.; Siopa, C.; Castro, H.; Castro, S.; Eeraerts, M. The impact of pollination requirements, pollinators, landscape and management practices on pollination in sweet and sour cherry: A systematic review. Agric. Ecosyst. Environ. 2024, 374, 109163.
4. Masuda, N.; Khalil, M.M.; Toda, S.; Takayama, K.; Kanada, A.; Mashimo, T. A Suspended Pollination Robot with a Flexible Multi-Degrees-of-Freedom Manipulator for Self-Pollinated Plants. IEEE Access 2024, 12, 142449–142458.
5. Li, G. Accurate Pollination Device of Kiwifruit Based on Vision Perception and Air-Liquid Spray. Master’s Thesis, Northwest A&F University, Yangling, China, 2023.
6. Liu, P.; Zhu, Y.; Liu, L.; Wang, C. A Self-Propelled Cherry Pollination Machine. CN212306437U, 8 January 2021.
7. Bu, K.; Li, N.; Xiao, L.; Cao, F.; Gao, M.; Wang, L.; Zhao, X.; Alslan. A Multi-Robotic Arm Pollination Robot Based on Machine Vision. Shandong Province CN118901577A, 8 November 2024.
8. Bai, Y.; Yu, J.; Yang, S.; Ning, J. An improved YOLO algorithm for detecting flowers and fruits on strawberry seedlings. Biosyst. Eng. 2024, 237, 1–12.
9. Li, G.; Suo, R.; Zhao, G.; Gao, C.; Fu, L.; Shi, F.; Dhupia, J.; Li, R.; Cui, Y. Real-time detection of kiwifruit flower and bud simultaneously in orchard using YOLOv4 for robotic pollination. Comput. Electron. Agric. 2022, 193, 106641.
10. Shang, Y.; Zhang, Q.; Song, H. Application of deep learning using YOLOv5s to apple flower detection in natural scenes. Trans. Chin. Soc. Agric. Eng. 2022, 38, 222–229.
11. Lyu, S.; Zhao, Y.; Liu, X.; Li, Z.; Wang, C.; Shen, J. Detection of male and female litchi flowers using YOLO-HPFD multi-teacher feature distillation and FPGA-embedded platform. Agronomy 2023, 13, 987.
12. Xu, T.; Qi, X.; Lin, S.; Zhang, Y.; Ge, Y.; Li, Z.; Dong, J.; Yang, X. A neural network structure with attention mechanism and additional feature fusion layer for tomato flowering phase detection in pollination robots. Machines 2022, 10, 1076.
13. Wang, Z.Y.; Zhang, C.P. An improved chilli pepper flower detection approach based on YOLOv8. Plant Methods 2025, 21, 71.
14. Ren, R.; Sun, H.; Zhang, S.; Zhao, H.; Wang, L.; Su, M.; Sun, T. FPG-YOLO: A detection method for pollenable stamen in ‘Yuluxiang’ pear under non-structural environments. Sci. Hortic. 2024, 328, 112941.
15. Khanam, R.; Hussain, M. Yolov11: An overview of the key architectural enhancements. arXiv 2024, arXiv:2410.17725.
16. Li, J.; Wen, Y.; He, L.S. Spatial and Channel Reconstruction Convolution for Feature Redundancy. In Proceedings of the 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 17–24 June 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 6153–6162.
17. Zhou, J.; Yang, X.; Ren, Z. SCConv-Denoising Diffusion Probabilistic Model Anomaly Detection Based on TimesNet. Electronics 2025, 14, 746.
18. Guo, M.H.; Lu, C.Z.; Hou, Q.; Liu, Z.; Cheng, M.M.; Hu, S.M. Segnext: Rethinking convolutional attention design for semantic segmentation. Adv. Neural Inf. Process. Syst. 2022, 35, 1140–1156.
19. Gugulothu, V.K.; Balaji, S. A novel EZS-MSCA and SeLu SqueezeNet-based lung tumor detection and classification. Soft Comput. 2023, 1–12.
20. Zhong, J.; Chen, J.; Mian, A. DualConv: Dual convolutional kernels for lightweight deep neural networks. IEEE Trans. Neural Netw. Learn. Syst. 2022, 34, 9528–9535.
21. Wang, G.; Gao, Y.; Xu, F.; Sang, W.; Han, Y.; Liu, Q. A Banana Ripeness Detection Model Based on Improved YOLOv9c Multifactor Complex Scenarios. Symmetry 2025, 17, 231.
22. Arias-Martínez, L.; Jáñez-Martino, F.; Fidalgo, E.; Rodríguez-González, P.; Fernández-Abia, A.I.; Alegre, E.; Barreiro, J. Automated Shrinkage and Gas Porosity Detection Using YOLO Model in Additive-Manufactured Aluminum Alloy Parts. Integr. Mater. Manuf. Innov. 2025, 1–13.
23. Liu, L. Influence of different liquid spray pollination parameters on pollen activity of fruit trees—Pear liquid spray pollination as an example. Horticulturae 2023, 9, 350.
24. Shi, F.; Jiang, Z.; Ma, C.; Zhu, Y.; Liu, Z. Controlled Parameters of Targeted Pollination Deposition by Air-Liquid Nozzle. Trans. Chin. Soc. Agric. Mach. 2019, 50, 115–124.
25. Hou, Q.; Zhou, D.; Feng, J. Coordinate attention for efficient mobile network design. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 13713–13722.
26. Ouyang, D.; He, S.; Zhang, G.; Luo, M.; Guo, H.; Zhan, J.; Huang, Z. Efficient multi-scale attention module with cross-spatial learning. In Proceedings of the ICASSP 2023-2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Rhodes, Greece, 4–9 June 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 1–5.
27. Liu, Y.; Shao, Z.; Hoffmann, N. Global attention mechanism: Retain information to enhance channel-spatial interactions. arXiv 2021, arXiv:2112.05561.
28. Zhang, X.; Song, Y.; Song, T.; Yang, D.; Ye, Y.; Zhou, J.; Zhang, L. LDConv: Linear deformable convolution for improving convolutional neural networks. Image Vis. Comput. 2024, 149, 105190.
29. Zhang, X.; Liu, C.; Yang, D.; Song, T.; Ye, Y.; Li, K.; Song, Y. RFAConv: Innovating spatial attention and standard convolutional operation. arXiv 2023, arXiv:2304.03198.
30. Qiao, S.; Chen, L.C.; Yuille, A.L. DetectoRS: Detecting objects with recursive feature pyramid and switchable atrous convolution. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021.
31. Sunkara, R.; Luo, T. No more strided convolutions or pooling: A new CNN building block for low-resolution images and small objects. arXiv 2022, arXiv:2208.03641.
32. Chen, M.; Yu, L.; Zhi, C.; Sun, R.; Zhu, S.; Gao, Z.; Ke, Z.; Zhu, M.; Zhang, Y. Improved faster R-CNN for fabric defect detection based on Gabor filter with Genetic Algorithm optimization. Comput. Ind. 2022, 134, 103551.
33. Gu, T.; Zhao, H.; Chang, Y.; Yan, S.; Cao, F.; Liu, W. A novel deep learning model based on YOLOv5 optimal method for coal gangue image recognition. Sci. Rep. 2025, 15, 18630.
34. Wang, C.Y.; Bochkovskiy, A.; Liao, H.Y.M. YOLOv7: Trainable bag-of-freebies sets new state-of-the-art for real-time object detectors. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Vancouver, BC, Canada, 17–24 June 2023; pp. 7464–7475.
35. Huang, Y.; Zhou, X.; Wang, G.; Bai, X. Lightweight defect detection algorithm for wire and arc additive manufacturing based on modified YOLOv8 model. J. Real-Time Image Process. 2025, 22, 129.
36. Wang, C.Y.; Yeh, I.H.; Mark Liao, H.Y. Yolov9: Learning what you want to learn using programmable gradient information. In European Conference on Computer Vision; Springer Nature: Cham, Switzerland, 2024; pp. 1–21.
37. Wang, A.; Chen, H.; Liu, L.; Chen, K.; Lin, Z.; Han, J.; Ding, G. Yolov10: Real-time end-to-end object detection. arXiv 2024, arXiv:2405.14458.
38. Tian, Y.; Ye, Q.; Doermann, D. Yolov12: Attention-centric real-time object detectors. arXiv 2025, arXiv:2502.12524.
39. Zhang, H.C.; Shen, Z.H.; Bian, L.M. Advances in plant phenology observation and prediction driven by modern imaging technologies and machine learning. Trans. Chin. Soc. Agric. Eng. 2025, 41, 14–25.
40. Wang, Y.; Lin, X.; Xiang, Z.; Su, W.-H. VM-YOLO: YOLO with VMamba for Strawberry Flowers Detection. Plants 2025, 14, 468.
41. Gao, A.; Wu, K.; Song, Y.; Ren, L.; Ma, W.; Liu, Y. Parameter Optimization and Testing of Apple Laser Flower Thinning Test Bed Based on LT-YOLO Inspection and Machine Vision. Trans. Chin. Soc. Agric. Mach. 2025, 56, 393–401.
42. Ye, J.; Wu, M.; Qiu, W.; Yang, J.; Lan, W. Litchi Flower Detection Method Based on Polyphyletic Loss Function. Trans. Chin. Soc. Agric. Mach. 2023, 54, 253–260.
43. Yi, X.; Chen, H.; Wu, P.; Wang, G.; Mo, L.; Wu, B.; Yi, Y.; Fu, X.; Qian, P. Light-FC-YOLO: A Lightweight Method for Flower Counting Based on Enhanced Feature Fusion with a New Efficient Detection Head. Agronomy 2024, 14, 1285.
44. Xu, X.; Wang, H.; Miao, M.; Zhang, W.; Zhang, Y.; Dai, H.; Zheng, Z.; Zhang, X. Cucumber flower detection based on YOLOv5s-SE7 within greenhouse environments. IEEE Access 2023, 11, 64358–64369.
45. Zhang, R.; Lu, Y.; Song, Z. YOLO sparse training and model pruning for street view house numbers recognition. J. Phys. Conf. Ser. 2023, 2646, 012025.
46. Zheng, Y.; Cheng, B. Lightweight ship recognition network based on model pruning. J. Wuhan Univ. Technol. (Transp. Sci. Eng. Ed.) 2025, 49, 682–691.
47. Zhu, Y.X.; Hao, S.S.; Zheng, W.J.; Jin, C.Q.; Yin, X.; Zhou, P. Multi-teacher cotton field weed detection model based on knowledge distillation. Trans. Chin. Soc. Agric. Eng. 2025, 41, 200–210.

Figure 1. Sample images from the cherry blossom dataset. Note: The black squares in the figure indicate that the image has been cut out.

Figure 2. Cherry blossom spray pollination test platform.

Figure 3. SMD-YOLO model architecture for cherry blossom detection.

Figure 4. Structural diagram of the ScConvC3k2 module.

Figure 5. Structural diagram of the MSCA module.

Figure 6. Structural diagram of the DualConv module. Note: M denotes the number of input channels (i.e., the depth of the input feature map), N represents the number of convolutional filters, which also corresponds to the number of output channels (i.e., the depth of the output feature map), and G refers to the number of groups used in group and dual convolutions.

Figure 7. Grad-CAM heatmaps of different ScConvC3k2 module configurations.

Figure 8. Bar chart comparing the performance of different attention modules. Note: The YOLOv11-S model does not include any attention mechanism, while the YOLOv11-SC, YOLOv11-SEM, YOLOv11-SG, and YOLOv11-SM models incorporate the CA, EMA, GAM, and MSCA mechanisms, respectively.

Figure 9. Detection results of different models.

Table 1. Experimental factors and levels.

| Level | Spray Distance (cm) | Spray Time (s) | Liquid Flow Rate (mL/min) |
|---|---|---|---|
| −1.68 | 1.3 | 0.7 | 229 |
| −1 | 2 | 1 | 270 |
| 0 | 3 | 1.5 | 330 |
| 1 | 4 | 2 | 390 |
| 1.68 | 4.7 | 2.3 | 431 |

Table 2. Comparison of the performance of different feature extraction fusion modules.

| Backbone | Neck | P/% | R/% | mAP/% |
|---|---|---|---|---|
| C3k2 | C3k2 | 83.8 | 84.6 | 91.5 |
| ScConvC3k2 | C3k2 | 83.5 | 84.8 | 91.7 |
| C3k2 | ScConvC3k2 | 84.8 | 85.2 | 92.2 |
| ScConvC3k2 | ScConvC3k2 | 84.1 | 84.9 | 92.0 |

Table 3. Impact of different convolutional modules on detection performance.

| Model | Precision/% | Recall/% | mAP/% |
|---|---|---|---|
| YOLOv11-SM | 86.2 | 85.7 | 92.6 |
| SMD-YOLO | 87.6 | 86.1 | 93.1 |
| SML-YOLO | 86.6 | 84.5 | 92.9 |
| SMR-YOLO | 84.3 | 85.3 | 92.8 |
| SMA-YOLO | 87.7 | 84.9 | 92.8 |
| SMP-YOLO | 86.4 | 85.7 | 93.5 |

Table 4.

Ablation experiments.

| Model | ScConvC3k2 | MSCA | DualConv | Precision/% | Recall/% | mAP/% |
|---|---|---|---|---|---|---|
| YOLOv11 | × | × | × | 83.8 | 84.6 | 91.5 |
| YOLOv11-S | √ | × | × | 84.8 | 85.2 | 92.2 |
| YOLOv11-M | × | √ | × | 84.5 | 85.5 | 92.4 |
| YOLOv11-D | × | × | √ | 84.0 | 85.0 | 91.9 |
| YOLOv11-SM | √ | √ | × | 86.2 | 85.7 | 92.6 |
| YOLOv11-SD | √ | × | √ | 85.9 | 85.5 | 92.6 |
| YOLOv11-MD | × | √ | √ | 85.0 | 84.3 | 92.8 |
| SMD-YOLO | √ | √ | √ | 87.6 | 86.1 | 93.1 |

Table 5.

Performance comparison of different detection models.

| Model | Precision/% | Recall/% | mAP/% | Model Size/KB | Parameters/10⁶ | GFLOPs | Inference Speed/FPS |
|---|---|---|---|---|---|---|---|
| Faster R-CNN | 82.4 | 82.5 | 90.4 | 821242 | 43.2 | 136.5 | 30.42 |
| YOLOv5 | 82.0 | 80.5 | 90.6 | 3820 | 1.76 | 4.1 | 74.89 |
| YOLOv7 | 82.4 | 85.5 | 91.3 | 12001 | 6.01 | 13.0 | 58.68 |
| YOLOv8 | 82.9 | 84.9 | 90.2 | 5511 | 2.68 | 6.8 | 72.54 |
| YOLOv9 | 81.5 | 83.1 | 92.3 | 5984 | 2.62 | 10.7 | 52.52 |
| YOLOv10 | 84.8 | 82.6 | 90.5 | 5631 | 2.69 | 8.2 | 68.40 |
| YOLOv11 | 83.8 | 84.6 | 91.5 | 5363 | 2.58 | 6.3 | 79.75 |
| YOLOv12 | 82.5 | 84.0 | 91.0 | 5411 | 2.56 | 6.3 | 70.42 |
| SMD-YOLO | 87.6 | 86.1 | 93.1 | 4765 | 2.28 | 5.8 | 75.76 |
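To make the trade-off in Table 5 easier to read, the following minimal sketch computes the change of SMD-YOLO relative to the YOLOv11 baseline. The two rows are copied from the table; the dictionary keys and helper names are illustrative and not part of the original work. Accuracy metrics are reported as percentage-point differences, while size, parameters, GFLOPs, and speed are reported as relative changes.

```python
# Rows copied from Table 5: baseline YOLOv11 and the proposed SMD-YOLO.
BASELINE = {"precision": 83.8, "recall": 84.6, "mAP": 91.5,
            "size_kb": 5363, "params_m": 2.58, "gflops": 6.3, "fps": 79.75}
PROPOSED = {"precision": 87.6, "recall": 86.1, "mAP": 93.1,
            "size_kb": 4765, "params_m": 2.28, "gflops": 5.8, "fps": 75.76}

ACCURACY_KEYS = ("precision", "recall", "mAP")
COST_KEYS = ("size_kb", "params_m", "gflops", "fps")


def point_gain(key: str) -> float:
    """Difference in percentage points (proposed minus baseline)."""
    return PROPOSED[key] - BASELINE[key]


def relative_change(key: str) -> float:
    """Relative change in percent with respect to the baseline."""
    return 100.0 * (PROPOSED[key] - BASELINE[key]) / BASELINE[key]


if __name__ == "__main__":
    for key in ACCURACY_KEYS:
        print(f"{key:>10}: {point_gain(key):+.1f} points")
    for key in COST_KEYS:
        print(f"{key:>10}: {relative_change(key):+.2f} %")
```

Read this way, the table shows higher precision, recall, and mAP together with a smaller model and fewer operations, at the cost of a modest drop in frame rate relative to YOLOv11.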

Table 6.

Experimental design and results of the quadratic orthogonal rotational combination test.

| Experiment ID | Spray Distance (cm) | Spray Time (s) | Liquid Flow Rate (mL/min) | Deposit Amount (g) | Coverage (%) |
|---|---|---|---|---|---|
| 1 | 4 | 1 | 270 | 0.08 | 76.85 |
| 2 | 3 | 1.5 | 330 | 0.15 | 95.25 |
| 3 | 3 | 1.5 | 229 | 0.08 | 84.95 |
| 4 | 2 | 2 | 390 | 0.15 | 86.54 |
| 5 | 3 | 2.3 | 330 | 0.2 | 96.84 |
| 6 | 3 | 1.5 | 330 | 0.16 | 96.89 |
| 7 | 2 | 2 | 270 | 0.13 | 84.56 |
| 8 | 2 | 1 | 390 | 0.1 | 81.26 |
| 9 | 4 | 2 | 270 | 0.14 | 84.62 |
| 10 | 4 | 1 | 390 | 0.09 | 90.48 |
| 11 | 3 | 0.7 | 330 | 0.1 | 85.47 |
| 12 | 3 | 1.5 | 431 | 0.1 | 95.64 |
| 13 | 3 | 1.5 | 330 | 0.16 | 96.89 |
| 14 | 3 | 1.5 | 330 | 0.15 | 70.94 |
| 15 | 1.3 | 1.5 | 330 | 0.13 | 97.25 |
| 16 | 3 | 1.5 | 330 | 0.15 | 97.85 |
| 17 | 3 | 1.5 | 330 | 0.17 | 80.64 |
| 18 | 4.7 | 1.5 | 330 | 0.14 | 98.04 |
| 19 | 3 | 1.5 | 330 | 0.15 | 93.41 |
| 20 | 4 | 2 | 390 | 0.16 | 98.04 |
| 21 | 3 | 1.5 | 330 | 0.15 | 95.84 |
| 22 | 2 | 1 | 270 | 0.09 | 76.45 |
| 23 | 3 | 1.5 | 330 | 0.16 | 96.87 |
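The structure of the design in Table 6 can be checked by sorting the 23 runs into factorial, axial, and centre points. The sketch below is a hedged illustration: the factor settings are copied from the table, the centre and step values are taken from Table 1, and the classification rule (all three factors at ±1, exactly one factor off centre, or none off centre) is the conventional description of a central composite design rather than anything stated explicitly in the text.

```python
from collections import Counter

# (spray distance cm, spray time s, flow rate mL/min) for runs 1-23 of Table 6.
RUNS = [
    (4, 1, 270), (3, 1.5, 330), (3, 1.5, 229), (2, 2, 390), (3, 2.3, 330),
    (3, 1.5, 330), (2, 2, 270), (2, 1, 390), (4, 2, 270), (4, 1, 390),
    (3, 0.7, 330), (3, 1.5, 431), (3, 1.5, 330), (3, 1.5, 330), (1.3, 1.5, 330),
    (3, 1.5, 330), (3, 1.5, 330), (4.7, 1.5, 330), (3, 1.5, 330), (4, 2, 390),
    (3, 1.5, 330), (2, 1, 270), (3, 1.5, 330),
]
CENTRE = (3, 1.5, 330)   # level 0 in Table 1
STEP = (1, 0.5, 60)      # physical change per coded unit, from Table 1
TOL = 1e-9


def classify(run):
    """Label a run as 'factorial', 'axial', 'centre', or 'other'."""
    coded = [(v - c) / s for v, c, s in zip(run, CENTRE, STEP)]
    off_centre = [abs(x) for x in coded if abs(x) > TOL]
    if not off_centre:
        return "centre"
    if len(off_centre) == len(coded) and all(abs(x - 1) < TOL for x in off_centre):
        return "factorial"
    if len(off_centre) == 1:
        return "axial"
    return "other"


COUNTS = Counter(classify(run) for run in RUNS)

if __name__ == "__main__":
    for kind, n in sorted(COUNTS.items()):
        print(kind, n)
```

With the runs as listed, the classification yields 8 factorial points, 6 axial points, and 9 centre replicates, which accounts for all 23 experiments.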

Table 7.

Analysis of variance (ANOVA).

| Source | Deposition F-Value | Deposition p-Value | Coverage F-Value | Coverage p-Value |
|---|---|---|---|---|
| Model | 56.46 | <0.0001 *** | 273.65 | <0.0001 *** |
| | 0.4659 | 0.5069 | 24.36 | 0.0003 ** |
| | 248.21 | <0.0001 *** | 239.83 | <0.0001 *** |
| | 14.44 | 0.0022 ** | 239.58 | <0.0001 *** |
| | 4.50 | 0.0537 | 3.89 | 0.0702 |
| | 0.0000 | 1.0000 | 23.67 | 0.0003 ** |
| | 1.12 | 0.3082 | 2.32 | 0.1515 |
| | 25.45 | 0.0002 ** | 1692.89 | <0.0001 *** |
| | 3.51 | 0.0835 | 107.70 | <0.0001 *** |
| | 211.91 | <0.0001 *** | 142.72 | <0.0001 *** |
| Lack of Fit | 0.5899 | 0.7092 | 2.11 | 0.1658 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).