Technologies and Data Analytics to Manage Grain Quality On-Farm—A Review

Abstract

1. Introduction

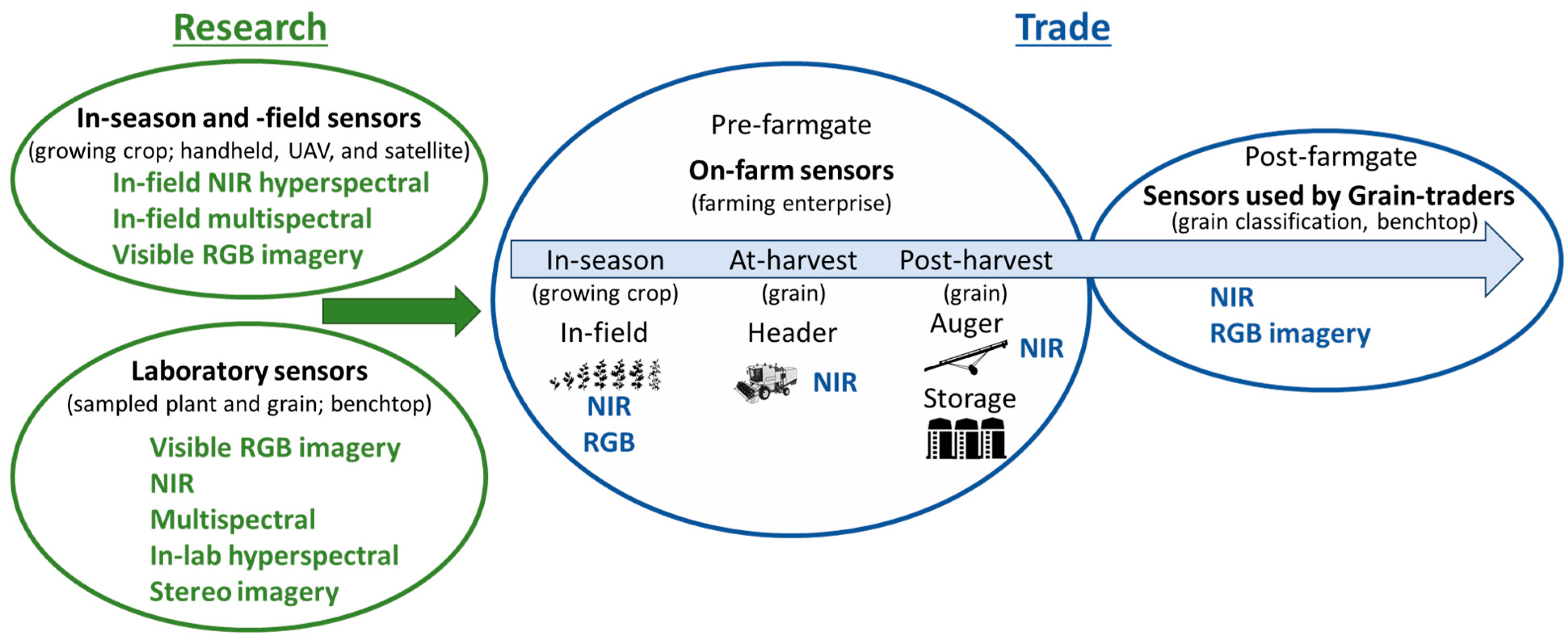

1.1. Options for On-Farm Sensing Grain Quality

1.2. Data Acquisition Systems and Sample Handling

2. Sensor Technologies Used in the Agricultural Production System

2.1. Digital RGB Camera

2.2. Stereo Cameras

2.3. Near Infrared Spectroscopy

2.4. Multispectral Imaging

2.5. Hyperspectral

2.5.1. In-Field Measurements

2.5.2. In-Laboratory Hyperspectral Imaging

3. Data Analysis and Modelling

3.1. Regression

3.2. Principal Component Analysis (PCA)

3.3. Partial Least Squares (PLS)

3.4. Linear Discriminant Analysis (LDA)

3.5. Support Vector Machine (SVM)

3.6. Decision Tree Learning

3.7. Neural Networks and Deep Learning

3.8. Validation

4. Sensor Technologies Applied In-Line within Agricultural Systems

5. Considerations for Adoption

5.1. On-Farm Segregation and Storage

5.2. Data and Interfaces

5.3. Calibration and Technical Support

6. Gaps, Challenges, and Benefits

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Panozzo, J.F.; Walker, C.K.; Maharjan, P.; Partington, D.L.; Korte, C.J. Elevated CO2 affects plant nitrogen and water-soluble carbohydrates but not in vitro metabolisable energy. J. Agron. Crop Sci. 2019, 205, 647–658. [Google Scholar] [CrossRef]

- Martin, C.T.; McCallum, J.D.; Long, D.S. A Web-Based Calculator for Estimating the Profit Potential of Grain Segregation by Protein Concentration. Agron. J. 2013, 105, 721–726. [Google Scholar] [CrossRef]

- Meneghetti, V.L.; Pohndorf, R.S.; Biduski, B.; Zavareze, E.d.R.; Gutkoski, L.C.; Elias, M.C. Wheat grain storage at moisture milling: Control of protein quality and bakery performance. J. Food Process. Preserv. 2019, 43, e13974. [Google Scholar] [CrossRef]

- Delahunty, A.; Perry, E.; Wallace, A.; Nuttall, J. Frost response in lentil. Part 1. Measuring the impact on yield and quality. In Proceedings of the 19th Australian Agronomy Conference, Wagga Wagga, NSW, Australia, 25–29 August 2019. [Google Scholar]

- Walker, C.; Armstrong, R.; Panozzo, J.; Partington, D.; Fitzgerald, G. Can nitrogen fertiliser maintain wheat (Triticum aestivum) grain protein concentration in an elevated CO2 environment? Soil Res. 2017, 55, 518–523. [Google Scholar] [CrossRef]

- Thakur, M.; Hurburgh, C.R. Framework for implementing traceability system in the bulk grain supply chain. J. Food Eng. 2009, 95, 617–626. [Google Scholar] [CrossRef]

- Bourgault, M.; Löw, M.; Tausz-Posch, S.; Nuttall, J.G.; Delahunty, A.J.; Brand, J.; Panozzo, J.F.; McDonald, L.; O’Leary, G.J.; Armstrong, R.D.; et al. Effect of a Heat Wave on Lentil Grown under Free-Air CO2 Enrichment (FACE) in a Semi-Arid Environment. Crop Sci. 2018, 58, 803–812. [Google Scholar] [CrossRef]

- Black, C.K.; Panozzo, J.F. Accurate Technique for Measuring Color Values of Grain and Grain Products Using a Visible-NIR Instrument. Cereal Chem. 2004, 81, 469–474. [Google Scholar] [CrossRef]

- McDonald, L.S.; Assadzadeh, S.; Panozzo, J.F. Images, features, or feature distributions? A comparison of inputs for training convolutional neural networks to classify lentil and field pea milling fractions. Biosyst. Eng. 2021, 208, 16–27. [Google Scholar] [CrossRef]

- McDonald, L.; Panozzo, J. A review of the opportunities for spectral-based technologies in post-harvest testing of pulse grains. Legume Sci. 2022, e175. [Google Scholar] [CrossRef]

- Grain Trade Australia. Trading Standards. Available online: https://www.graintrade.org.au/commodity_standards (accessed on 13 April 2023).

- Assadzadeh, S.; Walker, C.K.; McDonald, L.S.; Panozzo, J.F. Prediction of milling yield in wheat with the use of spectral, colour, shape, and morphological features. Biosyst. Eng. 2022, 214, 28–41. [Google Scholar] [CrossRef]

- McDonald, L.S.; Panozzo, J.F.; Salisbury, P.A.; Ford, R. Discriminant Analysis of Defective and Non-Defective Field Pea (Pisum sativum L.) into Broad Market Grades Based on Digital Image Features. PLoS ONE 2016, 11, e0155523. [Google Scholar] [CrossRef] [PubMed]

- Caporaso, N.; Whitworth, M.; Fisk, I. Application of calibrations to hyperspectral images of food grains: Example for wheat falling number. J. Spectr. Imaging 2017, 6, 1–15. [Google Scholar] [CrossRef]

- Nuttall, J.G.; O’Leary, G.J.; Panozzo, J.F.; Walker, C.K.; Barlow, K.M.; Fitzgerald, G.J. Models of grain quality in wheat—A review. Field Crops Res. 2017, 202, 136–145. [Google Scholar] [CrossRef]

- Walsh, O.S.; Torrion, J.A.; Liang, X.; Shafian, S.; Yang, R.; Belmont, K.M.; McClintick-Chess, J.R. Grain yield, quality, and spectral characteristics of wheat grown under varied nitrogen and irrigation. Agrosyst. Geosci. Environ. 2020, 3, e20104. [Google Scholar] [CrossRef]

- Rodriguez, D.; Fitzgerald, G.J.; Belford, R.; Christensen, L.K. Detection of nitrogen deficiency in wheat from spectral reflectance indices and basic crop eco-physiological concepts. Aust. J. Agric. Res. 2006, 57, 781–789. [Google Scholar] [CrossRef]

- Cammarano, D.; Fitzgerald, G.; Basso, B.; O’Leary, G.; Chen, D.; Grace, P.; Fiorentino, C. Use of the Canopy Chlorophyl Content Index (CCCI) for Remote Estimation of Wheat Nitrogen Content in Rainfed Environments. Agron. J. 2011, 103, 1597–1603. [Google Scholar] [CrossRef]

- Nuttall, J.G.; Perry, E.M.; Delahunty, A.J.; O’Leary, G.J.; Barlow, K.M.; Wallace, A.J. Frost response in wheat and early detection using proximal sensors. J. Agron. Crop Sci. 2019, 205, 220–234. [Google Scholar] [CrossRef]

- Behmann, J.; Mahlein, A.-K.; Rumpf, T.; Römer, C.; Plümer, L. A review of advanced machine learning methods for the detection of biotic stress in precision crop protection. Precis. Agric. 2015, 16, 239–260. [Google Scholar] [CrossRef]

- Basso, B.; Fiorentino, C.; Cammarano, D.; Cafiero, G.; Dardanelli, J. Analysis of rainfall distribution on spatial and temporal patterns of wheat yield in Mediterranean environment. Eur. J. Agron. 2012, 41, 52–65. [Google Scholar] [CrossRef]

- Perry, E.M.; Nuttall, J.G.; Wallace, A.J.; Fitzgerald, G.J. In-field methods for rapid detection of frost damage in Australian dryland wheat during the reproductive and grain-filling phase. Crop Pasture Sci. 2017, 68, 516–526. [Google Scholar] [CrossRef]

- McEntee, P.; Bennett, S.; Belford, R.; Harper, J.; Trotter, M. Mapping the stability of spatial production in integrated crop and pasture systems: Towards zonal management that accounts for both yield and livestock-landscape interactions. In Proceedings of the 13th International Conference on Precision Agriculture, St. Louis, MO, USA, 31 July–3 August 2016; pp. 1–13. [Google Scholar]

- Pantazi, X.E.; Moshou, D.; Oberti, R.; West, J.; Mouazen, A.M.; Bochtis, D. Detection of biotic and abiotic stresses in crops by using hierarchical self organizing classifiers. Precis. Agric. 2017, 18, 383–393. [Google Scholar] [CrossRef]

- Skerritt, J.H.; Adams, M.L.; Cook, S.E.; Naglis, G. Within-field variation in wheat quality: Implications for precision agricultural management. Aust. J. Agric. Res. 2002, 53, 1229–1242. [Google Scholar] [CrossRef]

- Whelan, B.M.; Taylor, J.A.; Hassall, J.A. Site-specific variation in wheat grain protein concentration and wheat grain yield measured on an Australian farm using harvester-mounted on-the-go sensors. Crop Pasture Sci. 2009, 60, 808–817. [Google Scholar] [CrossRef]

- Cillis, D.; Pezzuolo, A.; Gasparini, F.; Marinello, F.; Sartori, L. Differential harvesting strategy: Technical and economic feasibility. In Proceedings of the 6th International Conference on Trends in Agricultural Engineering, Prague, Czech Republic, 7–9 September 2016. [Google Scholar]

- Tozer, P.R.; Isbister, B.J. Is it economically feasible to harvest by management zone? Precis. Agric. 2007, 8, 151–159. [Google Scholar] [CrossRef]

- Long, D.S.; McCallum, J.D.; Scharf, P.A. Optical-Mechanical System for On-Combine Segregation of Wheat by Grain Protein Concentration. Agron. J. 2013, 105, 1529–1535. [Google Scholar] [CrossRef]

- ElMasry, G.; Mandour, N.; Al-Rejaie, S.; Belin, E.; Rousseau, D. Recent Applications of Multispectral Imaging in Seed Phenotyping and Quality Monitoring-An Overview. Sensors 2019, 19, 1090. [Google Scholar] [CrossRef]

- Assadzadeh, S.; Walker, C.; McDonald, L.; Maharjan, P.; Panozzo, J. Multi-task deep learning of near infrared spectra for improved grain quality trait predictions. J. Near Infrared Spectrosc. 2020, 28, 275–286. [Google Scholar] [CrossRef]

- LeMasurier, L.S.; Panozzo, J.F.; Walker, C.K. A digital image analysis method for assessment of lentil size traits. J. Food Eng. 2014, 128, 72–78. [Google Scholar] [CrossRef]

- León, K.; Mery, D.; Pedreschi, F.; León, J. Color measurement in L*a*b* units from RGB digital images. Food Res. Int. 2006, 39, 1084–1091. [Google Scholar] [CrossRef]

- Hatfield, J.L.; Gitelson, A.A.; Schepers, J.S.; Walthall, C.L. Application of Spectral Remote Sensing for Agronomic Decisions. Agron. J. 2008, 100, S-117–S-131. [Google Scholar] [CrossRef]

- Jackson, R. Remote Sensing of Biotic and Abiotic Plant Stress. Annu. Rev. Phytopathol. 1986, 24, 265–287. [Google Scholar] [CrossRef]

- Rouse, J.; Haas, R.; Schell, J.A.; Deering, D.W. Monitoring vegetation systems in the Great Plains with ERTS. In Proceedings of the 3rd ERTS Symposium, NASA SP353, Washington, DC, USA, 10–14 December 1974. [Google Scholar]

- Chen, Y.; Zhang, Z.; Tao, F.; Palosuo, T.; Rötter, R.P. Impacts of heat stress on leaf area index and growth duration of winter wheat in the North China Plain. Field Crops Res. 2018, 222, 230–237. [Google Scholar] [CrossRef]

- Fitzgerald, G.; Rodriguez, D.; O’Leary, G. Measuring and predicting canopy nitrogen nutrition in wheat using a spectral index—The canopy chlorophyll content index (CCCI). Field Crops Res. 2010, 116, 318–324. [Google Scholar] [CrossRef]

- Perry, E.; Delahunty, A.; Nuttall, J.; Clancy, A.; Wallace, A. Frost response in lentil. Part 2. Detecting early frost damage using proximal sensing. In Proceedings of the 19th Australian Agronomy Conference, Wagga Wagga, NSW, Australia, 25–29 August 2019. [Google Scholar]

- Wallace, A.; Nuttall, J.; Perry, E.; Brand, J.; Henry, F. Proximal sensors to detect fungal disease in chickpea and faba bean. In “Doing More with Less”, Proceedings of the 18th Australian Agronomy Conference 2017, Ballarat, VIC, Australia, 24–28 September 2017. [Google Scholar]

- Singh, C.B.; Fielke, J.M. Recent developments in stored grain sensors, monitoring and management technology. IEEE Instrum. Meas. Mag. 2017, 20, 32–55. [Google Scholar] [CrossRef]

- Clancy, P. Finding the sweet spot in nitrogen fertilization by measuring protein with an on combine NIR analyser. In Proceedings of the 69th Australasian Grain Science Conference, Melbourne, VIC, Australia, 26–29 August 2019; pp. 106–108. [Google Scholar]

- Cassells, J.A.; Reuss, R.; Osborne, B.G.; Wesley, I.J. Near Infrared Spectroscopic Studies of Changes in Stored Grain. J. Near Infrared Spectrosc. 2007, 15, 161–167. [Google Scholar] [CrossRef]

- Gras, P.; Kaur, S.; Lewis, D.; O’Riordan, B.; Suter, D.; Thomson, W. How and why to keep grain quality constant. In Stored Grain in Australia 2000: Proceedings of the 2nd Australian Postharvest Technical Conference, Adelaide, South Australia, 1–4 August 2000; pp. 195–198. [Google Scholar]

- Wrigley, C.W. Potential methodologies and strategies for the rapid assessment of feed-grain quality. Aust. J. Agric. Res. 1999, 50, 789–806. [Google Scholar] [CrossRef]

- Pearson, T.C.; Maghirang, E.; Dowell, F. A Multispectral Sorting Device for Wheat Kernels. Am. J. Agric. Sci. Technol. 2013, 2, 45–60. [Google Scholar] [CrossRef]

- Tragesser, S. Use of Color Image Analyzers for Quantifying Grain Quality Traits. U.S. Patent 5,835,206, 10 November 1998. [Google Scholar]

- Jones, M.A.; Foster, D.J.; Rimathe, D.M. Method and Apparatus for Analyzing Quality Traits of Grain or Seed. U.S. Patent 8,031,910, 4 October 2004. [Google Scholar]

- Walker, C.K.; Panozzo, J.; Ford, R.; Moody, D. Measuring grain plumpness in barley using image analysis. In Proceedings of the 14th Australian Barley Technical Symposium, Sunshine Coast, QLD, Australia, 13–16 September 2009; pp. 1–7. [Google Scholar]

- Walker, C.; Ford, R.; Muñoz-Amatriaín, M.; Panozzo, J. The detection of QTLs associated with endosperm hardness, grain density, malting quality and plant development traits in barley using rapid phenotyping tools. Theor. Appl. Genet. 2013, 126, 2533–2551. [Google Scholar] [CrossRef]

- Kim, W.-S.; Lee, D.-H.; Kim, Y.-J.; Kim, T.; Lee, W.-S.; Choi, C.-H. Stereo-vision-based crop height estimation for agricultural robots. Comput. Electron. Agric. 2021, 181, 105937. [Google Scholar] [CrossRef]

- Dandrifosse, S.; Bouvry, A.; Leemans, V.; Dumont, B.; Mercatoris, B. Imaging Wheat Canopy Through Stereo Vision: Overcoming the Challenges of the Laboratory to Field Transition for Morphological Features Extraction. Front. Plant Sci. 2020, 11, 96. [Google Scholar] [CrossRef]

- Vrešak, M.; Halkjaer Olesen, M.; Gislum, R.; Bavec, F.; Ravn Jørgensen, J. The Use of Image-Spectroscopy Technology as a Diagnostic Method for Seed Health Testing and Variety Identification. PLoS ONE 2016, 11, e0152011. [Google Scholar] [CrossRef] [PubMed]

- Williams, P.C.; Norris, K. Near-Infrared Technology in the Agricultural and Food Industries, 2nd ed.; American Association of Cereal Chemists (AACC): St. Paul, MN, USA, 2001. [Google Scholar]

- Jaillais, B.; Perrin, E.; Mangavel, C.; Bertrand, D. Characterization of the desiccation of wheat kernels by multivariate imaging. Planta 2011, 233, 1147–1156. [Google Scholar] [CrossRef] [PubMed]

- Wilkes, T.; Nixon, G.; Bushell, C.; Waltho, A.; Alroichdi, A.; Burns, M. Feasibility Study for Applying Spectral Imaging for Wheat Grain Authenticity Testing in Pasta. Food Nutr. Sci. 2016, 7, 355–361. [Google Scholar] [CrossRef]

- Jaillais, B.; Roumet, P.; Pinson-Gadais, L.; Bertrand, D. Detection of Fusarium head blight contamination in wheat kernels by multivariate imaging. Food Control 2015, 54, 250–258. [Google Scholar] [CrossRef]

- Jaillais, B.; Bertrand, D.; Abecassis, J. Identification of the histological origin of durum wheat milling products by multispectral imaging and chemometrics. J. Cereal Sci. 2012, 55, 210–217. [Google Scholar] [CrossRef]

- Shahin, M.A.; Hatcher, D.W.; Symons, S.J. 17-Development of multispectral imaging systems for quality evaluation of cereal grains and grain products. In Computer Vision Technology in the Food and Beverage Industries; Sun, D.-W., Ed.; Woodhead Publishing: Cambridge, UK, 2012; pp. 451–482. [Google Scholar]

- Caporaso, N.; Whitworth, M.B.; Fisk, I.D. Protein content prediction in single wheat kernels using hyperspectral imaging. Food Chem. 2018, 240, 32–42. [Google Scholar] [CrossRef]

- McGoverin, C.M.; Engelbrecht, P.; Geladi, P.; Manley, M. Characterisation of non-viable whole barley, wheat and sorghum grains using near-infrared hyperspectral data and chemometrics. Anal. Bioanal. Chem. 2011, 401, 2283–2289. [Google Scholar] [CrossRef]

- Hu, N.; Li, W.; Du, C.; Zhang, Z.; Gao, Y.; Sun, Z.; Yang, L.; Yu, K.; Zhang, Y.; Wang, Z. Predicting micronutrients of wheat using hyperspectral imaging. Food Chem. 2021, 343, 128473. [Google Scholar] [CrossRef]

- Vithu, P.; Moses, J.A. Machine vision system for food grain quality evaluation: A review. Trends Food Sci. Technol. 2016, 56, 13–20. [Google Scholar] [CrossRef]

- Humeau-Heurtier, A. Texture Feature Extraction Methods: A Survey. IEEE Access 2019, 7, 8975–9000. [Google Scholar] [CrossRef]

- Brosnan, T.; Sun, D.-W. Inspection and grading of agricultural and food products by computer vision systems—A review. Comput. Electron. Agric. 2002, 36, 193–213. [Google Scholar] [CrossRef]

- Liu, D.; Ning, X.; Li, Z.; Yang, D.; Li, H.; Gao, L. Discriminating and elimination of damaged soybean seeds based on image characteristics. J. Stored Prod. Res. 2015, 60, 67–74. [Google Scholar] [CrossRef]

- Mahajan, S.; Das, A.; Sardana, H.K. Image acquisition techniques for assessment of legume quality. Trends Food Sci. Technol. 2015, 42, 116–133. [Google Scholar] [CrossRef]

- Pearson, T.; Moore, D.; Pearson, J. A machine vision system for high speed sorting of small spots on grains. J. Food Meas. Charact. 2012, 6, 27–34. [Google Scholar] [CrossRef]

- Wu, D.; Sun, D.-W. Colour measurements by computer vision for food quality control—A review. Trends Food Sci. Technol. 2013, 29, 5–20. [Google Scholar] [CrossRef]

- Neuman, M.R.; Sapirstein, H.D.; Shwedyk, E.; Bushuk, W. Wheat grain colour analysis by digital image processing II. Wheat class discrimination. J. Cereal Sci. 1989, 10, 183–188. [Google Scholar] [CrossRef]

- Huang, M.; Wang, Q.G.; Zhu, Q.B.; Qin, J.W.; Huang, G. Review of seed quality and safety tests using optical sensing technologies. Seed Sci. Technol. 2015, 43, 337–366. [Google Scholar] [CrossRef]

- Vázquez-Arellano, M.; Griepentrog, H.W.; Reiser, D.; Paraforos, D.S. 3-D Imaging Systems for Agricultural Applications—A Review. Sensors 2016, 16, 618. [Google Scholar] [CrossRef]

- Rovira-Más, F.; Zhang, Q.; Reid, J.F. Stereo vision three-dimensional terrain maps for precision agriculture. Comput. Electron. Agric. 2008, 60, 133–143. [Google Scholar] [CrossRef]

- Boldt, J.L.; Williams, K.; Rooper, C.N.; Towler, R.H.; Gauthier, S. Development of stereo camera methodologies to improve pelagic fish biomass estimates and inform ecosystem management in marine waters. Fish. Res. 2018, 198, 66–77. [Google Scholar] [CrossRef]

- Sun, C.; Berman, M.; Coward, D.; Osborne, B. Thickness measurement and crease detection of wheat grains using stereo vision. Pattern Recognit. Lett. 2007, 28, 1501–1508. [Google Scholar] [CrossRef]

- Park, B.; Lu, R. Hyperspectral Imaging Technology in Food and Agriculture; Springer: New York, NY, USA, 2015. [Google Scholar]

- Subramanian, A.; Rodriguez-Saona, L. Chapter 7-Fourier Transform Infrared (FTIR) Spectroscopy. In Infrared Spectroscopy for Food Quality Analysis and Control; Sun, D.-W., Ed.; Academic Press: San Diego, CA, USA, 2009; pp. 145–178. [Google Scholar]

- Armstrong, P.R.; Maghirang, E.; Xie, F.; Dowell, F. Comparison of Dispersive and Fourier-transform NIR Instruments for Measuring Grain and Flour Attributes. Appl. Eng. Agric. 2006, 22, 453–457. [Google Scholar] [CrossRef]

- Shenk, J.S.; Workman, J.J.; Westerhaus, M.O. Application of NIR spectroscopy to agricultural products. In Handbook of Near-Infrared Analysis; Burns, D.A., Ciurczak, E.W., Eds.; CRC Press: Boca Raton, FL, USA, 2007; pp. 419–474. [Google Scholar]

- Guo, Y.; Senthilnath, J.; Wu, W.; Zhang, X.; Zeng, Z.; Huang, H. Radiometric Calibration for Multispectral Camera of Different Imaging Conditions Mounted on a UAV Platform. Sustainability 2019, 11, 978. [Google Scholar] [CrossRef]

- Kim, M.S.; Chen, Y.-R.; Cho, B.-K.; Chao, K.; Yang, C.-C.; Lefcourt, A.M.; Chan, D. Hyperspectral reflectance and fluorescence line-scan imaging for online defect and fecal contamination inspection of apples. Sens. Instrum. Food Qual. Saf. 2007, 1, 151. [Google Scholar] [CrossRef]

- Xing, J.; Bravo, C.; Jancsók, P.T.; Ramon, H.; De Baerdemaeker, J. Detecting Bruises on ‘Golden Delicious’ Apples using Hyperspectral Imaging with Multiple Wavebands. Biosyst. Eng. 2005, 90, 27–36. [Google Scholar] [CrossRef]

- Gómez-Sanchis, J.; Gómez-Chova, L.; Aleixos, N.; Camps-Valls, G.; Montesinos-Herrero, C.; Moltó, E.; Blasco, J. Hyperspectral system for early detection of rottenness caused by Penicillium digitatum in mandarins. J. Food Eng. 2008, 89, 80–86. [Google Scholar] [CrossRef]

- Lu, R.; Peng, Y. Hyperspectral Scattering for assessing Peach Fruit Firmness. Biosyst. Eng. 2006, 93, 161–171. [Google Scholar] [CrossRef]

- ElMasry, G.; Wang, N.; ElSayed, A.; Ngadi, M. Hyperspectral imaging for nondestructive determination of some quality attributes for strawberry. J. Food Eng. 2007, 81, 98–107. [Google Scholar] [CrossRef]

- Mendoza, F.; Lu, R.; Ariana, D.; Cen, H.; Bailey, B. Integrated spectral and image analysis of hyperspectral scattering data for prediction of apple fruit firmness and soluble solids content. Postharvest Biol. Technol. 2011, 62, 149–160. [Google Scholar] [CrossRef]

- ElMasry, G.; Wang, N.; Vigneault, C. Detecting chilling injury in Red Delicious apple using hyperspectral imaging and neural networks. Postharvest Biol. Technol. 2009, 52, 1–8. [Google Scholar] [CrossRef]

- Lu, B.; Dao, P.D.; Liu, J.; He, Y.; Shang, J. Recent Advances of Hyperspectral Imaging Technology and Applications in Agriculture. Remote Sens. 2020, 12, 2659. [Google Scholar] [CrossRef]

- Osella, C.A.; Robutti, J.; Sánchez, H.D.; Borrás, F.; de la Torre, M.A. Dough properties related to baking quality using principal component analysis. Cienc. Tecnol. Aliment. 2008, 6, 95–100. [Google Scholar] [CrossRef]

- Amigo, J.M.; Martí, I.; Gowen, A. Chapter 9-Hyperspectral Imaging and Chemometrics: A Perfect Combination for the Analysis of Food Structure, Composition and Quality. In Data Handling in Science and Technology; Marini, F., Ed.; Elsevier: Amsterdam, The Netherlands, 2013; Volume 28, pp. 343–370. [Google Scholar]

- Walker, C.; Panozzo, J. Gathering reliable data on malting quality for genetic analysis from barley using near infrared spectroscopy. J. Near Infrared Spectrosc. 2014, 22, 81–92. [Google Scholar] [CrossRef]

- Delwiche, S.R.; Graybosch, R.A. Examination of spectral pretreatments for partial least-squares calibrations for chemical and physical properties of wheat. Appl. Spectrosc. 2003, 57, 1517–1527. [Google Scholar] [CrossRef]

- Brunton, S.L.; Kutz, J.N. Data-Driven Science and Engineering: Machine Learning, Dynamical Systems, and Control; Cambridge University Press: Cambridge, UK, 2019. [Google Scholar]

- Mahesh, S.; Manickavasagan, A.; Jayas, D.S.; Paliwal, J.; White, N.D.G. Feasibility of near-infrared hyperspectral imaging to differentiate Canadian wheat classes. Biosyst. Eng. 2008, 101, 50–57. [Google Scholar] [CrossRef]

- Karuppiah, K.; Senthilkumar, T.; Jayas, D.S.; White, N.D.G. Detection of fungal infection in five different pulses using near-infrared hyperspectral imaging. J. Stored Prod. Res. 2016, 65, 13–18. [Google Scholar] [CrossRef]

- Sun, C.; Liu, T.; Ji, C.; Jiang, M.; Tian, T.; Guo, D.; Wang, L.; Chen, Y.; Liang, X. Evaluation and analysis the chalkiness of connected rice kernels based on image processing technology and support vector machine. J. Cereal Sci. 2014, 60, 426–432. [Google Scholar] [CrossRef]

- Ravikanth, L.; Singh, C.B.; Jayas, D.S.; White, N.D.G. Classification of contaminants from wheat using near-infrared hyperspectral imaging. Biosyst. Eng. 2015, 135, 73–86. [Google Scholar] [CrossRef]

- Jitanan, S.; Chimlek, P. Quality grading of soybean seeds using image analysis. Int. J. Electr. Comput. Eng. 2019, 9, 3495–3503. [Google Scholar] [CrossRef]

- Zapotoczny, P. Discrimination of Wheat Grain Varieties Using Image Analysis and Multidimensional Analysis Texture of Grain Mass. Int. J. Food Prop. 2014, 17, 139–151. [Google Scholar] [CrossRef]

- Waheed, T.; Bonnell, R.B.; Prasher, S.O.; Paulet, E. Measuring performance in precision agriculture: CART—A decision tree approach. Agric. Water Manag. 2006, 84, 173–185. [Google Scholar] [CrossRef]

- Kılıç, K.; Boyacı, İ.H.; Köksel, H.; Küsmenoğlu, İ. A classification system for beans using computer vision system and artificial neural networks. J. Food Eng. 2007, 78, 897–904. [Google Scholar] [CrossRef]

- Kamilaris, A.; Kartakoullis, A.; Prenafeta-Boldú, F.X. A review on the practice of big data analysis in agriculture. Comput. Electron. Agric. 2017, 143, 23–37. [Google Scholar] [CrossRef]

- Dardenne, P. Some Considerations about NIR Spectroscopy: Closing Speech at NIR-2009. NIR News 2010, 21, 8–14. [Google Scholar] [CrossRef]

- Lee, C.Y.; Yan, L.; Wang, T.; Lee, S.R.; Park, C.W. Intelligent classification methods of grain kernels using computer vision analysis. Meas. Sci. Technol. 2011, 22, 064006. [Google Scholar] [CrossRef]

- Pezzementi, Z.; Wellington, C.; Tabor, T.; Male, C.; Herman, H.; Miller, S. Going against the Grain: Real-Time Classification of Grain Quality. In Proceedings of the ICRA 2018 Workshop on Robotic Vision and Action in Agriculture, Brisbane, QLD, Australia, 25 May 2018; pp. 1–5. [Google Scholar]

- Santos, J.R.; Viegas, O.; Páscoa, R.N.M.J.; Ferreira, I.M.P.L.V.O.; Rangel, A.O.S.S.; Lopes, J.A. In-line monitoring of the coffee roasting process with near infrared spectroscopy: Measurement of sucrose and colour. Food Chem. 2016, 208, 103–110. [Google Scholar] [CrossRef] [PubMed]

- Walker, C.K.; Panozzo, J.F. Measuring volume and density of a barley grain using ellipsoid approximation from a 2-D digital image. J. Cereal Sci. 2012, 55, 61–68. [Google Scholar] [CrossRef]

- Mebatsion, H.K.; Paliwal, J.; Jayas, D.S. Automatic classification of non-touching cereal grains in digital images using limited morphological and color features. Comput. Electron. Agric. 2013, 90, 99–105. [Google Scholar] [CrossRef]

- Mirik, M.; Michels Jr, G.J.; Kassymzhanova-Mirik, S.; Elliott, N.C.; Catana, V.; Jones, D.B.; Bowling, R. Using digital image analysis and spectral reflectance data to quantify damage by greenbug (Hemitera: Aphididae) in winter wheat. Comput. Electron. Agric. 2006, 51, 86–98. [Google Scholar] [CrossRef]

- Pound, M.P.; Atkinson, J.A.; Townsend, A.J.; Wilson, M.H.; Griffiths, M.; Jackson, A.S.; Bulat, A.; Tzimiropoulos, G.; Wells, D.M.; Murchie, E.H.; et al. Deep machine learning provides state-of-the-art performance in image-based plant phenotyping. GigaScience 2017, 6, 1–10. [Google Scholar] [CrossRef]

- Wang, Z.J.; Wang, J.H.; Liu, L.Y.; Huang, W.J.; Zhao, C.J.; Wang, C.Z. Prediction of grain protein content in winter wheat (Triticum aestivum L.) using plant pigment ratio (PPR). Field Crops Res. 2004, 90, 311–321. [Google Scholar] [CrossRef]

- Pandiselvam, R.; Mayookha, V.P.; Kothakota, A.; Ramesh, S.V.; Thirumdas, R.; Juvvi, P. Biospeckle laser technique–A novel non-destructive approach for food quality and safety detection. Trends Food Sci. Technol. 2020, 97, 1–13. [Google Scholar] [CrossRef]

- Sutton, D.B.; Punja, Z.K. Investigating biospeckle laser analysis as a diagnostic method to assess sprouting damage in wheat seeds. Comput. Electron. Agric. 2017, 141, 238–247. [Google Scholar] [CrossRef]

- Braga, R.A.; Dal Fabbro, I.M.; Borem, F.M.; Rabelo, G.; Arizaga, R.; Rabal, H.J.; Trivi, M. Assessment of Seed Viability by Laser Speckle Techniques. Biosyst. Eng. 2003, 86, 287–294. [Google Scholar] [CrossRef]

- Strahan, R.; Page, J. Economics of on-farm grain storage and drying. In Proceedings of the Australian Postharvest Technical Conference, Canberra, ACT, Australia, 25–27 June 2003. [Google Scholar]

- Stafford, J.V. Implementing Precision Agriculture in the 21st Century. J. Agric. Eng. Res. 2000, 76, 267–275. [Google Scholar] [CrossRef]

- Suprem, A.; Mahalik, N.; Kim, K. A review on application of technology systems, standards and interfaces for agriculture and food sector. Comput. Stand. Interfaces 2013, 35, 355–364. [Google Scholar] [CrossRef]

- Sigrimis, N.; Hashimoto, Y.; Munack, A.; Baerdemaker, J. Prospects in Agricultural Engineering in the Information Age-Technological Development for the Producer and the Consumer. Agric. Eng. Int. CIGR E-J. 1999, 1, 1–20. [Google Scholar]

- Khanna, A.; Kaur, S. Evolution of Internet of Things (IoT) and its significant impact in the field of Precision Agriculture. Comput. Electron. Agric. 2019, 157, 218–231. [Google Scholar] [CrossRef]

- Nikkilä, R.; Seilonen, I.; Koskinen, K. Software architecture for farm management information systems in precision agriculture. Comput. Electron. Agric. 2010, 70, 328–336. [Google Scholar] [CrossRef]

- Wiseman, L.; Sanderson, J.; Robb, L. Rethinking Ag Data Ownership. Farm Policy J. 2018, 15, 71–77. [Google Scholar]

- Cooley, T.; Anderson, G.; Felde, G.; Hoke, M.; Ratkowski, A.; Chetwynd, J.; Bernstein, L. FLAASH, a MODTRAN4-based atmospheric correction algorithm, its application and validation. In Proceedings of the IEEE International Geoscience and Remote Sensing Symposium, Toronto, ON, Canada, 6 August 2002; pp. 1414–1418. [Google Scholar]

- Kelcey, J.; Lucieer, A. Sensor correction and radiometric calibration of a 6-band multispectral imaging sensor for UAV remote sensing. In Proceedings of the 12th Congress of the International Society for Photogrammetry and Remote Sensing, Melbourne, VIC, Australia, 25 August–1 September 2012. [Google Scholar]

- Bramley, R.G.V. Lessons from nearly 20 years of Precision Agriculture research, development, and adoption as a guide to its appropriate application. Crop Pasture Sci. 2009, 60, 197–217. [Google Scholar] [CrossRef]

- Pierpaoli, E.; Carli, G.; Pignatti, E.; Canavari, M. Drivers of Precision Agriculture Technologies Adoption: A Literature Review. Procedia Technol. 2013, 8, 61–69. [Google Scholar] [CrossRef]

- Research and Markets. Global Plant-Based Protein Markets Report 2022: Data from 2021, Estimates for 2022, and CAGR Projections through 2027. Available online: https://www.globenewswire.com/news-release/2022/09/14/2515659/28124/en/Global-Plant-Based-Protein-Markets-Report-2022-Data-from-2021-Estimates-for-2022-and-CAGR-Projections-through-2027.html (accessed on 27 March 2023).

| Sensor Type | Spectral Range (nm) | Application | Product Stage | References |
|---|---|---|---|---|
| Digital camera (benchtop) | RGB 1 | separation of red vs. white wheat, Fusarium damaged vs. undamaged, high vs. medium vs. low protein | Research | [46] |
| | | measure grain color, % hard endosperm | Patent | [47] |
| | | gradation of color, whiteness, and hard endosperm of the seed/grain | Patent | [48] |
| | | measure grain plumpness, density, and volume | Research | [49,50] |
| Stereo camera (in-field, mounted cameras) | RGB | crop height estimation | Research | [51] |
| | | wheat canopy structure | Research | [52] |
| Near infrared (benchtop) | 900–1600 | variety identification and seed health | Research | [53] |
| | 950–1650 | separation of red vs. white kernels, Fusarium damaged vs. undamaged, high vs. medium vs. low protein | Research | [46] |
| | 1100–2300 | prediction of protein and moisture constituency and grain type classification | Research | [31] |
| | 850–2300 | moisture, protein, oil concentration | Commercial | [54] |
| Multispectral (benchtop) | 360–950; 8 bands | characterization of desiccation | Research | [55] |
| | 375–970; 19 bands | variety identification and seed health | Research | [53] |
| | 375–970; 19 bands | seed authentication; % adulterated samples | Research | [56] |
| | 360–950; 8 bands | fungal contamination detection | Research | [57] |
| | 360–950; 8 bands | identification of the histological origin | Research | [58] |
| Hyperspectral (in-field point-based) | 405–850; 6 bands | assessment of lentil size traits | Research | [32] |
| | 405–850; 6 bands | classification of defective vs. non-defective field pea | Research | [13] |
| | 400–1000; 350–2500 | detection and classification of biotic and abiotic stresses in-field; crop yield predictions and mapping | Research | [19,22,24,39,40] |
| Hyperspectral (benchtop) | 400–1000 | seed authentication | Research | [56] |
| | 400–1000 | detection of green vs. normal barley kernels | Research | [59] |
| | 980–2500 | protein in single wheat kernels | Research | [60] |
| | 1000–2500 | sprouting, enzymatic activity | Research | [61] |
| | 375–1050 | micronutrient composition; Ca, Mg, Mo, and Zn in wheat | Research | [62] |

| Grain | Trait | Sensor | Data Analysis and Accuracy | Reference |
|---|---|---|---|---|
| Lentil | Seed length, mass, seed size index | Image analysis (multispectral), benchtop | MLR, image segmentation; R² for length 0.97, mass 0.96, seed size index 0.99 | [32] |
| Pea | Color, shape, and size | Image analysis (multispectral), benchtop | Market grade classification accuracy 77–87% | [13] |
| Soybean | Damaged kernels | Image analysis (visible), in-line benchtop | Neural network classification 97.25% | [66] |
| Wheat | Protein, moisture | NIR, in-line mounted on a harvester | PLS regression, R² for protein 0.88, SECV 7.64; in-field protein vs. yield maps | [29,42] |
| Rice | Chalkiness | Image analysis (RGB), benchtop | Classification (SVM): 89.9–90.3% (2 sides), 97.6–98.5% (3 sides) | [96] |
| Rice | Classification of type (brown rice, buckwheat, barley, common rice, rough rice, | Image analysis (visible), benchtop | Classification (PCA-NN): 90–100% classification of grain type within a sample | [104] |
| Corn | Broken, unthreshed, material other than corn grain | Image analysis (RGB), mounted in-line on harvester | Color features, texture features, classification (SVM); bivariate correlation 0.948 (R² = 0.898), based on weight percentages | [105] |
| Coffee | Sucrose, color (CIE L*, a*, b*) | Fourier-transform NIR, mounted in-line | PLS regression, R² for sucrose 0.93 and color 0.85–0.94; the predicted data were used to monitor the roasting process | [106] |

| Sensor | Cost Estimation | Development and Equipment Complexity | Reference |
|---|---|---|---|
| NIR spectra (in-laboratory) | USD 5000–50,000 | Standard software applications; used by industry; low maintenance; annual update of algorithms | [31,42,54] |
| Digital camera (RGB) (in-laboratory) | USD 10,000–40,000 | Complex algorithm development; two-dimensional; low maintenance | [32,107,108] |
| Digital camera (in-field) | USD 1000–10,000 | Complex algorithm development; two-dimensional; challenges validating at a single reading level; low maintenance | [109,110] |
| Stereo camera | USD 20,000–30,000 | Complex algorithm development; multi-dimensional; low maintenance | [52] |
| Multispectral (in-laboratory) | USD 1000–40,000 | Complex algorithm development; image and spectral analysis; low maintenance; annual update of algorithms | [9,30,32,50] |
| Hyperspectral (in-laboratory) | USD 230,000 | Large data volumes and computation cost; complex algorithm development; challenges validating at a single seed level; research tool; image and spectral analysis; low maintenance; annual update of algorithms | [14,60] |
| Hyperspectral (in-field) | USD 110,000–350,000 | Large data volumes and computation cost; complex algorithm development; challenges validating at a single reading level; used for predicting yield components, nitrogen inputs and grain protein; annual update of algorithms | [22,34,111] |
| Biospeckle (in-laboratory) | USD 15,000 | System not commercially available; complex algorithm development; low maintenance | [112,113,114] |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Walker, C.K.; Assadzadeh, S.; Wallace, A.J.; Delahunty, A.J.; Clancy, A.B.; McDonald, L.S.; Fitzgerald, G.J.; Nuttall, J.G.; Panozzo, J.F. Technologies and Data Analytics to Manage Grain Quality On-Farm—A Review. Agronomy 2023, 13, 1129. https://doi.org/10.3390/agronomy13041129
