Towards a Framework for Assessing IT Strategy Execution †

Abstract

1. Introduction

2. Related Research

2.1. Main References

- Strategy represents strategic alignment over time. We first borrow the Strategic Alignment Model (SAM) proposed by Henderson and Venkatraman [14], taking into account more recent discussions, e.g., those of Chan and Reich [71], Coltman et al. [72] and Juiz and Toomey [73]. For the ability to cope with new threats and opportunities, we took the classical approach of Mintzberg and Waters [74] for business strategy and of Chan et al. [15] for IT strategy. Kopmann et al. [16] and Salmela and Spil [13] provide further practical considerations on the method of application.

- Performance relates to the effectiveness of IS strategy application, either from an external point of view (the acquisition of business benefits) or an internal one (the quality and efficiency of program execution). For benefits realization, we drew on the repertoire of methods and libraries of examples provided by academic and professional authors, mainly Parker et al. [76], Ashurst et al. [77], Ward and Daniel [78] and Hunter et al. [17] from Gartner. For program execution, we chose authors who take a more strategically driven approach to project portfolio management, such as Dye and Pennypacker [18], Thiry [19], Meskendahl [79] and, again, Kopmann et al. [16].

- Under Governance, we include both the feedback provided by key participants about the implementation of the strategy and the issues related to program management and governance mechanisms. For the first part, we took advantage of the guidelines provided by Galliers [20]; we also drew on classical references on IS impact, as in Gable et al. [82], and on IS success, as in the works of DeLone and McLean [80,81] and Petter et al. [83]. Program governance and management, from a strategic standpoint, is well represented in the books by Dye and Pennypacker (eds.) [18] and Thiry [19] and in the article by Bartenschlager et al. [84]. Finally, ISACA’s COBIT [21] is a professional standard that is attracting increasing interest from academia, especially in the higher education sector.

3. Research Context

“We learned that Strategic Planning was the proper way to focus and preserve transformation and that transformation was not still complete... and maybe never will.”

4. Research Model: Methods and Arrangements

4.1. Research Methods

4.2. Project Organization

4.3. Project Plan

5. Results

5.1. Strategy

5.1.1. Strategic Alignment

“It is simple: priorities were stability and growth; so, infrastructure, sales and control were put on top of the list”.

5.1.2. Intended and Realized Strategies

“After the approval of the Master Plan, blunt execution was the focus; this stance privileged those mature well-defined projects with a clear and strong leadership against other with more potential strategic impact. When new demands arrive, we could make room for them without losing the focus.”

“The good news is that those demands were not managed as a free fighting among business units or departments, but as well discussed and approved priorities at the top level of the institution.”

5.2. Performance

5.2.1. Program Execution

“The IT department has shown strong abilities to execute, but we should improve our professional project management capabilities.”

5.2.2. Benefits Realization

“When dealing with demand, the major challenge is to introduce “value” into the equation. Many users, even business leaders, jump to ask for a solution, not much to reflect about business needs and benefits. Similarly, when dealing with IT staff, the challenge is to drawn from how many things we do to how much value we provide.”

5.3. Governance

5.3.1. Key Stakeholders’ Satisfaction

“When we go and ask for a minor improvement, they put that it will be solved with the Master Plan”

This was mentioned by a middle manager. The satisfaction survey and individual interviews also voiced complaints about poor information on the priority-setting mechanisms and the overall progress of the Plan. In our observation, the greater the distance from decision making or the lower the involvement in key projects, the greater the disconnection or even disenchantment. As a second, conflicting but logical, outcome, the closer the relationship with projects perceived as failed or failing, the larger the frustration.

However, the SC still acknowledged the risk of losing adherence to the ISMP among users, mainly academics.

“We were so involved in execution that we forgot to explain what we were doing.”

5.3.2. Program Governance and Management

On the other hand, as one executive of the SC put it:

“To some extent, program governance was replaced by project execution.”

“The organisation as a whole (be IT, administrative management and academy) was not mature for a more participatory scheme.”

“What is happening with IT is not different that what happens with other issues.”

5.4. Overall Balance

- The ISMP was regarded as a valuable tool for setting priorities to transform the IT base and to increase IT effectiveness, ensuring alignment and providing value, particularly as regards business growth and stability.

- The level of execution and the agility to adapt the Plan to new business priorities were also considered satisfactory overall and had allowed the institution to support its growth objectives.

- The ability to raise new demands or major change requests to an executive board was also noted.

- It was widely felt that the focus on the ISMP had come at the expense of the day-to-day demands for improvement of the existing legacy applications and tools.

- Better execution results were achieved in projects with a clear project definition, a simple and well-identified technology solution, stronger leadership and lower coordination costs.

- Improving the corporate governance of IT was perceived as compulsory, with greater involvement of the faculty management leaders.

- Better prioritization mechanisms, communication policies and project management processes were called for, to ensure the shared commitment of the different constituencies.

“Strategy making is a matter of yeses and nos. The plan was designed as a top-down strategy to renew the technology basis of a rapidly transforming organisation in a short time-frame. Focus was on transformation and execution. Other considerations were left behind, to some extent”.

5.5. Further Developments

“If the first Master Plan was the one of an actionable strategy, the Plan as recently reviewed is for better governance and improved execution.”

6. Conclusions

6.1. Discussion

6.2. Practical Implications

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Sutherland, A.R.; Galliers, R.D. The evolving information systems strategy information systems management and strategy formulation: Applying and extending the ‘stages of growth’ concept. In Strategic Information Management: Challenges and Strategies in Managing Information Systems; Routledge: Abingdon, UK, 2014; pp. 47–77. [Google Scholar]

- Bharadwaj, A.; El Sawy, O.A.; Pavlou, P.A.; Venkatraman, N.V. Digital Business Strategy: Toward a Next Generation of Insights. MIS Q. 2013, 37, 471–482. [Google Scholar] [CrossRef]

- Peppard, J.; Galliers, R.; Thorogood, A. Information systems strategy as practice: Micro strategy and strategizing for IS. J. Strateg. Inf. Syst. 2014, 23, 1–10. [Google Scholar] [CrossRef]

- Whittington, R. Completing the practice turn in strategy research. Organ. Stud. 2006, 27, 613–634. [Google Scholar] [CrossRef]

- Johnson, G.; Whittington, R.; Scholes, K.; Angwin, D.; Regnér, P. Exploring Strategy: Text and Cases, 11th ed.; Pearson Education: London, UK, 2016. [Google Scholar]

- Teubner, R.A.; Mocker, M. A literature Overview on Strategic Information Systems Planning. Available online: http://dx.doi.org/10.2139/ssrn.1959494 (accessed on 13 September 2019).

- Amrollahi, A.; Ghapanchi, A.H.; Talaei-Khoei, A. A systematic literature review on strategic information systems planning: Insights from the past decade. Pac. Asia J. Assoc. Inf. Syst. 2013, 5, 4-1–4-28. [Google Scholar] [CrossRef]

- Earl, M.J. (Ed.) Information Management: The Organizational Dimension; Oxford University Press: Oxford, UK, 1996. [Google Scholar]

- Peppard, J.; Ward, J. The Strategic Management of Information Systems: Building a Digital Strategy; John Wiley & Sons: Hoboken, NJ, USA, 2016. [Google Scholar]

- Bates, A.W.; Bates, T.; Sangrá, A. Managing Technology in Higher Education: Strategies for Transforming Teaching and Learning; John Wiley & Sons: Hoboken, NJ, USA, 2011. [Google Scholar]

- Rodríguez, J.-R. El Máster Plan (Plan Director) de Sistemas de Información de la UOC. Caso Práctico. Available online: http://openaccess.uoc.edu/webapps/o2/bitstream/10609/78267/6/Direcci%C3%B3n%20de%20sistemas%20de%20informaci%C3%B3n%20%28Executive%29_M%C3%B3dulo%205_Planificaci%C3%B3n%20estrat%C3%A9gica%20de%20sistemas%20de%20informaci%C3%B3n.pdf (accessed on 13 September 2019).

- Sein, M.K.; Henfridsson, O.; Purao, S.; Rossi, M.; Lindgren, R. Action design research. MIS Q. 2011, 35, 37–56. [Google Scholar] [CrossRef]

- Salmela, H.; Spil, T.A. Dynamic and emergent information systems strategy formulation and implementation. Int. J. Inf. Manag. 2002, 22, 441–460. [Google Scholar] [CrossRef]

- Henderson, J.C.; Venkatraman, H. Strategic alignment: Leveraging information technology for transforming organizations. IBM Syst. J. 1993, 32, 472–484. [Google Scholar] [CrossRef]

- Chan, Y.E.; Huff, S.L.; Copeland, D.G. Assessing realized information systems strategy. J. Strateg. Inf. Syst. 1997, 6, 273–298. [Google Scholar] [CrossRef]

- Kopmann, J.; Kock, A.; Killen, C.P.; Gemünden, H.G. The role of project portfolio management in fostering both deliberate and emergent strategy. Int. J. Proj. Manag. 2017, 35, 557–570. [Google Scholar] [CrossRef]

- Hunter, R.; Apfel, A.; McGee, K.; Handler, R.; Dreyfuss, C.; Smith, M.; Maurer, W. A Simple Framework to Translate IT Benefits Into Business Value Impact. Available online: https://www.gartner.com/en/documents/672507/a-simple-framework-to-translate-it-benefits-into-business (accessed on 13 September 2019).

- Pennypacker, J.S.; Dye, L. Project Portfolio Management and Managing Multiple Projects: Two Sides of the Same Coin; Marcel Dekker: New York, NY, USA, 2002. [Google Scholar]

- Thiry, M. Program Management; Gower: Farnham, UK, 2010. [Google Scholar]

- Galliers, R. Information Systems Planning in the United Kingdom and Australia: A Comparison of Current Practice; Oxford University Press: Oxford, UK, 1987. [Google Scholar]

- ISACA. COBIT 5: A Business Framework for the Governance and Management of Enterprise IT; ISACA: Rolling Meadows, IL, USA, 2014; ISBN 1963669381. [Google Scholar]

- Rodriguez, J.-R.; Clariso, R.; Marco-Simo, J. Strategy in the making: Assessing the execution of a strategic information systems plan. In European, Mediterranean, and Middle Eastern Conference on Information Systems; Springer: Cham, Switzerland, 2018; pp. 475–488. [Google Scholar]

- Lederer, A.; Sethi, V. The Implementation of Strategic Information Systems Planning Methodologies. MIS Q. 1988, 12, 445–461. [Google Scholar] [CrossRef]

- Galliers, R.D. Strategic information systems planning: Myths, reality and guidelines for successful implementation. Eur. J. Inf. Syst. 1991, 1, 55–64. [Google Scholar] [CrossRef]

- Premkumar, G.; King, W. Assessing strategic information systems planning. Long Range Plan. 1991, 24, 41–58. [Google Scholar] [CrossRef]

- Lederer, A.L.; Sethi, V. Key prescriptions for strategic information systems planning. J. Manag. Inf. Syst. 1996, 13, 35–62. [Google Scholar] [CrossRef]

- Mentzas, G. Implementing an IS strategy—A team approach. Long Range Plan. 1997, 30, 84–95. [Google Scholar] [CrossRef]

- Segars, A.H.; Grover, V. Strategic information systems planning success: An investigation of the construct and its measurement. MIS Q. 1998, 22, 139–163. [Google Scholar] [CrossRef]

- Doherty, N.F.; Marples, C.G.; Suhaimi, A. The relative success of alternative approaches to strategic information systems planning: An empirical analysis. J. Strateg. Inf. Syst. 1999, 8, 263–283. [Google Scholar] [CrossRef]

- Teo, T.S.; Ang, J.S. An examination of major IS planning problems. Int. J. Inf. Manag. 2001, 21, 457–470. [Google Scholar] [CrossRef]

- Hartono, E.; Lederer, A.L.; Sethi, V.; Zhuang, Y. Key predictors of the implementation of strategic information systems plans. ACM SIGMIS Database DATABASE Adv. Inf. Syst. 2003, 34, 41–53. [Google Scholar] [CrossRef]

- Vitale, M.R.; Ives, B.; Beath, C.M. Linking information technology and corporate strategy: An organizational view. In Proceedings of the International Conference on Information Systems (ICIS), San Diego, CA, USA, 15–17 December 1986; p. 30. [Google Scholar]

- Sambamurthy, V.; Venkataraman, S.; DeSanctis, G. The design of information technology planning systems for varying organizational contexts. Eur. J. Inf. Syst. 1993, 2, 23–35. [Google Scholar] [CrossRef]

- Gottschalk, P. Strategic information systems planning: The IT strategy implementation matrix. Eur. J. Inf. Syst. 1999, 8, 107–118. [Google Scholar] [CrossRef]

- Da Cunha, P.R.; de Figueiredo, A.D. Information systems development as flowing wholeness. In Realigning Research and Practice in Information Systems Development; Springer: Boston, MA, USA, 2001; pp. 29–48. [Google Scholar]

- Brown, I.T. Testing and extending theory in strategic information systems planning through literature analysis. Inf. Resour. Manag. J. 2004, 17, 20. [Google Scholar] [CrossRef]

- Chen, D.Q.; Mocker, M.; Preston, D.S.; Teubner, A. Information systems strategy: Reconceptualization, measurement, and implications. MIS Q. 2010, 34, 233–259. [Google Scholar] [CrossRef]

- Mikalef, P.; Pateli, A.; Batenburg, R.S.; Wetering, R.V.D. Purchasing alignment under multiple contingencies: A configuration theory approach. Ind. Manag. Data Syst. 2015, 115, 625–645. [Google Scholar] [CrossRef]

- Arvidsson, V.; Holmström, J.; Lyytinen, K. Information systems use as strategy practice: A multi-dimensional view of strategic information system implementation and use. J. Strateg. Inf. Syst. 2014, 23, 45–61. [Google Scholar] [CrossRef]

- Kamariotou, M.; Kitsios, F. Information systems phases and firm performance: A conceptual framework. In Strategic Innovative Marketing; Springer: Cham, Switzerland, 2017; pp. 553–560. [Google Scholar]

- Drnevich, P.; Croson, D. Information technology and business-level strategy: Toward an integrated theoretical perspective. MIS Q. 2013, 37, 483–509. [Google Scholar] [CrossRef]

- Vaara, E.; Whittington, R. Strategy-as-practice: Taking social practices seriously. Acad. Manag. Ann. 2012, 6, 285–336. [Google Scholar] [CrossRef]

- Jarzabkowski, P.; Kaplan, S.; Seidl, D.; Whittington, R. On the risk of studying practices in isolation: Linking what, who, and how in strategy research. Strateg. Organ. 2016, 14, 248–259. [Google Scholar] [CrossRef]

- Zelenkov, Y. Critical regular components of IT strategy: Decision making model and efficiency measurement. J. Manag. Anal. 2015, 2, 95–110. [Google Scholar] [CrossRef]

- Suomalainen, T.; Kuusela, R.; Tihinen, M. Continuous planning: An important aspect of agile and lean development. Int. J. Agile Syst. Manag. 2015, 8, 132–162. [Google Scholar] [CrossRef]

- Mirchandani, D.; Lederer, A. “Less is more”: Information systems planning in an uncertain environment. Inf. Syst. Manag. 2012, 29, 13–25. [Google Scholar] [CrossRef]

- Tellis, W.M. Application of a case study methodology. Qual. Rep. 1997, 3, 1–19. [Google Scholar]

- Sabherwal, R. The relationship between information system planning sophistication and information system success: An empirical assessment. Decis. Sci. 1999, 30, 137–167. [Google Scholar] [CrossRef]

- Bates, A. Managing technological change: Strategies for college and university leaders. In The Jossey-Bass Higher and Adult Education Series; Jossey-Bass Publishers: San Francisco, CA, USA, 2000. [Google Scholar]

- Bulchand, J.; Rodríguez, J. Information and communication technologies and information systems planning in higher education. Informatica (Ljubljana) 2003, 27, 275–284. [Google Scholar]

- Nguyen, F.; Frazee, J. Strategic technology planning in higher education. Perform. Improv. 2009, 48, 31–40. [Google Scholar] [CrossRef]

- Kirinic, V.; Kozina, M. Maturity assessment of strategy implementation in higher education institution. In Central European Conference on Information and Intelligent Systems; Faculty of Organization and Informatics Varazdin: Varaždin, Croatia, 2016; p. 169. [Google Scholar]

- Kobulnicky, P. Critical Factors in Information Technology Planning for the Academy. Cause/Effect 1999, 22, 19–26. [Google Scholar]

- Titthasiri, W. Information technology strategic planning process for institutions of higher education in Thailand. NECTEC Tech. J. 2000, 3, 153–164. [Google Scholar]

- Ishak, I.; Alias, R. Designing a Strategic Information System Planning Methodology for Malaysian Institutes of Higher Learning (ISP-IPTA); Universiti Teknologi Malaysia: Skudai, Malaysia, 2005. [Google Scholar]

- Clarke, S.; Lehaney, B. Mixing methodologies for information systems development and strategy: A higher education case study. J. Oper. Res. Soc. 2000, 51, 542–556. [Google Scholar] [CrossRef]

- Jaffer, S.; Ng’ambi, D.; Czerniewicz, L. The role of ICTs in higher education in South Africa: One strategy for addressing teaching and learning challenges. Int. J. Educ. Dev. Using ICT 2007, 3, 131–142. [Google Scholar]

- Goncalves, N.; Sapateiro, C. Aspects for Information Systems Implementation: Challenges and impacts. A higher education institution experience. Tékhne-Revista de Estudos Politécnicos 2008, 9, 225–241. [Google Scholar]

- Luic, L.; Boras, D. Strategic planning of the integrated business and information system-A precondition for successful higher education management. In Proceedings of the 32nd International Conference on Information Technology Interfaces (ITI 2010), Cavtat, Croatia, 21–24 June 2010. [Google Scholar]

- Barn, B.S.; Clark, T.; Hearne, G. Business and ICT alignment in higher education: A case study in measuring maturity. In Building Sustainable Information Systems; Linger, H., Fisher, J., Barnden, A., Barry, C., Lang, M., Schneider, C., Eds.; Springer: Boston, MA, USA, 2013. [Google Scholar]

- Soares, S.; Setyohady, D.B. Enterprise architecture modeling for oriental university in Timor Leste to support the strategic plan of integrated information system. In Proceedings of the 5th International Conference on Cyber and IT Service Management (CITSM), Denpasar, Indonesia, 8–10 August 2017; pp. 1–6. [Google Scholar]

- Rice, M.; Miller, M. Faculty involvement in planning for the use and integration of instructional and administrative technologies. J. Res. Comput. Educ. 2001, 33, 328–336. [Google Scholar] [CrossRef]

- Dempster, J.; Deepwell, F. Experiences of national projects in embedding learning technology into institutional practices in UK higher education. In Learning Technology in Transition: From Individual Enthusiasm to Institutional Implementation; Taylor & Francis: London, UK, 2003; pp. 45–62. [Google Scholar]

- Abel, R. Achieving Success in Internet-Supported Learning in Higher Education: Case Studies Illuminate Success Factors, Challenges, and Future Directions; Alliance for Higher Education Competitiveness: Lake Mary, FL, USA, 2005. [Google Scholar]

- Sharpe, R.; Benfield, G.; Francis, R. Implementing a university e-learning strategy: Levers for change within academic schools. Res. Learn. Technol. 2006, 14, 135–151. [Google Scholar] [CrossRef]

- Stensaker, B.; Maassen, P.; Borgan, M.; Oftebro, M.; Karseth, B. Use, updating and integration of ICT in higher education: Linking purpose, people and pedagogy. High. Educ. 2007, 54, 417–433. [Google Scholar] [CrossRef]

- Goolnik, G. Change Management Strategies When Undertaking eLearning Initiatives in Higher Education. J. Organ. Learn. Leadersh. 2012, 10, 16–28. [Google Scholar]

- Bianchi, I.; Rui, D. IT Governance mechanisms in higher education. Procedia Comput. Sci. 2016, 100, 941–946. [Google Scholar] [CrossRef]

- Khouja, M.; Rodriguez, I.; Halima, Y.; Moalla, S. IT Governance in Higher education Institutions: A systematic Literature review. Int. J. Hum. Cap. Inf. Technol. Prof. (IJHCITP) 2018, 9, 52–67. [Google Scholar] [CrossRef]

- Baskerville, R.; Wood-Harper, A.T. A Taxonomy of Action Research Methods; Institut for Informatik og Økonomistyring: Handelshøjskolen i København, Denmark, 1996. [Google Scholar]

- Chan, Y.E.; Reich, B.H. IT alignment: What have we learned? J. Inf. Technol. 2007, 22, 297–315. [Google Scholar] [CrossRef]

- Coltman, T.; Tallon, P.; Sharma, R.; Queiroz, M. Strategic IT alignment: Twenty-five years on. J. Inf. Technol. 2015, 30, 91–100. [Google Scholar] [CrossRef]

- Juiz, C.; Toomey, M. To govern IT, or not to govern IT? Commun. ACM 2015, 58, 58–64. [Google Scholar] [CrossRef]

- Mintzberg, H.; Waters, J.A. Of strategies, deliberate and emergent. Strateg. Manag. J. 1985, 6, 257–272. [Google Scholar] [CrossRef]

- Ambrosini, V.; Johnson, G.; Scholes, K. Exploring Techniques of Analysis and Evaluation in Strategic Management; Prentice Hall Europe: Hemel Hempstead, UK, 1998. [Google Scholar]

- Parker, M.M.; Benson, R.J.; Trainor, H.E. Information Economics: Linking Business Performance to Information Technology; Prentice-Hall: Upper Saddle River, NJ, USA, 1988. [Google Scholar]

- Ashurst, C.; Doherty, N.F.; Peppard, J. Improving the impact of IT development projects: The benefits realization capability model. Eur. J. Inf. Syst. 2008, 17, 352–370. [Google Scholar] [CrossRef]

- Ward, J.; Daniel, E. Benefits Management: How to Increase the Business Value of your IT Projects; John Wiley & Sons: Hoboken, NJ, USA, 2012. [Google Scholar]

- Meskendahl, S. The influence of business strategy on project portfolio management and its success—A conceptual framework. Int. J. Proj. Manag. 2010, 28, 807–817. [Google Scholar] [CrossRef]

- DeLone, W.H.; McLean, E.R. Information systems success: The quest for the dependent variable. Inf. Syst. Res. 1992, 3, 60–95. [Google Scholar] [CrossRef]

- DeLone, W.H.; McLean, E.R. The DeLone and McLean model of information systems success: A ten-year update. J. Manag. Inf. Syst. 2003, 19, 9–30. [Google Scholar]

- Gable, G.G.; Sedera, D.; Chan, T. Re-conceptualizing information system success: The IS-impact measurement model. J. Assoc. Inf. Syst. 2008, 9, 377. [Google Scholar] [CrossRef]

- Petter, S.; DeLone, W.; McLean, E. Measuring information systems success: Models, dimensions, measures, and interrelationships. Eur. J. Inf. Syst. 2008, 17, 236–263. [Google Scholar] [CrossRef]

- Bartenschlager, J.; Goeken, M. IT strategy implementation framework-bridging enterprise architecture and IT governance. In Proceedings of the 16th Americas Conference on Information Systems (AMCIS 2010), Lima, Peru, 12–15 August 2010; p. 400. [Google Scholar]

- Universitat Oberta de Catalunya: Strategic Plan 2014–2020. Available online: https://www.uoc.edu/portal/_resources/EN/documents/la_universitat/uoc-strategic-plan-2014-2020.pdf (accessed on 13 September 2019).

- Hall, L.; Stegman, E.; Futela, S.; Badlani, D. IT Key Metrics Data 2018: Key Industry Measures: Software Publishing and Internet Services Analysis: Current Year. Available online: https://www.gartner.com/en/documents/3832775/it-key-metrics-data-2018-key-industry-measures-software- (accessed on 13 September 2019).

- Sarkar, S. The role of information and communication technology (ICT) in higher education for the 21st century. Science 2012, 1, 30–41. [Google Scholar]

- Barber, M.; Donnelly, K.; Rizvi, S.; Summers, L. An avalanche is coming. In Higher Education and the Revolution ahead; Institute for Public Policy Research: London, UK, 2013; Volume 73. [Google Scholar]

- Altbach, P.G. Global Perspectives on Higher Education; JHU Press: Baltimore, MD, USA, 2016. [Google Scholar]

- Pucciarelli, F.; Kaplan, A. Competition and strategy in higher education: Managing complexity and uncertainty. Bus. Horiz. 2016, 59, 311–320. [Google Scholar] [CrossRef]

- Lowendahl, J.M.; Thayer, T.B.; Morgan, G.; Yanckello, R.A. Top 10 Business Trends Impacting Higher Education in 2018. Available online: https://www.gartner.com/en/documents/3843385/top-10-business-trends-impacting-higher-education-in-2010 (accessed on 13 September 2019).

- Grajek, S. Top 10 IT Issues, 2018: The Remaking of Higher Education. Available online: https://er.educause.edu/articles/2018/1/top-10-it-issues-2018-the-remaking-of-higher-education (accessed on 13 September 2019).

| Strategic Information System Planning (as it Was) | Strategy as Practice (as it Goes) |

|---|---|

| SISP (Strategy Planning) | SAP (Strategy making) |

| IT-Business Alignment | Digital transformation/fusion |

| Intended | Emergent |

| Focus on planning | Focus on implementation and learning |

| Outcome | Process |

| Deviation as failure | Deviation as adaptation |

| Comprehensive | Incremental |

| Static | Ongoing |

| Formal | Agile |

| IT functional capability | IS organizational capability |

| Business Executives and IT Staff | Executives and employees |

| Prescriptive | Contingent, configurational |

| Dimension | Key Concepts | Main References |

|---|---|---|

| Strategy | Alignment | Henderson and Venkatraman [14], Chan and Reich [71], Coltman et al. [72], Juiz and Toomey [73] |

| | Intended and realized strategies | Mintzberg and Waters [74], Chan et al. [15], Salmela and Spil [13], Vaara and Whittington [42], Kopmann et al. [16] |

| Performance | Benefits realization | Ambrosini et al. [75], Parker et al. [76], Ashurst et al. [77], Hunter et al. [17], Ward and Daniel [78] |

| | Program management | Dye and Pennypacker [18], Thiry [19], Meskendahl [79], Kopmann et al. [16] |

| Governance | Stakeholders’ satisfaction | Galliers [20], DeLone and McLean [80,81], Gable et al. [82], Petter et al. [83] |

| | Program management and governance | Dye and Pennypacker [18], Bartenschlager et al. [84], Thiry [19], ISACA [21] |

| # | Title | Budget 2015–2018 (€) |

|---|---|---|

| 1 | Customer and community relationships management (CRM) | 795,000 |

| 2 | Learning management environment (LMS) and learning applications | 280,000 |

| 3 | Mobile first: responsive web site and mobile apps environment | 1,297,000 |

| 4 | Enterprise data management (BI) | 810,000 |

| 5 | Student information system | 2,620,000 |

| 6 | Administration support (ERPs: finance, human capital, other) | 1,390,000 |

| 7 | Technology architecture and migration to the Cloud | 2,925,000 |

| 8 | User experience (UX) transformation | 280,000 |

| 9 | Digital empowerment and change management | 240,000 |

| 10 | Security and data privacy | 550,000 |

| | Total | 11,717,000 |

| | Strategy | Performance | Governance |

|---|---|---|---|

| Key concepts | Alignment. Deliberate and emergent strategies. | Program and project execution. Benefits realization. | Satisfaction of key stakeholders. Program and IT Governance. |

| Input and sources | Business Strategic Plan (2014–2020). Original IS Master Plan case study PMO execution reports. | PMO execution reports. KPI standard inventories of IT impact. Management reporting. | Online survey to managers and key users (115 respondents). Individual interviews to executives (23). (Source: report by external evaluator.) |

| Process | Qualified impact matrix. Overall analysis (2 iterations). Semi-structured interviews with top management (11). | Structured workshops with executives and managers for feedback and analysis (12). Lessons learned workshop (1) and individual report. | Results included for discussion and refinement in top management interviews and workshops. Internal discussion with sponsors and Project Steering Committee. |

| Participants | Members of the Project Steering Committee. Members of the Board of Executive Directors. | Business executives and managers (28). IT Project Leaders (15). | All. |

| Outcome | Summary of conclusions. | Individual files per project (10). Prioritized issue map for Project leaders. Summary of conclusions. | Summary of key values and major qualitative conclusions. |

| Timeframe | 15 June–30 July 2017. | 15 July–30 September 2017. | Survey: February 2017. Further analysis: September 2017. |

| Group | Role | Members |

|---|---|---|

| Steering Committee (SC) | Discuss and approve final and intermediate outcomes. Raise proposals to the Executive Board of the University. | CEO, Vice-Chancellor of Learning, COO, CFO, Dean of the Computer Science School, Leader of the PMO, Researcher |

| Project team (PT) | Gather and analyze data and documents, prepare and lead meetings and workshops, summarize conclusions and write reports and presentations. | Project Office of the ISMP (PMO), IT Demand Manager, Researcher |

| Project sponsors | Secure time and resources. Communicate and act in favor of the project. | COO, CIO |

| Project co-leaders | Plan, monitor and execute tasks. Prepare final deliverables. | Head of the PMO, Researcher |

| Researcher | Proposes methods and professional and scientific references. Co-leads the project team. Runs top individual interviews. | Lecturer and researcher in IS Management at the Computer Science Department |

| Input | Process | Outcome | Participants |

|---|---|---|---|

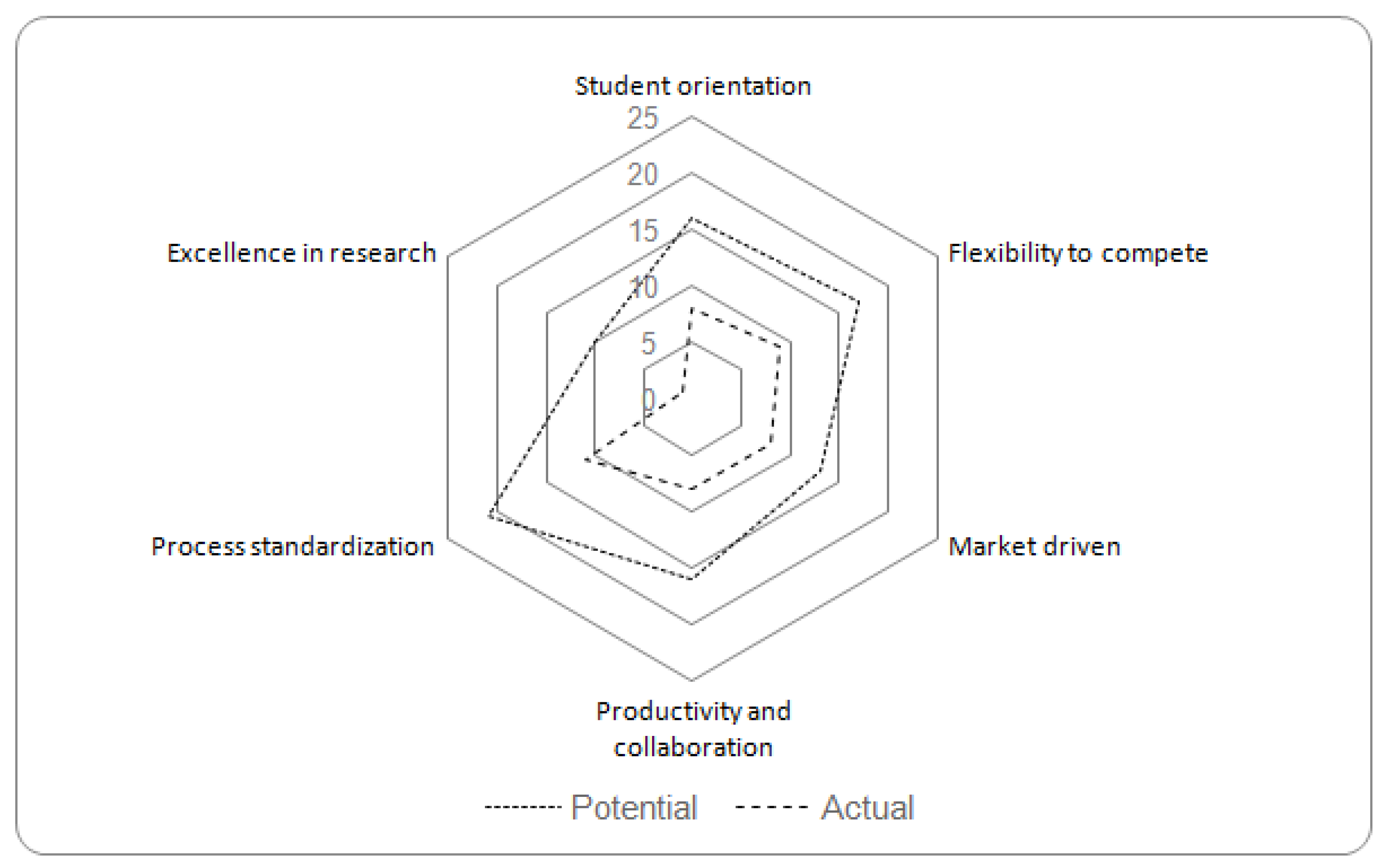

| WS 1. Strategic alignment: Initial outline; New initiatives; Current status | Interviews with management members; Comparison expected vs. actual; Impact matrix; Expert judgment | Radar chart; Summary and feedback of conclusions | Members of the SC; Members of the board of executive directors; Project directors |

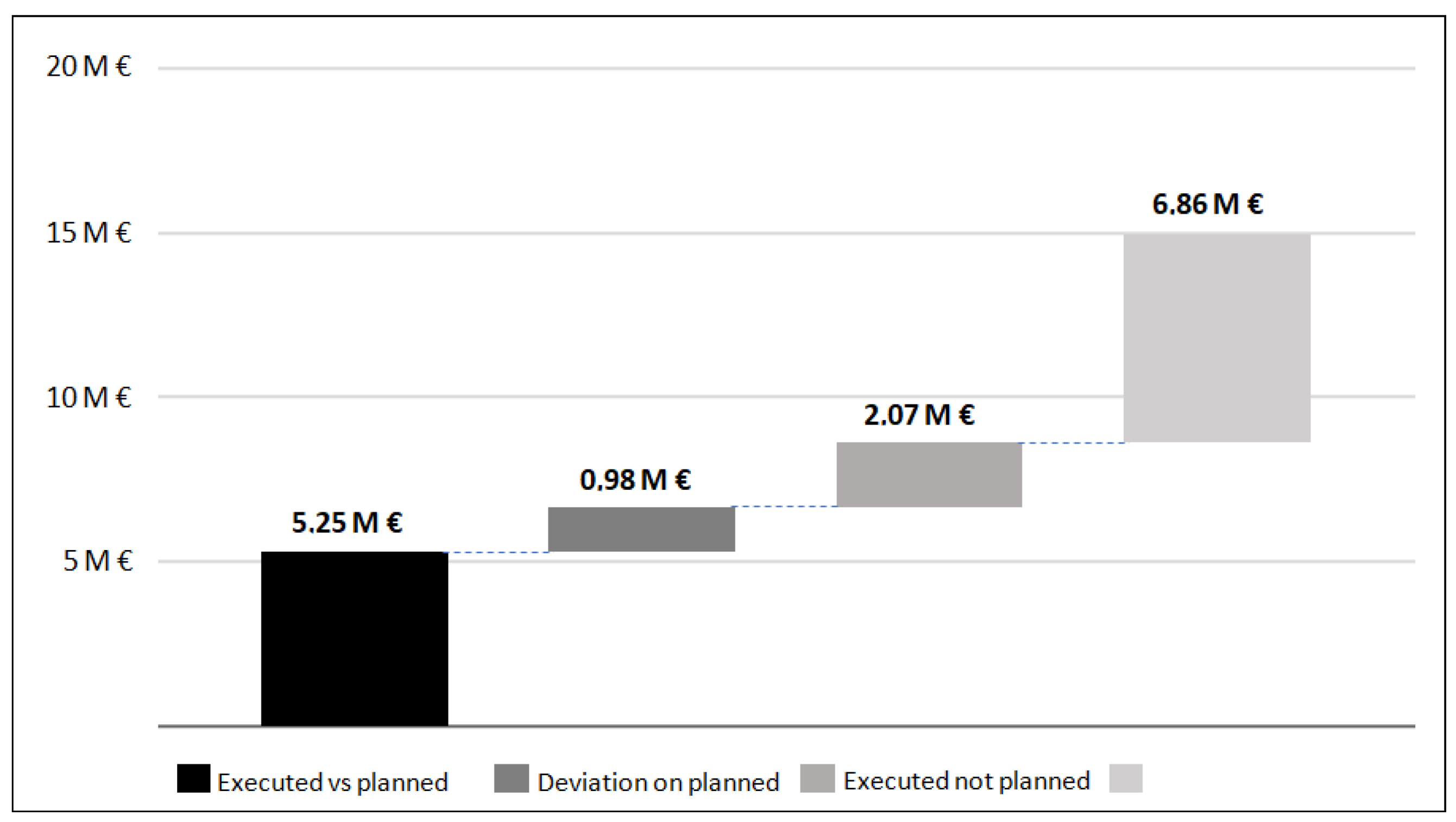

| WS 2. Program execution: Initial outline; Project breakdown; Current status | Normalization of outcomes and results; Comparison planned vs. actual; Definition of metrics; Production of indicators | Chronological description of activities; Outputs obtained; Normalized and aggregated indicators of scope, time and cost; Summary and adjustment of conclusions | IT Business Partners; IT Strategic Initiative Sponsors; IT Project managers; PMO |

| WS 3. Benefits realization: Initial outline; Project breakdown; Outcomes obtained | Definition of benefits; Definition of metrics; Production of indicators; Discussion with the business units in focus groups | Description of benefits; Benefits quantified and qualified; Summary and adjustment of conclusions | IT Business Partners; IT Strategic Initiative Sponsors; IT Project managers; Business unit executives; PMO |

| WS 4. Program management mechanisms: Description of program governance and management mechanisms and methodologies; Minutes of committee meetings | Interviews with management members; Analysis of minutes and other materials; Focus group and survey to IT project managers; Expert judgement | Chronological description of program management mechanisms; Results of the survey; Opinion making; Summary and feedback of conclusions | Members of the Steering Committee; Members of the board of executive directors; Business and IT executives; IT Project managers; PMO |

| WS 5. Key users’ satisfaction: Results of internal surveys; Internal data of complaints and incidents | Interviews with management members; Analysis of internal surveys; Discussion with the business units in focus group; Expert judgement | Selection of metrics; Summary and feedback of quantitative and qualitative conclusions | Members of the SC; Members of the board of executive directors; IT Head of Business Relationship; External consultant; PMO |

| # | Title | Scope | Completion (Scope) | Time | Completion (Budget) |

|---|---|---|---|---|---|

| 1 | Customer and community relationships management (CRM) | Planned | 55% | On time | 30% |

| 2 | Learning management environment (LMS) and learning applications | Reviewed | 54% | Delayed | 132% |

| 3 | Mobile first: responsive web site and mobile apps environment | Planned | 62% | Delayed | 66% |

| 4 | Enterprise data management (BI) | Planned | 54% | On time | 69% |

| 5 | Student information system (SIS) | Planned | 30% | Delayed | 46% |

| 6 | Administration support (ERPs: finance, human capital, other) | Planned | 40% | Delayed | 45% |

| 7 | Technology architecture and migration to the Cloud | Planned | 60% | On time | 54% |

| 8 | User experience (UX) transformation | Planned | 79% | On time | 155% |

| 9 | Digital empowerment and change management | Planned | 55% | On time | 36% |

| 10 | Security and data privacy | Planned | 69% | On time | 55% |

| | Mode (qualitative)/Average (quantitative) | Planned | 56% | | 59% |

| Positive | Negative |
|---|---|
| Well defined business strategy and needs. Strong and dedicated leadership of business managers. Clear technological solution. | Slow public tendering procedures. Large cross-departmental projects, especially those involving the faculty. Underestimation of integration and migration costs. |

| KPIs Measuring Value | KPIs Measuring Effort/Activity |
|---|---|
| Productivity and conversion rate of the call center. Enrolments from target countries. Increased multilingual portfolio. Personnel per student ratio. Regular users of Google Apps. Time for processing the payroll. Malicious IP addresses intercepted. IT expenditure per student/personnel. | User experience improvements. Availability and accessibility of new services in the classroom. New mobile apps. New management dashboards. Files managed with the new academic administration application. Expenditure in cloud infrastructure. Training sessions and tutorials. New contingency platform. |

| Question | Area: Administration | Area: Teaching & Research | Average |
|---|---|---|---|
| Awareness of the ISMP | 4.43 | 3.69 | 4.06 |
| Contribution of the ISMP to the corporate strategy | 5.07 | 4.50 | 4.78 |
| Contribution of the ISMP to the different functional areas | 5.02 | 4.05 | 4.53 |
| Contribution of the ISMP to my area | 4.64 | 3.88 | 4.26 |
| Information about the execution of the ISMP | 4.09 | 3.52 | 3.80 |
| Overall rating | 4.64 | 3.88 | 4.26 |

| Order | Issue | Value |
|---|---|---|
| 1 | Lack of project leaders and managers | 15 |
| 2 | Lack of planning of business resources allocated to projects | 10 |
| 3 | Lack of project quality control end to end | 9 |
| 4 | Lack of business sponsorship, especially in cross-departmental projects | 7 |
| 5 | Poor project definition | 7 |
| 6 | Resistance to change when business process transformation is required | 6 |

| Dimension | Results of the Assessment | New Arrangements |
|---|---|---|
| Strategic alignment: Intended strategies | Good for growth, stability and control. | Major weight for quality, learning and research. |
| Emergent strategies | Satisfying, but affecting other priorities. | Review of the portfolio every 18 months. |
| Performance: Program execution | Satisfying, but dependent on maturity. | Include maturity as a prioritization criterion. |
| Benefits realization | Aligned with main business priorities, uneven for the rest. | Progressively include business metrics for the approval of new investments. |
| Governance: Satisfaction | Uneven: good for top management, not so good for middle management and academia. | Set up a corporate IT Governance body, with greater participation of the academia. |
| Governance: Program management | Improve prioritization mechanisms, communication and project management. | New portfolio management arrangements. New IT operational model. New PMO. |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Rodríguez, J.-R.; Clarisó, R.; Marco-Simó, J.M. Towards a Framework for Assessing IT Strategy Execution. Computers 2019, 8, 69. https://doi.org/10.3390/computers8030069
