1. Introduction

Conventional sensors are unable to match the range of luminance that is captured by the human eye. Hence, the images obtained by conventional capturing devices suffer from a lower dynamic range compared to what is perceived by the human visual system. A natural scene may comprise both bright and dark regions. The light captured by the sensor depends on the exposure time and results in the capture of bright, dark or medium-range features. If the exposure time is short, only bright objects will be clearly visible in the captured image, while darker objects will appear black. If the exposure time is increased, dark objects start becoming visible; however, bright objects become over-exposed or washed out in the image. This means that if you take a photograph standing in a room with the intention of capturing both the objects inside the room and those outside the window, the camera will only be able to capture either what is inside the room or what is outside the window. Mimicking the human visual system, which is capable of seeing both inside the room and outside at the same time, researchers have proposed the generation of high dynamic range (HDR) images using multiple low dynamic range (LDR) images. The LDR images are captured at different exposure settings and are therefore capable of capturing objects with different intensities. Fusing them together results in an image in which both bright and dark objects are visible.

Generally, a sequence of successive LDR images (i.e., images captured sequentially in time and with varying exposure) is captured to generate an HDR image. The slight delay in changing the exposure setting and capturing the images may result in movement of objects in the scene. This movement results in ghosting in the generated HDR images. Ghosting may also be caused by camera movement. However, in this work we do not consider camera movement and assume that ghosting is caused by the movement of objects. Numerous deghosting methods have been proposed over the past decade and are discussed in detail in the next section. One of the most popular approaches is based on identifying ghost pixels (i.e., pixels which have moved between images) and excluding them from the process of HDR image generation. If these pixels are not replaced appropriately, their absence results in a hole in the generated HDR image. One solution is to replace these pixels by corresponding pixels from a reference image. This paper proposes the use of a spectral angle mapper (SAM) to identify ghost pixels and replace these pixels using a reference image with average exposure settings. The advantage of SAM is that it is illumination invariant and, hence, can be used to match the spectral signature of two pixels across images with varying exposures. This property makes SAM an ideal candidate for deghosting, even in the absence of image exposure values.
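The illumination invariance of the spectral angle can be illustrated with a minimal sketch. It treats each pixel as an RGB vector and measures the angle between two such vectors; scaling a pixel by a positive exposure factor leaves the angle unchanged. The function name `spectral_angle` and the handling of all-zero pixels are assumptions made for illustration and are not taken from the proposed method.

```python
import math


def spectral_angle(p, q):
    """Angle in radians between two pixel vectors (e.g. RGB triples)."""
    dot = sum(a * b for a, b in zip(p, q))
    norm_p = math.sqrt(sum(a * a for a in p))
    norm_q = math.sqrt(sum(b * b for b in q))
    if norm_p == 0.0 or norm_q == 0.0:
        # The angle is undefined for a black pixel. As an illustrative
        # convention (an assumption), two black pixels match and a black
        # pixel is maximally dissimilar to any non-black pixel.
        return 0.0 if norm_p == norm_q else math.pi / 2
    # Clamp to [-1, 1] to guard against floating-point round-off.
    cos_theta = max(-1.0, min(1.0, dot / (norm_p * norm_q)))
    return math.acos(cos_theta)


# The same surface seen at two exposures: only the brightness changes.
pixel = (0.2, 0.4, 0.1)
brighter = tuple(4.0 * c for c in pixel)
same_surface_angle = spectral_angle(pixel, brighter)      # close to 0

# A different surface has a different spectral signature.
other_surface_angle = spectral_angle(pixel, (0.4, 0.1, 0.3))  # clearly > 0
```

In this sketch a pixel pair would be flagged as a ghost candidate when its angle exceeds some threshold; the choice of threshold is left open here.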

The rest of the paper is organized as follows. Section 2 presents a brief overview of the state-of-the-art in the area of deghosting for the generation of HDR images. Section 3 presents the proposed methodology. Section 4 introduces the dataset used and presents a comparison with existing HDR deghosting methods. Conclusions are presented in Section 5.

2. Literature Review

Deghosting is a topic that has been researched extensively over the past decade. In a recent survey, Tursun et al. classified HDR image deghosting methods into global exposure registration, moving object removal, moving object selection and moving object registration [1]. Following the global exposure registration approach, Ward proposed a multi-resolution analysis method for removing the translational misalignment between captured images using pixel median values [2]. Cerman et al. [3] proposed to remove both translation- and rotation-based misalignment using correlation in the frequency domain. Gevrekci et al. proposed using the contrast invariant feature transform (CIFT) for geometric registration of multi-exposure images, as CIFT does not require photometric registration as a prerequisite for geometric registration [4]. Tomaszewska et al. [5] proposed extraction of spatial features using the scale invariant feature transform (SIFT) and the estimation of a planar homography between two multi-exposure images using random sample consensus (RANSAC). In [6], Im et al. proposed the estimation of an affine transformation between multi-exposure images by minimizing the sum of squared errors. In 2011, Akyuz et al. [7] proposed a method, inspired by Ward’s method, that tests pixel order relations. They identified that pixels having smaller intensity than their bottom neighbor and higher intensity than their right neighbor should have the same relation across exposures. They observed a correlation between such relations and minimized the Hamming distance between correlation maps for alignment.
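The idea behind median-based bitmaps and Hamming-distance comparison can be sketched as follows. Thresholding an image at its own median gives a bitmap that stays the same under a monotonic brightness change, so two exposures of a static scene produce matching bitmaps. This is only an illustration of the general principle; it is not the implementation of Ward [2] or Akyuz et al. [7], and the function names are hypothetical.

```python
import statistics


def median_threshold_bitmap(image):
    """Mark each pixel of a 2-D grayscale image as above (1) or not above (0) the median."""
    flat = [value for row in image for value in row]
    median = statistics.median(flat)
    return [[1 if value > median else 0 for value in row] for row in image]


def hamming_distance(bitmap_a, bitmap_b):
    """Count the positions at which two equally sized bitmaps differ."""
    return sum(
        a != b
        for row_a, row_b in zip(bitmap_a, bitmap_b)
        for a, b in zip(row_a, row_b)
    )


short_exposure = [[10, 20, 30], [40, 50, 60], [70, 80, 90]]
# A longer exposure of the same static scene (no clipping in this toy example).
long_exposure = [[2 * value for value in row] for row in short_exposure]

static_distance = hamming_distance(
    median_threshold_bitmap(short_exposure),
    median_threshold_bitmap(long_exposure),
)
```

An alignment search in this spirit would evaluate the distance for several candidate shifts and keep the shift with the smallest value.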

To address the issue of object movement, researchers have focused on identifying and removing moving objects from multiple exposures. In this regard, Khan et al. [8] proposed the iterative removal of moving objects from HDR images. This was done by estimating the probability that each pixel is a background pixel (i.e., belongs to a static region) and separating it from non-static pixels. Granados et al. [9] proposed the minimization of an energy function comprising data, smoothness and hard constraint terms using graph cuts. Silk et al. [10] proposed the estimation of an initial motion mask using the absolute difference between two exposures. The motion mask was further refined using over-segmented super-pixels, which were categorized into static or moving regions and assigned less or more weight during HDR generation, respectively. Zhang et al. proposed an HDR generation method utilizing gradient domain-based quality measures [11]. They proposed using visibility and consistency scores, assigning a higher visibility score to pixels with larger gradient magnitudes and a higher consistency score if corresponding pixels had the same gradient direction.

Kao et al. [12] estimated moving pixels using block-based matching between two exposures with a ±2 EV difference. This meant that the intensities in the image with the longer exposure should equal those of the shorter exposure scaled by a factor of 4; i.e., $L_2 = 4L_1$. If this did not hold, it was taken as an indication of potential movement, and the pixel was replaced by a scaled version of the pixel from the shorter-exposure image. In [13], Jacobs et al. proposed using uncertainty images, which are generated by calculating a variance map and entropy around object edges and checking if entropy changes for moving objects. The motion regions were replaced by the corresponding region of the input exposure with the least amount of saturation and the longest exposure time. In [14], Pece and Kautz proposed motion-region detection using bitmap movement detection (BMD) based on median thresholding, followed by refinement of the motion map using morphological operators. In [15], Lee et al. proposed using the histogram of pixel intensities to detect ghost regions. They identified large differences in the rank of pixels as a ghost region. In [10], Silk et al. proposed addressing movement due to fluttering or fluid motion by maximizing the sum of pixel weights in the region affected by motion. In [16], Khan et al. proposed a simple deghosting method which assumed that a group of $N$ pixels with intensity $I_1$ should have intensity $I_2$ in a second exposure. $I_2$ is sampled as the median value for the same group of $N$ pixels in the second exposure. If $M$ pixels in the second exposure differ from $I_2$ by more than a threshold, they are assigned the value $I_2$ to remove motion. Shim et al. addressed the issue of avoiding saturated pixels while generating an HDR image [17]. They proposed a scaling function to estimate the scaling of static unsaturated pixels between each input image and a reference exposure. In [18], Liu et al. proposed the use of the dense scale invariant feature transform (DSIFT) for motion detection. Unlike SIFT, DSIFT is neither scale nor rotation invariant; however, it provides a per-pixel feature vector and, hence, each pixel in two images can be checked for similarity. In a patch-based approach [19], Zhang et al. proposed the calculation of correlation between local patches of reference and input images. The authors referred to the motion-free images as latent images. They further proposed the preservation of details by optimizing a contrast-based cost function. Chang et al. proposed the identification of areas with motion by calculating motion weights using a bidirectional intensity map and generated latent images using weight optimization in the gradient domain [20]. In [21], Zhang et al. proposed the estimation of inter-exposure consistency using histogram matching. To further restrain outliers from contributing to the generation of the HDR image, they proposed using intra-consistency, which is motion detection at the super-pixel level. This helped assign similar weights to regions with similar intensities and structures.
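The exposure relation described above for Kao et al. [12] can be made concrete with a small sketch. A difference of $k$ EV corresponds to a factor of $2^k$ in exposure, so for 2 EV the longer-exposure value is expected to be 4 times the shorter-exposure value. The sketch assumes linear, unsaturated pixel values; the function names and the relative tolerance of 10% are hypothetical choices, not values from [12].

```python
def expected_long_value(short_value, ev_difference=2.0):
    """Value expected in the longer exposure for a static, unsaturated pixel."""
    return short_value * 2.0 ** ev_difference


def is_exposure_consistent(short_value, long_value, ev_difference=2.0, tolerance=0.1):
    """Check whether a pixel pair follows L2 = 2**EV * L1 within a relative tolerance."""
    expected = expected_long_value(short_value, ev_difference)
    return abs(long_value - expected) <= tolerance * expected


def deghost_pixel(short_value, long_value, ev_difference=2.0, tolerance=0.1):
    """Keep a consistent pixel; otherwise fall back to the scaled short-exposure value."""
    if is_exposure_consistent(short_value, long_value, ev_difference, tolerance):
        return long_value
    return expected_long_value(short_value, ev_difference)


static_pixel = deghost_pixel(0.10, 0.41)   # consistent, kept as observed
moving_pixel = deghost_pixel(0.10, 0.80)   # inconsistent, replaced by 4 * 0.10
```

A block-based variant would apply the same test to block averages rather than to individual pixels.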

Raman et al. proposed using the first few horizontal lines along the image border to identify the intensity map function (IMF) [22]. Next, different rectangular patches were compared between the input and reference images. If a patch did not adhere to the IMF, it was assumed to contain motion. Sen et al. [23] proposed patch-based minimization of an energy function comprising a bidirectional similarity measure. Li et al. proposed a simple approach based on a bidirectional pixel similarity measure in [24]. In [25], Srikantha et al. assumed that input images have a linear camera response function (CRF). Only non-static pixels will not adhere to the linear CRF and, hence, will have smaller values when singular value decomposition (SVD) is applied to them. Sung et al. proposed an approach based on zero-mean normalized cross-correlation to estimate motion regions [26]. Wang et al. proposed the normalization of each input image with a reference image (exposure) in Lab color space [27]. The ghost mask was obtained using a threshold on the absolute difference map between the reference and normalized input images in the Lab color space. As the ghost masks contained holes, they proposed the use of morphological operations for refining the masks.
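Zero-mean normalized cross-correlation (ZNCC), mentioned above in connection with Sung et al. [26], is a standard similarity measure that is insensitive to gain and offset changes between patches. The sketch below computes it for two flattened patches; it illustrates the measure itself and is not the motion-detection pipeline of [26]. Returning 0 for a constant patch is an assumed convention.

```python
import math


def zncc(patch_a, patch_b):
    """Zero-mean normalized cross-correlation of two equally sized, flattened patches."""
    n = len(patch_a)
    mean_a = sum(patch_a) / n
    mean_b = sum(patch_b) / n
    dev_a = [value - mean_a for value in patch_a]
    dev_b = [value - mean_b for value in patch_b]
    numerator = sum(x * y for x, y in zip(dev_a, dev_b))
    denominator = math.sqrt(sum(x * x for x in dev_a) * sum(y * y for y in dev_b))
    if denominator == 0.0:
        # A constant patch has no structure to correlate (assumed convention).
        return 0.0
    return numerator / denominator


reference_patch = [1.0, 2.0, 3.0, 4.0]
# Same structure under a different gain and offset: correlation stays at 1.
brighter_patch = [2.0 * value + 5.0 for value in reference_patch]
static_score = zncc(reference_patch, brighter_patch)

# Reversed structure: correlation drops to -1, hinting at a content change.
changed_score = zncc(reference_patch, list(reversed(reference_patch)))
```

A low score between corresponding patches of two exposures would then mark a candidate motion region.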

In [28], Hossain et al. proposed the estimation of dense motion fields using optical flow to minimize forward and backward residuals. They believed that an effective intensity mapping function could be estimated if each pixel in each exposure was assigned occlusion weights using histograms. This method falls in the category of methods focusing on moving object registration. Jinno et al. proposed modelling displacement, occlusion and saturation regions using Markov random fields and minimized an energy function comprising the three defined terms [29]. The resulting motion estimation, along with the detection of regions affected by occlusion or saturation, resulted in the development of an effective deghosting technique during HDR image generation. In [30], Hafner et al. proposed the minimization of an energy function that simultaneously estimated HDR irradiance along with displacement fields. The energy function comprised the spatial smoothness term of displacement and spatial smoothness term of irradiance and displacement fields, which were used to calculate the difference between the predicted and actual pixel values at a given location.

The literature review presented above is not exhaustive, and the sheer volume of available material highlights the interest of the community in this problem. Most of the techniques presented above are computationally intensive, as observed by Tursun et al. in [1]. Therefore, they proposed a simple deghosting method and demonstrated that it performed on par with more computationally intensive methods. In the same context, a simple deghosting method is proposed in the next section that is neither computationally demanding nor dependent on exposure information.

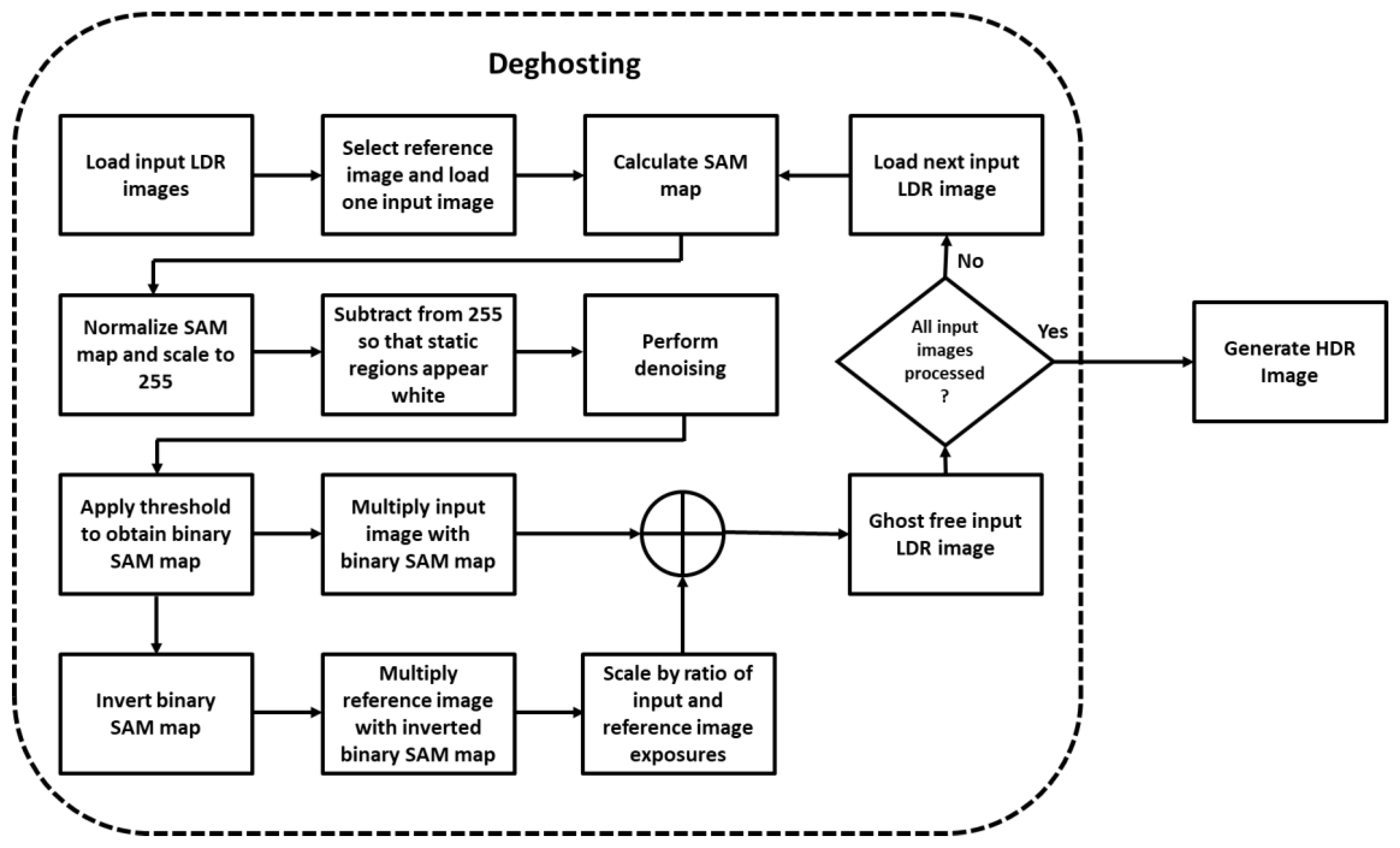

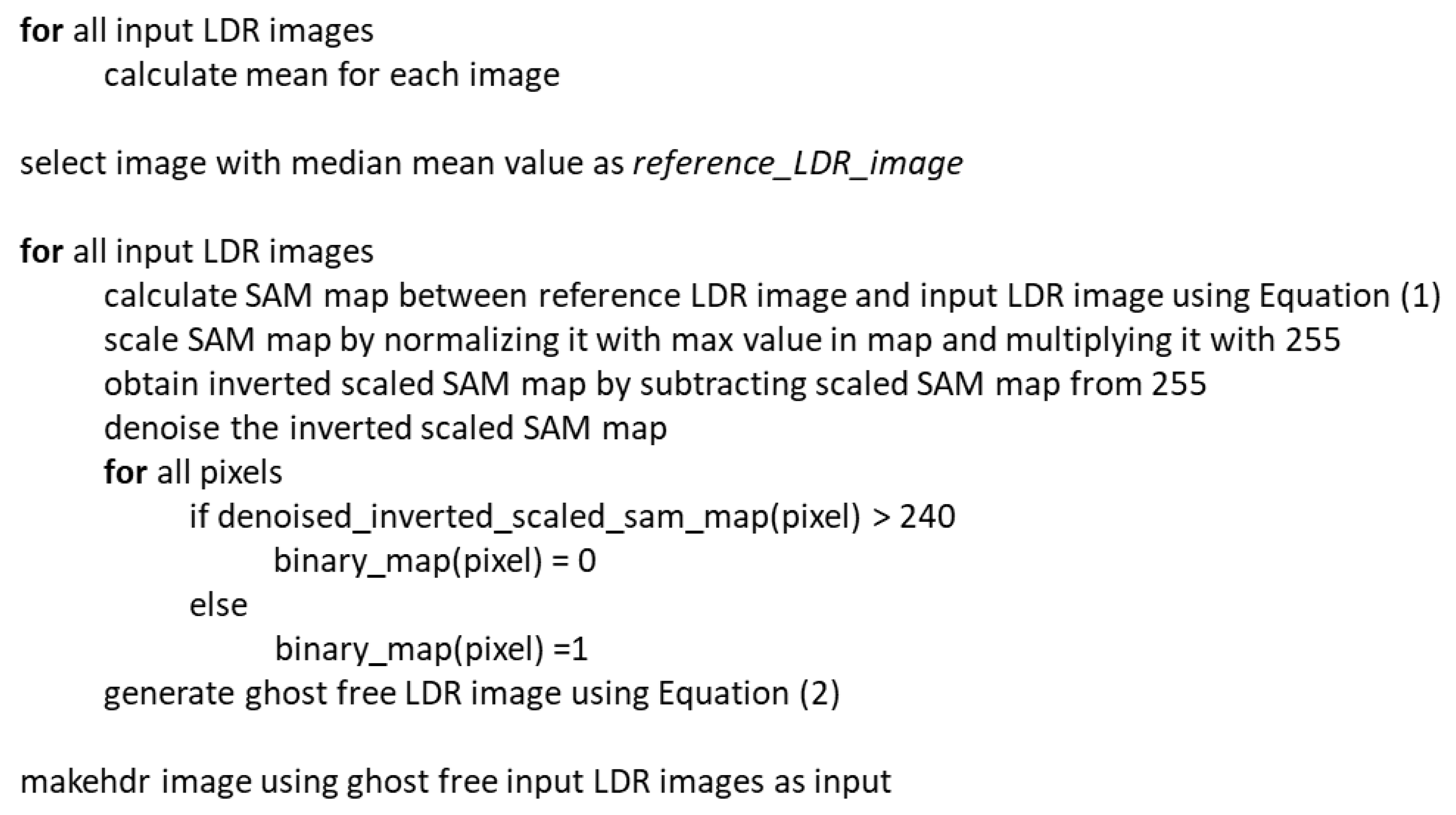

4. Experimentation and Results

To assess the performance of the proposed deghosting method, we used the dataset provided by Tursun et al. [

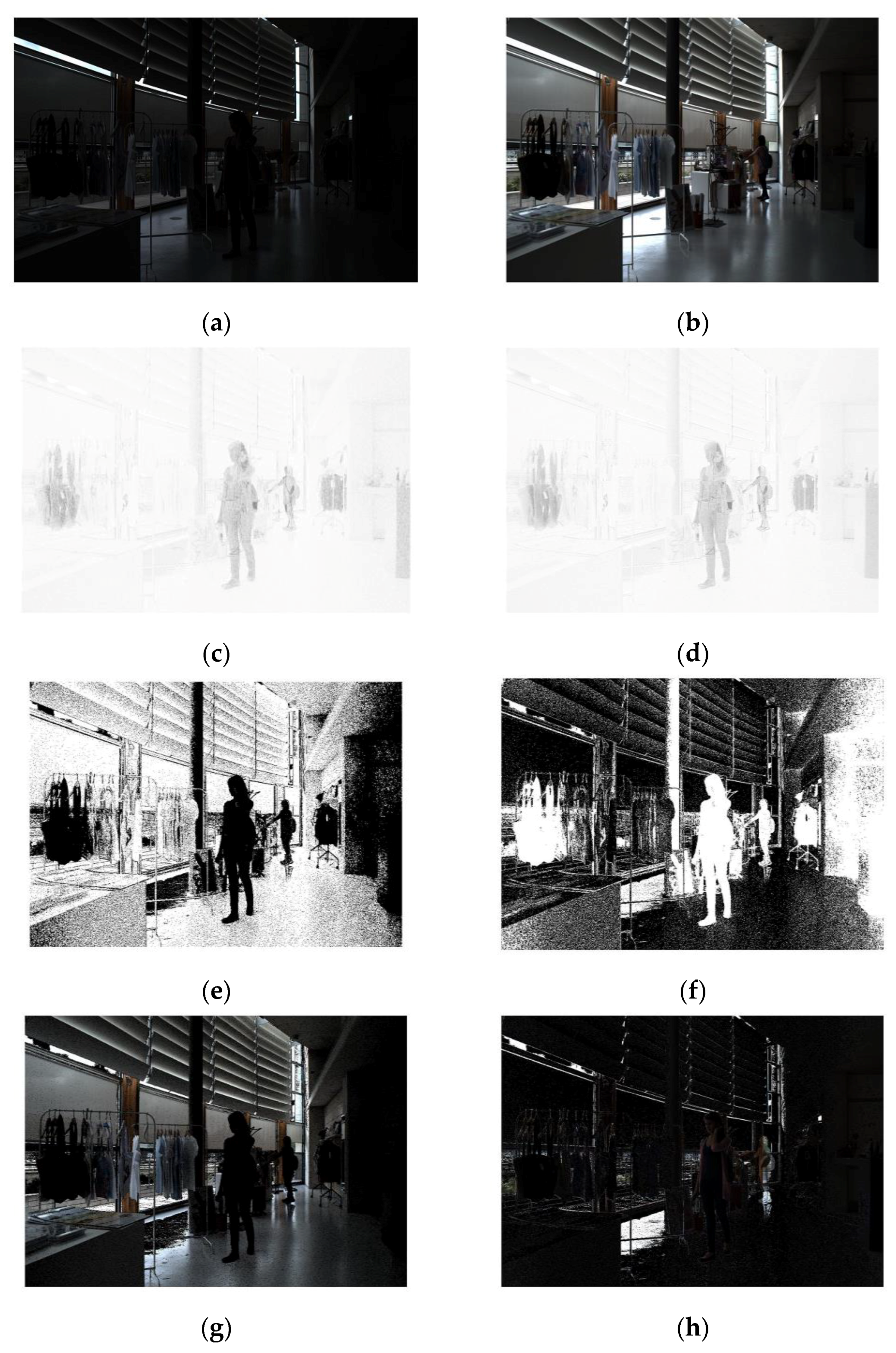

1]. The dataset comprises of 10 LDR image sets, with each set containing 9 images captured using an increasing exposure time setting. The data set images are globally registered; i.e., the camera stays still for the set of images, although the objects are not static. The ten images are titled “Cafe, Candles, Fastcars, Flag, Gallery1, Gallery2, Libraryside, Shop1, Shop2, PeopleWalking”. The results for four of the ten images are presented in

Figure 4,

Figure 5,

Figure 6 and

Figure 7 for subjective comparison and quality assessment, while the objective quality assessment results for all ten images are presented in Table 2.

For objective quality assessment, we employed Tursun et al.’s [36] deghosting quality assessment measures. These indices were selected because they provide separate assessments for the dynamic range of HDR images and errors in magnitude and direction of gradients. These indices can be combined and presented as a unified score; however, keeping them separate helps relate them to subjective evaluation. The proposed algorithm (P) was compared against five alternatives, selected based upon their performance, as determined in [1,37], and their readily available implementations. These are no deghosting (N), the deghosting methods proposed by Tursun et al. [1] (T), Pece and Kautz (K) [14] and Sen et al. (S) [23], and the deghosting option available in Picturenaut software version 3.2 [38] (C). The implementation of [23] was obtained from the authors’ website, while the implementation of [14] was made available by the authors of [39]. All experimentation was done using MATLAB R2018a. With the exception of the results of Pece and Kautz (K) and Picturenaut (C), all HDR images were generated using the ‘makehdr’ function of MATLAB. For these results, deghosting was done as proposed by their respective algorithms in MATLAB, and then the ‘makehdr’ function was used to construct the HDR image. To visualize the results, all HDR images were tone mapped using the tone mapping function provided in MATLAB. Quality assessment was done using the input LDR images and the HDR results. Alongside quality, we also compared the time required by these methods to generate the HDR images and observed that, on average, (N) required 2.9 s, (C) required 8.7 s, (T) required 10.2 s, (K) required 17.1 s, (P) required 25.3 s and (S) required more than 4 min.

4.1. Subjective Assessment

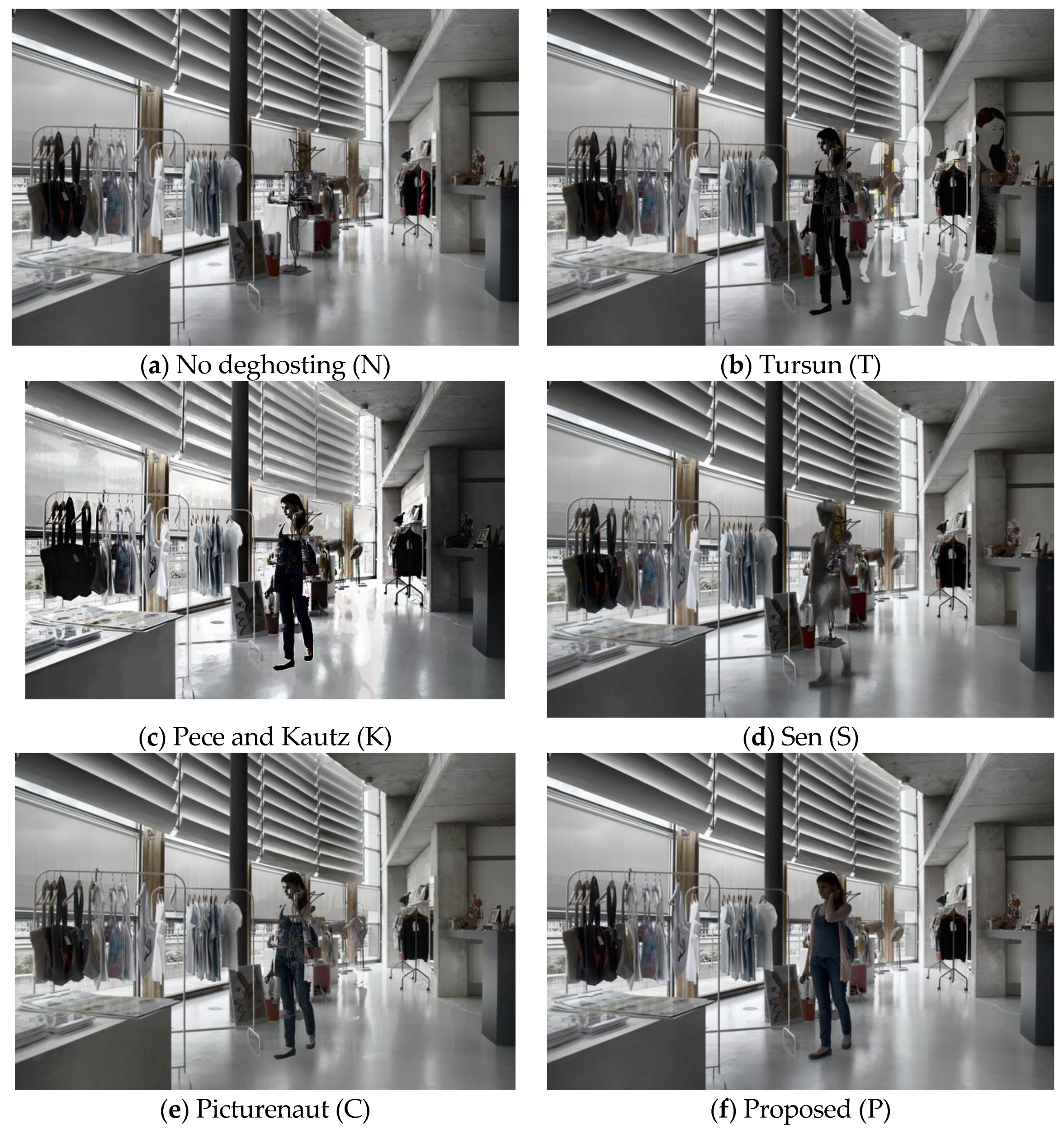

To perform a subjective comparison of the proposed deghosting algorithm, we visually inspected the HDR outputs (N, T, K, S, C and P) after tone mapping with the function provided in MATLAB. The same tone mapping method is used throughout to avoid any inconsistencies that may be caused by using different tone mapping operators. The results for the ‘Cafe’ image are presented in Figure 4. Looking at the no-deghosting (N) result in Figure 4a, it appears as if the image does not suffer from ghost artifacts. However, a zoom of the image clearly shows that the heads of people in the image appear blurred. The result produced by Tursun et al.’s [1] algorithm has ghost artifacts in it. This is shown in Figure 4b and is further highlighted in the zoomed images. The Pece and Kautz method (K) performs better at deghosting but suffers from incomplete objects, as is evident from the objects in Figure 4c. The result obtained using Sen et al.’s method appears to produce better deghosting, and objects do not have holes in them. However, the pixels demonstrating motion are not as sharp as those produced by the proposed method. Picturenaut with the deghosting option produces an HDR image in which the heads of both ladies are clearly visible, but the image still contains noise and suffers from incomplete objects, as shown in Figure 4e. Figure 4f presents the results of the proposed deghosting method, and the two ladies can be clearly seen in the figure. Similarly, the couple standing next to the bar is visible in the image without ghosting. Thus, the best result is obtained using the proposed method.

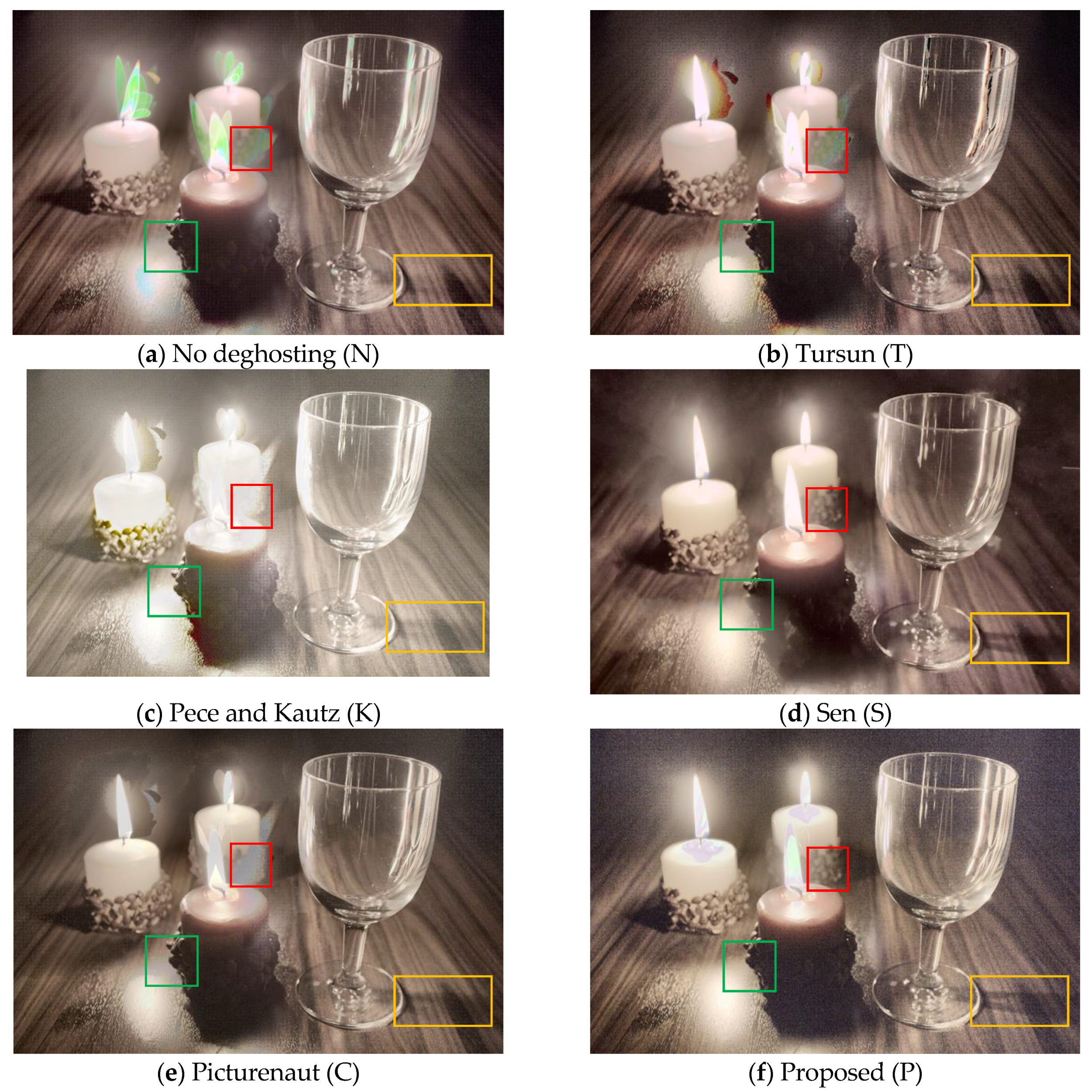

The image set titled ‘Candles’ is challenging as the input LDR images not only have movement but also have illumination variation. A red box is used to highlight the difference between the compared algorithms. Except for the results shown in Figure 5d,f, all the other images suffer from ghosting as the texture of the candle stand is not visible. A green box is used to demonstrate both deghosting and the dynamic range of the resultant images. Comparing Figure 5d, obtained using Sen et al.’s deghosting, and Figure 5f, obtained using the proposed deghosting, it can be observed that the image presented in Figure 5f is slightly clearer and sharper compared to the image in Figure 5d. Looking at the yellow rectangles, it can be observed that the shadow of the glass is hardly visible in images obtained by no deghosting, Tursun et al.’s method, Pece and Kautz’s method and Picturenaut software. The shadow of the glass can be clearly seen in Figure 5d,f, thus indicating that they may have a higher dynamic range compared to the other images. However, looking at the overall quality of these two images, Figure 5f seems to have slightly higher noise in dark regions. Also, the flame and candle wick are more visible in the result obtained by Sen et al.’s method.

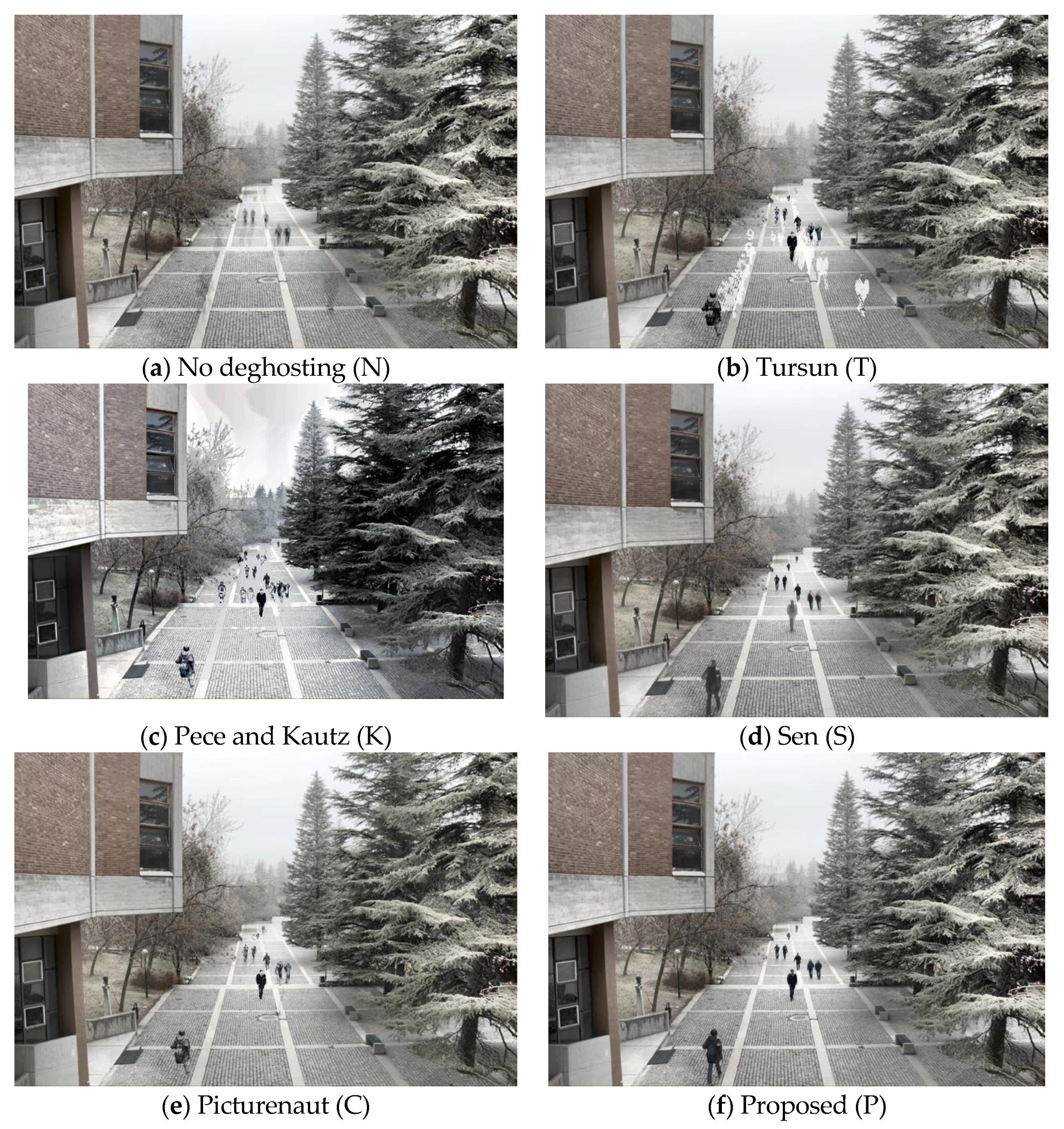

Observing the results presented in Figure 6 and Figure 7, it is clear that the best deghosting results are produced by the proposed method. It may appear that there are no ghost artifacts in Figure 6a; however, a closer inspection of the image reveals a marginally visible silhouette in various parts of the image. These ghost artifacts are clearer in Figure 7a. Ghost artifacts are clearly visible in Figure 6b and Figure 7b, obtained using Tursun et al.’s method [1]. The results obtained using Picturenaut software, in Figure 6e and Figure 7e, contain both ghost artifacts and holes. The ghost artifact seen in Figure 6e seems to have reduced color information, whereas the ghost artifacts in Figure 7e are clearly visible against a bright background. Methods (K) and (S) seem to present similar issues with deghosting for the person in the ‘Shop2’ image. The person does not appear complete and the colors do not appear natural. In this regard, the proposed method presents the best result among the compared methods, as shown in Figure 7f. Similarly, for the image in which people are walking, the proposed method seems to perform appropriately, removing ghosting and making the person in the center of the image appear clearly. Sen et al.’s method produces a nearly similar result for the rest of the image; however, the person in the center of the image is not clear and appears blurred.

A subjective assessment of the results suggests that the proposed method outperforms the prior state-of-the-art methods it is compared with. However, subjective assessment is user dependent and, therefore, it is better to assess the quality objectively. In this regard, we have assessed the quality of the proposed method using three quantitative measures: dynamic range, difference in magnitude of gradient and difference in direction of gradient, as proposed by Tursun et al. in [36].

4.2. Objective Assessment

In [36], the authors proposed the calculation of the dynamic range of non-static pixels. This was proposed to avoid the influence of static regions, as the dynamic range from them could make the dynamic range contribution from non-static regions insignificant. The authors proposed estimation of dynamic regions $DR(p)$ by observing if $DR'(p)$ is greater than a tolerance threshold $\tau = 0.3$. The authors estimated the threshold value by experimentation and defined $DR'(p)$ as

where $p$ represents the pixel location, $c$ the RGB channel, $E_n$ the input LDR image (exposure image), $h$ is a function returning the Euclidean distance between $E_n$ and $E_{n+1}$, and $W_{n,n+1}$ attenuates the pixels which are under- or over-exposed.

The quality of dynamic range is finally calculated using

where $Q_D$ represents the dynamic range quality measure, $I$ represents the HDR image and where 1% of pixels are dropped from the calculations to obtain a stable result [1]. The results of dynamic range are presented in Table 1. The higher the dynamic range, the better the quality of the HDR image. For clarity, the best values for each image are presented in green. From the table, it is clear that the dynamic range of the HDR image obtained using the proposed deghosting is better than the dynamic range of the HDR image generated without deghosting, thus highlighting that the proposed deghosting algorithm does not reduce the dynamic range of the HDR image. It is important to note the dynamic range of the HDR image after deghosting, because if only the reference image is selected and all other images are discarded, then there will be no ghost artifacts in the HDR image. However, the dynamic range of the HDR image would be severely reduced. This is not the case with the proposed method, as is evident from the results presented in Table 1.
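A rough way to see why extreme pixels are dropped before measuring dynamic range is sketched below. The sketch assumes that dynamic range is expressed as the difference of base-10 logarithms of the largest and smallest remaining luminance values, and that a fixed fraction of pixels is trimmed from each end; both are assumptions made for illustration, and the exact definition of $Q_D$ in [36] may differ. The function name `log_dynamic_range` is hypothetical.

```python
import math


def log_dynamic_range(luminances, drop_fraction=0.01):
    """Log10 ratio of the brightest to the darkest luminance after trimming outliers.

    Assumption: `drop_fraction` of the positive values is removed from each
    end of the sorted list before the ratio is taken.
    """
    values = sorted(v for v in luminances if v > 0.0)
    trim = int(len(values) * drop_fraction)
    kept = values[trim:len(values) - trim]
    if not kept:
        raise ValueError("no pixels left after trimming")
    return math.log10(kept[-1]) - math.log10(kept[0])


# Two outlier pixels dominate the untrimmed estimate.
luminances = [1e-6] + [1.0] * 98 + [1e6]
untrimmed = log_dynamic_range(luminances, drop_fraction=0.0)   # about 12
trimmed = log_dynamic_range(luminances, drop_fraction=0.01)    # 0.0
```

In the measure described above, only pixels inside the dynamic regions $DR(p)$ would be passed to such a computation.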

Comparing the dynamic range of HDR images obtained using the method proposed by Sen et al. [23], it can be observed from Table 1 that the dynamic range of the HDR images of the ‘Flag’ and ‘Gallery1’ data sets is slightly higher for Sen et al.’s method than for the proposed method. One possible reason for this could be that these test images are relatively bright compared to the other images in the dataset and that Sen et al.’s method works better on brighter images than the proposed method.

In [36], the authors also hypothesize that neither should an HDR image have gradients that are not present in the LDR input images, nor should it be missing gradients that are present in the LDR images. To assess this, the authors propose to calculate the change in magnitude of gradients as:

where $\nabla E$ represents the Sobel operator-based gradient map of the input LDR (exposure) image while $\nabla I$ represents the gradient map for the HDR image. The overbar indicates a mean value. The denominator term ensures that the result is normalized to the range [0,1]. Similar to the gradient magnitude quality assessment, the authors propose the calculation of the gradient orientation quality using the following equation:

where they propose the measurement of the minimum angle between the directions of gradient vectors and divide the result by $\pi$, normalizing the result to the range [0,1]. The authors proposed to use a 5-level multi-resolution pyramid for the gradient magnitude and orientation calculations.
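The normalization of the direction difference described above can be sketched for a single pair of gradient vectors. The sketch computes the smallest angle between two 2-D gradient directions and divides it by $\pi$, so identical directions give 0 and opposite directions give 1. It covers only this per-pixel step, not the Sobel filtering, averaging or pyramid of [36], and the function name is hypothetical.

```python
import math


def direction_difference(gradient_a, gradient_b):
    """Minimum angle between two 2-D gradient vectors (gx, gy), divided by pi."""
    angle_a = math.atan2(gradient_a[1], gradient_a[0])
    angle_b = math.atan2(gradient_b[1], gradient_b[0])
    difference = abs(angle_a - angle_b) % (2.0 * math.pi)
    # Take the shorter way around the circle so the result lies in [0, pi].
    if difference > math.pi:
        difference = 2.0 * math.pi - difference
    return difference / math.pi


same_direction = direction_difference((1.0, 0.0), (2.0, 0.0))     # identical directions
perpendicular = direction_difference((1.0, 0.0), (0.0, 1.0))      # a quarter turn apart
opposite = direction_difference((1.0, 0.0), (-1.0, 0.0))          # opposite directions
```

Note that a zero gradient has no defined direction; `math.atan2(0, 0)` returns 0, so such pixels would need separate handling in a full implementation.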

The quantitative analysis of gradient magnitude and direction indicates that the proposed method (P) generates the best results. The results are presented in Table 2, where each column represents the quality index for each of the tested methods. The results for the proposed scheme are the best, and hence appear in green, for all images except ‘Fastcars’, where the method proposed by Sen et al. [23] outperforms the proposed method. For all other images, the proposed method (P) has a lower gradient magnitude and direction difference than the rest of the methods. This is in accordance with the subjective assessment of results, where the proposed method removes ghost artifacts better than other methods. The quality assessment measures $Q_{Gmag}$ and $Q_{Gdir}$ require that no artifacts appear in the HDR image and also that the gradients of the input exposure images should be represented in the HDR image. The quantitative results indicate that this is best performed by the proposed method. This can be illustrated by visually inspecting the results presented in Figure 7. From Figure 7b, it can be seen that there are approximately seven single people or couples walking, as their tracks are visible. The gradient magnitude difference of (K) is the highest, and correspondingly the most artifacts appear in the image presented as Figure 7c. Both (C) and (S) have holes or blurred individuals, and hence they have a higher gradient magnitude difference value compared to the reference result (P). Although the gradient magnitude difference results for no deghosting (N) are closer to those of the proposed method, the (N) result suffers from visual artifacts. It may have a lower value since it adheres to the condition of having the gradients of the input LDR (exposure) images present in the HDR image.

The authors of [1] demonstrated a high correlation between their proposed measures and existing state-of-the-art deghosting quality assessment methods. Hence, it may be inferred that evaluating the methods presented in this work with other quality assessment methods would lead to similar results.