Hardware-Assisted Secure Communication in Embedded and Multi-Core Computing Systems

Abstract

1. Introduction

2. Related Work

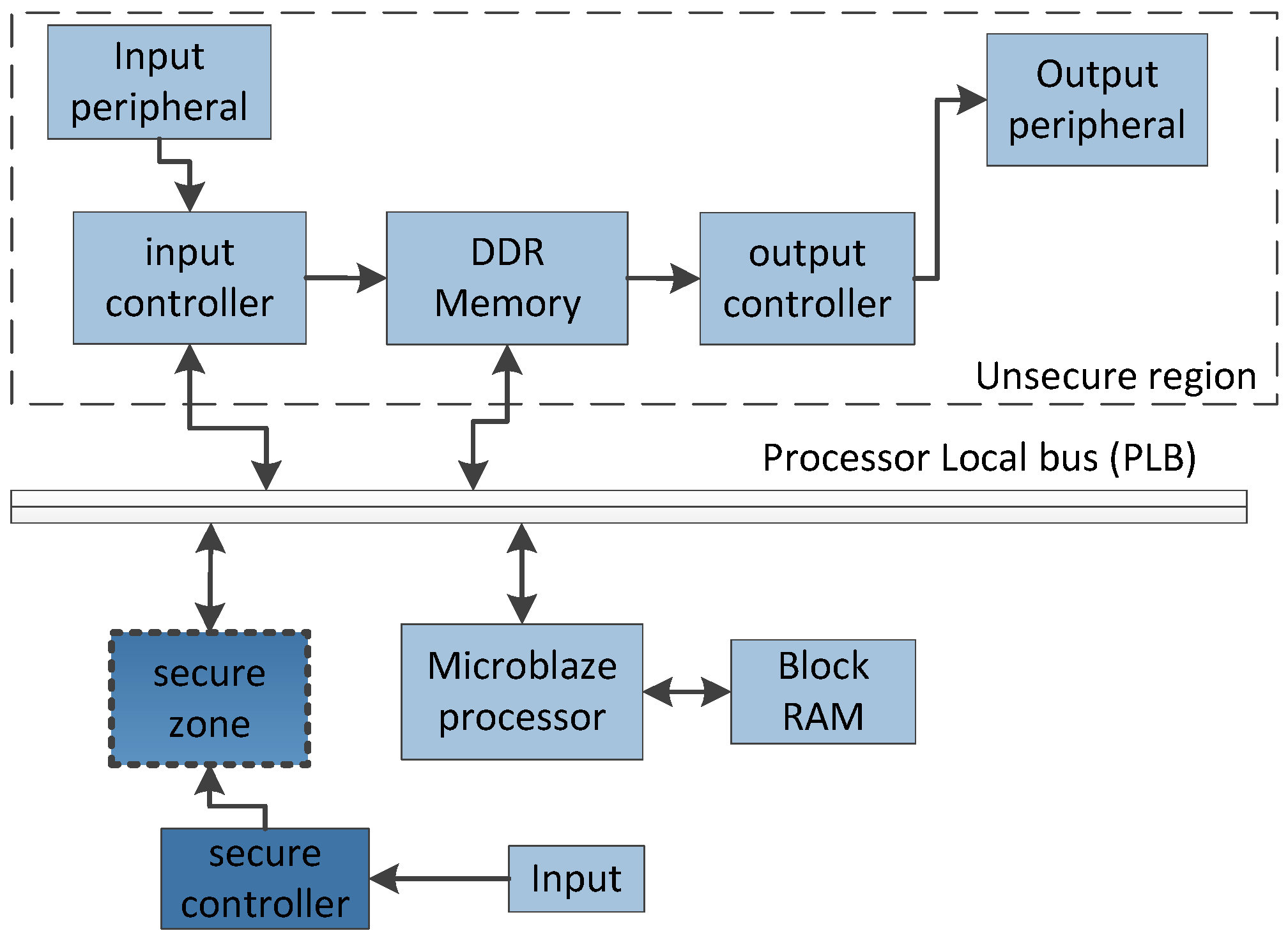

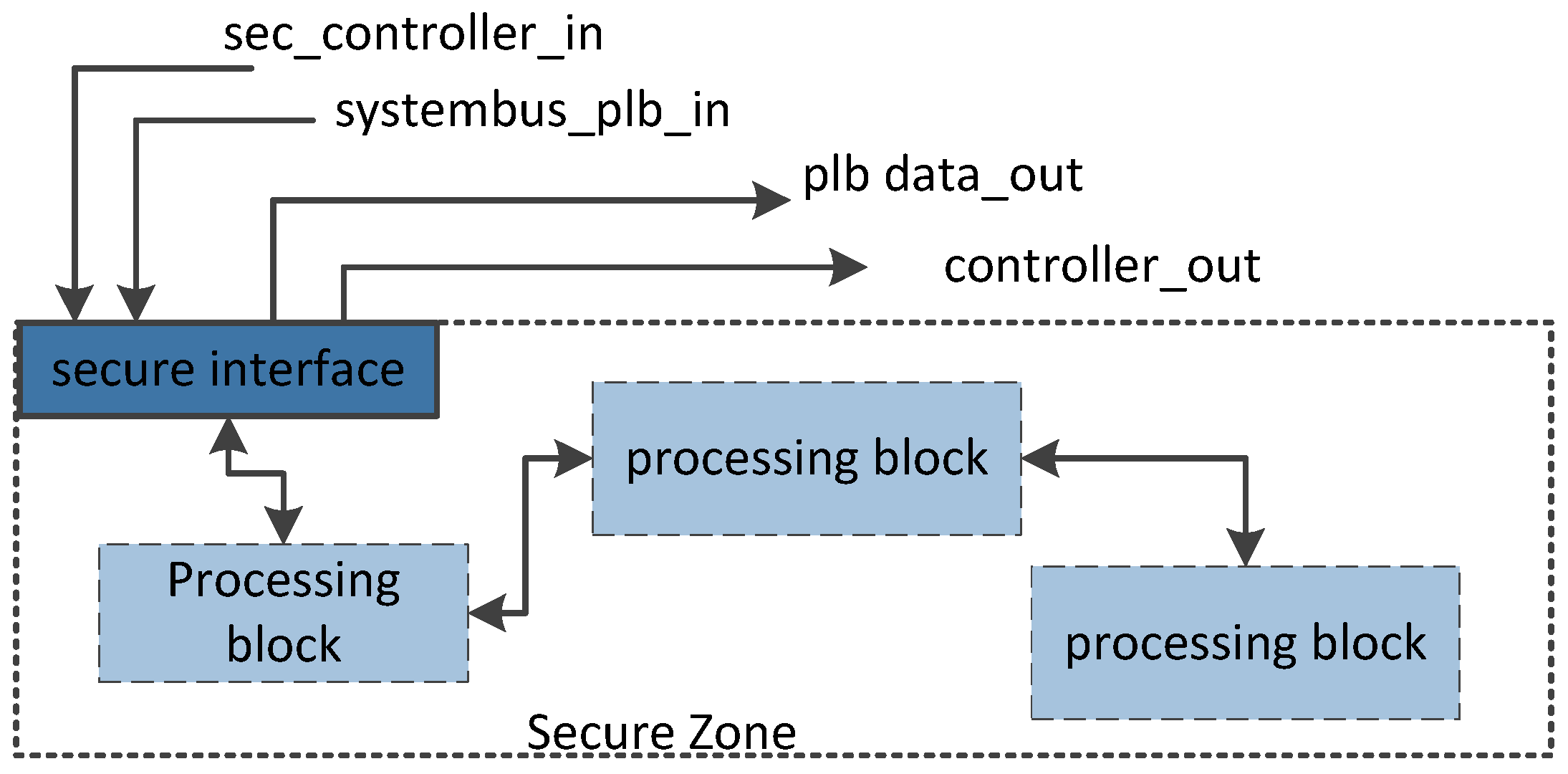

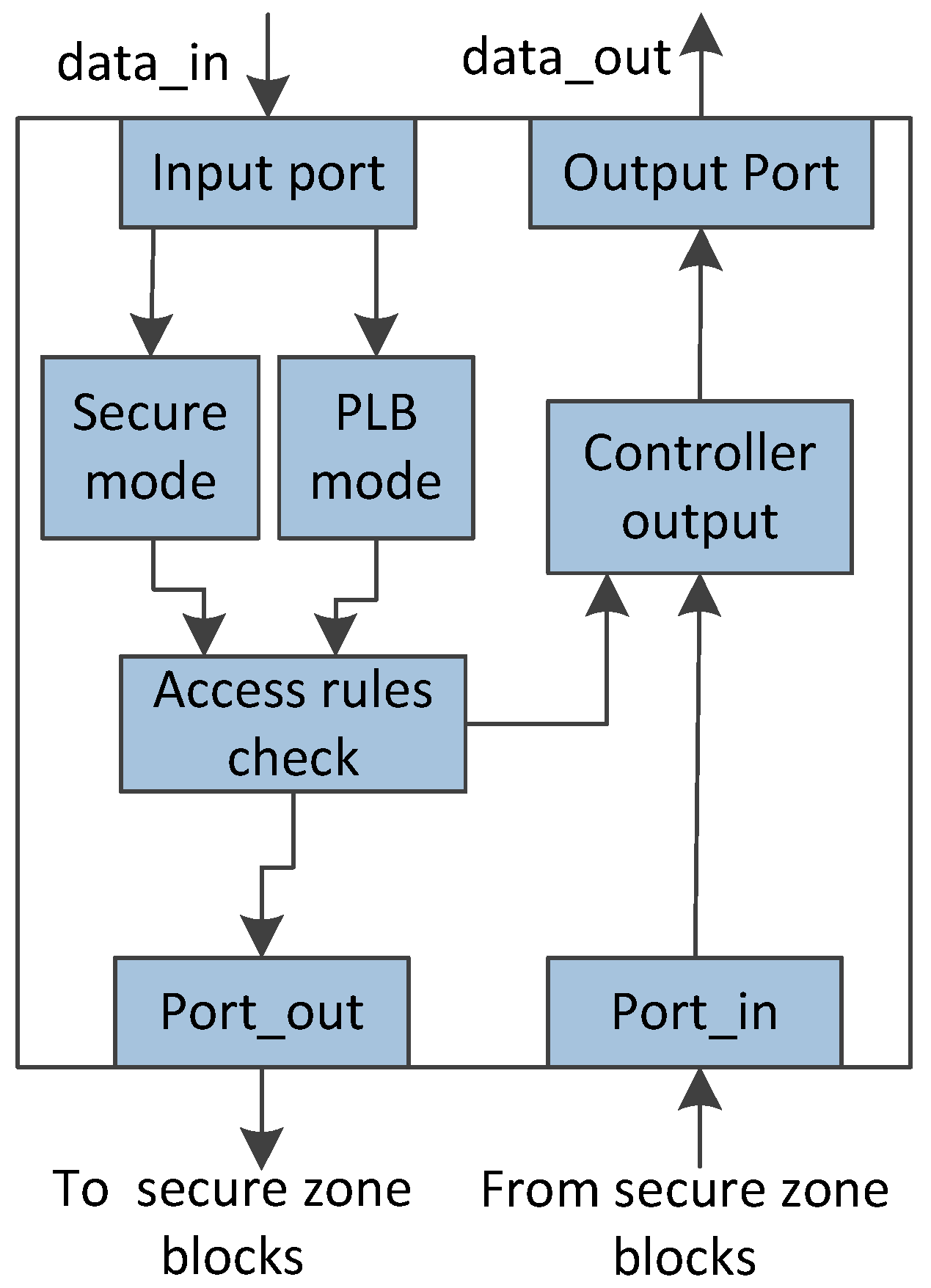

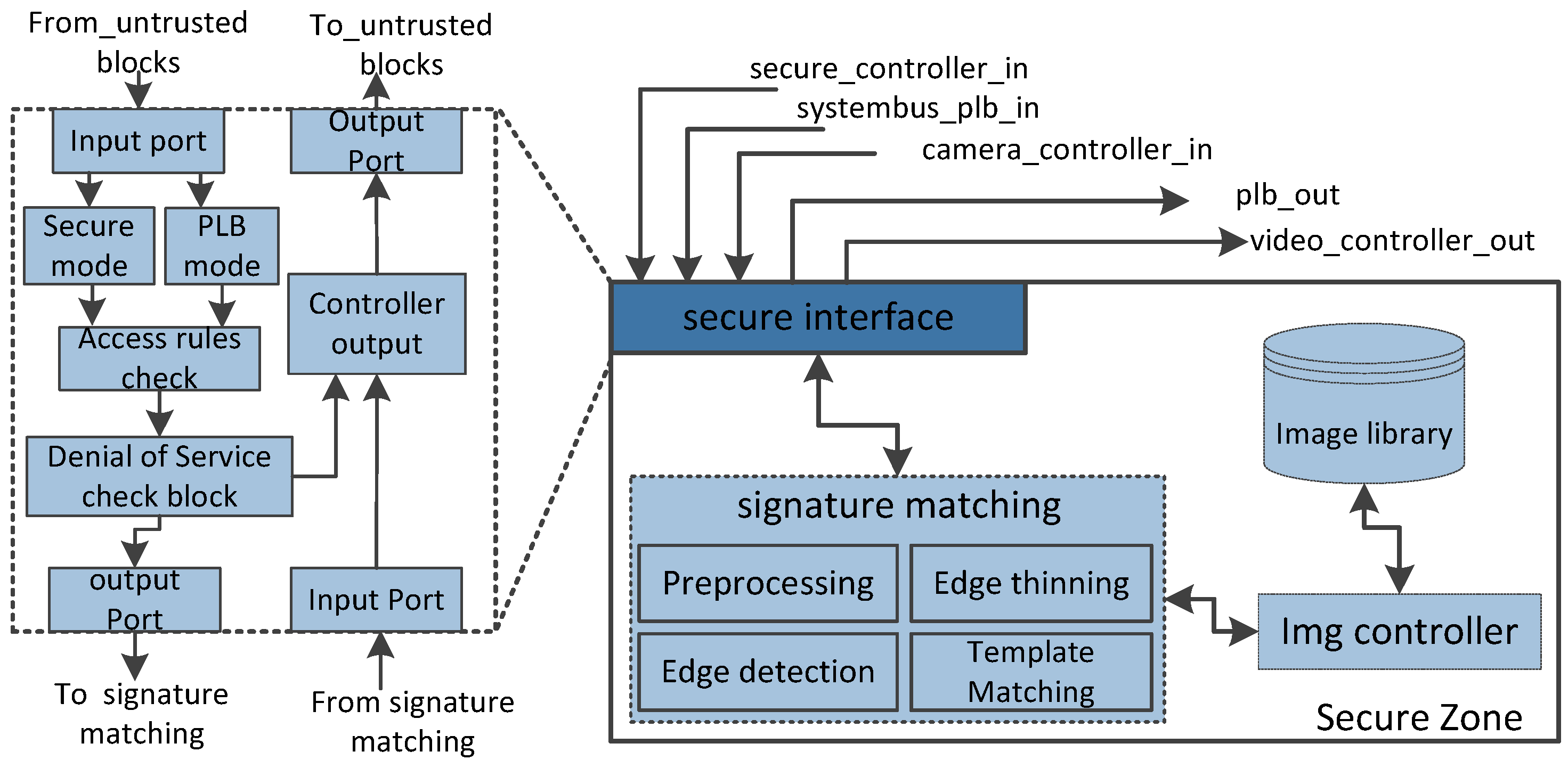

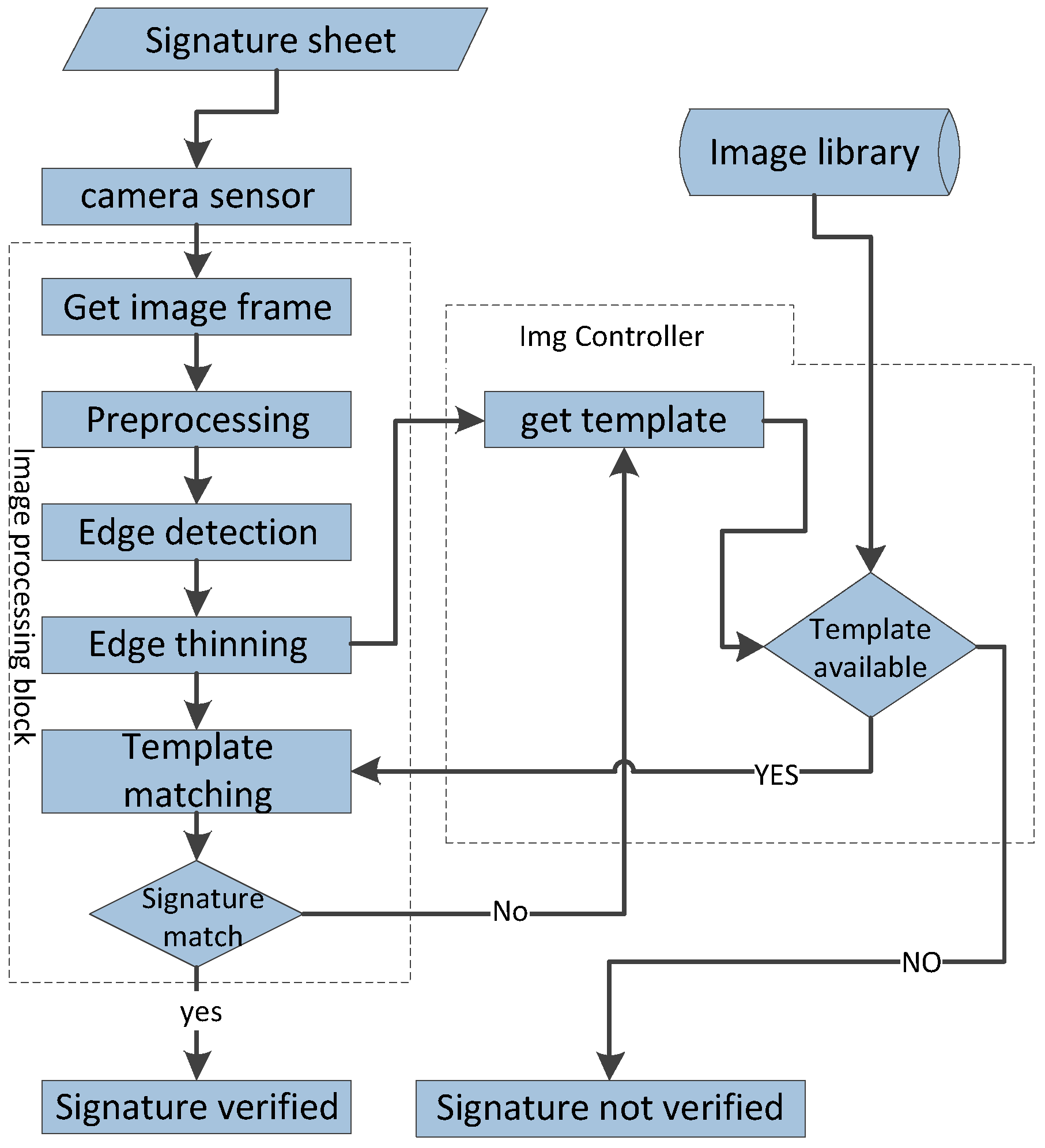

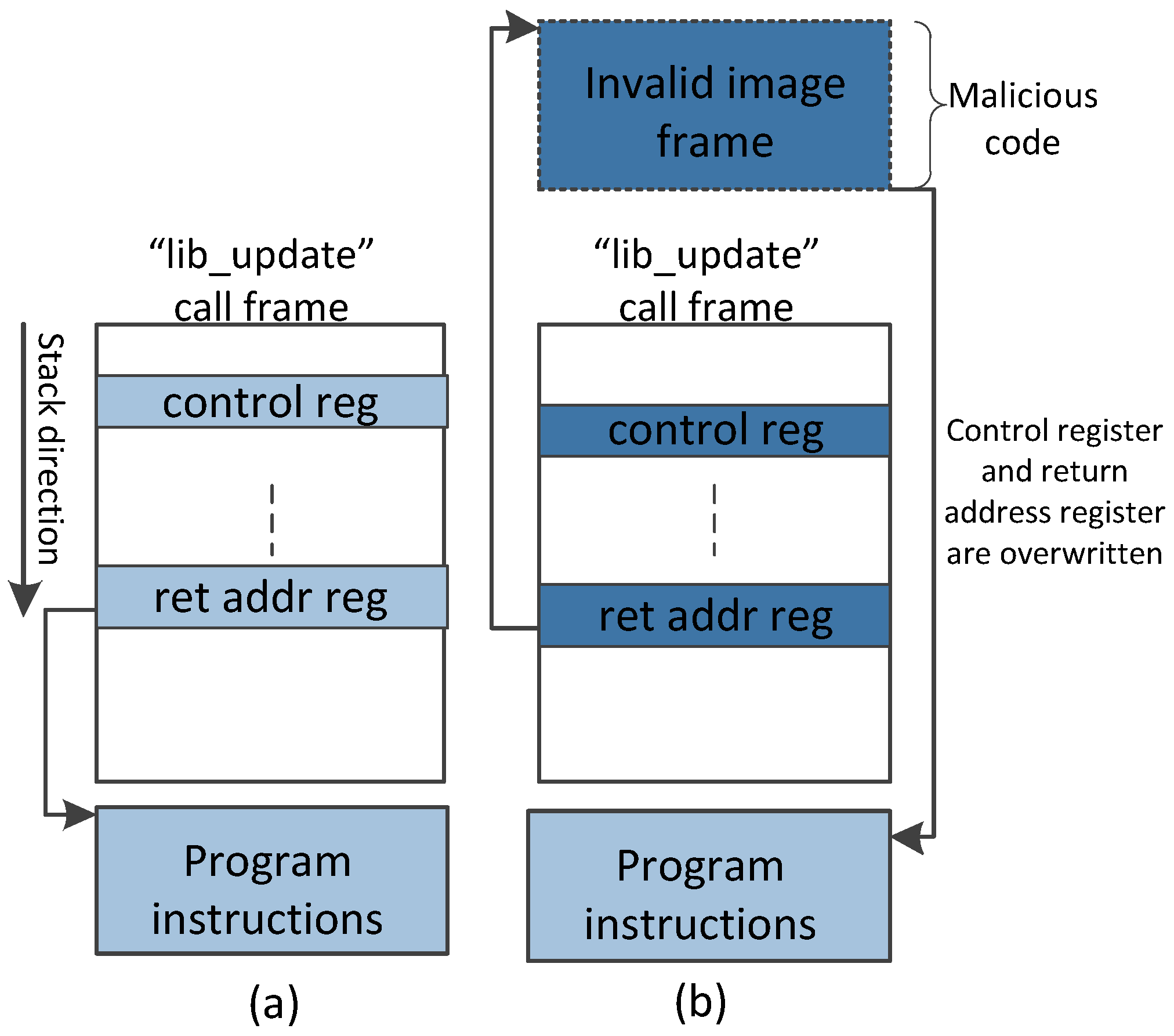

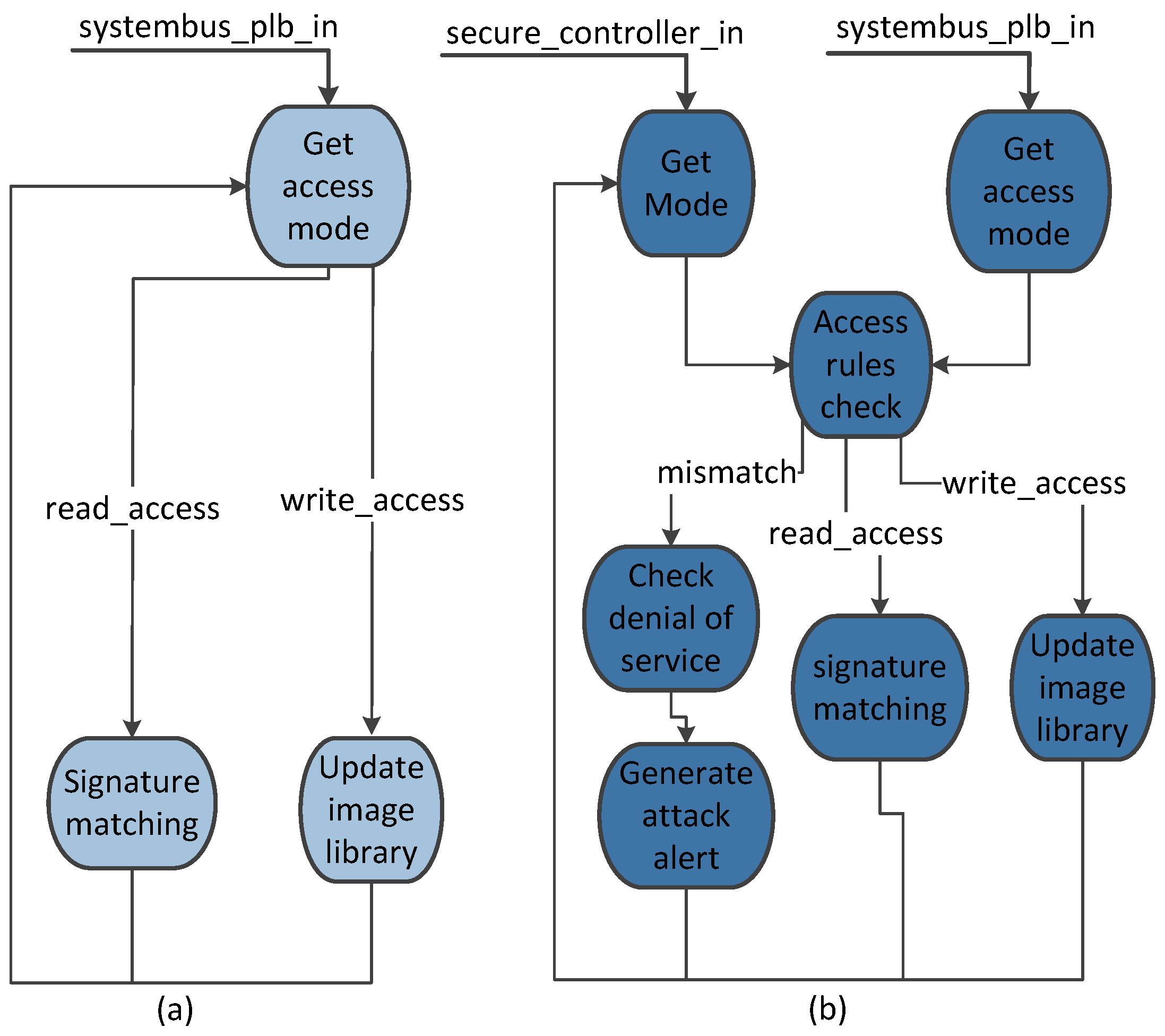

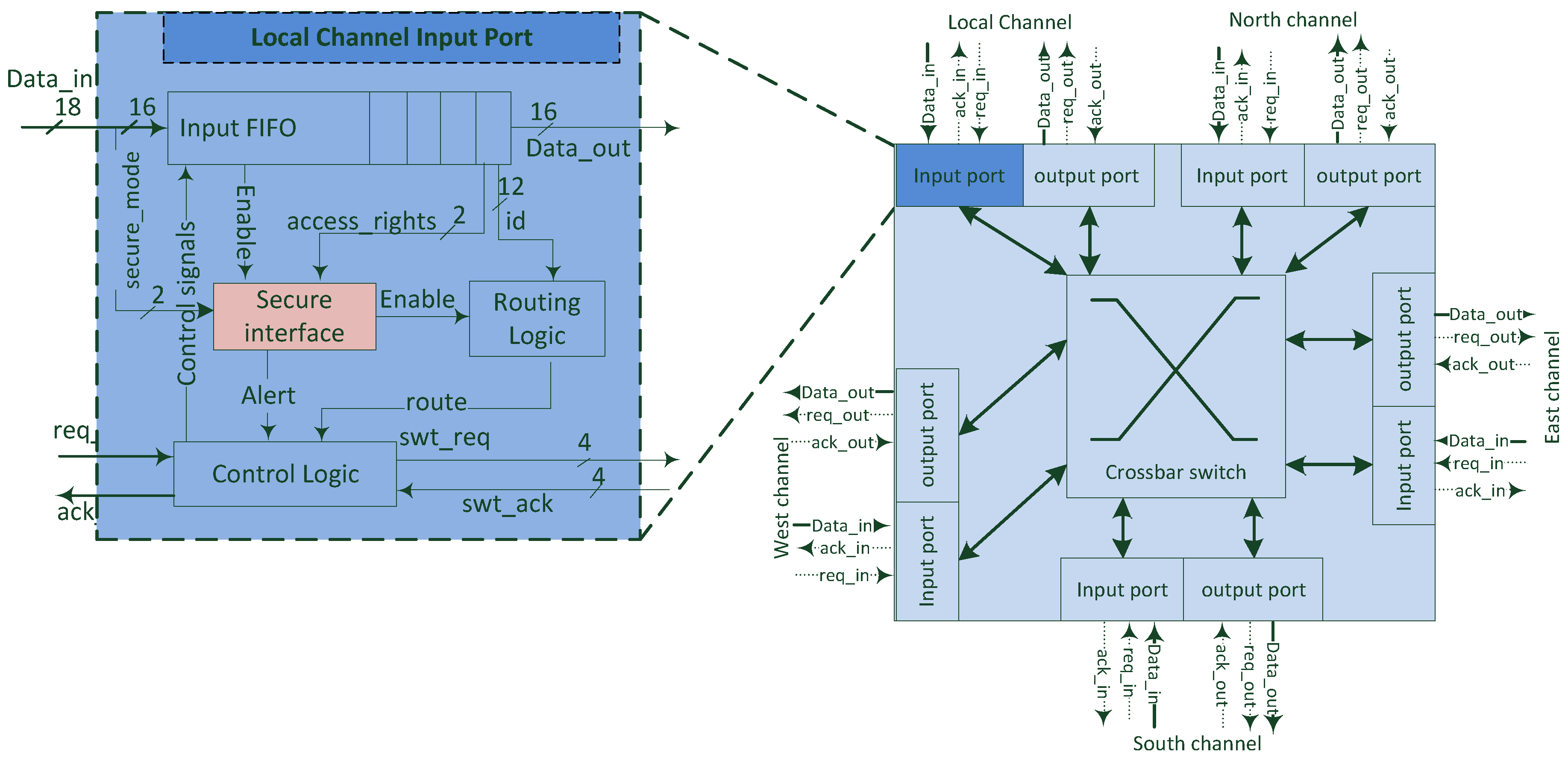

3. Design Approach and Architecture

4. FPGA-Based Embedded System

4.1. Security Effectiveness

4.2. Area and Power Consumption Overhead

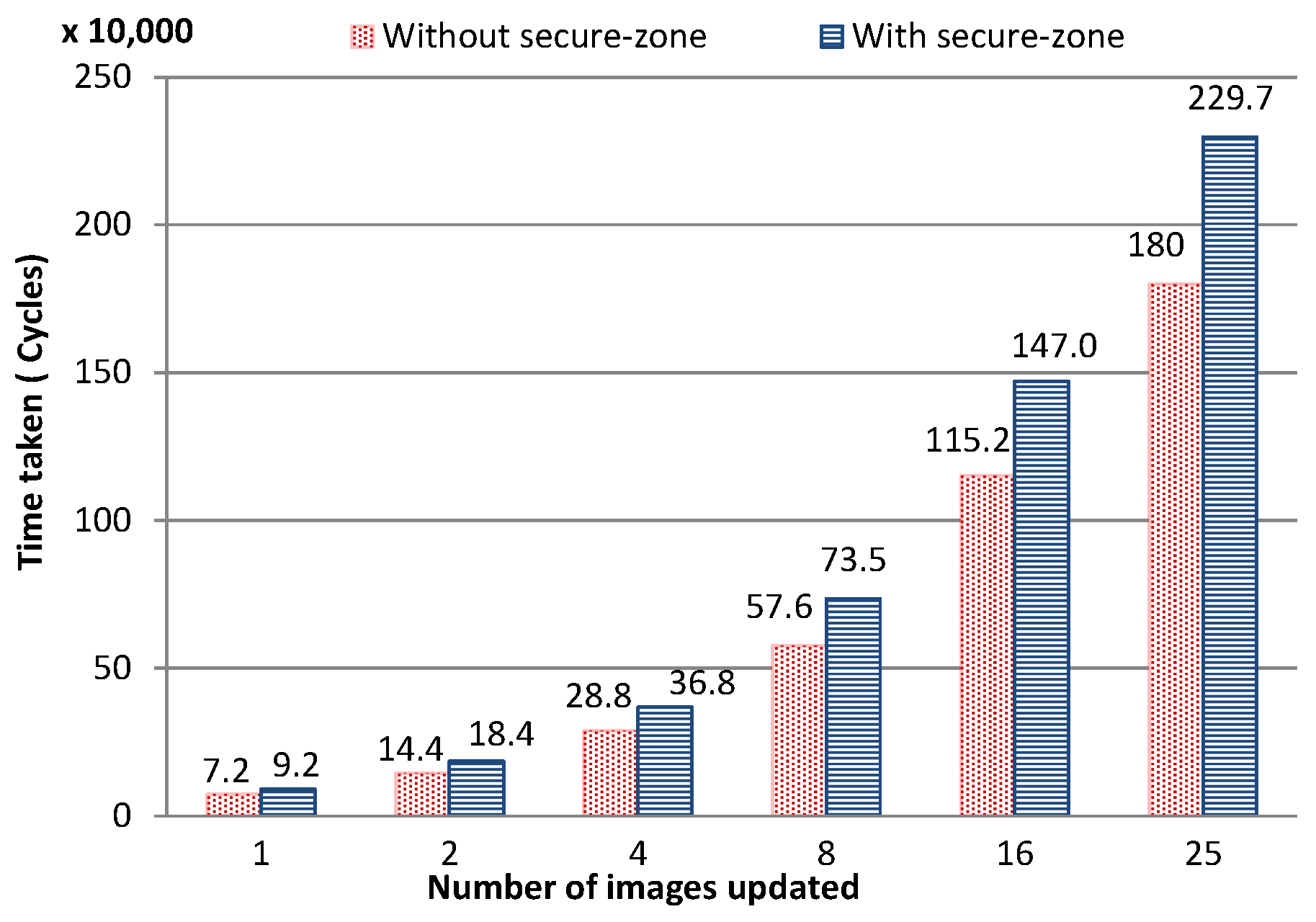

4.3. Performance Evaluation

5. NoC-Based Communication Architecture

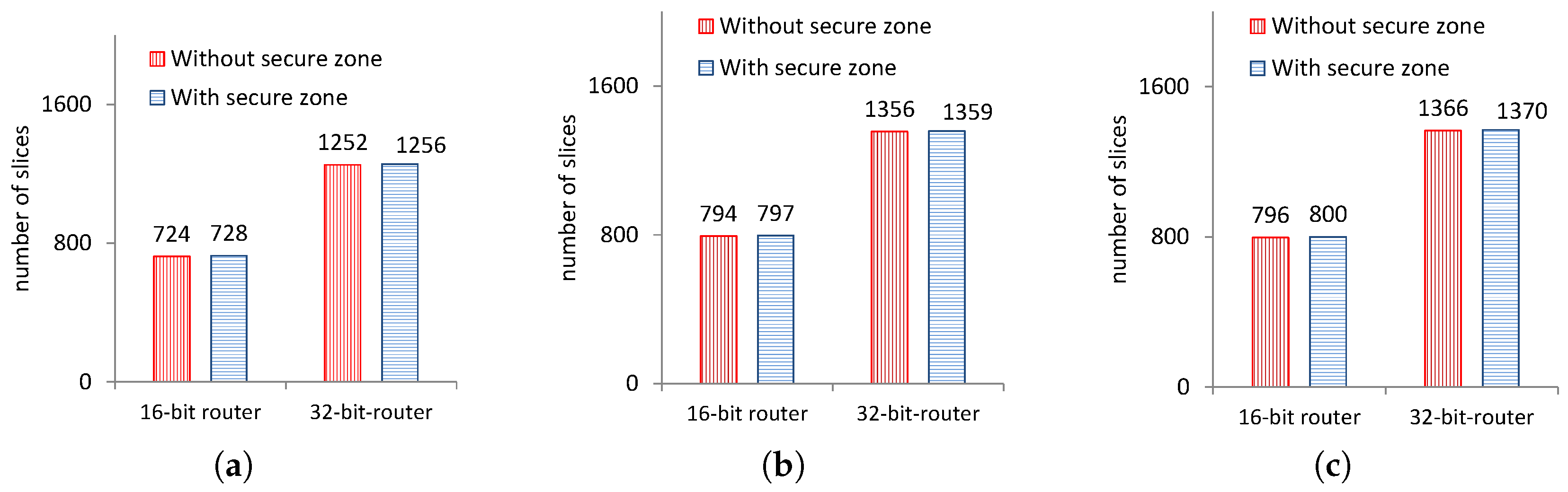

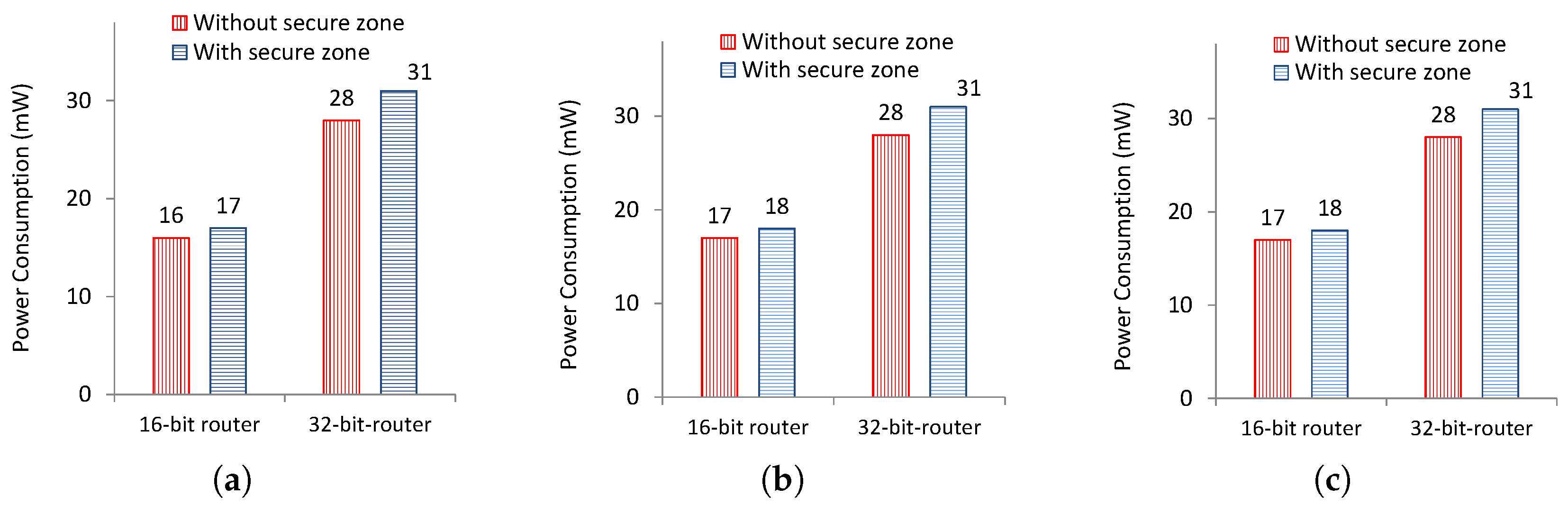

5.1. Area and Power Consumption Overhead

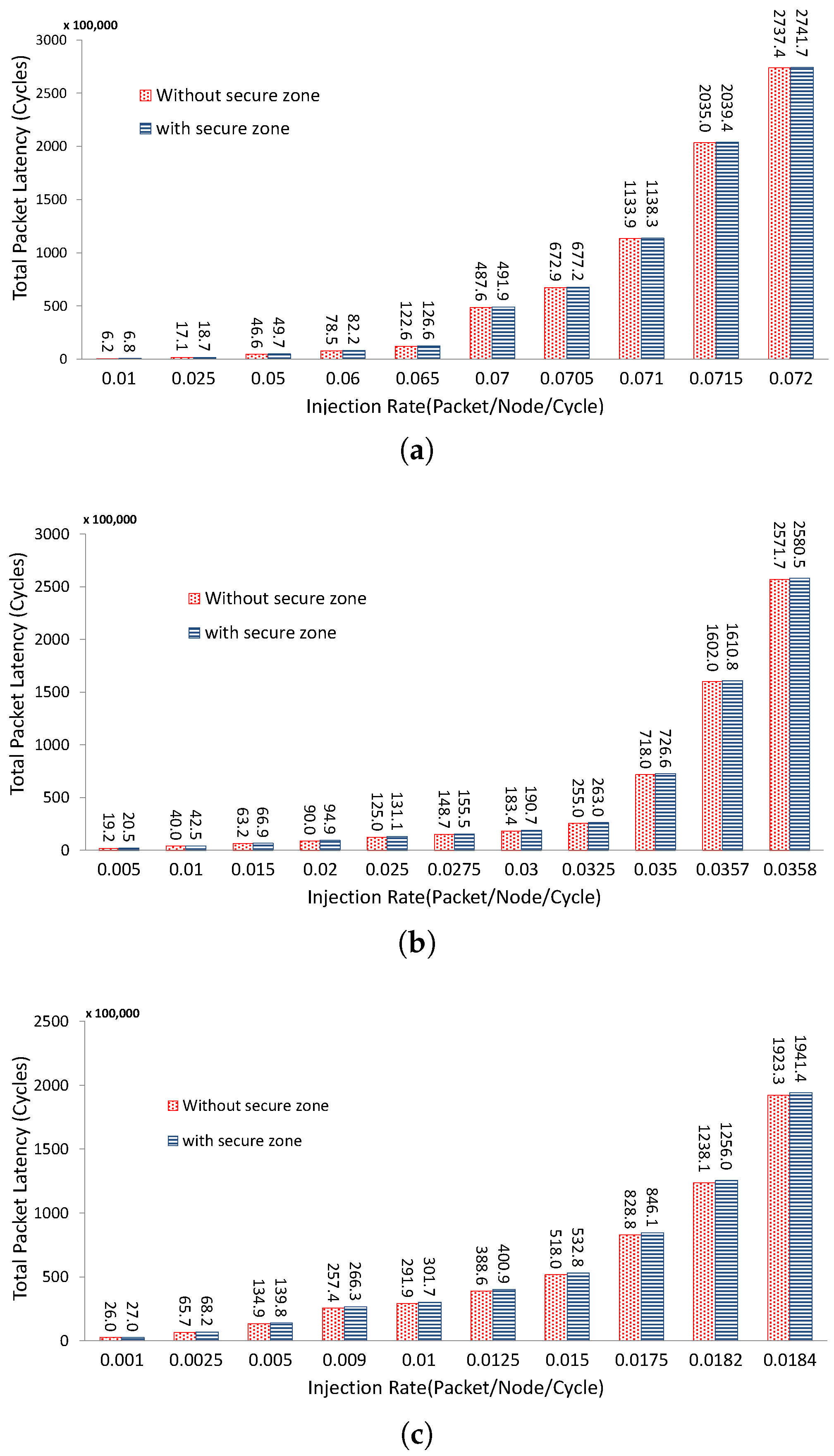

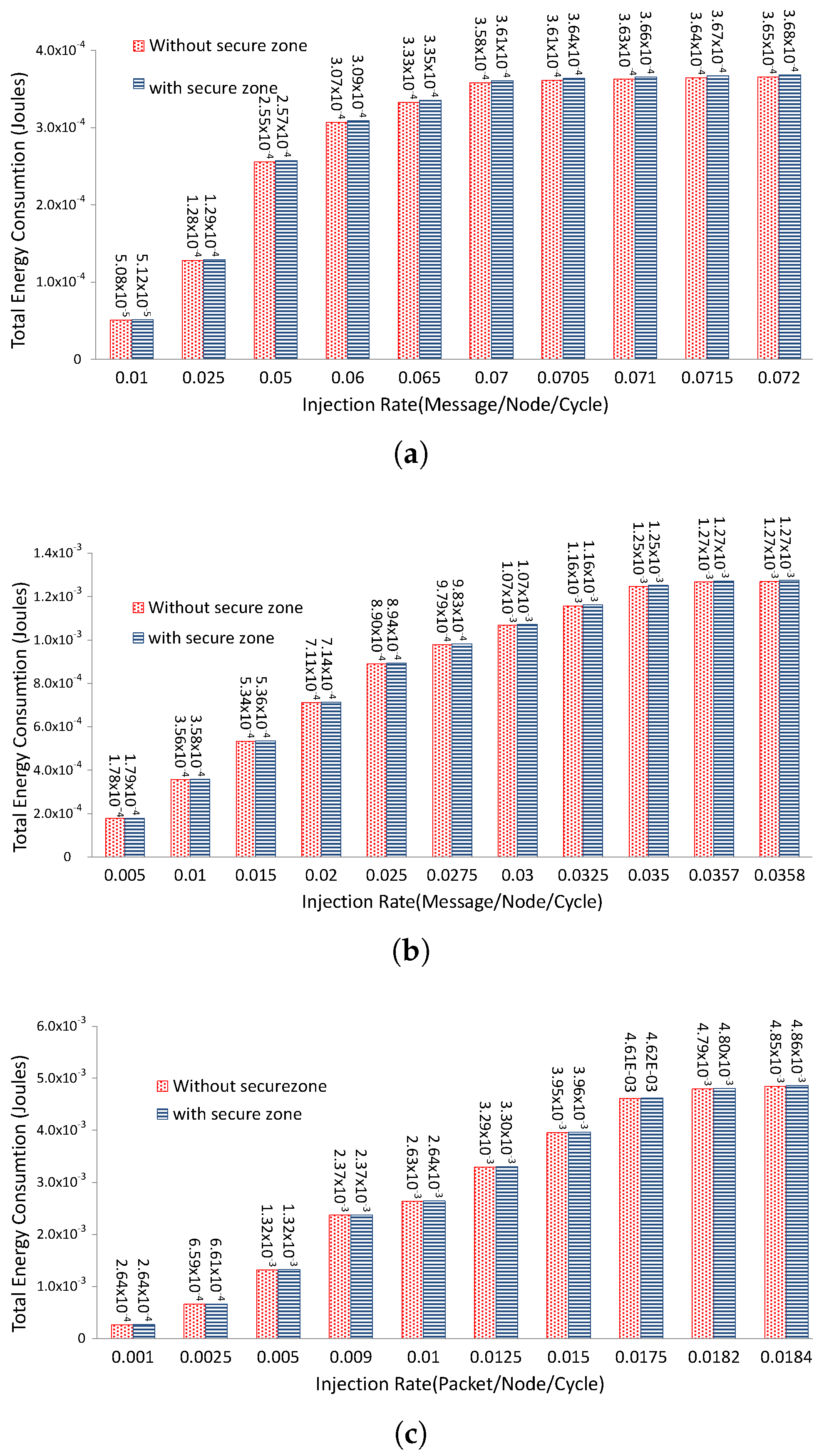

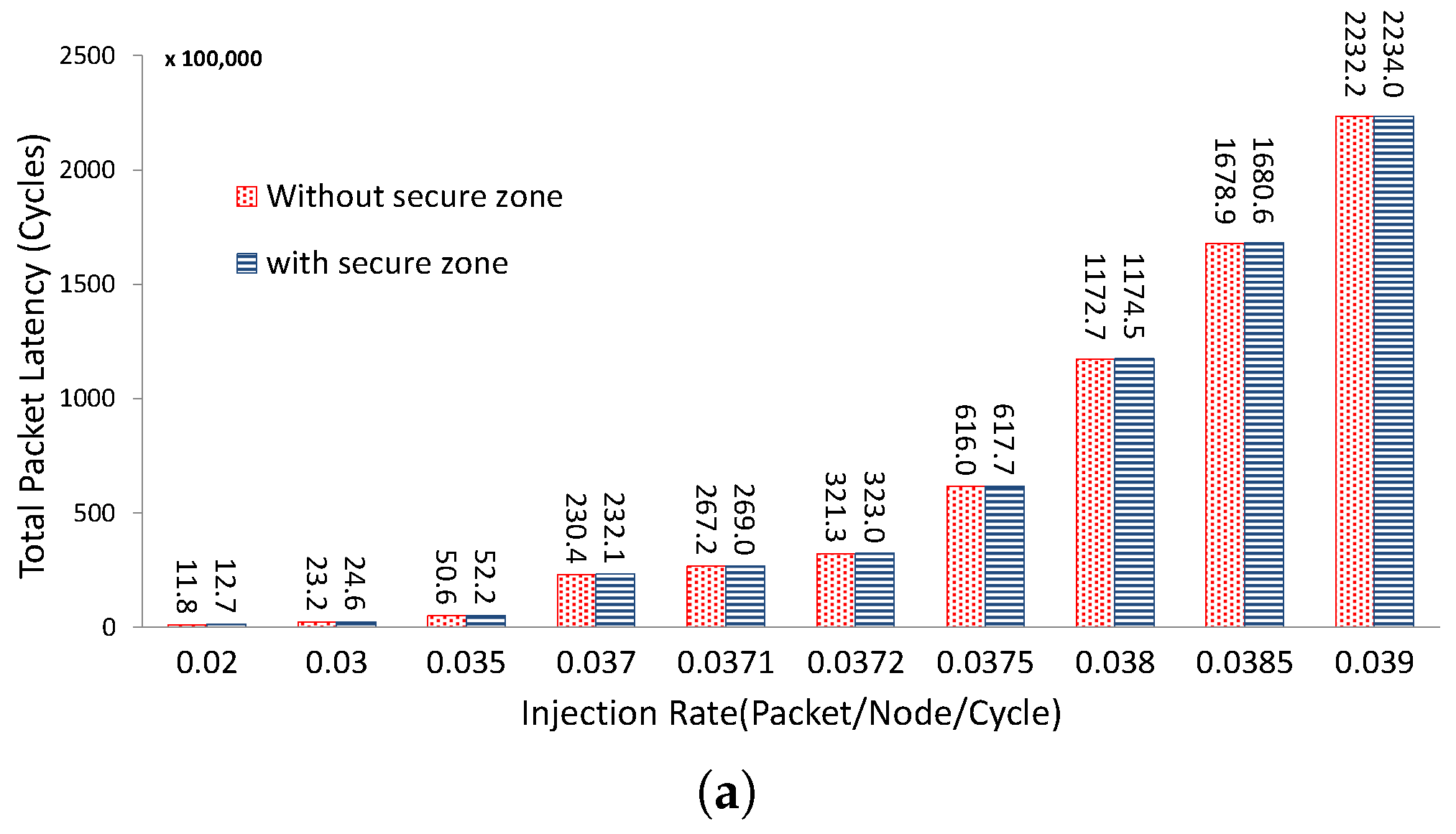

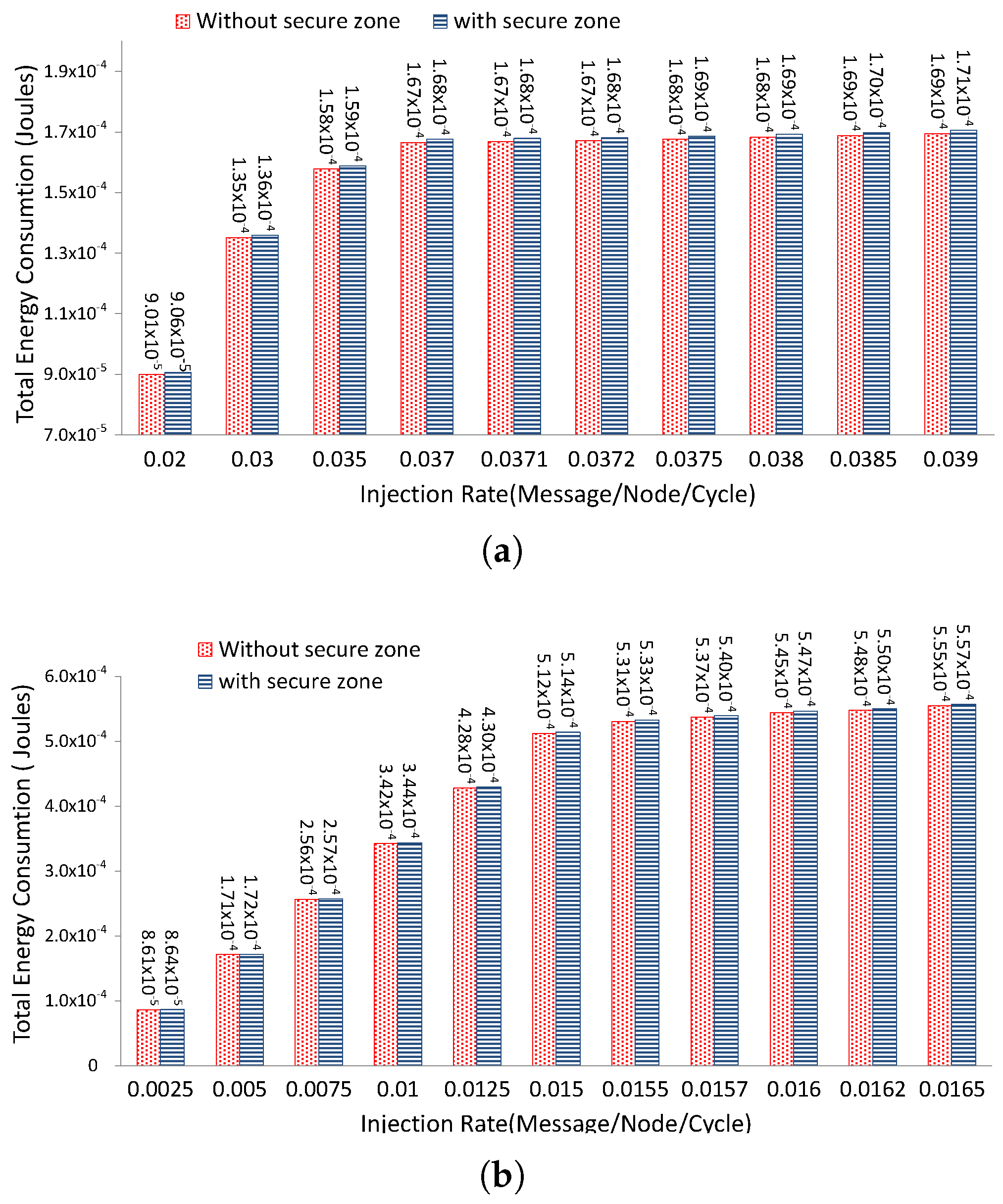

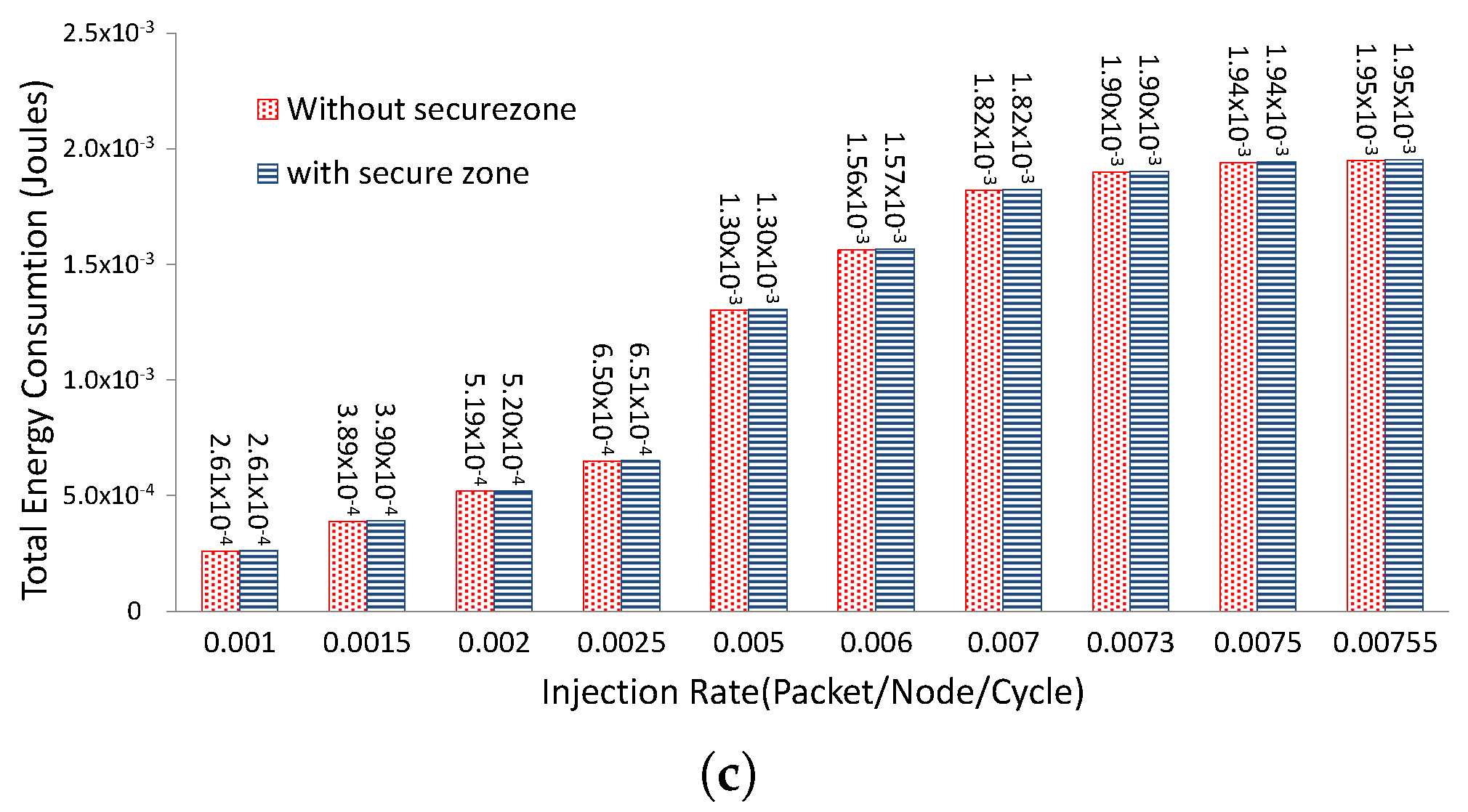

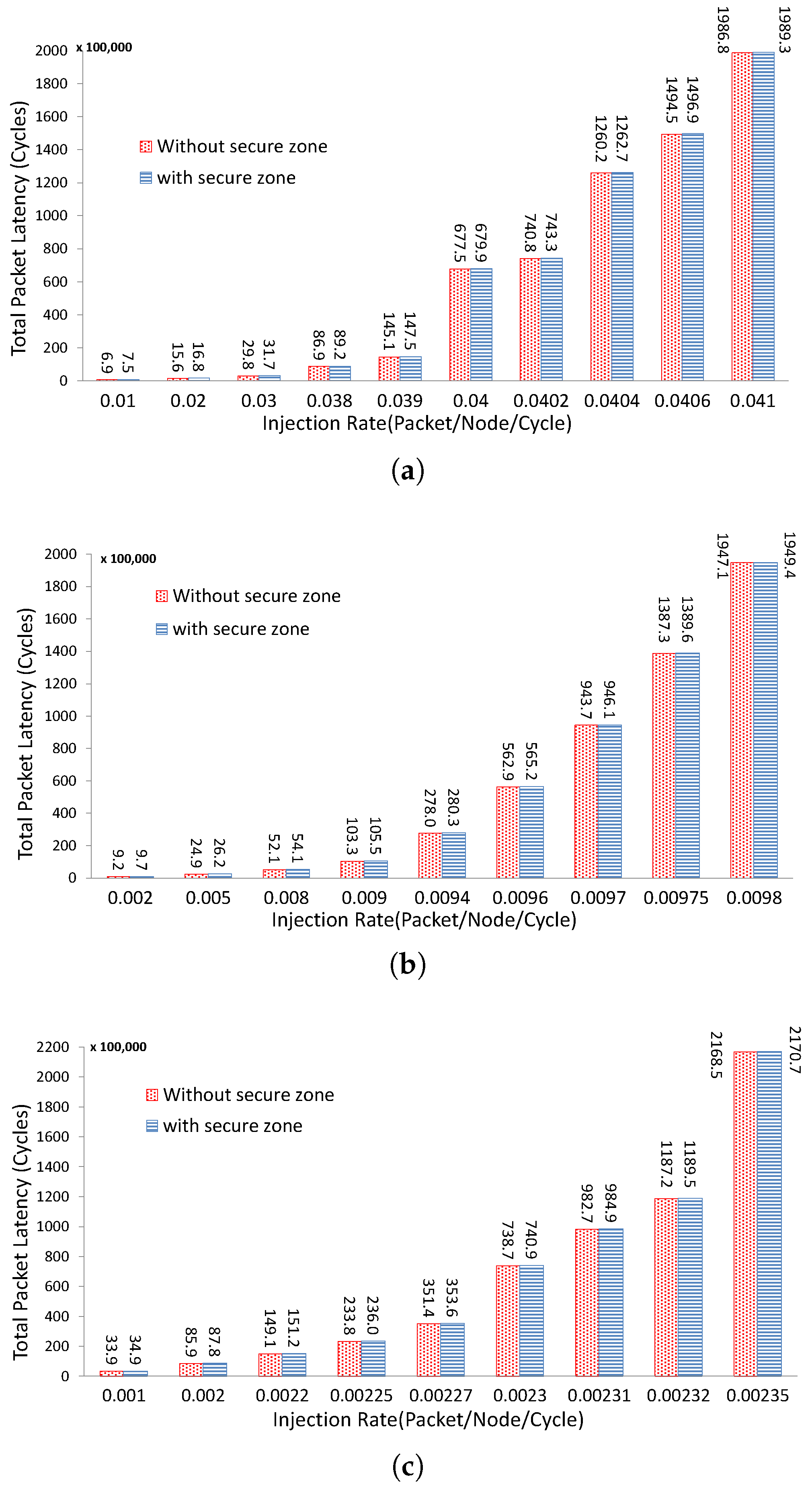

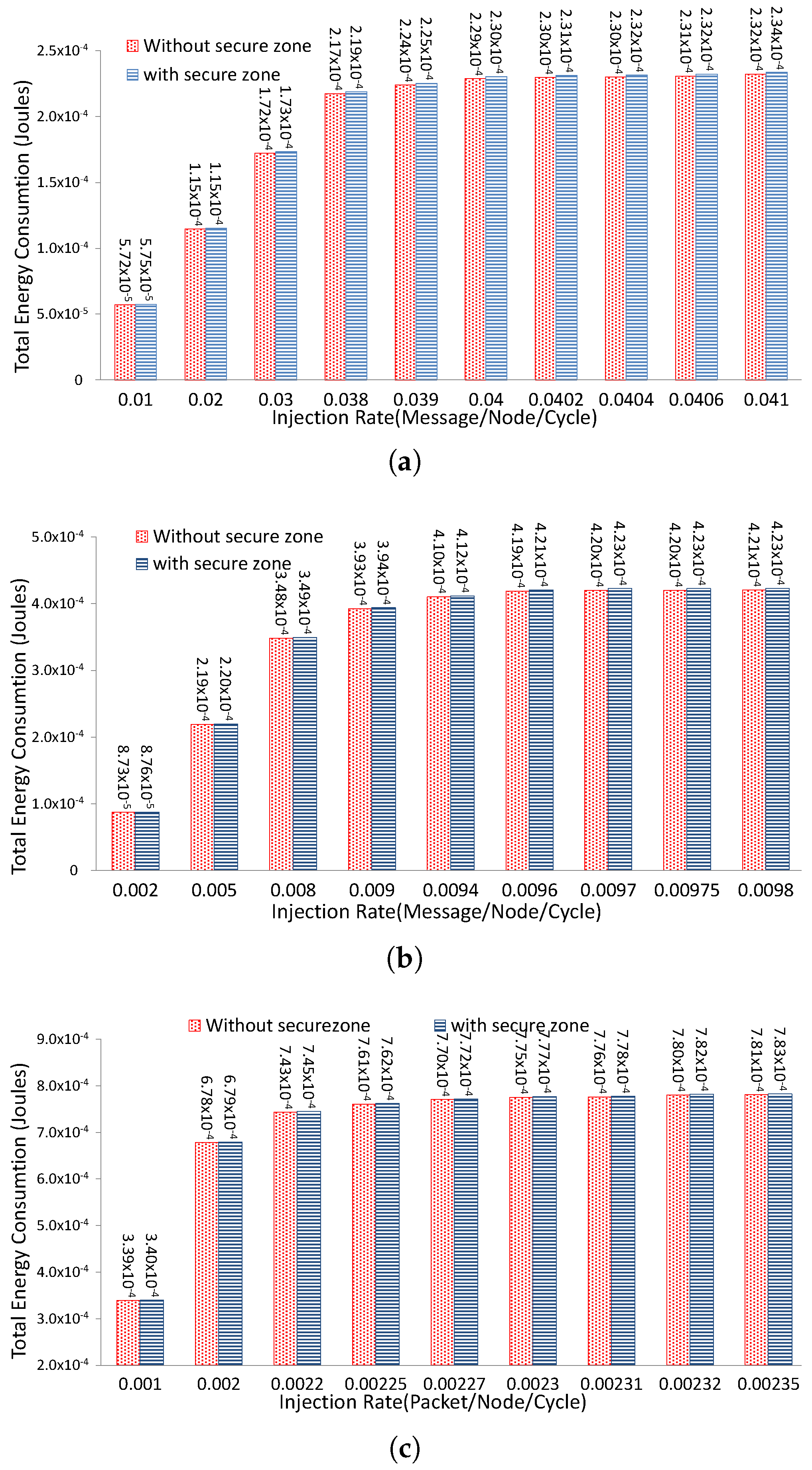

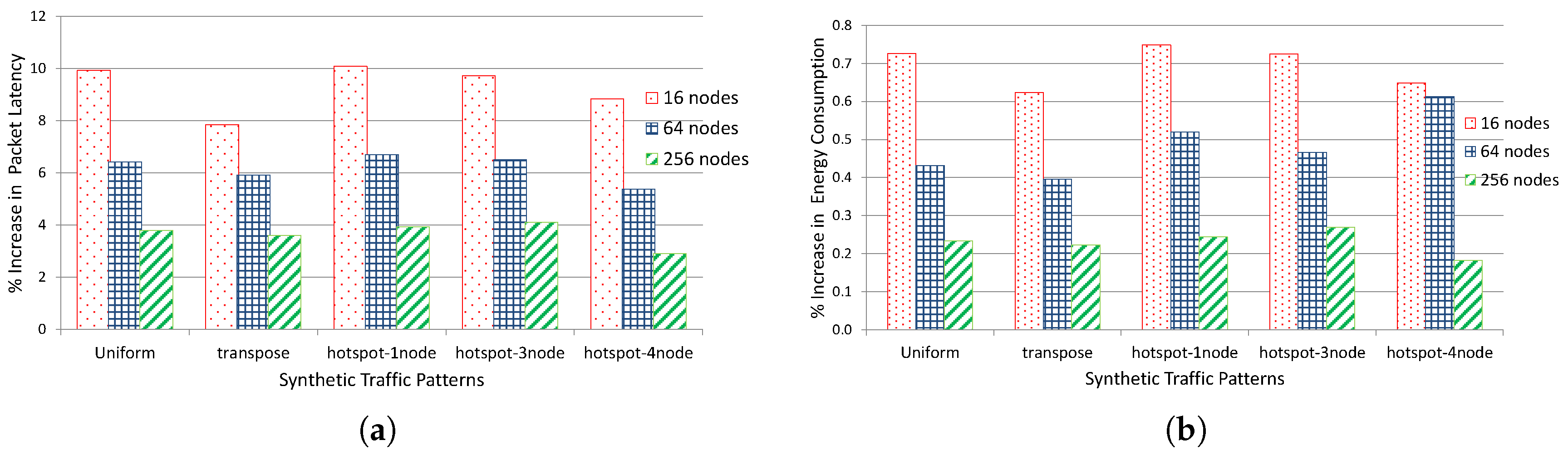

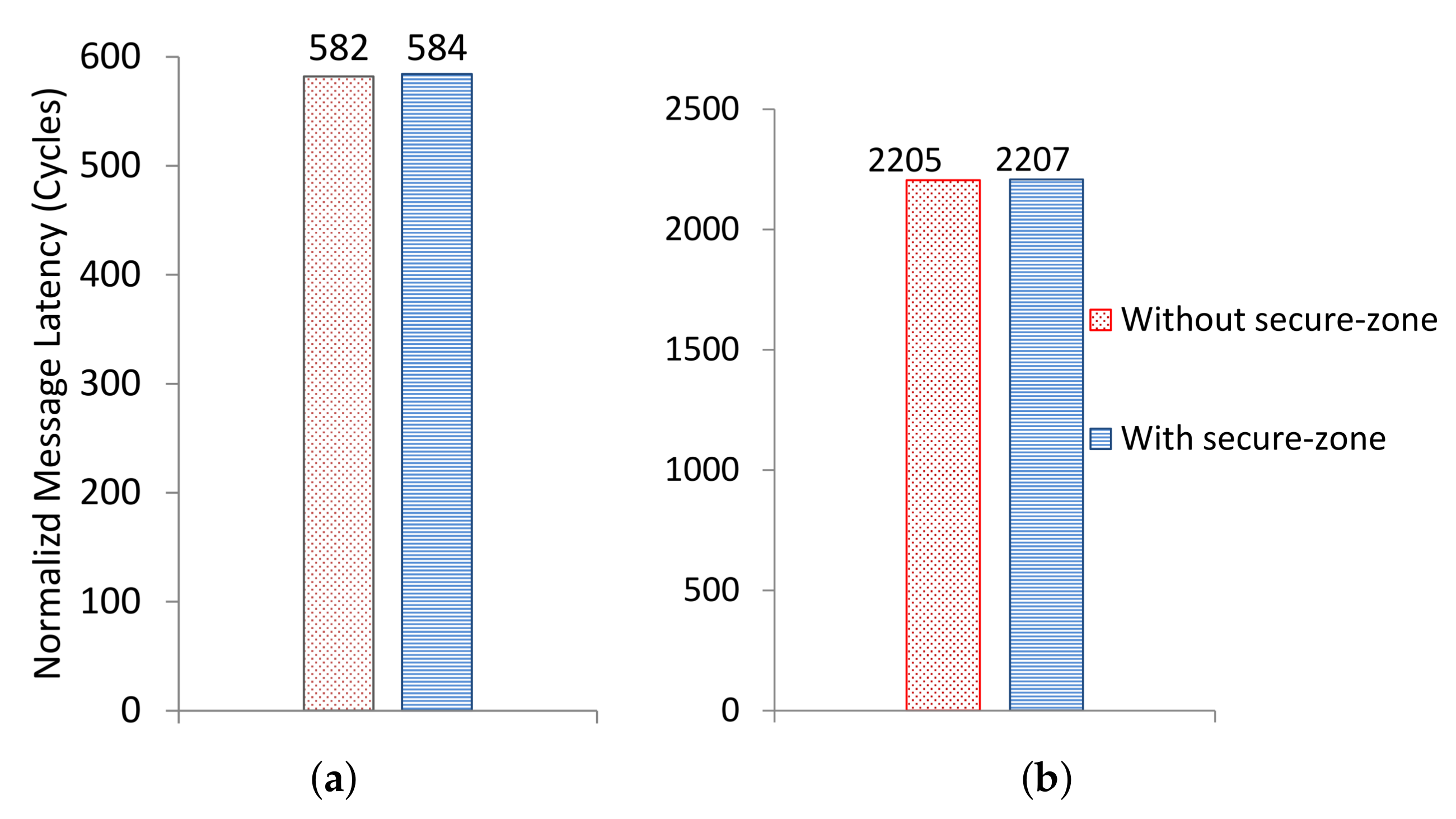

5.2. Performance and Energy Evaluation

6. Conclusions and Future Work

Author Contributions

Conflicts of Interest

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Saeed, A.; Ahmadinia, A.; Just, M. Hardware-Assisted Secure Communication in Embedded and Multi-Core Computing Systems. Computers 2018, 7, 31. https://doi.org/10.3390/computers7020031