1. Introduction

Natural language processing (NLP) seeks to enable machines to model, understand, and generate human language at scale. As textual data has grown rapidly, the field has increasingly relied on unsupervised and self-supervised learning to reduce dependence on costly labeled resources. Autoencoder (AE)-based models have been central to this shift: they learn representations by reconstructing input text from a compressed latent encoding, capturing semantic and syntactic structure without explicit supervision. Autoencoders thus introduced a framework that many representation learning and pretraining techniques now build on as a foundational component of NLP systems.

Autoencoders learn latent representations by optimizing end-to-end reconstruction objectives, enabling representation learning without task-specific supervision. This paradigm is particularly effective in NLP, where textual representations are high-dimensional and labeled data is often scarce. Transformer-based masked autoencoding operationalized this idea at scale, introducing bidirectional context modeling that transfers effectively across diverse downstream tasks [1]. In parallel, variational autoencoders (VAEs) extended autoencoding to probabilistic latent spaces, enabling controllable and diverse text generation while exposing fundamental challenges such as posterior collapse and training instability [2]. Together with sequence-to-sequence (seq2seq) pretraining for generative tasks such as machine translation [3], these developments position autoencoder-style learning as a unifying framework for modern pretraining and generative modeling in NLP.

This article is intended as a structured review of autoencoder-based learning in NLP, with the following objectives: (i) clarify the conceptual relationships among denoising autoencoders, variational autoencoders, and masked language modeling; (ii) identify the training practices that determine the robustness of text VAEs; (iii) characterize patterns in the applications of the dominant autoencoder models; and (iv) propose directions for future work in both monolingual and cross-lingual settings.

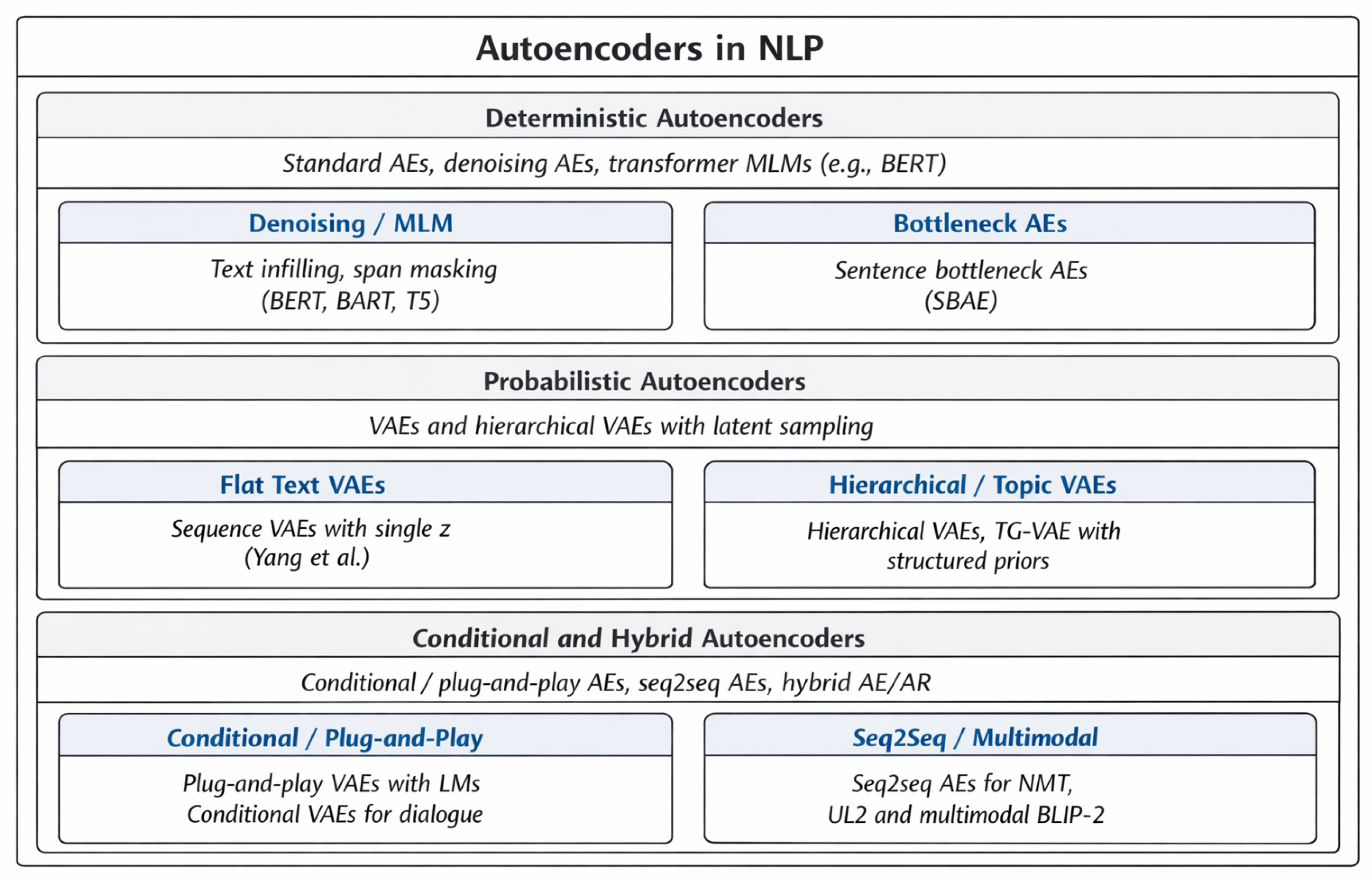

Contributions. This survey advances the understanding of autoencoder-based learning in NLP by: (a) proposing a unified taxonomy that disentangles deterministic, probabilistic, conditional, and bottleneck autoencoders; (b) critically synthesizing benchmark results across language modeling, representation learning, and interpretability to reveal recurring trends, failure modes, and limitations; and (c) articulating open research challenges and future directions related to training stability, controllability, and multilingual alignment.

Rather than proposing a new modeling architecture, this work contributes a synthesis that clarifies why certain autoencoding strategies generalize, scale, or fail under modern NLP evaluation regimes.

2. Methodology

A systematic selection process is essential for ensuring objectivity and minimizing bias in literature reviews. The present review adopted a structured literature selection procedure based on the PRISMA 2020 framework, which provides a systematic approach for study identification, screening, eligibility assessment, and inclusion [4]. In addition, backward and forward snowballing techniques were employed to complement database searches and reduce the risk of overlooking influential studies [5]. By explicitly defining search queries, inclusion and exclusion criteria, and screening stages, this methodology enhances the transparency, rigor, and reproducibility of the review while ensuring balanced and representative coverage of research on autoencoder-based models in natural language processing.

2.1. Review Design

This article was designed as a structured review of autoencoder-based methods in natural language processing. The review covers studies on classical autoencoders, denoising autoencoders, sparse autoencoders, variational autoencoders, and reconstruction-based transformer pretraining methods in NLP. The purpose of adopting a structured methodology was to ensure that the reviewed literature was selected systematically rather than narratively, thereby improving methodological rigor and facilitating reproducibility [4].

2.2. Search Strategy

The identification stage began with keyword-based searches across major scientific databases commonly used in computer science and artificial intelligence research, including Scopus, Web of Science, Google Scholar, IEEE Xplore, SpringerLink, ACM Digital Library, and ScienceDirect. The search strategy was designed to capture the main concepts relevant to this review, including autoencoder variants, reconstruction-based learning, and NLP applications.

The search terms included combinations of keywords such as “autoencoder”, “variational autoencoder”, “denoising autoencoder”, “sparse autoencoder”, “masked autoencoding”, “text generation”, “representation learning”, and “natural language processing”. An example search string is presented below:

After the initial database search, backward snowballing was performed by examining the reference lists of highly relevant papers, while forward snowballing was used to identify more recent studies that cited these core works [5]. This complementary step was especially useful for capturing seminal papers and recent follow-up studies in rapidly evolving subareas such as text VAEs, denoising sequence-to-sequence pretraining, and transformer-based reconstruction objectives.

2.3. Eligibility Criteria

Explicit inclusion and exclusion criteria were defined to determine which studies should be included in the review. Studies were included if they satisfied the following conditions: (i) they focused on autoencoder-based architectures or reconstruction-driven learning methods; (ii) they addressed NLP tasks such as language modeling, machine translation, text generation, sentiment analysis, multilingual learning, or representation learning; (iii) they were peer-reviewed journal articles, conference papers, or highly influential preprints; and (iv) they provided sufficient methodological or experimental detail relevant to the objectives of this survey.

Studies were excluded if they met any of the following conditions: (i) they focused exclusively on non-textual domains such as computer vision, speech, or medical imaging without a direct NLP contribution; (ii) they mentioned autoencoders only marginally without substantial methodological or empirical relevance; (iii) they were duplicate records retrieved from multiple databases; or (iv) they fell outside the scope of reconstruction-based learning in NLP.

2.4. Screening and Selection Process

The retrieved records were screened in multiple stages following PRISMA-style selection procedures [4]. First, duplicate records were removed. Second, titles and abstracts were screened to exclude studies that were clearly irrelevant. Third, the remaining papers underwent full-text assessment to determine whether they satisfied the predefined eligibility criteria. When multiple papers described closely related variants of the same method, priority was given to the most complete, representative, or influential source, while retaining key follow-up works necessary to reflect the evolution of the literature.

The final set of included studies was then organized into thematic categories aligned with the objectives of this review: (i) foundational autoencoder architectures; (ii) variational and probabilistic extensions; (iii) denoising and masked reconstruction approaches; (iv) downstream NLP applications; and (v) evaluation practices, limitations, and open challenges.

2.5. Data Extraction and Synthesis

For each included study, information was systematically extracted on model type, architectural design, latent space formulation, training objective, target NLP task, dataset, evaluation metrics, and principal findings. This process enabled structured comparison across studies and supported the development of the taxonomy presented in this review.

The synthesis was primarily qualitative and comparative rather than statistical, since the reviewed studies differ substantially in terms of datasets, tasks, training settings, and evaluation protocols. Accordingly, instead of conducting a formal meta-analysis, this survey organizes the literature conceptually and highlights recurring patterns in architectural design, methodological strengths, limitations, and emerging research trends. This approach is appropriate for a review intended to provide a broad, integrative understanding of autoencoder-based learning in NLP.

Figure 1 presents a PRISMA-based selection strategy for identifying and including relevant studies in this review. The process is organized into four stages: identification, screening, eligibility, and inclusion. Initially, 3213 records were retrieved from major scientific databases, including IEEE Xplore, ACM Digital Library, ScienceDirect, and Scopus. After title and abstract screening, 320 records were retained, with 260 excluded based on inclusion and exclusion criteria and 10 duplicates removed. Full-text assessment was conducted on 50 studies, resulting in 16 exclusions and the addition of two studies through backward and forward snowballing. Finally, 32 studies were included in the quality assessment and constitute the final corpus analyzed in this review.

Further details regarding datasets and sources used in the reviewed studies are summarized in Appendix A.

2.6. Methodological Contributions to the Review

By combining PRISMA-style reporting with snowballing-based expansion of the literature set, this review clarifies the methodological foundation and improves the traceability of the study selection process. This strengthens the paper’s claim of being a comprehensive review and aligns its literature selection procedure with established evidence-based review practices [4, 5].

The reviewed studies are organized into thematic sections reflecting the objectives of this survey: architectural foundations are presented in Section 3, related work in Section 4, historical evolution in Section 5, application domains in Section 6, benchmarking results in Section 7, and the comparative taxonomy in Section 8.

3. Autoencoder Architectures

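Autoencoder (AE). An autoencoder is a parametric model defined by a pair of functions $(f_\phi, g_\theta)$, where an encoder $f_\phi : \mathcal{X} \to \mathcal{Z}$ maps an input $x \in \mathcal{X}$ to a latent representation $z = f_\phi(x)$, and a decoder $g_\theta : \mathcal{Z} \to \mathcal{X}$ reconstructs the input as $\hat{x} = g_\theta(z)$. The model is trained to minimize a reconstruction loss measuring the discrepancy between $x$ and $\hat{x}$. A common objective is the expected reconstruction loss,

$$\mathcal{L}_{\mathrm{AE}}(\phi, \theta) = \mathbb{E}_{x \sim p_{\mathrm{data}}}\big[\ell\big(x, g_\theta(f_\phi(x))\big)\big],$$

where $\ell(\cdot, \cdot)$ denotes a task-appropriate loss function, such as squared error for continuous inputs or negative log-likelihood for discrete sequences. For example, for a textual input sequence $x = (x_1, \dots, x_T)$, the reconstruction loss under an autoregressive decoder is the negative log-likelihood

$$\mathcal{L}_{\mathrm{rec}}(x) = -\sum_{t=1}^{T} \log p_\theta(x_t \mid x_{<t}, z),$$

(for a non-autoregressive conditional decoder, the conditioning on $x_{<t}$ is dropped), which is equivalent to minimizing the token-level cross-entropy between the original sequence and the reconstructed output distribution.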
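As a minimal illustration of this equivalence, the sketch below computes the reconstruction loss of a toy sequence in two ways. It assumes the decoder has already produced, at each step, a probability distribution over a small hypothetical vocabulary (teacher-forced on the gold prefix); the function names are illustrative and not taken from any library.

```python
import math
from typing import Sequence


def sequence_nll(token_probs: Sequence[Sequence[float]], targets: Sequence[int]) -> float:
    """Negative log-likelihood of the gold sequence.

    token_probs[t] is the decoder's distribution over the vocabulary at step t
    (assumed to be conditioned on x_{<t} and z); targets[t] is the gold token id x_t.
    """
    return -sum(math.log(probs[y]) for probs, y in zip(token_probs, targets))


def token_cross_entropy(token_probs: Sequence[Sequence[float]], targets: Sequence[int]) -> float:
    """Sum over steps of the cross-entropy between a one-hot target and the predicted distribution."""
    total = 0.0
    for probs, y in zip(token_probs, targets):
        one_hot = [1.0 if v == y else 0.0 for v in range(len(probs))]
        # Terms with zero target weight contribute nothing, so only -log p_t[y] survives.
        total -= sum(q * math.log(p) for q, p in zip(one_hot, probs) if q > 0)
    return total


# Toy example: vocabulary of 3 tokens, sequence of length 2.
probs = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]]
gold = [0, 1]
print(round(sequence_nll(probs, gold), 4))  # 0.5798, i.e. -(log 0.7 + log 0.8)
```

Because each target distribution is one-hot, the per-step cross-entropy reduces to the negative log-probability of the gold token, which is why the two functions agree.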

Variational Autoencoder (VAE). A variational autoencoder is a probabilistic latent-variable model defined by an encoder–decoder pair $(q_\phi, p_\theta)$. Given an input $x$, the encoder specifies an approximate posterior distribution $q_\phi(z \mid x)$ over latent variables $z$, while a prior distribution $p(z)$, commonly a standard Gaussian $\mathcal{N}(0, I)$, regularizes the latent space. The decoder models the conditional data distribution $p_\theta(x \mid z)$.

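Training proceeds by maximizing the evidence lower bound (ELBO) on the log-likelihood of the data, written here in its $\beta$-weighted form,

$$\mathcal{L}_{\beta}(\phi, \theta; x) = \mathbb{E}_{q_\phi(z \mid x)}\big[\log p_\theta(x \mid z)\big] - \beta\, D_{\mathrm{KL}}\big(q_\phi(z \mid x) \,\|\, p(z)\big),$$

where the first term encourages accurate reconstruction and the second term is the Kullback–Leibler (KL) divergence that regularizes the approximate posterior toward the prior. The hyperparameter $\beta \ge 0$ controls the trade-off between reconstruction fidelity and latent regularization: when $0 < \beta < 1$, the model balances reconstruction and structure under a relaxed KL penalty that favors reconstruction; $\beta = 1$ recovers the standard VAE objective (the ELBO proper); $\beta > 1$ enforces stronger regularization, often promoting disentanglement at the cost of reconstruction quality; and $\beta = 0$ removes the KL term entirely, reducing the model to a purely reconstructive (stochastic) autoencoder with no generative regularization. In practice, VAEs are usually trained by minimizing the negative objective $-\mathcal{L}_{\beta}$.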
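The following sketch shows how this KL-weighted objective is typically computed in the common special case of a diagonal Gaussian posterior and a standard Gaussian prior, where the KL term has a closed form. The decoder is abstracted away: `recon_nll` stands in for a hypothetical single-sample estimate of $-\log p_\theta(x \mid z)$, and all names are illustrative. Plain Python is used for readability; in an autodiff framework, the reparameterized sample is what keeps the loss differentiable with respect to the encoder outputs.

```python
import math
import random
from typing import List, Sequence


def gaussian_kl_to_standard_normal(mu: Sequence[float], logvar: Sequence[float]) -> float:
    """Closed-form KL( N(mu, diag(exp(logvar))) || N(0, I) ), summed over latent dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, logvar))


def reparameterize(mu: Sequence[float], logvar: Sequence[float], rng: random.Random) -> List[float]:
    """Sample z = mu + sigma * eps with eps ~ N(0, I) (the reparameterization trick)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]


def negative_objective(recon_nll: float, mu: Sequence[float], logvar: Sequence[float],
                       beta: float = 1.0) -> float:
    """Loss to minimize: -E_q[log p(x|z)] + beta * KL(q(z|x) || p(z)).

    recon_nll is a hypothetical single-sample estimate of -log p_theta(x|z),
    computed by the decoder on a z drawn with reparameterize().
    """
    return recon_nll + beta * gaussian_kl_to_standard_normal(mu, logvar)


rng = random.Random(0)
mu, logvar = [0.5, -0.5], [0.0, 0.0]                       # toy encoder outputs for one input
z = reparameterize(mu, logvar, rng)                         # would be fed to the decoder
loss_vae = negative_objective(10.0, mu, logvar, beta=1.0)   # 10.0 + 0.25 = 10.25
loss_ae = negative_objective(10.0, mu, logvar, beta=0.0)    # KL ignored: plain reconstruction
```

Setting `beta=0.0` reproduces the purely reconstructive case described above, while `beta=1.0` gives the standard negative ELBO.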

Note. In text VAEs with expressive decoders, the KL divergence term may dominate optimization, causing the encoder to ignore the input $x$ and collapse the approximate posterior $q_\phi(z \mid x)$ toward the prior $p(z)$, a failure mode known as posterior collapse. To mitigate this issue, common strategies include KL annealing schedules and architectural constraints on decoder capacity, which encourage meaningful utilization of the latent variables.
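KL annealing can be sketched as a schedule for the KL weight (written here as `beta`) that starts at zero, so the decoder can first learn to use $z$ before the KL penalty pushes the posterior toward the prior, and then grows toward its final value. The linear warm-up below is one common choice; the cyclical variant, which repeats the ramp periodically, is another. Step counts and ramp fractions are hypothetical hyperparameters.

```python
def linear_kl_weight(step: int, warmup_steps: int, max_beta: float = 1.0) -> float:
    """Increase the KL weight linearly from 0 to max_beta over warmup_steps, then hold it."""
    if warmup_steps <= 0:
        return max_beta
    return max_beta * min(1.0, step / warmup_steps)


def cyclical_kl_weight(step: int, cycle_len: int, ramp_fraction: float = 0.5,
                       max_beta: float = 1.0) -> float:
    """Restart the linear ramp every cycle_len steps; hold at max_beta for the rest of each cycle."""
    pos = (step % cycle_len) / cycle_len  # position within the current cycle, in [0, 1)
    return max_beta * min(1.0, pos / ramp_fraction)


schedule = [linear_kl_weight(s, warmup_steps=4) for s in range(6)]
# [0.0, 0.25, 0.5, 0.75, 1.0, 1.0]
```

At each training step, the returned weight multiplies the KL term of the loss; the reconstruction term is left unweighted.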

Transformer Masked Language Modeling (MLM). Transformer-based autoencoders, such as BERT, replace full-sequence reconstruction with a masked token prediction objective. Given an input sequence $x = (x_1, \dots, x_T)$ in which a subset of token positions $\mathcal{M} \subseteq \{1, \dots, T\}$ is masked, the model predicts the masked tokens $x_i$, $i \in \mathcal{M}$, conditioned on the remaining context $x_{\setminus \mathcal{M}}$. The corresponding loss is

$$\mathcal{L}_{\mathrm{MLM}}(\theta) = -\,\mathbb{E}_{x, \mathcal{M}}\Big[\sum_{i \in \mathcal{M}} \log p_\theta\big(x_i \mid x_{\setminus \mathcal{M}}\big)\Big].$$

By restricting reconstruction to masked positions and conditioning on both left and right context, masked language modeling enables efficient parallel training and yields bidirectional representations that transfer effectively across a wide range of downstream NLP tasks.
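A BERT-style corruption procedure and the masked-position loss can be sketched as follows. The 15% selection rate and the 80/10/10 split between `[MASK]`, a random token, and the unchanged token follow the original BERT recipe; the token ids, `MASK_ID`, and the fixed (rounded) number of selected positions are simplifying assumptions, and the model's predictions are stubbed out with a uniform distribution.

```python
import math
import random
from typing import List, Sequence, Tuple

MASK_ID = 0  # hypothetical id reserved for [MASK]; real ids depend on the tokenizer


def bert_style_mask(tokens: Sequence[int], vocab_size: int, rng: random.Random,
                    mask_rate: float = 0.15) -> Tuple[List[int], List[int]]:
    """Corrupt a token sequence and return (corrupted_tokens, masked_positions).

    About mask_rate of the positions are selected; each selected position is replaced by
    [MASK] with probability 0.8, by a random token with probability 0.1, and left unchanged
    with probability 0.1. All selected positions remain prediction targets.
    """
    corrupted = list(tokens)
    n_select = max(1, round(mask_rate * len(tokens)))
    positions = sorted(rng.sample(range(len(tokens)), n_select))
    for i in positions:
        r = rng.random()
        if r < 0.8:
            corrupted[i] = MASK_ID
        elif r < 0.9:
            corrupted[i] = rng.randrange(1, vocab_size)  # any non-[MASK] id
        # else: keep the original token
    return corrupted, positions


def mlm_loss(token_probs: Sequence[Sequence[float]], targets: Sequence[int],
             positions: Sequence[int]) -> float:
    """Negative log-likelihood of the original tokens, summed over masked positions only."""
    return -sum(math.log(token_probs[i][targets[i]]) for i in positions)


rng = random.Random(42)
sentence = list(range(1, 21))                    # 20 hypothetical token ids
corrupted, masked = bert_style_mask(sentence, vocab_size=100, rng=rng)
uniform = [[1 / 100] * 100 for _ in sentence]    # stand-in for an untrained model's predictions
loss = mlm_loss(uniform, sentence, masked)       # equals len(masked) * log(100)
```

The loss ignores unmasked positions entirely, which is what distinguishes MLM from full-sequence reconstruction.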

Figure 2 illustrates the variational autoencoder (VAE) framework. An encoder maps the input $x$ to an approximate posterior distribution $q_\phi(z \mid x)$ in the latent space, from which a latent variable $z$ is sampled. The decoder reconstructs the input by modeling $p_\theta(x \mid z)$, and training is guided by the evidence lower bound (ELBO), which combines a reconstruction term with a KL divergence regularizer toward the prior $p(z)$.

Different autoencoder architectures exhibit distinct trade-offs in representation learning and generative modeling. Deterministic autoencoders and masked language models provide stable training and strong representation quality but lack explicit control over latent structure. In contrast, variational autoencoders enable controllable and diverse text generation through probabilistic latent variables, at the cost of training instability and issues such as posterior collapse. Transformer-based masked autoencoders offer superior scalability and transfer performance, while hybrid and conditional variants balance controllability and fluency by integrating latent variables with pretrained language models. These differences highlight that no single architecture is universally optimal; the choice depends on the target task and the desired trade-off among scalability, interpretability, and generative flexibility.

4. Related Work

Autoencoder-based learning has a long and diverse history in natural language processing, evolving from early sequence reconstruction models to probabilistic latent-variable frameworks and, more recently, large-scale transformer-based self-supervised pretraining. Across this evolution, different architectural paradigms have addressed complementary challenges such as data efficiency, representation learning without supervision, latent space interpretability, controllable generation, and cross-lingual generalization. While several surveys review autoencoders broadly across machine learning domains [6, 7], they do not focus on the distinctive challenges posed by language, including discrete sequence modeling, posterior collapse in expressive decoders, and multilingual alignment. This section reviews the seminal NLP-focused works that have shaped autoencoder-style learning, highlighting their motivations, methodological contributions, and limitations.

Table 1 later summarizes their core characteristics.

4.1. Foundational Sequence Autoencoders

Early seq2seq autoencoders demonstrated that reconstruction objectives could learn meaningful representations for variable-length data. Sutskever et al. [8] introduced the general encoder–decoder framework which, trained with teacher forcing and later combined with attention, forms the backbone of modern neural generation systems. Related work extended seq2seq autoencoding beyond text to audio, indicating that reconstruction-based learning is largely modality-agnostic [9].

4.2. Multilingual and Task-Specific Applications

Autoencoder-style objectives have also supported multilingual representation learning. Early bilingual autoencoders aligned word representations across languages via shared latent spaces [10], while large-scale multilingual masked pretraining achieved strong zero-shot transfer without parallel data [11]. However, multilingual autoencoders remain sensitive to data imbalance and typological diversity, particularly in morphologically rich, low-resource languages such as Arabic [24]. Task-specific applications include semi-supervised VAEs for aspect-term sentiment analysis [12], conditional VAEs for dialogue generation [13], and autoencoder-based linguistic clustering in social text [25].

4.3. Improved VAEs for Text with Dilated CNN Decoders

Although variational autoencoders (VAEs) offer a principled framework for learning continuous latent representations, early text VAEs suffered from posterior collapse, where expressive decoders ignored latent variables. Yang et al. proposed replacing recurrent decoders with dilated convolutional networks whose limited receptive fields restrict how much preceding context the decoder can condition on, encouraging reliance on latent codes [2]. Training heuristics such as KL annealing and word dropout were employed to balance reconstruction and regularization. The model improved likelihood and generation diversity relative to recurrent VAEs, demonstrating that restricting decoder capacity can promote meaningful latent utilization. However, finite receptive fields limited long-range coherence, and performance remained sensitive to architectural and optimization choices. This work established decoder bottlenecking as a key design principle for latent-aware text VAEs.
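The receptive-field argument can be made concrete with a short calculation. For a stack of causal convolutions with kernel size $k$ and dilations $d_1, \dots, d_L$, each output position sees $1 + (k-1)\sum_{l} d_l$ input positions. The layer configuration below is hypothetical and is not the one used by Yang et al.

```python
from typing import Sequence


def causal_receptive_field(kernel_size: int, dilations: Sequence[int]) -> int:
    """Number of input positions visible to one output of stacked causal dilated convolutions.

    Each layer with dilation d widens the window by (kernel_size - 1) * d positions.
    """
    return 1 + (kernel_size - 1) * sum(dilations)


# Hypothetical decoder: kernel size 3, dilation doubling over four layers.
print(causal_receptive_field(3, [1, 2, 4, 8]))  # 31
```

Tokens further back than this window cannot influence the decoder's prediction directly, so any longer-range information must be carried by the latent code $z$.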

4.4. Hierarchically Structured VAEs

Hierarchically structured VAEs addressed limitations of sentence-level modeling by introducing multi-level latent variables. Shen et al. [14] proposed sentence-level planning latents to guide word-level decoding, improving coherence and diversity in long-form text generation. Topic-guided VAEs further imposed semantic structure on the latent space through mixture priors aligned with learned topics, enabling interpretable and controllable generation [15]. Complementary work explored disentangling latent factors for NLP tasks, though scalability remained limited [26]. While effective, these approaches increased training complexity and sensitivity to hyperparameters.

4.5. BERT: Masked Autoencoding Pretraining

Before BERT, dominant language modeling objectives were largely unidirectional, such as left-to-right autoregressive prediction, or relied on shallow denoising schemes that failed to capture full bidirectional context. Devlin et al. argued that deep language understanding requires conditioning simultaneously on both preceding and following tokens. BERT introduced Masked Language Modeling (MLM), in which a transformer encoder reconstructs randomly masked tokens using their surrounding context, combined with a Next Sentence Prediction objective to model inter-sentence relationships [1]. As an encoder-only architecture trained via reconstruction, BERT can be interpreted as a large-scale transformer autoencoder. BERT substantially advanced representation learning, achieving large improvements on benchmarks such as GLUE and SQuAD, and demonstrated that self-supervised pretraining transfers effectively to a wide range of downstream tasks. However, the model is computationally expensive to train and is not directly suited for generative tasks, motivating subsequent encoder–decoder models such as BART and T5. BERT re-established autoencoding as a central paradigm in NLP and laid the foundation for modern masked pretraining strategies.

4.6. ALBERT: Parameter-Efficient Transformer Pretraining

Following the success of masked language modeling (MLM), concerns about computational cost and parameter redundancy in large transformer encoders led to the development of ALBERT [27]. ALBERT improves efficiency through cross-layer parameter sharing and factorized embedding parameterization, substantially reducing memory usage and model size while maintaining competitive performance on benchmarks such as GLUE and SQuAD. While retaining the MLM objective, ALBERT demonstrates that masked autoencoder-style pretraining can scale more efficiently through architectural optimization.
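The savings from factorized embedding parameterization follow from simple arithmetic: a vocabulary of size $V$ embedded directly into hidden size $H$ needs $V \times H$ parameters, whereas ALBERT first embeds into a smaller size $E$ and then projects to $H$, needing $V \times E + E \times H$. The sizes below are illustrative, and bias terms are ignored.

```python
def full_embedding_params(vocab_size: int, hidden_size: int) -> int:
    """BERT-style embedding: a single V x H matrix."""
    return vocab_size * hidden_size


def factorized_embedding_params(vocab_size: int, embed_size: int, hidden_size: int) -> int:
    """ALBERT-style embedding: a V x E lookup table followed by an E x H projection."""
    return vocab_size * embed_size + embed_size * hidden_size


V, E, H = 30_000, 128, 768  # illustrative sizes
print(full_embedding_params(V, H))           # 23040000
print(factorized_embedding_params(V, E, H))  # 3938304
```

With these sizes the embedding block shrinks by roughly a factor of six, and the saving grows with $V$ because the vocabulary-dependent term scales with $E$ rather than $H$.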

4.7. Pretrained and Plug-and-Play VAEs

OPTIMUS combined pretrained BERT encoders and GPT-style decoders within a VAE framework, showing that large-scale pretraining can alleviate posterior collapse and support both discriminative and generative tasks [28]. Plug-and-play VAEs further integrated latent control into frozen language models, enabling controllable generation without sacrificing fluency [16]. A comprehensive survey by Tu et al. [17] systematized these approaches, highlighting trade-offs in controllability, disentanglement, and evaluation.

4.8. Sentence Bottleneck Autoencoders

Sentence bottleneck autoencoders (SBAEs) aimed to extract compact sentence embeddings from pretrained transformers without full retraining. By freezing the encoder and training a shallow decoder over an attention-based bottleneck, SBAEs achieved strong performance on semantic similarity and classification benchmarks with minimal additional parameters [18]. These models demonstrated that reconstruction can enhance semantic representations even in fixed encoders.
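One way to picture an attention-based bottleneck is a single trainable query vector that attends over the frozen encoder's token states and returns their weighted average as a fixed-size sentence vector. The sketch below is a generic single-head version under that assumption; the exact bottleneck used in [18] may differ, and in practice the query would be learned jointly with the shallow decoder.

```python
import math
from typing import List, Sequence


def attention_pool(hidden_states: Sequence[Sequence[float]], query: Sequence[float]) -> List[float]:
    """Compress T token vectors (each of size d) into one d-dimensional vector.

    hidden_states: outputs of a frozen encoder, one vector per token.
    query: a trainable vector (hypothetical here; it would be learned with the decoder).
    """
    d = len(query)
    # Scaled dot-product score between the query and each token state.
    scores = [sum(q * h for q, h in zip(query, state)) / math.sqrt(d) for state in hidden_states]
    m = max(scores)  # subtract the max before exponentiating for numerical stability
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    # Attention-weighted average of the token states.
    return [sum(w * state[j] for w, state in zip(weights, hidden_states)) for j in range(d)]


states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
pooled = attention_pool(states, query=[0.0, 0.0])  # zero query: uniform weights, i.e. mean pooling
```

Whatever the sentence length, the decoder only ever sees this single $d$-dimensional vector, which is what makes it a bottleneck.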

4.9. Seq2Seq Pretraining for Neural Machine Translation

Sequence-to-sequence autoencoding bridges reconstruction-based learning and generative modeling, particularly in machine translation. Wang et al. compared joint encoder–decoder pretraining with disjoint strategies, systematically analyzing objective alignment and data distribution mismatch [3]. Joint pretraining improved translation diversity and low-resource performance, while disjoint approaches offered better domain adaptation; however, joint pretraining incurs a high computational cost and is sensitive to domain mismatch. The study clarified how reconstruction objectives influence encoder–decoder translation architectures and helps explain the behavior of unified seq2seq pretrained models such as mBART and T5.

4.10. Modern Hybrid and Multimodal Autoencoders

Recent work extends autoencoding principles to hybrid and multimodal settings. UL2 unified denoising, span corruption, and causal prediction within a single pretraining framework [19]. Diffusion-based autoencoders introduced probabilistic diffusion priors to stabilize training [20]. In multimodal learning, BLIP-2 aligned vision and language by bridging a frozen image encoder and a frozen large language model with a lightweight querying transformer, retaining an autoencoder-style reconstruction signal [21]. Finally, information-theoretic objectives, such as InfoVAE, explicitly encourage mutual information between inputs and latent variables, offering a principled approach to mitigating posterior collapse [22].

Unlike prior surveys that primarily catalog autoencoder variants or focus narrowly on controllable text generation, this review emphasizes evaluation behavior and failure modes as first-class analytical tools. In particular, we connect posterior collapse, latent underutilization, and multilingual imbalance across model families and training regimes, revealing patterns that are not apparent when methods are considered in isolation.

These developments reflect a broader trend in modern NLP, where hybrid and multimodal architectures increasingly integrate reconstruction-based objectives with large-scale pretrained language models, enabling unified frameworks for representation learning, generation, and cross-modal reasoning.

5. Historical Evolution of Autoencoder Architectures

5.1. From Denoising to Variational Designs

Early autoencoder architectures for NLP were largely motivated by denoising principles, in which inputs are deliberately corrupted, and models are trained to reconstruct the original signal. Such objectives encourage robustness and force encoders to capture salient linguistic structure rather than superficial token patterns. However, when extended to probabilistic formulations such as variational autoencoders (VAEs), text modeling introduced a fundamental challenge: posterior collapse, wherein expressive decoders learn to ignore latent variables altogether.
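A denoising objective needs a corruption function. The sketch below shows one common noise model for text, random token deletion followed by a bounded local shuffle; the probabilities and shift bound are hypothetical defaults, not values from any reviewed study.

```python
import random
from typing import List, Sequence


def add_denoising_noise(tokens: Sequence[str], rng: random.Random,
                        p_drop: float = 0.1, max_shift: int = 3) -> List[str]:
    """Corrupt a sentence for denoising-autoencoder training.

    1. Drop each token independently with probability p_drop.
    2. Locally shuffle the survivors so that no token moves more than max_shift positions.
    """
    kept = [t for t in tokens if rng.random() >= p_drop]
    # Sort by index + U(0, max_shift + 1): tokens more than max_shift apart keep their order.
    keys = [i + rng.uniform(0, max_shift + 1) for i in range(len(kept))]
    order = sorted(range(len(kept)), key=lambda i: keys[i])
    return [kept[i] for i in order]


rng = random.Random(7)
clean = "the model learns to reconstruct the original sentence".split()
noisy = add_denoising_noise(clean, rng)
# Training pair: the encoder reads `noisy`, the decoder must reproduce `clean`.
```

Because the added noise lies in $[0, k+1)$ for a shift bound $k$, two tokens more than $k$ positions apart can never swap, which bounds each token's displacement by $k$.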

Addressing posterior collapse became a central design concern in text VAEs. Both architectural and objective-level interventions, including constrained or weakened decoders, KL annealing schedules, word dropout, and noise injection, were proposed to encourage meaningful latent utilization [2].
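Word dropout can be sketched as randomly replacing the decoder's teacher-forced input tokens with an unknown-word symbol, so that the decoder cannot rely solely on the preceding words and must draw on $z$. The `<unk>` and `<bos>` symbols and the keep rate are assumptions for illustration.

```python
import random
from typing import List, Sequence

UNK, BOS = "<unk>", "<bos>"  # assumed special symbols; real vocabularies differ


def word_dropout(decoder_inputs: Sequence[str], rng: random.Random,
                 keep_rate: float = 0.75) -> List[str]:
    """Replace each teacher-forced decoder input (except BOS) with UNK with probability 1 - keep_rate.

    Only the decoder's conditioning history is weakened; the prediction targets are left
    intact, so missing context has to be recovered from the latent code z.
    """
    return [tok if tok == BOS or rng.random() < keep_rate else UNK for tok in decoder_inputs]


targets = ["the", "cat", "sat", "down"]
decoder_inputs = [BOS] + targets[:-1]  # teacher forcing: inputs are the targets shifted right
noisy_inputs = word_dropout(decoder_inputs, random.Random(3), keep_rate=0.5)
```

Unlike the denoising corruption applied to encoder inputs, word dropout perturbs only the decoder side, which is why it specifically targets the decoder's tendency to bypass the latent variable.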

In the VAE literature, dilated convolutional decoders have been shown to mitigate posterior collapse by limiting autoregressive capacity, thereby forcing greater reliance on latent variables and improving generation quality [2].

Subsequent work extended these ideas through hierarchical VAEs, introducing multi-timescale latent variables to separate global planning from local realization. Such architectures improved discourse-level coherence and long-range structure in generated text, highlighting the potential of structured latent spaces for modeling extended linguistic dependencies [14, 15]. Despite these advances, VAEs’ sensitivity to architectural choices and training heuristics remained a persistent limitation.

5.2. Sequence-to-Sequence Autoencoders

Sequence-to-sequence (seq2seq) autoencoders generalized autoencoding objectives to variable-length inputs and outputs, compressing entire sequences into fixed-dimensional representations prior to reconstruction. Early successes in related domains, such as audio modeling, demonstrated that unsupervised sequence representations could be learned effectively using recurrent encoder–decoder architectures [9]. Foundational work on neural sequence learning established the encoder–decoder training setup with teacher forcing and beam search decoding [8]; together with attention mechanisms, these techniques later became standard components of autoencoder-based pretraining and neural machine translation systems.

In NLP, seq2seq autoencoders provided an important conceptual bridge between reconstruction-based learning and generative modeling. However, their reliance on compressing full sequences into a single latent vector, combined with limited parallelization and training inefficiencies, constrained scalability. As a result, although seq2seq autoencoding proved viable, it was eventually superseded by transformer-based masked objectives that better exploit large corpora and modern hardware [9].

5.3. Transformer-Based Autoencoders

Transformer-based masked autoencoding reframes reconstruction as the prediction of randomly masked tokens from bidirectional context, enabling efficient parallel training and substantially improved representation quality. This objective rapidly became the dominant pretraining paradigm for encoder models in NLP [1]. By removing the requirement to reconstruct entire sequences and instead focusing reconstruction pressure on selected tokens, masked language modeling balances learning signal and scalability more effectively than earlier seq2seq autoencoders.

Beyond standard denoising, sentence bottleneck autoencoders augment pretrained transformers with compact, fixed-size latent representations and lightweight decoders. These models produce high-quality sentence embeddings and achieve competitive performance on single-sentence tasks from benchmarks such as GLUE with minimal additional parameters [18]. Further analyses of encoder–decoder capacity in autoencoder-style pretraining reinforce the importance of asymmetric designs, showing that lightweight decoders encourage encoders to retain richer semantic information [27]. Collectively, these findings clarify why transformer-based autoencoders have largely supplanted earlier reconstruction paradigms in large-scale NLP.

6. Applications in Natural Language Processing

Autoencoder-based models have been applied across a broad range of natural language processing tasks, leveraging reconstruction objectives for both representation learning and controlled text generation. Depending on their architectural design and training objective, different autoencoder variants support applications such as language modeling, machine translation, sentiment analysis, and multilingual representation learning. This section reviews key application areas and highlights how autoencoder learning is adapted to specific task requirements.

6.1. Language Modeling and Text Generation

VAEs enable controllable and diverse text generation by sampling or manipulating latent variables [2]. Topic-guided VAEs (TGVAEs) incorporate a neural topic model that introduces a Gaussian mixture prior, aligning latent structure with interpretable topical semantics and improving controllability and coherence [15]. Hierarchically structured VAEs introduce sentence-level planning latents that guide word-level decoding, improving perplexity and human judgments for long-form generation [14]. Recent plug-and-play formulations integrate VAEs with pretrained language models, injecting latents at selected layers to steer style, sentiment, and content while preserving fluency from the base transformer [16]. Tu et al. [17] review how design choices such as priors, posteriors, and training heuristics affect controllable generation quality.

6.2. Machine Translation

Pretraining choices for neural machine translation (NMT) interact with autoencoding-style learning. Joint encoder–decoder pretraining can improve translation diversity but risks domain mismatch between pretraining and fine-tuning; remedies include objective alignment and data selection [3]. In multilingual contexts, large cross-lingual encoders trained without parallel data (e.g., XLM-R) learn representations that transfer across languages and enable zero-shot transfer [11].

6.3. Sentiment Analysis and Conditional Modeling

Semi-supervised VAEs disentangle aspect content from sentiment polarity for aspect-term sentiment analysis, yielding gains in low-resource regimes by leveraging unlabeled data [12]. For short-text dialogue, conditional transforming VAEs control conditioning pathways to balance diversity and relevance, improving conversational response variety while maintaining coherence [13].

6.4. Representation Learning and Evaluation

Autoencoder encoders and bottleneck variants produce dense representations that are competitive on semantic similarity and sentence-level classification with minimal task-specific tuning [18]. Evaluation benchmarks such as GLUE and SuperGLUE provide standardized tests of generalization for encoder-style models and continue to inform comparisons among autoencoding strategies [29, 30].
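Semantic-similarity evaluation of sentence embeddings is commonly reported as the Spearman rank correlation between the cosine similarity of each sentence pair's embeddings and human similarity ratings. The sketch below implements that protocol in plain Python; the embeddings and gold scores are made-up toy values, not benchmark data.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman correlation computed as the Pearson correlation of the rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


# Hypothetical embeddings for three sentence pairs and gold similarity ratings (0-5 scale).
pairs = [([1.0, 0.0], [0.9, 0.1]), ([1.0, 0.0], [0.0, 1.0]), ([1.0, 1.0], [1.0, 0.5])]
gold = [4.8, 0.5, 3.9]
predicted = [cosine(a, b) for a, b in pairs]
print(spearman(predicted, gold))  # ~1.0: the predicted and gold rankings agree
```

Using ranks rather than raw scores makes the metric insensitive to any monotone rescaling of the cosine similarities, which is why it suits comparisons across encoders.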

6.5. Monolingual and Multilingual Contexts

Monolingual English pretraining benefits from abundant corpora and mature benchmarks, enabling specialization for idiomatic usage and syntax [1, 29]. Multilingual autoencoders must align latent spaces across typologically diverse languages, preserve semantics despite differences in word order or morphology, and avoid high-resource dominance. Results from massive-scale cross-lingual pretraining demonstrate that sufficient capacity and data produce strong zero-shot transfer without parallel corpora [11]. For generative tasks, pretraining must balance cross-lingual alignment with decoder specialization to mitigate the domain gaps observed in seq2seq pretraining [3].

Figure 3 highlights major application areas of autoencoder-based models in NLP. Autoencoders support controllable and diverse text generation in language modeling, influence performance and robustness in machine translation through pretraining strategies, and enable semi-supervised learning for sentiment analysis in low-resource settings. They are also widely used for representation learning, producing dense semantic embeddings, and play an important role in both monolingual and multilingual contexts, where alignment and data imbalance present key challenges.

6.6. Why Variational Autoencoders Did Not Become the Dominant Paradigm in NLP

Despite their strong theoretical appeal, variational autoencoders (VAEs) did not become the dominant paradigm in natural language processing because of limitations in modeling, optimization, and scalability. A central challenge is that continuous probabilistic latent-variable modeling is a poor match for the discrete and highly structured nature of language. At the same time, autoregressive decoders, including recurrent and transformer-based language models, capture linguistic dependencies so effectively that the latent variables have little effect on reconstruction, leading to the well-known problem of posterior collapse [23, 31]. When collapse occurs, the approximate posterior matches the prior, the KL term approaches zero, and the learned latent space becomes uninformative, undermining the representational advantages that motivate VAE-based modeling.
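To make the collapse diagnosis concrete, the KL term for the common choice of a diagonal-Gaussian posterior and a standard-normal prior has a closed form. The following is a minimal Python sketch under that assumption; the function names and the 0.01-nat cutoff used to flag a collapsed dimension are illustrative choices, not values taken from the cited works.

```python
import math


def gaussian_kl_per_dim(mu, logvar):
    """Closed-form KL( N(mu, exp(logvar)) || N(0, 1) ) for each latent dimension, in nats."""
    return [0.5 * (m * m + math.exp(lv) - lv - 1.0) for m, lv in zip(mu, logvar)]


def collapsed_dims(mu, logvar, threshold=0.01):
    """Indices of dimensions whose KL falls below `threshold` (an illustrative cutoff),
    i.e. dimensions whose posterior is (almost) identical to the prior."""
    kls = gaussian_kl_per_dim(mu, logvar)
    return [d for d, k in enumerate(kls) if k < threshold]


# A collapsed posterior equals the prior (mu = 0, log-variance = 0): zero KL, no information.
print(gaussian_kl_per_dim([0.0, 0.0], [0.0, 0.0]))
# The first dimension moves away from the prior and pays a KL cost; the second does not.
print(collapsed_dims([1.5, 0.001], [-1.0, 0.0]))
```

A dimension with near-zero KL carries essentially no information about the input, which is why per-dimension KL is a useful first check when diagnosing collapse.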

Mitigating posterior collapse typically requires carefully tuned heuristics such as KL annealing schedules [32], word dropout [23], constrained or weakened decoders [2], or auxiliary objectives that explicitly encourage mutual information between inputs and latent variables [22]. While these techniques can improve latent utilization, they introduce additional hyperparameters and optimization sensitivity, making VAEs less robust and less reproducible than deterministic masked language modeling approaches, which scale more predictably with data and model size [27]. Scalability considerations further favored transformer-based masked autoencoding, which supports fully parallel training, efficient utilization of large-scale corpora, and seamless integration with downstream fine-tuning pipelines [1, 19]. In contrast, VAE objectives impose tighter coupling between encoder and decoder learning dynamics and are more difficult to scale reliably without architectural compromises or specialized plug-and-play mechanisms [16, 28].
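Two of the heuristics named above, KL annealing and word dropout, are simple to state in code. The sketch below assumes a linear warm-up and, as one alternative, a cyclical schedule; the schedule shapes, function names, and rates are illustrative assumptions and do not reproduce the exact settings of [32] or [23].

```python
import random


def linear_kl_weight(step, warmup_steps):
    """Linear KL annealing: the KL weight beta rises from 0 to 1 over `warmup_steps`, then stays at 1."""
    return min(1.0, step / warmup_steps)


def cyclical_kl_weight(step, cycle_len, ramp_frac=0.5):
    """Cyclical variant: within each cycle, beta ramps from 0 to 1 over the first
    `ramp_frac` of the cycle and is held at 1 for the remainder."""
    position = (step % cycle_len) / cycle_len
    return min(1.0, position / ramp_frac)


def word_dropout(tokens, rate, unk="<unk>", rng=random):
    """Replace each decoder-input token with `unk` with probability `rate`, weakening
    the autoregressive context so the decoder must rely more on the latent code."""
    return [unk if rng.random() < rate else tok for tok in tokens]


def annealed_loss(recon_nll, kl, beta):
    """Negative ELBO with the KL term down-weighted by beta during warm-up."""
    return recon_nll + beta * kl


rng = random.Random(0)
for step in (0, 2500, 5000, 10000):
    beta = linear_kl_weight(step, warmup_steps=5000)
    print(step, beta, annealed_loss(recon_nll=80.0, kl=4.0, beta=beta))
print(word_dropout(["the", "food", "was", "great"], rate=0.3, rng=rng))
```

Both knobs (the warm-up length and the dropout rate) are exactly the kind of extra hyperparameters that make VAE training sensitive, as discussed above.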

Moreover, several practical advantages historically associated with VAEs, such as controllable generation and structured latent representations, have increasingly been achieved through alternative techniques, including prompting, fine-tuning, and reinforcement-based alignment, without requiring explicit latent-variable inference [17, 33]. Consequently, VAEs remain an important conceptual framework for studying latent representations and controllability, but in practical large-scale NLP systems they have been largely eclipsed by simpler and more stable transformer-based reconstruction objectives.

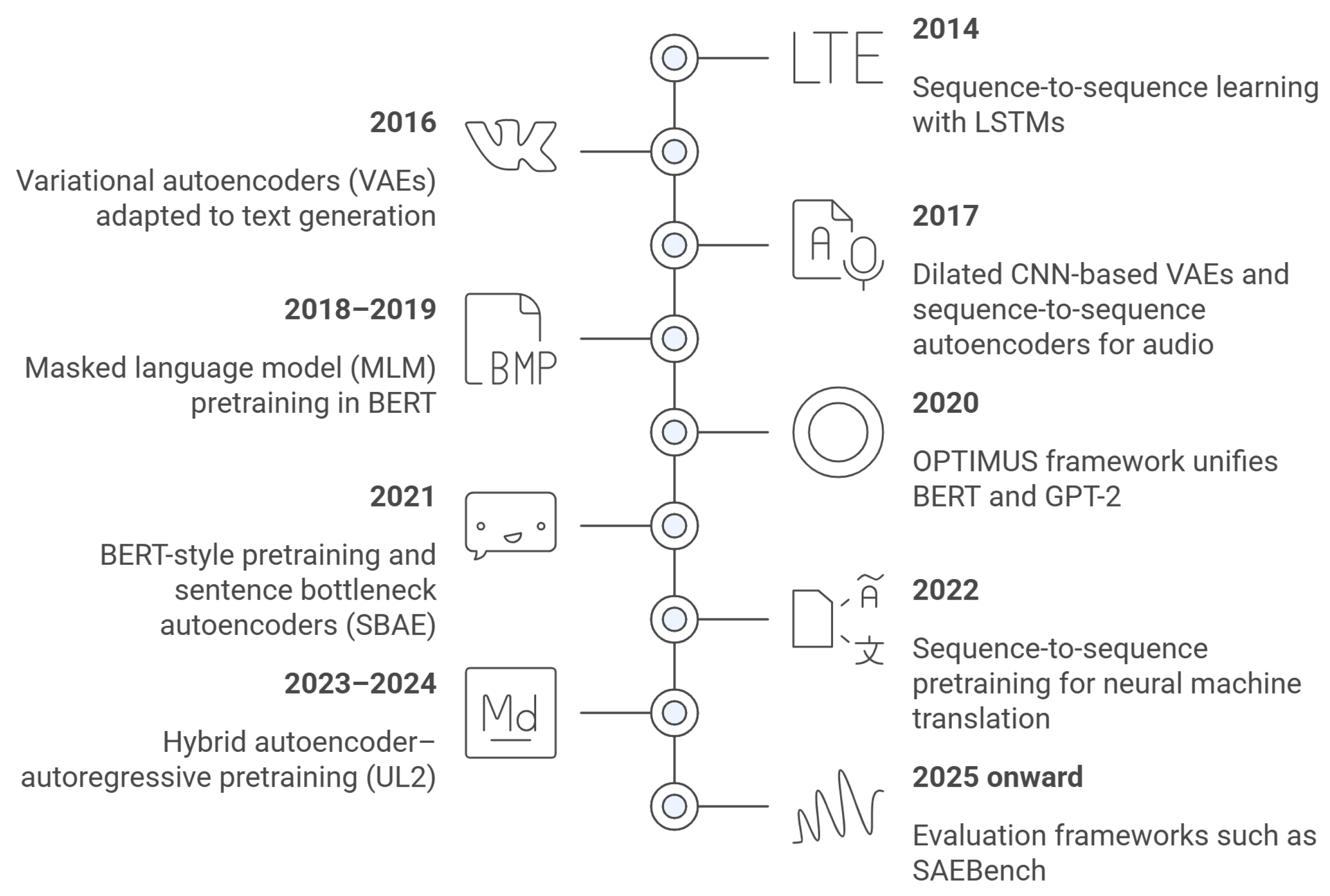

Figure 4 summarizes the evolution of autoencoder-based methods in NLP, from early sequence-to-sequence models with recurrent networks to variational autoencoders for text generation. The introduction of transformer-based masked language modeling marked a shift toward scalable self-supervised pretraining, followed by hybrid encoder–decoder and bottleneck architectures that unify representation learning and generation. More recent work emphasizes hybrid objectives and evaluation frameworks, reflecting a maturation of autoencoder-style learning in modern NLP.

7. Benchmarking and Empirical Comparisons

This section provides a systematic benchmarking of autoencoder-based methods across NLP tasks, synthesizing quantitative results from recent literature to enable trend-level empirical comparison of modeling strategies.

Because the reported results are drawn from heterogeneous experimental settings, the numerical values should be interpreted as indicative trends and trade-offs rather than directly comparable measurements or performance rankings. In particular, differences in dataset composition, preprocessing pipelines, model capacity, training schedules, and evaluation setups can significantly affect reported performance. The tables are intended as structured summaries of prior work rather than new experimental contributions (see Appendix A for details on datasets and sources).

7.1. Language Modeling Benchmarks

VAE-based language models are typically evaluated on corpora such as Penn Treebank (PTB), Yahoo Answers, and Yelp Reviews using negative log-likelihood (NLL), KL divergence, mutual information (MI), and the number of active units (AU) as primary metrics [2, 34].
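Of these metrics, the number of active units is the least self-explanatory. A common definition counts a latent dimension as active when the variance, across data points, of its posterior mean exceeds a small threshold (0.01 is a frequently used value). The sketch below assumes that definition and uses the population variance; it is an illustration rather than the exact evaluation code of [2] or [34].

```python
def active_units(posterior_means, threshold=0.01):
    """Count latent dimensions whose posterior mean E_q[z_d | x] varies across inputs.

    posterior_means: one list of per-dimension means for each input x.
    A dimension is 'active' if the variance of its mean across inputs exceeds `threshold`.
    """
    n = len(posterior_means)
    num_dims = len(posterior_means[0])
    active = 0
    for d in range(num_dims):
        column = [m[d] for m in posterior_means]
        mean = sum(column) / n
        variance = sum((v - mean) ** 2 for v in column) / n
        if variance > threshold:
            active += 1
    return active


# Dimension 0 ignores the input (collapsed), dimension 1 tracks it, dimension 2 barely moves.
means = [
    [0.0, 1.0, 0.001],
    [0.0, -1.0, -0.001],
    [0.0, 0.5, 0.0],
]
print(active_units(means))
```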

Table 2 summarizes representative performance trends for several posterior-collapse mitigation methods on a Yahoo-style long-text corpus, consolidating results from recent comparative studies [34, 35].

Several observations emerge from these benchmarks. First, posterior-collapse mitigation strategies consistently improve over vanilla VAEs, with NLL gains on the order of 5–7 nats on Yahoo-style corpora [34]. Second, higher MI and AU correlate with improved latent utilization but do not guarantee optimal NLL; for example, Free-Bits VAE achieves high AU but suboptimal NLL due to gradient discontinuities introduced by hard KL thresholds [34]. Third, Scale-VAE achieves the best NLL among the compared methods by scaling posterior means to enhance latent discriminability without enforcing a fixed minimum KL per dimension, leading to both high MI and full latent usage [34].
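The gradient discontinuity attributed to Free-Bits can be seen directly in its objective. In one common formulation, each latent dimension's KL is replaced by max(λ, KL_d), so below the floor λ the KL term contributes a constant and passes no gradient back to the encoder for that dimension. The following sketch assumes this per-dimension form (the original free-bits formulation groups dimensions and averages over a minibatch); λ (written `lam`) and the function names are illustrative.

```python
def free_bits_kl(kl_per_dim, lam):
    """Free-bits KL term: every dimension contributes at least `lam` nats (a hard floor)."""
    return sum(max(lam, k) for k in kl_per_dim)


def free_bits_grad_mask(kl_per_dim, lam):
    """Derivative of max(lam, k) with respect to k: 1 above the floor, 0 below it.

    Dimensions with a zero entry receive no KL gradient, so the objective switches
    abruptly between 'free' and 'penalized' regimes as a dimension crosses lam.
    """
    return [1.0 if k > lam else 0.0 for k in kl_per_dim]


kls = [0.1, 0.8, 2.0]
print(free_bits_kl(kls, lam=0.5))  # the floor applies to the first dimension only
print(free_bits_grad_mask(kls, lam=0.5))
```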

7.2. Pretrained VAE Language Models: OPTIMUS

OPTIMUS is a large-scale pretrained VAE that combines a BERT-like encoder and a GPT-2-style decoder within a shared latent space, providing a bridge between language understanding and guided generation [28]. Pretraining on large corpora yields a smoother latent manifold that mitigates KL vanishing and supports both feature-based classification and controllable text generation [28].

Table 3 summarizes reported trends comparing OPTIMUS with baseline pretrained language models [1, 28].

Reported experimental results indicate that OPTIMUS achieves lower perplexity than comparably sized GPT-2 variants under similar training conditions and maintains GLUE performance close to BERT, while uniquely enabling attribute-controlled generation through latent manipulation [28]. In low-resource regimes, OPTIMUS latent representations provide non-trivial absolute gains over BERT on sentence classification tasks with limited labeled data [28]. This advantage is attributed to OPTIMUS's generative pretraining, which enables the model to learn richer semantic representations in the latent space that can be leveraged for downstream classification. Unlike encoder-only models such as BERT, which rely solely on discriminative features, OPTIMUS benefits from its joint generative and discriminative framework, allowing it to generalize better when labeled data are limited.

7.3. Posterior Collapse Mitigation: Taxonomy and Trade-Offs

Posterior collapse remains a central and unresolved obstacle in training text VAEs, particularly when paired with powerful autoregressive decoders [31, 38].

Table 4 categorizes major mitigation strategies and their associated trade-offs, synthesizing recent theoretical and empirical analyses [2, 22, 31, 34, 36].

Recent studies show that posterior-scaling methods such as batch-normalized variational autoencoder (BN-VAE) and scaling variational autoencoder (Scale-VAE) achieve strong NLL while activating most latent dimensions, outperforming earlier KL-thresholding approaches in both mutual information and reconstruction quality [34, 35]. Lagging inference networks and semi-amortized VAEs provide complementary optimization-based solutions by decoupling encoder and decoder learning dynamics, thereby reducing posterior collapse without modifying model architecture [31, 36].
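The mechanism behind BN-VAE can be illustrated in a few lines. Because KL(N(μ, σ²) || N(0, 1)) per dimension is at least μ²/2, batch-normalizing the posterior means with a fixed scale γ and shift β makes the batch-averaged μ² approximately equal to γ² + β² (up to the small ε in the denominator), which keeps the expected KL bounded away from zero. The sketch below assumes a simplified setting (β = 0, unit posterior variance, an illustrative γ = 0.6) and is not BN-VAE's reference implementation.

```python
import math
import random


def batch_norm_means(mu_batch, gamma=0.6, beta=0.0, eps=1e-8):
    """Normalize each latent dimension of the posterior means across the batch,
    then apply a fixed (non-learned) scale gamma and shift beta."""
    n, num_dims = len(mu_batch), len(mu_batch[0])
    out = [[0.0] * num_dims for _ in range(n)]
    for d in range(num_dims):
        column = [row[d] for row in mu_batch]
        mean = sum(column) / n
        var = sum((v - mean) ** 2 for v in column) / n
        for i, v in enumerate(column):
            out[i][d] = gamma * (v - mean) / math.sqrt(var + eps) + beta
    return out


def mean_kl(mu_batch):
    """Batch-averaged KL per dimension, assuming unit posterior variance (sigma^2 = 1),
    in which case KL( N(mu, 1) || N(0, 1) ) = mu^2 / 2."""
    values = [0.5 * m * m for row in mu_batch for m in row]
    return sum(values) / len(values)


rng = random.Random(0)
# Nearly collapsed encoder: posterior means hug zero for every input.
collapsed = [[rng.gauss(0.0, 0.01) for _ in range(4)] for _ in range(64)]
print(mean_kl(collapsed))                    # close to zero
print(mean_kl(batch_norm_means(collapsed)))  # close to gamma**2 / 2 = 0.18
```

Because the floor comes from the normalization rather than from a clamp on the KL itself, the objective stays smooth, in contrast to the hard threshold of free bits.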

Figure 5 summarizes the practical trade-offs among these mitigation strategies.

Unlike KL-thresholding approaches that impose explicit constraints on the KL divergence, Scale-VAE modifies the posterior distribution by scaling the latent means, thereby increasing their separability and improving mutual information between inputs and latent variables. This approach avoids optimization discontinuities and enables more stable training, while preserving flexibility in how information is distributed across latent dimensions [36].

7.4. Representation Learning and Downstream Classification

Autoencoder-based encoders are commonly evaluated through downstream sentiment and topic classification tasks, particularly on Yelp and similar review datasets, under varying labeled-data budgets [34, 35].

Table 5 reports representative accuracy trends illustrating how collapse-mitigated VAEs improve over vanilla VAEs, especially in low-resource settings [34, 35].

These trends indicate that collapse-mitigated VAEs close much of the performance gap between deterministic encoders and probabilistic models in terms of representation quality [34, 35]. In particular, DU-VAE and Scale-VAE attain substantial gains over standard VAEs with very few labeled examples, highlighting the importance of well-behaved latent spaces for sample-efficient transfer [34, 35].

7.5. Sparse Autoencoders and Interpretability Benchmarks

Sparse autoencoders (SAEs) represent a distinct but increasingly important application of autoencoding, in which reconstruction objectives are used to extract interpretable, disentangled features from the internal activations of large language models rather than from raw text. Recent work evaluates SAEs using dedicated interpretability benchmarks such as SAEBench [39], which scores models on loss recovery, feature absorption, spurious correlation removal, and interpretability through a combination of automated and human-aligned metrics.
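Loss recovery is usually reported as the fraction of the language model's loss degradation that the SAE repairs: the model is run once with its original activations, once with those activations zero-ablated, and once with the SAE reconstruction spliced in. The formula below is a commonly used formulation, assumed here for illustration; SAEBench's exact definition and normalization may differ, and the loss values are hypothetical.

```python
def fraction_loss_recovered(clean_loss, sae_loss, zero_ablation_loss):
    """Share of the loss gap between zero-ablation and the clean model that the SAE closes.

    1.0: splicing in the SAE reconstruction is as good as the original activations.
    0.0: the reconstruction is no better than replacing the activations with zeros.
    """
    gap = zero_ablation_loss - clean_loss
    if gap <= 0:
        raise ValueError("zero-ablation loss should exceed the clean-model loss")
    return (zero_ablation_loss - sae_loss) / gap


# Hypothetical cross-entropy values (nats/token), not results from any benchmark.
print(fraction_loss_recovered(clean_loss=2.0, sae_loss=2.2, zero_ablation_loss=6.0))
```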

From a broader perspective, sparse autoencoders can be viewed as a natural extension of standard autoencoder-based learning. While classical autoencoders operate on raw input data to learn compact latent representations for generation or downstream tasks, SAEs apply similar reconstruction principles to internal activations of pretrained language models. In this sense, SAEs shift the focus from representation learning to representation analysis, enabling the interpretation and disentanglement of features learned by large-scale models while preserving the core autoencoding objectives [39].

Results indicate that TopK and BatchTopK SAEs recover more model loss and exhibit lower feature absorption than standard ReLU SAEs, while producing more interpretable, mono-semantic features [39]. These findings suggest that sparsity–fidelity trade-offs alone are insufficient for evaluating SAEs, and that interpretability benchmarks must consider multiple axes beyond reconstruction error when used for mechanistic analysis and model steering.

One possible explanation for this difference lies in the activation behavior of ReLU-based SAEs. ReLU activations place no explicit limit on how many units can be active at once, which can lead to overlapping, polysemantic feature representations, in which a single unit captures multiple unrelated patterns, and to higher feature absorption. In contrast, TopK-based sparsity enforces explicit competition among units by activating only a fixed number of them, encouraging more distinct and specialized features that are easier to interpret as mono-semantic representations [39].
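The contrast between ReLU and TopK activations can be sketched with a toy SAE forward pass. The code below assumes the common layout in which the encoder acts on the input minus a decoder bias and the decoder maps sparse features back to the activation space; ReLU SAEs are usually trained with an additional L1 penalty on the features, while TopK SAEs control sparsity directly through k. Sizes, weights, and function names are illustrative, and BatchTopK (which selects the top activations across a whole batch rather than per example) is not shown.

```python
def matvec(W, v):
    """Dense matrix-vector product for a list-of-rows matrix W."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def relu_features(pre_acts):
    """ReLU SAE: every positive pre-activation fires, with no cap on how many."""
    return [max(0.0, a) for a in pre_acts]


def topk_features(pre_acts, k):
    """TopK SAE: only the k largest pre-activations survive (then ReLU); the rest are zeroed."""
    keep = set(sorted(range(len(pre_acts)), key=lambda i: pre_acts[i], reverse=True)[:k])
    return [max(0.0, a) if i in keep else 0.0 for i, a in enumerate(pre_acts)]


def sae_forward(x, W_enc, b_enc, W_dec, b_dec, k=None):
    """Encode an activation vector x into sparse features and reconstruct it.

    W_enc: n_features rows of length d_model; W_dec: d_model rows of length n_features.
    k=None selects the ReLU variant; an integer k selects the TopK variant.
    """
    centered = [xi - bi for xi, bi in zip(x, b_dec)]
    pre_acts = [p + b for p, b in zip(matvec(W_enc, centered), b_enc)]
    feats = relu_features(pre_acts) if k is None else topk_features(pre_acts, k)
    x_hat = [r + b for r, b in zip(matvec(W_dec, feats), b_dec)]
    return feats, x_hat


# Untrained toy weights (d_model = 2, n_features = 3): the point is the sparsity
# pattern, not reconstruction quality.
W_enc = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
b_enc = [0.0, 0.0, 0.0]
W_dec = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
b_dec = [0.0, 0.0]
x = [1.0, 2.0]
print(sae_forward(x, W_enc, b_enc, W_dec, b_dec))       # ReLU: all three features fire
print(sae_forward(x, W_enc, b_enc, W_dec, b_dec, k=1))  # TopK: only the strongest survives
```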

Table 6 summarizes a qualitative comparison of SAE architectures across SAEBench dimensions, highlighting that TopK and BatchTopK variants achieve higher interpretability and lower feature absorption than ReLU-based SAEs.

7.6. Encoder–Decoder Pretraining: BART and T5

Encoder–decoder transformers, such as BART and T5, can be interpreted as sequence-level autoencoders with task-specific corruption and reconstruction schemes [40, 41].

Table 7 contrasts their pretraining objectives and strengths from an autoencoding perspective.

Empirical studies indicate that both BART and T5 outperform encoder-only MLMs on summarization and text generation tasks while retaining strong transfer performance for classification and question answering [40, 41]. From an autoencoding standpoint, BART emphasizes rich input corruptions for robustness, whereas T5 casts every task in a unified text-to-text format and pretrains by reconstructing corrupted spans, unifying denoising and conditional generation within a single framework.
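The difference between the two corruption schemes is easiest to see on a single sentence. The sketch below is a simplified illustration that takes the masked spans as given instead of sampling them (T5 samples span positions at a fixed corruption rate; BART samples span lengths from a Poisson distribution). T5-style span corruption replaces each span with a sentinel and trains the decoder to emit only the missing spans, whereas BART-style text infilling replaces each span with a single mask token and trains the decoder to regenerate the whole original sequence. Whitespace tokenization and the function names are assumptions; the sentinel format follows the `<extra_id_N>` convention used by T5 vocabularies.

```python
def t5_span_corruption(tokens, spans):
    """T5-style span corruption.

    spans: sorted, non-overlapping (start, end) index pairs, end exclusive.
    Returns (encoder_input, decoder_target): each span is replaced by a sentinel in the
    input, and the target lists each sentinel followed by the tokens it replaced.
    """
    inputs, targets, cursor = [], [], 0
    for span_id, (start, end) in enumerate(spans):
        sentinel = f"<extra_id_{span_id}>"
        inputs.extend(tokens[cursor:start])
        inputs.append(sentinel)
        targets.append(sentinel)
        targets.extend(tokens[start:end])
        cursor = end
    inputs.extend(tokens[cursor:])
    targets.append(f"<extra_id_{len(spans)}>")  # final sentinel closes the target
    return inputs, targets


def bart_text_infilling(tokens, spans, mask="<mask>"):
    """BART-style text infilling: each span collapses to one mask token and the
    decoder must reconstruct the complete original sequence."""
    inputs, cursor = [], 0
    for start, end in spans:
        inputs.extend(tokens[cursor:start])
        inputs.append(mask)
        cursor = end
    inputs.extend(tokens[cursor:])
    return inputs, list(tokens)


sentence = "Thank you for inviting me to your party last week .".split()
spans = [(2, 4), (8, 9)]  # "for inviting" and "last"
print(t5_span_corruption(sentence, spans))
print(bart_text_infilling(sentence, spans))
```

The T5 target contains only the missing spans, so it is much shorter than the input, whereas the BART target is always the full sentence.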

7.7. Evaluation Metrics for Autoencoder-Based NLP

Evaluating autoencoder-based NLP models requires a combination of intrinsic and extrinsic metrics [42].

Intrinsic metrics assess optimization behavior and latent-space properties, such as reconstruction loss, KL divergence, mutual information, and the number of active latent units, which are particularly important for analyzing training stability and posterior collapse in VAEs [2, 31, 34]. Extrinsic metrics evaluate downstream task performance, including classification accuracy on benchmarks such as GLUE and SuperGLUE [29, 30], as well as generation quality measured by overlap and diversity metrics and human judgments [42, 43].

Perplexity and NLL. Perplexity and token-level negative log-likelihood (NLL) quantify how well a generative model predicts a text sequence, and they remain standard evaluation metrics for language modeling, with lower values indicating better predictive performance [35].
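Perplexity is the exponential of the average per-token NLL. A minimal sketch, assuming the model's probabilities for the reference tokens are already available (for VAEs, the NLL is typically itself an importance-sampled estimate, which this sketch does not model):

```python
import math


def token_nlls(token_probs):
    """Per-token negative log-likelihood in nats from the model's probability of each reference token."""
    return [-math.log(p) for p in token_probs]


def perplexity(nlls):
    """Perplexity = exp(mean per-token NLL); lower is better."""
    return math.exp(sum(nlls) / len(nlls))


probs = [0.5, 0.25, 0.125]
nlls = token_nlls(probs)
print(sum(nlls) / len(nlls))  # mean NLL in nats
print(perplexity(nlls))       # the inverse geometric mean of the token probabilities
```

A model that spreads probability uniformly over a vocabulary of V tokens has perplexity V, which is why perplexity is often read as an effective branching factor.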

Overlap-based metrics. BLEU and ROUGE are widely used for evaluating machine translation and text summarization, as they measure n-gram overlap between system outputs and reference texts [43, 44]. Despite their simplicity and reproducibility, these metrics are known to exhibit imperfect correlation with human judgments of fluency, coherence, and semantic adequacy [42]. In practice, this implies that high BLEU or ROUGE scores do not necessarily correspond to better user-perceived quality, particularly for open-ended text generation tasks. These metrics tend to favor surface-level similarity and may fail to capture deeper semantic correctness or contextual relevance.

Similarly, perplexity and negative log-likelihood (NLL) measure how well a model fits the data distribution, but they often favor conservative predictions and may penalize diversity in generated text. As a result, models with lower perplexity are not always more useful in practical applications requiring creativity or controllability. Therefore, reliable evaluation of autoencoder-based NLP models requires combining multiple complementary metrics, including intrinsic, extrinsic, and human-centered evaluation, to capture both quantitative performance and qualitative aspects such as coherence, diversity, and controllability.
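The n-gram overlap behind BLEU and ROUGE can be sketched as follows. This is a simplified, single-reference, sentence-level version with uniform weights and no smoothing, written for illustration; published results normally use corpus-level implementations such as sacreBLEU, whose tokenization and smoothing choices change the numbers.

```python
import math
from collections import Counter


def ngram_counts(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def modified_precision(candidate, reference, n):
    """BLEU's clipped n-gram precision: each candidate n-gram is credited at most
    as many times as it appears in the reference."""
    cand, ref = ngram_counts(candidate, n), ngram_counts(reference, n)
    total = sum(cand.values())
    if total == 0:
        return 0.0
    return sum(min(count, ref[g]) for g, count in cand.items()) / total


def sentence_bleu(candidate, reference, max_n=4):
    """Geometric mean of modified 1..max_n-gram precisions times a brevity penalty."""
    precisions = [modified_precision(candidate, reference, n) for n in range(1, max_n + 1)]
    if min(precisions) == 0.0:
        return 0.0  # no smoothing: any zero precision zeroes the score
    c, r = len(candidate), len(reference)
    brevity_penalty = 1.0 if c > r else math.exp(1.0 - r / c)
    return brevity_penalty * math.exp(sum(math.log(p) for p in precisions) / max_n)


def rouge_n_recall(candidate, reference, n=1):
    """ROUGE-N recall: fraction of reference n-grams that also occur in the candidate."""
    cand, ref = ngram_counts(candidate, n), ngram_counts(reference, n)
    total = sum(ref.values())
    if total == 0:
        return 0.0
    return sum(min(count, cand[g]) for g, count in ref.items()) / total


reference = "the cat is on the mat".split()
print(modified_precision("the the the the the the the".split(), reference, 1))  # clipped to 2/7
print(sentence_bleu(reference, reference))
print(rouge_n_recall("the cat sat".split(), reference, 1))
```

The clipping step is what prevents a degenerate output that repeats a common word from scoring well, but, as noted above, nothing in these counts checks meaning.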

Diversity and controllability. Distinct-n and Self-BLEU are commonly used to assess lexical diversity in generated text, capturing complementary aspects of output variability. Attribute classification accuracy is often employed as a proxy for controllability in conditional text generation, indicating how reliably generated outputs exhibit desired attributes.
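Distinct-n is the ratio of unique n-grams to total n-grams across a set of generations, and attribute accuracy is the share of outputs that an external attribute classifier assigns to the requested attribute. The sketch below assumes whitespace-tokenized generations and leaves the attribute classifier abstract (its predicted labels are passed in); Self-BLEU, which scores each generation with BLEU against the other generations as references (higher values indicating less diversity), is omitted for brevity.

```python
def distinct_n(generations, n):
    """Unique n-grams divided by total n-grams, pooled over all generations (higher = more diverse)."""
    total, unique = 0, set()
    for tokens in generations:
        grams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
        total += len(grams)
        unique.update(grams)
    return len(unique) / total if total else 0.0


def attribute_accuracy(predicted_labels, requested_labels):
    """Fraction of generations whose classifier-predicted attribute matches the requested one."""
    matches = sum(p == r for p, r in zip(predicted_labels, requested_labels))
    return matches / len(requested_labels)


outputs = [s.split() for s in ["the food was great", "the food was great", "service was slow"]]
print(distinct_n(outputs, 1), distinct_n(outputs, 2))
print(attribute_accuracy(["pos", "pos", "neg"], ["pos", "neg", "neg"]))
```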

Human evaluation. Human judgments of fluency, coherence, and semantic adequacy remain essential, particularly when models trade likelihood for diversity [42].

8. Comparative Taxonomy and Evaluation Protocols

Autoencoder-based models in NLP can be broadly categorized according to their latent structure, conditioning mechanisms, and reconstruction objectives. In this survey, we adopt the following taxonomy:

Deterministic autoencoders: standard or denoising reconstruction models that learn fixed latent representations, including transformer-based masked language models (MLMs).

Probabilistic autoencoders: variational autoencoders (VAEs) and hierarchical VAEs that introduce stochastic latent variables and probabilistic inference.

Conditional autoencoders: conditional or plug-and-play architectures that incorporate external attributes or control signals to guide generation.

Bottleneck autoencoders: models designed to produce compact sentence or document embeddings by enforcing fixed-size latent bottlenecks optimized for semantic retention.

Figure 6 summarizes this taxonomy, organizing autoencoder families along axes of determinism, probabilistic latent structure, and conditional or hybrid design. This categorization highlights how architectural and objective-level choices shape the trade-offs among scalability, controllability, and representational fidelity across autoencoder variants.
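For the deterministic family, the denoising step of a BERT-style masked language model illustrates how corruption and reconstruction are set up. In the widely used recipe, about 15% of positions are selected for prediction; of those, 80% are replaced by a mask token, 10% by a random token, and 10% are left unchanged. The sketch below approximates the selection with an independent Bernoulli draw per token (rather than sampling an exact count), and the function name and `[MASK]` string are assumptions.

```python
import random


def mlm_corrupt(tokens, vocab, mask_prob=0.15, mask_token="[MASK]", rng=None):
    """Return (corrupted_inputs, labels) for masked language modeling.

    labels[i] holds the original token where a prediction is required and None elsewhere,
    so the reconstruction loss is computed only on the selected positions.
    """
    rng = rng or random.Random(0)
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            labels.append(tok)
            r = rng.random()
            if r < 0.8:
                inputs.append(mask_token)         # 80%: hide the token
            elif r < 0.9:
                inputs.append(rng.choice(vocab))  # 10%: random replacement
            else:
                inputs.append(tok)                # 10%: keep, but still predict it
        else:
            inputs.append(tok)
            labels.append(None)
    return inputs, labels


sentence = "autoencoders learn by reconstructing corrupted text".split()
corrupted, targets = mlm_corrupt(sentence, vocab=sentence, rng=random.Random(1))
print(corrupted)
print(targets)
```

Keeping some selected tokens unchanged means the model cannot assume that every unmasked token is correct, which is the same robustness motivation behind denoising autoencoders.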

Evaluation Dimensions

Evaluating autoencoder-based models requires metrics that reflect the distinct goals of each model family. Across the literature, evaluation protocols can be grouped into four complementary dimensions:

Intrinsic: reconstruction loss, KL divergence, and mutual information, which assess optimization behavior and latent utilization.

Generative: BLEU, ROUGE, perplexity, and diversity metrics, which measure output quality and variability in text generation tasks.

Representation: downstream classification accuracy, semantic textual similarity (STS) correlation, and clustering purity, which evaluate the usefulness of learned representations.

Human-centric: human judgments of fluency, coherence, and controllability, which capture qualitative aspects not reflected by automatic metrics.

Together, these evaluation dimensions provide a unified framework for comparing autoencoder variants with differing objectives, and underscore the need for multi-faceted evaluation when assessing reconstruction-based models in NLP.

Table 8 summarizes practical guidelines for selecting autoencoder-based architectures based on specific research objectives. It organizes model families according to their strengths in areas such as training stability, controllability, long-range coherence, and interpretability. This comparison highlights how different architectural choices entail distinct trade-offs, providing an actionable framework for selecting appropriate models based on task requirements and desired properties.

9. Advantages, Limitations, and Open Problems

Autoencoder-style learning leverages large volumes of unlabeled text through reconstruction-driven objectives, providing an effective form of self-supervision at scale. Denoising and masking strategies improve robustness and generalization, while latent-variable formulations enable controllable and diverse text generation by exposing interpretable dimensions of variation.

Limitations. Despite these strengths, several limitations persist. Latent representations often lack interpretability and reliability, particularly in the presence of powerful autoregressive decoders. Training instability, most notably posterior collapse in VAEs, remains a recurring challenge. In multilingual settings, autoencoder-based models are also sensitive to data imbalance, frequently favoring high-resource languages at the expense of typological diversity.

In addition, some limitations arise from the literature selection process adopted in this review. Although the use of the PRISMA framework and snowballing strategies provides a systematic and transparent approach to identifying relevant studies, it is still possible that certain works were not captured. This may result from variations in terminology across research communities, limitations in the indexing of scientific databases, or the rapid emergence of new publications in the fast-evolving field of natural language processing. Furthermore, the search queries and inclusion criteria were designed to prioritize studies explicitly focused on autoencoder-based architectures and reconstruction-driven learning, potentially leading to the exclusion of related approaches described using different terminology or situated in adjacent research areas.

Open problems. Key open problems emerging from the literature include: (i) stabilizing VAE training when combined with strong pretrained encoders without weakening generative capacity; (ii) designing structured and hierarchical priors that support fine-grained and interpretable control; (iii) achieving robust multilingual alignment that preserves linguistic diversity while mitigating high-resource dominance; and (iv) developing standardized evaluation protocols for controllability and diversity that complement likelihood-based metrics.

Another open challenge concerns the dynamic nature of the field. Given the rapid pace of development in deep learning and NLP, new models and methodological advances continue to emerge, meaning that any literature review reflects the state of the field at a specific point in time. Future work could extend this survey by incorporating broader search strategies, expanding keyword coverage, and leveraging automated literature mining techniques to improve coverage and continuously update the review.

10. Future Directions

Future research should focus on hybrid encoder–decoder architectures that jointly optimize masked reconstruction and autoregressive generation objectives, enabling improved trade-offs between representation learning and generative flexibility. In particular, integrating transformer-based masked language modeling with latent-variable inference mechanisms may enhance controllability while preserving scalability and training stability.

Additionally, the design of structured, hierarchical latent-variable priors represents a promising direction for improving long-range coherence and interpretability in text generation. Future work may explore multi-level latent variables, disentangled representations, and information-theoretic regularization techniques (e.g., mutual information maximization) to improve latent space utilization and mitigate posterior collapse.

In multilingual settings, developing alignment strategies that incorporate typological constraints and data balancing mechanisms remains an open challenge. For example, incorporating morphological priors for morphologically rich languages such as Arabic could improve representation learning by capturing inflectional and derivational patterns more effectively. Similarly, syntax-aware bottleneck representations that encode language-specific word order and grammatical structure may enhance cross-lingual generalization. Techniques such as shared latent spaces, cross-lingual contrastive learning, and adaptive fine-tuning could further improve performance across low-resource languages while preserving linguistic diversity.

Finally, integrating autoencoder-based latent control with large-scale pretrained and instruction-tuned language models, as well as multimodal architectures, offers a promising direction for achieving controllable, efficient, and scalable text generation in real-world applications.

11. Conclusions

This paper presented a comprehensive review of autoencoder-based approaches in natural language processing, examining the evolution of reconstruction-driven learning from classical autoencoders to modern transformer-based architectures. The review provided a structured synthesis of architectural developments, training strategies, and application domains in NLP. The analysis shows that autoencoder models have played a central role in advancing unsupervised and self-supervised learning. Reconstruction-based objectives remain fundamental to representation learning, while variational approaches enable controllable text generation despite challenges such as posterior collapse and training instability. More recently, transformer-based masked autoencoding has redefined reconstruction at scale, achieving strong transfer performance across diverse NLP tasks. Despite these advances, several limitations persist, including issues of interpretability, training stability, multilingual fairness, and inconsistent evaluation practices across studies.

Overall, this review confirms that autoencoder-based methods remain a key component of modern NLP. Future work should focus on improving latent space controllability, enhancing training stability, integrating autoencoder objectives with large-scale pretrained models, and developing standardized evaluation frameworks.