Predicting Cybersickness in Virtual Reality from Head–Torso Kinematics Using a Hybrid Convolutional–Recurrent Network Model

Abstract

1. Introduction

2. Materials and Methods

3. Methodology

3.1. Data Preprocessing

3.2. Annotation of MS Data

3.3. Model Architecture for MS Prediction

3.3.1. C-RNN Architecture

3.3.2. Model Training and Evaluation

4. Results

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Files | Subjects | Data Structure | Duration | Sampling Rate |
|---|---|---|---|---|
| Movement data (40 .txt files) | 40 subjects; 1 file per subject | 6 DOF (X, Y, Z, A, E, R); 3 receivers | 5–40 min | 60 Hz |
| SSQ raw data.xlsx | 1 row per subject | Gender, condition (sick/well), pre-SSQ and post-SSQ | – | – |
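To give a sense of scale for the movement recordings summarized in the table above, the following minimal sketch converts the listed durations and the 60 Hz rate into sample counts. It assumes that each of the 3 receivers records all 6 DOF; the table does not state this explicitly, so the channel count is an assumption, and the constant and function names are illustrative only.

```python
# Rough size estimate for one subject's movement recording.
# Assumption: every receiver records all 6 DOF (X, Y, Z, A, E, R).

SAMPLE_RATE_HZ = 60      # from the dataset table
N_RECEIVERS = 3          # from the dataset table
DOF_PER_RECEIVER = 6     # X, Y, Z, A, E, R


def samples_per_channel(duration_min: float, rate_hz: int = SAMPLE_RATE_HZ) -> int:
    """Number of samples one channel holds for a recording of the given length."""
    return int(round(duration_min * 60 * rate_hz))


# Total channels per subject under the assumption stated above.
n_channels = N_RECEIVERS * DOF_PER_RECEIVER

# Shortest and longest recordings listed in the table (5 and 40 minutes).
shortest = samples_per_channel(5)
longest = samples_per_channel(40)

print(f"channels per subject: {n_channels}")
print(f"samples per channel: {shortest} to {longest}")
```

Because recording length varies by a factor of eight across subjects, any model that consumes this data would need fixed-length windows or some other length normalization; the table alone does not say which approach was used.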

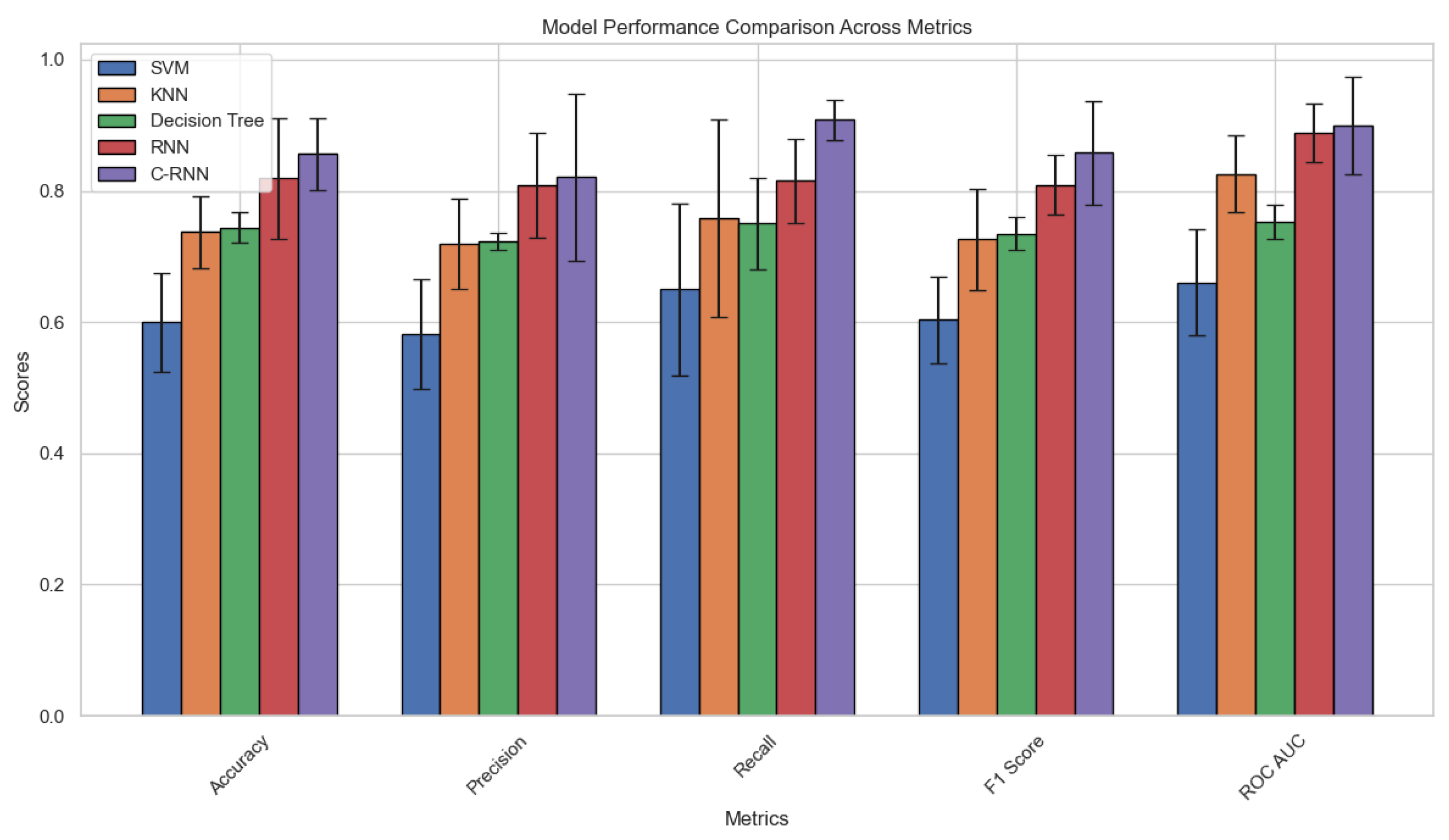

| Algorithm | Precision | Recall | F-Score | ROC AUC | Accuracy |
|---|---|---|---|---|---|
| SVM | 58% | 65% | 60% | 66% | 60% |
| KNN | 72% | 76% | 73% | 82.5% | 73.75% |
| DT | 72% | 75% | 73.5% | 75% | 74.38% |
| RNN | 80% | 78% | 79% | 88.5% | 81.88% |
| C-RNN | 83% | 89% | 86% | 90.01% | 85.63% |
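As a reading aid for the results table, the sketch below recomputes the F-score as the harmonic mean of the reported precision and recall. This is the standard F1 definition and is assumed here; the table does not say how the F-score column was obtained (for example, whether it was averaged over classes or folds), so small differences from the reported values are expected. The function and variable names are illustrative.

```python
# Recompute F1 from the rounded precision and recall values in the table.
# Assumption: F-score means the standard F1 (harmonic mean of precision and recall).

def f_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; returns 0.0 if both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F-score) copied from the results table.
reported = {
    "SVM": (0.58, 0.65, 0.60),
    "KNN": (0.72, 0.76, 0.73),
    "DT": (0.72, 0.75, 0.735),
    "RNN": (0.80, 0.78, 0.79),
    "C-RNN": (0.83, 0.89, 0.86),
}

for name, (p, r, f_reported) in reported.items():
    f_recomputed = f_score(p, r)
    gap = abs(f_recomputed - f_reported)
    print(f"{name}: recomputed {f_recomputed:.3f}, reported {f_reported:.3f}, gap {gap:.3f}")
```

Under this assumption the recomputed values agree with the reported column to within about 1.5 percentage points for every row, which is consistent with the precision and recall entries having been rounded before publication.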

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Hag, A.; Asadi, H.; Qazani, M.R.C.; Hoang, T.; Kulkarni, A.; Greuter, S.; Nahavandi, S. Predicting Cybersickness in Virtual Reality from Head–Torso Kinematics Using a Hybrid Convolutional–Recurrent Network Model. Computers 2026, 15, 193. https://doi.org/10.3390/computers15030193
