3.2. Abnormal Social Interaction Modeling

We focus on how to model the social interaction part, which is used to simulate risk perception.

DVA Multivariate Gaussian Space. Previous studies [31,49] treat the social interactions between neighbors and ego pedestrian i as social meta-components. In this work, we construct the meta-components from three quantities: relative distance, absolute velocity, and relative angle (DVA).

Relative Distance. Neighbor agents exert different influences on the target agent depending on their current distance from it. Formally, this quantity is defined for any neighbor j of ego pedestrian i.

Absolute Velocity. High-velocity neighbors pose a greater threat, but static agents can also matter, especially when they are close by, because they may prompt the target pedestrian to take proactive avoidance measures. Formally, this quantity is defined for any neighbor j of ego pedestrian i.

Relative Angle. Pedestrians often use the relative orientation angle to judge their surroundings (e.g., whether there are crowds to the north). Formally, this quantity is defined for any neighbor j of ego pedestrian i.

We project the meta-components into a multivariate Gaussian space to reflect their uncertainty, where

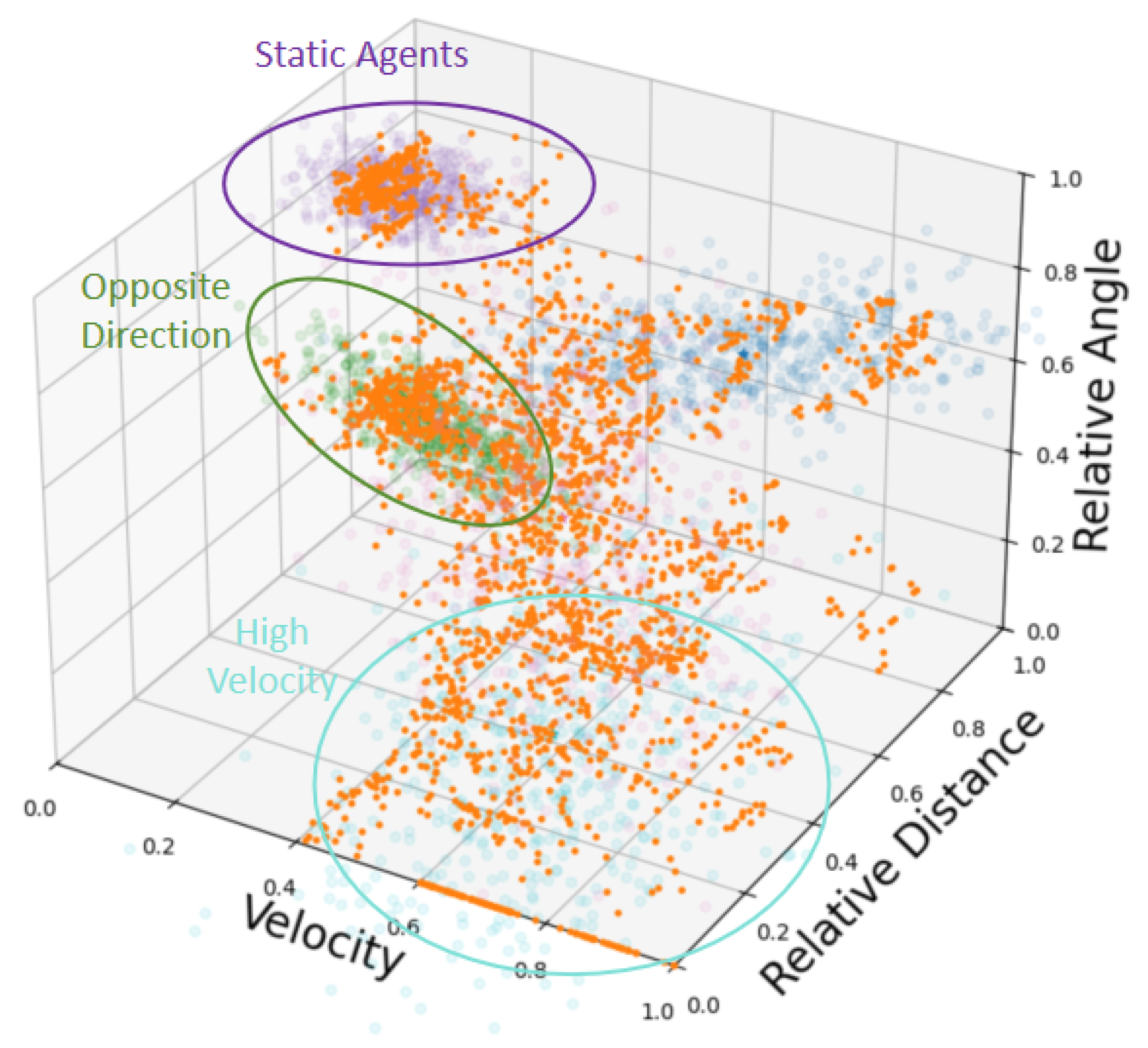

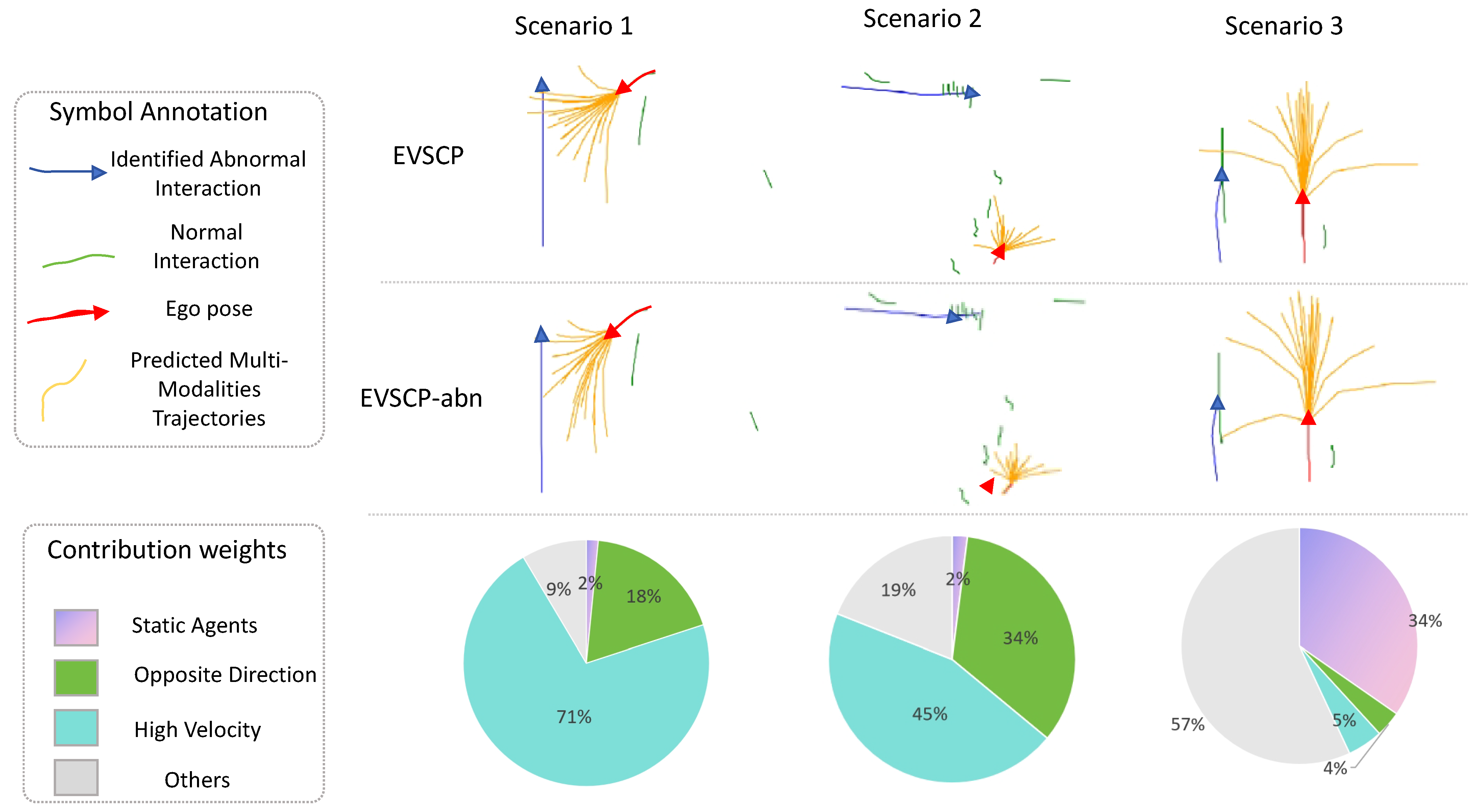
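As an illustration of the three DVA quantities, the following minimal sketch computes one (distance, velocity, angle) triple per neighbor. The function name `dva_meta_components` is hypothetical, and the angle convention (direction of the ego-to-neighbor offset in the world frame) is an assumption rather than the definition used in the equations above; the projection into Gaussian space is not covered here.

```python
import numpy as np

def dva_meta_components(ego_pos, nbr_pos, nbr_vel):
    """Return one (distance, speed, angle) row per neighbor.

    ego_pos: (2,) ego position; nbr_pos, nbr_vel: (J, 2) neighbor positions and velocities.
    """
    offset = nbr_pos - ego_pos                        # vector from ego i to each neighbor j
    distance = np.linalg.norm(offset, axis=1)         # relative distance
    speed = np.linalg.norm(nbr_vel, axis=1)           # absolute velocity (magnitude)
    angle = np.arctan2(offset[:, 1], offset[:, 0])    # relative angle in [-pi, pi] (assumed convention)
    return np.stack([distance, speed, angle], axis=1)

ego = np.array([0.0, 0.0])
neighbors = np.array([[3.0, 4.0], [0.0, 2.0]])
velocities = np.array([[1.0, 0.0], [0.0, 0.0]])     # the second neighbor is static
dva = dva_meta_components(ego, neighbors, velocities)
```

Each row of `dva` is one social meta-component; a static but nearby neighbor still yields a valid row with zero velocity.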

Abnormal Social Meta-Components. Abnormal social behaviors at the scene level share certain commonalities (e.g., in congested environments, agents may change direction to accelerate overtaking, while high-velocity agents prioritize avoiding static neighbors on their forward paths). However, these interactions in DVA space may be long-tailed and shaped by the combined effects of multiple independent variables. In the previous example, ‘high-velocity’ corresponds to absolute velocity and ‘on the forward path’ corresponds to relative angle, and the two factors interact to create the long-tail scenario. To deal with these long-tail social behaviors, we construct a Gaussian mixture model (GMM) to extract abnormal interactions. Inspired by [31], our abnormal interactions represent another spatial interactive context, so the abnormal interaction sequence can be handled along with the object trajectory for easy alignment and concatenation. In detail, we first fit the GMM to all social meta-components of the pedestrians in the training set; its optimization objective L is to maximize the likelihood function in Equation (6) through the EM algorithm.

Here, each mixture term is the probability density function of a single trivariate Gaussian component representing the joint distribution of relative distance, absolute velocity, and relative angle.

We compute posterior probabilities for each social meta-component in the training set, then extract abnormal interactions by filtering out the normal ones according to a threshold that defines abnormal social interactions. After that, the GMM is fitted a second time, now to the abnormal set, initialized with the centers of the normal social meta-components from the first fitting stage, via Equation (7).

Each resulting component is named the n-th abnormal interaction base after the second fit. Figure 3 shows the workflow of this two-stage GMM fitting procedure, which can be conducted offline.

When training or performing inference online, an indicator function judges whether pedestrian j is an abnormal neighbor to which ego pedestrian i should pay attention. It relies on a filtering threshold for abnormal social interactions that is used during both training and evaluation.

Consider the abnormal neighbor set of ego pedestrian i. For each abnormal agent j in this set, its social meta-component with respect to i is decomposed and projected onto the abnormal social component bases generated during training via Equation (9).

Under the assumption of mutual independence among abnormal interaction bases, we aggregate abnormal neighbors according to their projection lengths onto these bases as in Equation (10), where n indexes the n-th abnormal social interaction base. Note that we adopt the reparameterization trick [50] to generate the n-th component of the aggregated feature here.
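The reparameterization trick mentioned above can be sketched as follows. This is a generic illustration of sampling from a Gaussian as a deterministic function of its parameters plus independent noise, not the exact form of Equation (10); the function name `reparameterized_sample` and the example mean and covariance are hypothetical.

```python
import numpy as np

def reparameterized_sample(mean, cov, rng):
    """Draw z = mean + L @ eps with eps ~ N(0, I), where L @ L.T == cov."""
    chol = np.linalg.cholesky(cov)               # lower-triangular factor L
    eps = rng.standard_normal(mean.shape[0])     # noise independent of the parameters
    return mean + chol @ eps

rng = np.random.default_rng(0)
mean = np.array([2.0, 1.0, 0.5])                 # illustrative (distance, velocity, angle) center
cov = np.diag([0.25, 0.04, 0.01])                # illustrative covariance of one base
samples = np.stack([reparameterized_sample(mean, cov, rng) for _ in range(5000)])
```

Because the randomness enters only through `eps`, gradients can flow to `mean` and `cov` when the same expression is written in an automatic differentiation framework.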

Our serialized abnormal social uncertainty feature is defined in Equation (11), which uses an MLP layer with the tanh activation function. Note that if an ego agent has no abnormal interaction component, we pad its abnormal social feature with zeros.

The abnormal social interaction feature is concatenated (∥) with the past-trajectory feature of the ego agent produced by the backbone encoder. Then, a temporal attention module combined with a list of MLP modules forms our final social interaction feature.

3.3. Rare Intention Modeling

In this part, we focus on modeling the intention feature, which is used to simulate goal formation, through a novel prototypical contrastive learning (PCL) method that addresses rare intentions. We initialize a set of learnable waypoint slots whose length equals the number of modalities M. Another multi-head attention layer, with its own query, key, and value inputs, encodes the inputs of PCL to form the intention feature via Equation (13).

We adopt a two-stage GMM fitting paradigm in which the first stage captures the global goal distribution and the second stage focuses on long-tail intentions. In detail, we regard the last points of the future trajectories in the training set as goal intentions and first fit a GMM to them. The goals with the lowest log-likelihood scores under this first GMM, selected by a filtering ratio, are defined as rare intentions. We then fit a second GMM to these rare intentions and combine the two GMMs into a new, larger GMM as in Equation (14), using the optimization objective in Equation (15), for contrastive supervision later. Consequently, the first group of Gaussian components in the combined GMM is generated by all endpoints e, while the second group is generated by the long-tail endpoints.
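The two-stage fitting described above can be sketched with a plain EM implementation. This is a minimal illustration under stated assumptions, not the authors' implementation: the helper names (`component_log_probs`, `fit_gmm`, `two_stage_intention_gmm`), the component counts, the rare ratio, and the equal rescaling of the two sets of mixing weights are all hypothetical choices, and Equations (14) and (15) may combine the mixtures differently.

```python
import numpy as np

def component_log_probs(x, weights, means, covs):
    """Log of weight_k * N(x | mean_k, cov_k) for every sample and component."""
    n, d = x.shape
    out = np.empty((n, len(weights)))
    for k, (w, mu, cov) in enumerate(zip(weights, means, covs)):
        diff = x - mu
        maha = np.sum(diff * np.linalg.solve(cov, diff.T).T, axis=1)
        _, logdet = np.linalg.slogdet(cov)
        out[:, k] = np.log(w) - 0.5 * (d * np.log(2 * np.pi) + logdet + maha)
    return out

def fit_gmm(x, n_components, n_iter=50, reg=1e-6, seed=0):
    """Plain EM for a full-covariance GMM (illustrative, not tuned)."""
    rng = np.random.default_rng(seed)
    n, d = x.shape
    means = x[rng.choice(n, size=n_components, replace=False)].copy()
    covs = np.stack([np.cov(x.T) + reg * np.eye(d)] * n_components)
    weights = np.full(n_components, 1.0 / n_components)
    for _ in range(n_iter):
        log_p = component_log_probs(x, weights, means, covs)            # E-step
        resp = np.exp(log_p - np.logaddexp.reduce(log_p, axis=1, keepdims=True))
        nk = resp.sum(axis=0) + 1e-10                                   # M-step
        weights = nk / nk.sum()
        means = (resp.T @ x) / nk[:, None]
        for k in range(n_components):
            diff = x - means[k]
            covs[k] = (resp[:, k, None] * diff).T @ diff / nk[k] + reg * np.eye(d)
    return weights, means, covs

def two_stage_intention_gmm(endpoints, n_global, n_rare, rare_ratio):
    g1 = fit_gmm(endpoints, n_global)                                   # stage 1: all endpoints
    log_lik = np.logaddexp.reduce(component_log_probs(endpoints, *g1), axis=1)
    n_keep = max(n_rare + 1, int(round(rare_ratio * len(endpoints))))
    rare = endpoints[np.argsort(log_lik)[:n_keep]]                      # lowest log-likelihood goals
    g2 = fit_gmm(rare, n_rare, seed=1)                                  # stage 2: rare endpoints only
    weights = np.concatenate([g1[0], g2[0]]) / 2.0                      # assumed equal rescaling
    return (weights, np.concatenate([g1[1], g2[1]]), np.concatenate([g1[2], g2[2]])), rare

rng = np.random.default_rng(0)
endpoints = np.concatenate([rng.normal(0.0, 1.0, (300, 2)), rng.normal(8.0, 0.5, (15, 2))])
gmm, rare = two_stage_intention_gmm(endpoints, n_global=3, n_rare=2, rare_ratio=0.05)
```

In the combined mixture, the first `n_global` components come from all endpoints and the remaining `n_rare` components come from the long-tail endpoints only, which mirrors the grouping described in the text.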

To predict the intention-clustered label of sample i, the combined GMM is used to find the intention Gaussian component with the maximum posterior probability via Equation (16).
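The maximum-posterior assignment can be illustrated with a short sketch. The function name `assign_intention_labels` and the toy mixture parameters are hypothetical; the only fact relied upon is that the posterior of a component is proportional to its mixing weight times its Gaussian density.

```python
import numpy as np

def assign_intention_labels(endpoints, weights, means, covs):
    """Return, for each 2D endpoint, the index of the component with the highest posterior."""
    n, d = endpoints.shape
    log_joint = np.empty((n, len(weights)))
    for k in range(len(weights)):
        diff = endpoints - means[k]
        maha = np.sum(diff * np.linalg.solve(covs[k], diff.T).T, axis=1)
        _, logdet = np.linalg.slogdet(covs[k])
        log_joint[:, k] = np.log(weights[k]) - 0.5 * (d * np.log(2 * np.pi) + logdet + maha)
    # The posterior is the joint divided by a per-sample constant, so the argmax is unchanged.
    return np.argmax(log_joint, axis=1)

weights = np.array([0.7, 0.3])
means = np.array([[0.0, 0.0], [5.0, 5.0]])
covs = np.stack([np.eye(2), np.eye(2)])
labels = assign_intention_labels(np.array([[0.2, -0.1], [4.8, 5.3]]), weights, means, covs)
```

Working in log space avoids underflow for endpoints that lie far from every component.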

Having obtained the clustered labels, we leverage them in our contrastive learning framework to supervise the learning of the intention features defined in Equation (13). Notably, a final MLP prevents conflicts between the motion forecasting loss and the contrastive learning loss: the intention feature is passed through this MLP as the last layer for prototypical contrastive learning, but not for intention prediction. We refer to the list of modules described above as the PCL encoder.

Traditional unsupervised contrastive learning methods such as MoCo [39] receive feature inputs without gradients. To align with them, we pretrain the model with only the Winner-Takes-All (WTA) ADE loss on trajectory points, computed as in Equation (17), which compares the predicted trajectory point of each modality at timestep t, output by our frequency-based decoder D (detailed in Section 3.4), with the ground truth.
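A minimal sketch of a WTA ADE computation follows. It assumes Euclidean per-step errors averaged over the future horizon, which is the usual definition of ADE; the function name `wta_ade` and the toy arrays are hypothetical, and Equation (17) may differ in normalization.

```python
import numpy as np

def wta_ade(pred, gt):
    """pred: (M, T, 2) multi-modal predictions; gt: (T, 2) ground truth.

    Returns the index of the best mode and its ADE.
    """
    per_step_error = np.linalg.norm(pred - gt[None], axis=-1)   # (M, T) Euclidean errors
    ade_per_mode = per_step_error.mean(axis=1)                   # (M,) average over timesteps
    best = int(np.argmin(ade_per_mode))                          # winner among the M modes
    return best, float(ade_per_mode[best])

gt = np.array([[1.0, 0.0], [2.0, 0.0], [3.0, 0.0]])
pred = np.stack([gt + 1.0, gt + np.array([0.0, 0.5]), gt - 2.0])
best_mode, loss = wta_ade(pred, gt)
```

In an automatic differentiation framework, only the loss of the selected mode would be backpropagated, so the other modes remain free to cover different futures.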

The WTA strategy selects, among the M slots, the one with the best ADE, and gradients are backpropagated exclusively through that slot to preserve diversity. We then freeze (*) all parameters of the pretrained backbone prediction encoder shown in Figure 2a. Because the input of PCL (which is also the output of the frozen encoder) then receives no gradient, this eliminates the need to design a dual momentum encoder duplicating the parameters of the preceding abnormal social interaction modeling when we train the PCL.

Instead of applying the contrastive loss directly to the past trajectory context, we apply our prototypical contrastive learning to the multi-modal slots, selecting the slot with the WTA strategy in advance to maintain prediction diversity. Consequently, feature clustering and updating must be performed per iteration rather than per epoch, because the slot with the best ADE loss defined in Equation (17), which is the one used in the loss computation, is not known beforehand and differs across samples. Since the clustering operates solely on the x and y dimensions of the intention, the computation is highly efficient.

We now define our prototypical contrastive learning loss (PCL loss). In Equation (21), it consists of an instance-wise term and an instance-prototype term. The first term brings the sample features within a class closer, while the second term maximizes inter-cluster separation. Standard PCL [39,40] assigns a sample to a single discrete class label, whereas our goal intention follows a continuous distribution. For each sample i, we therefore use a finite set of K discrete components to mimic this continuity. As a result, our approach handles continuous intention distributions through K-nearest GMM component allocation in the PCL loss, simulating distributional continuity with multiple discrete elements rather than a single component. We verify the effectiveness of this modeling in our experiments. In detail, given an endpoint intention, we first find only the component with the highest posterior log-likelihood via Equation (16).

For efficient computation, we look up the other components directly from this most likely component. In detail, we calculate the pairwise Kullback–Leibler (KL) divergence between every two multivariate Gaussian components of the intention GMM.

For each intention component, we compute the indices of its K nearest Gaussian components (including itself) and look up the corresponding KL divergences in the pairwise table; these are used to construct continuous labels and to calculate our continuous contrastive loss later.
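The pairwise KL table and the K-nearest lookup can be sketched with the closed-form KL divergence between two multivariate Gaussians. The function names `gaussian_kl` and `knn_components` are hypothetical, and the direction of the divergence (from each component to its candidates) is an assumption, since KL is not symmetric in general.

```python
import numpy as np

def gaussian_kl(m0, s0, m1, s1):
    """Closed-form KL( N(m0, s0) || N(m1, s1) )."""
    d = m0.shape[0]
    s1_inv = np.linalg.inv(s1)
    diff = m1 - m0
    _, logdet0 = np.linalg.slogdet(s0)
    _, logdet1 = np.linalg.slogdet(s1)
    return 0.5 * (np.trace(s1_inv @ s0) + diff @ s1_inv @ diff - d + logdet1 - logdet0)

def knn_components(means, covs, k):
    """Return indices and KL values of the k nearest components (self included) per component."""
    n = len(means)
    kl = np.array([[gaussian_kl(means[a], covs[a], means[b], covs[b]) for b in range(n)]
                   for a in range(n)])
    idx = np.argsort(kl, axis=1, kind="stable")[:, :k]       # nearest first; self has KL = 0
    return idx, np.take_along_axis(kl, idx, axis=1)

means = np.array([[0.0, 0.0], [1.0, 0.0], [5.0, 5.0]])
covs = np.stack([np.eye(2)] * 3)
knn_idx, knn_kl = knn_components(means, covs, k=2)
```

Because the table depends only on the fitted GMM, it can be computed once offline and indexed by the label of each sample during training.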

Using the intention embeddings of sample i (the intention feature defined in Equation (13)), we construct positive and negative sample feature pairs. Notably, to simulate the continuity of endpoints in the two-dimensional (x, y) space, the positive set adopts a hierarchical structure containing all samples that belong to the K nearest components of the component assigned to sample i; its k-th level contains arbitrary samples belonging to the k-th nearest component of sample i. We look up the KL divergences between the component assigned to sample i and each of its K nearest components. Equation (18) gives our instance-wise term, which ranges over arbitrary samples in the same batch as i, where r denotes the batch size. A softmax function assigns weights based on the KL divergence between Gaussian components, and a temperature coefficient is applied.

The prototype features are updated for each batch according to the samples' maximum-likelihood intention-clustered labels via Equation (19). A momentum coefficient is applied when the batch contains at least one sample with the given label, and an indicator function judges whether the endpoint of sample j belongs to the n-th intention Gaussian component.
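A momentum update of this kind can be sketched as an exponential moving average over the features assigned to each cluster. The exact form of Equation (19) is not reproduced here; using the batch mean of the assigned features and the name `update_prototypes` are assumptions made for illustration.

```python
import numpy as np

def update_prototypes(prototypes, features, labels, momentum):
    """prototypes: (N, D); features: (B, D); labels: (B,) component index per sample."""
    updated = prototypes.copy()
    for n in np.unique(labels):                      # only clusters present in this batch
        batch_mean = features[labels == n].mean(axis=0)
        updated[n] = momentum * prototypes[n] + (1.0 - momentum) * batch_mean
    return updated

prototypes = np.zeros((3, 2))
features = np.array([[1.0, 1.0], [3.0, 1.0], [0.0, 4.0]])
labels = np.array([0, 0, 2])
new_prototypes = update_prototypes(prototypes, features, labels, momentum=0.9)
```

Prototypes of clusters with no sample in the batch (cluster 1 in this example) are left unchanged.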

Equation (20) gives our instance-prototype term, which involves the prototype of the cluster to which the k-th nearest GMM component of sample i belongs and the prototype of an arbitrary cluster j. In summary, our approach refines intention modeling by specifically targeting challenging edge-case scenarios through the training procedure above. Algorithm 1 shows the whole process of our rare-intention prototypical contrastive learning.

Algorithm 1 Intention Prototypical Contrastive Learning

Input: KL divergences of the K-nearest intention GMM components, past trajectories X, trajectories predicted in advance, ground-truth future trajectories, past timesteps, future timesteps, cluster centroid features C, momentum coefficient.

Parameter: intention GMM, frozen backbone encoder, PCL encoder, momentum PCL encoder.

- 1: Let …
- 2: C ← 0.
- 3: while not MaxEpoch do
  - 4: for x in DataLoader(X) do
    - 5: e = … {Regard the last point of the future trajectory e as the intention.}
    - 6: Let … {Intention pseudo-labels as in Equation (16).}
    - 7: …
    - 8: …
    - 9: … {Look up the min-ADE index in advance as in Equation (17).}
    - 10: Update prototype features C according to Equation (19).
    - 11: Calculate the PCL loss as in Equations (18), (20), and (21).
    - 12: …
    - 13: Momentum update of the momentum PCL encoder based on the PCL encoder.
  - 14: end for
- 15: end while

3.4. Frequency-Sensitive Decoder Combining Interactions and Intentions

In this part, we propose a novel frequency-based decoder D that integrates the social interaction part introduced in Section 3.2 and the intention part introduced in Section 3.3 to obtain the final motions to execute. The dimensions of these two features depend on the number of past timesteps and on M, the number of modalities. As in Equation (22), we regress endpoints directly with an FC-layer intention decoder to obtain the goal endpoints.

The key is to adopt an endpoint-driven method: the remaining trajectory points are completed conditionally on the terminal location, in the frequency domain, through the Discrete Fourier Transform (DFT). By decomposing a signal into its constituent sinusoids, the DFT allows deep learning models to identify and leverage repetitive patterns and structures within the data that are often obscured in the original time domain [51]. To interpolate intermediate trajectory points, we introduce a gate-weighting scheme, as shown in Equation (23).

Here, two MLPs process the social part s and the intention part i, respectively, and a further MLP with a softmax activation function normalizes the weights between the two parts. The resulting social and intention weights are gathered into a gated feature, which, after passing through an FC layer, generates the Alternating-Current Component (ACC) of the velocity in the frequency domain. The ACC, generated via Equation (24), comprises all Fourier components except the first one, the Direct-Current Component (DCC).

Because the real part of the DCC after the Discrete Fourier Transform (DFT) equals the sum of all points in the time series [52], we pair the goal endpoints e with a zero imaginary part to form the DCC, which can be regarded as the accumulation of the instantaneous velocities over all future steps. Finally, we concatenate the DCC with the ACC and employ an inverse Discrete Fourier Transform (iDFT) layer to reconstruct future velocity profiles whose cumulative sum is exactly the DCC, that is, the endpoint e, so that we obtain an accurate predicted trajectory, as shown in Equation (25).
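The endpoint-preserving property of this reconstruction can be checked numerically. The sketch below is an illustration under stated assumptions, not the authors' decoder: it uses NumPy's real-input inverse FFT, assumes an even future horizon, takes random coefficients in place of the learned ACC, and expresses the endpoint as a displacement from a hypothetical last observed position `last_pos`.

```python
import numpy as np

def decode_trajectory(endpoint, acc, last_pos):
    """endpoint: (2,) goal position; acc: (T // 2, 2) complex non-DC coefficients;
    last_pos: (2,) last observed position. Returns (T, 2) future positions."""
    t_future = 2 * acc.shape[0]                        # assumes an even horizon T
    dcc = (endpoint - last_pos).astype(complex)        # real part = total displacement, imaginary part = 0
    spectrum = np.concatenate([dcc[None], acc], axis=0)
    velocity = np.fft.irfft(spectrum, n=t_future, axis=0)   # per-step velocities, sum equals the DCC
    return last_pos + np.cumsum(velocity, axis=0)      # integrate velocities into positions

rng = np.random.default_rng(0)
acc = rng.normal(size=(6, 2)) + 1j * rng.normal(size=(6, 2))   # stand-in for the learned ACC
endpoint = np.array([4.0, -1.5])
last_pos = np.array([1.0, 0.5])
trajectory = decode_trajectory(endpoint, acc, last_pos)
```

Whatever values the ACC takes, the last row of `trajectory` coincides with `endpoint`, because the zero-frequency coefficient alone fixes the sum of the reconstructed velocities; the ACC only shapes the path taken to reach it.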