Trust-Aware Federated Graph Learning for Secure and Energy-Efficient IoT Ecosystems

Abstract

1. Introduction

- We develop a trust-aware federated graph learning model that incorporates device reputation, dynamic trust updating, and malicious-node mitigation within an IoT FL context.

- We introduce a graph-pruning and adaptive communication scheduling layer to minimize energy consumption and latency while maintaining model accuracy.

- We validate the proposed framework on realistic IoT datasets and scenarios, demonstrating improvements in detection accuracy, resilience to adversarial behavior, and reductions in energy/communication overhead relative to baseline FL and GNN models.

2. Background and Related Work

2.1. Federated Learning in IoT

2.2. Graph Neural Networks for Distributed Intelligence

2.3. Trust and Security in Federated Systems

2.4. Energy Efficiency in Edge/Federated AI for IoT

- (1) Federated learning for distributed IoT intelligence;

- (2) Graph neural networks as topology-aware learning frameworks;

- (3) Trust and security mechanisms in federated environments; and

- (4) Energy efficiency for sustainable edge computing.

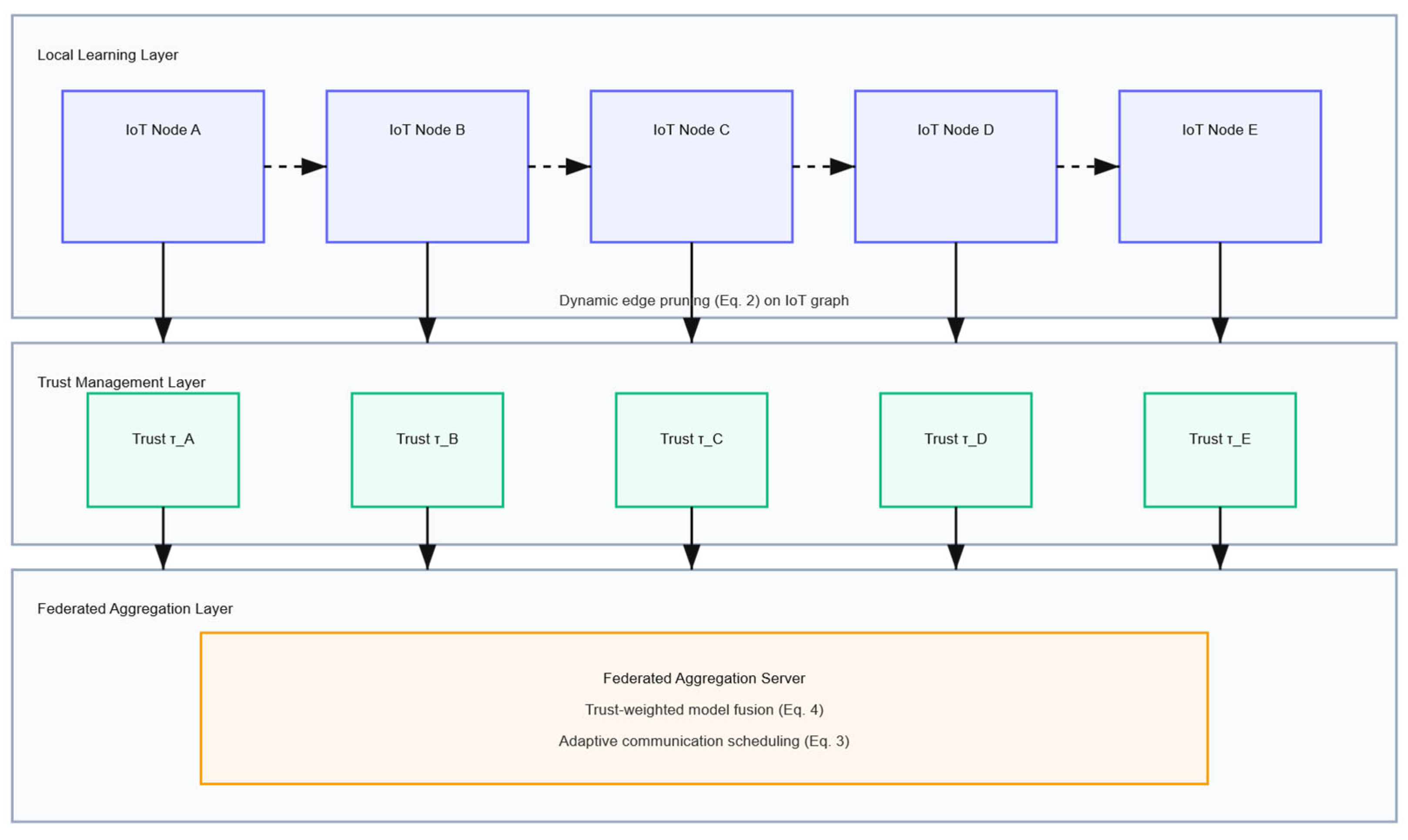

3. Proposed Framework: Trust-FedGNN

3.1. System Overview

- (1) A graph-based representation of the IoT network, where each device is a node and edges encode communication or data-correlation strength.

- (2) A trust-adaptive aggregation layer that assigns dynamic reputation weights to nodes during model fusion, discouraging contributions from potentially malicious or unreliable participants.
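The graph representation in item (1) can be illustrated with a minimal sketch. It assumes each device is summarized by a small feature vector and that edges link a node to its k most similar peers under cosine similarity (the similarity metric listed in the experimental settings). The function names `cosine` and `knn_graph` and the toy feature values are hypothetical, not taken from the paper.

```python
import math
from typing import Dict, List, Tuple


def cosine(u: List[float], v: List[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu > 0 and nv > 0 else 0.0


def knn_graph(features: List[List[float]], k: int) -> Dict[Tuple[int, int], float]:
    """Undirected edges {(i, j): similarity}, i < j, linking each node to its k most similar peers."""
    edges: Dict[Tuple[int, int], float] = {}
    n = len(features)
    for i in range(n):
        sims = [(cosine(features[i], features[j]), j) for j in range(n) if j != i]
        sims.sort(reverse=True)  # most similar peers first
        for s, j in sims[:k]:
            edges[(min(i, j), max(i, j))] = s
    return edges


# Four hypothetical devices with 2-D feature summaries (illustrative values only).
features = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
graph = knn_graph(features, k=1)
```

With k = 1, the two pairs of near-parallel feature vectors end up connected, which is the behavior one would expect from a similarity-driven edge definition.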

3.2. Trust Modeling and Reputation Update

3.2.1. Reliability Trust

3.2.2. Energy-Aware Availability Modeling

3.2.3. Discussion

3.2.4. Trust Computation Details

3.3. Dynamic Edge Pruning for Energy Efficiency

3.4. Adaptive Communication Scheduling

3.5. Algorithmic Outline

- Initialize the global model and the trust vector.

- For each round t:

- a. Each selected node i updates local parameters on its data.

- b. Compute the trust update.

- c. Perform dynamic graph pruning.

- d. The aggregator executes trust-weighted fusion.

- e. Update the communication schedule based on residual energy.

- Repeat until convergence.
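Step d of the outline can be sketched as a weighted average of client parameter vectors. The fusion formula itself is not reproduced in this text, so the sketch assumes the simplest form: each client's weight is its trust score divided by the sum of trust scores of the participating clients. The name `trust_weighted_average` and all numeric values are hypothetical.

```python
from typing import Dict, List


def trust_weighted_average(updates: Dict[str, List[float]],
                           trust: Dict[str, float]) -> List[float]:
    """Fuse client parameter vectors, weighting each by its normalized trust score."""
    total = sum(trust[c] for c in updates)
    if total <= 0:
        raise ValueError("at least one participating client needs positive trust")
    dim = len(next(iter(updates.values())))
    fused = [0.0] * dim
    for client, vec in updates.items():
        w = trust[client] / total  # assumption: weights proportional to trust
        for j, v in enumerate(vec):
            fused[j] += w * v
    return fused


# Client "c" mimics a scaled (x5) poisoned update and carries a low trust score.
updates = {"a": [1.0, 1.0], "b": [1.0, 1.0], "c": [5.0, 5.0]}
trust = {"a": 0.9, "b": 0.9, "c": 0.2}
fused = trust_weighted_average(updates, trust)
plain_mean = [sum(col) / len(updates) for col in zip(*updates.values())]
```

In this toy case the fused parameters sit closer to the benign value than the unweighted mean does, which is the intended effect of down-weighting low-trust contributors.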

Algorithm 1. Trust-FedGNN Training Procedure.

Input: initial global model; initial trust vector; client set; total communication rounds T; trust smoothing factor α; reliability weights; energy thresholds.

Output: final global model.

Initialization: each client initializes its local model and trust score.

for t = 1 to T do

- 1. Client Selection (Energy-Aware Scheduling): The server selects a subset of available clients based on the participation probability, which jointly accounts for local loss decay and residual energy availability, as defined in Section 3.4.

- 2. Local Graph Construction and Pruning (Client-Side): Each selected client constructs a local interaction graph based on feature similarity or communication history, then applies dynamic edge pruning to obtain a sparse graph, removing edges with low relevance under the current energy budget.

- 3. Local Model Update: Each client trains a local GNN model on the pruned graph and computes its local model update.

- 4. Reliability Evaluation: The instantaneous reliability of each selected client is evaluated.

- 5. Trust Update: The reliability trust of each participating client is updated.

- 6. Trust-Weighted Aggregation: The server updates the global model using trust-weighted aggregation.

- 7. Energy and Availability Update: Each client updates its residual energy estimate and availability score, which are subsequently used to compute participation probabilities in future rounds.

end for
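The trust update in step 5 is described elsewhere in the paper as an EMA (exponential moving average) governed by the smoothing factor α. A minimal sketch follows, assuming the convention that α weights the previous trust value and (1 − α) weights the new reliability observation. The paper's exact convention is not visible in this text, and α = 0.8 and the reliability values are illustrative only.

```python
def ema_trust(prev_trust: float, reliability: float, alpha: float) -> float:
    """EMA update: alpha keeps history, (1 - alpha) admits the new observation (assumed convention)."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    return alpha * prev_trust + (1.0 - alpha) * reliability


# A hypothetical client that starts fully trusted but keeps submitting low-reliability updates.
trust = 1.0
history = []
for reliability in [0.1, 0.1, 0.1]:
    trust = ema_trust(trust, reliability, alpha=0.8)
    history.append(trust)
```

Under this convention trust decays gradually rather than collapsing after a single bad round, so a larger α makes the system slower to penalize but also slower to forgive.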

3.6. Summary

4. Experimental Evaluation

4.1. Datasets and Experimental Setup

- TON_IoT (Telemetry-Oriented Network IoT Dataset)—a comprehensive telemetry and network dataset from the University of New South Wales, containing heterogeneous IoT, telemetry, and network traces for intrusion detection [22].

- IoT-23—a curated set of labeled benign and malicious traffic captures collected from multiple IoT devices, enabling malware detection and traffic classification [23].

- OpenFed-IoT—a synthetic yet realistic dataset for evaluating heterogeneous device participation and non-IID data distribution in federated learning [24].

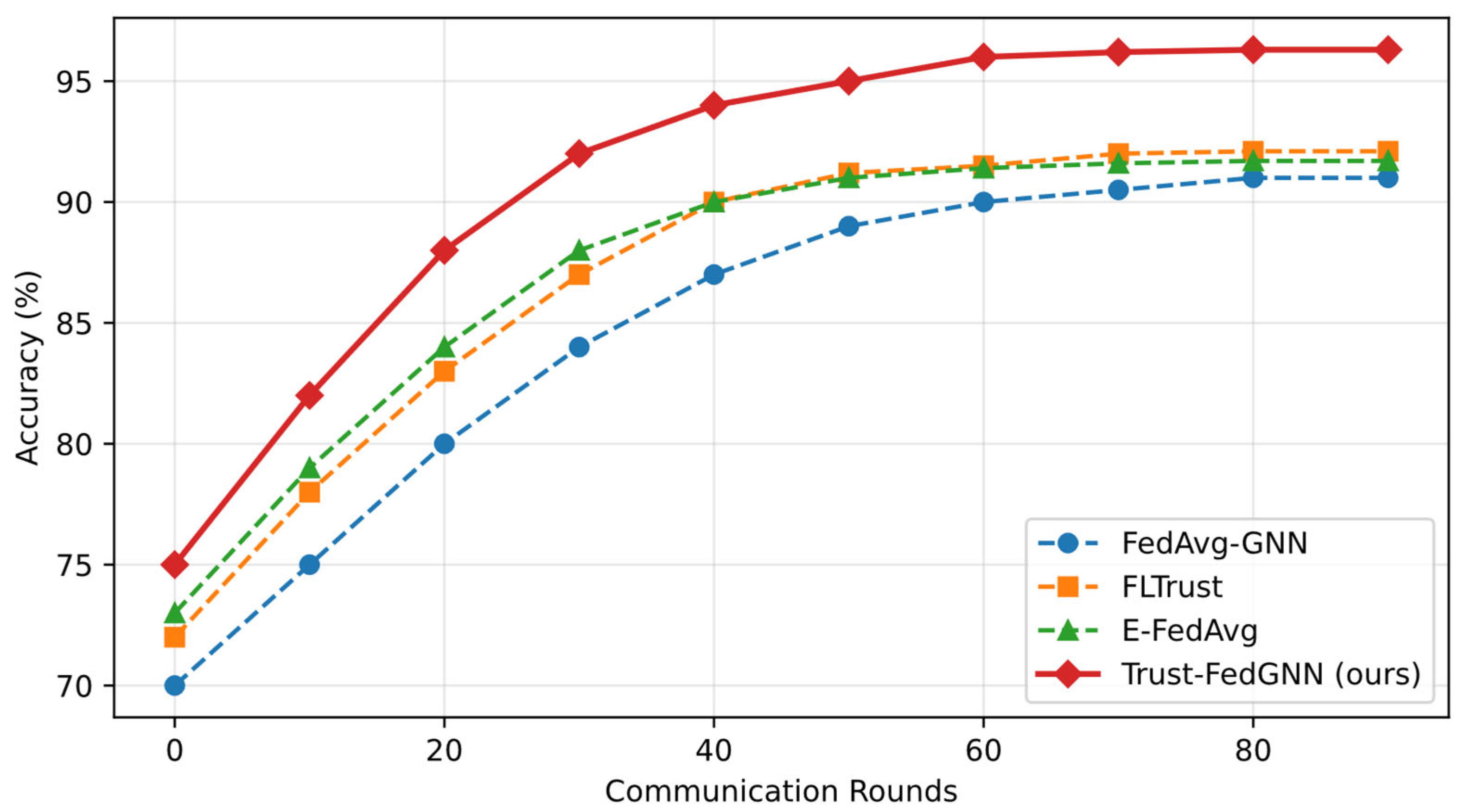

4.2. Baseline Models

- FedAvg-GNN—classical federated averaging of GNN models without trust or energy adaptation [25].

- FLTrust—trust-weighted aggregation using cosine-similarity filtering [26].

- E-FedAvg—energy-aware federated learning using client selection heuristics [19].

- Local-only GNN—each device trains a GNN independently, without federation.

4.3. Performance Metrics

4.4. Threat Model and Robustness Assumptions

4.4.1. Adversary Capabilities

4.4.2. Attack Intensity and Participation

4.4.3. Defense Scope and Limitations

4.5. Communication and Energy Accounting

4.5.1. Communication Payload Breakdown

4.5.2. Energy Measurement and Normalization

4.5.3. Fairness of Comparison

4.6. Results and Discussion

- Accuracy improvement: Trust-FedGNN achieved up to 5.8% higher accuracy and 3.1% higher F1-score on TON_IoT.

- Energy savings: A mean reduction of ~22% in energy was observed compared with E-FedAvg due to dynamic edge pruning and adaptive scheduling.

- Robustness: Under a 20% malicious-client scenario, performance degraded by only 4.2%, versus more than 10% for FLTrust.

- Communication efficiency: The adaptive scheduling reduced the total transmitted payload by approximately 28% without significant convergence delay.

4.7. Summary

5. Conclusions and Future Work

- A trust-propagation model for reputation-aware aggregation, resilient to unreliable or malicious nodes.

- A graph-aware optimization strategy that captures relational dependencies among IoT devices.

- An energy-adaptive scheduling mechanism that minimizes unnecessary communication without compromising convergence.

- A comprehensive experimental evaluation confirming that the combined design improves accuracy, robustness, and energy efficiency across heterogeneous IoT datasets.

Funding

Data Availability Statement

Conflicts of Interest

References

- Dritsas, E.; Trigka, M. Federated Learning for IoT: A Survey of Techniques, Challenges, and Applications. J. Sens. Actuator Netw. 2025, 14, 9.

- Khajehali, N.; Yan, J.; Chow, Y.-W.; Fahmideh, M. A Comprehensive Overview of IoT-Based Federated Learning: Focusing on Client Selection Methods. Sensors 2023, 23, 7235.

- Hameed, R.T.; Mohamad, O.A. Federated Learning in IoT: A Survey on Distributed Decision Making. Babylon. J. Internet Things 2023, 2023, 1–7.

- Vashisth, S.; Goyal, A. A Survey of Federated Learning for IoT: Addressing Resource Constraints and Heterogeneous Challenges. Informatica 2025, 49, 1–24.

- Lyu, L.; Yu, H.; Zhao, J.; Yang, Q. Threats to Federated Learning. In Federated Learning: Privacy and Incentive; Yang, Q., Fan, L., Yu, H., Eds.; Springer International Publishing: Cham, Switzerland, 2020; pp. 3–16. ISBN 978-3-030-63076-8.

- Nalinipriya, G.; Lydia, E.L.; Sree, S.R.; Nikolenko, D.; Potluri, S.; Ramesh, J.V.N.; Jayachandran, S. Optimal Directed Acyclic Graph Federated Learning Model for Energy-Efficient IoT Communication Networks. Sci. Rep. 2024, 14, 22525.

- Wang, L.; Li, Y.; Zuo, L. Trust Management for IoT Devices Based on Federated Learning and Blockchain. J. Supercomput. 2024, 81, 232.

- Dai, E.; Zhao, T.; Zhu, H.; Xu, J.; Guo, Z.; Liu, H.; Tang, J.; Wang, S. A Comprehensive Survey on Trustworthy Graph Neural Networks: Privacy, Robustness, Fairness, and Explainability. Mach. Intell. Res. 2024, 21, 1011–1061.

- Xuan Tung, N.; Tung Giang, L.; Duc Son, B.; Geun-Jeong, S.; Van Chien, T.; Hanzo, L.; Hwang, W.-J. Graph Neural Networks for Next-Generation-IoT: Recent Advances and Open Challenges. IEEE Commun. Surv. Tutor. 2026, 28, 2226–2262.

- Alahmari, S.; Alghamdi, I. A Comprehensive Survey on Energy-Efficient and Privacy-Preserving Federated Learning for Edge Intelligence and IoT. Results Eng. 2025, 28, 107849.

- Akhtarshenas, A.; Vahedifar, M.A.; Ayoobi, N.; Maham, B.; Alizadeh, T.; Ebrahimi, S.; López-Pérez, D. Federated Learning: A Cutting-Edge Survey of the Latest Advancements and Applications. Comput. Commun. 2024, 228, 107964.

- Dong, G.; Tang, M.; Wang, Z.; Gao, J.; Guo, S.; Cai, L.; Gutierrez, R.; Campbell, B.; Barnes, L.E.; Boukhechba, M. Graph Neural Networks in IoT: A Survey. ACM Trans. Sens. Netw. 2023, 19, 1–50.

- Fotia, L.; Delicato, F.; Fortino, G. Trust in Edge-Based Internet of Things Architectures: State of the Art and Research Challenges. ACM Comput. Surv. 2023, 55, 182.

- Wang, M.-J.-S.; Xu, S.-H.; Jiang, J.-C.; Xiang, D.; Hsieh, S.-Y. Global Reliable Diagnosis of Networks Based on Self-Comparative Diagnosis Model and g-Good-Neighbor Property. J. Comput. Syst. Sci. 2026, 155, 103698.

- Jiang, Y.; Ma, B.; Wang, X.; Yu, G.; Yu, P.; Wang, Z.; Ni, W.; Liu, R.P. Blockchained Federated Learning for Internet of Things: A Comprehensive Survey. ACM Comput. Surv. 2024, 56, 258.

- Cao, Y.; Liu, D.; Zhang, S.; Wu, T.; Xue, F.; Tang, H. T-FedHA: A Trusted Hierarchical Asynchronous Federated Learning Framework for Internet of Things. Expert Syst. Appl. 2024, 245, 123006.

- Xu, J.; Zhang, C.; Jin, L.; Su, C. A Trust-Aware Incentive Mechanism for Federated Learning with Heterogeneous Clients in Edge Computing. J. Cybersecurity Priv. 2025, 5, 37.

- Zhou, A.; Yang, J.; Qi, Y.; Shi, Y.; Qiao, T.; Zhao, W.; Hu, C. Hardware-Aware Graph Neural Network Automated Design for Edge Computing Platforms. In Proceedings of the 2023 60th ACM/IEEE Design Automation Conference (DAC), San Francisco, CA, USA, 9–13 July 2023; pp. 1–6.

- Xia, J.; Zhang, Y.; Shi, Y. Towards Energy-Aware Federated Learning via MARL: A Dual-Selection Approach for Model and Client. In Proceedings of the 43rd IEEE/ACM International Conference on Computer-Aided Design; Association for Computing Machinery: New York, NY, USA, 2025; pp. 1–9. ISBN 979-8-4007-1077-3.

- Arouj, A.; Abdelmoniem, A.M. Towards Energy-Aware Federated Learning via Collaborative Computing Approach. Comput. Commun. 2024, 221, 131–141.

- Baqer, M. Energy-Efficient Federated Learning for Internet of Things: Leveraging In-Network Processing and Hierarchical Clustering. Future Internet 2025, 17, 4.

- Moustafa, N.; Ahmed, M.; Ahmed, S. Data Analytics-Enabled Intrusion Detection: Evaluations of ToN_IoT Linux Datasets. In Proceedings of the 2020 IEEE 19th International Conference on Trust, Security and Privacy in Computing and Communications (TrustCom), Guangzhou, China, 29 December 2020–1 January 2021; pp. 727–735.

- Garcia, S.; Parmisano, A.; Erquiaga, M.J. IoT-23: A Labeled Dataset with Malicious and Benign IoT Network Traffic; Zenodo: Geneva, Switzerland, 2020.

- Chen, D.; Tan, V.J.; Lu, Z.; Wu, E.; Hu, J. OpenFed: A Comprehensive and Versatile Open-Source Federated Learning Framework. In Proceedings of the 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Vancouver, BC, Canada, 17–24 June 2023; pp. 5018–5026.

- McMahan, B.; Moore, E.; Ramage, D.; Hampson, S.; Agüera y Arcas, B. Communication-Efficient Learning of Deep Networks from Decentralized Data. In Proceedings of the 20th International Conference on Artificial Intelligence and Statistics, Fort Lauderdale, FL, USA, 20–22 April 2017; pp. 1273–1282.

- Cao, X.; Fang, M.; Liu, J.; Gong, N.Z. FLTrust: Byzantine-Robust Federated Learning via Trust Bootstrapping. In Proceedings of the Network and Distributed Systems Security (NDSS) Symposium 2021, Virtual, 21–25 February 2021.

| Variable | Description | Formula | Range | Computed at |
|---|---|---|---|---|
| | Model update divergence | | | Server |
| | Divergence score | | | Server |
| | Validation accuracy | empirical | | Server |
| | Normalized accuracy | min–max | | Server |
| | Instant reliability (normalized) | | | Server |
| | Trust score | EMA update | | Server |
| | Participation probability | sigmoid | | Server |
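Two of the entries above name their functional form explicitly: min–max normalization for accuracy and a sigmoid for the participation probability. The sketch below shows one way these could be computed. The sigmoid's inputs follow the description in Algorithm 1 (local loss decay and residual energy), but the linear combination, the weights `w_loss` and `w_energy`, and the tie-handling rule are assumptions made for illustration.

```python
import math
from typing import List


def min_max(values: List[float]) -> List[float]:
    """Rescale values to [0, 1] across the clients evaluated in one round."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.5] * len(values)  # assumption: neutral score when all clients tie
    return [(v - lo) / (hi - lo) for v in values]


def participation_probability(loss_decay: float, residual_energy: float,
                              energy_threshold: float,
                              w_loss: float = 1.0, w_energy: float = 4.0) -> float:
    """Sigmoid of a weighted sum of loss decay and energy margin (hypothetical weights)."""
    z = w_loss * loss_decay + w_energy * (residual_energy - energy_threshold)
    return 1.0 / (1.0 + math.exp(-z))


norm_acc = min_max([0.80, 0.90, 0.95])
p_low = participation_probability(0.1, residual_energy=0.2, energy_threshold=0.5)
p_high = participation_probability(0.1, residual_energy=0.9, energy_threshold=0.5)
```

A client below the energy threshold gets a probability under 0.5 and one above it gets a probability over 0.5, so low-energy devices are sampled less often without being excluded outright.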

| Dataset | Avg. Nodes | Avg. Edges (Before) | Avg. Edges (After) | Pruning Ratio (%) |
|---|---|---|---|---|
| TON_IoT | 616.6 | 3699.6 | 2959.1 | 20.0 |
| IoT-23 | 459.6 | 2757.6 | 2205.7 | 20.0 |
| OpenFed-IoT | 102.6 | 410.4 | 327.9 | 20.1 |
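The pruning ratios in the table follow directly from the before and after edge counts. The sketch below recomputes them and also shows one plausible pruning rule, dropping the lowest-weight fraction of edges. That rule is an assumption: the paper describes removing edges with low relevance under the current energy budget, and its exact relevance score is not reproduced here. The toy edge weights are illustrative.

```python
from typing import Dict, Tuple

Edge = Tuple[int, int]


def prune_edges(edges: Dict[Edge, float], ratio: float) -> Dict[Edge, float]:
    """Drop the lowest-weight fraction `ratio` of edges and keep the rest."""
    if not 0.0 <= ratio < 1.0:
        raise ValueError("ratio must lie in [0, 1)")
    n_keep = len(edges) - int(round(ratio * len(edges)))
    ranked = sorted(edges.items(), key=lambda kv: kv[1], reverse=True)
    return dict(ranked[:n_keep])


def pruning_ratio(before: float, after: float) -> float:
    """Percentage of edges removed."""
    return 100.0 * (before - after) / before


# Toy graph: edge weights stand in for a relevance score (hypothetical values).
edges = {(0, 1): 0.95, (0, 2): 0.10, (1, 2): 0.60, (1, 3): 0.40, (2, 3): 0.85}
kept = prune_edges(edges, ratio=0.20)

# Average edge counts (before, after) as reported in the table.
reported = {"TON_IoT": (3699.6, 2959.1), "IoT-23": (2757.6, 2205.7), "OpenFed-IoT": (410.4, 327.9)}
ratios = {name: round(pruning_ratio(b, a), 1) for name, (b, a) in reported.items()}
```

The recomputed ratios agree with the reported column (20.0, 20.0, and 20.1).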

| Component | Setting |
|---|---|
| Graph similarity metric | Cosine similarity |
| Neighbors per node (k) | 10 |
| Graph construction | Client-side, local only |
| Graph rebuild frequency | Every communication round |
| Dynamic pruning ratio | 20% (energy-adaptive) |
| Malicious client fraction | 20% |
| Attack types | Label-flipping (50%), model poisoning (scaling ×5) |
| Attacker participation | Subject to the same scheduling rules as benign clients |
| Data partitioning | Non-IID, label-skew (Dirichlet, α = 0.5) |
| Number of clients | 10 |
| Random seeds | 3 independent runs |
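The label-skew partitioning listed above (Dirichlet, α = 0.5, 10 clients) is commonly implemented by drawing, for each class, a Dirichlet-distributed share per client and splitting that class's samples accordingly. The following is a standard-library sketch of that common recipe, not the paper's partitioning code. The function name, the seed, and the synthetic 4-class label vector are assumptions.

```python
import random
from collections import defaultdict
from typing import Dict, List


def dirichlet_partition(labels: List[int], n_clients: int, alpha: float,
                        seed: int = 0) -> Dict[int, List[int]]:
    """Assign sample indices to clients with per-class Dirichlet(alpha) proportions."""
    rng = random.Random(seed)
    by_class: Dict[int, List[int]] = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    parts: Dict[int, List[int]] = {c: [] for c in range(n_clients)}
    for y in sorted(by_class):
        idxs = by_class[y]
        rng.shuffle(idxs)
        # A Dirichlet sample is a vector of Gamma(alpha, 1) draws divided by their sum.
        g = [rng.gammavariate(alpha, 1.0) for _ in range(n_clients)]
        total = sum(g)
        start, cum = 0, 0.0
        for c in range(n_clients):
            cum += g[c] / total
            # The last client takes the remainder so every sample is assigned exactly once.
            end = len(idxs) if c == n_clients - 1 else int(round(cum * len(idxs)))
            parts[c].extend(idxs[start:end])
            start = end
    return parts


labels = [i % 4 for i in range(400)]  # synthetic 4-class labels, 100 samples each
parts = dirichlet_partition(labels, n_clients=10, alpha=0.5)
```

Smaller α concentrates each class on fewer clients, while large α approaches an even split, so α = 0.5 yields a noticeably skewed but not degenerate partition.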

| Metric | Description |
|---|---|
| Accuracy/F1-score | Model classification performance on unseen IoT data. |
| Energy (J/round) | Total energy consumed per client over the full training process. |
| Communication Overhead (MB) | Mean transmitted payload size per communication round. |
| Latency (ms) | End-to-end aggregation delay. |
| Trust Robustness (%) | Accuracy retention under adversarial conditions, relative to benign training. |
| Convergence Rounds | Number of rounds to reach 95% of best accuracy. |
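Two of these metrics are simple enough to state as code. The sketch below reads "Convergence Rounds" as the first round whose accuracy reaches 95% of the best accuracy in the run, and "Trust Robustness" as the ratio of accuracy under attack to accuracy under benign training, expressed as a percentage. Both readings are interpretations of the table's wording, and the accuracy curve is made up for illustration.

```python
from typing import List


def convergence_round(acc_per_round: List[float], fraction: float = 0.95) -> int:
    """1-based index of the first round reaching `fraction` of the best accuracy."""
    target = fraction * max(acc_per_round)
    for r, acc in enumerate(acc_per_round, start=1):
        if acc >= target:
            return r
    raise ValueError("no round reaches the target (check that fraction <= 1)")


def trust_robustness(acc_under_attack: float, acc_benign: float) -> float:
    """Accuracy retained under attack, as a percentage of benign accuracy (assumed definition)."""
    return 100.0 * acc_under_attack / acc_benign


curve = [0.60, 0.78, 0.88, 0.92, 0.94, 0.95]  # hypothetical accuracy per round
rounds = convergence_round(curve)
retention = trust_robustness(acc_under_attack=0.90, acc_benign=0.95)
```

Here the target is 0.95 × 0.95 = 0.9025, which the fourth round is the first to reach.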

| Component | Description | Payload Size (Relative) |
|---|---|---|
| Local model update | GNN weights or gradients after local training | Dominant term |
| Trust scalar | Reliability trust score | Negligible (1 scalar) |
| Pruning mask/sparsity indicator | Binary or indexed representation of retained edges/parameters | Low |
| Scheduling metadata | Availability or participation flag | Negligible |

| Model | Accuracy (%) | F1-Score | Energy (J/round) | Comm. (MB) | Latency (ms) | Trust Robustness (%) |
|---|---|---|---|---|---|---|
| Local-only GNN | 87.2 ± 0.6 | 0.861 ± 0.009 | 3.24 ± 0.10 | 0.0 ± 0.0 | 0 ± 0 | 68.5 ± 1.2 |
| FedAvg-GNN | 90.6 ± 0.5 | 0.892 ± 0.007 | 3.10 ± 0.09 | 11.3 ± 0.4 | 84 ± 3 | 71.4 ± 1.5 |
| FLTrust | 92.1 ± 0.4 | 0.904 ± 0.006 | 3.05 ± 0.08 | 10.9 ± 0.3 | 86 ± 2 | 78.6 ± 1.3 |
| E-FedAvg | 91.7 ± 0.5 | 0.898 ± 0.008 | 2.68 ± 0.07 | 9.8 ± 0.3 | 81 ± 3 | 74.3 ± 1.4 |
| Trust-FedGNN (ours) | 96.3 ± 0.4 | 0.945 ± 0.006 | 2.09 ± 0.08 | 7.8 ± 0.2 | 73 ± 2 | 91.1 ± 1.0 |
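As a worked check, the relative changes implied by the reported means can be computed directly from the table. Only the mean values are used, and the uncertainties are ignored. The energy figure is taken against E-FedAvg, as in Section 4.6. Taking the payload figure against FLTrust and the latency and accuracy figures against FedAvg-GNN are assumptions about which baseline is intended.

```latex
\begin{align*}
\text{Energy reduction vs. E-FedAvg} &= \frac{2.68 - 2.09}{2.68} \approx 22.0\% \\
\text{Payload reduction vs. FLTrust} &= \frac{10.9 - 7.8}{10.9} \approx 28.4\% \\
\text{Latency reduction vs. FedAvg-GNN} &= \frac{84 - 73}{84} \approx 13.1\% \\
\text{Accuracy gain vs. FedAvg-GNN} &= 96.3 - 90.6 = 5.7 \text{ percentage points}
\end{align*}
```

The first two values line up with the roughly 22% energy saving and roughly 28% payload reduction quoted in Section 4.6.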

| Variant | Trust Layer | Edge Pruning | Adaptive Scheduling | Accuracy (%) | Energy (J/round) |
|---|---|---|---|---|---|
| Trust-FedGNN (full) | Yes | Yes | Yes | 96.3 ± 0.4 | 2.09 ± 0.08 |
| w/o Trust Layer | No | Yes | Yes | 93.8 ± 0.5 | 2.12 ± 0.07 |
| w/o Edge Pruning | Yes | No | Yes | 94.1 ± 0.6 | 2.89 ± 0.11 |
| w/o Adaptive Scheduling | Yes | Yes | No | 94.4 ± 0.5 | 2.65 ± 0.10 |
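The ablation rows can be turned into per-component effects by differencing each variant against the full model. The short sketch below does this using only the reported means. The dictionary layout and the name `component_effect` are illustrative choices.

```python
from typing import Dict

# Mean accuracy (%) and energy (J/round) as reported in the ablation table.
full = {"accuracy": 96.3, "energy": 2.09}
ablations = {
    "w/o Trust Layer": {"accuracy": 93.8, "energy": 2.12},
    "w/o Edge Pruning": {"accuracy": 94.1, "energy": 2.89},
    "w/o Adaptive Scheduling": {"accuracy": 94.4, "energy": 2.65},
}


def component_effect(variant: Dict[str, float]) -> Dict[str, float]:
    """Accuracy lost (percentage points) and energy added (%) when a component is removed."""
    return {
        "accuracy_drop_pp": round(full["accuracy"] - variant["accuracy"], 1),
        "energy_increase_pct": round(
            100.0 * (variant["energy"] - full["energy"]) / full["energy"], 1),
    }


effects = {name: component_effect(v) for name, v in ablations.items()}
```

On these means, removing the trust layer costs the most accuracy (2.5 points) while barely changing energy, whereas removing edge pruning raises energy the most (about 38%), which suggests the components address largely separate objectives.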

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Reis, M.J.C.S. Trust-Aware Federated Graph Learning for Secure and Energy-Efficient IoT Ecosystems. Computers 2026, 15, 121. https://doi.org/10.3390/computers15020121