Joint Inference of Image Enhancement and Object Detection via Cross-Domain Fusion Transformer

Abstract

1. Introduction

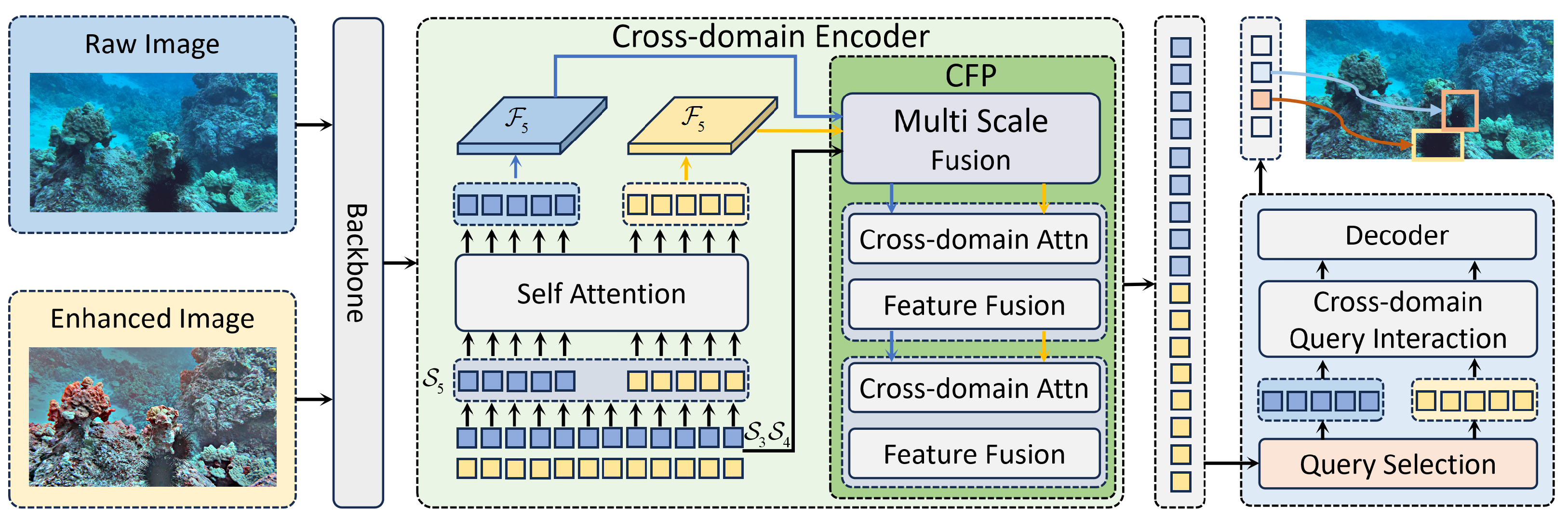

- We propose UCF-DETR, a cross-domain fusion Transformer-based detector for underwater object detection. By explicitly integrating complementary representations from the enhanced and original image domains, the proposed method mitigates the impact of common underwater degradations, such as low visibility and poor contrast, on detection.

- We design a Cross-Domain Feature Pyramid (CFP) that enables bidirectional, multi-scale feature interactions between the enhanced and original domains. By leveraging complementary information extracted from the enhanced domain to strengthen feature representations in the original domain, CFP substantially improves the model’s representational capacity under severely degraded underwater conditions.

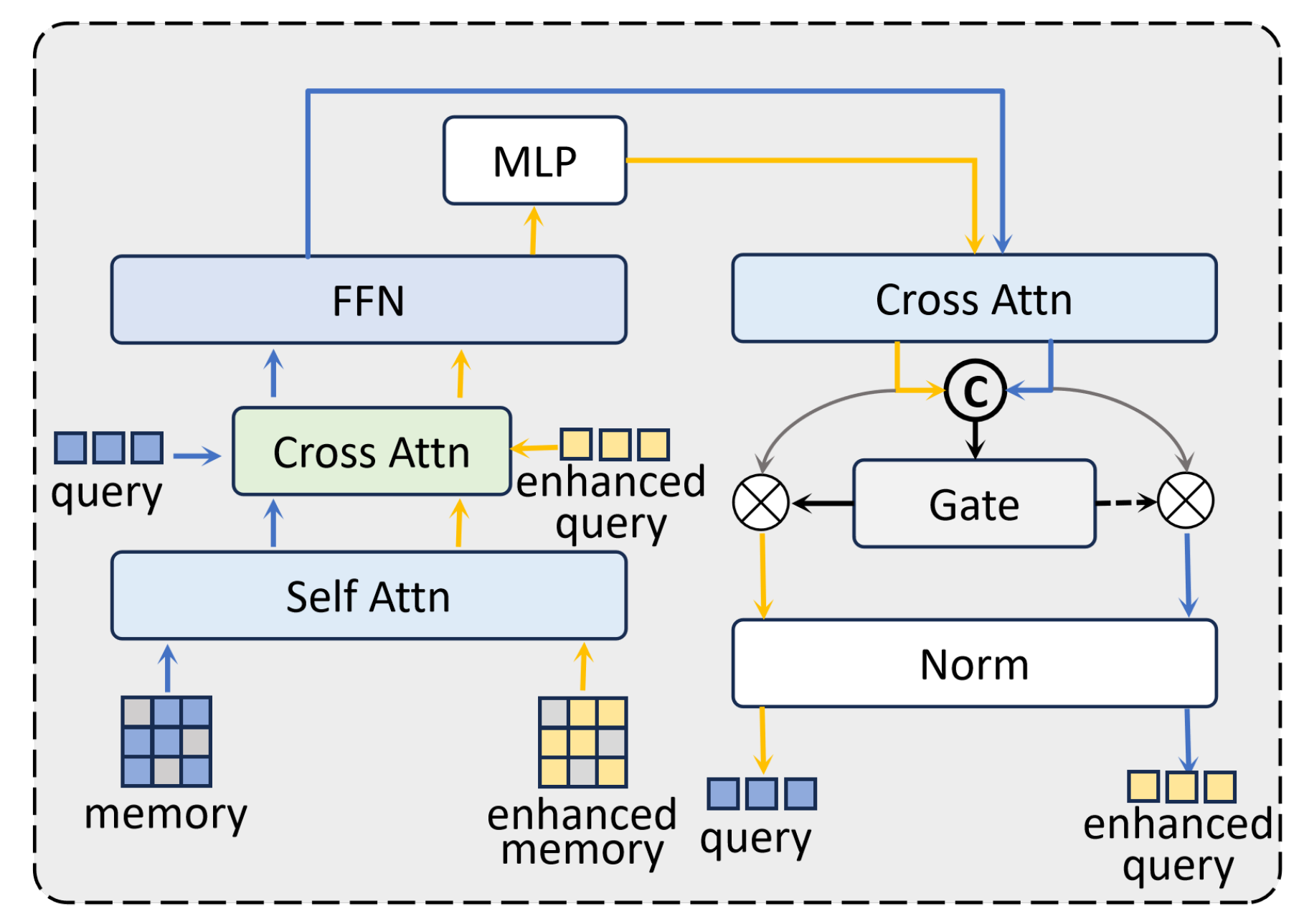

- We introduce a cross-domain query interaction mechanism to explicitly model the relationships between query embeddings from the enhanced and original domains, thereby facilitating cross-domain instance-level information exchange.

- We validate the proposed approach on two challenging underwater benchmarks, DUO and UDD. Extensive experimental results demonstrate that UCF-DETR achieves superior performance on both datasets. The code is available at https://github.com/bibabu555/UCF-DETR.git.

2. Related Work

2.1. Underwater Image Enhancement

2.2. Underwater Object Detection

3. Method

3.1. Overview

3.2. Retinex-Based Model for Underwater Image Enhancement
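Retinex theory models an observed image as the product of reflectance and illumination, I = R ⊙ L, so enhancement can act on L while preserving R. The sketch below illustrates only that textbook single-scale idea on a small grayscale image. It is not the authors' model: it uses a box filter instead of the usual Gaussian surround to estimate L, computes R as the ratio I / L (equivalent to log R = log I − log L), and the gamma re-lighting in the hypothetical `enhance` helper is an assumption added for illustration.

```python
def box_blur(img, radius=1):
    """Mean filter with a (2*radius+1)^2 window, clipped at the image border.

    Used here as a simple stand-in for the Gaussian surround of classic Retinex.
    """
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc, n = 0.0, 0
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    acc += img[yy][xx]
                    n += 1
            out[y][x] = acc / n
    return out


def retinex_decompose(img, radius=1, eps=1e-6):
    """Split a grayscale image I (values in [0, 1]) into R and L with I ≈ R * L."""
    illum = box_blur(img, radius)  # smooth illumination estimate L
    refl = [[(i + eps) / (l + eps) for i, l in zip(row_i, row_l)]  # R = I / L
            for row_i, row_l in zip(img, illum)]
    return refl, illum


def enhance(img, gamma=0.5, radius=1):
    """Hypothetical re-lighting: R * L**gamma, clipped to 1 (gamma < 1 lifts dark regions)."""
    refl, illum = retinex_decompose(img, radius)
    return [[min(1.0, r * l ** gamma) for r, l in zip(row_r, row_l)]
            for row_r, row_l in zip(refl, illum)]
```

On a uniformly dark patch such as `[[0.25] * 3] * 3`, L equals the input, R is 1 everywhere, and `enhance` returns values of about 0.5, showing how adjusting L alone brightens the image.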

3.3. Cross-Domain Feature Pyramid
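The Introduction describes CFP as enabling bidirectional, multi-scale interactions between the enhanced and original domains. The sketch below is a deliberately simplified, hypothetical version of that idea, not the authors' implementation: 1-D feature rows stand in for feature maps, a single scalar gate (the assumed parameter `gate_logit`) controls how much each domain borrows from the other at every level, and an FPN-style top-down pass in the spirit of [34] then propagates coarse context to finer levels of the original-domain branch. A real module would use learned convolutions or attention instead of a shared scalar.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def upsample2(row: list[float]) -> list[float]:
    """Nearest-neighbour 2x upsampling of a 1-D feature row."""
    return [v for v in row for _ in range(2)]


def cross_domain_pyramid(orig, enh, gate_logit=0.0):
    """Hypothetical bidirectional fusion over a feature pyramid.

    orig, enh: lists of pyramid levels (finest first); each level is a 1-D list
    of floats, and level k + 1 is half as long as level k.
    """
    g = sigmoid(gate_logit)  # one scalar gate shared by every level (an assumption)
    fused_o, fused_e = [], []
    for o, e in zip(orig, enh):
        # Bidirectional exchange at the same scale: each domain borrows from the other.
        fused_o.append([oi + g * ei for oi, ei in zip(o, e)])
        fused_e.append([ei + g * oi for oi, ei in zip(o, e)])
    # FPN-style top-down pass on the original-domain branch (coarse to fine).
    for k in range(len(fused_o) - 2, -1, -1):
        up = upsample2(fused_o[k + 1])
        if len(up) != len(fused_o[k]):
            raise ValueError("each level must be twice as long as the next")
        fused_o[k] = [a + b for a, b in zip(fused_o[k], up)]
    return fused_o, fused_e
```

With `gate_logit` strongly negative the gate approaches zero and the two branches stay nearly independent; with it strongly positive, each level approaches a plain sum of the two domains.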

3.4. Cross-Domain Query Interaction
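The cross-domain query interaction is described as explicitly modeling relationships between query embeddings from the enhanced and original domains. One common way to do this, assumed here rather than taken from the paper, is cross-attention: each original-domain query attends over the enhanced-domain queries with scaled dot-product attention and adds the attended vector back as a residual. The sketch omits learned projections, multiple heads, and the reverse (enhanced-to-original) direction, which would be symmetric.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cross_domain_query_attention(q_orig, q_enh):
    """Original-domain queries attend over enhanced-domain queries.

    q_orig, q_enh: lists of query embeddings (each a list of d floats).
    Keys and values are both the raw enhanced-domain queries (no learned projections).
    """
    d = len(q_orig[0])
    scale = 1.0 / math.sqrt(d)
    updated = []
    for q in q_orig:
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in q_enh]
        weights = softmax(scores)
        attended = [sum(w * k[j] for w, k in zip(weights, q_enh)) for j in range(d)]
        updated.append([qi + ai for qi, ai in zip(q, attended)])  # residual update
    return updated
```

With a single enhanced-domain query the attention weight is 1, so each original query simply receives that query's embedding; with several, the weights favour enhanced queries whose embeddings align with the original one.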

4. Experiments

4.1. Evaluation Metrics
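The result tables report AP-style accuracy columns. Assuming the standard COCO protocol (an assumption here), a detection matches a ground-truth box when their intersection over union (IoU) reaches a threshold: AP averages over the ten thresholds 0.50, 0.55, …, 0.95, AP50 and AP75 use a single threshold each, and the small/medium/large variants restrict evaluation by object area. The sketch shows only these building blocks, not a full AP computation; boundary handling of the area ranges is simplified relative to pycocotools.

```python
def iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# COCO-style thresholds averaged by AP: 0.50:0.05:0.95
COCO_IOU_THRESHOLDS = [round(0.5 + 0.05 * i, 2) for i in range(10)]


def matched_at(iou_value, threshold):
    """A detection counts as a true positive at this threshold if IoU >= threshold."""
    return iou_value >= threshold


def size_bucket(box):
    """Approximate COCO area ranges (box area in pixels) used by the S/M/L variants."""
    area = (box[2] - box[0]) * (box[3] - box[1])
    if area < 32 ** 2:
        return "small"
    if area < 96 ** 2:
        return "medium"
    return "large"
```

For example, a detection with IoU 0.6 counts toward AP50 but not AP75, which is why AP75 is usually the lower of the two.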

4.1.1. Datasets

4.1.2. Experimental Setup

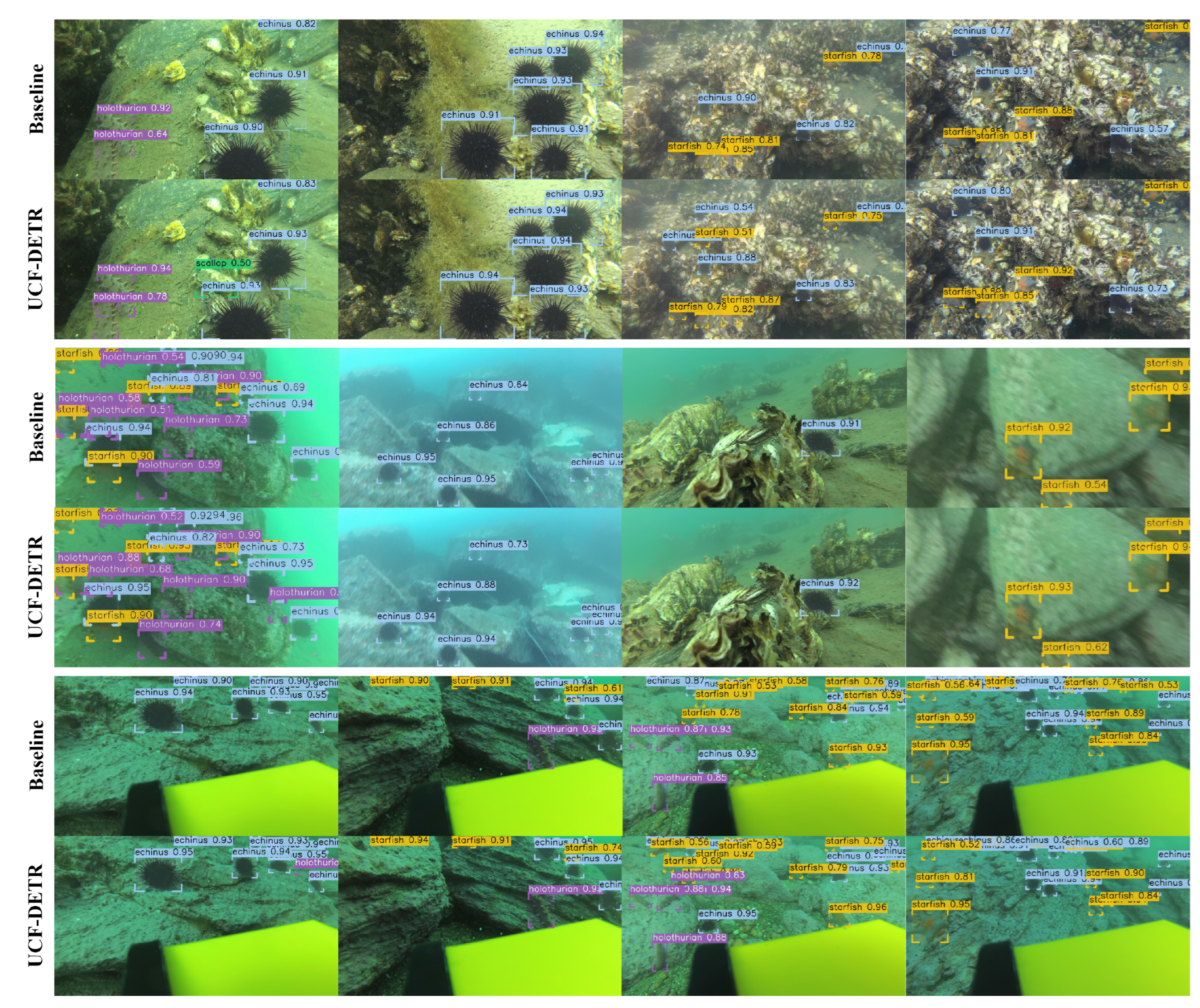

4.2. Experimental Results

4.2.1. Results on DUO

| Model | Backbone | Epochs | Params (M) | AP | AP50 | AP75 | FPS |
|---|---|---|---|---|---|---|---|
| General Object Detector | | | | | | | |
| YOLO11 | - | 36 | 20.13 | 67.7 | 85.7 | - | 74.1 |
| DETR [41] | ResNet50 | 50 | 41.28 | 51.7 | 78.8 | 60.2 | 8.7 |
| DINO [40] | ResNet50 | 12 | 42.28 | 64.9 | 85.1 | 71.3 | 21.3 |
| RT-DETR [38] | ResNet50 | 12 | 36.57 | 64.2 | 84.5 | 71.9 | 57.9 |
| Relation DETR [39] | ResNet50 | 12 | 43.48 | 65.7 | 85.2 | 72.6 | 20.7 |
| Underwater Object Detector | | | | | | | |
| Boosting-RCNN [42] | ResNet50 | 12 | 48.07 | 60.8 | 80.6 | 69.0 | 37.0 |
| DJL-Net [19] | ResNet50 | 12 | 58.48 | 65.6 | 84.2 | 73.0 | - |
| RoIAttn [43] | ResNet50 | 12 | - | 62.3 | 82.8 | 71.4 | 14.2 |
| GCC-Net [12] | Swin-T | 12 | 39.46 | 61.1 | 81.6 | 67.3 | 20.8 |
| Underwater Cross-Domain Fusion Transformer Detector (ours) | | | | | | | |
| UCF-DETR | ResNet50 | 12 | 44.28 | 66.4 | 85.5 | 73.9 | 18.9 |
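To put the speed/accuracy trade-off in per-image terms, FPS can be converted to milliseconds per image. The table does not state how FPS was measured (hardware, batch size, input resolution), so this sketch assumes one image per forward pass and comparable conditions across rows; AP is taken as the first accuracy value in each DUO row, and the dictionary and helper names are illustrative.

```python
# (AP, FPS) pairs copied from the DUO table; AP is the first accuracy value per row.
DUO_RESULTS = {
    "RT-DETR": (64.2, 57.9),
    "DINO": (64.9, 21.3),
    "Relation DETR": (65.7, 20.7),
    "UCF-DETR": (66.4, 18.9),
}


def latency_ms(fps: float) -> float:
    """Mean time per image, assuming FPS counts one image per forward pass."""
    return 1000.0 / fps


def ap_gain(model: str, reference: str) -> float:
    """AP difference in percentage points, rounded to the table's precision."""
    return round(DUO_RESULTS[model][0] - DUO_RESULTS[reference][0], 1)


if __name__ == "__main__":
    for name, (ap, fps) in DUO_RESULTS.items():
        print(f"{name:14s} AP={ap:.1f}  {latency_ms(fps):.1f} ms/image")
    print("UCF-DETR vs RT-DETR:", ap_gain("UCF-DETR", "RT-DETR"), "AP")
```

Under these assumptions, UCF-DETR gains 2.2 AP over RT-DETR at roughly three times the per-image latency (about 52.9 ms versus 17.3 ms).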

4.2.2. Results on UDD

4.3. Ablation Study

4.3.1. Effect of Underwater Image Enhancement

4.3.2. Effect of Cross-Domain Feature Fusion in CFP

4.4. Analysis

4.5. Limitations

- (a) Severe color distortion: The texture of the starfish becomes nearly indistinguishable from the surrounding background because of extreme visual degradation. Under such conditions, noise artifacts introduced by the underwater image enhancement (UIE) module also reduce the quality of the enhanced-domain feature representations. Although UCF-DETR is designed to draw complementary cues from the enhanced domain, severe degradation can corrupt these features so that they no longer provide reliable semantic guidance, which leads to the observed missed detection.

- (b) Low-light conditions: The contour and texture information of the holothurian is severely attenuated, so the object blends into the background and is missed.

- (c) Turbid and low-contrast environments: The model spuriously activates on background textures, so a background region is incorrectly detected as a scallop.

- (d) Low-contrast hazy blur: The combined effects of haze and contrast loss severely degrade visual features. Under these conditions, the model both misses the starfish and misclassifies background regions as echinus.

- (e) Blurred and densely crowded scenes: In severely blurred scenes with high biological density, marine organisms often overlap substantially, and small targets lack well-defined boundaries and discriminative texture. The detector is therefore easily confused by overlapping structures and ambiguous contours, producing false positives for echinus triggered by cluttered background patterns and missing starfish whose fine-grained features are obscured by blur and occlusion. This case highlights the intrinsic difficulty of distinguishing small, densely clustered objects in degraded underwater images.

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Cai, W.; Zhu, J.; Zhang, M.; Liu, M. Optical Flow Prompts Distractor-Aware Siamese Network for Tracking Autonomous Underwater Vehicle with Sonar and Camera Videos. Neural Netw. 2025, 196, 108328.

2. Liu, K.; Peng, L.; Tang, S. Underwater object detection using TC-YOLO with attention mechanisms. Sensors 2023, 23, 2567.

3. Wang, Q.; Zhang, Y.; He, B. Automatic seabed target segmentation of AUV via multilevel adversarial network and marginal distribution adaptation. IEEE Trans. Ind. Electron. 2023, 71, 749–759.

4. Dodić, D.; Vujović, V.; Jovković, S.; Milutinović, N.; Trpkoski, M. SAHI-Tuned YOLOv5 for UAV Detection of TM-62 Anti-Tank Landmines: Small-Object, Occlusion-Robust, Real-Time Pipeline. Computers 2025, 14, 448.

5. Bai, J.; Zhu, W.; Nie, Z.; Yang, X.; Xu, Q.; Li, D. HFC-YOLO11: A Lightweight Model for the Accurate Recognition of Tiny Remote Sensing Targets. Computers 2025, 14, 195.

6. Chen, X.; Yuan, M.; Fan, C.; Chen, X.; Li, Y.; Wang, H. Research on an underwater object detection network based on dual-branch feature extraction. Electronics 2023, 12, 3413.

7. Liu, Z.; Wang, B.; Li, Y.; He, J.; Li, Y. UnitModule: A lightweight joint image enhancement module for underwater object detection. Pattern Recognit. 2024, 151, 110435.

8. Chang, L.; Wang, Y.; Du, B.; Xu, C. Rectangling and enhancing underwater stitched image via content-aware warping and perception balancing. Neural Netw. 2025, 181, 106809.

9. Cao, R.; Zhang, R.; Yan, X.; Zhang, J. BG-YOLO: A bidirectional-guided method for underwater object detection. Sensors 2024, 24, 7411.

10. Shao, J.; Zhang, H.; Miao, J. LAMSNN: Learnable adaptive modulation for artifact suppression in spiking underwater image enhancement networks. Neural Netw. 2025, 195, 108210.

11. Zhang, W.; Li, X.; Huang, Y.; Xu, S.; Tang, J.; Hu, H. Underwater image enhancement via frequency and spatial domains fusion. Opt. Lasers Eng. 2025, 186, 108826.

12. Dai, L.; Liu, H.; Song, P.; Liu, M. A gated cross-domain collaborative network for underwater object detection. Pattern Recognit. 2024, 149, 110222.

13. Fu, Z.; Wang, W.; Huang, Y.; Ding, X.; Ma, K.K. Uncertainty inspired underwater image enhancement. In Computer Vision—ECCV 2022; Springer: Cham, Switzerland, 2022; pp. 465–482.

14. Wang, Y.; Guo, J.; He, W.; Gao, H.; Yue, H.; Zhang, Z.; Li, C. Is underwater image enhancement all object detectors need? IEEE J. Ocean. Eng. 2023, 49, 606–621.

15. Fu, C.; Liu, R.; Fan, X.; Chen, P.; Fu, H.; Yuan, W.; Zhu, M.; Luo, Z. Rethinking general underwater object detection: Datasets, challenges, and solutions. Neurocomputing 2023, 517, 243–256.

16. Chen, L.; Jiang, Z.; Tong, L.; Liu, Z.; Zhao, A.; Zhang, Q.; Dong, J.; Zhou, H. Perceptual underwater image enhancement with deep learning and physical priors. IEEE Trans. Circuits Syst. Video Technol. 2020, 31, 3078–3092.

17. Saleem, A.; Awad, A.; Paheding, S.; Lucas, E.; Havens, T.C.; Esselman, P.C. Understanding the influence of image enhancement on underwater object detection: A quantitative and qualitative study. Remote Sens. 2025, 17, 185.

18. Liu, W.; Ren, G.; Yu, R.; Guo, S.; Zhu, J.; Zhang, L. Image-adaptive YOLO for object detection in adverse weather conditions. Proc. AAAI Conf. Artif. Intell. 2022, 36, 1792–1800.

19. Wang, B.; Wang, Z.; Guo, W.; Wang, Y. A dual-branch joint learning network for underwater object detection. Knowl.-Based Syst. 2024, 293, 111672.

20. Liu, R.; Jiang, Z.; Yang, S.; Fan, X. Twin adversarial contrastive learning for underwater image enhancement and beyond. IEEE Trans. Image Process. 2022, 31, 4922–4936.

21. Zhang, W.; Zhuang, P.; Sun, H.H.; Li, G.; Kwong, S.; Li, C. Underwater image enhancement via minimal color loss and locally adaptive contrast enhancement. IEEE Trans. Image Process. 2022, 31, 3997–4010.

22. Song, Y.; She, M.; Köser, K. Advanced underwater image restoration in complex illumination conditions. ISPRS J. Photogramm. Remote Sens. 2024, 209, 197–212.

23. Berman, D.; Levy, D.; Avidan, S.; Treibitz, T. Underwater single image color restoration using haze-lines and a new quantitative dataset. IEEE Trans. Pattern Anal. Mach. Intell. 2020, 43, 2822–2837.

24. Peng, L.; Zhu, C.; Bian, L. U-shape transformer for underwater image enhancement. IEEE Trans. Image Process. 2023, 32, 3066–3079.

25. Wang, H.; Zhang, W.; Xu, Y.; Li, H.; Ren, P. WaterCycleDiffusion: Visual-textual fusion empowered underwater image enhancement. Inf. Fusion 2025, 127, 103693.

26. Du, D.; Li, E.; Si, L.; Zhai, W.; Xu, F.; Niu, J.; Sun, F. UIEDP: Boosting underwater image enhancement with diffusion prior. Expert Syst. Appl. 2025, 259, 125271.

27. Zhang, S.; Zhao, S.; An, D.; Li, D.; Zhao, R. LiteEnhanceNet: A lightweight network for real-time single underwater image enhancement. Expert Syst. Appl. 2024, 240, 122546.

28. Zhou, J.; He, Z.; Zhang, D.; Liu, S.; Fu, X.; Li, X. Spatial residual for underwater object detection. IEEE Trans. Pattern Anal. Mach. Intell. 2025, 47, 4996–5013.

29. Ji, X.; Chen, S.; Hao, L.Y.; Zhou, J.; Chen, L. FBDPN: CNN-Transformer hybrid feature boosting and differential pyramid network for underwater object detection. Expert Syst. Appl. 2024, 256, 124978.

30. Zhang, D.; Yu, C.; Li, Z.; Qin, C.; Xia, R. A lightweight network enhanced by attention-guided cross-scale interaction for underwater object detection. Appl. Soft Comput. 2025, 184, 113811.

31. Yeh, C.H.; Lin, C.H.; Kang, L.W.; Huang, C.H.; Lin, M.H.; Chang, C.Y.; Wang, C.C. Lightweight deep neural network for joint learning of underwater object detection and color conversion. IEEE Trans. Neural Netw. Learn. Syst. 2021, 33, 6129–6143.

32. Yuan, G.; Song, J.; Li, J. IF-USOD: Multimodal information fusion interactive feature enhancement architecture for underwater salient object detection. Inf. Fusion 2025, 117, 102806.

33. Zhang, D.; Wu, C.; Zhou, J.; Zhang, W.; Lin, Z.; Polat, K.; Alenezi, F. Robust underwater image enhancement with cascaded multi-level sub-networks and triple attention mechanism. Neural Netw. 2024, 169, 685–697.

34. Lin, T.Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature pyramid networks for object detection. In 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2017; pp. 2117–2125.

35. Zhu, X.; Su, W.; Lu, L.; Li, B.; Wang, X.; Dai, J. Deformable DETR: Deformable Transformers for End-to-End Object Detection. In Proceedings of the International Conference on Learning Representations, Vienna, Austria, 4 May 2021.

36. Liu, C.; Li, H.; Wang, S.; Zhu, M.; Wang, D.; Fan, X.; Wang, Z. A dataset and benchmark of underwater object detection for robot picking. In 2021 IEEE International Conference on Multimedia & Expo Workshops (ICMEW); IEEE: Piscataway, NJ, USA, 2021; pp. 1–6.

37. Jiang, L.; Wang, Y.; Jia, Q.; Xu, S.; Liu, Y.; Fan, X.; Li, H.; Liu, R.; Xue, X.; Wang, R. Underwater species detection using channel sharpening attention. In Proceedings of the 29th ACM International Conference on Multimedia; Association for Computing Machinery: New York, NY, USA, 2021; pp. 4259–4267.

38. Zhao, Y.; Lv, W.; Xu, S.; Wei, J.; Wang, G.; Dang, Q.; Liu, Y.; Chen, J. DETRs beat YOLOs on real-time object detection. In 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2024; pp. 16965–16974.

39. Hou, X.; Liu, M.; Zhang, S.; Wei, P.; Chen, B.; Lan, X. Relation DETR: Exploring explicit position relation prior for object detection. In Computer Vision—ECCV 2024; Springer: Cham, Switzerland, 2024; pp. 89–105.

40. Zhang, H.; Li, F.; Liu, S.; Zhang, L.; Su, H.; Zhu, J.; Ni, L.; Shum, H.Y. DINO: DETR with Improved DeNoising Anchor Boxes for End-to-End Object Detection. arXiv 2022, arXiv:2203.03605.

41. Carion, N.; Massa, F.; Synnaeve, G.; Usunier, N.; Kirillov, A.; Zagoruyko, S. End-to-end object detection with transformers. In Computer Vision—ECCV 2020; Springer: Cham, Switzerland, 2020; pp. 213–229.

42. Song, P.; Li, P.; Dai, L.; Wang, T.; Chen, Z. Boosting R-CNN: Reweighting R-CNN samples by RPN’s error for underwater object detection. Neurocomputing 2023, 530, 150–164.

43. Liang, X.; Song, P. Excavating RoI attention for underwater object detection. In 2022 IEEE International Conference on Image Processing (ICIP); IEEE: Piscataway, NJ, USA, 2022; pp. 2651–2655.

44. Girshick, R. Fast R-CNN. In 2015 IEEE International Conference on Computer Vision (ICCV); IEEE: Piscataway, NJ, USA, 2015; pp. 1440–1448.

45. Chen, Z.; Yang, C.; Li, Q.; Zhao, F.; Zha, Z.J.; Wu, F. Disentangle your dense object detector. In Proceedings of the 29th ACM International Conference on Multimedia; Association for Computing Machinery: New York, NY, USA, 2021; pp. 4939–4948.

| Model | Epochs | AP | AP50 | AP75 | APS | APM | APL |
|---|---|---|---|---|---|---|---|
| General Object Detector | | | | | | | |
| Faster R-CNN [44] | 12 | 27.3 | 65.9 | 17.2 | 15.9 | 26.3 | 31.3 |
| Relation-DETR [39] | 12 | 27.3 | 68.1 | 12.4 | 17.9 | 25.1 | 29.6 |
| DINO [40] | 12 | 28.3 | 68.7 | 14.8 | 18.5 | 26.3 | 33.0 |
| DDOD [45] | 12 | 28.3 | 66.0 | 16.8 | 17.7 | 26.5 | 31.0 |
| RT-DETR [38] | 12 | 26.8 | 60.4 | 17.2 | 15.3 | 24.8 | 33.1 |
| Underwater Object Detector | | | | | | | |
| Boosting-R-CNN [42] | 12 | 28.4 | 64.3 | 18.8 | 16.4 | 28.0 | 30.6 |
| RoIAttn [43] | 12 | 28.3 | 64.5 | 16.9 | 15.0 | 28.3 | 31.5 |
| DJL-Net [19] | 12 | 31.5 | 72.3 | 19.1 | 17.5 | 30.7 | 33.1 |
| GCC-Net [12] | 12 | 26.3 | 64.6 | 14.1 | 15.2 | 24.7 | 29.4 |
| Underwater Cross-Domain Fusion Transformer Detector (ours) | | | | | | | |
| UCF-DETR | 12 | 30.9 | 71.1 | 19.3 | 18.7 | 30.1 | 31.3 |

| CFP | CQI | AP | AP50 | AP75 | APS | APM | APL |
|---|---|---|---|---|---|---|---|
| | | 64.2 | 84.5 | 71.9 | 51.6 | 65.7 | 63.4 |
| ✓ | | 65.8 (↑1.6) | 85.5 (↑1.0) | 73.9 (↑2.0) | 56.1 (↑4.5) | 67.7 (↑2.0) | 65.2 (↑1.8) |
| ✓ | ✓ | 66.4 (↑0.6) | 85.5 (0.0) | 73.9 (0.0) | 58.6 (↑2.5) | 68.1 (↑0.4) | 65.0 (↓0.2) |
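The parenthesized values in the ablation table read as changes relative to the row above (CFP against the baseline, then CFP + CQI against CFP alone). Recomputing them from the listed scores is a quick consistency check; the lists below copy the table rows in column order, and the helper name is illustrative.

```python
# Rows of the ablation table, metrics in the table's column order.
baseline = [64.2, 84.5, 71.9, 51.6, 65.7, 63.4]
with_cfp = [65.8, 85.5, 73.9, 56.1, 67.7, 65.2]
with_cfp_cqi = [66.4, 85.5, 73.9, 58.6, 68.1, 65.0]


def deltas(current, previous):
    """Row-over-row change, rounded to the table's one-decimal precision."""
    return [round(c - p, 1) for c, p in zip(current, previous)]


print(deltas(with_cfp, baseline))      # [1.6, 1.0, 2.0, 4.5, 2.0, 1.8]
print(deltas(with_cfp_cqi, with_cfp))  # [0.6, 0.0, 0.0, 2.5, 0.4, -0.2]
```

Rounding is needed because differences of decimal values carry floating-point noise (for example, 66.4 − 65.8 is not exactly 0.6 in binary floating point). Recomputed this way, the fourth metric of the full model (58.6 against 56.1) changes by +2.5.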

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhao, B.; Chen, Y. Joint Inference of Image Enhancement and Object Detection via Cross-Domain Fusion Transformer. Computers 2026, 15, 43. https://doi.org/10.3390/computers15010043


Chicago/Turabian Style: Zhao, Bingxun, and Yuan Chen. 2026. "Joint Inference of Image Enhancement and Object Detection via Cross-Domain Fusion Transformer" Computers 15, no. 1: 43. https://doi.org/10.3390/computers15010043
