Efficient Low-Precision GEMM on Ascend NPU: HGEMM’s Synergy of Pipeline Scheduling, Tiling, and Memory Optimization

Abstract

1. Introduction

- AIC/AIV Dual-Stream Pipeline Scheduling. HGEMM runs on both the AIC and AIV streams. A carefully orchestrated pipeline synchronizes padding operations, matrix–matrix multiplications, and element-wise instructions across the hierarchical buffers and compute units.

- Self-Adaptive Tiling Design. A suite of tiling strategies, together with a strategy selection mechanism, is proposed to determine the optimal tiling strategy for each matrix size. The mechanism balances fewer accumulation loops along the K direction against higher hardware utilization along the M and N directions. The approach also applies to other SPMD-based GPU, NPU, or TPU architectures that aim to exploit hardware capabilities.

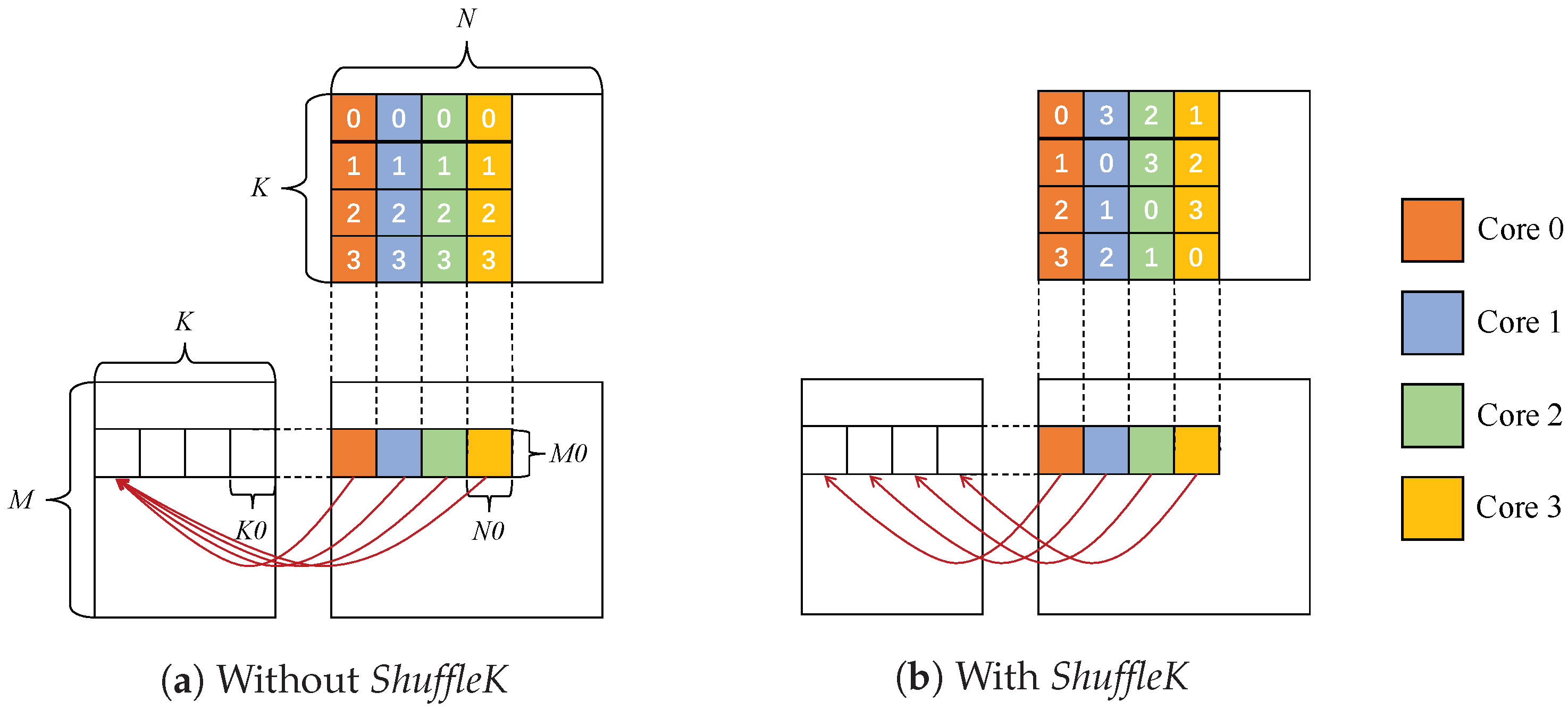

- Memory Access and Hardware Occupancy Optimization. The ShuffleK and SplitK methods are proposed to address memory access efficiency and AI Core occupancy, respectively. ShuffleK reduces serialized accesses to shared and global memory, while SplitK resolves insufficient workload in small-matrix scenarios, thereby improving HGEMM efficiency across a broad range of workloads.

2. Motivation and Related Works

2.1. Motivation

2.2. Related Works

3. Backgrounds

3.1. GEMM Routine

3.2. Ascend NPU Architecture

4. Designing and Optimizing HGEMM on Ascend NPU

4.1. HGEMM at a Glance

Algorithm 1. Code skeleton of HGEMM execution design on Ascend NPU

CPU Instructions:

AIV Instructions:

AIC Instructions:

4.2. Tiling Strategy Design in HGEMM

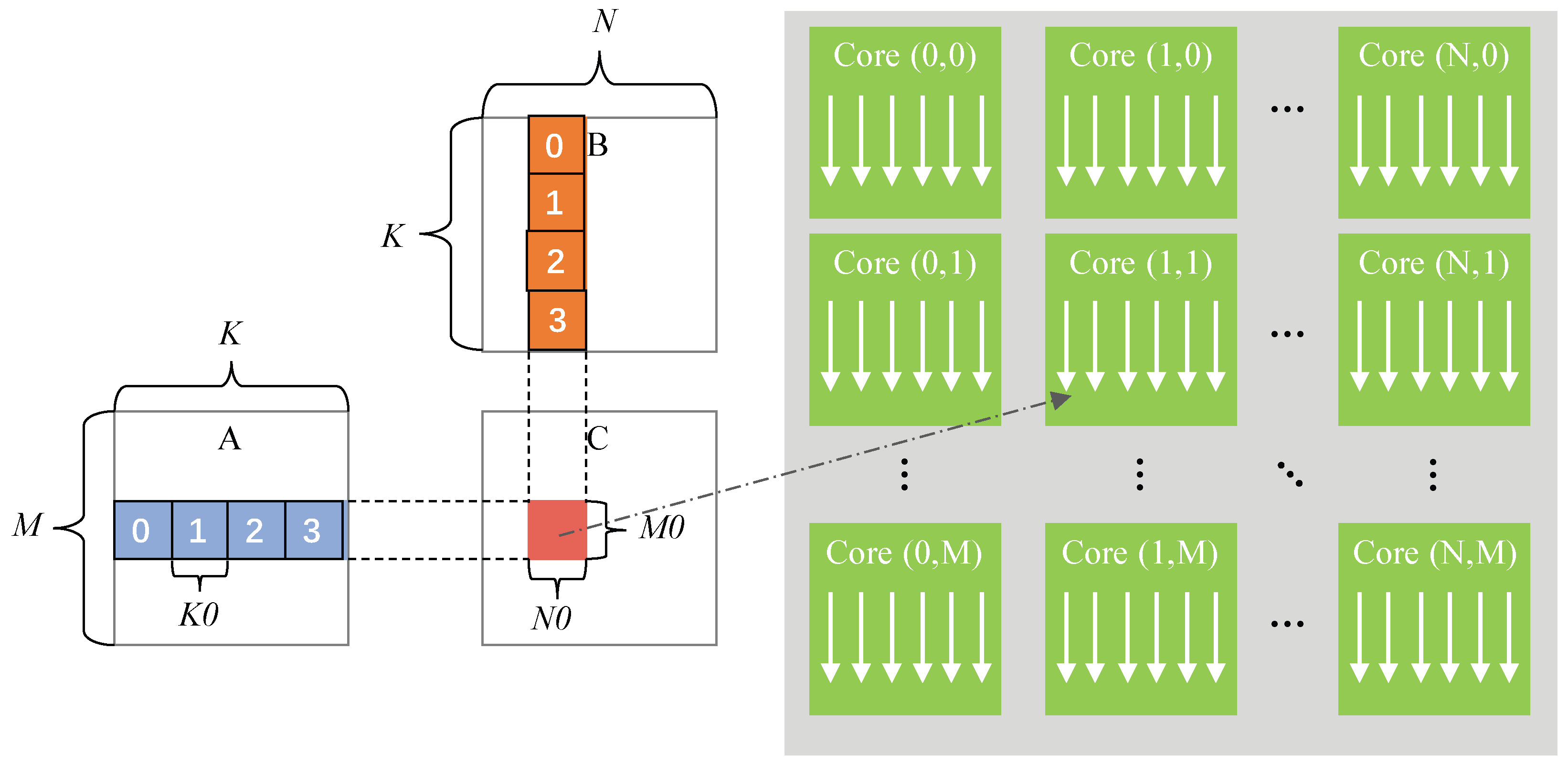

4.2.1. Parallelism Model and Single Core Model

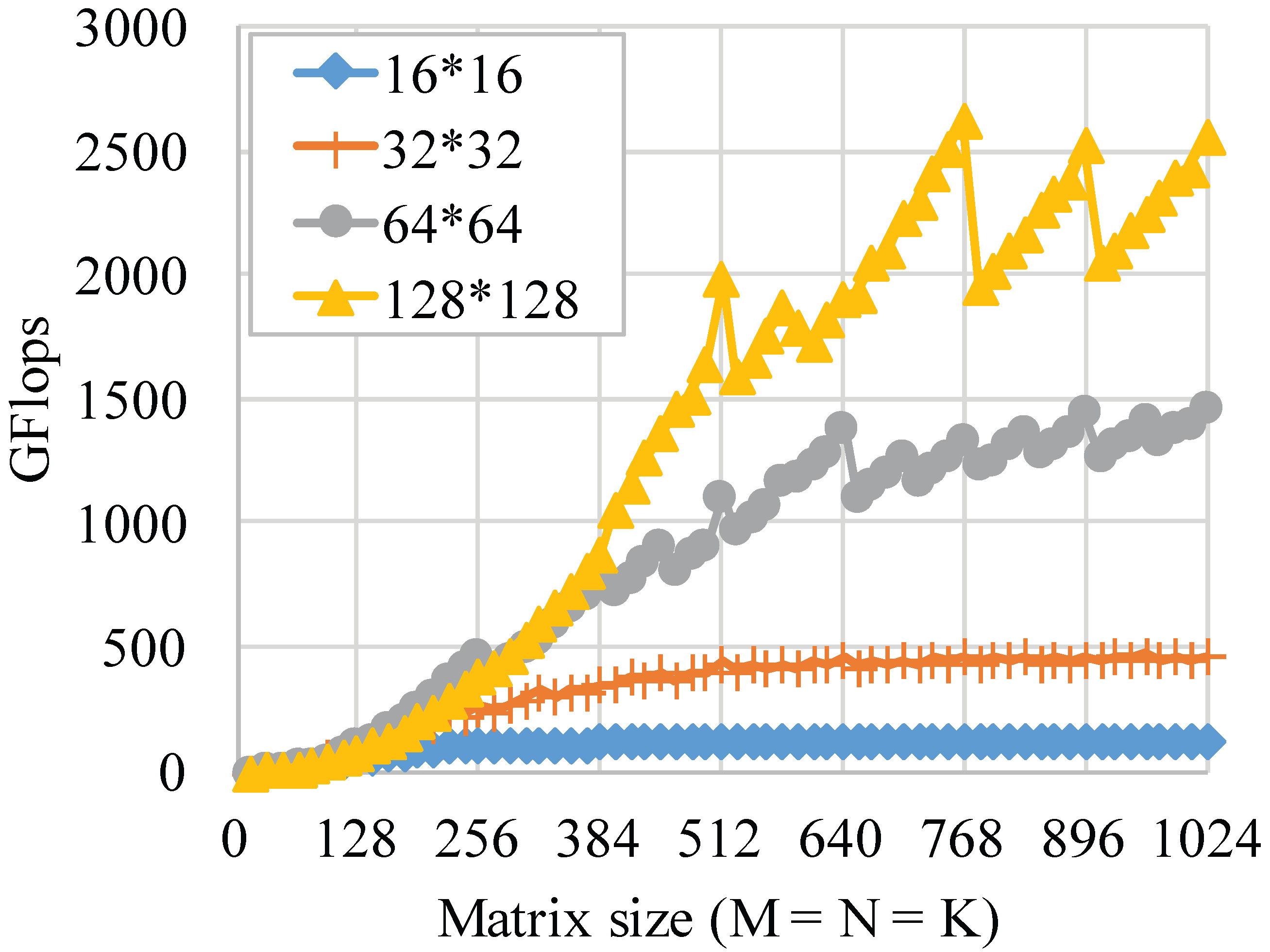

4.2.2. Effects from Tiling Sizes Along M, N, and K Directions

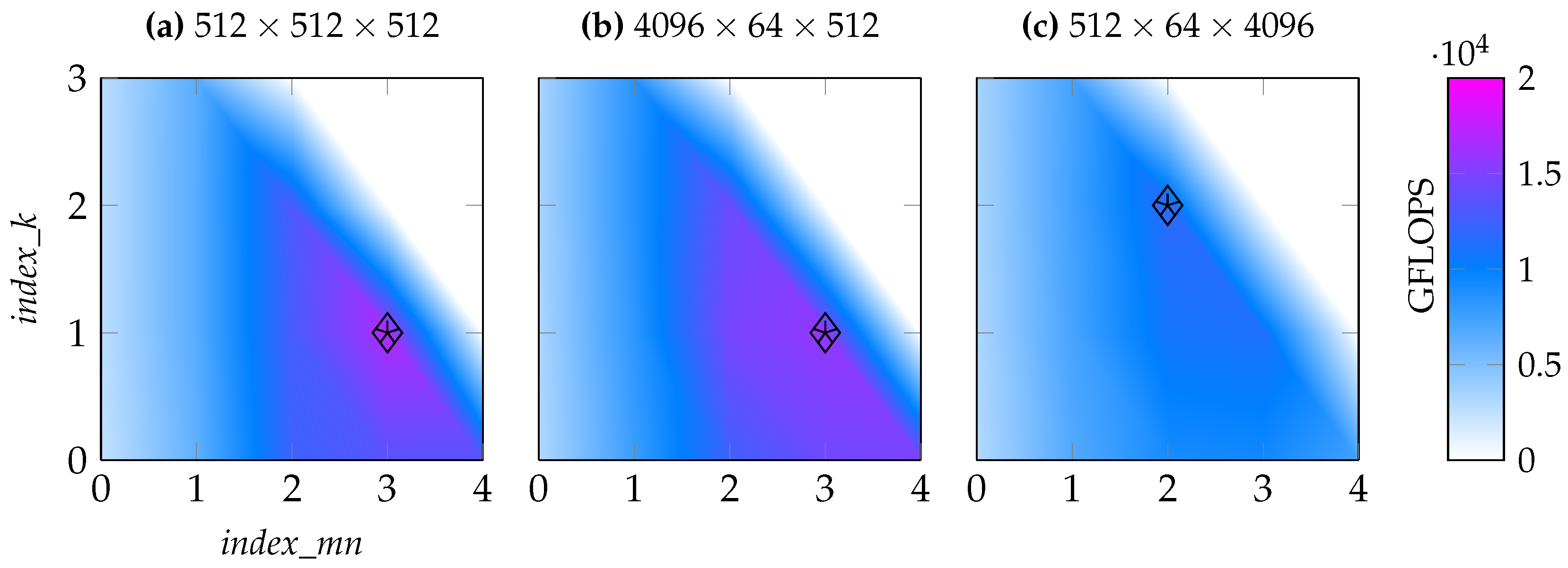

4.2.3. Tiling Strategy Algorithm Design

1. Determine the K-direction tile sizes selectable from Table 2 for the input K, which should satisfy […], and put all available K-direction tile sizes into priority queue A, where a larger tile size is given higher priority.

2. Pop the highest-priority K-direction tile size from priority queue A.

3. Determine the M- and N-direction tile sizes selectable from Table 1, where the corresponding index_mn must not exceed the index_mn_max of the popped K-direction tile size, and put all available combinations of M- and N-direction tile sizes into priority queue B, where a smaller combination is given higher priority. This ensures that the blocks of the input and output matrices do not overflow the L0A, L0B, and L0C buffers.

4. Compare aicore_num with the threshold. If aicore_num is smaller than the threshold, the current tile sizes along M, N, and K are selected as the final tiling solution. Otherwise, (i) if the current combination is not the last element in queue B, pop the next combination from queue B and repeat step 4; (ii) if it is the last element in queue B, pop the next K-direction tile size from queue A and return to step 3. If that K-direction tile size is already the last element in queue A, the current tile sizes along M, N, and K are selected as the final solution, which should satisfy […].
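The selection procedure above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the tile values are taken from Tables 1 and 2, but the order of the M and N columns, the admissibility test on the K-direction tile size (`k_allowed`), and the way `aicore_num` is computed (`block_count`) are assumptions introduced here, since the text does not spell them out.

```python
from math import ceil
from typing import Callable, List, Tuple

# Table 1: index_mn -> (tile_m, tile_n). The M/N column order is an assumption.
MN_TILES: List[Tuple[int, int]] = [(16, 16), (32, 32), (64, 64), (128, 128), (256, 128)]
# Table 2: index_k -> (tile_k, index_mn_max).
K_TILES: List[Tuple[int, int]] = [(128, 4), (256, 3), (512, 2), (1024, 1)]


def block_count(m: int, n: int, tile_m: int, tile_n: int) -> int:
    """Hypothetical stand-in for aicore_num: number of output blocks."""
    return ceil(m / tile_m) * ceil(n / tile_n)


def select_tiling(
    m: int,
    n: int,
    k: int,
    threshold: int,
    aicore_num_fn: Callable[[int, int, int, int], int] = block_count,
    # Hypothetical admissibility rule; the source's actual constraint on K is not given.
    k_allowed: Callable[[int, int], bool] = lambda tile_k, k: tile_k <= k,
) -> Tuple[int, int, int]:
    """Return (tile_m, tile_n, tile_k) following steps 1 to 4 of the text."""
    # Step 1: queue A holds admissible K tiles, larger tile first.
    queue_a = sorted((t for t in K_TILES if k_allowed(t[0], k)), key=lambda t: -t[0])
    if not queue_a:
        raise ValueError("no admissible K-direction tile size for this K")

    chosen = None
    for tile_k, index_mn_max in queue_a:            # Step 2: pop from queue A
        # Step 3: queue B holds (tile_m, tile_n) with index_mn <= index_mn_max,
        # smaller combination first.
        queue_b = MN_TILES[: index_mn_max + 1]
        for tile_m, tile_n in queue_b:              # Step 4: test each combination
            chosen = (tile_m, tile_n, tile_k)
            if aicore_num_fn(m, n, tile_m, tile_n) < threshold:
                return chosen
    # Both queues exhausted: keep the last combination examined.
    return chosen


if __name__ == "__main__":
    print(select_tiling(4096, 4096, 4096, threshold=1000))
```

Passing `aicore_num_fn` and `k_allowed` as parameters keeps the loop structure separate from the two quantities the text leaves undefined, so either can be swapped for the real rule without touching the queue logic.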

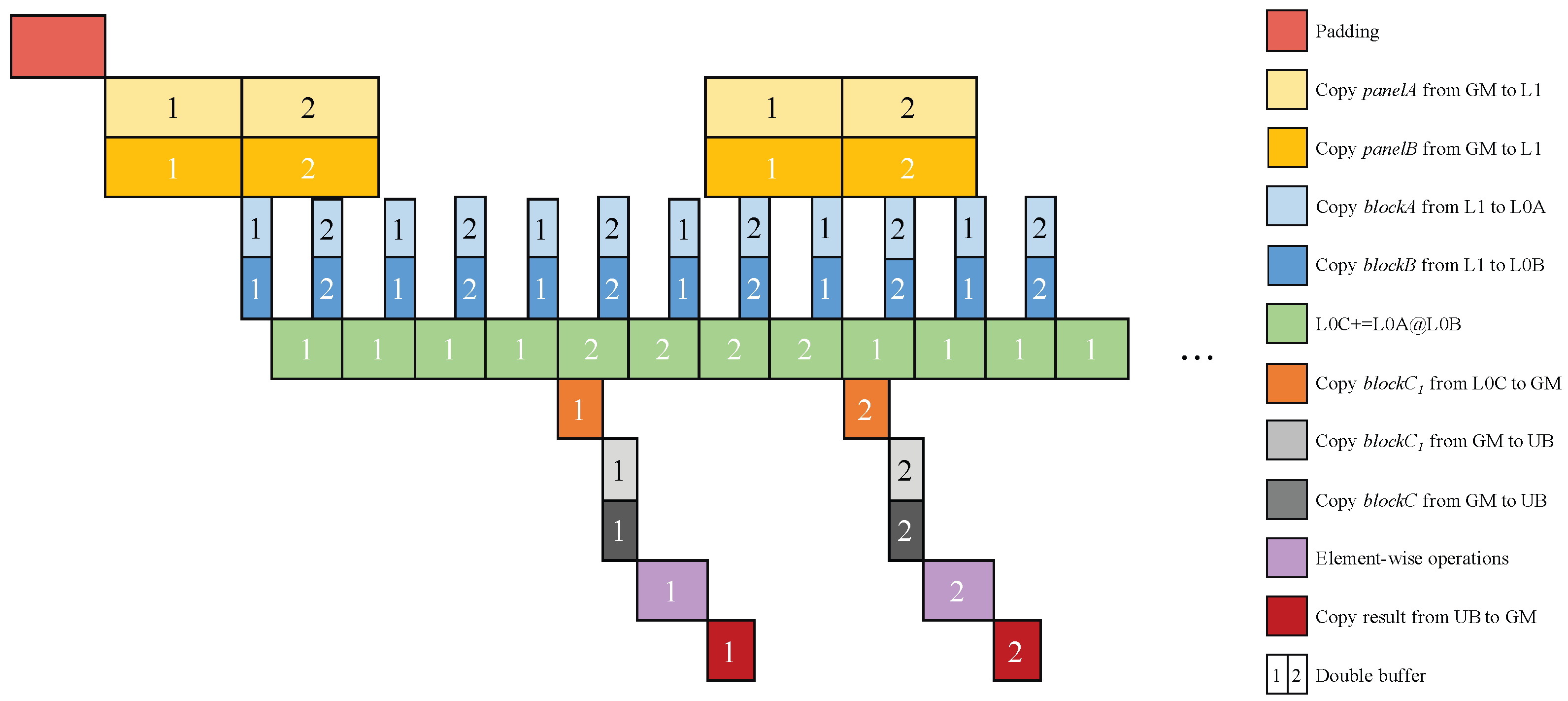

4.3. Pipelining Optimizations on HGEMM

4.3.1. Block-Wise Pipelining Optimizations on HGEMM

4.3.2. ShuffleK: Eliminating Memory Access Conflicts in HGEMM

4.3.3. SplitK: Utilizing AI Core Occupancy for Small Matrix Scenarios
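As stated in the contributions, SplitK targets small-matrix scenarios in which the workload is too small to occupy all AI Cores. The general split-K idea can be illustrated with a minimal pure-Python sketch: the K dimension is cut into chunks, each chunk yields an independent partial product that could be assigned to a separate core, and the partial results are summed at the end. The function names, the even chunking, and the final reduction step are assumptions made for illustration; how the actual kernel distributes chunks and reduces partial results on Ascend is not described here.

```python
from math import ceil
from typing import List

Matrix = List[List[float]]


def matmul_k_range(a: Matrix, b: Matrix, k_start: int, k_end: int) -> Matrix:
    """Partial product of a (M x K) and b (K x N) over the slice [k_start, k_end) of K."""
    m, n = len(a), len(b[0])
    c = [[0.0] * n for _ in range(m)]
    for i in range(m):
        for j in range(n):
            c[i][j] = sum(a[i][p] * b[p][j] for p in range(k_start, k_end))
    return c


def split_k_matmul(a: Matrix, b: Matrix, splits: int) -> Matrix:
    """Compute a @ b by splitting K into `splits` chunks and summing the partials."""
    if splits < 1:
        raise ValueError("splits must be at least 1")
    k = len(b)
    chunk = ceil(k / splits)
    # Each partial product is independent; on hardware, each could run on its own core.
    partials = [
        matmul_k_range(a, b, start, min(start + chunk, k))
        for start in range(0, k, chunk)
    ]
    m, n = len(a), len(b[0])
    # Reduction: element-wise sum of all partial results.
    return [[sum(p[i][j] for p in partials) for j in range(n)] for i in range(m)]


if __name__ == "__main__":
    a = [[1, 2, 3, 4, 5], [6, 7, 8, 9, 10]]
    b = [[1, 0], [0, 1], [1, 1], [2, 0], [0, 2]]
    print(split_k_matmul(a, b, splits=2) == matmul_k_range(a, b, 0, 5))
```

When M and N are small, the number of output blocks can fall below the number of cores; splitting K multiplies the number of independent work items at the cost of one extra reduction.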

5. Evaluation

5.1. Experimental Setup

5.2. HGEMM Performance on General Random Workloads

5.3. HGEMM Performance on HPL-MxP-Based Benchmark Workloads

5.4. HGEMM Performance on OPT-Inspired Workloads

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| index_mn | Tile size along M | Tile size along N |
|---|---|---|
| 0 | 16 | 16 |
| 1 | 32 | 32 |
| 2 | 64 | 64 |
| 3 | 128 | 128 |
| 4 | 256 | 128 |

| index_k | Tile size along K | index_mn_max |
|---|---|---|
| 0 | 128 | 4 |
| 1 | 256 | 3 |
| 2 | 512 | 2 |
| 3 | 1024 | 1 |

| | Huawei Atlas 300T A2 | Nvidia A800 |
|---|---|---|
| Buffers | L1 512 KB (per core) | L1 192 KB (per SM) |
| Caches | L2 192 MB | L2 40 MB |
| Memory | 64 GB HBM2e | 80 GB HBM2e |
| Memory Bandwidth | 1.6 TB/s | 2.04 TB/s |
| Theoretical FP16 Computability | 280 TFLOPS | 312 TFLOPS ¹ |
| Programming languages | Ascend C | C++ |
| Compilers | Bisheng and gcc | NVCC |
| Software version | CANN 8.0.0 | cuBLAS 12.6 |
| GEMM implementation | (1) Our proposed HGEMM (2) GemmExample | cublasHgemm |

| Kernel | Hardware | Maximum TFLOPS | Average TFLOPS | Average Speedup |
|---|---|---|---|---|
| GemmExample | Huawei Atlas 300T A2 | 69.10 | 51.25 | 1.0× |
| cublasHgemm | Nvidia A800 | 292.89 | 86.86 | 1.7× |
| Our proposed HGEMM | Huawei Atlas 300T A2 | 259.19 | 182.62 | 3.6× |

| M | cublasHgemm TFLOPS | cublasHgemm Duration | GemmExample TFLOPS | GemmExample Duration | Our Proposed HGEMM TFLOPS | Our Proposed HGEMM Duration |
|---|---|---|---|---|---|---|
| 8 | 12.48 | 263.55 | 2.54 | 1292.42 | 5.97 | 550.90 |
| 16 | 25.47 | 258.18 | 4.93 | 1333.13 | 12.86 | 511.33 |
| 32 | 49.16 | 267.58 | 9.28 | 1417.23 | 26.13 | 503.34 |
| 64 | 88.81 | 296.22 | 19.73 | 1333.62 | 52.37 | 502.37 |
| 128 | 143.85 | 365.76 | 35.33 | 1489.37 | 104.72 | 502.40 |
| 256 | 190.37 | 552.74 | 36.89 | 2852.70 | 197.55 | 532.67 |
| 512 | 243.56 | 864.06 | 37.47 | 5617.02 | 244.00 | 862.50 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Zhang, E.; Xu, P.; Lu, L. Efficient Low-Precision GEMM on Ascend NPU: HGEMM’s Synergy of Pipeline Scheduling, Tiling, and Memory Optimization. Computers 2026, 15, 39. https://doi.org/10.3390/computers15010039
