Development of an Educational Application for Software Engineering Learning

Abstract

1. Introduction

2. Architecture and Data Model

- User table. Stores profile information and manages the three types of users present in the application.

- Topic table. Stores the information of the topics present in the application and their description.

- Question table. Stores the information of the question repository present in the application.

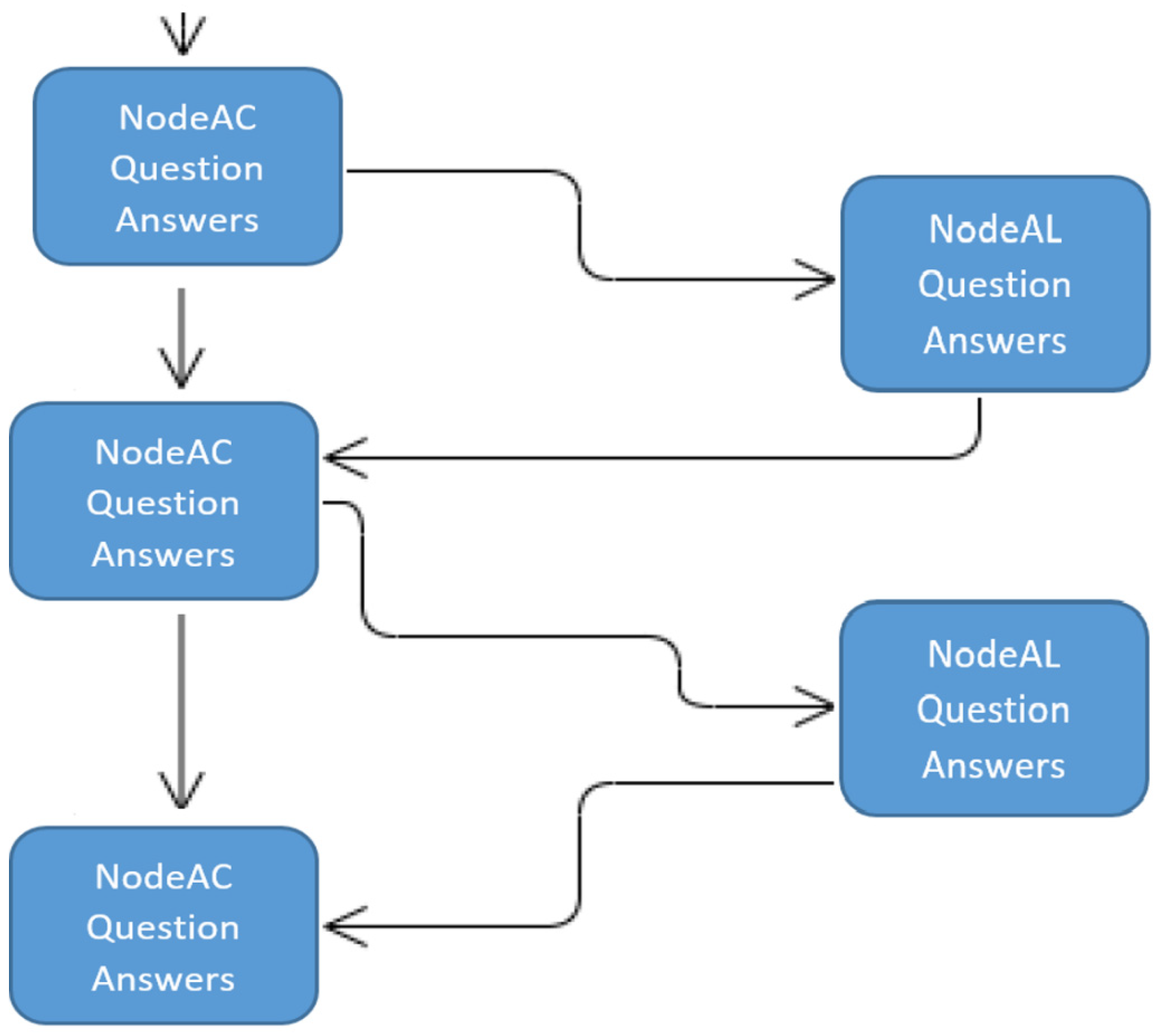

- NodoCA table. Represents a node in the tree that contains an exam question that will be shown to the student user. It contains information about the question, a pointer to the node with the alternative question, a pointer to the topic that the question refers to, and a pointer to the teacher user who created the question.

- Alternative node table. Represents the alternative question that is shown in the exam if the student fails the main question. Like NodoCA, this entity is a node in the tree structure that forms the learning path.

- Achievements table. Stores the level obtained by a student user in a given topic, so it is related to both the User and Topic tables.

- Answer table. Represents the information of an answer to a specific question, so it contains a pointer to the question to which it belongs.

- Statistics table. Stores the results that a student has obtained in a given exam, that is, the number of correct and failed answers and the average response time.

- Question_Suspended table. Stores the ids of the questions that a student has failed in a given exam.
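The relationship between a NodoCA node and its alternative node can be illustrated with a minimal sketch. The class and field names below (`question_id`, `topic_id`, `teacher_nick`, `questions_to_show`) are hypothetical and chosen only for illustration; the actual schema is the one described in the tables above.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class AlternativeNode:
    """Alternative question, shown only if the main question is failed."""
    question_id: int


@dataclass
class NodoCA:
    """One exam node: a main question plus pointers to related records."""
    question_id: int      # pointer to the main question
    topic_id: int         # pointer to the topic the question refers to
    teacher_nick: str     # pointer to the teacher who created the question
    alternative: Optional[AlternativeNode] = None


def questions_to_show(node: NodoCA, main_answered_correctly: bool) -> list[int]:
    """Return the ids of the questions a student sees for this node."""
    shown = [node.question_id]
    # The alternative question only appears after a failed main question.
    if not main_answered_correctly and node.alternative is not None:
        shown.append(node.alternative.question_id)
    return shown
```

For example, a node with main question 1 and alternative question 2 yields only question 1 when the student answers correctly, and questions 1 and 2 otherwise.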

- User module. It contains all the services related to the user’s profile, login and registration, the achievements and milestones of the students, and their statistics. The base URL on which these services are mounted is “/user”. The description of the available endpoints is shown in Table 1.

- Question module. It contains all the services related to the questions of the repository shared by all teachers. The base URL on which these services are mounted is “/question”. The description of the available endpoints is shown in Table 2.

- Topic module. It contains all the services related to the topics of the repository shared by all users. The base URL on which these services are mounted is “/topic”. The description of the available endpoints is shown in Table 3.

- NodoCA module. It contains all the services related to the nodes that together will represent a complete exam, that is, the tree corresponding to a learning path created by a teacher. The base URL on which these services will be mounted will be “/nodeca”. The description of the available endpoints is shown in Table 4.
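As an illustration of how the module base URLs combine with the endpoint paths listed in Tables 1 to 4, the following sketch builds a full path from a module name and a path template. The helper `endpoint_url` and the dictionary `BASE_URLS` are hypothetical names introduced here; only the base URLs and the path templates come from the description above.

```python
from urllib.parse import quote

# Base URLs of the four service modules, as described in the text.
BASE_URLS = {
    "user": "/user",
    "question": "/question",
    "topic": "/topic",
    "nodeca": "/nodeca",
}


def endpoint_url(module: str, path: str, **params: str) -> str:
    """Join a module base URL with a path template such as '/{nick}'."""
    # Percent-encode each parameter so it is safe inside a URL path segment.
    encoded = {name: quote(str(value), safe="") for name, value in params.items()}
    return BASE_URLS[module] + path.format(**encoded)


# Path templates taken from Table 1 and Table 4; the values are made up.
reset_url = endpoint_url("user", "/level/{nick}/reset", nick="ana")
exam_url = endpoint_url(
    "nodeca",
    "/getCA/{nick}/{tema}/{nivel}/{language}",
    nick="ana", tema="3", nivel="bronze", language="es",
)
```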

3. Functionality

3.1. The Quiz

- If the user has the initial level and obtains a score ≥5 in the exam, they move up to the bronze level;

- If the user has the bronze level and obtains a score ≥7 in the exam, they move up to the silver level;

- If the user has the silver level and obtains a score ≥8 in the exam, they move up to the gold level.
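The promotion rules above can be sketched as a small function. The names `LEVELS`, `PASS_SCORE` and `next_level` are hypothetical, and the sketch assumes that a score below the threshold, or an exam taken at gold level, leaves the level unchanged, which the rules do not state explicitly.

```python
# Levels in ascending order, and the minimum score needed to leave each one.
LEVELS = ["initial", "bronze", "silver", "gold"]
PASS_SCORE = {"initial": 5, "bronze": 7, "silver": 8}


def next_level(current: str, score: float) -> str:
    """Return the level a student holds after an exam with the given score."""
    threshold = PASS_SCORE.get(current)
    if threshold is not None and score >= threshold:
        return LEVELS[LEVELS.index(current) + 1]
    # Assumption: no promotion means the level stays the same.
    return current
```

For instance, a bronze student who scores 6.9 remains at bronze, while a score of 7 promotes the student to silver.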

3.2. Student

- Take an exam. Figure 3 shows the screen where the different content modules appear. When clicking on a module, the user is asked whether they want to take an exam to level up and, if they accept, the exam is shown. To do this, the student clicks on the “Game” (“Juego”) menu tab, which displays all the available topics and the student’s level in each of them. Clicking on a topic lets the student take an exam to obtain the next level in that topic; to start it, the student must click on the “Play” (“Jugar”) button. If there are published exams for that topic and level, the student can take the exam. Otherwise, the application returns to the initial screen and reports that there are no exams in the repository for obtaining the next level.

- View statistics. Figure 4 shows the screen listing the modules that a user has completed. Clicking on an exam shows the statistics of each module and the incorrect answers for each exam taken. The user can see the statistics of the exams taken, the average response time and the level obtained in each topic. To do this, the user must go to the Ranking screen of the menu, where a list of all students is displayed, and click on their own profile or that of any other student to see the levels in each topic. Students can see only their own statistics, not those of other students. In addition, clicking on the “Show Fails” (“Mostrar Suspensos”) button displays a summary of the exams that the student has failed; clicking on a specific exam displays its content and the failed questions.

- Reset state. The student can reset the game counter and clear all the statistics and exams taken. To do this, the student must click on the “Reset Score and Achievements” (“Resetear Puntuación y Logros”) button on the main screen.

3.3. Teacher

- Check the status of a student. It is possible to view the ranking and the statistics associated with any student registered in the application.

- Manage question repository. Figure 5 shows the teacher’s screen with all the content modules. Clicking on a module displays another screen where the teacher can create or edit exams for that module. When a teacher accesses the “Game” (“Juego”) menu tab, the course topics are listed without levels associated with each topic. Clicking on a topic displays a screen with the following actions:

- Add question. Figure 6b shows the teacher’s screen from which a new question can be created and added to the question repository. To do this, the teacher clicks on the “Create Question” (“Crear Pregunta”) button, which displays a new screen where the data for the question are entered, and then clicks on the “Save” (“Guardar”) button. The question is stored in the repository shared by all teacher users. Any teacher can modify or delete the questions in the repository, or use them to create an exam.

- Create a question repository. Figure 6a shows the teacher’s screen from which a question repository can be created. To do this, the teacher clicks on the “Create repository” (“Crear Repositorio”) button, which displays a form where the number of questions in the repository must be indicated. The question creation process is then repeated once for each of the questions indicated.

- Delete a question. The teacher clicks on the “Delete Question” (“Borrar Pregunta”) button, which shows a screen with all the questions available in the teachers’ common repository. Clicking on a question prompts the user to confirm the deletion. If confirmed, the question is permanently deleted from the repository. If the question is used in an exam, it cannot be deleted, and an informational message is displayed to the user.

- Modify question. The teacher clicks on the “Modify Question” (“Modificar Pregunta”) button, and a screen appears with all of the questions available in the teachers’ common repository. Clicking on a question opens the same screen used for “Add Question”, with the data already filled in. The teacher then modifies the data and clicks on the “Save” (“Guardar”) button to finish.

- Create an exam. Figure 7a shows the list of exams that have not been published, and Figure 7b shows the screen for creating a new exam. The teacher clicks on the “Create CA” (“Crear CA”) button, and a screen is displayed where the level of the exam (bronze, silver or gold) must be indicated. Next, the question repository is shown, and the following step is repeated 10 times: choose a main question and then an alternative question. The repository must have at least 11 questions in order to create an exam.

- Modify exam. Figure 7c shows the screen for modifying an exam and Figure 7d shows the screen that allows you to delete a specific exam. The teacher clicks on the “Modify CA” (“Modificar CA”) button, which shows a summary of the exams that have not yet been published. Clicking on one of them displays all the information of the exam, with the question repository below it. When one of the exam questions is selected, the application asks whether the user would like to replace the main question or the alternative one. The user must then select a question from the repository to replace the previous one and, finally, click on the “Modify” (“Modificar”) button.

- Delete exam. Figure 7d shows the screen that allows you to delete a specific exam. The teacher clicks on the “Delete CA” (“Borrar CA”) button, which shows a summary of all published and unpublished exams. Clicking on an exam displays all of its information. The teacher must then click on the “Delete” (“Borrar”) button to delete it from the system.

- Publish exam. The teacher clicks on the “Publish CA” (“Publicar CA”) button, which shows a summary of all unpublished exams. Clicking on an exam displays all of its information. The teacher must then click on the “Publish” (“Publicar”) button to publish it in the system.
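The constraints described in the list above (an exam level of bronze, silver or gold, 10 pairs of main and alternative questions, a repository of at least 11 questions, and no deletion of questions used in an exam) can be sketched as follows. All names (`Exam`, `create_exam`, `can_delete_question`) are hypothetical, and the sketch does not check whether a question may be reused within an exam, since the text does not specify it.

```python
from dataclasses import dataclass

QUESTIONS_PER_EXAM = 10     # main/alternative pairs per exam
MIN_REPOSITORY_SIZE = 11    # minimum repository size stated in the text
EXAM_LEVELS = {"bronze", "silver", "gold"}


@dataclass
class Exam:
    level: str
    pairs: list            # list of (main_question_id, alternative_question_id)
    published: bool = False  # a new exam stays unpublished until "Publish CA"


def create_exam(level, pairs, repository_ids):
    """Validate the inputs and return a new, unpublished exam."""
    if level not in EXAM_LEVELS:
        raise ValueError(f"unknown exam level: {level}")
    if len(repository_ids) < MIN_REPOSITORY_SIZE:
        raise ValueError("the repository needs at least 11 questions")
    if len(pairs) != QUESTIONS_PER_EXAM:
        raise ValueError("an exam needs exactly 10 question pairs")
    for main_id, alt_id in pairs:
        if main_id not in repository_ids or alt_id not in repository_ids:
            raise ValueError("every question must come from the repository")
    return Exam(level=level, pairs=list(pairs))


def can_delete_question(question_id, exams):
    """A question used in some exam (main or alternative) cannot be deleted."""
    return all(question_id not in pair for exam in exams for pair in exam.pairs)
```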

3.4. Administrator

- Deactivate teacher. Figure 8a shows the screen where a teacher can be deactivated. The administrator must search for the user to be deactivated, select that user and click on the “Unsubscribe Users” (“Dar de baja usuarios”) button.

- Activate teacher. Figure 8b shows how to activate a teacher. The process is the following. If there is a teacher user pending activation, when the administrator logs in a notification is displayed. Next, to activate the user, the administrator must click on the notification, and a screen appears where the activation must be confirmed by clicking “Accept” (“Aceptar”).

3.5. Other Common Functions

- Login. Figure 9a shows the login screen. The user must already be registered in the application. On the login screen, the username and password must be entered. When the session starts, the user’s home page is displayed. At any time, the user can log out and return to the login screen using the “Log out” (“Cerrar sesión”) button.

- Register. Figure 9b shows the screen for editing a user’s profile. The process is as follows: the user clicks on the “Register” (“Registro”) button that appears on the login screen and enters the requested data on the screen that appears. In particular, the user must indicate whether they are registering as a teacher or as a student.

- Edit profile. Figure 9c shows the screen for registering. The process is as follows: the user clicks on the edit button that appears on the user’s home page, and a screen is displayed with the user’s data that can be modified (password, image and others). To confirm the changes, the user must click on the “Update” (“Actualizar”) button.

- Unsubscribe user. Figure 10c shows the screen to unsubscribe from the application. To do this, the user must click on the “Unsubscribe” (“Dar de baja”) button that appears on the user’s home page and confirm the action.

- Check ranking. Figure 10a shows the application options menu and Figure 10b shows the user profile edit screen. To do this, the user must click on the menu located at the top right of the user’s home page. As a result, a list of students is shown; selecting one of them displays the name, nick, email and percentage of the game completed.
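The ranking list can be illustrated with a short sketch. Table 1 states that the student list is returned sorted by percentage; the descending order used here, as well as the names `Student` and `ranking`, are assumptions made only for this example.

```python
from dataclasses import dataclass


@dataclass
class Student:
    # Fields shown when a student is selected in the ranking list.
    name: str
    nick: str
    email: str
    percentage: float  # percentage of the game completed


def ranking(students):
    """Return the students ordered by completion percentage.

    Assumption: the highest percentage comes first.
    """
    return sorted(students, key=lambda s: s.percentage, reverse=True)


# Made-up sample data for illustration only.
sample = [
    Student("Ana", "ana", "ana@example.org", 40.0),
    Student("Luis", "luis", "luis@example.org", 75.0),
    Student("Eva", "eva", "eva@example.org", 10.0),
]
ordered_nicks = [s.nick for s in ranking(sample)]
```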

4. Evaluation

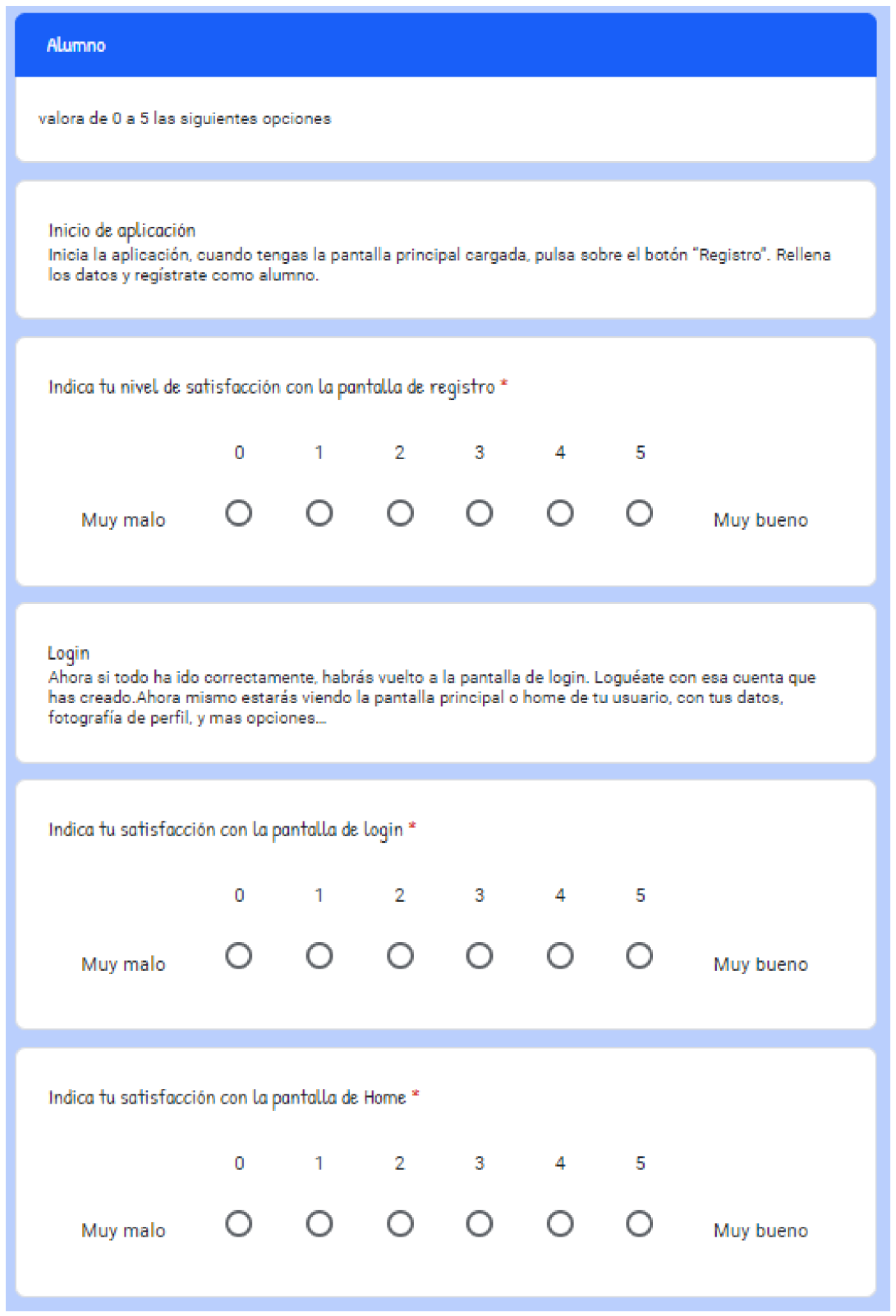

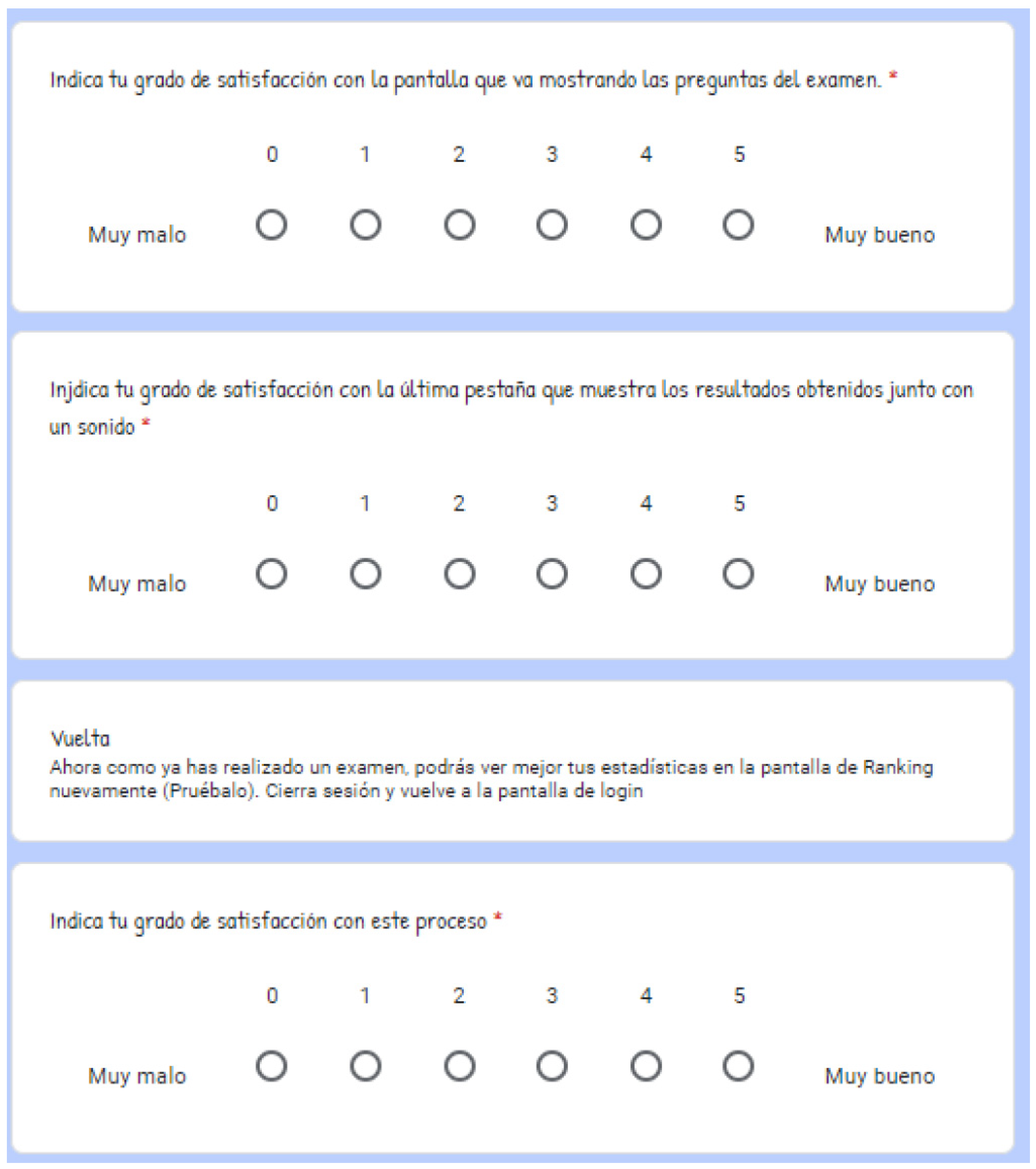

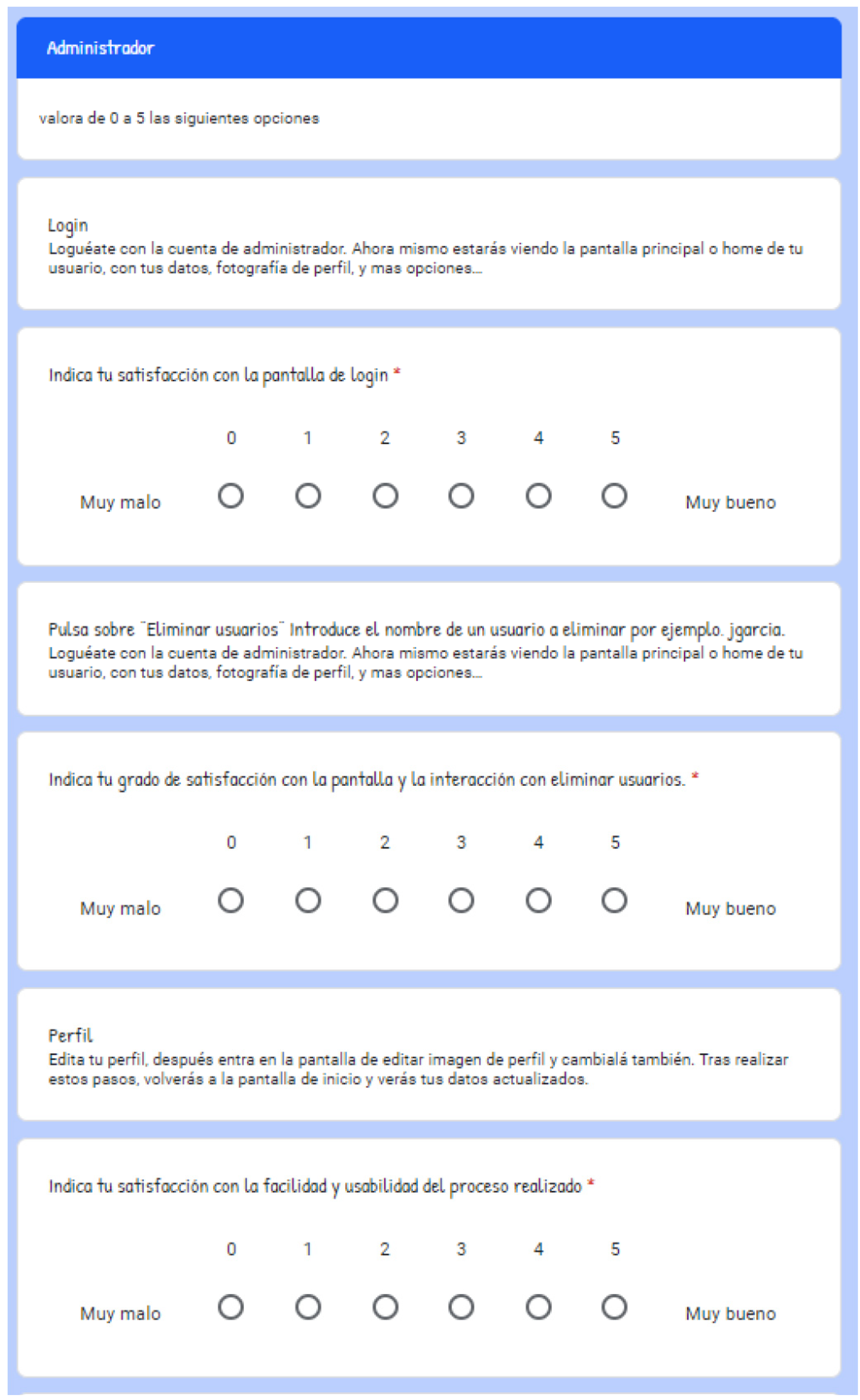

- The first block of questions shows general questions related to age, relationship with university, and gender.

- The second block shows questions related to the user’s role. At this point, the user must follow the steps indicated before evaluating the questions.

- View the screen horizontally.

- Eliminate background sound when pressing.

- Improve the colors and design of the interface.

- Improve the editing of an exam.

- Improve the display of some texts in the application.

- Improve the color and font of some texts.

- Improve the identification of possible answers in question tests.

5. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

- (b) Assessment questions for a registered teacher role (Figures A6, A7, A8, A9 and A10).

- (c) Assessment questions for an administrator role (Figures A11 and A12).

Table 1. Endpoints of the User module (base URL “/user”).

| Endpoint | HTTP Method | Response | Description |
|---|---|---|---|
| / | POST | Error code (200 or 400) with msg | Inserts a user in the DB |
| /{nick} | GET | Error code (200 or 400) with user object in body | Gets a user from the DB |
| / | GET | Error code (200 or 400) with users | Gets the list of registered users |
| /{nick} | PUT | Error code (200 or 400) with msg | Updates a user in the DB |
| /level/{nick}/reset | PUT | Error code with msg | Updates user statistics |
| /{nick} | DELETE | Error code with msg | Deletes a user from the DB |
| /teachers | GET | Error code with msg and user (teacher) objects in JSON | Gets all teachers whose registration request has not yet been approved by the admin |
| /students | GET | Error msg and user (student) objects in JSON | Gets all student users sorted by percentage |
| /activate/{nick} | PUT | Msg with error code | Activates a teacher user |
| /porc/{nick}/{porcentaje} | PUT | Msg with error code | Updates a user’s game completion percentage |
| /picture/{nick} | POST | Msg with error code | Updates a user’s profile picture |
| /pictures | GET | User images | Gets the profile images of the students |
| /level/{user}/{IdTema} | GET | Error msg along with user level | Gets the level of a student user in a given topic |
| /levels/{user} | GET | Error msg along with array of levels per topic | Gets all levels of a user per topic |
| /level/increment/{nick}/{idTema} | PUT | Msg with response code | Increases the level of a user in a given topic |
| /level/decrement/{nick}/{idTema} | PUT | Msg with response code | Decreases the level of a user in a given topic |
| /stadistics/{nick}/{nodoCA} | PUT | Msg with response code | Updates a user’s statistics based on their current level |
| /stadistics/{user} | GET | Msg with response code and, if ok, array of user statistics | Gets all user statistics for all levels |
| /exam/suspended/{user} | GET | Msg with response code and, if ok, exam object array | Gets the failed exams of a student |
| /{idUsuario}/{idNodoCa} | DELETE | Msg with response code | Removes a user’s statistics |

Table 2. Endpoints of the Question module (base URL “/question”).

| Endpoint | HTTP Method | Response | Description |
|---|---|---|---|
| / | POST | Error code (200 or 400) with msg | Inserts a question in the DB |
| /questions/{idTema} | GET | Error code (200 or 400) with question object array | Gets all questions for a given topic |
| /questions/{idTema}/{language} | GET | Error code (200 or 400) with question object array | Gets all questions for a given topic and language |
| /{id} | GET | Error code (200 or 400) with msg and, if ok, question object | Gets a question |
| / | PUT | Error code with msg | Updates all data for a question |
| /{id} | DELETE | Error code with msg | Deletes a question with its answers from the DB |
| /suspended/{userNick}/{nodoCa} | GET | Error code with msg and question object array | Gets the list of failed questions in an exam |

Table 3. Endpoints of the Topic module (base URL “/topic”).

| Endpoint | HTTP Method | Response | Description |
|---|---|---|---|
| / | GET | Error code (200 or 400) with msg and, if ok, topic object array | Gets all topics |
| /{id} | GET | Error code (200 or 400) with msg and, if ok, topic object | Gets a topic |

Table 4. Endpoints of the NodoCA module (base URL “/nodeca”).

| Endpoint | HTTP Method | Response | Description |
|---|---|---|---|
| / | POST | Error code with msg | Inserts a node in the DB |
| /saveCA | GET | Error code with msg | Adds a complete CA, that is, saves an exam in the DB |
| /getCA/{nick}/{tema}/{nivel}/{language} | GET | Error code (200 or 400) with question object array | Gets a complete, random, published CA for a given topic, level (initial, bronze, silver, gold) and language |
| /list/nopublished/{tema}/{teacher}/{language} | GET | Error code with msg and, if ok, full nodeca object array (tree structure) | Gets all unpublished CAs (exams) of a teacher in a given topic |
| /list/{tema}/{teacher}/{language} | GET | Error code with msg and, if ok, full nodeca object array (tree structure) | Gets all the CAs of a teacher in a given topic |
| /{id} | GET | Error code with msg and, if ok, CA node object without children | Gets a CA node |
| /public/{idParent} | PUT | Error code with msg | Publishes a CA or exam (the parameter is the id of the parent node) |
| /{idParent} | DELETE | Error code with msg | Deletes an exam from the DB |
| /original/{idParent}/{idNodoASust}/{idQuestionaAn} | PUT | Error code with msg | Updates a CA by modifying the original question: {idQuestioASust} is the id of the question to replace and {idQuestionaAn} is the id of the question to add |
| /alt/{idParent}/{idNodoASust}/{idQuestionaAn} | PUT | Error code with msg | Updates a CA by modifying the alternative question: {idQuestioASust} is the id of the question to replace and {idQuestionaAn} is the id of the question to add |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Sarasa-Cabezuelo, A.; Rodrigo, C. Development of an Educational Application for Software Engineering Learning. Computers 2021, 10, 106. https://doi.org/10.3390/computers10090106
