Identifying Ovarian Cancer in Symptomatic Women: A Systematic Review of Clinical Tools

Simple Summary

Abstract

1. Introduction

2. Methods

2.1. Eligibility Criteria and Searches

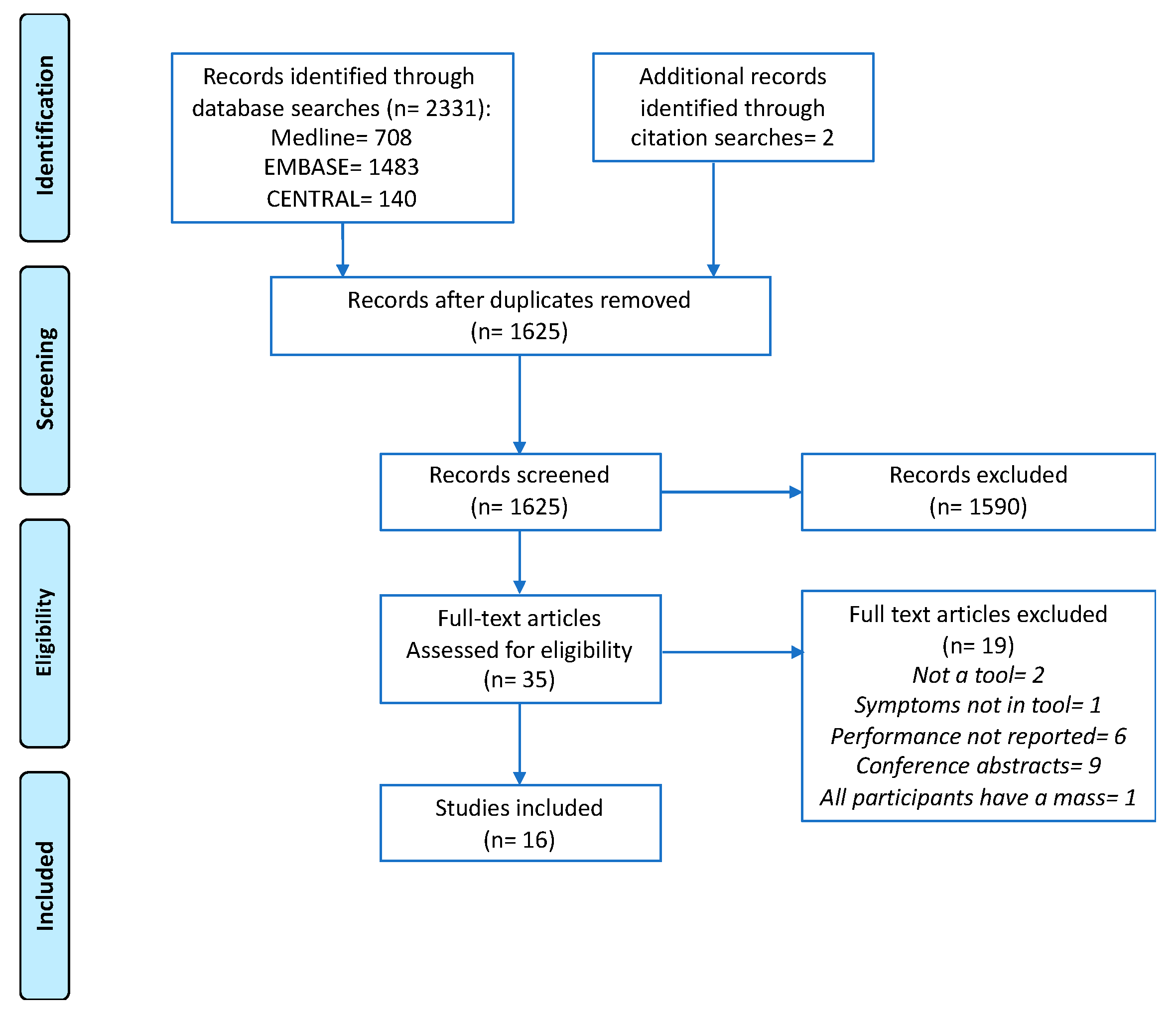

2.2. Study Selection

2.3. Data Extraction and Synthesis

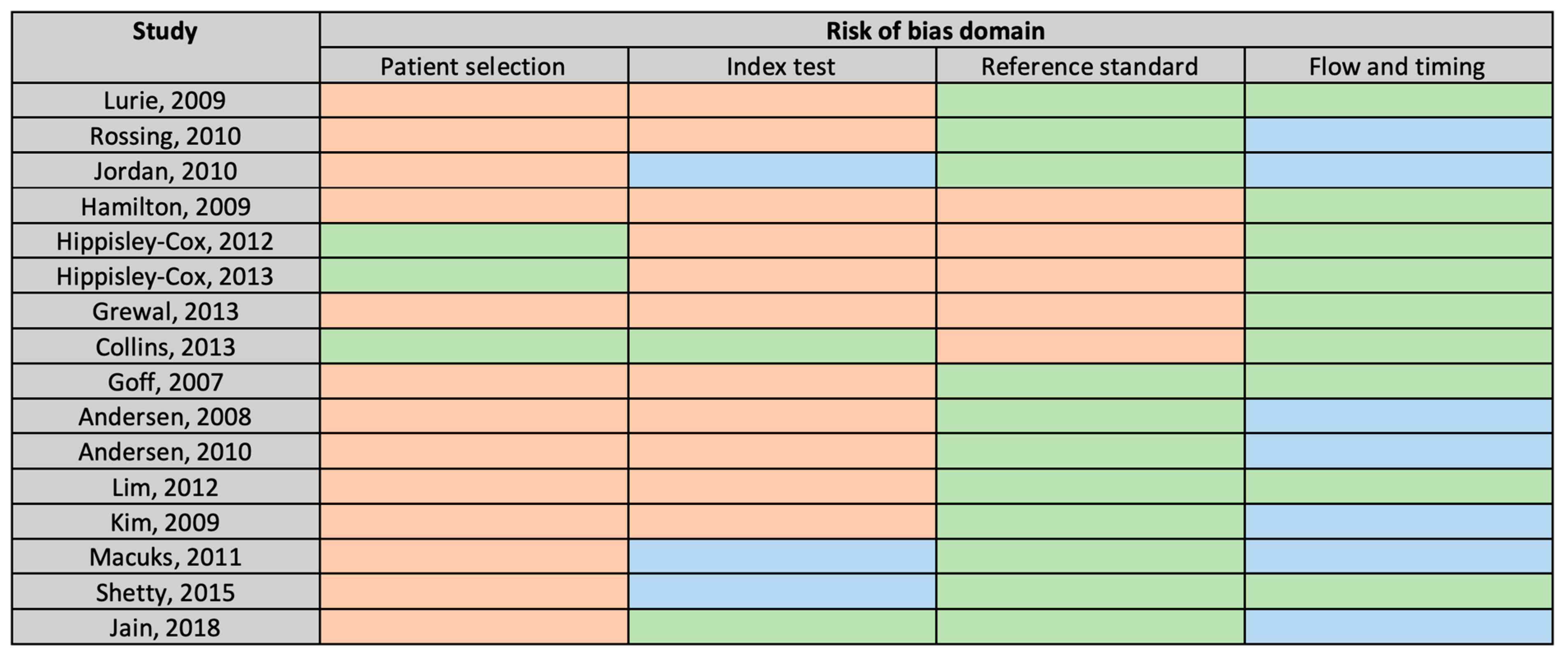

2.4. Risk of Bias Assessment

3. Results

3.1. Study Selection

3.2. Study Characteristics

3.3. Risk of Bias

4. Tool Variables

4.1. Evaluation of Tool Performance

4.2. Tool Diagnostic Accuracy

4.2.1. Hospital Setting

4.2.2. Population Setting

4.2.3. Primary Care

4.2.4. Positive Predictive Values

5. Discussion

5.1. Study Strengths and Limitations

5.2. Comparison of Tools

5.3. Clinical Relevance

6. Conclusions

Supplementary Materials

Author Contributions

Funding

Conflicts of Interest

References

1. Bray, F.; Ferlay, J.; Soerjomataram, I.; Siegel, R.L.; Torre, L.A.; Jemal, A. Global cancer statistics 2018: GLOBOCAN estimates of incidence and mortality worldwide for 36 cancers in 185 countries. CA Cancer J. Clin. 2018, 68, 394–424.

2. Cancer Research UK. Ovarian Cancer Survival Statistics. Available online: http://www.cancerresearchuk.org/health-professional/cancer-statistics/statistics-by-cancer-type/ovarian-cancer/survival#heading-Three (accessed on 20 May 2020).

3. Jacobs, I.J.; Menon, U.; Ryan, A.; Gentry-Maharaj, A.; Burnell, M.; Kalsi, J.K.; Amso, N.N.; Apostolidou, S.; Benjamin, E.; Cruickshank, D.; et al. Ovarian cancer screening and mortality in the UK Collaborative Trial of Ovarian Cancer Screening (UKCTOCS): A randomised controlled trial. Lancet 2016, 387, 945–956.

4. Pinsky, P.F.; Yu, K.; Kramer, B.S.; Black, A.; Buys, S.S.; Partridge, E.; Gohagan, J.; Berg, C.D.; Prorok, P.C. Extended mortality results for ovarian cancer screening in the PLCO trial with median 15 years follow-up. Gynecol. Oncol. 2016, 143, 270–275.

5. Barrett, J.; Sharp, D.J.; Stapley, S.; Stabb, C.; Hamilton, W. Pathways to the diagnosis of ovarian cancer in the UK: A cohort study in primary care. BJOG 2010, 117, 610–614.

6. National Cancer Intelligence Network. Routes to Diagnosis 2006–2016 by year, V2.1a. Available online: http://www.ncin.org.uk/publications/routes_to_diagnosis (accessed on 21 May 2020).

7. Funston, G.; Melle, V.M.; Ladegaard Baun, M.-L.; Jensen, H.; Helsper, C.; Emery, J.; Crosbie, E.; Thompson, M.; Hamilton, W.; Walter, F.M. Variation in the initial assessment and investigation for ovarian cancer in symptomatic women: A systematic review of international guidelines. BMC Cancer 2019, 19, 1028.

8. Ebell, M.H.; Culp, M.B.; Radke, T.J. A Systematic Review of Symptoms for the Diagnosis of Ovarian Cancer. Am. J. Prev. Med. 2016, 50, 384–394.

9. Gilbert, L.; Basso, O.; Sampalis, J.; Karp, I.; Martins, C.; Feng, J.; Piedimonte, S.; Quintal, L.; Ramanakumar, A.V.; Takefman, J.; et al. Assessment of symptomatic women for early diagnosis of ovarian cancer: Results from the prospective DOvE pilot project. Lancet Oncol. 2012, 13, 285–291.

10. Goff, B.A.; Lowe, K.A.; Kane, J.C.; Robertson, M.D.; Gaul, M.A.; Andersen, M.R. Symptom triggered screening for ovarian cancer: A pilot study of feasibility and acceptability. Gynecol. Oncol. 2012, 124, 230–235.

11. Andersen, M.R.; Lowe, K.A.; Goff, B.A. Value of Symptom-Triggered Diagnostic Evaluation for Ovarian Cancer. Obstet. Gynecol. 2014, 123, 73–79.

12. Moons, K.G.M.; Altman, D.G.; Reitsma, J.B.; Ioannidis, J.P.A.; Macaskill, P.; Steyerberg, E.W.; Vickers, A.J.; Ransohoff, D.F.; Collins, G.S. Transparent reporting of a multivariable prediction model for individual prognosis or diagnosis (TRIPOD): Explanation and elaboration. Ann. Intern. Med. 2015, 162, W1–W73.

13. Goff, B.A.; Mandel, L.S.; Melancon, C.H.; Muntz, H.G. Frequency of symptoms of ovarian cancer in women presenting to primary care clinics. JAMA 2004, 291, 2705–2712.

14. Hamilton, W.; Peters, T.J.; Bankhead, C.; Sharp, D. Risk of ovarian cancer in women with symptoms in primary care: Population based case-control study. BMJ 2009, 339, b2998.

15. Laupacis, A.; Sekar, N.; Stiell, I.G. Clinical prediction rules: A review and suggested modifications of methodological standards. J. Am. Med. Assoc. 1997, 277, 488–494.

16. Ouzzani, M.; Hammady, H.; Fedorowicz, Z.; Elmagarmid, A. Rayyan-a web and mobile app for systematic reviews. Syst. Rev. 2016, 5, 210.

17. Usher-Smith, J.A.; Sharp, S.J.; Griffin, S.J. The spectrum effect in tests for risk prediction, screening, and diagnosis. BMJ 2016, 353, i3139.

18. Whiting, P.F.; Rutjes, A.W.S.; Westwood, M.E.; Mallett, S.; Deeks, J.J.; Reitsma, J.B.; Leeflang, M.M.G.; Sterne, J.A.C.; Bossuyt, P.M.M. QUADAS-2: A revised tool for the quality assessment of diagnostic accuracy studies. Ann. Intern. Med. 2011, 155, 529–536.

19. Lurie, G.; Thompson, P.J.; McDuffie, K.E.; Carney, M.E.; Goodman, M.T. Prediagnostic symptoms of ovarian carcinoma: A case-control study. Gynecol. Oncol. 2009, 114, 231–236.

20. Rossing, M.A.; Wicklund, K.G.; Cushing-Haugen, K.L.; Weiss, N.S. Predictive value of symptoms for early detection of ovarian cancer. J. Natl. Cancer Inst. 2010, 102, 222–229.

21. Jordan, S.J.; Coory, M.D.; Webb, P.M. Re: Predictive Value of Symptoms for Early Detection of Ovarian Cancer. JNCI J. Natl. Cancer Inst. 2010, 102, 1599–1601.

22. Hippisley-Cox, J.; Coupland, C. Identifying women with suspected ovarian cancer in primary care: Derivation and validation of algorithm. BMJ 2012, 344, d8009.

23. Hippisley-Cox, J.; Coupland, C. Symptoms and risk factors to identify women with suspected cancer in primary care: Derivation and validation of an algorithm. Br. J. Gen. Pract. 2013, 63, e11–e21.

24. Collins, G.S.; Altman, D.G. Identifying women with undetected ovarian cancer: Independent and external validation of QCancer® (Ovarian) prediction model. Eur. J. Cancer Care (Engl.) 2013, 22, 423–429.

25. Grewal, K.; Hamilton, W.; Sharp, D. Ovarian cancer prediction: Development of a scoring system for primary care. BJOG An Int. J. Obstet. Gynaecol. 2013, 120, 1016–1019.

26. Kim, M.-K.; Kim, K.; Kim, S.M.; Kim, J.W.; Park, N.-H.; Song, Y.-S.; Kang, S.-B. A hospital-based case-control study of identifying ovarian cancer using symptom index. J. Gynecol. Oncol. 2009, 20, 238–242.

27. Macuks, R.; Baidekalna, I.; Donina, S. Diagnostic test for ovarian cancer composed of ovarian cancer symptom index, menopausal status and ovarian cancer antigen CA125. Eur. J. Gynaecol. Oncol. 2011, 32, 286–288.

28. Shetty, J.; Priyadarshini, P.; Pandey, D.; Manjunath, A.P. Modified Goff Symptom Index: Simple triage tool for ovarian malignancy. Sultan Qaboos Univ. Med. J. 2015, 15, e370–e375.

29. Jain, S.; Danodia, K.; Suneja, A.; Mehndiratta, M.; Chawla, S. Symptom index for detection of ovarian malignancy in Indian women: A hospital-based study. J. Indian Acad. Clin. Med. 2018, 19, 27–32.

30. Goff, B.A.; Mandel, L.S.; Drescher, C.W.; Urban, N.; Gough, S.; Schurman, K.M.; Patras, J.; Mahony, B.S.; Robyn Andersen, M. Development of an ovarian cancer symptom index: Possibilities for earlier detection. Cancer 2007, 109, 221–227.

31. Andersen, M.R.; Goff, B.A.; Lowe, K.A.; Scholler, N.; Bergan, L.; Drescher, C.W.; Paley, P.; Urban, N. Combining a symptoms index with CA 125 to improve detection of ovarian cancer. Cancer 2008, 113, 484–489.

32. Andersen, M.R.; Goff, B.A.; Lowe, K.A.; Scholler, N.; Bergan, L.; Drescher, C.W.; Paley, P.; Urban, N. Use of a Symptom Index, CA125, and HE4 to predict ovarian cancer. Gynecol. Oncol. 2010, 116, 378–383.

33. Lim, A.W.W.; Mesher, D.; Gentry-Maharaj, A.; Balogun, N.; Jacobs, I.; Menon, U.; Sasieni, P. Predictive value of symptoms for ovarian cancer: Comparison of symptoms reported by questionnaire, interview, and general practitioner notes. J. Natl. Cancer Inst. 2012, 104, 114–124.

34. Merritt, M.A.; Green, A.C.; Nagle, C.M.; Webb, P.M.; Bowtell, D.; Chenevix-Trench, G.; Green, A.; Webb, P.; DeFazio, A.; Gertig, D.; et al. Talcum powder, chronic pelvic inflammation and NSAIDs in relation to risk of epithelial ovarian cancer. Int. J. Cancer 2008, 122, 170–176.

35. QResearch. The QResearch Database. Available online: https://www.qresearch.org (accessed on 8 January 2019).

36. THIN®. The Health Improvement Network. Available online: https://www.the-health-improvement-network.com/en/ (accessed on 9 November 2020).

37. Lowe, K.A.; Shah, C.; Wallace, E.; Anderson, G.; Paley, P.; McIntosh, M.; Andersen, M.R.; Scholler, N.; Bergan, L.; Thorpe, J.; et al. Effects of personal characteristics on serum CA125, mesothelin, and HE4 levels in healthy postmenopausal women at high-risk for ovarian cancer. Cancer Epidemiol. Biomark. Prev. 2008, 17, 2480–2487.

38. Menon, U.; Gentry-Maharaj, A.; Hallett, R.; Ryan, A.; Burnell, M.; Sharma, A.; Lewis, S.; Davies, S.; Philpott, S.; Lopes, A.; et al. Sensitivity and specificity of multimodal and ultrasound screening for ovarian cancer, and stage distribution of detected cancers: Results of the prevalence screen of the UK Collaborative Trial of Ovarian Cancer Screening (UKCTOCS). Lancet Oncol. 2009, 10, 327–340.

39. Buys, S.S.; Partridge, E.; Greene, M.H.; Prorok, P.C.; Reding, D.; Riley, T.L.; Hartge, P.; Fagerstrom, R.M.; Ragard, L.R.; Chia, D.; et al. Ovarian cancer screening in the Prostate, Lung, Colorectal and Ovarian (PLCO) cancer screening trial: Findings from the initial screen of a randomized trial. Am. J. Obstet. Gynecol. 2005, 193, 1630–1639.

40. Jacobs, I.; Prys Davies, A.; Bridges, J.; Stabile, I.; Fay, T.; Lower, A.; Grudzinskas, J.G.; Oram, D. Prevalence screening for ovarian cancer in postmenopausal women by CA 125 measurement and ultrasonography. Br. Med. J. 1993, 306, 1030–1034.

41. Cancer Incidence Projections Australia 2002 to 2011; Australian Institute of Health and Welfare; Australasian Association of Cancer Registries; National Cancer Strategies Group: Canberra, Australia, 2005.

42. Australian Demographic Statistics; Australian Bureau of Statistics D: Canberra, Australia, 2007.

43. Chima, S.; Milley, K.; Reece, J.C.; Milton, S.; McIntosh, J.G.; Emery, J.D. Decision support tools to improve cancer diagnostic decision making in primary care: A systematic review. Br. J. Gen. Pract. 2019, 69, e809–e818.

44. Chiang, P.C.; Glance, D.; Walker, J.; Walter, F.M.; Emery, J.D. Implementing a QCancer risk tool into general practice consultations: An exploratory study using simulated consultations with Australian general practitioners. Br. J. Cancer 2015, 112, S77–S83.

45. Hamilton, W. Electronic Risk Assessment for Cancer for Patients in General Practice (ISRCTN22560297). Available online: http://www.isrctn.com/ISRCTN22560297 (accessed on 30 October 2020).

46. Banks, J.; Hollinghurst, S.; Bigwood, L.; Peters, T.J.; Walter, F.M.; Hamilton, W. Preferences for cancer investigation: A vignette-based study of primary-care attendees. Lancet Oncol. 2014, 15, 232–240.

47. Funston, G.; Hamilton, W.; Abel, G.; Crosbie, E.J.; Rous, B.; Walter, F.M. The diagnostic performance of CA125 for the detection of ovarian and non-ovarian cancer in primary care: A population-based cohort study. PLoS Med. 2020, 17, e1003295.

| Author, Date, Country | Design | Objective | Primary Outcome | Candidate Variable Data Sources | Participants | Study Sample | |||

|---|---|---|---|---|---|---|---|---|---|

| Case Control | Cohort | Develop New Tool | Modify Existing Tool | Externally Validate Existing Tool | |||||

| Population based | |||||||||

| Lurie, 2009, USA | ● | ● | Primary invasive ovarian carcinoma | In-person patient interviews using a structured survey | Cases: Women aged 19–88 years histologically-confirmed primary invasive ovarian carcinoma (1993–2007) Controls: Aged ≥ 18 years, Hawaii resident ≥ 1 year, randomly selected from statutory state survey Frequency-matched to cases (1:1) by age, ethnicity, interview time | Cases: 432 Controls: 491 | |||

| Rossing, 2010, USA | ● | ● | Primary invasive epithelial OC a | In-person interviews | Cases: Residents in western Washington State, aged 35–74 years, diagnosed with a primary invasive epithelial ovarian tumour (January 2002–December 2005) Controls: Selected by random digit dialling with stratified sampling in 5-year age categories, 1-year calendar intervals and two (urban vs. suburban or rural) county strata | Cases: 594 Controls: 1313 | |||

| Jordan, 2010, Australia | ● | ● | Invasive epithelial OC | Patient survey | Cases: Aged 20–79 years with suspected OC, subsequently diagnosed with invasive epithelial OC (January 2002–June 2005) Controls: Frequency-matched based on age (5-year groups) and state of residence identified from electoral roll [34] | Cases: 1215 Controls: 1456 | |||

| Primary care population | |||||||||

| Hamilton, 2009, England | ● | ● | Primary OC, including borderline | Researcher-coded GP records | Cases: Aged ≥ 40 years with primary OC diagnosed between 2000 and 2007 Controls: Matched on age, sex and GP practice | Cases: 212 Controls: 1060 | |||

| Hippisley-Cox, 2012, England and Wales | ● | ● | OC (NOS) | QResearch database [35] | Aged 30–84 years, registered with GP practices between 1 January 2000 and 30 September 2010 | Development (2/3)—1,158,723 women with 976 OCs Validation (1/3)—608,862 women with 538 OCs | |||

| Hippisley-Cox, 2013, England and Wales | ● | ● | OC (NOS) and 10 other cancers | QResearch database [35] | Aged 25–89 years, registered with GP practices between 1 January 2000 and 1 April 2012 | Development (2/3)—1,240,864 women with 1279 OCs Validation (1/3)—667,603 women with 606 OCs | |||

| Grewal, 2013, England | ● | ● | Primary OC, including borderline | Researcher-coded GP records | Cases: Aged ≥ 40 years with primary OC diagnosed between 2000 and 2007 Controls: Matched on age, sex and GP practice | Cases: 212 Controls: 1060 | |||

| Collins, 2013, UK | ● | ● | OC (NOS) | THIN database [36] | Women aged 30–84 years registered with GP practices between 1 January 2000 and 30 June 2008 | 1,054,818 women with 735 cancers | |||

| Hospital + screening populations | |||||||||

| Goff, 2007, USA | ● | ● | OC, including borderline | Patient survey | Cases: Women with a pelvic mass recruited in secondary care prior to OC diagnosis Controls: (a) Healthy ‘high-risk’ b women enrolled in a screening study [37], (b) women who presented for pelvic/abdominal US | Development Cases: 74 Controls: 243 Validation Cases: 75 Controls: 245 | |||

| Andersen, 2008, USA | ● | ● | OC (NOS) | Patient survey, blood sample | Cases: Women with a pelvic mass, recruited prior to OC diagnosis Controls: Healthy ‘high risk’ b women enrolled in a screening study [37] | Cases: 75 Controls: 254 | |||

| Andersen, 2010, USA | ● | ● | OC (NOS) | Patient survey, blood sample | Cases: Women with a pelvic mass recruited in secondary care prior to OC diagnosis Controls: Healthy ‘high risk’ b women enrolled in a screening study [37], frequency matched to cases on age (</>50 years) | Cases: 74 Controls: 137 | |||

| Lim, 2012, UK | ● | ● | ● | OC, including borderline | (a) Survey, (b) telephone interview, (c) GP notes | Cases: Women aged 50–79 years with primary OC recruited prior to diagnosis (February 2006–February 2008) Controls: Screening trial participants [38], frequency matched on year of birth and agreement to a telephone interview | Cases: 194 c Controls: 268 c | ||

| Hospital based population | |||||||||

| Kim, 2009, Korea | ● | ● | ● | Epithelial OC (NOS) | Patient survey, blood sample | Cases: OC diagnosis Controls: Women with benign ovarian cysts recruited prior to surgery and those undergoing routine pap smear | Cases: 116 Controls: 209 (Benign: 74, Pap smear: 135) | ||

| Macuks, 2011, Latvia | ● | ● | ● | Epithelial OC (NOS) | Patient survey, blood sample | Cases: Women with epithelial OC recruited prior to surgery/diagnosis Controls: Age-matched ‘healthy women’ attending a gynaecology outpatient clinic d | Cases: 24 Controls: 31 d | ||

| Shetty, 2015, India | ● | ● | ● | OC, excluding borderline | Patient survey | Cases: Women admitted to hospital for investigation and subsequently diagnosed with OC Controls: (a) Women with benign ovarian pathology; (b) those undergoing a ‘gynaecological check-up’ | Cases: 74 Controls: 218 (benign: 144, gynaecological check-up: 74) | ||

| Jain, 2018, India | ● | ● | ● | OC, excluding borderline | Patient survey, blood sample | Cases: Women undergoing surgery for a pelvic mass, subsequently diagnosed with ovarian cancer Controls: First-degree healthy relatives of cases | Cases: 45 Controls: 90 | ||

| Tool (Study, Year) | Demographics | Personal/Family History | Symptoms | Test Results | ||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Age | Other | PMH | FH | Abdo. Pain | Pelvic Pain | Increase Abdo. Size/Distens. | Bloat. | Appetite Loss | Feeling Full | Difficulty Eating | Weight Loss | Postmen. Bleeding | Rectal Bleeding | Palpable Abdo. Mass/lump | Urinary Freq. | Other | Hb | CA125 | HE4 | |

| Symptom checklists | ||||||||||||||||||||

| Goff SI (Goff, 2007) | ● | ● | ● | ● | ● | ● | ||||||||||||||

| Modified Goff SI 1 (Kim, 2009) | ● | ● | ● | ● | ● | ● | ● | Urinary urgency | ||||||||||||

| Lurie 7-SI (Lurie, 2009) | ● | ● | ● | ● | ● | ● | ● | Bowel symptoms, difficulty emptying bladder, dysuria, fatigue, abnormal vaginal bleed. | ||||||||||||

| Lurie 5-SI (Lurie, 2009) | ● | ● | ● | ● | ● | Difficulty emptying bladder, dysuria, abnormal vaginal bleed. | ||||||||||||||

| Lurie 4-SI (Lurie, 2009) | ● | ● | ● | ● | Abnormal vaginal bleed. | |||||||||||||||

| Lurie 3-SI (Lurie, 2009) | ● | ● | Abnormal vaginal bleed. | |||||||||||||||||

| Hamilton SI (Hamilton, 2009) | ● | ● | ● | ● | ● | ● | ● | |||||||||||||

| SGO consensus criteria * (Rossing, 2010) | ● | ● | ● | ● | ● | Urinary urgency | ||||||||||||||

| Lim SI 1 (Lim, 2012) | ● | ● | ● | ● | ● | ● | ● | ● | ||||||||||||

| Lim SI 2 (Lim, 2012) | ● | ● | ● | ● | ● | Vaginal discharge | ||||||||||||||

| Hippisley-Cox SI (Hippisley-Cox, 2012) | ● | ● | ● | ● | ● | ● | ||||||||||||||

| Modified Goff SI 2 (Shetty, 2015) | ● | ● | ● | ● | ● | ● | ● | ● | ● | Urinary urgency | ||||||||||

| Augmented symptom checklists | ||||||||||||||||||||

| Goff SI + CA125 (Andersen, 2008) | ● | ● | ● | ● | ● | ● | ● | |||||||||||||

| Goff SI + HE4 (Andersen, 2010) | ● | ● | ● | ● | ● | ● | ● | |||||||||||||

| Goff SI + HE4 + CA125 (Andersen, 2010) | ● | ● | ● | ● | ● | ● | ● | ● | ||||||||||||

| Goff SI + CA125 + menopause (Macuks, 2011) | Menopause | ● | ● | ● | ● | ● | ● | ● | ||||||||||||

| Prediction models | ||||||||||||||||||||

| QCancer Ovarian (Hippisley-Cox, 2012) | ● | OC | ● | ● | ● | ● | ● | ● | ● | |||||||||||

| QCancer Female (Hippisley-Cox, 2013) | ● | Townsend score, smoking, alcohol, BMI | T2DM, COPD, endomet. hyperplasia or polyp, chronic pancreatitis | OC, GI cancer, breast cancer | ● | ● | ● | ● | ● | ● | Difficulty swallowing, heartburn/indigestion, blood in urine, blood in vomit, blood when cough, irregular menstrual bleeding, vaginal bleeding after sex, breast lump, breast skin tethering or nipple discharge, breast pain, lump in neck, night sweats, venous thromboembolism, CIBH, constipation, cough, unexplained bruising | ● | ||||||||

| OC Score A (Grewal, 2013) | ● | ● | ● | ● | ● | ● | ● | |||||||||||||

| OC Score B (Grewal, 2013) | ● | ● | ● | ● | ● | ● | ● | |||||||||||||

| OC Score C (Grewal, 2013) | ● | ● | ● | ● | ● | ● | ● | ● | ||||||||||||

| Tool | Study | Recruitment | Source of Accuracy Estimate | Sensitivity (95% CI) | Specificity (95% CI) | PPV | AUC (95% CI) | |||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Population Level | 1° Care | Hospital + Screening | Hospital | Apparent Performance | Internal Validation | External Validation | ||||||

| Symptom checklists | ||||||||||||

| Goff SI | Goff, 2007 | ● | ● | ≥50 yrs: 66.7 <50 yrs: 86.7 | ≥50 yrs: 90 <50 yrs: 86.7 | - | - | |||||

| Andersen, 2008 a | ● | ● | 64 (52.1–74.8) | 88.2 (83.6–91.9) | - | - | ||||||

| Kim, 2009 | ● | ● | 56.9 | 87.6 | - | - | ||||||

| Rossing, 2010 | ● | ● | 67.5 (65.4–69.6) | 94.9 (93.9–95.8) | 0.77–1.12 b | - | ||||||

| Jordan, 2010 | ● | ● | 68.1 (65.5–70.7) | 85.3 | 0.09 c (≥55 yrs: 0.21–0.31 <55 yrs: 0.04) d | - | ||||||

| Andersen, 2010 a | ● | ● | 63.5 (51.5–74.4) | 88.3 (81.7–93.2) | - | - | ||||||

| Macuks, 2011 | ● | ● | 83.3 | 48.3 | - | - | ||||||

| Jain, 2018 | ● | ● | 77.8 | 87.8 | - | - | ||||||

| Lim, 2012 | ● | ● | 61.4–75.7 e | 89.6–98.9 e | - | - | ||||||

| Modified Goff SI 1 | Kim, 2009 | ● | ● | 65.5 | 84.7 | - | - | |||||

| Shetty, 2015 | ● | ● | 71.6 | 88.5 | - | - | ||||||

| 7-symptom Index | Lurie, 2009 | ● | ● | 85 | 40 | - | - | |||||

| 5-symptom Index | Lurie, 2009 | ● | ● | 80 | 63 | - | - | |||||

| 4-symptom Index | Lurie, 2009 | ● | ● | 74 | 77 | - | - | |||||

| 3-symptom Index | Lurie, 2009 | ● | ● | 54 | 93 | - | - | |||||

| Hamilton SI | Hamilton, 2009 | ● | ● | 85 | 85 | - | - | |||||

| SGO consensus criteria | Rossing, 2010 | ● | ● | 65.3 (63.1–67.4) | 93.9 (92.8–95) | 0.63–0.92 b | - | |||||

| Jordan, 2010 | ● | ● | 71.5 (69–74.1) | 82.9 (81–84.8) | 0.08 c (≥55 yrs: 0.18–0.27 <55 yrs: 0.05) d | - | ||||||

| Lim SI 1 | Lim, 2012 | ● | ● | 69.6–91 e | 76–91 e | - | - | |||||

| Lim SI 2 | Lim, 2012 | ● | ● | 67.3–91 e | 82.4–94 e | - | - | |||||

| Hippisley-Cox SI | Hippisley-Cox, 2012 | ● | ● | 71.9 | 82.9 | 0.5 | - | |||||

| Modified Goff SI 2 | Shetty, 2015 | ● | ● | 77 | 88.5 | - | - | |||||

| Augmented symptom checklists | ||||||||||||

| Goff SI or CA125 f | Andersen, 2008 | ● | ● | 89.3 (80.1–95.3) | 83.5 (78.3–87.8) | - | - | |||||

| Goff SI or CA125 (>35 U/mL) | Jain, 2018 | ● | ● | 97.8 | 68.9 | - | - | |||||

| Goff SI & CA125 (>21 U/mL) | Macuks, 2011 | ● | ● | 79.1 | 100 | - | - | |||||

| Goff SI & CA125 (>35 U/mL) | Macuks, 2011 | ● | ● | 70.8 | 100 | - | - | |||||

| Goff SI & CA125 (>65 U/mL) | Macuks, 2011 | ● | ● | 70.8 | 100 | - | - | |||||

| Goff SI or CA125 f | Andersen, 2010 | ● | ● | 91.9 (83.2–97) | 83.2 (75.9–89) | - | - | |||||

| Goff SI or HE4 f | Andersen, 2010 | ● | ● | 91.9 (83.2–97) | 84.7 (77.5–90.3) | - | - | |||||

| Any 1 of 3 (Goff SI or CA125 or HE4) f | Andersen, 2010 | ● | ● | 94.6 (86.7–98.5) | 79.6 (71.8–86) | - | - | |||||

| Any 2 of 3 (Goff SI or CA125 or HE4) f | Andersen, 2010 | ● | ● | 83.8 (73.4–91.3) | 98.5 (94.8–99.8) | - | - | |||||

| Goff SI & 1 or more of CA125 or HE4 f | Andersen, 2010 | ● | ● | 58.1 (46.1–69.5) | 98.5 (94.8–99.8) | - | - | |||||

| Goff SI & CA125 (>25 U/mL) & menopause | Macuks, 2011 | ● | ● | 50 | 100 | - | - | |||||

| Goff SI & CA125 (>35 U/mL) & menopause | Macuks, 2011 | ● | ● | 45.8 | 100 | - | - | |||||

| Goff SI & CA125 (>65 U/mL) & menopause | Macuks, 2011 | ● | ● | 45.8 | 100 | - | - | |||||

| Prediction models | ||||||||||||

| QCancer Ovarian (Top 10% risk) | Hippisley-Cox, 2012 | ● | ● | 63.2 | 90.8 | 0.8 | 0.84 (0.83–0.86) | |||||

| Collins, 2013 | ● | ● | 64.1 | 90.1 | 0.5 | 0.86 (0.84–0.87) | ||||||

| QCancer Ovarian (Top 5% risk) | Hippisley-Cox, 2012 | ● | ● | 42.2 | 95.6 | 1.1 | - | |||||

| Collins, 2013 | ● | ● | 43.8 | 95 | 0.6 | - | ||||||

| QCancer Ovarian (Top 1% risk) | Hippisley-Cox, 2012 | ● | ● | 13.9 | 99.3 | 2.1 | - | |||||

| QCancer Ovarian (Top 0.5% risk) | Hippisley-Cox, 2012 | ● | ● | 11 | 99.6 | 3.2 | - | |||||

| QCancer Ovarian (Top 0.1% risk) | Hippisley-Cox, 2012 | ● | ● | 3.9 | 99.9 | 5.5 | - | |||||

| QCancer Female (Top 10% risk) | Hippisley-Cox, 2013 | ● | ● | 61.6 | 90 | 0.6 | 0.84 (0.82–0.86) | |||||

| OC Score A (Score ≥ 3) | Grewal, 2013 | ● | ● | 58.5 | 97.3 | - | 0.89 | |||||

| OC Score A (Score ≥ 4) | Grewal, 2013 | ● | ● | 57.6 | 97.3 | - | ||||||

| OC Score B (Score ≥ 3) | Grewal, 2013 | ● | ● | 75 | 90.1 | - | 0.89 | |||||

| OC Score B (Score ≥ 4) | Grewal, 2013 | ● | ● | 58.9 | 97.3 | - | ||||||

| OC Score C (Score ≥ 3) | Grewal, 2013 | ● | ● | 85.4 | 85.1 | - | 0.88 | |||||

| OC Score C (Score ≥ 4) | Grewal, 2013 | ● | ● | 72.6 | 91.3 | - | ||||||
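
The PPV column in the table above is sparse. One likely reason is that most of the included studies used a case-control design, where the investigators fix the ratio of cases to controls, so PPV cannot be read directly from the study's 2×2 table. It can still be approximated from sensitivity, specificity and an externally sourced prevalence using Bayes' theorem. The sketch below illustrates that arithmetic only. The function name `predictive_values` and the 0.1% prevalence are assumptions made for illustration and are not taken from any included study. The 85% sensitivity and specificity are the Hamilton SI values reported in the table.

```python
from typing import Tuple


def predictive_values(sensitivity: float,
                      specificity: float,
                      prevalence: float) -> Tuple[float, float]:
    """Return (PPV, NPV) as fractions, derived with Bayes' theorem.

    All three inputs are fractions between 0 and 1 (not percentages).
    """
    for name, value in (("sensitivity", sensitivity),
                        ("specificity", specificity),
                        ("prevalence", prevalence)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")

    # Expected proportion of the tested population in each 2x2 cell.
    true_pos = sensitivity * prevalence
    false_neg = (1.0 - sensitivity) * prevalence
    true_neg = specificity * (1.0 - prevalence)
    false_pos = (1.0 - specificity) * (1.0 - prevalence)

    # Guard against an empty row (no positive or no negative results).
    positives = true_pos + false_pos
    negatives = true_neg + false_neg
    ppv = true_pos / positives if positives > 0 else float("nan")
    npv = true_neg / negatives if negatives > 0 else float("nan")
    return ppv, npv


if __name__ == "__main__":
    # Hamilton SI accuracy from the table; the prevalence is hypothetical.
    ppv, npv = predictive_values(sensitivity=0.85,
                                 specificity=0.85,
                                 prevalence=0.001)
    print(f"PPV = {ppv:.2%}, NPV = {npv:.2%}")
```

With a rare outcome, false positives from the large disease-free group dominate the positive results, which is why a tool with apparently good sensitivity and specificity can still yield a PPV well below 1%. Because of this, any PPV calculated this way depends heavily on the prevalence assumed for the target setting.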

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Funston, G.; Hardy, V.; Abel, G.; Crosbie, E.J.; Emery, J.; Hamilton, W.; Walter, F.M. Identifying Ovarian Cancer in Symptomatic Women: A Systematic Review of Clinical Tools. Cancers 2020, 12, 3686. https://doi.org/10.3390/cancers12123686