Collaboration and Task Planning of Turtle-Inspired Multiple Amphibious Spherical Robots

Abstract

1. Introduction

2. Design Criteria

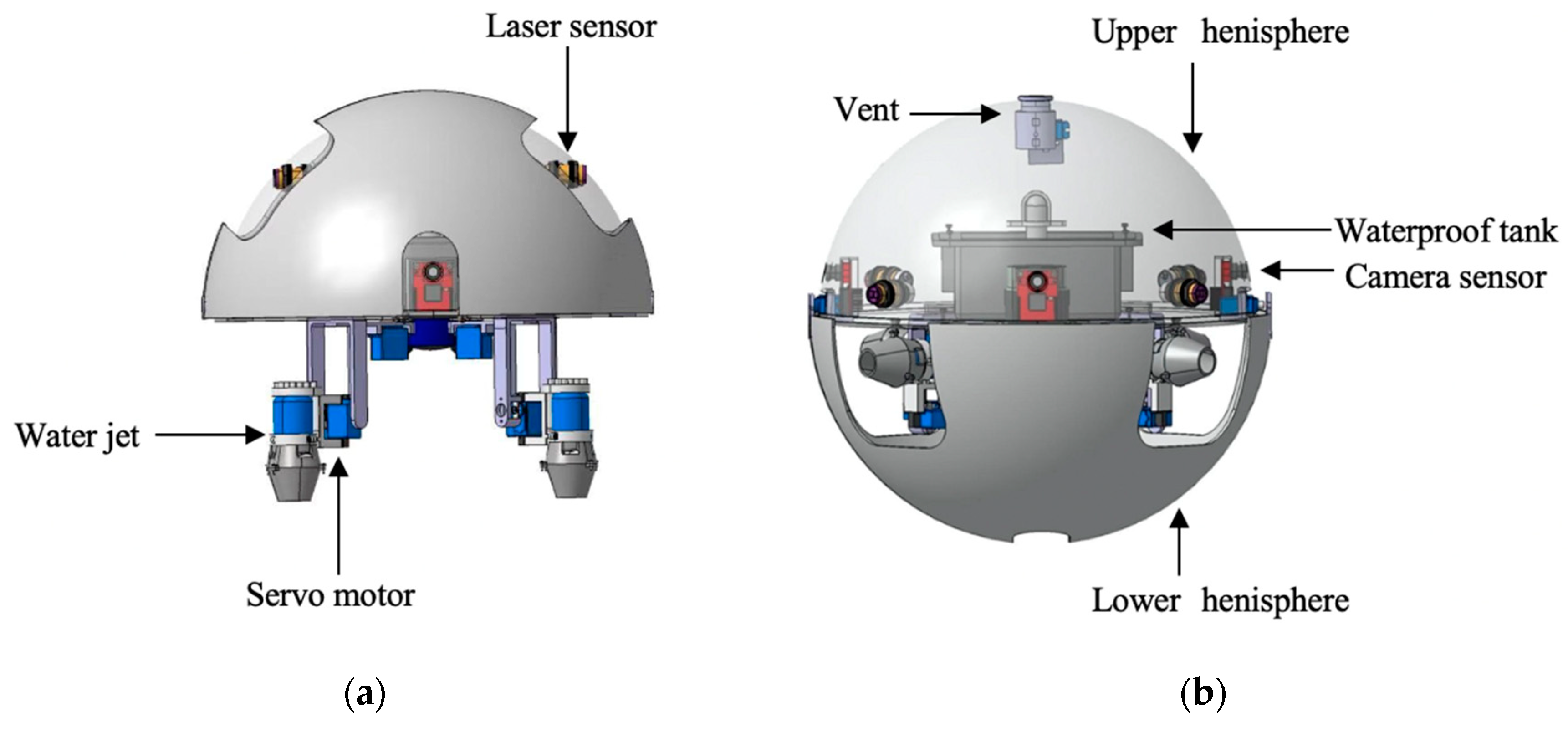

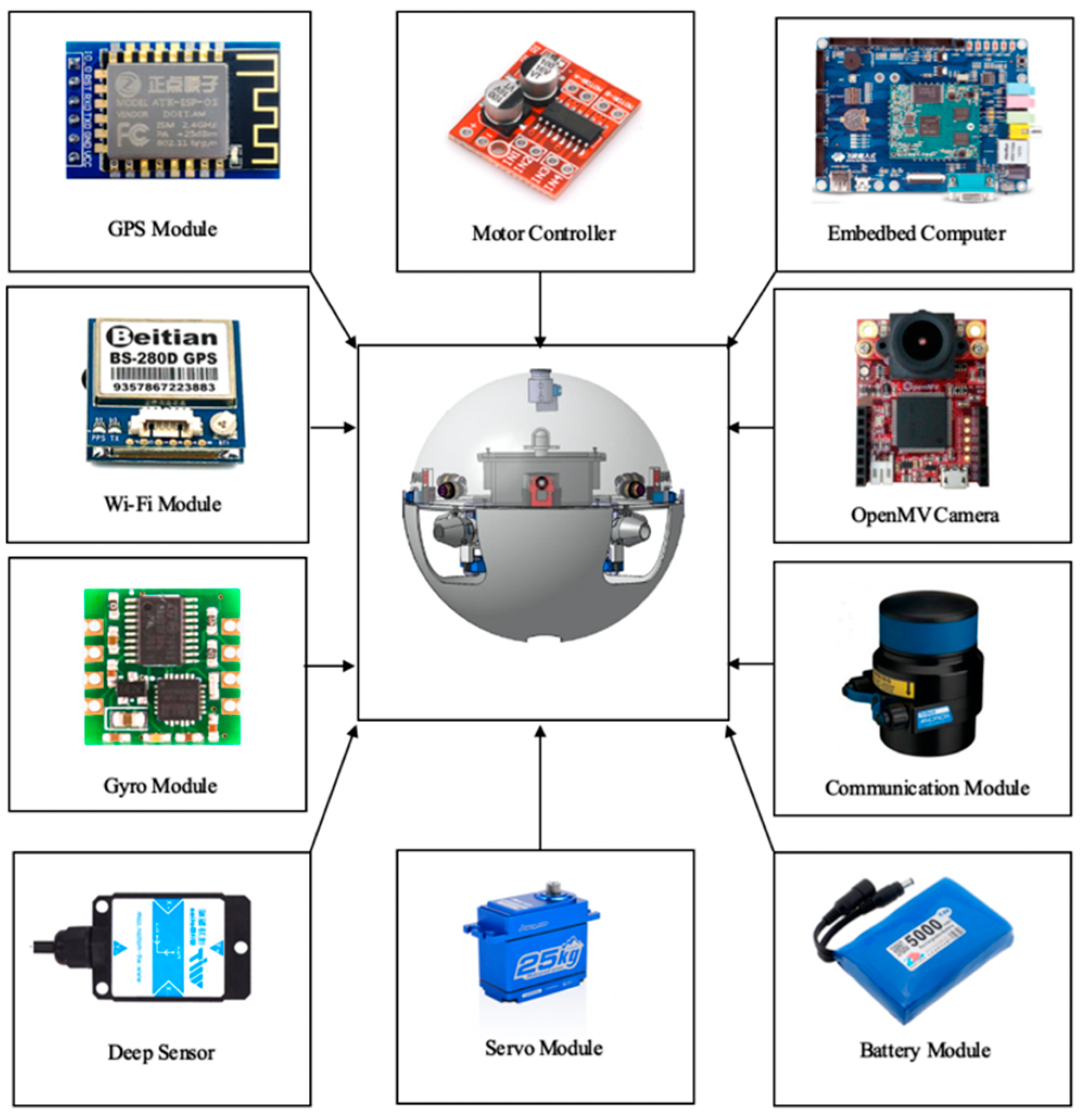

2.1. Mechanical Design

2.2. Communication Module

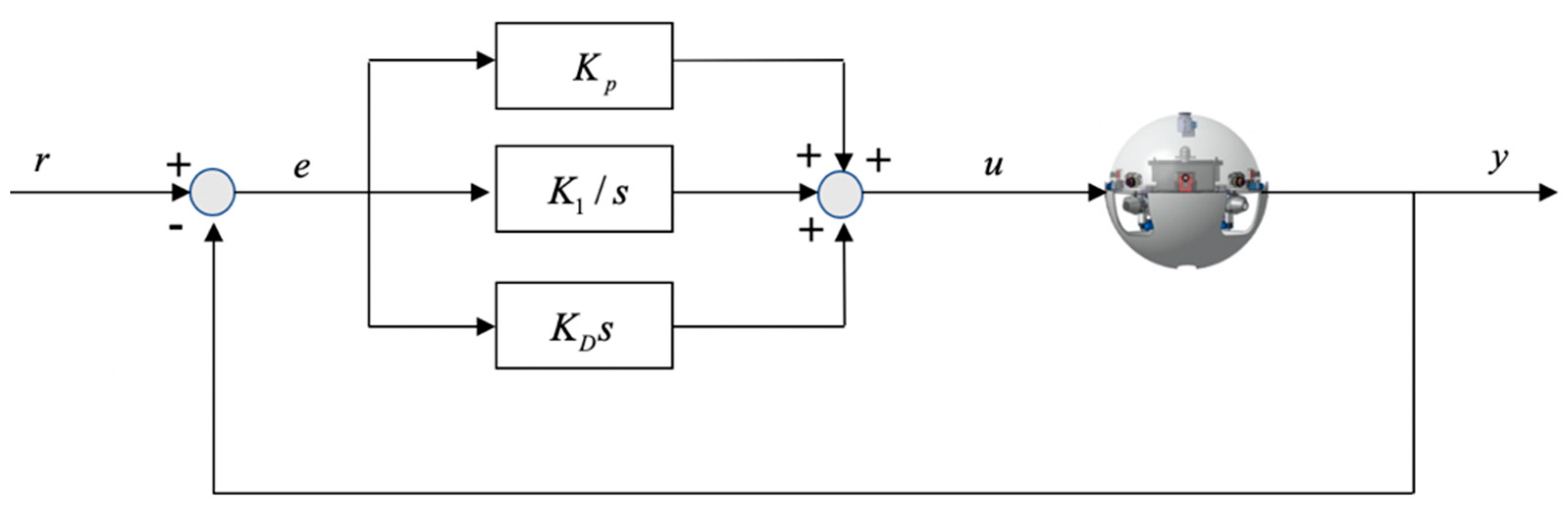

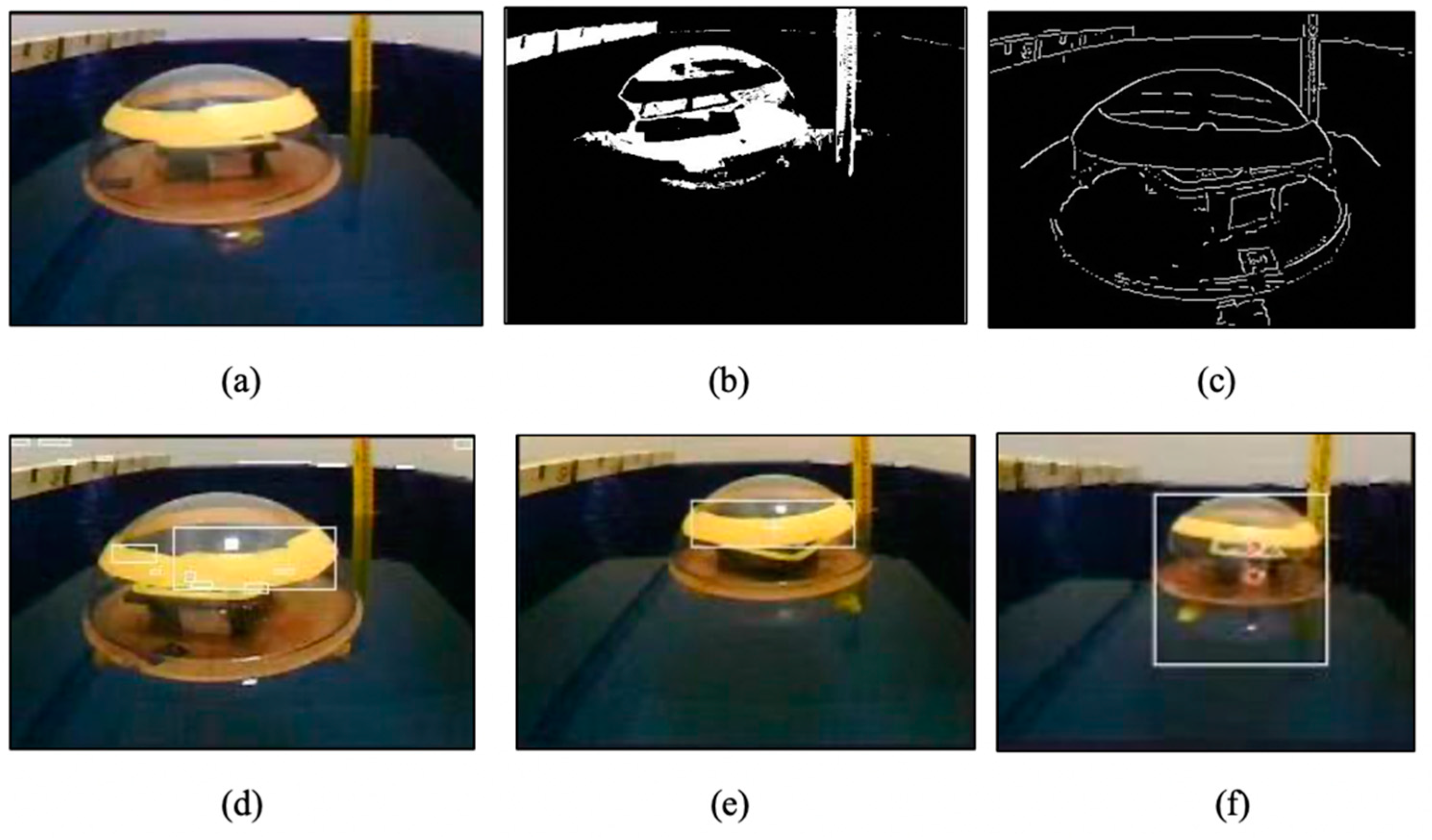

2.3. Visual Servoing Evaluation

3. Motion Algorithm and Modeling

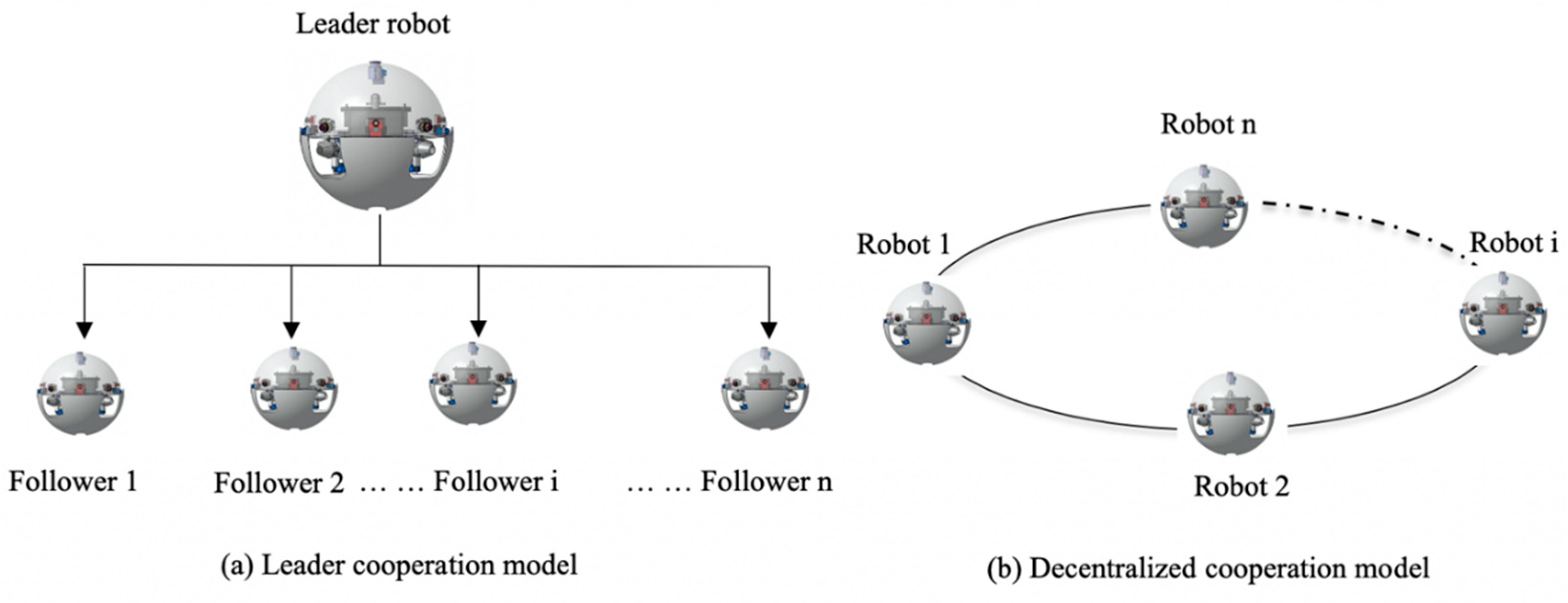

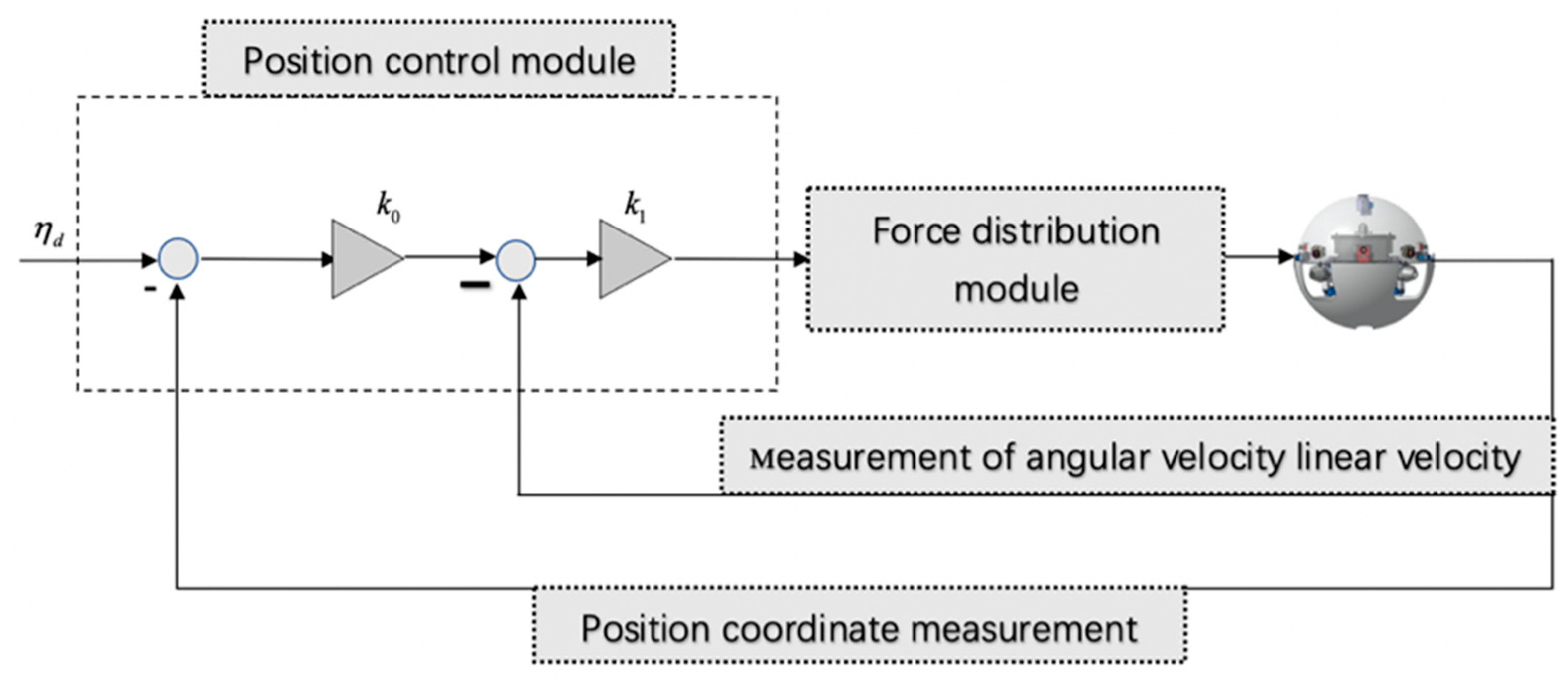

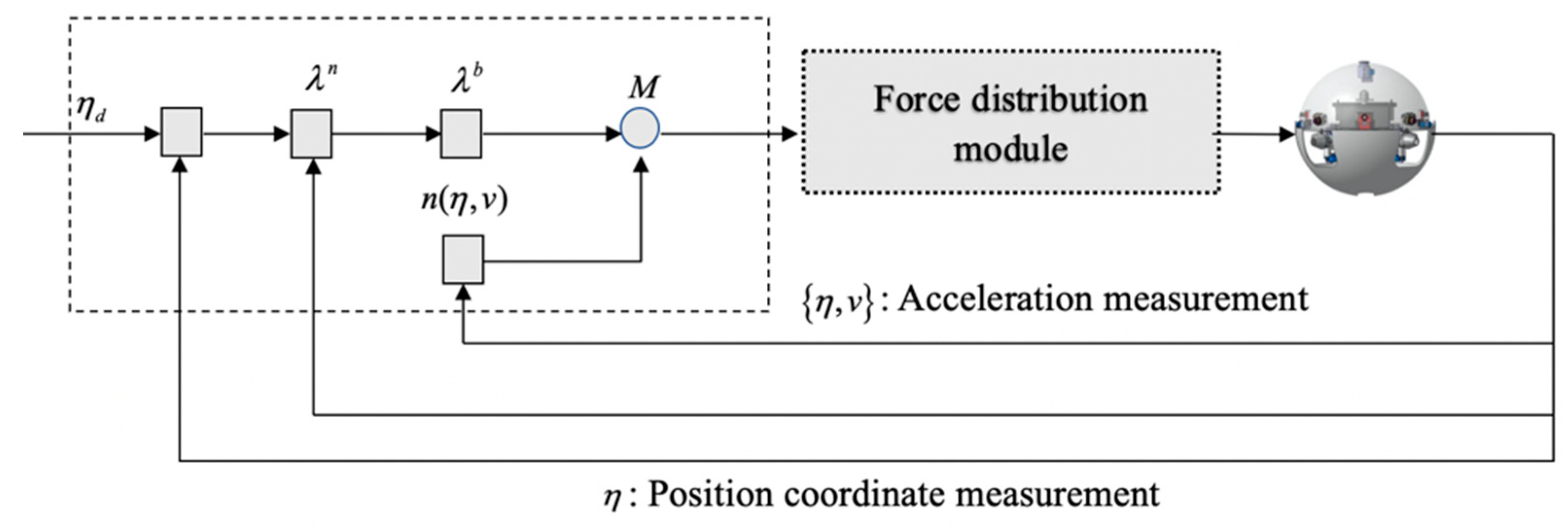

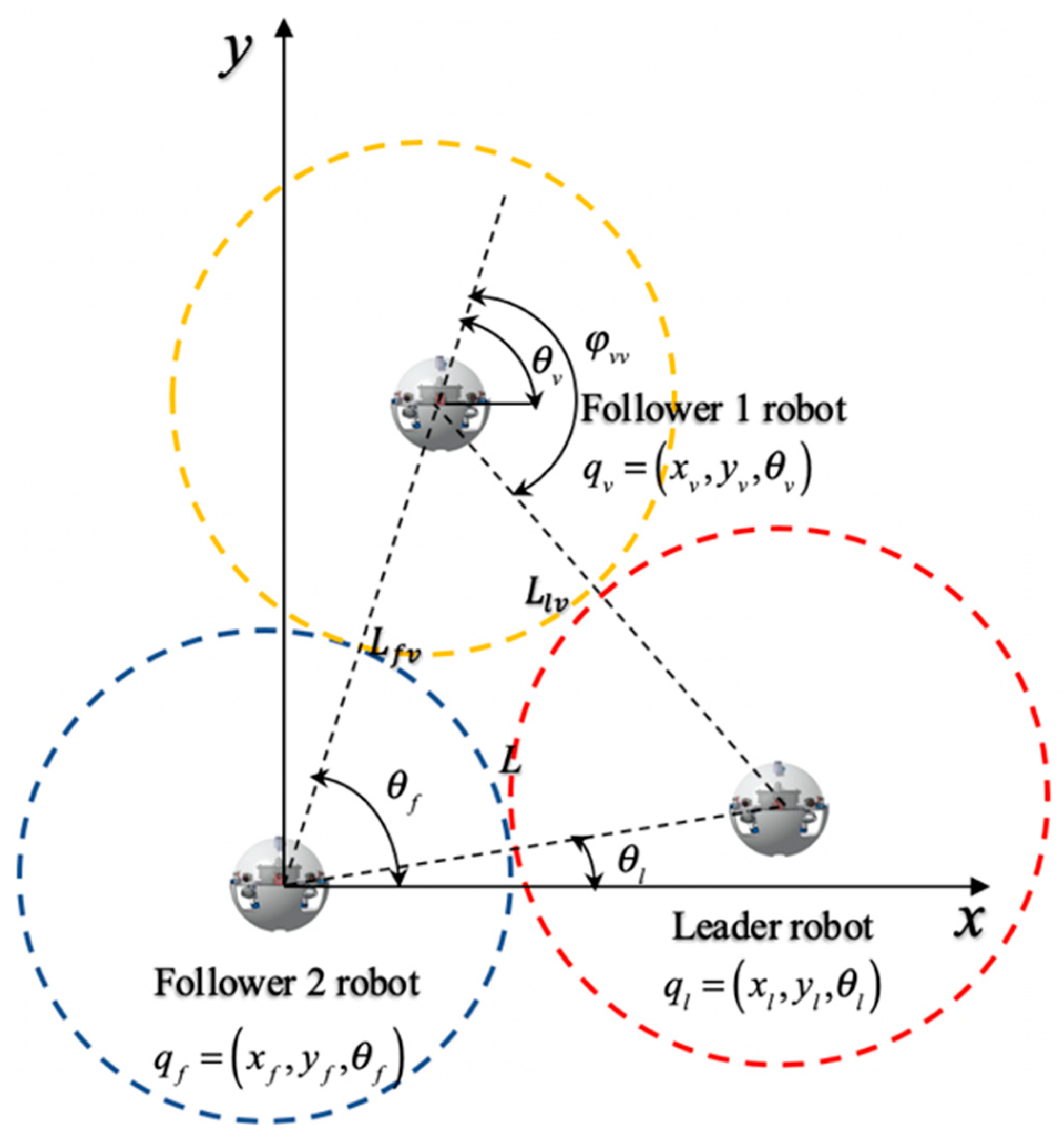

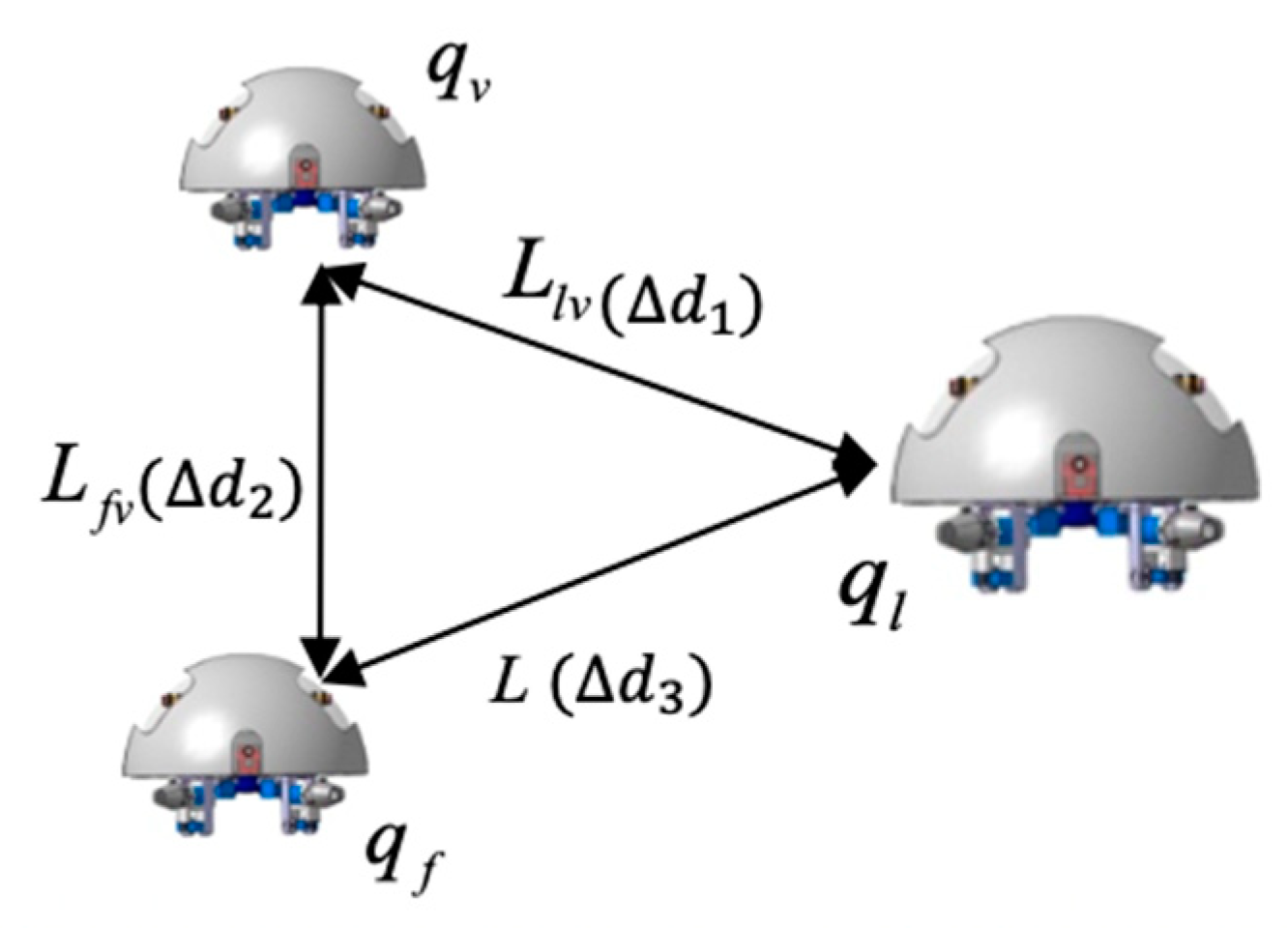

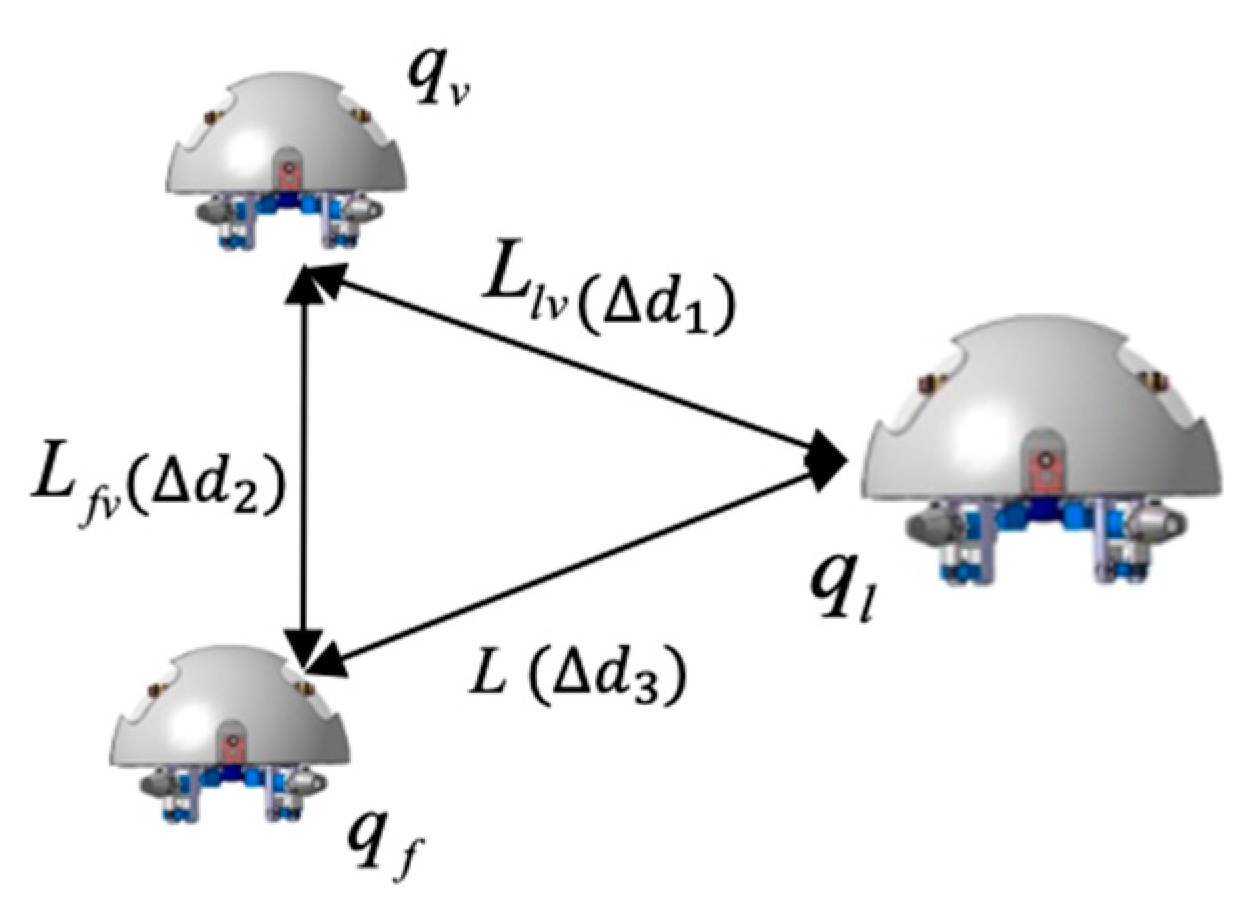

3.1. Formation Control Modeling

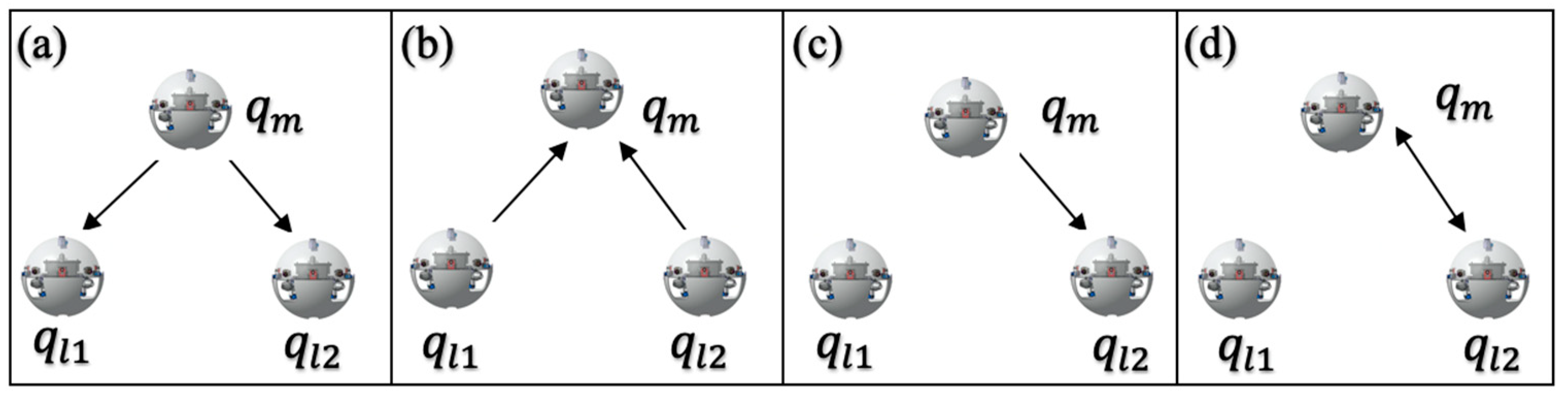

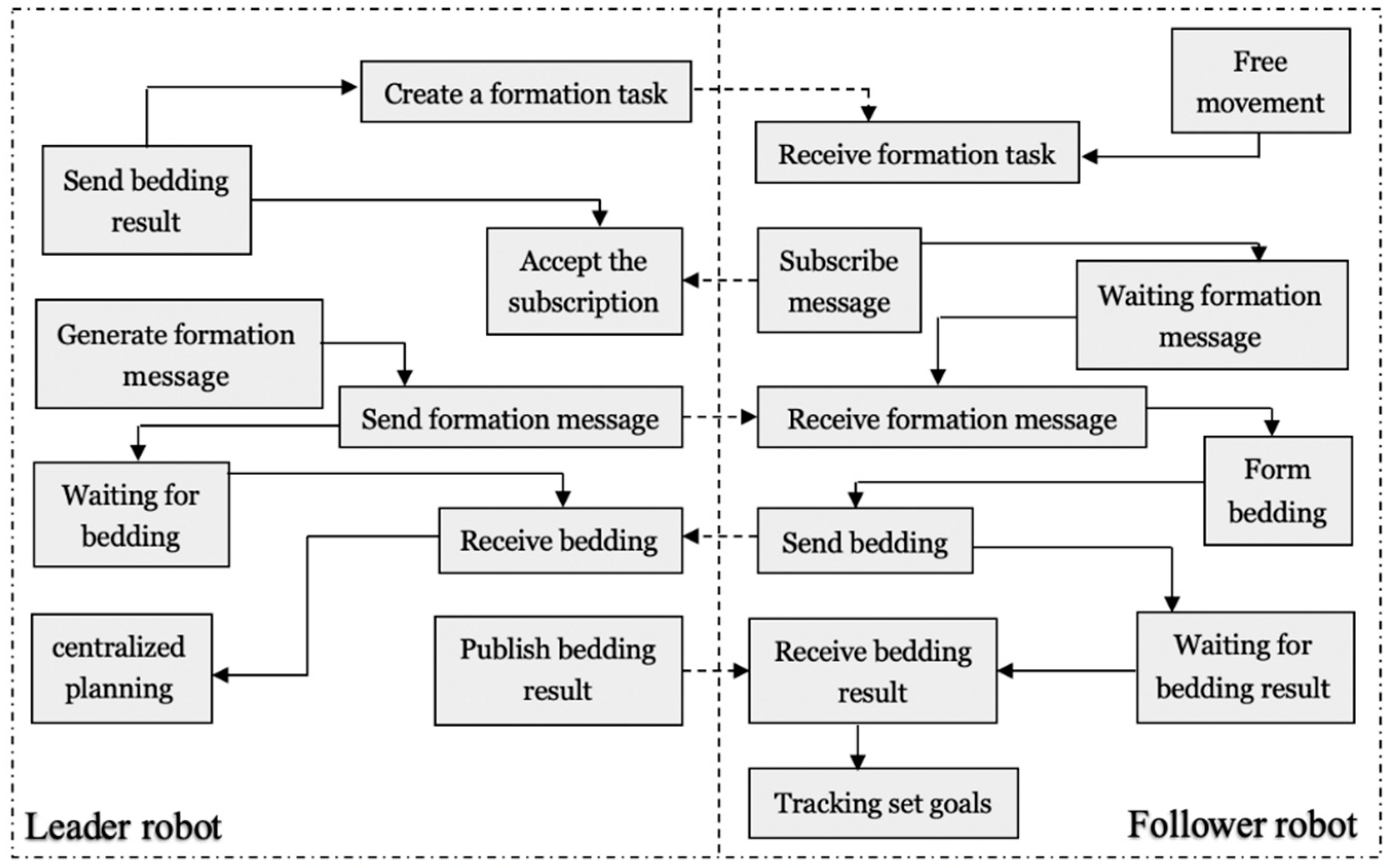

3.2. Bidding-Based Queue

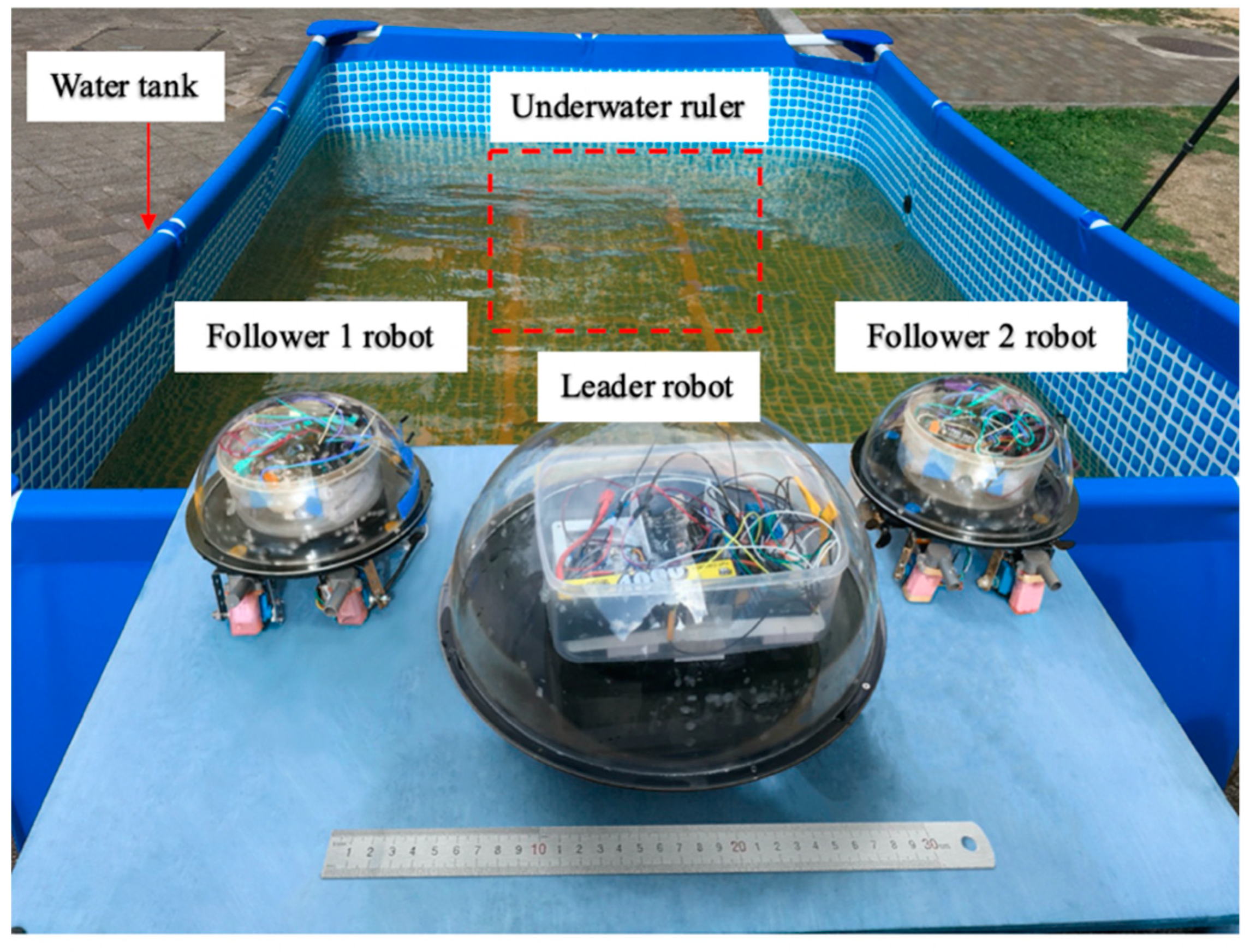

4. Performance Verification

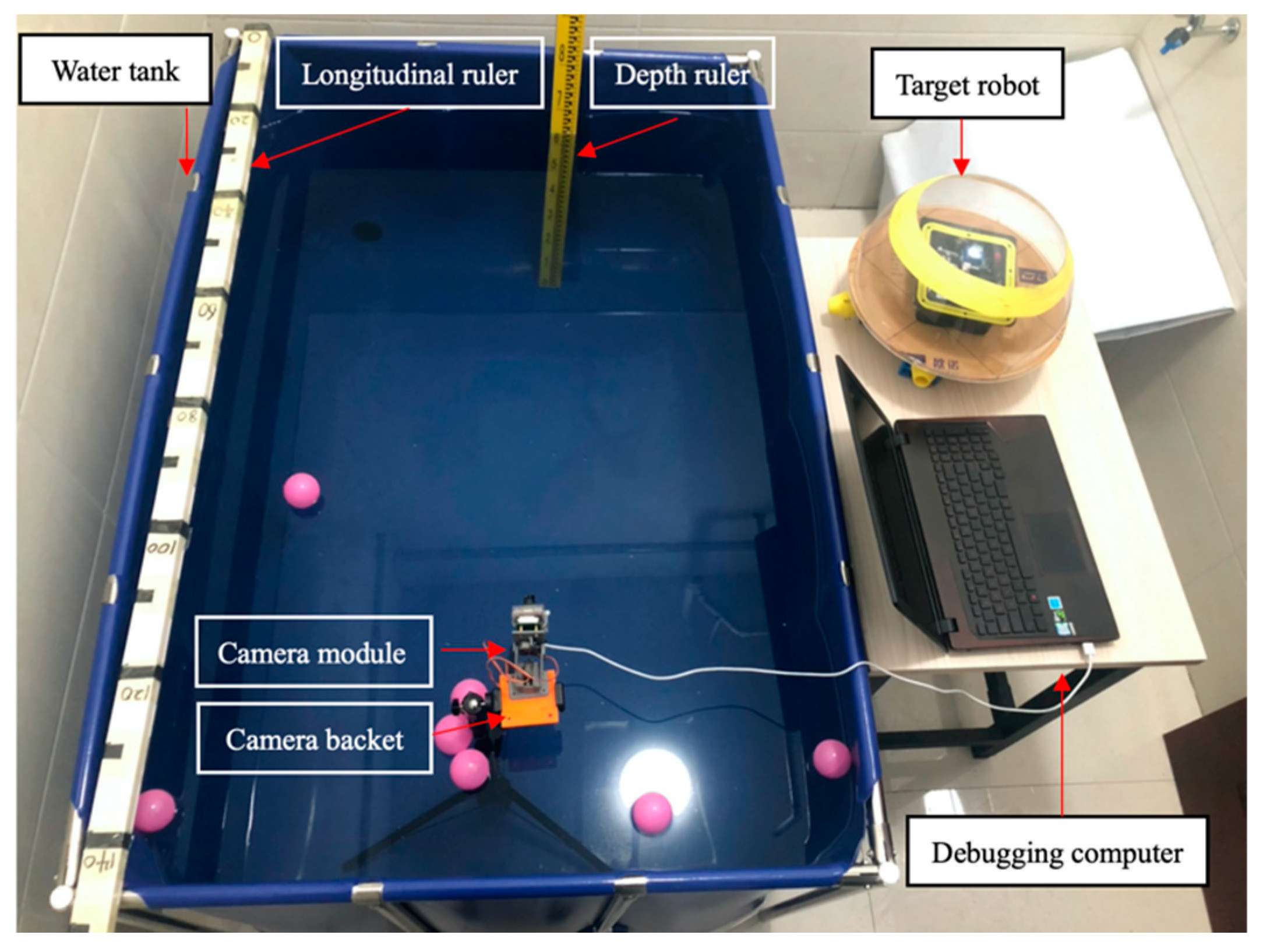

4.1. Experiment I: Robot Stability Evaluation

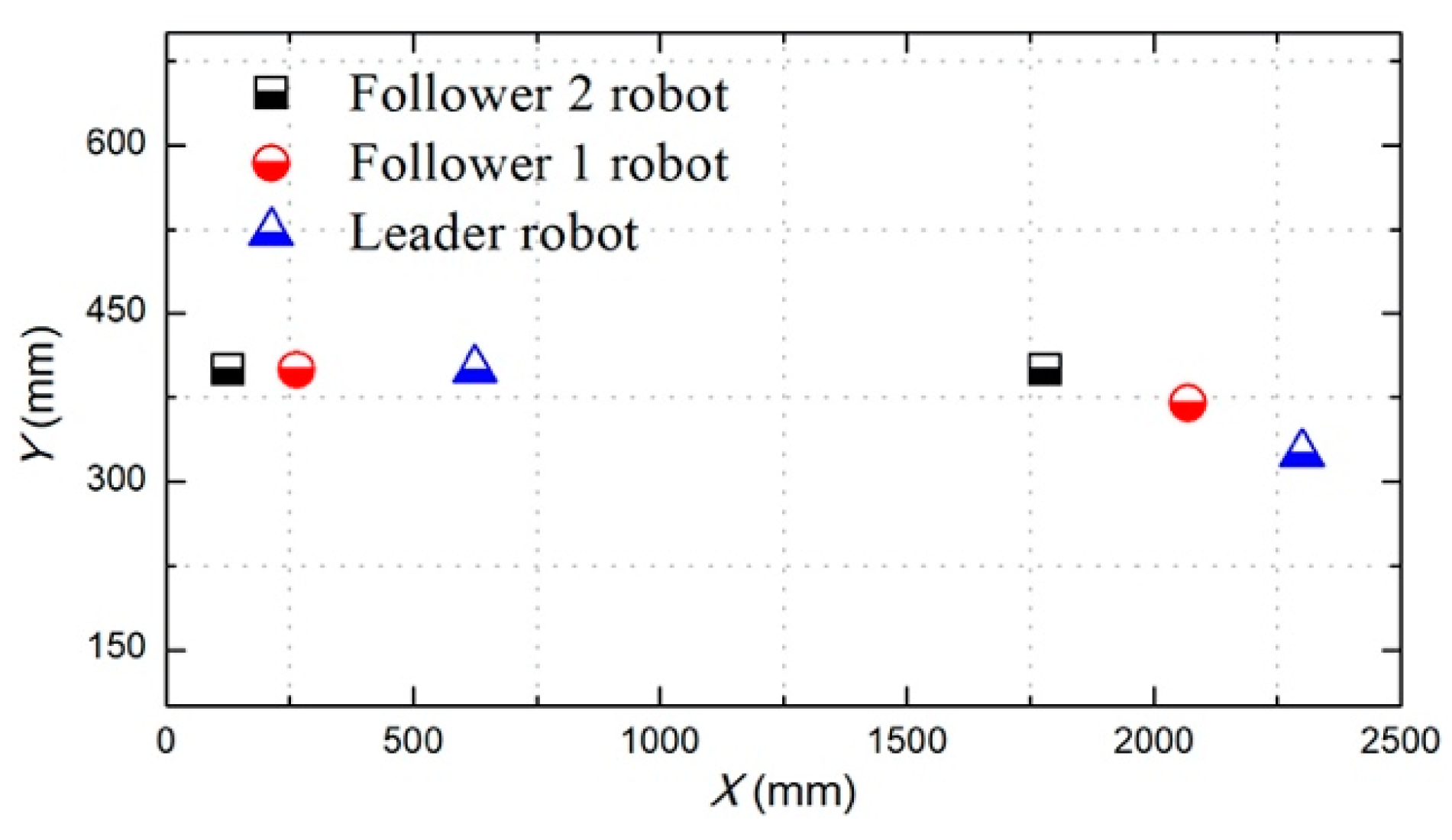

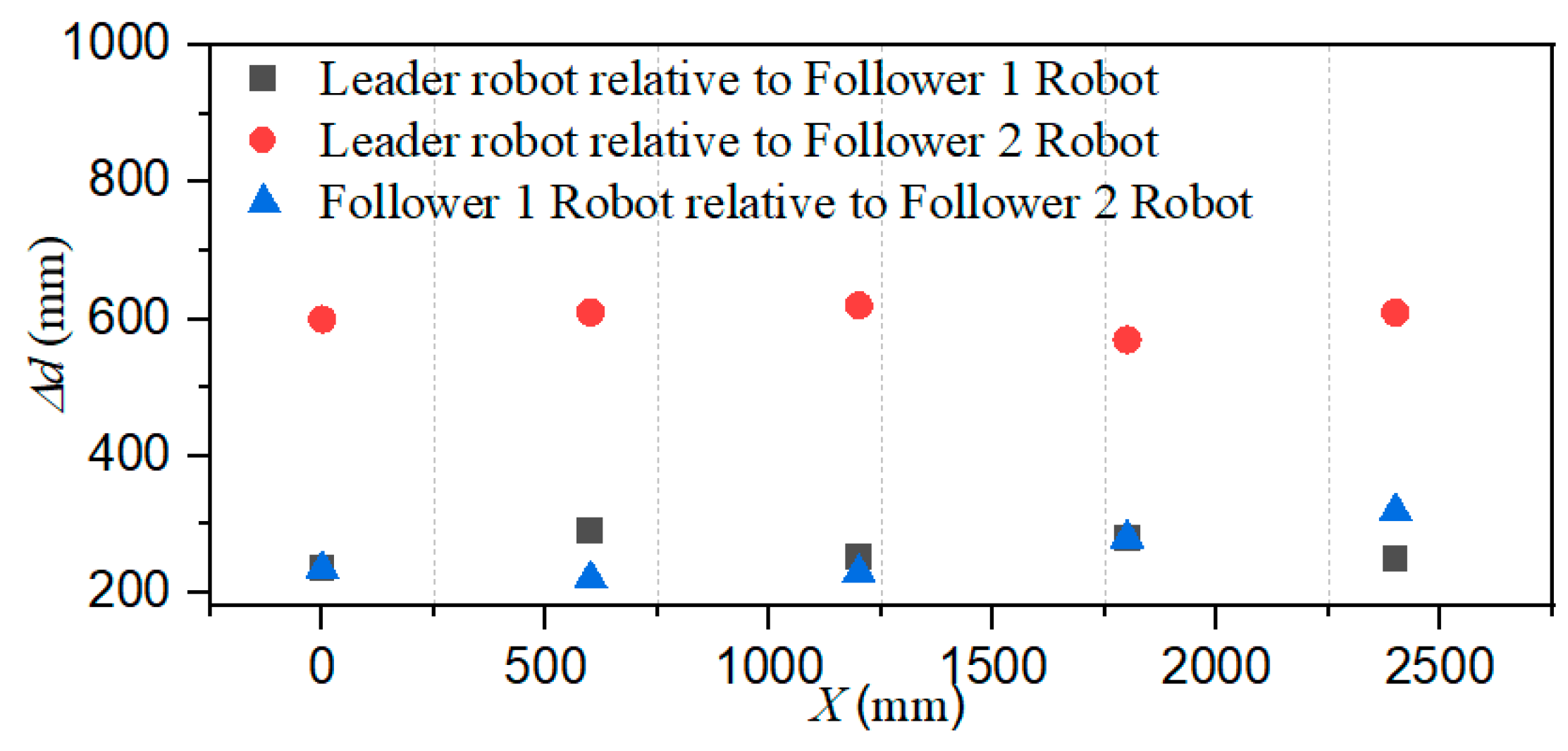

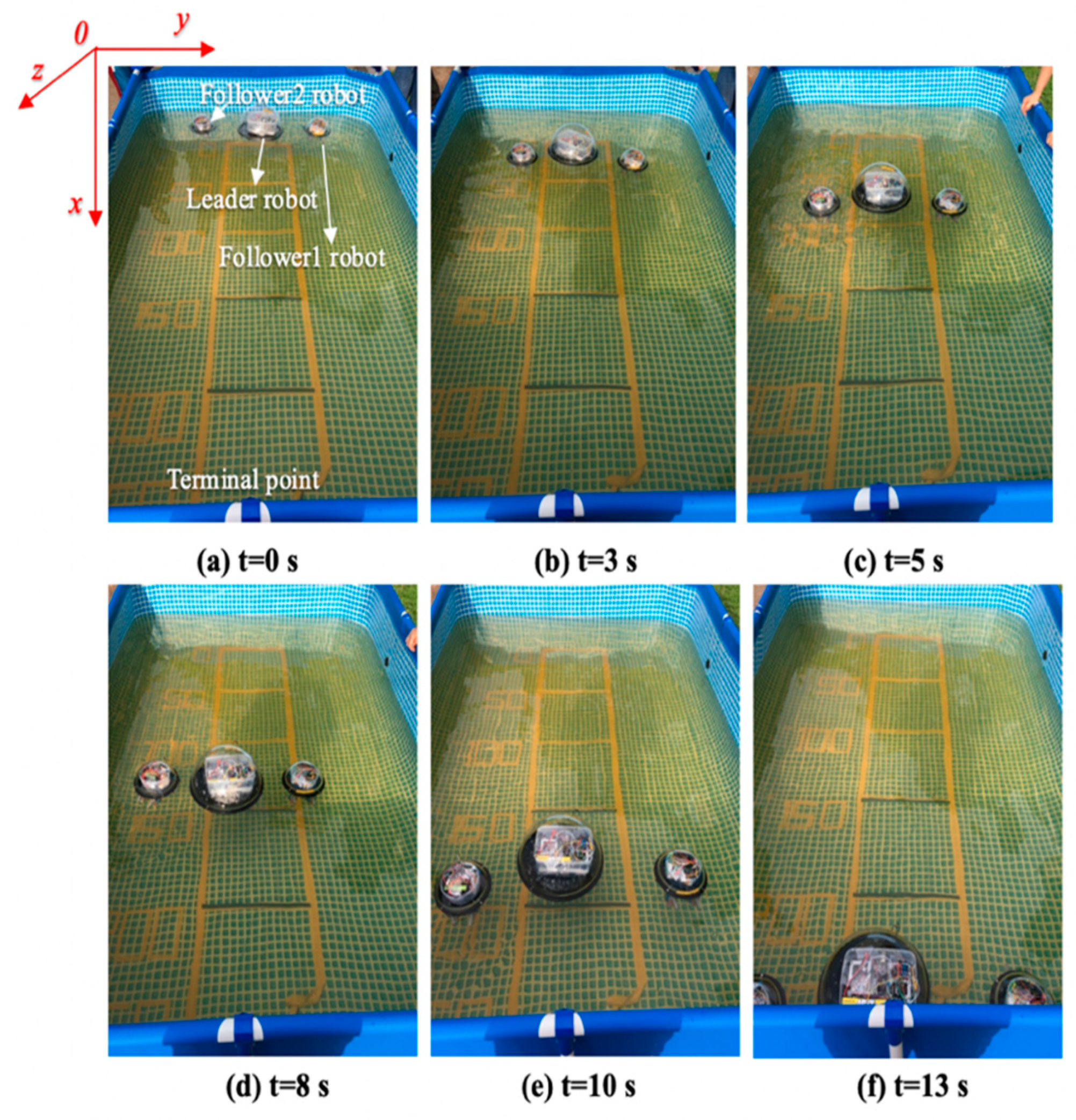

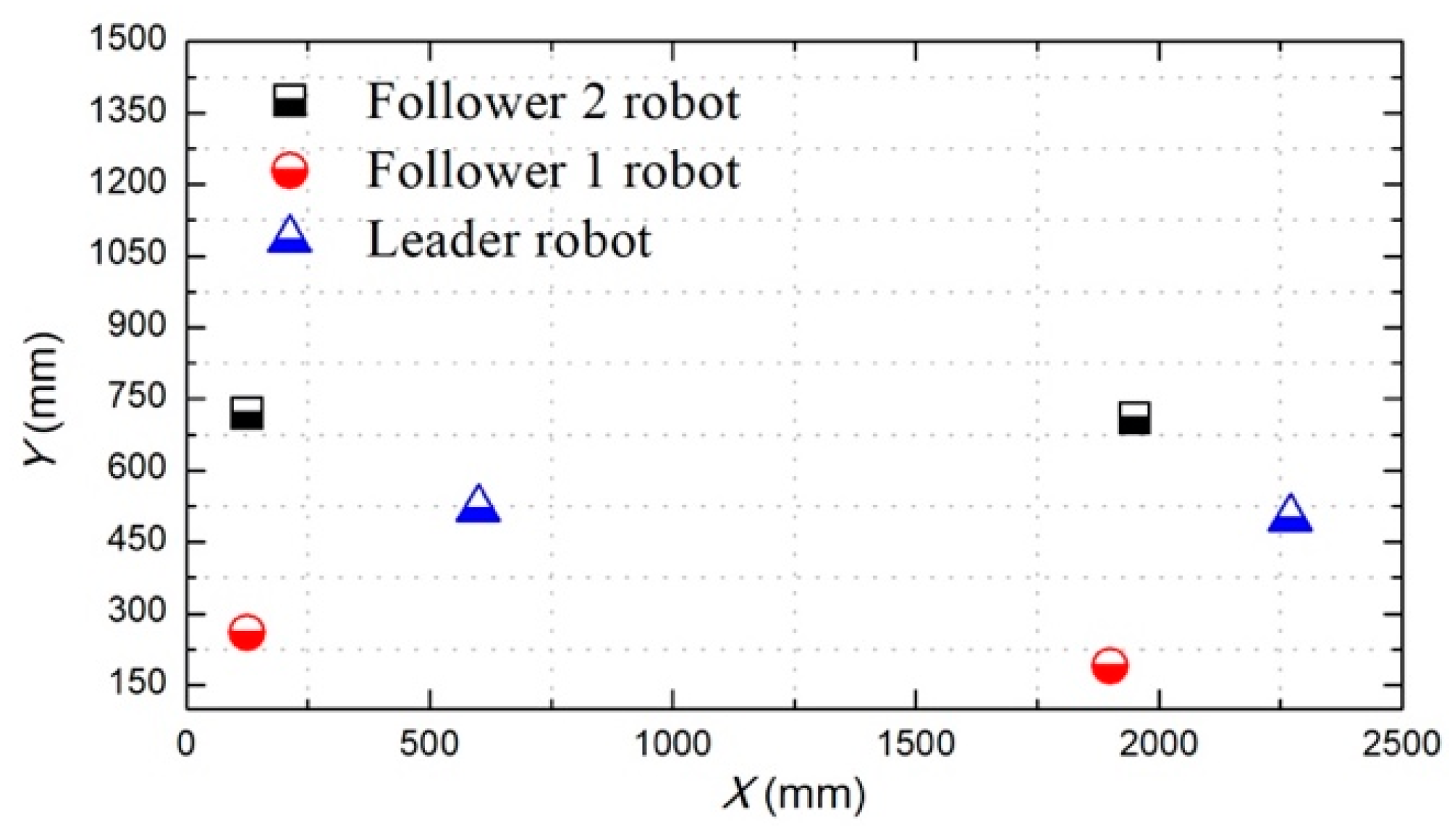

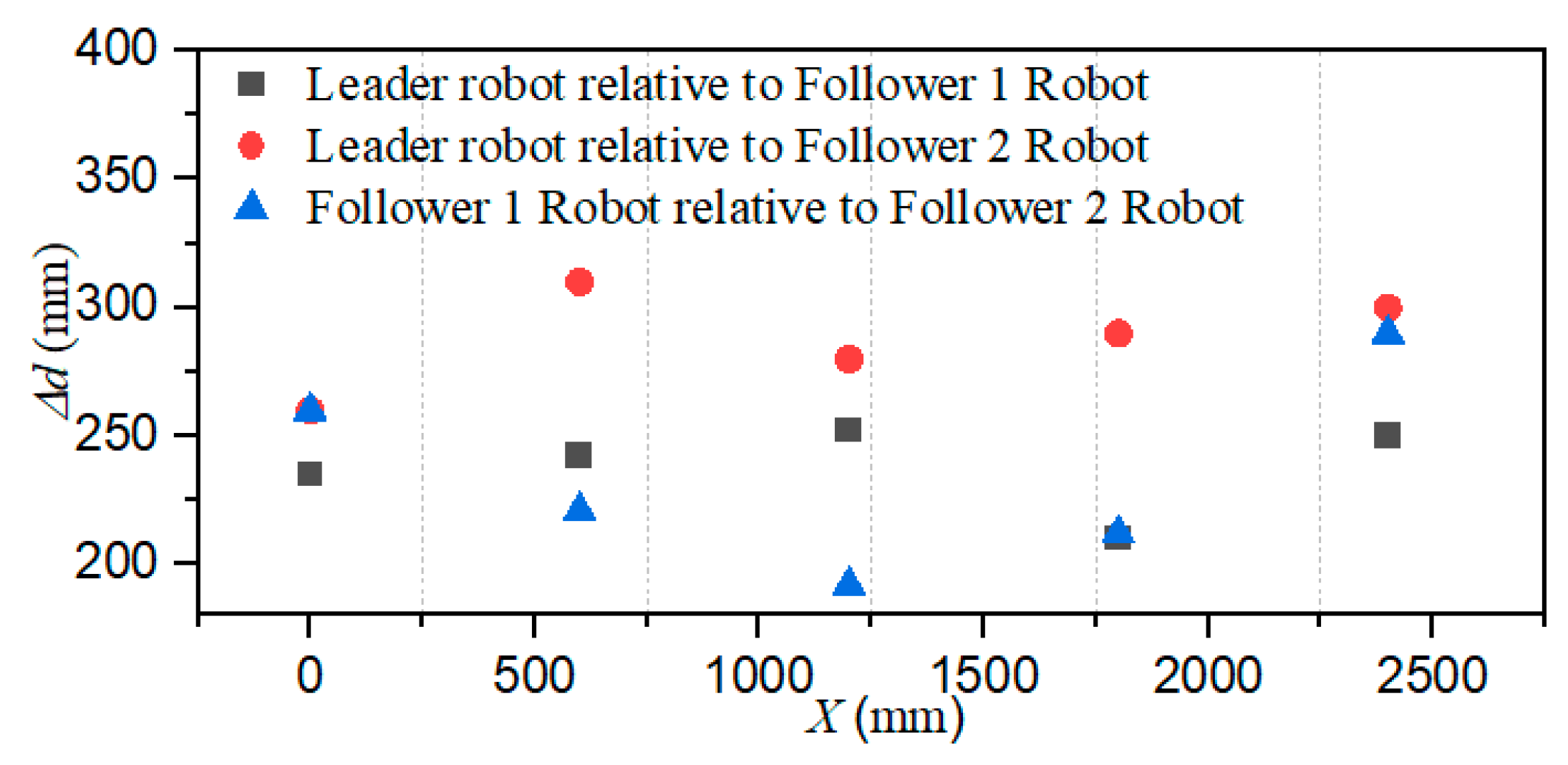

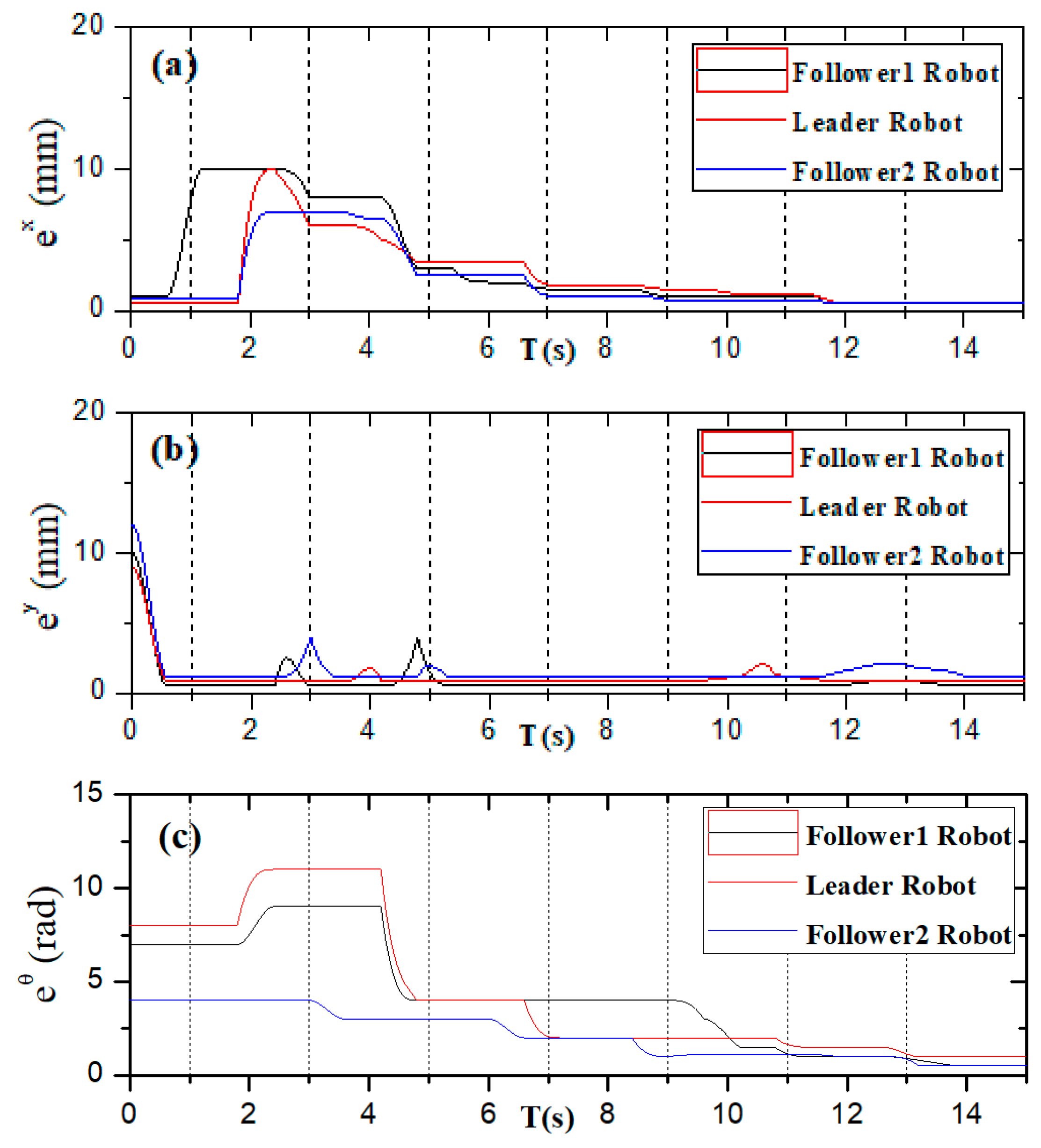

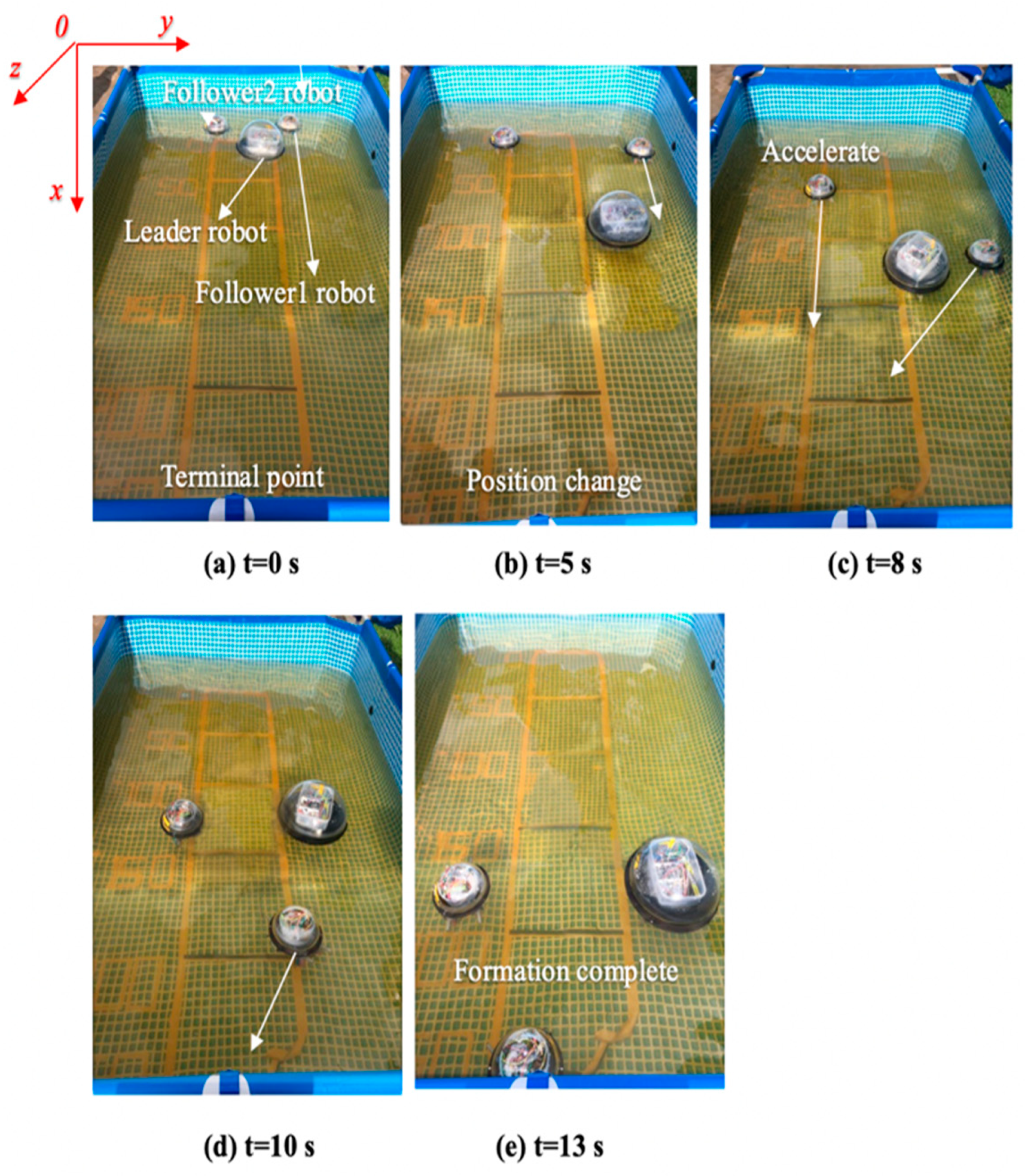

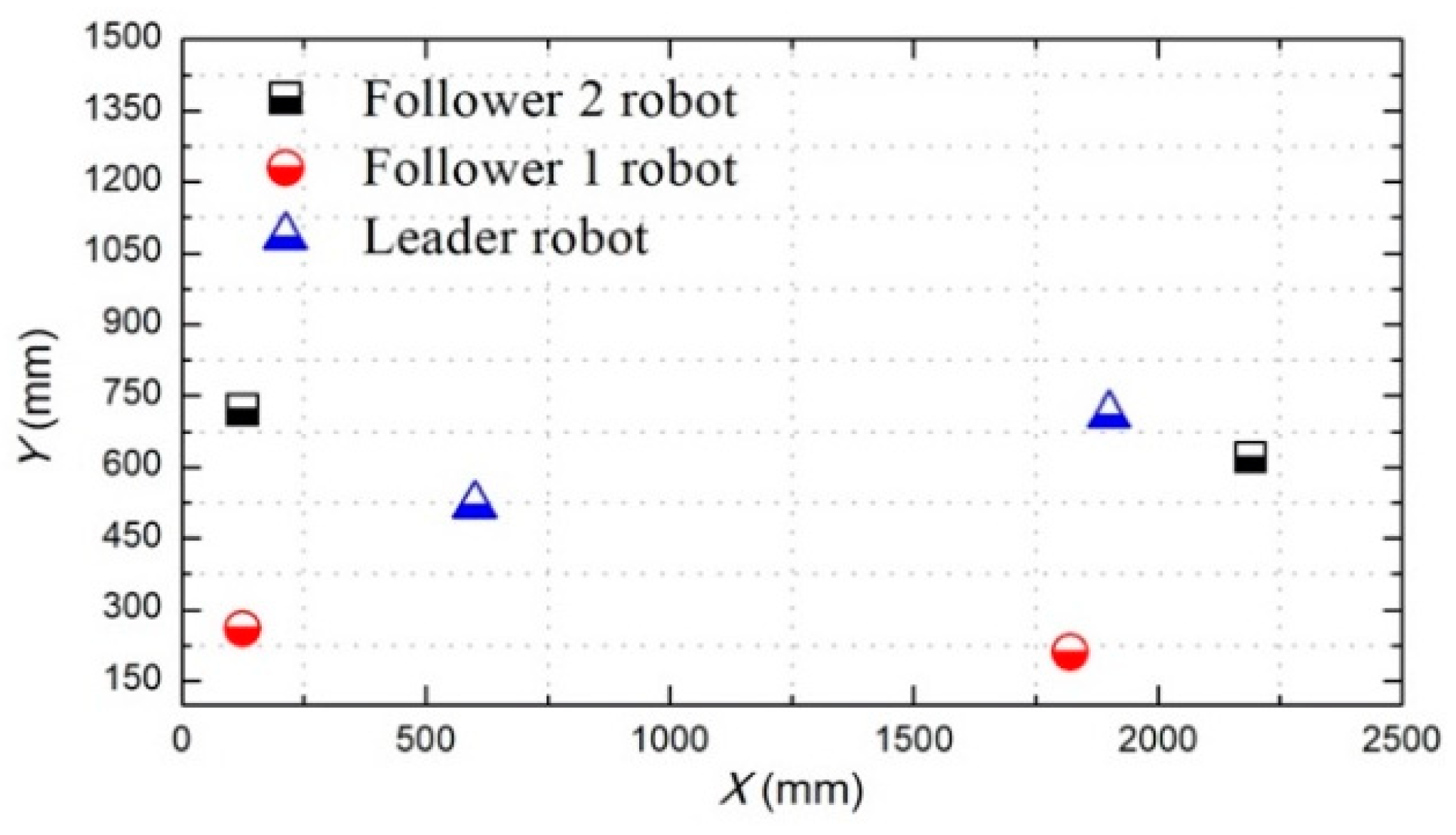

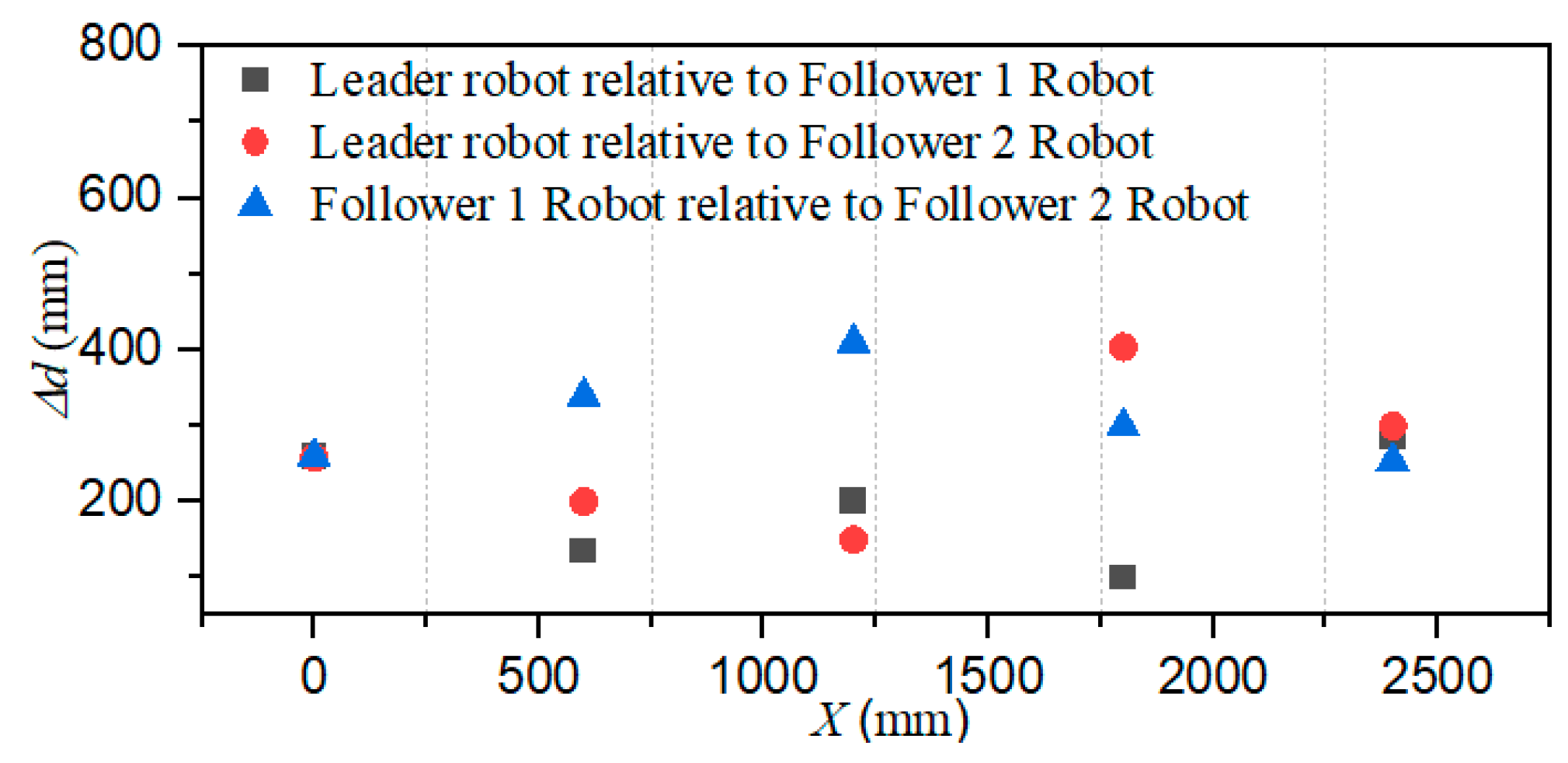

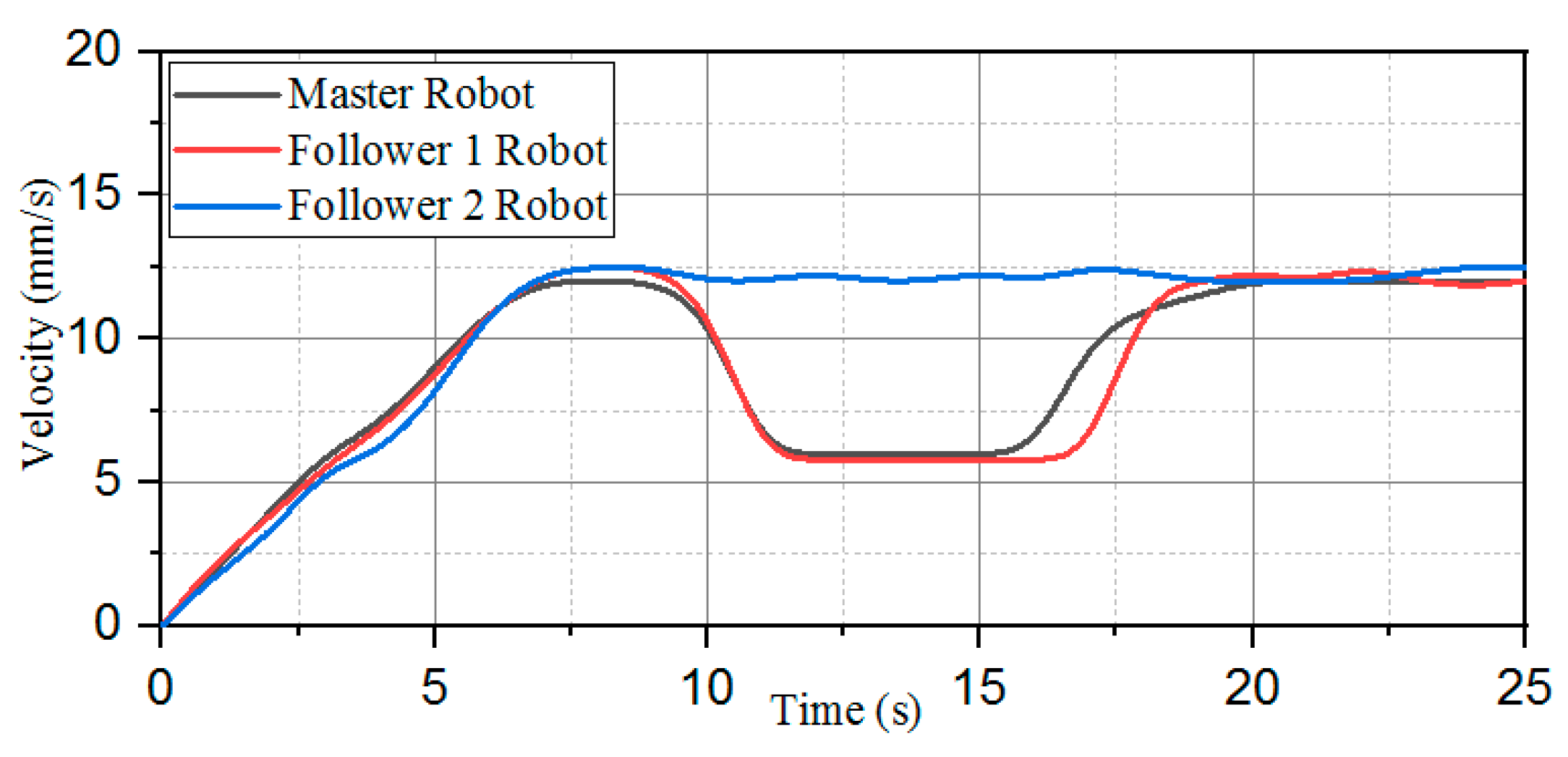

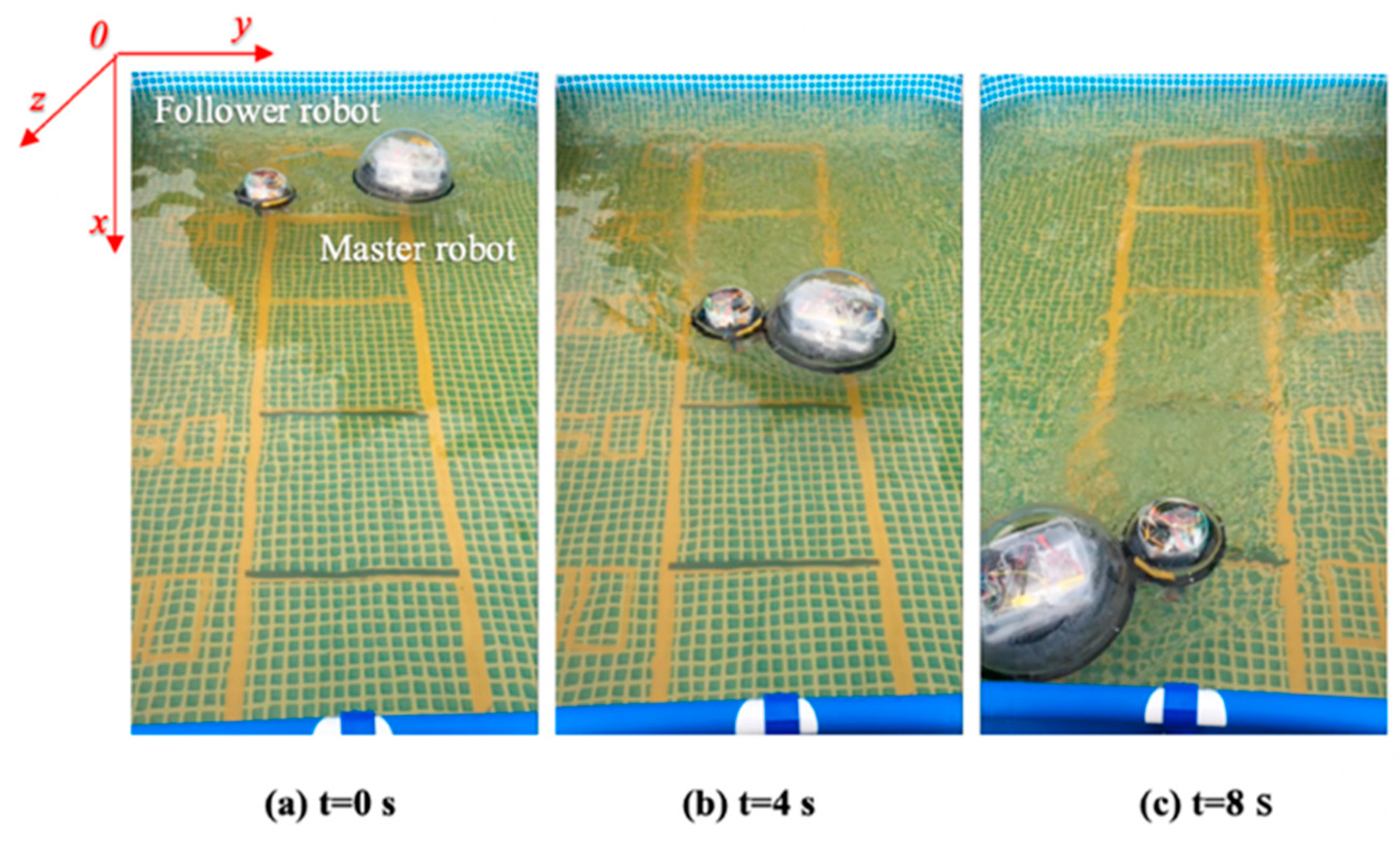

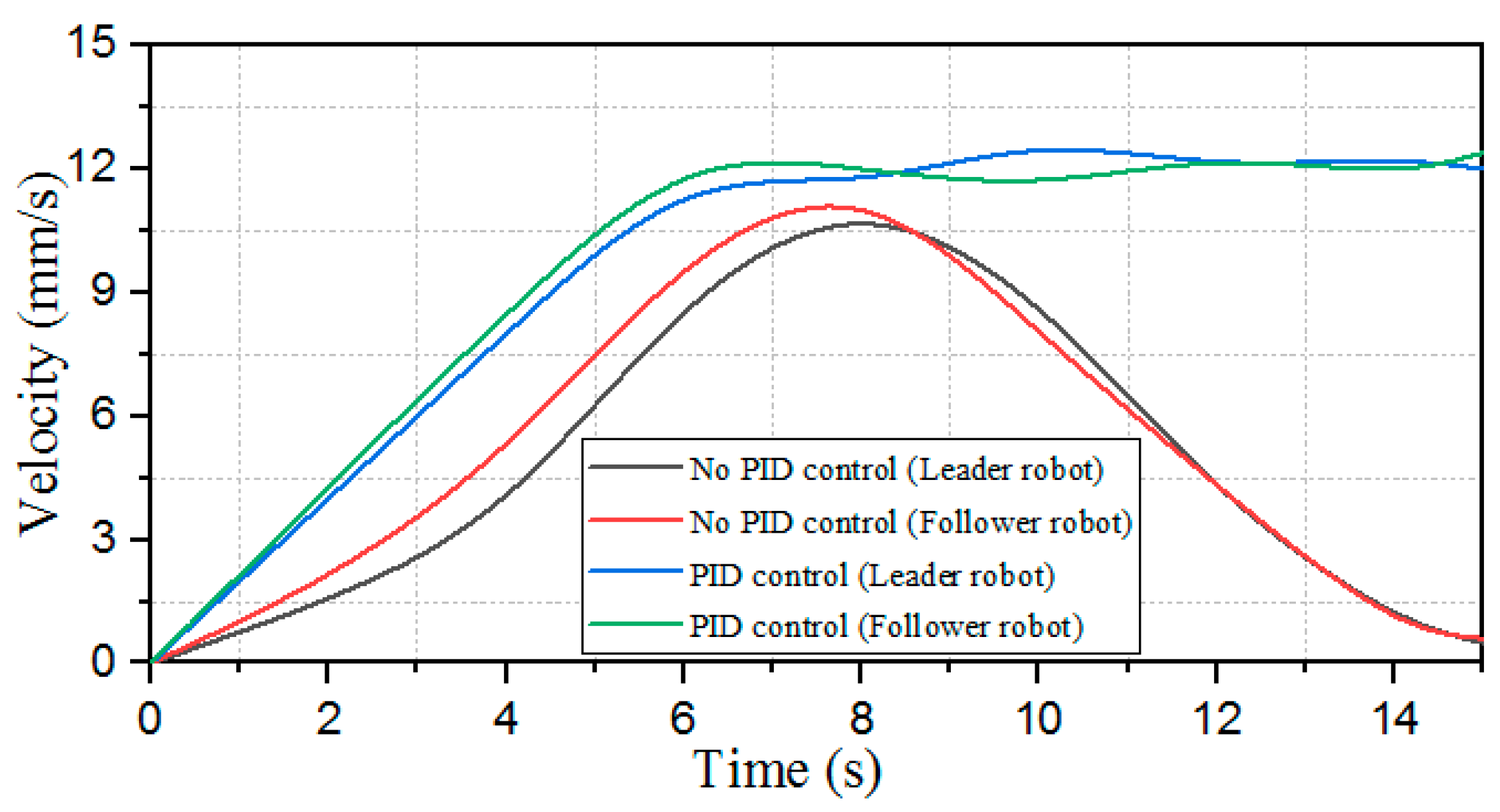

4.2. Experiment II: Formation Control Evaluation

4.3. Experiment III: Bidding Strategy for Multiple Robots

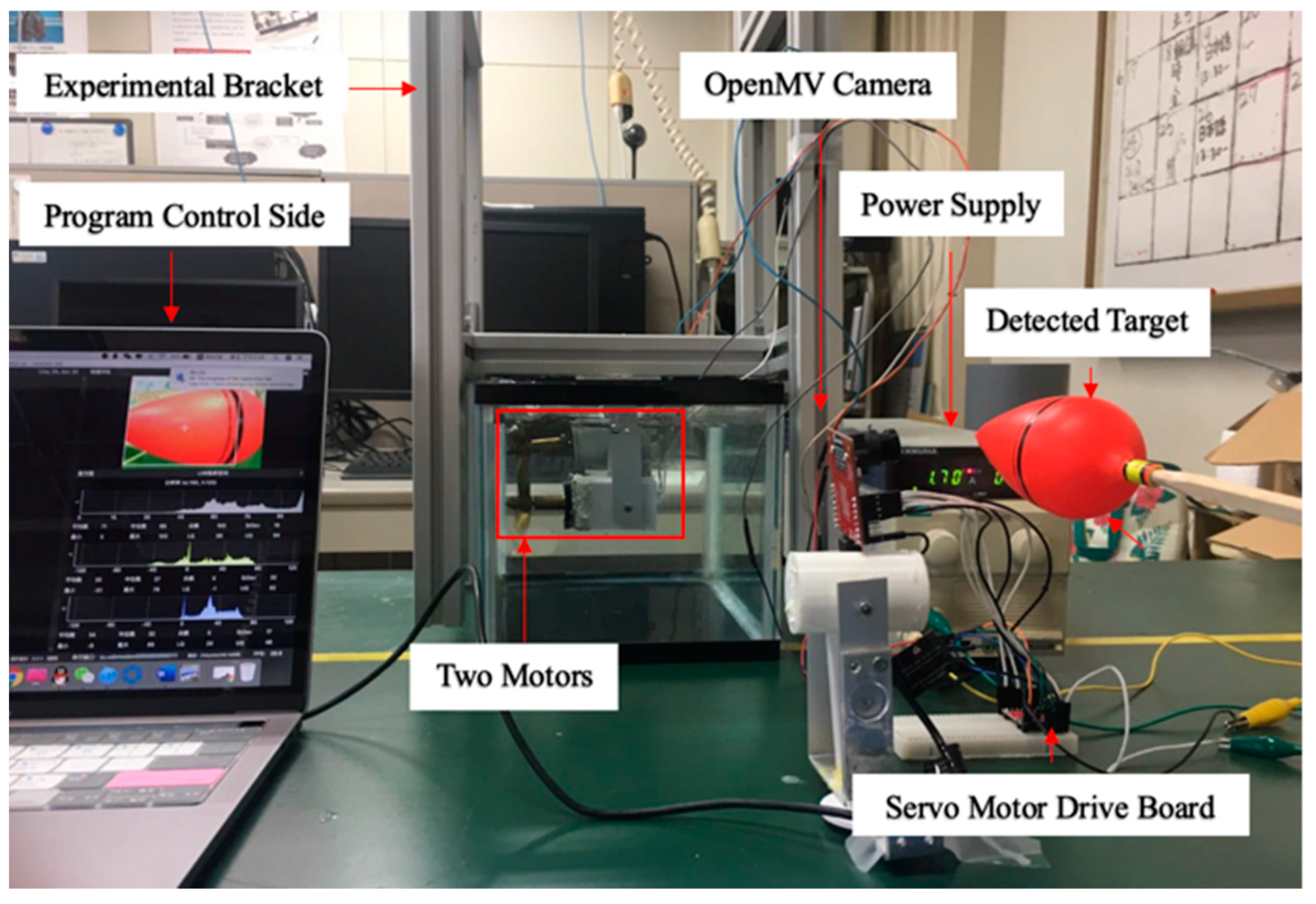

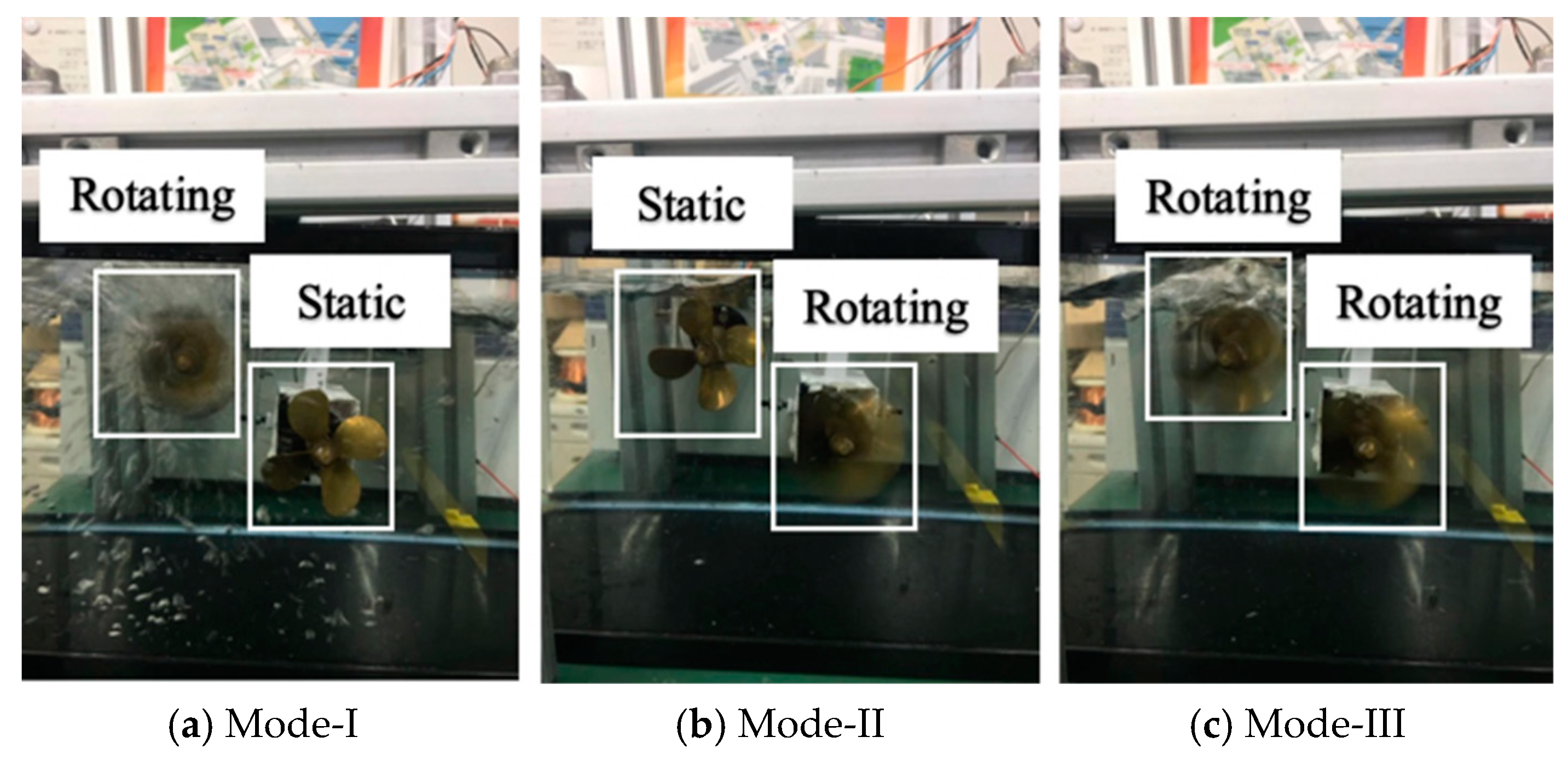

4.4. Experiment IV: Visual Servoing Experiment

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Zheng, L.; Guo, S.; Piao, Y.; Gu, S.; An, R.; Sui, W. Study on cooperative control algorithm of two spherical amphibious robots. In Proceedings of the 2019 IEEE International Conference on Mechatronics and Automation (ICMA), Tianjin, China, 4–7 August 2019; pp. 76–80.

- Verma, S.; Xu, J.X. Analytic modeling for precise speed tracking of multilink robotic fish. IEEE Trans. Ind. Electron. 2017, 65, 5665–5672.

- Wu, Z.; Liu, J.; Yu, J.; Fang, H. Development of a novel robotic dolphin and its application to water quality monitoring. IEEE/ASME Trans. Mechatron. 2017, 22, 2130–2140.

- Bi, C.; Guix, M.; Johnson, B.; Jing, W.; Cappelleri, D. Design of microscale magnetic tumbling robots for locomotion in multiple environments and complex terrains. Micromachines 2018, 9, 68.

- Wang, W.; Yan, G.; Wang, Z.; Jiang, P.; Meng, Y.; Chen, F.; Xue, R. A Novel Expanding Mechanism of Gastrointestinal Microrobot: Design, Analysis and Optimization. Micromachines 2019, 10, 724.

- Hu, T.; Low, K.H.; Shen, L.; Xu, X. Effective phase tracking for bioinspired undulations of robotic fish models: A learning control approach. IEEE/ASME Trans. Mechatron. 2012, 19, 191–200.

- Giorgio-Serchi, F.; Arienti, A.; Laschi, C. Underwater soft-bodied pulsed-jet thrusters: Actuator modeling and performance profiling. Int. J. Robot. Res. 2016, 35, 1308–1329.

- Kohl, A.M.; Kelasidi, E.; Mohammadi, A.; Maggiore, M.; Pettersen, K.Y. Planar maneuvering control of underwater snake robots using virtual holonomic constraints. Bioinspiration Biomim. 2016, 11, 065005.

- Ailon, A.; Zohar, I. Control strategies for driving a group of nonholonomic kinematic mobile robots in formation along a time-parameterized path. IEEE/ASME Trans. Mechatron. 2011, 17, 326–336.

- Xing, H.; Shi, L.; Tang, K.; Guo, S.; Hou, X.; Liu, Y.; Hu, Y. Robust RGB-D Camera and IMU Fusion-based Cooperative and Relative Close-range Localization for Multiple Turtle-inspired Amphibious Spherical Robots. J. Bionic Eng. 2019, 16, 442–454.

- Zhang, H.; Wang, W.; Zhou, Y.; Wang, C.; Fan, R.; Xie, G. CSMA/CA-based electrocommunication system design for underwater robot groups. In Proceedings of the 2017 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Vancouver, BC, Canada, 24–28 September 2017; pp. 2415–2420.

- Ortiz, A.; Bonnin-Pascual, F.; Garcia-Fidalgo, E. Vision-based corrosion detection assisted by a micro-aerial vehicle in a vessel inspection application. Sensors 2016, 16, 2118.

- Garcia-Fidalgo, E.; Ortiz, A.; Massot-Campos, M. Vision-Based Control for an AUV in a Multi-robot Undersea Intervention Task. In Proceedings of the Iberian Robotics Conference, Porto, Portugal, 20–22 November 2017; Springer: Cham, Switzerland, 2017.

- Bonnin-Pascual, F.; Ortiz, A.; Garcia-Fidalgo, E. Testing the Control Architecture of a Micro-Aerial Vehicle for Visual Inspection of Vessels. In Proceedings of the Iberian Robotics Conference, Porto, Portugal, 20–22 November 2017; Springer: Cham, Switzerland, 2017.

- Cai, H.; Huang, J. The leader-following consensus for multiple uncertain Euler-Lagrange systems with an adaptive distributed observer. IEEE Trans. Autom. Control 2015, 61, 3152–3157.

- Wang, H. Second-order consensus of networked thrust-propelled vehicles on directed graphs. IEEE Trans. Autom. Control 2015, 61, 222–227.

- Ghapani, S.; Mei, J.; Ren, W.; Song, Y. Fully distributed flocking with a moving leader for Lagrange networks with parametric uncertainties. Automatica 2016, 67, 67–76.

- Meng, Z.; Xia, W.; Johansson, K.H.; Hirche, S. Stability of positive switched linear systems: Weak excitation and robustness to time-varying delay. IEEE Trans. Autom. Control 2016, 62, 399–405.

- Dong, X.; Hu, G. Time-varying formation tracking for linear multiagent systems with multiple leaders. IEEE Trans. Autom. Control 2017, 62, 3658–3664.

- Wei, H.; Lv, Q.; Duo, N.; Wang, G.; Liang, B. Consensus Algorithms Based Multi-Robot Formation Control under Noise and Time Delay Conditions. Appl. Sci. 2019, 9, 1004.

- Wen, G.; Zhao, Y.; Duan, Z.; Yu, W.; Chen, G. Containment of higher-order multi-leader multi-agent systems: A dynamic output approach. IEEE Trans. Autom. Control 2015, 61, 1135–1140.

- Mu, X.; Liu, K. Containment control of single-integrator network with limited communication data rate. IEEE Trans. Autom. Control 2015, 61, 2232–2238.

- Pearce, M.; Mutlu, B.; Shah, J.; Radwin, R. Optimizing makespan and ergonomics in integrating collaborative robots into manufacturing processes. IEEE Trans. Autom. Sci. Eng. 2018, 15, 1772–1784.

- Biswas, S.; Anavatti, S.G.; Garratt, M.A. A Time-Efficient Co-Operative Path Planning Model Combined with Task Assignment for Multi-Agent Systems. Robotics 2019, 8, 35.

- Bit-Monnot, A.; Leofante, F.; Pulina, L.; Tacchella, A. SMT-based Planning for Robots in Smart Factories. In Proceedings of the International Conference on Industrial, Engineering and Other Applications of Applied Intelligent Systems, Graz, Austria, 9–11 July 2019; Springer: Cham, Switzerland, 2019.

- Zhang, J.; Ding, D.W.; An, C. Fault-Tolerant Containment Control for Linear Multi-Agent Systems: An Adaptive Output Regulation Approach. IEEE Access 2019, 7, 89306–89315.

- Yu, J.; Liu, J.; Wu, Z.; Fang, H. Depth control of a bioinspired robotic dolphin based on sliding-mode fuzzy control method. IEEE Trans. Ind. Electron. 2017, 65, 2429–2438.

- Zhao, L.; Yu, J.; Shi, P. Command Filtered Backstepping-Based Attitude Containment Control for Spacecraft Formation. IEEE Trans. Syst. Man Cybern. Syst. 2019, 20, 1–10.

- Zhang, Y.; Li, J.; Zakharov, Y.V.; Li, J.; Li, Y.; Lin, C.; Li, X. Deep learning based single carrier communications over time-varying underwater acoustic channel. IEEE Access 2019, 7, 38420–38430.

- Park, K.-H. IEEE Access Special Section Editorial: Underwater Wireless Communications and Networking. IEEE Access 2019, 7, 52288–52294.

- Siddiqui, S.I.; Dong, H. Time Diversity Passive Time Reversal for Underwater Acoustic Communications. IEEE Access 2019, 7, 24258–24266.

- Centelles, D.; Soriano-Asensi, A.; Martí, J.V.; Marín, R.; Sanz, P.J. Underwater Wireless Communications for Cooperative Robotics with UWSim-NET. Appl. Sci. 2019, 9, 3526.

- Xu, F.; Wang, H.; Wang, J.; Au, K.W.S.; Chen, W. Underwater Dynamic Visual Servoing for A Soft Robot Arm with Online Distortion Correction. IEEE/ASME Trans. Mechatron. 2019, 24, 979–989.

- Li, J.; Huang, H.; Xu, Y.; Wu, H.; Wan, L. Uncalibrated Visual Servoing for Underwater Vehicle Manipulator Systems with an Eye in Hand Configuration Camera. Sensors 2019, 19, 5469.

- Sheng, M.; Tang, S.; Qin, H.; Wan, L. Clustering Cloud-Like Model-Based Targets Underwater Tracking for AUVs. Sensors 2019, 19, 370.

- Zheng, L.; Guo, S.; Gu, S. The communication and stability evaluation of amphibious spherical robots. Microsyst. Technol. 2018, 25, 2625–2636.

- Zheng, L.; Guo, S.; Gu, S. Structure Improvement and Stability for an Amphibious Spherical Robot. In Proceedings of the 2018 IEEE International Conference on Mechatronics and Automation (ICMA), Changchun, China, 5–8 August 2018; IEEE: Piscataway Township, NJ, USA, 2018.

- Gu, S.; Guo, S. Performance evaluation of a novel propulsion system for the spherical underwater robot (SURIII). Appl. Sci. 2017, 7, 1196.

- Xing, H.; Guo, S.; Shi, L. Hybrid locomotion evaluation for a novel amphibious spherical robot. Appl. Sci. 2018, 8, 156.

- Hou, X.; Guo, S.; Shi, L. Hydrodynamic analysis-based modeling and experimental verification of a new water-jet thruster for an amphibious spherical robot. Sensors 2019, 19, 259.

- Zheng, L.; Piao, Y.; Ma, Y. Development and control of articulated amphibious spherical robot. Microsyst. Technol. in press.

- Guo, S.; He, Y.; Shi, L.; Pan, S.; Tang, K.; Xiao, R.; Guo, P. Modal and fatigue analysis of critical components of an amphibious spherical robot. Microsyst. Technol. 2017, 23, 2233–2247.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Zheng, L.; Guo, S.; Piao, Y.; Gu, S.; An, R. Collaboration and Task Planning of Turtle-Inspired Multiple Amphibious Spherical Robots. Micromachines 2020, 11, 71. https://doi.org/10.3390/mi11010071