Sensors that Learn: The Evolution from Taste Fingerprints to Patterns of Early Disease Detection

Abstract

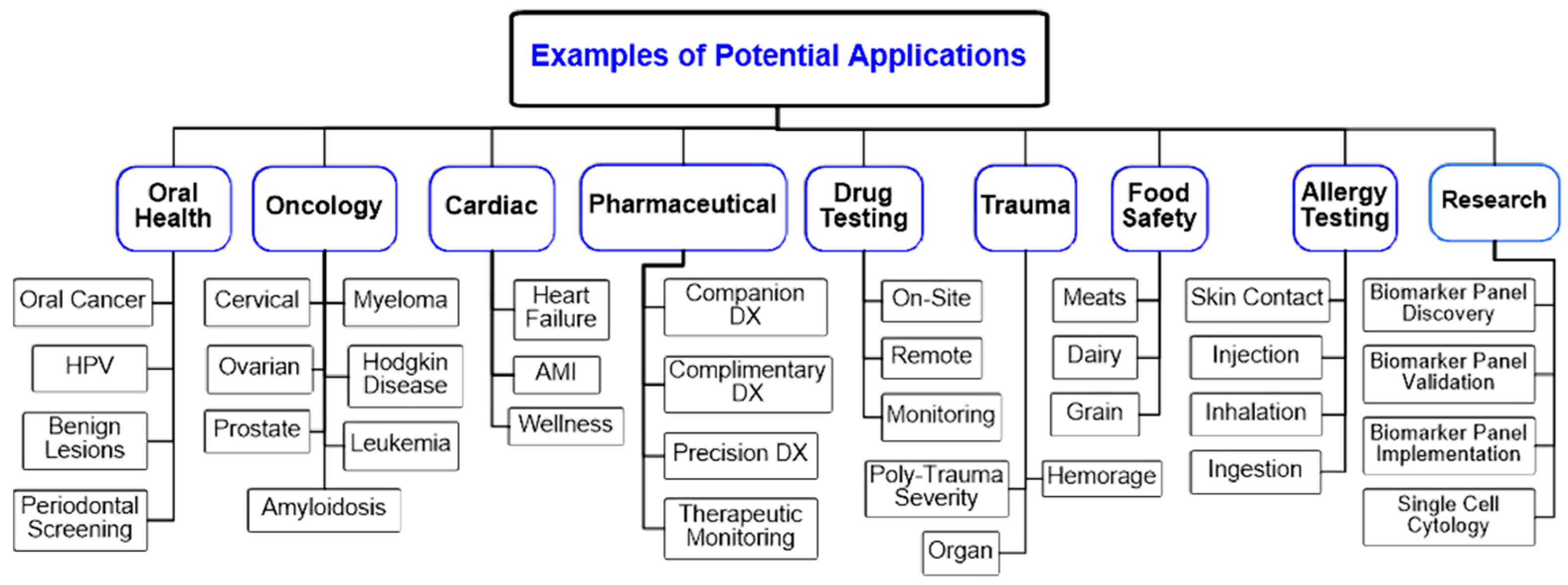

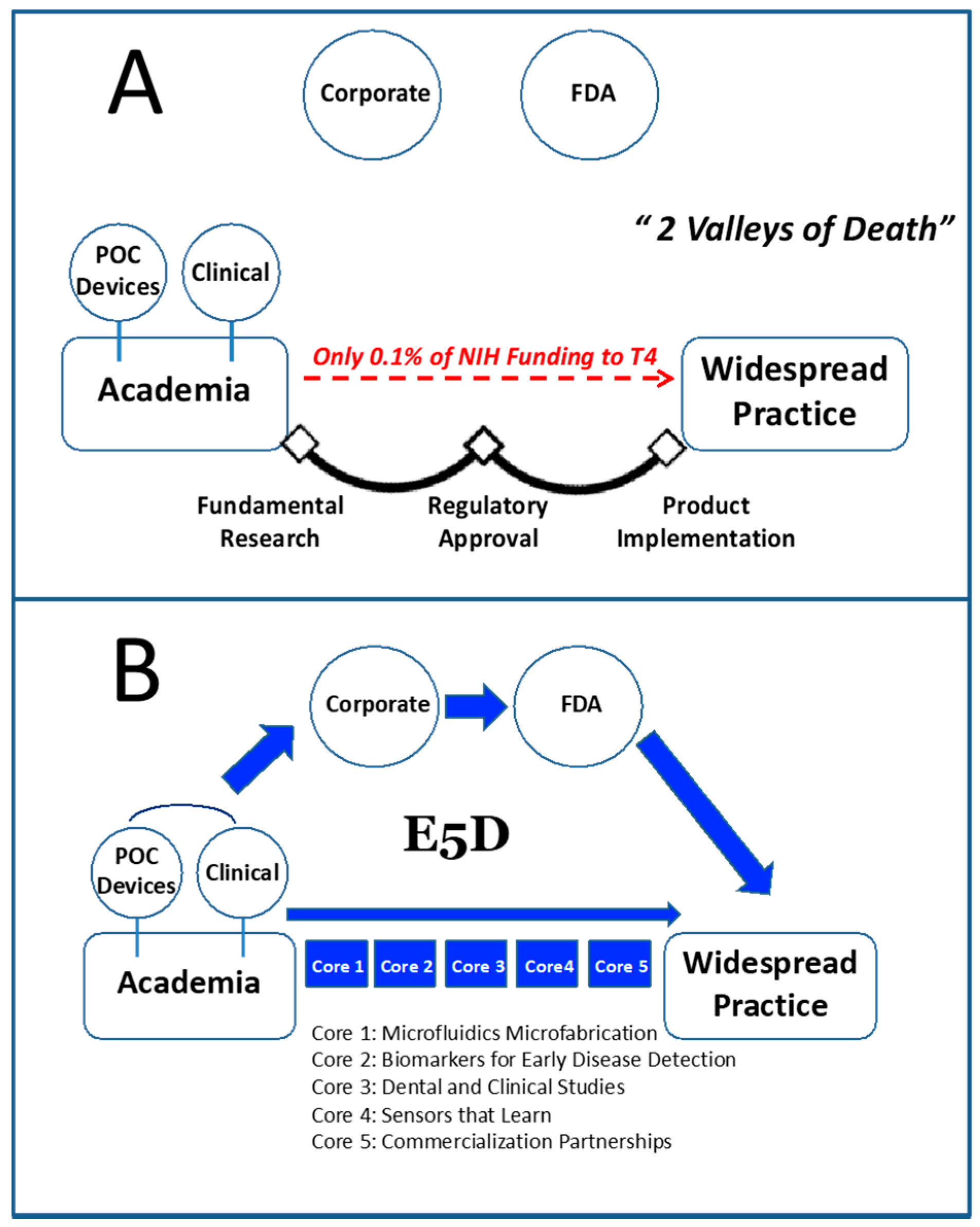

1. Introduction

- (1) To develop and integrate LOC methods for general chemistry, proteomic, genomic, and cellular analyses.

- (2) To accelerate the translation of laboratory findings into point-of-need sensors for real-world applications; that is, moving technologies from bench to bedside.

- (3) To create an efficient pipeline of medical diagnostic devices hosting relevant tests to measure condition-specific biomarkers and identify disease-specific fingerprints.

2. Sense of Taste

3. Background on Electronic Nose vs. Electronic Tongue

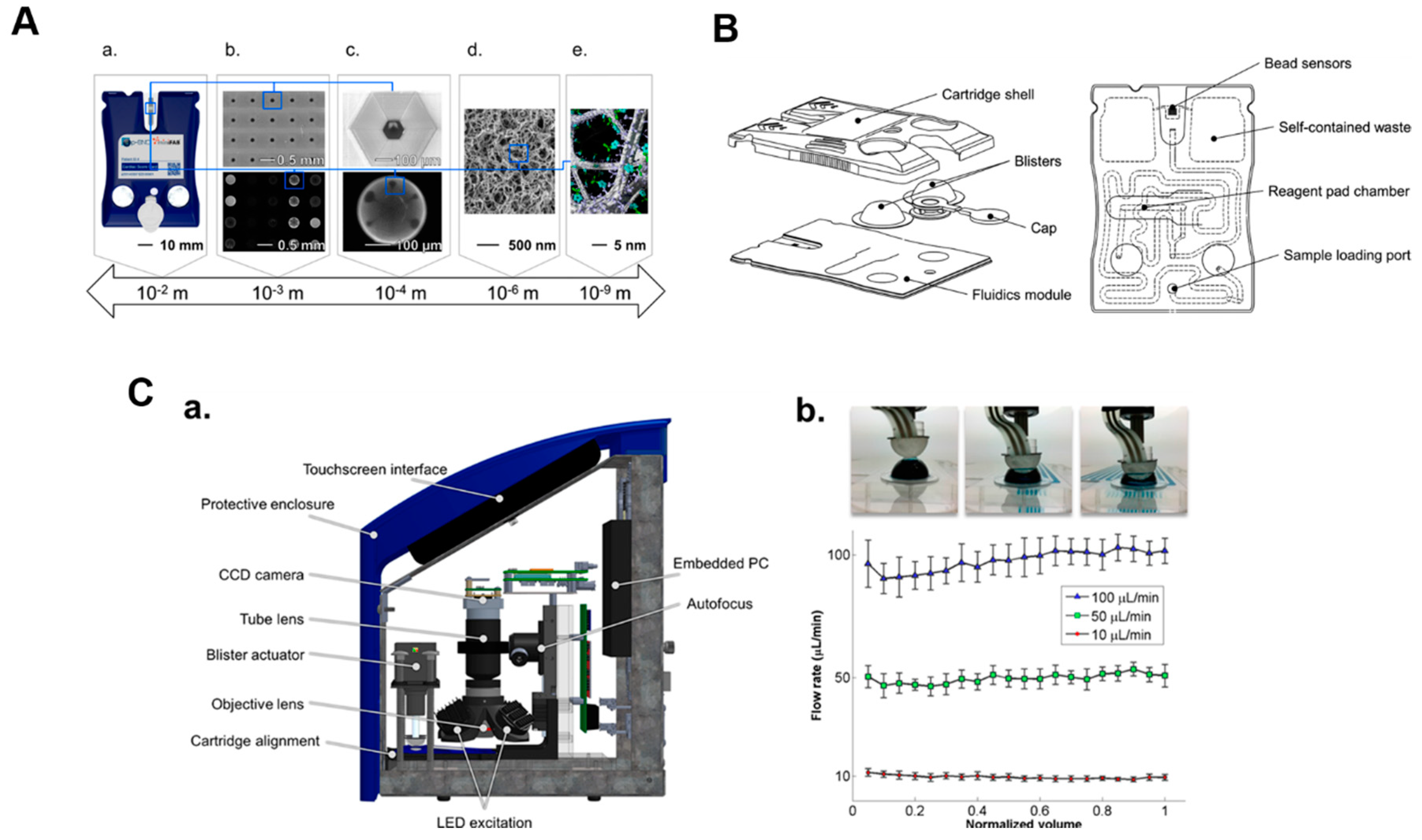

4. Electronic Taste Chip

5. Moving Past Taste and into a Programmable Sensing Platform

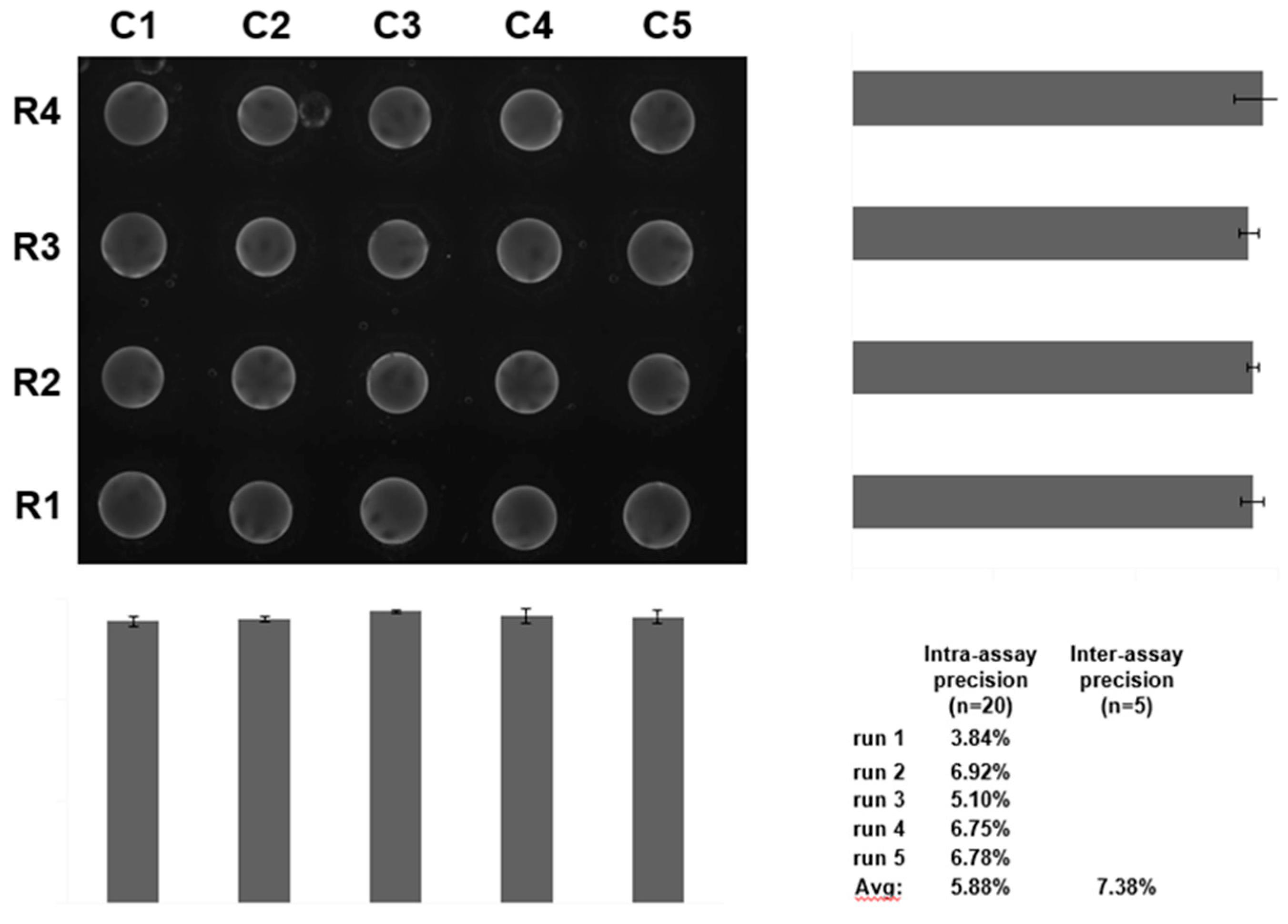

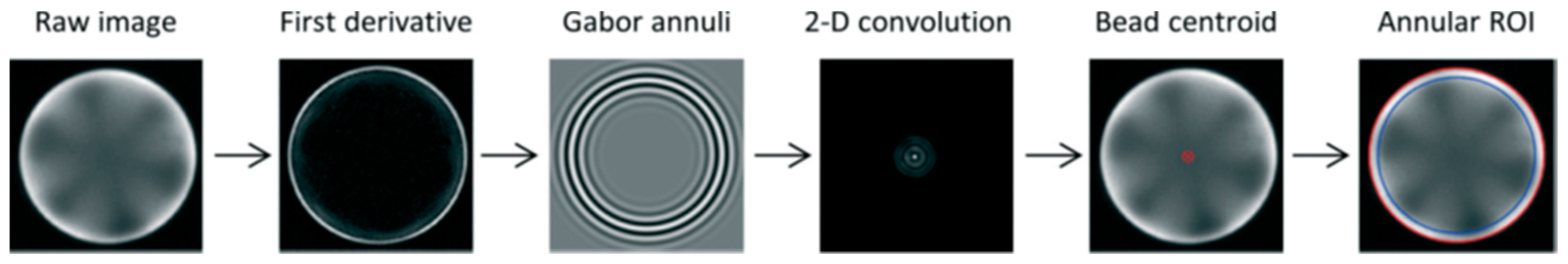

6. Image Analysis for the Bead-Based Programmable Bio-Nano-Chip (p-BNC) Sensor

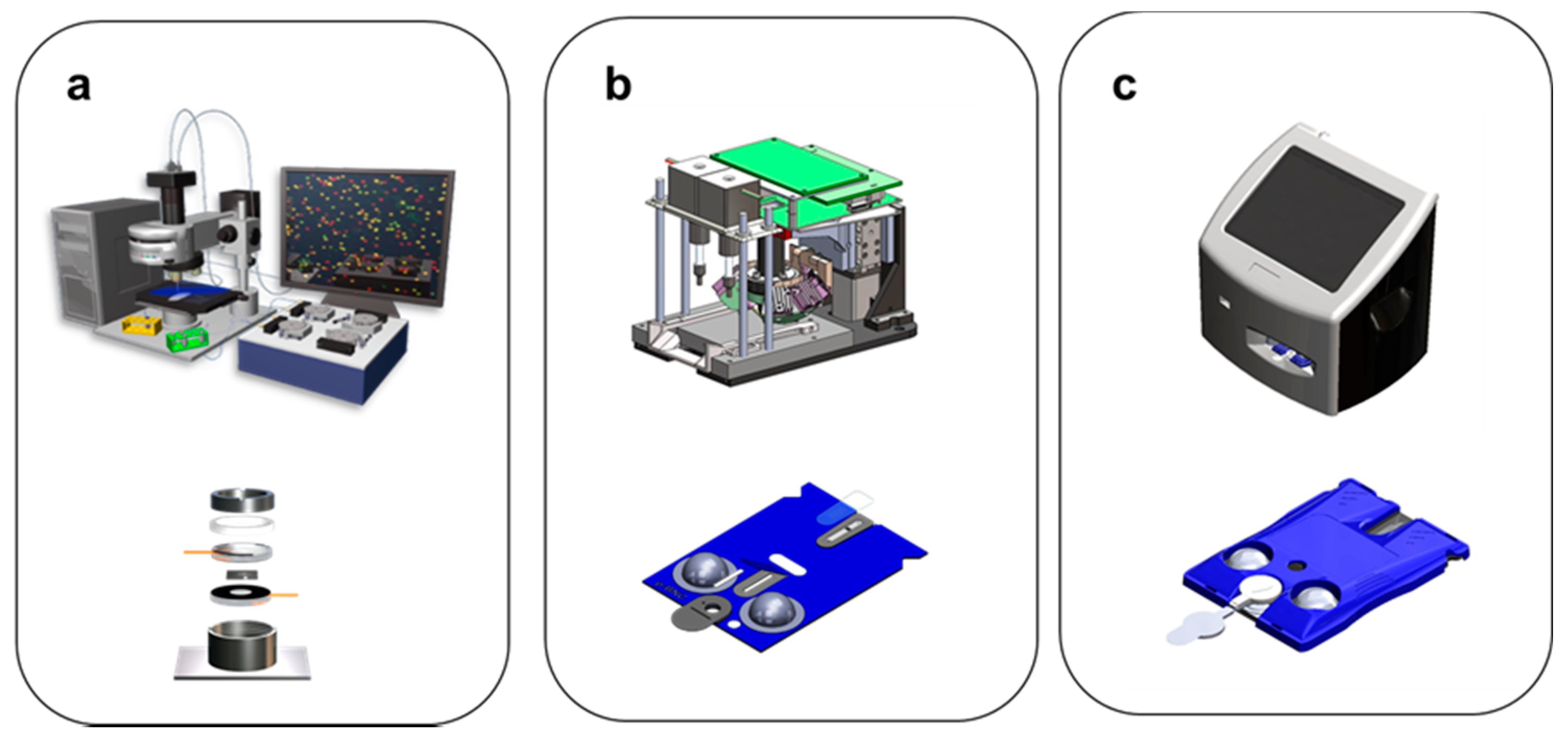

7. Evolution of the Smart Sensor Platform

- MACRO electronic taste chip (not integrated, chemistry of taste focus)

- MICRO p-BNC (partial integration, biomarker focus)

  - Bead configuration for solution phase detection

  - Membrane configuration

- MICRO platform to digitize biology (full integration, scalable cartridges)

  - Bead configuration cartridge for solution phase detection

  - Membrane cartridge configuration

  - Both configurations compatible with universal image instrumentation

8. Expansion of the Menu: Membrane-Based/Dual-Function Sensors and Additional Bead-Based Assays

9. Sensor Integration into Clinical Flow

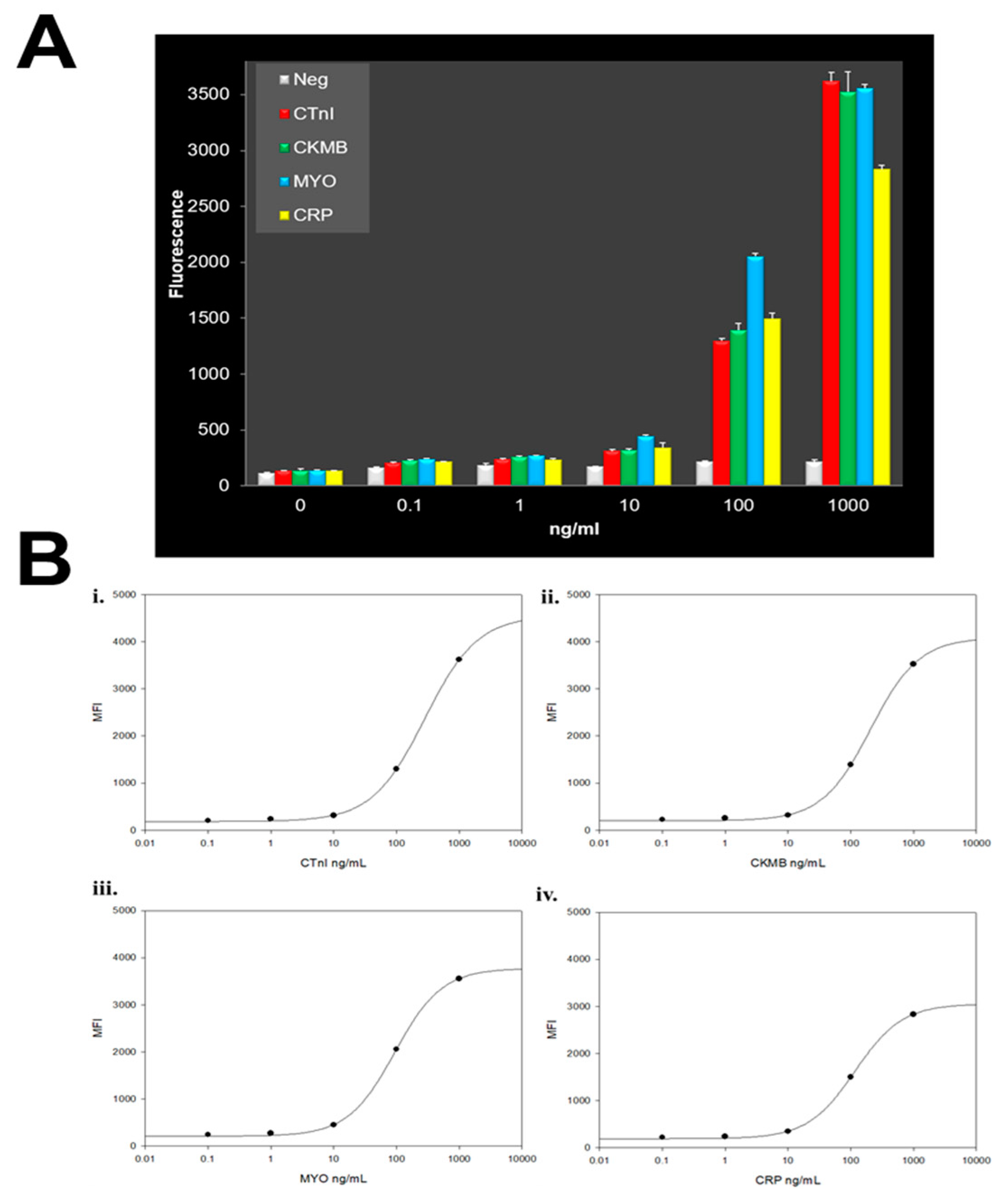

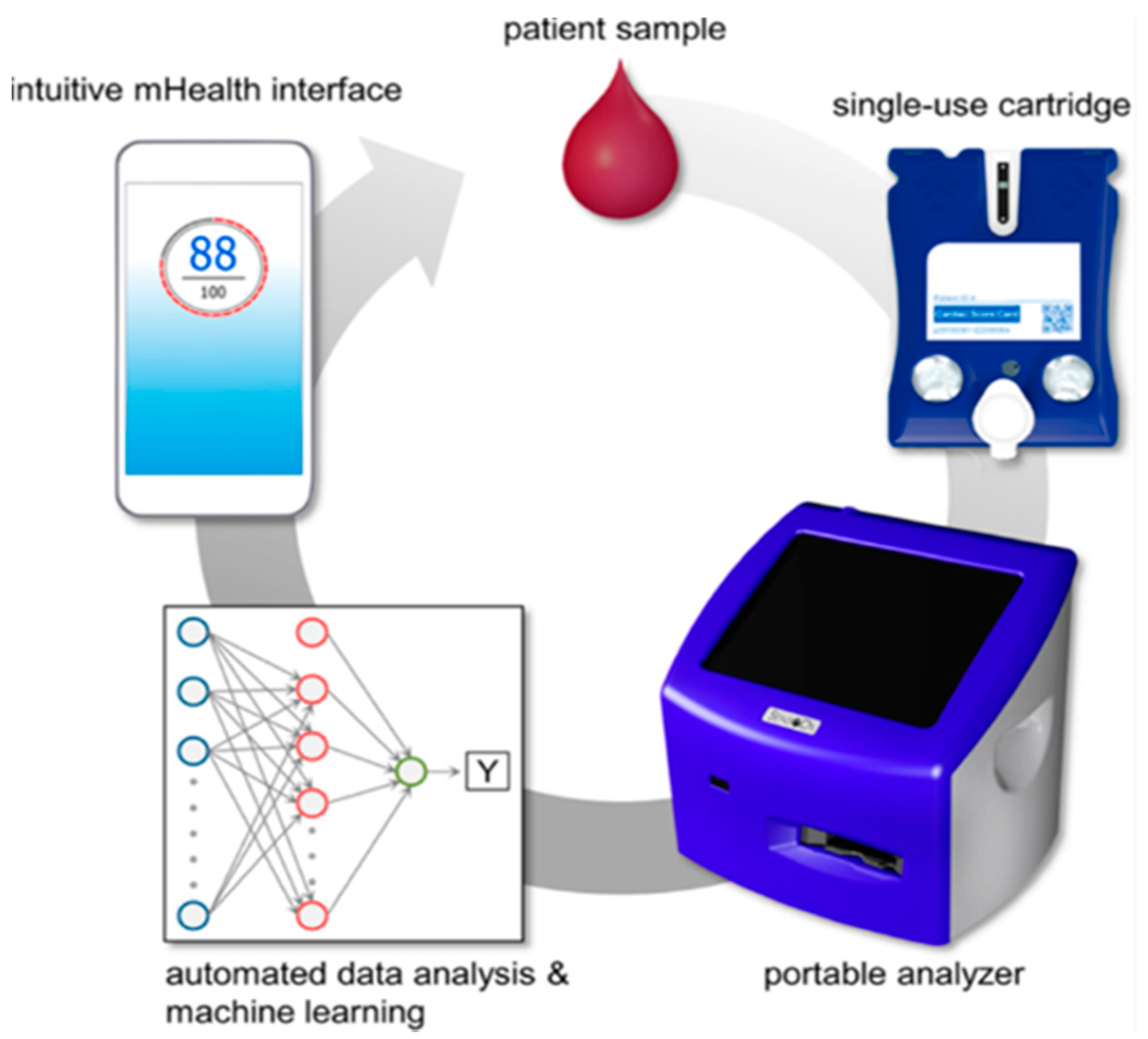

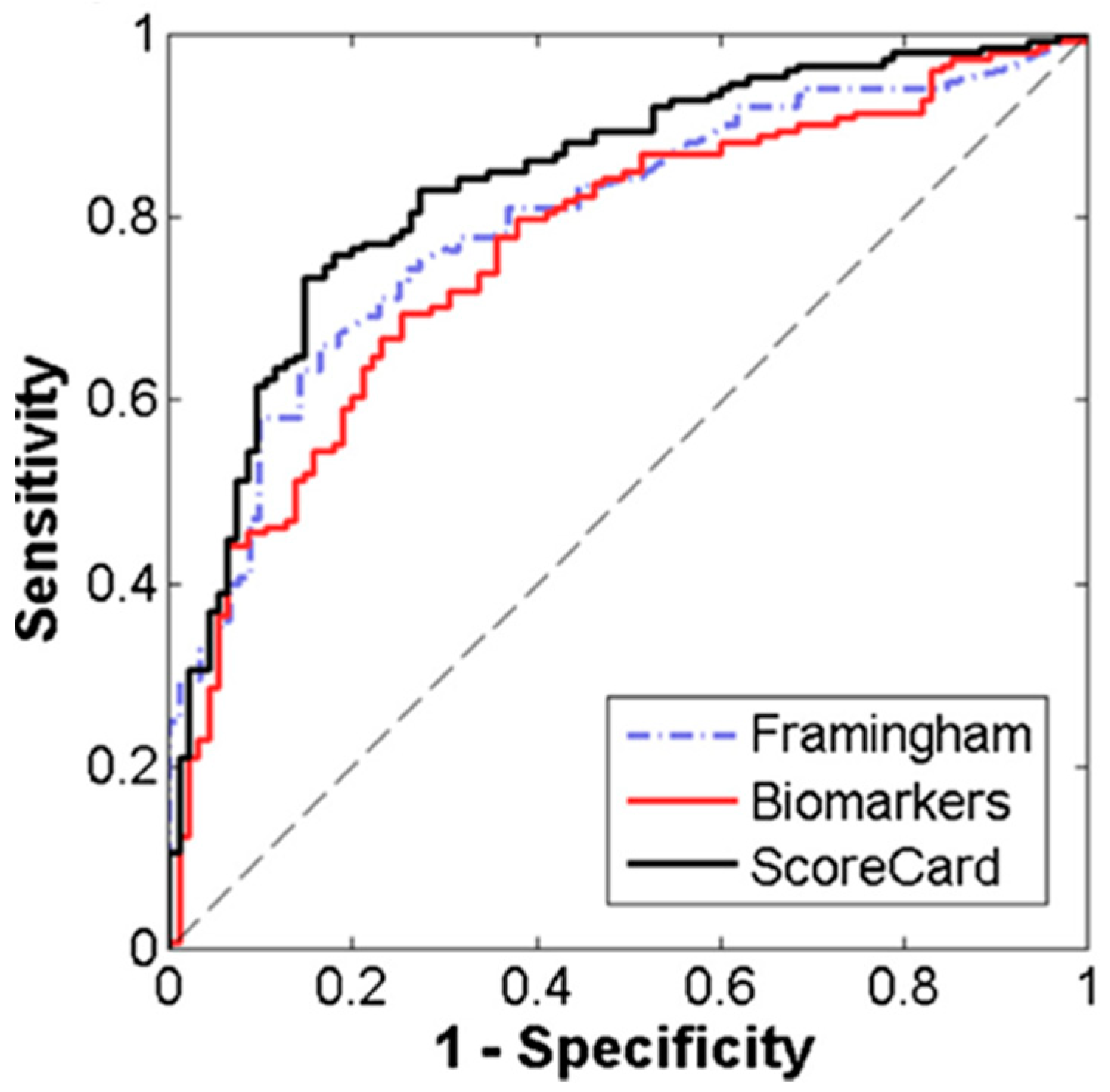

10. Artificial Intelligence-Linked Diagnostic Platforms for Early Cardiac Disease Detection

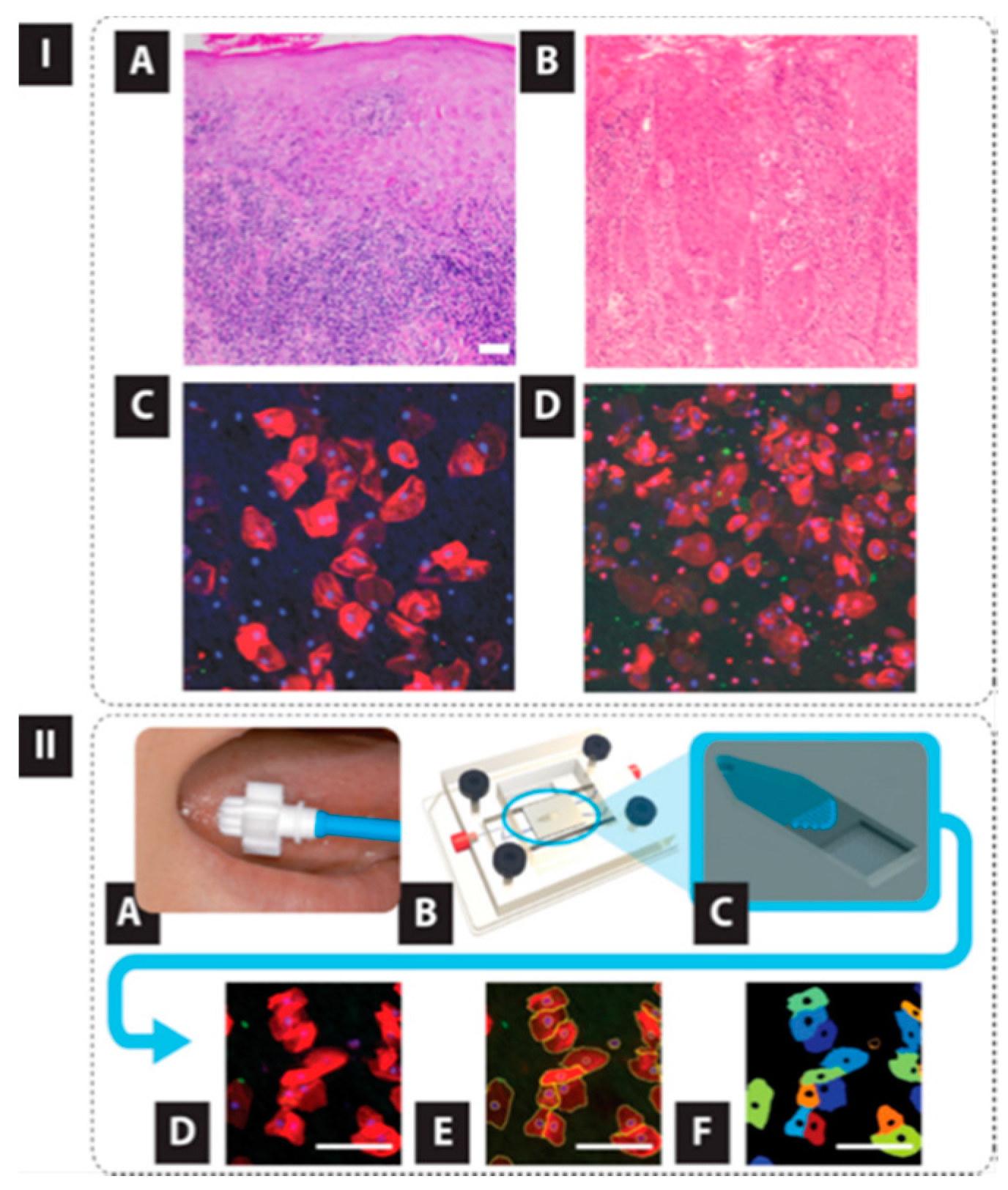

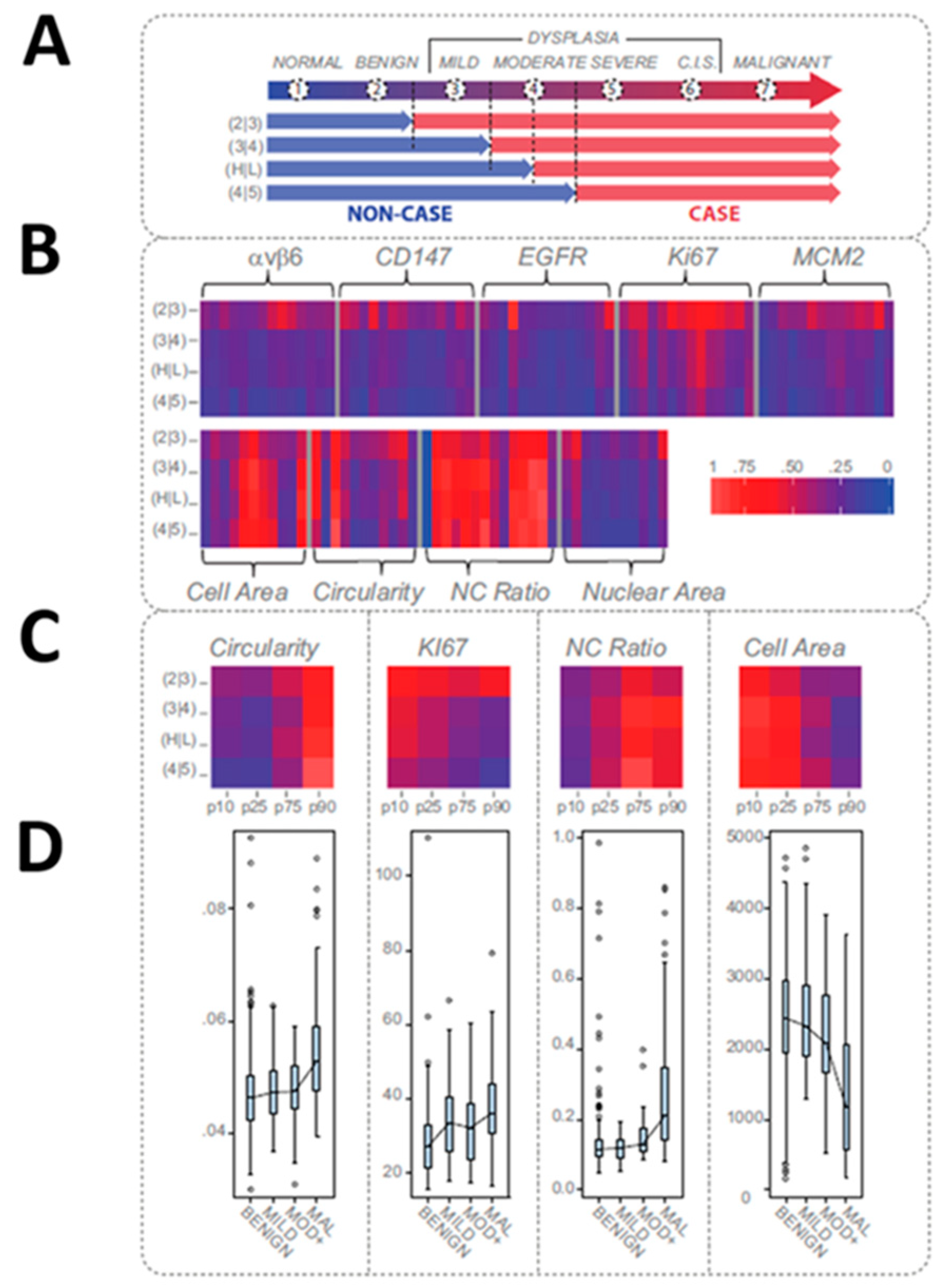

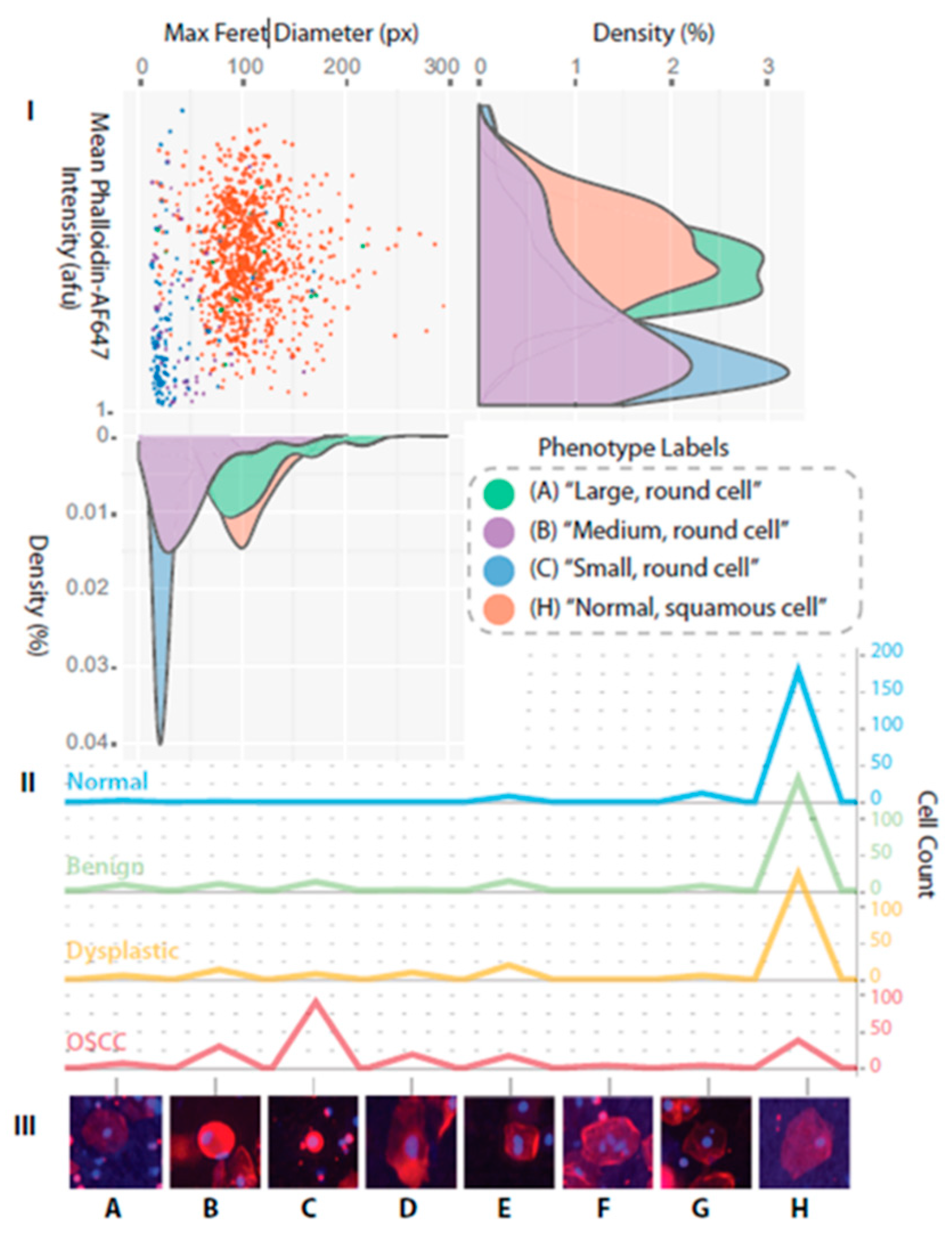

11. Point of Care Oral Cytopathology Tool (POCOCT) for Precision Oncology

12. Discussion

13. Conclusions

Author Contributions

Funding

Conflicts of Interest


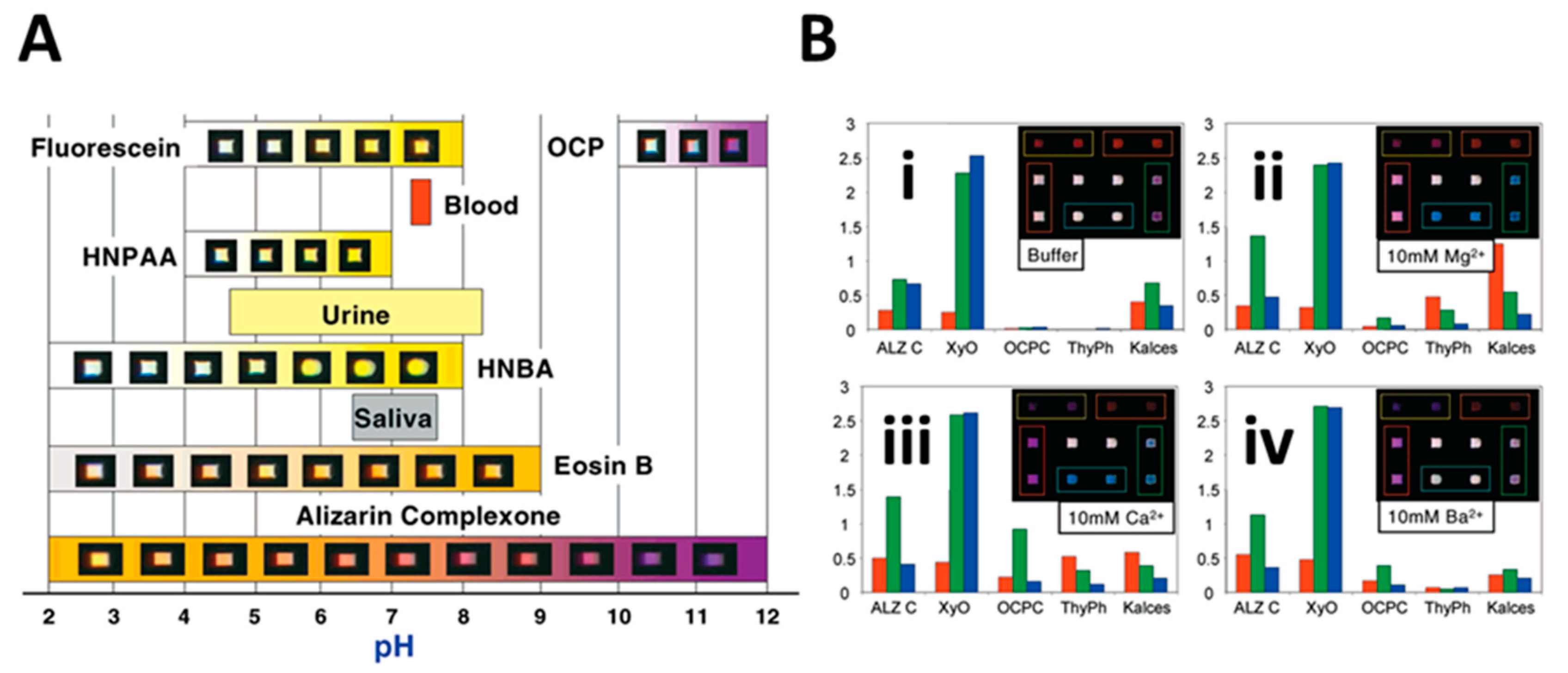


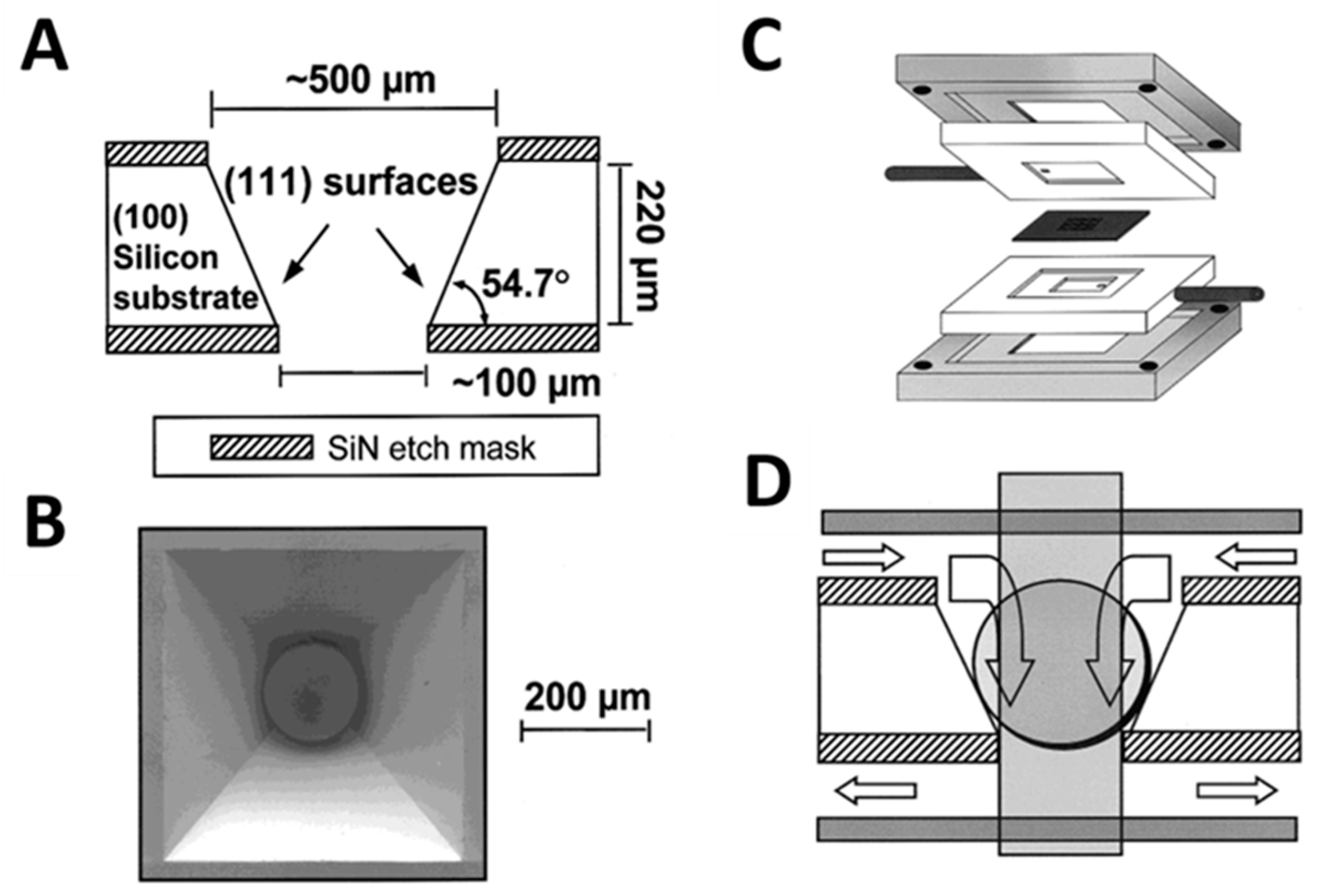

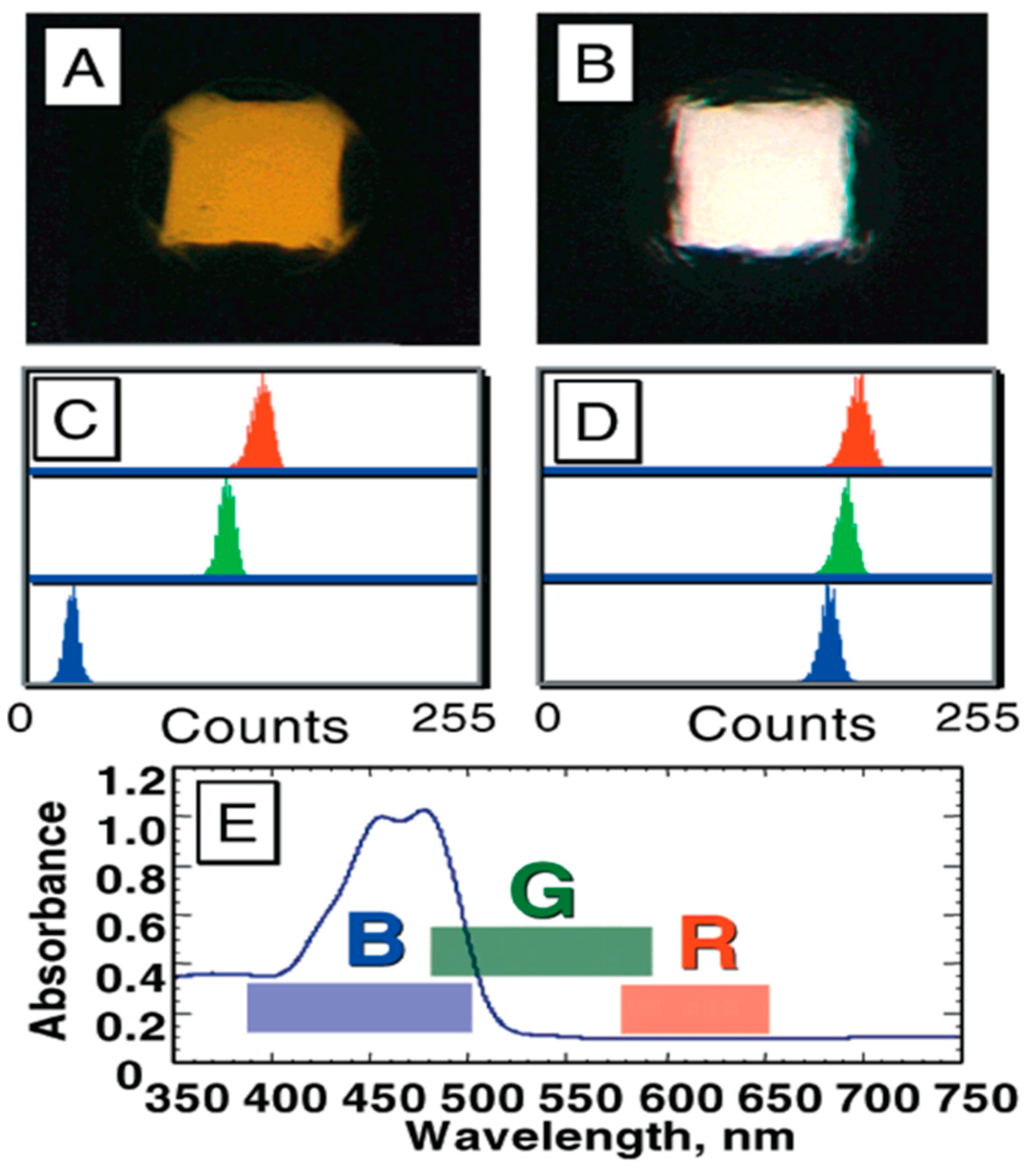


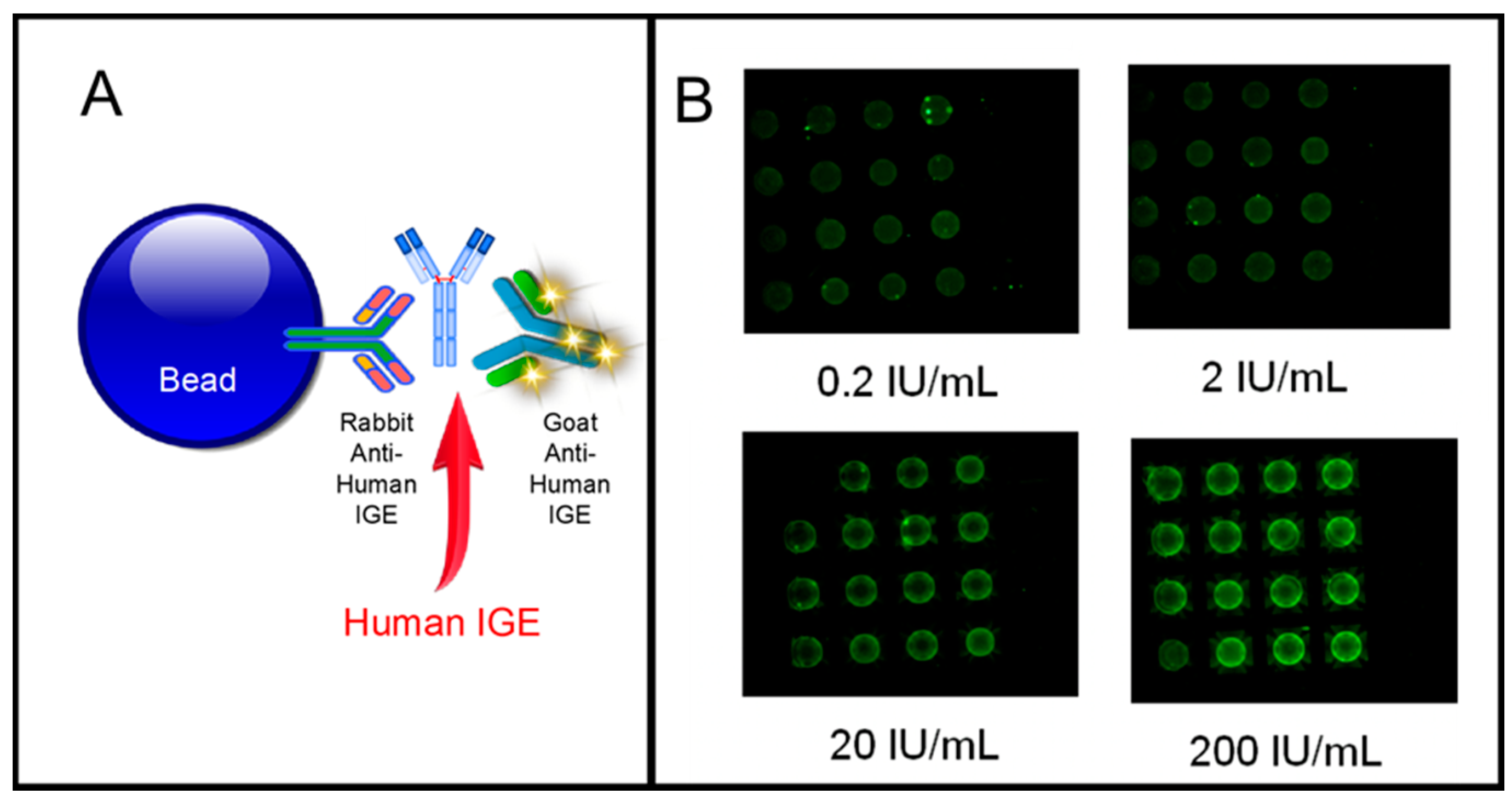


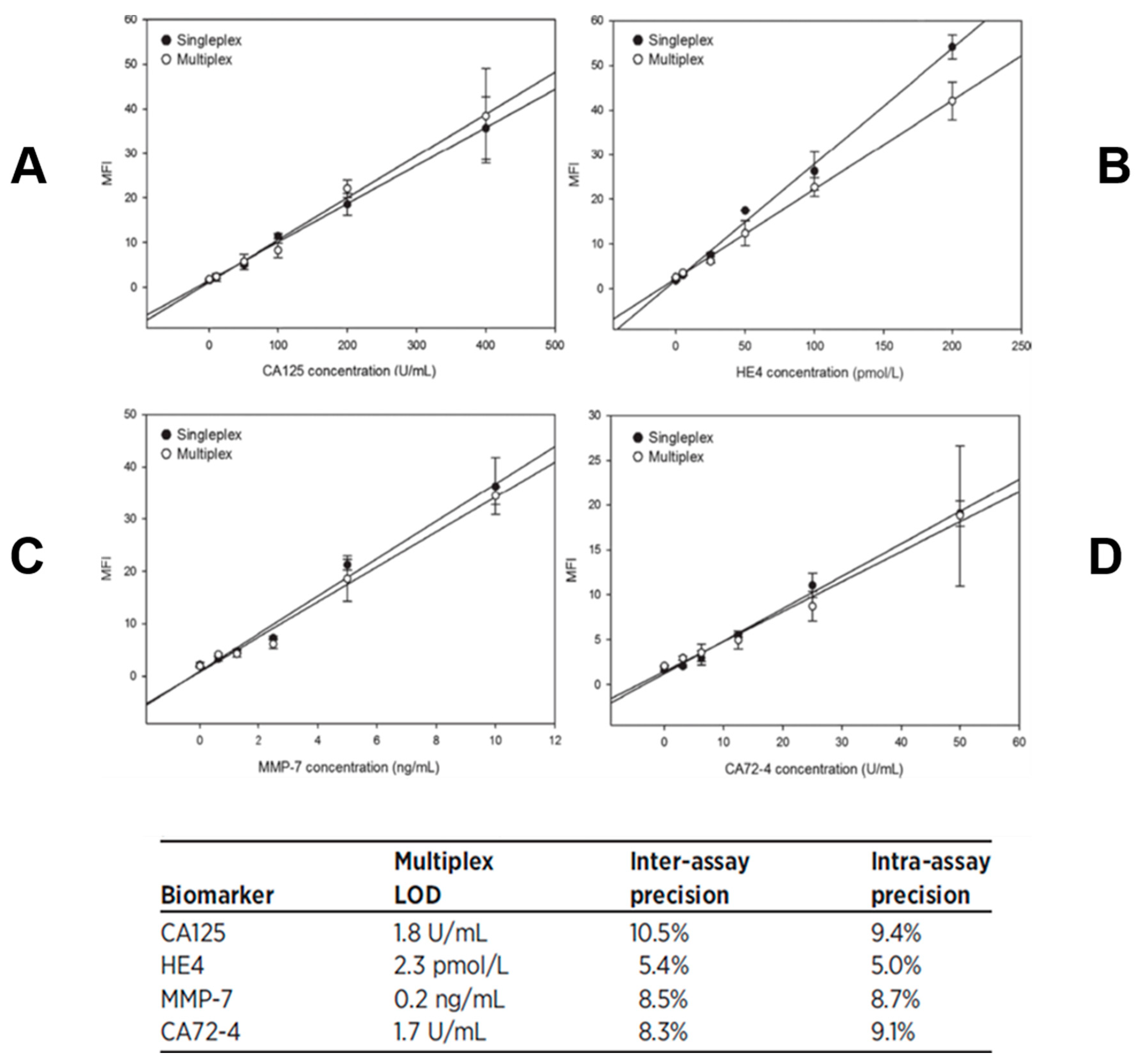

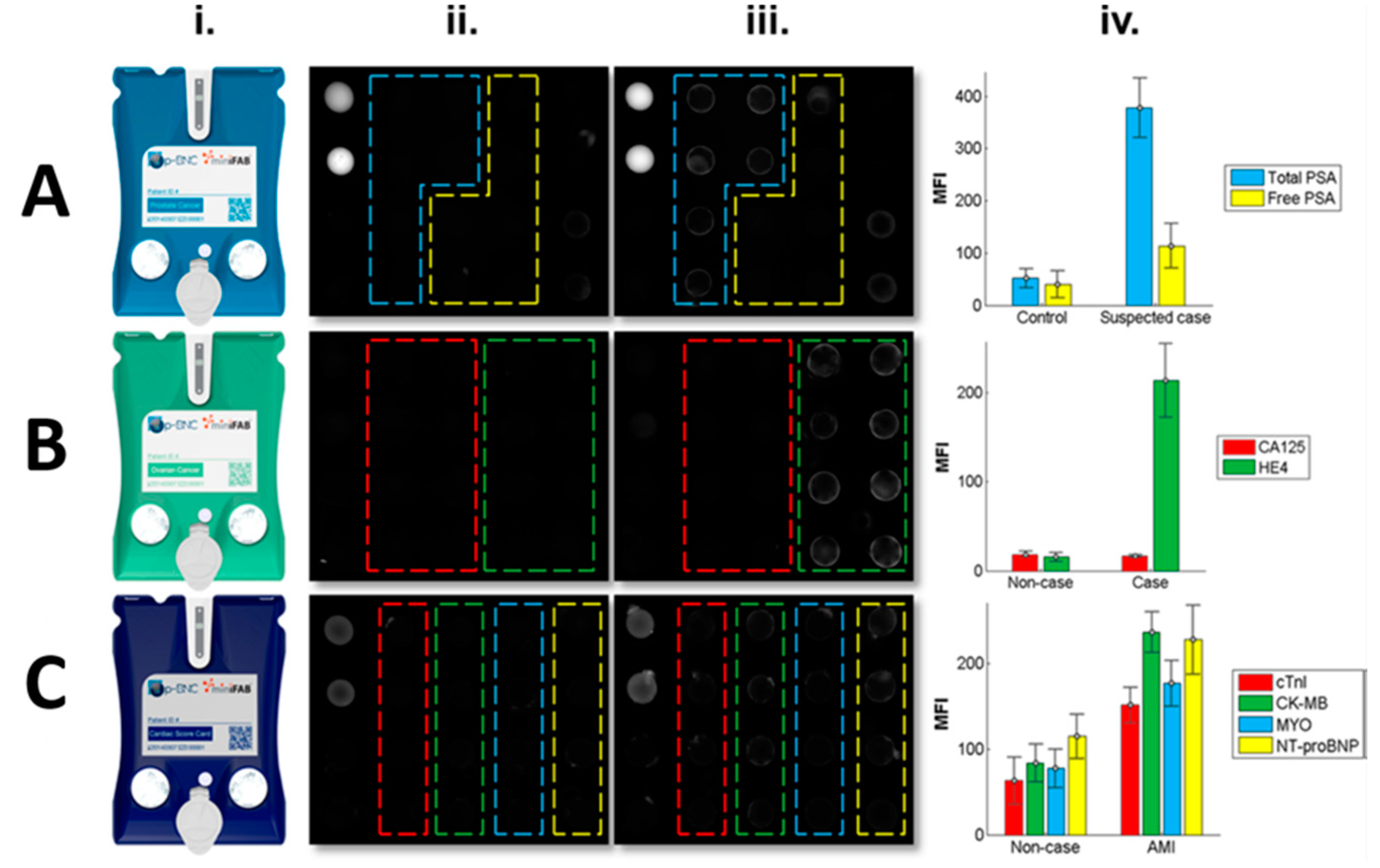


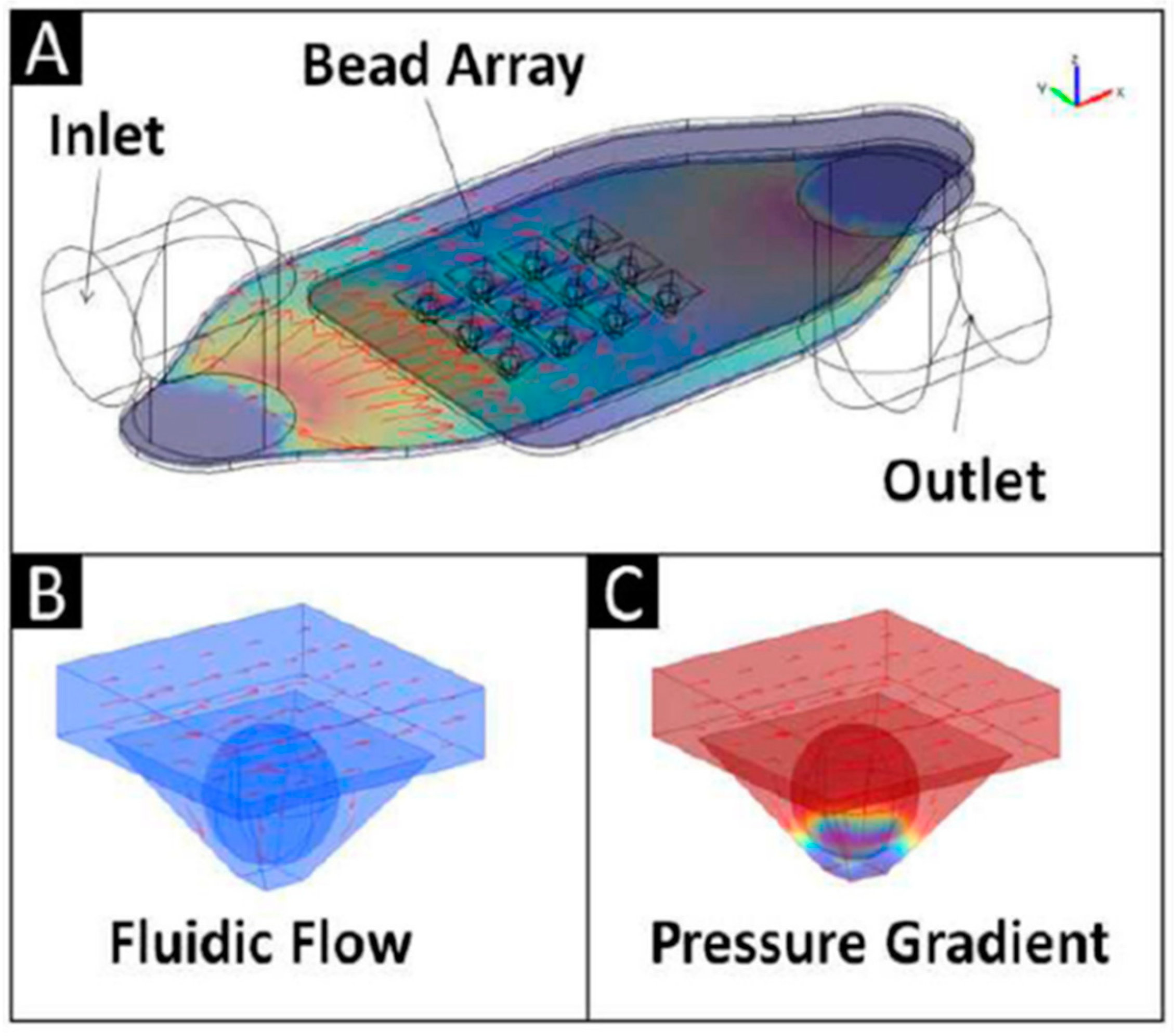

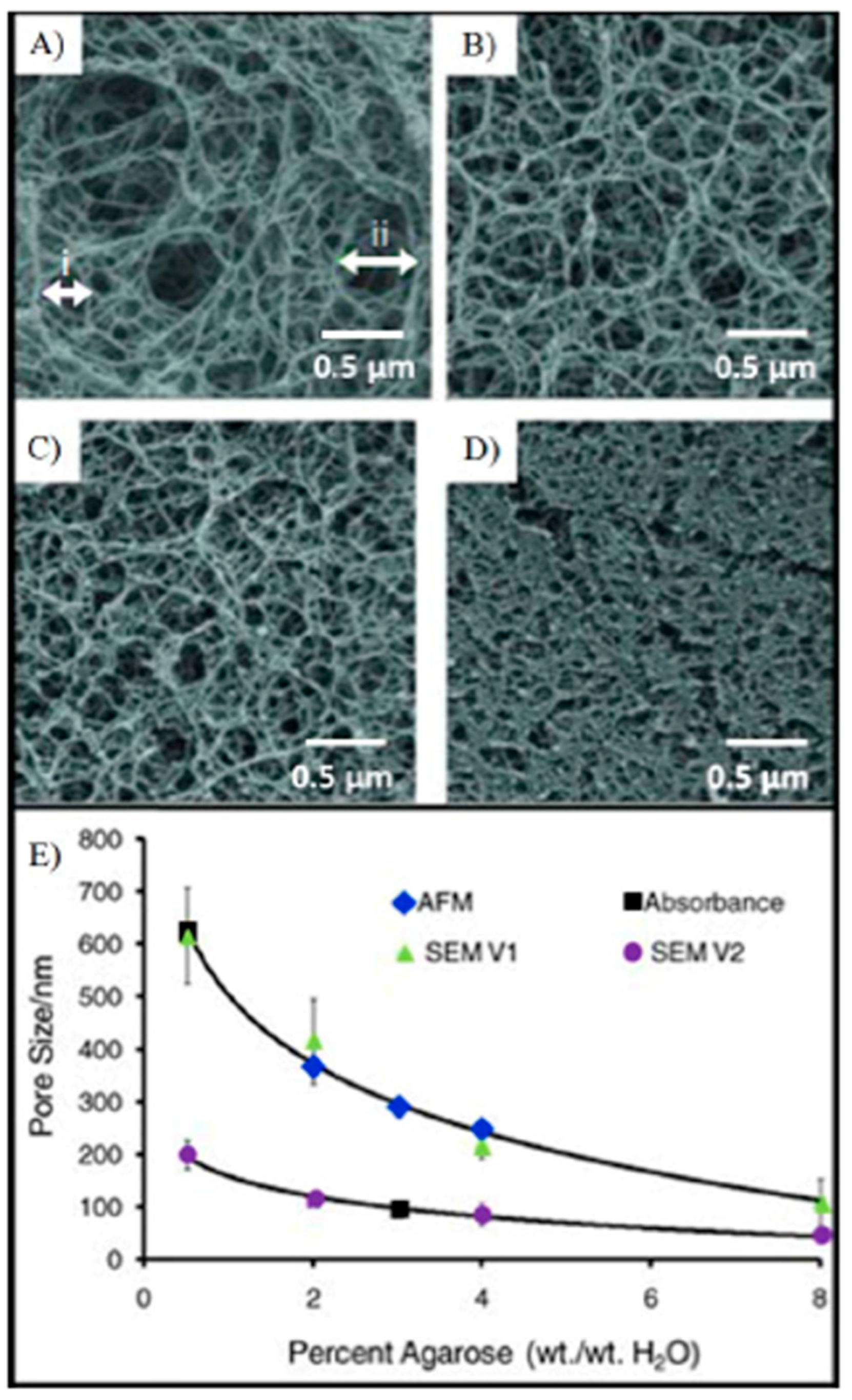

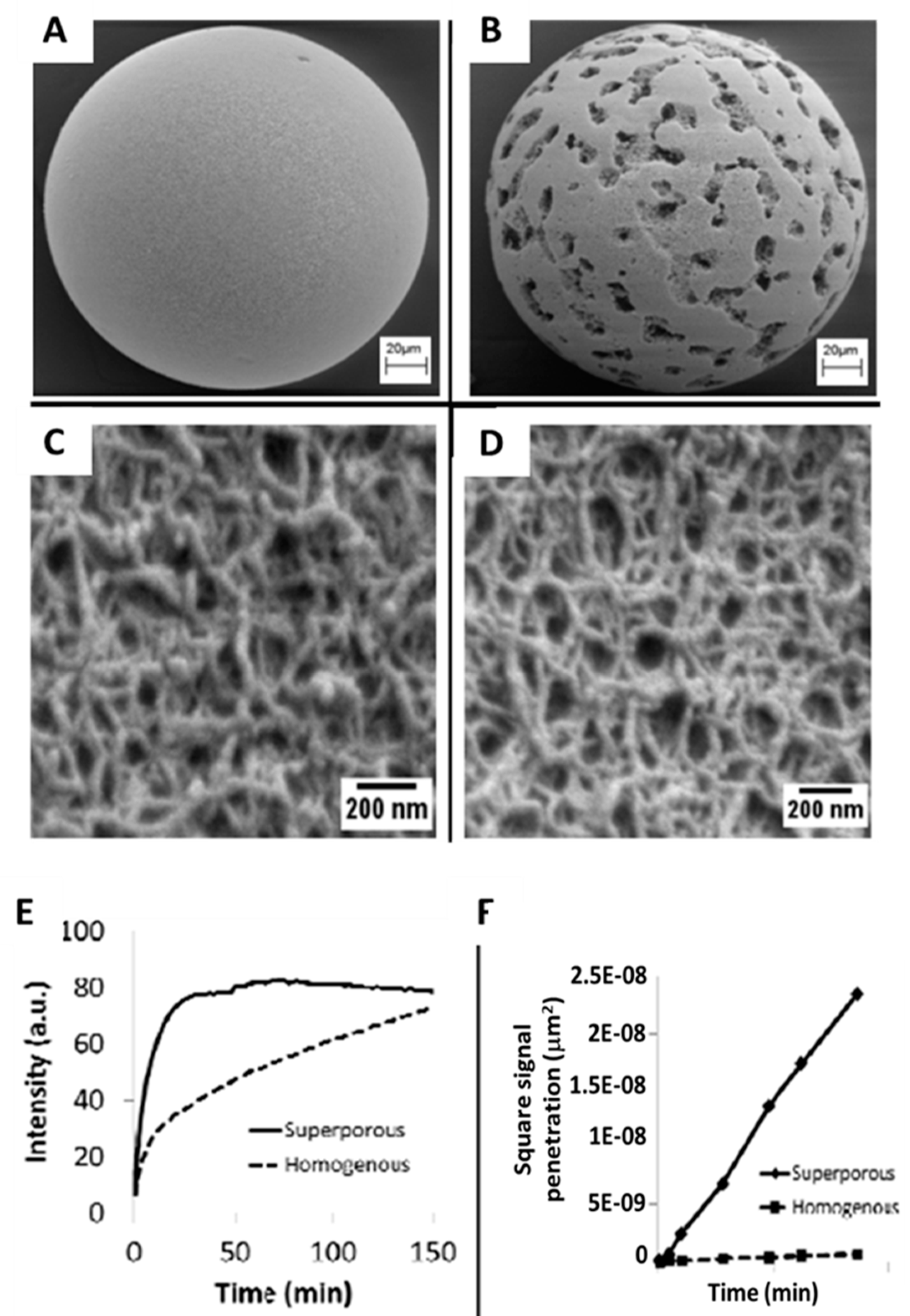

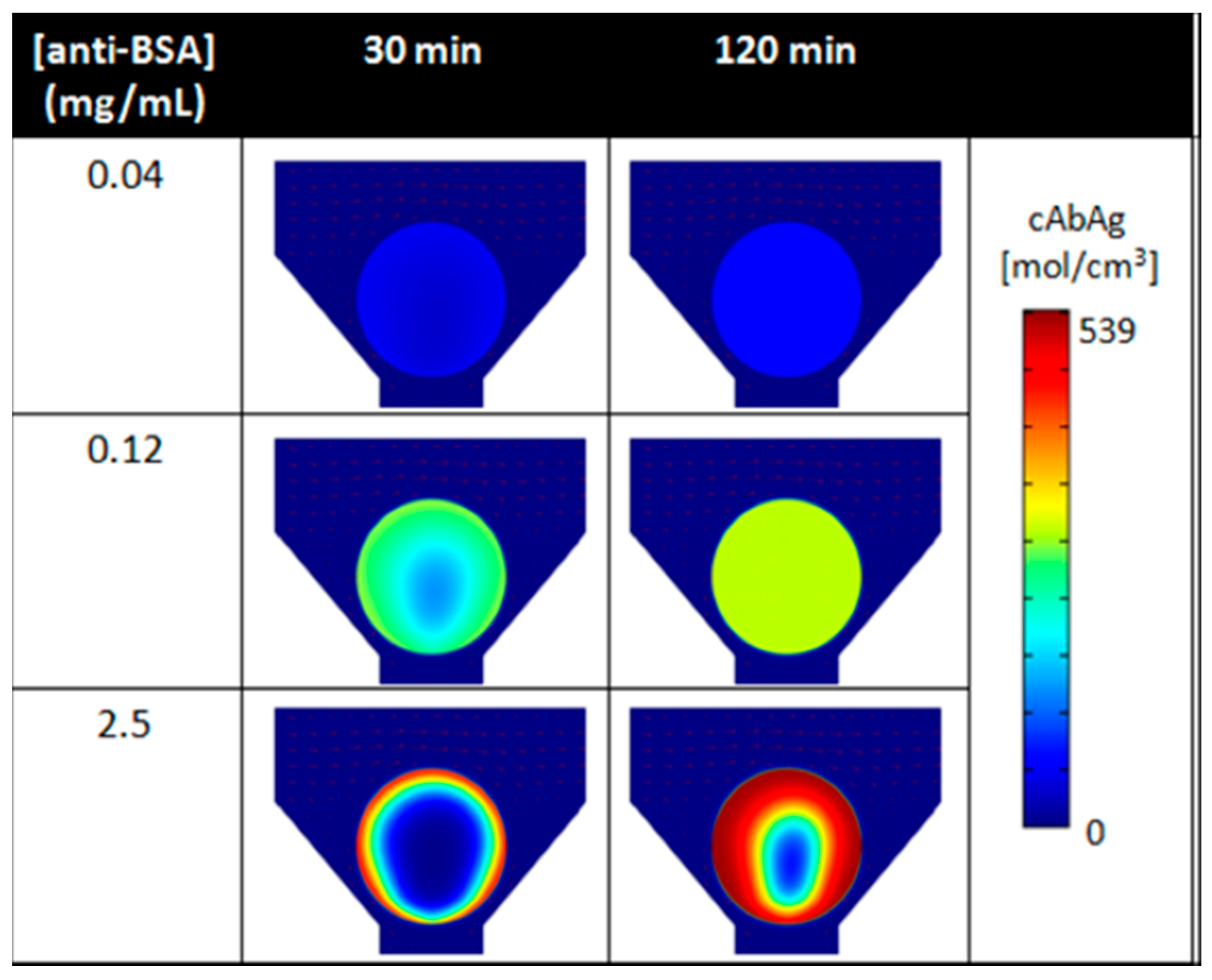


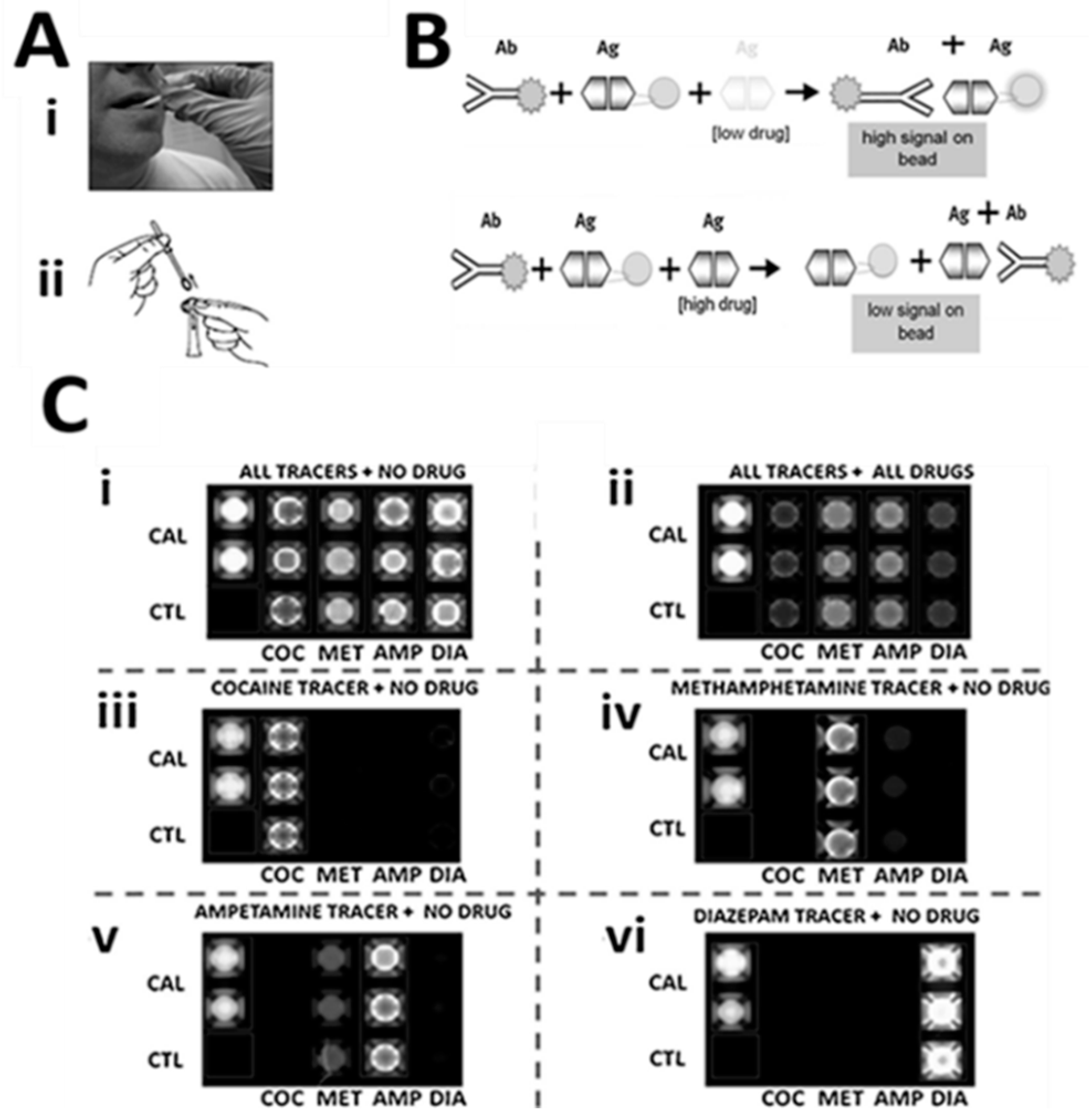


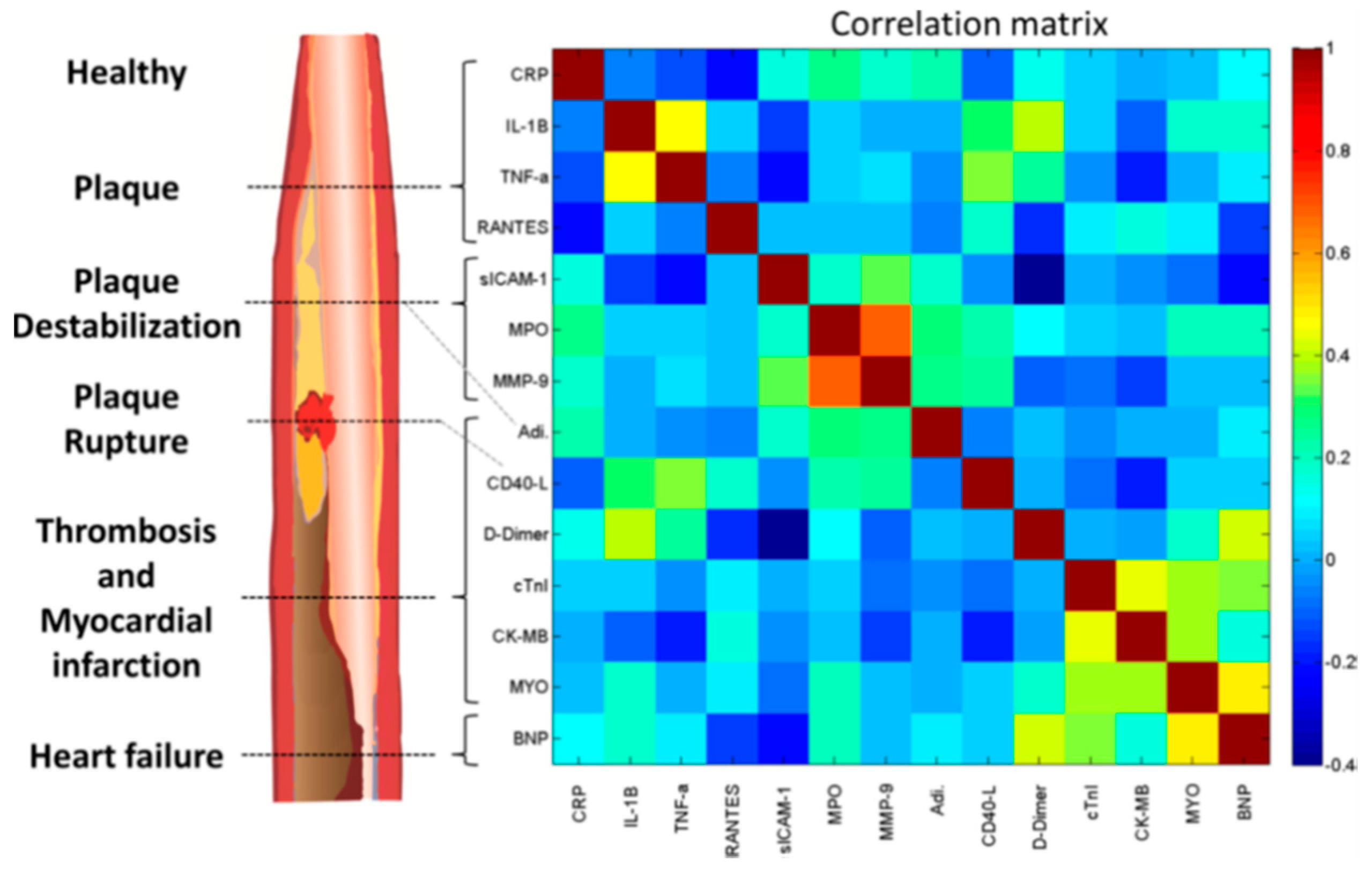


| Biomarker | Clinical Use |
|---|---|
| C-reactive protein | AMI, Risk Definition |
| Soluble CD40 ligand | Cardiac Risk Definition |
| Monocyte chemoattractant protein-1 | Cardiac Risk Definition |
| Myeloperoxidase | Cardiac Risk Definition |
| Myeloperoxidase (multiplexed) | Cardiac Risk Definition |
| Interleukin-1beta | Cardiac Risk Definition |
| Interleukin-6 | Cardiac Risk Definition |
| Tumor necrosis factor-alpha | Cardiac Risk Definition |
| Cardiac troponin I | AMI Diagnosis |
| Myoglobin | AMI Diagnosis |
| CK-MB | AMI Diagnosis |
| Apolipoprotein A1 | Risk for AMI recurrence/Prognosis |
| Apolipoprotein B | Risk for AMI recurrence/Prognosis |
| Brain natriuretic peptide | Congestive Heart Failure |
| N-Terminal proBNP | Congestive Heart Failure |
| Human serum albumin | Cardiac Risk Assessment |
| Transferrin | Blood contamination in saliva |
| Carcinoembryonic antigen | Ovarian Cancer Panel |
| Cancer antigen 125 | Ovarian Cancer Panel |
| Human epidermal growth factor receptor 2 (HER2/neu) | Ovarian Cancer Panel |
| Prostate-specific antigen | Prostate Cancer Panel |
| Free prostate-specific antigen | Prostate Cancer Panel |
| Complexed prostate-specific antigen | Prostate Cancer Panel |
| Cocaine | Drug Testing |
| Diazepam | Drug Testing |
| Tetrahydrocannabinol | Drug Testing |
| D-Amphetamine | Drug Testing |
| Methamphetamine | Drug Testing |
| Oxazepam | Drug Testing |
| Nordiazepam | Drug Testing |
| Temazepam | Drug Testing |
| Morphine | Drug Testing |
| Methadone | Drug Testing |
| MDA | Drug Testing |
| MDMA | Drug Testing |

| Study | Biomarkers | Area | Subjects | Clinical Site | Sponsor |
|---|---|---|---|---|---|
| Development of a Lab-on-a-Chip System for Saliva-Based Diagnostics | 15 proteins | Cardiac Disease | 1000 | Baylor College of Medicine-Houston | National Institute of Dental and Craniofacial Research (NIDCR) |
| Advanced Bio-Nano-Chips for Saliva Based Drug Tests at the Point of Arrest | 12 drugs | Drugs of Abuse | 240 | Home Office-Center of Applied Science and Technology | |
| Next Generation Tools for Onsite Monitoring and Treatment of Drug of Abuse-Dependent Persons | 3 drugs | Drugs of Abuse | 10 (multiple time points) | National Institute on Drug Abuse (NIDA) | |
| Texas Cancer Diagnostics Pipeline Consortium | 4 proteins | Ovarian Cancer | 1250 | MD Anderson Cancer Clinic-Houston | Cancer Prevention Research Institute of Texas (CPRIT) |
| | 3 proteins | Prostate Cancer | 400 | UT Health Science Center-San Antonio | |
| Pilot and Prospective Studies for the Development of the Trauma Chip | 5 proteins | Acute Kidney Failure | 120 | UT Health Science Center-Houston | Texas Emerging Technology Fund |
| Development of p-BNCs for the Monitoring of Anti-Epilepsy Drugs Levels in Saliva | 3 proteins | Epilepsy | 100 patients | | John S. Dunn Foundation |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Christodoulides, N.; McRae, M.P.; Simmons, G.W.; Modak, S.S.; McDevitt, J.T. Sensors that Learn: The Evolution from Taste Fingerprints to Patterns of Early Disease Detection. Micromachines 2019, 10, 251. https://doi.org/10.3390/mi10040251
