Quantifying the Effect of Aerial Imagery Resolution in Automated Hydromorphological River Characterisation

Abstract

1. Introduction

1. To quantify the performance of an ANN-based operational framework [19] for hydromorphological feature identification at different aerial imagery resolutions.

2. To identify the optimal aerial imagery resolution required for robust automated hydromorphological assessment.

3. To assess the implications of results obtained from (1) and (2) in a regulatory context.

2. Methodology

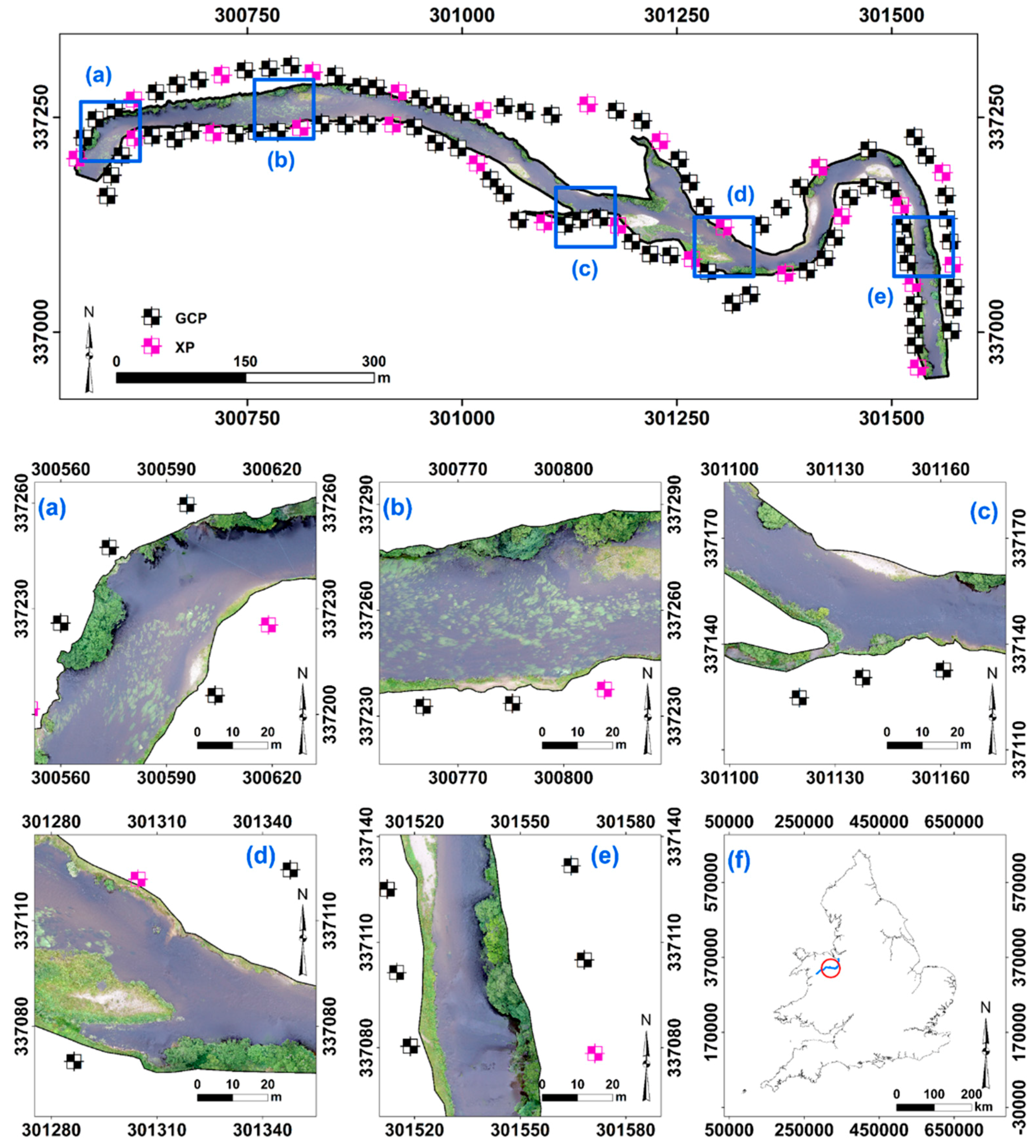

2.1. Study Site

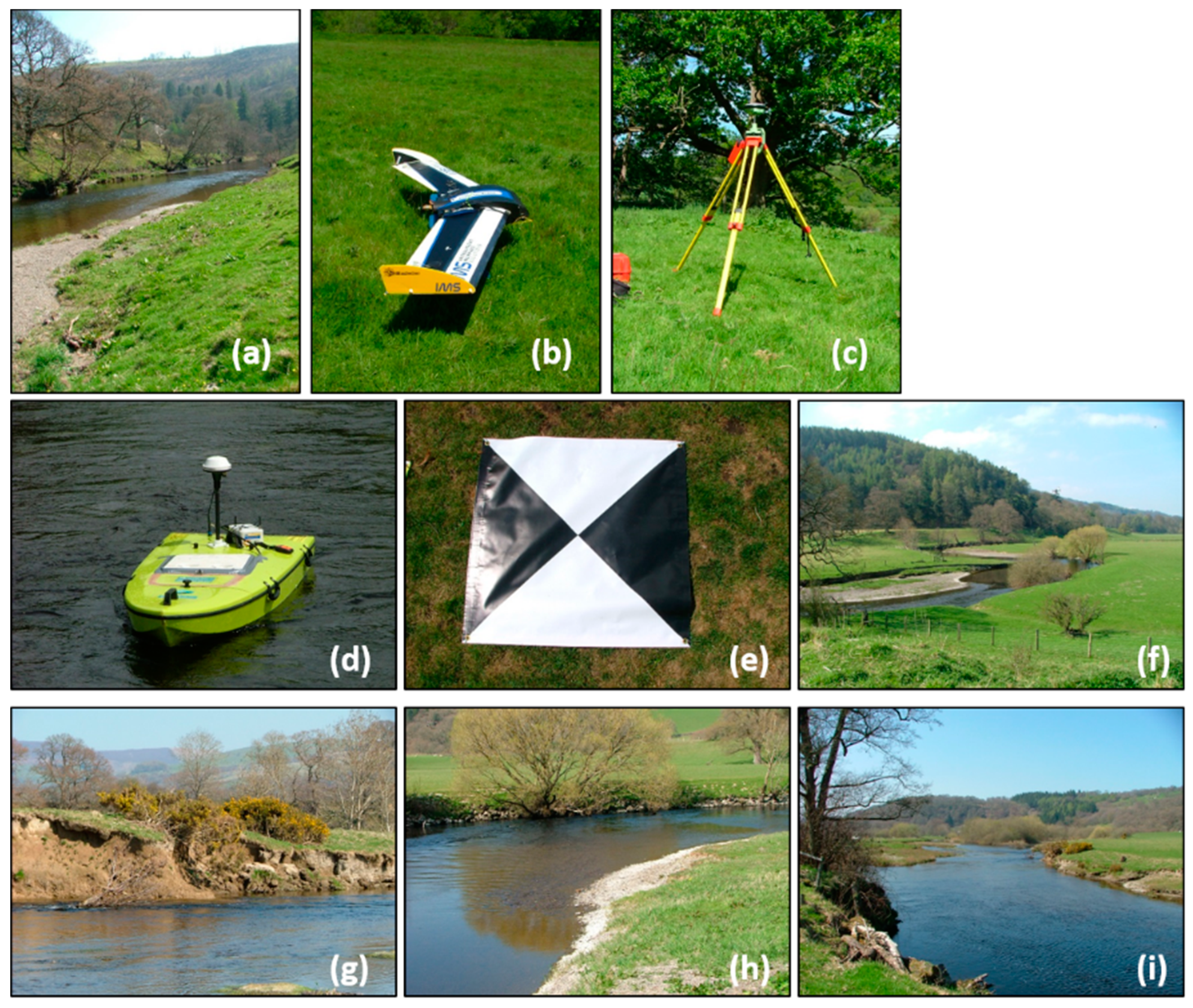

2.2. Sampling Design and Data Collection

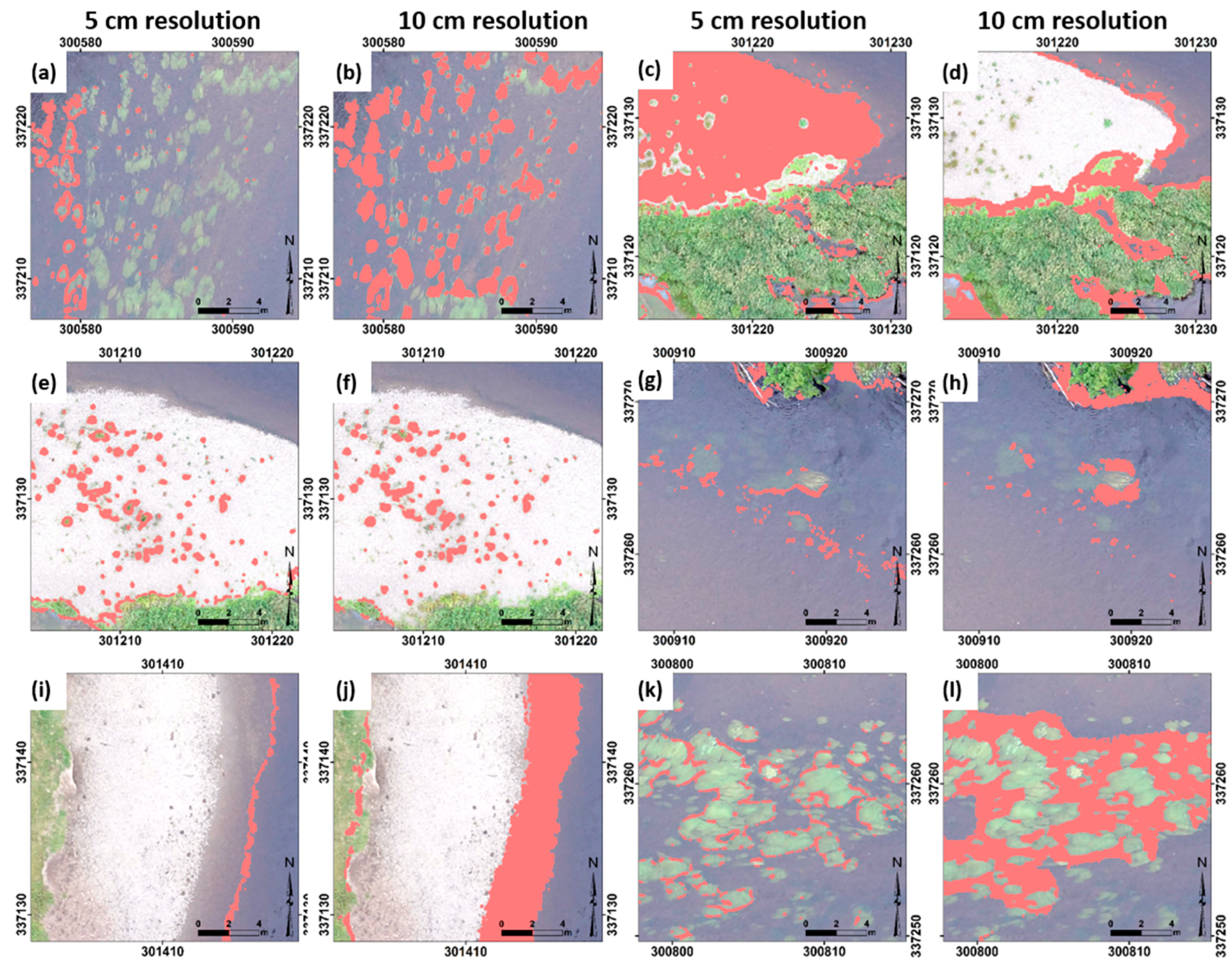

2.3. Photogrammetry and Image Classification

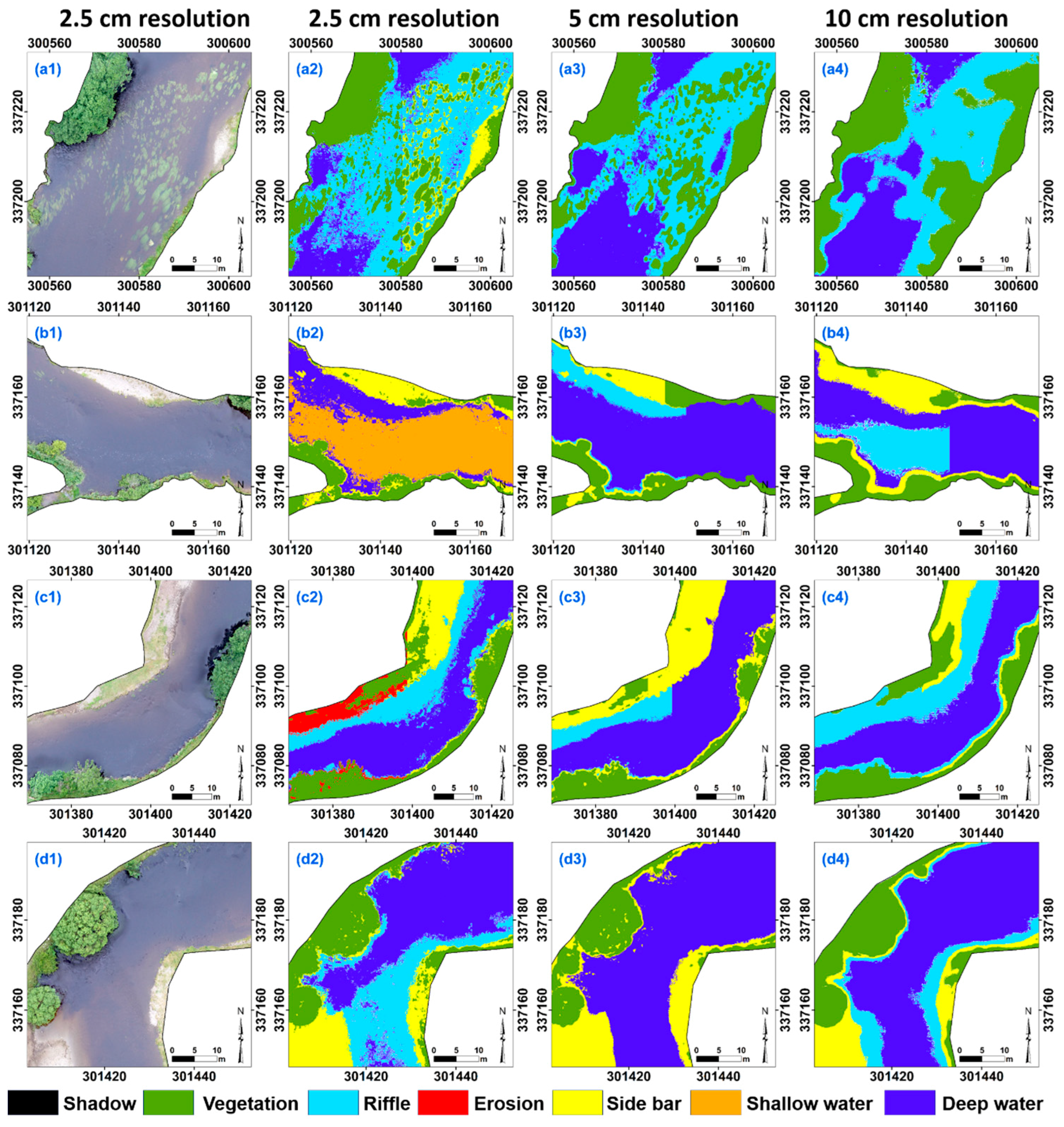

2.4. Comparison of Resolutions

3. Results

4. Discussion

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

References

1. Australian and New Zealand Environment and Conservation Council. National Water Quality Management Strategy: Australian and New Zealand Guidelines for Fresh and Marine Water Quality; Australian and New Zealand Environment and Conservation Council and Agriculture and Resource Management Council of Australia and New Zealand: Canberra, Australia, 2000.

2. U.S. Environmental Protection Agency. Clean Water Act. In Federal Water Act of 1972 (Codified as Amended at 33 U.S.C.); U.S. Environmental Protection Agency: Washington, DC, USA, 2006.

3. European Commission. Directive 2000/60/EC of the European Parliament and of the Council of 23 October 2000 Establishing a Framework for Community Action in the Field of Water Policy. Available online: http://eur-lex.europa.eu/resource.html?uri=cellar:5c835afb-2ec6-4577-bdf8-756d3d694eeb.0004.02/DOC_1&format=PDF (accessed on 1 June 2016).

4. Belletti, B.; Rinaldi, M.; Buijse, A.D.; Gurnell, A.M.; Mosselman, E. A review of assessment methods for river hydromorphology. Environ. Earth Sci. 2014, 73, 2079–2100.

5. Newson, M.D.; Large, A.R.G. “Natural” rivers, “hydromorphological quality” and river restoration: A challenging new agenda for applied fluvial geomorphology. Earth Surf. Process. Landf. 2006, 31, 1606–1624.

6. Vaughan, I.P.; Diamond, M.; Gurnell, A.M.; Hall, K.A.; Jenkins, A.; Milner, N.J.; Naylor, L.A.; Sear, D.A.; Woodward, G.; Ormerod, S.J. Integrating ecology with hydromorphology: A priority for river science and management. Aquat. Conserv. Mar. Freshw. Ecosyst. 2009, 19, 113–125.

7. Buffagni, A.; Armanini, D.G.; Erba, S. Does the lentic-lotic character of rivers affect invertebrate metrics used in the assessment of ecological quality? J. Limnol. 2009, 68, 92–105.

8. Padmore, C.L. Biotopes and their hydraulics: A method for defining the physical component of freshwater quality. In Freshwater Quality: Defining the Indefinable; Boon, P.J., Howell, D.L., Eds.; Scottish Natural Heritage, Edinburgh Stationery Office: Edinburgh, UK, 1997; pp. 251–257.

9. Gilvear, D.J.; Davids, C.; Tyler, A.N. The use of remotely sensed data to detect channel hydromorphology; River Tummel, Scotland. River Res. Appl. 2004, 20, 795–811.

10. Scheifhacken, N.; Haase, U.; Gram-Radu, L.; Kozovyi, R.; Berendonk, T.U. How to assess hydromorphology? A comparison of Ukrainian and German approaches. Environ. Earth Sci. 2011, 65, 1483–1499.

11. Raven, P.J.; Holmes, N.T.H.; Charrier, P.; Dawson, F.H.; Naura, M.; Boon, P.J. Towards a harmonized approach for hydromorphological assessment of rivers in Europe: A qualitative comparison of three survey methods. Aquat. Conserv. Mar. Freshw. Ecosyst. 2002, 12, 405–424.

12. Raven, P.J.; Holmes, N.T.H.; Dawson, F.H.; Fox, P.J.A.; Everard, M.; Fozzard, I.R.; Rouen, K.J. River Habitat Quality: The Physical Character of Rivers and Streams in the UK and Isle of Man; River Habitat Survey Report; Environment Agency: Bristol, UK, 1998.

13. MacVicar, B.J.; Piégay, H.; Henderson, A.; Comiti, F.; Oberlin, C.; Pecorari, E. Quantifying the temporal dynamics of wood in large rivers: Field trials of wood surveying, dating, tracking, and monitoring techniques. Earth Surf. Process. Landf. 2009, 34, 2031–2046.

14. Gómez-Candón, D.; De Castro, A.I.; López-Granados, F. Assessing the accuracy of mosaics from unmanned aerial vehicle (UAV) imagery for precision agriculture purposes in wheat. Precis. Agric. 2014, 15, 44–56.

15. Garcia-Ruiz, F.; Sankaran, S.; Maja, J.M.; Lee, W.S.; Rasmussen, J.; Ehsani, R. Comparison of two aerial imaging platforms for identification of Huanglongbing-infected citrus trees. Comput. Electron. Agric. 2013, 91, 106–115.

16. Baker, B.A.; Warner, T.A.; Conley, J.F.; McNeil, B.E. Does spatial resolution matter? A multi-scale comparison of object-based and pixel-based methods for detecting change associated with gas well drilling operations. Int. J. Remote Sens. 2013, 34, 1633–1651.

17. Torres-Sánchez, J.; López-Granados, F.; De Castro, A.I.; Peña-Barragán, J.M. Configuration and Specifications of an Unmanned Aerial Vehicle (UAV) for Early Site Specific Weed Management. PLoS ONE 2013, 8.

18. Kavvadias, A.; Psomiadis, E.; Chanioti, M.; Gala, E.; Michas, S. Precision Agriculture—Comparison and Evaluation of Innovative Very High Resolution (UAV) and LandSat Data. In Proceedings of the 7th International Conference on Information and Communication Technologies in Agriculture, Food and Environment (HAICTA 2015), Kavala, Greece, 17–20 September 2015; pp. 376–386.

19. Rivas Casado, M.; Ballesteros Gonzalez, R.; Kriechbaumer, T.; Veal, A. Automated Identification of River Hydromorphological Features Using UAV High Resolution Aerial Imagery. Sensors 2015, 15, 27969–27989.

20. Woodget, A.S.; Carbonneau, P.E.; Visser, F.; Maddock, I.P. Quantifying submerged fluvial topography using hyperspatial resolution UAS imagery and structure from motion photogrammetry. Earth Surf. Process. Landf. 2015, 40, 47–64.

21. Richards, J.A. Remote Sensing Digital Image Analysis; Springer-Verlag: Berlin/Heidelberg, Germany, 2013.

22. Zhang, C.; Kovacs, J.M. The application of small unmanned aerial systems for precision agriculture: A review. Precis. Agric. 2012, 13, 693–712.

23. Ballesteros, R.; Ortega, J.F.; Hernández, D.; Moreno, M.A. Applications of georeferenced high-resolution images obtained with unmanned aerial vehicles. Part I: Description of image acquisition and processing. Precis. Agric. 2014, 15, 579–592.

24. Sankaran, S.; Khot, L.R.; Espinoza, C.Z.; Jarolmasjed, S.; Sathuvalli, V.R.; Vandemark, G.J.; Miklas, P.N.; Carter, A.H.; Pumphrey, M.O.; Knowles, N.R.; et al. Low-altitude, high-resolution aerial imaging systems for row and field crop phenotyping: A review. Eur. J. Agron. 2015, 70, 112–123.

25. Tarolli, P. High-resolution topography for understanding Earth surface processes: Opportunities and challenges. Geomorphology 2014, 216, 295–312.

26. Woodget, A.S.; Visser, F.; Maddock, I.P.; Carbonneau, P.E. The Accuracy and Reliability of Traditional Surface Flow Type Mapping: Is it Time for a New Method of Characterizing Physical River Habitat? River Res. Appl. 2016.

27. Legleiter, C.J.; Marcus, A.; Lawrence, R.L. Effects of Sensor Resolution on Mapping In-Stream Habitats. Photogramm. Eng. Remote Sens. 2002, 68, 801–807.

28. Anker, Y.; Hershkovitz, Y.; Ben Dor, E.; Gasith, A. Application of aerial digital photography for macrophyte cover and composition survey in small rural streams. River Res. Appl. 2014, 30, 925–937.

29. Carbonneau, P.E.; Lane, S.N.; Bergeron, N.E. Catchment-scale mapping of surface grain size in gravel bed rivers using airborne digital imagery. Water Resour. Res. 2004, 40, 1–11.

30. Vericat, D.; Brasington, J.; Wheaton, J.; Cowie, M. Accuracy assessment of aerial photographs acquired using lighter-than-air blimps: Low-cost tools for mapping river corridors. River Res. Appl. 2009, 25, 985–1000.

31. Environment Agency. River Habitat Survey in Britain and Ireland; Environment Agency: Bristol, UK, 2003.

32. Foody, G.M. Harshness in image classification accuracy assessment. Int. J. Remote Sens. 2008, 29, 3137–3158.

33. Allouche, O.; Tsoar, A.; Kadmon, R. Assessing the accuracy of species distribution models: Prevalence, kappa and true skill statistics (TSS). J. Appl. Ecol. 2006, 43, 1223–1232.

34. Pontius, R.G.; Millones, M. Death to Kappa: Birth of quantity disagreement and allocation disagreement for accuracy assessment. Int. J. Remote Sens. 2011, 32, 4407–4429.

35. Congalton, R.G.; Green, K. Assessing the Accuracy of Remotely Sensed Data: Principles and Practices, 2nd ed.; CRC Press: Boca Raton, FL, USA, 2009.

36. Fleiss, J.L.; Levin, B.; Paik, M.C. Statistical Methods for Rates and Proportions; Wiley Series in Probability and Statistics; John Wiley & Sons, Inc.: Hoboken, NJ, USA, 2003.

37. Sokal, R.R.; Rohlf, F.J. Biometry: The Principles and Practice of Statistics in Biological Research; W.H. Freeman: New York, NY, USA, 2012.

38. Armstrong, J.D.; Kemp, P.S.; Kennedy, G.J.A.; Ladle, M.; Milner, N.J. Habitat requirements of Atlantic salmon and brown trout in rivers and streams. Fish. Res. 2003, 62, 143–170.

39. Kondolf, G.M.; Wolman, M.G. The sizes of salmonid spawning gravels. Water Resour. Res. 1993, 29, 2275–2285.

40. Elliott, S.R.; Coe, T.A.; Helfield, J.M.; Naiman, R.J. Spatial variation in environmental characteristics of Atlantic salmon (Salmo salar) rivers. Can. J. Fish. Aquat. Sci. 1998, 55, 267–280.

41. Crisp, D.T. The environmental requirements of salmon and trout in fresh water. Freshw. Forum 1993, 3, 176–202.

42. Petr, T. Interactions between Fish and Aquatic Macrophytes in Inland Waters: A Review; FAO: Rome, Italy, 2000.

43. Gurnell, A. Plants as river system engineers. Earth Surf. Process. Landf. 2014, 39, 4–25.

44. Parker, C.; Clifford, N.J.; Thorne, C.R. Automatic delineation of functional river reach boundaries for river research and applications. River Res. Appl. 2012, 28, 1708–1725.

45. Mckay, P.; Blain, C.A. An automated approach to extracting river bank locations from aerial imagery using image texture. River Res. Appl. 2014, 30, 1048–1055.

46. Schmitt, R.; Bizzi, S.; Castelletti, A. Characterizing fluvial systems at basin scale by fuzzy signatures of hydromorphological drivers in data scarce environments. Geomorphology 2014, 214, 69–83.

47. Marcus, W.A.; Legleiter, C.J.; Aspinall, R.J.; Boardman, J.W.; Crabtree, R.L. High spatial resolution hyperspectral mapping of in-stream habitats, depths, and woody debris in mountain streams. Geomorphology 2003, 55, 363–380.

48. Roux, C.; Alber, A.; Bertrand, M.; Vaudor, L.; Piégay, H. “FluvialCorridor”: A new ArcGIS toolbox package for multiscale riverscape exploration. Geomorphology 2014, 242, 29–37.

49. Leviandier, T.; Alber, A.; Le Ber, F.; Piégay, H. Comparison of statistical algorithms for detecting homogeneous river reaches along a longitudinal continuum. Geomorphology 2012, 138, 130–144.

50. Gurnell, A.M.; Rinaldi, M.; Buijse, A.D.; Brierley, G.; Piégay, H. Hydromorphological frameworks: Emerging trajectories. Aquat. Sci. 2016, 78, 135–138.

51. Civil Aviation Authority. CAP1361: CAA Approved Commercial Small Unmanned Aircraft (SUA) Operators. Available online: http://publicapps.caa.co.uk/modalapplication.aspx?appid=11&mode=detail&id=7078 (accessed on 13 May 2016).

52. Poikane, S.; Zampoukas, N.; Borja, A.; Davies, S.P.; van de Bund, W.; Birk, S. Intercalibration of aquatic ecological assessment methods in the European Union: Lessons learned and way forward. Environ. Sci. Policy 2014, 44, 237–246.

53. Poikane, S.; Birk, S.; Böhmer, J.; Carvalho, L.; de Hoyos, C.; Gassner, H.; Hellsten, S.; Kelly, M.; Lyche Solheim, A.; Olin, M.; et al. A hitchhiker’s guide to European lake ecological assessment and intercalibration. Ecol. Indic. 2015, 52, 533–544.

| Feature group | Feature | Description |
|---|---|---|
| Substrate features | Side bars | Consolidated river bed material along the margins of a reach which is exposed at low flow. |
| | Erosion | Predominantly derived from eroding cliffs, which are vertical or undercut banks, with a minimum height of 0.5 m and less than 50% vegetation cover. |
| Water features | Riffle | Area within the river channel presenting shallow and fast-flowing water. Generally over gravel, pebble or cobble substrate with disturbed (rippled) water surface (i.e., waves can be perceived on the water surface). The average depth is 0.5 m with an average velocity ≈1 m·s⁻¹. |
| | Deep water (Glides and pools) | Deep glides are deep homogeneous areas within the channel with visible flow movement along the surface. Pools are localised deeper parts of the channel created by scouring. Both present fine substrate, non-turbulent and slow flow. The average depth is 1.3 m and the average velocity ≈0.3 m·s⁻¹. |
| | Shallow water | Includes any slow flowing and non-turbulent areas. The average depth is 0.8 m with an average velocity of ≈0.5 m·s⁻¹. |
| Vegetation features | Vegetation | This includes trees obscuring the aerial view of the river channel, side bars presenting plant cover, vegetated banks, plants rooted on the riverbed with either floating leaves (submerged free floating vegetation) or floating leaves on the water surface (emergent free floating vegetation) and grass present along the bank. |
| | Shadows | Includes shading of channel and overhanging vegetation. |

| Characteristics | Sony NEX 7 E-Mount SELP1650 | Panasonic Lumix DMC-LX7 |
|---|---|---|
| Sensor (Type) | APS-C CMOS Sensor | APS-C CMOS Sensor |
| Sensor diameter (mm) | 23.5 × 15.6 | 7.6 × 5.7 |
| Million effective pixels | 24.3 | 10.1 |
| Pixel size (mm) | 0.04 | 0.0018 |
| Range of focal length (mm) | 24–75 (35) | 24–90 (35) |
| Focal length applied (mm) | 24 (35) | 24 (35) |
| Maximum Resolution (MP) | 24.3 | 10.10 |

| Resolution | |||

|---|---|---|---|

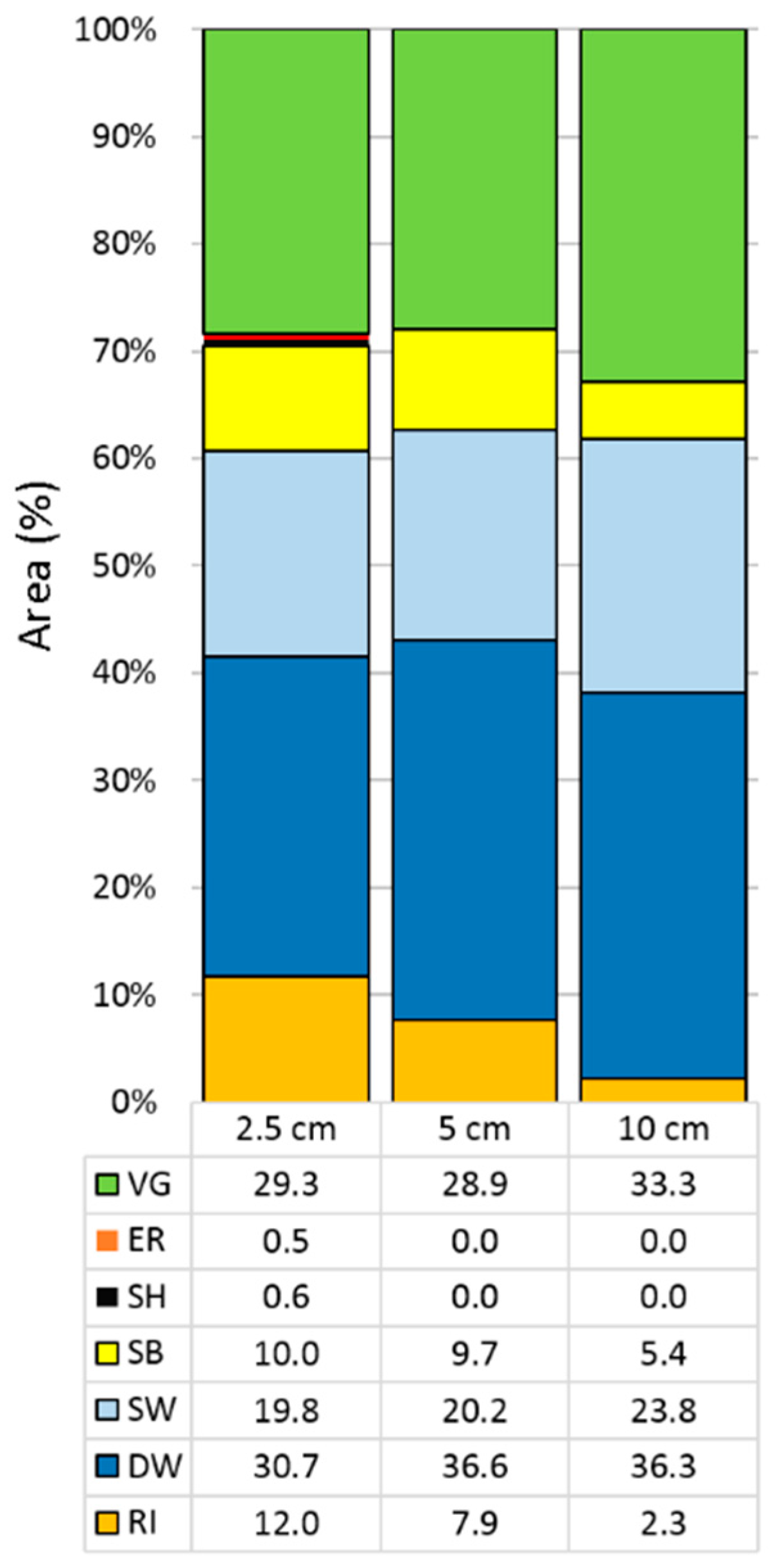

| Parameter | 2.5 cm | 5 cm | 10 cm |

| Flight height (m) | 116 | 133 | 259 |

| Total GCP error in x (m) | 0.0136 | 0.0132 | 0.1863 |

| Total GCP error in y (m) | 0.0134 | 0.0112 | 0.2399 |

| Total GCP error in z (m) | 0.0223 | 0.0295 | 0.3107 |

| Total XP error in x (m) | 0.0139 | 0.0162 | 0.1872 |

| Total XP Error in y (m) | 0.0135 | 0.0195 | 0.8336 |

| Total XP Error in z (m) | 0.0260 | 0.0521 | 0.5521 |

| XP RMSE | 0.0451 | 0.1574 | 3.0574 |

| Processing time (h) | 16 | 12 | 10 |

| Camera | Sony NEX-7 | Sony NEX-7 | Panasonic Lumix |

| Resolution (cm) | AC (%) | κ | C | Q |
|---|---|---|---|---|
| 2.5 | 68.4 (75.8) | 0.62 | 0.064 | 0.264 |
| 5 | 64.8 (72.4) | 0.48 | 0.113 | 0.233 |
| 10 | 62.8 (66.6) | 0.38 | 0.091 | 0.276 |
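The overall accuracy (AC) and kappa (κ) columns above are standard confusion-matrix summaries, and the C and Q columns appear to correspond to the disagreement components of Pontius and Millones (listed in the references), although the column definitions are not stated in this extract. The following is a minimal sketch of how such summaries can be computed, assuming a square confusion matrix with reference classes in rows and predicted classes in columns. The function name `agreement_summary` and the example counts are hypothetical and are not taken from the paper.

```python
from typing import Dict, List


def agreement_summary(cm: List[List[float]]) -> Dict[str, float]:
    """Summarise a square confusion matrix.

    cm[i][j] is the number of samples whose reference class is i and
    whose predicted class is j (an assumed layout, not the paper's).
    """
    n = len(cm)
    total = float(sum(sum(row) for row in cm))
    if total == 0:
        raise ValueError("confusion matrix is empty")

    # Convert counts to proportions.
    p = [[v / total for v in row] for row in cm]
    row_tot = [sum(p[i]) for i in range(n)]                       # reference share
    col_tot = [sum(p[i][j] for i in range(n)) for j in range(n)]  # predicted share
    diag = [p[i][i] for i in range(n)]

    accuracy = sum(diag)
    # Agreement expected by chance, as used in Cohen's kappa.
    expected = sum(row_tot[i] * col_tot[i] for i in range(n))
    kappa = (accuracy - expected) / (1 - expected) if expected < 1 else float("nan")

    # Quantity and allocation disagreement (Pontius and Millones);
    # together they sum to 1 - accuracy.
    quantity = 0.5 * sum(abs(row_tot[i] - col_tot[i]) for i in range(n))
    allocation = sum(min(row_tot[i] - diag[i], col_tot[i] - diag[i]) for i in range(n))

    return {
        "accuracy": accuracy,
        "kappa": kappa,
        "quantity_disagreement": quantity,
        "allocation_disagreement": allocation,
    }


# Hypothetical two-class example (illustrative counts only).
summary = agreement_summary([[40, 10], [5, 45]])
print(summary)
```

Because quantity and allocation disagreement sum to one minus the overall accuracy, the two components can be checked against the AC column when the same pixels are used for all measures.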

| Resolution | Feature | TPR (%) | TNR (%) | FNR (%) | FPR (%) |
|---|---|---|---|---|---|
| 2.5 cm | SB | 85.7 | 61.9 | 14.3 | 6.5 |
| 2.5 cm | ER | 13.6 | 63.4 | 86.4 | 0.4 |
| 2.5 cm | RI | 47.1 (94.9) | 65.5 | 52.9 | 6.7 |
| 2.5 cm | DW | 78.8 (87.5) | 58.8 | 21.2 | 16.3 |
| 2.5 cm | SW | 41.5 (56.9) | 71.5 | 58.5 | 6.4 |
| 2.5 cm | SH | 0 | 63.8 | 82.2 | 1.5 |
| 2.5 cm | VG | 80.8 | 55.1 | 19.1 | 4.2 |
| 5 cm | SB | 73.6 | 59.7 | 26.4 | 6.8 |
| 5 cm | ER | 0 | 60.9 | 100 | 0 |
| 5 cm | RI | 34.9 (94.6) | 64.4 | 64.1 | 4.1 |
| 5 cm | DW | 82.5 (86.8) | 54.5 | 17.5 | 23.4 |
| 5 cm | SW | 40.6 (50.6) | 68.1 | 59.4 | 9.2 |
| 5 cm | SH | 0 | 61.1 | 100 | 0 |
| 5 cm | VG | 78.2 | 52.4 | 21.7 | 4.2 |
| 10 cm | SB | 1.4 | 54.8 | 98.6 | 5.6 |
| 10 cm | ER | 0 | 54.3 | 100 | 0 |
| 10 cm | RI | 13.2 (97.3) | 60.4 | 86.8 | 0.6 |
| 10 cm | DW | 80.1 (81.5) | 46.9 | 19.9 | 24.0 |
| 10 cm | SW | 40.4 (43.2) | 59.1 | 59.6 | 16.8 |
| 10 cm | SH | 0 | 54.5 | 100 | 0 |
| 10 cm | VG | 73.4 | 44.3 | 26.6 | 10.8 |
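For readers who want to reproduce per-feature rates of this kind, the sketch below computes TPR, TNR, FNR and FPR from a multi-class confusion matrix using the usual one-vs-rest definitions. It is an illustration under assumptions: the matrix layout (reference classes in rows, predictions in columns), the function name `per_class_rates` and the example counts are hypothetical. Note also that the tabulated TNR and FPR values do not sum to 100, so the authors' normalisation may differ from the one used here.

```python
from typing import Dict, List


def per_class_rates(cm: List[List[int]], labels: List[str]) -> Dict[str, Dict[str, float]]:
    """One-vs-rest rates (in %) for each class of a confusion matrix.

    cm[i][j] counts samples with reference class i predicted as class j.
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    nan = float("nan")
    rates = {}
    for k, name in enumerate(labels):
        tp = cm[k][k]
        fn = sum(cm[k]) - tp                         # class k missed
        fp = sum(cm[i][k] for i in range(n)) - tp    # other classes labelled k
        tn = total - tp - fn - fp
        positives = tp + fn
        negatives = tn + fp
        rates[name] = {
            "TPR": 100.0 * tp / positives if positives else nan,
            "FNR": 100.0 * fn / positives if positives else nan,
            "TNR": 100.0 * tn / negatives if negatives else nan,
            "FPR": 100.0 * fp / negatives if negatives else nan,
        }
    return rates


# Hypothetical two-class example; the labels reuse feature codes from the
# table purely for illustration.
example = per_class_rates([[40, 10], [5, 45]], ["SB", "DW"])
for feature, values in example.items():
    print(feature, values)
```

Under these definitions TPR and FNR sum to 100 for each class, as do TNR and FPR, which gives a quick consistency check on any computed table.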

| Feature | Total pixels (2.5 cm) | Matching pixels at 5 cm (%) | Matching pixels at 10 cm (%) | Cochran test Q | Cochran test p-value |
|---|---|---|---|---|---|
| SB | 6,751,738 | 66.84 | 35.01 | 5,674,126 | <0.001 |
| ER | 359,621 | 0 | 0 | - | - |
| RI | 8,117,895 | 26.82 | 4.91 | 12,076,900 | <0.001 |
| DW | 20,708,273 | 83.34 | 78.94 | 4,988,222 | <0.001 |
| SW | 13,353,493 | 61.43 | 56.92 | 7,582,029 | <0.001 |
| SH | 376,473 | 0 | 0 | - | - |
| VG | 19,748,122 | 88.62 | 87.40 | 3,275,528 | <0.001 |
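Cochran's Q test compares k related binary outcomes measured on the same units. A plausible reading of the table above is that each pixel is a unit and each resolution a treatment, with 1 meaning the pixel was assigned to the feature, but that setup is an assumption here. The sketch below implements the textbook Q statistic in plain Python; the function name `cochran_q` and the toy data are hypothetical.

```python
from typing import List, Sequence, Tuple


def cochran_q(rows: Sequence[Sequence[int]]) -> Tuple[float, int]:
    """Cochran's Q statistic for binary data.

    rows[i][j] is 0 or 1: the outcome for unit i under treatment j.
    Returns (Q, degrees of freedom). Under the null hypothesis Q is
    approximately chi-square distributed with k - 1 degrees of freedom.
    """
    k = len(rows[0])
    if any(len(r) != k for r in rows):
        raise ValueError("all rows must have the same number of treatments")

    col_totals: List[int] = [sum(r[j] for r in rows) for j in range(k)]
    row_totals: List[int] = [sum(r) for r in rows]
    grand_total = sum(row_totals)

    denominator = k * grand_total - sum(t * t for t in row_totals)
    if denominator == 0:
        # Happens when every unit is all-0 or all-1, so nothing differs.
        raise ValueError("Q is undefined: no unit differs across treatments")

    numerator = (k - 1) * (k * sum(c * c for c in col_totals) - grand_total ** 2)
    return numerator / denominator, k - 1


# Toy data: six units, three treatments (illustrative values only).
toy = [
    [1, 1, 0],
    [1, 0, 0],
    [1, 1, 1],
    [0, 0, 0],
    [1, 1, 0],
    [1, 0, 0],
]
q_stat, dof = cochran_q(toy)
print(q_stat, dof)
```

The p-value follows from the upper tail of the chi-square distribution with k − 1 degrees of freedom. With sample sizes in the millions of pixels, as in the table, even small differences between resolutions yield very large Q values.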

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).

Rivas Casado, M.; Ballesteros Gonzalez, R.; Wright, R.; Bellamy, P. Quantifying the Effect of Aerial Imagery Resolution in Automated Hydromorphological River Characterisation. Remote Sens. 2016, 8, 650. https://doi.org/10.3390/rs8080650
