Review of Automatic Feature Extraction from High-Resolution Optical Sensor Data for UAV-Based Cadastral Mapping

Abstract

1. Introduction

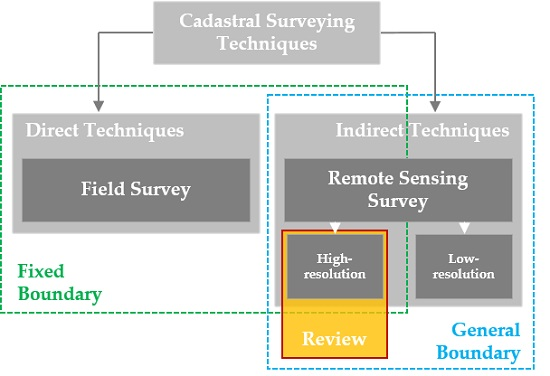

1.1. Application of UAV-Based Cadastral Mapping

1.2. Boundary Delineation for UAV-Based Cadastral Mapping

1.3. Objective and Organization of This Paper

- (i)

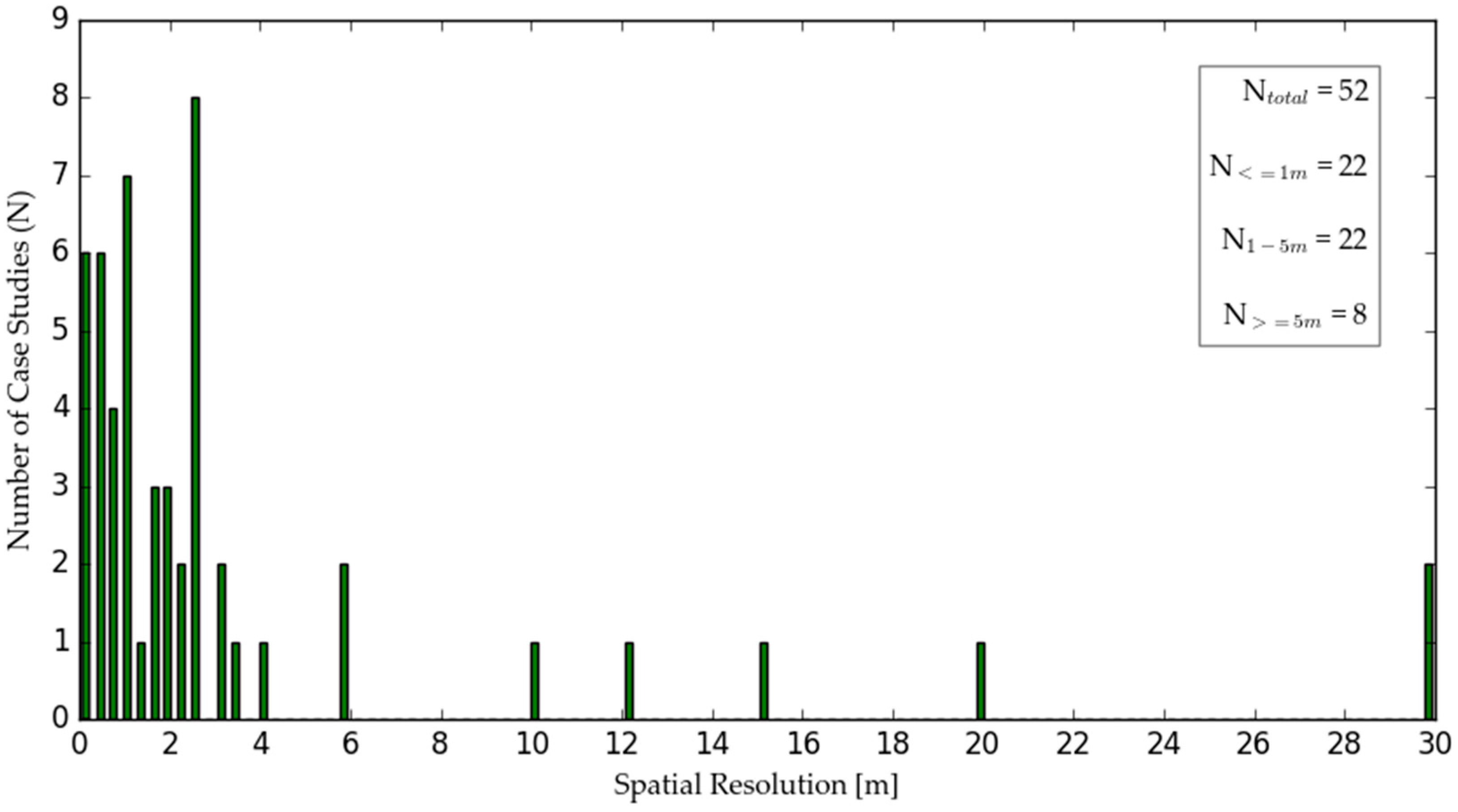

- UAV data includes dense point clouds from which DSMs are derived as well as high-resolution imagery. Such products can be similarly derived from other high-resolution optical sensors. Therefore, methods based on other high-resolution optical sensor data such as High-Resolution Satellite Imagery (HRSI) and aerial imagery are equally considered in this review. Methods applied solely on 3D point clouds are excluded. Methods that are based on the derived DSM are considered in this review. Methods that combine 3D point clouds and aerial or satellite imagery are considered in terms of methods based on the aerial or satellite imagery.

- (ii) The review includes methods that aim to extract features other than cadastral boundaries but with similar characteristics, which are outlined in the next section. Suitable methods are not expected to extract the entirety of boundary features, since some boundaries are not visible to optical sensors.

2. Review of Feature Extraction and Evaluation Methods

2.1. Cadastral Boundary Characteristics

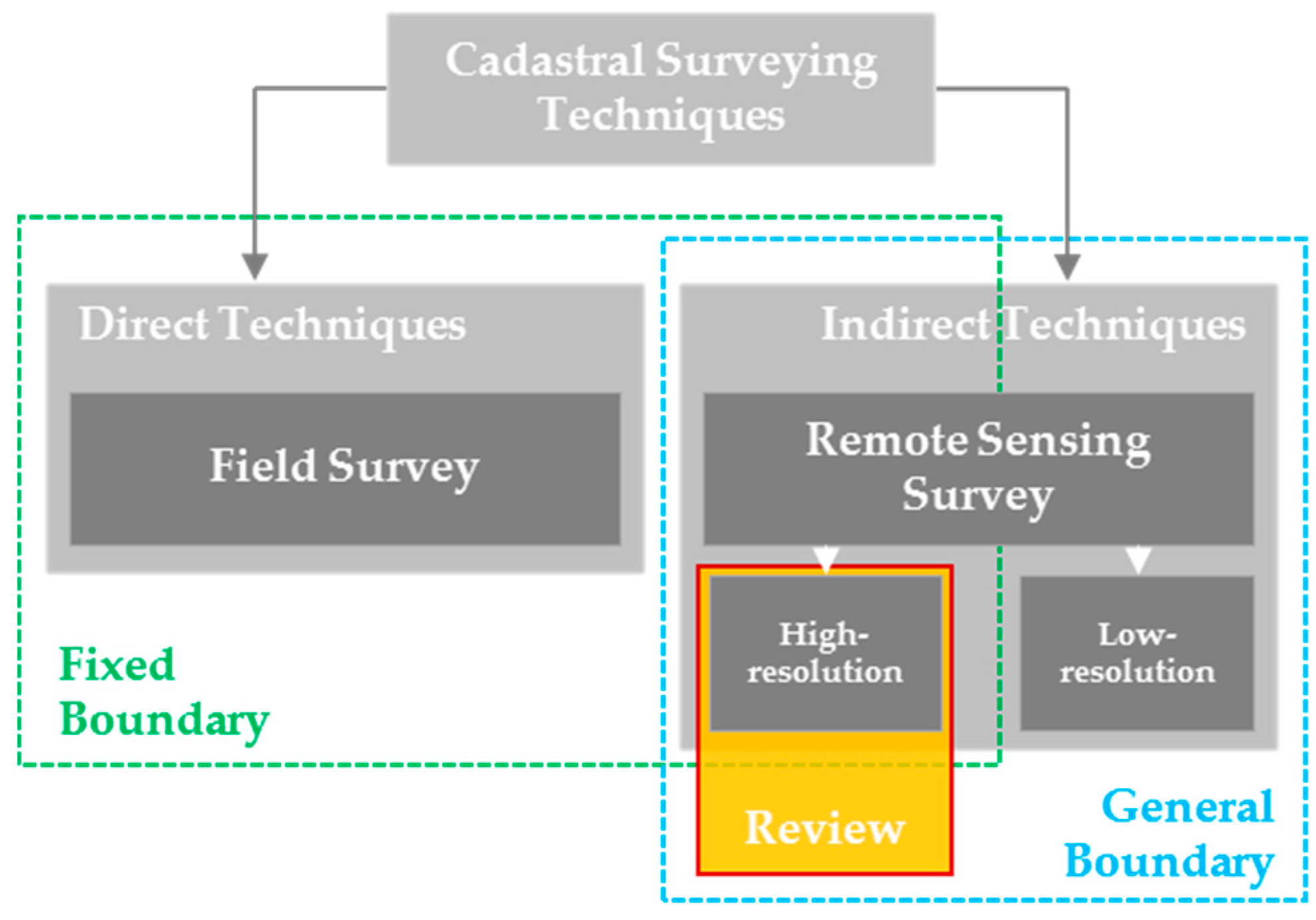

2.2. Feature Extraction Methods

2.2.1. Preprocessing

- The Wallis filter is an image filter for detail enhancement through local contrast adjustment. The algorithm subdivides an image into non-overlapping windows of the same size and then adjusts the contrast and minimizes radiometric changes within each window [100].
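The window-wise contrast adjustment described for the Wallis filter can be sketched as follows. This is a simplified illustration and not the implementation from [100]: it uses one common form of the Wallis mapping, skips the interpolation between neighbouring windows that practical implementations usually add, and all names and default values (`wallis_filter`, `target_mean`, `target_std`, `brightness`, `contrast`) are assumptions made for this example.

```python
from statistics import mean, pstdev


def wallis_filter(image, window=2, target_mean=127.0, target_std=50.0,
                  brightness=1.0, contrast=0.8):
    """Block-wise Wallis-style contrast adjustment of a 2D list of grey values.

    Each non-overlapping window is shifted towards target_mean and stretched
    towards target_std. Keep contrast below 1 so that perfectly flat windows
    (local standard deviation 0) do not cause a division by zero.
    """
    rows, cols = len(image), len(image[0])
    out = [[0.0] * cols for _ in range(rows)]
    for r0 in range(0, rows, window):
        for c0 in range(0, cols, window):
            # Pixel coordinates of the current window, clipped at the image border.
            cells = [(r, c)
                     for r in range(r0, min(r0 + window, rows))
                     for c in range(c0, min(c0 + window, cols))]
            values = [image[r][c] for r, c in cells]
            local_mean, local_std = mean(values), pstdev(values)
            # Gain stretches the local contrast, offset sets the local brightness.
            gain = contrast * target_std / (
                contrast * local_std + (1.0 - contrast) * target_std)
            offset = brightness * target_mean + (1.0 - brightness) * local_mean
            for r, c in cells:
                out[r][c] = (image[r][c] - local_mean) * gain + offset
    return out
```

With `brightness=1.0`, every window ends up with the same mean grey value, while the gain enlarges small local differences more strongly in low-contrast windows than in high-contrast ones.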

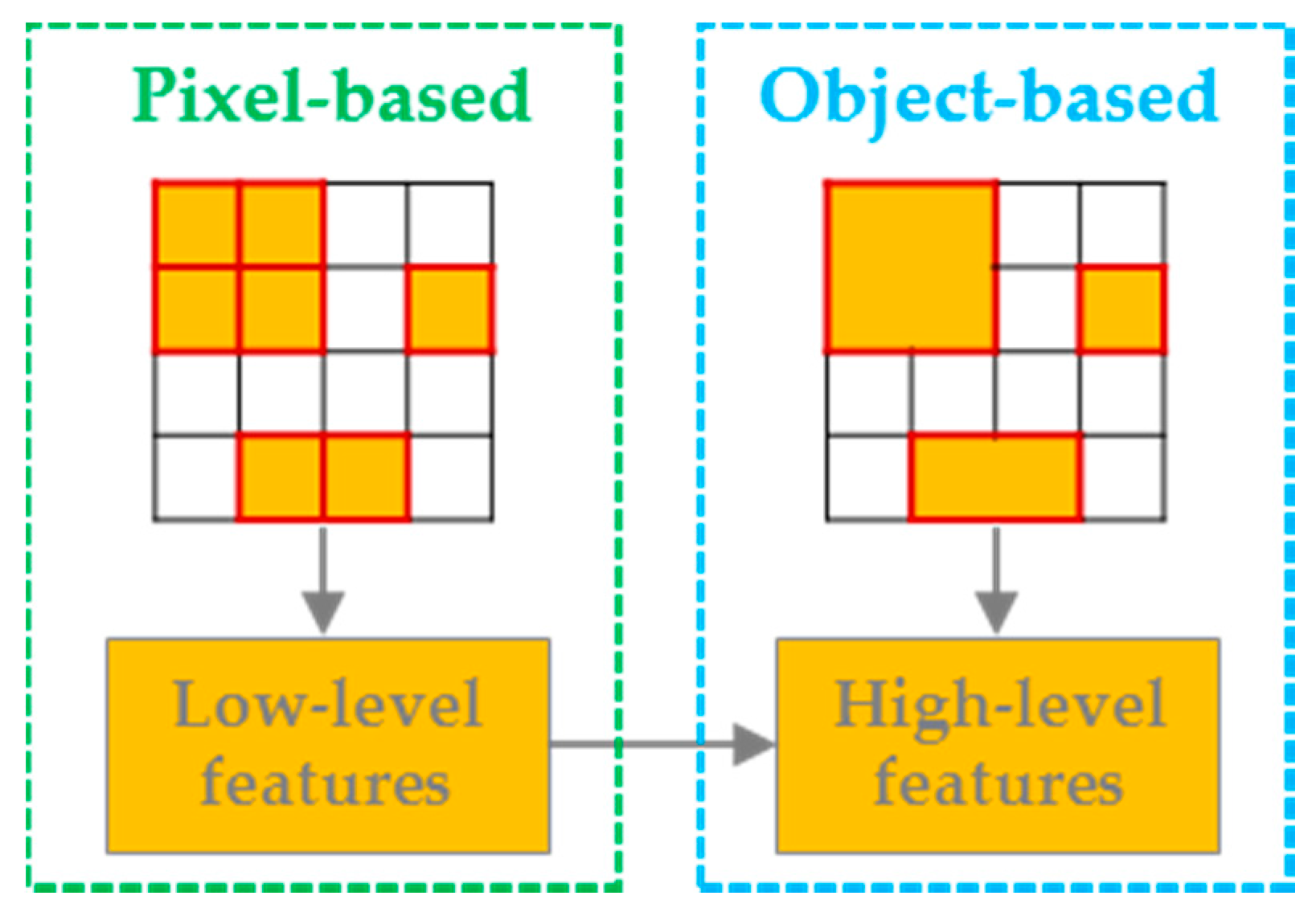

2.2.2. Image Segmentation

- (i)

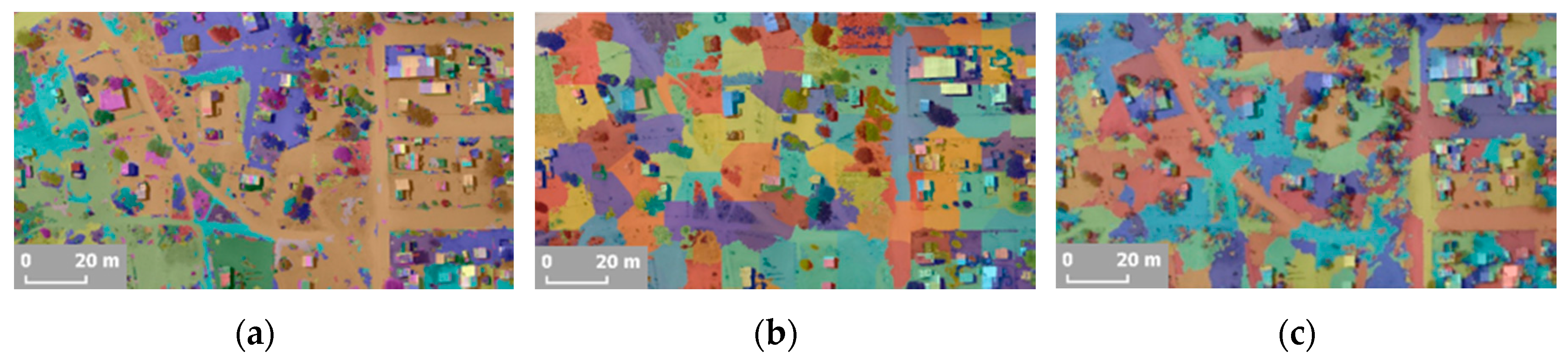

- Unsupervised approaches include methods in which segmentation parameters are defined that describe color, texture, spectral homogeneity, size, shape, compactness and scale of image segments. The challenge lies within defining appropriate segmentation parameters for features varying in size, shape, scale and spatial location. Thereafter, the image is automatically segmented according to these parameters [90]. Popular approaches are described in the following and visualized in Figure 8: these were often applied in the case studies investigated for this review. A list of further approaches can be found in [102].

- Graph-based image segmentation is based on color and is able to preserve details in low-variability image regions while ignoring details in high-variability regions. The algorithm performs an agglomerative clustering of pixels as nodes on a graph such that each superpixel is the minimum spanning tree of the constituent pixels [104,105].

- Simple Linear Iterative Clustering (SLIC) is an algorithm that adapts a k-means clustering approach to generate groups of pixels, called superpixels. The number of superpixels and their compactness can be adapted within the memory-efficient algorithm [106].

- The watershed algorithm is an edge-based image segmentation method. It is also referred to as a contour filling method and applies a mathematical morphological approach. First, the algorithm transforms an image into a gradient image. The image is seen as a topographical surface, where the grey value at each pixel location is interpreted as the elevation of the surface. Then, a flooding process starts in which water effuses out of the minimum grey values. When the flooding from two minima converges, a boundary that separates the two identified segments is defined [101,102].

- The wavelet transform analyses textures and patterns to detect local intensity variations and can be considered a generalized combination of three other operations: multi-resolution analysis, template matching and frequency domain analysis. The algorithm decomposes an image into a low frequency approximation image and a set of high frequency, spatially oriented detail images [107].

- (ii) Supervised methods often consist of methods from machine learning and pattern recognition. These can be performed by learning a classifier that captures the variation in object appearances and views from a training dataset. In the training dataset, object shape descriptors are defined and used to label the data. Then, the classifier is learned based on a set of regions with object shape descriptors, resulting in their corresponding predicted labels. The automation of machine learning approaches might be limited, since some classifiers need to be trained with samples that require manual labeling. The aim of training is to model the process of data generation such that the classifier can predict the output for unseen data. Various possibilities exist to select training sets and features [108] as well as to select a classifier [90,109]. In contrast to the unsupervised methods, these methods go beyond image segmentation, as they additionally assign a semantic meaning to each segment. A selection of popular approaches that have been applied in the case studies investigated for this review is described in the following. A list of further approaches can be found in [90].

- Convolutional Neural Networks (CNN) are inspired by biological processes and are made up of neurons that have learnable weights and biases. The algorithm creates multiple layers of small neuron collections, each of which processes a part of an image, referred to as a receptive field. Then, local connections and tied weights are analyzed to aggregate information from each receptive field [96].

- Markov Random Fields (MRF) are a probabilistic approach based on graphical models. They are used to extract features based on spatial texture by classifying an image into a number of regions or classes. The image is modelled as an MRF and a maximum a posteriori probability approach is used for classification [110].

- Support Vector Machines (SVM) consist of a supervised learning model with associated learning algorithms that support linear image classification into two or more categories through data modelling. Their advantages include a generalization capability, which concerns the ability to classify shapes that are not within the feature space used for training [111].
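The graph-based segmentation described in the list above (pixels as graph nodes, agglomerative merging along minimum-spanning-tree edges) can be illustrated with a small sketch. It is not the implementation from [104,105]: it assumes a single grey-value channel, 4-connected neighbours, absolute grey-value differences as edge weights and one widely used merge criterion controlled by a scale constant `k`. The function name and the parameter `k` are assumptions made for this example.

```python
def graph_segmentation(image, k=10.0):
    """Label the pixels of a 2D grey-value list by graph-based region merging.

    Edges are processed in order of increasing weight (as in Kruskal's
    minimum spanning tree algorithm). Two components are merged when the
    connecting edge is not much heavier than the largest edge already inside
    either component; k relaxes this test for small components.
    """
    rows, cols = len(image), len(image[0])
    n = rows * cols
    parent = list(range(n))   # union-find forest over pixel indices
    size = [1] * n            # number of pixels per component root
    internal = [0.0] * n      # largest MST edge weight inside each component

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    # Build edges between horizontal and vertical neighbours.
    edges = []
    for r in range(rows):
        for c in range(cols):
            idx = r * cols + c
            if c + 1 < cols:
                edges.append((abs(image[r][c] - image[r][c + 1]), idx, idx + 1))
            if r + 1 < rows:
                edges.append((abs(image[r][c] - image[r + 1][c]), idx, idx + cols))
    edges.sort()

    for weight, a, b in edges:
        ra, rb = find(a), find(b)
        if ra == rb:
            continue
        threshold = min(internal[ra] + k / size[ra], internal[rb] + k / size[rb])
        if weight <= threshold:
            parent[rb] = ra
            size[ra] += size[rb]
            internal[ra] = weight  # edges are sorted, so this is the new maximum

    return [[find(r * cols + c) for c in range(cols)] for r in range(rows)]
```

A larger `k` favours larger segments. On a small image with two homogeneous halves separated by a strong grey-value step, the sketch returns exactly two labels, one per half.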

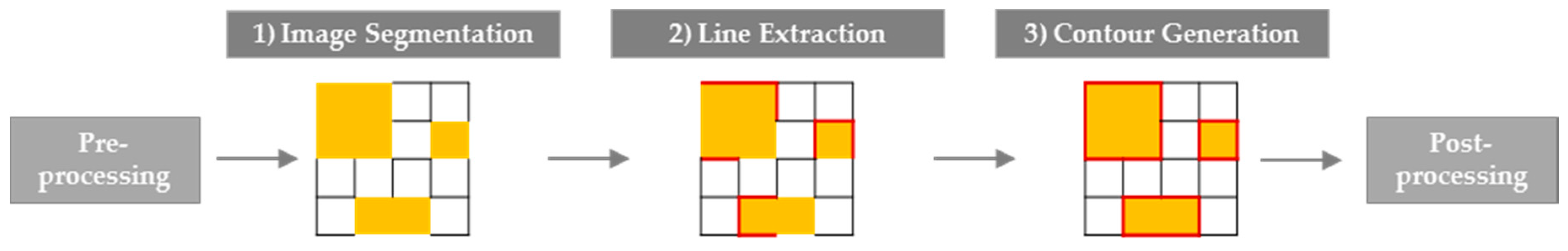

2.2.3. Line Extraction

- Edge detection can be divided into (i) first and (ii) second order derivative based edge detection. An edge has the one-dimensional shape of a ramp, and calculating the derivative of the image can highlight its location. (i) First order derivative based methods detect edges by looking for the maximum and minimum in the first derivative of the image, which mark the highest rate of change between adjacent pixels. The most prominent representative is the Canny edge detector, which fulfills the criteria of good detection quality, good localization quality and the avoidance of multiple responses. These criteria are combined into one optimization criterion and solved using the calculus of variations. The algorithm consists of Gaussian smoothing, gradient filtering, non-maximum suppression and hysteresis thresholding [161]. Further representatives based on first order derivatives are the Roberts cross, Sobel, Kirsch and Prewitt operators. (ii) Second order derivative based methods detect edges by searching for zero crossings in the second derivative of the image. The most prominent representative is the Laplacian of Gaussian, which highlights regions of rapid intensity change. The algorithm applies a Gaussian smoothing filter, followed by a derivative operation [162,163].

- Straight line extraction is mostly done with the Hough transform. This is a connected component analysis for line, circle and ellipse detection in a parameter space, referred to as Hough space. Each candidate object point is transformed into Hough space in order to detect clusters within that space that represent the object to be detected. The standard Hough transform detects analytic curves, while a generalized Hough transform can be used to detect arbitrarily shaped templates [164]. As an alternative, the Line Segment Detector (LSD) algorithm can be applied. This method uses the gradient orientation, which represents the local direction of intensity change, together with the global context of the intensity variations to group pixels into line-support regions and to determine the location and properties of edges [84]. The method is applied for line extraction in [165,166]. The visualization in Figure 9 is based on source code provided in [166].
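Two of the building blocks named in the list above can be sketched in a few lines: a first order derivative edge strength (here the Sobel operator) and the voting step of the standard Hough transform for straight lines. Both functions are minimal illustrations under stated assumptions. The Sobel sketch omits the smoothing, non-maximum suppression and hysteresis steps of the Canny detector, and the Hough sketch uses the normal form rho = x cos(theta) + y sin(theta) with integer rho bins. The function names and the parameter `theta_steps` are chosen for this example only.

```python
import math

SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_magnitude(image):
    """Gradient magnitude of a 2D grey-value list (border pixels stay 0)."""
    rows, cols = len(image), len(image[0])
    mag = [[0.0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx = sum(SOBEL_X[i][j] * image[r + i - 1][c + j - 1]
                     for i in range(3) for j in range(3))
            gy = sum(SOBEL_Y[i][j] * image[r + i - 1][c + j - 1]
                     for i in range(3) for j in range(3))
            mag[r][c] = math.hypot(gx, gy)
    return mag


def hough_strongest_line(points, theta_steps=180):
    """Vote in (theta, rho) space and return (theta_degrees, rho, votes).

    Every point (x, y) votes for all lines passing through it. Ties are
    broken in favour of the smallest theta, then the smallest rho.
    """
    votes = {}
    for x, y in points:
        for t in range(theta_steps):
            theta = math.pi * t / theta_steps
            rho = round(x * math.cos(theta) + y * math.sin(theta))
            votes[(t, rho)] = votes.get((t, rho), 0) + 1
    (t, rho), count = min(votes.items(), key=lambda kv: (-kv[1], kv[0]))
    return 180.0 * t / theta_steps, rho, count
```

The Hough sketch expects thinned, one-pixel-wide edge points. Feeding it the raw thresholded Sobel response of a step edge, which is two pixels wide, can shift the peak to a slightly rotated line, which is one reason why detectors with non-maximum suppression are usually applied before line extraction.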

2.2.4. Contour Generation

- (i) A human operator outlines a small segment of the feature to be extracted. Then, a line tracking algorithm recursively predicts feature characteristics, measures these with profile matching and updates the feature outline accordingly. The process continues until the profile matching fails. Perceptual grouping, explained in the following, can be used to group feature characteristics. Case studies that apply such line tracking algorithms can be found in [174,178,179].

- (ii) Instead of outlining a small segment of the feature to be extracted, the human operator can also provide a rough outline of the entire feature. Then, an algorithm applies a deformable template and refines this initial template to fit the contour of the feature to be extracted. Snakes, which are explained in the following, are an example of this procedure.

- Perceptual grouping is the ability to impose structural organization on spatial data based on a set of principles, namely proximity, similarity, closure, continuation, symmetry, common regions and connectedness. If elements are close together, similar to one another, form a closed contour, or move in the same direction, then they tend to be grouped perceptually. This allows fragmented line segments to be grouped into an optimized continuous contour [180]. Perceptual grouping is applied under various names, such as line grouping, linking, merging or connection, in the case studies listed in Table 3.

- Snakes, also referred to as active contours, are defined as elastic curves that dynamically adapt a vector contour to a region of interest by applying energy minimization techniques that express geometric and photometric constraints. The active contour is a set of points that aims to continuously enclose the feature to be extracted [181]. They are listed here, even though they could also be applied in previous steps, such as image segmentation [112,117]. In this step, they are applied to refine the geometrical outline of extracted features [80,131,135].
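Of the grouping principles named above, proximity and continuation are the easiest to express in code. The following sketch links fragmented line segments when the gap between their endpoints is small and their orientations are similar. It is a deliberately simple illustration and not a method taken from the cited case studies. The function names and the thresholds `max_gap` and `max_angle_deg` are assumptions, and only the end of one segment is compared with the start of another after sorting the endpoints of each segment.

```python
import math


def orientation(segment):
    """Undirected orientation of a segment in radians, in [0, pi)."""
    (x1, y1), (x2, y2) = segment
    return math.atan2(y2 - y1, x2 - x1) % math.pi


def angle_difference(a, b):
    """Smallest angle between the orientations of two segments."""
    d = abs(orientation(a) - orientation(b))
    return min(d, math.pi - d)


def group_segments(segments, max_gap=2.0, max_angle_deg=10.0):
    """Greedily merge segments that are close together and nearly collinear."""
    # Sort the two endpoints of every segment so that all segments share a direction.
    segs = [tuple(sorted(s)) for s in segments]
    max_angle = math.radians(max_angle_deg)
    merged = True
    while merged:
        merged = False
        for i, a in enumerate(segs):
            for j, b in enumerate(segs):
                if i == j:
                    continue
                gap = math.hypot(a[1][0] - b[0][0], a[1][1] - b[0][1])
                if gap <= max_gap and angle_difference(a, b) <= max_angle:
                    # Replace both fragments by one segment spanning them.
                    rest = [s for k, s in enumerate(segs) if k not in (i, j)]
                    segs = rest + [(a[0], b[1])]
                    merged = True
                    break
            if merged:
                break
    return sorted(segs)
```

For two collinear fragments separated by a gap of one pixel and an unrelated perpendicular segment, the sketch returns one merged segment and leaves the perpendicular one untouched.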

2.2.5. Postprocessing

2.3. Accuracy Assessment Methods

- The completeness measures the proportion of the reference data which is explained by the extracted data, i.e., the proportion of the reference data which could be extracted. The value ranges from 0 to 1, with 1 being the optimum value.

- The correctness represents the proportion of correctly extracted data, i.e., the proportion of the extraction which is in accordance with the reference data. The value ranges from 0 to 1, with 1 being the optimum value.

- The redundancy represents the proportion to which the correct extraction is redundant, i.e., extracted features overlap themselves. The value ranges from 0 to 1, with 0 being the optimum value.

- The Root-Mean Square (RMS) difference expresses the average distance between the matched extracted and the matched reference data, i.e., the geometrical accuracy potential of the extracted data. The optimum value for RMS is 0.
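The four measures above can be made concrete with a small sketch. It assumes that extracted and reference lines are represented by points sampled at equal spacing, so that point counts stand in for line lengths, and that a point counts as matched when a point of the other dataset lies within a buffer distance. These representation choices, the function names and the parameter `buffer_width` are assumptions for illustration. The redundancy formula used here (matched extraction minus matched reference, divided by matched extraction) is one common definition and is not spelled out in the text above.

```python
import math


def nearest_distance(point, others):
    """Distance from point to the closest point in others."""
    return min(math.hypot(point[0] - x, point[1] - y) for x, y in others)


def assess_accuracy(extracted, reference, buffer_width):
    """Return completeness, correctness, redundancy and RMS as a dict."""
    ext_dist = [nearest_distance(p, reference) for p in extracted]
    ref_dist = [nearest_distance(p, extracted) for p in reference]
    matched_ext = [d for d in ext_dist if d <= buffer_width]
    matched_ref = [d for d in ref_dist if d <= buffer_width]

    completeness = len(matched_ref) / len(reference)   # share of reference found
    correctness = len(matched_ext) / len(extracted)    # share of extraction that is right
    if matched_ext:
        # Clamped at 0 because point sampling can make the difference negative.
        redundancy = max(0.0, (len(matched_ext) - len(matched_ref)) / len(matched_ext))
        rms = math.sqrt(sum(d * d for d in matched_ext) / len(matched_ext))
    else:
        redundancy, rms = 0.0, float("nan")
    return {"completeness": completeness, "correctness": correctness,
            "redundancy": redundancy, "rms": rms}
```

For example, if eight of ten reference points are covered by an extraction that runs parallel at a distance of 0.5 and two extracted points lie far away, completeness and correctness are both 0.8, the redundancy is 0 and the RMS difference is 0.5.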

3. Discussion

3.1. UAV-Based Cadastral Mapping

3.2. Cadastral Boundary Characteristics

3.3. Feature Extraction Methods

- (i)

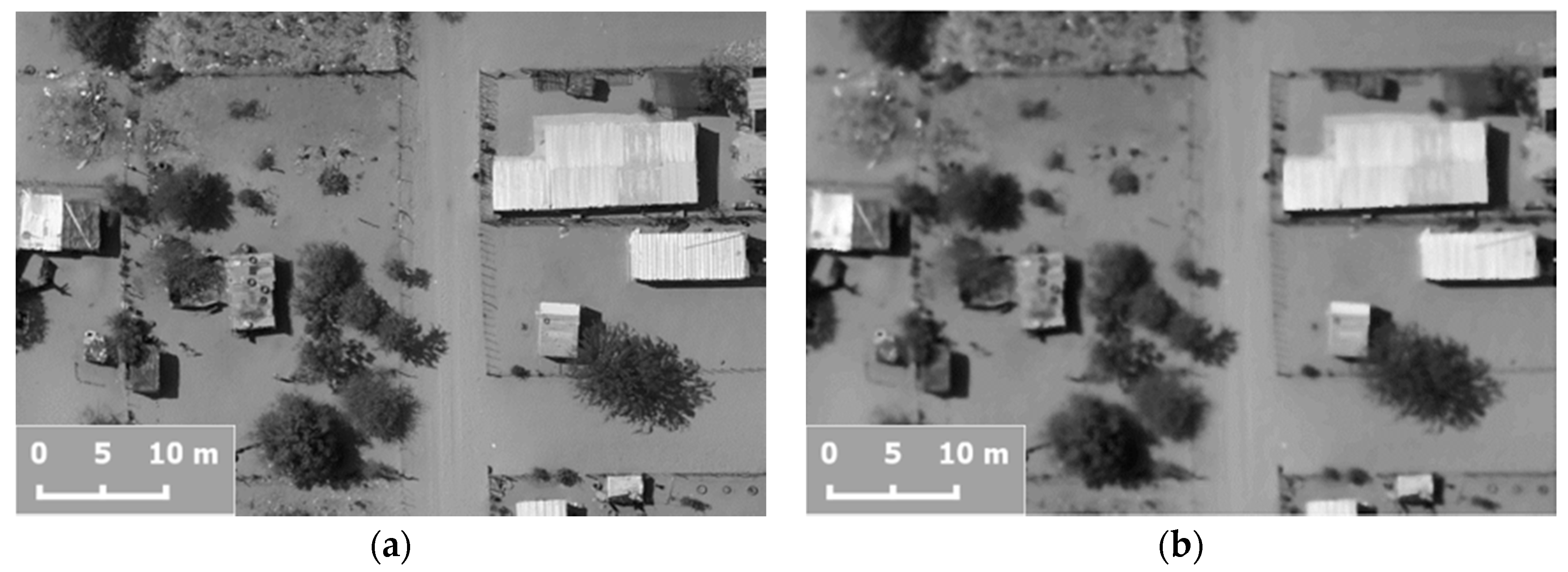

- Preprocessing steps that include image enhancement and filtering are often applied in case studies that use high-resolution data below 1 m [118,121,126]. This might be due to the large amount of detail in such images, which can be reduced with filtering techniques. Without such preprocessing, oversegmentation might result—as well as too many non-relevant edges obtained through edge detection. One drawback of applying such preprocessing steps is the need to set thresholds for image enhancement and filtering. Standard parameters might lead to valuable results, but might also erase crucial image details. Selecting parameters hinders the automation of the entire workflow.

- (ii) Image segmentation is listed as a crucial first step for linear feature extraction in corresponding review papers [64,77,109]. Overall, images whose segments are distinct in color, texture, spectral homogeneity, size, shape, compactness and scale are generally easier to segment than images that are inhomogeneous in these respects. The methods reviewed in this paper are classified into supervised and unsupervised approaches. More studies apply an unsupervised approach, which might be due to the higher degree of automation. The supervised approaches taken from machine learning suffer from their extensive input requirements, such as the definition of features with corresponding object descriptors, the labeling of objects, the training of a classifier and the application of the trained classifier to test data [86,147]. Furthermore, the ability of machine learning approaches to classify an image into categories with different labels is not necessarily required in this workflow step, since the image only needs to be segmented. Machine learning approaches such as CNNs can also be employed in further workflow steps, e.g., for edge detection as shown in [224]. A combination of edge detection and image segmentation based on machine learning is proposed in [225]. A large number of case studies are based on SVMs [111]. SVMs are appealing due to their ability to generalize well from training data of limited amount and quality, which appears to be a common limitation in remote sensing. Mountrakis et al. found that SVMs can be trained on fewer training data than other approaches. However, they state that selecting parameters such as kernel size strongly affects the results and is frequently done by trial and error, which again limits the automation [111]. Furthermore, SVMs are not optimized for noise removal, which makes image preprocessing indispensable for high-resolution data. Approaches such as the Bag-of-Words framework, as applied in [226], have the advantage of automating the feature selection and labeling before a supervised learning algorithm is applied. Further state-of-the-art approaches, including AdaBoost and random forest, are discussed in [90].

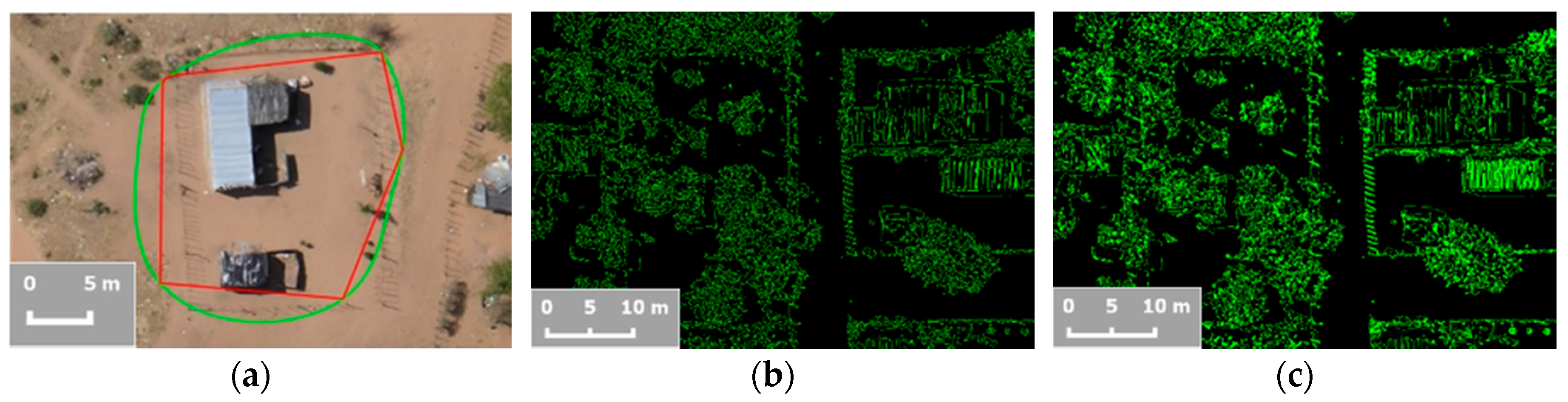

- (iii) Line extraction makes up the majority of case studies on linear feature extraction, with Canny edge detection being the most prominent approach. The Canny edge detector is capable of reducing noise, whereas a second order derivative method such as the Laplacian of Gaussian, which responds to transitions in intensity, is sensitive to noise. Comparisons of edge detection approaches have shown that the Canny edge detector performs better than the Laplacian of Gaussian and first order derivative operators such as the Roberts cross, Sobel and Prewitt operators [162,163]. In terms of line extraction, the Hough transform is the most commonly used method. The LSD appears as an alternative that requires no parameter tuning while giving accurate results.

- (iv) Contour generation is not represented in all case studies, since it is not as essential as the two previous workflow steps for linear feature extraction. The exceptions are case studies on road network extraction, which name contour generation, especially based on snakes, as a crucial workflow step [77,221]. Snakes deliver the most accurate results when initialized with seed points close to the features to be extracted [193]. Furthermore, these methods require parameter tuning in terms of the energy field, which limits their automation [80]. Perceptual grouping is rarely applied in the case studies investigated, especially not in those based on high-resolution data.

- (v) Postprocessing is utilized more often than preprocessing. Morphological filtering in particular is applied in the majority of case studies. However, it is not always employed as a form of postprocessing to improve the final output, but also during the workflow to smooth the result of a workflow step before further processing [73,120,121,126]. When applied at the end of the workflow in case studies on road extraction, morphological filtering is often combined with skeletons to extract the vectorized centerline of the road [79,125,142,196,198].
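The smoothing effect of morphological filtering mentioned in item (v) can be illustrated with a binary opening, i.e., an erosion followed by a dilation. The sketch below is a minimal illustration and not the procedure of any cited case study. It assumes a binary mask stored as a 2D list, a 3 × 3 square structuring element and windows that are clipped at the image border. The function names are chosen for this example.

```python
def _filter3x3(mask, op):
    """Apply op (min or max) to the 3x3 neighbourhood of every pixel."""
    rows, cols = len(mask), len(mask[0])
    out = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            window = [mask[rr][cc]
                      for rr in range(max(r - 1, 0), min(r + 2, rows))
                      for cc in range(max(c - 1, 0), min(c + 2, cols))]
            out[r][c] = op(window)
    return out


def erode(mask):
    """Keep a pixel only if its whole neighbourhood is set."""
    return _filter3x3(mask, min)


def dilate(mask):
    """Set a pixel if any pixel in its neighbourhood is set."""
    return _filter3x3(mask, max)


def opening(mask):
    """Erosion followed by dilation: removes specks smaller than the window."""
    return dilate(erode(mask))
```

Applied to a mask that contains a three-pixel-wide band, such as a detected road, and a single isolated pixel, the opening removes the isolated pixel and restores the band to its original width.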

3.4. Accuracy Assessment Methods

4. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| CNN | Convolutional Neural Networks |
| DSM | Digital Surface Model |
| FIG | International Federation of Surveyors |
| FN | False Negatives |
| FP | False Positives |
| GIS | Geographical Information System |
| GNSS | Global Navigation Satellite System |
| GSD | Ground Sample Distance |
| HRSI | High-Resolution Satellite Imagery |
| IoU | Intersection over Union |
| ISPRS | International Society for Photogrammetry and Remote Sensing |
| LSD | Line Segment Detector |
| MRF | Markov Random Field |
| OBIA | Object-Based Image Analysis |
| RMS | Root-Mean Square |
| SLIC | Simple Linear Iterative Clustering |
| SVM | Support Vector Machine |
| TP | True Positives |
| UAV | Unmanned Aerial Vehicle |

References

- Colomina, I.; Molina, P. Unmanned aerial systems for photogrammetry and remote sensing: A review. ISPRS J. Photogramm. Remote Sens. 2014, 92, 79–97. [Google Scholar] [CrossRef]

- Harwin, S.; Lucieer, A. Assessing the accuracy of georeferenced point clouds produced via multi-view stereopsis from unmanned aerial vehicle (UAV) imagery. Remote Sens. 2012, 4, 1573–1599. [Google Scholar] [CrossRef]

- Gerke, M.; Przybilla, H.-J. Accuracy analysis of photogrammetric UAV image blocks: Influence of onboard RTK-GNSS and cross flight patterns. Photogramm. Fernerkund. Geoinf. 2016, 14, 17–30. [Google Scholar] [CrossRef]

- Nex, F.; Remondino, F. UAV for 3D mapping applications: A review. Appl. Geomat. 2014, 6, 1–15. [Google Scholar] [CrossRef]

- Tahar, K.N.; Ahmad, A. An evaluation on fixed wing and multi-rotor UAV images using photogrammetric image processing. Int. J. Comput. Electr. Autom. Control Inf. Eng. 2013, 7, 48–52. [Google Scholar]

- Toth, C.; Jóźków, G. Remote sensing platforms and sensors: A survey. ISPRS J. Photogramm. Remote Sens. 2016, 115, 22–36. [Google Scholar] [CrossRef]

- Eisenbeiss, H.; Sauerbier, M. Investigation of UAV systems and flight modes for photogrammetric applications. Photogramm. Rec. 2011, 26, 400–421. [Google Scholar] [CrossRef]

- Fernández-Hernandez, J.; González-Aguilera, D.; Rodríguez-Gonzálvez, P.; Mancera-Taboada, J. Image-based modelling from Unmanned Aerial Vehicle (UAV) photogrammetry: An effective, low-cost tool for archaeological applications. Archaeometry 2015, 57, 128–145. [Google Scholar] [CrossRef]

- Zhang, C.; Kovacs, J.M. The application of small unmanned aerial systems for precision agriculture: A review. Precis. Agric. 2012, 13, 693–712. [Google Scholar] [CrossRef]

- Berni, J.; Zarco-Tejada, P.; Suárez, L.; González-Dugo, V.; Fereres, E. Remote sensing of vegetation from UAV platforms using lightweight multispectral and thermal imaging sensors. Proc. ISPRS 2009, 38, 22–29. [Google Scholar]

- Puri, A.; Valavanis, K.; Kontitsis, M. Statistical profile generation for traffic monitoring using real-time UAV based video data. In Proceedings of the Mediterranean Conference on Control & Automation, Athens, Greece, 27–29 June 2007.

- Bendea, H.; Boccardo, P.; Dequal, S.; Giulio Tonolo, F.; Marenchino, D.; Piras, M. Low cost UAV for post-disaster assessment. Proc. ISPRS 2008, 37, 1373–1379. [Google Scholar]

- Chou, T.-Y.; Yeh, M.-L.; Chen, Y.C.; Chen, Y.H. Disaster monitoring and management by the unmanned aerial vehicle technology. Proc. ISPRS 2010, 35, 137–142. [Google Scholar]

- Irschara, A.; Kaufmann, V.; Klopschitz, M.; Bischof, H.; Leberl, F. Towards fully automatic photogrammetric reconstruction using digital images taken from UAVs. Proc. ISPRS 2010, 2010, 1–6. [Google Scholar]

- Binns, B.O.; Dale, P.F. Cadastral Surveys and Records of Rights in Land Administration. Available online: http://www.fao.org/docrep/006/v4860e/v4860e03.htm (accessed on 10 June 2016).

- Williamson, I.; Enemark, S.; Wallace, J.; Rajabifard, A. Land Administration for Sustainable Development; ESRI Press Academic: Redlands, CA, USA, 2010. [Google Scholar]

- Alemie, B.K.; Bennett, R.M.; Zevenbergen, J. Evolving urban cadastres in Ethiopia: The impacts on urban land governance. Land Use Policy 2015, 42, 695–705. [Google Scholar] [CrossRef]

- Nations, U. Land Administrtation in the UNECE Region: Development Trends and Main Principles; UNECE Information Service: Geneva, Switzerland, 2005. [Google Scholar]

- Kelm, K. UAVs revolutionise land administration. GIM Int. 2014, 28, 35–37. [Google Scholar]

- Enemark, S.; Bell, K.C.; Lemmen, C.; McLaren, R. Fit-For-Purpose Land Administration; International Federation of Surveyors: Frederiksberg, Denmark, 2014. [Google Scholar]

- Zevenbergen, J.; Augustinus, C.; Antonio, D.; Bennett, R. Pro-poor land administration: Principles for recording the land rights of the underrepresented. Land Use Policy 2013, 31, 595–604. [Google Scholar] [CrossRef]

- Zevenbergen, J.; De Vries, W.; Bennett, R.M. Advances in Responsible Land Administration; CRC Press: Padstow, UK, 2015. [Google Scholar]

- Maurice, M.J.; Koeva, M.N.; Gerke, M.; Nex, F.; Gevaert, C. A photogrammetric approach for map updating using UAV in Rwanda. In Proceedings of the GeoTechRwanda, Kigali, Rwanda, 18–20 November 2015.

- Mumbone, M.; Bennett, R.; Gerke, M.; Volkmann, W. Innovations in boundary mapping: Namibia, customary lands and UAVs. In Proceedings of the World Bank Conference on Land and Poverty, Washington, DC, USA, 23–27 March 2015.

- Volkmann, W.; Barnes, G. Virtual surveying: Mapping and modeling cadastral boundaries using Unmanned Aerial Systems (UAS). In Proceedings of the FIG Congress: Engaging the Challenges—Enhancing the Relevance, Kuala Lumpur, Malaysia, 16–21 June 2014; pp. 1–13.

- Barthel, K. Linking Land Policy, Geospatial Technology and Community Participation. Available online: http://thelandalliance.org/2015/06/early-lessons-learned-from-testing-uavs-for-geospatial-data-collection-and-participatory-mapping/ (accessed on 10 June 2016).

- Pajares, G. Overview and current status of remote sensing applications based on unmanned aerial vehicles (UAVs). Photogramm. Eng. Remote Sens. 2015, 81, 281–329. [Google Scholar] [CrossRef]

- Everaerts, J. The use of unmanned aerial vehicles (UAVs) for remote sensing and mapping. Proc. ISPRS 2008, 37, 1187–1192. [Google Scholar]

- Remondino, F.; Barazzetti, L.; Nex, F.; Scaioni, M.; Sarazzi, D. UAV photogrammetry for mapping and 3D modeling—Current status and future perspectives. Proc. ISPRS 2011, 38, C22. [Google Scholar] [CrossRef]

- Watts, A.C.; Ambrosia, V.G.; Hinkley, E.A. Unmanned aircraft systems in remote sensing and scientific research: Classification and considerations of use. Remote Sens. 2012, 4, 1671–1692. [Google Scholar] [CrossRef]

- Ali, Z.; Tuladhar, A.; Zevenbergen, J. An integrated approach for updating cadastral maps in Pakistan using satellite remote sensing data. Int. J. Appl. Earth Obs. Geoinform. 2012, 18, 386–398. [Google Scholar] [CrossRef]

- Corlazzoli, M.; Fernandez, O. In SPOT 5 cadastral validation project in Izabal, Guatemala. Proc. ISPRS 2004, 35, 291–296. [Google Scholar]

- Konecny, G. Cadastral mapping with earth observation technology. In Geospatial Technology for Earth Observation; Li, D., Shan, J., Gong, J., Eds.; Springer: Boston, MA, USA, 2010; pp. 397–409. [Google Scholar]

- Ondulo, J.-D. High spatial resolution satellite imagery for Pid improvement in Kenya. In Proceedings of the FIG Congress: Shaping the Change, Munich, Germany, 8–13 October 2006; pp. 1–9.

- Tuladhar, A. Spatial cadastral boundary concepts and uncertainty in parcel-based information systems. Proc. ISPRS 1996, 31, 890–893. [Google Scholar]

- Christodoulou, K.; Tsakiri-Strati, M. Combination of satellite image Pan IKONOS-2 with GPS in cadastral applications. In Proceedings of the Workshop on Spatial Information Management for Sustainable Real Estate Market, Athens, Greece, 28–30 May 2003.

- Alkan, M.; Marangoz, M. Creating cadastral maps in rural and urban areas of using high resolution satellite imagery. Appl. Geo-Inform. Soc. Environ. 2009, 2009, 89–95. [Google Scholar]

- Törhönen, M.P. Developing land administration in Cambodia. Comput. Environ. Urban Syst. 2001, 25, 407–428. [Google Scholar] [CrossRef]

- Greenwood, F. Mapping in practise. In Drones and Aerial Observation; New America: New York, NY, USA, 2015; pp. 49–55. [Google Scholar]

- Manyoky, M.; Theiler, P.; Steudler, D.; Eisenbeiss, H. Unmanned aerial vehicle in cadastral applications. Proc. ISPRS 2011, 3822, 57. [Google Scholar] [CrossRef]

- Mesas-Carrascosa, F.J.; Notario-García, M.D.; de Larriva, J.E.M.; de la Orden, M.S.; Porras, A.G.-F. Validation of measurements of land plot area using UAV imagery. Int. J. Appl. Earth Obs. Geoinform. 2014, 33, 270–279. [Google Scholar] [CrossRef]

- Rijsdijk, M.; Hinsbergh, W.H.M.V.; Witteveen, W.; Buuren, G.H.M.; Schakelaar, G.A.; Poppinga, G.; Persie, M.V.; Ladiges, R. Unmanned aerial systems in the process of juridical verification of cadastral border. Proc. ISPRS 2013, 61, 325–331. [Google Scholar] [CrossRef]

- Van Hinsberg, W.; Rijsdijk, M.; Witteveen, W. UAS for cadastral applications: Testing suitability for boundary identification in urban areas. GIM Int. 2013, 27, 17–21. [Google Scholar]

- Cunningham, K.; Walker, G.; Stahlke, E.; Wilson, R. Cadastral audit and assessments using unmanned aerial systems. Proc. ISPRS 2011, 2011, 1–4. [Google Scholar] [CrossRef]

- Cramer, M.; Bovet, S.; Gültlinger, M.; Honkavaara, E.; McGill, A.; Rijsdijk, M.; Tabor, M.; Tournadre, V. On the use of RPAS in national mapping—The EuroSDR point of view. Proc. ISPRS 2013, 11, 93–99. [Google Scholar] [CrossRef]

- Haarbrink, R. UAS for geo-information: Current status and perspectives. Proc. ISPRS 2011, 35, 1–6. [Google Scholar] [CrossRef]

- Eyndt, T.; Volkmann, W. UAS as a tool for surveyors: From tripods and trucks to virtual surveying. GIM Int. 2013, 27, 20–25. [Google Scholar]

- Barnes, G.; Volkmann, W. High-Resolution mapping with unmanned aerial systems. Surv. Land Inf. Sci. 2015, 74, 5–13. [Google Scholar]

- Heipke, C.; Woodsford, P.A.; Gerke, M. Updating geospatial databases from images. In ISPRS Congress Book; Taylor & Francis Group: London, UK, 2008; pp. 355–362. [Google Scholar]

- Jazayeri, I.; Rajabifard, A.; Kalantari, M. A geometric and semantic evaluation of 3D data sourcing methods for land and property information. Land Use Policy 2014, 36, 219–230. [Google Scholar] [CrossRef]

- Zevenbergen, J.; Bennett, R. The visible boundary: More than just a line between coordinates. In Proceedings of the GeoTechRwanda, Kigali, Rwanda, 17–25 May 2015.

- Bennett, R.; Kitchingman, A.; Leach, J. On the nature and utility of natural boundaries for land and marine administration. Land Use Policy 2010, 27, 772–779. [Google Scholar] [CrossRef]

- Smith, B. On drawing lines on a map. In Spatial Information Theory: A Theoretical Basis for GIS; Springer: Berlin/Heidelberg, Germany, 1995; pp. 475–484. [Google Scholar]

- Lengoiboni, M.; Bregt, A.K.; van der Molen, P. Pastoralism within land administration in Kenya—The missing link. Land Use Policy 2010, 27, 579–588. [Google Scholar] [CrossRef]

- Fortin, M.-J.; Olson, R.; Ferson, S.; Iverson, L.; Hunsaker, C.; Edwards, G.; Levine, D.; Butera, K.; Klemas, V. Issues related to the detection of boundaries. Landsc. Ecol. 2000, 15, 453–466. [Google Scholar] [CrossRef]

- Fortin, M.-J.; Drapeau, P. Delineation of ecological boundaries: Comparison of approaches and significance tests. Oikos 1995, 72, 323–332. [Google Scholar] [CrossRef]

- Richardson, K.A.; Lissack, M.R. On the status of boundaries, both natural and organizational: A complex systems perspective. J. Complex. Issues Organ. Manag. Emerg. 2001, 3, 32–49. [Google Scholar] [CrossRef]

- Fagan, W.F.; Fortin, M.-J.; Soykan, C. Integrating edge detection and dynamic modeling in quantitative analyses of ecological boundaries. BioScience 2003, 53, 730–738. [Google Scholar] [CrossRef]

- Dale, P.; McLaughlin, J. Land Administration; University Press: Oxford, UK, 1999. [Google Scholar]

- Cay, T.; Corumluoglu, O.; Iscan, F. A study on productivity of satellite images in the planning phase of land consolidation projects. Proc. ISPRS 2004, 32, 1–6. [Google Scholar]

- Barnes, G.; Moyer, D.D.; Gjata, G. Evaluating the effectiveness of alternative approaches to the surveying and mapping of cadastral parcels in Albania. Comput. Environ. Urban Syst. 1994, 18, 123–131. [Google Scholar] [CrossRef]

- Lemmen, C.; Zevenbergen, J.A.; Lengoiboni, M.; Deininger, K.; Burns, T. First experiences with high resolution imagery based adjudication approach for social tenure domain model in Ethiopia. In Proceedings of the FIG-World Bank Conference, Washington, DC, USA, 31 September 2009.

- Rao, S.; Sharma, J.; Rajashekar, S.; Rao, D.; Arepalli, A.; Arora, V.; Singh, R.; Kanaparthi, M. Assessing usefulness of High-Resolution Satellite Imagery (HRSI) for re-survey of cadastral maps. Proc. ISPRS 2014, 2, 133–143. [Google Scholar] [CrossRef]

- Quackenbush, L.J. A review of techniques for extracting linear features from imagery. Photogramm. Eng. Remote Sens. 2004, 70, 1383–1392. [Google Scholar] [CrossRef]

- Edwards, G.; Lowell, K.E. Modeling uncertainty in photointerpreted boundaries. Photogramm. Eng. Remote Sens. 1996, 62, 377–390. [Google Scholar]

- Aien, A.; Kalantari, M.; Rajabifard, A.; Williamson, I.; Bennett, R. Advanced principles of 3D cadastral data modelling. In Proceedings of the 2nd International Workshop on 3D Cadastres, Delft, The Netherlands, 16–18 November 2011.

- Ali, Z.; Ahmed, S. Extracting parcel boundaries from satellite imagery for a land information system. In Proceedings of the International Conference on Recent Advances in Space Technologies (RAST), Istanbul, Turkey, 12–14 June 2013.

- Lin, G.; Shen, C.; Reid, I. Efficient Piecewise Training of Deep Structured Models for Semantic Segmentation. Available online: http://arxiv.org/abs/1504.01013 (accessed on 12 August 2016).

- Hay, G.J.; Blaschke, T.; Marceau, D.J.; Bouchard, A. A comparison of three image-object methods for the multiscale analysis of landscape structure. ISPRS J. Photogramm. Remote Sens. 2003, 57, 327–345. [Google Scholar] [CrossRef]

- Blaschke, T. Object based image analysis for remote sensing. ISPRS J. Photogramm. Remote Sens. 2010, 65, 2–16. [Google Scholar] [CrossRef]

- Blaschke, T.; Hay, G.J.; Kelly, M.; Lang, S.; Hofmann, P.; Addink, E.; Queiroz Feitosa, R.; van der Meer, F.; van der Werff, H.; van Coillie, F.; et al. Geographic object-based image analysis—Towards a new paradigm. ISPRS J. Photogramm. Remote Sens. 2014, 87, 180–191. [Google Scholar] [CrossRef] [PubMed]

- Benz, U.C.; Hofmann, P.; Willhauck, G.; Lingenfelder, I.; Heynen, M. Multi-resolution, object-oriented fuzzy analysis of remote sensing data for GIS-ready information. ISPRS J. Photogramm. Remote Sens. 2004, 58, 239–258. [Google Scholar] [CrossRef]

- Babawuro, U.; Beiji, Z. Satellite imagery cadastral features extractions using image processing algorithms: A viable option for cadastral science. Int. J. Comput. Sci. Issues 2012, 9, 30–38. [Google Scholar]

- Luhmann, T.; Robson, S.; Kyle, S.; Harley, I. Close Range Photogrammetry: Principles, Methods and Applications; Whittles: Dunbeath, UK, 2006. [Google Scholar]

- Selvarajan, S.; Tat, C.W. Extraction of man-made features from remote sensing imageries by data fusion techniques. In Proceedings of the Asian Conference on Remote Sensing, Singapore, 5–9 November 2001.

- Wang, J.; Zhang, Q. Applicability of a gradient profile algorithm for road network extraction—Sensor, resolution and background considerations. Can. J. Remote Sens. 2000, 26, 428–439. [Google Scholar] [CrossRef]

- Singh, P.P.; Garg, R.D. Road detection from remote sensing images using impervious surface characteristics: Review and implication. Proc. ISPRS 2014, 40, 955–959. [Google Scholar] [CrossRef]

- Trinder, J.C.; Wang, Y. Automatic road extraction from aerial images. Digit. Signal Process. 1998, 8, 215–224. [Google Scholar] [CrossRef]

- Jin, H.; Feng, Y.; Li, B. Road network extraction with new vectorization and pruning from high-resolution RS images. In Proceedings of the International Conference on Image and Vision Computing (IVCNZ), Christchurch, New Zealand, 26–28 November 2008; pp. 1–6.

- Wolf, B.-M.; Heipke, C. Automatic extraction and delineation of single trees from remote sensing data. Mach. Vis. Appl. 2007, 18, 317–330. [Google Scholar] [CrossRef]

- Haralick, R.M.; Shapiro, L.G. Image segmentation techniques. Comput. Vis. Graph. Image Process. 1985, 29, 100–132. [Google Scholar] [CrossRef]

- Pal, N.R.; Pal, S.K. A review on image segmentation techniques. Pattern Recognit. 1993, 26, 1277–1294. [Google Scholar] [CrossRef]

- Sonka, M.; Hlavac, V.; Boyle, R. Image Processing, Analysis, and Machine Vision; Chapman & Hall: London, UK, 2014. [Google Scholar]

- Burns, J.B.; Hanson, A.R.; Riseman, E.M. Extracting straight lines. IEEE Trans. Pattern Anal. Mach. Intell. 1986, PAMI-8, 425–455. [Google Scholar] [CrossRef]

- Sharifi, M.; Fathy, M.; Mahmoudi, M.T. A classified and comparative study of edge detection algorithms. In Proceedings of the International Conference on Information Technology: Coding and Computing, Las Vegas, NV, USA, 8–10 April 2002.

- Martin, D.R.; Fowlkes, C.C.; Malik, J. Learning to detect natural image boundaries using local brightness, color, and texture cues. IEEE Trans. Pattern Anal. Mach. Intell. 2004, 26, 530–549. [Google Scholar] [CrossRef] [PubMed]

- Chen, J.; Dowman, I.; Li, S.; Li, Z.; Madden, M.; Mills, J.; Paparoditis, N.; Rottensteiner, F.; Sester, M.; Toth, C.; et al. Information from imagery: ISPRS scientific vision and research agenda. ISPRS J. Photogramm. Remote Sens. 2016, 115, 3–21. [Google Scholar] [CrossRef]

- Nixon, M. Feature Extraction & Image Processing; Elsevier: Oxford, UK, 2008. [Google Scholar]

- Petrou, M.; Petrou, C. Image Processing: The Fundamentals, 2nd ed.; John Wiley & Sons: West Sussex, UK, 2010. [Google Scholar]

- Cheng, G.; Han, J. A survey on object detection in optical remote sensing images. ISPRS J. Photogramm. Remote Sens. 2016, 117, 11–28. [Google Scholar] [CrossRef]

- Steger, C.; Ulrich, M.; Wiedemann, C. Machine Vision Algorithms and Applications; Wiley-VCH: Weinheim, Germany, 2008. [Google Scholar]

- Scikit. Available online: http://www.scikit-image.org (accessed on 10 June 2016).

- OpenCV. Available online: http://www.opencv.org (accessed on 10 June 2016).

- MathWorks. Available online: http://www.mathworks.com (accessed on 10 June 2016).

- VLFeat. Available online: http://www.vlfeat.org (accessed on 10 June 2016).

- Egmont-Petersen, M.; de Ridder, D.; Handels, H. Image processing with neural networks—A review. Pattern Recognit. 2002, 35, 2279–2301. [Google Scholar] [CrossRef]

- Buades, A.; Coll, B.; Morel, J.-M. A review of image denoising algorithms, with a new one. Multiscale Model. Simul. 2005, 4, 490–530. [Google Scholar] [CrossRef]

- Perona, P.; Malik, J. Scale-space and edge detection using anisotropic diffusion. IEEE Trans. Pattern Anal. Mach. Intell. 1990, 12, 629–639. [Google Scholar] [CrossRef]

- Black, M.J.; Sapiro, G.; Marimont, D.H.; Heeger, D. Robust anisotropic diffusion. IEEE Trans. Image Process. 1998, 7, 421–432. [Google Scholar] [CrossRef] [PubMed]

- Tan, D. Image enhancement based on adaptive median filter and Wallis filter. In Proceedings of the National Conference on Electrical, Electronics and Computer Engineering, Xi’an, China, 12–13 December 2015.

- Schiewe, J. Segmentation of high-resolution remotely sensed data-concepts, applications and problems. Proc. ISPRS 2002, 34, 380–385. [Google Scholar]

- Dey, V.; Zhang, Y.; Zhong, M. A review on image segmentation techniques with remote sensing perspective. Proc. ISPRS 2010, 38, 31–42. [Google Scholar]

- Cheng, H.-D.; Jiang, X.; Sun, Y.; Wang, J. Color image segmentation: Advances and prospects. Pattern Recognit. 2001, 34, 2259–2281. [Google Scholar] [CrossRef]

- Felzenszwalb, P.F.; Huttenlocher, D.P. Efficient graph-based image segmentation. Int. J. Comput. Vis. 2004, 59, 167–181. [Google Scholar] [CrossRef]

- Estrada, F.J.; Jepson, A.D. Benchmarking image segmentation algorithms. Int. J. Comput. Vis. 2009, 85, 167–181. [Google Scholar]

- Achanta, R.; Shaji, A.; Smith, K.; Lucchi, A.; Fua, P.; Susstrunk, S. SLIC superpixels compared to state-of-the-art superpixel methods. IEEE Trans. Pattern Anal. Mach. Intell. 2012, 34, 2274–2282. [Google Scholar] [CrossRef] [PubMed]

- Ishida, T.; Itagaki, S.; Sasaki, Y.; Ando, H. Application of wavelet transform for extracting edges of paddy fields from remotely sensed images. Int. J. Remote Sens. 2004, 25, 347–357. [Google Scholar] [CrossRef]

- Ma, L.; Cheng, L.; Li, M.; Liu, Y.; Ma, X. Training set size, scale, and features in geographic object-based image analysis of very high resolution unmanned aerial vehicle imagery. ISPRS J. Photogramm. Remote Sens. 2015, 102, 14–27. [Google Scholar] [CrossRef]

- Liu, Y.; Li, M.; Mao, L.; Xu, F.; Huang, S. Review of remotely sensed imagery classification patterns based on object-oriented image analysis. Chin. Geogr. Sci. 2006, 16, 282–288. [Google Scholar] [CrossRef]

- Blaschke, T.; Lang, S.; Lorup, E.; Strobl, J.; Zeil, P. Object-oriented image processing in an integrated GIS/remote sensing environment and perspectives for environmental applications. Environ. Inf. Plan. Politics Pub. 2000, 2, 555–570. [Google Scholar]

- Mountrakis, G.; Im, J.; Ogole, C. Support vector machines in remote sensing: A review. ISPRS J. Photogramm. Remote Sens. 2011, 66, 247–259. [Google Scholar] [CrossRef]

- Vakilian, A.A.; Vakilian, K.A. A new satellite image segmentation enhancement technique for weak image boundaries. Ann. Fac. Eng. Hunedoara 2012, 10, 239–243. [Google Scholar]

- Radoux, J.; Defourny, P. A quantitative assessment of boundaries in automated forest stand delineation using very high resolution imagery. Remote Sens. Environ. 2007, 110, 468–475. [Google Scholar] [CrossRef]

- Mueller, M.; Segl, K.; Kaufmann, H. Edge-and region-based segmentation technique for the extraction of large, man-made objects in high-resolution satellite imagery. Pattern Recognit. 2004, 37, 1619–1628. [Google Scholar] [CrossRef]

- Wang, Y.; Li, X.; Zhang, L.; Zhang, W. Automatic road extraction of urban area from high spatial resolution remotely sensed imagery. Proc. ISPRS 2008, 86, 1–25. [Google Scholar]

- Kumar, M.; Singh, R.; Raju, P.; Krishnamurthy, Y. Road network extraction from high resolution multispectral satellite imagery based on object oriented techniques. Proc. ISPRS 2014, 2, 107–110. [Google Scholar] [CrossRef]

- Butenuth, M. Segmentation of imagery using network snakes. Photogramm. Fernerkund. Geoinf. 2007, 2007, 1–7. [Google Scholar]

- Vetrivel, A.; Gerke, M.; Kerle, N.; Vosselman, G. Segmentation of UAV-based images incorporating 3D point cloud information. Proc. ISPRS 2015, 40, 261–268. [Google Scholar] [CrossRef]

- Fernandez Galarreta, J.; Kerle, N.; Gerke, M. UAV-based urban structural damage assessment using object-based image analysis and semantic reasoning. Nat. Hazards Earth Syst. Sci. 2015, 15, 1087–1101. [Google Scholar] [CrossRef]

- Grigillo, D.; Kanjir, U. Urban object extraction from digital surface model and digital aerial images. Proc. ISPRS 2012, 22, 215–220. [Google Scholar] [CrossRef]

- Awad, M.M. A morphological model for extracting road networks from high-resolution satellite images. J. Eng. 2013, 2013, 1–9. [Google Scholar] [CrossRef]

- Ünsalan, C.; Boyer, K.L. A system to detect houses and residential street networks in multispectral satellite images. Comput. Vis. Image Underst. 2005, 98, 423–461. [Google Scholar] [CrossRef]

- Sohn, G.; Dowman, I. Building extraction using Lidar DEMs and IKONOS images. Proc. ISPRS 2003, 34, 37–43. [Google Scholar]

- Mena, J.B. Automatic vectorization of segmented road networks by geometrical and topological analysis of high resolution binary images. Knowl. Based Syst. 2006, 19, 704–718. [Google Scholar] [CrossRef]

- Mena, J.B.; Malpica, J.A. An automatic method for road extraction in rural and semi-urban areas starting from high resolution satellite imagery. Pattern Recognit. Lett. 2005, 26, 1201–1220. [Google Scholar] [CrossRef]

- Jin, X.; Davis, C.H. An integrated system for automatic road mapping from high-resolution multi-spectral satellite imagery by information fusion. Inf. Fusion 2005, 6, 257–273. [Google Scholar] [CrossRef]

- Chen, T.; Wang, J.; Zhang, K. A wavelet transform based method for road extraction from high-resolution remotely sensed data. In Proceedings of the IEEE International Geoscience and Remote Sensing Symposium (IGARSS), Toronto, ON, Canada, 24–28 June 2002.

- Liow, Y.-T.; Pavlidis, T. Use of shadows for extracting buildings in aerial images. Comput. Vis. Graph. Image Process. 1990, 49, 242–277. [Google Scholar] [CrossRef]

- Liu, H.; Jezek, K. Automated extraction of coastline from satellite imagery by integrating Canny edge detection and locally adaptive thresholding methods. Int. J. Remote Sens. 2004, 25, 937–958. [Google Scholar] [CrossRef]

- Wiedemann, C.; Heipke, C.; Mayer, H.; Hinz, S. Automatic extraction and evaluation of road networks from MOMS-2P imagery. Proc. ISPRS 1998, 32, 285–291. [Google Scholar]

- Tiwari, P.S.; Pande, H.; Kumar, M.; Dadhwal, V.K. Potential of IRS P-6 LISS IV for agriculture field boundary delineation. J. Appl. Remote Sens. 2009, 3, 1–9. [Google Scholar] [CrossRef]

- Karathanassi, V.; Iossifidis, C.; Rokos, D. A texture-based classification method for classifying built areas according to their density. Int. J. Remote Sens. 2000, 21, 1807–1823. [Google Scholar] [CrossRef]

- Qiaoping, Z.; Couloigner, I. Automatic road change detection and GIS updating from high spatial remotely-sensed imagery. Geo-Spat. Inf. Sci. 2004, 7, 89–95. [Google Scholar] [CrossRef]

- Sharma, O.; Mioc, D.; Anton, F. Polygon feature extraction from satellite imagery based on colour image segmentation and medial axis. Proc. ISPRS 2008, 37, 235–240. [Google Scholar]

- Butenuth, M.; Straub, B.-M.; Heipke, C. Automatic extraction of field boundaries from aerial imagery. In Proceedings of the KDNet Symposium on Knowledge-Based Services for the Public Sector, Bonn, Germany, 3–4 June 2004.

- Stoica, R.; Descombes, X.; Zerubia, J. A Gibbs point process for road extraction from remotely sensed images. Int. J. Comput. Vis. 2004, 57, 121–136. [Google Scholar] [CrossRef]

- Mokhtarzade, M.; Zoej, M.V.; Ebadi, H. Automatic road extraction from high resolution satellite images using neural networks, texture analysis, fuzzy clustering and genetic algorithms. Proc. ISPRS 2008, 37, 549–556. [Google Scholar]

- Zhang, C.; Baltsavias, E.; Gruen, A. Knowledge-based image analysis for 3D road reconstruction. Asian J. Geoinform. 2001, 1, 3–14. [Google Scholar]

- Shao, Y.; Guo, B.; Hu, X.; Di, L. Application of a fast linear feature detector to road extraction from remotely sensed imagery. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2011, 4, 626–631. [Google Scholar] [CrossRef]

- Ding, X.; Kang, W.; Cui, J.; Ao, L. Automatic extraction of road network from aerial images. In Proceedings of the International Symposium on Systems and Control in Aerospace and Astronautics (ISSCAA), Harbin, China, 19–21 January 2006.

- Udomhunsakul, S.; Kozaitis, S.P.; Sritheeravirojana, U. Semi-automatic road extraction from aerial images. Proc. SPIE 2004, 5239, 26–32. [Google Scholar]

- Amini, J.; Saradjian, M.; Blais, J.; Lucas, C.; Azizi, A. Automatic road-side extraction from large scale imagemaps. Int. J. Appl. Earth Obs. Geoinform. 2002, 4, 95–107. [Google Scholar] [CrossRef]

- Drăguţ, L.; Blaschke, T. Automated classification of landform elements using object-based image analysis. Geomorphology 2006, 81, 330–344. [Google Scholar] [CrossRef]

- Saeedi, P.; Zwick, H. Automatic building detection in aerial and satellite images. In Proceedings of the 10th International Conference on Control, Automation, Robotics and Vision, Hanoi, Vietnam, 17–20 December 2008.

- Song, Z.; Pan, C.; Yang, Q. A region-based approach to building detection in densely build-up high resolution satellite image. In Proceedings of the IEEE International Conference on Image Processing, Atlanta, GA, USA, 8–11 October 2006.

- Song, M.; Civco, D. Road extraction using SVM and image segmentation. Photogramm. Eng. Remote Sens. 2004, 70, 1365–1371. [Google Scholar] [CrossRef]

- Momm, H.; Gunter, B.; Easson, G. Improved feature extraction from high-resolution remotely sensed imagery using object geometry. Proc. SPIE 2010. [Google Scholar] [CrossRef]

- Chaudhuri, D.; Kushwaha, N.; Samal, A. Semi-automated road detection from high resolution satellite images by directional morphological enhancement and segmentation techniques. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2012, 5, 1538–1544. [Google Scholar] [CrossRef]

- Hofmann, P. Detecting buildings and roads from IKONOS data using additional elevation information. GeoBIT/GIS 2001, 6, 28–33. [Google Scholar]

- Hofmann, P. Detecting urban features from IKONOS data using an object-oriented approach. In Proceedings of the First Annual Conference of the Remote Sensing & Photogrammetry Society, Nottingham, UK, 12–14 September 2001.

- Yager, N.; Sowmya, A. Support vector machines for road extraction from remotely sensed images. In Computer Analysis of Images and Patterns; Springer: Berlin/Heidelberg, Germany, 2003; pp. 285–292. [Google Scholar]

- Wang, Y.; Tian, Y.; Tai, X.; Shu, L. Extraction of main urban roads from high resolution satellite images by machine learning. In Computer Vision-ACCV 2006; Springer: Berlin/Heidelberg, Germany, 2006; pp. 236–245. [Google Scholar]

- Zhao, B.; Zhong, Y.; Zhang, L. A spectral–structural bag-of-features scene classifier for very high spatial resolution remote sensing imagery. ISPRS J. Photogramm. Remote Sens. 2016, 116, 73–85. [Google Scholar] [CrossRef]

- Gerke, M.; Xiao, J. Fusion of airborne laserscanning point clouds and images for supervised and unsupervised scene classification. ISPRS J. Photogramm. Remote Sens. 2014, 87, 78–92. [Google Scholar] [CrossRef]

- Gerke, M.; Xiao, J. Supervised and unsupervised MRF based 3D scene classification in multiple view airborne oblique images. Proc. ISPRS 2013, 2, 25–30. [Google Scholar] [CrossRef]

- Guindon, B. Application of spatial reasoning methods to the extraction of roads from high resolution satellite imagery. In Proceedings of the IEEE International Geoscience and Remote Sensing Symposium (IGARSS), Seattle, WA, USA, 6–10 July 1998.

- Rydberg, A.; Borgefors, G. Integrated method for boundary delineation of agricultural fields in multispectral satellite images. IEEE Trans. Geosci. Remote Sens. 2001, 39, 2514–2520. [Google Scholar] [CrossRef]

- Mokhtarzade, M.; Ebadi, H.; Valadan Zoej, M. Optimization of road detection from high-resolution satellite images using texture parameters in neural network classifiers. Can. J. Remote Sens. 2007, 33, 481–491. [Google Scholar] [CrossRef]

- Mokhtarzade, M.; Zoej, M.V. Road detection from high-resolution satellite images using artificial neural networks. Int. J. Appl. Earth Obs. Geoinform. 2007, 9, 32–40. [Google Scholar] [CrossRef]

- Zheng, Y.-J. Feature extraction and image segmentation using self-organizing networks. Mach. Vis. Appl. 1995, 8, 262–274. [Google Scholar] [CrossRef]

- Canny, J. A computational approach to edge detection. IEEE Trans. Pattern Anal. Mach. Intell. 1986, 8, 679–698. [Google Scholar] [CrossRef] [PubMed]

- Juneja, M.; Sandhu, P.S. Performance evaluation of edge detection techniques for images in spatial domain. Int. J. Comput. Theory Eng. 2009, 1, 614. [Google Scholar] [CrossRef]

- Shrivakshan, G.T.; Chandrasekar, C. A comparison of various edge detection techniques used in image processing. Int. J. Comput. Sci. Issues 2012, 9, 269–276. [Google Scholar]

- Hough, P.V. Method and Means for Recognizing Complex Patterns. US Patent 3069654, 18 December 1962. [Google Scholar]

- Wu, J.; Jie, S.; Yao, W.; Stilla, U. Building boundary improvement for true orthophoto generation by fusing airborne LiDAR data. In Proceedings of the Joint Urban Remote Sensing Event (JURSE), Munich, Germany, 11–13 April 2011.

- Von Gioi, R.G.; Jakubowicz, J.; Morel, J.-M.; Randall, G. LSD: A line segment detector. Image Process. Line 2012, 2, 35–55. [Google Scholar] [CrossRef]

- Babawuro, U.; Beiji, Z. Satellite imagery quality evaluation using image quality metrics for quantitative cadastral analysis. Int. J. Comput. Appl. Eng. Sci. 2011, 1, 391–395. [Google Scholar]

- Turker, M.; Kok, E.H. Field-based sub-boundary extraction from remote sensing imagery using perceptual grouping. ISPRS J. Photogramm. Remote Sens. 2013, 79, 106–121. [Google Scholar] [CrossRef]

- Hu, J.; You, S.; Neumann, U. Integrating LiDAR, aerial image and ground images for complete urban building modeling. In Proceedings of the IEEE-International Symposium on 3D Data Processing, Visualization, and Transmission, Chapel Hill, NC, USA, 14–16 June 2006.

- Hu, J.; You, S.; Neumann, U.; Park, K.K. Building modeling from LiDAR and aerial imagery. In Proceedings of the ASPRS, Denver, CO, USA, 23–28 May 2004.

- Liu, Z.; Cui, S.; Yan, Q. Building extraction from high resolution satellite imagery based on multi-scale image segmentation and model matching. In Proceedings of the International Workshop on Earth Observation and Remote Sensing Applications, Beijing, China, 30 June–2 July 2008.

- Bartl, R.; Petrou, M.; Christmas, W.J.; Palmer, P. Automatic registration of cadastral maps and Landsat TM images. Proc. SPIE 1996. [Google Scholar] [CrossRef]

- Wang, Z.; Liu, W. Building extraction from high resolution imagery based on multi-scale object oriented classification and probabilistic Hough transform. In Proceedings of the International Geoscience and Remote Sensing Symposium (IGARSS), Seoul, Korea, 25–29 July 2005.

- Park, S.-R.; Kim, T. Semi-automatic road extraction algorithm from IKONOS images using template matching. In Proceedings of the Asian Conference on Remote Sensing, Singapore, 5–9 November 2001.

- Goshtasby, A.; Shyu, H.-L. Edge detection by curve fitting. Image Vis. Comput. 1995, 13, 169–177. [Google Scholar] [CrossRef]

- Venkateswar, V.; Chellappa, R. Extraction of straight lines in aerial images. IEEE Trans. Pattern Anal. Mach. Intell. 1992, 14, 1111–1114. [Google Scholar] [CrossRef]

- Lu, H.; Aggarwal, J.K. Applying perceptual organization to the detection of man-made objects in non-urban scenes. Pattern Recognit. 1992, 25, 835–853. [Google Scholar] [CrossRef]

- Torre, M.; Radeva, P. Agricultural field extraction from aerial images using a region competition algorithm. Proc. ISPRS 2000, 33, 889–896. [Google Scholar]

- Vosselman, G.; de Knecht, J. Road tracing by profile matching and Kalman filtering. In Automatic Extraction of Man-Made Objects from Aerial and Space Images; Gruen, A., Kuebler, O., Agouris, P., Eds.; Springer: Boston, MA, USA, 1995; pp. 265–274. [Google Scholar]

- Sarkar, S.; Boyer, K.L. Perceptual organization in computer vision: A review and a proposal for a classificatory structure. IEEE Trans. Syst. Man Cybern. 1993, 23, 382–399. [Google Scholar] [CrossRef]

- Kass, M.; Witkin, A.; Terzopoulos, D. Snakes: Active contour models. Int. J. Comput. Vis. 1988, 1, 321–331. [Google Scholar] [CrossRef]

- Hasegawa, H. A semi-automatic road extraction method for Alos satellite imagery. Proc. ISPRS 2004, 35, 303–306. [Google Scholar]

- Montesinos, P.; Alquier, L. Perceptual organization of thin networks with active contour functions applied to medical and aerial images. In Proceedings of the International Conference on Pattern Recognition, Vienna, Austria, 25–29 August 1996.

- Yang, J.; Wang, R. Classified road detection from satellite images based on perceptual organization. Int. J. Remote Sens. 2007, 28, 4653–4669. [Google Scholar] [CrossRef]

- Mohan, R.; Nevatia, R. Using perceptual organization to extract 3D structures. IEEE Trans. Pattern Anal. Mach. Intell. 1989, 11, 1121–1139. [Google Scholar] [CrossRef]

- Jaynes, C.O.; Stolle, F.; Collins, R.T. Task driven perceptual organization for extraction of rooftop polygons. In Proceedings of the Second IEEE Workshop on Applications of Computer Vision, Sarasota, FL, USA, 5–7 December 1994.

- Lin, C.; Huertas, A.; Nevatia, R. Detection of buildings using perceptual grouping and shadows. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 21–23 June 1994.

- Noronha, S.; Nevatia, R. Detection and description of buildings from multiple aerial images. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Fort Collins, CO, USA, 17–19 June 1997.

- Yang, M.Y.; Rosenhahn, B. Superpixel cut for figure-ground image segmentation. In Proceedings of the ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences, Prague, Czech Republic, 12–19 July 2016.

- Mayer, H.; Laptev, I.; Baumgartner, A. Multi-scale and snakes for automatic road extraction. In Computer Vision-ECCV 1998; Springer: Berlin/Heidelberg, Germany, 1998. [Google Scholar]

- Gruen, A.; Li, H. Semi-automatic linear feature extraction by dynamic programming and LSB-snakes. Photogramm. Eng. Remote Sens. 1997, 63, 985–994. [Google Scholar]

- Laptev, I.; Mayer, H.; Lindeberg, T.; Eckstein, W.; Steger, C.; Baumgartner, A. Automatic extraction of roads from aerial images based on scale space and snakes. Mach. Vis. Appl. 2000, 12, 23–31. [Google Scholar] [CrossRef]

- Agouris, P.; Gyftakis, S.; Stefanidis, A. Dynamic node distribution in adaptive snakes for road extraction. In Proceedings of the Vision Interface, Ottawa, ON, Canada, 7–9 June 2001.

- Saalfeld, A. Topologically consistent line simplification with the Douglas-Peucker algorithm. Cartogr. Geogr. Inf. Sci. 1999, 26, 7–18. [Google Scholar] [CrossRef]

- Dong, P. Implementation of mathematical morphological operations for spatial data processing. Comput. Geosci. 1997, 23, 103–107. [Google Scholar] [CrossRef]

- Guo, X.; Dean, D.; Denman, S.; Fookes, C.; Sridharan, S. Evaluating automatic road detection across a large aerial imagery collection. In Proceedings of the International Conference on Digital Image Computing Techniques and Applications, Noosa, Australia, 6–8 December 2011.

- Heipke, C.; Englisch, A.; Speer, T.; Stier, S.; Kutka, R. Semiautomatic extraction of roads from aerial images. Proc. SPIE 1994. [Google Scholar] [CrossRef]

- Amini, J.; Lucas, C.; Saradjian, M.; Azizi, A.; Sadeghian, S. Fuzzy logic system for road identification using IKONOS images. Photogramm. Rec. 2002, 17, 493–503. [Google Scholar] [CrossRef]

- Ziems, M.; Gerke, M.; Heipke, C. Automatic road extraction from remote sensing imagery incorporating prior information and colour segmentation. Proc. ISPRS 2007, 36, 141–147. [Google Scholar]

- Mohammadzadeh, A.; Tavakoli, A.; Zoej, M.V. Automatic linear feature extraction of Iranian roads from high resolution multi-spectral satellite imagery. Proc. ISPRS 2004, 20, 764–768. [Google Scholar]

- Corcoran, P.; Winstanley, A.; Mooney, P. Segmentation performance evaluation for object-based remotely sensed image analysis. Int. J. Remote Sens. 2010, 31, 617–645. [Google Scholar] [CrossRef]

- Baumgartner, A.; Steger, C.; Mayer, H.; Eckstein, W.; Ebner, H. Automatic road extraction based on multi-scale, grouping, and context. Photogramm. Eng. Remote Sens. 1999, 65, 777–786. [Google Scholar]

- Wiedemann, C. External evaluation of road networks. Proc. ISPRS 2003, 34, 93–98. [Google Scholar]

- Overby, J.; Bodum, L.; Kjems, E.; Iisoe, P. Automatic 3D building reconstruction from airborne laser scanning and cadastral data using Hough transform. ISPRS Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2004, 35, 296–301. [Google Scholar]

- Radoux, J.; Bogaert, P.; Fasbender, D.; Defourny, P. Thematic accuracy assessment of geographic object-based image classification. Int. J. Geogr. Inf. Sci. 2011, 25, 895–911. [Google Scholar] [CrossRef]

- Stehman, S.V. Selecting and interpreting measures of thematic classification accuracy. Remote Sens. Environ. 1997, 62, 77–89. [Google Scholar] [CrossRef]

- Zhan, Q.; Molenaar, M.; Tempfli, K.; Shi, W. Quality assessment for geo-spatial objects derived from remotely sensed data. Int. J. Remote Sens. 2005, 26, 2953–2974. [Google Scholar] [CrossRef]

- Foody, G.M. Status of land cover classification accuracy assessment. Remote Sens. Environ. 2002, 80, 185–201. [Google Scholar] [CrossRef]

- Congalton, R.G.; Green, K. Assessing the Accuracy of Remotely Sensed Data: Principles and Practices; CRC Press: Boca Raton, FL, USA, 2008. [Google Scholar]

- Lizarazo, I. Accuracy assessment of object-based image classification: Another STEP. Int. J. Remote Sens. 2014, 35, 6135–6156. [Google Scholar] [CrossRef]

- Rottensteiner, F.; Sohn, G.; Gerke, M.; Wegner, J.D.; Breitkopf, U.; Jung, J. Results of the ISPRS benchmark on urban object detection and 3D building reconstruction. ISPRS J. Photogramm. Remote Sens. 2014, 93, 256–271. [Google Scholar] [CrossRef]

- Winter, S. Uncertain topological relations between imprecise regions. Int. J. Geogr. Inf. Sci. 2000, 14, 411–430. [Google Scholar] [CrossRef]

- Clementini, E.; Di Felice, P. An algebraic model for spatial objects with indeterminate boundaries. In Geographic Objects with Indeterminate Boundaries; Burrough, P.A., Frank, A., Eds.; Taylor & Francis: London, UK, 1996; pp. 155–169. [Google Scholar]

- Worboys, M. Imprecision in finite resolution spatial data. GeoInformatica 1998, 2, 257–279. [Google Scholar] [CrossRef]

- Rutzinger, M.; Rottensteiner, F.; Pfeifer, N. A comparison of evaluation techniques for building extraction from airborne laser scanning. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2009, 2, 11–20. [Google Scholar] [CrossRef]

- Heipke, C.; Mayer, H.; Wiedemann, C.; Jamet, O. Evaluation of automatic road extraction. Proc. ISPRS 1997, 32, 151–160. [Google Scholar]

- Wiedemann, C.; Ebner, H. Automatic completion and evaluation of road networks. Proc. ISPRS 2000, 33, 979–986. [Google Scholar]

- Harvey, W.A. Performance evaluation for road extraction. Bull. Soc. Fr. Photogramm. Télédétec. 1999, 153, 79–87. [Google Scholar]

- Shi, W.; Cheung, C.K.; Zhu, C. Modelling error propagation in vector-based buffer analysis. Int. J. Geogr. Inf. Sci. 2003, 17, 251–271. [Google Scholar] [CrossRef]

- Suetens, P.; Fua, P.; Hanson, A.J. Computational strategies for object recognition. ACM Comput. Surv. (CSUR) 1992, 24, 5–62. [Google Scholar] [CrossRef]

- Mena, J.B. State of the art on automatic road extraction for GIS update: A novel classification. Pattern Recognit. Lett. 2003, 24, 3037–3058. [Google Scholar] [CrossRef]

- Tien, D.; Jia, W. Automatic road extraction from aerial images: A contemporary survey. In Proceedings of the International Conference in IT and Applications (ICITA), Harbin, China, 15–18 January 2007.

- Mayer, H.; Hinz, S.; Bacher, U.; Baltsavias, E. A test of automatic road extraction approaches. Proc. ISPRS 2006, 36, 209–214. [Google Scholar]

- Xie, S.; Tu, Z. Holistically-nested edge detection. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015.

- Arbelaez, P.; Maire, M.; Fowlkes, C.; Malik, J. Contour detection and hierarchical image segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2011, 33, 898–916. [Google Scholar] [CrossRef] [PubMed]

- Vetrivel, A.; Gerke, M.; Kerle, N.; Vosselman, G. Identification of structurally damaged areas in airborne oblique images using a visual-Bag-of-Words approach. Remote Sens. 2016. [Google Scholar] [CrossRef]

- Gülch, E.; Müller, H.; Hahn, M. Semi-automatic object extraction—Lessons learned. Proc. ISPRS 2004, 34, 488–493. [Google Scholar]

- Hecht, A.D.; Fiksel, J.; Fulton, S.C.; Yosie, T.F.; Hawkins, N.C.; Leuenberger, H.; Golden, J.S.; Lovejoy, T.E. Creating the future we want. Sustain. Sci. Pract. Policy 2012, 8, 62–75. [Google Scholar]

- Baltsavias, E.P. Object extraction and revision by image analysis using existing geodata and knowledge: Current status and steps towards operational systems. ISPRS J. Photogramm. Remote Sens. 2004, 58, 129–151. [Google Scholar] [CrossRef]

- Schwering, A.; Wang, J.; Chipofya, M.; Jan, S.; Li, R.; Broelemann, K. SketchMapia: Qualitative representations for the alignment of sketch and metric maps. Spat. Cognit. Comput. 2014, 14, 220–254. [Google Scholar] [CrossRef]

| Image Segmentation Method | Resolution < 5 m | Resolution > 5 m | Unknown Resolution |
|---|---|---|---|
| Unsupervised | [79,80,112,113,114,115,116,117,118,119,120,121,122,123,124,125,126,127,128] | [107,129,130,131,132,133] | [125,134,135,136,137,138,139,140,141,142,143,144,145] |
| Supervised | [72,75,108,146,147,148,149,150,151,152,153,154,155] | [156,157] | [86,137,138,158,159,160] |

| Line Extraction Method | Resolution < 5 m | Resolution > 5 m | Unknown Resolution |
|---|---|---|---|
| Canny edge detection | [75,121,151,167] | [129,168] | [138,144,169,170] |
| Hough transform | [73,120,126,171] | [172] | [140,169,173] |
| Line segment detector | [128,165,171] | | [144,145,174,175,176,177] |

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).


Crommelinck, S.; Bennett, R.; Gerke, M.; Nex, F.; Yang, M.Y.; Vosselman, G. Review of Automatic Feature Extraction from High-Resolution Optical Sensor Data for UAV-Based Cadastral Mapping. Remote Sens. 2016, 8, 689. https://doi.org/10.3390/rs8080689
