1. Introduction

Operational global agricultural monitoring products will play an important role in addressing some of the challenges that the world will face in the coming years. The growing importance of food security increases the relevance of mapping the world's agricultural landscapes [1]. At the global scale, monitoring food supply and demand is important for providing early warning of potential price spikes or food shortages. Accordingly, several international efforts aim to improve the timely mapping of the crops grown and the monitoring of crop growth.

Remote sensing satellite imaging has contributed significantly to the monitoring of agricultural areas [2]. Optical satellite images are a valuable resource for gathering information on crops over large areas with a high revisit frequency. However, mapping crop areas through the analysis of multi-temporal satellite images is not straightforward. A reliable global crop monitoring system needs satellite data acquired on a regular basis with broad coverage. Unfortunately, obtaining a series of cloud-free images at high spatial resolution over an extended period of time is challenging [3]. Snow can also hamper Earth surface observations for long periods.

Mapping crops across the great diversity of the world's landscapes is complex. Cropland areas can be composed of different crop types and field sizes [4,5]. Moreover, the spectral response of cropland areas varies significantly with the crop phenological stage and with the cropping calendars applied around the world [6]. Individual crop types show variability in their spectral response because of climate and soil conditions, and differences in agricultural practices from country to country, such as fertilizer use, are yet another contributing factor. In addition, cereals can easily be confused with natural grasslands at some stages of the growing cycle.

Satellite-based monitoring of agriculture started in the early 1970s, as described by Becker-Reshef et al. [7]. In most local to regional scale mapping efforts, cropland has not been mapped as a single land cover class but has been included within mosaic classes. This is typical of global land cover products such as GLC2000 [8], GlobCover 2005/2009 [9,10], GLCShare [11] and MODIS Land Cover [12], which do not specifically target the agricultural component of the landscape. Even the most recent and most precise ESA Climate Change Initiative (CCI) Land Cover products [13], obtained from a multi-year, multi-sensor approach, still treat croplands as a land cover class. However, cropland is a land use category rather than a type of land cover. Accordingly, a land cover typology is not well suited to the detection of cropland areas.

Furthermore, croplands are often included in mosaic or mixed classes, in which crops are grouped with natural vegetation such as grassland and shrubland. Therefore, a cropland mask derived from such a mixed land cover class is of limited use in agricultural applications. Nevertheless, a few global crop maps have been produced recently [4,14], with an emphasis on water management: the global map of rainfed cropland areas (GMRCA) [15] and the global irrigated area map (GIAM) [16]. However, their coarse spatial resolution (10 km) does not meet the needs of operational applications, and they suffer from large uncertainties [17], especially in complex farming systems in Africa. The notable limitations of cropland maps in Africa have given rise to several studies and products, such as the GEOLAND-2 SATCHMO product [18], which uses 10 × 10 km Landsat images. The goal of this product is to construct cropland maps at 30 m spatial resolution over many countries in order to deliver statistics on cropped areas at the national scale.

Many programs have been set up by agricultural agencies to provide regular local and global agricultural monitoring [19]. One of the leading sources of global information on food production and food security is the Global Information and Early Warning System (GIEWS) established by the FAO [20]. In the same context, one of the oldest and longest-running global crop monitoring activities is carried out by the USDA Foreign Agricultural Service (FAS) [21]. The Monitoring Agricultural ResourceS (MARS) [22] and Global Monitoring of Food Security (GMFS) [23] programs are operated by the European Commission, while the Institute of Remote Sensing and Digital Earth (RADI) of the Chinese Academy of Sciences is responsible for the Chinese Crop Watch Program [24].

One of the most important efforts in the last decade has been the Global Agricultural Monitoring (GLAM) Project [7], developed by NASA, UMD (University of Maryland) and USDA. Another important initiative has been the GEO Global Agricultural Monitoring initiative (GEOGLAM) [25], which aims to strengthen the international community's capacity to produce and disseminate relevant, timely and accurate agricultural information.

A global map of cropland extent at 250 m spatial resolution has been obtained from multi-year MODIS (MODerate Resolution Imaging Spectroradiometer) time series and thermal data [14]. Since crop monitoring needs a high revisit frequency, NASA's MODIS sensor has been widely used for this purpose [12,26,27,28]. This coarse-resolution sensor has proved suitable for identifying major crops over large areas of low diversity. However, its coarse resolution cannot provide crop-specific information at an adequate spatial resolution for small, heterogeneous croplands [29,30,31]. This drawback comes from subpixel heterogeneity, which is more pronounced where individual fields are smaller than individual pixels [29]. Considering these limitations, new efforts are in progress to develop a Global Cropland Area Database at 30 m resolution (GCAD30) through Landsat and MODIS data fusion for the years 2010 and 1990 [32].

The upcoming Sentinel-2 mission offers new opportunities for agricultural monitoring at regional to global scales. New possibilities for crop monitoring are offered by its 10-20 m spatial resolution, its 5-day revisit frequency, its global coverage and its compatibility with the Landsat missions. In this context, ESA has recently launched the Sentinel-2 for Agriculture (Sen2-Agri) project with the aim of providing validated algorithms to derive Earth observation products relevant to crop monitoring for the agricultural user community at national and international levels. The development and benchmarking of algorithms will lead to a range of products dedicated to agricultural monitoring [33]. Selected algorithms will be implemented in an open-source processing system, which will be validated and demonstrated in collaboration with national and international users.

The dynamic binary cropland mask (i.e., crop/non-crop) introduced in this article is one of the products being developed within the Sen2-Agri project for operational use. It distinguishes annual cropland from other land cover classes. From the first dates of the season onward, the goal of this product is to predict the end-of-season cropland mask. Accordingly, the binary cropland mask is updated every time a new image becomes available during the agricultural season. At the end of the season, the cropland mask will contain the regions where at least one crop was planted during the season (excluding grasslands, perennial crops and woody vegetation). Although the methodology may be applied anywhere in the world, it requires local in-situ data, so producing it at the country scale, let alone at the global scale, requires a large amount of work.

In the framework of the Sen2-Agri project, this work explores different processing chains in order to construct the dynamic cropland mask presented here. These systems rely on the two classic pattern recognition techniques of feature extraction and supervised classification.

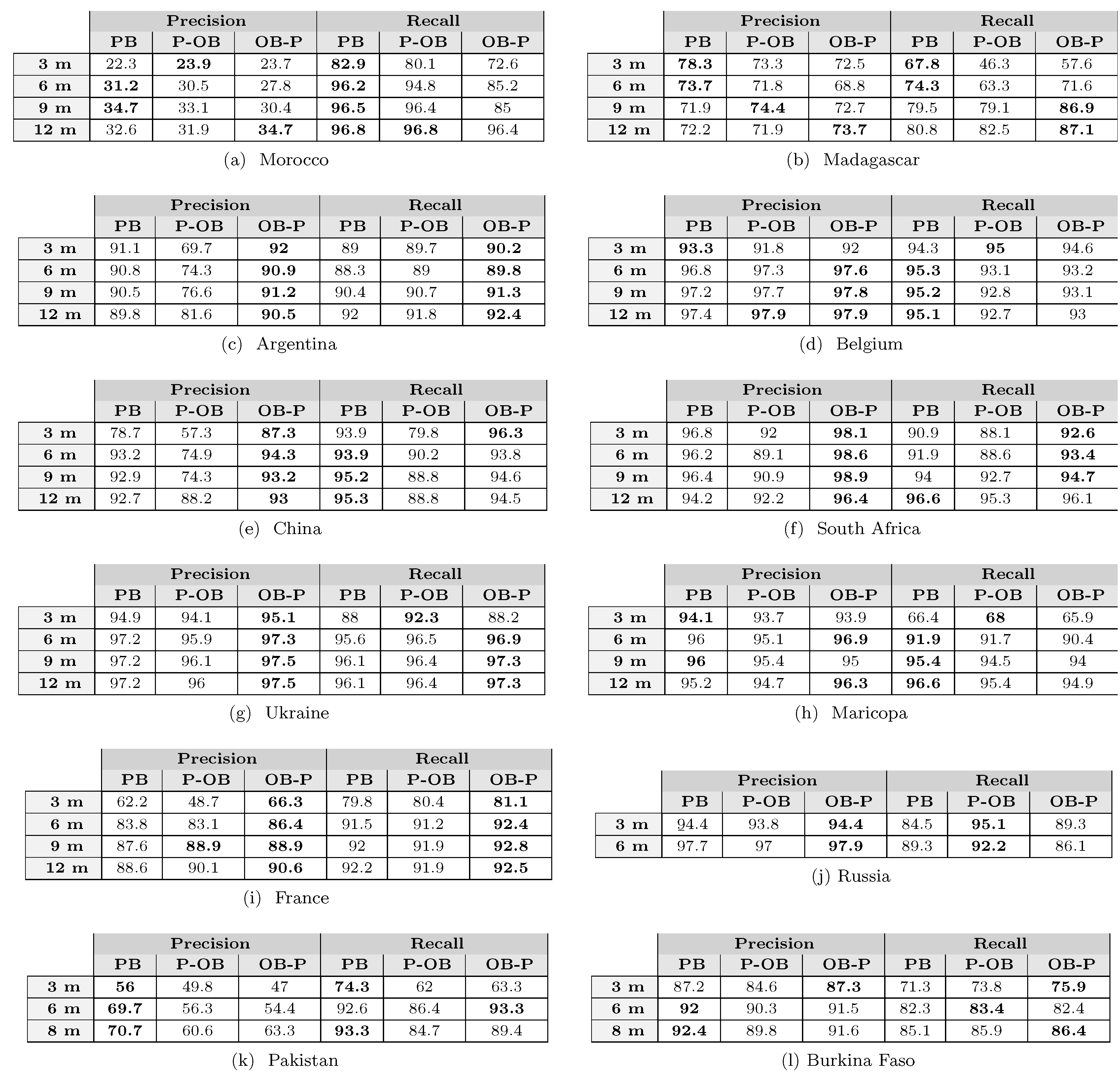

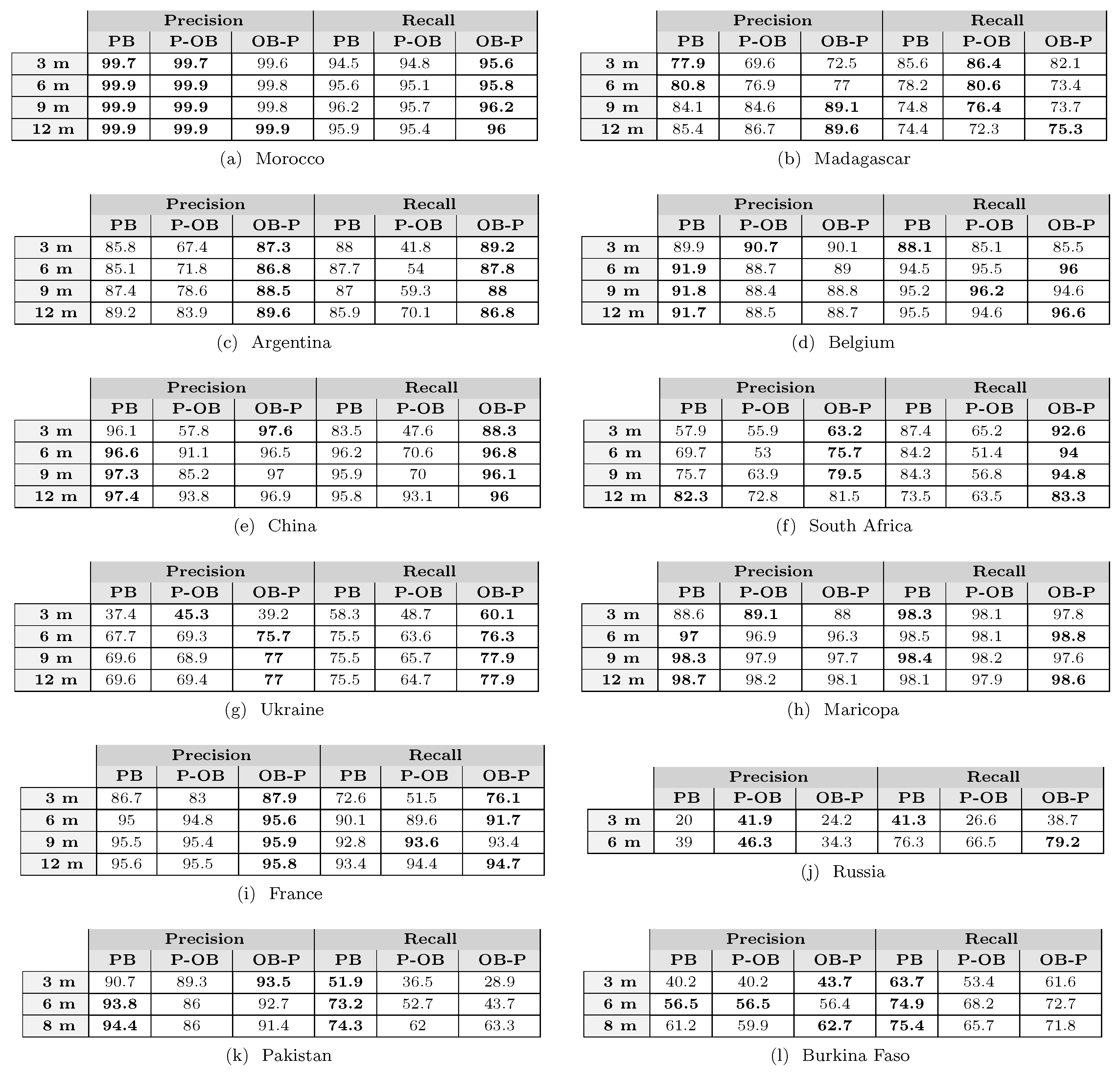

Three different classification strategies are studied and proposed here in order to assess the differences between pixel- and object-based methodologies: Pixel-Based (PB), Object-Based with a Pre-filtering task (P-OB) and Object-Based with a Post-filtering task (OB-P). The first strategy is a pixel-wise classification system that does not consider the spatial structures of the image: the classification map is constructed from the temporal properties of individual pixels. In contrast, the two object-based classification methodologies take the spatial relationships among pixels into account. In both of them, the spatial information is included in the classification system through a segmentation of the original image. In the first case (P-OB), the segmentation result is used to filter the image in a pre-processing step. In the second (OB-P), the segmentation result is used in a post-processing step, which applies a majority voting decision algorithm.

To evaluate the three classification strategies, a test phase to develop, tune and validate the generation of cropland mask products was carried out at 12 sites around the world, in preparation for operational expansion to the global level once Sentinel-2 data are systematically acquired. These experiments rely on SPOT4-Take5 data (https://spot-take5.org), complemented by LANDSAT 8, as a proxy for Sentinel-2 [34]. For test sites not covered by SPOT4-Take5, RapidEye images (http://www.satimagingcorp.com/satellite-sensors/other-satellite-sensors/rapideye/) were used. The test sites are described in detail in [33] and cover a range of agricultural systems intended to be representative of the global diversity of agricultural landscapes and satellite observation conditions. The sites are part of the Joint Experiment of Crop Assessment and Monitoring (JECAM) (http://www.jecam.org) and are distributed across the globe. The Sentinel-2 proxy data are presented in Section 2 and the three proposed classification systems are described in Section 3. The validation approach is given in Section 4, experimental results are reported in Section 5 and conclusions are drawn in Section 6.

2. Sentinel-2 Proxy Data

Sentinel-2 time series were simulated [34] using SPOT4-Take5 images (or RapidEye images in the absence of SPOT4-Take5 data) complemented by LANDSAT-8 data. Gaps were filled with a weighted linear interpolation technique, and all the data were resampled to a spatial resolution of 20 m.

The distribution of the 12 selected test sites all around the world ensured a coverage of a diversity of landscape patterns, different agricultural practices and several satellite observation conditions as the presence of clouds. The list of sites covered Europe (France, Belgium, Ukraine, Russia), Africa (Morocco, Madagascar, Burkina Faso, South Africa), Asia (China, Pakistan), North America (USA) and South America (Argentina). A detailed description of the test sites, demonstrating how they represent the diversity of cropping conditions, can be found in [

33]. The cropland mask construction was evaluated at different time intervals (3 months, 6 months, 9 months and 12 months) during the season. For each test site, the interval of time was computed from the first available image. Consequently, as

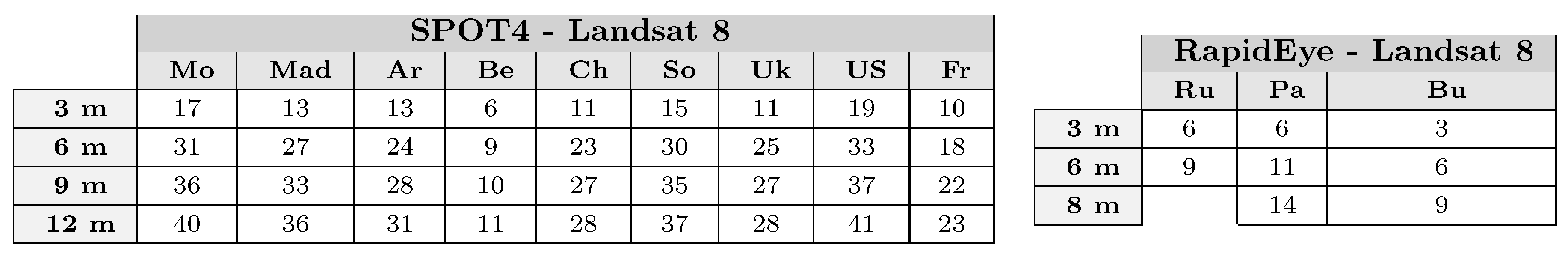

Figure 1 shows, the number of available images not fully cloudy (90% of clouds) for each interval of time was different.

Figure 1.

Number of images with less than 90% cloud cover for the different Test Sites. Mo: Morocco, Mad: Madagascar, Ar: Argentina, Be: Belgium, Ch: China, So: South Africa, Uk: Ukraine, US: United States (Maricopa), Fr: France, Ru: Russia, Pa: Pakistan, Bu: Burkina Faso.
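The per-interval image counts summarized in Figure 1 can be illustrated with a minimal sketch. It assumes acquisition dates expressed in days and one cloud fraction per image. The function name, the 30.44-day average month and the example values are hypothetical and are not taken from the study.

```python
import numpy as np

DAYS_PER_MONTH = 30.44  # assumption: a month is approximated by its mean length in days


def usable_images_per_interval(acq_days, cloud_fraction, months=(3, 6, 9, 12), max_cloud=0.9):
    """Count images with less than `max_cloud` cloud cover acquired within each
    interval, where intervals are measured from the first available image."""
    acq_days = np.asarray(acq_days, dtype=float)
    cloud_fraction = np.asarray(cloud_fraction, dtype=float)
    elapsed = acq_days - acq_days.min()      # days since the first available image
    usable = cloud_fraction < max_cloud      # discard (almost) fully cloudy images
    return {m: int(np.sum(usable & (elapsed < m * DAYS_PER_MONTH))) for m in months}


# Hypothetical acquisition days and cloud fractions for one site
counts = usable_images_per_interval([0, 30, 100, 200, 300], [0.1, 0.95, 0.2, 0.5, 0.3])
print(counts)  # → {3: 1, 6: 2, 9: 3, 12: 4}
```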


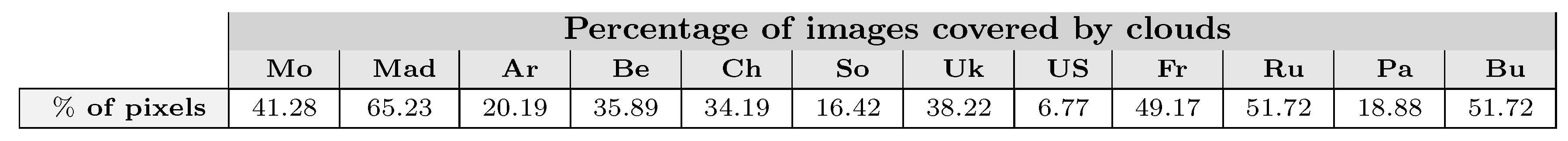

The cloud coverage of the complete image time series differed greatly between test sites, as shown in Figure 2. Therefore, the number of images and the quality of the image time series were not homogeneous across the test sites. For instance, a large number of images was available for the Argentina and Maricopa (USA) sites, whereas far fewer were available for the Pakistan and Russia sites. The number of images for Burkina Faso was also small because no images were available during the rainy season.

Figure 2.

Percentage of images covered by clouds. Mo: Morocco, Mad: Madagascar, Ar: Argentina, Be: Belgium, Ch: China, So: South Africa, Uk: Ukraine, US: United States (Maricopa), Fr: France, Ru: Russia, Pa: Pakistan, Bu: Burkina Faso.


Besides the satellite imagery, in-situ data provided by the site managers were used. All the test sites belonged to the JECAM network except for the US and Pakistan data sets. JECAM is an initiative that aims to reach a convergence of approaches and to develop monitoring and reporting protocols and best practices for a variety of global agricultural systems. Field data were collected following the standardized JECAM field data protocol. The in-situ data consisted of crop pixels (wheat, sunflower, maize, etc.) and no-crop pixels (urban areas, water surfaces, grassland, etc.). A single label (crop or no-crop) described the pixel class for the complete image time series (even if the crop appeared only in the last months).

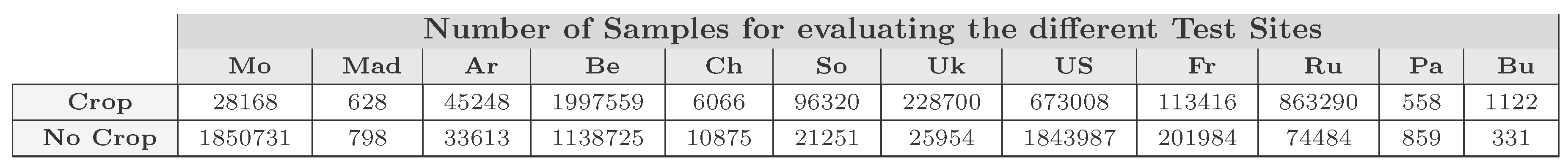

Figure 3 shows the number of samples used in the benchmarking evaluation for all the test sites studied.

Figure 3.

Number of pixels from Sentinel proxy data, having a spatial resolution of 20 m, used in the validation of cropland masks. Mo: Morocco, Mad: Madagascar, Ar: Argentina, Be: Belgium, Ch: China, So: South Africa, Uk: Ukraine, US: United States (Maricopa), Fr: France, Ru: Russia, Pa: Pakistan, Bu: Burkina Faso.


Depending on the site, the number of samples composing the in-situ data varied widely. In particular, the number of samples in Madagascar, Pakistan and Burkina Faso was quite small.

3. Dynamic Global Cropland Mask Processing Chain

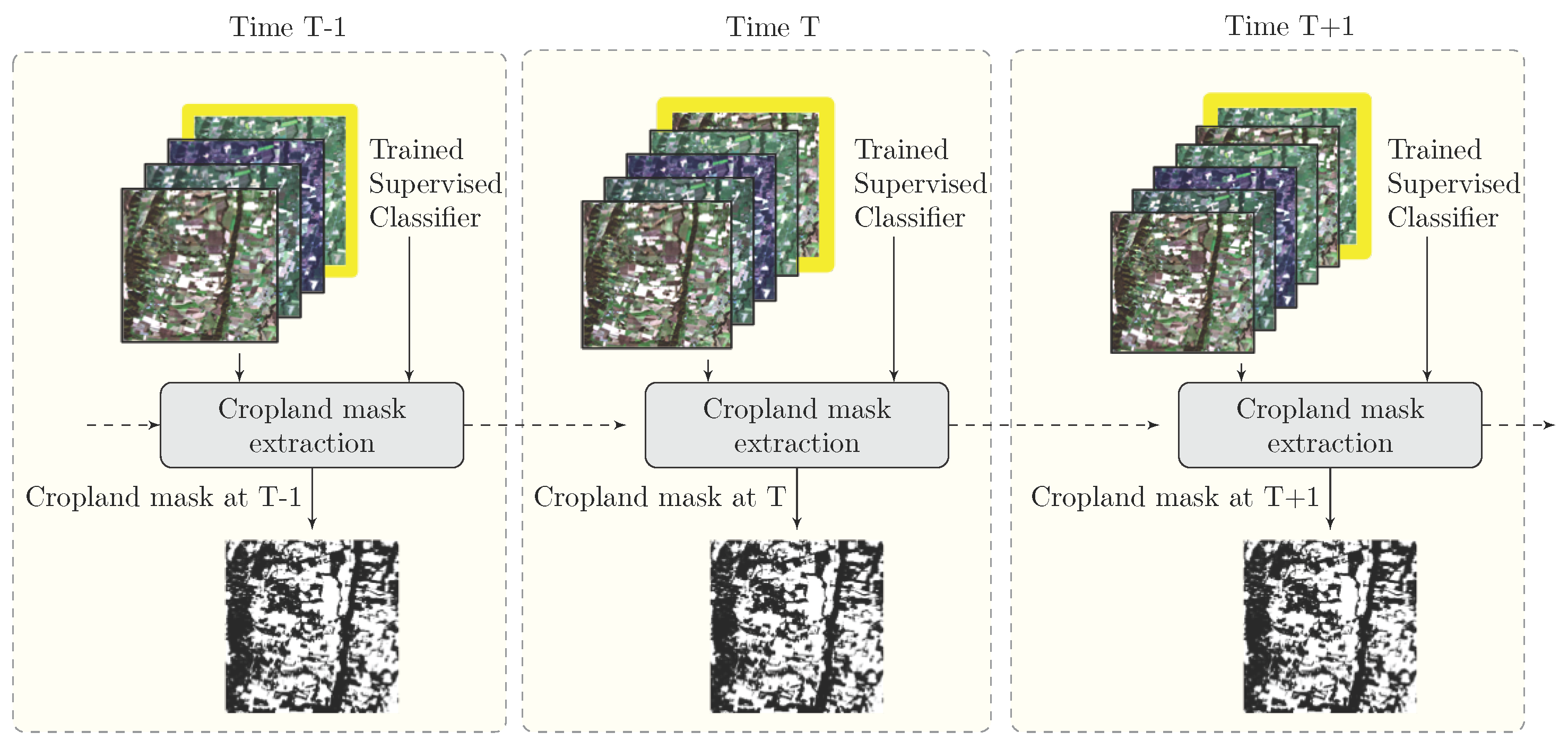

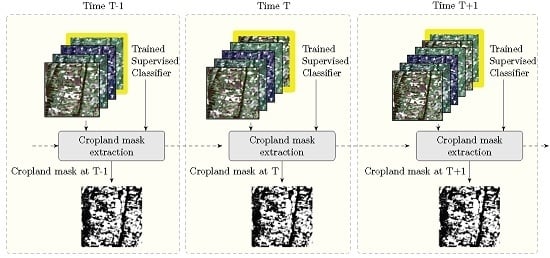

The crop mask consisted of a binary map separating annual cropland areas from other areas. Annual cropland was defined as a piece of land with a minimum area of 0.25 ha that is actually sown/planted and harvestable at least once within the year following the sowing date. This binary map was produced throughout the agricultural season to serve as a mask for monitoring crop growing conditions. Its accuracy was expected to increase as additional images were integrated into the classification process. To this end, the dynamic classification system shown in Figure 4 was proposed, in which the cropland area was extracted progressively after each real-time data acquisition at time T. At the end of the season, the cropland mask contained the regions where at least one crop was planted during the year.
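At the 20 m resolution of the Sentinel-2 proxy data, the 0.25 ha minimum area of the cropland definition corresponds to only a few pixels:

$$0.25\ \mathrm{ha} = 2500\ \mathrm{m^2}, \qquad \frac{2500\ \mathrm{m^2}}{(20\ \mathrm{m})^2} = \frac{2500}{400} = 6.25\ \text{pixels}.$$

A field of the minimum size therefore covers roughly six 20 m pixels.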

Figure 4 shows that two types of input data are required by the classification system at each time T. The first input is a supervised classification model, trained on an image time series acquired over the complete year preceding the season. This model is needed to update the cropland mask progressively as each new acquisition arrives. The second input is the image time series acquired from the beginning of the season up to time T.

Figure 4.

The proposed dynamic classification system.
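The loop in Figure 4 combines a model trained on the previous year with the current-season series up to time T. It can be sketched as follows. This is a minimal illustration, not the Sen2-Agri implementation. The function name, the `predict` interface and the toy threshold model are hypothetical. The feature extractor is assumed to produce date-independent features, so that a model trained on a full year can be applied to a partial season.

```python
import numpy as np


def dynamic_cropland_masks(season_stack, model, extract_features):
    """Yield (t, mask) after each new acquisition t = 1..T.

    season_stack     : (T, H, W) gap-filled series of the current season
    model            : pre-trained classifier with predict(X) -> 0/1 labels,
                       assumed trained on the complete previous year
    extract_features : callable mapping a (t, N) pixel series to an (N, F) matrix
    """
    n_dates, h, w = season_stack.shape
    for t in range(1, n_dates + 1):
        series_so_far = season_stack[:t].reshape(t, h * w)  # season start up to time t
        X = extract_features(series_so_far)
        yield t, np.asarray(model.predict(X)).reshape(h, w).astype(bool)


# Toy usage: a hypothetical threshold "model" on the maximum value seen so far
class MaxNdviThreshold:
    def predict(self, X):
        return (X[:, 0] > 0.5).astype(int)


stack = np.zeros((3, 2, 2))
stack[2, 0, 0] = 0.8  # one pixel greens up at the third acquisition
masks = [m for _, m in dynamic_cropland_masks(stack, MaxNdviThreshold(),
                                              lambda s: s.max(axis=0)[:, None])]
```

In this toy example, the mask stays empty for the first two dates and flags the single pixel once its green-up is observed.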


The satellite data were gap-filled image time series without missing data. The values of the missing pixels were estimated by weighted linear interpolation. No-data values were identified from the available missing value masks, which flag pixels affected by clouds, cloud shadows or saturation effects. The interpolation weights were computed from the temporal distance between the date being interpolated and the valid observations used for the interpolation.
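The following is a minimal sketch of the per-pixel gap filling, using `numpy.interp`. For a missing date, `numpy.interp` takes the nearest valid observations before and after it and weights each one by the temporal distance to the other, which is one way to realize the weighted linear interpolation described above. The function names are hypothetical. Holding the nearest valid value constant before the first and after the last valid date is an assumption; the text does not specify how the ends of the series are handled.

```python
import numpy as np


def gap_fill_pixel(dates, values, valid):
    """Fill masked observations of one pixel by linear interpolation in time.

    dates  : increasing acquisition dates (e.g. day of year)
    values : reflectance or index values (masked entries may hold NaN)
    valid  : False where the mask flags cloud, shadow or saturation
    """
    dates = np.asarray(dates, dtype=float)
    values = np.asarray(values, dtype=float)
    valid = np.asarray(valid, dtype=bool)
    if not valid.any():
        return np.full_like(values, np.nan)  # nothing to interpolate from
    # Between two valid dates d0 < d < d1 this returns
    # v0 * (d1 - d) / (d1 - d0) + v1 * (d - d0) / (d1 - d0);
    # outside the valid range the nearest valid value is repeated (assumption).
    return np.interp(dates, dates[valid], values[valid])


def gap_fill_stack(dates, stack, valid):
    """Apply gap_fill_pixel to every pixel of a (T, H, W) stack."""
    filled = np.empty(stack.shape, dtype=float)
    _, rows, cols = stack.shape
    for i in range(rows):
        for j in range(cols):
            filled[:, i, j] = gap_fill_pixel(dates, stack[:, i, j], valid[:, i, j])
    return filled
```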

Using the flowchart described in Figure 4, three different strategies were proposed to perform the cropland mask extraction step: Pixel-Based (PB), Object-Based with a Pre-filtering task (P-OB) and Object-Based with a Post-filtering task (OB-P).

3.1. Pixel-Based Supervised Classification (PB)

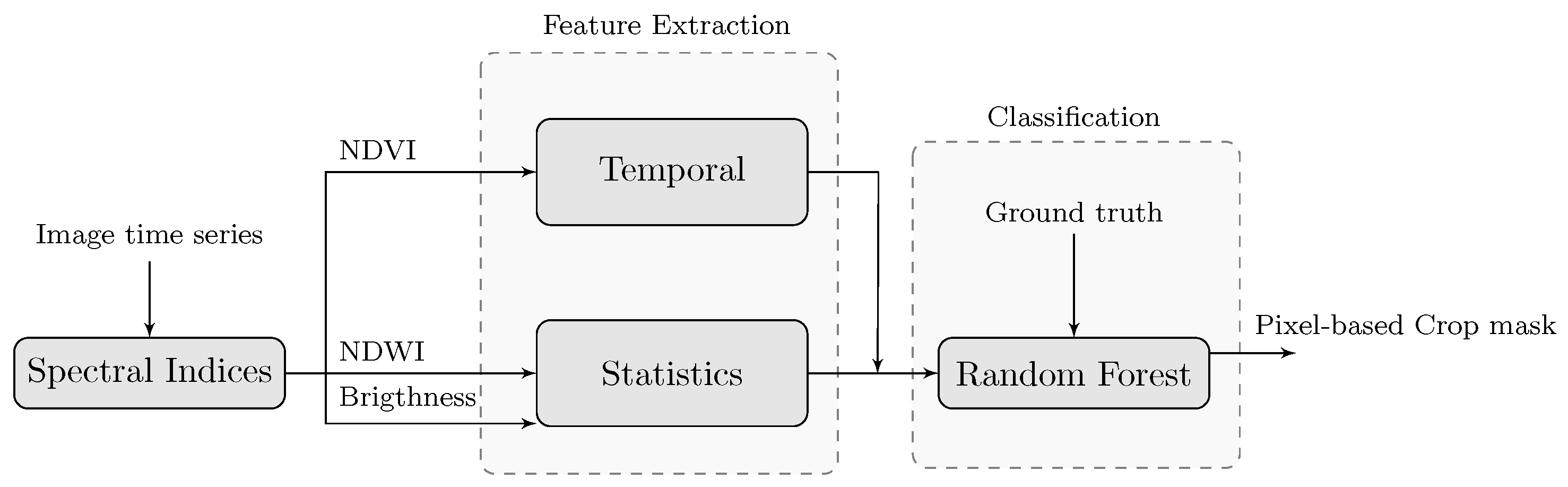

For this first approach, the processing chain is illustrated in Figure 5, which should be read from left to right. The flowchart is composed of three steps, detailed in the following sections: the computation of several spectral indices, a feature extraction step and the classification step. The classification was performed by the Random Forest classifier [35], whose input consisted of all the features computed in the feature extraction step. Training the classifier requires ground-truth data containing crop and no-crop samples. In the framework of the Sen2-Agri project [33], alternative unsupervised approaches that do not rely on in-situ training data have also been studied [36].

Figure 5.

Supervised classification strategy for pixel-based approach.


3.1.1. Computation of the Spectral Indices

Three well-known spectral indices were used: the Normalized Difference Vegetation Index (NDVI) [37], the Normalized Difference Water Index (NDWI) [38] and the Brightness index, which is the modulus of the spectral vector, computed as

$$\mathrm{Brightness} = \sqrt{G^2 + R^2 + NIR^2 + SWIR^2}$$

where G, R, NIR and SWIR are the green, red, near-infrared and shortwave infrared radiance values. The value of multi-temporal NDVI phenological profiles for crop identification has been extensively confirmed [14,28]. NDWI and Brightness provided complementary information that improved the discrimination between crop and no-crop areas.
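The three indices can be computed per pixel and per date as in the sketch below. The Brightness formula follows the definition above, and NDVI uses its standard (NIR − R)/(NIR + R) form. The NDWI formulation is an assumption: the sketch uses the NIR/SWIR form, and the bands should be swapped if reference [38] defines NDWI from the green and NIR bands. The small `eps` term is added only to avoid division by zero.

```python
import numpy as np


def spectral_indices(green, red, nir, swir, eps=1e-10):
    """Return NDVI, NDWI and Brightness for arrays of band values."""
    green, red, nir, swir = (np.asarray(b, dtype=float) for b in (green, red, nir, swir))
    ndvi = (nir - red) / (nir + red + eps)
    # Assumption: NIR/SWIR formulation of NDWI; check against reference [38]
    ndwi = (nir - swir) / (nir + swir + eps)
    # Brightness: Euclidean norm (modulus) of the four-band spectral vector
    brightness = np.sqrt(green ** 2 + red ** 2 + nir ** 2 + swir ** 2)
    return ndvi, ndwi, brightness
```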

3.1.2. Feature Extraction

An input feature set for the Random Forest classifier was extracted from the image time series; these metrics serve as the independent variables of the cropland mapping analysis. Metrics characterizing crops over a complete year, such as image time series reflectances or NDVI [26,28,39], are traditionally used for crop monitoring. Specifically aimed at detecting crops at the global scale, a set of 39 multi-year MODIS metrics incorporating four MODIS land bands, NDVI and thermal data was introduced by Pittman et al. [14]. These metrics are computed on NDVI profiles calculated for specific crop classes over a complete year, and they consist of the mean values of some reflectance bands or the mean NDVI value. The main problem with these features is that they do not take into account the specific shape of NDVI profiles, which results from the crop's phenological cycle. This shape can be modeled by a mathematical function such as a double logistic function [40]. According to this crop model, crop NDVI profiles show strong transitions due to green-up and the onset of senescence, which are not usually found in other natural vegetation areas.
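As an illustration of such a crop model, the sketch below implements one common parameterization of the double logistic function: a rising sigmoid for green-up and a falling sigmoid for senescence, scaled between a baseline and a peak NDVI. Whether reference [40] uses exactly this form is an assumption, and the parameter names are hypothetical.

```python
import numpy as np


def double_logistic(t, ndvi_min, ndvi_max, t_green, rate_green, t_senes, rate_senes):
    """Double logistic NDVI profile (one common parameterization).

    t_green, t_senes       : inflection dates of green-up and senescence
    rate_green, rate_senes : steepness of the two transitions
    """
    t = np.asarray(t, dtype=float)
    rise = 1.0 / (1.0 + np.exp(-rate_green * (t - t_green)))  # 0 -> 1 around t_green
    fall = 1.0 / (1.0 + np.exp(rate_senes * (t - t_senes)))   # 1 -> 0 around t_senes
    return ndvi_min + (ndvi_max - ndvi_min) * (rise + fall - 1.0)
```

The steep rise around `t_green` and the steep fall around `t_senes` are the strong transitions referred to above. A model of this kind needs a complete season to be fitted, which is one reason why the features proposed below avoid it.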

Considering the above drawbacks, our goal was to define features that could be computed dynamically as the satellite acquisitions became available. For instance, constructing a global cropland mask implies using different satellite footprints acquired at different (but close) dates. Also, studying a large area implies that some crop characteristics, e.g., the peak of greenness, occur at different times. Accordingly, it was considered that the proposed crop features should not depend directly on the acquisition dates. Therefore, features such as the NDVI value of a pixel at a specific date were not considered relevant. Likewise, the date of the maximum NDVI value was not considered a discriminative feature, since it can vary strongly within a given satellite footprint. Finally, to meet the real-time constraint, the proposed features had to be computable iteratively throughout the year, without waiting for the end of the crop season.

Bearing this in mind, two categories of features were computed from the spectral indices (as shown in Figure 5). For NDVI, 17 temporal features characterizing the evolution of a crop profile were proposed, and five statistical features were computed for each of the other two spectral indices, giving 27 features in total.

Table 1.

NDVI Time Features proposed as an input feature set for Random Forest classifier.


| No. | Description |
|---|---|
| 1 | Maximum value |
| 2 | Mean value |
| 3 | Standard deviation value |
| 4 | Maximum difference in a sliding temporal neighborhood having a size w (default value w=2) |
| 5 | Minimum difference in a sliding temporal neighborhood having a size w (default value w=2) |
| 6 | Difference between and value estimating the transition jump |
| 7 | Maximum mean value in a sliding temporal neighborhood having a size w (default value w=2) |
| 8 | Length of the flat interval containing the peak area associated to value |
| 9 | Surface of the flat interval area containing the peak value associated to |
| 10 | Maximum surface of the first positive derivative interval |
| 11 | Length of the first positive derivative interval associated to value |
| 12 | Rate of the first positive derivative interval associated to |
| 13 | Maximum surface of the first negative derivative interval |
| 14 | Length of the first negative derivative interval associated to value |
| 15 | Rate of senescence of the first negative derivative interval associated to |
| 16 | Flag detecting if there is a bare soil transition before the positive derivative |
| 17 | Flag detecting if there is a bare soil transition after the negative derivative |

NDVI Time Features: Common patterns are often observed in crop NDVI signatures, describing the three most important crop stages: the onset of greenness, a maturity period and the onset of senescence. To exploit this information, phenological features extracted from NDVI time series have been proposed in the literature. One popular tool for extracting phenological features is the TIMESAT software [41]. Unfortunately, these features cannot be used in our classification system, for two main reasons. First, TIMESAT computes features from time series with regular temporal sampling (the same time interval between consecutive image acquisitions). Second, these features are computed on time series covering at least one complete year. This requirement is mandatory because the features are computed after fitting the data with a double logistic function describing a regular crop model. This was not possible in our case, since our goal was to extract crop features that could be computed dynamically from the first image acquisitions. For this reason, the temporal features related to the phenological cycle of the crops presented in Table 1 were used instead.
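A subset of the Table 1 features (1 to 5 and 7) can be computed from the NDVI series available so far, as in the sketch below. Because none of these features refers to a specific acquisition date, they follow the constraints stated above. The interpretation of the "sliding temporal neighborhood of size w" is an assumption: the window is taken to cover w consecutive samples, and the "difference" is the last value minus the first. The derivative-interval and flag features are not reproduced here, and the function name is hypothetical.

```python
import numpy as np


def ndvi_time_features(ndvi, w=2):
    """Features 1-5 and 7 of Table 1 for one pixel's (gap-filled) NDVI series."""
    ndvi = np.asarray(ndvi, dtype=float)
    if len(ndvi) < w:
        raise ValueError("at least w acquisitions are needed")
    # Assumed reading: change between the first and last sample of each window
    diffs = ndvi[w - 1:] - ndvi[: len(ndvi) - w + 1]
    window_means = np.convolve(ndvi, np.ones(w) / w, mode="valid")
    return {
        "max": ndvi.max(),
        "mean": ndvi.mean(),
        "std": ndvi.std(),                  # population standard deviation (assumption)
        "max_diff": diffs.max(),            # strongest green-up jump
        "min_diff": diffs.min(),            # strongest senescence drop
        "max_window_mean": window_means.max(),
    }
```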

Brightness and NDWI statistical features: These features were incorporated to better discriminate the land cover classes not included in cropland areas. They are based on global statistics of the spectral index profiles and are presented in Table 2 and Table 3.

Table 2.

Brightness Statistical Features.


| No. | Description |
|---|---|
| 1 | Maximum value |
| 2 | Minimum value |
| 3 | Mean value |
| 4 | Standard deviation value |
| 5 | Median value |

Table 3.

NDWI Statistical Features.


| No. | Description |
|---|---|
| 1 | Maximum value |
| 2 | Minimum value |
| 3 | Mean value |
| 4 | Standard deviation value |
| 5 | Median value |
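The five statistics of Tables 2 and 3 are simple to compute. The sketch below also shows how they could be concatenated with the 17 NDVI time features into the 27-element per-pixel input vector. The function names, the feature order and the use of the population standard deviation are assumptions.

```python
import numpy as np


def statistical_features(profile):
    """Max, min, mean, standard deviation and median of one index profile."""
    p = np.asarray(profile, dtype=float)
    return np.array([p.max(), p.min(), p.mean(), p.std(), np.median(p)])


def pixel_feature_vector(ndvi_features, brightness, ndwi):
    """Concatenate NDVI time features with Brightness and NDWI statistics."""
    return np.concatenate([np.asarray(ndvi_features, dtype=float),
                           statistical_features(brightness),
                           statistical_features(ndwi)])
```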

3.1.3. Supervised Classification: Random Forest

In the context of crop mapping, the supervised decision tree classifiers used by Wardlow et al. [28] and Johnson et al. [42] have shown that MODIS NDVI features can yield good accuracy levels. A very similar strategy is presented by Pittman et al. [14], who apply a set of global classification tree models using a bagging (Bootstrap AGGregatING) methodology [43]. Following the same philosophy, Random Forests (RF) were proposed by Breiman [35] to improve on bagging: in addition to training each tree on a bootstrap sample, RF selects a random subset of features at each split, which decorrelates the trees. The effectiveness of the RF classifier for producing land cover maps has been demonstrated by various studies [44,45,46,47,48].
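The two ingredients that distinguish RF from a single tree can be illustrated with a deliberately simplified sketch: each tree is trained on a bootstrap sample (bagging) and sees a random subset of the features. The final label is a majority vote over the trees. To stay short, each "tree" here is a single-split decision stump, and √d features are tried per tree (a common default, assumed here). This is not Breiman's full RF, which grows deep trees and draws a new feature subset at every node, and it is not the implementation used in this work. All function names are hypothetical.

```python
import numpy as np


def fit_stump(X, y, features):
    """Best single split (weighted Gini impurity) among the candidate features; y is 0/1."""
    majority = int(y.mean() >= 0.5)
    best = (np.inf, features[0], np.inf, majority, majority)  # fallback: constant prediction
    for f in features:
        for thr in np.unique(X[:, f])[:-1]:                   # keep both sides non-empty
            left, right = y[X[:, f] <= thr], y[X[:, f] > thr]
            gini = sum(2 * len(s) * s.mean() * (1 - s.mean()) for s in (left, right))
            if gini < best[0]:
                best = (gini, f, thr, int(left.mean() >= 0.5), int(right.mean() >= 0.5))
    return best[1:]  # (feature, threshold, label if <= threshold, label otherwise)


def fit_forest(X, y, n_trees=25, seed=0):
    rng = np.random.default_rng(seed)
    n, d = X.shape
    k = max(1, int(np.sqrt(d)))                                # features tried per split
    forest = []
    for _ in range(n_trees):
        rows = rng.integers(0, n, size=n)                      # bootstrap sample (bagging)
        feats = rng.choice(d, size=k, replace=False)           # random feature subset
        forest.append(fit_stump(X[rows], y[rows], feats))
    return forest


def predict_forest(forest, X):
    votes = [np.where(X[:, f] <= thr, left, right) for f, thr, left, right in forest]
    return (np.mean(votes, axis=0) >= 0.5).astype(int)        # majority vote of the trees
```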

3.2. Object-Based Supervised Classification with a Pre-Filtering Task (P-OB)

Pixel-based procedures analyze the information provided by each individual pixel without taking into account the spatial or contextual information related to the pixel of interest. Without neighborhood information, these classification techniques tend to produce noisy, salt-and-pepper results containing many misclassified pixels. To overcome the limitations of such local decisions, object-based image analysis techniques have been proposed [49,50,51].

Object-based image analysis works at the object level instead of the pixel level. Objects are image regions composed of groups of pixels that are similar to one another according to a given property measure. Taking the local properties of neighboring pixels into account explains the significant improvement these methods can bring. In the general object-based procedure, the image is first segmented into homogeneous regions according to a predefined criterion. Then, each object is assigned to a class based on features and criteria set by the user.

Various studies have compared pixel- and object-based image analysis for classifying remote sensing images (e.g., Gao et al. [52] or Cleve et al. [53]). An important consideration when evaluating the two approaches is the image spatial resolution, which refers to the size of the smallest feature that can be detected. For coarse spatial resolution images, object-based image analysis shows no great advantage over the pixel-based approach, because objects such as crop fields are then not made up of several pixels. In general, object-based approaches are particularly suitable for high resolution images, where they lead to more robust and less noisy results.

Most crop mapping exercises have used low spatial resolution imagery, which is not well suited to object-based classification approaches. However, some interesting object-based works have been proposed for cropland mapping, e.g., by Lobo et al. [49,54,55].

In the object-based classification method presented here, our first goal was to detect the agricultural field boundaries that define the spatial relationships between image pixels. To do so, a segmentation of the entire image was computed. Image segmentation is acknowledged to be a difficult task that depends on the final application. Here, the work focused on obtaining regions representing homogeneous agricultural areas with the same temporal features.

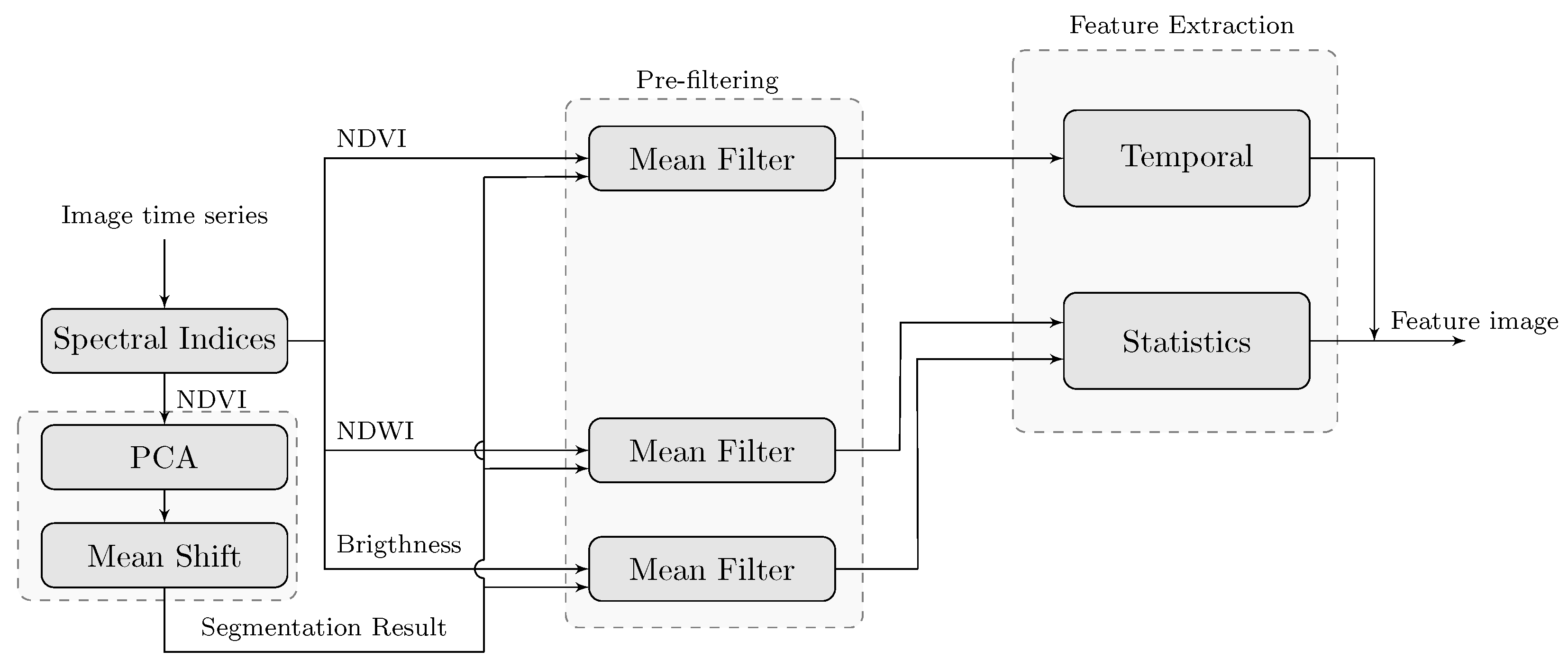

The resulting homogeneous regions were then used to reduce the intensity variation between each pixel and its surrounding neighborhood. This was carried out by a pre-processing mean filtering task. Finally, the features obtained by applying the feature extraction task to the filtered image were used as input to the RF classifier. These steps are shown in the flowchart of Figure 6, which can be divided into three steps: the segmentation of the original image, the pre-processing filtering and the feature extraction.

Figure 6.

The proposed object-based classification system with a pre-filtering task.


The pre-processing filtering was done by replacing each pixel value with the mean value of its neighbors (i.e., the pixels of its segmentation region), including itself, as shown in Figure 6. The segmentation was obtained by combining two classical techniques: Principal Component Analysis (PCA) [56] and the Mean-Shift segmentation algorithm [57]. The input data for these methods was the NDVI image time series, chosen because of our crop monitoring application. The purpose was to group pixels representing agricultural areas with similar temporal NDVI profiles, as is typically the case for pixels belonging to the same crop field. In a similar context, the value of NDVI profiles for partitioning the image into coherent groups has already been shown [58,59].
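The pre-filtering step can be sketched as a region-based mean filter: every pixel is replaced, band by band (or date by date), by the mean over its segment. The sketch assumes that segment IDs are non-negative integers and that the neighborhood of a pixel is its whole segment. The function name is hypothetical.

```python
import numpy as np


def region_mean_filter(stack, segments):
    """Replace each pixel by the mean value of its segment, for every band/date.

    stack    : (B, H, W) array, e.g. the gap-filled image time series
    segments : (H, W) non-negative integer segment IDs
    """
    n_bands, h, w = stack.shape
    seg = segments.ravel()
    counts = np.bincount(seg)                      # number of pixels per segment
    out = np.empty((n_bands, h, w), dtype=float)
    for k in range(n_bands):
        sums = np.bincount(seg, weights=stack[k].ravel(), minlength=counts.size)
        out[k] = (sums / np.maximum(counts, 1))[seg].reshape(h, w)
    return out
```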

Image Segmentation

Principal Component Analysis [56] is a multivariate statistical technique that can be used for data compression or time series analysis. Eastman et al. (1993) [60] showed that principal components could satisfactorily extract information on temporal vegetation changes. In our case, PCA was computed in order to characterize important NDVI transitions in crop profiles.
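The PCA step treats each pixel's NDVI time series as one observation, with one variable per date, and projects it onto the leading principal axes. A minimal SVD-based sketch is given below. The function name is hypothetical. The default of six components follows the Figure 7 caption, and centering each date is the usual PCA convention, assumed here.

```python
import numpy as np


def ndvi_pca(ndvi_stack, n_components=6):
    """Project each pixel's NDVI series onto its first principal components.

    ndvi_stack : (T, H, W) gap-filled NDVI series.
    Returns (k, H, W) component images and the variance of each component.
    """
    t, h, w = ndvi_stack.shape
    X = ndvi_stack.reshape(t, h * w).T.astype(float)   # one row per pixel, one column per date
    X = X - X.mean(axis=0)                             # centre each date
    _, s, vt = np.linalg.svd(X, full_matrices=False)   # rows of vt are the principal axes
    k = min(n_components, vt.shape[0])
    scores = X @ vt[:k].T                              # component scores of every pixel
    return scores.T.reshape(k, h, w), s[:k] ** 2 / (h * w - 1)
```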

The Mean Shift (MS) segmentation algorithm [57] is a nonparametric clustering technique that has been used in some classification works, such as [61]. In this work, the MS segmentation algorithm was applied in the PCA space obtained by applying the PCA transformation to the NDVI time series.

The algorithm requires three parameters: the spatial bandwidth, the spectral (or range) bandwidth, and the minimum number of pixels in a region (regions containing fewer pixels than this minimum are eliminated and merged into a neighboring region).
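To show how the three parameters act, here is a toy mean-shift segmentation for very small images. Each pixel's mode is shifted to the mean of the pixels that lie within both the spatial bandwidth `h_s` and the range bandwidth `h_r` of it (flat product kernel). After convergence, 4-connected pixels with close range modes are grouped, and regions smaller than `min_size` are merged into a neighbor. The parameter names are ours. The flat kernels, the `h_r / 2` grouping threshold and the "merge into any adjacent region" rule are simplifications, so this is neither the exact procedure of [57] nor the implementation used in this work. Memory grows as O(N²) in the number of pixels.

```python
import numpy as np


def mean_shift_segment(features, h_s, h_r, min_size, max_iter=30):
    """Toy mean-shift segmentation of a small (bands, H, W) image, e.g. PCA components."""
    nb, h, w = features.shape
    rr, cc = np.mgrid[0:h, 0:w]
    xy = np.stack([rr.ravel(), cc.ravel()], axis=1).astype(float)  # pixel positions
    f = features.reshape(nb, -1).T.astype(float)                    # pixel feature vectors
    m_xy, m_f = xy.copy(), f.copy()                                 # modes start at the pixels
    for _ in range(max_iter):
        spatial = ((m_xy[:, None] - xy[None]) ** 2).sum(-1) <= h_s ** 2
        in_range = ((m_f[:, None] - f[None]) ** 2).sum(-1) <= h_r ** 2
        near = (spatial & in_range).astype(float)                   # flat product kernel
        n = near.sum(axis=1, keepdims=True)
        new_xy = np.where(n > 0, near @ xy / np.maximum(n, 1), m_xy)  # mean-shift step
        new_f = np.where(n > 0, near @ f / np.maximum(n, 1), m_f)
        converged = np.allclose(new_xy, m_xy) and np.allclose(new_f, m_f)
        m_xy, m_f = new_xy, new_f
        if converged:
            break
    # Group 4-connected pixels whose range modes are closer than h_r / 2 (union-find)
    parent = np.arange(h * w)

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    idx = np.arange(h * w).reshape(h, w)
    pairs = (list(zip(idx[:, :-1].ravel(), idx[:, 1:].ravel()))
             + list(zip(idx[:-1].ravel(), idx[1:].ravel())))
    for i, j in pairs:
        if np.sum((m_f[i] - m_f[j]) ** 2) < (h_r / 2) ** 2:
            parent[find(i)] = find(j)
    labels = np.array([find(i) for i in range(h * w)])
    # Merge regions smaller than min_size into an adjacent region
    merged = True
    while merged:
        merged = False
        sizes = np.bincount(labels, minlength=h * w)
        for i, j in pairs:
            a, c = labels[i], labels[j]
            if a != c and min(sizes[a], sizes[c]) < min_size:
                small, big = (a, c) if sizes[a] <= sizes[c] else (c, a)
                labels[labels == small] = big
                merged = True
                break
    return np.unique(labels, return_inverse=True)[1].reshape(h, w)
```

For example, on a one-band image whose left and right halves have clearly different values, the sketch returns two segments that follow the boundary between the halves.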

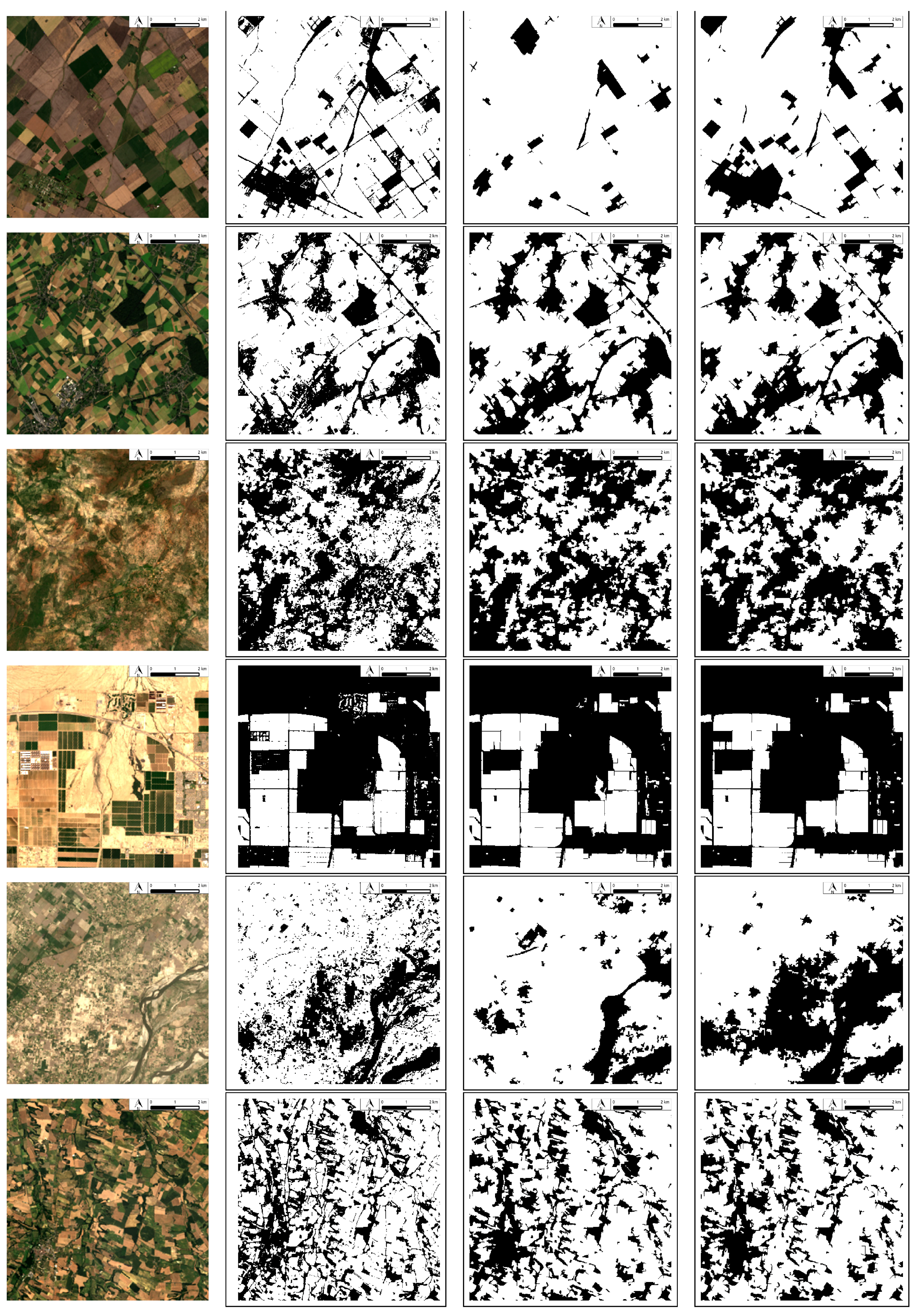

Figure 7.

MS segmentation results obtained for different agricultural landscapes. The MS segmentation algorithm was applied to the first six principal components for all the sites, with the same set of MS parameters for the four examples. From left to right, the first row contains the Argentina, France, Pakistan and Maricopa (US) true-color RGB composites; the second row contains the corresponding MS segmentation results.


Figure 7 shows four MS segmentation results obtained for different agricultural landscapes. In the case of the Argentina test site (first column), the cropland area is composed of large rectangular crop fields. In contrast, the agricultural landscape of France, shown in the second column, is composed of small crop fields. The third column shows the Pakistan test site, where the landscape is composed of heterogeneous and textured areas. The last column shows Maricopa (US), which is composed of large, isolated crop areas. The results show that most of the crop fields were correctly detected as individual objects of the image.

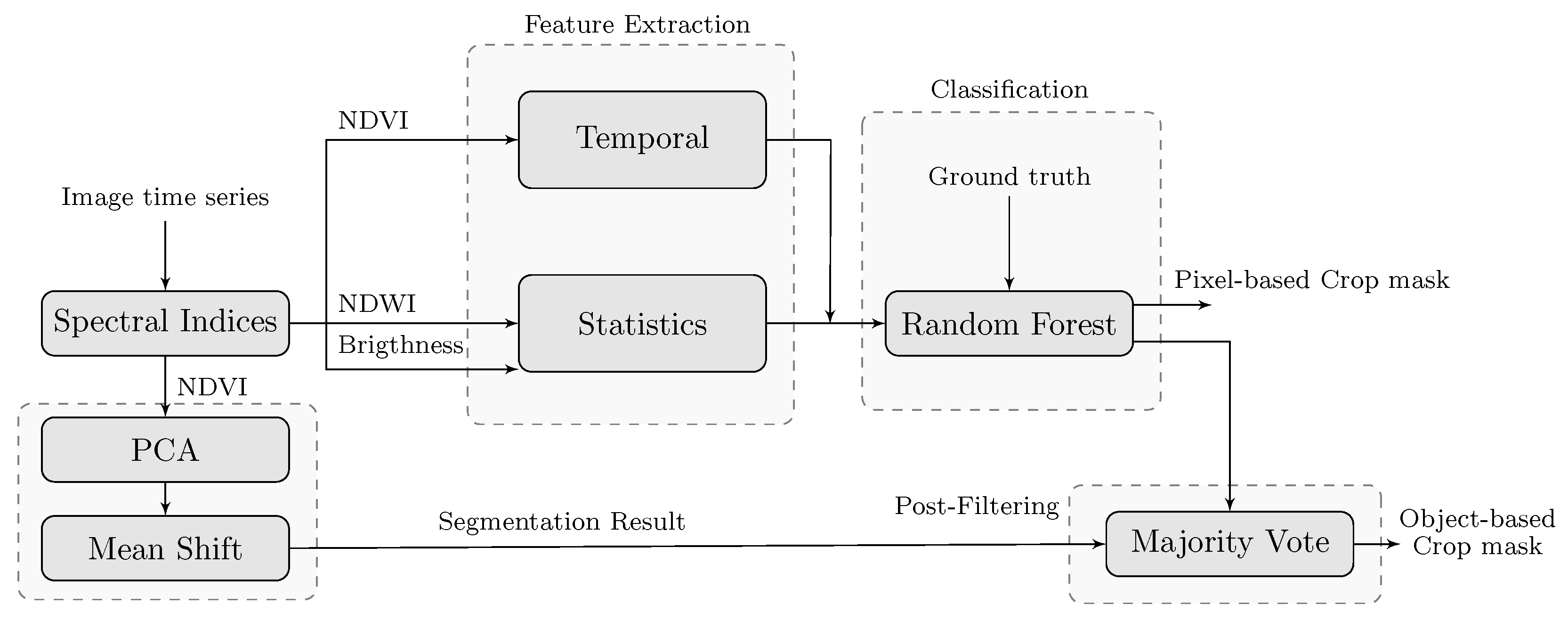

3.3. Object-Based Supervised Classification with Post-Filtering Task (OB-P)

A different object-based approach was proposed here to reduce the pixel-wise classification noise known as salt-and-pepper. The main difference from the previous approach was that the spatial information was introduced as a post-filtering task, as shown in Figure 8. The goal was to reduce the noise of the classification map obtained in Section 3.1 by applying a majority vote regularization. Compared to the pixel-wise classification system, two additional steps were included: first, the computation of a segmentation of the original image, following the strategy presented in Section 3.2, and, second, a post-filtering task consisting of majority vote filtering.

Figure 8.

The proposed object-based classification system with a post-processing task.

The majority voting proposed here performed a spatial regularization of the pixel-wise classification produced by the RF classifier. The regularization aimed to improve the cropland mask by making crop areas more homogeneous. The regions defined by the segmentation result were used to delimit the neighborhoods to which the majority vote decision was applied: within each region, every pixel voted for its predicted class label, and the most frequent label was then assigned to all pixels of the region.
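The per-region majority vote can be sketched in a few lines of NumPy. This is a minimal illustration, assuming integer class labels (e.g. 0 = no-crop, 1 = crop) and an integer segment-id image of the same shape; the function name `majority_vote` is hypothetical, and the tie-breaking rule (smallest label wins) is our choice, since the text does not specify one.

```python
import numpy as np


def majority_vote(class_map: np.ndarray, segments: np.ndarray) -> np.ndarray:
    """Assign to every pixel the most frequent class label of its segment.

    class_map: (H, W) non-negative integer labels from the pixel-wise classifier.
    segments: (H, W) integer region ids from the segmentation.
    """
    seg_ids, seg_idx = np.unique(segments.ravel(), return_inverse=True)
    labels = class_map.ravel().astype(np.intp)
    n_classes = int(labels.max()) + 1
    # votes[s, k] = number of pixels of segment s predicted as class k
    votes = np.zeros((len(seg_ids), n_classes), dtype=np.int64)
    np.add.at(votes, (seg_idx, labels), 1)
    # argmax breaks ties in favour of the smallest class label
    winner = votes.argmax(axis=1)
    return winner[seg_idx].reshape(class_map.shape)
```

Isolated misclassified pixels inside a field are overwritten by the dominant label of that field, which is exactly the salt-and-pepper reduction this approach targets.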

4. Validation Approach

The dynamic cropland masks were computed on all test sites with each of the three proposed supervised classification systems. To do this, the reference data were split into two disjoint subsets: a training set and a validation set. To limit the correlation between training and validation data as far as possible, samples belonging to the same polygon were always placed in the same subset. The first subset contained one third of the polygons and was used to train the RF classifier. Although the classification is binary, this subset included all the land cover classes present (maize, grassland, water, etc.). The second subset, containing the remaining in-situ data, was used to validate the algorithms.
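A polygon-level split of this kind can be sketched as follows, assuming each in-situ sample carries the id of the polygon it was drawn from. The function name `split_by_polygon` is hypothetical, and this plain random draw does not by itself guarantee that every land cover class appears in the training subset; that would require an additional stratification step not shown here.

```python
import numpy as np


def split_by_polygon(polygon_ids, train_fraction=1 / 3, seed=None):
    """Randomly split samples so that all samples of a polygon share one subset.

    polygon_ids: 1-D array giving, for each in-situ sample, its polygon id.
    Returns boolean masks (train, validation) over the samples.
    """
    rng = np.random.default_rng(seed)
    polygons = np.unique(polygon_ids)
    n_train = int(round(train_fraction * len(polygons)))
    # Draw whole polygons, never individual samples, for the training subset.
    train_polygons = rng.choice(polygons, size=n_train, replace=False)
    train = np.isin(polygon_ids, train_polygons)
    return train, ~train
```

Splitting at the polygon level prevents neighbouring, highly similar pixels of one field from appearing in both subsets, which would otherwise inflate the validation scores.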

To reduce the dependence of the results on a particular split of the in-situ data, 10 random splits were made for each site, and the mean over the 10 experiments was compared across the three classification strategies. In each experiment, the supervised classifier was trained on the polygons not used for validation; from this data set, 1000 crop and 1000 no-crop samples were selected to train the RF classifier. For Madagascar, Burkina Faso and Pakistan, fewer training samples were used owing to the limited number of in-situ samples: 314, 165 and 279 samples per class, respectively. For the object-based approaches, the MS segmentation algorithm was applied to the first six principal components for all sites, using the same set of MS parameters.
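The sampling and averaging protocol can be sketched as below. This is a hedged illustration: the function names `balanced_sample` and `mean_over_splits` are hypothetical, and capping every class at the size of the smallest class is our reading of the per-class counts reported for Madagascar, Burkina Faso and Pakistan, not a documented rule.

```python
import numpy as np


def balanced_sample(labels, cap=1000, rng=None):
    """Return indices of an equal number of samples per class (at most `cap`).

    If some class has fewer than `cap` samples, every class is reduced to that
    smaller size (assumption) so that the training set stays balanced.
    """
    labels = np.asarray(labels)
    rng = np.random.default_rng(rng)
    classes = np.unique(labels)
    n = min(cap, min(int(np.sum(labels == k)) for k in classes))
    picked = [rng.choice(np.flatnonzero(labels == k), size=n, replace=False)
              for k in classes]
    return np.concatenate(picked)


def mean_over_splits(run_experiment, n_splits=10):
    """Average a scalar score over n_splits runs; run_experiment(seed) is a
    hypothetical callback performing one split, training and validation."""
    return float(np.mean([run_experiment(seed) for seed in range(n_splits)]))
```

Passing the split index as the random seed keeps the 10 experiments reproducible while still giving each one a different training/validation partition.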

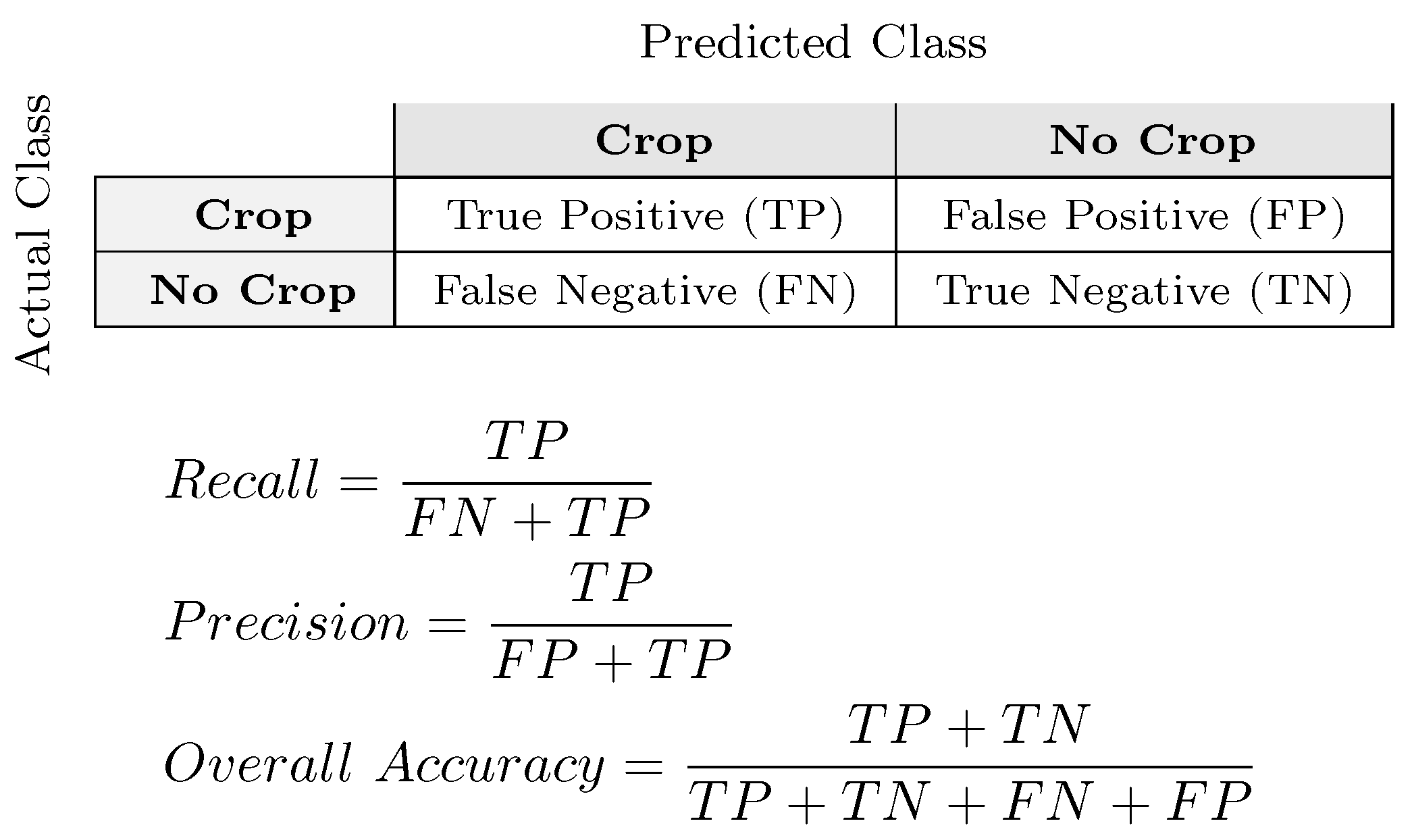

Several classical measures were used to compare the binary cropland masks obtained at the different time intervals. The first quality measures used to evaluate crop and no-crop areas were Precision and Recall. Precision measures the ability of the classifier not to label as positive a sample that is negative, whereas Recall measures its ability to find all the positive samples. In addition to these two ratios, the Overall Accuracy (OA) [62] was computed to evaluate globally the fraction of crop and no-crop samples correctly classified.
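For reference, the standard definitions of these measures can be written with the usual notation for a 2x2 confusion matrix (TP, FP, FN and TN), here taking crop as the positive class; for the no-crop class, the roles of positive and negative are swapped. This notation is assumed, as the original equations appear only in Figure 9.

```latex
\mathrm{Precision} = \frac{TP}{TP + FP}, \qquad
\mathrm{Recall} = \frac{TP}{TP + FN}, \qquad
\mathrm{OA} = \frac{TP + TN}{TP + TN + FP + FN}
```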

Figure 9 shows how these measures were computed.

Figure 9.

On the left, the 2x2 confusion matrix showing the numbers of correct and incorrect predictions made by the classifier. On the right, the equations of the classical quality measures used in this study.

Finally, the Kappa coefficient [63] was also computed to evaluate globally the detection of crop and no-crop areas. This measure expresses the agreement observed beyond chance as a fraction of the disagreement expected by chance.
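All four measures can be computed from the binary confusion matrix as sketched below, using the standard Cohen's kappa formula, which divides the agreement beyond chance (observed agreement minus chance agreement) by the disagreement expected by chance (one minus chance agreement). The function name `binary_scores` and the label encoding (crop = 1, no-crop = 0) are assumptions for illustration; both classes are assumed to occur in the reference and in the predictions, otherwise some ratios are undefined.

```python
import numpy as np


def binary_scores(y_true, y_pred):
    """Precision and recall per class, overall accuracy and Cohen's kappa.

    y_true, y_pred: sequences of 0 (no-crop) / 1 (crop) labels.
    """
    y_true = np.asarray(y_true, dtype=bool)
    y_pred = np.asarray(y_pred, dtype=bool)
    tp = int(np.sum(y_true & y_pred))
    tn = int(np.sum(~y_true & ~y_pred))
    fp = int(np.sum(~y_true & y_pred))
    fn = int(np.sum(y_true & ~y_pred))
    n = tp + tn + fp + fn
    oa = (tp + tn) / n  # observed agreement p_o
    # Agreement expected by chance, from the row and column marginals
    p_e = ((tp + fp) * (tp + fn) + (tn + fn) * (tn + fp)) / n**2
    kappa = (oa - p_e) / (1 - p_e)
    return {
        "precision_crop": tp / (tp + fp),
        "recall_crop": tp / (tp + fn),
        "precision_nocrop": tn / (tn + fn),
        "recall_nocrop": tn / (tn + fp),
        "oa": oa,
        "kappa": kappa,
    }
```

For example, with 3 TP, 5 TN, 1 FP and 1 FN, OA is 0.8 while kappa is 7/12 (about 0.58), because part of the observed agreement would be expected by chance alone.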

6. Conclusions

In the framework of the Sen2-Agri project, this study aimed to develop a dynamic classification system that could be applied globally wherever enough in-situ data are available. The goal was to use Sentinel-2 proxy data to build a method for producing accurate cropland extent maps in different countries, ready to be applied once Sentinel-2 data become operationally available. The cropland masks contain plots of land with a minimum area of 0.25 ha that are actually sown/planted and harvestable at least once within the year following the sowing date (excluding grasslands and woody vegetation). Starting from the first dates of the season, the goal was to forecast the end-of-season cropland mask.

Three different classification strategies were proposed and compared on 12 test sites spread across the globe. The agricultural landscapes studied differed in cloud coverage, snow presence and availability of in-situ data.

The three classification approaches shared the same feature extraction and supervised classification steps, but differed in how spatial information was incorporated into the classification decision. In the feature extraction step, temporal vegetation and spectral indices were used to derive crop features.

The resulting set of crop features, computed dynamically during the agricultural season, was proposed to characterize crop profiles and proved effective for crop classification. The supervised Random Forest classifier also performed well. Spatial information was incorporated into two of the three proposed classification systems by means of a segmentation result, and the same segmentation methodology for grouping similar cropland areas was applied to all the sites studied.

The results clearly showed that the accuracy of the cropland extent increased as more images became available during the agricultural season. The real-time classification results were already very promising in the middle of the season, and accuracy kept improving until the end of the season. As expected, the results confirmed that both the number and the quality of images are critical for real-time crop detection. Missing observations during some periods of the year were also found to be a serious limiting factor. Finally, working with a supervised classification system showed that the amount and quality of in-situ data can significantly affect the classifier.

Comparisons of the different approaches showed that the object-based classification system using a pre-filtering task gave the worst results. In contrast, the quantitative results of the pixel-based system were very similar to those of the Object-Based classification system using a Post-filtering task (OB-P). However, visual assessment of the cropland maps showed less noisy results when the OB-P approach was used. One of the main limitations of the OB-P classification system was the detection of small crop fields, which was not always possible at a spatial resolution of 20 m. However, the results of this object-based methodology are expected to improve with real Sentinel-2 data, because the spatial resolution of the SPOT4-Take5 and LANDSAT-8 proxy data (20 and 30 m, respectively) is coarser than that of Sentinel-2 (10 m for the visible and near-infrared bands).

Besides a finer spatial resolution, Sentinel-2 will also provide a higher temporal resolution and more spectral bands, including red-edge bands. Future work will therefore focus on including these new sources of information in the OB-P classification system.