Abstract

Ecological measurements in marine settings are often constrained in space and time, with spatial heterogeneity obscuring broader generalisations. While advances in remote sensing, integrative modelling and meta-analysis enable generalisations from field observations, there is an underlying need for high-resolution, standardised and geo-referenced field data. Here, we evaluate a new approach aimed at optimising data collection and analysis to assess broad-scale patterns of coral reef community composition using automatically annotated underwater imagery, captured along 2 km transects. We validate this approach by investigating its ability to detect spatial (e.g., across regions) and temporal (e.g., over years) change, and by comparing automated annotation errors to those of multiple human annotators. Our results indicate that change of coral reef benthos can be captured at high resolution both spatially and temporally, with an average error below 5%, among key benthic groups. Cover estimation errors using automated annotation varied between 2% and 12%, slightly larger than human errors (which varied between 1% and 7%), but small enough to detect significant changes among dominant groups. Overall, this approach allows a rapid collection of in-situ observations at larger spatial scales (km) than previously possible, and provides a pathway to link, calibrate, and validate broader analyses across even larger spatial scales (10–10,000 km2).

1. Introduction

Understanding the underlying drivers and causal factors determining the existence and sustainability of coral reefs has been propelled by the rapid degradation of these ecosystems [1,2]. These issues include Crown-of-Thorns outbreaks [3], coral bleaching and mortality [4,5], and damage from tropical storms [6], in addition to the impacts of sedimentation, nutrient run-off, and pollution [7]. Given the large footprint and cumulative effect of perturbations caused by these stressors, understanding their net effect on coral reef communities requires system-wide analysis, and generates research and management demand for broad-scale (>10,000 km2), standardised data sets. This is especially important given the rapid ocean warming and acidification, effects of which are predicted to produce large-scale changes that are beginning to occur across the planet at regional and global scales [8,9].

While meta-analysis can be an efficient way to synthesise underwater assessment efforts and generalise within and throughout regions [10,11,12,13,14], variability across spatial scales, multiple observers, metrics and methodologies can pose serious challenges for broad generalisations [1,14]. Alternatively, integrative and multi-disciplinary approaches using extensive field observations, optical remote-sensing datasets (satellite- and aerial-derived products) and modelling tools have the potential to enable investigators to scale up field observations in order to understand processes driving change in coral reefs [6,15,16,17]. Irrespective of the approach used to understand reef functioning and change, there is a fundamental need for broad-scale and standardised field data to accurately record and understand reefs under transition in order to provide informed management advice in a timely manner [1,2].

Optical remote sensing provides broad-scale aerial coverage of coral reef systems (e.g., 10,000 km2) with every pixel assessed relative to its habitat composition and with pixel size determining the level of benthic detail mapped, resulting in 100% coverage of the study area [18]. However, optical remote-sensing products do not provide sufficient detail and reliability when compared to field-based measurements [19]. Conversely, field-based observations, while critical for the calibration of remote-sensing imagery and validation of the resulting maps [17,20], typically cover only small areas (<1%) of the study site [21].

Over the past three decades, underwater photography and videography have become increasingly accessible and are now widely used for monitoring coral reef benthic communities. Recent advances in digital photographic technology have enabled more efficient ways of obtaining observations and collecting data on the state of coral reef ecosystems [17,22,23]. Underwater vehicles, as well as diver-acquired methods [24,25,26,27], have also extended the capability of capturing large volumes of photographic records. Such is the case of the approach we evaluate here, the XL Catlin Seaview Survey (CSS), a method aimed at evaluating spatial and temporal patterns of benthic community structure in coral reefs using high-resolution imagery collected across linear transects (~2 km in length) by a customised underwater diver propulsion vehicle [24]. Insofar as the necessity for field data persists, the underlying challenge is shifting from a focus on the generation of information to a focus on the capacity of new tools to decode such information into meaningful metrics, which can extend our understanding of how coral reefs are impacted by a rapidly changing environment.

The challenge of rapid and accurate analysis of large volumes of images has led to productive collaborations between marine and computer science. While automated image analysis is extensively used in satellite image analysis [28] and plankton ecology [29], its application to coral reef systems is relatively new. Such methods, which typically rely on machine learning to map visual attributes of images to semantic classes, are enabling marine scientists to extract useful ecological data from photographic records [30,31,32] at speeds significantly faster than manual methods [24]. Furthermore, the development of photographic sensors and computer vision methods has enabled integration of new approaches in coral reef ecology to quantify a range of other metrics relevant to the discipline (e.g., reef terrain complexity [33,34] and fish abundance [35]).

Previous studies have revealed the potential of automated image annotation to rapidly optimise data mining from underwater imagery and generate reliable ecological metrics relative to coral reefs [30,36,37,38]. While there is high fidelity between human and automated annotations, the latter tend to introduce a level of variability or “noise” to the benthic coverage estimations, possibly attributable to changes in image quality over time and space (e.g., light, water clarity and distance of the camera from the substrate) [24,30]. This raises the question of whether methodologies such as those used in the CSS produce viable outcomes for detecting and monitoring reef-scale changes in benthic composition over time and across large spatial extents (>10,000 km2).

Here, we validate the application of automated image analysis on field imagery collected by the CSS [24] with a central aim of scaling up underwater observations of coral reefs. In particular, we explored and analysed the error introduced by automated image classification when it is used to estimate changes in benthic community composition across large spatial (regional) and temporal scales (years). Using imagery collected at multiple sites across 49 reefs from the Great Barrier Reef (GBR) and Coral Sea Commonwealth Marine Reserve (CSCMR), we discuss the fidelity of automated and human estimations in the context of variability introduced by multiple observers, in order to understand, describe and offer potential applications and limitations of this technology.

2. Material and Methods

2.1. Study Site

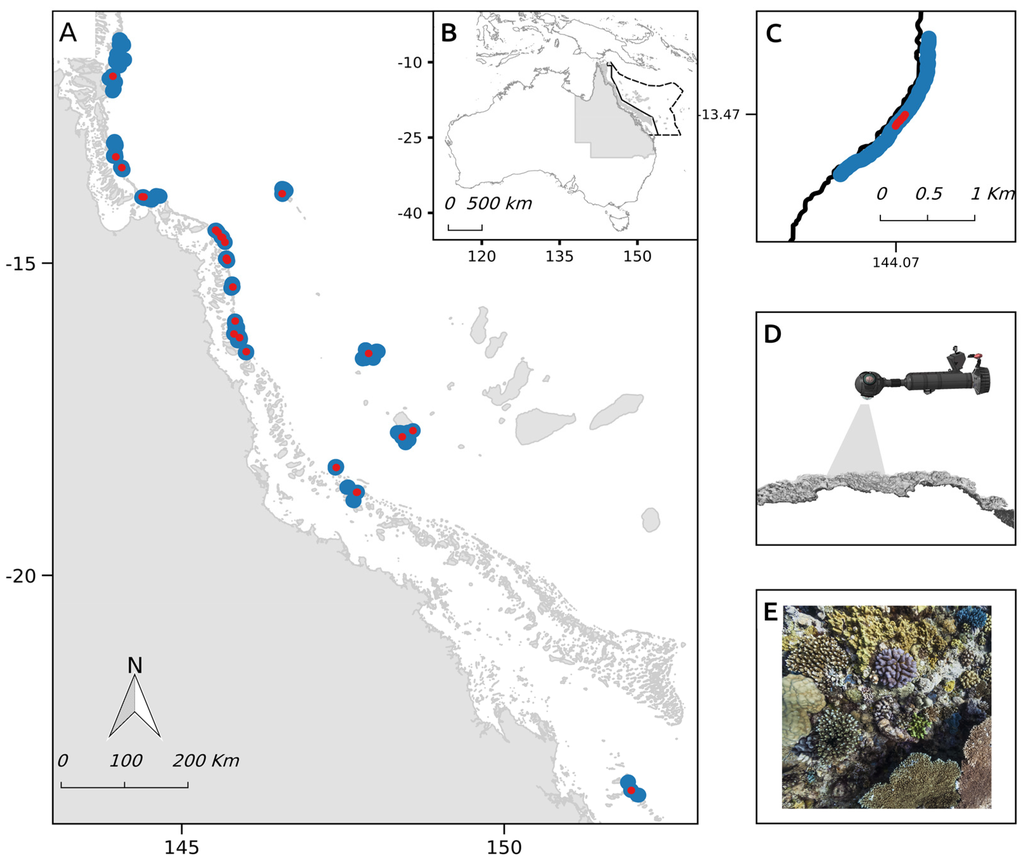

Data were collected in 2012 and 2014, and extracted from a total of 107 transects, comprising 126,700 images and totalling 325,000 m2 of area surveyed across the outer reefs of the GBR and three main atolls in the CSCMR: the Flinders, Holmes and Osprey reef atolls (Figure 1). A subset of these transects was re-surveyed in 2014 in the northern GBR (Figure 1), following the impact of category 5 Tropical Cyclone Ita. The re-survey data were used to evaluate the ability of the technique to detect temporal changes.

Figure 1.

(A) Survey locations of the XL Catlin Seaview Survey in the (B) Great Barrier Reef (solid line) and Coral Sea Commonwealth Marine Reserve (dotted line), Queensland, Australia. Survey transects are shown in blue, while test sites are highlighted in red; (C) A detail of a specific transect is shown, where the sampling unit is depicted in red; (D) Images are collected along each 2-km transect using a customised diver propulsion vehicle; (E) Capturing underwater imagery of the reef benthos every three seconds.

2.2. Field Image Collection

A customised diver propulsion vehicle with a camera system mounted on it (named SVII, Figure 1D), consisting of three synchronised Canon 5D-MkII cameras, was used to survey the fore-reef habitats in the outer reefs of the GBR and CSCMR. Images (21 Mp resolution) were collected every three seconds, approximately every 2 m, following a linear transect, averaging 1.8 km in length, along the 10 m depth contour (Figure 1C–E). Images were geo-referenced using a surface GPS unit tethered to the diver [24]. An on-board tablet computer enabled the diver to control camera settings (exposure and shutter speed) according to light conditions. Depth and altitude of the camera relative to the surface and the reef substrate were logged at half-second intervals using a Micron Tritech transponder (altitude) and pressure sensor (depth). These metadata allowed for selection of imagery within a particular depth and altitude range (9–10 m depth and 0.5–2 m altitude) needed to maintain consistency and address variable environmental conditions, as well as ensuring a spatial resolution for each image of approximately 10 pixels·cm−1 (ratio between the number of pixels contained in the diagonal of the image and its estimated size in centimetres). Using the geometry of the lens and altitude values, this pixel to centimetre ratio was calculated to crop the image to standardised 1 m2 photo quadrats. Details are provided in González-Rivero et al. [24].
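The cropping step can be illustrated with a minimal sketch. It assumes a flat substrate directly below the camera, a 5616 × 3744 pixel frame (a typical 21 Mp sensor layout, assumed here), and a hypothetical diagonal field-of-view angle; the actual lens geometry and any underwater optical corrections are described in [24] and are not reproduced here. The function names are illustrative only.

```python
import math

# Assumed frame size in pixels for a 21 Mp image (not stated in the text).
IMAGE_WIDTH_PX = 5616
IMAGE_HEIGHT_PX = 3744


def pixels_per_cm(altitude_m, diagonal_fov_deg):
    """Ratio of pixels on the image diagonal to the diagonal footprint in cm.

    diagonal_fov_deg is a hypothetical field-of-view angle; a flat substrate
    perpendicular to the optical axis is assumed.
    """
    diagonal_px = math.hypot(IMAGE_WIDTH_PX, IMAGE_HEIGHT_PX)
    half_angle = math.radians(diagonal_fov_deg) / 2.0
    diagonal_cm = 2.0 * altitude_m * 100.0 * math.tan(half_angle)
    return diagonal_px / diagonal_cm


def crop_side_px(ratio_px_per_cm, side_cm=100.0):
    """Side length in pixels of a square crop covering side_cm x side_cm."""
    return round(ratio_px_per_cm * side_cm)


# At the nominal resolution of ~10 pixels per cm, a 1 m2 quadrat spans
# 1000 x 1000 pixels.
quadrat_side = crop_side_px(10.0)
```

Because the ratio falls as altitude increases, restricting imagery to a fixed altitude range keeps the crop size, and therefore the effective resolution of each quadrat, within known bounds.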

2.3. Image Analysis

2.3.1. Label Set for Benthic Categories

A label set of 19 functional categories was established (Table 1). These categories were chosen for their functional relevance to coral reef ecosystems and their ability to be reliably identified from images by human annotators [38]. Four broad groups represent the main benthic components of coral reefs in the GBR and CSCMR: “Hard Corals”, “Soft Corals”, “Algae”, and “Others”. Hard corals comprise 11 functional groups classified based on a combination of taxonomy (i.e., family) and colony shape (i.e., branching, massive, encrusting, plating, and tabular). These groups were derived, modified and simplified from existing classification schemes [39,40]. Soft corals were classified and represented by three main functional groups: (1) Alcyoniidae soft corals, in particular the dominant genera; (2) sea fans and plumes from the family Gorgoniidae; and (3) other soft corals.

Table 1.

Label set defining the benthic categories employed for the classification of coral reef benthos in the Great Barrier Reef (GBR) and Coral Sea Commonwealth Marine Reserve (CSCMR), Australia.

| Group | Short Name | Taxonomic Description | Overall Functional Attributes | Ref. |
|---|---|---|---|---|
| Hard Coral | Acr. branching | Family Acroporidae, branching morphology (excluding hispidose type branching). | Major reef framework builders. Competitive life-history strategy: fast-growing species, spawning reproduction, high susceptibility to thermal stress (bleaching) and wave action. Provide habitat to a range of other reef-dwelling species. | [41,42,43,44,45,46,47] |
| Hard Coral | Acr. hispidose | Family Acroporidae, hispidose morphology | Competitive life-history strategy: fast-growing species, spawning reproduction, high susceptibility to thermal stress (bleaching) and wave action. | [44,45,46,47] |
| Hard Coral | Acr. other | Other corals from the family Acroporidae (e.g., Isopora) | Brooding reproduction, severe/high susceptibility to thermal stress, and low/moderate susceptibility to wave action. | [44,45,47,48] |
| Hard Coral | Acr. encrusting | Family Acroporidae, plate and encrusting morphologies | Major reef framework builders. Competitive and generalist life-history strategies: fast/moderate growth rates, spawning reproduction, high/severe susceptibility to thermal stress, moderate to low susceptibility to wave action. | [43,44,45,46,48] |
| Hard Coral | Acr. Tabular | Family Acroporidae, table, corymbose and digitate morphologies | Major reef framework builders. Competitive life-history strategy: fast-growing species, spawning reproduction, high/severe susceptibility to thermal stress (bleaching), and high to low susceptibility to wave action. High to moderate susceptibility to disease outbreaks. Largely contribute to reef structural complexity. | [43,44,45,46,49,50] |
| Hard Coral | Massive meandroid | Families Faviidae and Mussidae, massive and meandroid morphologies | Major reef framework builders. Stress-tolerant life history: slow-growing species, spawning reproduction, moderate susceptibility to thermal stress, and low susceptibility to wave action. | [43,44,45,46,48] |
| Hard Coral | Other corals | Other hard coral including all other groups not represented by the other coral categories of this label set. | Mixed attributes. Low/moderate susceptibility to thermal stress. | [51] |
| Hard Coral | Pocillopora | Family Pocilloporidae | Reef framework builders. Competitive and weedy life-history strategies: early colonisers in reef succession trajectories, fast-growing species, brooding reproduction, highly susceptible to thermal stress, but moderately resistant to wave action. High prevalence of coral diseases. | [43,44,45,46,48,51,52] |
| Hard Coral | Por. branching | Family Poritidae, branching morphology | Weedy life-history strategy: spawning reproduction, high/moderate susceptibility to thermal stress. | [45,46,53] |
| Hard Coral | Por. encrusting | Family Poritidae, encrusting morphology | Brooding reproduction. Low/moderate susceptibility to thermal stress. | [51,54] |
| Hard Coral | Por. massive | Family Poritidae, massive morphology | Major reef framework builders. Stress-tolerant life history: slow-growing species, spawning reproduction, low/moderate susceptibility to thermal stress, and low susceptibility to wave action. | [43,44,45,46,48] |
| Algae | CCA | Crustose Coralline Algae | Major reef framework builders and cementers. Provide key contribution to coral reef primary production. Facilitation of coral recruitment. | [55,56,57,58] |
| Algae | Macroalgae | Macroalgae. All genera. | Key contribution to coral reef primary production. Provide food source and habitat to a range of other reef-dwelling species. Critical role during phase shifts. | [59,60,61,62] |
| Algae | Turf | Multi-specific algal assemblage of 1 cm or less in height | Provide key contribution to coral reef primary production. Nitrogen fixation. Provide food source and habitat to a range of other reef-dwelling species. | [63,64,65,66] |
| Others | Sand | Unconsolidated reef sediment | Not applicable (N/A) | N/A |
| Others | Other Invert. | Other sessile invertebrates | Mixed attributes. | N/A |
| Soft Coral | Alc. Soft coral | Soft coral, family Alcyoniidae, genera Lobophytum and Sarcophyton. | An important contributor to reef structural complexity and biodiversity, providing habitat to a range of other reef-dwelling species. Slow-growing and suspension feeders. Spawning and asexual propagation. Deplete large amounts of suspended particulate matter. | [67,68,69,70] |
| Soft Coral | Gorg. Soft coral | Sea fans and plumes | Provide habitat to a range of other reef-dwelling species. Spawning and brooding reproduction. Suspension feeders. | [67,68,69] |
| Soft Coral | Other Soft coral | Other Soft corals | Mixed attributes. | N/A |

The main algae groups were categorised according to their functional relevance: (1) Crustose Coralline Algae (CCA); (2) Macroalgae; and (3) Turf Algae. The latter is considered a grazed assemblage of algae species of up to 1 cm in height. The remaining group, categorised as “Others”, consisted of sand and other benthic invertebrates (Table 1).

2.3.2. Point Annotations

All manual image annotations were conducted using point-sampling methods, sensu the Coral Point Count Method [71], adapted to GBR species and functional groups (Table 1). In this method a number of points are overlaid over the image at random locations, and the substrate types at each point location are assigned to one of the labels (Table 1). We used the point annotation tool of CoralNet for all manual annotation work [72]. A summary of the images and point annotations used in this work is provided in Table 2.
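As a minimal sketch of the point-sampling logic described above (not the CoralNet implementation), the following shows how random point locations can be drawn over an image and how the labels assigned at those points convert to percentage cover. The function names and the example labels are illustrative assumptions.

```python
import random
from collections import Counter


def random_points(width_px, height_px, n_points, seed=None):
    """Draw n_points random (x, y) pixel locations inside an image."""
    rng = random.Random(seed)
    return [
        (rng.randrange(width_px), rng.randrange(height_px))
        for _ in range(n_points)
    ]


def percent_cover(point_labels):
    """Percentage of annotated points assigned to each label."""
    counts = Counter(point_labels)
    total = len(point_labels)
    return {label: 100.0 * n / total for label, n in counts.items()}


# Example: 40 points on one image, labelled by an annotator.
points = random_points(1000, 1000, 40, seed=1)
labels = ["Turf"] * 20 + ["CCA"] * 10 + ["Sand"] * 10
cover = percent_cover(labels)
```

Labels that receive no points are simply absent from the returned dictionary, which is equivalent to 0% cover for that image.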

Table 2.

Summary statistics of the annotation effort employed in this study for training the machine and validating the automated image estimations.

| Description | # Images | # Points·Image−1 | # Sites |
|---|---|---|---|
| Training of automated annotator | 1237 | 100 | N/A |
| Spatial error | 1042 | 40 | 41 |
| Temporal error | 335 | 40 | 7 |
| Inter-observer variability | 124 | 40 | 5 |

2.3.3. Automated Estimation of Benthic Composition

In order to automatically estimate benthic composition from collected imagery, we used a machine learning method, the Support Vector Machine (SVM) [73], to classify benthic substrate categories from images based on training provided by human annotators. In general terms, SVMs are supervised classification models with associated learning algorithms that recognise patterns from data (in our case, visual parameters described below) to discriminate among categories assigned a priori (Table 1). Given a set of training examples, each one marked as belonging to a given category, an SVM training algorithm builds a classifier that assigns new examples to one category or another.

A total of 1237 images (approximately 1% of the total number of images in the dataset) were randomly drawn from the pool of 126,700 images and used as training data for the automated annotator. Training images were annotated by a human annotator at 100 point locations per image (Table 2), where the substrate beneath each point was identified to the taxonomic resolution described herein (Table 1). The goal of the automated annotation method was to learn from the human annotations and to automatically analyse the remaining 125,463 images. Although the automated annotation method can technically annotate every single pixel in every single remaining image, here we followed the standard point sampling protocol and only created automated annotations at 100 randomly selected points per image. The reasoning for this was twofold: (1) to ensure straightforward comparisons to percentage cover estimates from the human annotations; and (2) because studies indicate that the estimation error is small if the number of points per image is sufficiently large (>10) [71].

We adopted the automated annotation method of Beijbom et al. [30,38]. In this method, the visual parameters of texture and colour descriptors are first encoded as 24-dimensional vectors for all pixels in all images. This information is then summarised over a 250 × 250 pixel neighbourhood [38] (which roughly corresponds to 25 × 25 cm of substrate) around each point of interest (e.g., a labelled point in the training data or a point in the unlabelled images) using Fisher Encoding [74], resulting in a 1920-dimensional “feature” vector. The feature vectors extracted from the training data are used to train a linear SVM, and the trained SVM is finally used to annotate the entire datasets from 2012 and 2014, including the “validation set” discussed below. We used the publicly available code [75] to extract the features and liblinear [76] to train the SVM, all performed within a MATLAB environment (MATLAB 2014a, MathWorks, Inc., Natick, MA, USA). Feature extraction required ~20 s per image, SVM training required ~1 h, and classification required <1 s per image at 100 points per image, which translates to an automated analysis rate of approximately 170 images per hour for benthic coverage.
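The figures quoted above can be checked with a short calculation. This sketch takes the upper bound of 1 s per image for classification and assumes that images are processed one at a time, which is an assumption made here for illustration; the one-off ~1 h training cost is excluded from the per-image rate.

```python
TOTAL_IMAGES = 126_700
TRAINING_IMAGES = 1_237

FEATURE_EXTRACTION_S = 20.0  # ~20 s per image (from the text)
CLASSIFICATION_S = 1.0       # upper bound: <1 s per image for 100 points


def images_per_hour(seconds_per_image):
    """Number of images processed per hour at a fixed per-image cost."""
    return 3600.0 / seconds_per_image


# Images left for automated annotation once the training set is removed.
remaining = TOTAL_IMAGES - TRAINING_IMAGES

# Share of the dataset annotated manually for training, in percent.
training_share_percent = 100.0 * TRAINING_IMAGES / TOTAL_IMAGES

# Sequential processing rate, consistent with the ~170 images per hour quoted.
rate = images_per_hour(FEATURE_EXTRACTION_S + CLASSIFICATION_S)
```

The rate is dominated by feature extraction, so any speed-up in that step translates almost directly into throughput.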

2.4. Validation of Automated Analysis of Field Imagery

In order to validate automated annotations, we performed a cross-validation exercise between machine and human estimations of benthic cover. Specifically, we evaluated two types of errors for detecting patterns in benthic composition using automated image annotation: (1) error variability across a large spatial extent (GBR and CSCMR), accounting for inter-reef variability in composition, appearance and light conditions; and (2) error for detecting temporal change in a given reef. These analyses are contextualised by comparing machine-introduced variability (error) to human inter-observer variability, which enabled the determination of thresholds for pattern detection using automated annotation. These results provide an important benchmark regarding the opportunities and limitations of rapid, broad-scale and automated assessment tools.

2.4.1. Evaluation Sites

Coral reefs are heterogeneous ecosystems composed of spatial patterns that range from the scale of an individual to that of an ecosystem [77,78]. At the broadest spatial scales, physical processes are the dominant determinant of spatial structures, whereas biological processes override the effects of environmental processes at fine spatial scales [79,80]. Here, we use spatial autocorrelation analysis to set a scale of reference to depict assemblages of communities on the reef where behaviour is more predictable. Without a set reference, we are left with a spectrum of spatial scales where, at one end, we are describing the noise in the data (spatial units smaller than the spatial range of the pattern), while at the other end we are failing to detect patterns because multiple assemblages are aggregated into a single spatial unit (spatial units larger than the spatial range of the pattern).

Therefore, it was necessary to identify a minimum sampling unit from the kilometre-scale transects derived from the CSS in order to assess the ability of the machine to capture benthic community composition patterns across time and space in a replicable and predictable fashion. To do so, we determined a standard scale for replicable and predictable patterns of community composition by investigating the multivariate spatial autocorrelation of benthic coverage (Appendix). Using this approach, we were able to standardise a sampling unit (hereafter: “site”) from the 2 km transects collected by the CSS to 70 m subsections which best account for local heterogeneity of a given reef (Figure 1), and we ensured replicability by maximising the detection of change across space and time (details provided in the Appendix).

2.4.2. Estimating Error across Space

A total of 41 sites containing a total of 1042 images were randomly selected across the GBR and CSCMR transects from 2012 and 2014. These images are hereafter collectively denoted “validation set”, and were selected to eliminate overlap with the set used to train the machine learning algorithm (Figure 1, Table 2). All images in the validation set were manually annotated at 40 points per image using the random point methodology detailed above. The relative cover of 19 benthic categories (Table 1) was averaged for all the images present within each site, and the error estimated as the absolute difference between the machine and a human annotator (Equation (1)):

$$S_{k,z} = \left| c_{k,z,i} - c_{k,z,j} \right| \qquad (1)$$

where S is the spatial error of automated annotation across sites (z) for a given benthic category or label (k), and c represents the mean percentage cover of label k at site z, as estimated by the human (i) or the automated (j) annotator.

The distribution of the absolute errors followed a non-normal and skewed distribution, thus the geometric mean was calculated to represent the average error among sites for each label category. Non-parametric bootstrap simulations were used to estimate the 95% confidence intervals around the mean [81].
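A minimal sketch of this summary step is given below. It assumes strictly positive errors (the geometric mean is undefined when any error is zero, and how zeros were handled is not stated here) and uses a percentile bootstrap, which is one common choice and not necessarily the variant described in [81]. The function names and example values are illustrative.

```python
import math
import random


def spatial_error(human_cover, machine_cover):
    """Absolute difference in mean percent cover for one label at one site."""
    return abs(human_cover - machine_cover)


def geometric_mean(values):
    """Geometric mean of strictly positive values."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


def bootstrap_ci(values, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval around the geometric mean (assumed variant)."""
    rng = random.Random(seed)
    estimates = sorted(
        geometric_mean(rng.choices(values, k=len(values)))
        for _ in range(n_resamples)
    )
    lower = estimates[int((alpha / 2) * n_resamples)]
    upper = estimates[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


# Hypothetical mean cover (%) of one label at four sites.
human = [12.0, 30.5, 8.0, 22.0]
machine = [10.0, 33.0, 9.5, 25.0]
errors = [spatial_error(h, m) for h, m in zip(human, machine)]
average_error = geometric_mean(errors)
ci_low, ci_high = bootstrap_ci(errors)
```

Resampling is done over sites, so the interval reflects between-site variability in the error for a given label.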

While S (Equation (1)) provides the necessary details for understanding the limitations of the methods for each category, a broader analysis of the differences between automated and manual estimations was conducted using a community approach. Specifically, Diversity (Shannon-Wiener index, H′), Evenness (Pielou index, J′), and Community Dissimilarity (Bray-Curtis index) indices were used to describe the variability in the automated estimates compared to manual estimates from a compositional perspective.

Diversity indices measure the community biodiversity by considering the number of species, or groups, and their proportional abundance. While diversity is central to the compositional structure of the community, comparative analysis may be difficult because diversity indices combine both the number of species (richness) and their dominance (evenness). Therefore, we also included an index for evenness (Pielou index—Jʹ) derived from the Shannon Index, which is commonly used in ecology [82], to evaluate the capacity of machine annotations to capture the species contribution to the community composition. Bray-Curtis dissimilarity (Equation (2)) was used as a summary metric to quantify the pair-wise compositional dissimilarity between automatic and manual estimations, based on the percentage cover of each benthic category.

$$BC_{z} = 100 \times \frac{\sum_{k} \left| c_{k,z,i} - c_{k,z,j} \right|}{\sum_{k} \left( c_{k,z,i} + c_{k,z,j} \right)} \qquad (2)$$

where BC represents the Bray-Curtis dissimilarity and c is the relative abundance of each benthic category (k) for a given site (z) where percentage cover has been estimated manually (i) and automatically (j). Using this metric, a value of zero means complete congruence between manual and automated estimations of the community composition, while a value of 100 means complete dissimilarity.
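The three community metrics can be sketched as follows, using their standard textbook definitions with natural logarithms; the logarithm base and the treatment of sites with a single category are assumptions made here, and the function names are illustrative.

```python
import math


def shannon(cover):
    """Shannon diversity H' from a list of cover values (any positive scale)."""
    total = sum(cover)
    proportions = [c / total for c in cover if c > 0]
    return -sum(p * math.log(p) for p in proportions)


def pielou(cover):
    """Pielou evenness J' = H' / ln(richness); 0.0 by convention if richness < 2."""
    richness = sum(1 for c in cover if c > 0)
    if richness < 2:
        return 0.0
    return shannon(cover) / math.log(richness)


def bray_curtis(cover_i, cover_j):
    """Bray-Curtis dissimilarity between two cover vectors, scaled to 0-100."""
    numerator = sum(abs(a - b) for a, b in zip(cover_i, cover_j))
    denominator = sum(a + b for a, b in zip(cover_i, cover_j))
    return 100.0 * numerator / denominator


# Hypothetical site with four labels: manual (i) and automated (j) estimates.
manual = [40.0, 30.0, 20.0, 10.0]
automated = [35.0, 30.0, 25.0, 10.0]
dissimilarity = bray_curtis(manual, automated)
```

In this example the two estimates differ by 5 percentage points in two labels, giving a dissimilarity of 5 on the 0 to 100 scale.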

2.4.3. Estimating Error in Detecting Change over Time

Following a major cyclone impact on the GBR in 2014, a subset of 40 transects from 2012 was re-surveyed in 2014. Using this dataset, we evaluated whether temporal changes in benthic composition can be resolved using automated image annotations. For this purpose, seven sites were selected along a gradient of impact, ranging from 0% to 40% loss in coral cover. Images contained within these sites for the 2012 and 2014 surveys were automatically annotated and contrasted against human annotations. For each site, the benthic coverage for each label category was averaged and the error of detecting change was calculated as the absolute difference between the automated and human estimates of change for each of the 19 labels (Equation (3)):

$$TE_{k,z} = \left| \Delta c_{k,z,i} - \Delta c_{k,z,j} \right| \qquad (3)$$

where TE is the temporal error of machine annotation in detecting the change for each label (k) at a given site (z), and ∆c is the difference of mean percent cover between 2012 and 2014 (change after the cyclone) estimated by both the human (i) and the automated (j) annotators for each label and site.

As with the spatial error, the distribution of the temporal error followed non-normal and rather skewed distributions. Therefore, the geometric mean and bootstrap 95% confidence interval were estimated for each label category [81].
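The temporal error can be illustrated with a short sketch; the function names and cover values are hypothetical. One consequence of comparing changes and not states is that a constant offset between annotators cancels out.

```python
def change_in_cover(cover_before, cover_after):
    """Change in mean percent cover between two surveys (after minus before)."""
    return cover_after - cover_before


def temporal_error(human_before, human_after, machine_before, machine_after):
    """Absolute difference between human and automated estimates of change."""
    delta_human = change_in_cover(human_before, human_after)
    delta_machine = change_in_cover(machine_before, machine_after)
    return abs(delta_human - delta_machine)


# Human sees a 15-point loss, the machine an 11-point loss: error of 4 points.
error = temporal_error(40.0, 25.0, 38.0, 27.0)

# A machine that is consistently 5 points too high still recovers the change.
error_with_constant_bias = temporal_error(40.0, 25.0, 45.0, 30.0)
```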

2.4.4. Inter-Observer Variability

In order to maximise the sample size available to evaluate the error introduced by automated annotation, the preceding error estimates were calculated using one human annotator as a reference. However, underlying variability across annotators when identifying benthic substrate [23] can compromise automated estimates and hinder the application of this tool. On the other hand, machine estimations that match or even improve on human error can serve as an ideal tool. While it is expected that human observations have higher levels of agreement than automated annotations, differences among human annotators across the label set can help contextualise the capacity of automated annotation to resolve the different labels being assigned. To explore inter-observer variability further, three human annotators with similar ecological backgrounds and expertise annotated five randomly selected sites. We assumed the average percent cover estimate for each label across the three human annotators to be a closer approximation to the true abundance of benthic groups than any individual estimate. Human and automated estimates for each label were compared against the average estimate among human annotators for each sampling unit. Hence, the error was calculated as the absolute difference between each annotator (including the automated annotator) and the average estimate for each label. Because the error followed a non-normal distribution, the geometric mean of the difference and the bootstrap 95% confidence intervals across sampling units were calculated.
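For a single label at a single sampling unit, the comparison described above reduces to the following sketch. The function name and the cover values are hypothetical.

```python
def errors_against_human_mean(human_estimates, machine_estimate):
    """Absolute deviation of each annotator from the mean of the human estimates.

    Returns the reference value (human mean), the list of human errors,
    and the error of the automated annotator.
    """
    reference = sum(human_estimates) / len(human_estimates)
    human_errors = [abs(h - reference) for h in human_estimates]
    machine_error = abs(machine_estimate - reference)
    return reference, human_errors, machine_error


# Three human annotators and one automated estimate of cover (%) for one label.
reference, human_errors, machine_error = errors_against_human_mean(
    [20.0, 24.0, 22.0], 27.0
)
```

Note that each human estimate contributes to the reference it is compared against, whereas the automated estimate does not, so human errors computed this way are somewhat conservative relative to the machine error.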

3. Results

3.1. Estimating Error across Space

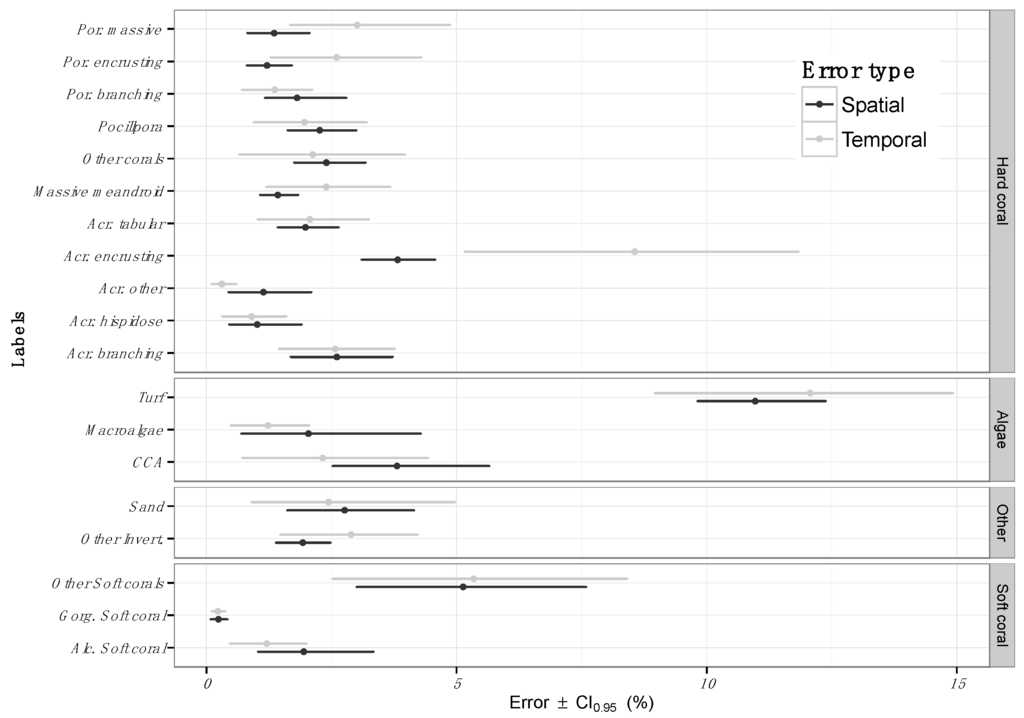

On average, automated image annotation introduced an absolute error of 2.5% ± 0.2% for all 19 benthic categories and across 41 randomly selected sites in the GBR and CSCMR. This means machine predictions of benthic cover were, on average, 2.5% different from the estimations provided by human annotators. Furthermore, differences were observed across different benthic categories (Figure 2). Within the algal functional group, Turf algae estimates showed the largest error: 10.9% ± 2.5%. Broad categories, such as “Other Soft Corals” and “Turf algae”, which include a number of species with different archetypes and morphologies, generally exhibited the largest automated annotation errors. Conversely, and despite the large phenotypic plasticity as well as the number of species grouped into these categories, a high fidelity between the automated and human annotations was recorded.

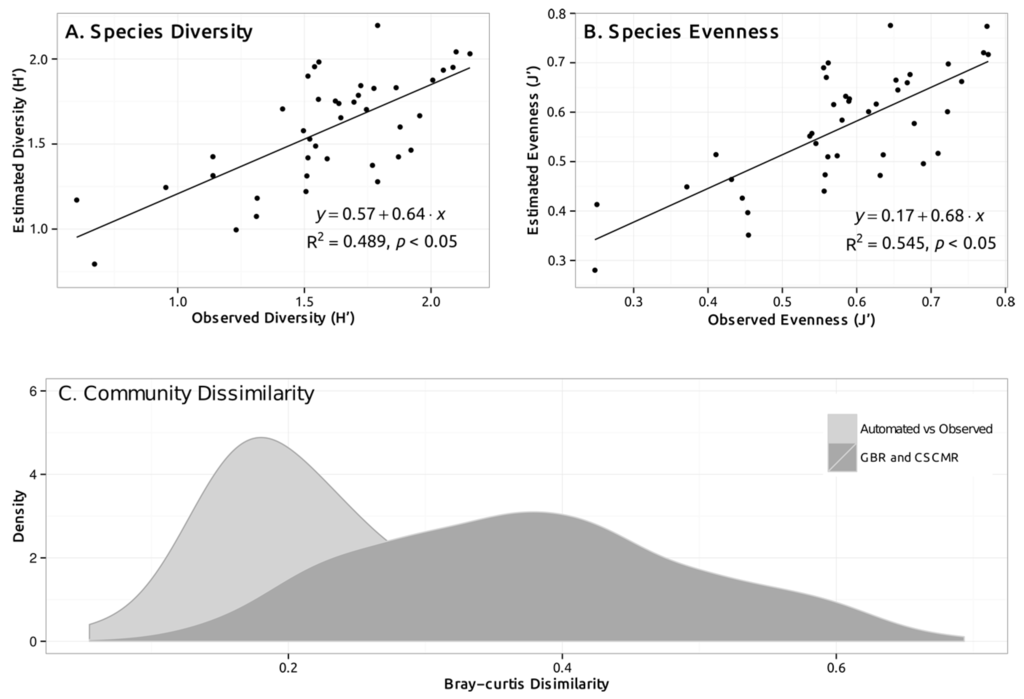

Spatial patterns of benthic community structure across GBR and CSCMR sites, described by indices of diversity and evenness, were captured by automated estimations (Figure 3, Linear Regression, R2 = 0.5, p < 0.05). A relatively dispersed relationship between automated and human estimates of diversity and evenness was observed, showing increased variability when compared to the more consistent patterns observed for the spatial error (Figure 3). Using the Bray-Curtis distance as an overall metric of dissimilarity between automated and human annotations at a community level, automated estimations differed by 24.5% ± 2.9% from human estimates. As a comparison, the Bray-Curtis distance among the 41 sampling units for the GBR and CSCMR atolls for manual annotation averaged 37.7% ± 0.8%.

Figure 2.

Spatial (S) and temporal (T) errors from automated benthic estimations across the GBR and CSCMR. Errors are presented for each one of the 19 labels presented in Table 1, where the short name for each label is detailed, and aggregated by functional groups (“Hard Corals”, “Algae”, “Other” and “Soft Coral”). Points represent the mean machine error and error bars represent the machine error margins.

Figure 3.

Comparisons of automated and manually estimated benthic composition among test sites, using: (A) Diversity index of Shannon; (B) Evenness index of Pielou; and (C) Community dissimilarity index of Bray-Curtis. The first two panels (A,B) represent a correlation plot of estimated (by the machine) and observed (by human annotator) values of diversity and evenness for each test site. The continuous line in both plots represents a fitted linear regression model to evaluate the relationship between observed and estimated values. Linear fit, Coefficient of Determination (R2) and significance (p-value) are shown in the graph. In panel C, the range of community dissimilarity values between automatically and manually estimated benthic composition for each test site is presented in a density histogram (light grey). Overall dissimilarity among all test sites (manually estimated only) across the GBR and CSCMR (dark grey) is included for context.

3.2. Estimating Error in Detecting Change over Time

Comparisons of survey data prior to and after a major cyclone impact on the Northern GBR (Tropical Cyclone Ita) demonstrated that automated predictions of change in benthic communities had an error of 4.2% ± 3.3% (Figure 2). When examining each benthic category in detail, we found differences in the amount of error introduced by the automated annotation among the labels, similar to the differences found in spatial errors (Figure 2). Within the coral functional group, the temporal error averaged 2.5% ± 0.6% and remained below 5% for most of the labels. The exception was the group of hard corals from the family Acroporidae with an encrusting morphology (Acr. encrusting), where the estimated error was 8.6% ± 3.9%. Among the algae groups, turf algae again showed the largest error (12.1% ± 2.8%). The error for other substrate types, including “Soft Corals”, remained below 5% with the exception of the broad category of soft corals, “Other Soft Corals”, which included the largest diversity of soft coral species (5.3% ± 2.8%).

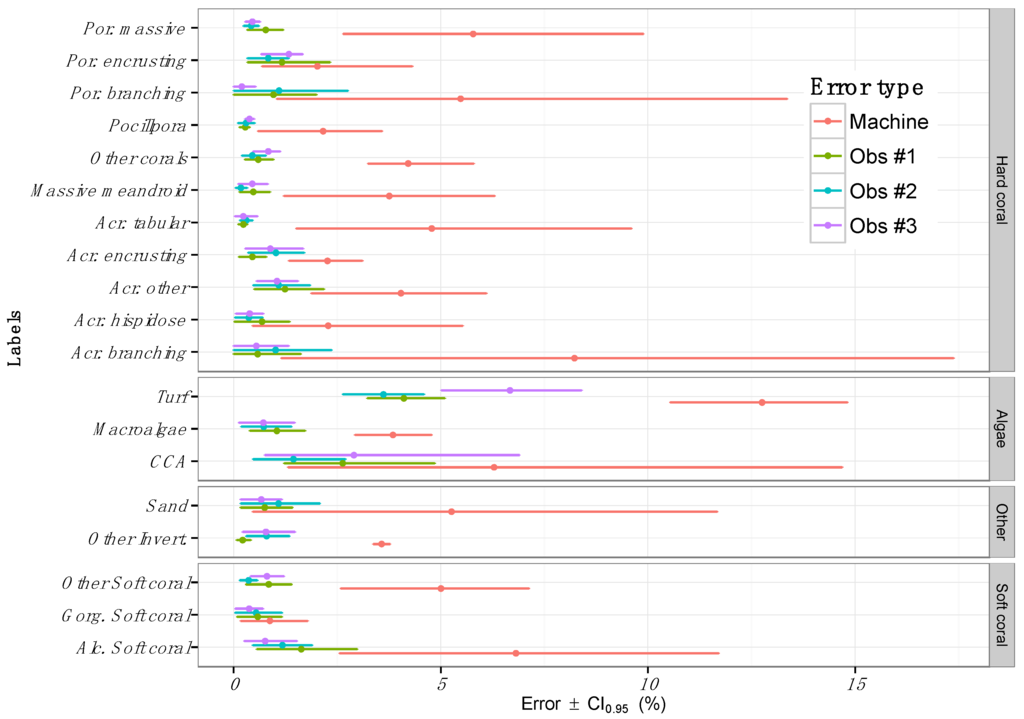

3.3. Inter-Observer Variability

Human annotations were consistently more precise than machine annotations, with human error ranging between 1% and 7% among categories (Figure 4). However, as with automated annotation errors, considerable variation was noted across labels, with turf algae showing the highest error among human annotators (4.8% ± 0.78%). The turf algae errors were also the largest for the automated annotator. Note that the error estimates from machine annotations are greater than in Figure 2. This is most likely due to the smaller sample size for this exercise (5 vs. 41 sites). Therefore, our results were interpreted based on the relative difference between machine estimation errors and inter-observer variability.

Figure 4.

Inter-observer variability in the estimation of benthic composition for a subset of sites (n = 5) compared against the error introduced by the automated annotator. Error is presented for each one of the 19 labels presented in Table 1 and aggregated by functional groups (“Hard Corals”, “Algae”, “Other” and “Soft Coral”). Points represent the mean machine error and error bars represent the machine error margins.

4. Discussion

Overall, the percentage cover estimated from automated annotations captured spatial and temporal patterns of benthic community composition across the GBR and CSCMR, but with higher quantification errors than the inter-observer variability among human annotators. Our study reveals that machine estimates can measure changes in benthic composition, over time and space, with a minimum detection threshold ranging from 2% to 12% among 19 benthic categories. The method described here therefore has the capacity to gather ecological metrics at kilometre scales with consistently low errors. The generation of standardised, high-definition and spatially sound datasets, via the methods described here, presents an opportunity to fill key data gaps (e.g., stock assessment, biodiversity, temporal trends) [17] and to track and understand the functional attributes of coral reef systems across broad temporal (e.g., years to decades) and spatial (e.g., >10,000 km2) scales. This methodology is limited by a narrower taxonomic precision when compared to many smaller and more controlled photographic or in-situ surveys. These are clearly trade-offs that need to be considered in applying “hands-on” versus semi-automated survey technologies [22,23,30]. Advances and further improvements in image capturing and analysis tools are likely to reduce this limitation over time and are further discussed herein.

4.1. Error across Space

Mean estimations of the spatial error, introduced by the automated analysis, varied among categories and averaged 2.5%. These errors increased with the functional aggregation of communities (e.g., diversity and dissimilarity indices). Overall, the relative impact of this error on data interpretation will depend on the relative abundance of the organisms, taxonomic resolution and the ecological relevance of the variability recorded. While we observed a relatively low estimation error, the noise introduced by the automated analysis may lead to misinterpretations of rare categories for which the average abundance is similar to the quantification errors estimated here (2%–3%). The impact of automated analysis errors on the assessment of more dominant benthic groups, on the other hand, is less pronounced. For example, the mean abundance of hard corals in the GBR and CSCMR ranged from 21% to 31%, in accordance with other studies [50,83,84], and the error of automated estimations averaged <5%. The errors reported here fall within the expected values for these sites considering the complexity of their respective substrates.

Such variability in the representation of rare and dominant categories is carried over in community structure metrics, where indices of diversity and evenness are sensitive to the abundance of rare categories [85]. Hence, greater variability in the automated estimations of diversity and evenness was observed, although the ability of the machine to capture spatial trends was preserved. Community structure estimation errors can be summarised by measuring the dissimilarity in the community assemblage estimated by the machine when compared to a human annotator, following a traditional approach in community ecology [86]. In this study, we observed that automated estimations of benthic composition differed, on average, from the reference (human estimations) by 25% (Bray-Curtis dissimilarity). However, large heterogeneity of benthic community assemblages has been found in the GBR and CSCMR [83,87], where dissimilarity metrics of benthic assemblages typically range from 40% to 60% [84]; this concurs with the Bray-Curtis dissimilarity observed among reference sites across the GBR and CSCMR (Figure 3C). Therefore, while the method described here provides advantages for broad-scale community assessments, restrictions may apply to fine comparisons of benthic assemblages where automated annotation errors may obscure subtle differences among communities.

4.2. Error in Detecting Change over Time

The automated annotator estimated the temporal change of benthic composition with error levels similar to the spatial error recorded (2%–12%, mean among labels). As with intra-year comparisons, the noise added by the automated annotator for temporal change relative to community composition may affect the interpretation of subtle changes, suggesting that this approach is better suited to mid-range temporal scales (years or decades) than to subtle inter-seasonal fluctuations. As a reference, coral cover has decreased in the GBR by as much as 25%–30% over the past three decades [6,50], while less representative coral species can fluctuate around 5% [83]. Our results suggest the detection of subtle temporal variations of coral categories (<5%) may be hindered by the noise of automated estimations. Nonetheless, this approach has the capacity to provide detailed and broad-scale information on significant temporal changes (>2%–12%, depending on the categories) in coral reef benthic communities, thus providing data required for assessing causes and consequences of accelerated coral reef degradation over large extents [1].
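The reasoning above can be sketched as a simple screening rule: a change in cover is treated as detectable only when its magnitude exceeds the annotation error for that label. This is a deliberately simplified reading of the detection threshold, not the statistical procedure used in the study. The error values are the temporal errors reported in Section 3.2, while the function name and the observed change of five percentage points are hypothetical.

```python
def detectable(change_pct, error_pct):
    """Return True when the absolute change in cover exceeds the label's error."""
    return abs(change_pct) > error_pct

# Mean temporal errors (%) reported in Section 3.2
temporal_error = {
    "Hard corals (mean)": 2.5,
    "Acr. encrusting": 8.6,
    "Turf algae": 12.1,
}

# Hypothetical loss of five percentage points of cover between two surveys
observed_change = -5.0

flags = {label: detectable(observed_change, err)
         for label, err in temporal_error.items()}

for label, flag in flags.items():
    print(f"{label}: {'detectable' if flag else 'within annotation noise'}")
```

Under this rule, the same five-point change would register for the hard coral group as a whole but would fall within the annotation noise for encrusting Acroporidae and turf algae.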

4.3. Sources of Error

Errors introduced by the automated estimation of percentage cover of benthic groups across the GBR and CSCMR (spatial and temporal errors) can be attributed to two methodological caveats: (a) image quality as a result of variability in reef appearance, underwater light irradiance and distance of the camera to the substrate; and (b) complexity of the label set, where groups enclosing many species with high morphological variation and phenotypic plasticity introduce a large variability due to overlapping visual features that challenges classification [30]. Since the imaging technique does not use artificial light, underwater light irradiance and reef light reflection pose imaging challenges. To compensate for this, fish-eye lenses, high-dynamic range cameras, and the flexibility to adjust the camera ISO (International Organization for Standardization) settings on the fly optimise the amount of light captured by the camera [24]. An on-board altitude sensor records the distance from the camera to the substrate, which enables the selection of only those images taken within a range from 0.5 to 2 m above the substrate to maintain a fixed resolution for the imagery [24].
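The altitude-based image selection can be sketched as a simple filter. The record structure, field names and altitude readings below are hypothetical; only the 0.5 to 2 m window comes from the text.

```python
from dataclasses import dataclass

@dataclass
class Image:
    name: str
    altitude_m: float  # camera-to-substrate distance from the altitude sensor

def within_altitude(images, low=0.5, high=2.0):
    """Keep only images taken between `low` and `high` metres above the substrate."""
    return [img for img in images if low <= img.altitude_m <= high]

# Hypothetical altitude readings along a transect
transect = [
    Image("img_001", 0.3),  # too close to the substrate
    Image("img_002", 1.1),
    Image("img_003", 2.0),
    Image("img_004", 3.4),  # too far from the substrate
]

kept = within_altitude(transect)
```

Treating both bounds as inclusive is an assumption; the source does not state how images exactly at 0.5 m or 2 m are handled.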

Taxonomic resolution and morphological plasticity within the label set can also affect the capacity of automated and manual methods to accurately estimate composition and abundance [23,30]. Quantification of benthic composition from underwater images is limited by the level of taxonomic identification that can be resolved [23,24,30], whereas high-taxonomic resolution (e.g., species level) requires quantifying micro-scale morphology and internal structures of the reef organisms. In addition, species and groups of species exhibiting large morphological variations [88] may have visual attributes or features that overlap among groups, therefore hindering the capacity of automated classification to accurately resolve these classes. Furthermore, depending on the taxonomic aims of the study, taxonomically challenging categories are more prone to human errors, which are carried across to machine estimations from training data sets [22,23,30]. Therefore, the classification reference or label set needs to be designed in such a way that it conveys the taxonomic resolution which is functionally relevant for the intended study while acknowledging the taxonomic limitations of underwater image analysis, both manually and automatically [30,40].

4.4. Future Directions

While we acknowledge a higher taxonomic resolution can be achieved from underwater images [22,23,40], here we used a conservative approach. In this study, we expanded the benthic categorisation compared to a previous study [24] whilst maintaining a relatively low number of taxonomic groups categorised by their functional traits (Table 1) and ensuring minimal overlap of visual features among labels. Further research is needed to evaluate the feasibility of expanding the resolution of taxonomic identifications and the capacity of automated methods to discern among labels.

Looking forward, our results indicate that there is room for improvement and the errors reported here can be further reduced by two orthogonal developments. The first involves recent developments in the field of automated image analysis using deep Convolutional Neural Networks (CNNs), which have dramatically increased the classification accuracy for a wide range of images [89,90]. The second involves the opportunity to complement the RGB camera used here with multi-spectral or fluorescence cameras [91]. In this case, the additional spectral information could improve precision and accuracy when distinguishing visually overlapping categories.

5. Conclusions

Overall, automated quantification of the relative abundance of coral reef functional groups, over time and space in the GBR and CSCMR, resulted in a relatively low error (2%–12% among labels) when compared to the variability of benthic abundance estimations among multiple human annotators (1%–7% among labels). While limitations apply to the interpretation of these semi-automatically generated data, overall spatio-temporal changes in benthic community structure can be captured by this technology. Acknowledging the limitations previously discussed, this approach allows the scaling up of coral reef assessments by capturing underwater observations over large extents (2 km transects) and processing such observations (images) at much faster rates than manual image analysis (about 170 images·h−1). Data generated in this way can also contribute to more geographically comprehensive and integrative approaches (e.g., [27,92]) to improve assessment of the functionality and temporal variability of reef systems at regional scales (10–10,000 km2) and provide important and informed management advice [93].

Acknowledgments

The study was conducted as part of the “XL Catlin Seaview Survey” funded by XL Catlin, with support from the Global Change Institute (Ove Hoegh-Guldberg and Manuel González-Rivero). Funding was also provided by the Australian Research Council (ARC Laureate to Ove Hoegh-Guldberg), an Early Career Grant at the University of Queensland (Manuel González-Rivero), the Waitt Foundation (Manuel González-Rivero and Ove Hoegh-Guldberg) and the EMBC+ programme at Ghent University (Yeray González-Marrero). The authors would like to thank the XL Catlin Seaview Survey, Global Change Institute, Underwater Earth and Waitt Foundation teams for their essential support in field operations. Special thanks to Abbie Taylor and Eugenia Sampayo for their support in annotations of a large number of images for this exercise, and to Sara Naylor, Rachael Hazell and the four anonymous reviewers for their editorial comments on the manuscript.

Author Contributions

Manuel González-Rivero and Oscar Beijbom conceived and designed the study and analyses. Manuel González-Rivero, Oscar Beijbom, Alberto Rodriguez-Ramirez, Anjani Ganase and Yeray González-Marrero contributed towards image processing and analysis. Manuel González-Rivero, Oscar Beijbom and Tadzio Holtrop conducted data analysis and summary. Manuel González-Rivero, Oscar Beijbom, Alberto Rodriguez-Ramirez, Chris Roelfsema, Stuart Phinn and Ove Hoegh-Guldberg contributed to the writing of the manuscript.

Conflicts of Interest

The authors declare no conflict of interest.

Appendix

Defining Sampling Units by Structure Functions

Spatial autocorrelation is a common phenomenon in ecosystems and underlines the first law of geography: “Everything is related to everything else, but near things are more related than distant things” [94]. The structure function defined by this spatial correlation results from both environmental (induced) processes and biotic (inherent) processes interacting on the community [95,96]. Here we described the relation between the spatial structure of each transect and the inter-image distance by means of structure functions [93,94] where autocorrelation is quantified by the Mantel statistic Equation (A1) [95]:

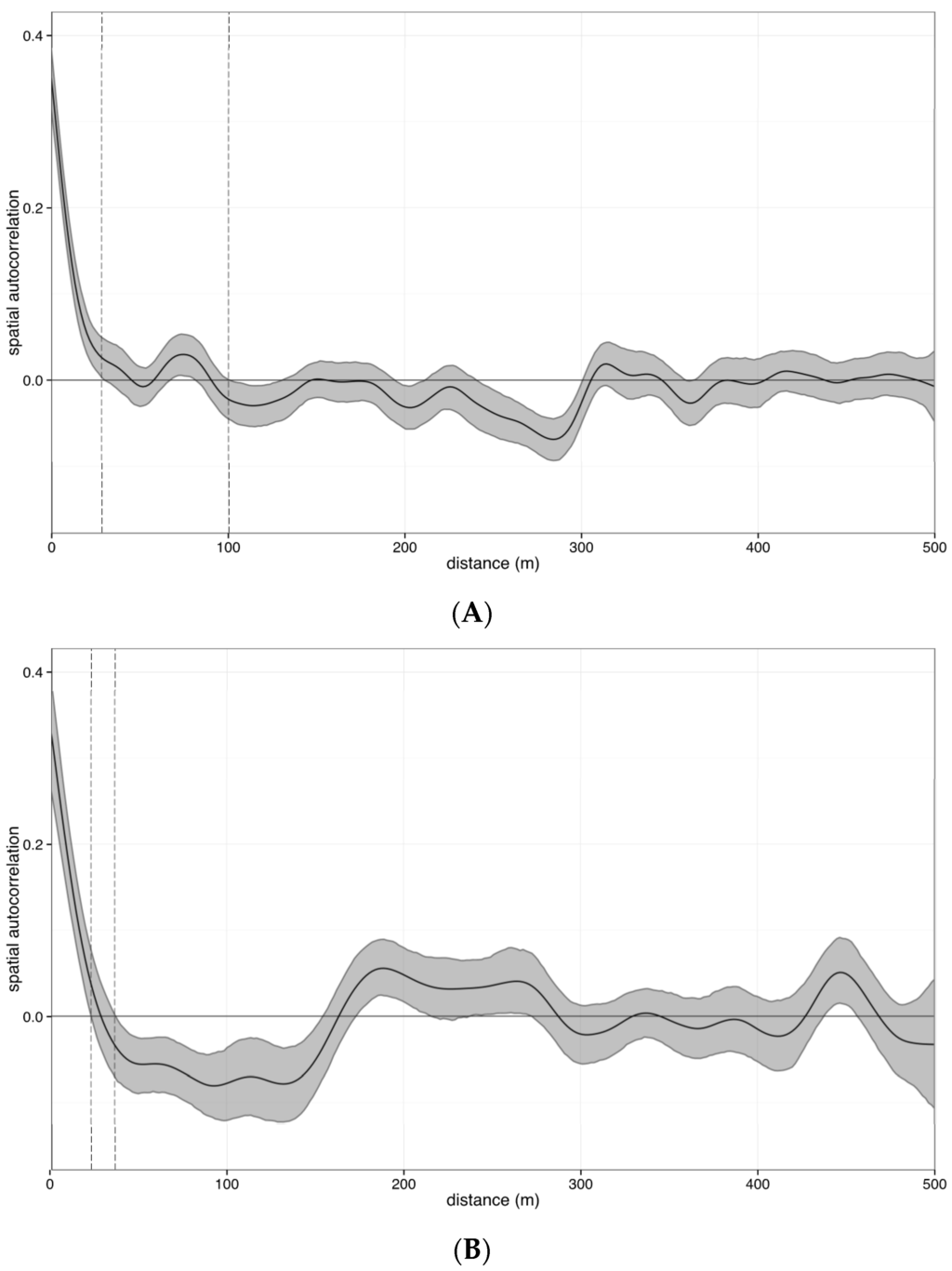

where Zm is the Mantel statistic; wij is the Euclidean distance (geographic) matrix among n images per transect; and xij is the Hellinger distance (community) matrix among images. Hellinger distance was calculated as the Euclidean distance of Hellinger transformed benthic coverage per image [97]. To compute a Mantel correlogram, the matrix wij was converted to a connectivity distance class matrix. A spline correlogram was then used to describe the structure function of the Mantel index as a function of continuous distance, allowing (1) a continuous estimate of the spatial covariance function instead of a discretised approximation like correlograms; and (2) a confidence envelope calculated around the spline using a bootstrap algorithm [98]. Large-scale spatial trends in the data were minimised, or de-trended, by identifying significant spatial trends associated with environmental gradients using polynomial regressions of all variables (benthic cover of 19 categories) on the X-Y coordinates [99]. For the de-trending of data, residuals from selected polynomial regression models (step-wise selection) were used to construct the correlograms. The structure of spatial autocorrelation was calculated individually for each surveyed transect, and the x-intercept of the spline and bootstrap confidence interval envelope was used to estimate the spatial range of benthic communities.
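As an illustration of the quantities defined above, the following is a minimal sketch of the Hellinger distance and of a Mantel statistic computed as the sum of cross-products wij·xij over all image pairs. The unstandardised cross-product form is assumed here because Equation (A1) is not reproduced in the text; the sketch also uses raw geographic distances rather than a distance class matrix, and omits de-trending. Function names, coordinates and cover values are hypothetical.

```python
import math

def hellinger_transform(cover):
    """Square root of the relative abundance of each category in one image."""
    total = sum(cover)
    return [math.sqrt(c / total) for c in cover]

def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def mantel_cross_product(positions, covers):
    """Sum of w_ij * x_ij over all unordered image pairs (i < j)."""
    transformed = [hellinger_transform(c) for c in covers]
    n = len(positions)
    z = 0.0
    for i in range(n - 1):
        for j in range(i + 1, n):
            w_ij = euclidean(positions[i], positions[j])      # geographic distance
            x_ij = euclidean(transformed[i], transformed[j])  # Hellinger distance
            z += w_ij * x_ij
    return z

# Hypothetical transect: image coordinates (m) and cover of two categories (%)
positions = [(0.0, 0.0), (3.0, 4.0), (6.0, 8.0)]
covers = [[100.0, 0.0], [0.0, 100.0], [0.0, 100.0]]

z_m = mantel_cross_product(positions, covers)
```

In practice, the significance of such a statistic is usually assessed by permuting one of the two matrices; that step is left out of this sketch.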

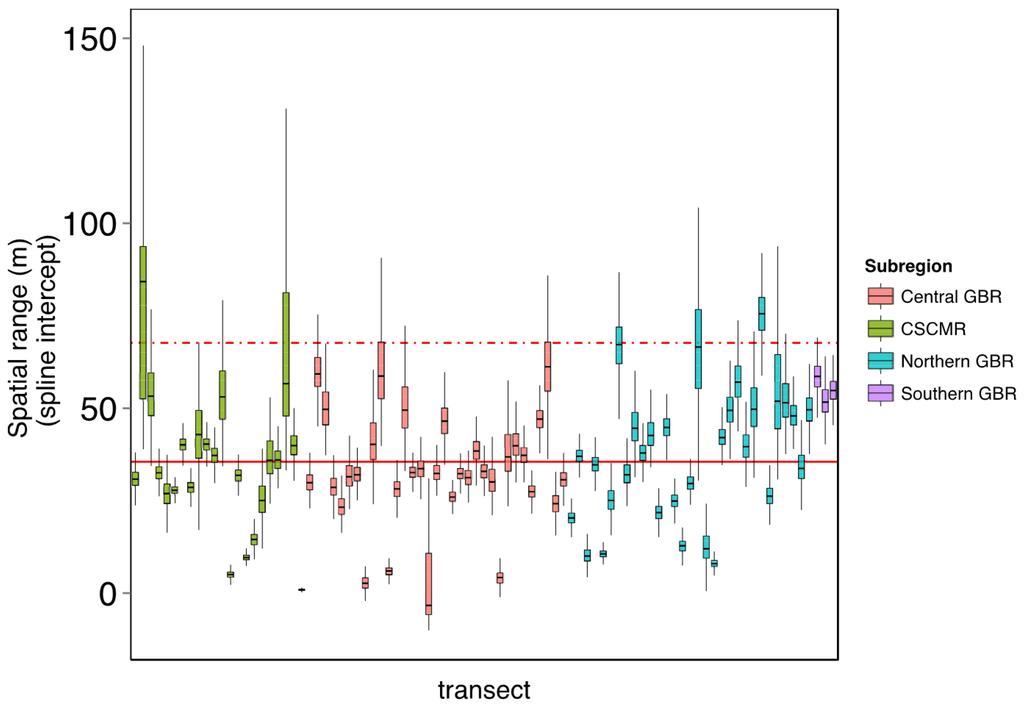

On average, the scale of patterns of benthic assemblages in the GBR and CSCMR varied between 30 and 170 m along each transect (Figure A1). Within this range, the spatial autocorrelation was the highest and community composition was more homogeneous, therefore representing an ideal sampling size to better describe the attributes of community assembly. Here we use the upper limit of the confidence interval around the mean across 107 transects (70 m) as the size threshold of the sampling unit for evaluating the performance of automated annotations in describing the benthic structure of coral reefs in the GBR and the Coral Sea (Figure A2).
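The idea of reading the spatial range from the x-intercept of a correlogram can be sketched as follows. This is a simplified stand-in that interpolates linearly between sampled points, whereas the analysis described here used a fitted spline with a bootstrap confidence envelope. The function name and the correlation values are hypothetical.

```python
def spatial_range(distances, correlations):
    """Distance at which the correlation curve first falls to zero.

    Uses linear interpolation between consecutive points and returns None
    when the curve does not cross zero within the sampled distances.
    """
    pairs = list(zip(distances, correlations))
    for (d0, r0), (d1, r1) in zip(pairs, pairs[1:]):
        if r0 > 0 and r1 <= 0:
            return d0 + (d1 - d0) * r0 / (r0 - r1)
    return None

# Hypothetical correlogram: inter-image distance (m) and Mantel correlation
distances = [0.0, 40.0, 80.0]
correlations = [0.5, 0.25, -0.25]

range_m = spatial_range(distances, correlations)
```

Repeating this calculation over bootstrap resamples of a transect would give a distribution of intercepts, similar in spirit to the box-plots summarised in Figure A2.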

Figure A1.

Examples of multivariate spatial autocorrelation of benthic communities in the GBR and CSCMR. These correlograms show the spatial autocorrelation structure of two transects as an example of the spatial structure of reef benthic assemblages with (A) large and (B) small spatial range. Autocorrelation is calculated by Mantel correlation index of spatially de-trended data as a function of linear distance between sample points. The line represents the fitted spline after 1000 permutations of the data and the shaded polygon shows the standard deviation of these permutations. Vertical dotted lines represent the range of the standard deviation where the splines intercept the x axis, or the range of distance between sampling points from where spatial autocorrelation is minimal (spatial range).

Figure A2.

Spatial range (patch size) of benthic community assemblages across the GBR and CSCMR using 107 automatically annotated transects (see main manuscript for details). Here, the spatial range has been defined by the x-intercept of spatial autocorrelation splines, the point where the correlation between community distance and geographic distance becomes minimal. Each box-plot represents the outputs of 1000 bootstrap simulations of the autocorrelation spline for each transect, colour-coded according to the geographic location. The horizontal solid line shows the overall mean of the spline intercept and the dotted line represents the upper 95th percentile across the dataset.

References

- Hughes, T.P.; Graham, N.A.J.; Jackson, J.B.C.; Mumby, P.J.; Steneck, R.S. Rising to the challenge of sustaining coral reef resilience. Trends Ecol. Evol. 2010, 25, 633–642.

- Knowlton, N.; Jackson, J.B.C. Shifting baselines, local impacts, and global change on coral reefs. PLoS Biol. 2008, 6.

- Fabricius, K.E.; Okaji, K.; De’ath, G. Three lines of evidence to link outbreaks of the crown-of-thorns seastar acanthaster planci to the release of larval food limitation. Coral Reefs 2010, 29, 593–605.

- Baker, A.C.; Glynn, P.W.; Riegl, B. Climate change and coral reef bleaching: An ecological assessment of long-term impacts, recovery trends and future outlook. Estuar Coast. Shelf Sci. 2008, 80, 435–471.

- Hoegh-Guldberg, O. Climate change, coral bleaching and the future of the world’s coral reefs. Mar. Freshw. Res. 1999, 50, 839–866.

- De’ath, G.; Fabricius, K.E.; Sweatman, H.; Puotinen, M. The 27-year decline of coral cover on the great barrier reef and its causes. Proc. Natl. Acad. Sci. USA 2012, 109, 17995–17999.

- Brodie, J.; Fabricius, K.; De’ath, G.; Okaji, K. Are increased nutrient inputs responsible for more outbreaks of crown-of-thorns starfish? An appraisal of the evidence. Mar. Pollut. Bull. 2005, 51, 266–278.

- Hoegh-Guldberg, O.; Cai, R.; Poloczanska, E.S.; Brewer, P.G.; Sundby, S.; Hilmi, K.; Fabry, V.J.; Jung, S. The ocean. In Climate Change 2014: Impacts, Adaptation, and Vulnerability. Part B: Regional Aspects; Barros, V.R., Field, C.B., Dokken, D.J., Mastrandrea, M.D., Mach, K.J., Bilir, T.E., Chatterjee, M., Ebi, K.L., Estrada, Y.O., Genova, R.C., et al., Eds.; Cambridge University Press: Cambridge, UK, 2014; pp. 1655–1734.

- Gattuso, J.P.; Magnan, A.; Billé, R.; Cheung, W.; Howes, E.; Joos, F.; Allemand, D.; Bopp, L.; Cooley, S.; Eakin, C. Contrasting futures for ocean and society from different anthropogenic CO2 emissions scenarios. Science 2015, 349.

- Gardner, T.A.; Cote, I.M.; Gill, J.A.; Grant, A.; Watkinson, A.R. Long-term region-wide declines in caribbean corals. Science 2003, 301, 958–960.

- Schutte, V.G.; Selig, E.R.; Bruno, J.F. Regional spatio-temporal trends in caribbean coral reef benthic communities. Mar. Ecol. Prog. Ser. 2010, 402, 115–122.

- Hughes, T.; Baird, A.; Dinsdale, E.; Harriott, V.; Moltschaniwskyj, N.; Pratchett, M.; Tanner, J.; Willis, B. Detecting regional variation using meta-analysis and large-scale sampling: Latitudinal patterns in recruitment. Ecology 2002, 83, 436–451.

- Parmesan, C.; Yohe, G. A globally coherent fingerprint of climate change impacts across natural systems. Nature 2003, 421, 37–42.

- Côté, I.; Gill, J.; Gardner, T.; Watkinson, A. Measuring coral reef decline through meta-analyses. Philos. Trans. R. Soc. B Biol. Sci. 2005, 360, 385–395.

- Scopélitis, J.; Andréfouët, S.; Largouët, C. Modelling coral reef habitat trajectories: Evaluation of an integrated timed automata and remote sensing approach. Ecol. Model. 2007, 205, 59–80.

- Mumby, P.J.; Hastings, A.; Edwards, H.J. Thresholds and the resilience of caribbean coral reefs. Nature 2007, 450, 98–101.

- Roelfsema, C.; Phinn, S. Integrating field data with high spatial resolution multispectral satellite imagery for calibration and validation of coral reef benthic community maps. J. Appl. Remote Sens. 2010, 4.

- Mumby, P.J.; Skirving, W.; Strong, A.E.; Hardy, J.T.; LeDrew, E.F.; Hochberg, E.J.; Stumpf, R.P.; David, L.T. Remote sensing of coral reefs and their physical environment. Mar. Pollut. Bull. 2004, 48, 219–228.

- Hedley, J.D.; Roelfsema, C.M.; Phinn, S.R.; Mumby, P.J. Environmental and sensor limitations in optical remote sensing of coral reefs: Implications for monitoring and sensor design. Remote Sens. 2012, 4, 271–302.

- Wooldridge, S.; Done, T. Learning to predict large-scale coral bleaching from past events: A bayesian approach using remotely sensed data, in-situ data, and environmental proxies. Coral Reefs 2004, 23, 96–108.

- Roelfsema, C.; Phinn, S.; Udy, N.; Maxwell, P. An integrated field and remote sensing approach for mapping seagrass cover, moreton bay, australia. J. Spat. Sci. 2009, 54, 45–62.

- Carleton, J.H.; Done, T.J. Quantitative video sampling of coral reef benthos: Large-scale application. Coral Reefs 1995, 14, 35–46.

- Ninio, R.; Delean, J.; Osborne, K.; Sweatman, H. Estimating cover of benthic organisms from underwater video images: Variability associated with multiple observers. Mar. Ecol. Prog. Ser. 2003, 265, 107–116.

- González-Rivero, M.; Bongaerts, P.; Beijbom, O.; Pizarro, O.; Friedman, A.; Rodriguez-Ramirez, A.; Upcroft, B.; Laffoley, D.; Kline, D.; Bailhache, C.; et al. The Catlin Seaview Survey—Kilometre-scale seascape assessment, and monitoring of coral reef ecosystems. Aquat. Conserv. Mar. Freshw. Ecosyst. 2014, 24, 184–198.

- Williams, S.B.; Pizarro, O.; Webster, J.M.; Beaman, R.J.; Mahon, I.; Johnson-Roberson, M.; Bridge, T.C. Autonomous underwater vehicle–assisted surveying of drowned reefs on the shelf edge of the Great Barrier Reef, Australia. J. Field Robot. 2010, 27, 675–697.

- Armstrong, R.A.; Singh, H.; Torres, J.; Nemeth, R.S.; Can, A.; Roman, C.; Eustice, R.; Riggs, L.; Garcia-Moliner, G. Characterizing the deep insular shelf coral reef habitat of the hind bank marine conservation district (US Virgin islands) using the seabed autonomous underwater vehicle. Cont. Shelf Res. 2006, 26, 194–205.

- Roelfsema, C.; Lyons, M.; Dunbabin, M.; Kovacs, E.M.; Phinn, S. Integrating field survey data with satellite image data to improve shallow water seagrass maps: The role of auv and snorkeller surveys? Remote Sens. Lett. 2015, 6, 135–144.

- Mountrakis, G.; Im, J.; Ogole, C. Support vector machines in remote sensing: A review. ISPRS J. Photogram. Remote Sens. 2011, 66, 247–259.

- Culverhouse, P.F.; Williams, R.; Benfield, M.; Flood, P.R.; Sell, A.F.; Mazzocchi, M.G.; Buttino, I.; Sieracki, M. Automatic image analysis of plankton: Future perspectives. Mar. Ecol. Prog. Ser. 2006, 312, 297–309.

- Beijbom, O.; Edmunds, P.J.; Roelfsema, C.; Smith, J.; Kline, D.I.; Neal, B.P.; Dunlap, M.J.; Moriarty, V.; Fan, T.-Y.; Tan, C.-J. Towards automated annotation of benthic survey images: Variability of human experts and operational modes of automation. PLoS ONE 2015, 10.

- Gleason, A.C.R.; Reid, R.P.; Voss, K.J. Automated classification of underwater multispectral imagery for coral reef monitoring. In Proceedings of the 2007 OCEANS, Vancouver, BC, USA, 29 September–4 October 2007; pp. 1–8.

- Marcos, M.S.A.; Soriano, M.; Saloma, C. Classification of coral reef images from underwater video using neural networks. Opt. Express 2005, 13, 8766–8771.

- Friedman, A.; Pizarro, O.; Williams, S.B. Rugosity, slope and aspect from bathymetric stereo image reconstructions. In Proceedings of the 2010 IEEE OCEANS, Sydney, NSW, Australia, 24–27 May 2010; pp. 1–9.

- Leon, J.X.; Roelfsema, C.M.; Saunders, M.I.; Phinn, S.R. Measuring coral reef terrain roughness using “Structure-from-Motion” close-range photogrammetry. Geomorphology 2015, 242, 21–28.

- Boom, B.J.; He, J.; Palazzo, S.; Huang, P.X.; Beyan, C.; Chou, H.M.; Lin, F.P.; Spampinato, C.; Fisher, R.B. A research tool for long-term and continuous analysis of fish assemblage in coral-reefs using underwater camera footage. Ecol. Inform. 2014, 23, 83–97.

- Shihavuddin, A.; Gracias, N.; Garcia, R.; Gleason, A.C.; Gintert, B. Image-based coral reef classification and thematic mapping. Remote Sens. 2013, 5, 1809–1841.

- Pizarro, O.; Rigby, P.; Johnson-Roberson, M.; Williams, S.B.; Colquhoun, J. Towards image-based marine habitat classification. In Proceedings of the 2008 OCEANS, Quebec City, QC, Canada, 15–18 September 2008; pp. 1–7.

- Beijbom, O.; Edmunds, P.J.; Kline, D.I.; Mitchell, B.G.; Kriegman, D. Automated annotation of coral reef survey images. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Providence, RI, USA, 16–21 June 2012; pp. 1170–1177.

- Wallace, C. Staghorn Corals of the World: A Revision of the Genus Acropora; CSIRO Publishing: Clayton, VIC, Australia, 1999.

- Althaus, F.; Hill, N.; Ferrari, R.; Edwards, L.; Przeslawski, R.; Schönberg, C.H.L.; Stuart-Smith, R.; Barrett, N.; Edgar, G.; Colquhoun, J.; et al. A standardised vocabulary for identifying benthic biota and substrata from underwater imagery: The catami classification scheme. PLoS ONE 2015, 10.

- Graham, N.A.J.; Nash, K.L. The importance of structural complexity in coral reef ecosystems. Coral Reefs 2013, 32, 315–326.

- Vytopil, E.; Willis, B. Epifaunal community structure in Acropora spp. (Scleractinia) on the Great Barrier Reef: Implications of coral morphology and habitat complexity. Coral Reefs 2001, 20, 281–288.

- Van Woesik, R.; Done, T.J. Coral communities and reef growth in the southern great barrier reef. Coral Reefs 1997, 16, 103–115.

- Marshall, P.A.; Baird, A.H. Bleaching of corals on the great barrier reef: Differential susceptibilities among taxa. Coral Reefs 2000, 19, 155–163.

- Richmond, R.H.; Hunter, C.L. Reproduction and recruitment of corals: Comparisons among the Caribbean, the Tropical Pacific, and the Red Sea. Mar. Ecol. Prog. Ser. Oldendorf. 1990, 60, 185–203.

- Darling, E.S.; Alvarez-Filip, L.; Oliver, T.A.; McClanahan, T.R.; Côté, I.M. Evaluating life-history strategies of reef corals from species traits. Ecol. Lett. 2012, 15, 1378–1386.

- Madin, J. Mechanical limitations of reef corals during hydrodynamic disturbances. Coral Reefs 2005, 24, 630–635.

- Hughes, T.P.; Connell, J.H. Multiple stressors on coral reefs: A long-term perspective. Limnol. Oceanogr. 1999, 44, 932–940.

- Madin, J.S.; Baird, A.H.; Dornelas, M.; Connolly, S.R. Mechanical vulnerability explains size-dependent mortality of reef corals. Ecol. Lett. 2014, 17, 1008–1015.

- Bruno, J.F.; Selig, E.R. Regional decline of coral cover in the indo-pacific: Timing, extent, and subregional comparisons. PLoS ONE 2007, 2.

- Wooldridge, S. Differential thermal bleaching susceptibilities amongst coral taxa: Re-posing the role of the host. Coral Reefs 2014, 33, 15–27.

- Willis, B.L.; Page, C.A.; Dinsdale, E.A. Coral disease on the Great Barrier Reef. In Coral Health and Disease; Springer: Berlin, Germany, 2014; pp. 69–104.

- Guest, J.R.; Baird, A.H.; Maynard, J.A.; Muttaqin, E.; Edwards, A.J.; Campbell, S.J.; Yewdall, K.; Affendi, Y.A.; Chou, L.M. Contrasting patterns of coral bleaching susceptibility in 2010 suggest an adaptive response to thermal stress. PLoS ONE 2012, 7.

- Kolinski, S.P.; Cox, E.F. An update on modes and timing of gamete and planula release in Hawaiian scleractinian corals with implications for conservation and management. Pac. Sci. 2003, 57, 17–27.

- Chisholm, J.R. Primary productivity of reef-building crustose coralline algae. Limnol. Oceanogr. 2003, 48, 1376–1387.

- Chisholm, J.R.M. Calcification by crustose coralline algae on the northern great barrier reef, Australia. Limnol. Oceanogr. 2000, 45, 1476–1484.

- Harrington, L.; Fabricius, K.; De’Ath, G.; Negri, A. Recognition and selection of settlement substrata determine post-settlement survival in corals. Ecology 2004, 85, 3428–3437.

- Heyward, A.J.; Negri, A.P. Natural inducers for coral larval metamorphosis. Coral Reefs 1999, 18, 273–279.

- Diaz-Pulido, G. Macroalgae. In The Great Barrier Reef: Biology, Environment and Management; Hutchings, P., Kingsford, M.J., Hoegh-Guldberg, O., Eds.; CSIRO Publishing: Clayton, VIC, Australia, 2008; pp. 145–155. [Google Scholar]

- Done, T.J. Effects of tropical cyclone waves on ecological and geomorphological structures on the Great Barrier Reef. Cont. Shelf Res. 1992, 12, 859–872. [Google Scholar] [CrossRef]

- Hughes, T.P. Catastrophes, phase-shifts, and large-scale degradation of a caribbean coral reef. Science 1994, 265, 1547–1551. [Google Scholar] [CrossRef] [PubMed]

- Schaffelke, B.; Klumpp, D. Biomass and productivity of tropical macroalgae on three nearshore fringing reefs in the central Great Barrier Reef, Australia. Bot. Mar. 1997, 40, 373–384. [Google Scholar] [CrossRef]

- Hatcher, B.; Larkum, A. An experimental analysis of factors controlling the standing crop of the epilithic algal community on a coral reef. J. Exp. Mar. Biol. Ecol. 1983, 69, 61–84. [Google Scholar] [CrossRef]

- Hatcher, B.G. Coral reef primary productivity: A beggar’s banquet. Trends Ecol. Evol. 1988, 3, 106–111. [Google Scholar] [CrossRef]

- Klumpp, D.D.; McKinnon, D.A. Community structure, biomass and productivity of epilithic algal communities on the Great Barrier Reef: Dynamics at different spatial scales. Mar. Ecol. Prog. Ser. 1992, 86, 77–89.

- Larkum, A.; Kennedy, I.; Muller, W. Nitrogen fixation on a coral reef. Mar. Biol. 1988, 98, 143–155.

- Alderslade, P.P.; Fabricius, K.K. Octocorals. In The Great Barrier Reef: Biology, Environment and Management; Hutchings, P., Kingsford, M.J., Hoegh-Guldberg, O., Eds.; CSIRO Publishing: Clayton, VIC, Australia, 2008; pp. 222–245.

- Fabricius, K.K.; Alderslade, P.P. Soft Corals and Sea Fans: A Comprehensive Guide to the Tropical Shallow Water Genera of the Central-West Pacific, the Indian Ocean and the Red Sea; Australian Institute of Marine Science (AIMS): Cape Ferguson, QLD, Australia, 2001.

- Kahng, S.E.; Benayahu, Y.; Lasker, H.R. Sexual reproduction in octocorals. Mar. Ecol. Prog. Ser. 2011, 443, 265–283.

- Van Oppen, M.J.H.; Mieog, J.C.; Sanchez, C.A.; Fabricius, K.E. Diversity of algal endosymbionts (zooxanthellae) in octocorals: The roles of geography and host relationships. Mol. Ecol. 2005, 14, 2403–2417.

- Pante, E.; Dustan, P. Getting to the point: Accuracy of point count in monitoring ecosystem change. J. Mar. Biol. 2012, 2012.

- Beijbom, O.; Chan, S.; Sampat, D.; Hu, A.; Sandvik, J.; Kriegman, D.; Belongie, S.; Kline, D.I.; Treibitz, T.; Neal, B.; et al. CoralNet. Available online: http://www.coralnet.ucsd.edu (accessed on 1 June 2014).

- Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297.

- Fan, R.-E.; Chang, K.-W.; Hsieh, C.-J.; Wang, X.-R.; Lin, C.-J. LIBLINEAR: A library for large linear classification. J. Mach. Learn. Res. 2008, 9, 1871–1874.

- Beijbom, O.; Edmunds, P.J.; Kline, D.I.; Mitchell, B.G.; Kriegman, D. Moorea Labeled Corals. Available online: http://vision.ucsd.edu/content/moorea-labeled-corals (accessed on 15 October 2014).

- LIBLINEAR: A Library for Large Linear Classification. Available online: https://www.csie.ntu.edu.tw/~cjlin/liblinear/ (accessed on 20 October 2014).

- Levin, S.A. The problem of pattern and scale in ecology: The Robert H. MacArthur award lecture. Ecology 1992, 73, 1943–1967.

- Habeeb, R.L.; Johnson, C.R.; Wotherspoon, S.; Mumby, P.J. Optimal scales to observe habitat dynamics: A coral reef example. Ecol. Appl. 2007, 17, 641–647.

- Rietkerk, M.; van de Koppel, J. Regular pattern formation in real ecosystems. Trends Ecol. Evol. 2008, 23, 169–175.

- Wiens, J.A. Spatial scaling in ecology. Funct. Ecol. 1989, 3, 385–397.

- Wickham, H. ggplot2: Elegant Graphics for Data Analysis; Springer: Berlin, Germany, 2009.

- Magurran, A.E. Measuring biological diversity. Afr. J. Aquat. Sci. 2004, 29, 285–286.

- Ninio, R.; Meekan, M. Spatial patterns in benthic communities and the dynamics of a mosaic ecosystem on the Great Barrier Reef, Australia. Coral Reefs 2002, 21, 95–104.

- Jupiter, S.; Roff, G.; Marion, G.; Henderson, M.; Schrameyer, V.; McCulloch, M.; Hoegh-Guldberg, O. Linkages between coral assemblages and coral proxies of terrestrial exposure along a cross-shelf gradient on the southern Great Barrier Reef. Coral Reefs 2008, 27, 887–903.

- Colwell, R.K. Biodiversity: Concepts, patterns, and measurement. In The Princeton Guide to Ecology; Princeton University Press: Princeton, NJ, USA, 2009; pp. 257–263.

- Faith, D.P.; Minchin, P.R.; Belbin, L. Compositional dissimilarity as a robust measure of ecological distance. Vegetatio 1987, 69, 57–68.

- Done, T.J. Patterns in the distribution of coral communities across the central Great Barrier Reef. Coral Reefs 1982, 1, 95–107.

- Todd, P.A. Morphological plasticity in scleractinian corals. Biol. Rev. 2008, 83, 315–337.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. In Proceedings of the 26th Annual Conference on Neural Information Processing Systems, Lake Tahoe, NV, USA, 3–6 December 2012; pp. 1097–1105.

- Girshick, R.; Donahue, J.; Darrell, T.; Malik, J. Rich feature hierarchies for accurate object detection and semantic segmentation. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Columbus, OH, USA, 23–28 June 2014; pp. 580–587.

- Treibitz, T.; Neal, B.P.; Kline, D.I.; Beijbom, O.; Roberts, P.L.; Mitchell, B.G.; Kriegman, D. Wide field-of-view fluorescence imaging of coral reefs. Sci. Rep. 2015, 5, 1–9.

- Mumby, P.J. Stratifying herbivore fisheries by habitat to avoid ecosystem overfishing of coral reefs. Aquac. Fish. Fish Sci. 2014.

- Beger, M.; McGowan, J.; Treml, E.A.; Green, A.L.; White, A.T.; Wolff, N.H.; Klein, C.J.; Mumby, P.J.; Possingham, H.P. Integrating regional conservation priorities for multiple objectives into national policy. Nat. Commun. 2015, 6.

- Tobler, W.R. A computer movie simulating urban growth in the Detroit Region. Econ. Geogr. 1970, 46, 234–240.

- Dale, M.R.; Fortin, M.J. Spatial Analysis: A Guide for Ecologists; Cambridge University Press: Cambridge, UK, 2014.

- Fortin, M.J.; Dale, M.R. Spatial autocorrelation in ecological studies: A legacy of solutions and myths. Geogr. Anal. 2009, 41, 392–397.

- Legendre, P.; Gallagher, E.D. Ecologically meaningful transformations for ordination of species data. Oecologia 2001, 129, 271–280.

- Bjørnstad, O.N.; Falck, W. Nonparametric spatial covariance functions: Estimation and testing. Environ. Ecol. Stat. 2001, 8, 53–70.

- Borcard, D.; Gillet, F.; Legendre, P. Numerical Ecology with R; Springer: Berlin, Germany, 2011.

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).