5.1. Model Transferability

The transferability of deep learning models in remote sensing applications remains a significant challenge [60]. The success of model transfer is largely dependent on the model’s ability to adapt to different environmental conditions, particularly in regions with distinct climatic conditions, topographic structures, and cropping systems [61]. Such environmental variability can cause shifts in crop spectral responses and spatial distribution patterns across regions and years, which may adversely affect model performance when applied to unseen domains [62].

To evaluate the model’s generalization ability across temporal and spatial dimensions, cross-year and cross-region transfer experiments were designed in this study. Based on the comparative analysis of monthly classification performance (Table 6), August exhibited relatively stable and superior identification performance in the main study area. Moreover, cotton is in its middle-to-late growth stage in August, when the spectral differences among classes are relatively distinct, which favors stable discrimination. Therefore, August imagery was selected as the transfer evaluation window in this study.

In the within-region cross-year experiment, the overall performance remained stable (mIoU = 82.81%, F1 = 89.41%), demonstrating good temporal generalization. In the cross-region transfer experiment, performance decreased relative to the within-region cross-year setting (mIoU = 74.56%, F1 = 86.12%; drops of 8.25 and 3.29 percentage points, respectively), but the overall identification capability remained at a relatively high level, indicating that the model retained reasonably strong transfer capability under inter-domain differences.

The ablation experiments further revealed the functions of different modules in transfer scenarios. In the within-region cross-year ablation experiment, removing the Explainer module led to decreases of 1.44% in mIoU, 0.95% in Recall, and 0.69% in F1-score, suggesting that this module contributes to more complete detection of cotton field areas. After removing the WTConv module, mIoU decreased by 0.80%, Recall by 0.73%, and Precision by 0.85%, the largest of the three declines, suggesting that WTConv has advantages in enhancing spatial detail representation and boundary discrimination.

In the cross-region transfer experiment, the difference between the two modules became more pronounced. After removing the Explainer module, mIoU and F1-score decreased by 1.25% and 1.02%, respectively, while Recall declined by 1.36%, suggesting that under inter-domain distribution shifts, the module's channel selection and redundancy suppression mechanism may help alleviate the instability caused by feature shifts. In contrast, after removing the WTConv module, Precision declined markedly by 1.85%, whereas Recall decreased by only 0.52%, suggesting that this module plays a more important role in controlling cross-region misclassification and confusion from complex backgrounds.

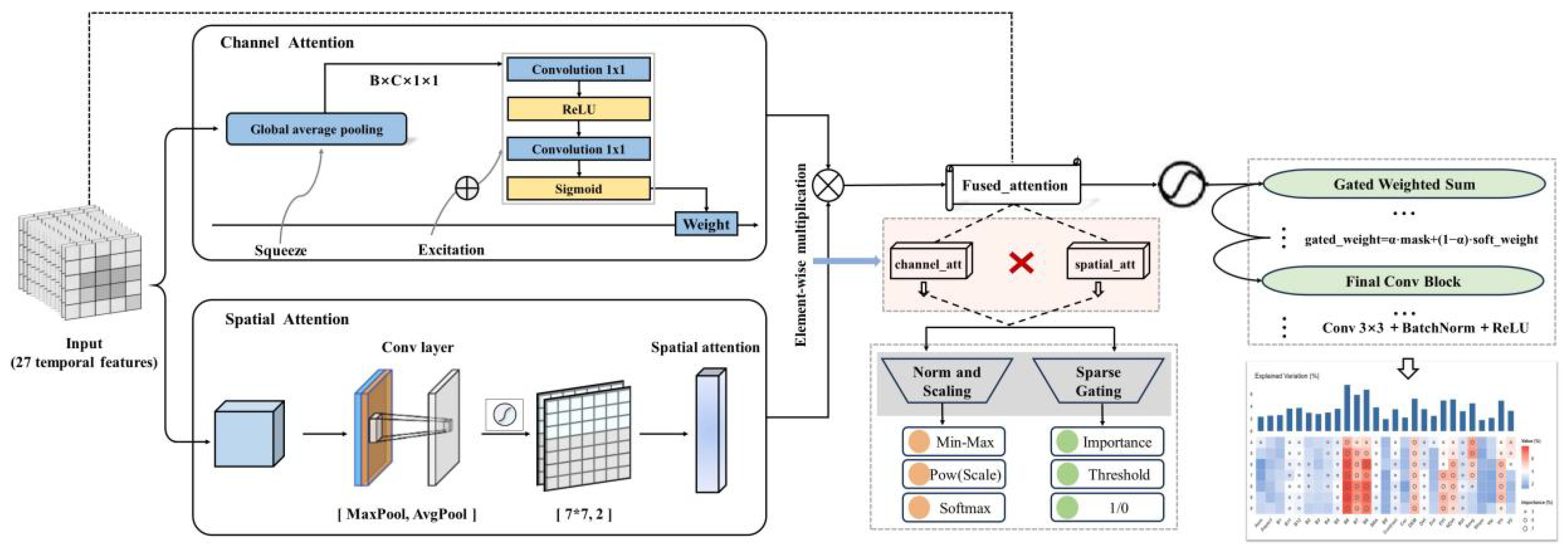

Overall, the two modules play distinct roles under transfer scenarios. The Explainer module dynamically reweights the input channels during forward propagation, which may help suppress redundant features and improve the stability of discriminative representations under domain shifts. In contrast, the WTConv module enhances boundary and detail representation through frequency-domain spatial modeling. Together, these two modules form a complementary mechanism that helps the model maintain a more consistent discriminative feature structure in cross-year and cross-region transfer tasks.
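The channel reweighting described above can be illustrated with a minimal sketch. It is not the Explainer module itself: the gating form (global average pooling, a linear map, and a sigmoid) and the names `channel_gates`, `reweight`, `weight`, and `bias` are assumptions made for illustration only.

```python
import numpy as np

def channel_gates(x, weight, bias):
    """Return one gate in (0, 1) per input channel.

    x      : (C, H, W) stack of input channels for one image patch
    weight : (C, C) hypothetical learnable matrix (assumption)
    bias   : (C,)   hypothetical learnable offsets (assumption)
    """
    pooled = x.mean(axis=(1, 2))           # per-channel summary of this input
    logits = weight @ pooled + bias        # gates depend on the input itself
    return 1.0 / (1.0 + np.exp(-logits))   # sigmoid keeps gates in (0, 1)

def reweight(x, weight, bias):
    """Scale every channel of x by its gate; also return the gates."""
    gates = channel_gates(x, weight, bias)
    return x * gates[:, None, None], gates

# Toy patch with three channels whose means are 4, 0 and -4.
x = np.stack([np.full((2, 2), 4.0), np.zeros((2, 2)), np.full((2, 2), -4.0)])
weighted, gates = reweight(x, np.eye(3), np.zeros(3))
```

Because the gates are computed from the input, a channel whose statistics shift between domains receives a different weight at inference time, which is the kind of behavior the text attributes to dynamic reweighting.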

To further validate the effectiveness of the proposed method, we compared the performance of the proposed model with that of the baseline U-Net model in transfer tasks. In the within-region cross-year experiment, the proposed model (mIoU = 82.81%, F1-score = 89.41%) outperformed U-Net (mIoU = 81.17%, F1-score = 88.02%). In the cross-region experiment, the proposed model (mIoU = 74.56%, F1-score = 86.12%) likewise outperformed the U-Net model (mIoU = 71.75%, F1-score = 84.39%). These experiments further support the robustness of the proposed method in cross-domain application scenarios.
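The margins implied by these figures can be checked with a few lines of arithmetic. The sketch below uses only the values reported in the text; the dictionary layout and the helper names `margin` and `transfer_drop` are illustrative assumptions.

```python
# Metrics reported in the text, in percent.
results = {
    "cross_year": {
        "proposed": {"mIoU": 82.81, "F1": 89.41},
        "unet": {"mIoU": 81.17, "F1": 88.02},
    },
    "cross_region": {
        "proposed": {"mIoU": 74.56, "F1": 86.12},
        "unet": {"mIoU": 71.75, "F1": 84.39},
    },
}

def margin(setting, metric):
    """Proposed model minus U-Net, in percentage points."""
    r = results[setting]
    return round(r["proposed"][metric] - r["unet"][metric], 2)

def transfer_drop(model, metric):
    """Cross-year score minus cross-region score, in percentage points."""
    return round(
        results["cross_year"][model][metric]
        - results["cross_region"][model][metric],
        2,
    )

for setting in results:
    print(setting, margin(setting, "mIoU"), margin(setting, "F1"))
for model in ("proposed", "unet"):
    print(model, transfer_drop(model, "mIoU"), transfer_drop(model, "F1"))
```

On these numbers, the margin over U-Net is larger in the cross-region setting than in the cross-year setting (2.81 versus 1.64 percentage points in mIoU), and the proposed model loses less mIoU than U-Net when moving from cross-year to cross-region transfer (8.25 versus 9.42 percentage points).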

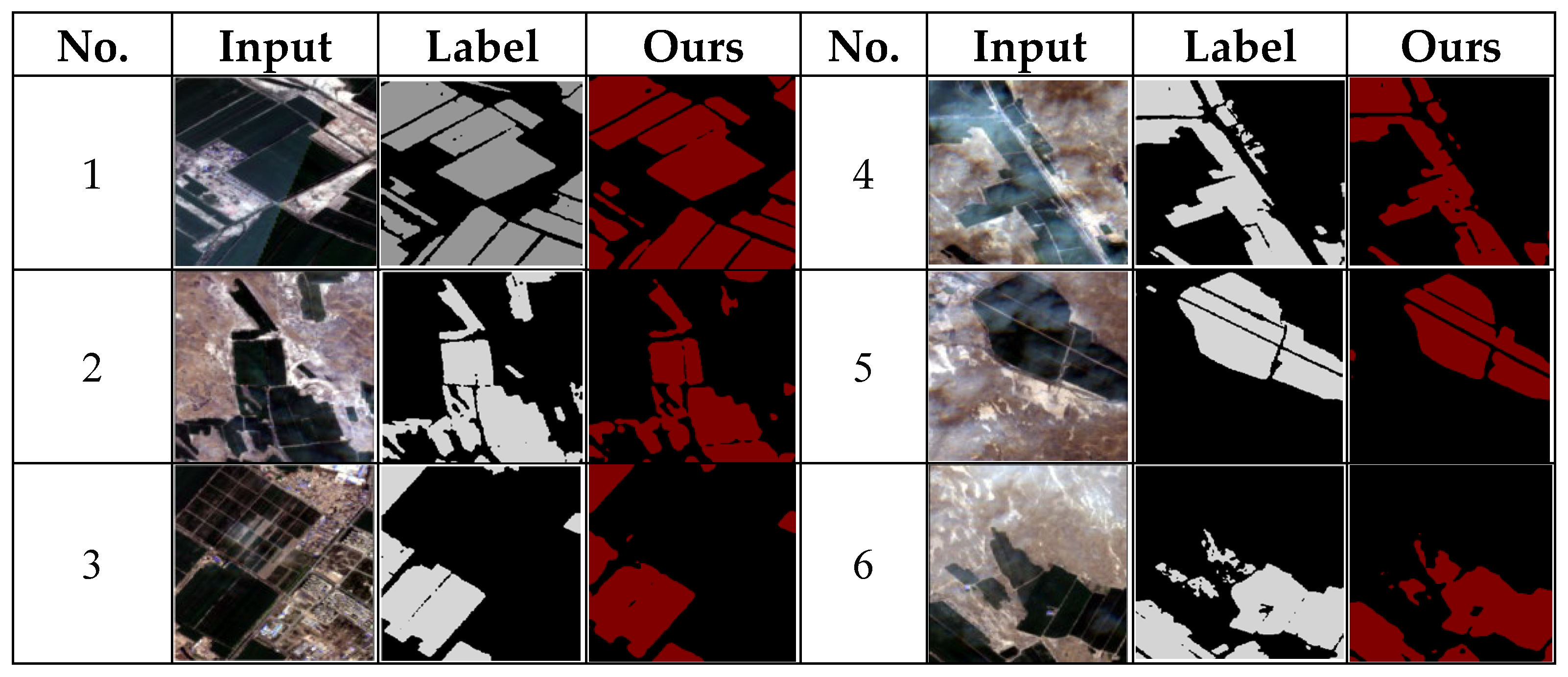

Notably, in the cross-region transfer experiment, the Precision and Recall metrics exhibited a certain degree of imbalance. This phenomenon provides important clues for understanding the error structure of the model under inter-domain distribution shifts. We systematically compared the model predictions against the original remote sensing imagery and reference labels, and diagnosed model behavior using representative qualitative cases (Figure 8). Case 1 shows that the model can stably and completely identify the core areas of cotton fields, and the predictions are highly consistent with the reference labels within the field interiors, thereby maintaining a high recall in cross-region applications. Cases 3 and 5 show that the predicted cotton field extent was generally smaller than or close to the actually identifiable cotton field extent, and no obvious outward expansion of cotton field area was observed. Cases 2, 4, and 6 show that model errors were mainly concentrated at field boundaries, crop transition zones, and areas with strong background heterogeneity, manifesting as local pixel-level confusion.

This phenomenon may be related to differences in planting structure and spatial patterns between the Wei-Ku Oasis and Manasi County. During training in the source domain, which is characterized by complex cropping structure and fragmented fields, the model gradually learned robust spectral–phenological combination features for cotton discrimination, which reduce missed detections under mixed backgrounds. When transferred to the target domain, which is dominated by large-scale and intensive cotton cultivation, this strategy helps stably identify the main body of cotton fields, but introduces a small amount of pixel-level uncertainty at field boundaries and in heterogeneous background areas, thereby resulting in a relative decline in precision.

Overall, the precision–recall imbalance in cross-region transfer is mainly manifested as local pixel-level confusion under complex boundary conditions, rather than overprediction at the overall spatial scale.
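The effect of boundary-level false positives on the two metrics can be made concrete with a toy example. The sketch below is illustrative only: the 6 × 6 masks are synthetic, `binary_metrics` is a hypothetical helper, and it reports the IoU of the cotton class alone rather than the class-averaged mIoU used in the experiments.

```python
import numpy as np

def _safe_div(num, den):
    """Return num / den, or 0.0 when the denominator is zero."""
    return float(num) / float(den) if den else 0.0

def binary_metrics(pred, ref):
    """Precision, recall, F1 and IoU of the positive (cotton) class."""
    pred = np.asarray(pred, dtype=bool)
    ref = np.asarray(ref, dtype=bool)
    tp = np.sum(pred & ref)    # cotton predicted as cotton
    fp = np.sum(pred & ~ref)   # background predicted as cotton
    fn = np.sum(~pred & ref)   # cotton that was missed
    precision = _safe_div(tp, tp + fp)
    recall = _safe_div(tp, tp + fn)
    f1 = _safe_div(2 * precision * recall, precision + recall)
    iou = _safe_div(tp, tp + fp + fn)
    return {"precision": precision, "recall": recall, "f1": f1, "iou": iou}

# Synthetic reference: a 4 x 4 field inside a 6 x 6 tile.
ref = np.zeros((6, 6), dtype=bool)
ref[1:5, 1:5] = True

# Synthetic prediction: the whole field plus one extra row along its edge.
pred = ref.copy()
pred[0, 1:5] = True

metrics = binary_metrics(pred, ref)
print(metrics)
```

In this toy case the field interior is recovered completely, so recall stays at 1.0, while four extra boundary pixels are enough to lower precision to 0.8. This mirrors the pattern described above, in which errors confined to boundaries reduce precision without any loss of the field core.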

In summary, the transfer performance of the model depends not only on feature representation capability, but also on the stability of feature selection under inter-domain distribution shifts. By dynamically reweighting input channels during forward propagation, the Explainer module helps reduce the interference of stage-sensitive variables with discrimination results. In cross-region transfer scenarios, when shifts occur in the distributions of spectral and background structural characteristics, this mechanism helps maintain a relatively consistent feature response pattern. Therefore, the performance gains achieved in this study are reflected not only in improved accuracy metrics, but also in enhanced stability of discriminative features under inter-domain distribution shifts.

5.2. Phenology-Driven Analysis of Optimal Remote Sensing Time Windows for Cotton Identification

Phenological phenomena objectively reflect plants’ responses and adaptability to environmental conditions during growth and development [63], mainly manifested as advances or delays in crop phenology and changes in the duration of developmental stages. These variations are of great significance for monitoring and evaluating crop growth status [64]. The “optimal timing” for crop identification generally depends on the phenological cycle and seasonal rhythm of crops in the study area [65], which in turn determines the optimal temporal acquisition window for remote-sensing imagery. As shown in Table 5, August and September are critical periods for remote-sensing identification of cotton planting areas in southern Xinjiang; during this time, wheat and maize in the region have largely been harvested [52], background interference is relatively low, and the salience of cotton targets is enhanced. Based on the results of the improved interpretable deep-learning model developed in this study, August is identified as the optimal acquisition time for cotton extraction in the study area, which is consistent with existing findings [66,67]. However, at the national scale, some studies argue that September [68] or June [69] is the better identification time. Climatic differences among geographic regions are an important cause of this discrepancy, leading to pronounced temporal mismatches in cotton sowing dates, growth progression, and phenological characteristics [70]. In addition, the choice of remote-sensing data sources and feature types can substantially influence the determination of the optimal time. For example, optical vegetation indices such as NDVI and EVI are more sensitive to changes in vegetation greenness from June to August [56]. By contrast, SAR imagery, especially the Sentinel-1 VH polarization channel, responds more strongly to changes in canopy spatial structure during the cotton boll-opening stage, effectively enhancing discrimination from bare land and other crops [71]. Therefore, the optimal timing for remote-sensing identification of cotton planting areas is not fixed, but is jointly determined by multiple factors, including regional phenological rhythms, sensor types, feature sensitivity, and the design of classification strategies.
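For reference, the two optical indices mentioned above can be computed as in the following sketch, which uses the standard NDVI and EVI definitions. It assumes surface reflectance scaled to the range 0 to 1 and the usual Sentinel-2 band assignment (B8 for NIR, B4 for red, B2 for blue); the function names and sample values are illustrative and do not come from the study's processing chain.

```python
import numpy as np

def ndvi(nir, red):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    return (nir - red) / (nir + red)

def evi(nir, red, blue):
    """Enhanced Vegetation Index with the standard coefficients.

    EVI = 2.5 * (NIR - Red) / (NIR + 6 * Red - 7.5 * Blue + 1)
    Inputs are assumed to be surface reflectance in the range 0 to 1.
    """
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    blue = np.asarray(blue, dtype=float)
    return 2.5 * (nir - red) / (nir + 6.0 * red - 7.5 * blue + 1.0)

# Illustrative reflectances: a dense green canopy versus bare soil.
canopy = {"nir": 0.50, "red": 0.10, "blue": 0.05}
soil = {"nir": 0.30, "red": 0.25, "blue": 0.15}

print(ndvi(canopy["nir"], canopy["red"]), ndvi(soil["nir"], soil["red"]))
print(evi(**canopy), evi(**soil))
```

Both indices increase with the contrast between near-infrared and red reflectance, which is why they respond to canopy greenness during the months when the canopy is closing.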

5.3. Evaluation of Multi-Source Remote Sensing Channels and Their Discriminative Value in Cotton Classification

Across the growing season, Sentinel-2 red-edge and near-infrared channels (particularly B6, followed by B8 and B7) show the highest and most persistent contributions (Figure 7). This pattern is consistent with the close association of red-edge reflectance with chlorophyll absorption-edge behavior and canopy biochemical status, thereby strengthening cotton–non-cotton contrast during key growth stages. The consistently high ranking of red-edge features, together with the importance of NIR- and SWIR-related information, aligns with prior evidence that red-edge [72], near-infrared [73], and shortwave-infrared bands [74] are among the most informative spectral domains for crop classification.

The results also suggest a stage-dependent, cross-sensor sensing mechanism. Vegetation indices (NDVI/EVI) become more influential during the mid-season, consistent with strengthened sensitivity to canopy greenness and biomass around peak growth. For SAR predictors, the relative contributions of VV and VH vary with canopy development. During April–May, VV exhibits higher importance, which is consistent with the study area’s widespread drip irrigation under plastic mulch that enhances soil/background backscatter and thus improves early-season separability [75,76,77]. As the canopy becomes denser in later vegetative and reproductive stages, VH becomes more discriminative than VV, consistent with stronger crop sensitivity in cross-polarized channels as canopy structure develops [78]. These findings indicate a seasonal shift from background-dominated to canopy-structure-dominated SAR information under single-period composites.

Topography also plays a persistent role throughout the season. The consistently non-negligible contribution of DEM suggests that terrain-related spatial organization, such as irrigation layouts and cultivation patterns constrained by relief, provides a robust spatial prior in arid irrigated landscapes, complementing spectral and SAR observations. By contrast, although GLCM contrast receives relatively high weight in some periods, its overall contribution remains limited, likely because the 10 m Sentinel-2 resolution constrains the ability of texture descriptors to capture within-field heterogeneity and fine field-boundary patterns [39,79].

These findings also help explain regional differences reported in the literature. Forkuor found that VV outperformed VH for discriminating multiple crop types in northwestern Benin, likely because rice cultivation and frequent irrigation produced smoother, wetter surfaces that enhanced VV contrast [80]. In our arid irrigated cotton system, by contrast, VV dominance is mainly confined to the early season when background and soil effects are stronger, whereas VH becomes increasingly informative as canopy structure develops. Overall, the optimal predictor set is both region- and season-dependent, highlighting the importance of stage-aware quantitative feature evaluation for robust cotton mapping.

5.4. Limitations and Future Directions

Several limitations of this study should be acknowledged. First, although we conducted a systematic monthly diagnostic of cotton classification performance across the full growing season (April–October), classification accuracy in the early growth stages (April–May) remains substantially lower than that achieved in the mid-to-late stages. This is primarily attributed to the high spectral similarity among cotton, bare soil, and other vegetation during the emergence and seedling stages, which inherently limits the information capacity of a single monthly composite derived from medium-resolution optical imagery.

Second, cross-region transfer experiments revealed that model performance declines moderately in heterogeneous transition zones and along field boundaries, manifested as pixel-level false positives and boundary uncertainties. While the proposed method maintained high recall and stable identification of core cotton areas, this precision loss reflects the inherent trade-off of learning robust spectral–phenological features in complex source domains.

Future research may address these limitations by (1) integrating multi-temporal or hyperspectral data to improve early-stage separability, and (2) incorporating boundary-aware constraints or cross-regional adaptation strategies to reduce pixel-level uncertainty in complex transitional zones, thereby further improving cross-region transfer accuracy.