4.1. Datasets

In the experiments, we adopted a stratified random sampling strategy: 5% of the labeled samples per class were randomly selected as training samples for the IP dataset, and 1% for the UP, SA, and Houston2013 datasets. The remaining samples were used for testing.

Indian Pines (IP): The Indian Pines dataset is one of the earliest test datasets for HSIC. It was acquired in 1992 by the Airborne Visible/Infrared Imaging Spectrometer (AVIRIS) over the Indian Pines test site in Indiana, USA. The image has a spatial size of 145 × 145 pixels and 200 spectral bands, and it contains 16 target categories.

Table 1 lists the category names and the number of samples per category for the Indian Pines dataset, together with the color assigned to each category in the visualization of the classification results.

Pavia University (UP): The Pavia University dataset was acquired in 2001 over the University of Pavia campus, Italy, using the Reflective Optics System Imaging Spectrometer (ROSIS). The image comprises 610 × 340 pixels with 115 spectral bands covering the wavelength range of 0.43–0.86 µm. After the removal of 12 noisy bands, the remaining 103 bands are commonly used for analysis.

Table 2 lists the category names and the number of samples per category for the Pavia University dataset, together with the color assigned to each category in the visualization of the classification results.

Salinas (SA): The Salinas hyperspectral dataset was acquired by the Airborne Visible/Infrared Imaging Spectrometer (AVIRIS) over the Salinas Valley, CA, USA. The image has dimensions of 512 × 217 pixels (totaling 111,104 pixels) and originally comprises 220 spectral bands covering a wavelength range of approximately 0.4–2.5 µm. The scene is annotated into 16 distinct land cover classes.

Table 3 lists the category names and the number of samples per category for the Salinas dataset, together with the color assigned to each category in the visualization of the classification results.

University of Houston 13 (HU): The HU dataset consists of 349 × 1905 pixels with 144 spectral channels ranging from 364 to 1046 nm and a spatial resolution of 2.5 m/pixel. In addition, the ground truth reference was subdivided into spatially disjoint subsets for training and testing, including 15 mutually exclusive urban land cover classes with 15,029 labeled pixels.

Table 4 lists the category names and the number of samples per category for the Houston 13 dataset, together with the color assigned to each category in the visualization of the classification results.
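The stratified random sampling described at the start of this subsection can be sketched as follows. This is a minimal illustration, not the authors' code: the function name `stratified_split`, the convention that label 0 marks unlabeled background, and the rule of keeping at least one training sample per class are all assumptions made for the example.

```python
import random
from collections import defaultdict


def stratified_split(labels, fraction, seed=0):
    """Split labeled pixel indices into train/test, class by class.

    labels   : flat list of integer class labels, one per pixel
    fraction : share of each class used for training (e.g. 0.05 or 0.01)
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, lab in enumerate(labels):
        if lab != 0:  # assumption: 0 marks unlabeled background pixels
            by_class[lab].append(idx)

    train, test = [], []
    for lab in sorted(by_class):
        idxs = by_class[lab][:]
        rng.shuffle(idxs)
        # assumption: every class keeps at least one training sample
        n_train = max(1, round(fraction * len(idxs)))
        train.extend(idxs[:n_train])
        test.extend(idxs[n_train:])
    return sorted(train), sorted(test)
```

With a 5% fraction, a class of 20 labeled pixels contributes a single training pixel, which shows how small the training set for a minority class becomes under this protocol.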

4.4. Comparison with State-of-the-Art Methods

The proposed model is compared with representative baselines spanning different methodological paradigms in HSI classification. These include: traditional machine learning methods RF [37] and SVM [38] to establish fundamental benchmarks; early deep learning models like Context [39] to trace the evolution from CNNs to advanced architectures; hybrid 2D/3D CNNs, such as HybridN [40] and CVSSN [41], as direct competitors sharing a similar hybrid convolutional design; attention-based networks RSSAN [42], SSTN [43], and SSAtt [44] to evaluate our CSDA module against prominent attention mechanisms; and graph convolutional networks like F-GCN [45] to assess performance across different paradigms for modeling non-Euclidean data relationships.

Table 5 shows the experimental results of different methods on the IP dataset. Among the 16 categories, the proposed method achieves the best classification accuracy in nine. Its overall performance is also the best, with OA reaching 96.78%, AA reaching 90.60%, and Kappa reaching 96.32%. Compared with the second-best model, HybridN, our DACINet improves OA, AA, and Kappa by 1.54%, 0.04%, and 4.02%, respectively. It is noteworthy that, beyond the mean accuracy, DACINet often exhibits a lower standard deviation across multiple runs than the other methods (as shown in Table 5, Table 6, Table 7 and Table 8). This indicates more stable and reliable classification performance, which is a significant advantage in practical applications.

To quantify the impact of limited training samples on classification performance, we analyze the relationship between per-class sample size and accuracy. As shown in Table 1, classes 1 (Alfalfa), 7 (Grass/pasture-mowed), and 9 (Oats) have the fewest samples in the IP dataset, with only 46, 28, and 20 samples, respectively. Correspondingly, Table 5 reveals that these three classes consistently achieve the lowest accuracies across all compared methods. Even with our proposed DACINet, the accuracies on these minority classes are only 70.75%, 74.42%, and 73.33%, respectively. In contrast, classes with abundant samples, such as class 2 (Corn-notill, 1428 samples) and class 11 (Soybean-mintill, 2455 samples), achieve accuracies exceeding 95% with DACINet. This positive relationship between sample size and classification accuracy suggests that limited sample availability is the primary factor behind the suboptimal performance on these minority classes.
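The run-to-run stability discussed above is usually reported as a mean and a standard deviation over repeated runs. The snippet below is a small sketch of that summary. The helper names are hypothetical, the scores in the usage note are made-up illustrative values rather than results from the paper, and the use of the sample standard deviation (n − 1 denominator) is an assumption, since the text does not say which estimator was used.

```python
from statistics import mean, stdev


def summarize_runs(scores):
    """Return (mean, sample standard deviation) of per-run scores."""
    return mean(scores), stdev(scores)


def format_mean_std(scores, digits=2):
    """Format scores in the 'mean ± std' style used in result tables."""
    m, s = summarize_runs(scores)
    return f"{m:.{digits}f} ± {s:.{digits}f}"
```

For example, `format_mean_std([96.0, 97.0, 98.0])` returns `"97.00 ± 1.00"`; a method with the same mean but a smaller spread would be considered more stable.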

Table 6 and Table 7 present the experimental results of different methods on the UP and SA datasets. For the UP dataset, Table 6 shows that the proposed method achieves the best classification accuracy in six of the nine land cover categories. With the relatively small number of categories in the UP dataset, the accuracy of DACINet exceeds 90% in eight categories and exceeds 99% in the second and fifth. Kappa measures how well the classification result agrees with the ground truth after correcting for the agreement expected by chance. Our OA reaches 97.77% and our AA reaches 96.72%, while Kappa reaches 97.04%. Compared with HybridN, which ranks second in overall performance, the proposed method improves OA by three percentage points, AA by 6.08%, and Kappa by 4.01%. The proposed model therefore achieves the best overall accuracy, average accuracy, and agreement with the ground truth on this dataset.
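For readers who want to reproduce the three metrics, the sketch below computes OA, AA, and Cohen's Kappa from a confusion matrix using their standard definitions. It is an illustrative helper, not the authors' evaluation code; the function name and the convention that `cm[i][j]` counts samples of true class `i` predicted as class `j` are assumptions.

```python
def classification_metrics(cm):
    """Compute (OA, AA, Kappa) from a square confusion matrix.

    cm[i][j] = number of samples of true class i predicted as class j.
    """
    k = len(cm)
    n = sum(sum(row) for row in cm)
    row_tot = [sum(cm[i]) for i in range(k)]
    col_tot = [sum(cm[i][j] for i in range(k)) for j in range(k)]

    # Overall accuracy: share of all samples on the diagonal.
    oa = sum(cm[i][i] for i in range(k)) / n

    # Average accuracy: mean of per-class accuracies (empty classes skipped).
    per_class = [cm[i][i] / row_tot[i] for i in range(k) if row_tot[i] > 0]
    aa = sum(per_class) / len(per_class)

    # Kappa: agreement beyond what the marginals would produce by chance.
    pe = sum(r * c for r, c in zip(row_tot, col_tot)) / (n * n)
    kappa = (oa - pe) / (1 - pe)
    return oa, aa, kappa
```

On the imbalanced toy matrix `[[90, 10], [5, 5]]`, this gives OA ≈ 0.864, AA = 0.70, and Kappa ≈ 0.327, which illustrates why AA and Kappa are reported alongside OA when class sizes differ widely.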

In Table 7, it can be seen that on the SA dataset the OA of the proposed DACINet reaches 99.53%, whereas the OA of the second-best HybridN network is 97.95%, an increase of 1.58%. The OA of the other methods generally fluctuates around 90%. AA is the mean of the per-class accuracies, so it weights every category equally. According to the results in the table, the AA of every model on the SA dataset is above 90%. The AA of DACINet reaches 99.58%, a significant improvement over SSTN and SSAtt and 2.12% higher than HybridN. Kappa is also an important indicator of the effectiveness of a classification model. For the proposed method, Kappa reaches 97.04%, and the method achieves the best classification accuracy in 10 of the 16 land cover categories. This demonstrates that DACINet can classify hyperspectral images more accurately and stably.

Table 8 presents the experimental results of different methods on the HU dataset. Among the 15 land cover categories, the proposed DACINet achieves the best classification performance in nine, demonstrating its strong feature discrimination capability. Particularly noteworthy are the categories with complex textural characteristics, such as class 5 (Grass) and class 14 (Tennis Court), on which our method achieves accuracies of 97.55% and 96.32%, respectively, significantly outperforming the other methods. In terms of the overall evaluation metrics, our method achieves the best results on all three key indicators, with OA, AA, and Kappa reaching 86.67%, 89.20%, and 87.12%, respectively. Compared with the second-best A2S2K model, DACINet improves OA, AA, and Kappa by 1.72%, 3.85%, and 4.03%, respectively. It is worth noting that even on categories with lower sample discriminability, such as class 10 (Coastal) and class 13 (Parking Lot), our method maintains relatively stable classification performance, benefiting from the effective spectral–spatial feature extraction of the CSDA module. Overall, on this challenging HU dataset, DACINet demonstrates excellent classification performance and good generalization ability, further verifying the effectiveness and robustness of the proposed framework.

4.5. Ablation Studies

Ablation of different modules. In this section, ablation experiments are conducted to verify the functions of the different modules in DACINet. The CIFM, CSDA, and hybrid convolution layer are introduced into the backbone network step by step. The results are shown in Table 9, where √ indicates that the module is used and × indicates that it is not. The classification results obtained with the CIFM alone are the worst, with an OA of 85.20% and a Kappa of 92.90 on the IP dataset, and an OA of 90.40% and a Kappa of 87.14 on the UP dataset. This is because the CIFM focuses on fusion and lacks a mechanism for judging the validity of the fused features. When the CIFM is combined with the hybrid convolution layer, the classification accuracy and stability improve on all three datasets, which indicates that feature extraction after context interaction fusion is effective. The DACINet with all modules obtains the best OA and Kappa on the three datasets, specifically an OA of 99.53% and a Kappa of 99.48 on the SA dataset. This indicates that every module plays an effective role and that together they improve hyperspectral classification.

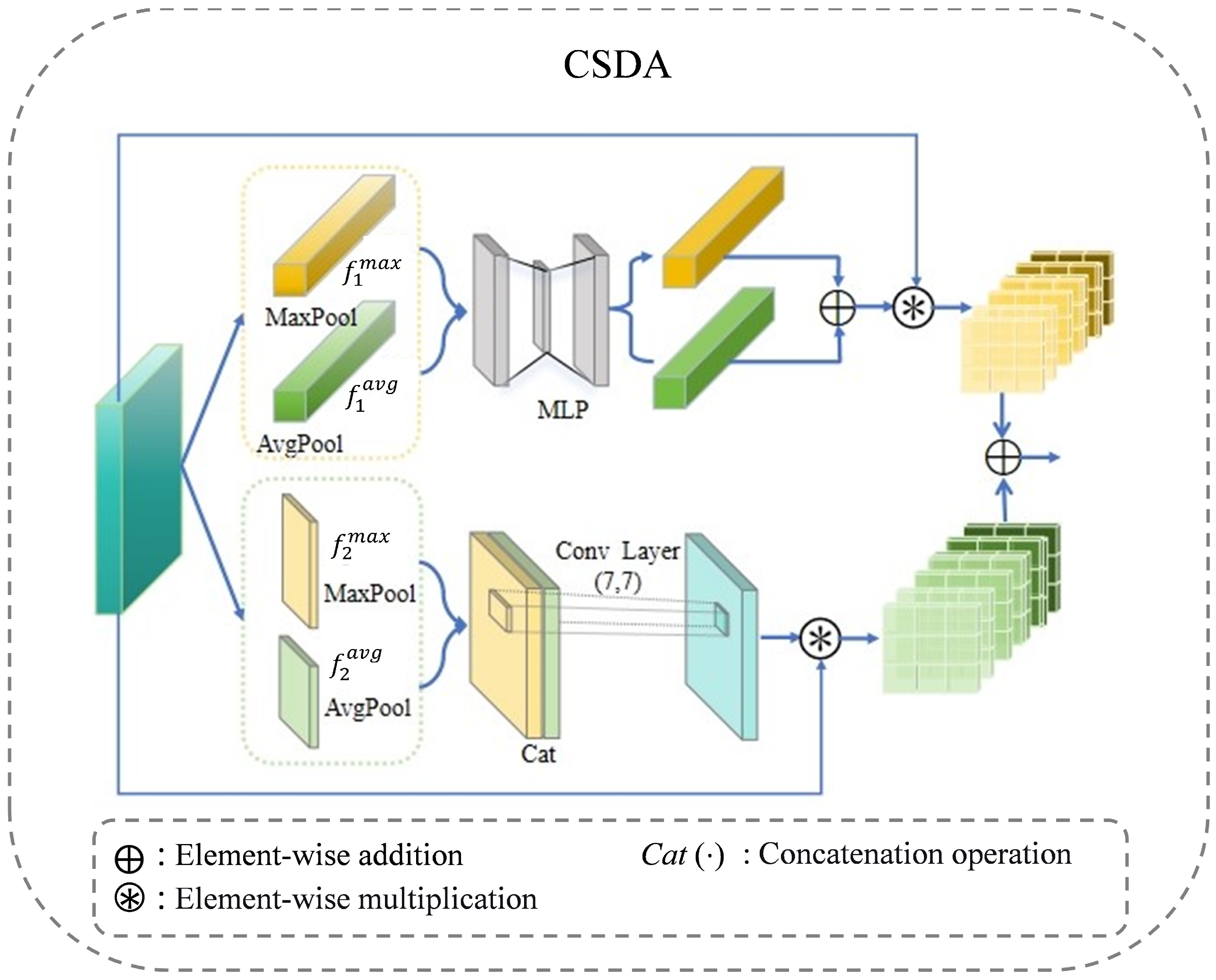

Effectiveness of CSDA. In Table 10, we compare the proposed CSDA with CBAM, where √ indicates that the module is used and × indicates that it is not. Compared with the baseline without any attention mechanism, CBAM does not effectively improve the performance on the UP and SA datasets. On the IP dataset, however, OA increases by 1.85% and Kappa increases by 2.11, which is a significant improvement. This is because the sample distribution of the IP dataset is unbalanced, and introducing an attention mechanism helps screen the discriminative information. The proposed CSDA improves performance on all three datasets, and its gains are higher than those of CBAM. This indicates that screening spectral and spatial features through dual channels can effectively alleviate sample imbalance while also improving classification performance in common scenarios.

Validity of Robustness. To verify the robustness of the proposed model, we conduct experiments on three datasets with different samples. The results on the IP dataset are shown in

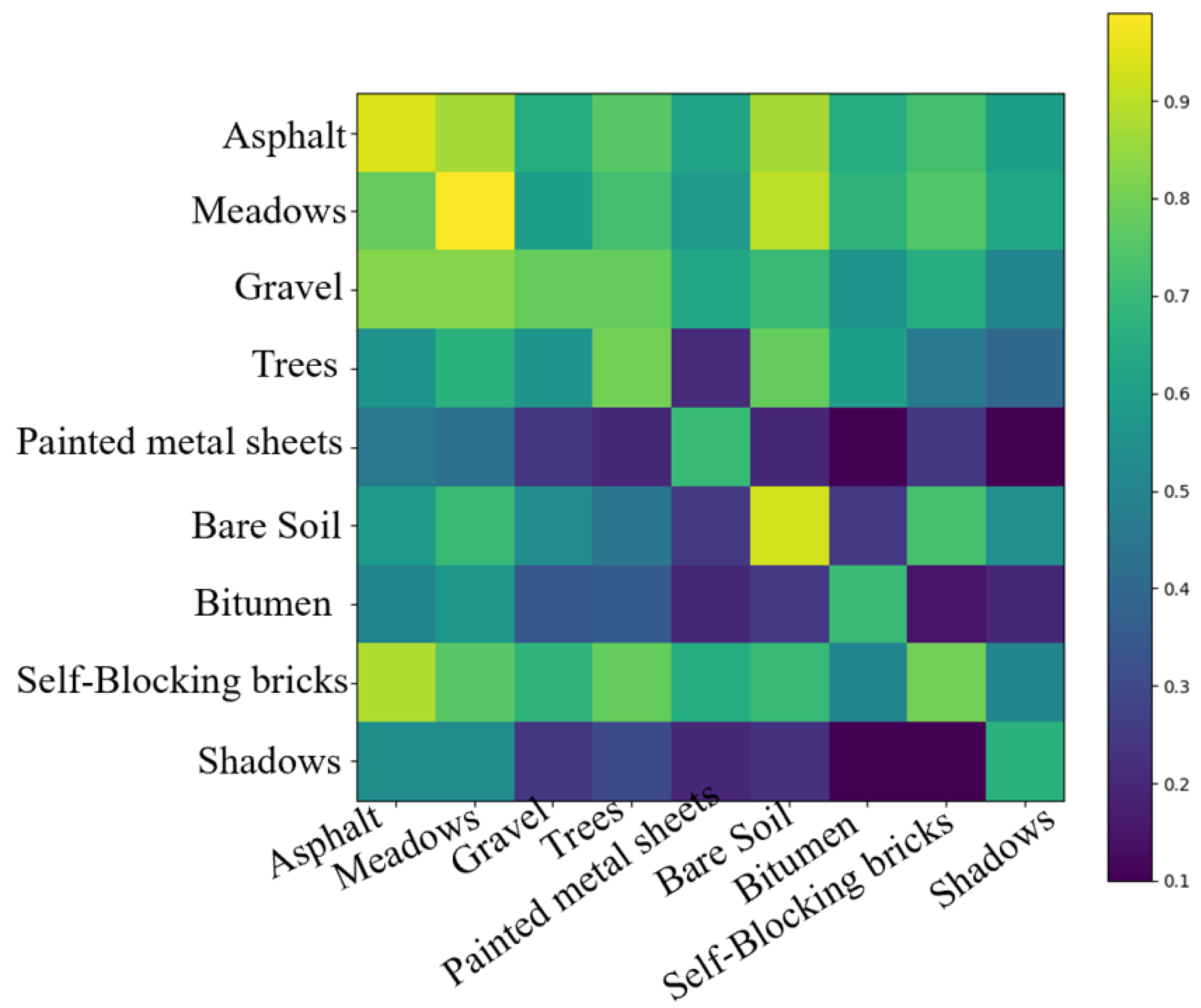

Figure 4. It can be seen that both OA and AA increase as the percentage of training samples increases. In detail, deep learning models significantly improve the classification performance of machine learning models (RF, SVM) across all sample distributions. HybridN has a lower OA and AA score on the IP dataset in few-shot samples, especially when the sample is less than 5%. Overall comparison shows that the proposed DACINet results in the best OA and AA scores across all sample distributions, which indicates the DACINet has stronger robustness than others. To further verify the impact of sample size on classification, we calculated the classification confusion matrix for the nine categories of the UP dataset, as shown in

Figure 5. The classification accuracy of the category with a smaller sample size (such as Shadows) is significantly lower than that of the category with a larger sample size (such as Meadows). This also verifies the contribution of sample size to classification accuracy. Small sample studies still need to be strengthened.
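The per-class comparison read off the confusion matrix can be reproduced with a few lines of code. The following is a minimal sketch with hypothetical helper names; it assumes classes are encoded as integers starting at 0 and that rows of the matrix correspond to the true class.

```python
def confusion_matrix(y_true, y_pred, num_classes):
    """Count predictions: cm[t][p] = samples of true class t predicted as p."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        cm[t][p] += 1
    return cm


def per_class_accuracy(cm):
    """Fraction of each true class that was classified correctly."""
    return [
        row[i] / sum(row) if sum(row) > 0 else float("nan")
        for i, row in enumerate(cm)
    ]
```

Pairing the output of `per_class_accuracy` with the row sums of the matrix gives exactly the sample-size-versus-accuracy comparison made in the text for Shadows and Meadows.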

Analysis of Band Dimension. Dimensionality reduction affects the final classification performance of the model. We therefore conducted experiments on three datasets, varying the number of retained bands while keeping all other conditions unchanged. The experimental results are shown in Figure 6. The three datasets respond differently to the band dimension. For the IP dataset, the three evaluation metrics (OA, AA, and Kappa) are highest when the number of retained bands is set to 36 and lowest when it is set to 38. Because the sample distribution of the IP dataset is unbalanced, OA and Kappa change little across settings, whereas AA fluctuates significantly. For the UP dataset, the performance is best with 13 bands; it gradually decreases as the number of bands increases, with the largest drop and the worst classification result at 19. For the SA dataset, the three metrics first increase and then decrease as the number of bands increases, reaching their optimum at 17. Based on these results, the band parameters of the IP, UP, and SA datasets are set to 36, 13, and 17, respectively.

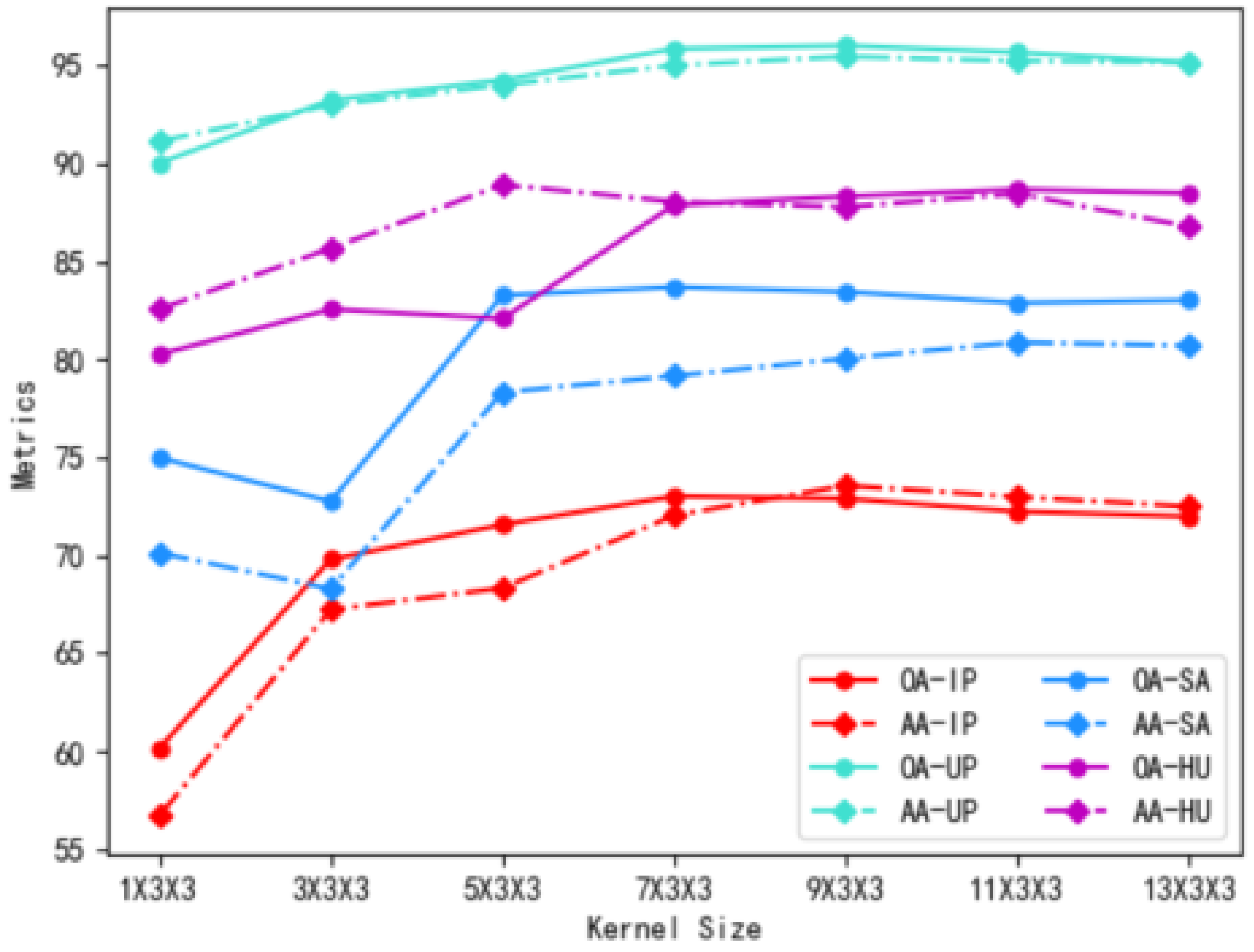

Selection of the Convolution Kernel. The size of the convolution kernel directly determines how much information from adjacent pixels is taken into account. To explore the requirements of the different datasets, this paper also conducts comparative experiments with different convolution kernels. In the spatial dimension, a small 3 × 3 kernel is sufficient to extract effective spatial features with a relatively small number of parameters. In the spectral dimension, experiments are conducted on the UP, IP, SA, and HU datasets with kernel sizes from 1 to 13 in steps of two. OA and AA are used to evaluate the classification results on the four datasets. In the experiment, when the length of the original spectral bands is less than the length of the vector that needs to be mapped and filled, the triangular principle is adopted, that is, the filling is performed in two steps. The experimental results are shown in Figure 7. On all four datasets, the accuracy first increases as the spectral kernel size grows and then levels off. When the spectral size is less than seven, the performance gradually improves; beyond seven, the classification accuracy stabilizes. Considering the overall computational cost, the convolution kernel is set to 7 × 3 × 3 in all subsequent experiments.
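To make the effect of the 7 × 3 × 3 kernel concrete, the sketch below applies the standard convolution output-size formula to each of the three dimensions (spectral, height, width). The helper name is hypothetical, and the stride and padding values are assumptions, since the text does not state them. The 36-band, 17 × 17 input corresponds to the IP settings reported in this section.

```python
def conv3d_output_shape(input_shape, kernel_shape, stride=1, padding=(0, 0, 0)):
    """Output (bands, height, width) of a 3D convolution.

    Uses the standard formula floor((n + 2p - k) / s) + 1 per dimension.
    """
    return tuple(
        (n + 2 * p - k) // stride + 1
        for n, k, p in zip(input_shape, kernel_shape, padding)
    )


# Assumption: stride 1 and no padding.
no_pad = conv3d_output_shape((36, 17, 17), (7, 3, 3))
# Assumption: "same"-style padding that preserves the input size.
same_pad = conv3d_output_shape((36, 17, 17), (7, 3, 3), padding=(3, 1, 1))
```

Without padding, the 36 × 17 × 17 input shrinks to 30 × 15 × 15. A larger spectral kernel therefore consumes more bands per layer, which is one reason the choice of spectral size interacts with the number of retained bands.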

Analysis of the Complexity. Table 11 presents the complexity comparison of classification networks using 3D convolution kernels on the IP and UP datasets, focusing on the number of parameters and the number of floating-point operations (FLOPs). The results show that the network using only a 3D CNN has the fewest parameters but requires more FLOPs. Since the main body of the A2S2K network adopts a residual network, its parameter count is low, but its computational load is relatively large. The proposed method ranks second in both parameters and FLOPs, achieving the best overall trade-off. The proposed hybrid convolution layer integrates spatial and spectral information: the 3D convolution captures features in the spectral and spatial dimensions simultaneously, and a 2D convolution is introduced to enhance spatial feature extraction. Compared with using only 3D convolution, this design significantly reduces the total number of parameters and FLOPs while preserving the model's performance.
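The difference in cost between a 3D and a 2D convolution layer can be estimated with the usual counting formulas. The sketch below is only illustrative: the helper names are hypothetical, the channel counts (8 in, 16 out) and output sizes are made-up values rather than the DACINet configuration, and multiply-accumulate operations (MACs) are used as a proxy for FLOPs.

```python
from math import prod


def conv_params(kernel, c_in, c_out, bias=True):
    """Learnable parameters of a convolution layer with the given kernel."""
    return prod(kernel) * c_in * c_out + (c_out if bias else 0)


def conv_macs(kernel, c_in, c_out, out_positions):
    """Multiply-accumulates: one kernel application per output position."""
    return prod(kernel) * c_in * c_out * out_positions


# Hypothetical layer sizes, chosen only to compare the two layer types.
params_3d = conv_params((7, 3, 3), c_in=8, c_out=16)
params_2d = conv_params((3, 3), c_in=8, c_out=16)
macs_3d = conv_macs((7, 3, 3), 8, 16, out_positions=30 * 15 * 15)
macs_2d = conv_macs((3, 3), 8, 16, out_positions=15 * 15)
```

Under these assumptions, the 3D layer has 8080 parameters against 1168 for the 2D layer, and the gap in MACs is much larger because the 3D kernel also slides along the spectral axis. This is the general mechanism by which replacing some 3D layers with 2D layers lowers the cost.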

Analysis of Input Spatial Size. The spatial size of the input also affects the final classification performance of the model. On the three datasets, with the optimal band parameters fixed and all other conditions kept consistent, we varied the input spatial size to find the most suitable value for each dataset. The experimental results are shown in Figure 8. The classification performance of the three datasets varies with the input size. For the IP and UP datasets, the three evaluation metrics (OA, AA, and Kappa) fluctuate consistently: as the input size increases, they first decrease, then increase, and then decrease again. Both datasets achieve their best classification results at an input size of 17 × 17. For the SA dataset, the three metrics show an overall trend of first increasing and then decreasing as the input size increases, with the best result at 27 × 27. Therefore, based on the experimental results, we set the input size to 17 × 17 for the IP and UP datasets and to 27 × 27 for the SA dataset.
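The input spatial size studied above is the side length of the neighborhood patch cut around each labeled pixel. The sketch below shows one common way to extract such a patch. It is not taken from the paper: the function name, the nested-list layout `cube[row][col][band]`, and the use of zero padding at the image border are assumptions made for illustration.

```python
def extract_patch(cube, row, col, size):
    """Return a size x size patch of spectra centered on (row, col).

    cube[r][c] is the list of band values at pixel (r, c).
    Positions outside the image are zero-filled (assumed border handling).
    """
    if size % 2 == 0:
        raise ValueError("size must be odd so the patch has a center pixel")
    half = size // 2
    height, width = len(cube), len(cube[0])
    bands = len(cube[0][0])

    patch = []
    for r in range(row - half, row + half + 1):
        patch_row = []
        for c in range(col - half, col + half + 1):
            if 0 <= r < height and 0 <= c < width:
                patch_row.append(list(cube[r][c]))
            else:
                patch_row.append([0.0] * bands)
        patch.append(patch_row)
    return patch
```

With `size=17` or `size=27`, this yields the input sizes selected in the experiment. Larger patches carry more spatial context but also include more pixels from neighboring classes, which is consistent with the accuracy eventually decreasing as the input size grows.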

Convergence Analysis of the DACINet. To verify that the epoch setting of the model is reasonable, this section visualizes the training convergence process on the Indian Pines and Salinas datasets. The experimental results are shown in Figure 9. On the IP dataset, convergence is relatively slow and is complete at around epoch 30, but the loss and accuracy curves are relatively stable. On the SA dataset, the model converges faster and reaches a stable state by epoch 10; its loss curve fluctuates noticeably, while its accuracy curve is stable. These results support the chosen epoch setting and indicate that the proposed model has good stability and generalization ability.

4.6. Visualization Analysis

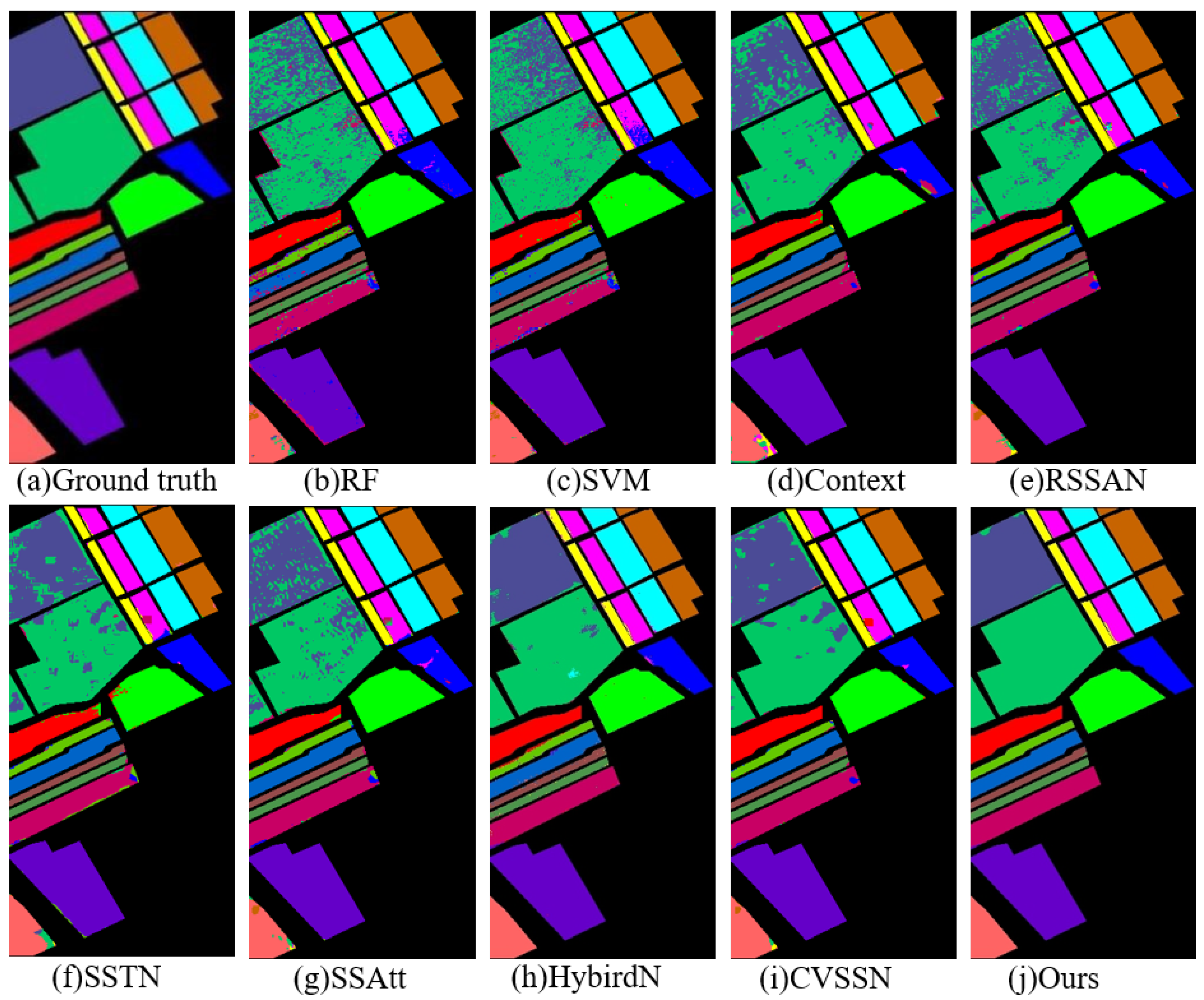

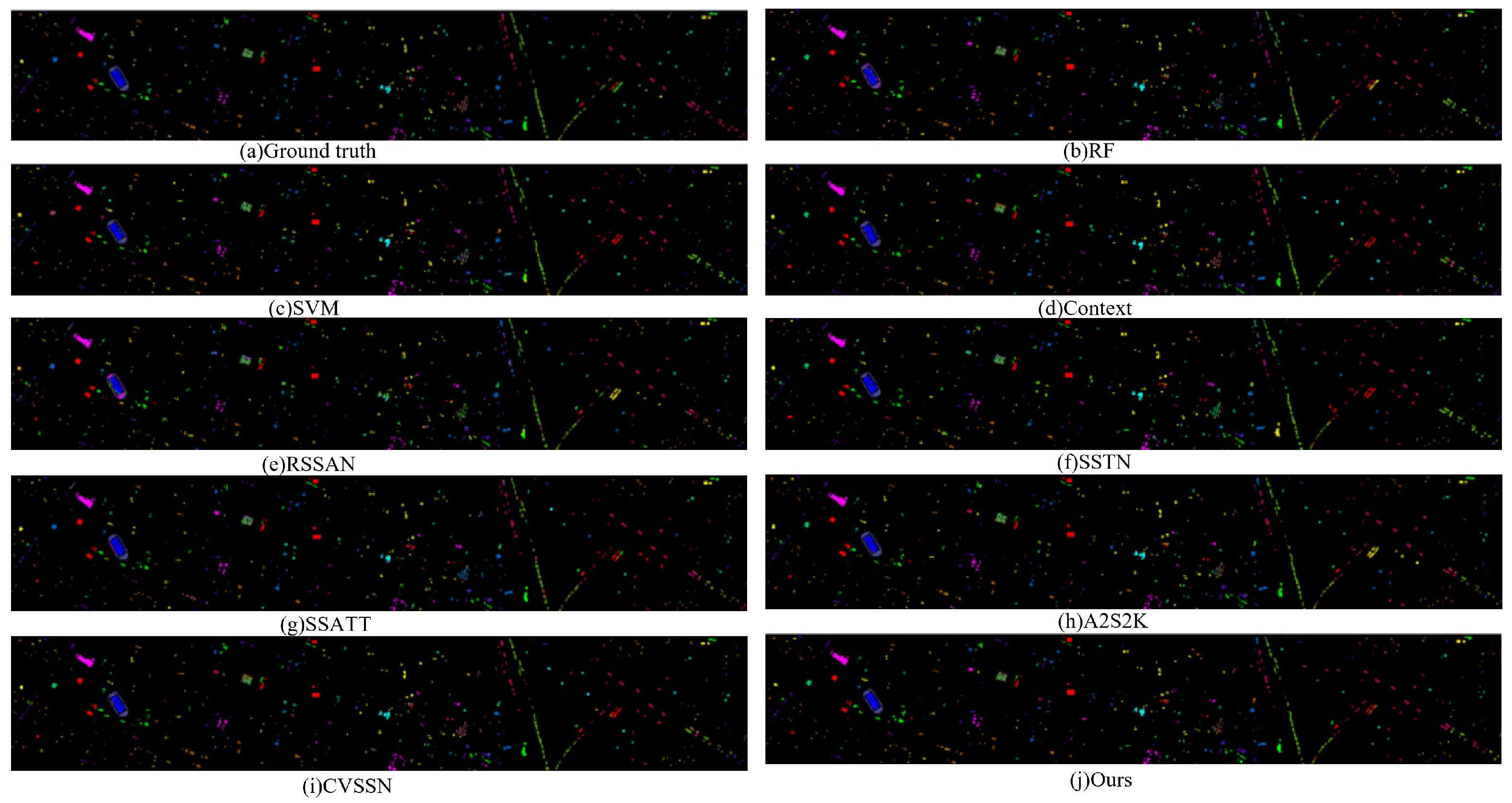

To show the effectiveness of the DACINet more intuitively, the classification results of the proposed DACINet and other representative methods on the IP dataset are visualized in Figure 10, where the colors follow the scheme described in Table 1. A small area in the figure corresponds to a category with few ground objects, for which fewer samples are selected in the classification process. As observed, the SSTN and SSAtt methods make more errors on ground objects with fewer samples. The F-GCN method improves on the other methods, but it tends to produce wrong results at the boundary between two categories. The DACINet has the best overall classification performance, and its boundary accuracy is significantly improved. The classification maps for the UP, SA, and HU datasets are presented in Figure 11, Figure 12 and Figure 13, with colors following the schemes described in Table 2, Table 3 and Table 4, respectively.

The classification performance of the RF and SVM machine learning models on the four datasets is highly susceptible to other factors. For the IP dataset, SSTN and SSAtt make more errors on land features with few samples. The HybridN method improves on the other methods, but it is prone to incorrect results at the boundary between two categories.

For the UP dataset, SSTN, SSAtt, and HybridN make large errors in the classification of wheat stubble, represented by sky blue. Since the UP image was captured over a university area, the land features are often distributed in narrow, elongated strips, which are prone to errors at the edges. The DACINet proposed in this section effectively reduces these classification errors on the UP dataset. For the SA dataset, the classification maps of methods such as RF, SSAtt, and HybridN contain obvious errors. The DACINet, while maintaining the advantages of the other methods, significantly improves the classification accuracy of each category.

For the HU dataset, the classification task is more challenging because of the complex urban scenes with diverse land cover categories. As shown in the classification maps, the traditional machine learning methods RF and SVM produce substantial misclassifications, particularly in categories with similar spectral characteristics such as Grass-healthy, Grass-stressed, and Grass-synth. The Context and RSSAN methods show some improvement but still struggle with categories like Parking-lot1 and Parking-lot2, which have irregular shapes and scattered distributions. SSTN and SSAtt perform better in homogeneous regions like Water and Tree, yet they generate noticeable errors in complex categories such as Residential and Commercial, where mixed pixels are prevalent. A2S2K, as one of the advanced methods, achieves relatively good results but still fails to accurately classify challenging categories with limited samples, such as Tennis-court and Running-track. In contrast, the proposed DACINet significantly reduces misclassifications across all categories, achieving the most accurate and complete classification maps.

The experimental results show that DACINet completes the classification task better on all four datasets. By assigning greater weight to spectral–spatial features via the dual attention channel, the context interaction facilitates better feature fusion. Combined with the hybrid network, this approach can alleviate classification errors at object boundaries and edge blurring, thereby improving the overall classification performance of the model.