Research on Landslide Hazard Detection in Ya’an Region Based on an Improved YOLO Model

Highlights

- MSDE-YOLO, a landslide detector based on YOLOv11, effectively addresses blurred boundaries and weakened texture features of landslides in remote sensing imagery of complex terrain, achieving high detection performance with 90.2% precision, 84.8% recall and 92.7% mAP on the self-constructed Ya’an landslide dataset.

- A self-designed multi-scale feature fusion module (MSDE) is incorporated into the neck network of the model. By aggregating shallow detail and deep semantic information, it strengthens the model’s representation of fuzzy boundaries. A lightweight SimAM attention mechanism is also introduced. Together, these changes improve feature discrimination in slope areas under complex imaging conditions and make slope boundary extraction more consistent.

- The results demonstrate that the framework is applicable to landslide hazard detection in topographically complex regions like Ya’an, and is suitable for efficient screening and dynamic monitoring of geological hazards in real-world scenarios.

- This work provides a practical technical framework for intelligent landslide detection based on remote sensing imagery, highlighting the potential of improved deep learning object detection models in geohazard remote sensing applications.

Abstract

1. Introduction

- (1) To tackle the issue of blurred boundaries and ambiguous spatial ranges, we design a novel Multi-Scale Detail Enhancement (MSDE) module within the neck network. By aggregating features from multiple receptive fields via parallel convolutional pathways, the MSDE module simultaneously preserves high-resolution spatial details and semantic-rich contextual cues. This design specifically recovers fine-grained boundary information while maintaining global context consistency, thereby enabling precise localization even in terrain with indistinct edges.

- (2) To overcome the problem of weakened texture features in heterogeneous backgrounds, we integrate the parameter-free SimAM (Simple Attention Module) mechanism into the backbone. Leveraging an energy minimization principle, SimAM adaptively amplifies informative neuron responses corresponding to weak landslide indicators while suppressing complex background noise. This enhances feature selectivity and discriminability without increasing model complexity.

2. Materials and Methods

2.1. Study Area and Dataset

2.1.1. Study Area

2.1.2. Dataset Construction

2.1.3. Data Augmentation

2.2. Proposed Method

2.2.1. YOLOv11 Model

2.2.2. Model Architecture Design

2.2.3. Self-Designed Multi-Scale Feature Enhancement Module

- (1) Low-level features, extracted from early convolutional layers, retain high spatial resolution and precise pixel-wise localization. While effective at preserving fine-grained textural details (e.g., local surface patterns), their limited receptive fields restrict global semantic context, hindering the discrimination of landslides from visually similar backgrounds.

- (2) High-level features, generated through deep convolutions and pooling, encode rich semantic information via hierarchical integration. Although these features capture global characteristics, such as overall shape and spatial distribution, they suffer from reduced spatial resolution and a consequent loss of boundary fidelity.

- (1) Small-Kernel Depthwise Separable Convolution Branch: Characterized by a small kernel and narrow receptive field, this branch is optimized for extracting micro-level features, such as pixel-wise edge transitions and fine-grained textures. In landslide detection, it resolves subtle boundary variations, providing critical support for delineating ambiguous edges.

- (2) Medium-Kernel Depthwise Separable Convolution Branch: With a moderate kernel size and intermediate receptive field, this branch mediates between local detail preservation and regional contextual correlation. It captures meso-scale characteristics, including local morphological structures and textural distribution patterns, thereby bridging the gap between fine-grained details and coarse-level contours.

- (3) Large-Kernel Depthwise Separable Convolution Branch: Equipped with a large kernel and extensive receptive field, this branch suppresses local noise while perceiving spatial continuity over broader regions. It encapsulates global structural cues, such as the overall shape and spatial extent of landslide bodies, making it particularly effective for inferring outlines in areas with blurred boundaries or attenuated textures.
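
The cost argument behind using depthwise separable convolutions in all three branches can be checked with simple arithmetic. The sketch below is illustrative only: the kernel sizes (3, 5, 7) and the channel count (64) are assumptions, since the actual branch settings are not stated in the text above, and biases are ignored.

```python
# Weight counts for a standard convolution versus a depthwise separable one.
# Kernel sizes and channel count are hypothetical, chosen only for illustration.

def standard_conv_params(k: int, c_in: int, c_out: int) -> int:
    """Weights of a k x k standard convolution (bias ignored)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k: int, c_in: int, c_out: int) -> int:
    """Weights of a k x k depthwise conv followed by a 1 x 1 pointwise conv."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 convolution that mixes channels
    return depthwise + pointwise


def summarize(channels: int = 64, kernel_sizes=(3, 5, 7)):
    """Return (kernel, standard, separable, ratio) for each assumed kernel size."""
    rows = []
    for k in kernel_sizes:
        std = standard_conv_params(k, channels, channels)
        dws = depthwise_separable_params(k, channels, channels)
        rows.append((k, std, dws, std / dws))
    return rows


if __name__ == "__main__":
    for k, std, dws, ratio in summarize():
        print(f"k={k}: standard={std}, separable={dws}, ratio={ratio:.1f}x")
```

Under these assumptions the saving grows with kernel size, which is why a large-kernel branch remains affordable when it is built from depthwise separable layers.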

- (1) Dimensionality Compression: It performs a linear transformation to reduce the channel count from 2C to C, aligning the feature map with the backbone network’s subsequent stages while mitigating computational overhead associated with channel redundancy.

- (2) Cross-Channel Integration: It synthesizes response variations across different scales, thereby enhancing the representation of discriminative features essential for landslide detection.
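
The two roles listed above both follow from the fact that a 1 × 1 convolution is a per-pixel linear map over channels. The following is a minimal sketch in plain Python, assuming that two C-channel tensors are concatenated to form the 2C-channel input; the function names, the toy shapes and the averaging weights are hypothetical and only illustrate the mechanism.

```python
# A 1 x 1 convolution as a per-pixel linear map over channels.
# Feature maps are nested lists shaped [channels][height][width].

def concat_channels(a, b):
    """Concatenate two feature maps along the channel axis."""
    return a + b


def pointwise_conv(feature_map, weights):
    """Apply a 1 x 1 convolution; weights are shaped [c_out][c_in], no bias."""
    c_in = len(feature_map)
    height = len(feature_map[0])
    width = len(feature_map[0][0])
    output = []
    for row in weights:
        if len(row) != c_in:
            raise ValueError("each weight row needs one entry per input channel")
        output.append([
            [sum(row[c] * feature_map[c][y][x] for c in range(c_in))
             for x in range(width)]
            for y in range(height)
        ])
    return output


# Two hypothetical branch outputs with C = 2 channels and a 1 x 2 spatial grid.
branch_a = [[[1.0, 2.0]], [[3.0, 4.0]]]
branch_b = [[[5.0, 6.0]], [[7.0, 8.0]]]

# Illustrative weights: each output channel averages the matching channels
# of the two branches, compressing 2C = 4 channels down to C = 2.
weights = [
    [0.5, 0.0, 0.5, 0.0],
    [0.0, 0.5, 0.0, 0.5],
]

fused = pointwise_conv(concat_channels(branch_a, branch_b), weights)

if __name__ == "__main__":
    print(fused)
```

In a trained network the weights are learned rather than fixed, so each output channel can combine responses from different scales in whatever proportion helps detection.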

- (1) Preservation of Feature Integrity: Fundamental information from the original input is directly propagated to the module output, mitigating detail loss caused by multiple layers of convolution and aggregation. This is particularly critical for retaining the subtle textural cues of landslides.

- (2) Optimized Gradient Flow: The shortcut connection provides a direct path for gradient backpropagation, effectively alleviating the vanishing gradient problem in deep networks. This facilitates faster convergence, thereby improving both training stability and model generalization.
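
The gradient-flow argument can be illustrated with a scalar toy model. For a residual block y = x + F(x), the local derivative is 1 + F'(x), so the identity term keeps the backpropagated gradient from shrinking even when F'(x) is small. The sketch below is a simplification under stated assumptions (scalar layers, a fixed hypothetical local derivative of 0.1, ten stacked blocks) and does not model the actual network.

```python
# Toy comparison of gradient magnitude through stacked layers,
# with and without shortcut connections (scalar case only).

def plain_chain_gradient(local_grads):
    """Gradient through stacked layers y = F(x): product of F'(x)."""
    grad = 1.0
    for d in local_grads:
        grad *= d
    return grad


def residual_chain_gradient(local_grads):
    """Gradient through stacked blocks y = x + F(x): product of 1 + F'(x)."""
    grad = 1.0
    for d in local_grads:
        grad *= 1.0 + d
    return grad


# Hypothetical setting: ten blocks, each with a small local derivative.
local_grads = [0.1] * 10

if __name__ == "__main__":
    print("plain:   ", plain_chain_gradient(local_grads))
    print("residual:", residual_chain_gradient(local_grads))
```

With these numbers the plain chain yields a gradient on the order of 1e-10, while the residual chain stays above 1, which is the effect the shortcut connection is meant to provide.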

2.2.4. SimAM Module

- $\mu_c$ is the mean of channel $c$,

- $\sigma_c^2$ is the unbiased variance of channel $c$,

- $\sigma(\cdot)$ denotes the Sigmoid activation function,

- $\epsilon$ is a small constant to ensure numerical stability.

1. Parameter-Free Efficiency: SimAM computes attention weights analytically using only intrinsic feature statistics (the channel mean and variance), introducing zero additional learnable parameters. This preserves the real-time inference capability essential for emergency landslide monitoring.

2. Deep Semantic Refinement: By operating at the deepest layer of the backbone (post-C2PSA), SimAM enhances the most abstract and semantically rich features before they are fused across scales. This ensures that noisy or ambiguous high-level representations are cleaned early, improving downstream detection accuracy.

3. Adaptive Background Suppression: Unlike fixed-threshold methods, SimAM dynamically adjusts weights per image based on local feature distribution. This enables robust performance across varying terrains, lighting conditions, and occlusion levels commonly encountered in remote sensing imagery.
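
The parameter-free weighting described above can be sketched in plain Python. This is a minimal sketch, assuming the closed-form weighting used in the public SimAM reference implementation: the squared deviation from the channel mean is divided by four times the regularized unbiased variance, 0.5 is added, and the result is passed through a Sigmoid. The function names and the default `e_lambda = 1e-4` are assumptions for illustration, not settings reported in this study.

```python
import math

# SimAM-style attention on nested lists shaped [channels][height][width].
# No learnable parameters: weights come only from per-channel statistics.

def sigmoid(value: float) -> float:
    return 1.0 / (1.0 + math.exp(-value))


def simam_weights(channel, e_lambda: float = 1e-4):
    """Return the attention weight of every position in one channel."""
    values = [v for row in channel for v in row]
    n = len(values) - 1  # unbiased variance uses H * W - 1
    if n < 1:
        raise ValueError("a channel needs at least two positions")
    mean = sum(values) / len(values)
    sq_dev = [[(v - mean) ** 2 for v in row] for row in channel]
    variance = sum(d for row in sq_dev for d in row) / n
    return [
        [sigmoid(d / (4.0 * (variance + e_lambda)) + 0.5) for d in row]
        for row in sq_dev
    ]


def simam(feature_map, e_lambda: float = 1e-4):
    """Reweight each channel by its own SimAM weights; shape is unchanged."""
    output = []
    for channel in feature_map:
        weights = simam_weights(channel, e_lambda)
        output.append([
            [v * w for v, w in zip(row, w_row)]
            for row, w_row in zip(channel, weights)
        ])
    return output


# Toy channel: a flat background with one distinctive response in the center.
toy_channel = [
    [1.0, 1.0, 1.0],
    [1.0, 5.0, 1.0],
    [1.0, 1.0, 1.0],
]

if __name__ == "__main__":
    for row in simam_weights(toy_channel):
        print([round(w, 3) for w in row])
```

In the toy channel the central position deviates most from the channel mean and therefore receives the largest weight, while the uniform background is attenuated relative to it.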

3. Results

3.1. Environment

- (1) The experimental hardware configuration is detailed in Table 1.

- (2) The software environment and specific version details utilized in this experiment are summarized in Table 2.

3.2. Evaluation Indicators

- mAP@0.5: The average precision computed at a single Intersection over Union (IoU) threshold of 0.5. This metric indicates the model’s ability to detect landslides with moderate localization overlap.

- mAP@[0.5:0.95]: The primary metric for this study, defined as the mean of AP values computed over IoU thresholds ranging from 0.50 to 0.95 with a step size of 0.05 (i.e., 0.50, 0.55, ..., 0.95). This stringent metric provides a comprehensive evaluation of both detection confidence and bounding box regression accuracy.
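
The two metrics above differ only in which IoU thresholds are used. The sketch below shows the IoU computation for axis-aligned boxes, the ten thresholds from 0.50 to 0.95, and the averaging step. It is a minimal illustration: the box coordinates are made up, the helper names are hypothetical, and the per-threshold AP values are taken as given rather than computed from precision-recall curves.

```python
# IoU for axis-aligned boxes and the threshold sweep behind mAP@[0.5:0.95].

def iou(box_a, box_b) -> float:
    """Intersection over Union for boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def iou_thresholds():
    """The ten thresholds 0.50, 0.55, ..., 0.95."""
    return [round(0.50 + 0.05 * i, 2) for i in range(10)]


def mean_over_thresholds(ap_by_threshold) -> float:
    """Average AP values that were computed separately at each threshold."""
    return sum(ap_by_threshold.values()) / len(ap_by_threshold)


# Made-up prediction and ground-truth boxes, for illustration only.
ground_truth = (0.0, 0.0, 10.0, 10.0)
prediction = (2.0, 0.0, 12.0, 10.0)

overlap = iou(ground_truth, prediction)
# Thresholds at which this single prediction would count as a match.
matched_at = [t for t in iou_thresholds() if overlap >= t]

if __name__ == "__main__":
    print(f"IoU = {overlap:.3f}")
    print("matches at thresholds:", matched_at)
```

A box that is accepted at IoU 0.5 can still be rejected at the stricter thresholds, which is why mAP@[0.5:0.95] penalizes loose localization more heavily than mAP@0.5.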

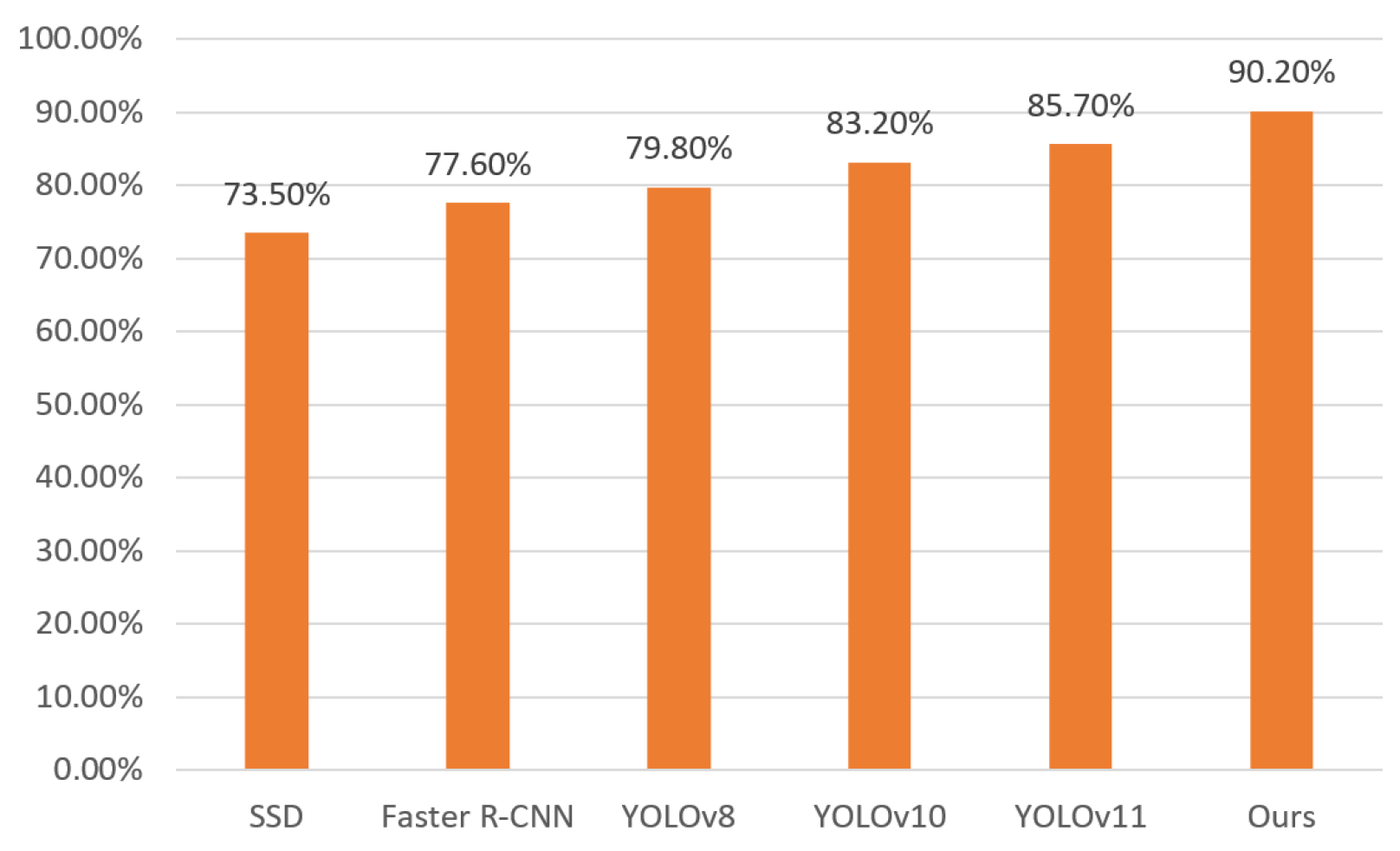

3.3. Comparison Study

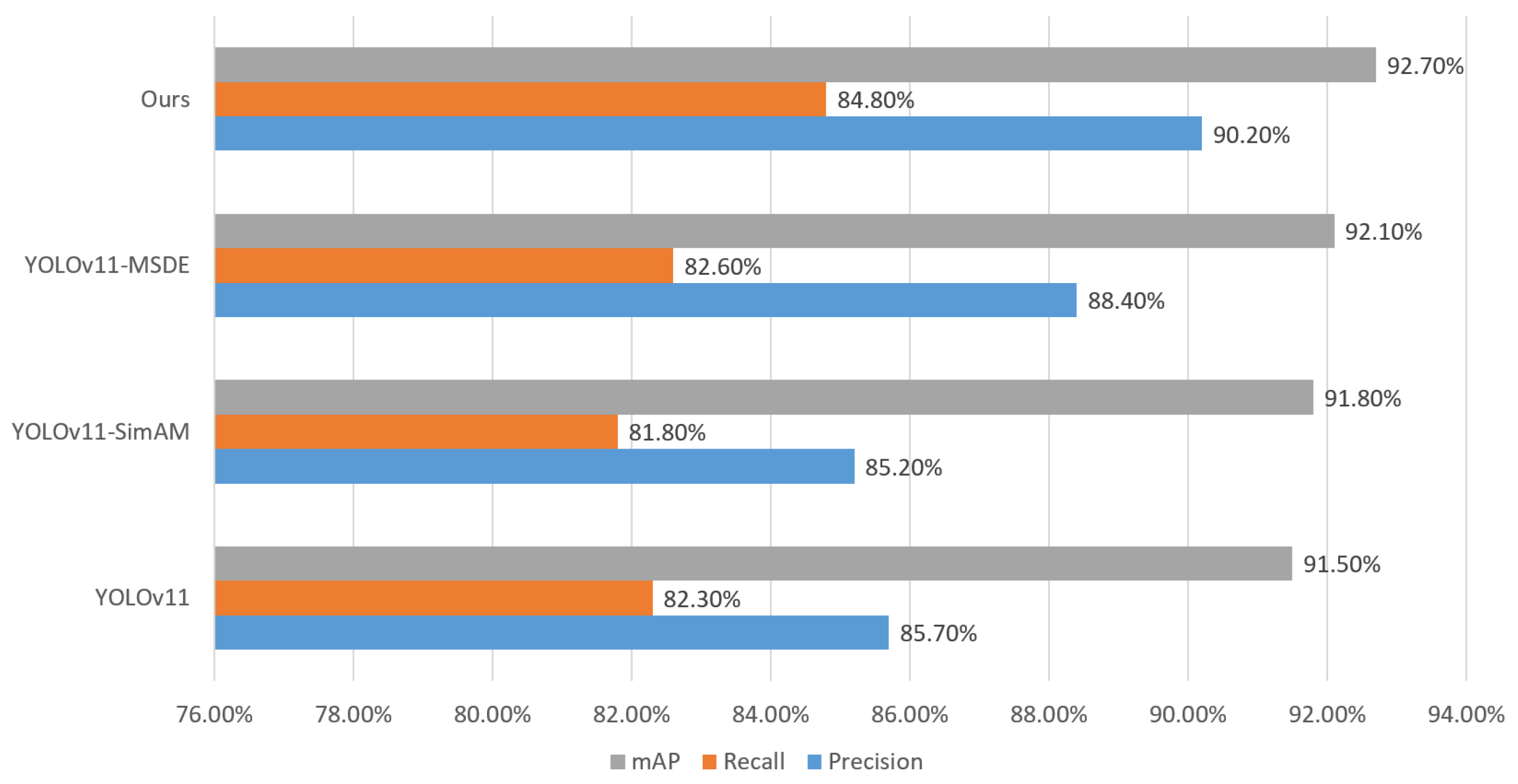

3.4. Ablation Study

4. Discussion

5. Conclusions

- (1) Tailored Feature Fusion: A novel framework employing cross-level alignment and depthwise separable convolutions to simultaneously capture fine-grained boundaries and global context.

- (2) Efficient Attention Integration: The strategic use of SimAM to boost feature discriminability with zero parameter increase, ensuring high efficiency.

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


Table 1. Experimental hardware configuration.

| Hardware | Description |
|---|---|
| CPU | Intel Xeon Gold 6454S |
| GPU | NVIDIA RTX 5090, 24 GB |
| Memory | 512 GB |

Table 2. Software environment and versions.

| Software | Description |
|---|---|
| Operating System | Ubuntu |
| Python | 3.12.1 |
| PyTorch | 2.4.1 |
| CUDA | 12.5 |

| Model | Precision (%) | Recall (%) | mAP (%) | FPS |
|---|---|---|---|---|
| SSD | 73.5 | 70.2 | 79.1 | 35.2 |
| Faster R-CNN | 77.6 | 73.8 | 82.4 | 48.6 |
| YOLOv8 | 79.8 | 77.3 | 86.2 | 68.5 |
| YOLOv10 | 83.2 | 80.6 | 87.9 | 75.4 |
| YOLOv11 | 85.7 | 82.3 | 91.5 | 82.1 |
| Ours | 90.2 | 84.8 | 92.7 | 79.5 |

| Method | Precision (%) | Recall (%) | mAP (%) |
|---|---|---|---|
| YOLOv11 | 85.7 | 82.3 | 91.5 |
| YOLOv11-SimAM | 85.2 | 81.8 | 91.8 |
| YOLOv11-MSDE | 88.4 | 82.6 | 92.1 |
| Ours (MSDE-YOLO) | 90.2 | 84.8 | 92.7 |
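
The contribution of each component in the ablation table can be read off as a difference from the YOLOv11 baseline. The short sketch below only restates that arithmetic in percentage points; the values are copied from the table, and the helper name is hypothetical.

```python
# Ablation results as (precision, recall, mAP) in percent, copied from the table.
ABLATION = {
    "YOLOv11": (85.7, 82.3, 91.5),
    "YOLOv11-SimAM": (85.2, 81.8, 91.8),
    "YOLOv11-MSDE": (88.4, 82.6, 92.1),
    "Ours (MSDE-YOLO)": (90.2, 84.8, 92.7),
}


def gains_over_baseline(results, baseline: str = "YOLOv11"):
    """Percentage-point change of each variant relative to the baseline."""
    base = results[baseline]
    return {
        name: tuple(round(value - ref, 1) for value, ref in zip(values, base))
        for name, values in results.items()
        if name != baseline
    }


if __name__ == "__main__":
    for name, (dp, dr, dm) in gains_over_baseline(ABLATION).items():
        print(f"{name}: precision {dp:+.1f}, recall {dr:+.1f}, mAP {dm:+.1f}")
```

Read this way, SimAM alone changes precision and recall by -0.5 points each while raising mAP by 0.3, MSDE alone adds 2.7 points of precision, and the combined model adds 4.5, 2.5 and 1.2 points to precision, recall and mAP respectively.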

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Cui, K.; Huang, M.; Zhang, W.; Yang, G.; Huang, Y.; Wu, Z.; Zhai, Z.; Cheng, C. Research on Landslide Hazard Detection in Ya’an Region Based on an Improved YOLO Model. Remote Sens. 2026, 18, 957. https://doi.org/10.3390/rs18060957