Knowledge-Aided Multichannel SAR Clutter Suppression Algorithm in Complex Scenes

Highlights

- Superpixel segmentation is applied to the single-channel imaging result and combined with adaptive superpixel fusion to achieve refined classification of the different regions within complex scenes.

- A two-step knowledge-aided clutter suppression algorithm combines multi-strategy clutter suppression preprocessing with residual clutter suppression, suppressing clutter while preserving the integrity of weak target echoes.

- The proposed knowledge information extraction algorithm addresses the timeliness and compatibility issues inherent in traditional knowledge information, supporting the further development of knowledge-aided algorithms in SAR systems.

- The knowledge-aided processing scheme provides an engineering solution for multichannel SAR clutter suppression in complex scenes and a reference point for subsequent research.

Abstract

1. Introduction

- We propose using the single-channel image as the source of knowledge information. Because this image is formed from the SAR echoes themselves, it matches the echoes well; because it is generated immediately after echo acquisition, its timeliness is also guaranteed.

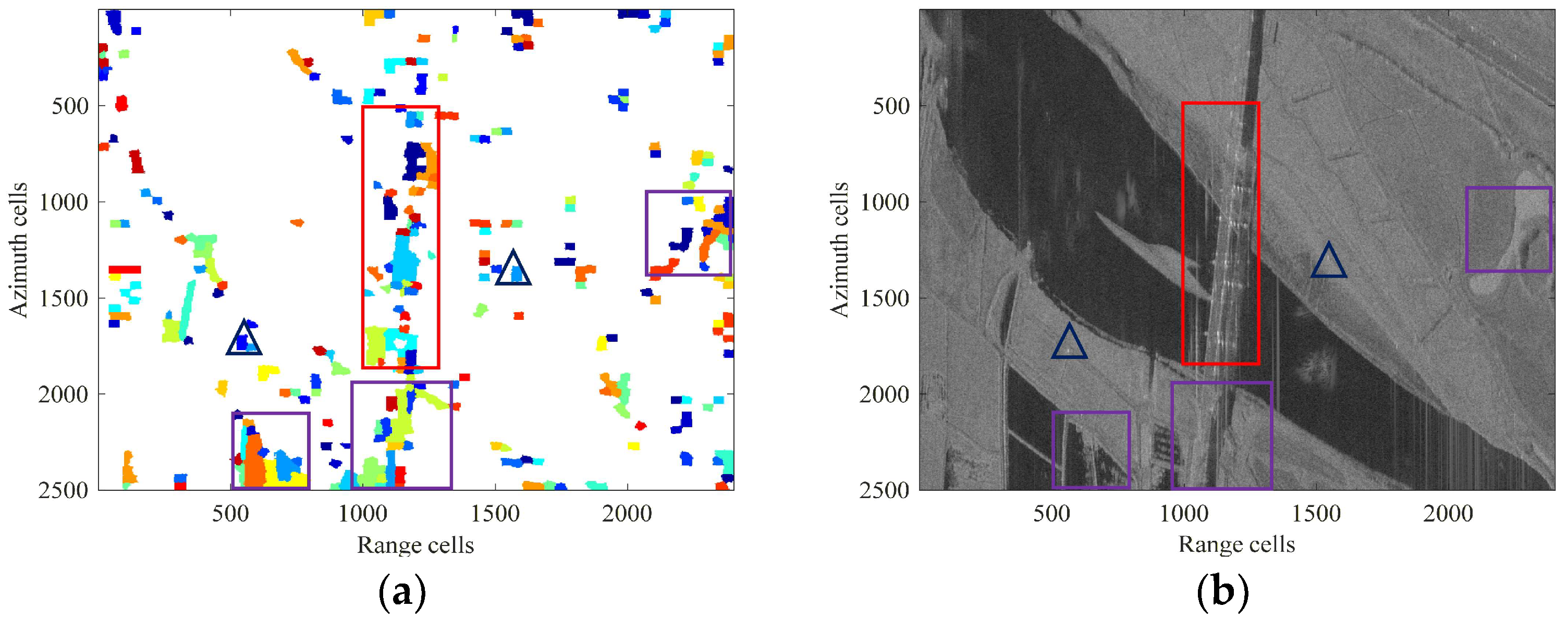

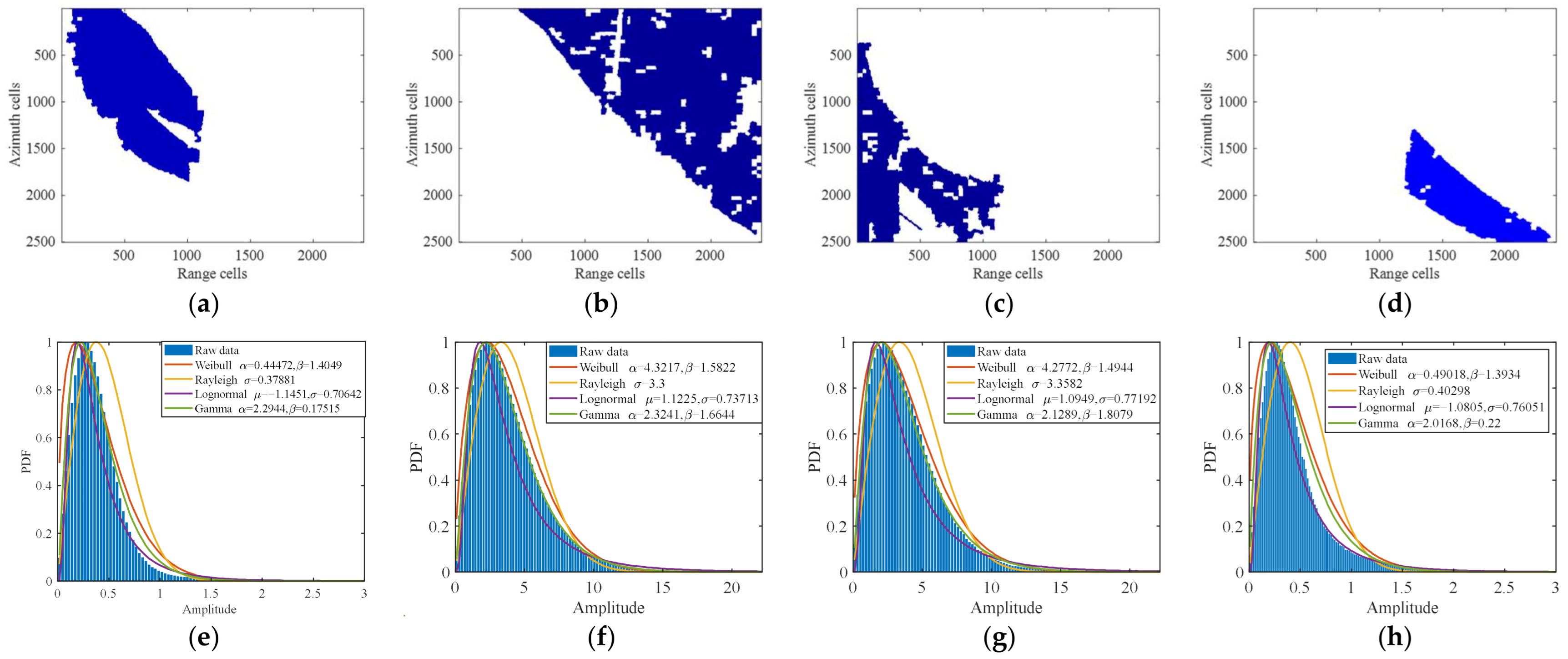

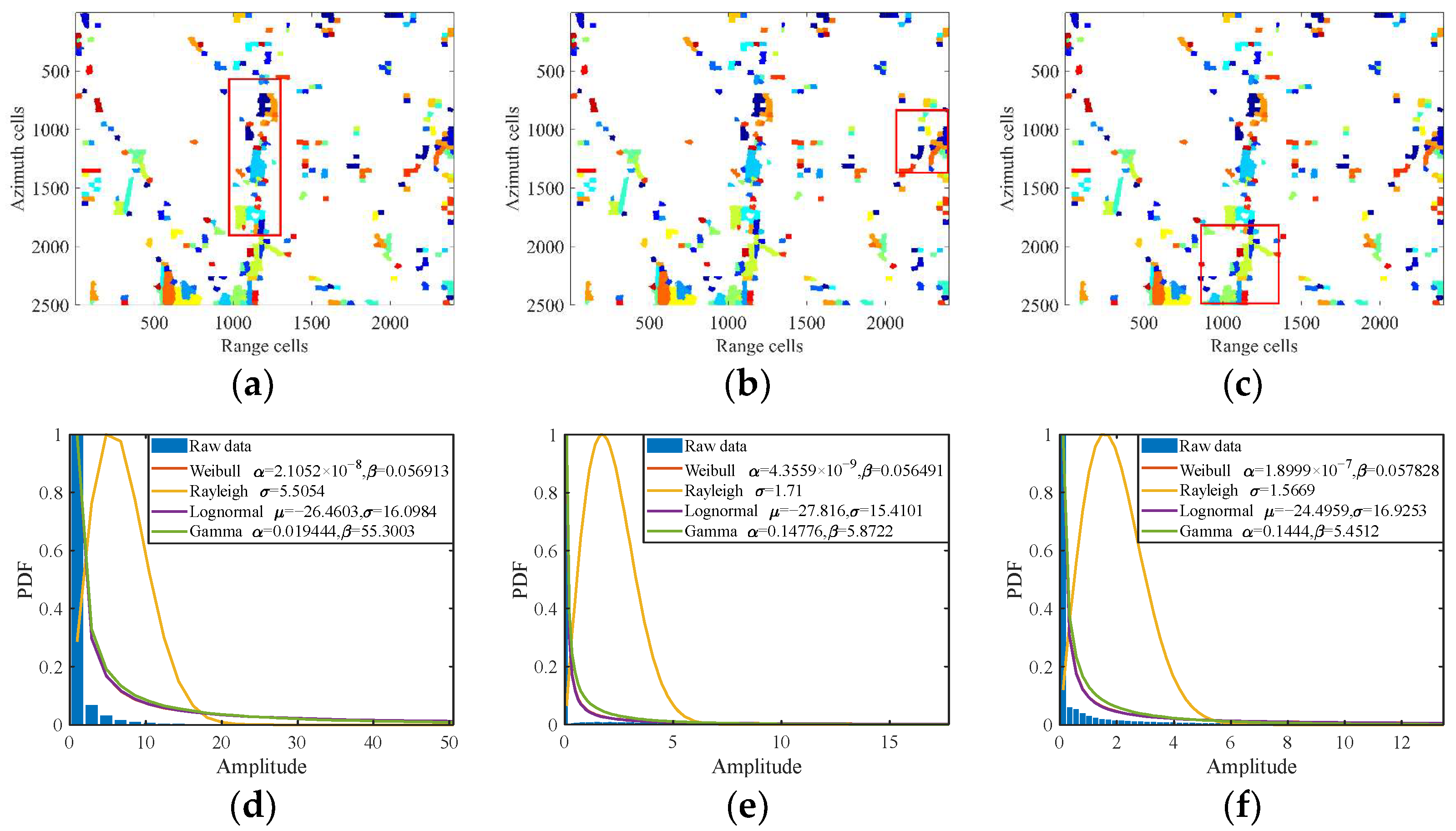

- For knowledge information extraction, we propose a method that applies superpixel-level processing to the knowledge information source. In the superpixel fusion stage, a fusion algorithm performs adaptive classification of the scene, enabling refined classification into homogeneous and nonhomogeneous regions. The results show that complex scenes contain a large number of both homogeneous and nonhomogeneous regions, which confirms the importance of refined classification for such scenes.

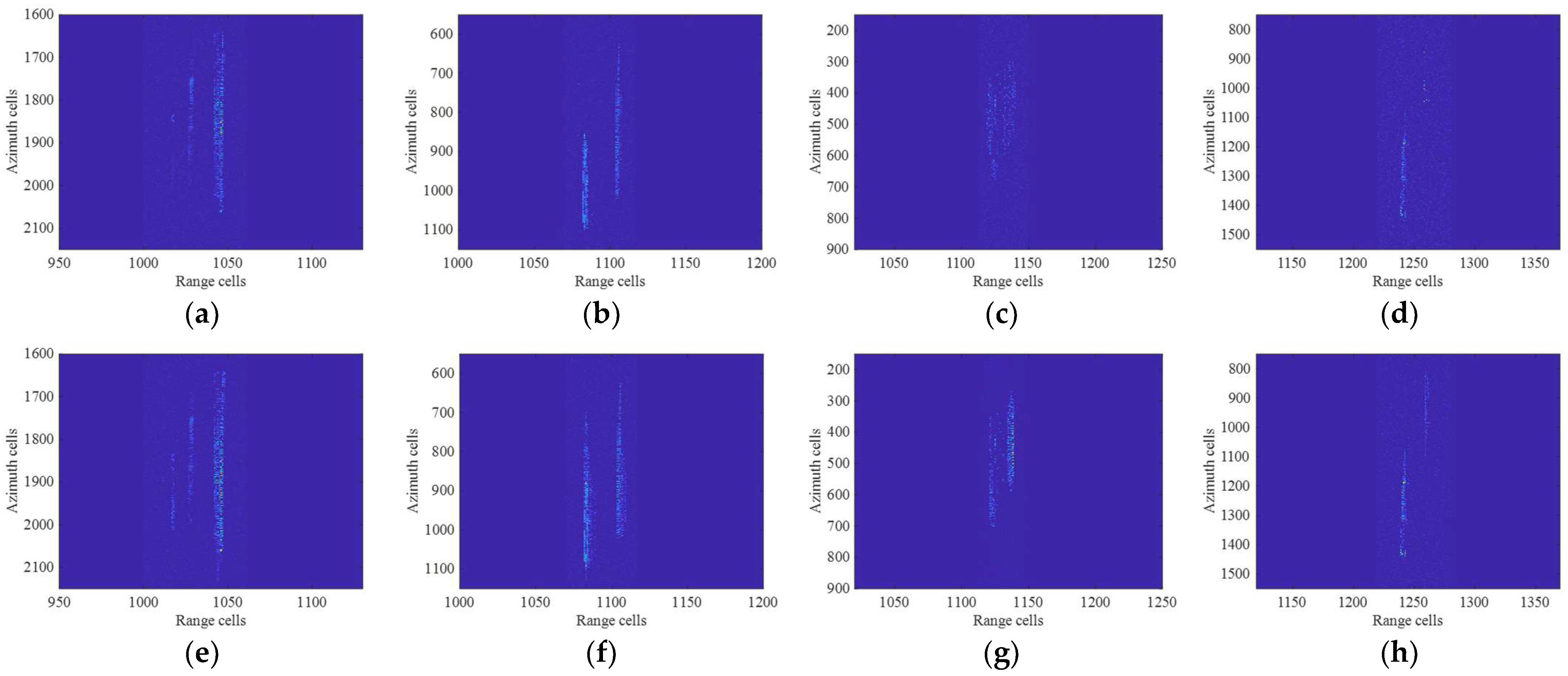

- Based on the refined classification results for complex scenes, we propose a two-step processing method that combines multi-strategy clutter suppression preprocessing with sparse Bayesian residual clutter suppression. The effect of each step is analyzed separately using measured data. The results indicate that incorporating knowledge information improves the clutter suppression preprocessing stage and provides a flatter clutter background for residual clutter suppression, which verifies the effectiveness of the proposed algorithm for clutter suppression in complex scenes.
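The role that scene classification can play in clutter suppression can be sketched in a few lines. The example below is a minimal illustration under stated assumptions, not the authors' preprocessing: it assumes a two-channel co-registered image, a region label per pixel, and a standard MVDR-type filter whose sample covariance is estimated only from pixels that share a label. The function name `regionwise_adaptive_filter`, the `loading` factor, the steering vector `s`, and the simulated data are all hypothetical.

```python
import numpy as np

def regionwise_adaptive_filter(x, labels, s, loading=1e-3):
    """Apply an MVDR-type filter whose covariance is estimated per region.

    x       : (channels, pixels) complex multichannel image samples
    labels  : (pixels,) integer region label from scene classification
    s       : (channels,) assumed target steering vector
    loading : assumed diagonal-loading factor relative to mean channel power
    """
    n_ch = x.shape[0]
    y = np.empty(x.shape[1], dtype=complex)
    for r in np.unique(labels):
        idx = labels == r
        xr = x[:, idx]
        # Sample covariance from training pixels of this region only.
        R = xr @ xr.conj().T / xr.shape[1]
        R = R + loading * np.trace(R).real / n_ch * np.eye(n_ch)
        Ri_s = np.linalg.solve(R, s)
        w = Ri_s / (s.conj() @ Ri_s)      # unit gain on the assumed target
        y[idx] = w.conj() @ xr            # y = w^H x for every pixel in the region
    return y

# Toy scene (simulated, hypothetical): weak clutter in region 0, strong in region 1.
rng = np.random.default_rng(0)
n = 2000
labels = np.repeat([0, 1], n // 2)
sigma = np.where(labels == 0, 1.0, 10.0)
clutter = sigma * (rng.standard_normal(n) + 1j * rng.standard_normal(n)) / np.sqrt(2)
noise = 0.01 * (rng.standard_normal((2, n)) + 1j * rng.standard_normal((2, n)))
x = np.vstack([clutter, clutter]) + noise   # stationary clutter is identical in both channels
s = np.array([1.0, -1.0]) / np.sqrt(2)      # assumed target with a pi inter-channel phase
y = regionwise_adaptive_filter(x, labels, s)

for r in (0, 1):
    p_in = np.mean(np.abs(x[0, labels == r]) ** 2)
    p_out = np.mean(np.abs(y[labels == r]) ** 2)
    print(f"region {r}: clutter power reduced by {10 * np.log10(p_in / p_out):.1f} dB")
```

Restricting the training pixels to one label keeps samples with different clutter statistics out of the same covariance estimate, which is the general motivation for using classification results as knowledge information.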

2. Multichannel SAR Echo Model

3. The Extraction of Knowledge Information

3.1. Superpixel Segmentation Algorithm for Refined Classification of Complex Scenes

3.2. Superpixel Fusion Algorithm for Refined Classification of Complex Scenes

| Algorithm 1: Adaptive superpixel fusion |
|---|
| Inputs: superpixel segmentation results; |
| Outputs: the refined classification results of the complex scene; |
| Initialization: starting superpixel Xstart, similarity label set simlabel, alternative set altlabel, dissimilarity set diffmeasure. |
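As a rough illustration of how a fusion pass over the sets named in Algorithm 1 might look, the sketch below greedily grows a region from a starting superpixel and merges adjacent superpixels whose mean intensity is close to the current region mean. The steps of Algorithm 1 are not given in this text, so this is not the authors' procedure: the dissimilarity measure (absolute difference of means), the threshold `tau`, the adjacency dictionary, and the function name `fuse_superpixels` are assumptions made for illustration only.

```python
import numpy as np

def fuse_superpixels(means, adjacency, tau):
    """Greedy region growing over superpixels (illustrative only).

    means     : mean intensity of each superpixel (assumed feature)
    adjacency : dict {superpixel index: set of neighbouring indices}
    tau       : assumed dissimilarity threshold for merging
    Returns an integer region label per superpixel.
    """
    means = np.asarray(means, dtype=float)
    n = len(means)
    region = -np.ones(n, dtype=int)          # -1 means "not yet assigned"
    next_label = 0
    for x_start in range(n):
        if region[x_start] != -1:
            continue
        simlabel = [x_start]                 # superpixels fused into this region
        region[x_start] = next_label
        altlabel = set(adjacency[x_start])   # candidates bordering the region
        while altlabel:
            cand = altlabel.pop()
            if region[cand] != -1:
                continue
            region_mean = float(np.mean(means[simlabel]))
            diffmeasure = abs(means[cand] - region_mean)
            if diffmeasure < tau:
                simlabel.append(cand)
                region[cand] = next_label
                # Unassigned neighbours of the new member become candidates.
                altlabel |= {j for j in adjacency[cand] if region[j] == -1}
        next_label += 1
    return region

# Six superpixels in a chain 0-1-2-3-4-5 with hypothetical mean intensities.
means = [1.0, 1.1, 0.9, 5.0, 5.2, 1.0]
adjacency = {0: {1}, 1: {0, 2}, 2: {1, 3}, 3: {2, 4}, 4: {3, 5}, 5: {4}}
labels = fuse_superpixels(means, adjacency, tau=0.5)
print(labels.tolist())
```

In this toy chain, superpixel 5 has the same mean as the first region but is not adjacent to it, so it stays a separate region; a candidate rejected once is also not revisited, which a fuller implementation might handle differently.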

4. Knowledge-Aided Clutter Suppression Method in Complex Scene

4.1. Multi-Strategy Clutter Suppression Preprocessing

4.2. Residual Clutter Suppression Combined with Sparse Bayesian

5. Experimental Results

5.1. Extraction of Knowledge Information

5.2. Clutter Suppression Performance in Complex Scenes

5.3. Comparison of the Proposed Algorithm with Existing Algorithms

6. Discussion

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Parameters | Values |
|---|---|
| Platform velocity (m/s) | 87 |
| Altitude (km) | 7.5 |
| Pulse repetition frequency (Hz) | 800 |
| Baseline (m) | 0.18 |
| Sampling frequency (MHz) | 88 |
| Pulse width (μs) | 44 |
| Bandwidth (MHz) | 420 |
| Slant range (km) | 10 |
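A few derived quantities help put these parameters in context. The short calculation below uses only the table values and textbook relations; it assumes that the listed bandwidth is the transmitted chirp bandwidth and that the listed baseline is the along-track separation of the effective phase centres, neither of which is stated here. The variable names are illustrative.

```python
C = 299_792_458.0        # speed of light (m/s)

velocity = 87.0          # platform velocity (m/s)
prf = 800.0              # pulse repetition frequency (Hz)
baseline = 0.18          # baseline (m), assumed along-track phase-centre spacing
bandwidth = 420e6        # bandwidth (Hz), assumed transmitted chirp bandwidth
pulse_width = 44e-6      # pulse width (s)

range_resolution = C / (2 * bandwidth)    # theoretical slant-range resolution (m)
step_per_pulse = velocity / prf           # platform travel between pulses (m)
baseline_delay = baseline / velocity      # time for the platform to cover one baseline (s)
pulses_per_baseline = baseline_delay * prf
time_bandwidth = pulse_width * bandwidth  # chirp time-bandwidth product

print(f"range resolution    : {range_resolution:.3f} m")
print(f"travel per pulse    : {step_per_pulse:.5f} m")
print(f"baseline delay      : {baseline_delay * 1e3:.3f} ms")
print(f"pulses per baseline : {pulses_per_baseline:.3f}")
print(f"time-bandwidth      : {time_bandwidth:.0f}")
```

Under these assumptions the theoretical slant-range resolution is about 0.36 m and the platform advances about 0.109 m per pulse, so one baseline corresponds to a non-integer number of pulse intervals.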

| Regions | Gamma | Weibull | Rayleigh | Lognormal |
|---|---|---|---|---|
| Homo region 1 | 0.0575 | 0.0804 | 0.2037 | 0.0465 |
| Homo region 2 | 0.0143 | 0.0319 | 0.1152 | 0.0333 |
| Homo region 3 | 0.0178 | 0.0344 | 0.1365 | 0.0367 |
| Homo region 4 | 0.0572 | 0.0730 | 0.1995 | 0.0266 |

| Regions | Gamma | Weibull | Rayleigh | Lognormal |
|---|---|---|---|---|
| Nonhomo region 1 | 0.0573 | 0.0730 | 0.8617 | 0.0580 |
| Nonhomo region 2 | 0.2269 | 0.1552 | 0.7781 | 0.1752 |
| Nonhomo region 3 | 0.0929 | 0.2037 | 0.7212 | 0.1817 |

| Regions | | |
|---|---|---|
| Homogeneous 1 | 1.001 | 43.146 |
| Homogeneous 2 | 1.237 | 42.117 |
| Homogeneous 3 | 0.9576 | 37.166 |
| Homogeneous 4 | 0.9831 | 46.245 |
| Nonhomogeneous 1 | 0.8951 | 2.574 |
| Nonhomogeneous 2 | 1.4235 | 1.485 |
| Nonhomogeneous 3 | 1.3219 | 1.746 |

| Different Regions | SCNR after Clutter Suppression Preprocessing (dB) | SCNR after Residual Clutter Suppression (dB) | SCNR Improvement (dB) |
|---|---|---|---|
| Targets of part one | 8.23 | 27.82 | 19.59 |
| Targets of part two | 6.87 | 25.04 | 18.17 |
| Targets of part three | 6.53 | 26.27 | 19.74 |
| Targets of part four | 5.48 | 24.76 | 19.28 |

| Different Regions | SCNR of Traditional Algorithm (dB) | SCNR of the Proposed Algorithm (dB) | SCNR Improvement (dB) |
|---|---|---|---|
| Targets of part one | 19.68 | 27.82 | 8.14 |
| Targets of part two | 15.23 | 25.04 | 9.81 |
| Targets of part three | 12.38 | 26.27 | 13.89 |
| Targets of part four | 17.66 | 24.76 | 7.10 |
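The improvement columns in the two SCNR tables above are plain differences in decibels. The short check below reproduces them from the tabulated values and converts the gain over the traditional algorithm into a linear power ratio; the variable names are illustrative, and the numbers are copied from the tables.

```python
import numpy as np

# SCNR values in dB for target parts one to four, copied from the tables above.
preprocessing = np.array([8.23, 6.87, 6.53, 5.48])     # after clutter suppression preprocessing
proposed = np.array([27.82, 25.04, 26.27, 24.76])      # after residual clutter suppression
traditional = np.array([19.68, 15.23, 12.38, 17.66])   # traditional algorithm

gain_two_step = proposed - preprocessing        # gain of the second step (dB)
gain_vs_traditional = proposed - traditional    # gain over the traditional algorithm (dB)
linear_factor = 10 ** (gain_vs_traditional / 10)  # same gain as a power ratio

print(np.round(gain_two_step, 2))
print(np.round(gain_vs_traditional, 2))
print(np.round(linear_factor, 1))
```

Expressed as power ratios, the tabulated gains over the traditional algorithm range from roughly a factor of 5 to just under a factor of 25.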

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhang, Y.; Kang, N.; Huang, Z.; Hua, Q.; Ren, H. Knowledge-Aided Multichannel SAR Clutter Suppression Algorithm in Complex Scenes. Remote Sens. 2026, 18, 879. https://doi.org/10.3390/rs18060879
