DEM-Assisted Topography-Conditioned and Orientation-Adaptive Siamese Network for Cross-Region Landslide Change Detection

Highlights

- Proposes a DEM-assisted Siamese network that combines topography-conditioned feature modulation with orientation-adaptive convolutions for cross-region landslide change detection.

- Demonstrates improved robustness and boundary delineation under a site-wise cross-region evaluation protocol, supported by ablation evidence for the two modules.

- Topography-conditioned modulation reduces pseudo-change and stabilizes landslide change detection across regions with strong domain shifts.

- Orientation-adaptive convolutions better capture elongated landslide structures, improving change-map coherence and boundary detail.

- Terrain priors from DEM can be used as physically meaningful conditioning signals to enhance generalization of optical change detectors in mountainous and heterogeneous environments.

- Geometry-aware operators are a practical design choice for hazard-mapping tasks whose targets have a dominant direction, such as elongated landslide scars.
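The topography-conditioned modulation named in the highlights can be illustrated with a generic FiLM-style layer (the ablation table lists a FiLM component). The block below is a minimal pure-Python sketch, not the authors' implementation: the helper names `linear` and `film_modulate`, the three-value terrain embedding, the projection weights, and the identity-centred scale `1 + gamma` are all assumptions made for illustration.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]
FeatureMap = List[Matrix]  # indexed as [channel][row][col]


def linear(weights: Matrix, bias: Vector, x: Vector) -> Vector:
    """Plain affine map: one output per row of `weights`."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def film_modulate(features: FeatureMap, gamma: Vector, beta: Vector) -> FeatureMap:
    """Scale and shift every channel: y = (1 + gamma_c) * x + beta_c.

    Centring the scale at 1 is an assumption of this sketch; it means that
    gamma = beta = 0 leaves the optical features unchanged.
    """
    return [
        [[(1.0 + g) * value + b for value in row] for row in channel]
        for channel, g, b in zip(features, gamma, beta)
    ]


# Hypothetical terrain embedding (e.g. pooled slope, aspect, curvature statistics).
terrain_embedding = [0.5, -1.0, 2.0]

# Hypothetical learned projections from the embedding to per-channel gamma and beta.
w_gamma = [[0.2, 0.0, 0.1], [0.0, 0.5, 0.0]]
w_beta = [[0.0, 0.0, 0.5], [1.0, 0.0, 0.0]]
zero_bias = [0.0, 0.0]

gamma = linear(w_gamma, zero_bias, terrain_embedding)
beta = linear(w_beta, zero_bias, terrain_embedding)

# Two-channel, 1 x 2 optical feature map.
features = [[[1.0, 2.0]], [[3.0, 4.0]]]
modulated = film_modulate(features, gamma, beta)
```

In this toy case the terrain embedding amplifies the first channel and damps the second, which is the kind of channel-wise reweighting a DEM-derived prior could apply to optical features.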

Abstract

1. Introduction

2. Related Work

2.1. Attention Mechanisms in Change Detection

2.2. Feature Modulation for Multimodal Analysis

3. Method

3.1. Siamese Encoder and Change-Aware Feature Fusion

3.2. Geomorphic-Aware Feature Modulation

3.2.1. Prior Branch: Terrain Embedding Encoder

3.2.2. Dynamic Feature Modulation

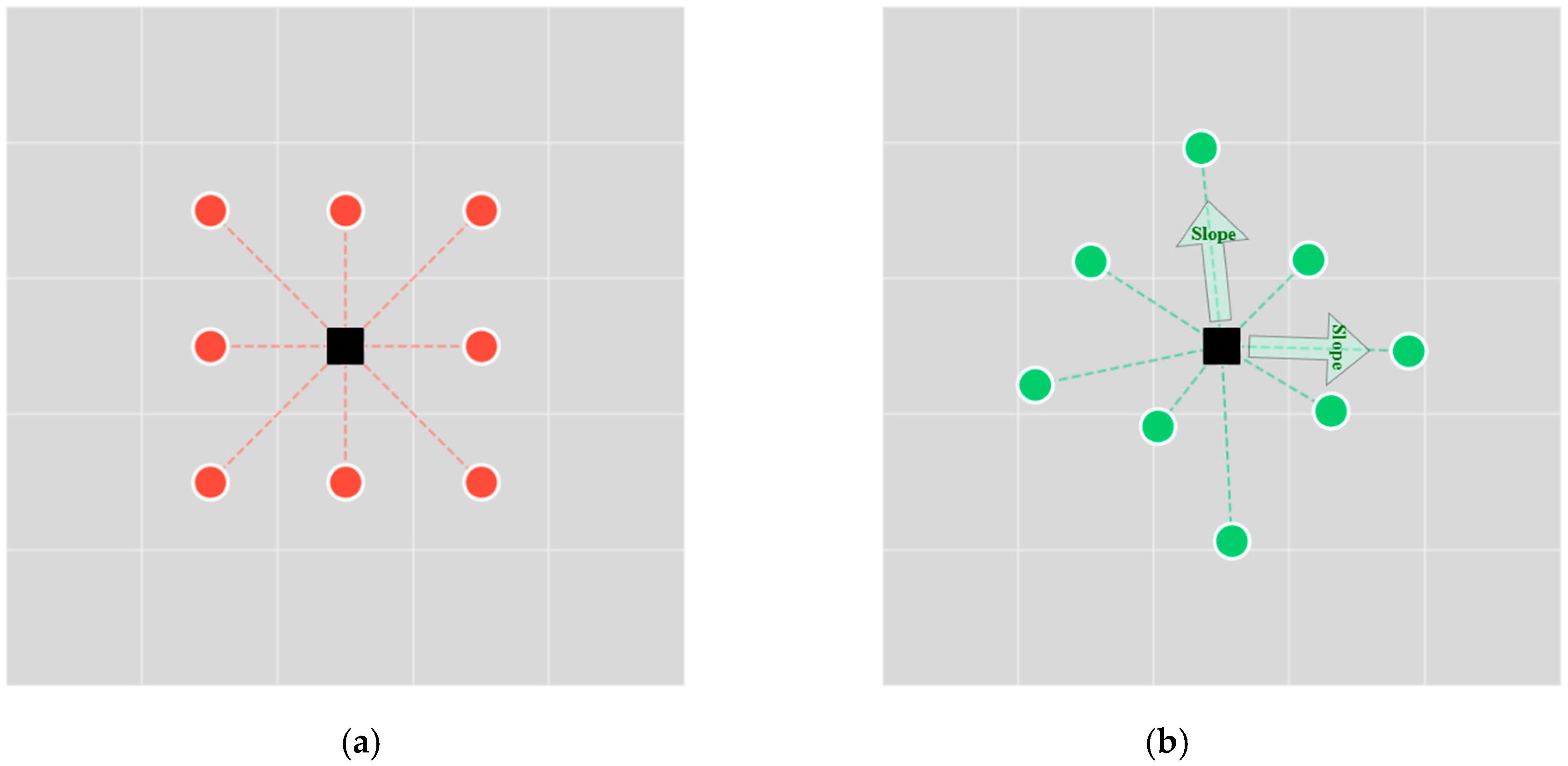

3.3. Orientation-Adaptive Attention Convolutions

3.3.1. Orientation Scoring

3.3.2. Dynamic Kernel Adaptation and Aggregation

3.3.3. Residual Feature Transformation

3.4. Decoder and Output Heads

3.4.1. ASPP for High-Level Context

3.4.2. Progressive Reconstruction Pathway

3.4.3. Dual-Task Prediction

3.5. Loss Function

3.6. Evaluation Metrics and Baseline Methods

4. Experiments and Results

4.1. Datasets

4.2. Experimental Setup

4.2.1. Data Split Strategy

4.2.2. Topographic Prior Pre-Processing

4.2.3. Implementation Details

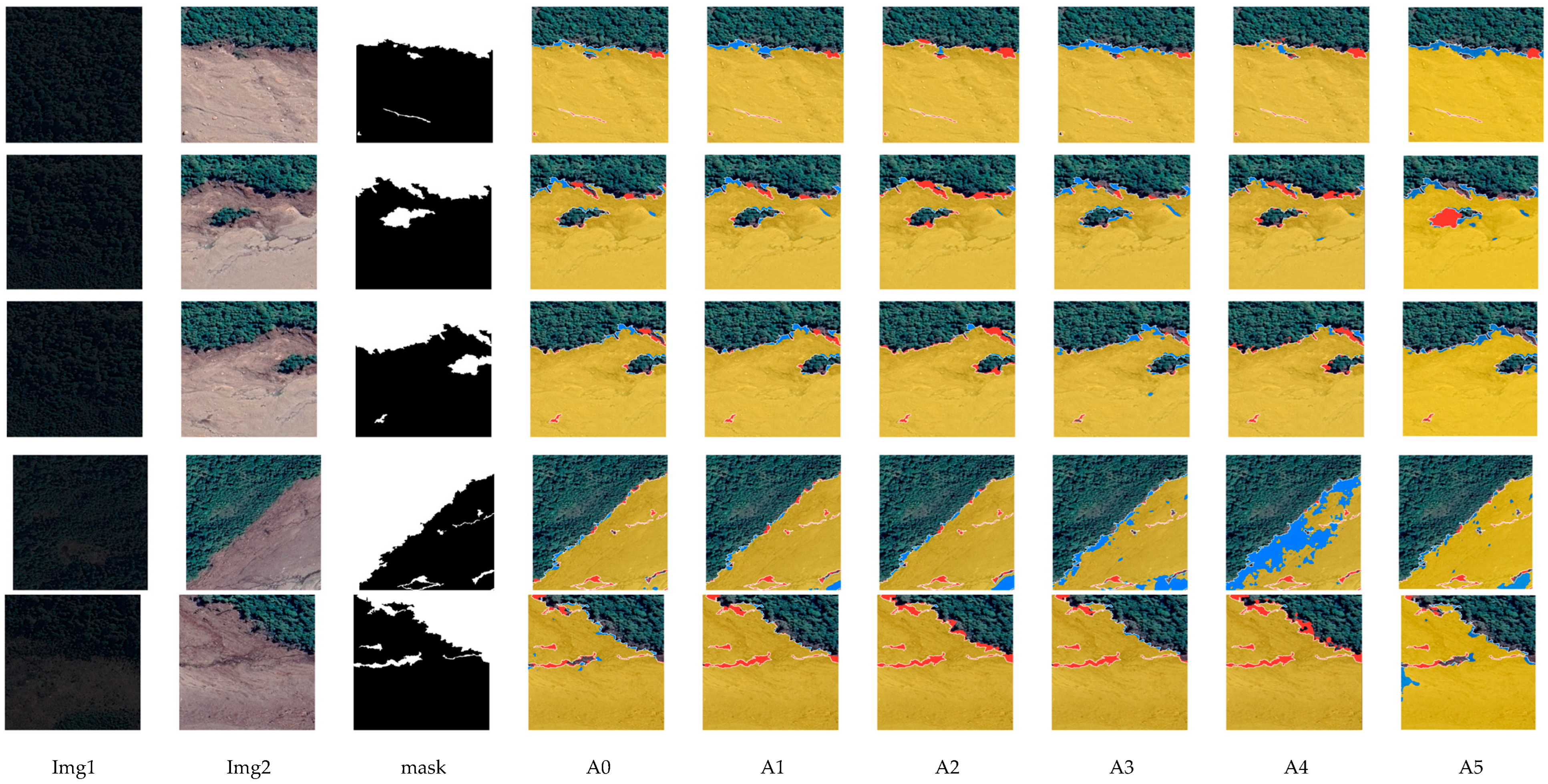

4.3. Quantitative Results and Analysis

4.4. Ablation Results and Analysis

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| Country | City | Central Coordinates | Image Size | Time 1 | Time 2 | Triggers |
|---|---|---|---|---|---|---|
| Vietnam | A Luoi | 107.321°E, 16.406°N | 7346 × 4096 | 02/2018 | 02/2021 | Rainfall |
| Japan | Asakura | 130.78°E, 33.402°N | 5632 × 3584 | 04/2017 | 09/2017 | Earthquake |
| Iceland | Askja | 16.732°E, 65.106°N | 4151 × 2763 | 09/2017 | 08/2020 | Snow and glacier melting |
| United States | Big Sur | 121.43°W, 35.865°N | 1748 × 1748 | 04/2015 | 06/2017 | Loose soil and rock splitting |
| Zimbabwe | Chimanimani | 32.870°E, 19.818°S | 10,808 × 7424 | 11/2018 | 03/2019 | Tropical cyclone |
| China | Jiuzhaigou | 103.787°E, 33.288°N | 5888 × 6313 | 12/2015 | 08/2017 | Earthquake |
| New Zealand | Kaikoura | 173.824°E, 42.245°N | 4977 × 3897 | 03/2016 | 11/2016 | Earthquake |
| India | Kodagu | 75.636°E, 12.470°N | 8704 × 6912 | 03/2017 | 10/2018 | Rainfall |
| Indonesia | Kupang | 123.645°E, 10.206°N | 1946 × 1319 | 02/2021 | 04/2021 | Rainfall |
| Turkey | Kurucasile | 32.607°E, 41.802°N | 8192 × 4608 | 10/2015 | 06/2017 | Flood |
| Chile | Los Lagos | 72.384°W, 43.384°N | 8533 × 4077 | 09/2013 | 01/2018 | Glacier melting and rainfall |
| Kyrgyzstan | Osh | 73.308°E, 40.605°N | 8860 × 7193 | 06/2016 | 06/2018 | Melting snow and rainfall |
| Brazil | Santa Catarina | 49.604°W, 27.075°S | 4864 × 3072 | 11/2018 | 02/2021 | Torrential rain |
| China | Shimen | 110.652°W, 29.890°N | 1861 × 1749 | 02/2018 | 11/2020 | Rainfall |
| China | Taitung | 120.909°E, 22.851°N | 3840 × 3840 | 03/2010 | 10/2011 | Typhoon and rainfall |
| Georgia | Tbilisi | 44.674°E, 41.689°N | 5588 × 5632 | 08/2013 | 06/2015 | Flood |
| Mexico | Tenejapa | 92.551°E, 16.809°S | 4200 × 1301 | 07/2020 | 02/2021 | Hurricane |
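The highlights refer to a site-wise cross-region evaluation protocol, and the table above lists 17 sites. One way such a protocol could be set up is to assign whole sites to folds, so that no site contributes tiles to both training and testing. The sketch below is an illustration under stated assumptions, not the authors' split: the function name `site_wise_folds`, the choice of four folds, the round-robin assignment, and the seed are all hypothetical.

```python
import random
from typing import Dict, List

# Site names taken from the dataset table above.
SITES = [
    "A Luoi", "Asakura", "Askja", "Big Sur", "Chimanimani", "Jiuzhaigou",
    "Kaikoura", "Kodagu", "Kupang", "Kurucasile", "Los Lagos", "Osh",
    "Santa Catarina", "Shimen", "Taitung", "Tbilisi", "Tenejapa",
]


def site_wise_folds(sites: List[str], n_folds: int, seed: int = 0) -> List[Dict[str, List[str]]]:
    """Assign whole sites to folds so that train and test never share a site."""
    shuffled = sorted(sites)                 # fixed starting order for reproducibility
    random.Random(seed).shuffle(shuffled)    # seeded shuffle, independent of global state
    folds = []
    for k in range(n_folds):
        test = shuffled[k::n_folds]          # every n_folds-th site, starting at k
        train = [s for s in shuffled if s not in test]
        folds.append({"train": train, "test": test})
    return folds


# Hypothetical configuration: four folds over the 17 sites.
folds = site_wise_folds(SITES, n_folds=4)
for index, fold in enumerate(folds):
    print(f"fold {index}: test sites = {fold['test']}")
```

Splitting by site rather than by tile keeps spatially correlated tiles from the same landslide event out of the test set, which is what makes the evaluation cross-region.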

| Model | F1 | mIoU | Precision | Recall | Acc | Params (M) | FLOPs | Time (ms) |
|---|---|---|---|---|---|---|---|---|
| FC-EF | 80.12 ± 5.05 | 67.06 ± 7.19 | 77.72 ± 5.19 | 83.11 ± 8.31 | 97.73 ± 0.45 | 7.745 | 12.586 | 1.73 |
| SNUNet-CD | 77.17 ± 1.84 | 62.85 ± 2.45 | 76.29 ± 10.39 | 80.49 ± 12.68 | 97.24 ± 1.01 | 10.276 | 23.09 | 3.03 |
| BIT | 77.39 ± 1.99 | 63.15 ± 2.67 | 75.76 ± 8.57 | 81.09 ± 12.03 | 97.26 ± 0.96 | 11.913 | 8.484 | 1.95 |
| DMINET | 79.08 ± 2.58 | 65.45 ± 3.57 | 77.94 ± 7.01 | 81.56 ± 10.45 | 97.54 ± 0.68 | 6.754 | 14.476 | 11.62 |
| A2Net | 72.12 ± 2.45 | 56.44 ± 2.96 | 67.79 ± 12.00 | 80.80 ± 14.19 | 96.57 ± 1.15 | 3.78 | 3.048 | 4.03 |
| ChangeFormer | 75.45 ± 1.89 | 60.61 ± 2.44 | 77.34 ± 11.71 | 76.66 ± 14.51 | 97.15 ± 1.14 | 41.015 | 202.624 | 13.07 |
| FC-EF-W | 78.32 ± 4.50 | 60.01 ± 5.24 | 67.70 ± 4.52 | 84.30 ± 8.49 | 97.98 ± 0.78 | 70.66 | 112.25 | 6.52 |
| SitsSCD | 75.02 ± 3.46 | 59.99 ± 5.49 | 67.76 ± 8.59 | 84.00 ± 7.45 | 97.54 ± 0.69 | 0.26 | 36.48 | 9.71 |
| DDPM-CD | 79.05 ± 5.37 | 65.36 ± 6.73 | 67.76 ± 8.59 | 87.42 ± 11.23 | 97.32 ± 0.86 | 35.82 | 390 | 340.08 |
| DEMO-Net (Ours) | 85.17 ± 2.96 | 74.26 ± 4.59 | 87.39 ± 4.45 | 83.57 ± 7.55 | 98.05 ± 0.17 | 74.274 | 31.14 | 8.49 |
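The accuracy columns in the table above are standard pixel-wise scores derived from a binary confusion matrix. As a reminder of how they relate, the following sketch computes them from flattened binary masks. It is a minimal illustration, not the authors' evaluation code: the function name `change_metrics` is hypothetical, and mIoU is taken here as the mean of the change and no-change IoU, which is an assumption because the table does not define it.

```python
from typing import Dict, Sequence


def change_metrics(pred: Sequence[int], truth: Sequence[int]) -> Dict[str, float]:
    """Pixel-wise scores for binary change masks (1 = change, 0 = no change)."""
    pairs = list(zip(pred, truth))
    tp = sum(1 for p, t in pairs if p == 1 and t == 1)
    fp = sum(1 for p, t in pairs if p == 1 and t == 0)
    fn = sum(1 for p, t in pairs if p == 0 and t == 1)
    tn = sum(1 for p, t in pairs if p == 0 and t == 0)

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    iou_change = tp / (tp + fp + fn) if tp + fp + fn else 0.0
    iou_background = tn / (tn + fp + fn) if tn + fp + fn else 0.0

    return {
        "precision": precision,
        "recall": recall,
        "f1": f1,
        # Assumption: mIoU averages the two class-wise IoU values.
        "miou": (iou_change + iou_background) / 2,
        "acc": (tp + tn) / len(pairs) if pairs else 0.0,
    }


# Toy masks: 2 true positives, 1 false positive, 1 false negative, 6 true negatives.
pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
truth = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
scores = change_metrics(pred, truth)
```

Because landslide pixels are a small fraction of each scene, accuracy stays high even when many changes are missed, which is why F1 and IoU are the more informative columns for comparing models.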

| Model | Description | Prior | FiLM | AOAC | Loss | F1 | mIoU | Precision | Acc | Recall |
|---|---|---|---|---|---|---|---|---|---|---|
| A0 | DEMO-Net (Ours) | √ | √ | √ | √ | 85.17 | 74.26 | 87.39 | 98.05 | 83.57 |
| A1 | AOAC only | × | × | √ | √ | 80.50 | 67.37 | 85.09 | 90.99 | 79.79 |
| A2 | w/o Focal Tversky Loss | √ | √ | √ | × | 77.82 | 61.30 | 82.48 | 93.04 | 73.54 |
| A3 | w/o FiLM Modulation | √ | × | √ | √ | 73.25 | 57.79 | 77.78 | 96.50 | 69.22 |
| A4 | w/o AOAC Module | √ | √ | × | √ | 72.30 | 56.62 | 76.80 | 97.51 | 68.30 |
| A5 | Baseline | × | × | × | √ | 67.39 | 50.81 | 69.81 | 90.15 | 65.13 |
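Row A2 of the ablation removes the Focal Tversky loss. For readers unfamiliar with it, the sketch below shows the general form of that loss on soft predictions. It is an illustration only: the weights `alpha` and `beta`, the exponent `gamma`, and the convention of raising `1 - TI` to the power `gamma` are commonly used defaults assumed here, not values confirmed by this paper, and the function name `focal_tversky_loss` is hypothetical.

```python
from typing import Sequence


def focal_tversky_loss(
    probs: Sequence[float],
    targets: Sequence[float],
    alpha: float = 0.7,   # assumed weight on false negatives
    beta: float = 0.3,    # assumed weight on false positives
    gamma: float = 0.75,  # assumed focal exponent
    eps: float = 1e-7,
) -> float:
    """Focal Tversky loss on flattened change probabilities and binary targets."""
    tp = sum(p * t for p, t in zip(probs, targets))
    fn = sum((1.0 - p) * t for p, t in zip(probs, targets))
    fp = sum(p * (1.0 - t) for p, t in zip(probs, targets))

    # Tversky index: an overlap score with asymmetric error weights.
    tversky = (tp + eps) / (tp + alpha * fn + beta * fp + eps)

    # Clamp at zero to guard against tiny negative values from rounding.
    return max(0.0, 1.0 - tversky) ** gamma


targets = [1.0, 1.0, 0.0, 0.0]
perfect = focal_tversky_loss([1.0, 1.0, 0.0, 0.0], targets)
one_miss = focal_tversky_loss([1.0, 0.0, 0.0, 0.0], targets)
one_false_alarm = focal_tversky_loss([1.0, 1.0, 1.0, 0.0], targets)
```

With `alpha` larger than `beta`, a missed landslide pixel costs more than a false alarm, which suits the strong class imbalance typical of change maps.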

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Wang, J.; Li, H.; Wu, S.; Nie, G.; Yu, Y.; Fan, Z. DEM-Assisted Topography-Conditioned and Orientation-Adaptive Siamese Network for Cross-Region Landslide Change Detection. Remote Sens. 2026, 18, 702. https://doi.org/10.3390/rs18050702

Wang J, Li H, Wu S, Nie G, Yu Y, Fan Z. DEM-Assisted Topography-Conditioned and Orientation-Adaptive Siamese Network for Cross-Region Landslide Change Detection. Remote Sensing. 2026; 18(5):702. https://doi.org/10.3390/rs18050702

Chicago/Turabian StyleWang, Jing, Haiyang Li, Shuguang Wu, Guigen Nie, Yukui Yu, and Zhaoquan Fan. 2026. "DEM-Assisted Topography-Conditioned and Orientation-Adaptive Siamese Network for Cross-Region Landslide Change Detection" Remote Sensing 18, no. 5: 702. https://doi.org/10.3390/rs18050702

APA StyleWang, J., Li, H., Wu, S., Nie, G., Yu, Y., & Fan, Z. (2026). DEM-Assisted Topography-Conditioned and Orientation-Adaptive Siamese Network for Cross-Region Landslide Change Detection. Remote Sensing, 18(5), 702. https://doi.org/10.3390/rs18050702