In this section, we first introduce the experimental settings, evaluation metrics, loss functions, and the datasets used. We then validate the effectiveness of the proposed modules on the Vaihingen and Potsdam datasets. Finally, we perform both quantitative and qualitative comparisons between our method and other mainstream approaches.

4.4. Experimental Datasets

We employed two distinct remote sensing datasets, ISPRS Vaihingen and ISPRS Potsdam, to evaluate the effectiveness of our model.

Vaihingen: This dataset contains high-resolution aerial images captured over the city of Vaihingen in Germany, with fine-grained manual annotations for six land cover classes: impervious surfaces, buildings, low vegetation, trees, cars, and clutter. The dataset consists of 33 orthorectified images with a spatial resolution of 0.09 m per pixel and image widths ranging from 1887 to 3816 pixels. In our experiments, 17 images were used for training, 1 for validation, and the remaining 15 for testing. All TOP images were cropped into pixel patches.
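The cropping step described above can be illustrated with a small sketch. The patch size is not recoverable from this section, so `patch` and `stride` are hypothetical parameters, and shifting the last window back to the image border (rather than padding) is an assumption, not necessarily the procedure used in the experiments.

```python
def patch_boxes(height, width, patch, stride):
    """Return (top, left, bottom, right) crop windows that cover an image.

    Windows lie on a regular grid; the last row/column is shifted back so
    that it ends exactly at the image border instead of requiring padding.
    `patch` and `stride` are illustrative parameters, not values from the paper.
    """
    if patch > height or patch > width:
        raise ValueError("patch must fit inside the image")

    def starts(length):
        # Regular grid positions, plus one final position flush with the border.
        pos = list(range(0, length - patch + 1, stride))
        if pos[-1] != length - patch:
            pos.append(length - patch)
        return pos

    return [(top, left, top + patch, left + patch)
            for top in starts(height)
            for left in starts(width)]


# Toy example: a 10 x 10 image tiled with 4 x 4 windows and stride 4.
for box in patch_boxes(10, 10, 4, 4):
    print(box)
```

Because image sizes vary within the dataset, a border-aligned last window keeps every patch the same size at the cost of some overlap near the edges.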

Potsdam: This dataset includes 38 high-resolution TOP image tiles, each with a size of pixels, covering the same six land cover classes as the Vaihingen dataset. It provides four multispectral bands, along with auxiliary data such as DSM and NDSM. In our experiments, only the RGB bands were used. A total of 22 images were selected for training, 1 image for validation, and the remaining 14 for testing. Image 7_10 was removed because of annotation errors. The original image tiles were cropped into patches of pixels.

Because very few pixels belong to the clutter category in the ISPRS Vaihingen and Potsdam datasets, this class is not considered in the quantitative evaluation. This choice avoids potential bias in the performance metrics and allows a more accurate assessment of the model’s effectiveness on the major semantic classes.
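To make the effect of excluding clutter concrete, the following sketch computes OA, mF1, and mIoU from a confusion matrix while ignoring selected classes. It is only an illustration: the function name `segmentation_scores` is hypothetical, and discarding both the rows and the columns of ignored classes is an assumption about how the exclusion is applied.

```python
def segmentation_scores(conf, ignore=()):
    """Return OA, mF1 and mIoU from a square confusion matrix.

    conf[i][j] is the number of pixels with ground-truth class i that were
    predicted as class j. Classes in `ignore` are dropped before scoring
    (assumption: both their rows and their columns are discarded).
    """
    keep = [c for c in range(len(conf)) if c not in ignore]
    if not keep:
        raise ValueError("at least one class must be kept")
    sub = [[conf[r][c] for c in keep] for r in keep]
    total = sum(sum(row) for row in sub)

    f1s, ious = [], []
    for k in range(len(sub)):
        tp = sub[k][k]
        fn = sum(sub[k]) - tp                  # pixels of class k that were missed
        fp = sum(row[k] for row in sub) - tp   # pixels wrongly assigned to class k
        denom = tp + fp + fn
        ious.append(tp / denom if denom else 0.0)
        f1s.append(2 * tp / (2 * tp + fp + fn) if denom else 0.0)

    correct = sum(sub[k][k] for k in range(len(sub)))
    return {
        "OA": correct / total if total else 0.0,
        "mF1": sum(f1s) / len(f1s),
        "mIoU": sum(ious) / len(ious),
    }


# Toy three-class matrix; the last class plays the role of clutter.
toy = [[8, 2, 0],
       [1, 9, 0],
       [0, 0, 5]]
print(segmentation_scores(toy, ignore=(2,)))
```

Averaging only over the retained classes prevents a class with very few pixels from dominating the mean F1 and mean IoU through a single noisy per-class score.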

4.5. Ablation Study

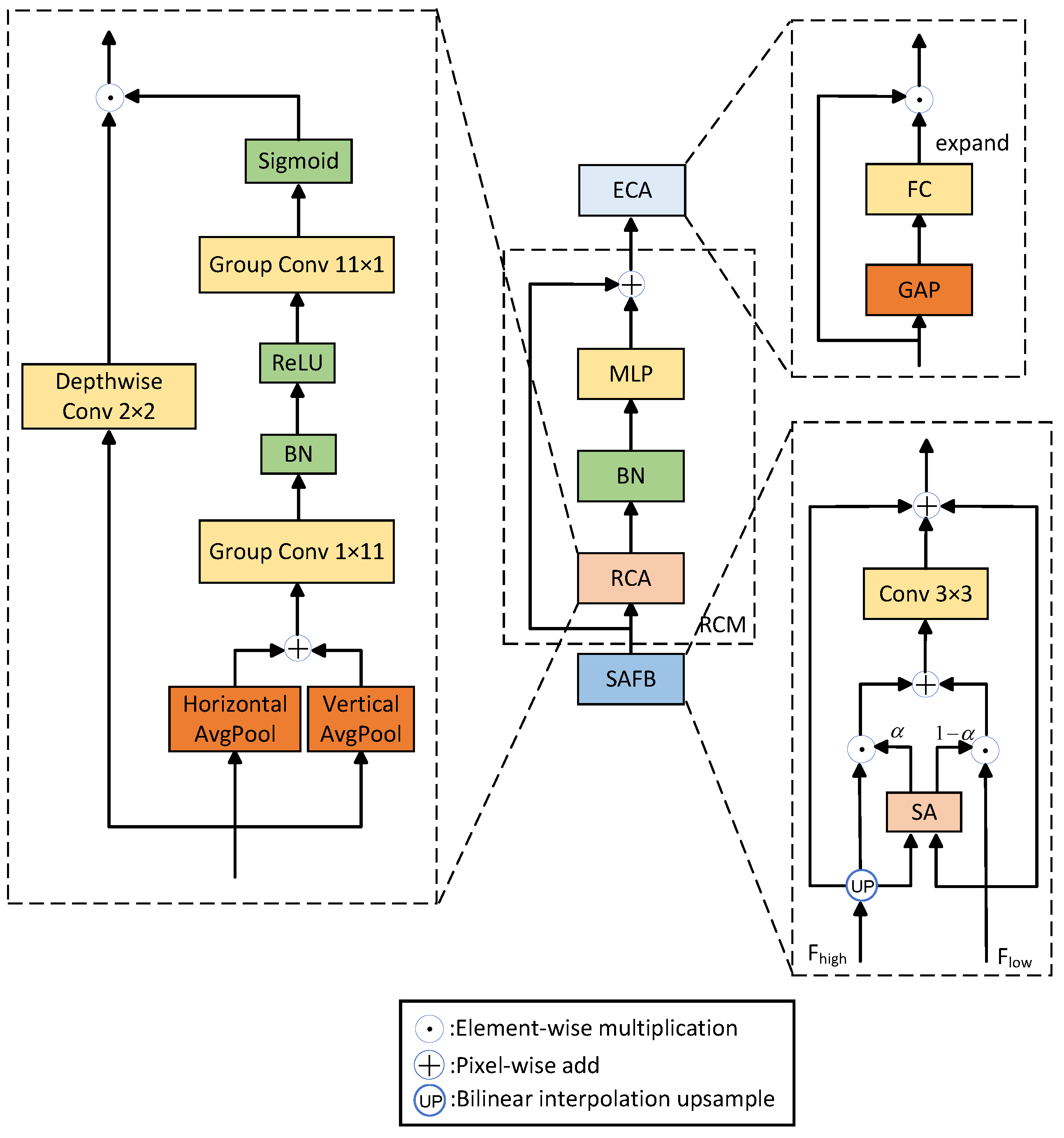

In this section, we conduct ablation studies on the overall architecture using the Vaihingen and Potsdam datasets to verify the effectiveness of each proposed improvement, including the introduction of FF and the design of the ACE, MFR, and WPPM. The overall ablation study results are presented in Table 3 and Table 4. Additional ablation experiments are shown in Table 5, Table 6, Table 7, Table 8 and Table 9.

(1) Ablation Study on the Overall Architecture

Table 3 presents the ablation study results of each module on the Vaihingen dataset. Adding the MFR module to the baseline U-Net with a pre-trained ResNet-18 backbone enables more efficient feature representation, improving mF1, OA, and mIoU by 1.38%, 0.64%, and 2.03%, respectively. Adding the ACE module then further increases mF1, OA, and mIoU by 0.73%, 0.41%, and 1.05%, respectively, owing to ACE’s effective context modeling. The FF module improves the semantic consistency of encoder outputs, yielding further gains of 0.63%, 0.30%, and 0.85% in mF1, OA, and mIoU. Finally, incorporating the WPPM boosts the model’s multiscale modeling capability, raising mF1, OA, and mIoU to 91.89%, 93.61%, and 84.77%, respectively. These results demonstrate the effectiveness of the designed and introduced modules.

Table 4 presents the ablation study results of each module on the Potsdam dataset. Specifically, compared with the baseline network, our model achieves increases of 1.38%, 2.80%, and 3.91% in mF1, OA, and mIoU, respectively, reaching final values of 92.80%, 91.73%, and 86.62%.
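The Vaihingen gains quoted above can be chained as a quick consistency check. The sketch below assumes that the reported gains are cumulative percentage points and that the "baseline + MFR" configuration coincides with the MFR-only scores reported in the MFR ablation (90.11%, 92.71%, and 82.39%); neither assumption is confirmed by the tables themselves, so the derived values are indicative only.

```python
METRICS = ("mF1", "OA", "mIoU")

# Scores and gains quoted in the text, in percentage points.
mfr_only = (90.11, 92.71, 82.39)   # baseline + MFR (from the MFR ablation)
gain_mfr = (1.38, 0.64, 2.03)      # MFR over the baseline
gain_ace = (0.73, 0.41, 1.05)      # ACE on top of MFR
gain_ff = (0.63, 0.30, 0.85)       # FF on top of ACE
final = (91.89, 93.61, 84.77)      # full model including WPPM


def add(a, b):
    """Element-wise sum, rounded to two decimals to hide float noise."""
    return tuple(round(x + y, 2) for x, y in zip(a, b))


def sub(a, b):
    """Element-wise difference, rounded to two decimals."""
    return tuple(round(x - y, 2) for x, y in zip(a, b))


baseline = sub(mfr_only, gain_mfr)                   # implied baseline scores
before_wppm = add(add(mfr_only, gain_ace), gain_ff)  # baseline + MFR + ACE + FF
implied_wppm_gain = sub(final, before_wppm)          # what WPPM adds last

for name, b, g in zip(METRICS, baseline, implied_wppm_gain):
    print(f"{name}: implied baseline {b:.2f}, implied WPPM gain {g:.2f}")
```

Under these assumptions, the final WPPM step accounts for roughly 0.42, 0.19, and 0.48 points of mF1, OA, and mIoU, which is smaller than the gain WPPM yields when added directly to the baseline, as would be expected when modules are stacked.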

(2) Ablation Study on Different Encoders

Table 5 presents an ablation study on different encoder backbones for CFENet on the Vaihingen dataset. As shown, employing stronger encoders such as ResNet50 [51] and Swin-Tiny [56] leads to only marginal improvements in mIoU compared with ResNet18. Specifically, Swin-Tiny achieves the highest mIoU of 85.03%, which is only 0.26% higher than that of ResNet18. However, this minor performance gain comes at the cost of a significantly increased number of parameters and computational complexity. Considering the favorable trade-off between segmentation accuracy and model efficiency, ResNet18 is adopted as the default encoder in the following experiments.

(3) Ablation Study on the MFR Module

We conducted additional ablation experiments on the MFR module using the Vaihingen dataset. We added only the MFR module to the baseline model and varied the kernel sizes (excluding the

branch) in the parallel branches. The experimental results are shown in Table 6.

In Table 6, when using

convolutions, the network achieved an mF1 of 90.11%, OA of 92.71%, and mIoU of 82.39%. However, when using

convolutions with a larger receptive field, performance did not improve significantly, whereas the number of parameters and the computational cost increased substantially. We tentatively attribute this to the relatively small size of feature maps in the shallow decoder, where larger kernels do not offer a clear advantage over smaller ones in capturing fine details. Further increasing the kernel size to

still did not improve performance and only added computational burden, which is consistent with this explanation. Therefore, we chose to use

convolutions in the MFR module.
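The growth in cost with kernel size follows directly from how a dense convolution is parameterized. The sketch below counts weights and multiply-accumulate operations for a single layer; the kernel sizes, channel width, and feature-map size are hypothetical values chosen for illustration and are not taken from the MFR design.

```python
def conv2d_cost(c_in, c_out, k, h, w, bias=True):
    """Parameters and multiply-accumulates (MACs) of a dense k x k convolution.

    Assumes stride 1 and 'same' padding, so the output map is also h x w.
    """
    weights = c_in * c_out * k * k
    params = weights + (c_out if bias else 0)
    macs = weights * h * w          # one MAC per weight per output position
    return params, macs


# Hypothetical setting: 64 -> 64 channels on a 32 x 32 feature map.
ref_params, ref_macs = conv2d_cost(64, 64, 3, 32, 32, bias=False)
for k in (3, 5, 7):
    params, macs = conv2d_cost(64, 64, k, 32, 32, bias=False)
    print(f"k={k}: {params} params, {macs} MACs, "
          f"{macs / ref_macs:.2f}x the cost of k=3")
```

Since both quantities scale with the square of the kernel size, enlarging the kernel multiplies the cost of each branch quickly, which matches the observation that larger kernels raised the parameter count and computation without a corresponding accuracy gain.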

We also conducted an ablation study on the number of stacked MFR modules (denoted as K) in the decoder, and the results are presented in Table 7.

In Table 7, when K = 1, the output from the ACE module is passed through a single MFR block for feature reconstruction, achieving mF1, OA, and mIoU scores of 89.12%, 92.33%, and 81.08%, respectively. When K = 2, the performance improved by 0.99%, 0.38%, and 1.31%, respectively, a noticeable gain; however, this also increased the number of parameters by 2.97 M and the computational cost by 0.44 G. When K was increased further, the performance did not improve significantly, while the computational burden continued to grow. Therefore, we chose to stack two MFR modules in series in the decoder.

(4) Ablation Study on the WPPM

To verify the rationality of the WPPM design, we conducted additional ablation experiments on the Vaihingen dataset. We compared its performance with the original PAPPM module based on the same baseline model, and the results are shown in Table 8.

As presented in Table 8, incorporating PAPPM improved the baseline model’s mF1 score, OA, and mIoU by 0.64%, 0.14%, and 0.72%, respectively. In contrast, incorporating WPPM led to improvements of 1.15%, 0.31%, and 1.13% in mF1, OA, and mIoU, respectively.

We further visualize the effectiveness of the WPPM, as shown in Figure 8. Compared with PAPPM, the proposed WPPM produces more accurate segmentation results for trees and buildings, which can be mainly attributed to the wavelet-based downsampling strategy adopted in the feature extraction stage. Unlike the average pooling operations with multiple window sizes used in PAPPM, the successive wavelet downsampling in WPPM effectively reduces information loss without introducing additional computational overhead. Overall, WPPM leads to more effective feature extraction and consequently delivers more accurate and stable segmentation results.
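The difference between pooling and wavelet downsampling can be illustrated on a toy feature map. The sketch below uses a single-level Haar transform, which is an assumption: this section does not state which wavelet WPPM uses, and sub-band naming conventions (LH versus HL) vary between libraries. It shows that 2 x 2 average pooling keeps only a scaled copy of the low-frequency band, whereas the four Haar sub-bands together allow exact reconstruction.

```python
def haar_downsample(x):
    """One level of 2D Haar analysis on a list of lists with even height/width.

    Returns four half-resolution sub-bands (LL, LH, HL, HH).
    """
    h, w = len(x), len(x[0])
    if h % 2 or w % 2:
        raise ValueError("height and width must be even")
    ll, lh, hl, hh = [[[0.0] * (w // 2) for _ in range(h // 2)] for _ in range(4)]
    for i in range(0, h, 2):
        for j in range(0, w, 2):
            a, b = x[i][j], x[i][j + 1]
            c, d = x[i + 1][j], x[i + 1][j + 1]
            ll[i // 2][j // 2] = (a + b + c + d) / 2   # local average (scaled)
            lh[i // 2][j // 2] = (a + b - c - d) / 2   # top minus bottom
            hl[i // 2][j // 2] = (a - b + c - d) / 2   # left minus right
            hh[i // 2][j // 2] = (a - b - c + d) / 2   # diagonal difference
    return ll, lh, hl, hh


def haar_upsample(ll, lh, hl, hh):
    """Inverse of haar_downsample: rebuild the full-resolution map."""
    h2, w2 = len(ll), len(ll[0])
    x = [[0.0] * (2 * w2) for _ in range(2 * h2)]
    for i in range(h2):
        for j in range(w2):
            s, v, u, t = ll[i][j], lh[i][j], hl[i][j], hh[i][j]
            x[2 * i][2 * j] = (s + v + u + t) / 2
            x[2 * i][2 * j + 1] = (s + v - u - t) / 2
            x[2 * i + 1][2 * j] = (s - v + u - t) / 2
            x[2 * i + 1][2 * j + 1] = (s - v - u + t) / 2
    return x


def avg_pool2x2(x):
    """Plain 2 x 2 average pooling with stride 2."""
    return [[(x[i][j] + x[i][j + 1] + x[i + 1][j] + x[i + 1][j + 1]) / 4
             for j in range(0, len(x[0]), 2)]
            for i in range(0, len(x), 2)]


feature = [[1, 2, 5, 6],
           [3, 4, 7, 8],
           [9, 9, 0, 4],
           [9, 9, 4, 0]]
bands = haar_downsample(feature)
print("average pooling:", avg_pool2x2(feature))
print("LL band / 2    :", [[v / 2 for v in row] for row in bands[0]])
print("exact recovery :", haar_upsample(*bands) == feature)
```

In this toy setting the detail sub-bands carry exactly the edge and texture information that pooling discards, which is one plausible reading of why wavelet-based downsampling reduces information loss.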

(5) Ablation Study on Network Stability

To evaluate the stability of the proposed network, we trained CFENet with different input sizes, including

,

, and

. The experimental results are shown in Table 9.

Table 9 shows that our network remains stable when processing images of varying resolutions, with only minor variations in mIoU. The model achieves the best performance with an input size of

. It is worth noting that when the input size increases to

, small objects such as cars become further reduced in scale, leading to a significant drop in recognition accuracy.

4.6. Comparative Experiments and Results

We compared our model with several mainstream methods on the Vaihingen and Potsdam datasets. The selected methods mainly include convolution-based networks (U-Net [11], PSPNet [41], DeepLabV3+ [57], ABCNet [58] and LOGCAN++ [18]), attention-based networks (MANet [59] and MSGCNet [30]), transformer-based approaches (UNetFormer [27], DC-Swin [16], CMTFNet [31], SAM2Former [60], BEMS-UNetFormer [61] and DeepKANSeg [62]), and a Mamba-based method (RS3Mamba [29]).

(1) Comparison with other methods on the Vaihingen test set

The comparison results with other algorithms on the Vaihingen test set are presented in Table 10.

Table 10 shows that compared to earlier classic models such as U-Net [11] and PSPNet [41], our model improved mF1 by 4.16% and 3.11%, OA by 2.20% and 1.46%, and mIoU by 6.10% and 4.50%. Meanwhile, compared to some recent mainstream models, such as SAM2Former [60] and BEMS-UNetFormer [61], our model’s mF1 increased by 0.40% and 1.30%, OA increased by 0.97% and 1.52%, and mIoU increased by 0.21% and 1.67%, respectively, demonstrating the effectiveness of our approach. Although DeepKANSeg [62] attains slightly higher accuracy on the Tree and Car classes, this improvement can be mainly attributed to its large-scale ViT-L backbone and the nonlinear feature refinement ability introduced by KAN-based modules, which are particularly effective in handling fine-grained or irregular object boundaries. In contrast, our model achieves a more balanced segmentation performance across all land-cover categories, making it more suitable for practical remote sensing applications.

Figure 9 presents a radar-chart visualization of the per-class IoU comparisons between the proposed CFENet and four representative state-of-the-art methods on the Vaihingen test set. As illustrated in the figure, CFENet achieves consistently competitive segmentation performance across all categories.

(2) Comparison with other methods on the Potsdam test set

The comparison results with other algorithms on the Potsdam test set are presented in Table 11.

Table 11 shows that compared to early classic models such as U-Net [11] and PSPNet [41], our method improved mF1 by 6.05% and 2.44%, OA by 6.97% and 2.77%, and mIoU by 9.55% and 4.00%. Meanwhile, compared with some recent mainstream models like SAM2Former [60] and BEMS-UNetFormer [61], our model increased mF1 by 0.44% and 0.37%, OA by 0.67% and 0.17%, and mIoU by 0.61% and 0.50%, further demonstrating the effectiveness of our approach. In distinguishing between the two easily confused categories, “LowVeg” and “Tree”, our model achieved the best performance, with F1 scores of 88.31% and 89.36%, respectively. Additionally, in the segmentation of small targets, our model achieved an F1 score of 96.33% on the “Car” category, outperforming all comparison methods, indicating excellent performance in small-scale object recognition tasks as well. The consistent improvement across various land-cover categories highlights the effectiveness of our proposed architecture and confirms its strong potential for advancing remote sensing semantic segmentation.

Figure 10 illustrates the per-class IoU comparison between CFENet and four mainstream methods on the Potsdam test set using a radar chart. The results indicate that CFENet maintains strong and balanced segmentation performance across different semantic categories.

(3) Qualitative Visualization Analysis

To demonstrate the superior performance of CFENet, we visualized the segmentation results on the Vaihingen test set. The results are shown in Figure 11.

In Figure 11, for the first input image, UNetFormer [27] and RS3Mamba [29] perform poorly in predicting the building category, whereas our proposed model segments the buildings completely. For the second image, CFENet accurately distinguishes impervious surfaces, while other methods, such as MSGCNet [30] and RS3Mamba [29], misclassify these regions as low vegetation due to their similar feature representations. These results further demonstrate that CFENet possesses stronger discriminative capability in semantic recognition.

We also visualized the segmentation results on the Potsdam test set. The results are shown in Figure 12. For the first input image, our algorithm is more sensitive to complex backgrounds, significantly reducing the misclassification rate of the background. In the second input image, the proposed CFENet accurately identifies impervious surfaces, whereas other methods, such as DeepLabV3+ [57] and RS3Mamba [29], misclassify these areas as buildings and background, respectively. This further indicates that our algorithm achieves strong segmentation performance under complex backgrounds and high inter-class similarity.

Meanwhile, we present heatmaps of segmentation results for different classes on the Vaihingen test set, comparing the results before and after using the ACE module. The results are shown in Figure 13. Before applying ACE, the model exhibits certain limitations in modeling different categories. Specifically, it struggles to accurately recognize large objects such as buildings, resulting in confusion and blurry segmentation boundaries. Moreover, the recognition of small objects like cars is also imprecise. After incorporating ACE, the model performs more refined contextual modeling for different categories, particularly for buildings and trees, which exhibit significant scale variations. At the same time, the recognition accuracy of small objects such as cars is noticeably improved.

(4) Model Parameters and Computational Complexity Analysis

We use images of size

as input to evaluate the parameter counts and computational complexity of different models. The results are shown in Table 12.

As shown in Table 12, CFENet has a moderate number of parameters, but its computational complexity is relatively high. This increase in complexity mainly stems from the incorporation of the FF module, which introduces additional multi-scale feature interactions and frequency-aware operations, leading to higher computational costs. Nevertheless, this design choice enables CFENet to capture richer contextual and frequency information, thereby significantly enhancing feature representation capability. Overall, CFENet maintains a mid-level model complexity while demonstrating strong performance.