A Machine Learning Model for FY-4A Cloud Detection Based on Physical Feature Fusion

Highlights

- An MLP-TSAR model incorporating meteorological factors was developed.

- Improved all-day overall accuracy by 9.7 percentage points compared with the FY-4A cloud detection product.

- Reduced the overall accuracy difference between day and night from 3.0 to 0.7 percentage points.

- Meteorological factors drove over 30% of MLP-TSAR predictions, day and night.

Abstract

1. Introduction

2. Data and Methods

2.1. FY-4A AGRI Data

2.2. ERA5 Reanalysis Data

2.3. CALIPSO Cloud Layer Product

2.4. Data Processing

2.5. Machine Learning Methods

2.5.1. RF

2.5.2. LightGBM

2.5.3. XGBoost

2.5.4. MLP

2.6. Model Training and Configuration

2.7. McNemar’s Test
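For orientation, the continuity-corrected form of McNemar's test can be sketched as below. This is an illustrative sketch, not the study's own code: the function name `mcnemar_test` and the toy labels are hypothetical, and the chi-square form with one degree of freedom is assumed. The test uses only the discordant samples, i.e., those that exactly one of the two classifiers labels correctly.

```python
import math

def mcnemar_test(y_true, pred_a, pred_b):
    """Continuity-corrected McNemar's test for two classifiers on the same samples.

    Returns (chi-square statistic, p-value). Hypothetical helper for illustration.
    """
    # b: samples that classifier A gets right and classifier B gets wrong
    b = sum(1 for t, a, p in zip(y_true, pred_a, pred_b) if a == t and p != t)
    # c: samples that classifier A gets wrong and classifier B gets right
    c = sum(1 for t, a, p in zip(y_true, pred_a, pred_b) if a != t and p == t)
    if b + c == 0:
        # No discordant samples: the two classifiers are indistinguishable
        return 0.0, 1.0
    stat = (abs(b - c) - 1) ** 2 / (b + c)
    # Survival function of a chi-square variable with 1 degree of freedom
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value

# Toy example (hypothetical labels, 1 = cloud, 0 = clear sky)
y_true = [1] * 20
pred_a = [1] * 20             # classifier A: all correct
pred_b = [0] * 10 + [1] * 10  # classifier B: 10 errors
stat, p_value = mcnemar_test(y_true, pred_a, pred_b)
print(f"chi2 = {stat:.2f}, p = {p_value:.4f}")
```

A small p-value indicates that the two classifiers' error rates differ by more than would be expected by chance on the shared test samples.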

2.8. Interpretation of Feature Contributions Based on SHAP

3. Results

3.1. Overview of the Overall Accuracy

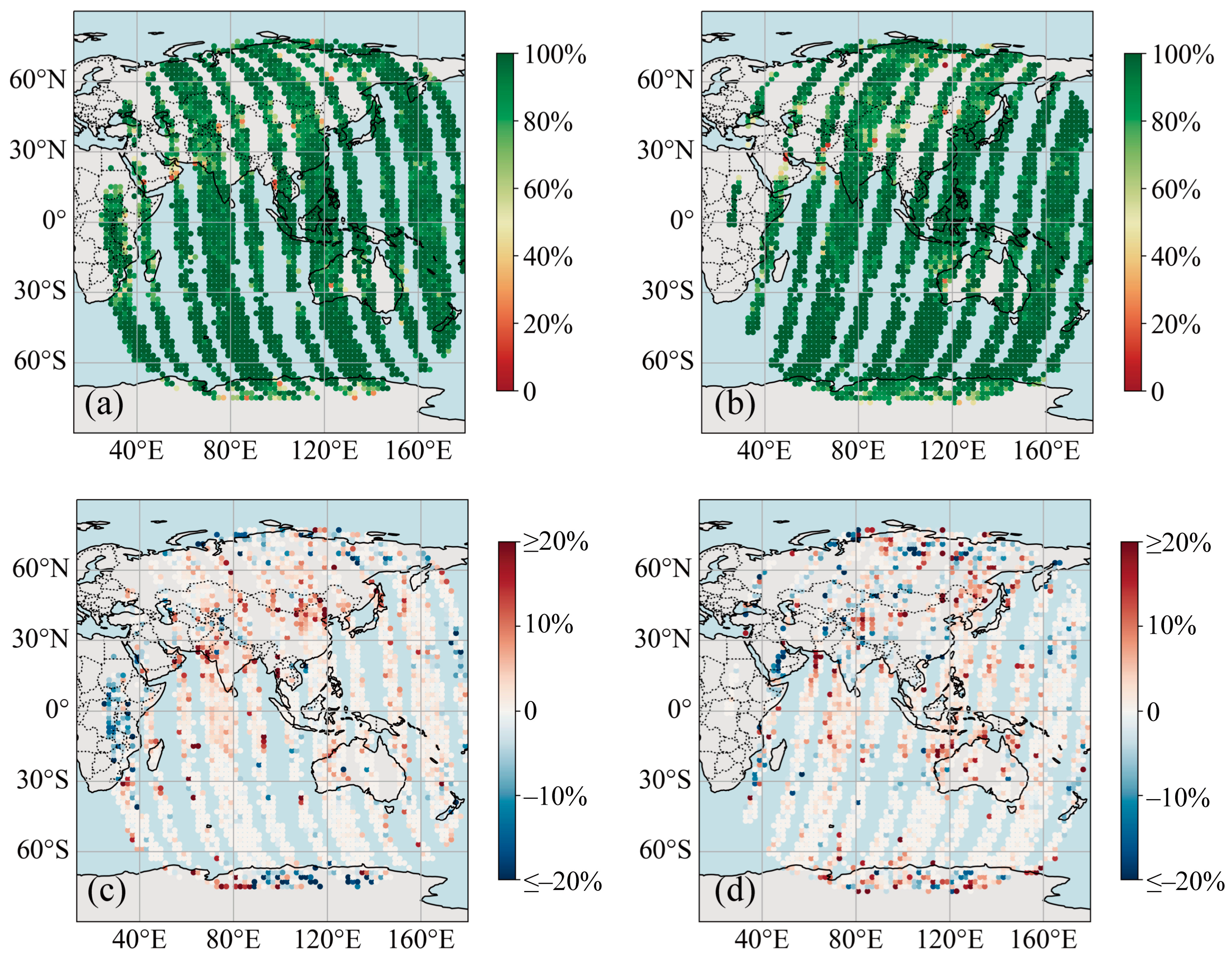

3.2. Comparison to FY-4A L2 Cloud Mask Products

3.3. Effects of Meteorological Factors on Model Performance

3.3.1. Clear Sky Detection

3.3.2. Cloud Detection

Water Cloud Conditions

Ice Cloud Conditions

3.4. Evaluation of Feature Contributions

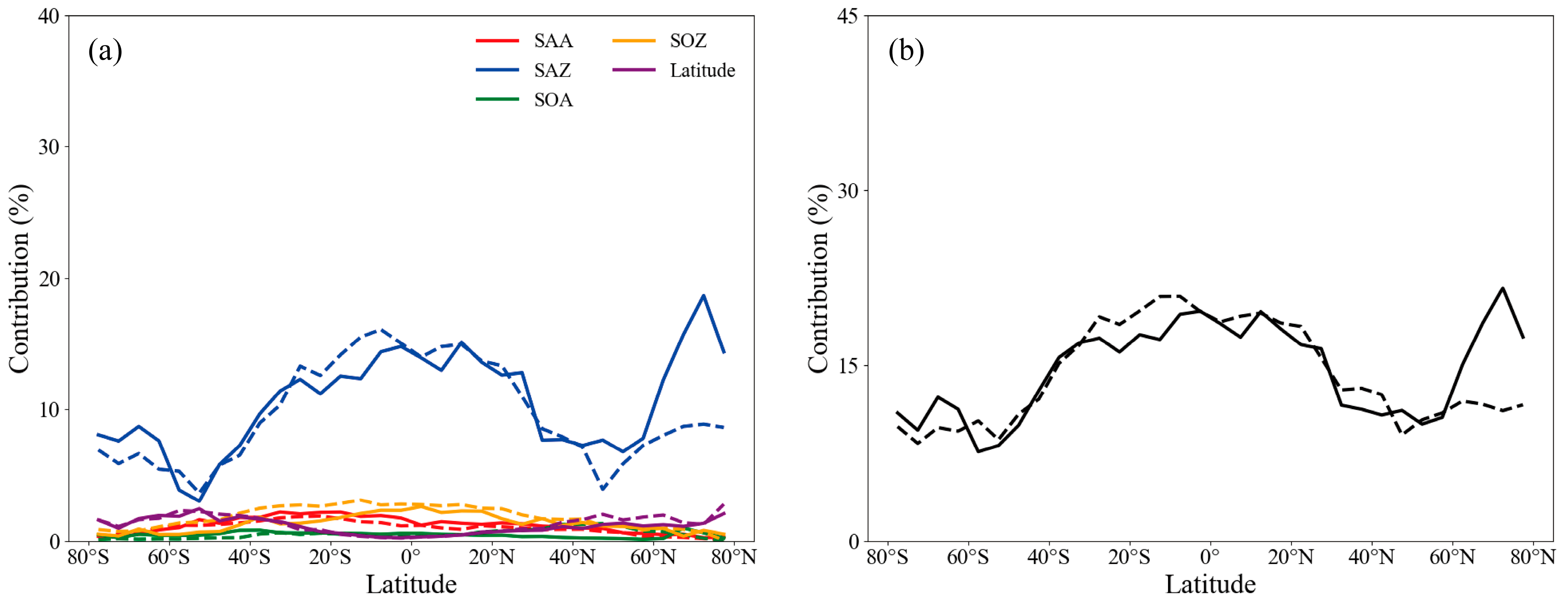

3.4.1. Geometry Parameters

3.4.2. BT

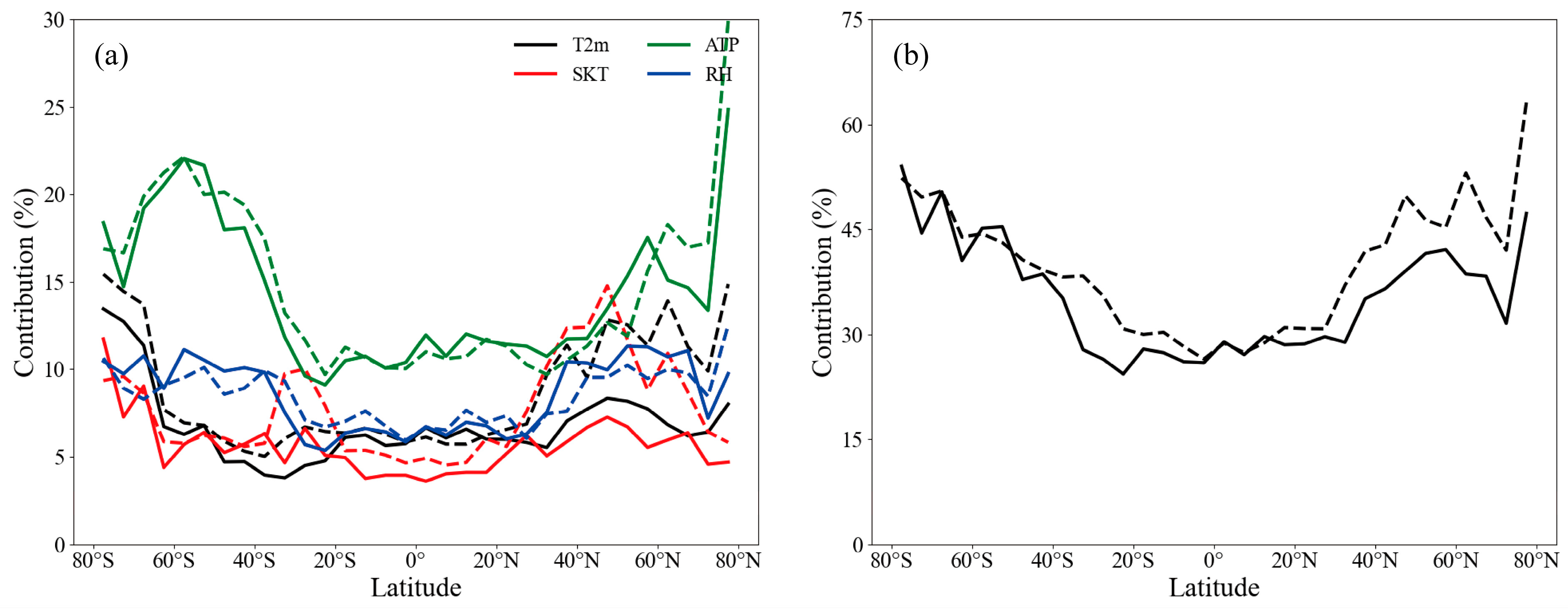

3.4.3. Meteorological Factors

4. Discussion

4.1. Methodological Advantages and Physical Mechanisms

4.2. Limitations and Prospects

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ML | Machine learning |
| RF | Random Forest |
| LightGBM | Light Gradient Boosting Machine |
| XGBoost | eXtreme Gradient Boosting |
| MLP | Multilayer Perceptron |
| SAZ | Satellite zenith angle |
| SAA | Satellite azimuth angle |
| SOZ | Solar zenith angle |
| SOA | Solar azimuth angle |
| T2m | 2 m air temperature |
| SKT | Land surface skin temperature |
| ATP | Air temperature profiles |
| RH | Relative humidity profiles |


| Band | Central wavelength (µm) | Resolution (km) | Applications |
|---|---|---|---|
| 1 | 0.47 | 1 | Clouds, dust, and aerosols |
| 2 | 0.65 | 0.5 | Clouds, dust, and snow |
| 3 | 0.825 | 1 | Clouds, aerosols, vegetation, and ocean |
| 4 | 1.375 | 2 | Cirrus (ice crystal particles) |
| 5 | 1.61 | 2 | Low cloud, snow, water/ice cloud |
| 6 | 2.25 | 2 | Cirrus, aerosol |
| 7 | 3.75 H | 2 | High albedo surface |
| 8 | 3.75 L | 4 | Low albedo surface |
| 9 | 6.25 | 4 | Water vapor |
| 10 | 7.1 | 4 | Water vapor |
| 11 | 8.5 | 4 | Water vapor, cloud |
| 12 | 10.7 | 4 | Cloud, surface temperature |
| 13 | 12.0 | 4 | Water vapor, cloud, surface temperature |
| 14 | 13.5 | 4 | Water vapor, cloud |

| Model | Time | Clear sky accuracy (%) | Water cloud accuracy (%) | Ice cloud accuracy (%) | Overall accuracy (%) |
|---|---|---|---|---|---|
| FY-4A L2 | Daytime | 68.3 | 95.0 | 97.3 | 84.6 |
| FY-4A L2 | Nighttime | 72.2 | 83.5 | 89.1 | 81.6 |
| FY-4A L2 | All day | 70.0 | 89.3 | 92.9 | 83.1 |
| RF | Daytime | 89.2 | 89.8 | 96.1 | 91.4 |
| RF | Nighttime | 88.3 | 91.3 | 95.3 | 91.7 |
| RF | All day | 88.8 | 90.5 | 95.7 | 91.5 |
| LightGBM | Daytime | 90.9 | 89.7 | 95.8 | 92.0 |
| LightGBM | Nighttime | 89.6 | 91.6 | 95.6 | 92.4 |
| LightGBM | All day | 90.3 | 90.6 | 95.7 | 92.2 |
| XGBoost | Daytime | 90.9 | 90.2 | 96.0 | 92.3 |
| XGBoost | Nighttime | 89.8 | 92.0 | 96.1 | 92.7 |
| XGBoost | All day | 90.4 | 91.1 | 96.1 | 92.5 |
| MLP | Daytime | 90.5 | 91.7 | 96.0 | 92.4 |
| MLP | Nighttime | 88.9 | 94.0 | 96.2 | 93.1 |
| MLP | All day | 89.8 | 92.8 | 96.1 | 92.8 |
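The headline figures in the Highlights can be reproduced from the overall accuracies in the table above. The sketch below is a simple arithmetic check, assuming that the MLP row corresponds to the MLP-TSAR configuration named in the Highlights; the dictionary layout and the function names `gain_over_product` and `day_night_gap` are illustrative choices, not part of the study.

```python
# Overall accuracy (%) copied from the accuracy table above.
overall = {
    "FY-4A L2": {"day": 84.6, "night": 81.6, "all": 83.1},
    "RF":       {"day": 91.4, "night": 91.7, "all": 91.5},
    "LightGBM": {"day": 92.0, "night": 92.4, "all": 92.2},
    "XGBoost":  {"day": 92.3, "night": 92.7, "all": 92.5},
    "MLP":      {"day": 92.4, "night": 93.1, "all": 92.8},
}

def gain_over_product(model, baseline="FY-4A L2"):
    """All-day overall accuracy gain over the baseline, in percentage points."""
    return round(overall[model]["all"] - overall[baseline]["all"], 1)

def day_night_gap(model):
    """Absolute day/night difference in overall accuracy, in percentage points."""
    return round(abs(overall[model]["day"] - overall[model]["night"]), 1)

for name in overall:
    print(f"{name:9s} gain = {gain_over_product(name):4.1f}  "
          f"day/night gap = {day_night_gap(name):3.1f}")
```

Under these assumptions the MLP row gives a gain of 9.7 percentage points over FY-4A L2 and a day/night gap of 0.7, against a gap of 3.0 for the FY-4A L2 product.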

| Meteorological factor | Daytime contribution (%) | Nighttime contribution (%) | All-day contribution (%) |
|---|---|---|---|
| T2m | 12.5 | 12.6 | 12.6 |
| SKT | 14.1 | 14.3 | 14.2 |
| ATP | 18.4 | 19.1 | 18.8 |
| RH | 12.0 | 13.9 | 12.9 |

| Meteorological factors | Daytime contribution (%) | Nighttime contribution (%) | All-day contribution (%) |
|---|---|---|---|
| T2m | 6.1 | 7.6 | 6.8 |
| SKT | 5.1 | 7.2 | 6.1 |
| ATP | 12.6 | 12.8 | 12.7 |
| RH | 7.7 | 7.8 | 7.7 |
| T2m + SKT + ATP + RH | 31.5 | 35.3 | 33.3 |
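The combined row can be cross-checked against the per-factor entries in the table above. The following sketch sums the four factors for each period and compares the result with the reported totals; the variable names are illustrative, and the check assumes that the combined row is intended to be the plain sum of the individual contributions.

```python
# Contributions (%) copied from the table above: (daytime, nighttime, all day).
contrib = {
    "T2m": (6.1, 7.6, 6.8),
    "SKT": (5.1, 7.2, 6.1),
    "ATP": (12.6, 12.8, 12.7),
    "RH":  (7.7, 7.8, 7.7),
}
reported = (31.5, 35.3, 33.3)  # "T2m + SKT + ATP + RH" row

# Sum the per-factor values column by column.
sums = [round(sum(values[i] for values in contrib.values()), 1) for i in range(3)]
diffs = [round(abs(s - r), 1) for s, r in zip(sums, reported)]

for period, s, r, d in zip(("Daytime", "Nighttime", "All day"), sums, reported, diffs):
    print(f"{period:9s} sum = {s:4.1f}  reported = {r:4.1f}  difference = {d:3.1f}")
```

The daytime and all-day sums match the reported totals, while the nighttime sum comes to 35.4 against a reported 35.3. A 0.1 difference of this kind is what rounding each entry to one decimal place would produce, and in every period the combined contribution exceeds 30%.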

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Liang, Y.; Zhao, L.; Sun, Y.; Feng, Z.; Huang, X.; Zhong, W. A Machine Learning Model for FY-4A Cloud Detection Based on Physical Feature Fusion. Remote Sens. 2026, 18, 536. https://doi.org/10.3390/rs18040536
