No Trade-Offs: Unified Global, Local, and Multi-Scale Context Modeling for Building Pixel-Wise Segmentation

Highlights

- This paper presents TriadFlow-Net, an efficient end-to-end network architecture that gives remote sensing building extraction three core capabilities: global semantic understanding via the Global Context Mixing Module (GCMM), local detail restoration via the Local Detail Enhancement Module (LDEM), and multi-scale contextual awareness via the Multi-scale Adaptive Feature Enhancement Module (MAFEM).

- Experimental results on three benchmark datasets—the WHU Building Dataset, the Massachusetts Buildings Dataset, and the Inria Aerial Image Labeling Dataset—demonstrate that the proposed TriadFlow-Net consistently outperforms state-of-the-art methods across multiple evaluation metrics while maintaining computational efficiency, thereby offering a novel and effective solution for high-resolution remote sensing building extraction.

- Through the design of three major modules, our method jointly optimizes global semantic understanding, local detail recovery, and multi-scale context awareness within a unified framework. This not only overcomes the performance bottlenecks of conventional CNNs or Transformers in remote sensing building segmentation but also provides an efficient and transferable general paradigm for other high-resolution ground object segmentation tasks.

- TriadFlow-Net achieves state-of-the-art performance across multiple public benchmarks while maintaining low computational overhead, demonstrating its strong deployability and practical viability. This advancement facilitates the transition of high-precision building extraction techniques from laboratory research to large-scale operational applications—such as national land surveys and urban renewal monitoring.

Abstract

1. Introduction

- (1) To handle the large variation in building scale, we construct the Multi-scale Attention Feature Enhancement Module (MAFEM). By deploying parallel attention modules with different neighborhood radii, MAFEM adaptively captures multi-scale contextual information, which makes the model more robust to buildings whose sizes differ drastically.

- (2) To capture cross-building semantic consistency and mitigate the impact of occlusions and disconnections, we propose the Global Context Mixing Module (GCMM). This module efficiently captures long-range dependencies, strengthening recognition in scenes with complex building distributions and occlusion interference.

- (3) To achieve self-supervised boundary enhancement without additional annotations, the Local Detail Enhancement Module (LDEM) fuses the original imagery with shallow decoder features. By integrating a Multi-scale High-frequency Extractor (MHFE) and a Channel-Spatial Collaborative Attention mechanism, it locates and enhances high-frequency boundary information. This strategy suppresses noise while improving the integrity and localization accuracy of building edges.

- (4) In contrast to existing methods that improve only a single dimension, we construct a collaborative optimization framework that integrates three core capabilities: global semantic understanding, multi-scale feature representation, and local detail restoration. Specifically, MAFEM generates an initial multi-scale representation, GCMM achieves fine-grained semantic alignment, and LDEM recovers high-frequency boundary structures.
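As a rough illustration of the idea behind contribution (3), the sketch below extracts high-frequency content at several scales by subtracting box-blurred copies of an image from the image itself. This is only a stand-in under stated assumptions: the paper's MHFE is a learned module whose exact design is not given in this section, and the names `box_blur` and `high_frequency` and the radii `(1, 2, 3)` are hypothetical choices made for this example.

```python
from typing import List, Sequence

Image = List[List[float]]


def box_blur(img: Image, radius: int) -> Image:
    """Mean filter with a (2*radius+1)^2 window, clipped at the image border."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ys = range(max(0, y - radius), min(h, y + radius + 1))
            xs = range(max(0, x - radius), min(w, x + radius + 1))
            total = sum(img[j][i] for j in ys for i in xs)
            out[y][x] = total / (len(ys) * len(xs))
    return out


def high_frequency(img: Image, radii: Sequence[int] = (1, 2, 3)) -> Image:
    """Average of |img - blur_r(img)| over several radii.

    Hypothetical stand-in for a multi-scale high-frequency extractor:
    flat regions give zero response, edges give a strong response.
    """
    h, w = len(img), len(img[0])
    acc = [[0.0] * w for _ in range(h)]
    for r in radii:
        blurred = box_blur(img, r)
        for y in range(h):
            for x in range(w):
                acc[y][x] += abs(img[y][x] - blurred[y][x]) / len(radii)
    return acc


# A vertical step edge: left half background (0), right half building (1).
step = [[0.0] * 3 + [1.0] * 3 for _ in range(6)]
response = high_frequency(step)
```

On the step image, the response peaks in the two columns adjacent to the edge and falls off toward the image border, which is the kind of boundary cue that an attention mechanism could then reweight.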

2. Materials and Methods

2.1. Datasets

2.2. Experimental Details

2.3. Evaluation Metrics
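The result tables report IoU, F1, precision (Pre), and recall. Assuming the standard pixel-wise definitions for the building class (the function name `segmentation_metrics` and the list-of-lists mask format are illustrative choices, not taken from the paper), the four metrics can be computed from true positives, false positives, and false negatives as follows:

```python
from typing import Dict, Sequence


def segmentation_metrics(pred: Sequence[Sequence[int]],
                         target: Sequence[Sequence[int]]) -> Dict[str, float]:
    """Pixel-wise IoU, F1, precision, and recall for the positive (building) class."""
    tp = fp = fn = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if p and t:
                tp += 1      # building predicted, building in ground truth
            elif p:
                fp += 1      # building predicted, background in ground truth
            elif t:
                fn += 1      # building missed

    def ratio(num: int, den: int) -> float:
        # Return 0.0 instead of dividing by zero on empty masks.
        return num / den if den else 0.0

    return {
        "iou": ratio(tp, tp + fp + fn),
        "f1": ratio(2 * tp, 2 * tp + fp + fn),
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
    }


pred = [[1, 1, 0],
        [0, 1, 0]]
target = [[1, 0, 0],
          [0, 1, 1]]
metrics = segmentation_metrics(pred, target)
```

Under these definitions, F1 is the harmonic mean of precision and recall, and IoU and F1 are tied by IoU = F1 / (2 - F1), so the two always rank methods in the same order on a given dataset.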

2.4. Overall Methodological Framework

2.4.1. Multi-Scale Attentive Feature Enhancement Module (MAFEM)

2.4.2. Global Context Mixture Module (GCMM)

2.4.3. Local Detail Enhancement Module (LDEM)

3. Results

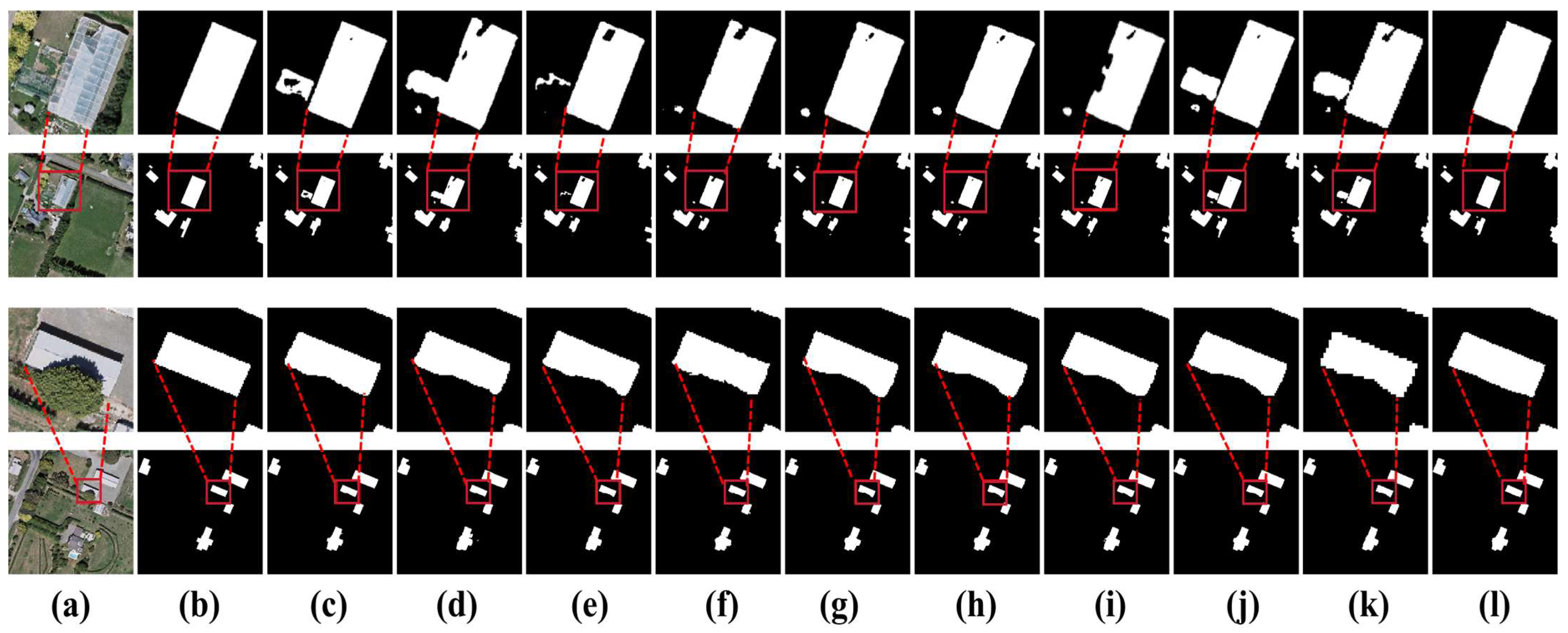

3.1. Results on WHU Building Dataset

3.2. Results on the Massachusetts Building Dataset

3.3. Results on Inria Aerial Image Labeling Dataset

4. Discussion

4.1. Ablation Study

4.2. Effectiveness Analysis of MAFEM

4.3. Effectiveness Analysis of GCMM

4.4. Effectiveness Analysis of LDEM

4.5. Analysis of Different Encoders

4.6. Model Complexity Analysis

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Method | Year | WHU IoU (%) | WHU F1 (%) | WHU Pre (%) | WHU Recall (%) | Massachusetts IoU (%) | Massachusetts F1 (%) | Massachusetts Pre (%) | Massachusetts Recall (%) | Inria IoU (%) | Inria F1 (%) | Inria Pre (%) | Inria Recall (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| UNet | 2015 | 90.03 | 94.75 | 94.76 | 94.76 | 71.08 | 83.09 | 83.50 | 82.68 | 80.25 | 89.04 | 89.20 | 88.88 |
| DeeplabV3+ | 2018 | 87.09 | 93.10 | 93.45 | 92.75 | 70.50 | 82.70 | 83.42 | 81.98 | 79.84 | 88.79 | 88.97 | 88.61 |
| SegFormer | 2021 | 88.84 | 94.09 | 94.71 | 93.48 | 71.96 | 83.69 | 83.60 | 83.78 | 81.19 | 89.22 | 89.60 | 88.85 |
| Swin-Unet | 2022 | 88.72 | 94.02 | 93.73 | 94.32 | 69.90 | 82.28 | 85.79 | 79.05 | 78.35 | 87.86 | 87.80 | 87.92 |
| RS-mamba | 2024 | 89.60 | 94.51 | 94.82 | 94.20 | 71.08 | 83.10 | 82.72 | 83.48 | 81.37 | 89.73 | 90.26 | 89.20 |
| VM-UNetv2 | 2024 | 88.84 | 94.09 | 94.37 | 93.80 | 68.35 | 81.20 | 81.99 | 80.42 | 79.34 | 88.48 | 88.99 | 87.97 |
| BuildFormer | 2022 | 89.60 | 94.51 | 94.56 | 94.47 | 72.73 | 84.26 | 84.97 | 83.59 | 80.29 | 89.07 | 89.66 | 88.49 |
| UANet | 2024 | 90.23 | 94.84 | 94.69 | 95.01 | 72.21 | 83.86 | 83.45 | 84.28 | 81.69 | 89.92 | 90.50 | 89.35 |
| EGAFNet | 2025 | 88.79 | 94.06 | 93.79 | 94.33 | 71.45 | 83.34 | 83.05 | 83.63 | 80.86 | 89.42 | 89.80 | 89.04 |
| Ours | / | 90.69 | 95.12 | 95.20 | 95.03 | 73.97 | 85.04 | 85.59 | 84.49 | 83.28 | 90.87 | 91.62 | 90.14 |

| Baseline | MAFEM | GCMM | LDEM | WHU IoU (%) | WHU F1 (%) | WHU Pre (%) | WHU Recall (%) | Inria IoU (%) | Inria F1 (%) | Inria Pre (%) | Inria Recall (%) | Massachusetts IoU (%) | Massachusetts F1 (%) | Massachusetts Pre (%) | Massachusetts Recall (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| √ | × | × | × | 88.81 | 94.07 | 94.43 | 93.72 | 78.65 | 87.86 | 87.89 | 87.92 | 70.33 | 83.41 | 84.76 | 82.11 |
| √ | √ | × | × | 89.62 | 94.52 | 95.13 | 93.92 | 81.75 | 89.95 | 90.81 | 89.11 | 71.51 | 83.67 | 85.04 | 82.34 |
| √ | × | √ | × | 89.65 | 94.54 | 95.19 | 93.90 | 82.76 | 90.56 | 90.85 | 89.56 | 71.69 | 83.92 | 85.11 | 82.76 |
| √ | × | × | √ | 88.85 | 94.09 | 94.44 | 93.75 | 78.66 | 87.93 | 87.91 | 87.95 | 70.35 | 83.45 | 84.79 | 82.15 |
| √ | √ | √ | × | 90.23 | 94.86 | 95.05 | 94.67 | 83.02 | 90.72 | 91.56 | 89.63 | 72.42 | 84.09 | 85.32 | 82.89 |
| √ | √ | × | √ | 90.36 | 94.85 | 94.96 | 94.75 | 82.95 | 90.64 | 91.52 | 89.77 | 71.53 | 83.73 | 85.15 | 82.35 |
| √ | × | √ | √ | 90.57 | 95.03 | 95.15 | 94.92 | 83.05 | 90.75 | 91.56 | 89.96 | 73.68 | 84.51 | 85.48 | 83.57 |
| √ | √ | √ | √ | 90.69 | 95.12 | 95.20 | 95.03 | 83.28 | 90.87 | 91.62 | 90.14 | 73.97 | 85.04 | 85.59 | 84.49 |

| Case | IoU (%) | F1 (%) | Pre (%) | Recall (%) |
|---|---|---|---|---|
| Case1 | 89.13 | 94.24 | 94.50 | 94.01 |
| Case2 | 89.39 | 94.39 | 95.07 | 93.73 |
| Case3 | 90.46 | 94.89 | 94.69 | 95.01 |
| Case4 | 90.69 | 95.12 | 95.20 | 95.03 |

| Case | IoU (%) | F1 (%) | Pre (%) | Recall (%) |
|---|---|---|---|---|
| Case1 | 89.62 | 94.52 | 95.13 | 93.92 |
| Case2 | 89.63 | 94.53 | 95.19 | 93.90 |
| Case3 | 89.65 | 94.74 | 95.05 | 94.02 |
| Case4 | 90.01 | 94.78 | 95.39 | 94.10 |
| Case5 | 89.95 | 94.54 | 94.95 | 94.00 |
| Case6 | 90.12 | 94.81 | 95.05 | 94.54 |
| Case7 | 90.23 | 94.86 | 95.09 | 94.67 |

| Case | IoU (%) | F1 (%) | Pre (%) | Recall (%) |
|---|---|---|---|---|
| Case1 | 90.23 | 94.52 | 95.13 | 93.92 |
| Case2 | 90.45 | 94.67 | 95.09 | 94.50 |
| Case3 | 90.69 | 95.12 | 95.20 | 95.03 |

| Method | IoU (%) | F1 (%) | Pre (%) | Recall (%) |
|---|---|---|---|---|
| ConvNeXt-B | 91.25 | 95.67 | 95.71 | 95.58 |
| SwinV2-B | 91.83 | 96.15 | 96.20 | 96.07 |
| ResNet50 | 90.69 | 95.12 | 95.20 | 95.03 |

| Method | Year | Parameters (M) | FLOPs (G) | FPS | IoU (%) |
|---|---|---|---|---|---|
| UNet | 2015 | 71.98 | 235.99 | 39.49 | 80.25 |
| DeeplabV3+ | 2018 | 32.74 | 101.10 | 49.41 | 79.84 |
| SegFormer | 2021 | 24.72 | 50.15 | 58.95 | 81.19 |
| Swin-Unet | 2022 | 34.22 | 81.09 | 33.37 | 78.35 |
| RS-mamba | 2024 | 50.38 | 92.14 | - | 81.37 |
| VM-UNetv2 | 2024 | 22.77 | 42.40 | - | 79.34 |
| BuildFormer | 2022 | 40.52 | 232.72 | 32.35 | 80.29 |
| UANet | 2024 | 36.10 | 250.02 | 33.69 | 81.69 |
| EGAFNet | 2025 | 34.84 | 236.81 | 24.86 | 80.86 |
| Ours | / | 29.67 | 82.47 | 21.97 | 83.28 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhang, Z.; Yuan, D.; Zhou, Y.; Yang, R. No Trade-Offs: Unified Global, Local, and Multi-Scale Context Modeling for Building Pixel-Wise Segmentation. Remote Sens. 2026, 18, 472. https://doi.org/10.3390/rs18030472
