GRASS: Glass Reflection Artifact Suppression Strategy via Virtual Point Removal in LiDAR Point Clouds

Highlights

- A dual-module GRASS framework is proposed, which robustly estimates complete and continuous glass planes involved in reflections by combining multi-echo properties with geometric segmentation, overcoming the limitations of previous sparse or incomplete glass estimations.

- High-precision identification of virtual points is achieved by fusing reflection symmetry with learned geometric similarity, enabling accurate removal of sparse, low-structural-continuity reflection artifacts and significantly outperforming existing methods.

- Provides a high-quality 3D data foundation for building surveying, offering cleaner and more precise data for downstream applications like architectural modeling and infrastructure inspection by effectively suppressing glass reflection artifacts in TLS point clouds.

- Enhances the robustness of LiDAR-based perception systems by offering novel theoretical insights and practical solutions for 3D reflection problems, significantly improving reliability in complex glass-rich environments.

Abstract

1. Introduction

1.1. Motivation

1.2. Contribution and Paper Organization

- We propose a robust glass detection strategy that leverages multi-echo properties and geometrically segmented regions, significantly improving the completeness of reflective surface estimation.

- By jointly exploiting reflection symmetry and geometric similarity between reflected and real-world points, we develop an effective virtual point detection module that eliminates reflection artifacts without relying on handcrafted geometric constraints.

- We quantitatively evaluate virtual point removal by simulating glass reflection artifacts on multiple diverse public and self-collected 3D point cloud (3DPC) datasets. Extensive experiments demonstrate that the proposed solution suppresses glass reflection artifacts more effectively than existing methods.

2. Related Work

2.1. Indoor GRAS

2.2. Outdoor GRAS

- Echo-based methods. Research on virtual point removal in outdoor 3D point clouds remains scarce. Refs. [22,25] identify glass surfaces using multi-return echoes: after detecting the dominant glass plane, they apply a score function based on local geometric features to identify virtual points. However, these methods fail to extract glass surfaces completely, leaving residual reflection artifacts undetected. To achieve complete glass extraction, refs. [23,30] adopt image-domain glass inference strategies, in which glass regions are first estimated or completed in the 2D panoramic image space and then projected into the 3D point cloud. Specifically, ref. [23] performs superpixel segmentation followed by morphological operations to fill holes in the glass regions, while ref. [30] employs learned image segmentation on a count map representation to complete glass regions. However, these image-domain completion approaches tend to absorb background structures located behind the glass into the inferred glass regions, particularly where laser returns are missing due to total reflection. When reprojected into 3D space, such filled regions do not correspond to physical samples on the true glass plane, which blurs the distinction between glass surfaces and background objects.

- Intensity-based methods. Unlike the echo-based methods [22,23,25,30], Shao et al. [24] and Fang et al. [26] extract mirror-like reflective surfaces based on intensity, exploiting the fact that glass objects return much lower intensity values than other objects. However, glass components with curtains drawn behind them return high intensity values and therefore cannot be detected; as a result, the virtual points they reflect cannot be removed.

2.3. GRAS in SLAM

3. Methodology

3.1. Overview

- Glass plane detection module (Section 3.2): We first project the 3D points onto a 2D count map that records the number of echo returns per pixel, and then assign each pixel's count value to the corresponding first-echo points. Next, a planar segmentation method is applied to decompose the entire scene into simple planar geometric structures. Finally, glass objects are identified using a rule-based extraction approach.

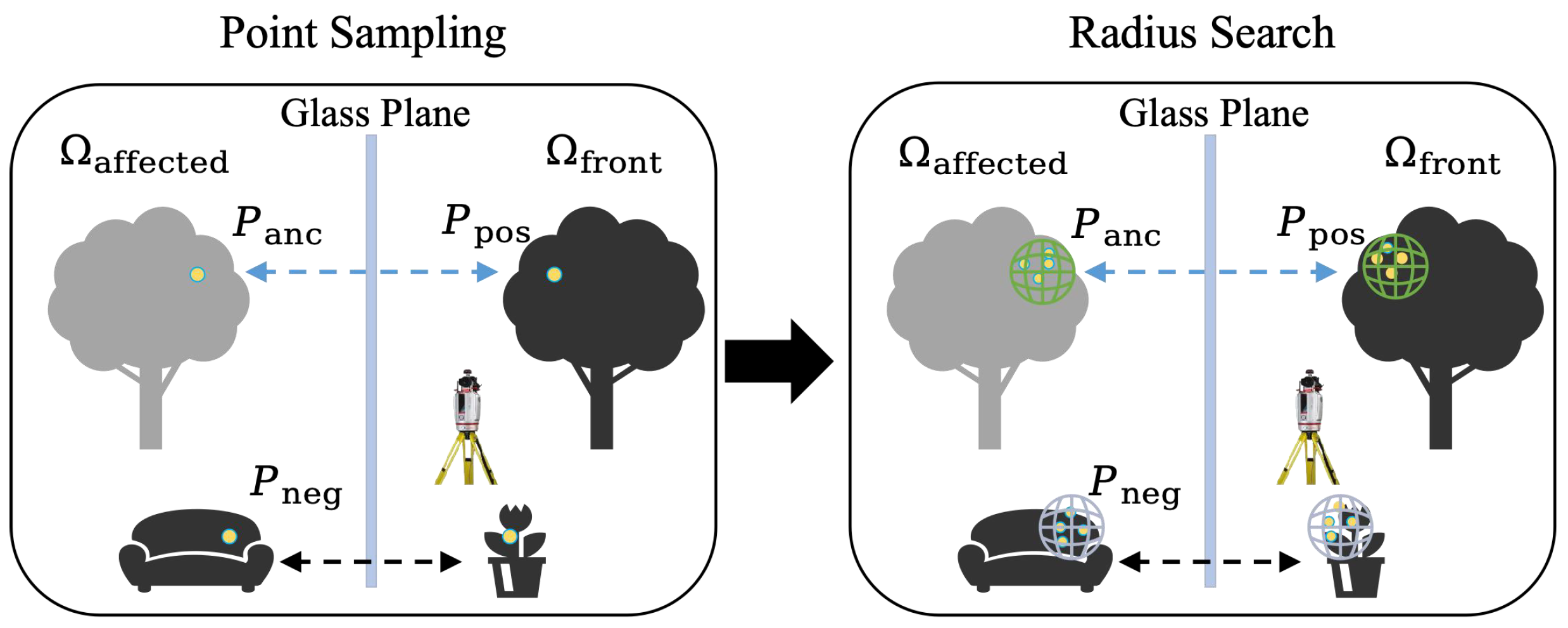

- Virtual points removal module (Section 3.3): We present a learning-based approach for virtual point removal in which the 3D feature similarity estimation network is trained independently. Specifically, geometric similarities are computed between pairs of points located at symmetric positions with respect to the estimated glass plane. Virtual points are then identified by applying a threshold to the product of reflection symmetry and the estimated feature similarity at each point.
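The scoring step of the virtual point removal module can be illustrated with a minimal NumPy sketch. Mirroring a point p across a plane n·x + d = 0 uses the standard formula p' = p − 2(n·p + d)n for a unit normal n. Beyond that, the sketch rests on two stand-ins that are assumptions, not the paper's definitions: the reflection symmetry (RS) score is taken to be a Gaussian of the distance between a mirrored candidate and its nearest real point, and the learned geometric similarity (GS) network is replaced by the clipped cosine similarity of precomputed per-point feature vectors. The names `reflect_across_plane`, `symmetry_score`, `virtual_point_mask`, `sigma` and `tau` are hypothetical.

```python
import numpy as np

def reflect_across_plane(points, normal, d):
    """Mirror points across the plane n.x + d = 0 (normal need not be unit length)."""
    n = np.asarray(normal, dtype=float)
    scale = np.linalg.norm(n)
    n, d = n / scale, d / scale
    signed_dist = points @ n + d                 # signed distance of each point to the plane
    return points - 2.0 * signed_dist[:, None] * n[None, :]

def symmetry_score(candidates, real_points, normal, d, sigma=0.1):
    """Hypothetical RS score in [0, 1]: Gaussian of the distance between each
    mirrored candidate and its nearest real point (brute-force search)."""
    mirrored = reflect_across_plane(candidates, normal, d)
    dists = np.linalg.norm(mirrored[:, None, :] - real_points[None, :, :], axis=2)
    nearest = dists.argmin(axis=1)
    gap = dists[np.arange(len(candidates)), nearest]
    return np.exp(-gap ** 2 / (2.0 * sigma ** 2)), nearest

def virtual_point_mask(candidates, real_points, cand_feats, real_feats,
                       normal, d, sigma=0.1, tau=0.5):
    """Flag a candidate as virtual when RS * GS exceeds the threshold tau.
    GS is a stand-in for the learned network: clipped cosine similarity between
    the candidate's feature vector and that of its mirrored nearest real point."""
    rs, nearest = symmetry_score(candidates, real_points, normal, d, sigma)
    a, b = cand_feats, real_feats[nearest]
    cos = np.sum(a * b, axis=1) / (np.linalg.norm(a, axis=1) * np.linalg.norm(b, axis=1) + 1e-12)
    gs = np.clip(cos, 0.0, 1.0)
    return rs * gs > tau
```

Multiplying the two scores means a candidate is flagged only when it is both geometrically mirrored onto a real point and locally similar to it. The brute-force nearest-neighbour search keeps the example short; for scans with millions of points a k-d tree would be the natural replacement.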

3.2. Glass Plane Detection

3.2.1. Detection Principle via Multi-Echo Count

3.2.2. Challenges of Point-Wise Count-Based Glass Estimation

- According to the count map projection mechanism shown in Figure 4a, the count value of a single pixel depends on the number of valid return signals within its spatial domain. However, ambiguous multi-echo returns lead to an uneven count distribution across the same glass pane (Figure 4b), so thresholding the counts extracts only a sparse subset of the reflective area. These sparse reflective points in turn yield incomplete and inaccurate estimates of the orientation and position of the reflective plane. Consequently, the virtual points reflected by the undetected glass regions are difficult to detect, which decreases the accuracy of virtual point removal.

- It has also been shown that the high reflectivity of glass surfaces may lead to total specular reflection, especially at large incidence angles or under perpendicular incidence, resulting in significant loss of point cloud data on glass surfaces [35]. As a result, although real objects and distant reflection artifacts inside the building form dense point clusters, no points are sampled in the missing glass regions. Instead, these signals may be returned from real planar objects inside the building (e.g., room partitions), yielding a higher point count on planar objects behind the glass than on the actual glass surfaces. As shown in Figure 4b, many pixels with high multi-echo count values correspond to points on indoor objects rather than on glass planes. In such cases, it is challenging to extract glass surfaces with an iterative RANSAC: because RANSAC selects, in each iteration, the plane parameters supported by the largest number of inliers, this purely statistical criterion may be unreliable in such scenarios [36].
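The count map mechanism discussed above can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the authors' implementation: the scanner is assumed to sit at the origin, every return is assumed to carry an echo index (1 = first echo), and pixels are defined by a hypothetical fixed azimuth/elevation resolution (`az_res_deg`, `el_res_deg`). Returns from one pulse share a viewing direction and therefore fall into the same pixel; the per-pixel return count is then copied to that pixel's first-echo points, as described in the module overview of Section 3.1. The function name `count_map` is hypothetical.

```python
import numpy as np

def count_map(points, echo_index, az_res_deg=0.2, el_res_deg=0.2):
    """Project points onto a panoramic grid, count returns per pixel and copy
    each pixel's count to its first-echo points (0 for later echoes)."""
    x, y, z = points[:, 0], points[:, 1], points[:, 2]
    az = np.degrees(np.arctan2(y, x)) % 360.0             # azimuth in [0, 360)
    el = np.degrees(np.arctan2(z, np.hypot(x, y)))        # elevation in [-90, 90]
    n_cols = int(np.ceil(360.0 / az_res_deg))
    n_rows = int(np.ceil(180.0 / el_res_deg)) + 1
    # Clip guards against floating-point rounding at the grid borders.
    cols = np.clip(np.floor(az / az_res_deg).astype(int), 0, n_cols - 1)
    rows = np.clip(np.floor((el + 90.0) / el_res_deg).astype(int), 0, n_rows - 1)
    counts = np.zeros((n_rows, n_cols), dtype=np.int64)
    np.add.at(counts, (rows, cols), 1)                    # unbuffered per-pixel accumulation
    per_point = counts[rows, cols]
    first_echo_counts = np.where(echo_index == 1, per_point, 0)
    return counts, first_echo_counts
```

For example, a pulse producing three returns along the same ray gives its first echo a count of 3, whereas a surface hit once gets a count of 1. Thresholding these per-point counts is exactly the point-wise strategy whose sparsity and bias toward indoor objects are criticized above.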

3.2.3. Surface Growing Segmentation

3.2.4. Dominant Glass Plane Extraction and Refinement

3.3. Virtual Point Removal

3.3.1. Reflection Symmetry

3.3.2. Geometric Similarity

3.3.3. Detection of Virtual Points

4. Evaluations and Results

4.1. Results of Glass Plane Estimation

4.2. Results of Virtual Point Removal

4.2.1. Datasets

4.2.2. Experimental Setup

4.2.3. Quantitative Performance

4.2.4. Qualitative Performance

4.2.5. Runtime and Efficiency Evaluation

4.3. Ablation Study

5. Discussion

- Limitations in glass surface identification: Glass region detection in existing methods often relies on rule-based heuristics tailored to specific scanning configurations and scene layouts, which requires prior structural assumptions. As a result, irregular or curved glass surfaces (e.g., ring-shaped or convex panels), particularly freestanding rather than wall-embedded ones, are difficult to detect reliably. Our method follows a similar geometric paradigm and likewise assumes that glass surfaces form dominant planar structures embedded in walls that can be geometrically distinguished in LiDAR data. Under this assumption, planar glass surfaces provide sufficient geometric evidence for robust glass segmentation: even when LiDAR returns on glass are sparse, fragmented, or entirely missing due to total reflection, partial glass observations can be aggregated through surface-level merging into a dominant glass plane hypothesis. This aggregated planar representation then provides a reliable geometric basis for robust virtual point detection and removal. Note, however, that in extreme cases where reflection points become excessively sparse, the glass reflection count may drop below a reliable level, making glass surface estimation inherently difficult for count-based methods, including ours.

- Limitations in geometry-based virtual point detection: Virtual point detection typically relies on geometric features of corresponding point pairs across glass surfaces. However, due to occlusions, the real points corresponding to some virtual points may not be captured; in such cases, these virtual points cannot be correctly classified. Moreover, non-glass reflective materials such as water surfaces, polished stone, or marble floors in buildings can also produce misleading reflection artifacts. These artifacts often exhibit shapes, densities, or echo behaviors that differ from those of real objects, and detecting them remains challenging.

- Limitations in data availability and benchmarking: Due to the high cost of TLS data acquisition, existing datasets are limited in volume and particularly scarce for scenarios involving reflective glass surfaces. Consequently, existing studies largely rely on self-collected datasets, and the research community lacks adequate open-source benchmarks to support broader research in this area.
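The surface-level merging mentioned in the first limitation can be illustrated with a simple greedy rule. Two planar segments are merged when their normals are nearly parallel and the centroid of one lies close to the plane of the other, after which the merged points are re-fitted by least squares via SVD. This is a hedged sketch of the general idea, not the paper's merging criteria; the function names and the thresholds `max_angle_deg` and `max_offset` are hypothetical.

```python
import numpy as np

def fit_plane(points):
    """Least-squares plane n.x + d = 0: the unit normal is the direction of least variance."""
    centroid = points.mean(axis=0)
    _, _, vt = np.linalg.svd(points - centroid, full_matrices=False)
    normal = vt[-1]
    return normal, -float(normal @ centroid)

def merge_coplanar_segments(segments, max_angle_deg=5.0, max_offset=0.1):
    """Greedily group (N_i, 3) segments lying on approximately the same plane.
    Returns a list of (merged_points, (normal, d)) tuples."""
    planes = [fit_plane(s) for s in segments]
    cos_thr = np.cos(np.radians(max_angle_deg))
    used = [False] * len(segments)
    merged = []
    for i, seg in enumerate(segments):
        if used[i]:
            continue
        n_i, d_i = planes[i]
        group, used[i] = [seg], True
        for j in range(i + 1, len(segments)):
            if used[j]:
                continue
            # The sign of an SVD normal is arbitrary, so compare |cos| of the angle.
            parallel = abs(float(n_i @ planes[j][0])) >= cos_thr
            # Distance of segment j's centroid to the plane of segment i.
            offset = abs(float(n_i @ segments[j].mean(axis=0)) + d_i)
            if parallel and offset <= max_offset:
                group.append(segments[j])
                used[j] = True
        pts = np.vstack(group)
        merged.append((pts, fit_plane(pts)))   # re-fit the dominant plane on all members
    return merged
```

Re-fitting on the union of fragments is what lets several small, sparse glass patches on the same facade support one well-conditioned plane estimate.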

6. Conclusions

Supplementary Materials

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Shih, Y.-C.; Krishnan, D.; Durand, F.; Freeman, W.T. Reflection removal using ghosting cues. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 3193–3201.

2. Arvanitopoulos, N.; Achanta, R.; Süsstrunk, S. Single image reflection suppression. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 1752–1760.

3. Martinez, J.; Pistonesi, S.; Maciel, M.C.; Flesia, A.G. Multi-scale fidelity measure for image fusion quality assessment. Inf. Fusion 2019, 50, 197–211.

4. RahmaniKhezri, H.; Kim, S.; Hefeeda, M. Unsupervised single-image reflection removal. IEEE Trans. Multimed. 2023, 25, 4958–4971.

5. Fan, Q.; Yang, J.; Hua, G.; Chen, B.; Wipf, D. A generic deep architecture for single image reflection removal and image smoothing. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 3258–3267.

6. Wan, R.; Shi, B.; Duan, L.-Y.; Tan, A.-H.; Kot, A.C. CRRN: Multi-scale guided concurrent reflection removal network. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 4777–4785.

7. Yang, J.; Gong, D.; Liu, L.; Shi, Q. Seeing deeply and bidirectionally: A deep learning approach for single image reflection removal. In Proceedings of the Computer Vision—ECCV 2018: 15th European Conference, Munich, Germany, 8–14 September 2018; pp. 675–691.

8. Wei, K.; Yang, J.; Fu, Y.; Wipf, D.; Huang, H. Single image reflection removal exploiting misaligned training data and network enhancements. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 8170–8179.

9. Wieschollek, P.; Gallo, O.; Gu, J.; Kautz, J. Separating reflection and transmission images in the wild. In Computer Vision—ECCV 2018: Proceedings of the 15th European Conference, Munich, Germany, 8–14 September 2018; Proceedings, Part XIII; Springer: Berlin/Heidelberg, Germany, 2018; pp. 90–105.

10. Guo, X.; Cao, X.; Ma, Y. Robust separation of reflection from multiple images. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 2195–2202.

11. Prasad, B.H.; Mitra, K. Burst reflection removal using reflection motion aggregation cues. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Waikoloa, HI, USA, 2–7 January 2023; pp. 239–248.

12. Han, B.-J.; Sim, J.-Y. Reflection removal using low-rank matrix completion. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 3872–3880.

13. Hu, Q.; Guo, X. Single image reflection separation via component synergy. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 1–6 October 2023; pp. 13092–13101.

14. Kong, N.; Tai, Y.-W.; Shin, J.S. A physically-based approach to reflection separation: From physical modeling to constrained optimization. IEEE Trans. Pattern Anal. Mach. Intell. 2014, 36, 209–221.

15. Ambrosino, A.; Di Benedetto, A.; Fiani, M. Hybrid Denoising Algorithm for Architectural Point Clouds Acquired with SLAM Systems. Remote Sens. 2024, 16, 4559.

16. Gonizzi Barsanti, S.; Marini, M.R.; Malatesta, S.G.; Rossi, A. Evaluation of Denoising and Voxelization Algorithms on 3D Point Clouds. Remote Sens. 2024, 16, 2632.

17. Zheng, Z.; Zha, B.; Zhou, Y.; Huang, J.; Xuchen, Y.; Zhang, H. Single-Stage Adaptive Multi-Scale Point Cloud Noise Filtering Algorithm Based on Feature Information. Remote Sens. 2022, 14, 367.

18. Wang, L.; Chen, Y.; Xu, H. Point Cloud Denoising in Outdoor Real-World Scenes Based on Measurable Segmentation. Remote Sens. 2024, 16, 2347.

19. Zhao, Y.; Zhang, J.; Xu, S.; Ma, J. Deep learning-based low overlap point cloud registration for complex scenarios: A review. Inf. Fusion 2024, 107, 102305.

20. Wu, Y.; Liu, J.; Gong, M.; Miao, Q.; Ma, W.; Xu, C. Joint semantic segmentation using representations of LiDAR point clouds and camera images. Inf. Fusion 2024, 108, 102370.

21. Xiong, B.; Jin, Y.; Li, F.; Chen, Y.; Zou, Y.; Zhou, Z. Knowledge-driven inference for automatic reconstruction of indoor detailed as-built BIMs from laser scanning data. Autom. Constr. 2023, 156, 105097.

22. Yun, J.-S.; Sim, J.-Y. Reflection removal for large-scale 3D point clouds. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 4597–4605.

23. Yun, J.-S.; Sim, J.-Y. Cluster-wise removal of reflection artifacts in large-scale 3D point clouds using superpixel-based glass region estimation. In Proceedings of the IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 22–25 September 2019; pp. 1780–1784.

24. Shao, W.; Kakizaki, K.; Araki, S.; Mukai, T. Reflections removal produced by multiple transparent and reflective glass objects in TLS measurements. In Proceedings of the IEEE 47th Annual Computers, Software, and Applications Conference (COMPSAC), Torino, Italy, 26–30 June 2023; pp. 370–379.

25. Yun, J.-S.; Sim, J.-Y. Virtual point removal for large-scale 3D point clouds with multiple glass planes. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 43, 729–744.

26. Fang, L.; Li, T.; Lin, Y.; Zhou, S.; Yao, W. A coupled optical–radiometric modeling approach to removing reflection noise in TLS data of urban areas. ISPRS J. Photogramm. Remote Sens. 2025, 220, 217–231.

27. Rusu, R.B.; Blodow, N.; Beetz, M. Fast point feature histograms (FPFH) for 3D registration. In Proceedings of the IEEE International Conference on Robotics and Automation, Kobe, Japan, 12–17 May 2009; pp. 3212–3217.

28. Gao, R.; Park, J.; Hu, X.; Yang, S.; Cho, K. Reflective noise filtering of large-scale point cloud using multi-position LiDAR sensing data. Remote Sens. 2021, 13, 3058.

29. Gao, R.; Li, M.; Yang, S.-J.; Cho, K. Reflective noise filtering of large-scale point cloud using transformer. Remote Sens. 2022, 14, 577.

30. Lee, O.; Joo, K.; Sim, J.-Y. Learning-based reflection-aware virtual point removal for large-scale 3D point clouds. IEEE Robot. Autom. Lett. 2023, 8, 8510–8517.

31. Koch, R.; May, S.; Murmann, P.; Nüchter, A. Identification of transparent and specular reflective material in laser scans to discriminate affected measurements for faultless robotic SLAM. Robot. Auton. Syst. 2017, 87, 296–312.

32. Koch, R.; May, S.; Koch, P.; Kühn, M.; Nüchter, A. Detection of specular reflections in range measurements for faultless robotic SLAM. In Robot 2015: Second Iberian Robotics Conference. Advances in Intelligent Systems and Computing; Reis, L., Moreira, A., Lima, P., Montano, L., Muñoz-Martinez, V., Eds.; Springer: Cham, Switzerland, 2015; Volume 417.

33. Zhao, X.; Yang, Z.; Schwertfeger, S. Mapping with reflection: Detection and utilization of reflection in 3D lidar scans. In Proceedings of the IEEE International Symposium on Safety, Security, and Rescue Robotics, Abu Dhabi, United Arab Emirates, 4–6 November 2020; pp. 27–33.

34. Li, Y.; Zhao, X.; Schwertfeger, S. Detection and utilization of reflections in LiDAR scans through plane optimization and plane SLAM. Sensors 2024, 24, 4794.

35. Born, M.; Wolf, E. Principles of Optics: Electromagnetic Theory of Propagation, Interference and Diffraction of Light, 7th ed.; Cambridge University Press: Cambridge, UK, 2013.

36. Xu, Y.; Boerner, R.; Yao, W.; Hoegner, L.; Stilla, U. Pairwise coarse registration of point clouds in urban scenes using voxel-based 4-planes congruent sets. ISPRS J. Photogramm. Remote Sens. 2019, 151, 106–123.

37. Vosselman, G. Point cloud segmentation for urban scene classification. Int. Arch. Photogramm. Remote Sens. Spatial Inf. Sci. 2013, XL-7/W2, 257–262.

38. Vosselman, G.; Gorte, B.G.H.; Sithole, G.; Rabbani, T. Recognising structure in laser scanner point clouds. Int. Arch. Photogramm. Remote Sens. Spatial Inf. Sci. 2004, 46, 33–38.

39. Thomas, H.; Qi, C.R.; Deschaud, J.-E.; Marcotegui, B.; Goulette, F.; Guibas, L. KPConv: Flexible and deformable convolution for point clouds. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Republic of Korea, 27 October–2 November 2019; pp. 6410–6419.

40. Hackel, T.; Savinov, N.; Ladicky, L.; Wegner, J.D.; Schindler, K.; Pollefeys, M. SEMANTIC3D.NET: A new large-scale point cloud classification benchmark. ISPRS Ann. Photogramm. Remote Sens. Spatial Inf. Sci. 2017, IV-1/W1, 91–98.

41. RIEGL Laser Measurement Systems. Available online: http://www.riegl.com/ (accessed on 15 November 2025).

42. Zhao, X.; Schwertfeger, S. 3DRef: 3D dataset and benchmark for reflection detection in RGB and lidar data [dataset]. In Proceedings of the International Conference on 3D Vision, Davos, Switzerland, 18–21 March 2024; pp. 225–234.

| Parameter | Default Value | Description |
|---|---|---|
| seed_min_number | 3 | Minimum number of points required to form a seed plane |
| seed_search_radius | 0.3 m | Neighborhood radius for seed detection |
| seed_max_distance | 0.2 m | Distance threshold for evaluating point-to-seed-plane consistency |
| segment_min_number | 20 | Minimum number of points required to form a valid segment |
| segment_search_radius | 0.5 m | Neighborhood radius for surface growing |
| segment_search_nearest_k | 20 | Maximum number of nearest neighbors considered within the search radius (for efficiency in dense regions) |
| recompute_min_distance | 0.2 m | Distance threshold triggering plane re-fitting |
| recompute_min_number | 1 | Minimum number of newly added points required to trigger plane re-estimation |
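The parameters above are described as controlling seed detection and surface growing. A much-simplified NumPy sketch of such a procedure follows, using the table's default values. Several choices are assumptions of this sketch rather than the paper's method: neighbour search is brute force, `seed_max_distance` is reused as the tolerance for accepting grown points, the plane is re-fitted once `recompute_min_number` points have been added, segments smaller than `segment_min_number` release their points, and `recompute_min_distance` is not modelled. The helper names `fit_plane`, `neighbours` and `surface_growing` are hypothetical.

```python
import numpy as np
from collections import deque

# Defaults taken from the parameter table; recompute_min_distance is not modelled.
PARAMS = {
    "seed_min_number": 3,
    "seed_search_radius": 0.3,
    "seed_max_distance": 0.2,
    "segment_min_number": 20,
    "segment_search_radius": 0.5,
    "segment_search_nearest_k": 20,
    "recompute_min_number": 1,
}

def fit_plane(pts):
    """Least-squares plane n.x + d = 0 through pts (unit normal via SVD)."""
    c = pts.mean(axis=0)
    _, _, vt = np.linalg.svd(pts - c, full_matrices=False)
    n = vt[-1]
    return n, -float(n @ c)

def neighbours(points, i, radius, k=None):
    """Brute-force radius search around point i, optionally keeping the k nearest."""
    dist = np.linalg.norm(points - points[i], axis=1)
    idx = np.flatnonzero(dist <= radius)
    idx = idx[idx != i]
    if k is not None and len(idx) > k:
        idx = idx[np.argsort(dist[idx])[:k]]
    return idx

def surface_growing(points, p=PARAMS):
    """Return one label per point: 0, 1, ... for segments, -1 for unsegmented points."""
    labels = np.full(len(points), -1, dtype=int)
    next_label = 0
    for s in range(len(points)):
        if labels[s] != -1:
            continue
        # Seed: fit a plane to the free neighbourhood of s and keep consistent points.
        nb = neighbours(points, s, p["seed_search_radius"])
        cand = np.append(nb[labels[nb] == -1], s)
        if len(cand) < p["seed_min_number"]:
            continue
        n, d = fit_plane(points[cand])
        seed = cand[np.abs(points[cand] @ n + d) <= p["seed_max_distance"]]
        if len(seed) < p["seed_min_number"]:
            continue
        labels[seed] = next_label
        members, queue, added = list(seed), deque(seed), 0
        # Grow: accept free neighbours lying close to the current plane.
        while queue:
            i = queue.popleft()
            for j in neighbours(points, i, p["segment_search_radius"], p["segment_search_nearest_k"]):
                if labels[j] != -1 or abs(points[j] @ n + d) > p["seed_max_distance"]:
                    continue
                labels[j] = next_label
                members.append(j)
                queue.append(j)
                added += 1
                if added >= p["recompute_min_number"]:   # re-fit the plane
                    n, d = fit_plane(points[members])
                    added = 0
        if len(members) < p["segment_min_number"]:
            labels[np.array(members)] = -1   # too small: release the points
        else:
            next_label += 1
    return labels
```

For real TLS scans the brute-force search would be replaced by a spatial index (e.g., a k-d tree), since every growing step queries the neighbourhood of a point.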

| Method | (a) | (b) | (c) | (d) | (e) | (f) | Average |
|---|---|---|---|---|---|---|---|
| [23] | 0.615 | 0.585 | 0.729 | 0.880 | 0.520 | 0.709 | 0.673 |
| [25] | 0.694 | 0.822 | 0.627 | 0.777 | 0.379 | - | 0.659 |
| [30] | 0.766 | 0.862 | 0.781 | 0.924 | 0.862 | - | 0.839 |
| Proposed | 0.822 | 0.846 | 0.923 | 0.976 | 0.875 | 0.811 | 0.876 |

| Scan | Scenario | ODR (%) [25] | ODR (%) [26] | ODR (%) Proposed | IDR (%) [25] | IDR (%) [26] | IDR (%) Proposed | Accuracy (%) [25] | Accuracy (%) [26] | Accuracy (%) Proposed | SNR (dB) [25] | SNR (dB) [26] | SNR (dB) Proposed |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Scan 04 | outdoor | 75.12 | 87.30 | 89.72 | 99.28 | 98.68 | 98.78 | 91.42 | 95.00 | 95.83 | 8.96 | 11.28 | 12.09 |
| Scan 05 | outdoor | 50.24 | 88.53 | 90.52 | 97.49 | 97.48 | 97.55 | 88.32 | 95.74 | 96.18 | 8.39 | 12.77 | 13.25 |
| Scan 10 | indoor | 47.81 | 41.83 | 82.93 | 74.95 | 84.31 | 87.78 | 69.00 | 75.00 | 86.71 | 4.01 | 4.94 | 7.69 |
| Scan 11 | indoor | 97.43 | 73.20 | 77.13 | 78.09 | 94.56 | 94.86 | 79.59 | 92.90 | 93.48 | 6.55 | 11.14 | 11.51 |
| Average |  | 67.65 | 72.71 | 85.07 | 87.45 | 93.75 | 94.74 | 82.08 | 89.66 | 93.05 | 6.98 | 10.03 | 11.14 |

| Model | Number of Points | Glass Region Estimation (s) | Virtual Point Detection (s) | Total (s) |
|---|---|---|---|---|
| (a) | 5,562,972 | 66.72 | 1.26 | 67.98 |
| (b) | 6,140,383 | 46.31 | 0.78 | 47.09 |
| (c) | 9,720,671 | 88.90 | 1.84 | 90.74 |
| (d) | 4,913,710 | 32.25 | 0.71 | 32.96 |
| (e) | 5,000,902 | 79.83 | 1.52 | 81.35 |
| (f) | 8,316,360 | 59.64 | 0.26 | 59.90 |
| Scan 04 | 5,038,858 | 32.11 | 1.88 | 33.99 |
| Scan 05 | 3,342,466 | 26.22 | 0.79 | 27.01 |
| Scan 10 | 1,880,837 | 10.31 | 0.58 | 10.89 |
| Scan 11 | 1,942,031 | 12.42 | 0.30 | 12.72 |

| Methods | Modules | (a) | (b) | (c) | (d) | (e) | (f) | Average |
|---|---|---|---|---|---|---|---|---|
| Proposed | RS + GS(Loss) | 0.822 | 0.846 | 0.923 | 0.976 | 0.875 | 0.811 | 0.876 |
| A | GS(Loss) | 0.782 | 0.791 | 0.857 | 0.980 | 0.868 | 0.851 | 0.855 |
| B | RS + GS(Loss) | 0.722 | 0.774 | 0.820 | 0.909 | 0.826 | 0.758 | 0.805 |
| C | RS + GS(Loss) | 0.802 | 0.842 | 0.888 | 0.959 | 0.874 | 0.782 | 0.858 |
| D | RS + GS(Loss) | 0.767 | 0.805 | 0.908 | 0.967 | 0.848 | 0.762 | 0.843 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Shao, W.; Zhang, Y.; Xue, Y.; Ji, T.; Lao, Y. GRASS: Glass Reflection Artifact Suppression Strategy via Virtual Point Removal in LiDAR Point Clouds. Remote Sens. 2026, 18, 332. https://doi.org/10.3390/rs18020332
