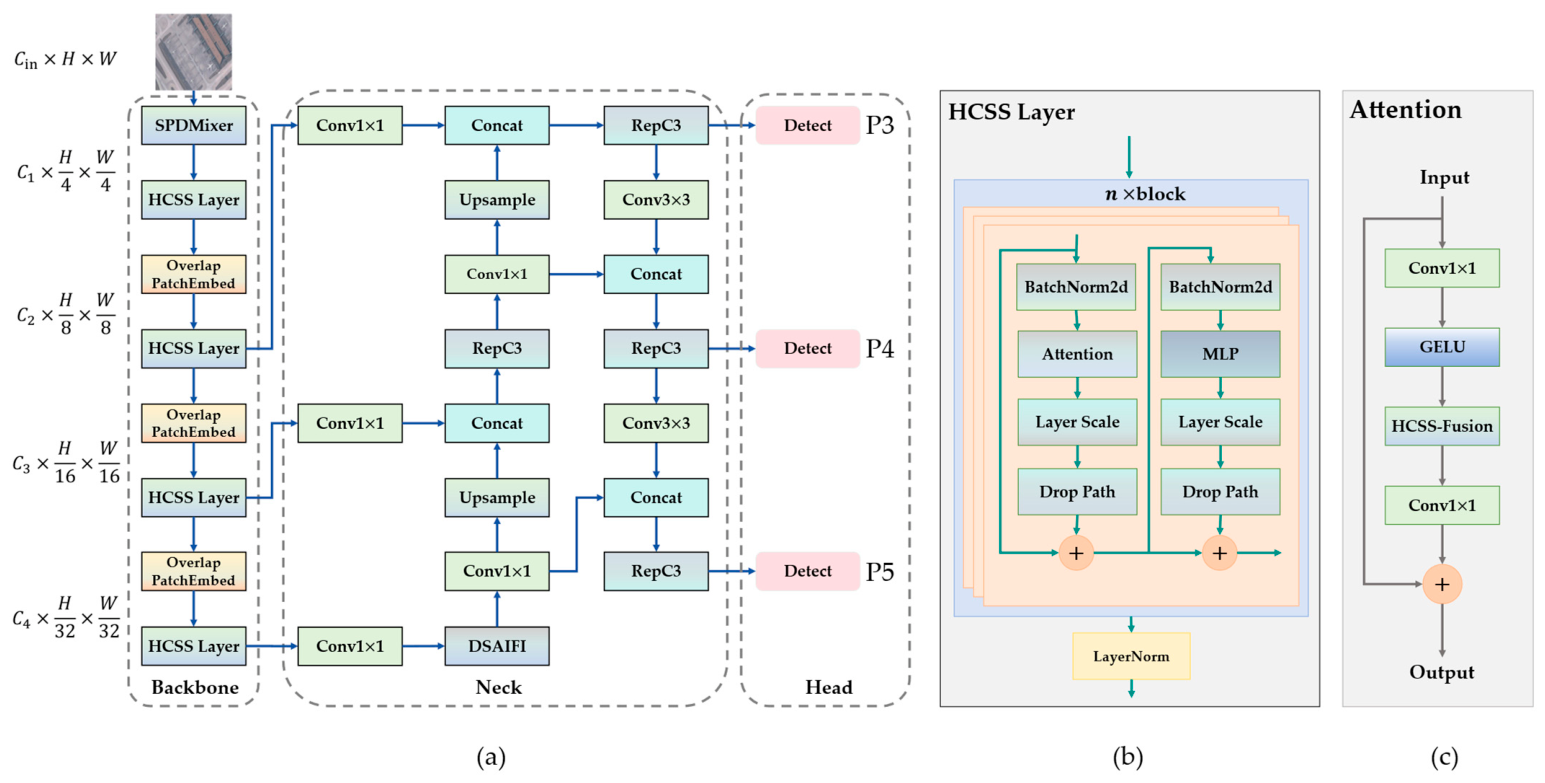

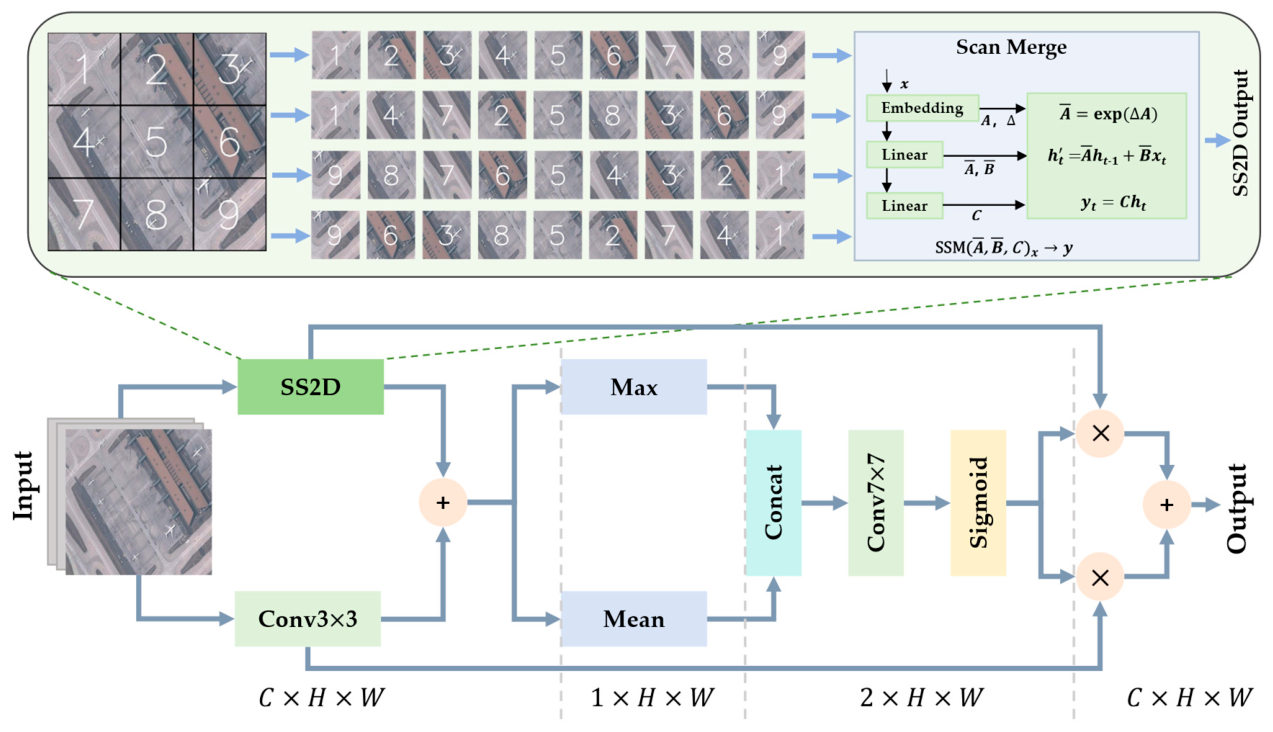

3.2. Hybrid Convolution and Selective Scanning Fusion Module

Existing lightweight backbones often struggle with complex remote sensing backgrounds, failing to achieve discriminative feature representation under stringent computational constraints. To address this, as illustrated in Figure 2, we propose the HCSS-Fusion module to enhance feature capture for small objects. In optical remote sensing, micro-targets are frequently submerged in vast backgrounds, which poses a challenge for lightweight architectures that rely purely on CNNs or purely on SSMs.

CNNs offer excellent local inductive biases for capturing sharp geometries. However, their localized receptive fields restrict broad contextual understanding. In contrast, SSMs achieve a global receptive field efficiently. Yet, applying 1D sequential scanning to 2D vision data can disrupt spatial proximity. During long-range state integration, the fragile features of small objects—often comprising just a few pixels—are susceptible to being washed out or over-smoothed.

This challenge can be mitigated through frequency-domain complementarity. Because its compact, localized kernel inherently captures rapid spatial variations such as edges and fine textures, pure convolution acts as a high-frequency structural filter. Functioning as a localized spatial anchor, its high-frequency response $Y_{H}(i, j)$ at spatial coordinates $(i, j)$ for an input $X$ is expressed as:

$$Y_{H}(i, j) = \sum_{(\Delta i, \Delta j) \in \Omega} W(\Delta i, \Delta j)\, X(i + \Delta i,\, j + \Delta j) \tag{1}$$

where $\Omega$ represents the local neighborhood (receptive field), and $(\Delta i, \Delta j)$ are the relative spatial offsets within this neighborhood. $W(\Delta i, \Delta j)$ refers to the learnable convolution kernel weights, and $X(i + \Delta i,\, j + \Delta j)$ is the input feature value at the offset position.

Simultaneously, the 2D selective scan (SS2D) mechanism functions as a low-frequency contextual filter

. It executes a global linear attention mechanism to aggregate dependencies while maintaining the current spatial resolution. Its contextual output

along the

feature slice is formulated as:

where $Q$, $K$, and $V$ are the query, key, and value matrices projected from the input.

represents the previous hidden state vector, and

is the causal mask matrix ensuring sequential scanning logic. The symbol

denotes the element-wise product. The distance-aware decay tensor

enables the network to aggregate low-frequency global contexts by weighting long-range dependencies.

Leveraging this theoretical synergy, the HCSS-Fusion module captures information through a parallel structure. The local context branch employs a

depthwise convolution to preserve fragile geometric priors. This compact kernel focuses on high-frequency textures and prevents spatial over-smoothing. Parallel to this, the global context branch introduces SS2D to capture long-range dependencies. Since small objects rely heavily on surrounding cues (e.g., vehicles on roads), SS2D provides efficient global guidance. To ensure robust representation power, we set the state dimension to

and the expansion ratio to

. The output features of the two branches,

and

, are calculated in Equation (3):

To achieve adaptive complementarity between local details and global context, we design the Hybrid Scale Selection Mechanism. First, we fuse the extracted local and global features, $F_{l}$ and $F_{g}$, via element-wise addition to obtain a unified intermediate representation $F_{u}$, which efficiently aggregates both local structural details and long-range dependencies. This process is denoted in Equation (4):

$$F_{u} = F_{l} + F_{g} \tag{4}$$

To explicitly model the spatial saliency across this fused representation, we apply average pooling and max pooling along the channel dimension of $F_{u}$, generating two 2D spatial descriptors $S_{avg}$ and $S_{max}$. After concatenating these spatial descriptors, a large-kernel convolution layer is applied for spatial information interaction, followed by a Sigmoid activation function to generate the hybrid spatial selection mask $M$ in Equation (5):

$$M = \mathrm{Sigmoid}\left(\mathrm{Conv}\left([S_{avg};\, S_{max}]\right)\right) \tag{5}$$

where $[\,\cdot\,;\,\cdot\,]$ denotes concatenation. The two channels of the mask, $M_{1}$ and $M_{2}$ (corresponding to the first and second channels of $M$), serve as the spatial attention weights for the projected local and global features, respectively. Finally, we compute the weighted sum of the original branch features using the generated spatial masks to obtain the final module output $Y$ in Equation (6):

$$Y = M_{1} \odot F_{l} + M_{2} \odot F_{g} \tag{6}$$

where $\odot$ denotes element-wise multiplication.

Through this process, HCSS-Fusion adaptively reinforces the feature saliency of small targets while maintaining computational efficiency, improving the model’s ability to perceive and capture them.
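The selection step described by Equations (4) to (6) can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the reference implementation: the names `hybrid_scale_selection`, `conv2d_same`, `f_local`, and `f_global` are hypothetical, the 7×7 kernel size is an assumed stand-in for the large kernel, and the weights are random rather than learned.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def conv2d_same(x, weight):
    """Naive zero-padded 2D convolution (cross-correlation form).

    x: (C_in, H, W); weight: (C_out, C_in, k, k) with odd k.
    """
    c_out, _, k, _ = weight.shape
    pad = k // 2
    _, h, w = x.shape
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    out = np.zeros((c_out, h, w))
    for di in range(k):
        for dj in range(k):
            patch = xp[:, di:di + h, dj:dj + w]  # shifted view, (C_in, H, W)
            out += np.einsum("oc,chw->ohw", weight[:, :, di, dj], patch)
    return out


def hybrid_scale_selection(f_local, f_global, weight):
    """Fuse two (C, H, W) branch outputs with a 2-channel spatial mask."""
    fused = f_local + f_global                      # element-wise addition
    s_avg = fused.mean(axis=0, keepdims=True)       # channel-wise average pooling
    s_max = fused.max(axis=0, keepdims=True)        # channel-wise max pooling
    desc = np.concatenate([s_avg, s_max], axis=0)   # (2, H, W) descriptors
    mask = sigmoid(conv2d_same(desc, weight))       # (2, H, W) selection mask
    # First mask channel weights the local branch, second the global branch.
    return mask[0:1] * f_local + mask[1:2] * f_global


rng = np.random.default_rng(0)
f_local = rng.standard_normal((8, 16, 16))
f_global = rng.standard_normal((8, 16, 16))
weight = 0.1 * rng.standard_normal((2, 2, 7, 7))    # assumed 7x7 kernel
out = hybrid_scale_selection(f_local, f_global, weight)
```

Because each mask channel has shape (1, H, W), it broadcasts over all feature channels, so every spatial position receives its own local-versus-global weighting at the cost of a single two-channel convolution.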

3.3. Space-to-Depth Mixer Module

To prevent irreversible information loss, we propose SPDMixer as the foundational stem of our architecture. By replacing conventional strided convolutions, SPDMixer ensures that the dense pixel-level semantics required for subsequent modeling are fully preserved through a lossless space-to-depth transformation. As illustrated in Figure 3, SPDMixer integrates the Space-to-Depth (SPD) transformation with re-parameterized convolutions to perform feature compression and reconstruction while preserving pixel-level information during downsampling.

In the feature embedding stage, SPDMixer discards traditional pooling or large-kernel convolutions. Instead, it introduces an SPD layer to perform lossless downsampling on the input image $X$. This process rearranges spatial neighborhood pixel information into the channel dimension by slicing the input along spatial dimensions using odd and even indices. Specifically, four sub-feature maps are generated and concatenated along the channel dimension to yield the intermediate feature $X_{spd}$ in Equation (7):

$$X_{spd} = \mathrm{Concat}\left(X[0{::}2,\, 0{::}2],\; X[1{::}2,\, 0{::}2],\; X[0{::}2,\, 1{::}2],\; X[1{::}2,\, 1{::}2]\right) \tag{7}$$

where $[\,{::}\,]$ denotes the array slicing operator with an omitted stop index, indicating that the extraction continues to the end of the dimension at the specified step size.

To efficiently encode these spatially folded features, we employ a Re-Parameterized Encoder composed of cascaded MobileOne Blocks to enhance the reconstruction capability of local features. Specifically, the spatially folded input is first processed by a stride-1 MobileOne block to project it to the embedding dimension and reconstruct local correlations. Subsequently, a stride-2 depthwise MobileOne block performs secondary downsampling. The process concludes with a final stride-1 MobileOne block, functioning as a pointwise convolution, to achieve thorough channel fusion and output the encoded feature representation.

This design preserves information during downsampling. By retaining raw pixel data via SPD and reconstructing semantic features via efficient re-parameterized convolutions, SPDMixer keeps small objects perceptible from the network’s stem, which in turn supports detection in complex scenarios.
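The lossless property of the SPD rearrangement can be checked directly. The sketch below is illustrative only: the function names `space_to_depth` and `depth_to_space` are hypothetical, a channel-first `(C, H, W)` layout with even `H` and `W` is assumed, and the order in which the four slices are concatenated is an assumption. The MobileOne encoder is not included.

```python
import numpy as np


def space_to_depth(x):
    """Fold each 2x2 spatial neighborhood into channels: (C, H, W) -> (4C, H/2, W/2)."""
    _, h, w = x.shape
    assert h % 2 == 0 and w % 2 == 0, "H and W must be even"
    return np.concatenate(
        [
            x[:, 0::2, 0::2],  # even rows, even columns
            x[:, 1::2, 0::2],  # odd rows, even columns
            x[:, 0::2, 1::2],  # even rows, odd columns
            x[:, 1::2, 1::2],  # odd rows, odd columns
        ],
        axis=0,
    )


def depth_to_space(y):
    """Exact inverse of space_to_depth, showing that no pixel is discarded."""
    c4, h2, w2 = y.shape
    c = c4 // 4
    x = np.empty((c, 2 * h2, 2 * w2), dtype=y.dtype)
    x[:, 0::2, 0::2] = y[0 * c:1 * c]
    x[:, 1::2, 0::2] = y[1 * c:2 * c]
    x[:, 0::2, 1::2] = y[2 * c:3 * c]
    x[:, 1::2, 1::2] = y[3 * c:4 * c]
    return x


image = np.arange(3 * 4 * 6, dtype=np.float32).reshape(3, 4, 6)
folded = space_to_depth(image)      # shape (12, 2, 3)
restored = depth_to_space(folded)   # identical to `image`
```

A strided convolution or pooling layer has no such inverse, since several input pixels are merged into one output value; here the spatial resolution halves while every input value survives in the channel dimension.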

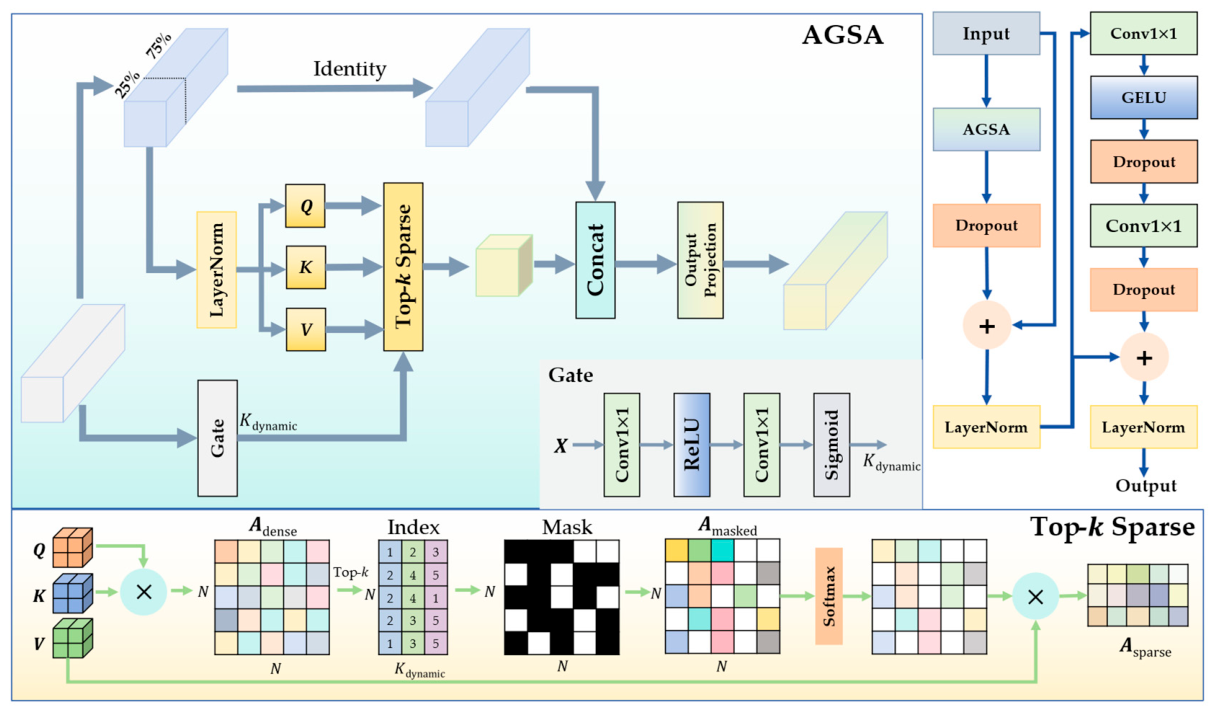

3.4. Dynamic Sparse Adaptive Intra-Scale Feature Interaction

While HCSS-Fusion establishes a rich feature space, we integrate DSAIFI as a feature purifier within the framework. Through adaptive gated sparse attention, it suppresses redundant signals and concentrates computation on the salient features of micro-targets. The core of this module is the adaptive gated sparse attention (AGSA) mechanism, which replaces traditional dense attention calculations with adaptive feature selection and interaction.

As illustrated in Figure 4, AGSA is designed to enhance feature representational capability through feature selection. To focus on key features while preserving original context information, AGSA employs a partial channel processing strategy. Given the input features $X$, they are split along the channel dimension into two parts: “active features” $X_{a}$ for attention interaction (accounting for 25% of channels) and “passive features” $X_{p}$ for information retention (accounting for 75% of channels).

The passive features are transmitted directly to the output via an identity mapping, which not only simplifies the processing of non-salient regions but also preserves the background context of the image. The active features are first normalized via layer normalization (LN) and then mapped through convolutions to generate the query ($Q$), key ($K$), and value ($V$) vectors required for the attention mechanism.

Concurrently, a lightweight gating network processes the global statistical information of the input feature

. This network adaptively predicts a dynamic factor, which reflects the sparsity of targets in the current image. We denote this dynamic factor as

, which is calculated by Equation (8):

where $\sigma$ denotes the Sigmoid activation function. To define the operation scale, the total number of spatial tokens $N$ is first calculated as follows:

$$N = H \times W \tag{9}$$

where $H$ and $W$ represent the height and width of the features, respectively. Based on this, the number of semantic tokens to retain, denoted as $k$, is formulated by Equation (10):

where $\lfloor \cdot \rfloor$ denotes the floor operation to ensure an integer value.

Based on $k$, the model executes a Top-$k$ sparse selection strategy. First, the dense attention correlation map $A$ is calculated using Equation (11):

$$A = \frac{Q K^{\top}}{\sqrt{d}} \tag{11}$$

where $d$ is the channel dimension of $Q$ and $K$. To retain only the Top-$k$ correlation weights with the strongest responses, we extract the indices of the largest $k$ values along the sequence dimension of $A$ to formulate a binary mask $\mathcal{M}$, which is defined by Equation (12):

$$\mathcal{M}_{ij} = \begin{cases} 1, & j \in \mathcal{T}_{i} \\ 0, & \text{otherwise} \end{cases} \tag{12}$$

where $i, j$ denote the token indices, and $\mathcal{T}_{i}$ denotes the index set of the largest $k$ values in the $i$-th sequence $A_{i,:}$. The remaining irrelevant background connections are forcibly masked out by replacing their attention scores with negative infinity, yielding $\hat{A}$, which is defined by Equation (13):

$$\hat{A}_{ij} = \begin{cases} A_{ij}, & \mathcal{M}_{ij} = 1 \\ -\infty, & \mathcal{M}_{ij} = 0 \end{cases} \tag{13}$$

This dynamic sparse mechanism ensures that the model automatically adjusts the distribution of attention according to the complexity of the image content, focusing attention on salient regions containing potential targets. The masked attention is then passed through a softmax layer and multiplied by $V$ to obtain the aggregated sparse attention features, denoted as $F_{attn}$, which is calculated using Equation (14):

$$F_{attn} = \mathrm{Softmax}(\hat{A})\, V \tag{14}$$

Finally, the features aggregated via sparse attention, $F_{attn}$, are concatenated with the original passive features $X_{p}$ along the channel dimension. They are then fused through an output projection layer, comprising a sigmoid linear unit (SiLU) activation and a convolutional layer, to restore the original dimensions and yield the output $X_{out}$, which is formulated by Equation (15):

$$X_{out} = \mathrm{Proj}\left(\mathrm{Concat}\left(F_{attn},\, X_{p}\right)\right) \tag{15}$$

where $\mathrm{Proj}(\cdot)$ denotes the output projection operation.
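The Top-k selection in Equations (11) to (14), together with the 25%/75% channel split, can be sketched in NumPy as below. Several parts are assumptions made only for illustration: identity projections stand in for the learned query, key, and value convolutions, the fixed `ratio` stands in for the dynamic factor predicted by the gating network, the token count is taken as `max(1, floor(ratio * N))`, and layer normalization and the output projection are omitted. The function name `topk_sparse_attention` is hypothetical.

```python
import numpy as np


def topk_sparse_attention(q, k, v, keep):
    """Attention in which each query attends only to its `keep` best keys.

    q, k, v: (N, d) token matrices; 1 <= keep <= N.
    Returns the aggregated features (N, d) and the boolean mask (N, N).
    """
    n, d = q.shape
    scores = q @ k.T / np.sqrt(d)                        # dense correlation map
    # Column indices of the `keep` largest scores in every row.
    top_idx = np.argpartition(scores, n - keep, axis=1)[:, n - keep:]
    mask = np.zeros((n, n), dtype=bool)
    np.put_along_axis(mask, top_idx, True, axis=1)       # binary Top-k mask
    masked = np.where(mask, scores, -np.inf)             # drop the other links
    masked = masked - masked.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(masked)                             # exp(-inf) == 0
    weights /= weights.sum(axis=1, keepdims=True)        # row-wise softmax
    return weights @ v, mask


rng = np.random.default_rng(0)
C, H, W = 16, 4, 4
x = rng.standard_normal((C, H, W))

c_active = C // 4                                 # 25% of channels are active
x_active, x_passive = x[:c_active], x[c_active:]  # passive part is untouched

tokens = x_active.reshape(c_active, H * W).T      # (N, d) with N = H * W
ratio = 0.25                                      # stand-in for the gate output
keep = max(1, int(np.floor(ratio * H * W)))       # assumed form of the token count

attended, mask = topk_sparse_attention(tokens, tokens, tokens, keep)
merged = np.concatenate(
    [attended.T.reshape(c_active, H, W), x_passive], axis=0
)
```

Setting the discarded scores to negative infinity before the softmax makes their weights exactly zero, so the retained weights in each row still sum to one.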

Building upon the feature extraction capabilities of AGSA, we construct the complete DSAIFI module. As shown in Figure 4, input features first enter the AGSA module for global sparse context modeling, which filters out background noise through the mechanism described above. Subsequently, the processed features pass through a dropout layer and a residual connection to prevent gradient vanishing, followed by layer normalization. To further enhance non-linear representation capability and integrate channel information, the fused features are fed into a feed-forward network (FFN). Unlike traditional fully connected layer designs, we employ a convolutional FFN consisting of two convolutional layers, a Gaussian error linear unit (GELU) activation function, and two dropout layers. This design is more conducive to preserving the spatial locality of RS images during feature interaction. Finally, after a second residual connection with dropout and a final layer normalization, DSAIFI outputs the enhanced feature representation. By integrating inner sparse selection with outer residual enhancement, DSAIFI balances dynamic noise suppression with the preservation of spatial locality for sparse targets.

3.5. Optimization of Loss Function

Finally, to translate the purified semantic representations into precise coordinates, we propose RF-MPDIoU as the bounding box regression loss. Through its geometric alignment mechanism, RF-MPDIoU carries the small object perception gains of the preceding components through to the supervision stage.

Specifically, pure geometric overlap metrics struggle to provide stable supervision signals due to the extreme sensitivity of small object positions on discrete pixel grids. As a baseline for the bounding box regression task, the standard IoU, denoted as $\mathrm{IoU}$, is first calculated as shown in Equation (16):

$$\mathrm{IoU} = \frac{\left|B^{gt} \cap B^{prd}\right|}{\left|B^{gt} \cup B^{prd}\right|} \tag{16}$$

where $B^{gt}$ is the ground truth box, and $B^{prd}$ is the predicted box. $(x_{1}^{prd}, y_{1}^{prd})$ and $(x_{2}^{prd}, y_{2}^{prd})$ denote the top-left and bottom-right coordinates of the predicted box, respectively. Similarly, $(x_{1}^{gt}, y_{1}^{gt})$ and $(x_{2}^{gt}, y_{2}^{gt})$ represent those of the ground truth box.

Furthermore, in the context of small object detection, the severe imbalance between samples during training often leads to gradients being dominated by a vast number of simple samples. This phenomenon suppresses the effective optimization of the sparse and challenging features of small objects. To mitigate this, we introduce a focal mapping mechanism to reweight the $\mathrm{IoU}$ by mapping it into a specified interval. The mapped IoU, $\mathrm{IoU}^{focal}$, can be formulated as Equation (17):

$$\mathrm{IoU}^{focal} = \begin{cases} 0, & \mathrm{IoU} < d \\ \dfrac{\mathrm{IoU} - d}{u - d}, & d \le \mathrm{IoU} \le u \\ 1, & \mathrm{IoU} > u \end{cases} \tag{17}$$

where the parameters $d$ and $u$ are hyperparameters representing the lower and upper bounds of the truncation interval, which are empirically set to 0 and 0.95, respectively, to ensure stable gradient propagation. Subsequently, to smoothly adapt to the optimization difficulty and dynamically adjust the penalization, a rational function transformation is applied to $\mathrm{IoU}^{focal}$

. The transformed metric,

, can be expressed as Equation (18):

where the parameter

is empirically set to 1.0 to adjust the smoothness of the gradient distribution.

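To maintain strong geometric constraints on the actual boundaries, a corner distance penalty is integrated. The squared Euclidean distances between the corresponding top-left and bottom-right corners of the predicted and ground truth boxes, $d_{1}^{2}$ and $d_{2}^{2}$, are defined in Equation (19):

$$d_{1}^{2} = \left(x_{1}^{prd} - x_{1}^{gt}\right)^{2} + \left(y_{1}^{prd} - y_{1}^{gt}\right)^{2}, \qquad d_{2}^{2} = \left(x_{2}^{prd} - x_{2}^{gt}\right)^{2} + \left(y_{2}^{prd} - y_{2}^{gt}\right)^{2} \tag{19}$$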

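To ensure scale invariance, these distances are normalized by the width $w$ and height $h$ of the input image. Therefore, the final RF-MPDIoU metric, denoted as $\mathrm{RF\text{-}MPDIoU}$, is given by Equation (20):

$$\mathrm{RF\text{-}MPDIoU} = \mathrm{IoU}^{RF} - \frac{d_{1}^{2}}{w^{2} + h^{2}} - \frac{d_{2}^{2}}{w^{2} + h^{2}} \tag{20}$$

where $\mathrm{IoU}^{RF}$ is the transformed metric from Equation (18), and $d_{1}^{2}$ and $d_{2}^{2}$ are the squared corner distances from Equation (19). Finally, the corresponding bounding box regression loss, $\mathcal{L}_{\mathrm{RF\text{-}MPDIoU}}$, is obtained by Equation (21):

$$\mathcal{L}_{\mathrm{RF\text{-}MPDIoU}} = 1 - \mathrm{RF\text{-}MPDIoU} \tag{21}$$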
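The steps of this section can be traced on a single pair of boxes with the plain-Python sketch below. It rests on several assumptions and should not be read as the exact loss: the focal mapping is taken to be a linear rescaling clamped to [0, 1], the corner distances are assumed to be normalized by the squared image diagonal as in MPDIoU, and the rational function transformation is left as a pluggable `transform` argument that defaults to the identity, because its closed form is not specified in the text above. All function names are hypothetical.

```python
def iou(box_p, box_g):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_p[0], box_g[0]), max(box_p[1], box_g[1])
    ix2, iy2 = min(box_p[2], box_g[2]), min(box_p[3], box_g[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_p = (box_p[2] - box_p[0]) * (box_p[3] - box_p[1])
    area_g = (box_g[2] - box_g[0]) * (box_g[3] - box_g[1])
    union = area_p + area_g - inter
    return inter / union if union > 0 else 0.0


def focal_map(value, lower=0.0, upper=0.95):
    """Assumed focal mapping: rescale [lower, upper] to [0, 1] and clamp."""
    return min(1.0, max(0.0, (value - lower) / (upper - lower)))


def corner_penalty(box_p, box_g, img_w, img_h):
    """Squared top-left and bottom-right corner distances, normalized
    by the squared image diagonal (assumed normalization)."""
    d1 = (box_p[0] - box_g[0]) ** 2 + (box_p[1] - box_g[1]) ** 2
    d2 = (box_p[2] - box_g[2]) ** 2 + (box_p[3] - box_g[3]) ** 2
    norm = img_w ** 2 + img_h ** 2
    return d1 / norm + d2 / norm


def box_regression_loss(box_p, box_g, img_w, img_h, transform=lambda t: t):
    """1 - (transformed mapped IoU - corner penalty).

    `transform` is a placeholder for the rational function transformation.
    """
    overlap = transform(focal_map(iou(box_p, box_g)))
    return 1.0 - (overlap - corner_penalty(box_p, box_g, img_w, img_h))


gt = (0.0, 0.0, 10.0, 10.0)
perfect = box_regression_loss(gt, gt, 100, 100)
shifted = box_regression_loss((5.0, 0.0, 15.0, 10.0), gt, 100, 100)
disjoint = box_regression_loss((50.0, 50.0, 60.0, 60.0), gt, 100, 100)
```

For the disjoint pair the overlap term is zero, yet the loss still grows with the corner distances, which is the property that keeps a gradient signal available when a small predicted box misses its target entirely.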