A Dual-Modal Mixture-of-Experts Attention U-Net (DMoE-AttU-Net) for Change Detection Using Heterogeneous Optical and SAR Remote Sensing Images

Highlights

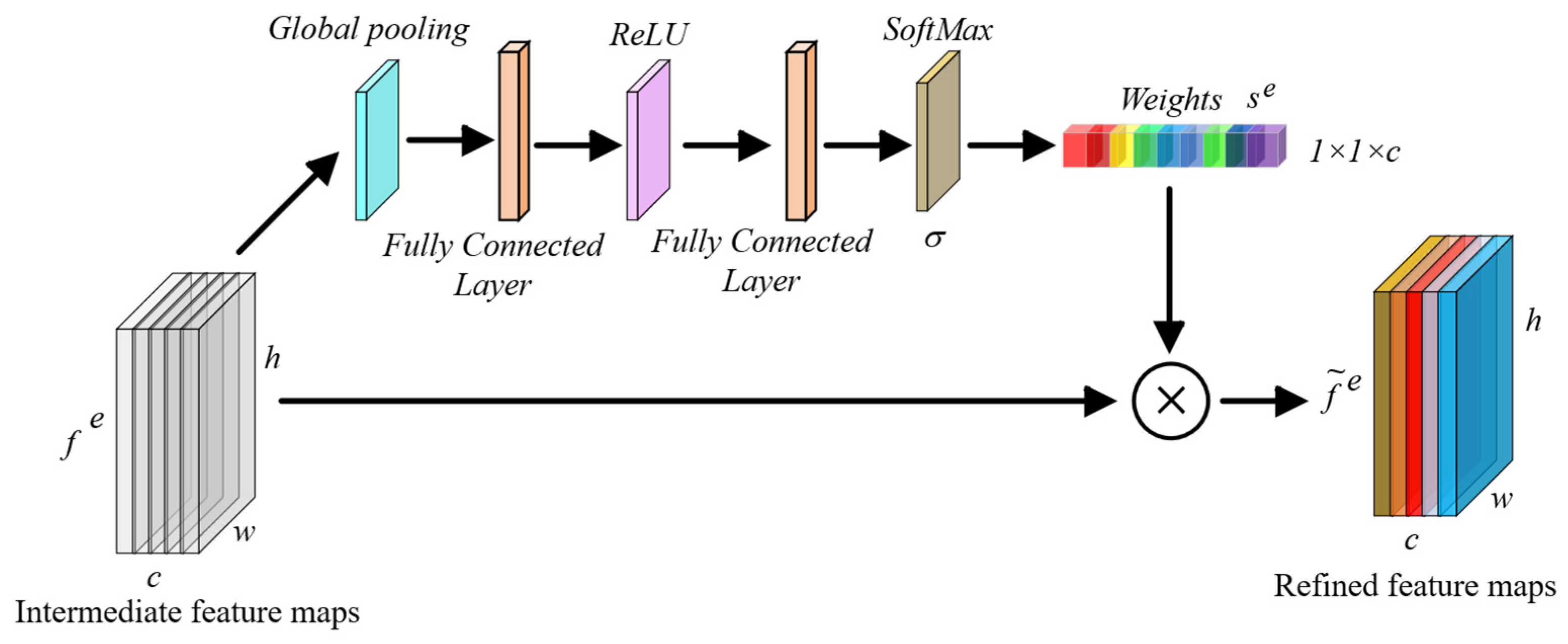

- A novel dual-modal architecture (DMoE-AttU-Net) is proposed for heterogeneous optical–SAR change detection, integrating SAR-specific MoE and hierarchical attention mechanisms.

- Modality-aware design with selective MoE in the SAR branch effectively mitigates speckle noise while preserving complementary optical information.

- The coordinated use of channel and spatial attention enhances boundary delineation, supporting more reliable multimodal change detection in complex remote sensing scenarios.
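The mixture-of-experts idea named in the highlights can be illustrated with a generic soft-gated MoE layer. This is a minimal sketch, not the authors' SAR-branch module: the expert functions, the linear router, and the names `softmax`, `moe_forward`, and `gate_weights` are all hypothetical, and this excerpt does not specify how many experts are used or how they are routed.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [v / total for v in exps]


def moe_forward(x, experts, gate_weights):
    """Soft mixture of experts on a feature vector x.

    experts      : list of callables, each mapping a vector to a vector
    gate_weights : one weight row per expert (hypothetical linear router)
    Returns the gate-weighted sum of expert outputs and the gate values.
    """
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    gates = softmax(logits)
    outputs = [expert(x) for expert in experts]
    mixed = [
        sum(g * out[i] for g, out in zip(gates, outputs))
        for i in range(len(x))
    ]
    return mixed, gates


# Two toy experts: one passes features through, one smooths them to their mean
# (a stand-in for a speckle-suppressing expert, chosen only for illustration).
def identity_expert(v):
    return list(v)


def smoothing_expert(v):
    mean = sum(v) / len(v)
    return [mean] * len(v)


features = [1.0, 3.0]
router = [[0.0, 0.0], [0.0, 0.0]]  # zero weights give uniform gates
mixed, gates = moe_forward(features, [identity_expert, smoothing_expert], router)
print(gates, mixed)
```

With a trained router, the gate values would vary per input, so noisy regions could lean on the smoothing expert while clean regions keep the original features.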

Abstract

1. Introduction

2. Materials and Methods

2.1. Proposed DMoE-AttU-Net Architecture

2.2. Dataset Description

- The first dataset (Figure 3a) covers flood-affected areas in California, USA [19], including parts of Sacramento, Yuba, and Sutter Counties. It pairs a multispectral optical image from Landsat-8 with a multi-polarized SAR image from Sentinel-1A, at a spatial resolution of approximately 15 m. The SAR input has three channels: VV, VH, and their intensity ratio. The reference change map highlights land cover transitions associated with flood events and was generated using auxiliary SAR observations acquired during the same period. The dataset is strongly imbalanced, with a changed-to-unchanged pixel ratio of approximately 1:23.

- The second dataset (Figure 3b), referred to as Gloucester I [20], focuses on an urban area in the United Kingdom (UK). It combines high-resolution optical imagery from QuickBird-2 with SAR data from TerraSAR-X acquired in StripMap mode with HH polarization and a spatial resolution of 0.65 m. The dataset captures complex urban changes related to flood dynamics. Ground truth annotations were manually produced by domain experts based on pre- and post-event visual interpretation and ancillary information. The ratio of changed to unchanged pixels in this dataset is approximately 1:7.

- The third dataset (Figure 3c), Gloucester II [20], also covers a region in Gloucester, UK, but uses earlier-generation satellite data. It includes SPOT optical imagery and ERS-1 SAR data with a spatial resolution of approximately 25 m, captured before and after a historical flood event. Despite its lower resolution and limited spectral diversity, this dataset provides a valuable benchmark for evaluating the robustness of change detection methods under challenging conditions. Ground truth labels were manually delineated to reflect flood-induced land cover changes.
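To make the class imbalance above concrete, the changed-to-unchanged ratios can be converted into pixel shares and, as one common heuristic, into inverse-frequency class weights. This is a sketch only: the weighting scheme and the function names `changed_fraction` and `inverse_frequency_weights` are assumptions for illustration, not the loss configuration used in the paper.

```python
from fractions import Fraction
from typing import Tuple


def changed_fraction(changed: int, unchanged: int) -> Fraction:
    """Share of changed pixels implied by a changed:unchanged ratio."""
    return Fraction(changed, changed + unchanged)


def inverse_frequency_weights(changed: int, unchanged: int) -> Tuple[float, float]:
    """Return (w_unchanged, w_changed) using weight = 1 / (2 * class share).

    With this normalisation the pixel-weighted average of the weights is 1.
    """
    p_changed = changed_fraction(changed, unchanged)
    p_unchanged = 1 - p_changed
    return float(1 / (2 * p_unchanged)), float(1 / (2 * p_changed))


# Ratios as reported for the two datasets that state them.
reported_ratios = {"California": (1, 23), "Gloucester I": (1, 7)}

for name, (changed, unchanged) in reported_ratios.items():
    share = changed_fraction(changed, unchanged)
    w_unchanged, w_changed = inverse_frequency_weights(changed, unchanged)
    print(
        f"{name}: changed share = {float(share):.1%}, "
        f"weights (unchanged, changed) = ({w_unchanged:.3f}, {w_changed:.3f})"
    )
```

A ratio of 1:23 means roughly one pixel in 24 is changed, which is why overall accuracy alone says little on the California scene and class-wise scores such as the change-class IoU are more informative.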

2.3. Experimental Settings and Implementation Details

3. Experimental Results and Discussion

3.1. Visual Comparison

3.2. Quantitative Comparison

3.3. Computational Complexity Analysis

3.4. Ablation Experiments

3.5. Limitations and Future Research Directions

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Zhu, Z.; Woodcock, C.E. Continuous change detection and classification of land cover using all available Landsat data. Remote Sens. Environ. 2014, 144, 152–171.

2. Mohsenifar, A.; Mohammadzadeh, A.; Jamali, S. Unsupervised Rural Flood Mapping from Bi-Temporal Sentinel-1 Images Using an Improved Wavelet-Fusion Flood-Change Index (IWFCI) and an Uncertainty-Sensitive Markov Random Field (USMRF) Model. Remote Sens. 2025, 17, 1024.

3. Moghimi, A.; Mohammadzadeh, A.; Khazai, S. Integrating Thresholding with Level Set Method for Unsupervised Change Detection in Multitemporal SAR Images. Can. J. Remote Sens. 2017, 43, 412–431.

4. Khankeshizadeh, E.; Mohammadzadeh, A.; Moghimi, A.; Mohsenifar, A. FCD-R2U-net: Forest change detection in bi-temporal satellite images using the recurrent residual-based U-net. Earth Sci. Inform. 2022, 15, 2335–2347.

5. Khankeshizadeh, E.; Tahermanesh, S.; Mohsenifar, A.; Moghimi, A.; Mohammadzadeh, A. FBA-DPAttResU-Net: Forest burned area detection using a novel end-to-end dual-path attention residual-based U-Net from post-fire Sentinel-1 and Sentinel-2 images. Ecol. Indic. 2024, 167, 112589.

6. Liu, Q.; Ren, K.; Meng, X.; Shao, F. Domain Adaptive Cross Reconstruction for Change Detection of Heterogeneous Remote Sensing Images via a Feedback Guidance Mechanism. IEEE Trans. Geosci. Remote Sens. 2023, 61, 4507216.

7. Lv, Z.; Huang, H.; Sun, W.; Lei, T.; Benediktsson, J.A.; Li, J. Novel Enhanced UNet for Change Detection Using Multimodal Remote Sensing Image. IEEE Geosci. Remote Sens. Lett. 2023, 20, 2505405.

8. Li, X.; Du, Z.; Huang, Y.; Tan, Z. A deep translation (GAN) based change detection network for optical and SAR remote sensing images. ISPRS J. Photogramm. Remote Sens. 2021, 179, 14–34.

9. Zhu, J.Y.; Park, T.; Isola, P.; Efros, A.A. Unpaired Image-to-Image Translation Using Cycle-Consistent Adversarial Networks. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; IEEE: New York, NY, USA, 2017.

10. Liu, J.; Gong, M.; Qin, K.; Zhang, P. A Deep Convolutional Coupling Network for Change Detection Based on Heterogeneous Optical and Radar Images. IEEE Trans. Neural Netw. Learn. Syst. 2018, 29, 545–559.

11. Niu, X.; Gong, M.; Zhan, T.; Yang, Y. A Conditional Adversarial Network for Change Detection in Heterogeneous Images. IEEE Geosci. Remote Sens. Lett. 2019, 16, 45–49.

12. Luppino, L.T.; Kampffmeyer, M.; Bianchi, F.M.; Moser, G.; Serpico, S.B.; Jenssen, R.; Anfinsen, S.N. Deep Image Translation with an Affinity-Based Change Prior for Unsupervised Multimodal Change Detection. IEEE Trans. Geosci. Remote Sens. 2022, 60, 4700422.

13. Zhang, C.; Feng, Y.; Hu, L.; Tapete, D.; Pan, L.; Liang, Z.; Cigna, F.; Yue, P. A domain adaptation neural network for change detection with heterogeneous optical and SAR remote sensing images. Int. J. Appl. Earth Obs. Geoinf. 2022, 109, 102769.

14. Liu, Z.; Zhang, J.; Wang, W.; Gu, Y. M2CD: A Unified MultiModal Framework for Optical-SAR Change Detection with Mixture of Experts and Self-Distillation. IEEE Geosci. Remote Sens. Lett. 2025, 22, 4012105.

15. Daudt, R.C.; Le Saux, B.; Boulch, A. Fully convolutional Siamese networks for change detection. In Proceedings of the International Conference on Image Processing, ICIP, Athens, Greece, 7–10 October 2018; IEEE: New York, NY, USA, 2018.

16. Peng, D.; Zhang, Y.; Guan, H. End-to-end change detection for high resolution satellite images using improved UNet++. Remote Sens. 2019, 11, 1382.

17. He, X.; Zhang, S.; Xue, B.; Zhao, T.; Wu, T. Cross-modal change detection flood extraction based on convolutional neural network. Int. J. Appl. Earth Obs. Geoinf. 2023, 117, 103197.

18. Yan, T.; Wan, Z.; Zhang, P.; Cheng, G.; Lu, H. TransY-Net: Learning Fully Transformer Networks for Change Detection of Remote Sensing Images. IEEE Trans. Geosci. Remote Sens. 2023, 61, 4410012.

19. Luppino, L.T.; Bianchi, F.M.; Moser, G.; Anfinsen, S.N. Unsupervised image regression for heterogeneous change detection. IEEE Trans. Geosci. Remote Sens. 2019, 57, 9960–9975.

20. Mignotte, M. A Fractal Projection and Markovian Segmentation-Based Approach for Multimodal Change Detection. IEEE Trans. Geosci. Remote Sens. 2020, 58, 8046–8058.

21. Jadon, S. A survey of loss functions for semantic segmentation. In Proceedings of the 2020 IEEE Conference on Computational Intelligence in Bioinformatics and Computational Biology, CIBCB 2020, Vina del Mar, Chile, 27–29 October 2020; IEEE: New York, NY, USA, 2020.

22. Sudre, C.H.; Li, W.; Vercauteren, T.; Ourselin, S.; Cardoso, M.J. Generalised dice overlap as a deep learning loss function for highly unbalanced segmentations. In Lecture Notes in Computer Science (Including Subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics); Springer: Cham, Switzerland, 2017.

23. Brahim, E.; Amri, E.; Barhoumi, W.; Bouzidi, S. Fusion of UNet and ResNet decisions for change detection using low and high spectral resolution images. Signal Image Video Process. 2024, 18, 695–702.

24. Cummings, S.; Kondmann, L.; Zhu, X.X. Siamese Attention U-Net for Multi-Class Change Detection. In Proceedings of the International Geoscience and Remote Sensing Symposium (IGARSS), Kuala Lumpur, Malaysia, 17–22 July 2022; IEEE: New York, NY, USA, 2022.

25. Pang, L.; Sun, J.; Chi, Y.; Yang, Y.; Zhang, F.; Zhang, L. CD-TransUNet: A Hybrid Transformer Network for the Change Detection of Urban Buildings Using L-Band SAR Images. Sustainability 2022, 14, 9847.

26. Chen, L.C.; Papandreou, G.; Schroff, F.; Adam, H. Rethinking atrous convolution for semantic image segmentation. arXiv 2017, arXiv:1706.05587.

27. Li, K.; Li, Z.; Fang, S. Siamese NestedUNet Networks for Change Detection of High Resolution Satellite Image. In ACM International Conference Proceeding Series; Association for Computing Machinery: New York, NY, USA, 2020.

28. Chen, H.; Wu, C.; Du, B.; Zhang, L.; Wang, L. Change Detection in Multisource VHR Images via Deep Siamese Convolutional Multiple-Layers Recurrent Neural Network. IEEE Trans. Geosci. Remote Sens. 2020, 58, 2848–2864.

| Dataset | Time 1: Data Type/Sensor/Acquisition Time | Time 2: Data Type/Sensor/Acquisition Time | Image Size (pixels) | Spatial Resolution (m) |
|---|---|---|---|---|
| California (USA) | Optical/Landsat-8/8 January 2017 | SAR/Sentinel-1A/18 February 2017 | 2000 × 3500 | ≈15 |
| Gloucester I (UK) | Optical/QuickBird-2/July 2006 | SAR/TerraSAR-X/July 2007 | 2325 × 4135 | 0.65 |
| Gloucester II (UK) | Optical/SPOT/September 1999 | SAR/ERS-1/November 2000 | 1250 × 2600 | ≈25 |

| Models | Precision (Background) | Precision (Change) | Recall (Background) | Recall (Change) | F1-Score (Background) | F1-Score (Change) | IoU (Background) | IoU (Change) | mIoU | KC | OA |
|---|---|---|---|---|---|---|---|---|---|---|---|
| U-Net [23] | 0.954 | 0.923 | 0.994 | 0.611 | 0.974 | 0.736 | 0.948 | 0.582 | 0.765 | 0.710 | 0.952 |
| AttU-Net [24] | 0.962 | 0.854 | 0.986 | 0.687 | 0.974 | 0.761 | 0.949 | 0.615 | 0.782 | 0.736 | 0.953 |
| DeepLabV3 [26] | 0.968 | 0.798 | 0.977 | 0.738 | 0.973 | 0.767 | 0.947 | 0.622 | 0.784 | 0.739 | 0.951 |
| TransU-Net [25] | 0.958 | 0.934 | 0.994 | 0.643 | 0.976 | 0.762 | 0.953 | 0.616 | 0.784 | 0.739 | 0.956 |
| M2CD [14] | 0.962 | 0.946 | 0.995 | 0.667 | 0.978 | 0.782 | 0.958 | 0.643 | 0.800 | 0.762 | 0.961 |
| Siam-NestedU-Net [27] | 0.964 | 0.944 | 0.995 | 0.698 | 0.979 | 0.803 | 0.959 | 0.671 | 0.815 | 0.783 | 0.962 |
| FC-Siam-Diff [15] | 0.978 | 0.814 | 0.977 | 0.822 | 0.977 | 0.818 | 0.956 | 0.692 | 0.824 | 0.795 | 0.960 |
| SiamCRNN [28] | 0.989 | 0.776 | 0.967 | 0.916 | 0.978 | 0.840 | 0.958 | 0.724 | 0.841 | 0.818 | 0.962 |
| DMoE-AttU-Net | 0.987 | 0.818 | 0.975 | 0.896 | 0.981 | 0.855 | 0.963 | 0.747 | 0.855 ± 0.004 | 0.836 ± 0.005 | 0.967 ± 0.002 |
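The scores in the table above follow the standard definitions for binary change maps. As a hedged sketch of how they relate, the function below derives them from a 2 × 2 confusion matrix, taking KC to be Cohen's kappa and "change" as the positive class; the function names and the example pixel counts are hypothetical and are not taken from the paper's experiments.

```python
def binary_change_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Per-class and overall scores from a 2x2 confusion matrix.

    'Change' is the positive class; inputs are pixel counts. Every class is
    assumed to be non-empty (this sketch has no zero-division guards).
    """
    total = tp + fp + fn + tn

    def f1(precision: float, recall: float) -> float:
        return 2 * precision * recall / (precision + recall)

    p_change, r_change = tp / (tp + fp), tp / (tp + fn)
    p_background, r_background = tn / (tn + fn), tn / (tn + fp)
    iou_change = tp / (tp + fp + fn)
    iou_background = tn / (tn + fp + fn)
    oa = (tp + tn) / total
    # Cohen's kappa: agreement beyond what the class marginals give by chance.
    p_chance = ((tp + fp) * (tp + fn) + (tn + fn) * (tn + fp)) / total**2
    return {
        "precision_change": p_change,
        "recall_change": r_change,
        "f1_change": f1(p_change, r_change),
        "precision_background": p_background,
        "recall_background": r_background,
        "f1_background": f1(p_background, r_background),
        "iou_change": iou_change,
        "iou_background": iou_background,
        "miou": (iou_change + iou_background) / 2,
        "kc": (oa - p_chance) / (1 - p_chance),
        "oa": oa,
    }


def iou_from_f1(f1_score: float) -> float:
    """For a single class, IoU = F1 / (2 - F1)."""
    return f1_score / (2 - f1_score)


# Hypothetical counts for illustration only.
scores = binary_change_metrics(tp=90, fp=20, fn=10, tn=880)
for key, value in scores.items():
    print(f"{key}: {value:.3f}")
```

The identity in `iou_from_f1` gives a quick consistency check on the reported rows: for DMoE-AttU-Net, a change-class F1 of 0.855 yields an IoU of about 0.747, and averaging 0.963 and 0.747 gives the reported mIoU of 0.855.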

| Models | Params (M) | FLOPs (G) | Inference Time (ms) |
|---|---|---|---|
| U-Net | 31.0 | 45 | 18 |
| AttU-Net | 33.5 | 48 | 20 |
| DeepLabV3 | 42.3 | 65 | 28 |
| TransU-Net | 11.3 | 55 | 25 |
| M2CD | 7.0 | 38 | 22 |
| Siam-NestedU-Net | 12.0 | 40 | 17 |
| FC-Siam-Diff | 1.3 | 12 | 8 |
| SiamCRNN | 39.6 | 70 | 32 |
| DMoE-AttU-Net | 69.5 | 95 | 38 |
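For readers unfamiliar with how parameter and operation counts like those above are usually obtained, the sketch below counts the weights and multiply-accumulate operations (MACs) of a single 2D convolution. The layer shape and the 256 × 256 output size are assumptions chosen for illustration; this excerpt does not state the input size or whether the FLOPs column counts MACs or individual multiplications and additions.

```python
def conv2d_params(c_in: int, c_out: int, kernel: int, bias: bool = True) -> int:
    """Trainable parameters of a square-kernel 2D convolution."""
    weights = kernel * kernel * c_in * c_out
    return weights + (c_out if bias else 0)


def conv2d_macs(c_in: int, c_out: int, kernel: int, h_out: int, w_out: int) -> int:
    """Multiply-accumulates for one forward pass (bias additions ignored)."""
    return kernel * kernel * c_in * c_out * h_out * w_out


# Hypothetical first encoder layer: 3 input channels, 64 filters, 3x3 kernel,
# producing a 256 x 256 feature map.
params = conv2d_params(c_in=3, c_out=64, kernel=3)
macs = conv2d_macs(c_in=3, c_out=64, kernel=3, h_out=256, w_out=256)

print(f"params: {params} ({params / 1e6:.4f} M)")
print(f"MACs:   {macs} ({macs / 1e9:.3f} G)")
```

Summing such per-layer counts over a network gives totals comparable to the Params (M) and FLOPs (G) columns, up to the counting convention used.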

| Components Enabled (SAR Encoder, Attention Gates, SE Attention, MoE) | IoU (Background) | IoU (Change) | mIoU | KC | OA |
|---|---|---|---|---|---|
| Three of four | 0.935 | 0.604 | 0.769 | 0.720 | 0.941 |
| Three of four | 0.941 | 0.618 | 0.779 | 0.734 | 0.946 |
| Three of four | 0.953 | 0.685 | 0.819 | 0.789 | 0.957 |
| Three of four | 0.946 | 0.647 | 0.797 | 0.758 | 0.951 |
| All four | 0.963 | 0.747 | 0.855 | 0.836 | 0.967 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Khankeshizadeh, S.E.; Mohammadzadeh, A.; Jamali, A.; Jamali, S. A Dual-Modal Mixture-of-Experts Attention U-Net (DMoE-AttU-Net) for Change Detection Using Heterogeneous Optical and SAR Remote Sensing Images. Remote Sens. 2026, 18, 1508. https://doi.org/10.3390/rs18101508
