Effect Analysis of Spectral and Spatial Variations on Attention-Based Cropland Extraction Networks

Highlights

- Spectral and spatial resolutions exhibit clear linear relationships with cropland segmentation accuracy in attention-based models.

- A spectral–spatial coupling model based on Iso-IoU effectively quantifies the trade-off between band number and spatial resolution.

- Spectral information can partially compensate for spatial resolution loss, especially for models with stronger spectral utilization capability.

- The proposed framework provides practical guidance for optimizing input configurations and model selection in agricultural remote sensing applications.
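The first two highlights describe linear IoU trends and an Iso-IoU trade-off between band count and resolution. The sketch below illustrates that reasoning under an assumed additive linear model, IoU ≈ β0 + β_n·n + β_r·r, where n is the band count and r the ground sample distance (GSD) in metres. The model form, the function name `ols_slope_intercept`, and the use of metres rather than a log scale are all illustrative assumptions, not the paper's Iso-IoU formulation. The inputs are the Shanglin REAUnet IoUs from the tables later in this article; the band-count series is taken from the band-count table whose four-band entry (85.78%) matches REAUnet at 0.8 m. Under this model, an Iso-IoU line satisfies β_n·dn + β_r·dr = 0, so its slope dn/dr = −β_r/β_n is the number of extra bands needed to offset each metre of resolution loss.

```python
"""Sketch: linear IoU trends and an Iso-IoU trade-off (assumed additive linear model)."""


def ols_slope_intercept(xs, ys):
    """Ordinary least-squares fit of y = intercept + slope * x (hypothetical helper)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Shanglin, REAUnet: mean IoU (%) vs. band count (presumably at the native 0.8 m)
band_counts = [1, 2, 3, 4]
iou_by_bands = [84.24, 85.00, 85.24, 85.78]

# Shanglin, REAUnet, four bands: IoU (%) vs. ground sample distance (m)
gsd_m = [0.8, 1.6, 3.2]
iou_by_gsd = [85.78, 81.20, 77.47]

beta_n, _ = ols_slope_intercept(band_counts, iou_by_bands)  # IoU gain per added band
beta_r, _ = ols_slope_intercept(gsd_m, iou_by_gsd)  # IoU change per metre of GSD

# Along an Iso-IoU line beta_n * dn + beta_r * dr = 0, hence dn/dr = -beta_r / beta_n
bands_per_metre = -beta_r / beta_n

print(f"beta_n = {beta_n:.3f} %/band, beta_r = {beta_r:.3f} %/m")
print(f"bands needed to offset 1 m of GSD loss: {bands_per_metre:.2f}")
```

Under these assumptions the sketch gives roughly 6.8 extra bands per metre of GSD loss, more than the four bands available, which fits the idea that spectral information only partially compensates for spatial degradation. With only three resolution levels, the fit is indicative at best.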

Abstract

1. Introduction

2. Study Areas and Datasets

3. Models

3.1. BsiNet

3.2. REAUnet

3.3. Implementation Details

4. Experiments

5. Results

5.1. Spectral Variations Experiment Results

5.2. Spatial Variations Experiment Results

5.3. Spectral–Spatial Coupling Experiment Results

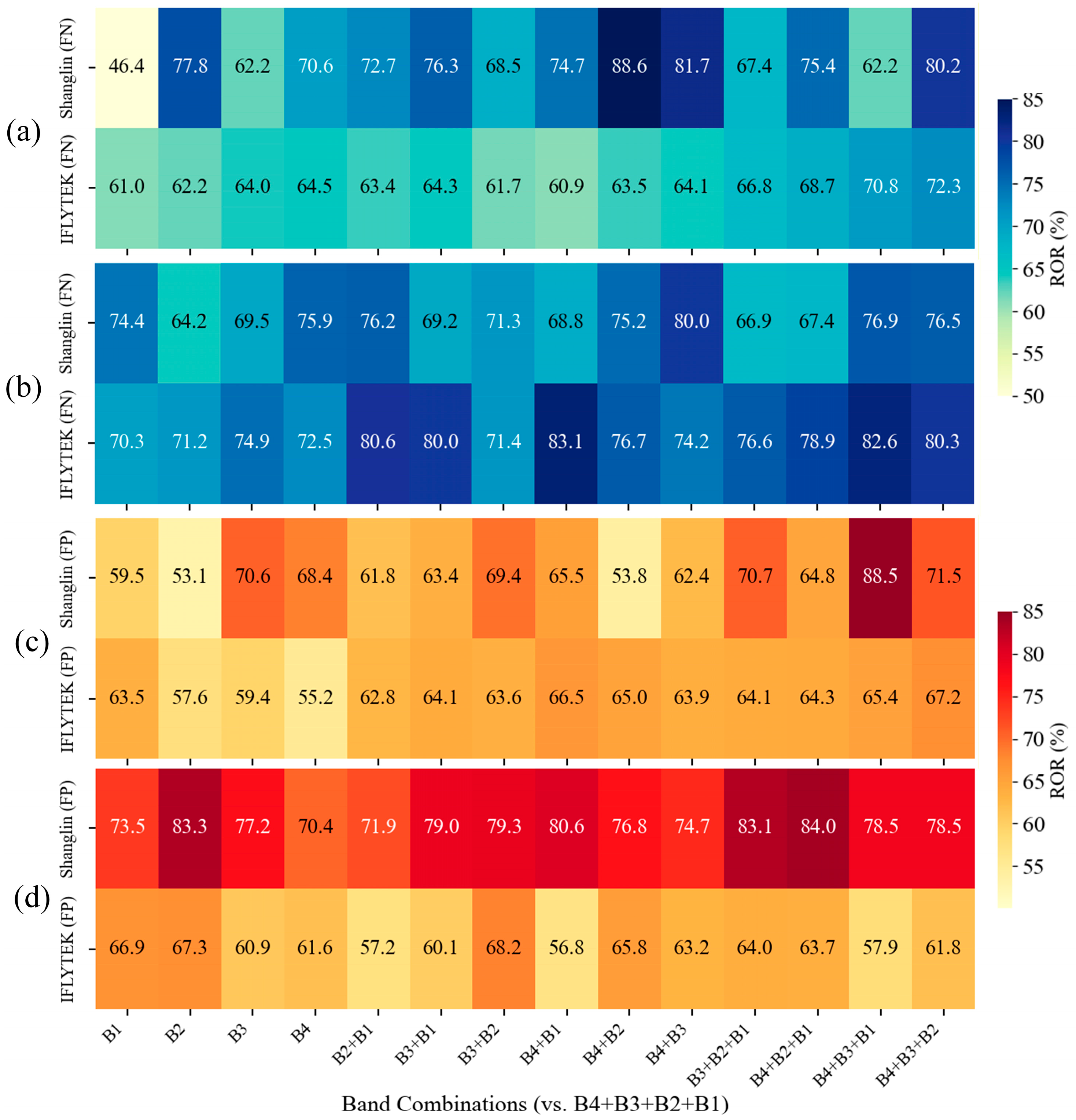

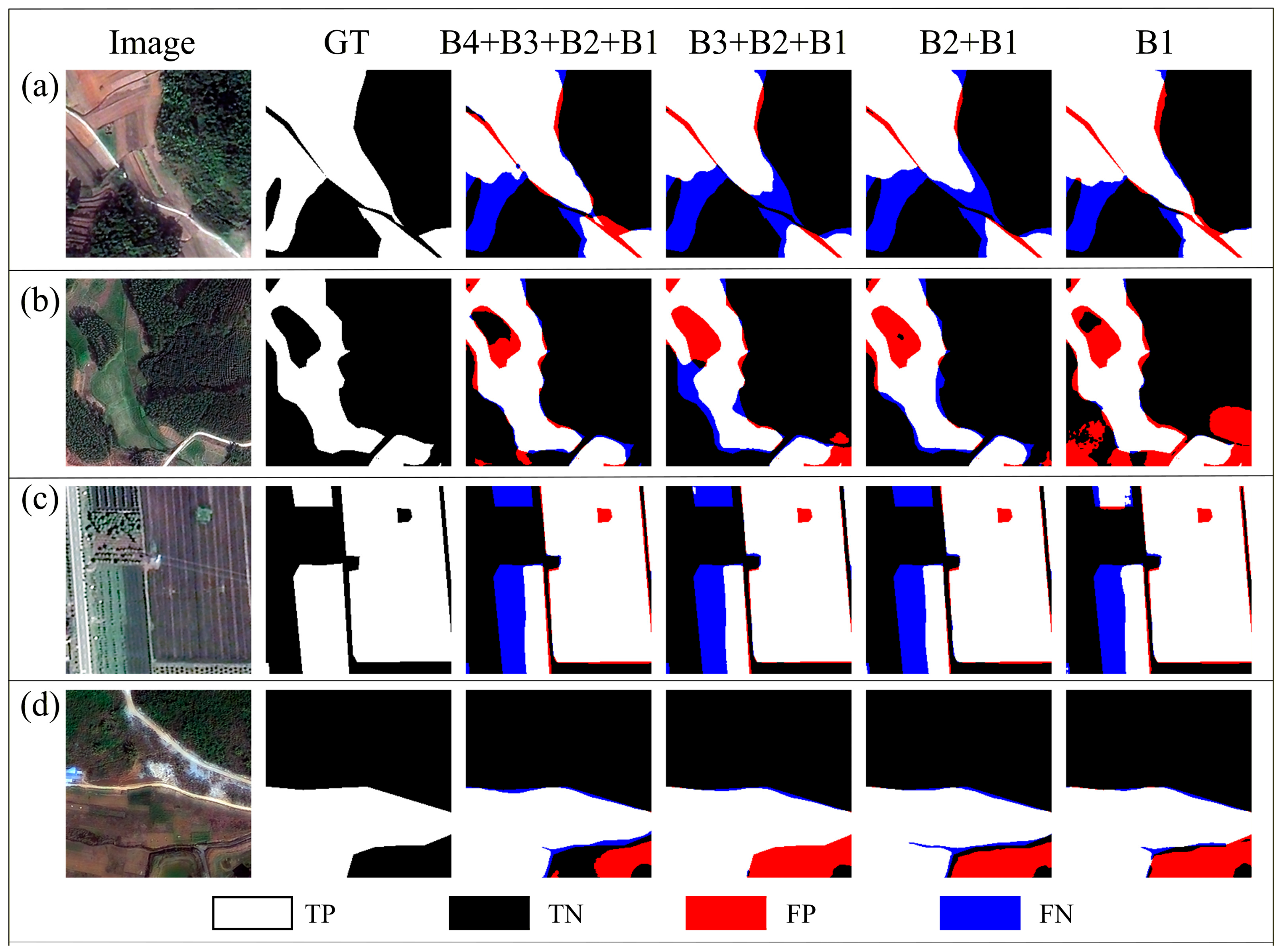

5.4. Error Tendency Experiment Results

6. Discussion

6.1. Spectral–Spatial Trade-Offs and the Role of Global Context

6.2. Error Tendency and Attention Mechanisms

6.3. Limitations

7. Conclusions and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Dataset | Satellite | Resolution | Bands (ID) | Train/Val/Test Count |
|---|---|---|---|---|
| Shanglin | GF-2 | 0.8 m | Blue (1), Green (2), Red (3), NIR (4) | 4214/1054/1060 |
| iFLYTEK | Jilin-1 | 0.75–1.1 m | Blue (1), Green (2), Red (3), NIR (4) | 14613/3654/3736 |
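The split sizes in the dataset table can be checked with simple arithmetic. The sketch below (the function name `split_shares` is hypothetical) computes each subset's share of the total and the training share of the non-test samples. For both datasets the train/validation ratio comes out at about 80:20, which suggests, though the text here does not state it, that the validation set was drawn from the non-test pool at that ratio.

```python
def split_shares(train, val, test):
    """Return each subset's fraction of all samples plus train / (train + val)."""
    total = train + val + test
    return {
        "train": train / total,
        "val": val / total,
        "test": test / total,
        "train_of_train_val": train / (train + val),
    }


# Train/Val/Test counts transcribed from the dataset table
datasets = {
    "Shanglin": (4214, 1054, 1060),
    "iFLYTEK": (14613, 3654, 3736),
}

for name, counts in datasets.items():
    shares = split_shares(*counts)
    print(name, {key: round(value, 3) for key, value in shares.items()})
```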

| Dataset | Band Count | Mean IoU (%) | Min IoU (%) | Max IoU (%) | Range (%) |
|---|---|---|---|---|---|
| iFLYTEK | 1 | 77.33 | 76.24 | 78.44 | 2.20 |
| iFLYTEK | 2 | 78.86 | 78.08 | 79.60 | 1.52 |
| iFLYTEK | 3 | 79.48 | 78.36 | 80.31 | 1.95 |
| iFLYTEK | 4 | 80.36 | 80.36 | 80.36 | 0.00 |
| Shanglin | 1 | 79.22 | 78.64 | 79.97 | 1.33 |
| Shanglin | 2 | 81.04 | 80.27 | 82.54 | 2.27 |
| Shanglin | 3 | 82.00 | 81.16 | 83.13 | 1.97 |
| Shanglin | 4 | 83.05 | 83.05 | 83.05 | 0.00 |

| Dataset | Band Count | Mean IoU (%) | Min IoU (%) | Max IoU (%) | Range (%) |
|---|---|---|---|---|---|
| iFLYTEK | 1 | 84.70 | 84.28 | 85.23 | 0.95 |
| iFLYTEK | 2 | 84.76 | 84.20 | 85.22 | 1.02 |
| iFLYTEK | 3 | 85.73 | 85.60 | 85.88 | 0.28 |
| iFLYTEK | 4 | 86.17 | 86.17 | 86.17 | 0.00 |
| Shanglin | 1 | 84.24 | 83.30 | 84.83 | 1.53 |
| Shanglin | 2 | 85.00 | 84.65 | 85.25 | 0.60 |
| Shanglin | 3 | 85.24 | 85.01 | 85.70 | 0.69 |
| Shanglin | 4 | 85.78 | 85.78 | 85.78 | 0.00 |
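In both band-count tables the Range is 0.00 for four bands. One plausible explanation, assuming each row aggregates IoU over every subset of the four bands (B1–B4) of that size, is that there are C(4, k) = 4, 6, 4 and 1 such subsets, so the four-band row rests on a single run. The sketch below enumerates those subsets and shows how the Mean/Min/Max/Range columns would be derived. The helper names are hypothetical, and the per-combination IoUs themselves are not reported here, so the only value passed in is the single four-band entry from the table.

```python
from itertools import combinations
from statistics import mean

BANDS = ("B1", "B2", "B3", "B4")  # Blue, Green, Red, NIR


def band_subsets(k):
    """All band combinations containing exactly k of the four bands."""
    return list(combinations(BANDS, k))


def summarize(ious):
    """Mean, min, max and range of IoU values (%) for one band count."""
    return {
        "mean": mean(ious),
        "min": min(ious),
        "max": max(ious),
        "range": max(ious) - min(ious),
    }


for k in range(1, 5):
    print(k, len(band_subsets(k)))  # number of combinations with k bands

# With a single four-band run, the range is necessarily zero
print(summarize([85.78]))
```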

| Model | Band Combination | Resolution | ACC (%) | F1 (%) | IoU (%) | Precision (%) | Recall (%) |
|---|---|---|---|---|---|---|---|
| BsiNet | B4 + B3 + B2 + B1 | 0.75–1.1 m | 89.50 | 89.07 | 80.36 | 88.95 | 89.20 |
| BsiNet | B4 + B3 + B2 + B1 | 1.5–2.2 m | 88.66 | 88.57 | 79.50 | 88.51 | 88.65 |
| BsiNet | B4 + B3 + B2 + B1 | 3.0–4.4 m | 85.70 | 85.69 | 74.97 | 85.80 | 85.72 |
| REAUnet | B4 + B3 + B2 + B1 | 0.75–1.1 m | 92.16 | 91.74 | 86.17 | 92.14 | 92.71 |
| REAUnet | B4 + B3 + B2 + B1 | 1.5–2.2 m | 91.68 | 90.31 | 83.81 | 92.02 | 89.74 |
| REAUnet | B4 + B3 + B2 + B1 | 3.0–4.4 m | 90.99 | 88.65 | 80.89 | 90.56 | 87.81 |
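The accuracy tables report ACC, F1, IoU, precision and recall. For a single cropland class, all five follow from a pixel-level confusion matrix, as in the sketch below. This is the standard single-class formulation, not necessarily the paper's: the text here does not say whether the reported values refer to the cropland class alone or are averaged over classes, and several reported F1 values differ from 2·Precision·Recall/(Precision + Recall), so the averaging scheme may differ. The function name and the pixel counts are hypothetical.

```python
def binary_metrics(tp, fp, fn, tn):
    """Pixel-level metrics (%) for the positive (cropland) class."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    iou = tp / (tp + fp + fn)  # intersection over union
    acc = (tp + tn) / (tp + fp + fn + tn)
    metrics = {"ACC": acc, "F1": f1, "IoU": iou, "Precision": precision, "Recall": recall}
    return {name: 100 * value for name, value in metrics.items()}


# Hypothetical pixel counts, for illustration only
print(binary_metrics(tp=800, fp=100, fn=100, tn=1000))
```

For single-class metrics, IoU and F1 are tied by IoU = F1 / (2 − F1), since both are functions of the same TP, FP and FN counts.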

| Model | Band Combination | Resolution | ACC (%) | F1 (%) | IoU (%) | Precision (%) | Recall (%) |
|---|---|---|---|---|---|---|---|
| BsiNet | B4 + B3 + B2 | 0.8 m | 90.88 | 90.78 | 83.13 | 90.89 | 90.69 |
| BsiNet | B4 + B3 + B2 | 1.6 m | 89.90 | 89.75 | 81.42 | 89.68 | 89.83 |
| BsiNet | B4 + B3 + B2 | 3.2 m | 88.35 | 87.37 | 77.74 | 87.24 | 87.50 |
| REAUnet | B4 + B3 + B2 + B1 | 0.8 m | 92.42 | 92.06 | 85.78 | 93.78 | 91.07 |
| REAUnet | B4 + B3 + B2 + B1 | 1.6 m | 91.29 | 89.16 | 81.20 | 89.65 | 89.90 |
| REAUnet | B4 + B3 + B2 + B1 | 3.2 m | 91.12 | 87.00 | 77.47 | 87.56 | 87.36 |
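The two resolution tables can be summarized by the IoU lost when the ground sample distance is coarsened by a factor of four (0.8 m to 3.2 m, or 0.75–1.1 m to 3.0–4.4 m). The sketch below computes absolute (percentage-point) and relative drops from the reported values. The helper name is hypothetical, and the dictionary simply transcribes the IoU column.

```python
# IoU (%) at native, 2x and 4x coarser resolution, transcribed from the tables
IOU_BY_RESOLUTION = {
    ("BsiNet", "iFLYTEK"): (80.36, 79.50, 74.97),
    ("REAUnet", "iFLYTEK"): (86.17, 83.81, 80.89),
    ("BsiNet", "Shanglin"): (83.13, 81.42, 77.74),
    ("REAUnet", "Shanglin"): (85.78, 81.20, 77.47),
}


def iou_drop(values):
    """Absolute (points) and relative (%) IoU loss from native to coarsest resolution."""
    native, coarsest = values[0], values[-1]
    absolute = native - coarsest
    return absolute, 100 * absolute / native


for (model, dataset), values in IOU_BY_RESOLUTION.items():
    absolute, relative = iou_drop(values)
    print(f"{model:8s} {dataset:9s} -{absolute:.2f} points ({relative:.1f}% relative)")
```

On Shanglin, REAUnet loses about 8.3 IoU points compared with about 5.4 for BsiNet, although it starts from a higher baseline. Note that the BsiNet Shanglin rows use three bands (B4 + B3 + B2) rather than four, so the comparison is not strictly like-for-like.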

| Network | Dataset | Resolution | FN ROR (%) | FP ROR (%) |
|---|---|---|---|---|
| BsiNet | iFLYTEK | 1.5–2.2 m | 69.31 | 58.44 |
| BsiNet | iFLYTEK | 3.0–4.4 m | 53.77 | 51.80 |
| BsiNet | Shanglin | 1.6 m | 72.07 | 67.67 |
| BsiNet | Shanglin | 3.2 m | 65.37 | 58.73 |
| REAUnet | iFLYTEK | 1.5–2.2 m | 68.46 | 47.78 |
| REAUnet | iFLYTEK | 3.0–4.4 m | 64.97 | 39.44 |
| REAUnet | Shanglin | 1.6 m | 55.40 | 66.00 |
| REAUnet | Shanglin | 3.2 m | 51.26 | 59.05 |
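The error-tendency table splits errors into false negatives (FN, cropland that was missed) and false positives (FP, non-cropland labelled as cropland). ROR is not defined in this part of the text, so the sketch below does not attempt to reproduce it. It only shows how per-pixel FN and FP maps are obtained from a binary prediction and its reference, which is the input any such ratio would be built from. The function names and the 3 × 3 masks are hypothetical.

```python
def error_masks(pred, ref):
    """Per-pixel FN and FP masks for binary maps (1 = cropland, 0 = other)."""
    fn = [[int(r == 1 and p == 0) for p, r in zip(prow, rrow)] for prow, rrow in zip(pred, ref)]
    fp = [[int(r == 0 and p == 1) for p, r in zip(prow, rrow)] for prow, rrow in zip(pred, ref)]
    return fn, fp


def count(mask):
    """Number of flagged pixels in a 2-D 0/1 mask."""
    return sum(map(sum, mask))


# Hypothetical 3x3 reference and prediction, for illustration only
reference = [[1, 1, 0],
             [1, 0, 0],
             [1, 1, 0]]
prediction = [[1, 0, 0],
              [1, 1, 0],
              [0, 1, 0]]

fn_mask, fp_mask = error_masks(prediction, reference)
print("FN pixels:", count(fn_mask), "FP pixels:", count(fp_mask))
```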

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Cheng, L.; Deng, C.; Zhou, C.; Zhang, Y.; Lu, H.; Li, Z.; Chen, S. Effect Analysis of Spectral and Spatial Variations on Attention-Based Cropland Extraction Networks. Remote Sens. 2026, 18, 1501. https://doi.org/10.3390/rs18101501
