1. Introduction

3D urban models, as a form of earth observation data, are typically composed of triangular meshes and texture maps, providing detailed representations of urban environments and serving as essential data assets in digital economy applications, smart city management, and spatial information services [1,2,3,4,5]. However, during real-world data acquisition and modeling, texture maps inevitably capture sensitive information, including personal identities, confidential facilities, and critical infrastructure details [6]. Unauthorized disclosure of such information may lead to severe privacy violations and even pose threats to public and national security [7,8,9,10]. Consequently, increasingly strict regulations on data sharing have significantly limited the utilization of large-scale 3D urban datasets, highlighting the urgent need for effective texture privacy-preserving techniques.

Texture privacy-preserving aims to eliminate the identifiability of sensitive content through detection and modification while preserving the usability of the original data [11,12,13,14]. Early approaches relied heavily on manual editing, which is inefficient and impractical for large-scale datasets. With the advancement of computer vision, automated methods based on object detection and image inpainting have been widely adopted [15,16,17]. However, directly applying these techniques to 3D urban models remains challenging due to their unique structural characteristics.

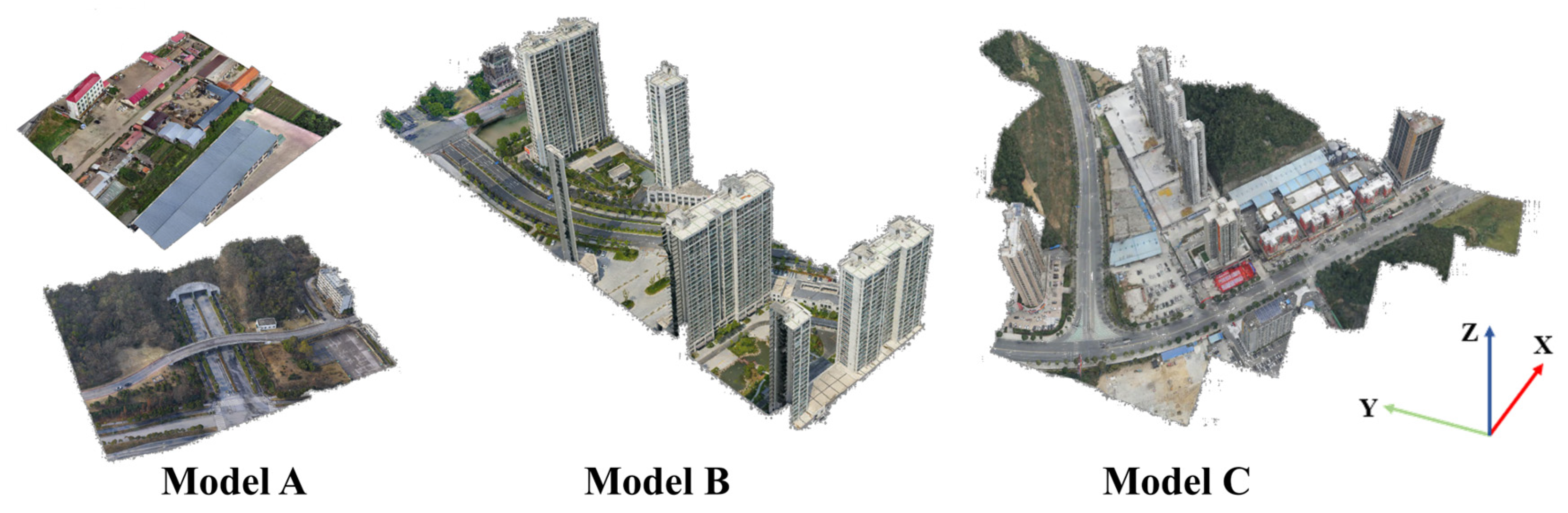

3D urban models generated through oblique photogrammetry consist of massive irregular triangular meshes and are typically organized in hierarchical Level of Detail (LOD) structures, where different levels correspond to varying spatial resolutions and texture granularities. During reconstruction, texture images are partitioned into numerous independent texture blocks, resulting in highly fragmented texture representations [18,19]. As illustrated in Figure 1, while simple geometric surfaces can be mapped to continuous texture domains, textures in 3D urban models exhibit discontinuous spatial distributions. Moreover, as shown in Figure 2, texture characteristics vary significantly across LOD levels: higher levels provide finer details but exhibit more severe fragmentation, whereas lower levels preserve more complete contextual information but suffer from insufficient resolution. Since texture restoration relies heavily on contextual information, these characteristics introduce significant challenges for achieving visually coherent privacy-preserving results.

Recent studies on privacy preservation in 3D urban models can be broadly categorized into three groups: texture camouflage-based methods, multi-view image-based methods, and texture map-based methods.

Texture camouflage-based methods aim to reduce the visual saliency of sensitive targets by blending them into surrounding backgrounds under specific viewpoints or rendering conditions [20,21,22,23,24]. For example, Guo et al. proposed GANmouflage, which learns texture distributions in continuous 3D space using neural implicit functions to achieve viewpoint-dependent visual camouflage [22]. However, these methods primarily operate at the rendering level and do not irreversibly remove or replace sensitive textures at the data level, leaving potential risks of sensitive information recovery.

Multi-view image-based methods perform sensitive content detection and modification on images captured during the data acquisition stage, such as UAV or oblique photogrammetry, prior to 3D reconstruction [25,26,27,28,29]. For instance, Li et al. integrated semantic segmentation networks with generative adversarial networks to automatically detect and repair privacy-sensitive regions in multi-view images [26]. Although these approaches effectively prevent sensitive information from appearing in reconstructed models, they require access to the original imagery and therefore cannot be directly applied to existing 3D urban models, limiting their practical applicability.

Texture map-based methods operate directly on 2D texture maps exported from 3D models and offer strong applicability and data-level security [30,31,32,33]. For instance, Xu et al. combined YOLOv5s with PatchMatch to perform sensitive target detection and texture replacement, demonstrating the feasibility of this approach [32]. However, these methods process fragmented texture patches independently and ignore spatial relationships in the 3D scene, often resulting in noticeable visual discontinuities and inconsistent restoration across LODs.

In summary, texture camouflage-based methods provide limited data-level security, while multi-view image-based methods suffer from restricted applicability. Although texture map-based methods are widely applicable, they struggle to maintain spatial continuity and inter-level consistency due to fragmented texture representations. Therefore, constructing continuous texture representations from fragmented textures and ensuring coherent texture restoration across multiple LODs remains a critical challenge.

To address these issues, this paper proposes a scene-context-aware texture privacy-preserving method for 3D urban models. The proposed method establishes a mapping between 3D scene geometry and continuous texture representations, enabling scene-context-guided and spatially coherent texture reconstruction. Specifically, a fragmentation-aware detection strategy is introduced to select appropriate texture levels, a scene-aware reconstruction method is designed to achieve context-consistent restoration, and a multi-level mapping mechanism is employed to propagate reconstructed textures across different LODs. Experimental results demonstrate that the proposed method effectively removes sensitive content while significantly improving visual continuity compared with existing approaches.

The remainder of this paper is organized as follows. Section 2 presents the proposed method. Section 3 reports experimental results and analysis, followed by discussion and conclusions in Sections 4 and 5, respectively.

2. Materials and Methods

2.1. Overview of the Proposed Method

3D urban models are typically organized as hierarchical structures composed of multiple nodes, where each node stores both geometric information and associated texture maps. Texture privacy-preserving for such models involves two fundamental tasks: sensitive content localization and sensitive content removal. Existing methods generally operate on 2D texture maps within individual nodes, performing detection and inpainting independently. However, this paradigm neglects the spatial relationships of textures in 3D scenes, often resulting in degraded visual continuity and inconsistent outcomes across different LODs.

To overcome these limitations, the proposed method departs from conventional 2D texture-based processing and introduces a scene-aware framework guided by 3D geometric constraints. Specifically, a fragmentation-aware strategy is first employed to identify suitable detection levels, enabling sensitive object detection to be performed under a balanced condition between texture resolution and structural integrity. Based on the detected regions, planar fitting is conducted on the corresponding triangular surfaces to estimate local geometric structures. An orthographic projection coordinate system is then constructed to establish an accurate mapping between 2D texture regions and their corresponding 3D scene surfaces, allowing texture reconstruction to be guided by continuous and spatially coherent contextual information.

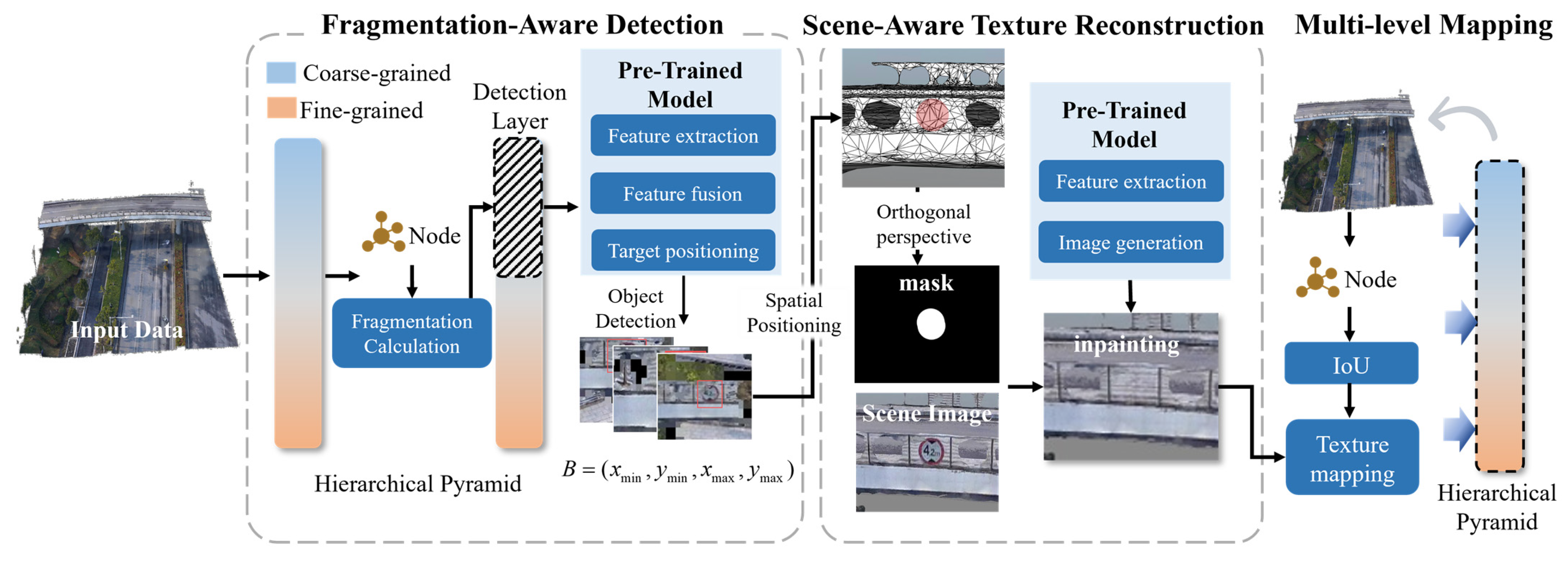

The overall framework of the proposed method is illustrated in Figure 3 and consists of three key components:

- (1) Fragmentation-aware sensitive object detection module, which adaptively selects appropriate texture levels to ensure reliable detection under fragmented texture representations, thereby providing accurate inputs for subsequent processing;

- (2) Scene-aware texture reconstruction module, which leverages 3D geometric constraints to establish continuous contextual representations and enables high-quality texture restoration;

- (3) Multi-level stable mapping module, which propagates the reconstructed textures back to the original 3D urban model, ensuring global consistency across different LODs.

2.2. Fragmentation-Aware Detection Strategy

Sensitive object detection is a prerequisite for texture privacy preservation, aiming to accurately localize privacy-related targets within complex multi-level texture data. In this study, sensitive objects refer to scene elements containing privacy-related information, such as license plates and textual signs. A pre-trained object detection model is adopted as the front-end component to provide initial localization results. The detector is further adapted to the target domain through lightweight fine-tuning on a combination of publicly available datasets and domain-specific samples collected from photogrammetric 3D urban models. These samples are manually annotated with the privacy-related categories relevant to urban scenes.

Existing methods typically perform detection independently on texture maps at each node or level. Such exhaustive processing not only introduces substantial computational overhead, but also leads to unreliable detection results, including missed detections at low-resolution levels and false detections at highly fragmented levels. To address these issues, a fragmentation-aware detection strategy is proposed to adaptively select a subset of appropriate texture levels for detection. By restricting detection to selected levels rather than all nodes, the proposed strategy effectively reduces computational cost while ensuring a balance between texture integrity and spatial resolution.

To quantify texture fragmentation, a fragmentation indicator is defined based on the proportion of non-informative pixels in texture images. During texture unwrapping, unmapped regions are typically filled with uniform black pixels, which can be regarded as non-informative areas. Let the total number of LOD levels in a 3D urban model be denoted as $L$. The model hierarchy is traversed from the root node. For each node $i$ at level $l$, its corresponding texture image is denoted as $T_{l,i}$. The fragmentation degree at level $l$ is defined as:

$$FD_l = \frac{1}{N_l} \sum_{i=1}^{N_l} \frac{N_{\text{black}}(T_{l,i})}{N_{\text{total}}(T_{l,i})}$$

where $N_l$ is the number of nodes at level $l$, $N_{\text{black}}(T_{l,i})$ represents the number of non-informative pixels, and $N_{\text{total}}(T_{l,i})$ denotes the total number of pixels in $T_{l,i}$. A higher value of $FD_l$ indicates a larger proportion of non-informative regions, higher texture fragmentation, and consequently a higher risk of missed detections.
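The fragmentation measure can be illustrated with a minimal sketch. It assumes that each texture is available as rows of RGB tuples, that unmapped texels are exactly pure black, and that the level-wise value is the mean of the per-node ratios; the function names are hypothetical and not taken from the original implementation.

```python
from typing import Sequence, Tuple

Pixel = Tuple[int, int, int]
Texture = Sequence[Sequence[Pixel]]  # rows of RGB pixels


def non_informative_ratio(texture: Texture) -> float:
    """Share of pure-black (assumed unmapped) pixels in one texture image."""
    total = sum(len(row) for row in texture)
    if total == 0:
        return 0.0
    black = sum(1 for row in texture for px in row if tuple(px) == (0, 0, 0))
    return black / total


def fragmentation_degree(level_textures: Sequence[Texture]) -> float:
    """Mean non-informative ratio over all node textures of one level."""
    if not level_textures:
        return 0.0
    ratios = [non_informative_ratio(t) for t in level_textures]
    return sum(ratios) / len(ratios)


BLACK, GREY = (0, 0, 0), (120, 120, 120)
node_a = [[BLACK, GREY], [GREY, GREY]]   # 1 of 4 pixels unmapped
node_b = [[BLACK, BLACK], [GREY, GREY]]  # 2 of 4 pixels unmapped
print(fragmentation_degree([node_a, node_b]))  # 0.375
```

Note that genuinely dark scene content would also be counted as non-informative under this assumption, so a real pipeline may prefer an explicit coverage mask derived from the UV layout.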

Based on the fragmentation measure, detection levels are adaptively determined. Starting from lower levels, levels with $FD_l \le \gamma$ are selected to form the detection level set $L_d$, where $\gamma$ is a predefined fragmentation threshold. This selection process ensures that detection is performed only on texture images with sufficient contextual continuity, while avoiding highly fragmented or low-resolution levels. As a result, redundant detection across all nodes is avoided, further improving computational efficiency.
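As a hedged illustration of the level selection, the sketch below keeps every level whose fragmentation degree does not exceed the threshold. The non-strict comparison, the function name, and the sample values are assumptions made for illustration only.

```python
from typing import Dict, List


def select_detection_levels(fd_by_level: Dict[int, float], gamma: float) -> List[int]:
    """Return the levels whose fragmentation degree is at most gamma, in ascending order."""
    return sorted(level for level, fd in fd_by_level.items() if fd <= gamma)


# Hypothetical per-level fragmentation degrees (not measured values).
fd_by_level = {17: 0.05, 18: 0.08, 19: 0.15, 20: 0.22, 21: 0.35}
print(select_detection_levels(fd_by_level, gamma=0.2))  # [17, 18, 19]
```

A practical variant would additionally exclude levels whose resolution is too low for reliable detection, which this sketch does not model.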

Sensitive object detection is then conducted on texture images corresponding to levels in $L_d$ using a pre-trained YOLOv11 model [34]. The model outputs 2D bounding boxes, confidence scores, and object categories. Valid detections are filtered based on a confidence threshold and collected into the detection set $D$:

$$D = \{\, P_k \mid k = 1, \dots, K \,\}$$

where $P_k$ denotes the pixel coordinates of the $k$-th of $K$ valid sensitive targets.

To establish the connection between 2D detection results and the 3D model, the detected bounding boxes are first mapped to UV space:

$$(u, v) = \left( \frac{x}{W}, \frac{y}{H} \right)$$

where $(x, y)$ is a pixel coordinate of a detected bounding box, $W$ and $H$ denote the width and height of the texture image, respectively, and $(u, v)$ represents the UV coordinates of sensitive targets.
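The pixel-to-UV normalization can be sketched as follows. The sketch assumes a box given as `(x_min, y_min, x_max, y_max)` and a UV origin that coincides with the image origin; many mesh formats place the UV origin at the bottom-left corner, in which case `v` would be `1 - y / height`. The function name is hypothetical.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def bbox_to_uv(bbox: Box, width: int, height: int) -> Box:
    """Normalize a pixel-space box to [0, 1] UV space (no vertical flip assumed)."""
    if width <= 0 or height <= 0:
        raise ValueError("texture dimensions must be positive")
    x_min, y_min, x_max, y_max = bbox
    return (x_min / width, y_min / height, x_max / width, y_max / height)


print(bbox_to_uv((256, 128, 512, 384), width=1024, height=512))
# (0.25, 0.25, 0.5, 0.75)
```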

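Subsequently, triangular faces whose UV regions overlap with the detected regions are identified to obtain candidate sensitive triangles:

$$F_s = \{\, f \mid \mathrm{IoU}(R_f, R_d) > \tau \,\}$$

where $f$ denotes a triangular face in 3D space, $R_f$ is its corresponding UV region, $R_d$ is the UV region of a detected sensitive target, $\mathrm{IoU}(\cdot,\cdot)$ denotes the intersection-over-union metric, and $\tau$ is the overlap threshold.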
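A simplified sketch of the candidate-triangle test is given below. As an assumption, each triangle's UV region is approximated by its axis-aligned bounding box rather than the exact triangle, and all names are hypothetical.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]   # (u_min, v_min, u_max, v_max)
TriangleUV = Sequence[Tuple[float, float]]  # three (u, v) vertices


def uv_bbox(tri: TriangleUV) -> Box:
    """Axis-aligned bounding box of a triangle in UV space."""
    us = [p[0] for p in tri]
    vs = [p[1] for p in tri]
    return (min(us), min(vs), max(us), max(vs))


def box_iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def candidate_triangles(tris: Sequence[TriangleUV], detection: Box, tau: float) -> List[int]:
    """Indices of triangles whose UV box overlaps the detection by more than tau."""
    return [i for i, tri in enumerate(tris) if box_iou(uv_bbox(tri), detection) > tau]


tris = [[(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)], [(2.0, 2.0), (3.0, 2.0), (2.0, 3.0)]]
print(candidate_triangles(tris, (0.0, 0.0, 1.0, 1.0), tau=0.5))  # [0]
```

Because a single triangle is usually much smaller than a detected box, its IoU with that box is small even when the triangle lies fully inside it, so a low threshold would be needed under this simplification.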

Finally, a 3D bounding box is computed for the triangle set, and the detection results are updated to form the final detection set, which includes the pixel coordinates, UV coordinates, and 3D spatial coordinates of the sensitive targets together with their associated triangle sets.

This process produces a unified set of sensitive regions with consistent spatial localization, providing reliable inputs for subsequent scene-aware texture reconstruction.

2.3. Scene-Aware Texture Reconstruction

Scene-aware texture reconstruction is the core component of the proposed method, designed to overcome the limitations of fragmented texture maps by introducing continuous scene-level contextual information. Unlike conventional methods that perform independent inpainting on each texture map, the proposed approach reconstructs each sensitive target only once in a unified scene representation. This strategy avoids redundant processing across multiple texture fragments and significantly reduces computational cost while improving reconstruction consistency.

To generate high-quality scene images for texture restoration, two key requirements must be satisfied: (1) the sensitive region should be fully observable to avoid occlusion, and (2) sufficient spatial resolution should be preserved to prevent the loss of fine-grained texture details during projection.

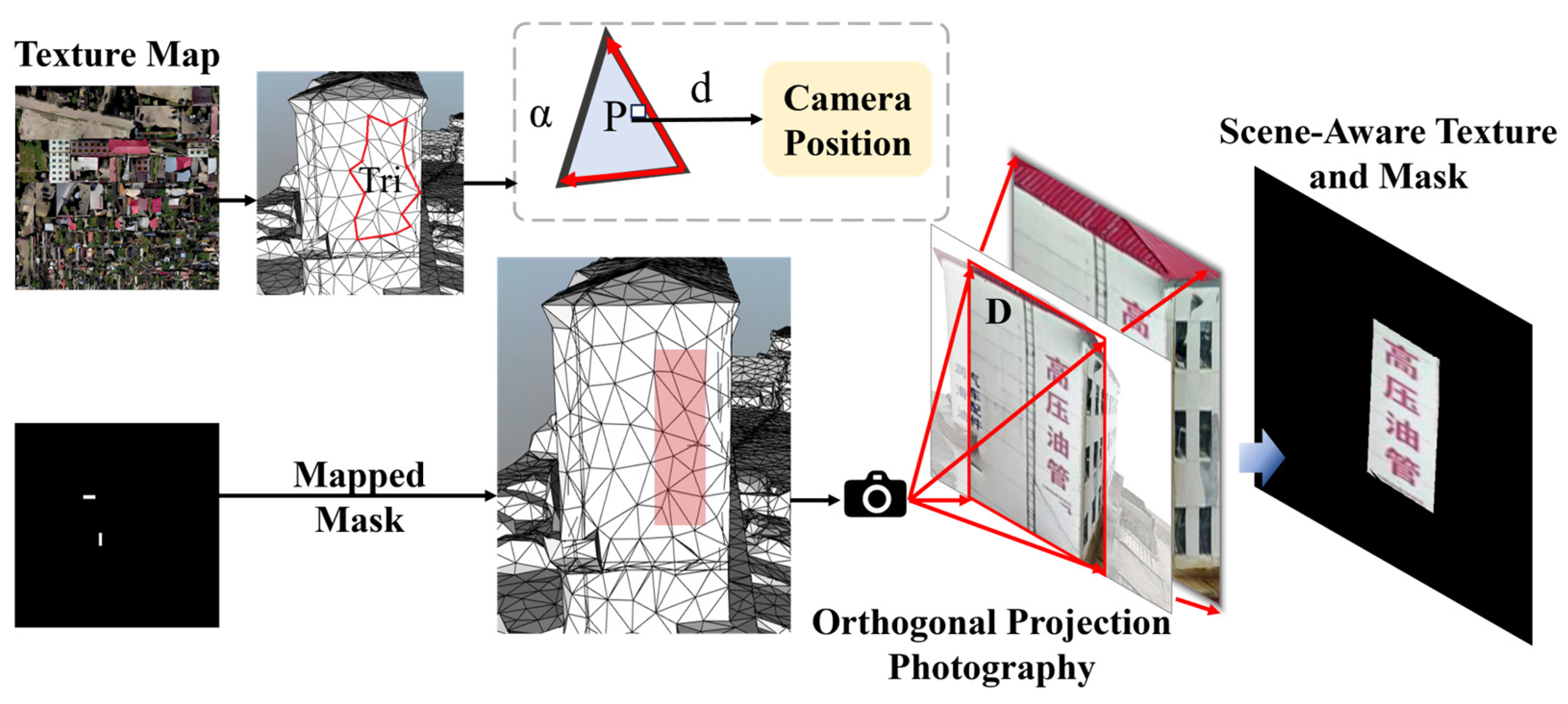

To meet these requirements, an orthographic projection coordinate system is constructed based on the geometric characteristics of the sensitive region, as illustrated in Figure 4. Specifically, while the detection stage is performed on selected levels (Section 2.2), the scene rendering stage preferentially accesses texture data from the highest available level of detail. This design decouples detection efficiency from reconstruction quality, enabling the method to achieve both reduced computational cost and high-resolution texture reconstruction.

Starting from the detection results in Section 2.2, the 2D sensitive object mask is first mapped into 3D space to obtain the corresponding spatial region $R_{3D}$. Based on the extracted sensitive triangle set $F_s$, a local planar approximation is constructed to describe the geometric structure of the region. Specifically, a plane $\Pi$ is fitted to the triangle vertices using a least-squares method, and the unit normal vector $\mathbf{n}$ is derived to represent the local surface orientation.
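The plane fitting step can be sketched in pure Python. The sketch assumes an orthogonal (total) least-squares fit, which is one common variant of the least-squares method and also handles vertical facades; the normal is taken as the direction of smallest variance of the centred vertices. The function name and the repeated-squaring eigenvector scheme are illustrative choices.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def fit_plane(points: Sequence[Vec3]) -> Tuple[Vec3, Vec3]:
    """Return (centroid, unit normal) of the orthogonal least-squares plane."""
    n = len(points)
    if n < 3:
        raise ValueError("need at least three points")
    c = [sum(p[k] for p in points) / n for k in range(3)]
    # 3x3 scatter matrix of the centred points.
    cov = [[sum((p[i] - c[i]) * (p[j] - c[j]) for p in points)
            for j in range(3)] for i in range(3)]
    trace = cov[0][0] + cov[1][1] + cov[2][2]
    if trace == 0.0:
        raise ValueError("degenerate input: all points coincide")
    # trace*I - cov has the smallest-variance direction as dominant eigenvector.
    m = [[(trace if i == j else 0.0) - cov[i][j] for j in range(3)]
         for i in range(3)]
    # Repeated squaring drives m towards a rank-one matrix n * n^T.
    for _ in range(40):
        m = [[sum(m[i][k] * m[k][j] for k in range(3)) for j in range(3)]
             for i in range(3)]
        scale = max(abs(x) for row in m for x in row)
        m = [[x / scale for x in row] for row in m]
    # Every non-zero column is now parallel to the normal; take the largest.
    cols = [[m[i][j] for i in range(3)] for j in range(3)]
    best = max(cols, key=lambda col: sum(x * x for x in col))
    norm = math.sqrt(sum(x * x for x in best))
    return (c[0], c[1], c[2]), (best[0] / norm, best[1] / norm, best[2] / norm)


# Vertices of a vertical facade lying in the plane x + y = 1.
centroid, normal = fit_plane([(1, 0, 0), (0, 1, 0), (1, 0, 2), (0, 1, 2)])
print(centroid, normal)
```

The sign of the returned normal is arbitrary, so a real pipeline would still need to orient it towards the outside of the surface, for example using the triangle winding order. For collinear vertices the plane is not unique and the result is only one of many perpendicular directions.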

Based on this geometric representation, a virtual orthographic camera is defined. The center of the 3D bounding box $B$ of the sensitive region is selected as the reference point $P_0$, ensuring that the camera is oriented toward the target. To obtain a fronto-parallel observation and minimize geometric distortion, the camera is positioned along the normal direction of the fitted plane $\Pi$:

$$C = P_0 + d\,\mathbf{n}$$

where $C$ denotes the camera position and $d$ is an adaptive offset distance along the normal direction. In this study, $d$ is determined proportionally to the spatial extent of the bounding box, defined as:

$$d = k \cdot \operatorname{ext}(B)$$

where $\operatorname{ext}(B)$ is the spatial extent of $B$ and $k$ is an empirical scaling factor, set to 1.5. This design ensures that the sensitive region is fully visible within the camera view while avoiding excessive scale distortion.

Since orthographic projection eliminates perspective effects, the viewing volume is defined as a rectangular window centered at the reference point $P_0$. Its size is determined based on the spatial extent of the sensitive region with an additional margin:

$$W_v = s \cdot w_t, \qquad H_v = s \cdot l_t$$

where $s$ is a scale factor, set to 1.5, and $w_t$ and $l_t$ denote the width and length of the sensitive target within the bounding box $B$, respectively. This guarantees that the projected image fully covers both the target and its surrounding context.
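The camera construction described above can be summarized in a short sketch. It assumes that the spatial extent of the bounding box is measured by its diagonal length, which the text does not specify, and that the unit normal and the in-plane target size are already known; the function name and dictionary keys are hypothetical.

```python
import math
from typing import Dict, Sequence, Tuple


def orthographic_camera(
    bbox_min: Sequence[float],
    bbox_max: Sequence[float],
    normal: Sequence[float],
    target_w: float,
    target_l: float,
    k: float = 1.5,
    s: float = 1.5,
) -> Dict[str, Tuple[float, ...]]:
    """Place a virtual camera on the plane normal and size its viewing window."""
    # Reference point: center of the 3D bounding box.
    reference = tuple((lo + hi) / 2.0 for lo, hi in zip(bbox_min, bbox_max))
    # Assumption: extent of the box = length of its diagonal.
    extent = math.dist(bbox_min, bbox_max)
    d = k * extent
    position = tuple(c + d * n for c, n in zip(reference, normal))
    # Window enlarged by the margin factor s to keep surrounding context.
    window = (s * target_w, s * target_l)
    return {"reference": reference, "position": position, "window": window}


cam = orthographic_camera((0, 0, 0), (3, 4, 0), (0, 0, 1), target_w=3, target_l=4)
print(cam)
```

With both factors at 1.5, a 3 by 4 target yields a 4.5 by 6 window, so a quarter-width band of context surrounds the target on each side.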

Under the constructed orthographic coordinate system, scene rendering is performed to obtain a continuous scene image $I_s$. During this process, texture data from the highest available level of detail are preferentially utilized to ensure that the rendered image preserves maximal spatial resolution and fine-grained texture details.

Compared with directly processing fragmented 2D texture maps, the rendered scene image $I_s$ provides a compact, continuous, and semantically coherent representation of the sensitive region and its surroundings. This significantly reduces redundant and non-informative content while providing stronger contextual constraints for subsequent reconstruction. The rendered scene image is then fed into the pre-trained inpainting network BrushNet [35], released in 2024, to perform texture restoration, yielding the final inpainted result $I_r$.

Overall, this design transforms fragmented texture representations into a unified scene-based representation, enabling efficient and high-quality texture reconstruction while maintaining both spatial continuity and computational efficiency.

2.4. Multi-Level Stable Mapping

Due to inherent differences in texture resolution and fragmentation across multiple levels of a 3D urban model, independently performing texture replacement at each level often leads to noticeable inter-level inconsistencies.

To address this issue, the entire model is formulated as a hierarchical texture pyramid, and a multi-level stable mapping strategy is proposed to ensure globally consistent texture reconstruction across all levels. Unlike conventional approaches that perform independent inpainting at each level, the proposed method conducts texture reconstruction only once at the selected detection levels (Section 2.2) using scene-aware context (Section 2.3), and subsequently propagates the reconstructed results to other levels through a geometry-guided seamless re-projection mechanism. This design effectively avoids redundant inpainting operations and improves computational efficiency.

A key characteristic of the proposed strategy is that texture updating is performed at the pixel level rather than the triangle level, enabling fine-grained and seamless integration of reconstructed textures.

Specifically, for each node, all associated triangular faces are first traversed to identify sensitive triangles based on their spatial relationship with the detected sensitive regions:

$$\mathrm{IoV}(V_f, B) > \delta$$

where $V_f$ represents the spatial coordinates of a triangle, $B$ denotes the sensitive target bounding box, $\mathrm{IoV}(\cdot,\cdot)$ is the volume intersection-over-volume metric, and $\delta$ is the selection threshold. If the condition is satisfied, the triangle is considered to contain sensitive texture information and is selected for texture replacement.

For the selected triangles, their UV coordinates are mapped to pixel coordinates in the scene image using the orthographic camera parameters established in Section 2.3. Based on the corresponding scene mask, pixels that fall within sensitive regions are further identified. Only those pixels satisfying the mask constraint are updated, ensuring that texture replacement is restricted to precise sensitive areas rather than entire triangles. The texture update is then performed as:

$$T(u, v) = \begin{cases} I_r(x, y), & M(x, y) = 1 \\ T(u, v), & \text{otherwise} \end{cases}$$

where $T$ denotes the texture map of the current node, $T(u, v)$ represents the pixel value at UV coordinates $(u, v)$, $M$ is the scene mask, $(x, y)$ is the scene-image pixel corresponding to $(u, v)$, and $I_r(x, y)$ is the corresponding pixel value in the reconstructed scene image.
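The pixel-level update can be sketched as follows. The sketch assumes that the texels covered by the selected triangles have already been rasterized into a list of `(row, col)` positions and that a callable `uv_to_scene` stands in for the orthographic camera projection; both, like the function name, are hypothetical placeholders.

```python
from typing import Callable, Iterable, List, Tuple


def update_texture(
    texture: List[List[int]],
    scene: List[List[int]],
    mask: List[List[int]],
    texels: Iterable[Tuple[int, int]],
    uv_to_scene: Callable[[float, float], Tuple[int, int]],
) -> int:
    """Overwrite masked texels in place; return the number of updated texels."""
    height, width = len(texture), len(texture[0])
    updated = 0
    for row, col in texels:
        # Texel centre in normalized UV coordinates.
        u, v = (col + 0.5) / width, (row + 0.5) / height
        x, y = uv_to_scene(u, v)
        inside = 0 <= y < len(mask) and 0 <= x < len(mask[0])
        # Only texels that project into the sensitive mask are replaced.
        if inside and mask[y][x]:
            texture[row][col] = scene[y][x]
            updated += 1
    return updated


texture = [[0, 0], [0, 0]]
scene = [[11, 12], [21, 22]]          # stand-in for the inpainted scene image
mask = [[1, 0], [0, 1]]               # 1 marks sensitive scene pixels
texels = [(0, 0), (0, 1), (1, 0), (1, 1)]
count = update_texture(texture, scene, mask, texels,
                       lambda u, v: (int(u * 2), int(v * 2)))
print(count, texture)  # 2 [[11, 0], [0, 22]]
```

Texels outside the mask keep their original values, which is what restricts the replacement to the sensitive area rather than to whole triangles.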

Through the joint constraints of triangle selection and pixel-wise mask filtering, the reconstructed textures are seamlessly re-projected onto the original 3D surfaces. Compared with conventional triangle-level replacement strategies, this approach effectively eliminates boundary artifacts and avoids visual discontinuities at region transitions.

Finally, the reconstructed textures are consistently propagated across all relevant levels in the hierarchical structure, ensuring that identical sensitive regions are uniformly updated throughout the model. This multi-level mapping strategy not only maintains global texture coherence but also reduces redundant computations, thereby improving both visual consistency and computational efficiency.

4. Discussion

4.1. Impact of the Fragmentation Degree Threshold on Accuracy and Efficiency

The fragmentation degree threshold directly determines the adaptive partitioning of detection layers. This subsection analyzes the impact of different threshold settings on the overall performance of the proposed method, with the objective of identifying a reasonable parameter range for practical applications. For clarity, the texture fragmentation degree is denoted as FD and its threshold as γ.

To conduct a statistical analysis, texture images corresponding to a total of 13,083 nodes from the experimental dataset were used as inputs for fragmentation degree computation. Outliers were removed according to Equation (16), after which the distribution of valid FD values was statistically analyzed and visualized using box plots. The corresponding results are presented in Table 11 and Figure 13.

In Equation (16), $Q_1$ and $Q_3$ represent the first and third quartiles of the FD distribution, respectively, while $IQR = Q_3 - Q_1$ denotes the interquartile range.
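A quartile-based outlier filter of this kind can be sketched as below. The sketch assumes the conventional box-plot fences, that is, values outside $[Q_1 - 1.5\,IQR,\; Q_3 + 1.5\,IQR]$ are discarded; the multiplier 1.5, the inclusive quantile method, and the sample values are assumptions rather than settings reported in this paper.

```python
import statistics
from typing import List, Sequence


def remove_outliers(values: Sequence[float], k: float = 1.5) -> List[float]:
    """Keep values inside [Q1 - k*IQR, Q3 + k*IQR] (k = 1.5 is assumed)."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if low <= v <= high]


# Hypothetical FD values for the nodes of one level.
fd_values = [0.10, 0.12, 0.11, 0.13, 0.12, 0.90]
print(remove_outliers(fd_values))  # the isolated value 0.90 is dropped
```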

As shown in Figure 13, across different hierarchical levels, the minimum fragmentation degree consistently remains at a relatively low level, whereas both the mean and maximum FD values exhibit a clear increasing trend with increasing hierarchy depth. These statistics indicate that when the mean FD is adopted as the criterion for measuring texture fragmentation at each level, an increasing γ causes progressively more high-level texture nodes to be classified as detection layers and thus involved in sensitive object detection. For example, when γ is set to 0.1, only levels up to level 20 are included in the detection process. When γ reaches 0.4, all hierarchical levels in the experimental dataset are incorporated into the detection scope. From a theoretical perspective, this demonstrates that γ not only directly affects the completeness and accuracy of sensitive object detection but also has a significant impact on the computational cost of the subsequent privacy-preserving process.

To quantitatively validate these effects, sensitive object detection accuracy and efficiency were evaluated under different γ settings on the experimental dataset. The corresponding results are reported in Table 12, Table 13 and Table 14 and illustrated in Figure 14.

As shown in Figure 14, when γ increases from 0.1 to 0.2, a substantial improvement in detection performance can be observed. Specifically, the recall increases from approximately 0.5 to 0.9, while the IoU improves from about 0.6 to 0.9. However, when γ is further increased beyond 0.2, detection accuracy improves only marginally, exhibiting a clear diminishing-returns effect.

In contrast, efficiency is negatively correlated with γ. As the threshold increases, the number of hierarchical levels involved in detection grows accordingly, requiring the object detection network to be executed repeatedly. This results in a continuous increase in total processing time.

In summary, although increasing γ can enhance sensitive object detection accuracy to a certain extent, privacy-preserving efficiency remains a critical factor for practical applicability. Based on the experimental results, a threshold of γ = 0.2 was selected under the experimental conditions, as it provides high detection accuracy while maintaining acceptable efficiency. It should be noted that this threshold is still an empirical choice derived from a limited dataset. Future work will focus on developing more systematic, data-driven adaptive parameter selection mechanisms to put the determination of γ on a more rigorous footing.

4.2. Robustness Under Different Texture Organization Conditions

Although Section 4.1 has validated the effectiveness of the fragmentation degree threshold γ on typical OSGB datasets, in practical applications the texture organization of 3D urban models (such as texture atlas partitioning and fragmentation levels) may vary significantly due to differences in photogrammetric reconstruction workflows and parameter settings. These variations may affect the statistical distribution of texture fragmentation, thereby influencing the applicability of the threshold γ. Therefore, it is necessary to evaluate the adaptability and robustness of the proposed method under different texture organization conditions.

To this end, while keeping the geometric structure and semantic information unchanged, we construct four types of datasets with different texture organization characteristics by adjusting texture-related parameters, as summarized in Table 15.

Based on these datasets, a unified FD threshold of γ = 0.2 is applied for sensitive texture target detection. Recall is adopted as the evaluation metric, and the experimental results are presented in Table 16.

As shown in Table 16, under different texture organization conditions, the use of γ = 0.2 for sensitive target detection achieves stable performance, maintaining consistently high recall values. Specifically, when the texture structure becomes more regular (Datatype = 2), the overall FD distribution shifts downward. Under a fixed threshold, more layers are included in the detection scope, ensuring sufficient coverage of sensitive targets and thus maintaining detection performance. In contrast, when the texture fragmentation level increases significantly (Datatype = 3), the FD distribution shifts upward, resulting in fewer layers being selected for detection, which may slightly reduce coverage. Therefore, in such scenarios that deviate from typical texture organization patterns, it is necessary to appropriately increase the threshold γ according to application requirements to maintain detection effectiveness. When only the texture storage format is changed (Datatype = 4), without affecting the FD distribution, the detection performance remains unchanged. This indicates that the proposed method is insensitive to texture storage formats.

In summary, γ = 0.2 can be regarded as a generally effective empirical parameter with good generalization capability for texture privacy-preserving tasks in different types of 3D urban models. Meanwhile, in practical applications, the parameter can be further adjusted according to specific platform characteristics and texture organization patterns, especially in highly fragmented scenarios, to enhance the adaptability and robustness of the method.

4.3. Challenges and Future Research Directions

Although the proposed privacy-preserving texture processing method for 3D urban models has been validated through both theoretical analysis and experimental evaluation in terms of security and usability, there remains room for further improvement.

From a methodological perspective, the current privacy-preserving strategy still relies on sensitive object detection paradigms operating in 2D texture image space. Such approaches implicitly assume that sensitive objects appear as continuous, complete regions with stable visual characteristics in image space. However, as analyzed in Section 2.1, textures in 3D urban models are often fragmented into multiple spatially discontinuous patches and stored in a distributed manner. This structural fragmentation makes it difficult for conventional convolutional neural networks to perceive sensitive objects holistically, inevitably leading to missed detections or incomplete detection results in practical applications.

Furthermore, certain types of sensitive objects in 3D scenes exhibit strong correlations with geometric structures. These objects often lack stable and distinguishable visual patterns in two-dimensional texture space, and their discriminative characteristics rely more heavily on spatial configuration, geometric relationships, or high-level semantic information. Such requirements exceed the representational capability of traditional object detection networks. This limitation constitutes a fundamental challenge for current methods, particularly in accurately identifying three-dimensional sensitive targets within texture space and supporting geometry–texture collaborative privacy-preserving processing.

Based on the above analysis, a key direction for future research lies in introducing more global scene-aware mechanisms into sensitive object detection and privacy-preserving workflows, thereby reducing reliance on purely two-dimensional texture representations. In addition, from a three-dimensional perspective, exploring collaborative privacy-preserving methods that integrate semantic information, geometric structure, and texture features is expected to further advance privacy protection technologies for 3D geospatial data.

5. Conclusions

Addressing the problem of insufficient visual continuity in repaired textures caused by fragmented texture storage in 3D urban models remains a critical challenge in privacy-preserving processing. By considering the continuity of texture representation during 3D scene rendering, this study proposes a scene-context-aware texture privacy-preserving method. Specifically, a mapping between the 3D scene structure and continuous 2D texture representations is established, allowing fragmented texture patches to be processed under global scene constraints. This design effectively alleviates the visual discontinuity problem commonly observed in existing texture map-based privacy-preserving methods. In addition, an adaptive detection-layer partitioning strategy based on the degree of texture fragmentation is introduced to improve the completeness of sensitive object detection. By dynamically adjusting detection regions according to texture distribution characteristics, the proposed mechanism enhances the reliability of sensitive content identification.

Experimental results demonstrate that the proposed method significantly improves both sensitive object detection accuracy and the visual continuity of repaired textures compared with existing approaches. In particular, the proposed method improves post-preservation texture continuity by approximately 56.9–79.5% compared with representative texture map-based privacy-preserving methods. These results indicate that incorporating scene-aware texture representation can effectively bridge the gap between strong data-level privacy protection and high visual fidelity in 3D urban models.

Overall, the proposed framework provides a new perspective for privacy-preserving processing of large-scale 3D urban models and shows strong potential for practical deployment in smart city data sharing, digital twin systems, and geospatial data governance. Future work will explore the integration of geometric semantics and neural rendering techniques to further improve privacy-preserving performance in complex 3D environments.