1. Introduction

Infrared imaging technology provides unique advantages for environmental perception in complex scenes due to its ability to capture the thermal radiation emitted by objects. It has been widely used in military and civilian fields, such as autonomous driving [1], search and rescue [2], and industrial inspection [3], playing a particularly critical role in remote sensing platforms based on unmanned aerial vehicles (UAVs). As a key method for acquiring surface thermal information, UAV-mounted infrared imaging systems are required to deliver high-precision environmental sensing under strict payload constraints. This necessitates the miniaturization and lightweight design of the imaging hardware. However, traditional infrared systems often rely on complex optical components, which increase the overall size and weight of the system. As a result, there is a significant trade-off between system performance and compactness [4], which presents challenges for applications requiring both portability and high image quality.

Computational imaging provides a promising approach to overcome the physical limitations of conventional imaging systems by adopting a co-design framework that integrates optical encoding with algorithmic decoding. The core concept involves actively modulating light field information at the optical front end (including phase, spectrum, polarization and depth of field), followed by reconstructing multidimensional data through backend algorithms [5,6,7,8,9]. Through this hardware–software co-design paradigm, imaging systems can achieve performance comparable to that of traditional complex optical systems while reducing optical complexity. As a result, computational imaging offers a viable path toward realizing both lightweight and high-performance imaging. With the advancement of micro-nano optical devices, emerging technologies such as metasurfaces and diffractive optical elements (DOEs) have substantially improved the flexibility and efficiency of light field manipulation, promoting the transition of computational imaging from theoretical research to practical engineering applications. Notably, single-lens cameras based on the deep integration of micro-nano optics and computational imaging have already been realized [9,10,11,12,13,14]. These systems feature simple structures and low manufacturing costs, demonstrating strong adaptability in applications with budget constraints and requirements for flexible deployment. By effectively integrating computational imaging methods, it becomes feasible to enhance the overall performance of imaging systems without increasing hardware complexity, thereby offering strong support for the future development of compact optical systems.

However, these computational imaging approaches still encounter new challenges. The sophisticated algorithms they rely on often require substantial computational resources, a limitation that becomes especially critical in dynamic scene perception. As UAVs are increasingly deployed in real-time decision-making tasks, such as disaster monitoring and border patrol, video-rate infrared imaging has become an essential requirement. Existing methods struggle to meet the demands of latency-sensitive video processing applications.

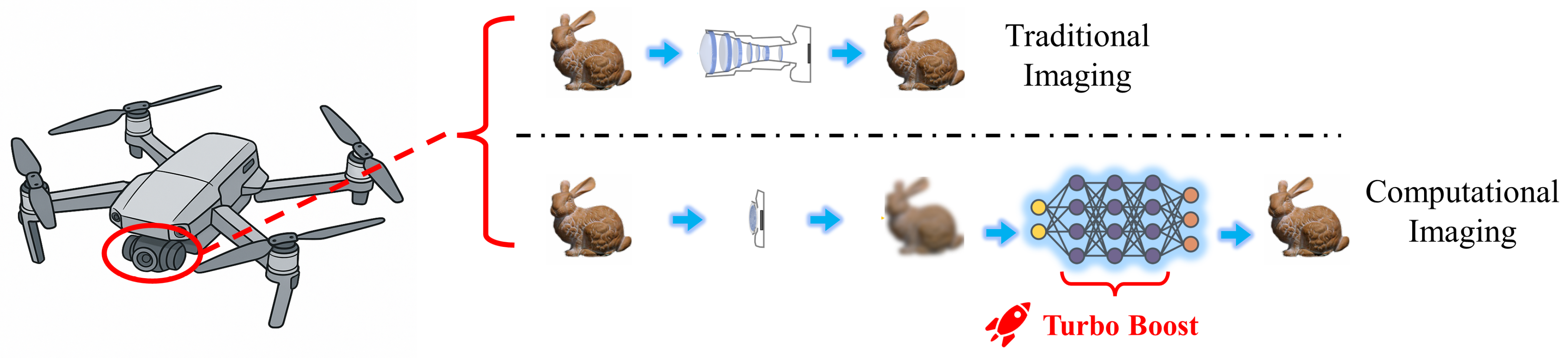

Figure 1 presents a comparative analysis of different imaging methods. Although computational imaging methods offer reduced hardware complexity compared to traditional multi-lens imaging systems, the extra latency can limit their practical applications. To address this challenge, lightweight network design has emerged as a research direction, aiming to reduce computational complexity and accelerate inference through model compression techniques such as model pruning [15,16] and quantization [17,18,19]. Our team previously implemented video-rate infrared imaging using a U-Net-based architecture combined with model pruning [20], and further enhanced performance through sensitivity analysis [21], validating the effectiveness of traditional strategies. Nevertheless, experimental results suggest that model compression techniques are approaching their performance limits, making further improvements in inference speed difficult. Moreover, most current network designs focus primarily on statistical image quality metrics such as peak signal-to-noise ratio (PSNR) and structural similarity index (SSIM), with insufficient attention paid to key optical evaluation parameters like the modulation transfer function (MTF). This leads to a mismatch between algorithmic outputs and the actual requirements of imaging systems.

In recent years, lightweight convolutional neural networks (CNNs), exemplified by MobileNet, have achieved an effective balance between performance and computational efficiency on resource-constrained edge devices. This success is attributed to innovative architectural components such as depthwise separable convolutions, linear bottlenecks and inverted residual blocks. These network designs have been widely adopted in high-level vision tasks such as image classification [22] and object detection [23,24]. Unlike high-level tasks that prioritize semantic understanding, low-level tasks require precise pixel-level reconstruction. Adapting these networks to low-level tasks therefore requires selective modifications based on the core principles of MobileNet. For example, depthwise separable convolution modules have been utilized to construct efficient feature extractors, and multiscale feature fusion mechanisms have been incorporated to improve the recovery of fine image details [25,26,27,28,29,30,31,32]. This modular design philosophy not only inherits the efficiency advantages of lightweight networks, but also provides greater architectural flexibility for complex low-level vision tasks. As a result, it facilitates the practical deployment of high-precision, lightweight image restoration models on mobile and embedded platforms.

Building on these advances, this work proposes Mobile Wavelet Restoration-Net (MWR-Net), a lightweight deep learning framework tailored for real-time image restoration in single-lens infrared computational imaging. The network adopts MobileNetV4 [33] as the encoder backbone and pairs it with a compact decoder based on residual blocks, reducing computational complexity while maintaining restoration accuracy. A modular architecture is designed to flexibly balance performance and efficiency across different stages. To better preserve high-frequency details such as edges, we introduce a wavelet-domain loss function that enhances the network’s sensitivity to fine structures. Furthermore, the MTF is integrated as a complementary metric to evaluate perceptual and optical fidelity, addressing the limitations of conventional pixel-wise metrics. The proposed framework is implemented on the RK3588 edge computing platform, enabling real-time video processing via its NPU acceleration. This study demonstrates the feasibility of combining lightweight network design with frequency-aware constraints for practical, high-performance infrared image restoration under strict resource constraints.

Specifically, the main contributions of this work are summarized as follows:

We propose a lightweight image restoration network based on MobileNet, designed for real-time infrared computational imaging with high efficiency and strong restoration performance;

A wavelet-domain loss function is introduced to explicitly preserve high-frequency details, particularly edges, and the MTF is adopted as a complementary metric to better evaluate perceptual quality;

The network is optimized for edge deployment and demonstrates real-time inference on the RK3588 embedded NPU platform, showing strong potential for practical applications.

2. Related Works

2.1. Image Restoration

Image restoration typically assumes the following degradation model:

$$y = k \ast x + n$$

where $y$ denotes the degraded observation, $k$ denotes the degradation kernel, $x$ represents the clear image, $\ast$ represents convolution, and $n$ is additive noise. Traditional image restoration methods focus on degradation caused by external disturbances, such as motion blur, haze, rain, and sensor noise [34,35,36]. These methods usually rely on physical prior knowledge to model the degradation mechanisms. Although they perform well in specific scenarios, their effectiveness depends heavily on the accuracy of these assumptions, which limits their applicability to more complex degradation patterns in real optical systems.
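The degradation model (a blurred image equals the kernel convolved with the clear image, plus additive noise) can be illustrated with a short NumPy sketch. It is only an illustration under stated assumptions: the Gaussian kernel is a hypothetical stand-in for a real PSF, the convolution is circular (computed with the FFT), and the function names `gaussian_kernel` and `degrade` are not from the paper.

```python
import numpy as np


def gaussian_kernel(size: int = 9, sigma: float = 1.5) -> np.ndarray:
    """Hypothetical stand-in for a PSF: a normalized isotropic Gaussian."""
    ax = np.arange(size) - (size - 1) / 2.0
    g = np.exp(-(ax ** 2) / (2.0 * sigma ** 2))
    k = np.outer(g, g)
    return k / k.sum()  # unit sum, so overall brightness is preserved


def degrade(x: np.ndarray, k: np.ndarray, noise_std: float = 0.01, seed: int = 0) -> np.ndarray:
    """Return k * x + n, using circular convolution and Gaussian noise."""
    h, w = x.shape
    kh, kw = k.shape
    k_pad = np.zeros((h, w), dtype=np.float64)
    k_pad[:kh, :kw] = k
    # Move the kernel center to the origin so the blur does not shift the image.
    k_pad = np.roll(k_pad, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    blurred = np.real(np.fft.ifft2(np.fft.fft2(x) * np.fft.fft2(k_pad)))
    rng = np.random.default_rng(seed)
    n = rng.normal(0.0, noise_std, size=x.shape)
    return blurred + n


# Usage: blur and corrupt a synthetic image containing a bright square.
clear = np.zeros((32, 32))
clear[12:20, 12:20] = 1.0
observed = degrade(clear, gaussian_kernel())
```

A spatially varying PSF, as encountered across the field of view of a real lens, would need a different kernel per image region; this sketch uses a single kernel for brevity.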

In contrast, image restoration in computational imaging faces unique challenges: its degradation stems from the intrinsic physical limitations of the optical system and manifests as resolution loss and aberrations caused by non-ideal point spread functions (PSFs). Early research focused on combining PSF estimation with non-blind deconvolution algorithms. For example, Schuler et al. [37] proposed a PSF estimation method based on a single-lens setup and subsequently used non-blind deconvolution for image restoration. Heide et al. [38] further improved the performance by introducing cross-channel prior information in the restoration process, and Zhan et al. [39] proposed a normal Sinh–Arcsinh model based on noisy image pairs for PSF estimation of a single-lens camera. In addition, Cai et al. [40] introduced a circular segmentation strategy for PSF estimation and achieved high-quality image recovery through non-blind deconvolution.

In recent years, deep learning has introduced a new paradigm for image restoration, enabling data-driven models to learn an end-to-end mapping from coded measurements to high-quality images. Li et al. [41] proposed a PSF-aware deep neural network. Peng et al. [12] introduced a generative adversarial model specifically designed for correcting aberrations in high-resolution, large field-of-view (FoV) lens systems. Gong et al. [42] designed a deep neural network based on orthogonal non-negative matrix decomposition for efficient compensation of optical aberrations in a low-dimensional space.

Although these deep learning-based methods significantly outperform traditional algorithms in quantitative metrics such as PSNR and SSIM, they tend to have higher computational complexity. This raises memory bandwidth bottlenecks and inference latency issues when deployed on embedded platforms, making it difficult to meet the stringent real-time requirements in edge computing scenarios.

2.2. Lightweight Neural Networks

The design of lightweight CNNs emphasizes a balance between efficiency and performance, aiming for deployment in resource-constrained environments. Among classic lightweight designs, the MobileNet series [33,43,44,45] is one of the earliest to adopt depthwise separable convolutions and inverted residual blocks. In a later version, MobileNetV3 integrates neural architecture search, which can automatically find the best connection pattern within a predefined search space. However, inference latency can vary significantly across hardware platforms due to memory constraints. ShuffleNets [46,47] reduce computational costs through group convolutions and channel shuffling, yet the shuffle operation itself can become a bottleneck on highly parallel architectures like GPUs. GhostNets [48,49,50] generate a large number of “ghost” feature maps via inexpensive linear transformations and introduce hardware-friendly attention modules, making them particularly suitable for deployment on common edge devices like ARM CPUs. MobileOne [51] re-evaluated the relationship between parameter count, FLOPs, and model efficiency, identified ReLU as the activation function with the lowest latency, and transformed multi-branch training structures into single-path inference models via structural reparameterization, which makes it well suited to modern mobile devices. However, the reparameterization process can lead to accuracy degradation under quantization, a common trade-off in many lightweight architectures designed for real-world deployment.
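The saving offered by depthwise separable convolutions can be made concrete with a small worked calculation. The sketch below counts parameters and multiply-accumulate operations (MACs) for a standard convolution and for its depthwise separable counterpart; biases are ignored, stride 1 with same padding is assumed, and the layer sizes are illustrative rather than taken from any specific network.

```python
def standard_conv_cost(c_in: int, c_out: int, k: int, h: int, w: int):
    """Parameters and MACs of a k x k standard convolution (no bias, stride 1)."""
    params = k * k * c_in * c_out
    return params, params * h * w


def depthwise_separable_cost(c_in: int, c_out: int, k: int, h: int, w: int):
    """Parameters and MACs of a k x k depthwise conv followed by a 1 x 1 pointwise conv."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 convolution that mixes channels
    params = depthwise + pointwise
    return params, params * h * w


# Illustrative layer: 64 -> 64 channels, 3 x 3 kernel, 60 x 80 feature map.
std_params, std_macs = standard_conv_cost(64, 64, 3, 60, 80)
ds_params, ds_macs = depthwise_separable_cost(64, 64, 3, 60, 80)
ratio = ds_params / std_params  # equals 1/c_out + 1/k^2

print(f"standard:  {std_params} params, {std_macs} MACs")
print(f"separable: {ds_params} params, {ds_macs} MACs")
print(f"cost ratio: {ratio:.4f}")
```

For a 3 × 3 kernel the ratio approaches 1/9 as the number of output channels grows, which is where most of the efficiency of this operator comes from.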

Meanwhile, Vision Transformers (ViTs) and their variants have achieved state-of-the-art performance across various computer vision tasks due to their long-range modeling capabilities. Lightweight ViT variants have also made significant progress toward edge deployment. MobileFormer [52] combines local detail extraction and global semantic understanding through a parallel architecture integrating MobileNet and Transformer components. MobileViT [53] enhances the inverted residual structure of MobileNetV2 by inserting compact MobileViT modules at strategic locations. However, such hybrid designs can introduce operational heterogeneity, such as alternating between convolutional and self-attention operators, which may reduce deployment efficiency on runtimes heavily optimized for CNNs. EfficientFormer [54] simplifies the computation graph by maintaining consistent token dimensions and determines optimal configurations through latency-aware architecture search. EfficientViT [55] reduces mobile inference latency by employing multi-scale linear attention mechanisms. Despite these advances, contemporary edge hardware and compiler stacks tend to offer more mature support for convolutional operators. Consequently, well-optimized convolutional architectures often maintain practical advantages in scenarios demanding low latency and high throughput. It is partly for this reason that we select MobileNetV4 as our backbone, not only owing to its contemporary design but also due to its explicit optimization across diverse hardware platforms, including CPUs, GPUs, DSPs, and NPUs.

2.3. Frequency-Domain Learning

Frequency-domain analysis offers a global perspective on image restoration that complements spatial-domain modeling. In this context, high-frequency components correspond to edges and fine textures, while low-frequency components represent smooth regions and gradually varying regions of an image. Advances in tools such as wavelet transforms and Fourier analysis have significantly contributed to the development of more effective techniques in this area.

In terms of frequency-domain constraints, Jiang et al. [56] proposed the Focal Frequency Loss, inspired by the class imbalance handling mechanism in Focal Loss. This method enhances the reconstruction of critical frequency bands through adaptive frequency weighting. Fuoli et al. [57] introduced a Fourier-domain discriminator, which encourages the generator to align its output with real data in the frequency domain, thereby improving perceptual quality in super-resolution tasks. Korkmaz et al. [58] explored a GAN-based framework that focuses on detail sub-bands while omitting the low–low (LL) sub-band, effectively reducing image artifacts during reconstruction.

Hybrid-domain architectural innovations have also emerged to better exploit frequency characteristics. For instance, DeepRFT [59] proposes a frequency-domain residual convolution framework. Spatial feature maps are transformed into the frequency domain, where 1 × 1 convolutions are applied to decouple high- and low-frequency components for targeted learning. LoFormer [60] introduces a local channel self-attention mechanism in the frequency domain, capturing cross-covariance across frequency bands within localized windows. Another study [61] proposes multi-branch and content-aware modules that dynamically and locally decompose features into independent frequency sub-bands, selectively emphasizing the most informative components for restoration. Building upon this work, MFSNet [62] modulates frequency information in skip connections to improve information flow efficiency.

Despite their theoretical advantages, frequency-domain network modules often face practical deployment challenges on edge devices. For example, complex FFT/IFFT operators require strong hardware support, which is typically not well optimized on NPUs or other embedded accelerators. Therefore, this paper incorporates frequency-domain learning into the loss function rather than the network architecture. This approach aims to enhance the recovery of high-frequency details while maintaining hardware compatibility and ensuring efficient deployment in edge computing environments.

In summary, current deep learning-based restoration methods often face a trade-off between accuracy and efficiency, making them difficult to deploy in real-time edge applications. Moreover, frequency-domain techniques, while effective for detail preservation, are typically computationally intensive and poorly optimized for embedded hardware. These limitations highlight the need for lightweight, hardware-compatible approaches capable of high-quality infrared image restoration, particularly in preserving critical high-frequency information.

3. Proposed Method

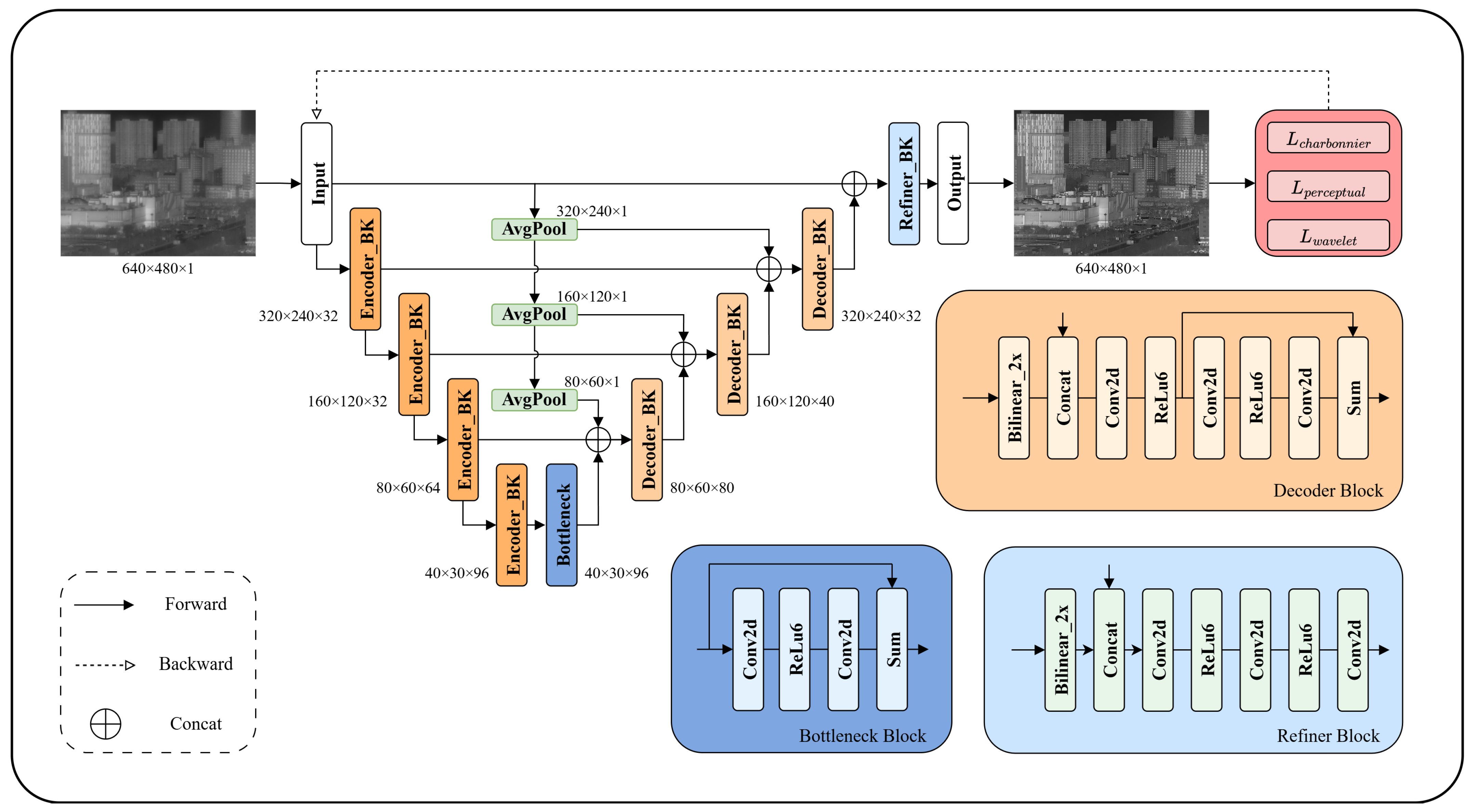

This study proposes a lightweight image restoration framework tailored for edge computing scenarios, based on the MobileNetV4-Small architecture. From the perspective of lightweight design, the encoder employs depthwise separable convolutions as the fundamental operators, reducing computational complexity while maintaining effective feature representation. A four-level feature pyramid is constructed through the optimized distribution of convolutional layers: the first two stages, where the resolution is still high, use fewer layers, and the last two stages use more. This design reduces the memory usage of activation tensors, thereby alleviating potential memory bottlenecks in edge devices. In the decoder, a multiscale feature fusion strategy integrates the output of the preceding block, the pooled original input, and the encoded features from skip connections. The channel dimension is expanded to enhance decoding capability. Furthermore, a detail refiner module is introduced before the final output to enhance texture preservation. The overall network architecture is illustrated in Figure 2. To improve perceptual consistency, we propose a multi-level joint optimization objective. During training, the Charbonnier loss, perceptual loss, and wavelet loss are jointly incorporated to form a cross-domain collaborative optimization mechanism. Experimental results demonstrate that this multi-dimensional loss function significantly enhances high-frequency detail recovery and global structural fidelity, leading to notable improvements in the MTF metric.

3.1. Feature Extraction Encoder

The proposed method adopts MobileNetV4-Small, a general-purpose lightweight architecture developed by Google, as the backbone for feature extraction. This network achieves strong feature representation capabilities and low inference latency across different platforms, so we adopt it as the basis of our design. Its core design philosophy focuses on optimizing operational intensity and balancing computation with memory bandwidth, enabling near-Pareto-optimal performance with carefully designed modules.

To better adapt the architecture to image restoration tasks, we first follow common practices in low-level vision tasks [63,64] by removing the batch normalization (BN) layers from the original architecture. BN tends to normalize away fine-grained details, which can negatively impact feature expressiveness in restoration tasks. Subsequently, we build a multiscale feature pyramid across four resolution levels: 1/2, 1/4, 1/8, and 1/16 of the original image size, with corresponding output channel dimensions set to 32, 32, 64, and 96, respectively. Additionally, a bottleneck layer is added at the 1/16 resolution level to further enhance feature abstraction.

This memory-aware architecture improves the model’s ability to capture both global structures and local details at a lightweight scale. As a result, it is better equipped to address complex degradation patterns commonly encountered in computational image restoration tasks.
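The size of each pyramid level can be checked with a few lines of arithmetic. The sketch below uses the four scales (1/2, 1/4, 1/8, 1/16) and channel widths (32, 32, 64, 96) described for the encoder, and assumes a 480 × 640 input, the resolution used later for model profiling. The helper names are illustrative, and only the output tensor of each level is counted, not intermediate activations.

```python
def pyramid_shapes(height, width, strides=(2, 4, 8, 16), channels=(32, 32, 64, 96)):
    """Return (channels, height, width) of the output feature map at each encoder level."""
    return [(c, height // s, width // s) for s, c in zip(strides, channels)]


def activation_elements(shape):
    """Number of values stored in one feature map."""
    c, h, w = shape
    return c * h * w


shapes = pyramid_shapes(480, 640)
for shape in shapes:
    n = activation_elements(shape)
    # 4 bytes per value in FP32; INT8 storage would need a quarter of this.
    print(shape, n, f"{n * 4 / 2**20:.2f} MiB in FP32")
```

The 1/2-resolution level holds several times more values than any deeper level, which is consistent with placing fewer layers in the early, high-resolution stages to limit activation memory.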

3.2. Decoder

The decoder adopts a lightweight multiscale fusion architecture, where each decoding stage consists of three input branches:

Upsampled feature: the output from the preceding decoder block;

Multi-scale pooled feature: global semantic information extracted by applying multi-scale average pooling to the original input image;

Encoder skip connection: shallow features preserved and fused from corresponding encoder layers to retain fine-grained spatial details.

In implementation, bilinear interpolation is first used to upsample the output of the preceding decoder block to twice its resolution. Meanwhile, multi-scale average pooling is applied to the original input image to extract global features at different levels. Then, all input features from the three sources are concatenated along the channel dimension and mixed through a 1 × 1 convolutional layer. This is followed by a plain residual block composed of two 3 × 3 convolutional layers, which performs feature fusion and detail reconstruction. The numbers of output channels of these blocks at the four decoding stages are set to 80, 64, 40, and 32, respectively. In addition, we use the ReLU6 activation function instead of standard ReLU to improve quantization performance, because it bounds activations to the range [0, 6] during training.
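One way to see why a bounded activation such as ReLU6 can help 8-bit quantization is to compare the quantization step size with and without the bound. The sketch below is a simplified model: it assumes uniform unsigned 8-bit quantization over the range [0, max], which is an illustrative assumption and not necessarily the scheme used by any particular NPU toolchain, and the activation values are hypothetical.

```python
def relu6(v: float) -> float:
    """ReLU6 clamps its input to the interval [0, 6]."""
    return min(max(v, 0.0), 6.0)


def uint8_scale(max_value: float) -> float:
    """Step size of a uniform 8-bit quantizer covering [0, max_value]."""
    return max_value / 255.0


def fake_quantize(v: float, max_value: float) -> float:
    """Quantize to 8 bits over [0, max_value], then map back to a float."""
    scale = uint8_scale(max_value)
    clipped = min(max(v, 0.0), max_value)
    return round(clipped / scale) * scale


# Hypothetical activations; 60.0 plays the role of a rare outlier.
activations = [0.1, 0.5, 2.0, 60.0]

# Plain ReLU: the quantizer range must stretch to cover the outlier.
relu_range = max(activations)
err_relu = abs(fake_quantize(0.1, relu_range) - 0.1)

# ReLU6: the range is fixed at 6, so small values keep finer resolution.
err_relu6 = abs(fake_quantize(relu6(0.1), 6.0) - 0.1)

print(f"step with ReLU range:  {uint8_scale(relu_range):.4f}, error on 0.1: {err_relu:.4f}")
print(f"step with ReLU6 range: {uint8_scale(6.0):.4f}, error on 0.1: {err_relu6:.4f}")
```

In this toy case the outlier makes the quantization step ten times coarser, so the small activation is rounded to zero under plain ReLU but survives under ReLU6.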

In the final decoding stage, an additional detail refinement module consisting of three convolutional layers is introduced at the original image resolution. This module further refines the restored image, enhancing texture quality and overall visual fidelity.

3.3. Loss Function Design

We adopt a composite loss function that integrates three components: the Charbonnier loss, perceptual loss, and wavelet loss. This combination enables the model to achieve higher reconstruction quality at the pixel level, feature level, and frequency domain, respectively.

The Charbonnier loss serves as the primary loss term. As a robust variant of the L1 loss, it is particularly effective in handling noise and outliers. It introduces a small constant $\epsilon$ to smooth the gradient computation, and is defined as:

$$\mathcal{L}_{\text{char}}(x, y) = \sqrt{\left\| x - y \right\|^2 + \epsilon^2}$$

where $x$ and $y$ denote the predicted and ground-truth images, respectively, and $\epsilon$ is a small positive constant.
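A minimal NumPy sketch of the Charbonnier loss follows. It uses the common element-wise form, the mean over pixels of sqrt((x − y)² + ε²), which is one of several equivalent-in-spirit variants. The default `eps=1e-3` is an assumed, commonly used value and is not confirmed by the text.

```python
import numpy as np


def charbonnier_loss(x, y, eps: float = 1e-3) -> float:
    """Mean over pixels of sqrt((x - y)^2 + eps^2); eps is an assumed default."""
    diff = np.asarray(x, dtype=np.float64) - np.asarray(y, dtype=np.float64)
    return float(np.mean(np.sqrt(diff ** 2 + eps ** 2)))


# Usage: for large errors the loss behaves like L1, and for tiny errors it
# stays smooth because eps keeps the square root away from zero.
pred = np.zeros((4, 4))
target = np.full((4, 4), 3.0)
print(charbonnier_loss(pred, target))
print(charbonnier_loss(pred, pred))
```

The smoothing matters mostly near zero error, where the plain L1 loss has a discontinuous gradient.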

In addition, we incorporate a VGG-based perceptual loss to enhance semantic consistency and visual quality. The perceptual loss measures the discrepancy between high-level features of the predicted and ground-truth images in the VGG feature space. It is formulated as:

$$\mathcal{L}_{\text{per}}(x, y) = \sum_{i} \lambda_i \left\| \phi_i(x) - \phi_i(y) \right\|$$

where $\phi_i(\cdot)$ denotes the feature map extracted from the $i$-th layer of the VGG network, and $\lambda_i$ represents the weight assigned to that layer. By adjusting the weights across different layers, this loss guides the model to reconstruct more realistic and visually pleasing results.

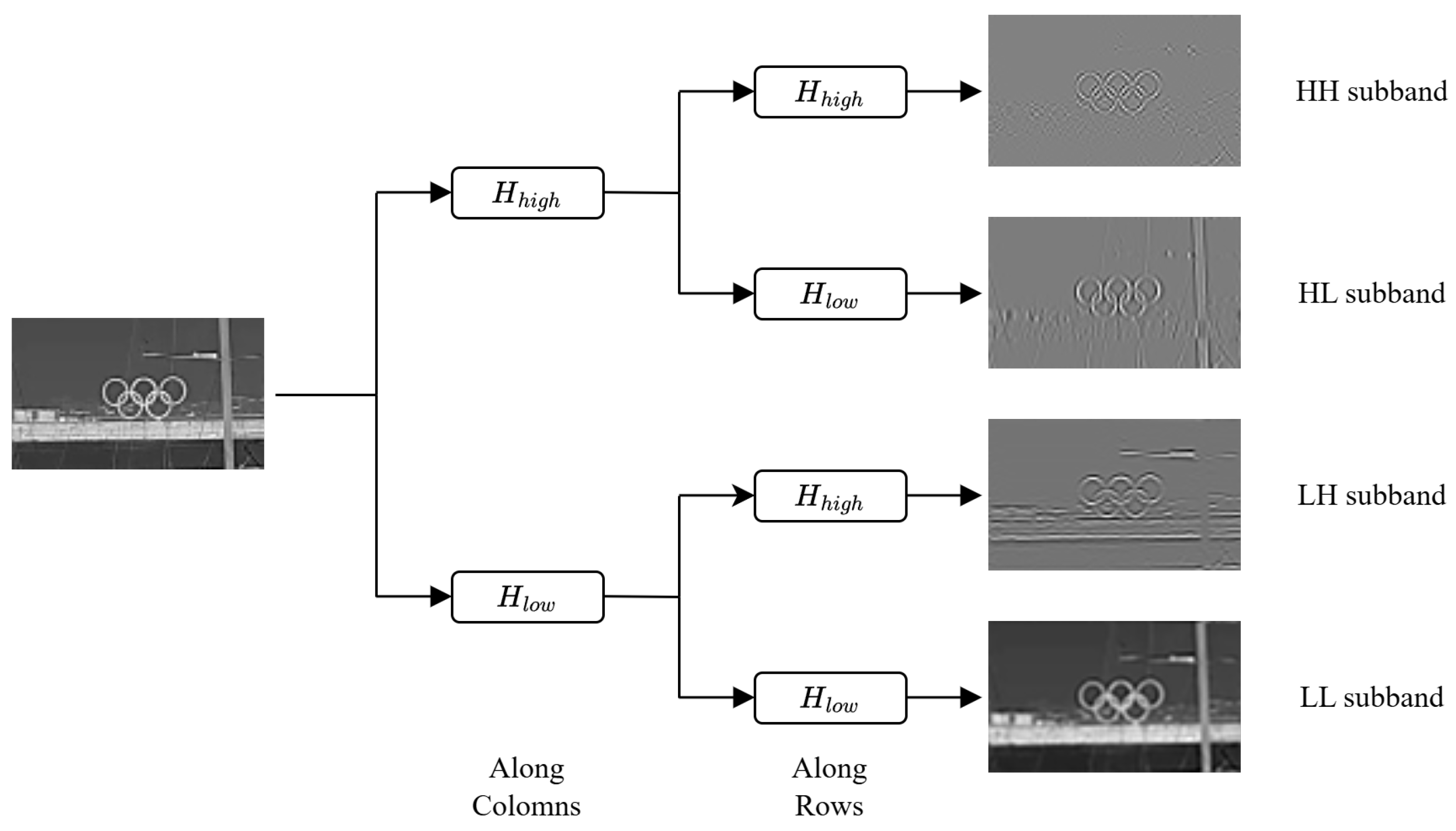

Furthermore, considering that image restoration, especially deblurring, relies heavily on accurate recovery of high-frequency information, we propose a stationary wavelet transform (SWT)-based wavelet loss to explicitly constrain differences in the frequency domain, as shown in Figure 3. Compared to the conventional discrete wavelet transform (DWT), SWT avoids downsampling and preserves spatial resolution consistency across sub-bands.

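Specifically, we apply symlet wavelets to decompose both the predicted and ground-truth images into four sub-bands: low–low (LL), low–high (LH), high–low (HL), and high–high (HH). An L1 distance is then computed for each sub-band:

$$\mathcal{L}_{\text{wav}}(x, y) = \sum_{s \in \{\text{LL}, \text{LH}, \text{HL}, \text{HH}\}} w_s \left\| \mathcal{W}_s(x) - \mathcal{W}_s(y) \right\|_1$$

where $\mathcal{W}_s(\cdot)$ extracts sub-band $s$ of the SWT, $x$ and $y$ denote the predicted and ground-truth images, and $w_s$ denotes the weight assigned to each sub-band. This frequency-aware loss helps the model better recover fine textures and edge structures, which are critical for high-quality image restoration.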
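The structure of such a sub-band loss can be sketched in NumPy. To keep the example short and dependency-free, it substitutes a single-level undecimated Haar transform for the symlet-based SWT described in the text, uses circular boundary handling, and sets all sub-band weights to 1. These are illustrative assumptions, as are the function names, and the LH/HL naming convention varies between libraries.

```python
import numpy as np


def swt2_haar_level1(img) -> dict:
    """Single-level undecimated Haar transform; every sub-band keeps the input size."""
    img = np.asarray(img, dtype=np.float64)
    s = 1.0 / np.sqrt(2.0)

    def low(a, axis):   # low-pass: scaled sum of neighbouring samples
        return s * (a + np.roll(a, -1, axis=axis))

    def high(a, axis):  # high-pass: scaled difference of neighbouring samples
        return s * (a - np.roll(a, -1, axis=axis))

    lo_w, hi_w = low(img, 1), high(img, 1)  # filter along the width first
    return {
        "LL": low(lo_w, 0),
        "LH": high(lo_w, 0),
        "HL": low(hi_w, 0),
        "HH": high(hi_w, 0),
    }


def wavelet_loss(pred, target, weights=None) -> float:
    """Weighted sum over sub-bands of the mean absolute difference."""
    weights = weights or {"LL": 1.0, "LH": 1.0, "HL": 1.0, "HH": 1.0}
    p, t = swt2_haar_level1(pred), swt2_haar_level1(target)
    return float(sum(w * np.mean(np.abs(p[b] - t[b])) for b, w in weights.items()))


# Usage: a sharp edge and a softened edge differ mainly in the detail sub-bands.
sharp = np.zeros((8, 8))
sharp[:, 4:] = 1.0
soft = np.clip((np.arange(8) - 2) / 4.0, 0.0, 1.0) * np.ones((8, 1))
print(wavelet_loss(soft, sharp))
```

Raising the weights of the LH, HL and HH sub-bands relative to LL would make the loss penalize edge and texture errors more strongly, which is the stated purpose of the frequency-aware term.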

3.4. Experimental Setups

Based on a single-lens infrared camera newly designed by our team, which has a focal length of 55 mm and an aperture of f/1.0. The system employs a hybrid refractive–diffractive design: the front surface features an aspheric profile with a diffractive zone, and the rear surface is fully diffractive [

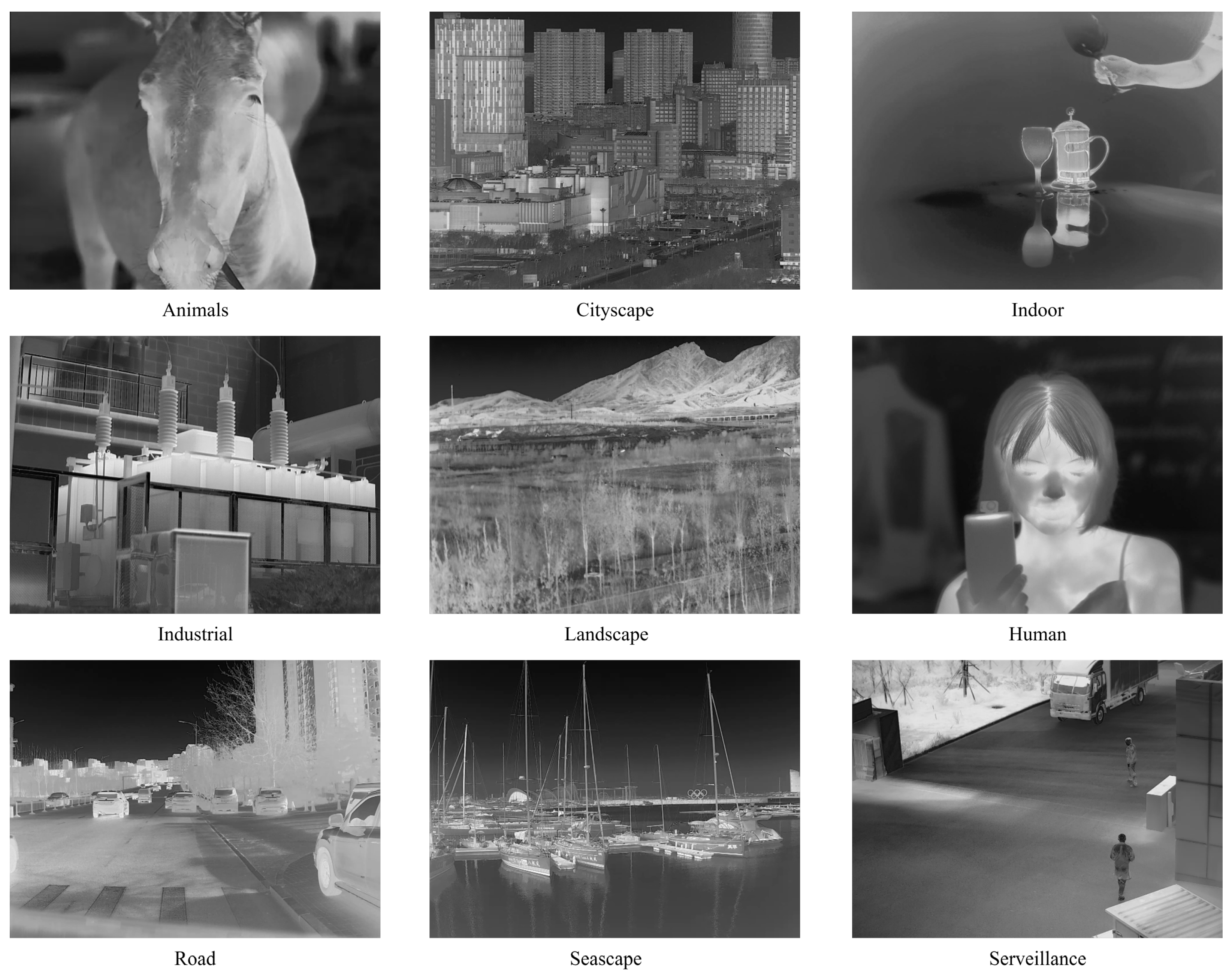

20]. This configuration provides sufficient focusing ability while maintaining a simple, monolithic structure. We successfully calibrated the PSF of the camera across nine different fields of view through a series of detailed experiments. To construct the training dataset, we selected the public dataset provided by Raytron, which contains 7224 images. These images were randomly split into a training set with 6498 images and a test set with 726 images, maintaining a ratio of approximately 9:1. The dataset covers a diverse range of scene types, including seascapes, animals, industrial scenes, cityscapes, landscapes, indoor scenes, portraits, surveillance views, and vehicle-mounted views—totaling nine categories, as shows in

Figure 4.

Using the calibrated PSF data, we simulated the degradation process during imaging through the lens and image signal processor (ISP), thereby generating a high-precision aligned dataset. This dataset serves as a solid foundation for model training, ensuring that the model can learn to recover sharp images from blurred inputs. During training, we employed the Adam optimizer with hyperparameters set to $\beta_1 = 0.9$ and $\beta_2 = 0.999$, using a batch size of 8. The entire training process lasted 400 epochs, with the learning rate gradually decayed from its initial value to a smaller final value. This learning rate adjustment strategy facilitates rapid convergence in the early stages of training while enabling fine-tuning of model parameters in later stages.

To enhance the generalization capability of the model and prevent overfitting, we applied horizontal and vertical flipping as data augmentation techniques during training, along with multiscale Gaussian noise injection. Additionally, we used the Thop toolkit to measure the model’s parameter count at an input resolution of 480 × 640. The inference speed was evaluated using RKNN-Toolkit2 v2.3.0 on the RK3588 platform.
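The augmentation step can be sketched as follows. The sketch assumes that flips are applied identically to the blurred input and its sharp target, that noise is added only to the input, and that "multiscale" noise means drawing the standard deviation from a small set of levels. The noise levels and the function name `augment_pair` are hypothetical.

```python
import numpy as np


def augment_pair(blurred, sharp, rng, noise_stds=(0.0, 0.005, 0.01)):
    """Randomly flip an aligned image pair and add Gaussian noise to the input only."""
    if rng.random() < 0.5:  # horizontal flip, applied to both images
        blurred, sharp = blurred[:, ::-1], sharp[:, ::-1]
    if rng.random() < 0.5:  # vertical flip, applied to both images
        blurred, sharp = blurred[::-1, :], sharp[::-1, :]
    sigma = float(rng.choice(noise_stds))  # assumed set of noise levels
    noisy = blurred + rng.normal(0.0, sigma, size=blurred.shape)
    return noisy, sharp.copy()


# Usage with a fixed seed so the augmentation is reproducible.
rng = np.random.default_rng(0)
blurred = np.linspace(0.0, 1.0, 12).reshape(3, 4)
sharp = blurred.copy()
noisy, target = augment_pair(blurred, sharp, rng)
```

Applying the same flips to both images keeps the pair pixel-aligned, which the restoration losses rely on.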

All quantitative restoration metrics (PSNR and SSIM) reported in the results are averaged over the entire test set (726 images), ensuring statistical reliability. The MTF values are obtained from measurements of slit targets averaged across 9 field-of-view positions. Inference speed is computed as the average of 100 independent runs on the embedded platform after 5 warm-up iterations to eliminate system initialization overhead and stabilize hardware performance.
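The timing protocol (5 warm-up iterations followed by the average of 100 runs) can be expressed as a small generic helper. The sketch below times an arbitrary Python callable with the standard library. The callable `run_once` is a placeholder for whatever performs one inference, and no specific NPU runtime API is assumed.

```python
import time


def benchmark(run_once, warmup: int = 5, runs: int = 100):
    """Return (mean latency in seconds, frames per second) for a callable."""
    for _ in range(warmup):  # warm-up runs are executed but not timed
        run_once()
    start = time.perf_counter()
    for _ in range(runs):
        run_once()
    elapsed = time.perf_counter() - start
    mean_latency = elapsed / runs
    fps = runs / elapsed if elapsed > 0 else float("inf")
    return mean_latency, fps


# Usage with a dummy workload standing in for one model inference.
def dummy_inference():
    return sum(i * i for i in range(1000))


latency, fps = benchmark(dummy_inference)
print(f"mean latency: {latency * 1e3:.3f} ms, throughput: {fps:.1f} FPS")
```

Excluding warm-up iterations matters on embedded accelerators, where the first few calls can include model loading, memory allocation, and clock ramp-up.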

3.5. Evaluation Metrics

We adopted PSNR, SSIM, and MTF as quantitative evaluation metrics. PSNR and SSIM are widely used in image restoration tasks and primarily assess pixel-level fidelity between reconstructed and ground-truth images. However, these metrics do not always correlate well with perceptual quality; in some cases, excessively high PSNR values may even result in visually over-smoothed or blurred outputs. The PSNR is defined as

$$\mathrm{PSNR}(x, y) = 10 \log_{10} \frac{\mathrm{MAX}^2}{\mathrm{MSE}(x, y)}$$

where $x$ and $y$ denote the restored and ground-truth images, $\mathrm{MAX}$ denotes the maximum possible pixel value of the image and $\mathrm{MSE}$ denotes the mean squared error.
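A minimal NumPy sketch of PSNR, computed as ten times the base-10 logarithm of the squared peak value over the mean squared error, is shown below. Images are assumed to be floating-point arrays scaled to [0, 1], so the peak value defaults to 1.0. For 8-bit images, 255 would be passed instead.

```python
import numpy as np


def psnr(x, y, max_val: float = 1.0) -> float:
    """Peak signal-to-noise ratio in dB between a restored and a reference image."""
    x = np.asarray(x, dtype=np.float64)
    y = np.asarray(y, dtype=np.float64)
    mse = np.mean((x - y) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_val ** 2 / mse))


# Usage: a uniform error of 0.1 on a [0, 1] image gives MSE = 0.01.
reference = np.zeros((8, 8))
restored = np.full((8, 8), 0.1)
print(psnr(restored, reference))
```

Because PSNR depends only on the mean squared error, two outputs with the same value can differ markedly in edge sharpness, which is the limitation the MTF metric is meant to complement.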

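The SSIM index evaluates structural similarity by considering luminance, contrast, and structural information, and is defined as

$$\mathrm{SSIM}(x, y) = \frac{(2\mu_x \mu_y + C_1)(2\sigma_{xy} + C_2)}{(\mu_x^2 + \mu_y^2 + C_1)(\sigma_x^2 + \sigma_y^2 + C_2)}$$

where $\mu_x$, $\mu_y$, $\sigma_x$, $\sigma_y$ and $\sigma_{xy}$ represent the local means, standard deviations, and cross-covariance of $x$ and $y$, respectively. $C_1$ and $C_2$ are small constants to stabilize the division.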
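The SSIM expression can be illustrated with a simplified NumPy sketch that evaluates it once over the whole image, using global statistics instead of the sliding local windows used in practice. The constants follow the widely used defaults C1 = (0.01·MAX)² and C2 = (0.03·MAX)². Whether the paper uses these exact values or window settings is not stated, so they are assumptions here.

```python
import numpy as np


def ssim_global(x, y, max_val: float = 1.0, k1: float = 0.01, k2: float = 0.03) -> float:
    """SSIM computed from global image statistics (a simplification of windowed SSIM)."""
    x = np.asarray(x, dtype=np.float64)
    y = np.asarray(y, dtype=np.float64)
    c1, c2 = (k1 * max_val) ** 2, (k2 * max_val) ** 2
    mu_x, mu_y = x.mean(), y.mean()
    var_x, var_y = x.var(), y.var()
    cov_xy = ((x - mu_x) * (y - mu_y)).mean()
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return float(numerator / denominator)


# Usage: an image compared with itself scores 1; its inverted copy scores lower.
ramp = np.linspace(0.0, 1.0, 64).reshape(8, 8)
print(ssim_global(ramp, ramp))
print(ssim_global(ramp, 1.0 - ramp))
```

A windowed implementation averages this quantity over many local patches, which makes the score sensitive to where in the image the structural differences occur.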

To address the limited perceptual relevance of PSNR and SSIM, we introduce MTF at the Nyquist frequency as a supplementary evaluation criterion, which is defined as

$$\mathrm{MTF} = \frac{I_{\max} - I_{\min}}{I_{\max} + I_{\min}}$$

where $I_{\max}$ and $I_{\min}$ represent the maximum and minimum intensity values in the high-contrast edge or texture regions of the image. This formulation quantifies the system’s ability to preserve contrast at the highest resolvable spatial frequency. MTF is a standard metric in optical system evaluation and effectively characterizes the system’s ability to preserve high-frequency information. By incorporating MTF into the evaluation framework, we obtain a more comprehensive and physically meaningful assessment of image restoration performance, particularly in terms of perceptual sharpness and detail recovery.
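The contrast-based MTF value, (I_max − I_min) / (I_max + I_min), can be computed from an intensity profile taken across a slit or bar target. The sketch below is an illustration only: the profiles are made-up numbers, and the averaging helper mirrors the idea of combining several field-of-view positions without reproducing the actual measurement procedure.

```python
def contrast(i_max: float, i_min: float) -> float:
    """Michelson contrast (I_max - I_min) / (I_max + I_min)."""
    return (i_max - i_min) / (i_max + i_min)


def mtf_from_profile(profile) -> float:
    """Contrast of a 1-D intensity profile sampled across a periodic target."""
    return contrast(max(profile), min(profile))


def mean_mtf(profiles) -> float:
    """Average the contrast over several measurement positions."""
    values = [mtf_from_profile(p) for p in profiles]
    return sum(values) / len(values)


# Hypothetical profiles: a well-resolved target and a more blurred one.
sharp_profile = [0.2, 0.8, 0.2, 0.8]
blurred_profile = [0.4, 0.6, 0.4, 0.6]
print(mtf_from_profile(sharp_profile))
print(mtf_from_profile(blurred_profile))
print(mean_mtf([sharp_profile, blurred_profile]))
```

Blur pulls the bright and dark levels toward each other, so the contrast drops even when the mean intensity, and therefore much of the PSNR, is unchanged.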

4. Experiments and Results

4.1. Quantitative Evaluation

To evaluate the effectiveness of the proposed method, we conducted comparative experiments with a previously developed model from our team. The original model achieved strong performance on conventional image quality metrics, yielding a PSNR of 36.97 dB and an SSIM of 0.962. However, it was relatively heavy, with 8.63 million parameters and a computational complexity of 68.12 G MACs, making it unsuitable for real-time or edge deployment. To create a more deployable baseline, we applied structured pruning with a reduction ratio of 50%, resulting in a lightweight variant of the original model. This pruned version reduced the parameter count to 3.92 million and lowered MACs to 28.44 G. Despite these reductions, the pruned model still maintained competitive performance, achieving a PSNR of 36.37 dB and an SSIM of 0.959. Notably, its MTF performance was also well preserved: at 0.5001, it closely matches the original model’s 0.5190. However, visual evaluations and frequency-domain analysis revealed that the pruned baseline still exhibited limitations in reconstructing high-frequency details, indicating room for improvement in perceptual and structural fidelity.

MWR-Net innovatively integrates the lightweight architecture of MobileNetV4 with a wavelet-domain constrained loss function, achieving both high efficiency and strong restoration performance. At FP32 precision, MWR-Net significantly outperforms the baseline model (Table 1). Specifically, it contains only 666.34 K parameters and 6.17 G MACs, making it highly suitable for edge deployment. In terms of image quality, MWR-Net achieves a PSNR of 36.63 dB, which is 0.26 dB higher than that of the baseline, while maintaining an SSIM of 0.962, demonstrating efficient utilization of model parameters. Furthermore, by incorporating the wavelet-domain constrained loss function, the model’s performance is further improved to a PSNR of 37.10 dB and an SSIM of 0.964, delivering the best results among all tested models in terms of MTF. Specifically, the MTF value reaches 0.6903, substantially exceeding the baseline’s 0.5001. This significant improvement highlights MWR-Net’s superior capability in reconstructing high-frequency details, which is critical for high-quality image restoration in real-world applications.

In practical deployment tests (Table 2), MWR-Net demonstrates robust performance under model quantization. Evaluated on the RK3588 chip with 6 TOPS NPU computing power, the results reveal that the baseline model suffers a noticeable performance degradation at INT8 precision. Specifically, its PSNR drops to 35.44 dB, SSIM decreases to 0.951, and the MTF value is 0.5144. In contrast, MWR-Net maintains a PSNR of 35.52 dB and an SSIM of 0.948 after quantization, with the MTF value remaining at 0.6767. Particularly noteworthy is that the inference speed of MWR-Net reaches 42 FPS, representing a 27.3% improvement over the baseline’s 33 FPS. This performance gain is achieved while still preserving superior capability in high-frequency information restoration, underscoring MWR-Net’s strong hardware compatibility and computational efficiency.
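The headline comparisons can be reproduced from the reported figures with a few lines of arithmetic. The sketch below uses only numbers stated in the text (42 versus 33 FPS, 666.34 K versus 3.92 M parameters, 6.17 G versus 28.44 G MACs). The per-frame latency is derived as 1000 / FPS and is not a separately reported measurement.

```python
def relative_gain_percent(new: float, old: float) -> float:
    """Relative improvement of `new` over `old`, in percent."""
    return (new - old) / old * 100.0


# Reported INT8 throughput on the RK3588 NPU.
fps_mwr, fps_baseline = 42.0, 33.0
fps_gain = relative_gain_percent(fps_mwr, fps_baseline)

# Reported FP32 model sizes: pruned baseline versus MWR-Net.
param_ratio = 3.92e6 / 666.34e3
mac_ratio = 28.44 / 6.17

# Derived per-frame latency in milliseconds.
latency_mwr_ms = 1000.0 / fps_mwr
latency_baseline_ms = 1000.0 / fps_baseline

print(f"FPS gain: {fps_gain:.1f}%")
print(f"parameters: {param_ratio:.2f}x fewer, MACs: {mac_ratio:.2f}x fewer")
print(f"latency: {latency_mwr_ms:.1f} ms vs {latency_baseline_ms:.1f} ms per frame")
```

The throughput gain is noticeably smaller than the reduction in MACs, which suggests that factors other than arithmetic cost, such as memory traffic, contribute to the measured latency on this platform.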

Experimental results demonstrate that MWR-Net effectively balances accuracy and efficiency under FP32 precision, and exhibits significant robustness advantages in INT8 quantization scenarios. These findings indicate that the proposed framework offers a more optimal solution for video-rate, resource-constrained deployment. The architectural innovations introduced in this study not only validate the synergistic benefits of lightweight network design and wavelet-domain constraints, but also provide a promising technical pathway for the development of efficient, real-time video processing systems in remote sensing and related applications.

4.2. Visual Evaluation

To compare the image restoration performance of the proposed method visually, we selected representative samples from the test set and conducted reconstruction experiments using three models: the baseline model, MWR-Net (without wavelet loss), and MWR-Net (with wavelet loss). The visual results are presented in

Figure 5,

Figure 6 and

Figure 7. The restoration results indicate that MWR-Net provides better overall reconstruction quality compared to the baseline. Moreover, the incorporation of the wavelet-domain loss function further improves the recovery of high-frequency details—such as edges and textures—showcasing its effectiveness in enhancing perceptual sharpness and structural fidelity.

Specifically, as shown in

Figure 5, MWR-Net demonstrates superior performance in reconstructing fine textures on human subjects, accurately recovering clothing patterns and fabric details. In contrast, the baseline output suffers from noticeable blurring and residual noise. The incorporation of the wavelet-domain loss further enhances edge sharpness and the rendering of fine structures, contributing to improved perceptual quality. Moreover, as illustrated in

Figure 6 and

Figure 7, in scenes containing building structures and large-scale urban environments, the proposed method better preserves structural boundaries and textural features. This yields a clearer representation of high-frequency elements such as signage, window grids, and architectural contours. These improvements matter not only for visual realism but also for supporting downstream vision tasks such as object detection and semantic segmentation.

Figure 8 presents the visual results of MTF testing conducted at a room temperature of 20 °C. The MTF values exceed 0.5 across all FoVs, further confirming MWR-Net’s ability to perceive and recover high-frequency details.

In summary, the proposed method not only achieves superior subjective visual quality but also demonstrates significant improvements in objective evaluation metrics, particularly in edge reconstruction accuracy and fine-detail restoration, while maintaining a higher inference speed.

5. Discussion

5.1. Interpretation of Key Results

This study successfully validates the integration of lightweight architecture with frequency-domain constraints in MWR-Net, achieving superior image restoration quality alongside significantly enhanced efficiency. Compared to the pruned baseline model, MWR-Net reduces parameter count by 83% and computational complexity by 78%, while attaining a 0.26 dB higher PSNR. This “lighter yet stronger” performance demonstrates that employing MobileNetV4 as a natively lightweight backbone provides greater architectural advantages than post hoc pruning of large models, enabling more efficient feature extraction. Crucially, the wavelet-based loss function directly addresses the baseline’s deficiency in reconstructing high-frequency details. MWR-Net’s MTF value of 0.6903 represents a 38% improvement over the baseline’s 0.5001, objectively confirming that frequency-domain constraints effectively guide the model toward sharper image reconstruction. Visual results consistently demonstrate this loss function’s dual benefit of enhancing textures and edges while suppressing artifacts and noise.
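To illustrate how a frequency-domain constraint can penalize lost detail, the following is a minimal NumPy sketch of a wavelet-domain L1 loss. It is not the loss used for MWR-Net: the single-level Haar basis, the L1 norm, the `hf_weight` factor, and the function names `haar_dwt2` and `wavelet_l1_loss` are all assumptions chosen for brevity.

```python
import numpy as np


def haar_dwt2(img):
    """Single-level orthonormal 2D Haar transform (even height and width assumed)."""
    a, b = img[0::2, 0::2], img[0::2, 1::2]
    c, d = img[1::2, 0::2], img[1::2, 1::2]
    low = (a + b + c + d) / 2.0        # local average (low-frequency sub-band)
    row_diff = (a + b - c - d) / 2.0   # change between adjacent rows
    col_diff = (a - b + c - d) / 2.0   # change between adjacent columns
    diag = (a - b - c + d) / 2.0       # diagonal detail
    return low, (row_diff, col_diff, diag)


def wavelet_l1_loss(pred, target, hf_weight=2.0):
    """L1 distance in the Haar domain, with extra weight on detail sub-bands."""
    low_p, highs_p = haar_dwt2(pred)
    low_t, highs_t = haar_dwt2(target)
    loss = np.mean(np.abs(low_p - low_t))
    for hp, ht in zip(highs_p, highs_t):
        loss += hf_weight * np.mean(np.abs(hp - ht))
    return float(loss)


# Hypothetical data: a random "sharp" image and a horizontally blurred copy.
rng = np.random.default_rng(0)
target = rng.random((8, 8))
blurred = (target + np.roll(target, 1, axis=1)) / 2.0

loss_same = wavelet_l1_loss(target, target)   # identical images give zero loss
loss_blur = wavelet_l1_loss(blurred, target)  # blurring gives a positive loss
print(loss_same, loss_blur)
```

Weighting the detail sub-bands more heavily than the low-frequency band is one simple way to steer a network toward sharper edges. In a training pipeline, the same computation would be expressed with differentiable tensor operations.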

5.2. Comparison with Lightweight Design Strategies

Our comparative analysis reveals distinctive advantages and limitations of different lightweight design approaches. The pruned baseline model represents a common strategy of compressing complex models such as UNet. While metrically competitive, it exhibits inherent limitations. Its architecture remains suboptimal because pruning cannot fundamentally alter inefficient computational graphs. This structural deficiency manifests in pronounced quantization vulnerability, with a 0.93 dB PSNR drop under INT8 quantization, indicating sensitive weight distributions. Furthermore, because its original design lacks high-frequency optimization, it produces perceptually smooth but detail-deficient outputs. In contrast, MWR-Net embodies a “natively lightweight” philosophy by directly incorporating MobileNetV4, which is architecturally optimized for mobile deployment. This foundational difference enables superior quantization robustness and measurable inference speed advantages, demonstrating that intrinsic lightweight design outperforms post hoc compression for edge deployment scenarios.
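The link between weight distributions and quantization sensitivity can be illustrated with a toy example. The sketch below applies symmetric per-tensor INT8 quantization to two synthetic weight vectors. The quantizer, the Gaussian weights, and the single outlier value are all hypothetical; they do not reproduce the RK3588 toolchain or the weights of either model.

```python
import numpy as np


def quantize_int8(w):
    """Symmetric per-tensor INT8 quantization; returns the de-quantized weights."""
    scale = np.max(np.abs(w)) / 127.0            # one scale for the whole tensor
    q = np.clip(np.round(w / scale), -127, 127).astype(np.int8)
    return q.astype(np.float32) * scale


rng = np.random.default_rng(0)
compact = rng.normal(0.0, 0.05, size=10_000).astype(np.float32)

with_outlier = compact.copy()
with_outlier[0] = 5.0   # a single hypothetical outlier stretches the range

# Mean absolute rounding error introduced by quantization in each case.
err_compact = np.mean(np.abs(quantize_int8(compact) - compact))
err_outlier = np.mean(np.abs(quantize_int8(with_outlier) - with_outlier))
print(f"compact: {err_compact:.5f}  with outlier: {err_outlier:.5f}")
```

Because one scale factor must cover the entire range, a single large weight coarsens the grid for every other weight. This is one plausible mechanism behind the sensitivity described above, not a measured property of the baseline.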

5.3. Limitations and Further Analysis

Despite its advantages, MWR-Net’s performance boundaries reflect inherent trade-offs in lightweight design. The compact architecture inevitably constrains representational capacity, particularly in extremely low signal-to-noise ratio scenarios or under exceptionally complex noise patterns, where larger models would maintain superiority. This is a deliberate trade-off of generalization capability for efficiency. Additionally, the method’s effectiveness remains contingent on accurate PSF modeling: imperfect PSF estimation leads to inferior restoration and limits plug-and-play adaptability across diverse imaging systems. The current empirical selection of general-purpose wavelet bases presents another limitation, as these may not optimally represent infrared spectral characteristics, thereby constraining the full potential of frequency-domain optimization.

5.4. Future Work Directions

Building on these insights, future research will pursue three directions to overcome current limitations. First, we will develop learnable PSF estimation modules to integrate optical characterization with image restoration within an end-to-end optimized framework, enhancing adaptability to varying imaging systems while reducing dependency on specialized hardware calibration. Second, we will investigate task-driven wavelet learning mechanisms to adaptively generate optimal wavelet bases specific to infrared image characteristics, transforming frequency-domain constraints from empirically designed to data-driven components for improved effectiveness. Third, we intend to develop a visible-light image restoration variant of MWR-Net by adapting the network architecture to account for the intrinsic differences between infrared and visible imaging modalities. This includes refining feature representation modules and reconstruction mechanisms to better accommodate the distinct characteristics of visible images. Such structural adaptation is expected to improve the model’s flexibility and broaden its applicability in different imaging scenarios. These directions aim to advance both the theoretical foundation and practical applicability of lightweight computational imaging systems.

6. Conclusions

This study addresses the trade-off between system compactness and performance, as well as the challenge of restoring high-frequency details in single-lens infrared imaging systems. We propose a lightweight, end-to-end image restoration network, MWR-Net, which integrates MobileNetV4 as the encoder backbone and incorporates a wavelet-domain loss function for frequency-domain constraints.

Experimental results show that MWR-Net maintains reconstruction performance despite substantial reductions in both parameter count and computational cost, successfully overcoming the conventional trade-off between accuracy and efficiency in lightweight model design. The introduction of the wavelet-based loss function and the MTF metric further reveals that the network performs well in the frequency domain, leading to more detailed image output. This approach achieves high scores in both traditional objective evaluation indicators and subjective visual quality.

MWR-Net proves to be highly suitable for video-level signal processing. It is well equipped to handle high-throughput data streams while maintaining performance in resource-limited environments. Deployment on embedded platforms confirms its real-time inference capability, making it a practical choice for time-critical applications like UAV-based remote sensing.

Overall, this study highlights the value of co-optimizing lightweight architectures with frequency-domain guidance, offering a promising direction for future real-time infrared computational imaging systems. Future work will focus on enhancing its adaptability to extreme degradation scenarios and on expanding its applicability in real-world imaging tasks.