1. Introduction

In recent years, with the continuous development of internet technology and big data, people’s functional demands have evolved from merely detecting past events to real-time monitoring and, further, to predicting future occurrences [1,2,3,4,5]. By analyzing vast amounts of data, it is possible to estimate the likelihood of events occurring, helping decision makers formulate practical plans and avoid one-sidedness and errors in decision making. When experiments or research are expensive, predictive models can support simulation experiments and thereby reduce costs. For example, in the machinery industry, accurate temperature prediction helps ensure product quality, production efficiency, and safety. In ecology, sea surface temperature (SST) plays a crucial role in the energy balance at the Earth’s surface and in the exchange of energy, momentum, and moisture between the ocean and atmosphere [6]. Variations in SST influence biological processes such as the distribution and reproduction of marine life and therefore have significant impacts on marine ecosystems [7]. In addition, in some settings, such as high-precision experiments, phenomena or measured values change extremely quickly, so a prediction method must be accurate not only in the values it predicts but also at specific points in time [8,9,10].

The core of prediction lies in identifying patterns in time series data that are closely tied to time [11,12]. Typically, data points that are closer in time have a greater impact on the results. However, the patterns within time series data are not fixed and can vary significantly across periods [13,14,15]. Additionally, some entities change slowly, such as geological structures, whose evolution takes a long time to capture. If such an entity undergoes the same change twice, the change in a given property value may be small in both cases, while the time span of the change may differ substantially. For example, a mountain might take 10 years to lose 1 cm of height the first time and 20 years to lose another 1 cm the second time; the two changes are identical in magnitude, but the rate of change has halved. Incorporating time can therefore amplify subtle changes and capture more precise patterns. These considerations highlight the critical and indispensable role of time in multivariate time series prediction tasks.

Traditional prediction algorithms include the Autoregressive Integrated Moving Average (ARIMA) [16], Logistic Regression, and k-Nearest Neighbors (k-NN) [17]. These algorithms are suitable for simple relationships and small datasets but are poorly suited to prediction tasks in the big data era. With the rapid development of deep learning, time series forecasting models [18,19,20,21] have shown an excellent ability to handle nonlinear, high-frequency, multidimensional, and discontinuous data; Recurrent Neural Networks (RNN), for example, are widely used to model time series data [22,23,24]. At each time step, an RNN combines the current input with the hidden state from the previous step, which makes it sensitive to sequence order and allows it to retain earlier information when interpreting the current input. However, RNNs struggle to learn long-distance dependencies. The Gated Recurrent Unit (GRU) builds on the RNN and is likewise a chain of repeating neural network modules [25]. GRU adds an update gate and a reset gate; the update gate plays the combined role of the forget and input gates in LSTM, controlling how information is updated and passed along, which alleviates the long-term dependency problem. Long Short-Term Memory (LSTM) is similar to GRU but has three gates: a forget gate, an input gate, and an output gate [26]. It passes information through both a cell state and a hidden state, which gives finer control over what is forgotten and what is remembered and thus over the transmission of long-term information. Bidirectional Long Short-Term Memory (BiLSTM) consists of a forward LSTM and a backward LSTM, so that the sequence is processed in both directions rather than only from front to back.

The most commonly used methods for time series prediction currently include Recurrent Neural Network (RNN) architectures and their variants, particularly LSTM combined with other models. These approaches rely on the sequential order of time series data for prediction. The bidirectional LSTM-CRF model combines a bidirectional LSTM network with a Conditional Random Field (CRF) layer, using the LSTM to capture contextual dependencies in sequence data and the CRF to model dependencies between output labels, thereby improving robustness in sequence prediction tasks [12,27]. Mohammadi et al. combined a many-to-many LSTM (MTM-LSTM) with a Multilayer Perceptron (MLP), using the MTM-LSTM to approximate the target at each step and the MLP to integrate these approximations [28]. Wang et al. proposed an LSTM-based Graph Attention Network (GAT) method for vehicle trajectory prediction, in which the LSTM encodes vehicle trajectory information and the GAT represents vehicle interactions [29]. Ishida et al. integrated one-dimensional Convolutional Neural Networks (CNN) with LSTM to reduce input data size and improve computational efficiency and accuracy in hourly rainfall-runoff modeling [30]. Wanigasekara et al. applied Fast Multivariate Ensemble Empirical Mode Decomposition Convolutional LSTM (Fast MEEMD ConvLSTM) to sea surface temperature prediction [31], using the Fast MEEMD method to decompose the SST spatiotemporal dataset [32,33]. Xu et al. proposed an approach for retrieving atmospheric temperature profiles using a Tree-structured Parzen Estimator (TPE) and a multi-layer perceptron (MLP) [34]. The Long- and Short-term Time-series Network (LSTNet) uses a CNN to capture short-term patterns and an LSTM or GRU to retain longer-term dependencies [35]. These methods combine LSTM with other architectures, and while such recurrent designs are naturally interpretable for sequential data such as language or speech, they offer poor interpretability for temporal data, where the dependencies between time steps are crucial and the degree of reliance on variables differs from one time step to the next. Some scholars have addressed this issue by introducing attention mechanisms. For instance, AT-LSTM assigns different weights to the input features at each time step, effectively selecting relevant feature sequences as inputs to the LSTM network and using trend features to forecast the next time frame [36]. EA-LSTM trains an evolutionary attention-based LSTM with competitive random search, where shared parameters allow the LSTM to incorporate evolutionary attention learning [37]; it was applied to forecast PM2.5 levels and indoor temperatures in Beijing. These methods strengthen the attention mechanism by quantitatively assigning attention weights to specific time steps within sequence features, enabling the model to prioritize key variables rather than time steps and thus addressing the instantaneous dispersion limitations of traditional LSTM models. However, they do not fully use temporal information, because the attention weights are based solely on the output of each time step, which limits their ability to explain temporal dependencies between time steps. While they can distinguish the importance of features at different time steps, they fall short in modeling temporal relationships. The Temporal Fusion Transformer (TFT) combines Transformers, LSTM, and other modules and incorporates time information as input features [38]. Its LSTM module captures short-term dependencies, while its self-attention mechanism handles long-term dependencies, allowing time information to influence the model’s predictions. However, TFT does not consider the temporal distance between elements of the time series and the target prediction time.

The general approach of these LSTM-based methods is to first sort the dataset by time, select a fixed-length sequence, and use one or more subsequent elements as the prediction value for that sequence. For instance, the first thirty elements in a dataset might be selected as a sequence, with the thirty-first element serving as the prediction value. A sliding window is then moved with a set step size to select subsequent sequences and prediction values. Under this scheme, existing prediction models fall into two categories. (1) The vast majority of models do not consider time and focus only on improving the representation of input features, such as Fast MEEMD–ConvLSTM. However, the time intervals between consecutive elements vary, ranging from a few days to several months, so this approach is clearly inadequate for complex real-world prediction problems. (2) A small number of models do consider time, but they only use it to enrich the input features of the sequence, neglecting the temporal distance between sequence elements and the target prediction time, a relationship essential for identifying periodicity, trends, and other temporal dynamics. Moreover, the extraction of time features is often insufficient, and time information is not used comprehensively, as in models such as LSTNet. These limitations restrict models to predictions based solely on the order of occurrence, so they can only answer questions such as “What will happen next?”. Consequently, they perform poorly on prediction questions that specify an explicit time, such as “What will happen on 21 May 2024 12:00:00?”
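The sliding-window construction described above can be sketched as follows. This is a minimal illustration, assuming a one-dimensional series with matching timestamps; the function name `make_windows` and its parameters are hypothetical and not taken from the TE-LSTM implementation. It also returns the timestamps of each window and of its target, which is exactly the information an order-only model discards.

```python
import numpy as np


def make_windows(values, times, seq_len=30, x=1, step=1):
    """Sliding-window sample construction (hypothetical helper).

    Each sample is `seq_len` consecutive elements; its target is the
    x-th element after the window (x = 1 means the very next element).
    """
    values = np.asarray(values)
    times = np.asarray(times)
    # Last start index whose target (end + x - 1) still lies inside the data.
    last_start = len(values) - seq_len - x
    seqs, seq_times, targets, target_times = [], [], [], []
    for start in range(0, last_start + 1, step):
        end = start + seq_len
        seqs.append(values[start:end])
        seq_times.append(times[start:end])
        targets.append(values[end + x - 1])
        target_times.append(times[end + x - 1])
    return (np.array(seqs), np.array(seq_times),
            np.array(targets), np.array(target_times))


# Example: 40 observations with irregular (made-up) timestamps in days.
rng = np.random.default_rng(0)
vals = np.arange(40, dtype=float)
ts = np.cumsum(rng.integers(1, 10, size=40))
X, T, y, ty = make_windows(vals, ts, seq_len=30, x=1)
print(X.shape, y[0])  # (10, 30) 30.0
```

Because consecutive timestamps in `ts` differ, two samples with the same order structure can have very different time gaps to their targets, which the window of values alone does not reveal.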

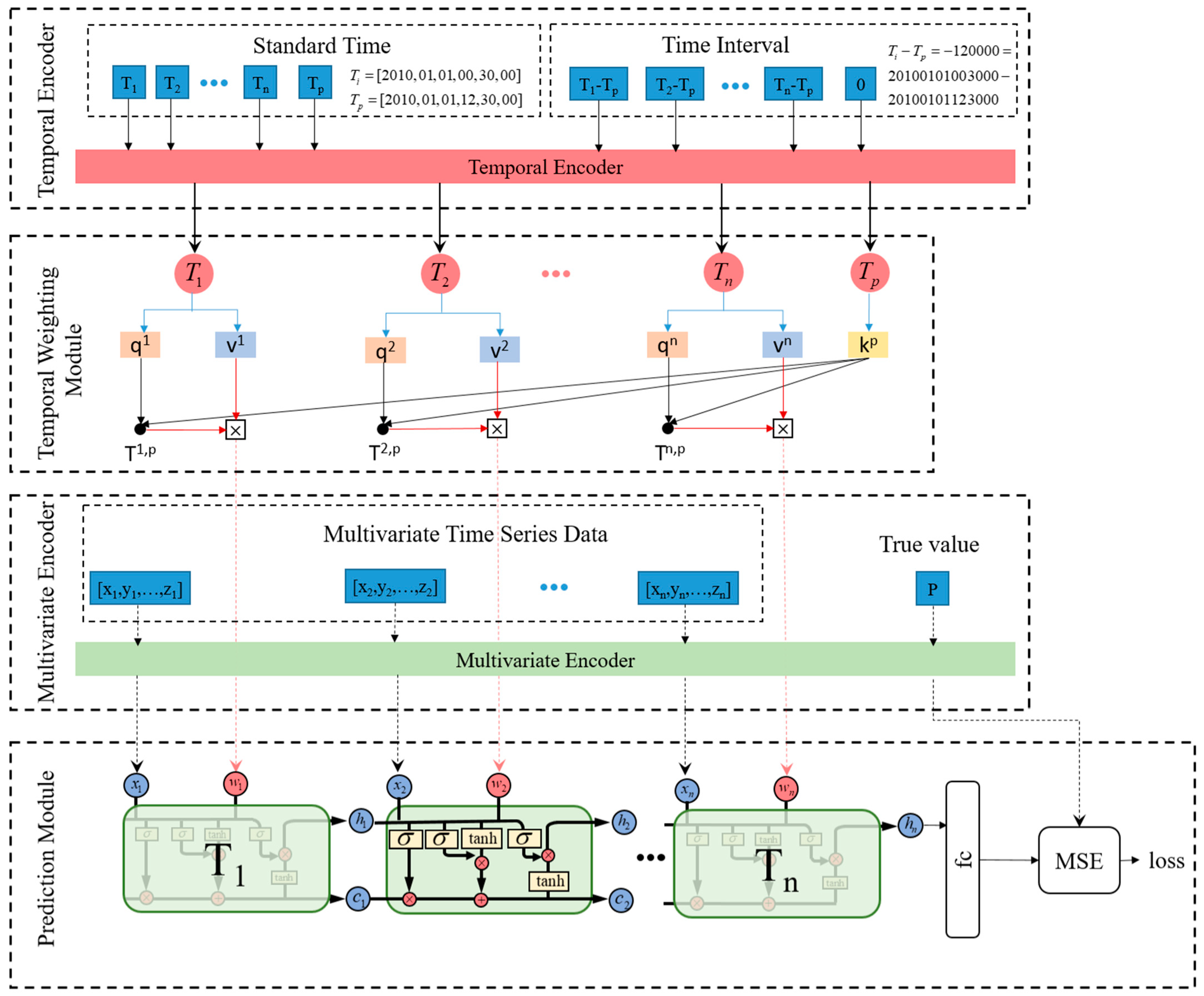

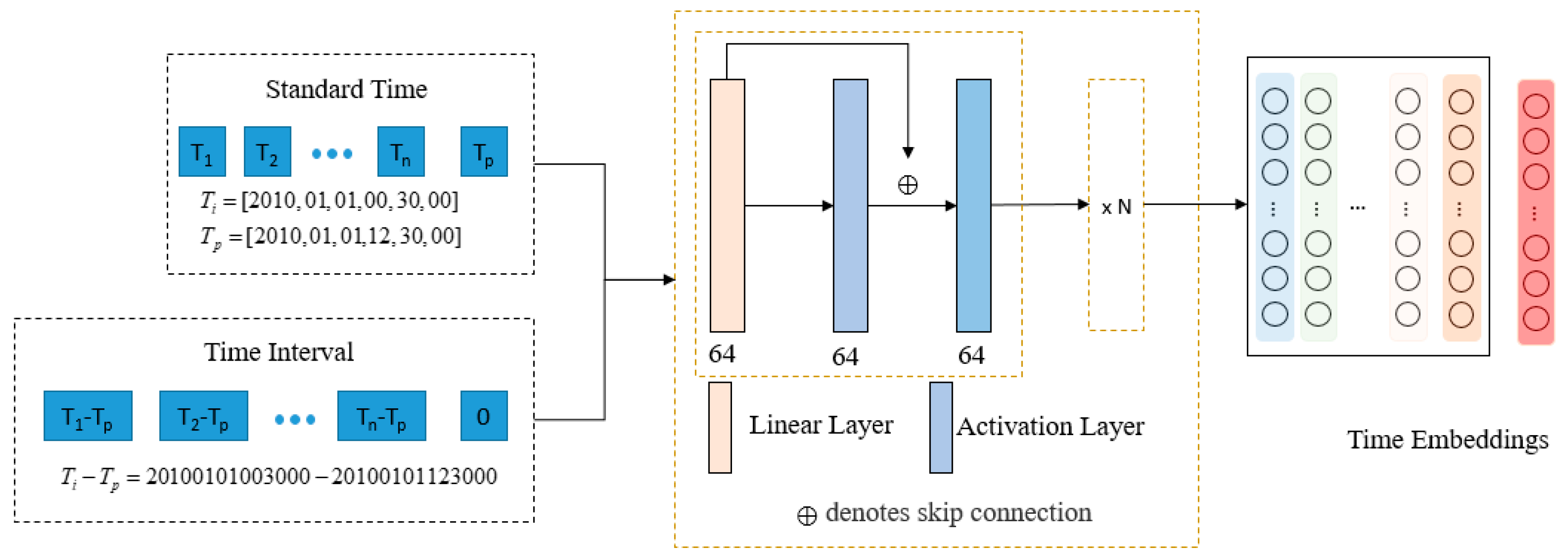

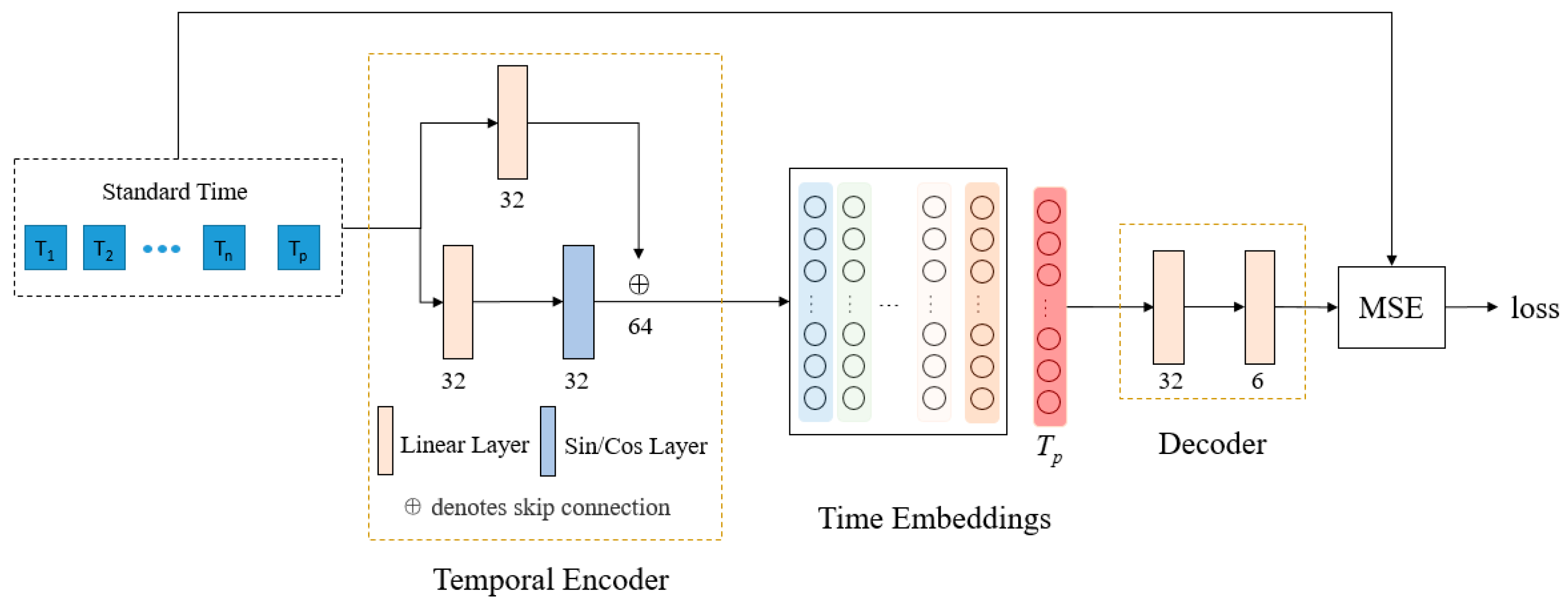

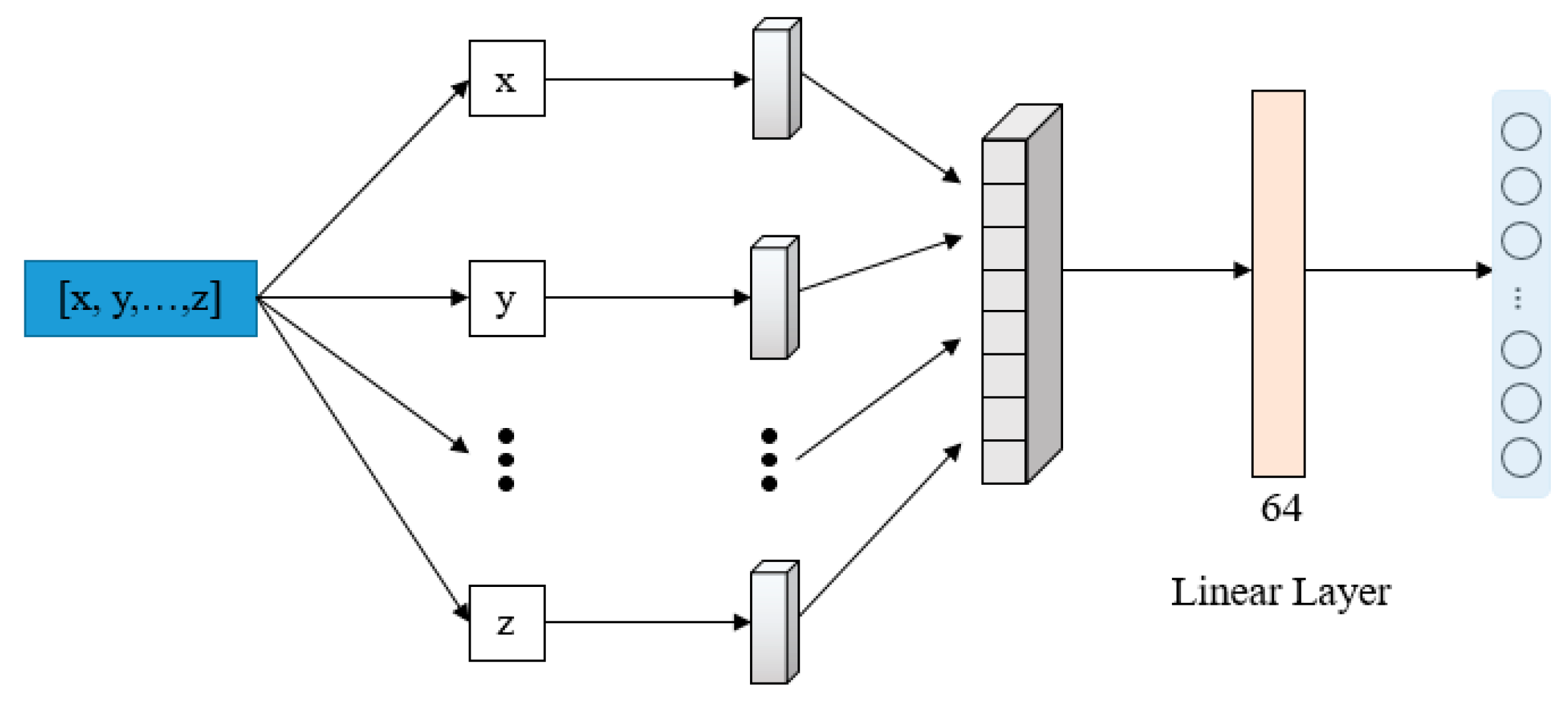

To address these issues, we propose TE-LSTM, an LSTM-based model that takes the temporal evolution of entities into account. First, to make full use of temporal information, we use two forms of time: the original timestamp of the data and the time interval between each element and the prediction target. The original time captures global patterns, while the time intervals capture local patterns. Then, we encode these two forms of time with a feed-forward network (FFN) and Time2Vec to fully extract temporal features. A temporal weighting strategy captures the temporal relationship between each element in the sequence and the target to be predicted: at each LSTM time step, the corresponding temporal weight is integrated into the computation, replacing the reliance on the original order position, to improve prediction accuracy. Finally, we analyze the model’s performance across different time granularities and validate its ability to answer prediction questions with explicit time.
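For readers unfamiliar with the two encoders, the sketch below shows Time2Vec in its usual form (one linear component plus sine components with learnable frequencies and phases) next to a generic two-layer FFN time encoder. It is an illustration under stated assumptions: the parameters are random rather than learned, the FFN's layer sizes and ReLU activation are hypothetical choices, and neither function is taken from the TE-LSTM code.

```python
import numpy as np


def time2vec(tau, omega, phi):
    """Time2Vec: component 0 is linear (omega_0 * tau + phi_0); the remaining
    k components are sin(omega_i * tau + phi_i), which can model periodicity."""
    tau = np.asarray(tau, dtype=float)[:, None]   # (n, 1)
    z = tau * omega[None, :] + phi[None, :]       # (n, k + 1)
    out = np.sin(z)
    out[:, 0] = z[:, 0]                           # keep the first component linear
    return out


def ffn_time_encoder(tau, w1, b1, w2, b2):
    """Hypothetical two-layer feed-forward encoder for a scalar time value."""
    tau = np.asarray(tau, dtype=float)[:, None]   # (n, 1)
    hidden = np.maximum(0.0, tau @ w1 + b1)       # ReLU, (n, hidden_dim)
    return hidden @ w2 + b2                       # (n, out_dim)


rng = np.random.default_rng(0)
k, hidden_dim, out_dim = 7, 16, 8
omega, phi = rng.normal(size=k + 1), rng.normal(size=k + 1)
w1, b1 = rng.normal(size=(1, hidden_dim)), np.zeros(hidden_dim)
w2, b2 = rng.normal(size=(hidden_dim, out_dim)), np.zeros(out_dim)

# Standard time (e.g. days since an origin) and time intervals to the target.
standard_time = np.array([0.0, 1.0, 3.0, 4.0])
intervals = 8.0 - standard_time                   # target at day 8 (illustrative)
print(time2vec(standard_time, omega, phi).shape)          # (4, 8)
print(ffn_time_encoder(intervals, w1, b1, w2, b2).shape)  # (4, 8)
```

The pairing mirrors the text: standard time can go through either encoder, while intervals are passed only through the FFN.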

Overall, this model incorporates time into the prediction process by combining LSTM with a temporal weighted strategy. By introducing a temporal weighted strategy, different temporal weights are dynamically calculated for each time step, which improves the model’s ability to process input sequences while enabling the model to consider the temporal information corresponding to these time steps and the temporal relationship with the target to be predicted. This combination not only demonstrates strong performance and flexibility in handling complex temporal tasks but also improves interpretability, enabling the model to excel in various tasks that require sequence data processing.
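The exact weighting formula belongs to the method definition and is not reproduced in this section. Purely to illustrate the idea of injecting a per-step temporal weight into the LSTM recurrence, the sketch below assumes a hypothetical exponential-decay weight based on each element's time distance to the target and multiplies each input by its weight before the gate computations. Both the weighting function and where it enters the cell are assumptions, not the TE-LSTM equations.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def temporal_weights(seq_times, target_time, scale=10.0):
    """Hypothetical decay weights w_i = exp(-|target_time - t_i| / scale):
    elements closer in time to the target get weights nearer to 1, and the
    weights change whenever the target time changes."""
    dist = np.abs(target_time - np.asarray(seq_times, dtype=float))
    return np.exp(-dist / scale)


def weighted_lstm(xs, weights, W, U, b):
    """Single-layer LSTM over xs (T, d_in). Each input x_t is multiplied by
    its temporal weight w_t before the gate computations.
    W: (4h, d_in), U: (4h, h), b: (4h,); gate order i, f, g, o."""
    h_dim = U.shape[1]
    h, c = np.zeros(h_dim), np.zeros(h_dim)
    for x_t, w_t in zip(xs, weights):
        z = W @ (w_t * x_t) + U @ h + b
        i = sigmoid(z[:h_dim])                 # input gate
        f = sigmoid(z[h_dim:2 * h_dim])        # forget gate
        g = np.tanh(z[2 * h_dim:3 * h_dim])    # candidate cell state
        o = sigmoid(z[3 * h_dim:])             # output gate
        c = f * c + i * g
        h = o * np.tanh(c)
    return h


rng = np.random.default_rng(1)
T, d_in, h_dim = 5, 3, 4
xs = rng.normal(size=(T, d_in))
seq_times = np.array([0.0, 1.0, 3.0, 4.0, 7.0])
W = rng.normal(scale=0.3, size=(4 * h_dim, d_in))
U = rng.normal(scale=0.3, size=(4 * h_dim, h_dim))
b = np.zeros(4 * h_dim)

# Same input sequence, two different target times -> different weights and outputs.
h_near = weighted_lstm(xs, temporal_weights(seq_times, 8.0), W, U, b)
h_far = weighted_lstm(xs, temporal_weights(seq_times, 15.0), W, U, b)
```

An unweighted LSTM would return the same hidden state for both target times; the point of the sketch is only that a time-dependent weight makes the output depend on when the target occurs.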

3. Results and Discussion

We use P = 1 − MSE, referred to as probability (P), as the evaluation metric; higher values of P indicate more accurate predictions.
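For concreteness, the metric can be computed as below. This sketch assumes that targets and predictions are on a normalized scale (for example, min-max scaled to [0, 1]), which keeps P in a meaningful range; the normalization is an assumption and is not specified in this section.

```python
import numpy as np


def probability_score(y_true, y_pred):
    """P = 1 - MSE, computed on (assumed) normalized targets and predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    mse = np.mean((y_true - y_pred) ** 2)
    return 1.0 - mse


print(probability_score([0.5, 0.5], [0.4, 0.6]))  # approximately 0.99
```

Since P is a direct transform of MSE, any change in P equals the negative of the change in MSE.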

3.1. Verify the Accuracy of TE-LSTM under Different Temporal Encoders

To verify the role of time in the model, we take the X-th element after the sequence (X ∈ {1, 2, 3}) as the prediction value. For the same X, the prediction targets occupy the same position in the order of occurrence, but their times and time intervals differ. For standard time (ST), we use both the FFN and Time2Vec encoding methods, while for the time interval (TI), only FFN encoding is applicable. The model results are shown in Table 2.

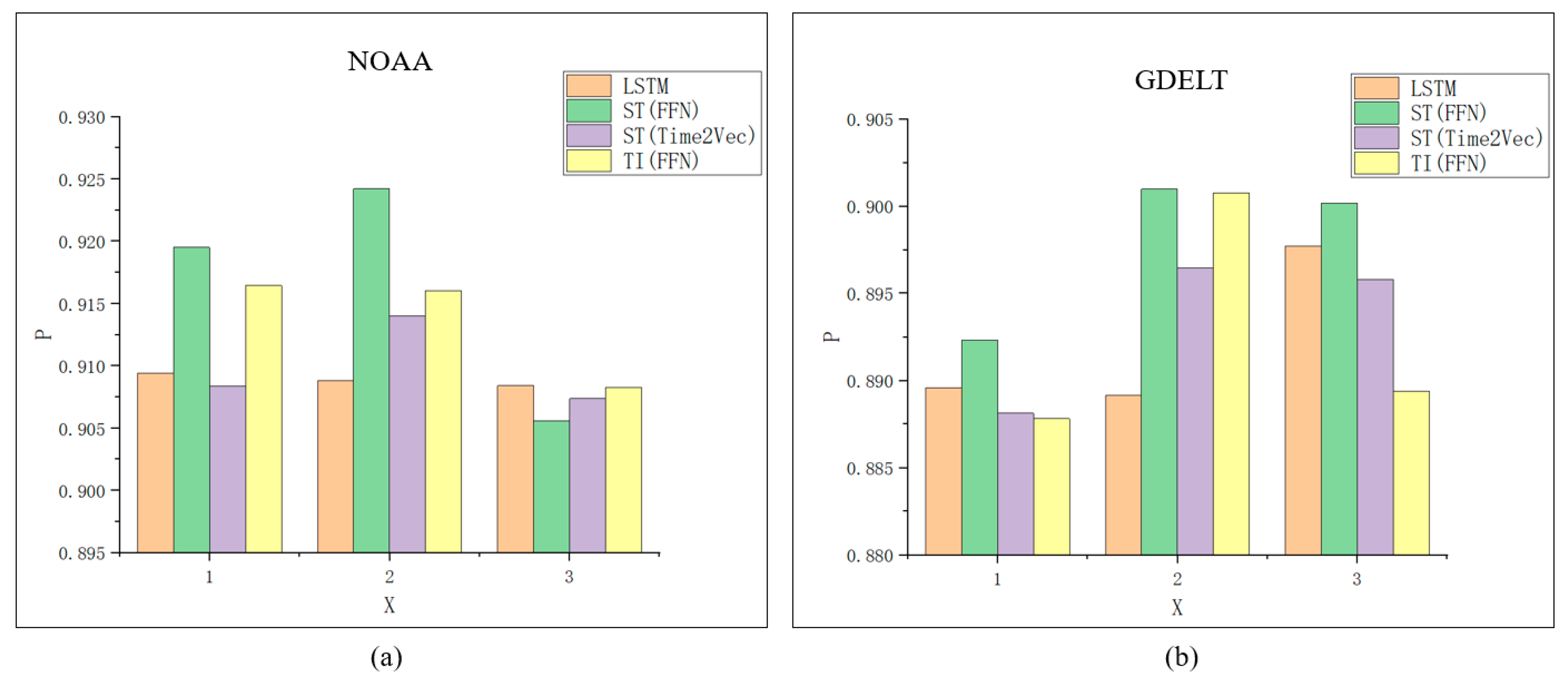

As shown in Figure 7, for the NOAA dataset, ST (FFN) performs best, with only a slight decrease in P at X = 3. In contrast, ST (Time2Vec) performs worst, improving P only at X = 2. According to Table 2, when X = 1, the P of ST (FFN) increased by 1.0083%, that of TI (FFN) increased by 0.7034%, and that of ST (Time2Vec) decreased by 0.1045%. When X = 2, ST (FFN) increased by 1.5413%, TI (FFN) by 0.7229%, and ST (Time2Vec) by 0.5175%. When X = 3, all three methods decreased slightly: ST (FFN) by 0.2841%, TI (FFN) by 0.0153%, and ST (Time2Vec) by 0.1049%. On average, ST (FFN) improved by 0.7552%, TI (FFN) by 0.4703%, and ST (Time2Vec) by 0.1027%. For the GDELT dataset, ST (FFN) again performs best, while TI (FFN) performs worst. According to Table 2, when X = 1, ST (FFN) increased by 0.2743%, TI (FFN) decreased by 0.1748%, and ST (Time2Vec) decreased by 0.1442%. When X = 2, ST (FFN) increased by 1.1818%, TI (FFN) by 1.1598%, and ST (Time2Vec) by 0.7296%. When X = 3, ST (FFN) increased by 0.2456%, TI (FFN) decreased by 0.8327%, and ST (Time2Vec) decreased by 0.1899%. On average, ST (FFN) improved by 0.5672%, TI (FFN) by 0.0508%, and ST (Time2Vec) by 0.1318%.
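The averages quoted above are arithmetic means of the three per-X changes. The snippet below recomputes them from the numbers stated in this paragraph as a consistency check; it introduces no new data.

```python
# Changes in P (%) for X = 1, 2, 3, copied from the text above.
changes = {
    "NOAA": {
        "ST (FFN)": [1.0083, 1.5413, -0.2841],
        "TI (FFN)": [0.7034, 0.7229, -0.0153],
        "ST (Time2Vec)": [-0.1045, 0.5175, -0.1049],
    },
    "GDELT": {
        "ST (FFN)": [0.2743, 1.1818, 0.2456],
        "TI (FFN)": [-0.1748, 1.1598, -0.8327],
        "ST (Time2Vec)": [-0.1442, 0.7296, -0.1899],
    },
}

# Mean change per dataset and encoder.
means = {
    dataset: {enc: sum(vals) / len(vals) for enc, vals in encs.items()}
    for dataset, encs in changes.items()
}
for dataset, encs in means.items():
    for enc, m in encs.items():
        print(f"{dataset:5s} {enc:14s} mean change = {m:.4f}%")
```

Rounded to four decimals, the printed means match the averages reported for both datasets.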

In conclusion, incorporating temporal information improves prediction accuracy on average, and ST (FFN) is the most effective way to encode time. Standard time locates each element precisely on the time axis, which allows comprehensive patterns to be captured and suits various types of datasets. The time interval captures the position of each element in the current sequence relative to the target element and focuses solely on the evolution pattern of the sequence itself, which makes it particularly suitable for datasets with short-term and periodic changes. In the NOAA dataset, where temperature is the prediction target, the periodic nature of temperature changes favors the TI (FFN) method, and the results are consistent with this: the average gain of TI (FFN) is clearly larger on NOAA (0.4703%) than on GDELT (0.0508%). However, TI (FFN) still falls short of ST (FFN), indicating that the local, interval-based view is not the optimal solution. In the era of big data, datasets are large, span long periods, and contain rich temporal information, so comprehensive global information is needed for more accurate predictions. The GDELT dataset, which uses events as prediction targets, exhibits greater uncertainty, making the locally focused TI (FFN) the worst performer. Therefore, for TE-LSTM, the ST (FFN) temporal encoder can be used regardless of the dataset type.

3.2. Verify the Accuracy of TE-LSTM under Different Time Granularities

The results of Experiment 1 confirmed the necessity and reliability of incorporating time information. Next, we evaluated the model’s performance under different time granularities. For the NOAA data, which contain richer time information, we set X = 1 and used five time granularities, G ∈ {1, 2, 3, 6, 12}. For the GDELT data, we set X = 1 and used three time granularities, G ∈ {1, 2, 3}, because each GDELT sequence contains less time information. All elements that fall within the same granularity interval are assigned the same time. For example, with a granularity of G = 2 days, if 1 January 1979 and 2 January 1979 fall within the same interval, all elements from these two days are assigned the time 1 January 1979. The experimental results on the NOAA dataset are shown in Table 3.
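The granularity coarsening can be sketched as flooring each timestamp to the start of its G-day interval. This assumes intervals are counted from a fixed origin (here 1 January 1979, chosen only to match the example in the text) and that G is measured in days as in that example; the helper `coarsen` is hypothetical.

```python
from datetime import datetime, timedelta


def coarsen(ts, origin, g_days):
    """Map timestamp `ts` to the start of its G-day interval counted from `origin`."""
    n_intervals = (ts - origin) // timedelta(days=g_days)  # integer floor division
    return origin + n_intervals * timedelta(days=g_days)


origin = datetime(1979, 1, 1)
stamps = [datetime(1979, 1, 1, 6), datetime(1979, 1, 2, 18), datetime(1979, 1, 3, 9)]
print([coarsen(t, origin, 2).date() for t in stamps])
# [datetime.date(1979, 1, 1), datetime.date(1979, 1, 1), datetime.date(1979, 1, 3)]
```

Larger G merges more timestamps into one value, which is what reduces the number of distinct times per sequence.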

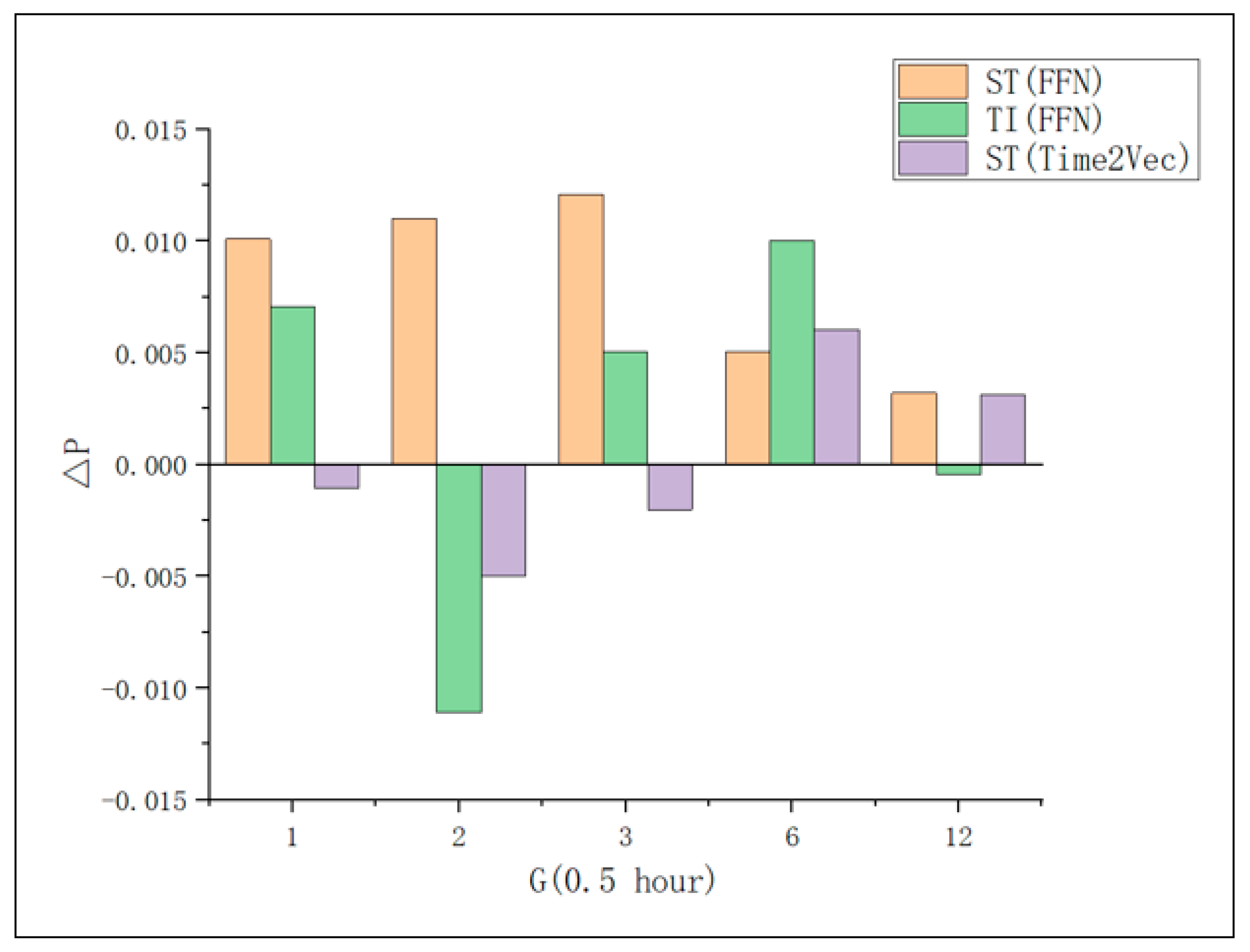

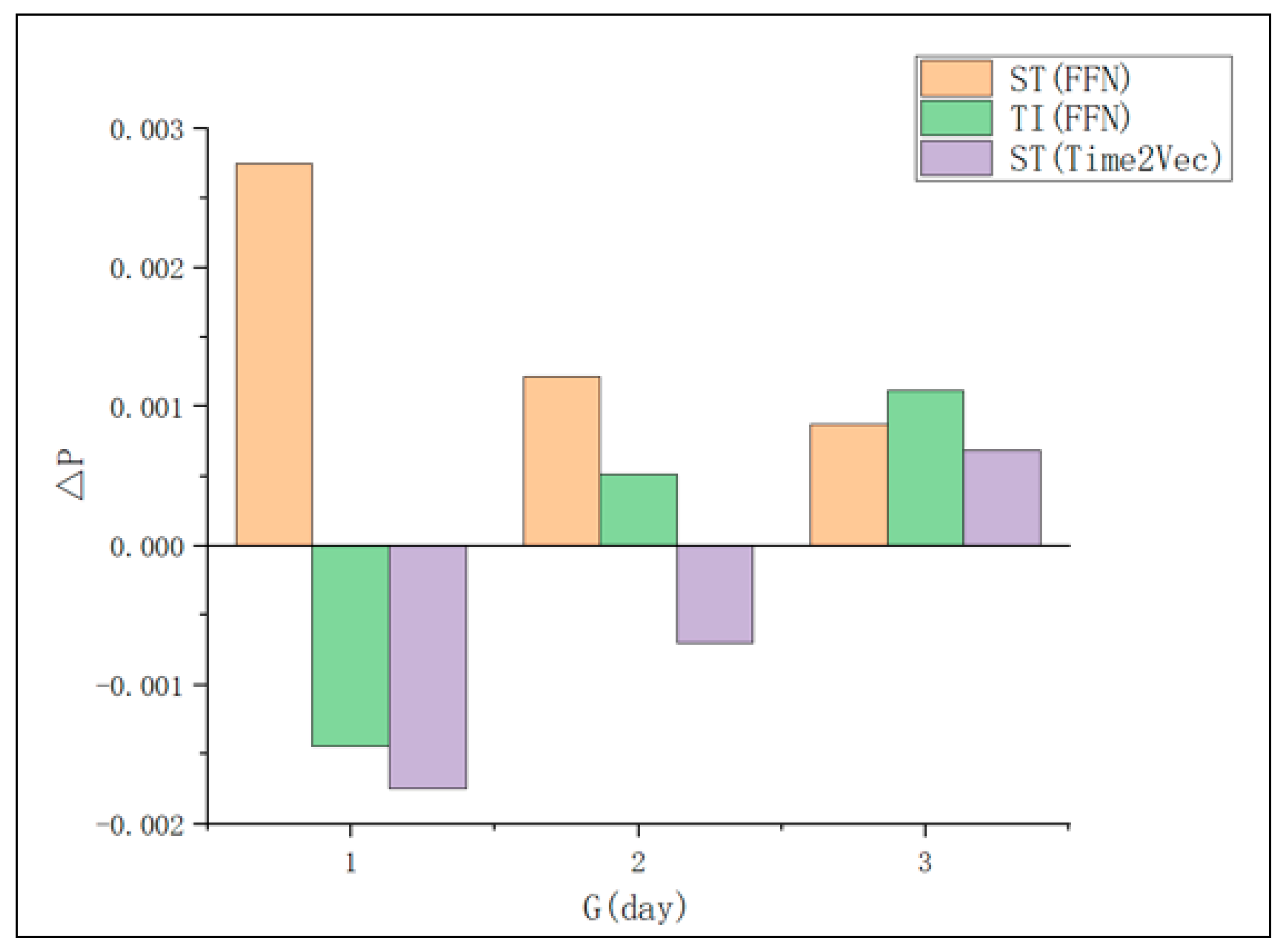

To comparatively analyze the impact of different time granularities on the model’s accuracy, we examined the probability changes between TE-LSTM, using different encoding methods, and LSTM at the same time granularity.

From

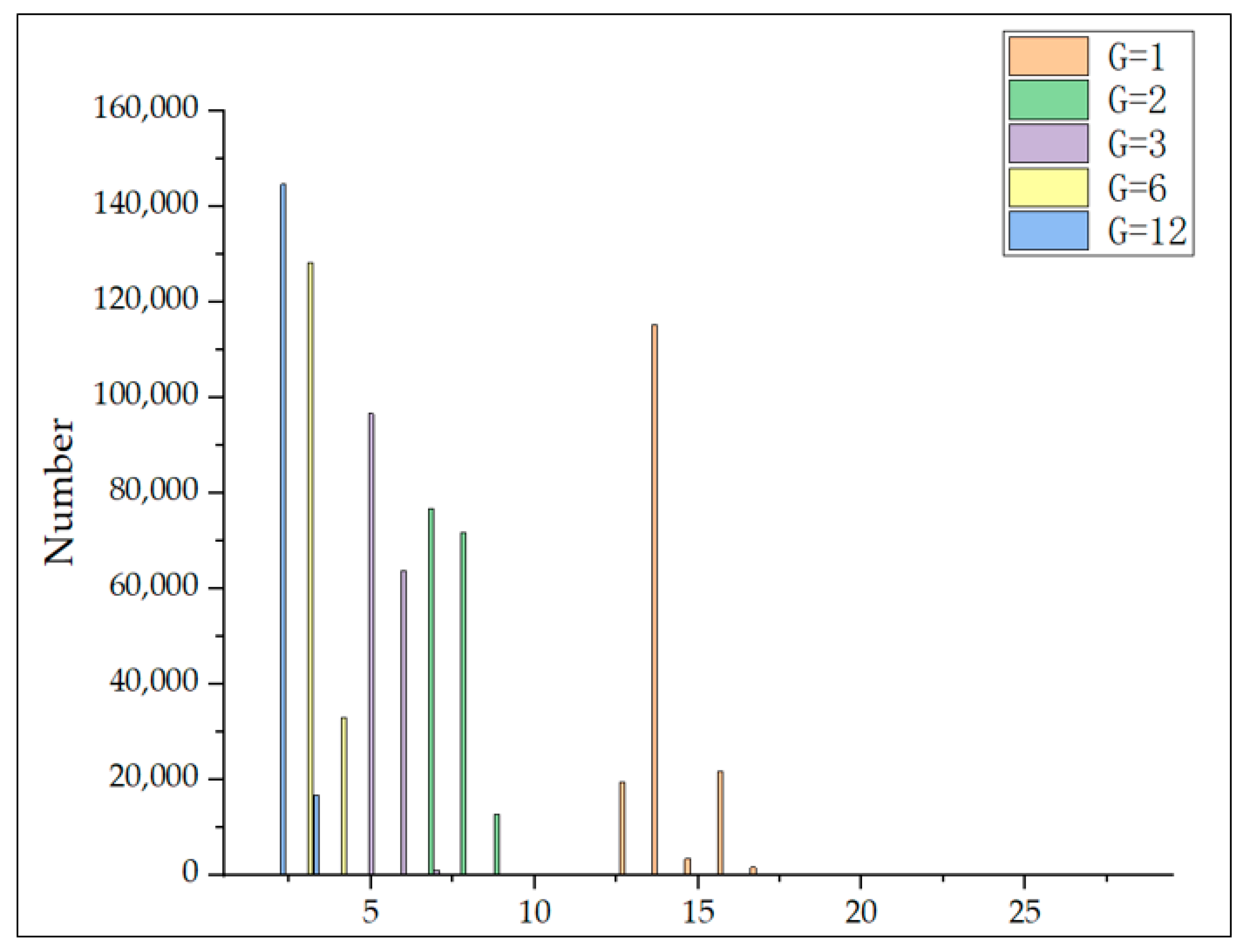

Figure 8, it can be observed that as the time granularity increases, regardless of whether time fusion improves or degrades accuracy, the magnitude of change becomes smaller. This indicates that the influence of time in the model decreases. When analyzing the dataset itself, the multivariate time sequence length was set to 30 during dataset creation. We counted the occurrences of different time information in the sequences at various time granularities, as shown in

Figure 9. The number of different times in the sequence is defined as follows: If a sequence contains 3 occurrences of 12:00:00 on 21 May 2024, 5 occurrences of 13:00:00 on 21 May 2024, 10 occurrences of 14:00:00 on 21 May 2024, and 2 occurrences of 15:00:00 on 21 May 2024, then this sequence has four non-repeating times.
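The count of distinct times can be computed directly. The sketch below reproduces the example above, which has four distinct times, and shows how a histogram like the one in Figure 9 could be tallied over many sequences; the helper names are hypothetical.

```python
from collections import Counter
from datetime import datetime


def distinct_times(seq_times):
    """Number of non-repeating timestamps in one sequence."""
    return len(set(seq_times))


def distinct_time_histogram(sequences):
    """How many sequences have 1, 2, 3, ... distinct timestamps."""
    return Counter(distinct_times(s) for s in sequences)


# The example from the text: 3 x 12:00, 5 x 13:00, 10 x 14:00, 2 x 15:00 on 21 May 2024.
example = ([datetime(2024, 5, 21, 12)] * 3 + [datetime(2024, 5, 21, 13)] * 5
           + [datetime(2024, 5, 21, 14)] * 10 + [datetime(2024, 5, 21, 15)] * 2)
print(distinct_times(example))  # 4
```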

From Figure 9, it can be observed that the number of distinct times is concentrated around 14 when G = 1, around 8 when G = 2, around 5 when G = 3, around 3 when G = 6, and around 2 when G = 12. As the time granularity increases, the number of distinct time points in the sequence gradually decreases, reducing the richness of time information.

For ST (FFN), patterns are captured through the absolute position of time, so model accuracy declines as the richness of time information decreases. ST (Time2Vec) and TI (FFN) can both capture periodicity. When the time granularity is small, the abundance of time information introduces noise, making patterns difficult to capture; as the granularity increases, the accuracy of these two methods slowly rises. Therefore, with an appropriately chosen time granularity, these two methods can outperform the base model.

In summary, time granularity significantly affects model accuracy, and an appropriate granularity can substantially enhance performance. For ST (FFN), smaller time granularities yield better results, and larger ones should be avoided. ST (Time2Vec) and TI (FFN) perform poorly at small time granularities; in this experiment, with a sequence length of 30, a granularity of 6 or 12 is suitable, which keeps the number of distinct time points per sequence at around 2 to 3.

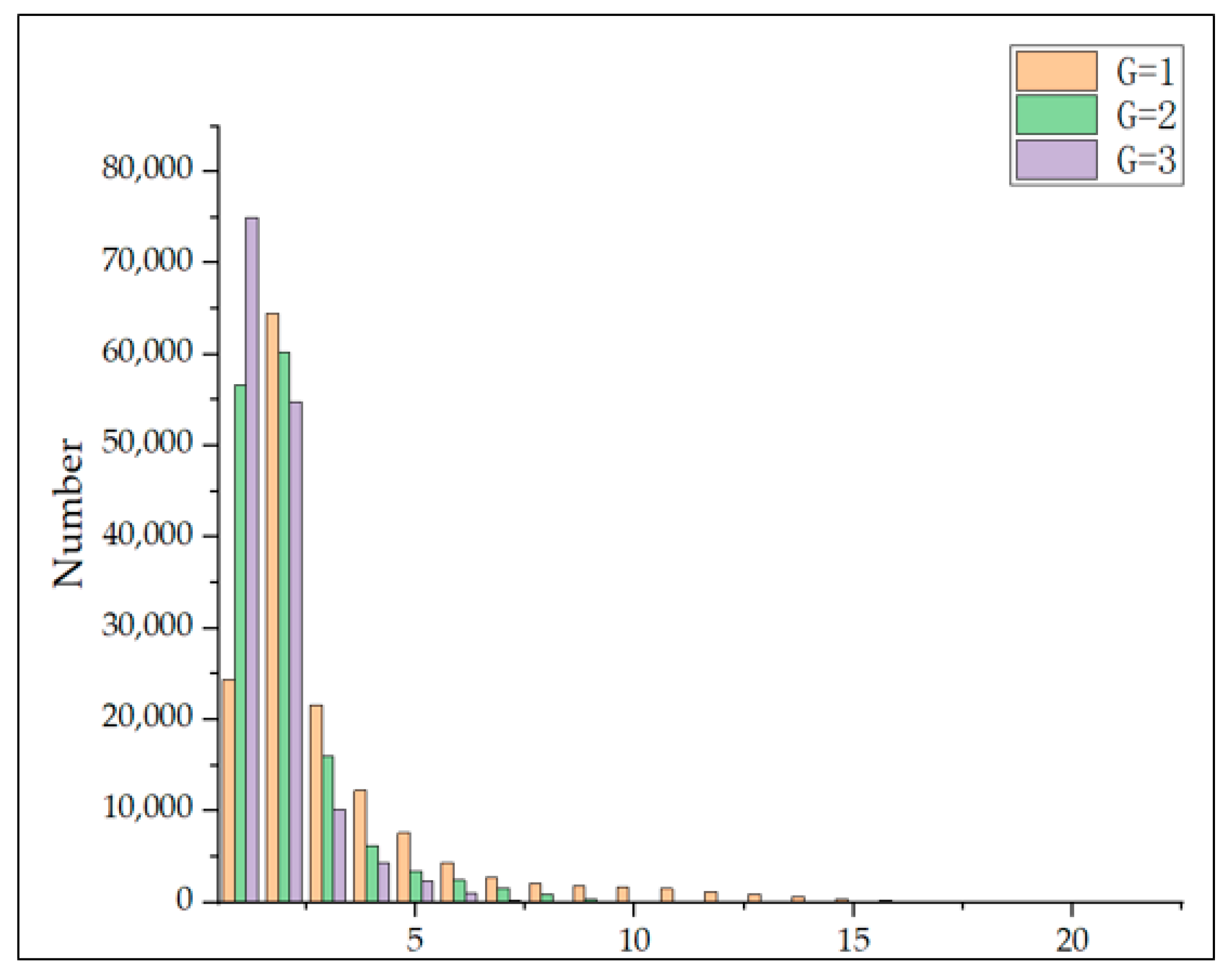

The experimental results on the GDELT dataset are shown in Table 4, and the changes in P are shown in Figure 10. Figure 10 shows that although the GDELT data do not exhibit clear periodicity, all three methods follow the same pattern as on the NOAA data. We likewise counted the number of distinct time points per sequence under each time granularity, as shown in Figure 11. When the time information is less complex, ST (Time2Vec) and TI (FFN) perform well; when it is more complex, ST (FFN) performs well.

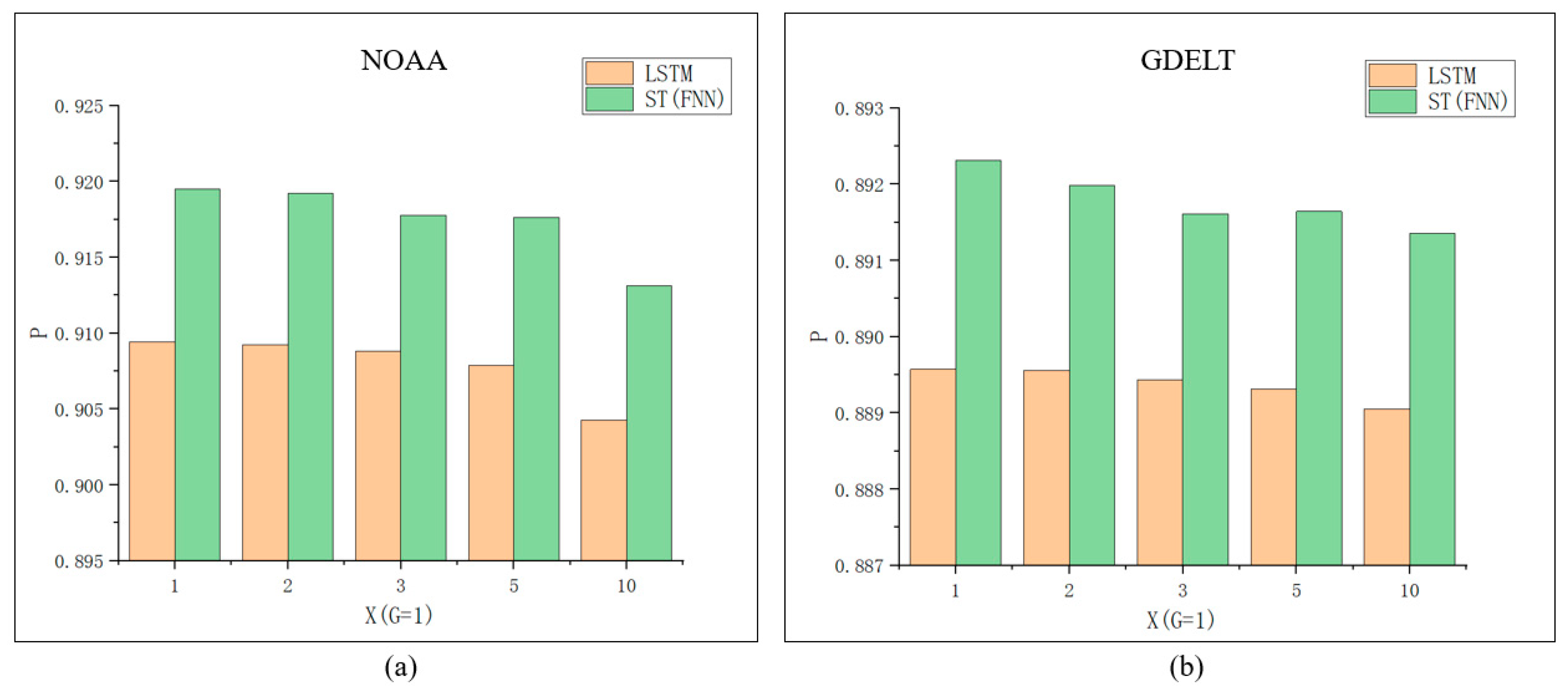

3.3. Verify the Performance of TE-LSTM in Predicting Questions with Specific Times

We aimed to address the issue that existing models perform well on questions like “What will happen next?” but poorly on questions that specify a time, such as “What will happen on 21 May 2024 12:00:00?” To this end, the model trained with X = 1, G = 1 was used as the baseline model, and this trained model was then used to predict data with X ∈ {2, 3, 5, 10}, G = 1. For a given X, the position of the prediction target relative to the sequence is fixed, but the target’s time varies, and so do the time intervals between it and the sequence elements. This setup therefore evaluates how well the proposed method answers prediction questions with specific times. The results are shown in Table 5.
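An order-only model cannot distinguish these cases because the input sequence is identical for every X; only the target time, and hence the intervals to it, changes. The small sketch below, with made-up irregular timestamps, makes this explicit; `intervals_to_target` is a hypothetical helper, not part of any published TE-LSTM code.

```python
import numpy as np


def intervals_to_target(seq_times, target_time):
    """Time from each sequence element to the prediction target."""
    return target_time - np.asarray(seq_times, dtype=float)


# Irregular timestamps (hours, illustrative); the sequence is the first 5 elements.
times = np.array([0, 1, 3, 4, 7, 8, 12, 15, 21, 30], dtype=float)
seq_len = 5
seq_times = times[:seq_len]
for x in (1, 3, 5):
    target_time = times[seq_len + x - 1]   # x-th element after the sequence
    print(x, target_time, intervals_to_target(seq_times, target_time))
```

A plain LSTM receives the same five values for all three targets, whereas a time-aware model also receives a different interval vector for each.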

The previous two experiments showed that ST (FFN) performs best when X = 1 and G = 1, so we only compare TE-LSTM with the ST (FFN) temporal encoder against LSTM. Figure 12 shows that on both datasets, ST (FFN) performs significantly better than the baseline model. As the prediction step increases, the P of the LSTM model trends downward, indicating that its predictions become less accurate. For the NOAA dataset, the results of ST (FFN) remain stable at around 91.55%; for the GDELT data, they remain stable at around 89.2%. This stability arises because ST (FFN) computes the weight of each element’s time relative to the target’s time, capturing each element’s position in time with respect to the target, which significantly enhances the results. However, when X = 10, both methods decline slightly, because the target is too far from its sequence, weakening the regularity and correlation between them and diminishing the auxiliary benefit of the temporal weights. In contrast, LSTM treats every prediction target as if it occurred at X = 1, so its accuracy decreases. These results demonstrate TE-LSTM’s strong performance in answering prediction questions with specific times.

3.4. Comparison of TE-LSTM with State-of-the-Art (SOTA) Methods and Validation of TE-LSTM Performance on LSTM Variant Models

We chose two SOTA methods, TFT and Informer [38,46]. TFT is a hybrid model that combines the Transformer architecture with traditional LSTM, enabling it to capture long-term dependencies while handling both dynamic and static features; it also provides built-in interpretability, which helps in understanding model predictions. Informer is an efficient Transformer variant designed for long-term time series forecasting; it reduces computational complexity through a sparse self-attention mechanism, significantly improving the efficiency of long-sequence prediction. We first verified the ability of the three models to predict targets at different positions. In this experiment, the time granularity G is set to 1, and TE-LSTM uses the ST (FFN) time encoder, which performed best at this granularity. The results are presented in Table 6.

Table 6 shows that on the NOAA dataset, the average accuracy of TE-LSTM is 0.916434, that of TFT is 0.912098, and that of Informer is 0.914771, with TE-LSTM achieving the highest accuracy. On the GDELT dataset, the average accuracy of TE-LSTM is 0.897824, that of TFT is 0.885061, and that of Informer is 0.898338, with Informer achieving the highest accuracy; TE-LSTM is 0.051433 percentage points lower than Informer. TE-LSTM is thus better suited to datasets that exhibit regularity and simplicity. On datasets with higher randomness and more complex patterns, traditional LSTM architectures and their variants tend to perform worse than Transformer architectures and their variants, because Transformers capture long-range dependencies better than LSTM. Owing to its recursive nature, LSTM is more adept at capturing short-term dependencies and local patterns such as trends and periodicity, which also explains why TE-LSTM outperforms Informer on datasets with periodic patterns. The GDELT dataset, with its high randomness and weak local patterns, requires capturing patterns over a longer time span, so Informer, with its stronger ability to capture long-range dependencies, achieves the highest accuracy. However, TE-LSTM consistently outperforms TFT on both datasets, indicating the effectiveness of TE-LSTM’s weighting strategy.

We also compared the ability of the three models to answer time-specific prediction questions; the results are presented in Table 7. Neither TFT nor Informer takes into account the distance between each time step in the series and the target time to be predicted. When a model pre-trained on X = 1, G = 1 data is used to predict targets at other positions, these models cannot adapt to the target time. As the predicted position increases, their accuracy declines continuously and relatively quickly. TE-LSTM, in contrast, uses temporal weights computed between each time step and the target time rather than relying solely on the order of the sequence, and these weights directly affect the prediction. This allows the model to adapt its predictions to the target time even when the predicted position changes. As a result, even where TE-LSTM’s accuracy at the pre-training setting is initially lower than Informer’s, it ultimately surpasses Informer as the predicted position increases. This demonstrates the effectiveness of the time-weighting method proposed in TE-LSTM and its strong performance on prediction problems with explicit temporal constraints.

TE-LSTM uses LSTM as the base model and integrates time information into the prediction process, ultimately improving prediction accuracy. The model is not combined with other methods and has a relatively simple architecture. However, it is precisely this simplicity that allows TE-LSTM to be applied to LSTM variant models: TE-LSTM can directly replace the LSTM module in these variants. In this study, we selected TFT as the base model and replaced its original LSTM module with TE-LSTM to evaluate how LSTM variants perform with TE-LSTM. The experimental results are presented in Table 8. After the original LSTM module in TFT was replaced with TE-LSTM, a slight improvement in accuracy was observed. This demonstrates TE-LSTM’s compatibility and suggests that it can be integrated into other LSTM variant models to enhance prediction accuracy.

3.5. Discussion

In this study, we introduce an innovative multivariate time series data prediction model: TE-LSTM, an LSTM variant that considers the temporal evolution of entities. To address the limitations of existing models that do not fully utilize the time dimension, we designed multiple time encoding methods for comparative experiments to comprehensively extract temporal features. Additionally, to account for the relationship between the time of each element in the time series and the predicted target time, we developed a time-weighting strategy that calculates the weight between each element’s time and the predicted target time. This weight is then incorporated into each time step, replacing the original sequence order, enabling the model to handle prediction tasks with explicit temporal constraints. Our study primarily focuses on temperature prediction, and we also validated the model’s performance on non-periodic and complex datasets using the GDELT dataset.

To validate the effectiveness of TE-LSTM, we predicted targets at different positions and compared the performance of the three time encoders, as shown in Table 2. TE-LSTM, being based on LSTM, leverages LSTM’s recursive nature to capture local changes in the sequence. The ST (FFN) encoder, which captures the absolute position of time, focuses on global changes, balancing long- and short-term dependencies. This makes it suitable for various datasets, as demonstrated by its strong performance on both datasets. The TI (FFN) encoder, on the other hand, uses the time intervals between each element in the series and the predicted target time, focusing on local changes and strengthening the capture of short-term dependencies. This led to relatively strong performance on the NOAA dataset, which exhibits periodicity, but weaker performance on the GDELT dataset, which lacks such periodic structure.

In environmental and meteorological research, time granularities of hours, days, or larger intervals can reveal vastly different patterns of change. Coarser time granularity may obscure key precursor changes, while finer granularity may introduce excessive noise, making it difficult to identify long-term trends. After verifying TE-LSTM’s effectiveness, we further investigated the impact of temporal richness on the results. We employed multi-scale analysis methods, exploring a range of time granularities from coarse to fine. Our findings indicate that the ST (FFN) method exhibits a negative correlation with time granularity, where larger granularities result in lower model accuracy. Consequently, ST (FFN) is more appropriate for datasets with rich temporal information. Both TI (FFN) and ST (Time2Vec) effectively capture periodicity, but they are less suitable when temporal information is abundant. As temporal granularity increases, the performance of these two methods initially improves but then declines, reaching peak accuracy at an optimal granularity. Therefore, selecting the appropriate time granularity is crucial for their application.

We also assessed the model’s ability to address time-specific prediction questions by pre-training it on a dataset with a fixed prediction position and then applying the pre-trained model to predict targets at other positions. The results show that as the predicted position moves further away, the accuracy of TE-LSTM remains relatively stable, declining only gradually, as shown in Table 5. Moreover, TE-LSTM was compared with SOTA methods such as TFT and Informer, as shown in Table 6 and Table 7. While TE-LSTM proved more effective on datasets with clear regularities, such as the NOAA data, its accuracy on more complex and irregular datasets was lower than that of Informer. This difference is attributable to the inherent characteristics of TE-LSTM as an LSTM-based model. However, these same characteristics give TE-LSTM strong compatibility, allowing it to be integrated into various LSTM-based models. In this study, replacing the original LSTM module in TFT with TE-LSTM improved prediction accuracy, confirming the model’s compatibility and scalability.

The ability of TE-LSTM to answer time-specific prediction questions makes it applicable to multiple fields. In meteorological forecasting, for instance, it can be used to predict changes in temperature, rainfall, wind speed, and other meteorological variables at specific time points. This capability is critical for natural disaster warnings, such as for typhoons and rainstorms, as well as for agricultural production planning. Similarly, in environmental monitoring and disaster management, precise time prediction can forecast the occurrence of natural disasters such as earthquakes, landslides, and floods. This improves emergency response efficiency, minimizes losses, and provides a scientific basis for the formulation of more targeted response strategies. Additionally, in logistics and supply chain management, accurate time prediction can optimize transportation routes and inventory management, ensuring goods are delivered at the optimal time, thus reducing logistics costs and enhancing operational efficiency.

This study also has certain limitations. First, we were unable to obtain long-term data on temperature-related indicators such as solar radiation, wind speed, and wind direction. This limitation has a slight impact on the accuracy of temperature modeling. In future research, we aim to expand the inclusion of these indicators to improve the accuracy of the model’s temperature prediction. Additionally, we were unable to find datasets with ultra-long time spans, such as those related to geological evolution, to validate the model’s applicability. Datasets of this type require extended time periods to reveal accurate patterns. Since this method is based on LSTM, which excels at capturing short-term dependencies due to its recursive nature, its performance on datasets requiring long-term dependencies remains uncertain. Further validation is needed to accurately determine the model’s scope of applicability in such cases. Second, the study did not propose a unified method for determining the most appropriate time granularity. In environmental, meteorological, or geological research, the choice of time granularity—whether hours, days, or longer intervals—can reveal vastly different patterns of change. The optimal time granularity varies across different fields and requirements. Therefore, establishing a recommended framework or standard for selecting the appropriate time granularity based on data characteristics, research objectives, and prior experience is necessary to minimize the uncertainty caused by arbitrary selection. Additionally, the model did not consider spatial location, which can also affect prediction outcomes. Incorporating spatial information to develop spatiotemporal prediction models would be a promising direction for future research.