Photovoltaics Plant Fault Detection Using Deep Learning Techniques

Abstract

:1. Introduction

2. Materials and Methods

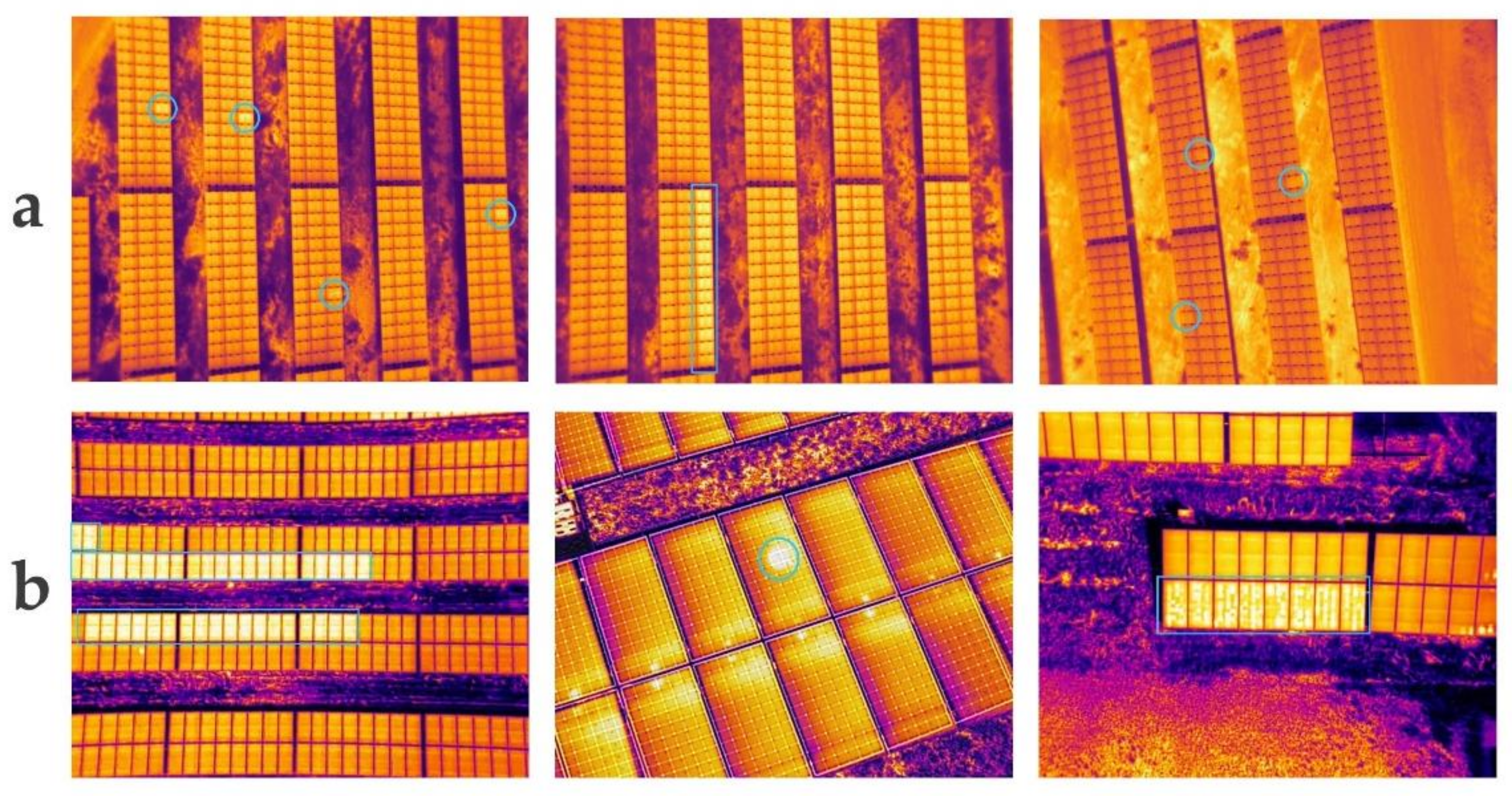

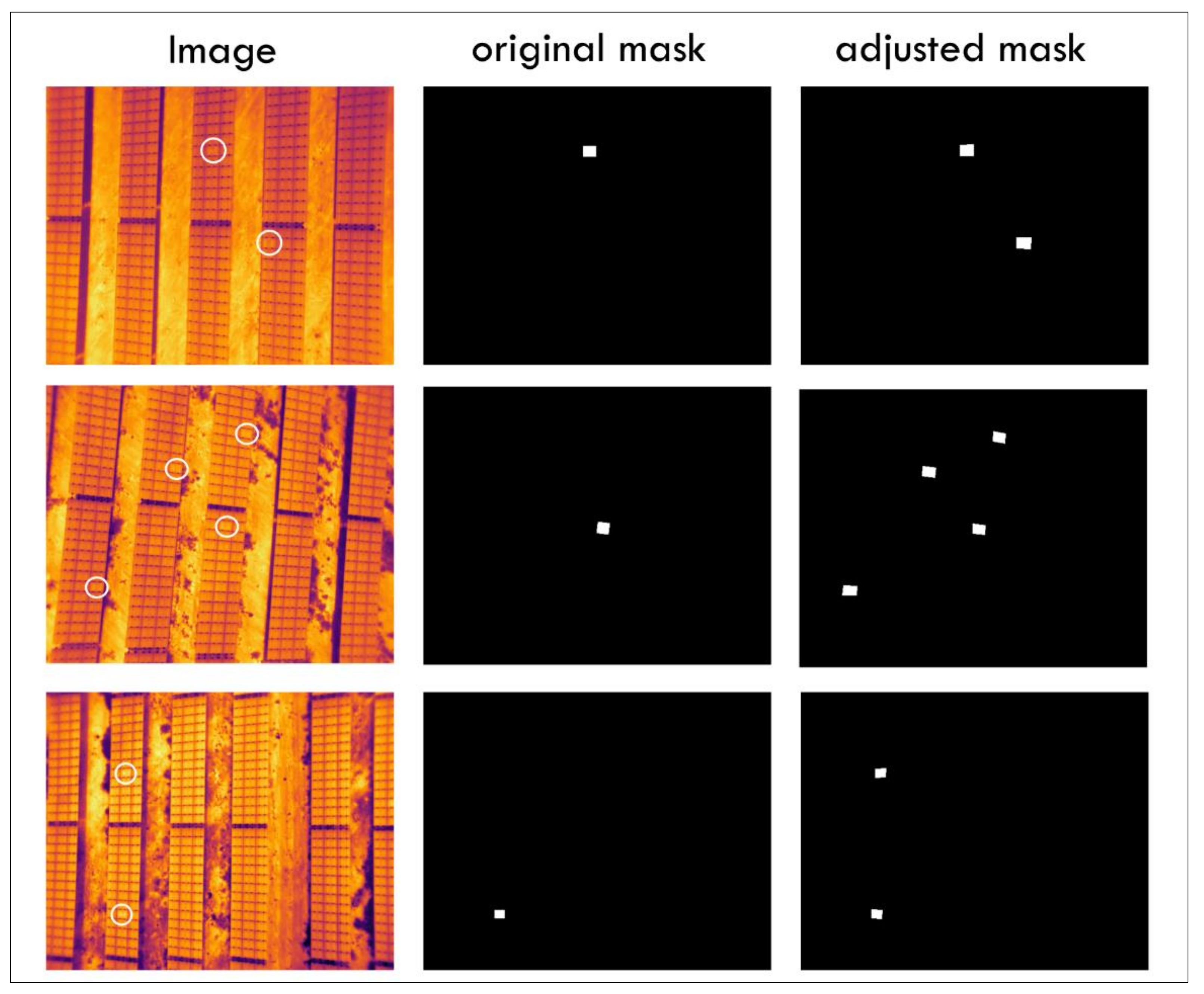

2.1. Dataset

2.2. Photovoltaics Plant Faults Segmentation Using Deep Learning

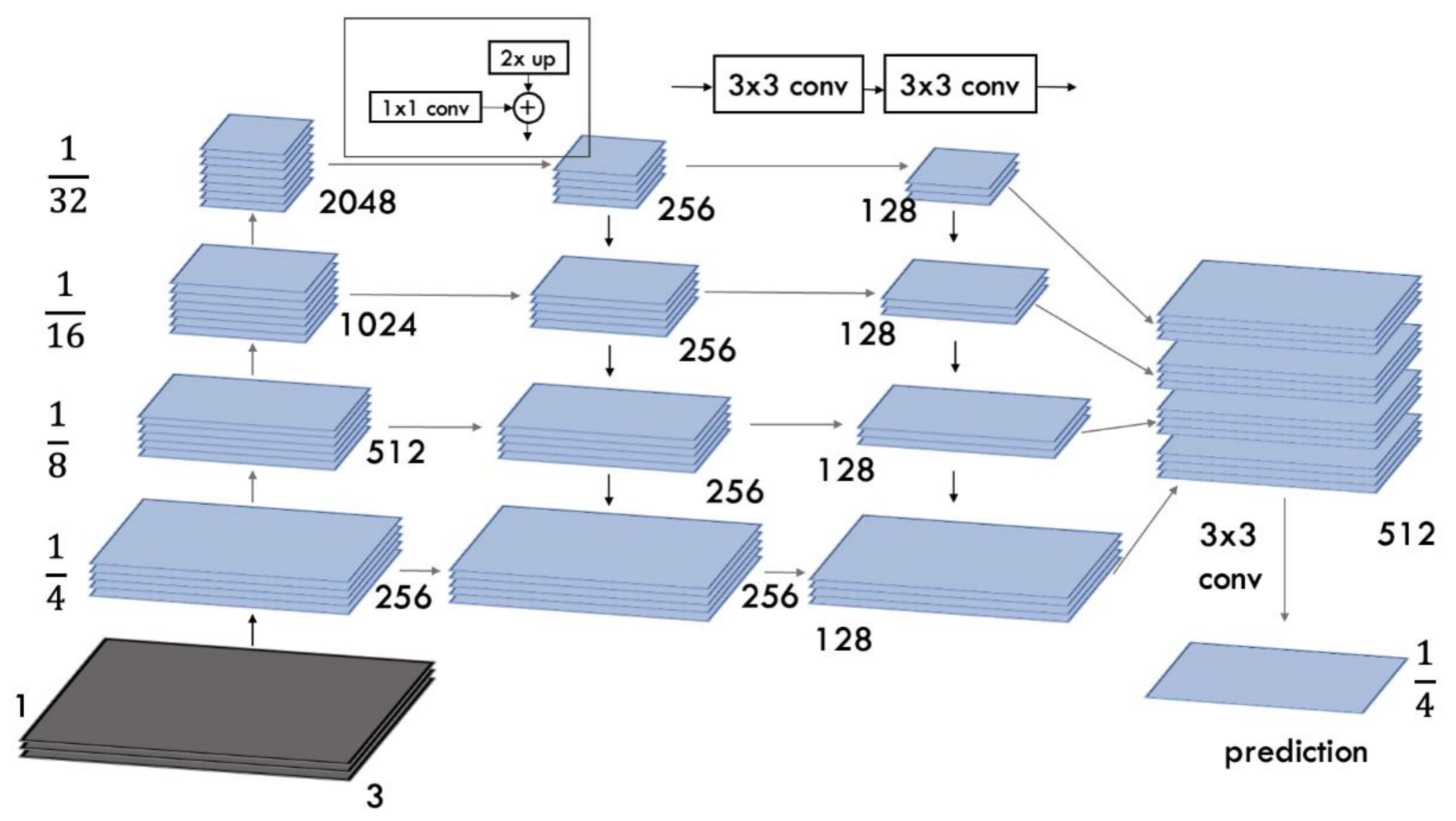

2.2.1. FPN

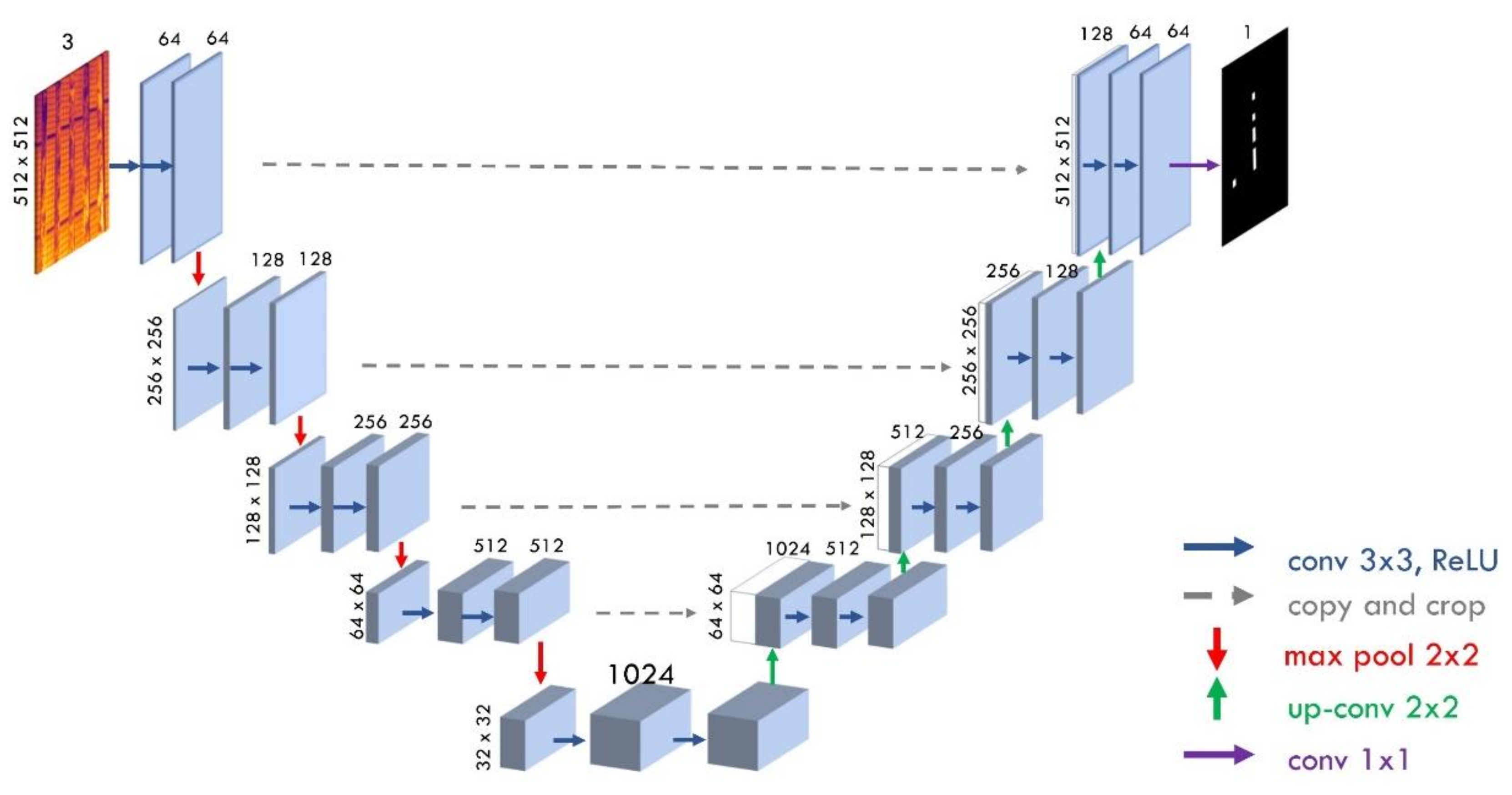

2.2.2. U-Net

2.2.3. DeepLabV3+

3. Results and Discussion

3.1. Performance Evaluation

3.2. Visualization Results of Solar Plant Fault Detection Experiments

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Zhou, D.; Zhou, T.; Tian, Y.; Zhu, X.; Tu, Y. Perovskite-based solar cells: Materials, methods, and future perspectives. J. Nanomater. 2018, 2018, 8148072. [Google Scholar] [CrossRef]

- Dhanraj, J.A.; Mostafaeipour, A.; Velmurugan, K.; Techato, K.; Chaurasiya, P.K.; Solomon, J.M.; Gopalan, A.; Phoungthong, K. An effective evaluation on fault detection in solar panels. Energies 2021, 14, 7770. [Google Scholar] [CrossRef]

- Köntges, M.; Kurtz, S.; Packard, C.E.; Jahn, U.; Berger, K.; Kato, K.; Friesen, T.; Liu, H.; Van Iseghem, M.; Wohlgemuth, J.; et al. Review of Failures of Photovoltaic Modules; Report EAI-PVPS T13-01:2014; International Energy Agency: Paris, France, 2014. [Google Scholar]

- Lee, D.H.; Park, J.H. Developing inspection methodology of solar energy plants by thermal infrared sensor on board unmanned aerial vehicles. Energies 2019, 12, 2928. [Google Scholar] [CrossRef] [Green Version]

- Shihavuddin, A.S.M.; Rashid, M.R.A.; Maruf, M.H.; Hasan, M.A.; ul Haq, M.A.; Ashique, R.H.; Al Mansur, A. Image based surface damage detection of renewable energy installations using a unified deep learning approach. Energy Rep. 2021, 7, 4566–4576. [Google Scholar] [CrossRef]

- Deitsch, S.; Christlein, V.; Berger, S.; Buerhop-Lutz, C.; Maier, A.; Gallwitz, F.; Riess, C. Automatic classification of defective photovoltaic module cells in electroluminescence images. Sol. Energy 2019, 185, 455–468. [Google Scholar] [CrossRef] [Green Version]

- Elmeseiry, N.; Alshaer, N.; Ismail, T. A detailed survey and future directions of unmanned aerial vehicles (uavs) with potential applications. Aerospace 2021, 8, 363. [Google Scholar] [CrossRef]

- An Overview of Semantic Image Segmentation. Available online: https://www.jeremyjordan.me/semantic-segmentation/ (accessed on 27 September 2021).

- Brostow, G.J.; Fauqueur, J.; Cipolla, R. Semantic object classes in video: A high-definition ground truth database. Pattern Recognit. Lett. 2009, 30, 88–97. [Google Scholar] [CrossRef]

- Lee, M.Y.; Bedia, J.S.; Bhate, S.S.; Barlow, G.L.; Phillips, D.; Fantl, W.J.; Nolan, G.P.; Schürch, C.M. CellSeg: A robust, pre-trained nucleus segmentation and pixel quantification software for highly multiplexed fluorescence images. BMC Bioinform. 2022, 23, 46. [Google Scholar] [CrossRef]

- Niu, Z.; Li, H. Research and analysis of threshold segmentation algorithms in image processing. In Journal of Physics: Conference Series; IOP Publishing: Bristol, UK, 2019; Volume 1237, p. 022122. [Google Scholar] [CrossRef]

- Sun, R.; Lei, T.; Chen, Q.; Wang, Z.; Du, X.; Zhao, W.; Nandi, A. Survey of Image Edge Detection. Front. Signal Process. 2022, 2, 826967. [Google Scholar] [CrossRef]

- Yi, F.; Moon, I. Image segmentation: A survey of graph-cut methods. In Proceedings of the 2012 International Conference on Systems and Informatics (ICSAI2012), Yantai, China, 19–20 May 2012; pp. 1936–1941. [Google Scholar]

- Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 3431–3440. [Google Scholar] [CrossRef]

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556. [Google Scholar] [CrossRef]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778. [Google Scholar] [CrossRef] [Green Version]

- Ronneberger, O.; Fischer, P.; Brox, T. U-net: Convolutional networks for biomedical image segmentation. In International Conference on Medical Image Computing and Computer-Assisted Intervention; Springer: Cham, Switzerland, 2015; pp. 234–241. [Google Scholar] [CrossRef] [Green Version]

- Chen, L.C.; Papandreou, G.; Kokkinos, I.; Murphy, K.; Yuille, A.L. Deeplab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected crfs. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 40, 834–848. [Google Scholar] [CrossRef] [Green Version]

- Berardone, I.; Garcia, J.L.; Paggi, M. Analysis of electroluminescence and infrared thermal images of monocrystalline silicon photovoltaic modules after 20 years of outdoor use in a solar vehicle. Sol. Energy 2018, 173, 478–486. [Google Scholar] [CrossRef]

- Tang, W.; Yang, Q.; Xiong, K.; Yan, W. Deep learning based automatic defect identification of photovoltaic module using electroluminescence images. Sol. Energy 2020, 201, 453–460. [Google Scholar] [CrossRef]

- Deitsch, S.; Buerhop-Lutz, C.; Sovetkin, E.; Steland, A.; Maier, A.; Gallwitz, F.; Riess, C. Segmentation of Photovoltaic Module Cells in Electroluminescence Images. arXiv 2018, arXiv:1806.06530. [Google Scholar] [CrossRef]

- Nie, J.; Luo, T.; Li, H. Automatic hotspots detection based on UAV infrared images for large-scale PV plant. Electron. Lett. 2020, 56, 993–995. [Google Scholar] [CrossRef]

- Cipriani, G.; D’Amico, A.; Guarino, S.; Manno, D.; Traverso, M.; Di Dio, V. Convolutional neural network for dust and hotspot classification in PV modules. Energies 2020, 13, 6357. [Google Scholar] [CrossRef]

- Akram, M.W.; Li, G.; Jin, Y.; Chen, X.; Zhu, C.; Zhao, X.; Aleem, M.; Ahmad, A. Improved outdoor thermography and processing of infrared images for defect detection in PV modules. Sol. Energy 2019, 190, 549–560. [Google Scholar] [CrossRef]

- Balasubramani, G.; Thangavelu, V.; Chinnusamy, M.; Subramaniam, U.; Padmanaban, S.; Mihet-Popa, L. Infrared thermography based defects testing of solar photovoltaic panel with fuzzy rule-based evaluation. Energies 2020, 13, 1343. [Google Scholar] [CrossRef] [Green Version]

- Seferbekov, S.; Iglovikov, V.; Buslaev, A.; Shvets, A. Feature pyramid network for multi-class land segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, Salt Lake City, UT, USA, 18–22 June 2018; pp. 272–275. [Google Scholar] [CrossRef]

- Chen, L.C.; Zhu, Y.; Papandreou, G.; Schroff, F.; Adam, H. Encoder-decoder with atrous separable convolution for semantic image segmentation. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 801–818. [Google Scholar] [CrossRef]

- Tan, M.; Le, Q. Efficientnet: Rethinking model scaling for convolutional neural networks. In Proceedings of the International Conference on Machine Learning, Long Beach, CA, USA, 9–15 June 2019; pp. 6105–6114. [Google Scholar] [CrossRef]

- Xie, S.; Girshick, R.; Dollár, P.; Tu, Z.; He, K. Aggregated residual transformations for deep neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 1492–1500. [Google Scholar] [CrossRef]

- Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L.C. Mobilenetv2: Inverted residuals and linear bottlenecks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 4510–4520. [Google Scholar] [CrossRef]

- Zhao, W. Research on the deep learning of the small sample data based on transfer learning. In AIP Conference Proceedings; AIP Publishing LLC: Guangzhou, China, 2017; Volume 1864, p. 020018. [Google Scholar]

- Pierdicca, R.; Paolanti, M.; Felicetti, A.; Piccinini, F.; Zingaretti, P. Automatic Faults Detection of Photovoltaic Farms: solAIr, a Deep Learning-Based System for Thermal Images. Energies 2020, 13, 6496. [Google Scholar] [CrossRef]

- The Drone Life. Available online: https://thedronelifenj.com/ (accessed on 12 March 2022).

- Buslaev, A.; Iglovikov, V.I.; Khvedchenya, E.; Parinov, A.; Druzhinin, M.; Kalinin, A.A. Albumentations: Fast and flexible image augmentations. Information 2020, 11, 125. [Google Scholar] [CrossRef] [Green Version]

- Northcutt, C.G.; Athalye, A.; Mueller, J. Pervasive label errors in test sets destabilize machine learning benchmarks. arXiv 2021, arXiv:2103.14749. [Google Scholar] [CrossRef]

- Russell, B.C.; Torralba, A.; Murphy, K.P.; Freeman, W.T. LabelMe: A database and web-based tool for image annotation. Int. J. Comput. Vis. 2008, 77, 157–173. [Google Scholar] [CrossRef]

- Dong, R.; Pan, X.; Li, F. DenseU-net-based semantic segmentation of small objects in urban remote sensing images. IEEE Access 2019, 7, 65347–65356. [Google Scholar] [CrossRef]

- Ioffe, S.; Szegedy, C. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In Proceedings of the International Conference on Machine Learning, Lille, France, 6–11 July 2015; pp. 448–456. [Google Scholar] [CrossRef]

- Nair, V.; Hinton, G.E. Rectified Linear Units Improve Restricted Boltzmann Machines. In Proceedings of the 27th International Conference on Machine Learning, Haifa, Israel, 21 June 2010; pp. 807–814. [Google Scholar]

- Chen, L.C.; Papandreou, G.; Schroff, F.; Adam, H. Rethinking atrous convolution for semantic image segmentation. arXiv 2017, arXiv:1706.05587. [Google Scholar] [CrossRef]

- Sudre, C.H.; Li, W.; Vercauteren, T.; Ourselin, S.; Jorge Cardoso, M. Generalised dice overlap as a deep learning loss function for highly unbalanced segmentations. In Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Support; Springer: Cham, Switzerland, 2017; pp. 240–248. [Google Scholar] [CrossRef] [Green Version]

| Overview | Description |

|---|---|

| FPA | 640 × 512 |

| Scene Range (high gain) | −25° to 135 °C |

| Scene Range (low gain) | −40° to 550 °C |

| Image Format | JPEG, TIFF, R-JPEG |

| Weight | Approx. 4.69 kg (with two TB55 batteries) |

| Max Takeoff Weight | 6.14 kg |

| Max Payload | 1.45 kg |

| Operating Temperature | −4° to 122 °F (−20° to 50 °C) |

| Max Flight Time (with two TB55 batteries) | 38 min (no payload), 24 min (takeoff weight: 6.14 kg) |

| Overview | Description |

|---|---|

| Number of images | 1153 |

| Image resolution | 640 × 512 |

| Type of use | Solar plant |

| Image format | JPG |

| Number of images with two and more defective cells | 313 |

| Dataset Split | 8.1.1 |

| Configuration and Hyperparameters | Description |

|---|---|

| input_size | 512 × 512 |

| backbone | resnet-50, resnext-101, efficientnet-b3, mobilenet-v2 |

| used_pretrained_model | ImageNet |

| algorithm | fpn: Feature Pyramid Network deeplab: DeepLabV3+ unet: U-Net |

| num_classes | 2: defected cell and background |

| optimizer | Adam |

| activation | “sigmoid” |

| learning_rate | 0.001 |

| batch_size | 8 |

| normalization_mean | [0.485, 0.456, 0.406] |

| normalization_std | [0.229, 0.224, 0.225] |

| loss function | DiceLoss |

| Model | IoU Score | Dice Score | Fscore | Precision | Recall | Accuracy |

|---|---|---|---|---|---|---|

| FPN | ||||||

| Resnet50 | 0.7981 | 0.8842 | 0.9436 | 0.9982 | 0.8947 | 0.9984 |

| Resnext50 | 0.8162 | 0.8906 | 0.9545 | 0.9995 | 0.9134 | 0.9999 |

| Efficientnet-b3 | 0.8472 | 0.9233 | 0.9567 | 0.9959 | 0.9205 | 0.9963 |

| MobilenetV2 | 0.8064 | 0.8827 | 0.9398 | 0.9977 | 0.8883 | 0.9987 |

| DeepLabV3+ | ||||||

| Resnet50 | 0.7470 | 0.8421 | 0.9017 | 0.9977 | 0.8226 | 0.9993 |

| Resnext50 | 0.7851 | 0.8692 | 0.9342 | 0.9998 | 0.8766 | 0.8766 |

| Efficientnet-b3 | 0.7867 | 0.8864 | 0.9367 | 0.9786 | 0.8983 | 0.999 |

| MobilenetV2 | 0.7788 | 0.8735 | 0.9321 | 0.9954 | 0.8764 | 0.9975 |

| U-Net | ||||||

| Resnet50 | 0.7971 | 0.8819 | 0.9364 | 0.9851 | 0.8923 | 0.9923 |

| Resnext50 | 0.7878 | 0.8527 | 0.9438 | 0.9989 | 0.8945 | 0.9945 |

| Efficientnet-b3 | 0.8571 | 0.9378 | 0.9680 | 0.9980 | 0.9398 | 0.9992 |

| MobilenetV2 | 0.855 | 0.9368 | 0.9665 | 0.9936 | 0.9409 | 0.9979 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Jumaboev, S.; Jurakuziev, D.; Lee, M. Photovoltaics Plant Fault Detection Using Deep Learning Techniques. Remote Sens. 2022, 14, 3728. https://doi.org/10.3390/rs14153728

Jumaboev S, Jurakuziev D, Lee M. Photovoltaics Plant Fault Detection Using Deep Learning Techniques. Remote Sensing. 2022; 14(15):3728. https://doi.org/10.3390/rs14153728

Chicago/Turabian StyleJumaboev, Sherozbek, Dadajon Jurakuziev, and Malrey Lee. 2022. "Photovoltaics Plant Fault Detection Using Deep Learning Techniques" Remote Sensing 14, no. 15: 3728. https://doi.org/10.3390/rs14153728

APA StyleJumaboev, S., Jurakuziev, D., & Lee, M. (2022). Photovoltaics Plant Fault Detection Using Deep Learning Techniques. Remote Sensing, 14(15), 3728. https://doi.org/10.3390/rs14153728