A Review of Multi-Sensor Fusion SLAM Systems Based on 3D LIDAR

Abstract

1. Introduction

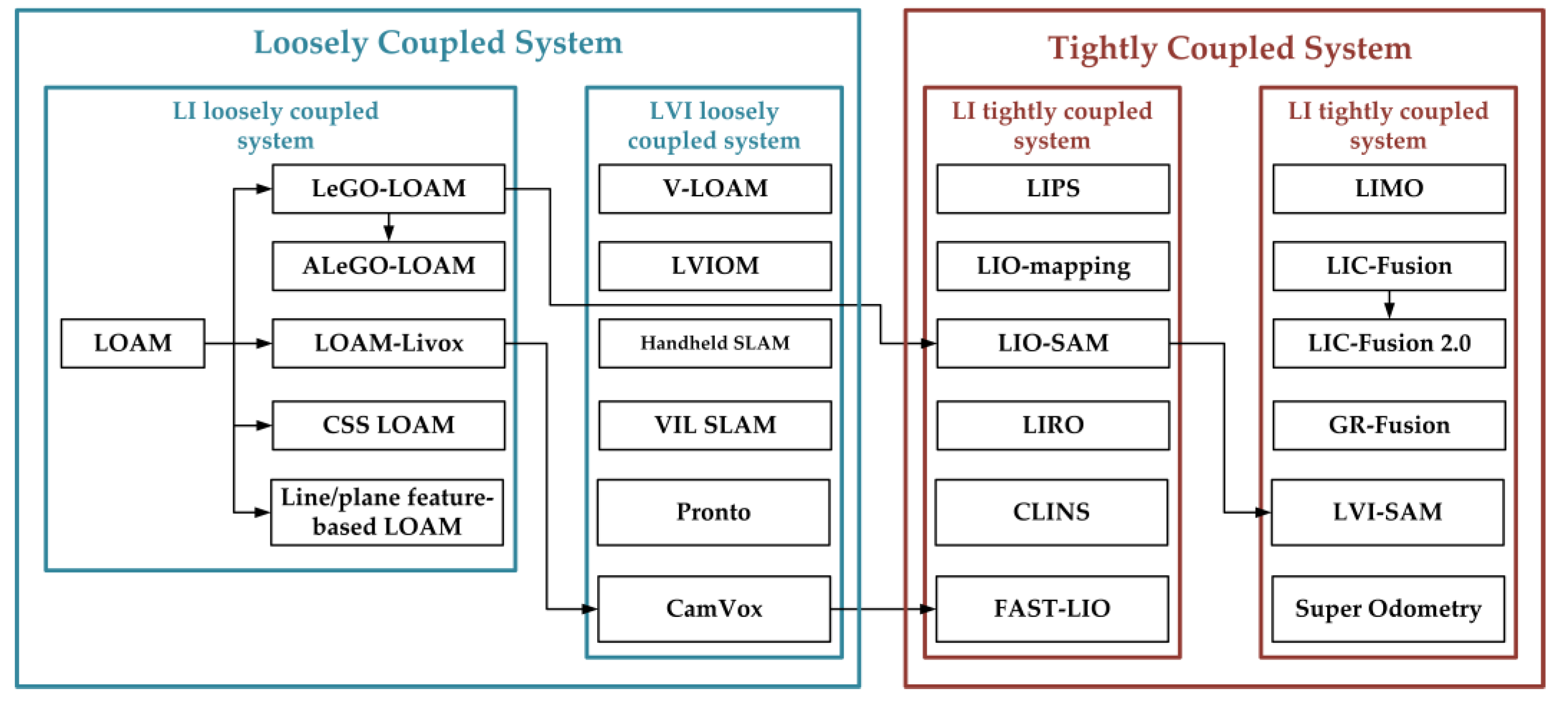

- Recent multi-sensor fusion SLAM systems are categorized and summarized by the types of sensors they fuse and the way their data are coupled.

- This work traces the development of multi-sensor fusion positioning and reviews both loosely coupled and tightly coupled systems, to help readers better understand the development and latest progress of multi-sensor fusion SLAM.

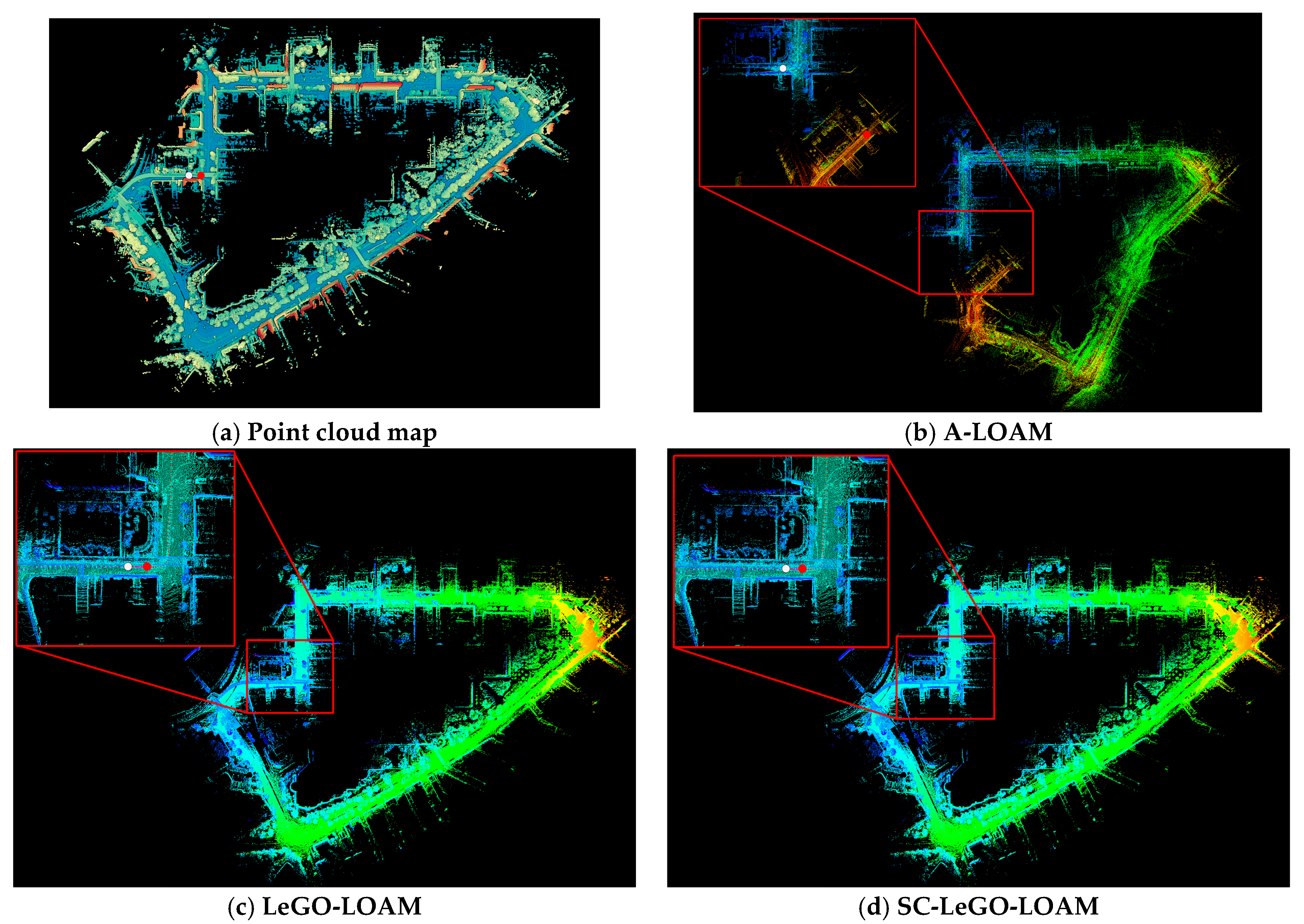

- This paper reviews some SLAM datasets and compares the performance of five open-source algorithms using the UrbanNav dataset.

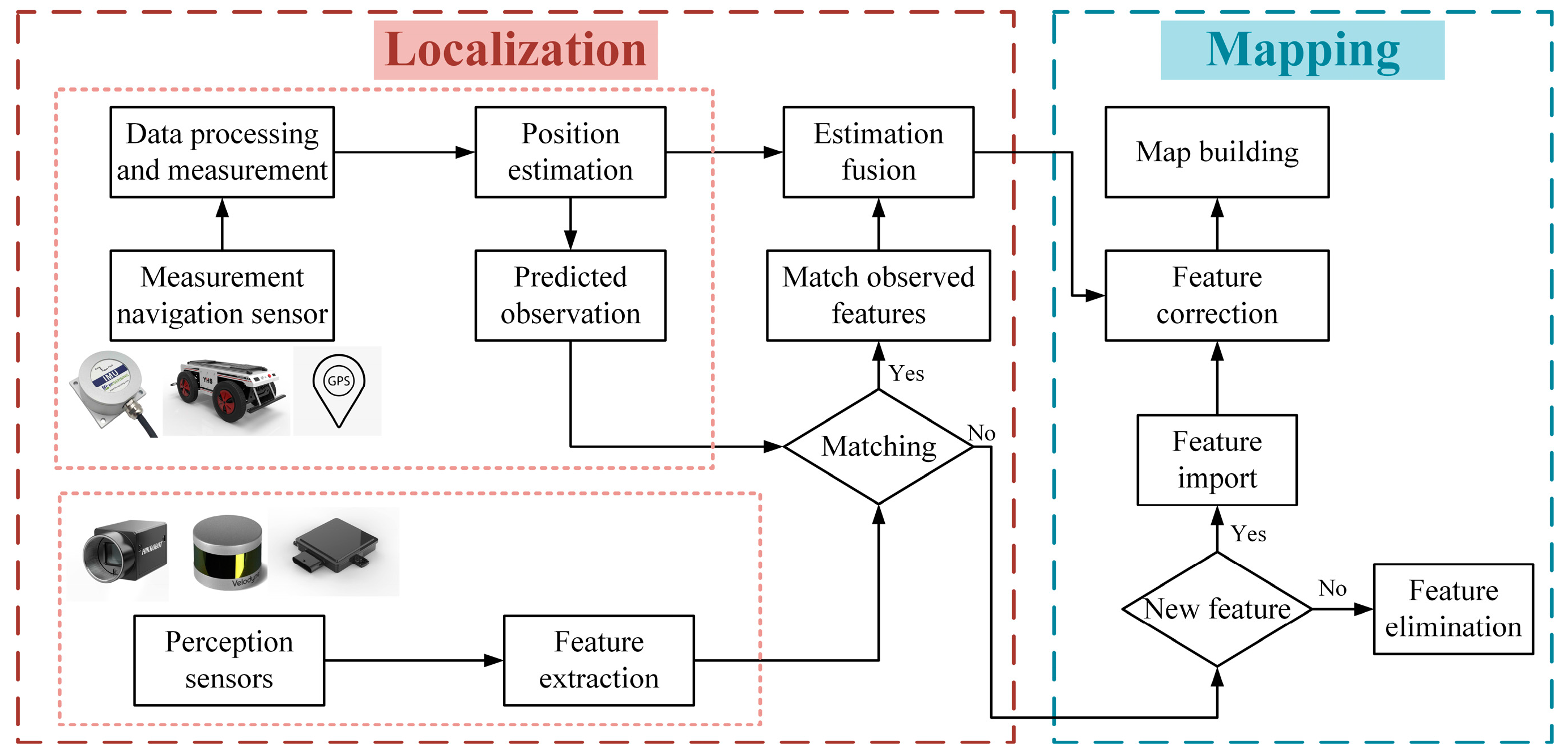

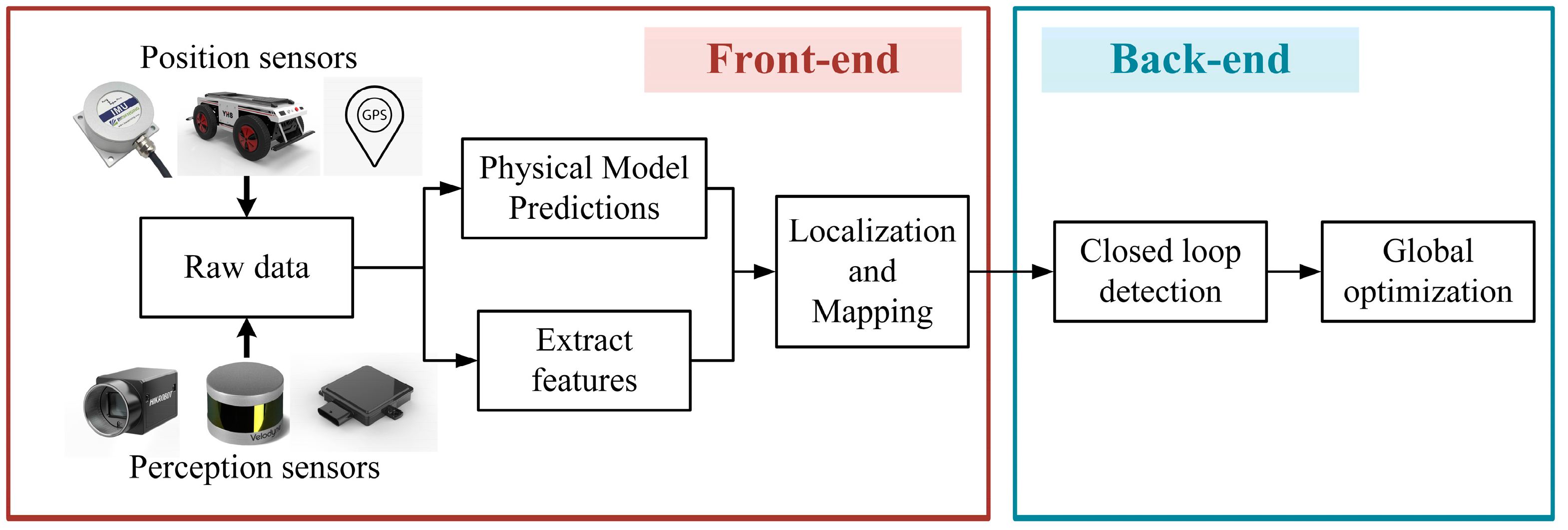

2. Simultaneous Localization and Mapping System

3. Multi-Sensor Loosely Coupled System Based on LIDAR

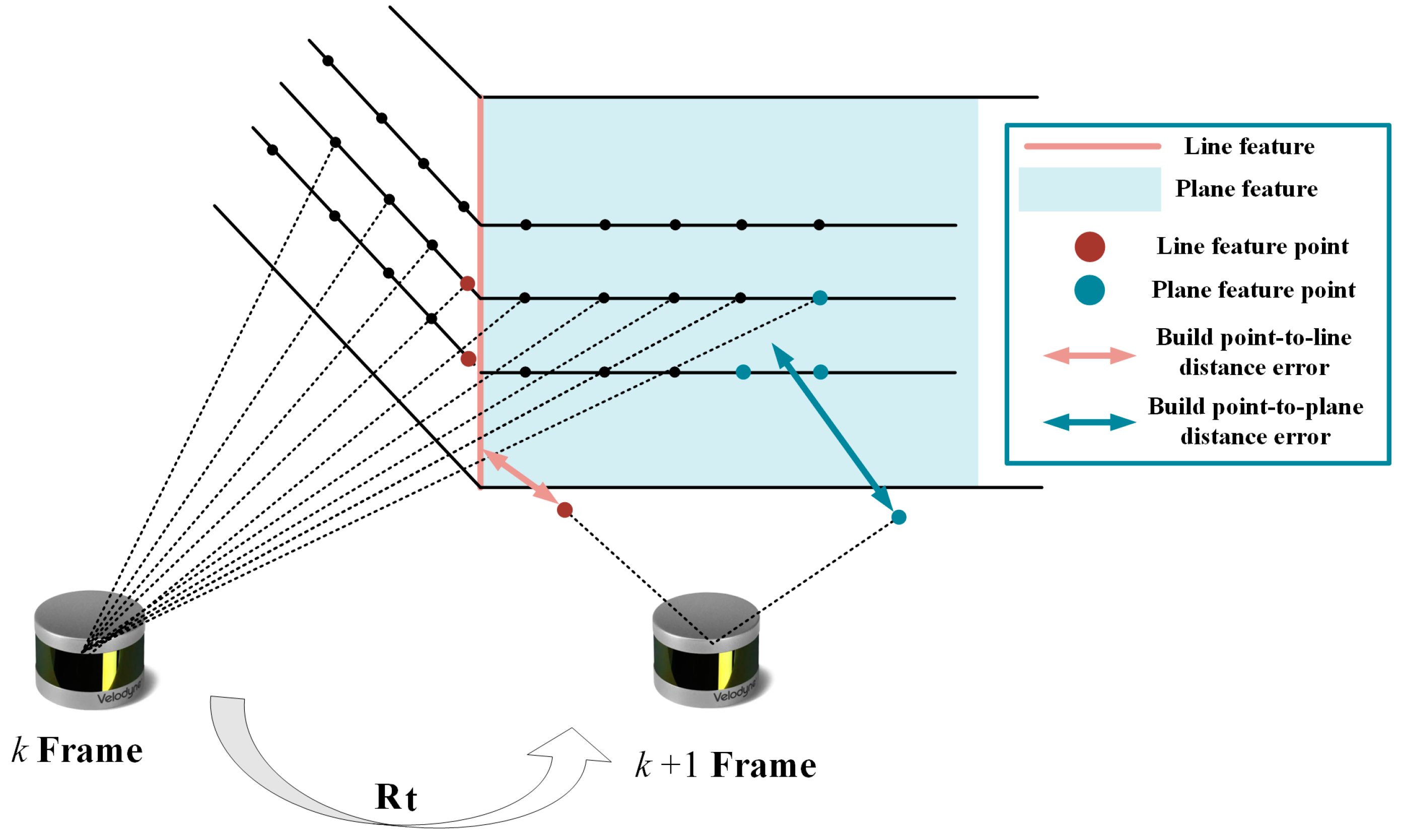

3.1. LIDAR-IMU Loosely Coupled System

3.2. LIDAR-Visual-IMU Loosely Coupled System

4. Multi-Sensor Tightly Coupled System Based on LIDAR

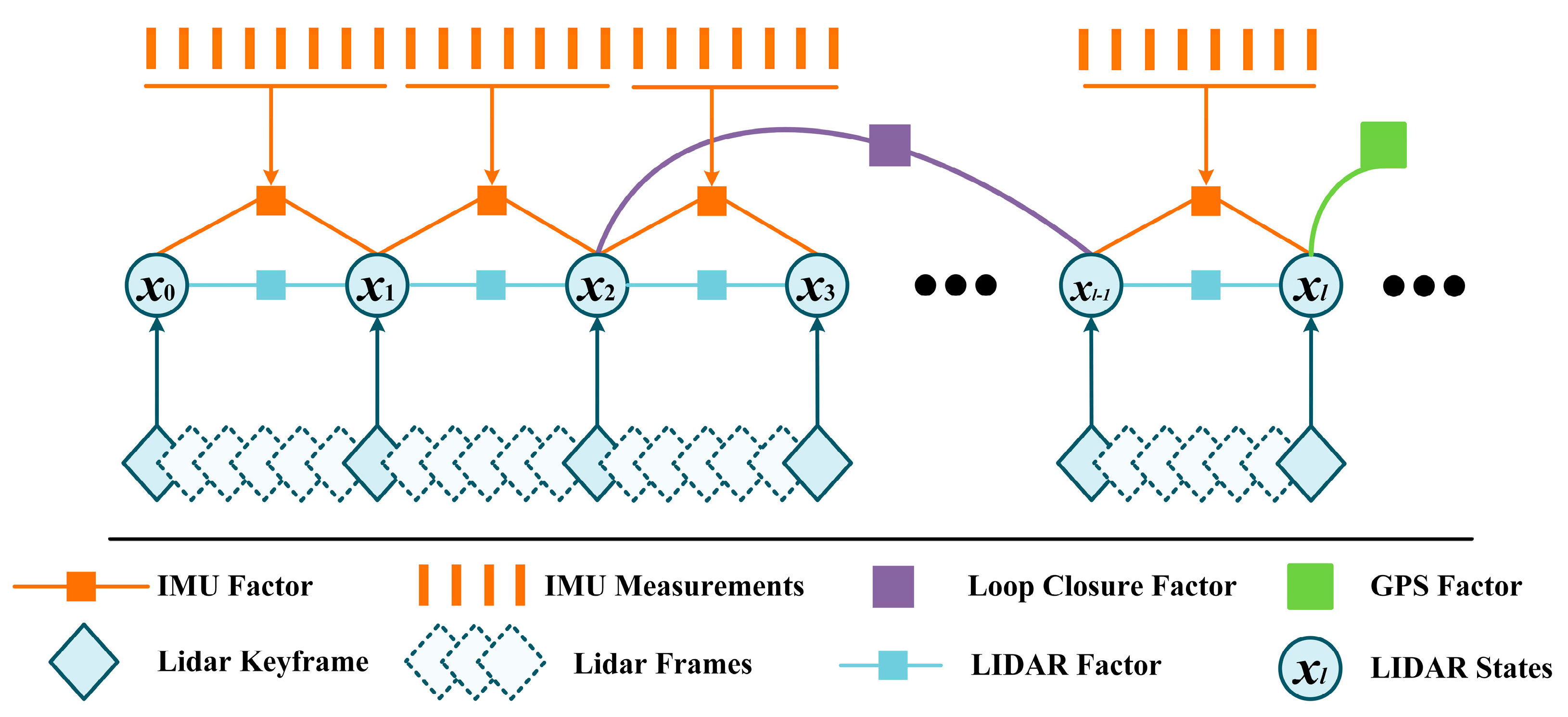

4.1. LIDAR-IMU Tightly Coupled System

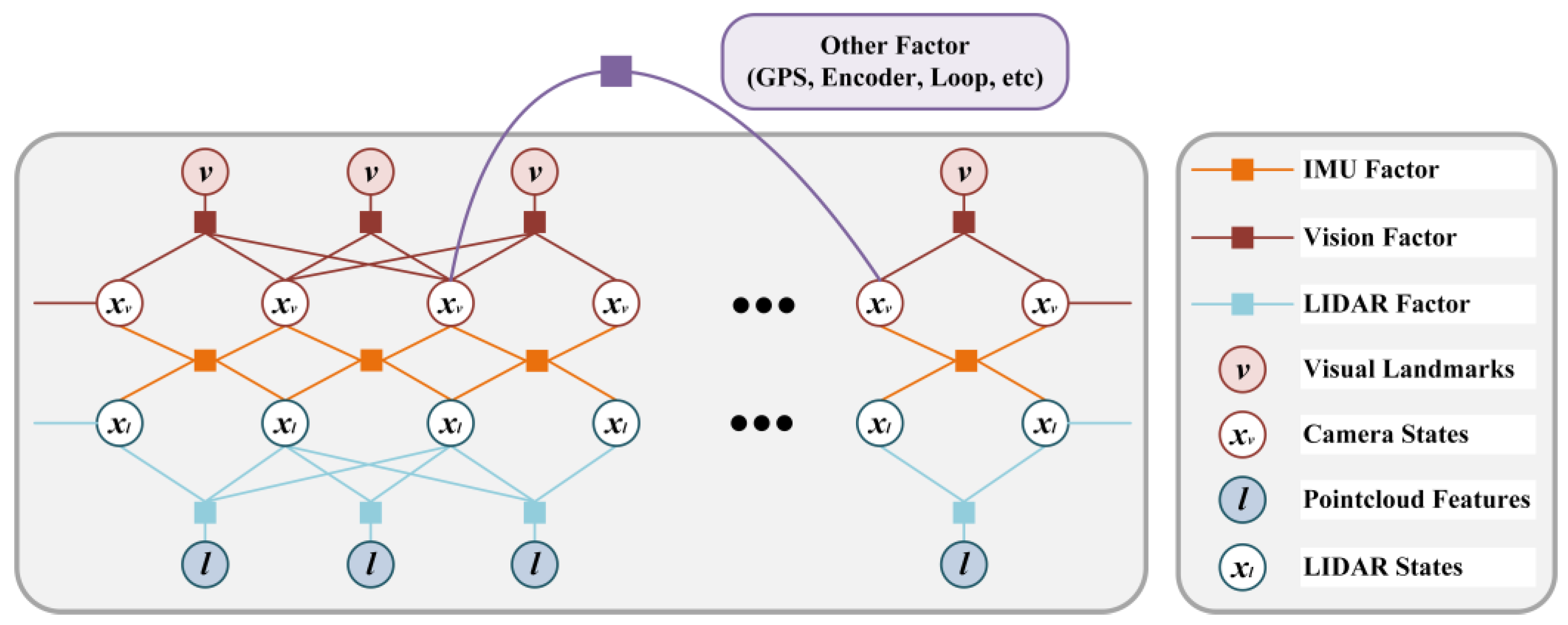

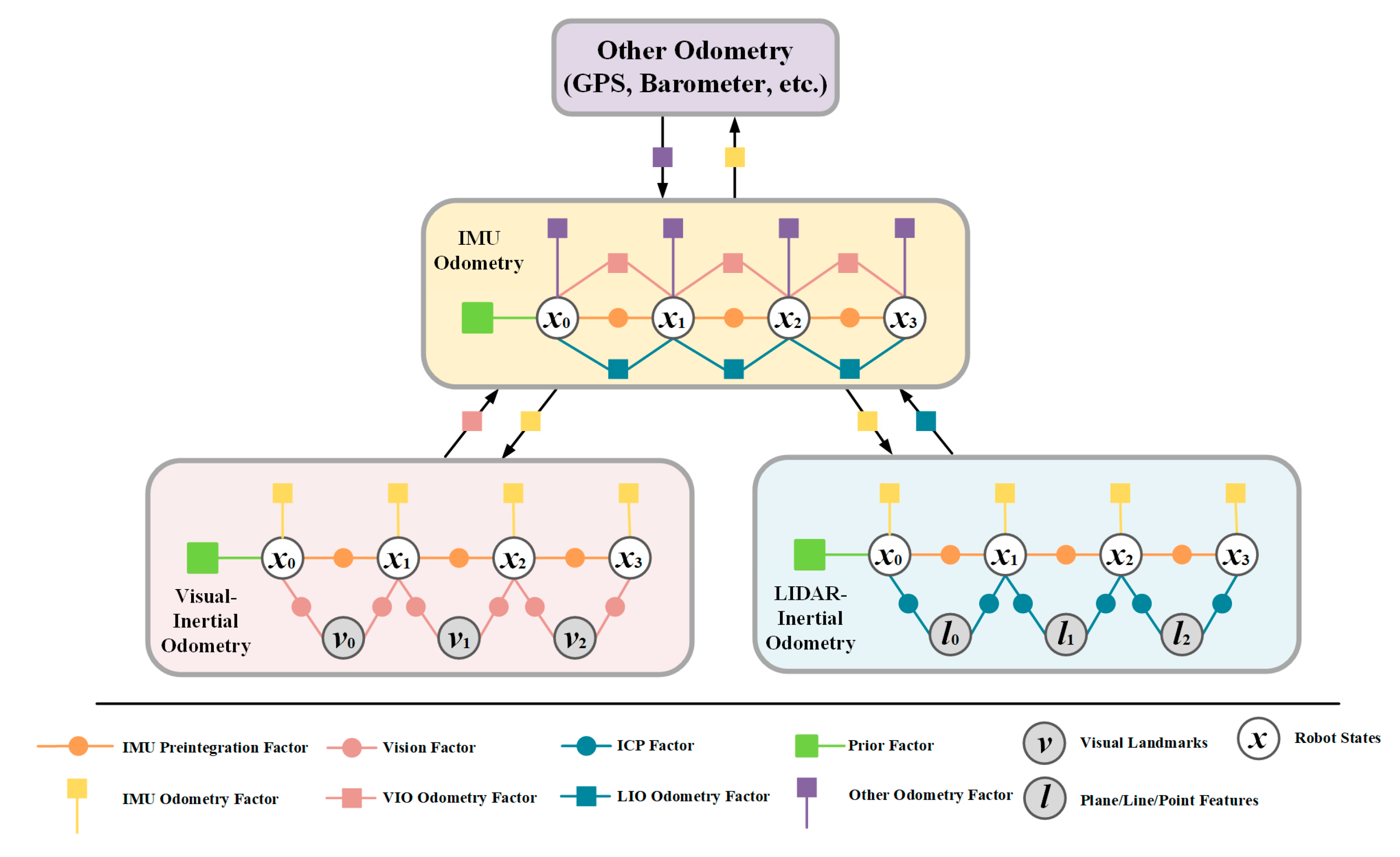

4.2. LIDAR-Visual-IMU Tightly Coupled System

5. Performance Evaluation

5.1. SLAM Datasets

5.2. Performance Comparison

6. Conclusions and Future Outlook

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Montemerlo, M.; Becker, J.; Bhat, S.; Dahlkamp, H.; Dolgov, D.; Ettinger, S.; Haehnel, D.; Hilden, T.; Hoffmann, G.; Huhnke, B.; et al. Junior: The Stanford Entry in the Urban Challenge. J. Field Robot. 2008, 25, 569–597.

- Levinson, J.; Askeland, J.; Becker, J.; Dolson, J.; Held, D.; Kammel, S.; Kolter, J.Z.; Langer, D.; Pink, O.; Pratt, V.; et al. Towards Fully Autonomous Driving: Systems and Algorithms. In Proceedings of the 2011 IEEE Intelligent Vehicles Symposium (IV), Baden-Baden, Germany, 5–9 June 2011; pp. 163–168.

- He, X.; Gao, W.; Sheng, C.Z.; Zhang, Z.T.; Pan, S.G.; Duan, L.J.; Zhang, H.; Lu, X.Y. LiDAR-Visual-Inertial Odometry Based on Optimized Visual Point-Line Features. Remote Sens. 2022, 14, 662.

- Tee, Y.K.; Han, Y.C. Lidar-Based 2D SLAM for Mobile Robot in an Indoor Environment: A Review. In Proceedings of the 2021 International Conference on Green Energy, Computing and Sustainable Technology (GECOST), Miri, Malaysia, 7–9 July 2021; pp. 1–7.

- Bresson, G.; Alsayed, Z.; Yu, L.; Glaser, S. Simultaneous Localization and Mapping: A Survey of Current Trends in Autonomous Driving. IEEE Trans. Intell. Vehic. 2017, 2, 194–220.

- Cadena, C.; Carlone, L.; Carrillo, H.; Latif, Y.; Scaramuzza, D.; Neira, J.; Reid, I.; Leonard, J.J. Past, Present, and Future of Simultaneous Localization and Mapping: Toward the Robust-Perception Age. IEEE Trans. Robot. 2016, 32, 1309–1332.

- Debeunne, C.; Vivet, D. A Review of Visual-LiDAR Fusion Based Simultaneous Localization and Mapping. Sensors 2020, 20, 2068.

- Taheri, H.; Xia, Z.C. SLAM; Definition and Evolution. Eng. Appl. Artif. Intell. 2021, 97, 104032.

- Zhiguo, Z.; Jiangwei, C.; Shunfan, D. Overview of 3D Lidar SLAM Algorithms. Chin. J. Sci. Instrum. 2021, 42, 13–27.

- Leonard, J.J.; Durrant-Whyte, H.F. Directed Sonar Sensing for Mobile Robot Navigation; Kluwer Academic Publishers: Norwood, MA, USA, 1992.

- Zhang, J.; Singh, S. LOAM: Lidar Odometry and Mapping in Real-Time. In Proceedings of the Robotics: Science and Systems Conference (RSS), Berkeley, CA, USA, 12–14 July 2014.

- Shan, T.; Englot, B. LeGO-LOAM: Lightweight and Ground-Optimized Lidar Odometry and Mapping on Variable Terrain. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 4758–4765.

- Zermas, D.; Izzat, I.; Papanikolopoulos, N. Fast Segmentation of 3D Point Clouds: A Paradigm on LiDAR Data for Autonomous Vehicle Applications. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 5067–5073.

- Huo, X.; Dou, L.; Lu, H.; Tian, B.; Du, M. A Line/Plane Feature-Based Lidar Inertial Odometry and Mapping. In Proceedings of the 2019 Chinese Control Conference (CCC), Guangzhou, China, 27–30 July 2019; pp. 4377–4382.

- Zhang, S.; Xiao, L.; Nie, Y.; Dai, B.; Hu, C. Lidar Odometry and Mapping Based on Two-Stage Feature Extraction. In Proceedings of the 2020 39th Chinese Control Conference (CCC), Shenyang, China, 27–29 July 2020; pp. 3966–3971.

- Gonzalez, C.; Adams, M. An Improved Feature Extractor for the Lidar Odometry and Mapping (LOAM) Algorithm. In Proceedings of the 2019 International Conference on Control, Automation and Information Sciences (ICCAIS), Chengdu, China, 23–26 October 2019; pp. 1–7.

- Lee, S.-W.; Hsu, C.-M.; Lee, M.-C.; Fu, Y.-T.; Atas, F.; Tsai, A. Fast Point Cloud Feature Extraction for Real-Time SLAM. In Proceedings of the 2019 International Automatic Control Conference (CACS), Keelung, Taiwan, 13–16 November 2019; pp. 1–6.

- Yokozuka, M.; Koide, K.; Oishi, S.; Banno, A. LiTAMIN2: Ultra Light LiDAR-Based SLAM Using Geometric Approximation Applied with KL-Divergence. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 11619–11625.

- Behley, J.; Stachniss, C. Efficient Surfel-Based SLAM Using 3D Laser Range Data in Urban Environments. In Proceedings of the 14th Conference on Robotics-Science and Systems (RSS), Pittsburgh, PA, USA, 26–30 June 2018.

- Park, C.; Moghadam, P.; Kim, S.; Elfes, A.; Fookes, C.; Sridharan, S. Elastic LiDAR Fusion: Dense Map-Centric Continuous-Time SLAM. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, QLD, Australia, 21–25 May 2018; pp. 1206–1213.

- Droeschel, D.; Behnke, S. Efficient Continuous-Time SLAM for 3D Lidar-Based Online Mapping. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, QLD, Australia, 21–25 May 2018; pp. 5000–5007.

- Pfister, H.; Zwicker, M.; van Baar, J.; Gross, M. Surfels: Surface Elements as Rendering Primitives. In Proceedings of the Computer Graphics Annual Conference, New Orleans, LA, USA, 23–28 July 2000; pp. 335–342.

- Pan, Y.; Xiao, P.C.A.; He, Y.J.; Shao, Z.L.; Li, Z.S. MULLS: Versatile LiDAR SLAM via Multi-Metric Linear Least Square. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 11633–11640.

- Kim, G.; Kim, A.; Kosecka, J. Scan Context: Egocentric Spatial Descriptor for Place Recognition within 3D Point Cloud Map. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 4802–4809.

- Wang, H.; Wang, C.; Xie, L.H. Intensity Scan Context: Coding Intensity and Geometry Relations for Loop Closure Detection. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 2095–2101.

- Lin, J.; Zhang, F. A Fast, Complete, Point Cloud Based Loop Closure for LiDAR Odometry and Mapping. arXiv 2019.

- Lin, J.; Zhang, F. Loam Livox: A Fast, Robust, High-Precision LiDAR Odometry and Mapping Package for LiDARs of Small FoV. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 3126–3131.

- Zhang, J.; Singh, S. Visual-Lidar Odometry and Mapping: Low-Drift, Robust, and Fast. In Proceedings of the 2015 IEEE International Conference on Robotics and Automation (ICRA), Seattle, WA, USA, 26–30 May 2015; pp. 2174–2181.

- Zhang, J.; Singh, S. Laser-Visual-Inertial Odometry and Mapping with High Robustness and Low Drift. J. Field Robot. 2018, 35, 1242–1264.

- Caselitz, T.; Steder, B.; Ruhnke, M.; Burgard, W. Monocular Camera Localization in 3D LiDAR Maps. In Proceedings of the 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Daejeon, Korea, 9–14 October 2016; pp. 1926–1931.

- Zhang, L.; Xu, X.; Cao, C.; He, J.; Ran, Y.; Tan, Z.; Luo, M. Robot Pose Estimation Method Based on Image and Point Cloud Fusion with Dynamic Feature Elimination. Chin. J. Lasers 2022, 49, 0610001.

- Qin, T.; Li, P.; Shen, S. VINS-Mono: A Robust and Versatile Monocular Visual-Inertial State Estimator. IEEE Trans. Robot. 2018, 34, 1004–1020.

- Zhang, M.; Han, S.; Wang, S.; Liu, X.; Hu, M.; Zhao, J. Stereo Visual Inertial Mapping Algorithm for Autonomous Mobile Robot. In Proceedings of the 2020 3rd International Conference on Intelligent Robotic and Control Engineering (IRCE), Oxford, UK, 10–12 August 2020; pp. 97–104.

- Qin, T.; Cao, S.; Pan, J.; Shen, S. A General Optimization-Based Framework for Global Pose Estimation with Multiple Sensors. arXiv 2019.

- Wang, Z.; Zhang, J.; Chen, S. Robust High Accuracy Visual-Inertial-Laser SLAM System. In Proceedings of the 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Macau, China, 4–8 November 2019; pp. 6636–6641.

- Shao, W.; Vijayarangan, S.; Li, C.; Kantor, G. Stereo Visual Inertial LiDAR Simultaneous Localization and Mapping. In Proceedings of the 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Macau, China, 4–8 November 2019; pp. 370–377.

- Kaess, M.; Ranganathan, A.; Dellaert, F. iSAM: Incremental Smoothing and Mapping. IEEE Trans. Robot. 2008, 24, 1365–1378.

- Kaess, M.; Johannsson, H.; Roberts, R. iSAM2: Incremental Smoothing and Mapping with Fluid Relinearization and Incremental Variable Reordering. In Proceedings of the 2011 IEEE International Conference on Robotics and Automation (ICRA), Shanghai, China, 9–13 May 2011; pp. 3281–3288.

- Khattak, S.; Nguyen, H.D.; Mascarich, F. Complementary Multi-Modal Sensor Fusion for Resilient Robot Pose Estimation in Subterranean Environments. In Proceedings of the 2020 International Conference on Unmanned Aircraft Systems (ICUAS), Athens, Greece, 1–4 September 2020; pp. 1024–1029.

- Camurri, M.; Ramezani, M.; Nobili, S.; Fallon, M. Pronto: A Multi-Sensor State Estimator for Legged Robots in Real-World Scenarios. Front. Robot. AI 2020, 7, 18.

- Lowe, T.; Kim, S.; Cox, M. Complementary Perception for Handheld SLAM. IEEE Robot. Autom. Lett. 2018, 3, 1104–1111.

- Zhu, Y.; Zheng, C.; Yuan, C. CamVox: A Low-cost and Accurate Lidar-assisted Visual SLAM System. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 5049–5055.

- Mur-Artal, R.; Tardós, J.D. ORB-SLAM2: An Open-Source SLAM System for Monocular, Stereo, and RGB-D Cameras. IEEE Trans. Robot. 2017, 33, 1255–1262.

- Shin, Y.; Park, Y.; Kim, A. Direct Visual SLAM Using Sparse Depth for Camera-LiDAR System. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, QLD, Australia, 21–25 May 2018; pp. 5144–5151.

- Engel, J.; Koltun, V.; Cremers, D. Direct Sparse Odometry. IEEE Trans. Pattern Anal. Mach. Intell. 2016, 40, 611–625.

- Reinke, A.; Chen, X.; Stachniss, C. Simple but Effective Redundant Odometry for Autonomous Vehicles. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 9631–9637.

- Segal, A.; Haehnel, D.; Thrun, S. Generalized-ICP. In Proceedings of the Robotics: Science and Systems V (RSS), Seattle, WA, USA, 28 June–1 July 2009.

- Rusinkiewicz, S.; Levoy, M. Efficient variants of the ICP algorithm. In Proceedings of the Third International Conference on 3-D Digital Imaging and Modeling, Quebec, QC, Canada, 28 May–1 June 2001; pp. 145–152.

- Biber, P.; Strasser, W. The normal distributions transform: A new approach to laser scan matching. In Proceedings of the 2003 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Las Vegas, NV, USA, 27–31 October 2003; pp. 2743–2748.

- Park, J.; Zhou, Q.Y.; Koltun, V. Colored Point Cloud Registration Revisited. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 143–152.

- Huang, K.; Stachniss, C. Joint ego-motion estimation using a laser scanner and a monocular Camera through relative orientation estimation and 1-DoF ICP. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 671–677.

- Wang, P.; Fang, Z.; Zhao, S. Vanishing Point Aided LiDAR-Visual-Inertial Estimator. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 13120–13126.

- Forster, C.; Carlone, L.; Dellaert, F.; Scaramuzza, D. IMU Preintegration on Manifold for Efficient Visual-Inertial Maximum-a-Posteriori Estimation. In Proceedings of the 2015 Robotics Science and Systems (RSS), Rome, Italy, 17 July 2015.

- Forster, C.; Carlone, L.; Dellaert, F. On-Manifold Preintegration for Real-Time Visual-Inertial Odometry. IEEE Trans. Robot. 2017, 33, 1–21.

- Geneva, P.; Eckenhoff, K.; Yang, Y. LIPS: LiDAR-Inertial 3D Plane SLAM. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 123–130.

- Gentil, C.L.; Vidal-Calleja, T.; Huang, S. IN2LAMA: INertial Lidar Localisation And Mapping. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 6388–6394.

- Ye, H.; Chen, Y.; Liu, M. Tightly Coupled 3D Lidar Inertial Odometry and Mapping. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 3144–3150.

- Hess, W.; Kohler, D.; Rapp, H. Real-time loop closure in 2D LIDAR SLAM. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), Stockholm, Sweden, 16–21 May 2016; pp. 4322–4328.

- Ding, W.; Hou, S.; Gao, H. LiDAR Inertial Odometry Aided Robust LiDAR Localization System in Changing City Scenes. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 1271–1278.

- Ceres Solver. Available online: http://ceres-solver.org (accessed on 4 May 2022).

- Shan, T.; Englot, B.; Meyers, D.; Wang, W.; Ratti, C.; Rus, D. LIO-SAM: Tightly-Coupled Lidar Inertial Odometry via Smoothing and Mapping. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Las Vegas, NV, USA, 24 October–24 January 2021; pp. 5135–5142.

- Moore, T.; Stouch, D. A Generalized Extended Kalman Filter Implementation for the Robot Operating System. In Proceedings of the 13th International Conference on Intelligent Autonomous Systems (IAS), Padova, Italy, 15–18 July 2016; pp. 335–348.

- Nguyen, T.-M.; Cao, M.; Yuan, S.; Lyu, Y.; Nguyen, T.H.; Xie, L. LIRO: Tightly Coupled Lidar-Inertia-Ranging Odometry. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 14484–14490.

- Chen, W.; Zhao, H.; Shen, Q.; Xiong, C.; Zhou, S.; Liu, Y.-H. Inertial Aided 3D LiDAR SLAM with Hybrid Geometric Primitives in Large-Scale Environments. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 11566–11572.

- Li, W.; Hu, Y.; Han, Y.; Li, X. KFS-LIO: Key-Feature Selection for Lightweight Lidar Inertial Odometry. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 5042–5048.

- Lv, J.; Hu, K.; Xu, J.; Liu, Y.; Ma, X.; Zuo, X. CLINS: Continuous-Time Trajectory Estimation for LiDAR-Inertial System. In Proceedings of the 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, 27 September–1 October 2021; pp. 6657–6663.

- RF-LIO: Removal-First Tightly-Coupled Lidar Inertial Odometry in High Dynamic Environments. In Proceedings of the 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, 27 September–1 October 2021; pp. 4421–4428.

- Xu, W.; Zhang, F. FAST-LIO: A Fast, Robust LiDAR-Inertial Odometry Package by Tightly-Coupled Iterated Kalman Filter. IEEE Robot. Autom. Lett. 2021, 6, 3317–3324.

- Graeter, J.; Wilczynski, A.; Lauer, M. LIMO: Lidar-Monocular Visual Odometry. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 7872–7879.

- Szeliski, R. Computer Vision: Algorithms and Applications; Springer-Verlag: London, UK, 2010.

- Hartley, R.; Zisserman, A. Multiple View Geometry in Computer Vision, 2nd ed.; Cambridge University Press: Cambridge, UK, 2004.

- Huang, S.-S.; Ma, Z.-Y.; Mu, T.-J.; Fu, H.; Hu, S.-M. Lidar-Monocular Visual Odometry Using Point and Line Features. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 1091–1097.

- Amblard, V.; Osedach, T.P.; Croux, A.; Speck, A.; Leonard, J.J. Lidar-Monocular Surface Reconstruction Using Line Segments. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 5631–5637.

- Wang, J.; Rünz, M.; Agapito, L. DSP-SLAM: Object Oriented SLAM with Deep Shape Priors. In Proceedings of the 2021 International Conference on 3D Vision (3DV), London, UK, 1–3 December 2021; pp. 1362–1371.

- Wang, T.; Su, Y.; Shao, S.; Yao, C.; Wang, Z. GR-Fusion: Multi-Sensor Fusion SLAM for Ground Robots with High Robustness and Low Drift. In Proceedings of the 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, 27 September–1 October 2021; pp. 5440–5447.

- Jia, Y.; Luo, H.; Zhao, F.; Jiang, G.; Li, Y.; Yan, J.; Jiang, Z.; Wang, Z. Lvio-Fusion: A Self-Adaptive Multi-Sensor Fusion SLAM Framework Using Actor-Critic Method. In Proceedings of the 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, 27 September–1 October 2021; pp. 286–293.

- Shan, T.; Englot, B.; Ratti, C.; Rus, D. LVI-SAM: Tightly-Coupled Lidar-Visual-Inertial Odometry via Smoothing and Mapping. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 5692–5698.

- Zhao, S.; Zhang, H.; Wang, P.; Nogueira, L.; Scherer, S. Super Odometry: IMU-Centric LiDAR-Visual-Inertial Estimator for Challenging Environments. In Proceedings of the 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, 27 September–1 October 2021; pp. 8729–8736.

- Wang, Y.; Song, W.; Zhang, Y.; Huang, F.; Tu, Z.; Lou, Y. MetroLoc: Metro Vehicle Mapping and Localization with LiDAR-Camera-Inertial Integration. arXiv 2021.

- Wisth, D.; Camurri, M.; Das, S.; Fallon, M. Unified Multi-Modal Landmark Tracking for Tightly Coupled Lidar-Visual-Inertial Odometry. IEEE Robot. Autom. Lett. 2021, 6, 1004–1011.

- Dellaert, F.; Kaess, M. Factor graphs for robot perception. Found. Trends Robot. 2017, 6, 1–139.

- Mourikis, A.I.; Roumeliotis, S.I. A Multi-State Constraint Kalman Filter for Vision-Aided Inertial Navigation. In Proceedings of the 2007 IEEE International Conference on Robotics and Automation, Rome, Italy, 10–14 April 2007; pp. 3565–3572.

- Yang, Y.; Geneva, P.; Zuo, X.; Eckenhoff, K.; Liu, Y.; Huang, G. Tightly-Coupled Aided Inertial Navigation with Point and Plane Features. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 6094–6100.

- Zuo, X.; Geneva, P.; Lee, W.; Liu, Y.; Huang, G. LIC-Fusion: LiDAR-Inertial-Camera Odometry. In Proceedings of the 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Macau, China, 3–8 November 2019; pp. 5848–5854.

- Zuo, X.; Yang, Y.; Geneva, P.; Lv, J.; Liu, Y.; Huang, G.; Pollefeys, M. LIC-Fusion 2.0: LiDAR-Inertial-Camera Odometry with Sliding-Window Plane-Feature Tracking. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Las Vegas, NV, USA, 10 February 2020; pp. 5112–5119.

- Lin, J.; Chunran, Z.; Xu, W.; Zhang, F. R2LIVE: A Robust, Real-Time, LiDAR-Inertial-Visual Tightly-Coupled State Estimator and Mapping. IEEE Robot. Autom. Lett. 2021, 6, 7469–7476.

- Geiger, A.; Lenz, P.; Urtasun, R. Are we ready for autonomous driving? The KITTI vision benchmark suite. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition, Providence, RI, USA, 16–21 June 2012; pp. 3354–3361.

- Waymo Open Dataset. Available online: https://waymo.com/open/data (accessed on 4 May 2022).

- PandaSet Open Datasets. Available online: https://scale.com/open-datasets/pandaset (accessed on 4 May 2022).

- Maddern, W.; Pascoe, G.; Linegar, C.; Newman, P. 1 year, 1000 km: The Oxford RobotCar dataset. Int. J. Robot. Res. 2016, 36, 3–15.

- Hsu, L.T.; Kubo, N.; Wen, W.; Chen, W.; Liu, Z.; Suzuki, T.; Meguro, J. UrbanNav: An open-sourced multisensory dataset for benchmarking positioning algorithms designed for urban areas. In Proceedings of the 34th International Technical Meeting of the Satellite Division of The Institute of Navigation, St. Louis, MO, USA, 20–24 September 2021; pp. 226–256.

- Huang, F.; Wen, W.; Zhang, J.; Hsu, L.T. Point wise or Feature wise? Benchmark Comparison of public Available LiDAR Odometry Algorithms in Urban Canyons. arXiv 2021.

- Jonnavithula, N.; Lyu, Y.; Zhang, Z. LiDAR Odometry Methodologies for Autonomous Driving: A Survey. arXiv 2021.

- LOAM. Available online: https://github.com/HKUST-Aerial-Robotics/A-LOAM (accessed on 4 May 2022).

- Wang, H.; Wang, C.; Chen, C.-L.; Xie, L. F-loam: Fast lidar odometry and mapping. In Proceedings of the 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, 27 September–1 October 2021; pp. 4390–4396.

- Chen, S.; Zhou, B.; Jiang, C.; Xue, W.; Li, Q. A LiDAR/Visual SLAM Backend with Loop Closure Detection and Graph Optimization. Remote Sens. 2021, 13, 2720.

| Full Name | Abbreviation |
|---|---|
| Simultaneous Localization and Mapping | SLAM |
| Light Detection and Ranging | LIDAR |
| Degrees of Freedom | DOF |
| Micro Electro-Mechanical System | MEMS |
| Ultra-Wide Band | UWB |
| Inertial Measurement Unit | IMU |
| Iterative Closest Point | ICP |
| Graphics Processing Unit | GPU |
| Robot Operating System | ROS |
| LIDAR Odometry and Mapping | LOAM |
| LIDAR Odometry | LO |
| Visual Odometry | VO |
| Visual-Inertial Odometry | VIO |
| LIDAR-Inertial Odometry | LIO |
| LIDAR-Visual-Inertial | LVI |
| Extended Kalman Filter | EKF |
| Multi-State Constraint Kalman Filter | MSCKF |
| Unmanned Aerial Vehicle | UAV |

| Year | Method | Author | Strength | Problem |
|---|---|---|---|---|
| 2014 | LOAM [11] | J. Zhang et al. | Low drift. Low computational complexity. | No loop closure or backend optimization. |
| 2018 | LeGO-LOAM [12] | T. Shan et al. | Ground segmentation. Two-stage optimization. | Low loop closure detection accuracy. Fails on complex terrain. |
| 2018 | SuMa [19] | J. Behley et al. | A surfel-based map can be used for pose estimation and loop closure detection. | High complexity. High computational cost. |
| 2019 | Line/plane feature-based LOAM [14] | X. Huo et al. | Explicit line/plane features. | Limited in unstructured environments. |
| 2019 | ALeGO-LOAM [17] | S. Lee et al. | Adaptive cloud sampling method. | Limited in unstructured environments. |
| 2019 | CSS-based LOAM [16] | C. Gonzalez et al. | Curvature scale space method. | High complexity. |
| 2019 | A loop closure for LOAM [26] | J. Lin et al. | 2D histogram-based loop closure. | Ineffective in large open scenes. |
| 2020 | Two-stage feature-based LOAM [15] | S. Zhang et al. | Two-stage features. Surface normal vector estimation. | High complexity. |
| 2020 | Loam-livox [27] | J. Lin et al. | Solid-state LIDAR. Intensity values assist in feature extraction. Interpolation to resolve motion distortion. | No backend. No inertial system. |
| 2021 | MULLS [23] | Y. Pan et al. | Optimized point cloud features. Strong real-time performance. | Limited in unstructured environments. |
| 2021 | LiTAMIN2 [18] | M. Yokozuka et al. | More accurate front-end registration. Faster point cloud registration. | Lack of backend optimization. |
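
Most of the LOAM-family methods listed above start from edge and planar features picked by local curvature. As an illustrative sketch only (not any listed system's actual code), the smoothness value used in the LOAM paper [11] can be computed per scan line as follows. The function name `loam_smoothness` and the `half_window` parameter are hypothetical, and real implementations add steps omitted here (sub-region balancing, rejection of occluded or near-parallel points).

```python
import numpy as np

def loam_smoothness(scan_line: np.ndarray, half_window: int = 5) -> np.ndarray:
    """Smoothness c for each point of one ordered scan line (N x 3 array).

    c_i = || sum_{j in S} (X_i - X_j) || / (|S| * ||X_i||), where S holds the
    half_window neighbours on each side of point i. Points too close to
    either end of the line get NaN because their neighbourhood is incomplete.
    """
    n = scan_line.shape[0]
    c = np.full(n, np.nan)
    for i in range(half_window, n - half_window):
        neighbours = np.vstack((scan_line[i - half_window:i],
                                scan_line[i + 1:i + 1 + half_window]))
        diff_sum = (scan_line[i] - neighbours).sum(axis=0)
        c[i] = np.linalg.norm(diff_sum) / (
            len(neighbours) * np.linalg.norm(scan_line[i]))
    return c

# Toy scan line: a wall along y = 5 that turns a corner at (0, 5, 0).
corner = np.array([[-3, 5, 0], [-2, 5, 0], [-1, 5, 0], [0, 5, 0],
                   [0, 4, 0], [0, 3, 0], [0, 2, 0]], dtype=float)
c_corner = loam_smoothness(corner, half_window=2)
edge_index = int(np.nanargmax(c_corner))  # index of the sharpest point
```

Points with large `c` are candidate edge features and points with small `c` are candidate planar features; the thresholds that separate them are tuning parameters and are deliberately left out of this sketch.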

| Year | Method | Author | Strength | Problem |
|---|---|---|---|---|
| 2015 | V-LOAM [28] | J. Zhang et al. | Visual features fused with point cloud depth. | Weak correlation between vision and LIDAR. |
| 2016 | Monocular Camera Localization [30] | T. Caselitz et al. | Relies on a priori maps. Local BA. | Fails in unknown environments. |
| 2018 | LVIOM [29] | J. Zhang et al. | VIO preprocessing. Addresses sensor degradation issues. Staged pose estimation. | Inertial system state stops updating when vision fails. |
| 2018 | Handheld SLAM [41] | T. Lowe et al. | Incorporates depth uncertainty. Unified parameterization of different features. | System failure when vision is unavailable. |
| 2018 | Direct Visual SLAM for Camera-LiDAR System [44] | Y. Shin et al. | Direct method. Sliding window-based pose graph optimization. | Not available in open areas. Poor loop closure detection performance. |
| 2019 | VIL SLAM [35] | Z. Wang et al. | VIO and LO assist each other. Addresses sensor degradation issues. | Loop closure unavailable when vision fails. |
| 2019 | Stereo Visual Inertial LiDAR SLAM [36] | W. Shao et al. | Stereo VIO provides initial pose. Factor graph optimization. | No raw data association between VIO and LO. Single factors. |
| 2020 | Pronto [40] | M. Camurri et al. | EKF fusion of leg odometry and IMU. LO and VO correct the pose estimate. | Serious drift. Not constrained by historical data. |
| 2020 | CamVox [42] | Y. Zhu et al. | Livox LIDAR aids depth estimation. An automatic calibration method. | No inertial system. No LO. |
| 2021 | Redundant Odometry [46] | A. Reinke et al. | Runs multiple algorithms in parallel. Selects the best result. | High computational cost. No data association. |
| 2021 | LiDAR-Visual-Inertial Estimator [52] | P. Wang et al. | LIDAR assists the VIO system. Voxel map structures share depth. Vanishing points optimize rotation estimation. | System failure when vision is unavailable. |
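
In a loosely coupled design, each odometry module produces its own estimate and only the results are combined. A minimal sketch of that idea, assuming two independent position estimates with Gaussian uncertainty (for example one from LO and one from VIO), is covariance-weighted fusion in information form. The function name `fuse_estimates` is hypothetical, the example is restricted to 3D position to avoid rotation averaging, and it does not reproduce any specific system in the table above.

```python
import numpy as np

def fuse_estimates(x_a, P_a, x_b, P_b):
    """Fuse two independent Gaussian estimates of the same quantity.

    x_a, x_b: mean vectors (3,). P_a, P_b: covariance matrices (3, 3).
    Returns the fused mean and covariance. Independence of the two inputs
    is an assumption; correlated estimates would make the result
    overconfident.
    """
    info_a = np.linalg.inv(P_a)  # information (inverse covariance) of A
    info_b = np.linalg.inv(P_b)  # information (inverse covariance) of B
    P = np.linalg.inv(info_a + info_b)
    x = P @ (info_a @ x_a + info_b @ x_b)
    return x, P

# Hypothetical numbers: LO reports 1.00 m (sigma 0.2 m), VIO 1.20 m (sigma 0.1 m).
x_lo, P_lo = np.array([1.0, 0.0, 0.0]), np.eye(3) * 0.2**2
x_vio, P_vio = np.array([1.2, 0.0, 0.0]), np.eye(3) * 0.1**2
x_fused, P_fused = fuse_estimates(x_lo, P_lo, x_vio, P_vio)
```

The fused estimate sits closer to the more certain input. Because the raw measurements never meet, a failure inside one module can only be handled by inflating or discarding its output, which is the weakness the "Problem" column attributes to several loosely coupled systems.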

| Year | Method | Author | Strength | Problem |
|---|---|---|---|---|
| 2018 | LIPS [55] | P. Geneva et al. | Singularity-free plane factor. Preintegration factor. Graph optimization. | High computational cost. No backend or local optimization. |
| 2019 | IN2LAMA [56] | C. Le Gentil et al. | Pre-integration to remove distortion. Unified representation of inertial data and point cloud. | Fails in open outdoor scenes. |
| 2019 | LIO-mapping [57] | H. Ye et al. | Sliding window. Local optimization. | High computational cost. Not real time. |
| 2020 | LiDAR Inertial Odometry [59] | W. Ding et al. | Occupancy-grid-based LO. Map updates in dynamic environments. | Degradation in unstructured scenes. |
| 2020 | LIO-SAM [61] | T. Shan et al. | Sliding window. GPS factor. Marginalizes historical frames and generates local maps. | Poor loop closure detection. Degradation in open scenes. |
| 2021 | LIRO [63] | T.-M. Nguyen et al. | UWB constraints. Builds fusion error. | UWB usage scenarios are limited. |
| 2021 | Inertial Aided 3D LiDAR SLAM [64] | W. Chen et al. | Refined point cloud feature classification. Loop closure detection based on LPD-Net. | Degradation in unstructured scenes. |
| 2021 | KFS-LIO [65] | W. Li et al. | Point cloud feature filtering. Efficient computation. | Poor loop closure detection. |
| 2021 | CLINS [66] | J. Lv et al. | The two-state continuous-time trajectory correction method. Optimization based on dynamic and static control points. | High computational cost. Affected by sensor degradation. |
| 2021 | RF-LIO [67] | IEEE | Removes dynamic objects. Matches scans to the submap. | Low dynamic object removal rate. |
| 2021 | FAST-LIO [68] | W. Xu et al. | Iterated Kalman filter. Fast and efficient. | Cumulative error. No global optimization. |
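
Several tightly coupled LIDAR-IMU systems above rely on IMU preintegration [53,54], which summarizes all inertial samples between two LIDAR frames as one relative-motion constraint. The following is a simplified sketch of the mean propagation only, assuming constant, known biases and a fixed sample period; the names `so3_exp` and `preintegrate` are hypothetical, and the noise covariance propagation and bias Jacobians of the full formulation are omitted. Gravity is not removed here because it enters later, in the residual that uses these deltas.

```python
import numpy as np

def so3_exp(phi: np.ndarray) -> np.ndarray:
    """Rodrigues' formula: rotation vector (3,) -> rotation matrix (3, 3)."""
    theta = np.linalg.norm(phi)
    K = np.array([[0.0, -phi[2], phi[1]],
                  [phi[2], 0.0, -phi[0]],
                  [-phi[1], phi[0], 0.0]])
    if theta < 1e-8:
        return np.eye(3) + K  # first-order approximation for tiny angles
    return (np.eye(3)
            + np.sin(theta) / theta * K
            + (1.0 - np.cos(theta)) / theta**2 * (K @ K))

def preintegrate(gyro, accel, dt, bias_g=np.zeros(3), bias_a=np.zeros(3)):
    """Accumulate relative rotation, velocity and position deltas.

    gyro, accel: (N, 3) arrays of body-frame samples; dt: sample period (s).
    The deltas are expressed in the body frame of the first sample.
    """
    dR, dv, dp = np.eye(3), np.zeros(3), np.zeros(3)
    for w, a in zip(gyro, accel):
        a_corr = dR @ (a - bias_a)        # rotate into the first body frame
        dp = dp + dv * dt + 0.5 * a_corr * dt**2
        dv = dv + a_corr * dt
        dR = dR @ so3_exp((w - bias_g) * dt)
    return dR, dv, dp

# One second of constant 1 m/s^2 acceleration along x, no rotation.
dR, dv, dp = preintegrate(np.zeros((100, 3)),
                          np.tile([1.0, 0.0, 0.0], (100, 1)), dt=0.01)
```

Because the deltas depend only on the IMU samples and the bias estimate, they do not have to be recomputed from scratch each time the optimizer updates the poses at either end of the interval.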

| Year | Method | Author | Strength | Problem |
|---|---|---|---|---|
| 2018 | LIMO [69] | J. Graeter et al. | Point cloud scene segmentation to optimize depth estimation. Epipolar constraint. Optimized PnP solution. | LO not used. Sparse map. |
| 2019 | Tightly-coupled aided inertial navigation [83] | Y. Yang et al. | MSCKF. Point and plane features. | LIDAR is unnecessary. |
| 2019 | LIC-Fusion [84] | X. Zuo et al. | MSCKF. Point and line features. | High computational cost. Sensitive to time synchronization. Unresolved sensor degradation. |
| 2020 | LIC-Fusion 2.0 [85] | X. Zuo et al. | MSCKF. Sliding window based plane feature tracking. | Sensitive to time synchronization. Unresolved sensor degradation. |
| 2020 | LIDAR-Monocular Visual Odometry [72] | S.-S. Huang et al. | Reprojection error combined with ICP. Obtains depth of point and line features simultaneously. | Poor loop closure detection performance. High computational cost. |
| 2021 | LIDAR-Monocular Surface Reconstruction [73] | V. Amblard et al. | Matches line features of point clouds and images. Calculates reprojection error for points and lines. | Inertial measurement not used. |
| 2021 | GR-Fusion [75] | T. Wang et al. | Factor graph optimization. Addresses sensor degradation. GNSS global constraints. | No apparent problem. |
| 2021 | Lvio-Fusion [76] | Y. Jia et al. | Two-stage pose estimation. Factor graph optimization. Reinforcement learning adjusts factor weights. | High computational cost. Difficult to deploy. |
| 2021 | LVI-SAM [77] | T. Shan et al. | Factor graph optimization. VIS and LIS complement each other. Optimizes depth information. | Poor loop closure performance. |
| 2021 | Super Odometry [78] | S. Zhao et al. | IMU as the core. LIO and VIO operate independently. Jointly optimized pose results. Addresses sensor degradation. | High computational cost. |
| 2021 | Tightly Coupled LVI Odometry [80] | D. Wisth et al. | Factor graph optimization. Unified feature representation. Efficient time synchronization. | Unresolved sensor degradation. |
| 2021 | DSP-SLAM [74] | J. Wang et al. | Adds object reconstruction to the factor graph. The DeepSDF network extracts objects. | No coupled inertial system. Poor loop closure performance. |
| 2021 | R2LIVE [86] | J. Lin et al. | The error-state iterated Kalman filter. Factor graph optimization. | No loop closure detection or global backend optimization. |
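
Many entries above list factor graph optimization as a strength. As a toy illustration under strong assumptions (1D positions, linear measurements, first pose fixed at zero), a factor graph reduces to weighted linear least squares in which every factor contributes one row. The function name `solve_pose_chain` and all numbers are hypothetical; real systems optimize poses on SE(3) with solvers such as Ceres [60] or iSAM2 [38].

```python
import numpy as np

def solve_pose_chain(odometry, odom_sigma, abs_index, abs_value, abs_sigma):
    """Estimate 1D poses x_1..x_n with x_0 fixed at 0.

    odometry: measured steps z_i ~ x_{i+1} - x_i (one relative factor each).
    abs_index, abs_value: one absolute factor x_{abs_index} ~ abs_value,
    standing in for a GNSS fix or a loop closure to the start.
    Each row is divided by its sigma so that noisier factors weigh less.
    """
    n = len(odometry)
    rows, rhs = [], []
    for i, z in enumerate(odometry):
        row = np.zeros(n)
        row[i] = 1.0                 # +x_{i+1}
        if i > 0:
            row[i - 1] = -1.0        # -x_i (x_0 is fixed, so no column)
        rows.append(row / odom_sigma)
        rhs.append(z / odom_sigma)
    row = np.zeros(n)
    row[abs_index - 1] = 1.0
    rows.append(row / abs_sigma)
    rhs.append(abs_value / abs_sigma)
    x, *_ = np.linalg.lstsq(np.vstack(rows), np.array(rhs), rcond=None)
    return x

# Three 1 m odometry steps, then an absolute fix saying the robot is at 3.3 m.
poses = solve_pose_chain([1.0, 1.0, 1.0], 0.1, abs_index=3,
                         abs_value=3.3, abs_sigma=0.1)
```

The disagreement between odometry (3.0 m) and the absolute factor (3.3 m) is spread over the whole chain instead of being applied to the last pose only, which is the behaviour that distinguishes joint optimization from filtering the latest state.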

| Methods | Relative Translation Error RMSE (m) | Relative Translation Error Mean (m) | Relative Rotation Error RMSE (deg) | Relative Rotation Error Mean (deg) | Odometry APTPF (s) |
|---|---|---|---|---|---|
| A-LOAM | 1.532 | 0.963 | 1.467 | 1.054 | 0.013 |
| LeGO-LOAM | 0.475 | 0.322 | 1.263 | 0.674 | 0.009 |
| SC-LeGO-LOAM | 0.482 | 0.325 | 1.278 | 0.671 | 0.009 |
| LIO-SAM | 0.537 | 0.374 | 0.836 | 0.428 | 0.012 |
| F-LOAM | 0.386 | 0.287 | 1.125 | 0.604 | 0.005 |
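
The RMSE and mean columns above summarize per-frame relative pose errors. The exact evaluation protocol (frame spacing, trajectory alignment) is not stated in this section, so the following is only a sketch of one common definition, assuming time-synchronized 4x4 homogeneous poses and a spacing of one frame. The function names `relative_pose_errors` and `rmse_and_mean` are hypothetical.

```python
import numpy as np

def relative_pose_errors(gt, est, delta=1):
    """Per-pair relative translation (m) and rotation (deg) errors.

    gt, est: sequences of 4x4 homogeneous pose matrices, same length and
    already time-synchronized. delta: frame spacing of each pose pair.
    """
    trans_err, rot_err = [], []
    for i in range(len(gt) - delta):
        gt_rel = np.linalg.inv(gt[i]) @ gt[i + delta]
        est_rel = np.linalg.inv(est[i]) @ est[i + delta]
        err = np.linalg.inv(gt_rel) @ est_rel   # identity if motion matches
        trans_err.append(np.linalg.norm(err[:3, 3]))
        cos_angle = np.clip((np.trace(err[:3, :3]) - 1.0) / 2.0, -1.0, 1.0)
        rot_err.append(np.degrees(np.arccos(cos_angle)))
    return np.array(trans_err), np.array(rot_err)

def rmse_and_mean(errors):
    """Return (RMSE, mean) of a 1D error array."""
    return float(np.sqrt(np.mean(errors**2))), float(np.mean(errors))

def translation_pose(x):
    """Helper for the toy example: pose with translation x along the x axis."""
    T = np.eye(4)
    T[0, 3] = x
    return T

# Toy trajectories: ground truth moves 1 m per frame, the estimate drifts.
gt_poses = [translation_pose(x) for x in (0.0, 1.0, 2.0, 3.0)]
est_poses = [translation_pose(x) for x in (0.0, 1.1, 2.1, 3.4)]
t_err, r_err = relative_pose_errors(gt_poses, est_poses)
t_rmse, t_mean = rmse_and_mean(t_err)
```

RMSE weights large errors more heavily than the mean does, so a method whose RMSE is much larger than its mean (as for A-LOAM in the table) has a few frames with large errors rather than uniformly poor tracking.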

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Xu, X.; Zhang, L.; Yang, J.; Cao, C.; Wang, W.; Ran, Y.; Tan, Z.; Luo, M. A Review of Multi-Sensor Fusion SLAM Systems Based on 3D LIDAR. Remote Sens. 2022, 14, 2835. https://doi.org/10.3390/rs14122835
