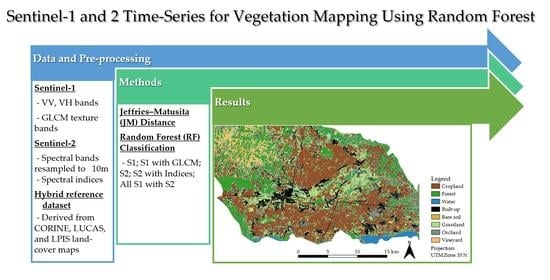

Sentinel-1 and 2 Time-Series for Vegetation Mapping Using Random Forest Classification: A Case Study of Northern Croatia

Abstract

1. Introduction

2. Study Area and Datasets

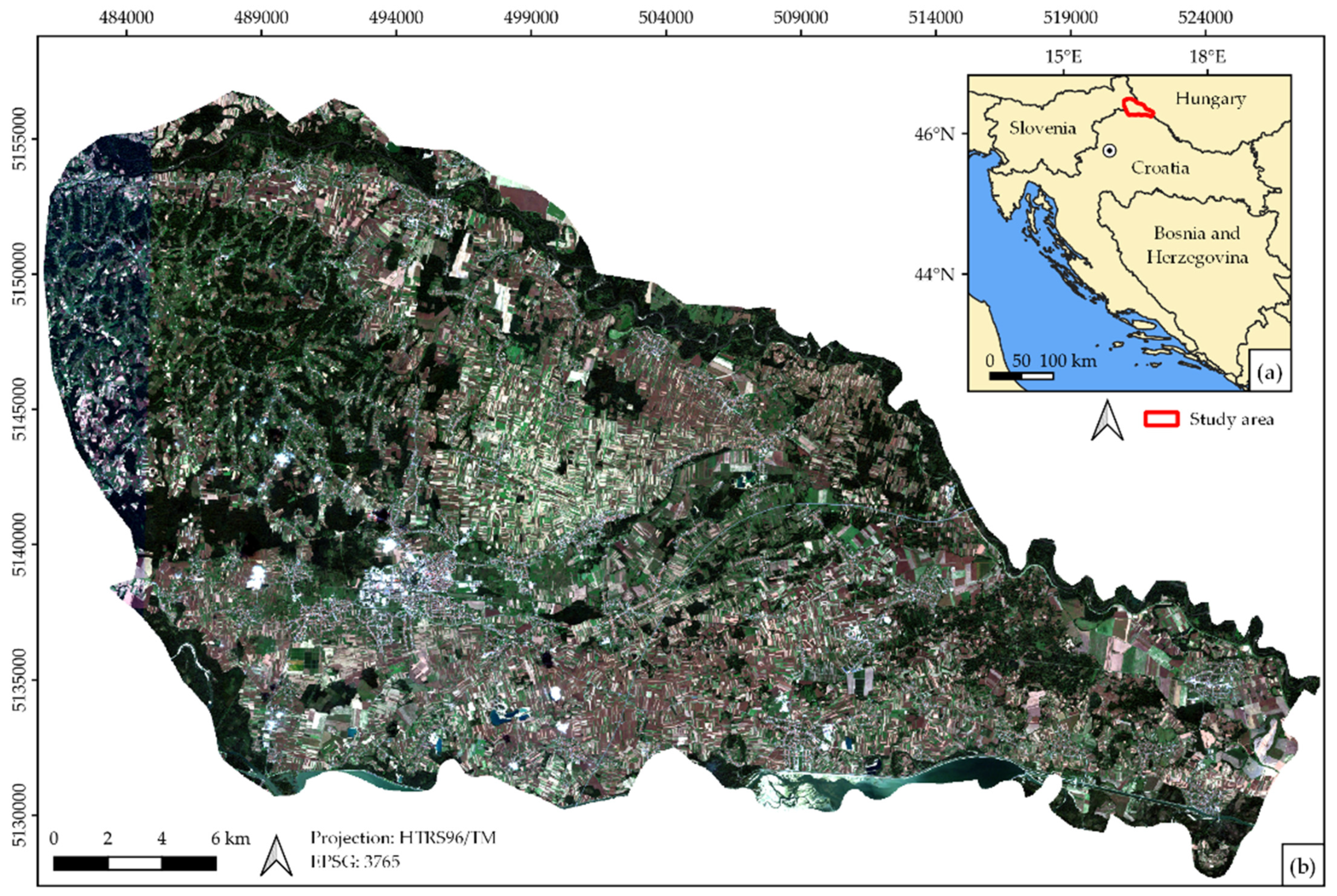

2.1. Study Area

2.2. Satellite Datasets

2.2.1. Sentinel-1 Data

2.2.2. Sentinel-2 Data

2.2.3. SAR Texture Features and Multispectral Indices

2.2.4. Topographic Data

2.3. Reference Data

3. Methods

3.1. Jeffries–Matusita (JM) Distance

3.2. Random Forest (RF) Classification

3.2.1. Hyperparameter Tuning

3.2.2. Feature Importance and Selection

3.3. Accuracy Assessment

4. Results and Discussion

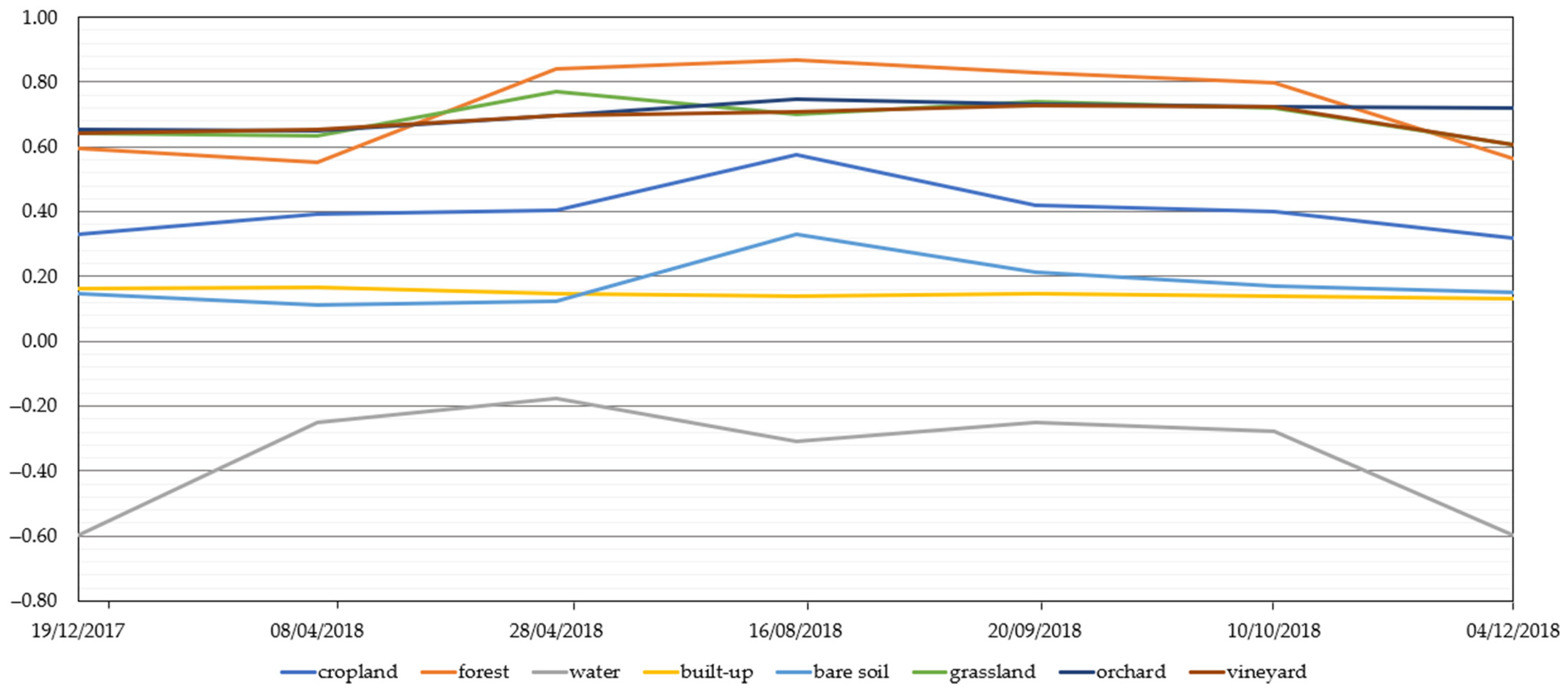

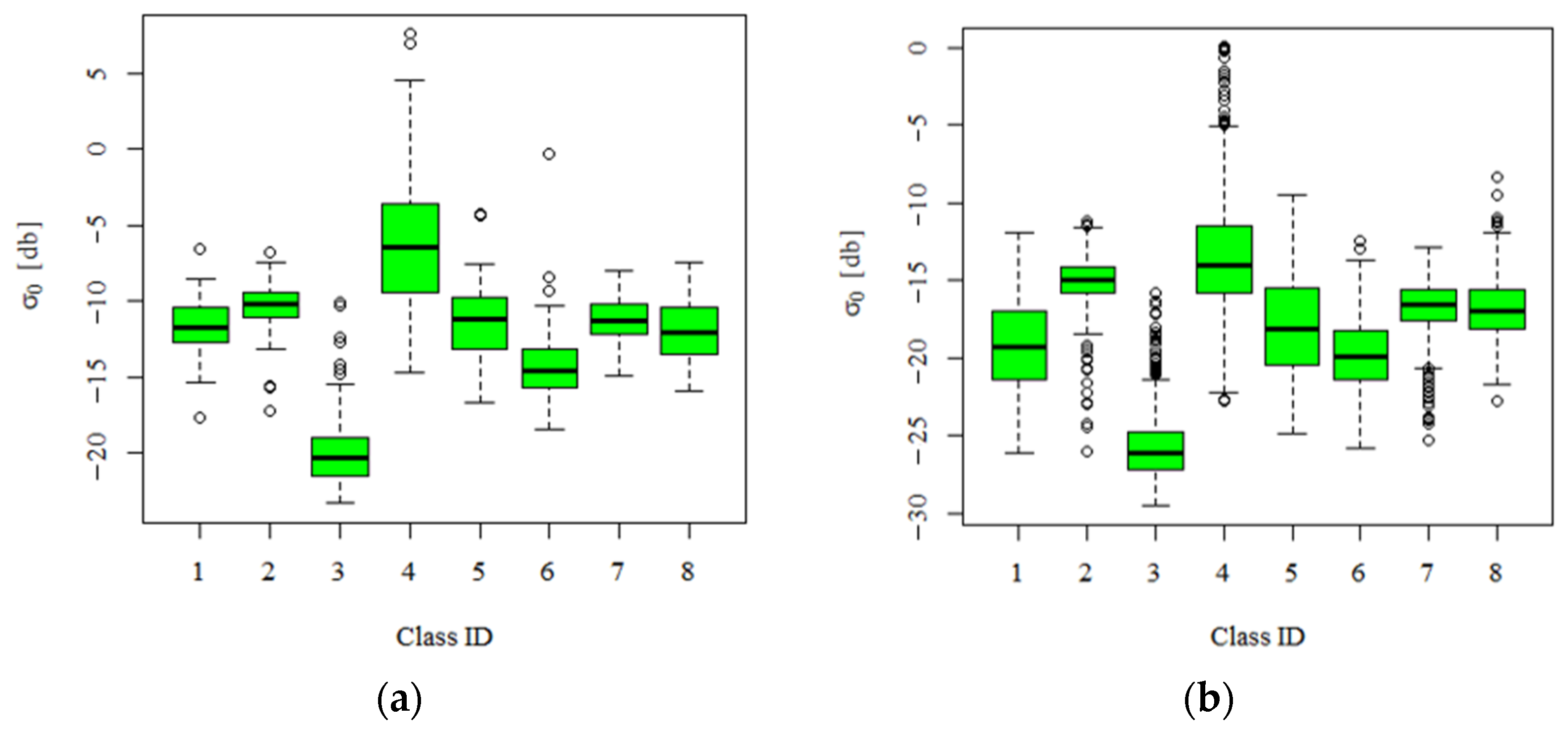

4.1. Optical NDVI and Radar Backscatter (VV, VH) Time-Series

4.2. Jeffries–Matusita (JM) Distance Variability Results of Each Class

4.3. Random Forest Hyperparameter Tuning Results

4.4. Importance and Selection of S1 and S2 Input Features for Vegetation Mapping

4.5. Accuracy Assessment

4.6. Impact of the Reference Dataset on Classification Accuracies

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- [1] Gong, P.; Wang, J.; Yu, L.; Zhao, Y.; Zhao, Y.; Liang, L.; Niu, Z.; Huang, X.; Fu, H.; Liu, S.; et al. Finer Resolution Observation and Monitoring of Global Land Cover: First Mapping Results with Landsat TM and ETM+ Data. Int. J. Remote Sens. 2013, 34, 2607–2654.
- [2] Liu, X.; Liang, X.; Li, X.; Xu, X.; Ou, J.; Chen, Y.; Li, S.; Wang, S.; Pei, F. A Future Land Use Simulation Model (FLUS) for Simulating Multiple Land Use Scenarios by Coupling Human and Natural Effects. Landsc. Urban Plan. 2017, 168, 94–116.
- [3] Mercier, A.; Betbeder, J.; Rumiano, F.; Baudry, J.; Gond, V.; Blanc, L.; Bourgoin, C.; Cornu, G.; Ciudad, C.; Marchamalo, M.; et al. Evaluation of Sentinel-1 and 2 Time Series for Land Cover Classification of Forest–Agriculture Mosaics in Temperate and Tropical Landscapes. Remote Sens. 2019, 11, 979.
- [4] Dobrinić, D.; Medak, D.; Gašparović, M. Integration of Multitemporal Sentinel-1 and Sentinel-2 Imagery for Land-Cover Classification Using Machine Learning Methods. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2020, 43, 91–98.
- [5] Zhang, X.; Friedl, M.A.; Schaaf, C.B.; Strahler, A.H.; Hodges, J.C.F.; Gao, F.; Reed, B.C.; Huete, A. Monitoring Vegetation Phenology Using MODIS. Remote Sens. Environ. 2003, 84, 471–475.
- [6] Gao, F.; Hilker, T.; Zhu, X.; Anderson, M.; Masek, J.; Wang, P.; Yang, Y. Fusing Landsat and MODIS Data for Vegetation Monitoring. IEEE Geosci. Remote Sens. Mag. 2015, 3, 47–60.
- [7] Schultz, M.; Clevers, J.G.P.W.; Carter, S.; Verbesselt, J.; Avitabile, V.; Quang, H.V.; Herold, M. Performance of Vegetation Indices from Landsat Time Series in Deforestation Monitoring. Int. J. Appl. Earth Obs. Geoinf. 2016, 52, 318–327.
- [8] Bhandari, S.; Phinn, S.; Gill, T. Preparing Landsat Image Time Series (LITS) for Monitoring Changes in Vegetation Phenology in Queensland, Australia. Remote Sens. 2012, 4, 1856–1886.
- [9] Vuolo, F.; Neuwirth, M.; Immitzer, M.; Atzberger, C.; Ng, W.T. How Much Does Multi-Temporal Sentinel-2 Data Improve Crop Type Classification? Int. J. Appl. Earth Obs. Geoinf. 2018, 72, 122–130.
- [10] Sonobe, R.; Yamaya, Y.; Tani, H.; Wang, X.; Kobayashi, N.; Mochizuki, K.-I. Assessing the Suitability of Data from Sentinel-1A and 2A for Crop Classification. GISci. Remote Sens. 2017, 54, 918–938.
- [11] Inglada, J.; Vincent, A.; Arias, M.; Marais-Sicre, C. Improved Early Crop Type Identification by Joint Use of High Temporal Resolution SAR and Optical Image Time Series. Remote Sens. 2016, 8, 362.
- [12] Gašparović, M.; Klobučar, D. Mapping Floods in Lowland Forest Using Sentinel-1 and Sentinel-2 Data and an Object-Based Approach. Forests 2021, 12, 553.
- [13] Tomiyasu, K. Tutorial Review of Synthetic-Aperture Radar (SAR) with Applications to Imaging of the Ocean Surface. Proc. IEEE 1978, 66, 563–583.
- [14] Moreira, A.; Prats-Iraola, P.; Younis, M.; Krieger, G.; Hajnsek, I.; Papathanassiou, K.P. A Tutorial on Synthetic Aperture Radar. IEEE Geosci. Remote Sens. Mag. 2013, 1, 6–43.
- [15] Gašparović, M.; Dobrinić, D. Comparative Assessment of Machine Learning Methods for Urban Vegetation Mapping Using Multitemporal Sentinel-1 Imagery. Remote Sens. 2020, 12, 1952.
- [16] Chauhan, S.; Darvishzadeh, R.; Lu, Y.; Boschetti, M.; Nelson, A. Understanding Wheat Lodging Using Multi-Temporal Sentinel-1 and Sentinel-2 Data. Remote Sens. Environ. 2020, 243, 111804.
- [17] Frantz, D.; Schug, F.; Okujeni, A.; Navacchi, C.; Wagner, W.; van der Linden, S.; Hostert, P. National-Scale Mapping of Building Height Using Sentinel-1 and Sentinel-2 Time Series. Remote Sens. Environ. 2021, 252, 112128.
- [18] Zhang, W.; Brandt, M.; Wang, Q.; Prishchepov, A.V.; Tucker, C.J.; Li, Y.; Lyu, H.; Fensholt, R. From Woody Cover to Woody Canopies: How Sentinel-1 and Sentinel-2 Data Advance the Mapping of Woody Plants in Savannas. Remote Sens. Environ. 2019, 234, 111465.
- [19] Jin, Y.; Liu, X.; Chen, Y.; Liang, X. Land-Cover Mapping Using Random Forest Classification and Incorporating NDVI Time-Series and Texture: A Case Study of Central Shandong. Int. J. Remote Sens. 2018, 39, 8703–8723.
- [20] Gašparović, M.; Dobrinić, D. Green Infrastructure Mapping in Urban Areas Using Sentinel-1 Imagery. Croat. J. For. Eng. 2021, 42, 1–20.
- [21] Isaac, E.; Easwarakumar, K.S.; Isaac, J. Urban Landcover Classification from Multispectral Image Data Using Optimized AdaBoosted Random Forests. Remote Sens. Lett. 2017, 8, 350–359.
- [22] Feng, Q.; Yang, J.; Zhu, D.; Liu, J.; Guo, H.; Bayartungalag, B.; Li, B. Integrating Multitemporal Sentinel-1/2 Data for Coastal Land Cover Classification Using a Multibranch Convolutional Neural Network: A Case of the Yellow River Delta. Remote Sens. 2019, 11, 1006.
- [23] Paris, C.; Weikmann, G.; Bruzzone, L. Monitoring of Agricultural Areas by Using Sentinel 2 Image Time Series and Deep Learning Techniques. Proc. SPIE 2020, 11533, 115330K.
- [24] Han, H.; Guo, X.; Yu, H. Variable Selection Using Mean Decrease Accuracy and Mean Decrease Gini Based on Random Forest. In Proceedings of the 2016 7th IEEE International Conference on Software Engineering and Service Science (ICSESS), Beijing, China, 26–28 August 2016; pp. 219–224.
- [25] Saeys, Y.; Inza, I.; Larrañaga, P. A Review of Feature Selection Techniques in Bioinformatics. Bioinformatics 2007, 23, 2507–2517.
- [26] Jović, A.; Brkić, K.; Bogunović, N. A Review of Feature Selection Methods with Applications. In Proceedings of the 2015 38th International Convention on Information and Communication Technology, Electronics and Microelectronics, MIPRO 2015-Proceedings, Opatija, Croatia, 25–29 May 2015; pp. 1200–1205.
- [27] Breiman, L. Random Forests. Mach. Learn. 2001, 45, 5–32.
- [28] Baudoux, L.; Inglada, J.; Mallet, C. Toward a Yearly Country-Scale CORINE Land-Cover Map without Using Images: A Map Translation Approach. Remote Sens. 2021, 13, 1060.
- [29] Van Tricht, K.; Gobin, A.; Gilliams, S.; Piccard, I. Synergistic Use of Radar Sentinel-1 and Optical Sentinel-2 Imagery for Crop Mapping: A Case Study for Belgium. Remote Sens. 2018, 10, 1642.
- [30] Belgiu, M.; Csillik, O. Sentinel-2 Cropland Mapping Using Pixel-Based and Object-Based Time-Weighted Dynamic Time Warping Analysis. Remote Sens. Environ. 2018, 204, 509–523.
- [31] Weigand, M.; Staab, J.; Wurm, M.; Taubenböck, H. Spatial and Semantic Effects of LUCAS Samples on Fully Automated Land Use/Land Cover Classification in High-Resolution Sentinel-2 Data. Int. J. Appl. Earth Obs. Geoinf. 2020, 88, 102065.
- [32] Balzter, H.; Cole, B.; Thiel, C.; Schmullius, C. Mapping CORINE Land Cover from Sentinel-1A SAR and SRTM Digital Elevation Model Data Using Random Forests. Remote Sens. 2015, 7, 14876–14898.
- [33] Olofsson, P.; Foody, G.M.; Herold, M.; Stehman, S.V.; Woodcock, C.E.; Wulder, M.A. Good Practices for Estimating Area and Assessing Accuracy of Land Change. Remote Sens. Environ. 2014, 148, 42–57.
- [34] Stehman, S.V. Sampling Designs for Accuracy Assessment of Land Cover. Int. J. Remote Sens. 2009, 30, 5243–5272.
- [35] Beck, H.E.; Zimmermann, N.E.; McVicar, T.R.; Vergopolan, N.; Berg, A.; Wood, E.F. Present and Future Köppen-Geiger Climate Classification Maps at 1-Km Resolution. Sci. Data 2018, 5, 1–12.
- [36] World Weather Online. Available online: https://www.worldweatheronline.com/cakovec-weather-history/medimurska/hr.aspx (accessed on 15 January 2021).
- [37] Torres, R.; Snoeij, P.; Geudtner, D.; Bibby, D.; Davidson, M.; Attema, E.; Potin, P.; Rommen, B.Ö.; Floury, N.; Brown, M.; et al. GMES Sentinel-1 Mission. Remote Sens. Environ. 2012, 120, 9–24.
- [38] Lee, J.S. Digital Image Enhancement and Noise Filtering by Use of Local Statistics. IEEE Trans. Pattern Anal. Mach. Intell. 1980, 2, 165–168.
- [39] Osgouei, P.E.; Kaya, S.; Sertel, E.; Alganci, U. Separating Built-up Areas from Bare Land in Mediterranean Cities Using Sentinel-2A Imagery. Remote Sens. 2019, 11, 345.
- [40] Haralick, R.M.; Shanmugam, K.; Dinstein, I. Textural Features for Image Classification. IEEE Trans. Syst. Man Cybern. 1973, 3, 610–621.
- [41] Clerici, N.; Valbuena Calderón, C.A.; Posada, J.M. Fusion of Sentinel-1A and Sentinel-2A Data for Land Cover Mapping: A Case Study in the Lower Magdalena Region, Colombia. J. Maps 2017, 13, 718–726.
- [42] Tucker, C.J. Red and Photographic Infrared Linear Combinations for Monitoring Vegetation. Remote Sens. Environ. 1979, 8, 127–150.
- [43] McFeeters, S.K. The Use of the Normalized Difference Water Index (NDWI) in the Delineation of Open Water Features. Int. J. Remote Sens. 1996, 17, 1425–1432.
- [44] Huete, A.; Didan, K.; Miura, T.; Rodriguez, E.P.; Gao, X.; Ferreira, L.G. Overview of the Radiometric and Biophysical Performance of the MODIS Vegetation Indices. Remote Sens. Environ. 2002, 83, 195–213.
- [45] Huete, A.R. A Soil-Adjusted Vegetation Index (SAVI). Remote Sens. Environ. 1988, 25, 295–309.
- [46] Delegido, J.; Verrelst, J.; Alonso, L.; Moreno, J. Evaluation of Sentinel-2 Red-Edge Bands for Empirical Estimation of Green LAI and Chlorophyll Content. Sensors 2011, 11, 7063–7081.
- [47] Daughtry, C.S.T.; Walthall, C.L.; Kim, M.S.; De Colstoun, E.B.; McMurtrey, J.E. Estimating Corn Leaf Chlorophyll Concentration from Leaf and Canopy Reflectance. Remote Sens. Environ. 2000, 74, 229–239.
- [48] Gitelson, A.A.; Merzlyak, M.N. Remote Sensing of Chlorophyll Concentration in Higher Plant Leaves. Adv. Sp. Res. 1998, 22, 689–692.
- [49] Qi, J.; Chehbouni, A.; Huete, A.R.; Kerr, Y.H.; Sorooshian, S. A Modified Soil Adjusted Vegetation Index. Remote Sens. Environ. 1994, 48, 119–126.
- [50] Blackburn, G.A. Spectral Indices for Estimating Photosynthetic Pigment Concentrations: A Test Using Senescent Tree Leaves. Int. J. Remote Sens. 1998, 19, 657–675.
- [51] Frampton, W.J.; Dash, J.; Watmough, G.; Milton, E.J. Evaluating the Capabilities of Sentinel-2 for Quantitative Estimation of Biophysical Variables in Vegetation. ISPRS J. Photogramm. Remote Sens. 2013, 82, 83–92.
- [52] Gonzalez-Piqueras, J.; Calera, A.; Gilabert, M.A.; Cuesta, A.; De la Cruz Tercero, F. Estimation of Crop Coefficients by Means of Optimized Vegetation Indices for Corn. Remote Sens. Agric. Ecosyst. Hydrol. V 2004, 5232, 110.
- [53] Chatziantoniou, A.; Psomiadis, E.; Petropoulos, G. Co-Orbital Sentinel 1 and 2 for LULC Mapping with Emphasis on Wetlands in a Mediterranean Setting Based on Machine Learning. Remote Sens. 2017, 9, 1259.
- [54] Jensen, J.R.; Lulla, K. Introductory Digital Image Processing: A Remote Sensing Perspective. Geocarto Int. 1987, 2, 65.
- [55] Ma, L.; Li, M.; Ma, X.; Cheng, L.; Du, P.; Liu, Y. A Review of Supervised Object-Based Land-Cover Image Classification. ISPRS J. Photogramm. Remote Sens. 2017, 130, 277–293.
- [56] Choudhary, K.; Shi, W.; Boori, M.S.; Corgne, S. Agriculture Phenology Monitoring Using NDVI Time Series Based on Remote Sensing Satellites: A Case Study of Guangdong, China. Opt. Mem. Neural Netw. 2019, 28, 204–214.
- [57] Cánovas-García, F.; Alonso-Sarría, F. Optimal Combination of Classification Algorithms and Feature Ranking Methods for Object-Based Classification of Submeter Resolution Z/I-Imaging DMC Imagery. Remote Sens. 2015, 7, 4651–4677.
- [58] Melgani, F.; Bruzzone, L. Classification of Hyperspectral Remote Sensing Images With Support Vector Machines. IEEE Trans. Geosci. Remote Sens. 2004, 42, 1778–1790.
- [59] Htitiou, A.; Boudhar, A.; Lebrini, Y.; Hadria, R.; Lionboui, H.; Elmansouri, L.; Tychon, B.; Benabdelouahab, T. The Performance of Random Forest Classification Based on Phenological Metrics Derived from Sentinel-2 and Landsat 8 to Map Crop Cover in an Irrigated Semi-Arid Region. Remote Sens. Earth Syst. Sci. 2019, 2, 208–224.
- [60] Behnamian, A.; Millard, K.; Banks, S.N.; White, L.; Richardson, M.; Pasher, J. A Systematic Approach for Variable Selection with Random Forests: Achieving Stable Variable Importance Values. IEEE Geosci. Remote Sens. Lett. 2017, 14, 1988–1992.
- [61] Janitza, S.; Tutz, G.; Boulesteix, A.L. Random Forest for Ordinal Responses: Prediction and Variable Selection. Comput. Stat. Data Anal. 2016, 96, 57–73.
- [62] Belgiu, M.; Drăgut, L. Random Forest in Remote Sensing: A Review of Applications and Future Directions. ISPRS J. Photogramm. Remote Sens. 2016, 114, 24–31.
- [63] Pontius, R.G.; Millones, M. Death to Kappa: Birth of Quantity Disagreement and Allocation Disagreement for Accuracy Assessment. Int. J. Remote Sens. 2011, 32, 4407–4429.
- [64] Stehman, S.V.; Foody, G.M. Key Issues in Rigorous Accuracy Assessment of Land Cover Products. Remote Sens. Environ. 2019, 231, 111199.
- [65] Massetti, A.; Sequeira, M.M.; Pupo, A.; Figueiredo, A.; Guiomar, N.; Gil, A. Assessing the Effectiveness of RapidEye Multispectral Imagery for Vegetation Mapping in Madeira Island (Portugal). Eur. J. Remote Sens. 2016, 49, 643–672.
- [66] Story, M.; Congalton, R.G. Remote Sensing Brief Accuracy Assessment: A User’s Perspective. Photogramm. Eng. Remote Sens. 1986, 52, 397–399.
- [67] Liaw, A.; Wiener, M. Classification and Regression by randomForest. R News 2002, 2, 18–22.
- [68] Kuhn, M. Building Predictive Models in R Using the Caret Package. J. Stat. Softw. 2008, 28, 1–26.
- [69] Abdullah, A.Y.M.; Masrur, A.; Gani Adnan, M.S.; Al Baky, M.A.; Hassan, Q.K.; Dewan, A. Spatio-Temporal Patterns of Land Use/Land Cover Change in the Heterogeneous Coastal Region of Bangladesh between 1990 and 2017. Remote Sens. 2019, 11, 790.
- [70] Seo, B.; Bogner, C.; Poppenborg, P.; Martin, E.; Hoffmeister, M.; Jun, M.; Koellner, T.; Reineking, B.; Shope, C.L.; Tenhunen, J. Deriving a Per-Field Land Use and Land Cover Map in an Agricultural Mosaic Catchment. Earth Syst. Sci. Data 2014, 6, 339–352.
- [71] Huang, W.; DeVries, B.; Huang, C.; Lang, M.; Jones, J.; Creed, I.; Carroll, M. Automated Extraction of Surface Water Extent from Sentinel-1 Data. Remote Sens. 2018, 10, 797.
- [72] Koppel, K.; Zalite, K.; Voormansik, K.; Jagdhuber, T. Sensitivity of Sentinel-1 Backscatter to Characteristics of Buildings. Int. J. Remote Sens. 2017, 38, 6298–6318.
- [73] Cable, J.W.; Kovacs, J.M.; Jiao, X.; Shang, J. Agricultural Monitoring in Northeastern Ontario, Canada, Using Multi-Temporal Polarimetric RADARSAT-2 Data. Remote Sens. 2014, 6, 2343–2371.
- [74] Dabboor, M.; Howell, S.; Shokr, M.; Yackel, J. The Jeffries–Matusita Distance for the Case of Complex Wishart Distribution as a Separability Criterion for Fully Polarimetric SAR Data. Int. J. Remote Sens. 2014, 35, 6859–6873.
- [75] Klein, D.; Moll, A.; Menz, G. Land Cover/Use Classification in a Semiarid Environment in East Africa Using Multi-Temporal Alternating Polarization ENVISAT ASAR Data. In Proceedings of the 2004 Envisat & ERS Symposium (ESA SP-572), Salzburg, Austria, 6–10 September 2004.
- [76] Harfenmeister, K.; Spengler, D. Analyzing Temporal and Spatial Characteristics of Crop Parameters Using Sentinel-1 Backscatter Data. Remote Sens. 2019, 11, 1569.
- [77] Tavares, P.A.; Beltrão, N.E.S.; Guimarães, U.S.; Teodoro, A.C. Integration of Sentinel-1 and Sentinel-2 for Classification and LULC Mapping in the Urban Area of Belém, Eastern Brazilian Amazon. Sensors 2019, 19, 1140.
- [78] Abdi, A.M. Land Cover and Land Use Classification Performance of Machine Learning Algorithms in a Boreal Landscape Using Sentinel-2 Data. GISci. Remote Sens. 2019, 57, 1–20.
- [79] Forkuor, G.; Dimobe, K.; Serme, I.; Tondoh, J.E. Landsat-8 vs. Sentinel-2: Examining the Added Value of Sentinel-2’s Red-Edge Bands to Land-Use and Land-Cover Mapping in Burkina Faso. GISci. Remote Sens. 2018, 55, 331–354.
- [80] Eklundh, L.; Harrie, L.; Kuusk, A. Investigating Relationships between Landsat ETM+ Sensor Data and Leaf Area Index in a Boreal Conifer Forest. Remote Sens. Environ. 2001, 78, 239–251.
- [81] Immitzer, M.; Atzberger, C.; Koukal, T. Tree Species Classification with Random Forest Using Very High Spatial Resolution 8-Band WorldView-2 Satellite Data. Remote Sens. 2012, 4, 2661–2693.
- [82] Veloso, A.; Mermoz, S.; Bouvet, A.; Le Toan, T.; Planells, M.; Dejoux, J.F.; Ceschia, E. Understanding the Temporal Behavior of Crops Using Sentinel-1 and Sentinel-2-like Data for Agricultural Applications. Remote Sens. Environ. 2017, 199, 415–426.
- [83] Congalton, R.G.; Oderwald, R.G.; Mead, R.A. Assessing Landsat Classification Accuracy Using Discrete Multivariate Analysis Statistical Techniques. Photogramm. Eng. Remote Sens. 1983, 27, 83–92.
- [84] Sun, C.; Bian, Y.; Zhou, T.; Pan, J. Using of Multi-Source and Multi-Temporal Remote Sensing Data Improves Crop-Type Mapping in the Subtropical Agriculture Region. Sensors 2019, 19, 2401.
- [85] Zakeri, H.; Yamazaki, F.; Liu, W. Texture Analysis and Land Cover Classification of Tehran Using Polarimetric Synthetic Aperture Radar Imagery. Appl. Sci. 2017, 7, 452.
- [86] Holtgrave, A.; Röder, N.; Ackermann, A.; Erasmi, S.; Kleinschmit, B. Comparing Sentinel-1 and -2 Data and Indices for Agricultural Land Use Monitoring. Remote Sens. 2020, 12, 2919.
- [87] Bouvet, A.; Mermoz, S.; Ballère, M.; Koleck, T.; Le Toan, T. Use of the SAR Shadowing Effect for Deforestation Detection with Sentinel-1 Time Series. Remote Sens. 2018, 10, 1250.
- [88] Xiang, D.; Tang, T.; Hu, C.; Fan, Q.; Su, Y. Built-up Area Extraction from PolSAR Imagery with Model-Based Decomposition and Polarimetric Coherence. Remote Sens. 2016, 8, 685.
- [89] Fritz, S.; See, L. Comparison of Land Cover Maps Using Fuzzy Agreement. Int. J. Geogr. Inf. Sci. 2005, 19, 787–807.
- [90] Pérez-Hoyos, A.; Udías, A.; Rembold, F. Integrating Multiple Land Cover Maps through a Multi-Criteria Analysis to Improve Agricultural Monitoring in Africa. Int. J. Appl. Earth Obs. Geoinf. 2020, 88, 102064.
- [91] Thinh, T.V.; Duong, P.C.; Nasahara, K.N.; Tadono, T. How Does Land Use/Land Cover Map’s Accuracy Depend on Number of Classification Classes? SOLA 2019, 15, 28–31.
- [92] Mellor, A.; Boukir, S.; Haywood, A.; Jones, S. Exploring Issues of Training Data Imbalance and Mislabelling on Random Forest Performance for Large Area Land Cover Classification Using the Ensemble Margin. ISPRS J. Photogramm. Remote Sens. 2015, 105, 155–168.
- [93] Pelletier, C.; Valero, S.; Inglada, J.; Champion, N.; Sicre, C.M.; Dedieu, G. Effect of Training Class Label Noise on Classification Performances for Land Cover Mapping with Satellite Image Time Series. Remote Sens. 2017, 9, 173.
- [94] Dabija, A.; Kluczek, M.; Zagajewski, B.; Raczko, E.; Kycko, M.; Al-Sulttani, A.H.; Tardà, A.; Pineda, L.; Corbera, J. Comparison of Support Vector Machines and Random Forests for Corine Land Cover Mapping. Remote Sens. 2021, 13, 777.
- [95] Close, O.; Benjamin, B.; Petit, S.; Fripiat, X.; Hallot, E. Use of Sentinel-2 and LUCAS Database for the Inventory of Land Use, Land Use Change, and Forestry in Wallonia, Belgium. Land 2018, 7, 154.
- [96] Phiri, D.; Simwanda, M.; Salekin, S.; Nyirenda, V.; Murayama, Y.; Ranagalage, M. Sentinel-2 Data for Land Cover/Use Mapping: A Review. Remote Sens. 2020, 12, 2291.

| S1 Date | S1 Orbit | S2 Date | S2 Cloud Cover [%] | ΔS1-S2 [Days] |
|---|---|---|---|---|
| 04-12-2017 | ASC | 19-12-2017 | 0.0 | 15 |
| 27-04-2018 | ASC | 28-04-2018 | 0.1 | 1 |
| 19-08-2018 | ASC | 16-08-2018 | 3.6 | 3 |
| 12-10-2018 | ASC | 10-10-2018 | 0.2 | 2 |
| 29-11-2018 | ASC | 04-12-2018 | 1.1 | 5 |

| Spectral Index | Equation * | S2 Bands Used | Reference |
|---|---|---|---|
| NDVI | | | [42] |
| NDWI | | | [43] |
| EVI | | | [44] |
| SAVI | | | [45] |
| NDI45 | | | [46] |
| MCARI | | | [47] |
| GNDVI | | | [48] |
| MSAVI | | | [49] |
| PSSRa | | | [50] |
| IRECI | | | [51] |
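The Equation and S2 Bands Used columns of the table above are empty in this copy. As a hedged illustration only, the sketch below uses the widely published forms of four of the listed indices (NDVI, NDWI after McFeeters, SAVI, GNDVI). The function names, the sample reflectance values, and the SAVI soil factor of 0.5 are assumptions made for the example; the authors' exact formulations and band choices are not recoverable from this page.

```python
def normalized_difference(a: float, b: float) -> float:
    """Return (a - b) / (a + b); 0.0 if the denominator is zero."""
    total = a + b
    return (a - b) / total if total != 0 else 0.0


def ndvi(nir: float, red: float) -> float:
    # Normalized Difference Vegetation Index
    return normalized_difference(nir, red)


def ndwi(green: float, nir: float) -> float:
    # Normalized Difference Water Index (McFeeters form)
    return normalized_difference(green, nir)


def gndvi(nir: float, green: float) -> float:
    # Green NDVI
    return normalized_difference(nir, green)


def savi(nir: float, red: float, soil_factor: float = 0.5) -> float:
    # Soil-Adjusted Vegetation Index; soil_factor = 0.5 is an assumed default
    return (1 + soil_factor) * (nir - red) / (nir + red + soil_factor)


# Hypothetical surface reflectances (0-1) for a single vegetated pixel
pixel = {"green": 0.08, "red": 0.05, "nir": 0.45}

indices = {
    "NDVI": ndvi(pixel["nir"], pixel["red"]),
    "NDWI": ndwi(pixel["green"], pixel["nir"]),
    "GNDVI": gndvi(pixel["nir"], pixel["green"]),
    "SAVI": savi(pixel["nir"], pixel["red"]),
}

for name, value in indices.items():
    print(f"{name}: {value:.3f}")
```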

| ID | Class | CORINE | LUCAS |
|---|---|---|---|
| 1 | Cropland | 2.1 Arable land; 2.4 Heterogeneous agricultural areas | B00 Cropland (except B70) |
| 2 | Forest | 3.1 Forests | C00 Woodland |
| 3 | Water | 4.1 Inland wetlands; 5.1 Inland waters | G00 Water areas |
| 4 | Built-up | 1.1 Urban fabric | A00 Artificial land |
| 5 | Bare soil | 3.3 Open spaces with little or no vegetation | F00 Bare land and lichens/moss |
| 6 | Grassland | 2.3 Pastures; 3.2 Scrub and/or herbaceous vegetation associations | D00 Shrubland; E00 Grassland |
| 7 | Orchard | 2.2.2 Fruit trees and berry plantations | B70 Permanent crops: Fruit trees |
| 8 | Vineyard | 2.2.1 Vineyards | B82 Vineyards |

| ID # | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|
| 1 | - | 0.56 | 1.35 | 0.63 | 0.07 | 0.14 | 0.34 | 0.25 |
| 2 | 0.31 | - | 1.82 | 0.37 | 0.28 | 0.52 | 0.11 | 0.20 |
| 3 | 1.91 | 1.93 | - | 1.86 | 1.36 | 1.27 | 1.68 | 1.59 |
| 4 | 0.12 | 0.52 | 1.96 | - | 0.30 | 0.69 | 0.41 | 0.49 |
| 5 | 0.27 | 0.52 | 1.99 | 0.17 | - | 0.16 | 0.18 | 0.14 |
| 6 | 0.13 | 0.59 | 1.97 | 0.08 | 0.37 | - | 0.26 | 0.16 |
| 7 | 0.08 | 0.31 | 1.96 | 0.08 | 0.36 | 0.06 | - | 0.05 |
| 8 | 0.08 | 0.65 | 1.96 | 0.07 | 0.35 | 0.04 | 0.08 | - |
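The values in the matrix above all lie between 0 and 2, which is the range of the Jeffries–Matusita distance, JM = 2(1 − e^(−B)), where B is the Bhattacharyya distance between two class distributions. The following is a minimal sketch for a single feature under a Gaussian assumption; the function name and the backscatter samples are hypothetical, and whether the authors computed JM per feature or on multivariate feature vectors is not stated on this page.

```python
import math
from statistics import mean, pvariance


def jm_distance(sample_a, sample_b):
    """Jeffries-Matusita distance between two 1-D samples.

    Assumes each class is Gaussian in this feature and has non-zero variance.
    The result lies in [0, 2]; values near 2 indicate well-separated classes.
    """
    mu_a, mu_b = mean(sample_a), mean(sample_b)
    var_a, var_b = pvariance(sample_a), pvariance(sample_b)
    # Bhattacharyya distance for two univariate normal distributions
    mean_term = (mu_a - mu_b) ** 2 / (4 * (var_a + var_b))
    var_term = 0.5 * math.log((var_a + var_b) / (2 * math.sqrt(var_a * var_b)))
    return 2 * (1 - math.exp(-(mean_term + var_term)))


# Hypothetical VH backscatter samples in dB (illustrative values only)
water_vh = [-24.0, -23.5, -25.0, -24.5]
forest_vh = [-14.0, -13.5, -15.0, -14.5]
cropland_vh = [-15.0, -14.0, -16.0, -15.5]

jm_water_forest = jm_distance(water_vh, forest_vh)
jm_cropland_forest = jm_distance(cropland_vh, forest_vh)
print(f"water vs forest:    {jm_water_forest:.2f}")
print(f"cropland vs forest: {jm_cropland_forest:.2f}")
```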

| mtry \ ntree | 100 | 500 | 1000 |
|---|---|---|---|
| 10 | 93.85 | 93.78 | 93.40 |
| 20 | 95.51 | 96.07 | 96.20 |
| 30 | 96.30 | 96.41 | 96.58 |
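As a small worked reading of the tuning grid above, the sketch below transcribes the nine table values and picks the best (mtry, ntree) pair. It assumes the values are accuracy scores where higher is better (the table itself does not state the unit), and the variable and function names are illustrative. It also compares how much the score moves along each axis.

```python
# Values transcribed from the table above: scores[mtry][ntree]
scores = {
    10: {100: 93.85, 500: 93.78, 1000: 93.40},
    20: {100: 95.51, 500: 96.07, 1000: 96.20},
    30: {100: 96.30, 500: 96.41, 1000: 96.58},
}


def best_setting(grid):
    """Return (mtry, ntree, score) for the highest score in the grid."""
    cells = (
        (mtry, ntree, score)
        for mtry, row in grid.items()
        for ntree, score in row.items()
    )
    return max(cells, key=lambda cell: cell[2])


best_mtry, best_ntree, best_score = best_setting(scores)
print(f"best: mtry={best_mtry}, ntree={best_ntree}, score={best_score}")

# Largest change when only ntree varies (per mtry row) ...
ntree_spread = max(max(row.values()) - min(row.values()) for row in scores.values())
# ... versus when only mtry varies (per ntree column)
mtry_spread = max(
    max(scores[m][n] for m in scores) - min(scores[m][n] for m in scores)
    for n in (100, 500, 1000)
)
print(f"max spread across ntree: {ntree_spread:.2f}")
print(f"max spread across mtry:  {mtry_spread:.2f}")
```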

| Class. Scenario | OA (%) | QD (%) | AD (%) |
|---|---|---|---|
| S1 | 79.45 | 7.31 | 13.24 |
| S1 with GLCM | 81.71 | 8.57 | 9.72 |
| S2 | 89.04 | 2.74 | 8.22 |
| S2 with Indices | 90.37 | 1.83 | 7.80 |
| All | 90.78 | 1.38 | 7.84 |
| S1 with S2 | 91.78 | 1.83 | 6.39 |
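In every row of the table above, OA, QD, and AD sum to 100% (for example, 79.45 + 7.31 + 13.24 = 100.00), which matches the decomposition of total disagreement into quantity disagreement and allocation disagreement described by Pontius and Millones [63]. The sketch below shows that decomposition on a hypothetical three-class confusion matrix. The matrix, the function name, and the use of raw sample counts rather than an estimated population matrix are assumptions for illustration.

```python
def agreement_components(matrix):
    """Return (OA, QD, AD) in percent from a square confusion matrix.

    Rows are mapped (comparison) classes, columns are reference classes.
    """
    total = sum(sum(row) for row in matrix)
    n = len(matrix)
    row_share = [sum(matrix[g]) / total for g in range(n)]
    col_share = [sum(matrix[i][g] for i in range(n)) / total for g in range(n)]
    diag_share = [matrix[g][g] / total for g in range(n)]

    overall_accuracy = sum(diag_share)
    # Quantity disagreement: mismatch in class proportions
    quantity = sum(abs(col_share[g] - row_share[g]) for g in range(n)) / 2
    # Allocation disagreement: mismatch in spatial allocation of classes
    allocation = sum(
        2 * min(row_share[g] - diag_share[g], col_share[g] - diag_share[g])
        for g in range(n)
    ) / 2
    return 100 * overall_accuracy, 100 * quantity, 100 * allocation


# Hypothetical confusion matrix (counts), not taken from the study
confusion = [
    [40, 5, 0],
    [2, 30, 3],
    [1, 1, 18],
]

oa, qd, ad = agreement_components(confusion)
print(f"OA={oa:.2f}%  QD={qd:.2f}%  AD={ad:.2f}%  sum={oa + qd + ad:.2f}%")
```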

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Dobrinić, D.; Gašparović, M.; Medak, D. Sentinel-1 and 2 Time-Series for Vegetation Mapping Using Random Forest Classification: A Case Study of Northern Croatia. Remote Sens. 2021, 13, 2321. https://doi.org/10.3390/rs13122321