Relation-Constrained 3D Reconstruction of Buildings in Metropolitan Areas from Photogrammetric Point Clouds

Abstract

1. Introduction

- We designed a top-down space partitioning strategy that converts a building’s bounding space into abundant but not redundant CSG-BRep trees, based on the geometric relations in the building structure. A subset of the leaf cells from the partitioning CSG-BRep tree is then selected to build a bottom-up reconstructing CSG-BRep tree, which forms the geometric model of the building. Because these cells are produced according to the building structure, they can be configured to model buildings of arbitrary shape, including façade intrusions and extrusions;

- To best approximate the building geometries, we developed a novel optimization strategy for the selection of the 3D space cells, where 3D facets and edges are extracted from the topological relations and used to constrain the selection process. The use of multiple spatial entities introduces more measurements and constraints into the modelling, such that the completeness, fitness, and regularity of the modelling result are guaranteed simultaneously;

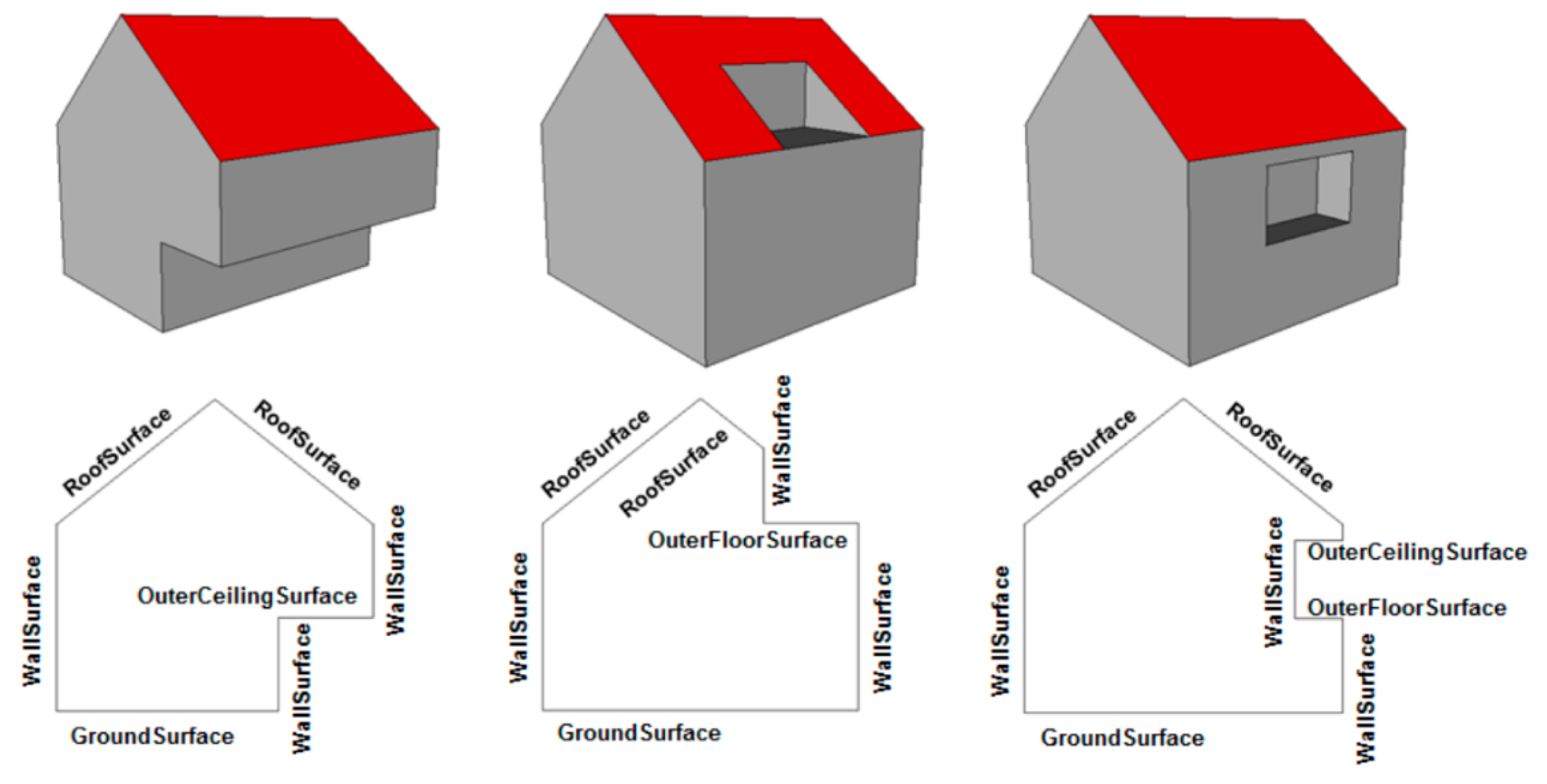

- We designed two rule-based schemes that automatically analyze the topological relations between different building parts based on the CSG-BRep trees and assign semantic information to each building surface, converting the models into the international standard CityGML [19]. The final building models can therefore be used not only in applications related to geometric analysis, but also in applications where topological or semantic information is required.
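The partition-then-select idea in the first contribution can be illustrated with a deliberately simplified sketch. It assumes axis-aligned splitting planes and a hand-written selection rule, whereas the paper partitions with planar primitives extracted from the point cloud and selects cells by optimization. The names `Node`, `split`, `leaves`, and `volume` are hypothetical and not taken from the paper.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# A cell is an axis-aligned box: ((x0, x1), (y0, y1), (z0, z1)).
# Assumption: real cells are general convex polyhedra; boxes keep the sketch short.
Box = Tuple[Tuple[float, float], Tuple[float, float], Tuple[float, float]]


@dataclass
class Node:
    box: Box
    left: Optional["Node"] = None
    right: Optional["Node"] = None

    def split(self, axis: int, offset: float) -> None:
        """Top-down step: cut every leaf cell crossed by the plane x[axis] == offset."""
        if self.left is not None and self.right is not None:
            self.left.split(axis, offset)
            self.right.split(axis, offset)
            return
        lo, hi = self.box[axis]
        if not lo < offset < hi:
            return  # the plane does not cross this cell
        below, above = list(self.box), list(self.box)
        below[axis] = (lo, offset)
        above[axis] = (offset, hi)
        self.left, self.right = Node(tuple(below)), Node(tuple(above))

    def leaves(self) -> List["Node"]:
        """Collect the leaf cells of the partitioning tree."""
        if self.left is None or self.right is None:
            return [self]
        return self.left.leaves() + self.right.leaves()


def volume(box: Box) -> float:
    (x0, x1), (y0, y1), (z0, z1) = box
    return (x1 - x0) * (y1 - y0) * (z1 - z0)


# Bounding space of a hypothetical building, cut by two planes.
root = Node(((0.0, 4.0), (0.0, 4.0), (0.0, 3.0)))
root.split(0, 2.0)   # vertical plane x = 2
root.split(2, 1.5)   # horizontal plane z = 1.5
cells = root.leaves()

# Bottom-up step: the model is the union of the selected leaf cells.
# Hand-written rule for illustration only: keep an L-shaped subset.
selected = [c for c in cells if c.box[2][1] <= 1.5 or c.box[0][1] <= 2.0]
model_volume = sum(volume(c.box) for c in selected)
```

Because every selected cell comes from the same partition, the union of the selected cells has no gaps or overlaps between neighbouring cells.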

2. Related Work

2.1. Model-Driven Methods

2.2. Data-Driven Methods

2.3. Hybrid-Driven Methods

2.4. Space-Partition-Based Methods

2.5. CityGML Building Models

3. Relation-Constrained 3D Reconstruction of Buildings Using CSG-BRep Trees

3.1. Overview of the Approach

3.2. Partitioning CSG-BRep Tree for 3D Space Decomposition

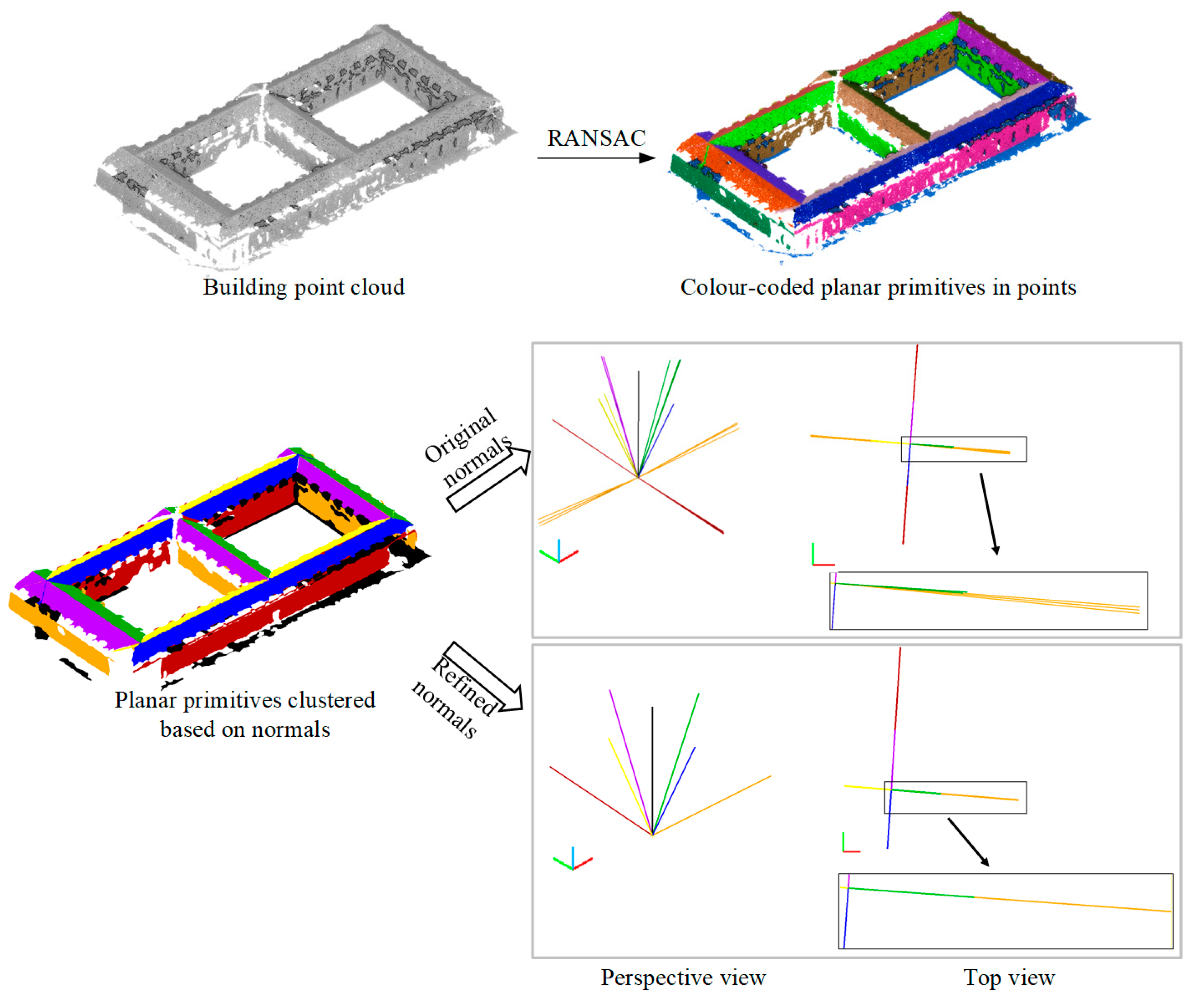

3.2.1. Extraction and Refinement of Planar Primitives Based on Geometric Relations

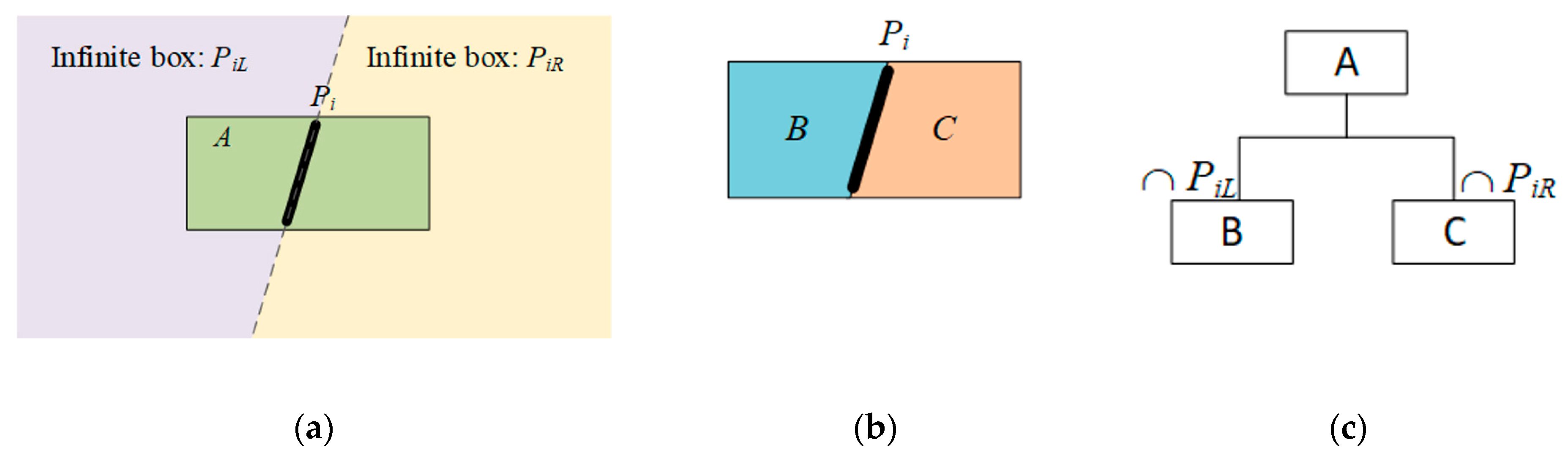

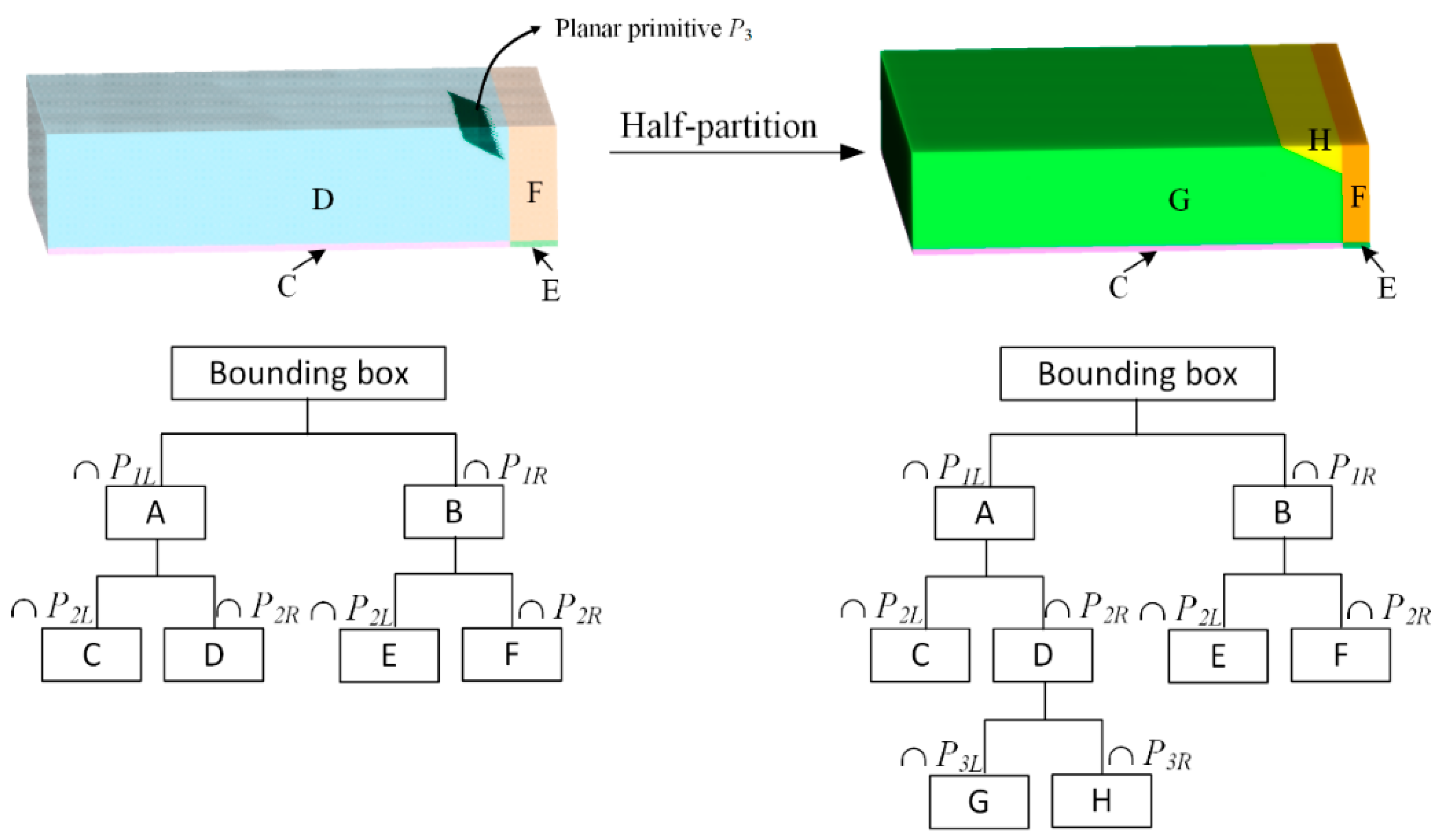

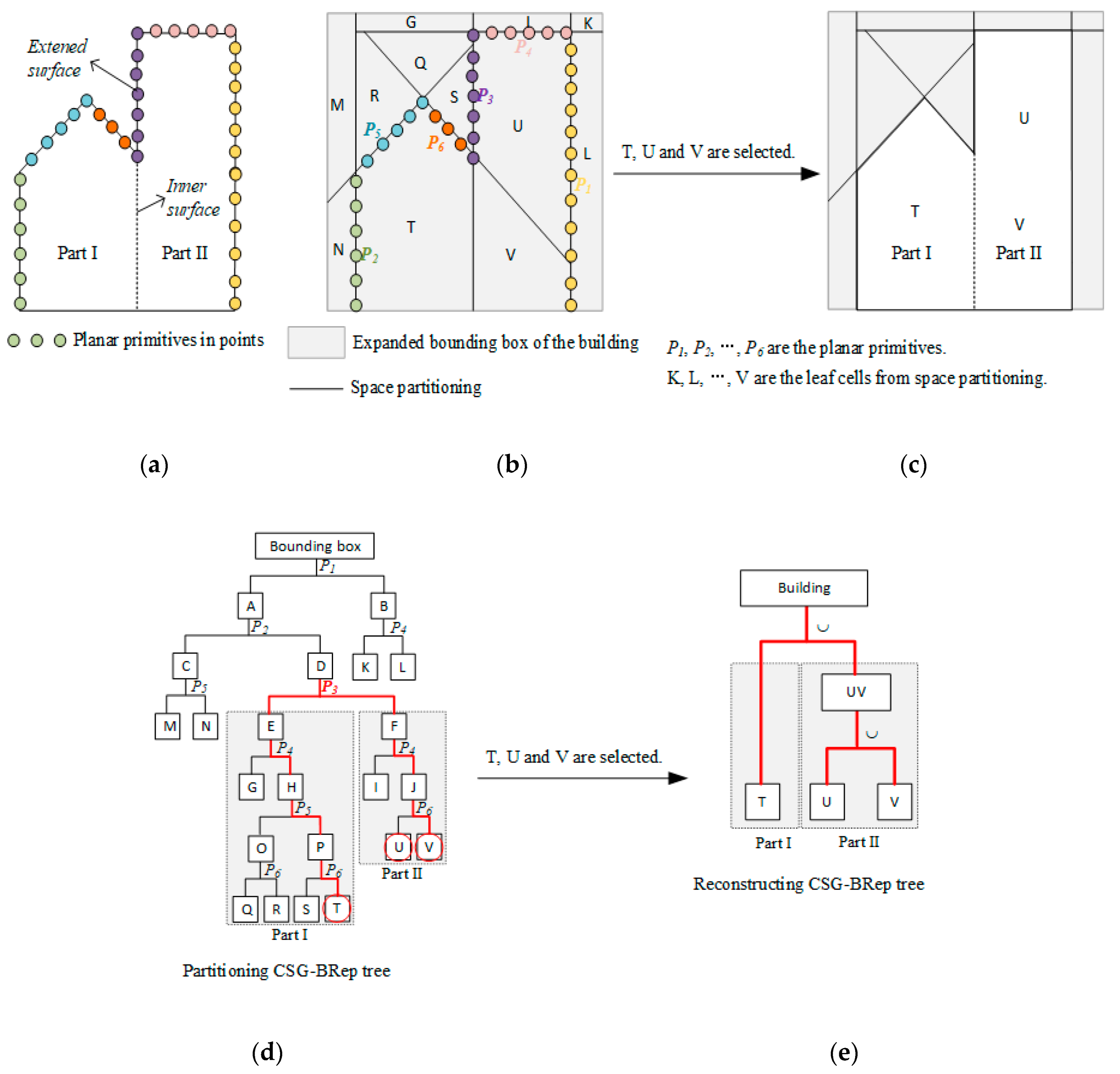

3.2.2. Generation of the Partitioning CSG-BRep Tree

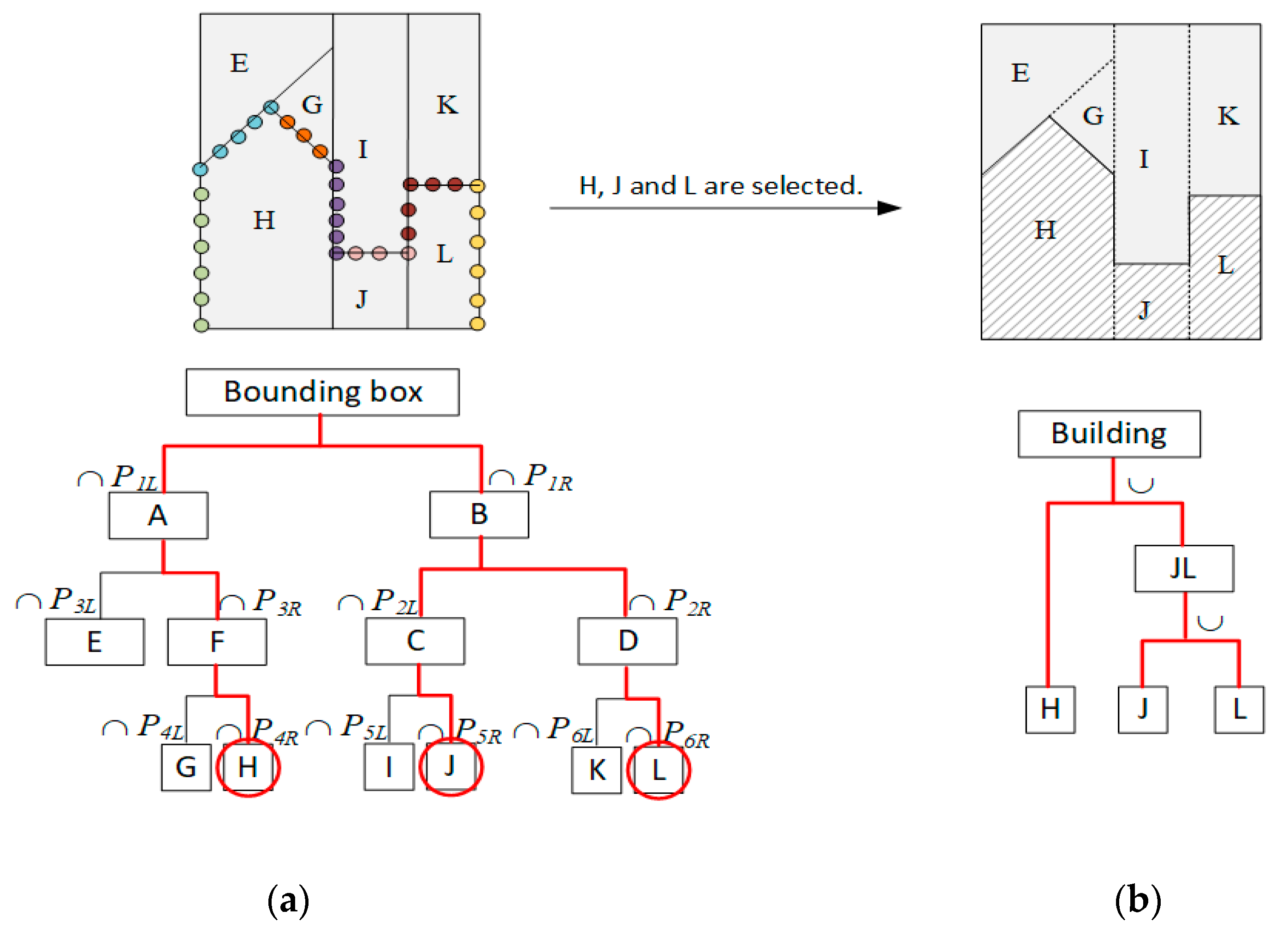

3.3. Reconstructing CSG-BRep Tree Based on Topological-relation Constraints

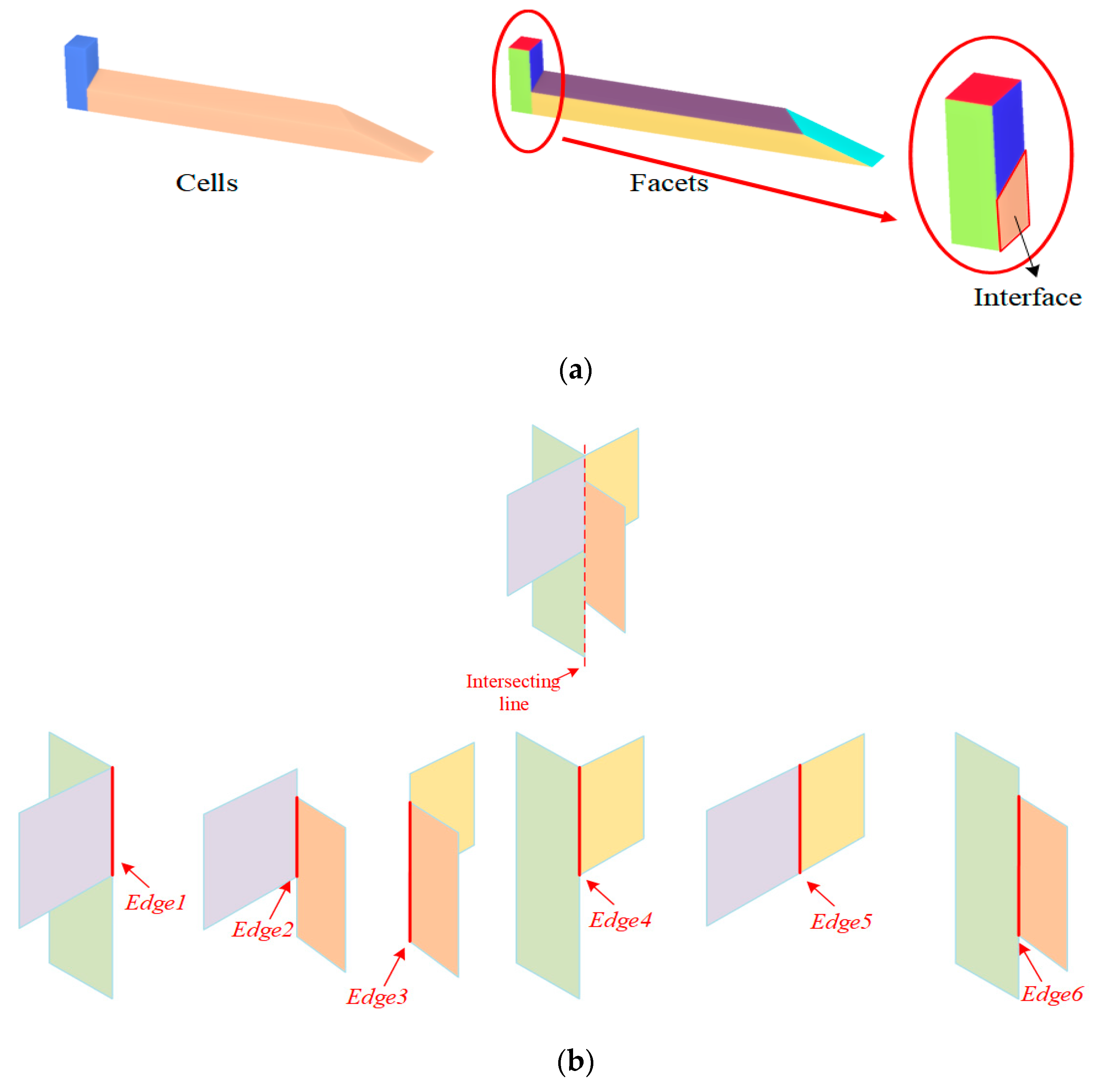

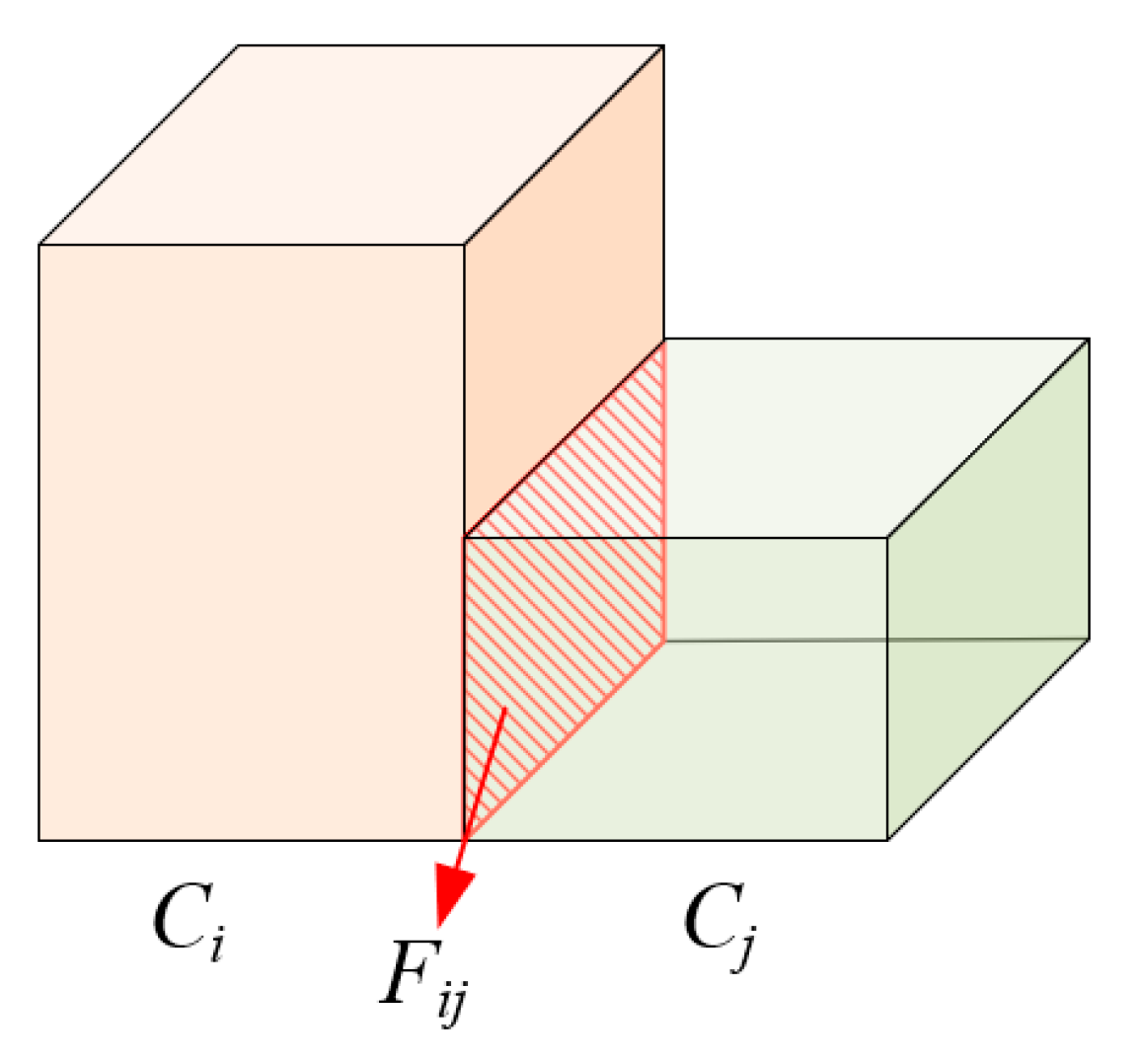

3.3.1. Extraction of 2D Topology between 3D Cells

3.3.2. Extraction of 1D Topology between 3D Cells

3.3.3. Optimal Selection with Topological-Relation Constraints

3.4. Generation of CityGML Building Models

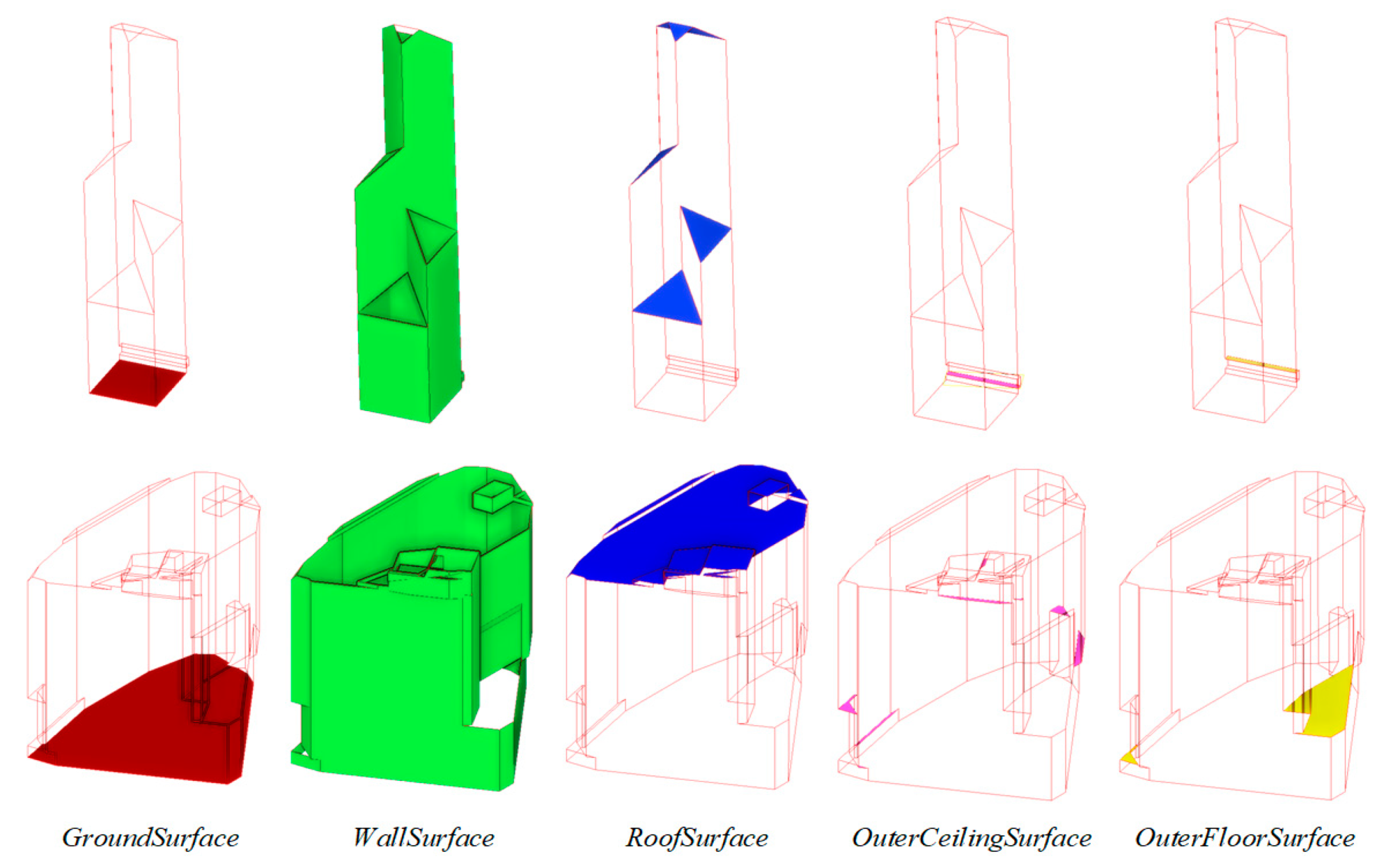

3.4.1. Retrieving Topologies between Building Parts

3.4.2. Building Surface Type Classification

4. Experimental Analysis and Discussion

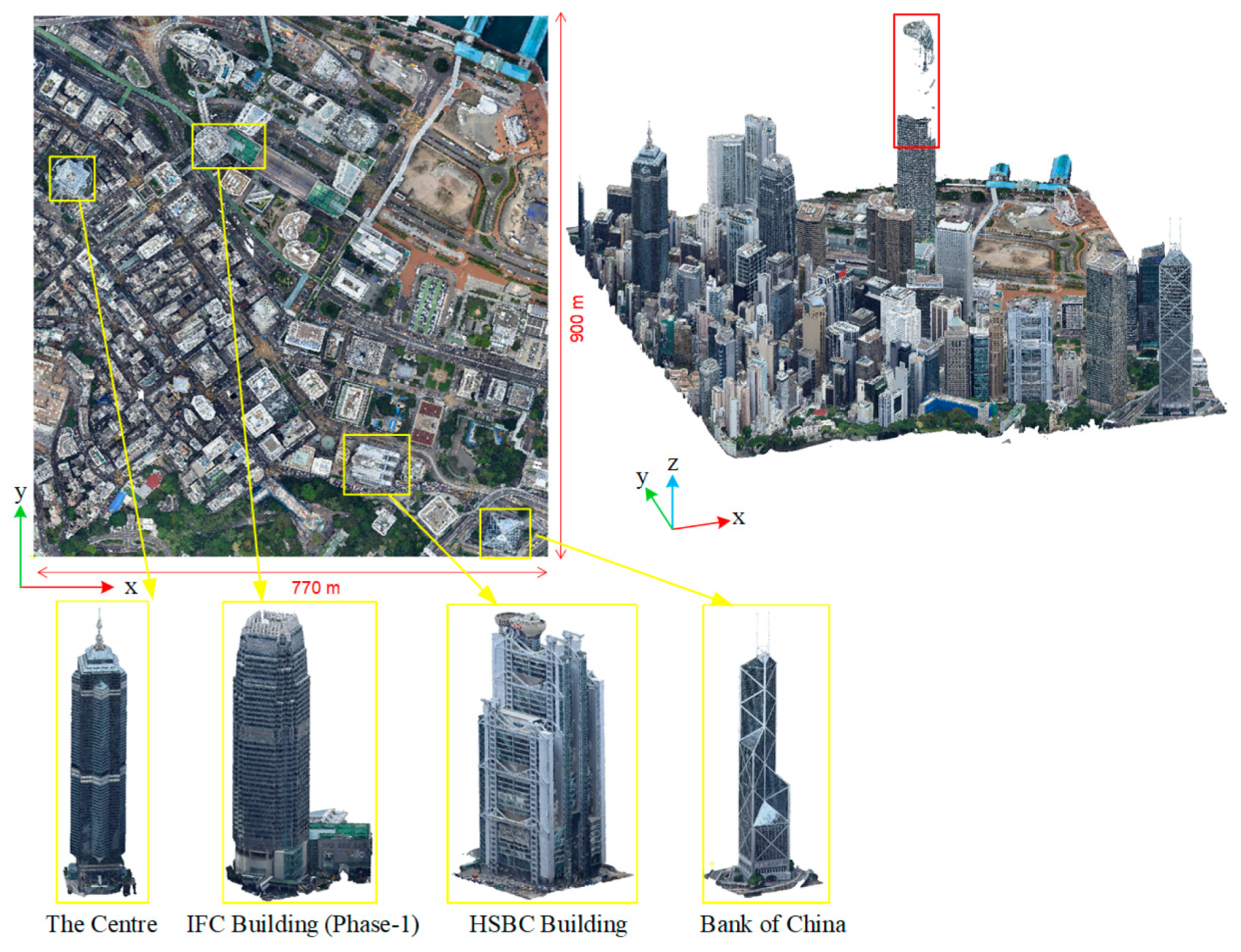

4.1. Dataset Description

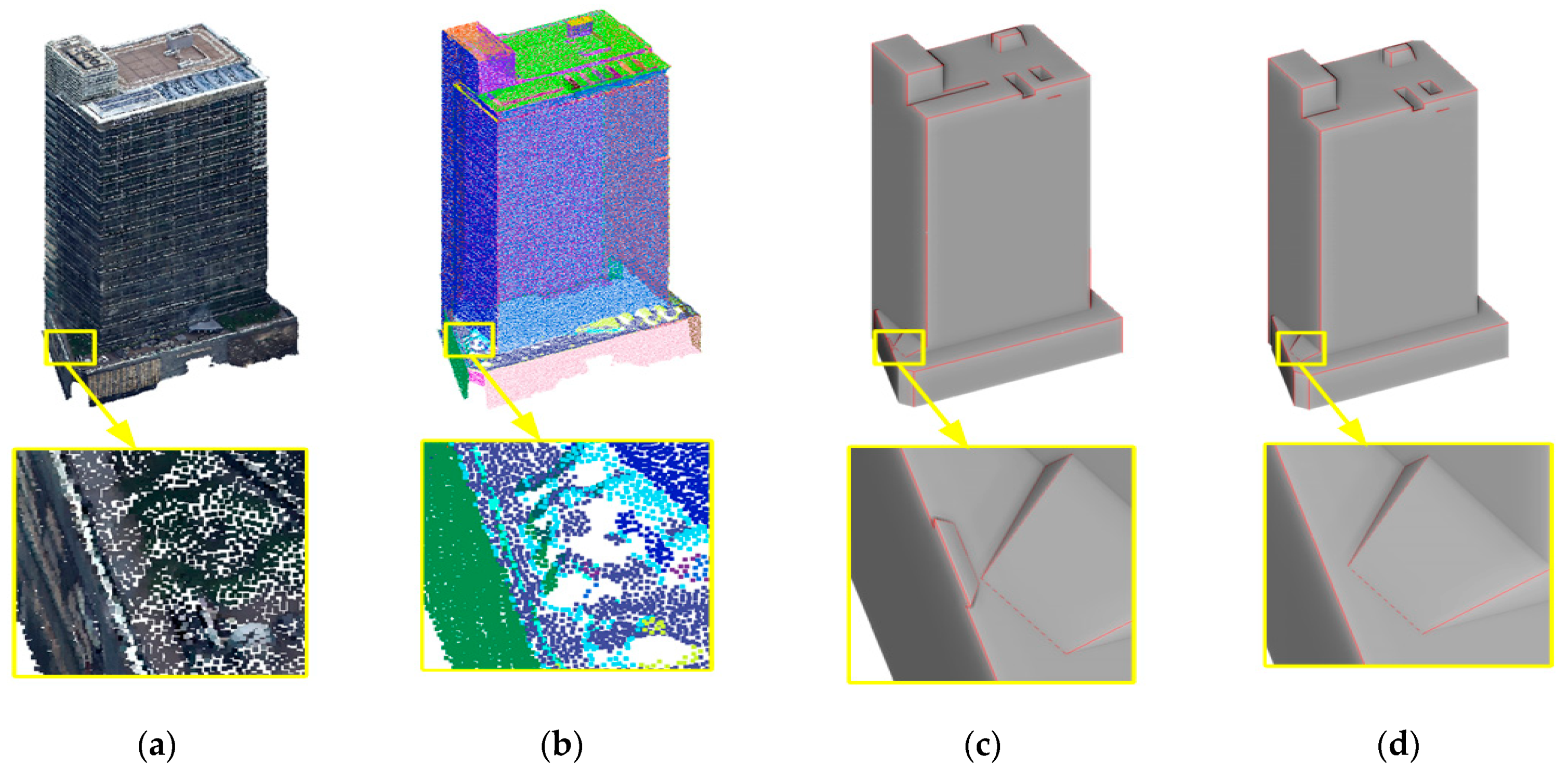

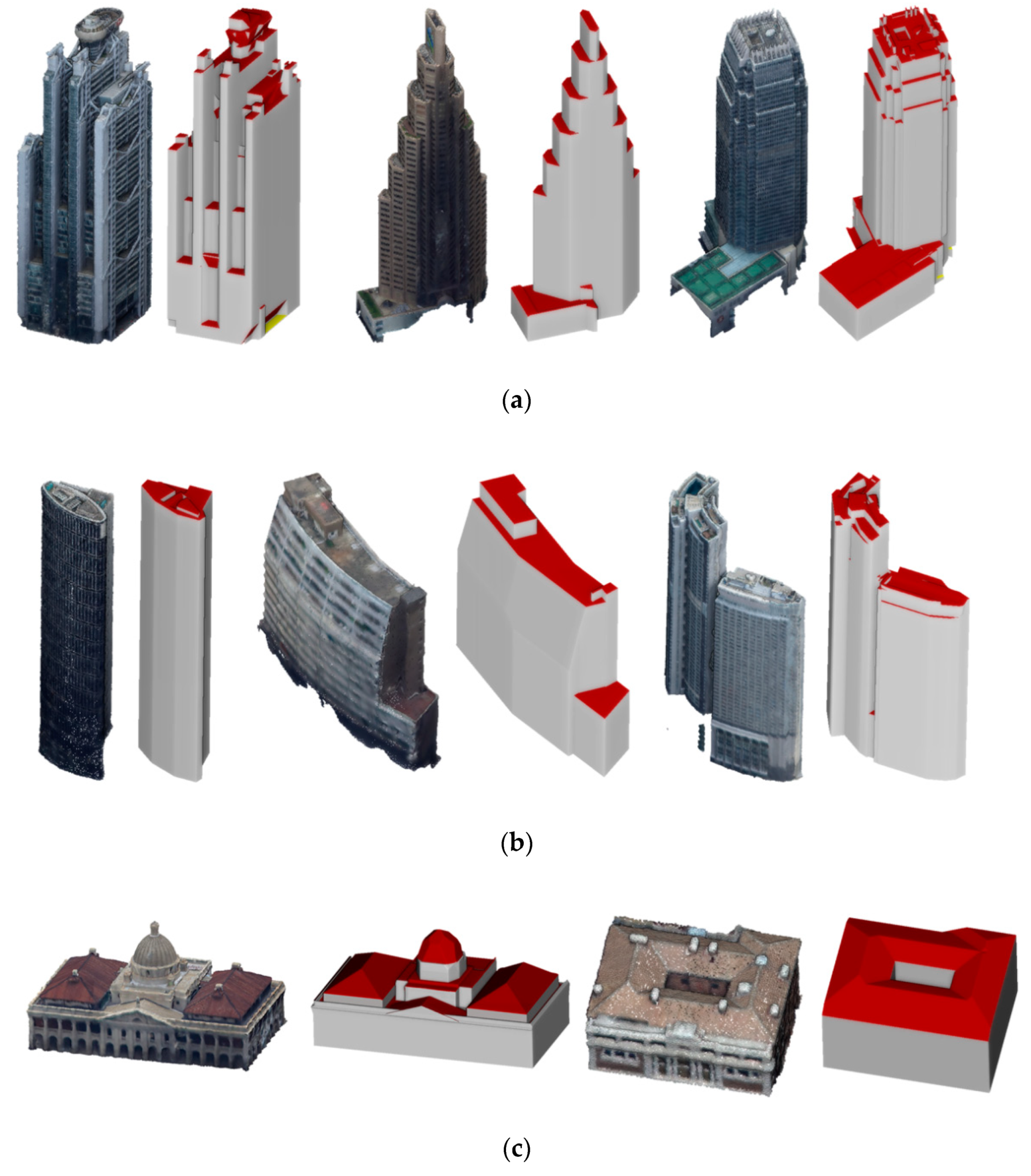

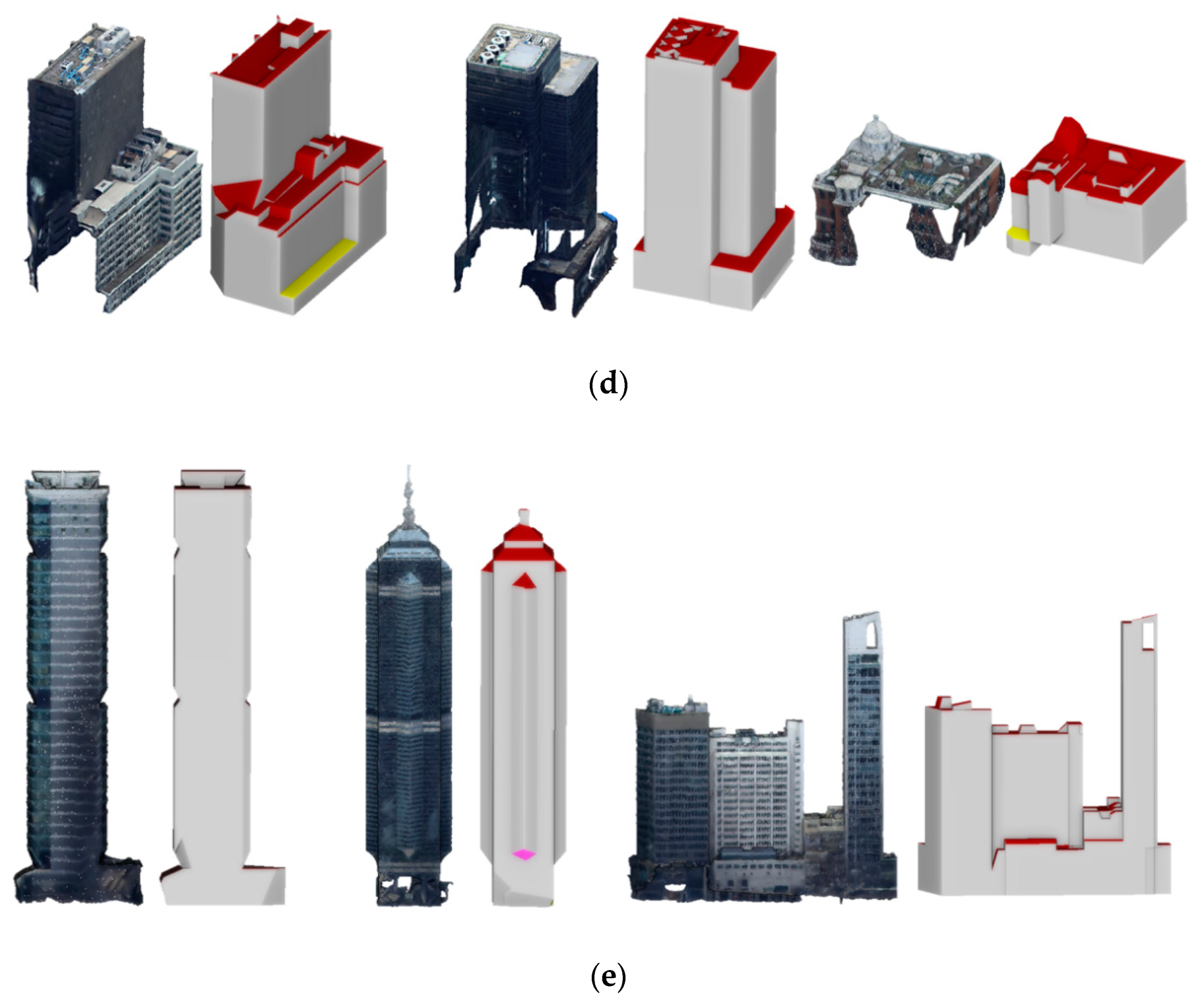

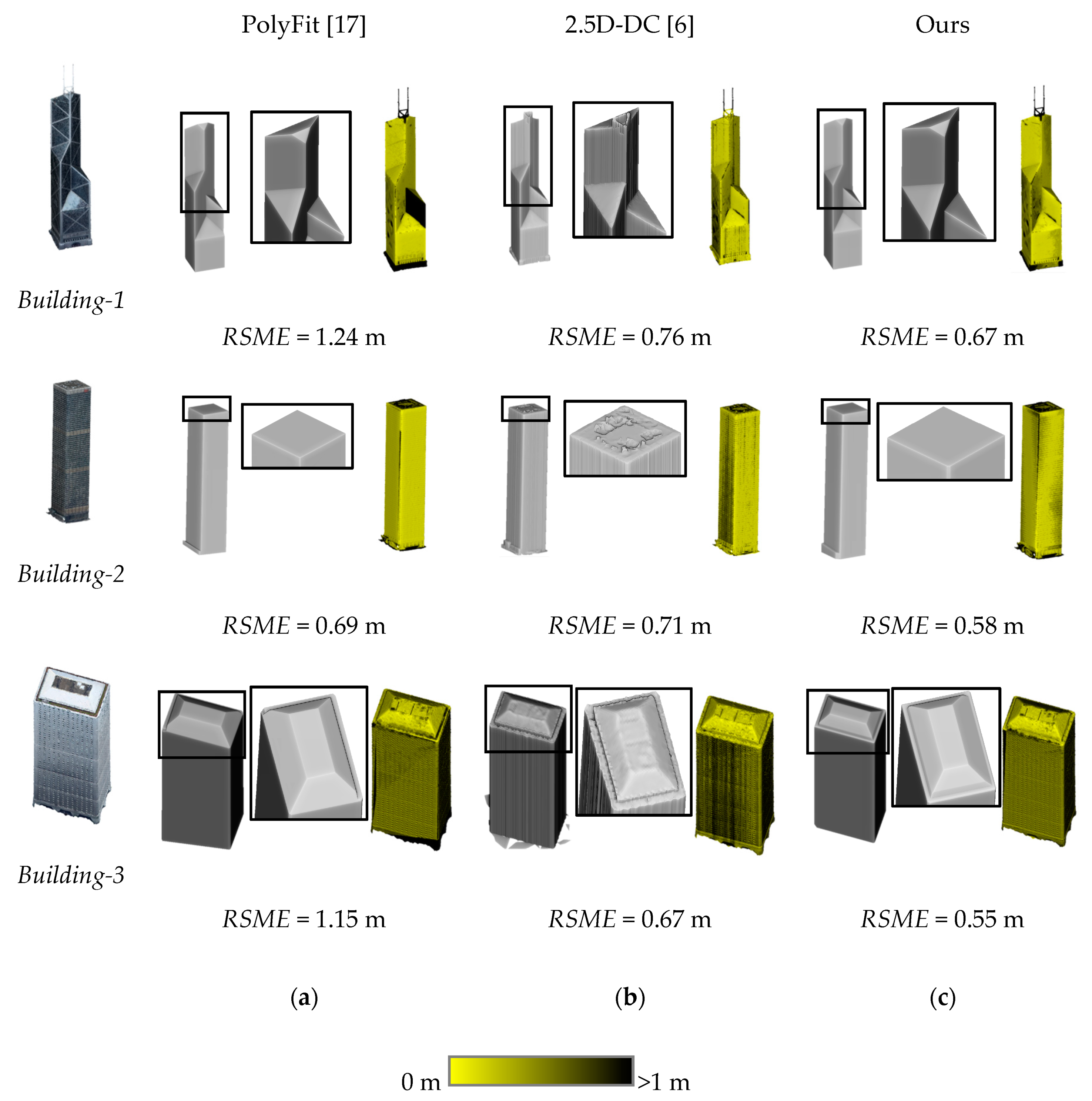

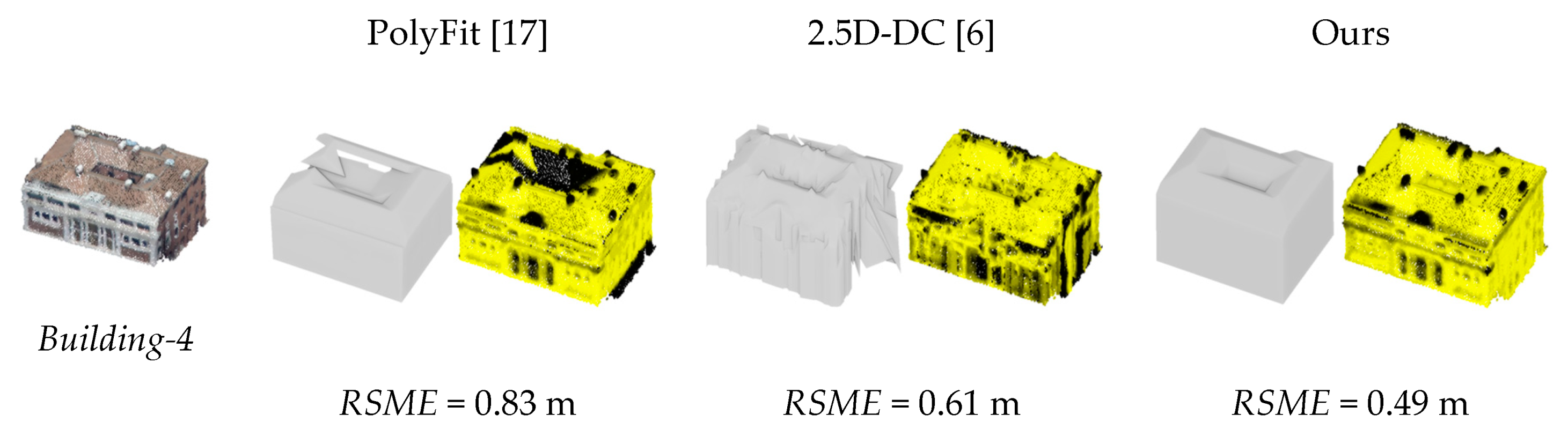

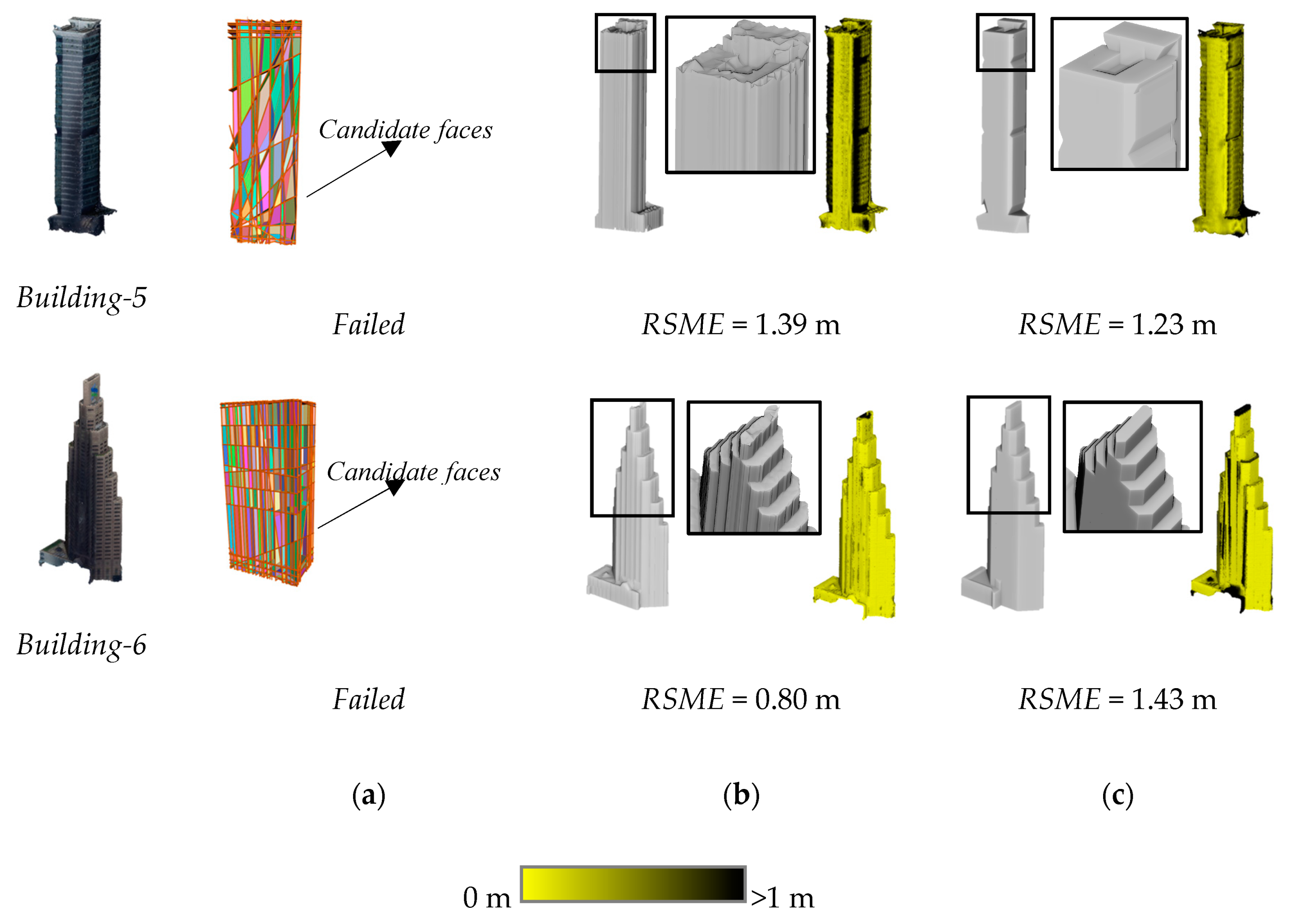

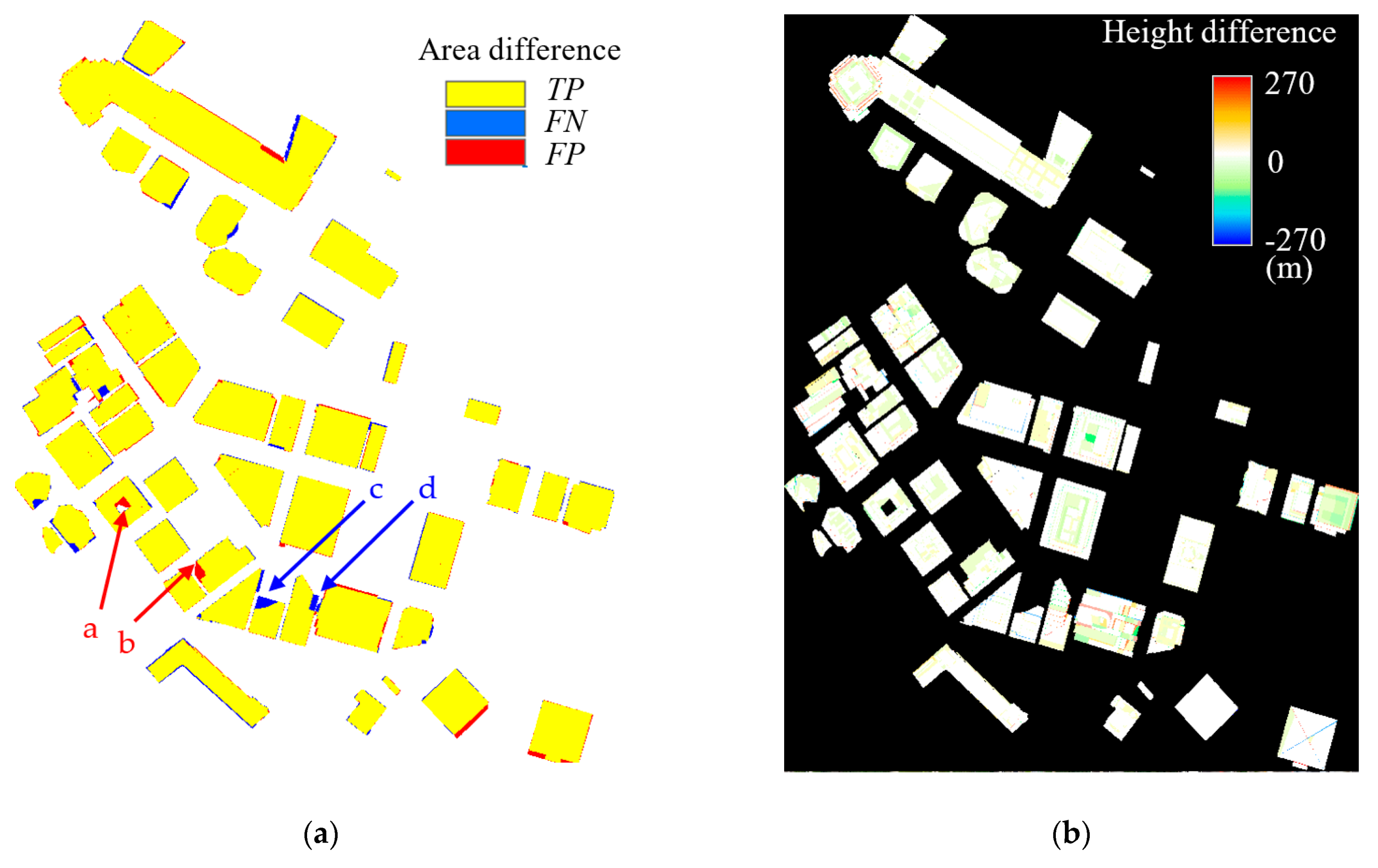

4.2. Qualitative Evaluation of the Reconstruction Results

4.3. Quantitative Evaluation and Comparisons with Previous Methods

4.4. Evaluation of Building Surface Detection and Classification

4.5. Discussion

5. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Lafarge, F.; Mallet, C. Creating large-scale city models from 3D point clouds: A robust approach with hybrid representation. Int. J. Comput. Vis. 2012, 99, 69–85.

- Li, M.; Rottensteiner, F.; Heipke, C. Modelling of buildings from aerial LiDAR point clouds using TINs and label maps. ISPRS J. Photogramm. Remote Sens. 2019, 154, 127–138.

- Zhang, L.; Li, Z.; Li, A.; Liu, F. Large-scale urban point cloud labelling and reconstruction. ISPRS J. Photogramm. Remote Sens. 2018, 138, 86–100.

- Chen, D.; Zhang, L.; Mathiopoulos, P.T.; Huang, X. A methodology for automated segmentation and reconstruction of urban 3-D buildings from ALS point clouds. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2014, 7, 4199–4217.

- Sampath, A.; Shan, J. Segmentation and reconstruction of polyhedral building roofs from aerial lidar point clouds. IEEE Trans. Geosci. Remote Sens. 2010, 48, 1554–1567.

- Zhou, Q.Y.; Neumann, U. 2.5D dual contouring: A robust approach to creating building models from aerial lidar point clouds. In Computer Vision—ECCV 2010; Daniilidis, K., Maragos, P., Paragios, N., Eds.; Lecture Notes in Computer Science; Springer: Berlin/Heidelberg, Germany, 2010; Volume 6313.

- Zhou, Q.Y.; Neumann, U. 2.5D building modelling with topology control. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Providence, RI, USA, 20–25 June 2011; pp. 2489–2496.

- Fritsch, D.; Becher, S.; Rothermel, M. Modeling façade structures using point clouds from dense image matching. In Proceedings of the International Conference on Advances in Civil, Structural and Mechanical Engineering—CSM 2013, Hong Kong, China, 3–4 August 2013; pp. 57–64.

- Zhu, Q.; Li, Y.; Hu, H.; Wu, B. Robust point cloud classification based on multi-level semantic relationships for urban scenes. ISPRS J. Photogramm. Remote Sens. 2017, 129, 86–102.

- Ye, L.; Wu, B. Integrated image matching and segmentation for 3D surface reconstruction in urban areas. Photogramm. Eng. Remote Sens. 2018, 84, 35–48.

- Tutzauer, P.; Becker, S.; Fritsch, D.; Niese, T.; Deussen, O. A study of the human comprehension of building categories based on different 3D building representations. Photogramm. Fernerkund. Geoinf. 2016, 15, 319–333.

- Kada, M.; McKinley, L. 3D building reconstruction from LiDAR based on a cell decomposition approach. Int. Arch. Photogram. Remote Sens. Spat. Inf. Sci. 2009, 38, 47–52.

- Verma, V.; Kumar, R.; Hsu, S. 3D building detection and modelling from aerial LiDAR data. In Proceedings of the 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR’06), New York, NY, USA; 2006; pp. 2213–2220.

- Poullis, C. A framework for automatic modelling from point cloud data. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 2563–2575.

- Xie, L.; Zhu, Q.; Hu, H.; Wu, B.; Li, Y.; Zhang, Y.; Zhong, R. Hierarchical regularization of building boundaries in noisy aerial laser scanning and photogrammetric point clouds. Remote Sens. 2018, 10, 1996–2018.

- Xiong, B.; Oude Elberink, S.; Vosselman, G. A graph edit dictionary for correcting errors in roof topology graphs reconstructed from point clouds. ISPRS J. Photogramm. Remote Sens. 2014, 93, 227–242.

- Nan, L.; Wonka, P. PolyFit: Polygonal surface reconstruction from point clouds. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2353–2361.

- Verdie, Y.; Lafarge, F.; Alliez, P. LOD generation for urban scenes. ACM Trans. Graph. 2015, 34, 15–30.

- Gröger, G.; Plümer, L. CityGML—Interoperable semantic 3D city models. ISPRS J. Photogramm. Remote Sens. 2012, 71, 12–33.

- Kolbe, T.H.; Gröger, G.; Plümer, L. CityGML—3D city models and their potential for emergency response. Geospat. Inf. Technol. Emerg. Response 2008, 5, 257–274.

- Biljecki, F.; Ledoux, H.; Stoter, J. Generation of multi-LOD 3D city models in CityGML with the procedural modelling engine Random3DCity. ISPRS Ann. Photogram. Remote Sens. Spat. Inf. Sci. 2016, 4, 51–59.

- Tang, L.; Ying, S.; Li, L.; Biljecki, F.; Zhu, H.; Zhu, Y.; Yang, F.; Su, F. An application-driven LOD modelling paradigm for 3D building models. ISPRS J. Photogramm. Remote Sens. 2020, 161, 194–207.

- Gomes, A.J.; Teixeira, J.G. Form feature modelling in a hybrid CSG/BRep scheme. Comput Graph. 1991, 15, 217–229.

- Wang, R.; Peethambaran, J.; Chen, D. LiDAR point clouds to 3-D urban models: A review. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2018, 11, 606–627.

- Musialski, P.; Wonka, P.; Aliaga, D.G.; Wimmer, M.; Van Gool, L.; Purgathofer, W. A survey of urban reconstruction. Comput. Graph. Forum 2013, 32, 146–177.

- Haala, N.; Kada, M. An update on automatic 3D building reconstruction. ISPRS J. Photogramm. Remote Sens. 2010, 65, 570–580.

- Huang, H.; Brenner, C.; Sester, M. A generative statistical approach to automatic 3D building roof reconstruction from laser scanning data. ISPRS J. Photogramm. Remote Sens. 2013, 79, 29–43.

- Poullis, C.; You, S.; Neumann, U. Rapid creation of large-scale photorealistic virtual environments. In Proceedings of the 2008 IEEE Virtual Reality Conference, Reno, NV, USA; 2008; pp. 153–160.

- Dorninger, P.; Pfeifer, N. A comprehensive automated 3D approach for building extraction, reconstruction and regularization from airborne laser scanning point clouds. Sensors 2008, 8, 7323–7343.

- Maas, H.G.; Vosselman, G. Two algorithms for extracting building models from raw laser altimetry data. ISPRS J. Photogramm. Remote Sens. 1999, 54, 153–163.

- Vosselman, G.; Dijkman, S. 3D building model reconstruction from point clouds and ground plans. Int. Arch. Photogram. Remote Sens. Spat. Inf. Sci. 2001, 34, 37–44.

- Sohn, G.; Huang, X.; Tao, V. Using a binary space partitioning tree for reconstructing polyhedral building models from airborne lidar data. Photogramm. Eng. Remote Sens. 2008, 74, 1425–1438.

- Jung, J.; Jwa, Y.; Sohn, G. Implicit regularization for reconstructing 3D building rooftop models using airborne LiDAR data. Sensors 2017, 17, 621–648.

- Poullis, C.; You, S. Automatic reconstruction of cities from remote sensor data. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009; pp. 153–160.

- Chen, D.; Wang, R.; Peethambaran, J. Topologically aware building rooftop reconstruction from airborne laser scanning point clouds. IEEE Trans. Geosci. Remote Sens. 2017, 55, 7032–7052.

- Oude Elberink, S. Target graph matching for building reconstruction. Int. Arch. Photogram. Remote Sens. Spat. Inf. Sci. 2009, 38, 49–54.

- Oude Elberink, S.; Vosselman, G. Building reconstruction by target based graph matching on incomplete laser data: Analysis and limitations. Sensors 2009, 9, 6101–6118.

- Li, M.; Nan, L.; Liu, S. Fitting boxes to Manhattan scenes using linear integer programming. Int. J. Digit. Earth 2016, 9, 806–817.

- Li, M.; Wonka, P.; Nan, L. Manhattan-world urban reconstruction from point clouds. In Computer Vision—14th European Conference, ECCV 2016, Proceedings; Sebe, N., Leibe, B., Welling, M., Matas, J., Eds.; Springer: Berlin, Germany, 2016; pp. 54–69.

- Schnabel, R.; Wahl, R.; Klein, R. Efficient RANSAC for point cloud shape detection. Comput. Graph. Forum 2007, 26, 214–226.

- Schrijver, A. Theory of Linear and Integer Programming; John Wiley & Sons: New York, NY, USA, 1998; pp. 229–237.

- Pandi, F.; De Amicis, R.; Piffer, S.; Soave, M.; Cadzow, S.; Boxi, E.G.; D’Hondt, E. Using CityGML to deploy smart-city services for urban ecosystems. Int. Arch. Photogram. Remote Sens. Spat. Inf. Sci. 2013, 4, W1.

- Baig, S.U.; Abdul-Rahman, A. Generalization of buildings within the framework of CityGML. Geo-Spat. Inf. Sci. 2013, 16, 247–255.

- Liang, J.; Edelsbrunner, H.; Fu, P.; Sudhakar, P.V.; Subramaniam, S. Analytical shape computation of macromolecules: I. Molecular area and volume through alpha shape. Proteins 1998, 33, 1–17.

- Gurobi Optimization. Available online: https://www.gurobi.com/ (accessed on 31 December 2020).

- Bentley, ContextCapture. Available online: https://www.bentley.com/en/products/brands/contextcapture (accessed on 31 December 2020).

- Li, Y.; Wu, B.; Ge, X. Structural segmentation and classification of mobile laser scanning point clouds with large variations in point density. ISPRS J. Photogramm. Remote Sens. 2019, 153, 151–165.

- Gruen, A.; Schrotter, G.; Schubiger, S.; Qin, R.; Xiong, B.; Xiao, C.; Li, J.; Ling, X.; Yao, S. An operable system for LoD3 model Generation Using Multi-Source Data and User-Friendly Interactive Editing; ETH Centre, Future Cities Laboratory: Singapore, 2020.

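| Relation Type | Description |
|---|---|
| Horizontality | $P_i$ is horizontal if $\theta(n_i, n_z) < \varepsilon$. |
| Verticality | $P_i$ is vertical if $\theta(n_i, n_z) > \pi/2 - \varepsilon$. |
| Parallelism | $P_i$ and $P_j$ are parallel if $\theta(n_i, n_j) < \varepsilon$. |
| Orthogonality | $P_i$ and $P_j$ are orthogonal if $\theta(n_i, n_j) > \pi/2 - \varepsilon$. |
| Z-symmetry | $P_i$ and $P_j$ are z-symmetric if $\lvert \theta(n_i, n_z) - \theta(n_j, n_z) \rvert < \varepsilon$. |
| XY-parallelism | $P_i$ and $P_j$ are xy-parallel if $\theta(n_i^{xy}, n_j^{xy}) < \varepsilon$, where $n^{xy}$ is the projection of the normal onto the xy-plane. |
| Co-planarity | Two parallel plane primitives $P_i$ and $P_j$ are co-planar if $\lvert d_i - d_j \rvert < d$. |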
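The relation tests in the table above can be sketched as simple predicates on plane normals. This is a minimal illustration, not the authors' implementation. It assumes that θ is the acute angle between two directions (so the sign of a normal is ignored), that each plane is given by a normal `n` and an offset `d` with consistently oriented normals, and that the thresholds `eps` and `d_tol` are user-chosen. All function names are hypothetical.

```python
import numpy as np

NZ = np.array([0.0, 0.0, 1.0])  # direction of the vertical (z) axis


def angle(u, v):
    """Acute angle in radians between directions u and v (orientation ignored; an assumption)."""
    u, v = np.asarray(u, dtype=float), np.asarray(v, dtype=float)
    c = abs(np.dot(u, v)) / (np.linalg.norm(u) * np.linalg.norm(v))
    return float(np.arccos(np.clip(c, 0.0, 1.0)))


def is_horizontal(n, eps):
    # Normal nearly aligned with z: the plane itself is horizontal.
    return angle(n, NZ) < eps


def is_vertical(n, eps):
    # Normal nearly perpendicular to z: the plane itself is vertical.
    return angle(n, NZ) > np.pi / 2 - eps


def are_parallel(ni, nj, eps):
    return angle(ni, nj) < eps


def are_orthogonal(ni, nj, eps):
    return angle(ni, nj) > np.pi / 2 - eps


def are_z_symmetric(ni, nj, eps):
    # Both planes have the same inclination with respect to the z axis.
    return abs(angle(ni, NZ) - angle(nj, NZ)) < eps


def are_coplanar(ni, di, nj, dj, eps, d_tol):
    # Parallel planes whose offsets differ by less than d_tol.
    return are_parallel(ni, nj, eps) and abs(di - dj) < d_tol
```

For example, two gable-roof planes with normals `(1, 0, 1)` and `(-1, 0, 1)` are z-symmetric, because both make a 45° angle with the z axis.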

| Topological-Relation | Output | Operations | ||

|---|---|---|---|---|

| Separated |  | Add Fi to F1. | |

| Connecting |  | Add Fi to F1. | |

| Full-overlapping |  | Remove Fj from F1; add Fij to F2. | |

| Partially-overlapping 1 |  | Remove Fj from F1; add Fij to F2; add Fj’ to F1. | |

| Partially-overlapping 2 |  | Remove Fj from F1; add Fij to F2; add Fi’ to F1. | |

| Partially-overlapping 3 |  | Remove Fj from F1; add Fij to F2; add Fj’, Fj’’ to F1. | |

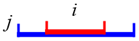

| Partially-overlapping 4 |  | Remove Fj from F1; add Fij to F2; add Fi’, Fi’’ to F1. | |

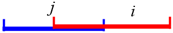

| Contained |  | Remove Fj from F1; add Fij to F2; add Fj’, Fj’’ to F1. | |

| Containing |  | Remove Fj from F1; add Fij to F2; add Fi’, Fi’’ to F1. | |

| Intersecting |  | Remove Fj from F1; add Fij to F2; add Fi’ to F1; and Fj’ to F1. | |

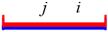

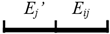

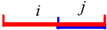

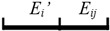

| Topological-Relation | Output | Operations | |

|---|---|---|---|

| Separated |  | Add Ei to E1. |

| Connecting |  | Add Ei to E1. |

| Fully-overlapping |  | Remove Ej from E1; add Eij to E2. |

| Partially-overlapping1 |  | Remove Ej from E1; add Eij to E2; add Ej’ to E1. |

| Partially-overlapping2 |  | Remove Ej from E1; add Eij to E2; add Ei’ to E1. |

| Contained |  | Remove Ej from E1; add Eij to E2; add Ej’, Ej’’ to E1. |

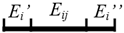

| Containing |  | Remove Ej from E1; add Eij to E2; add Ei’, Ei’’ to E1. |

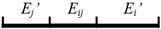

| Intersecting |  | Remove Ej from E1; add Eij to E2; add Ei’ to E1; add Ej’ to E1. |
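The edge operations in the table above can be illustrated for the simplest case of two collinear edges, each described by an interval along their shared line. The sketch below computes the shared part Eij and the leftover pieces of Ei and Ej, then names the relation by which leftovers exist. That naming is inferred from the Operations column only, since the illustrations of the Output column are not available here. In particular, treating "Intersecting" as a staggered overlap is an assumption. The function name `classify_edges` and the tolerance `tol` are hypothetical.

```python
def classify_edges(ei, ej, tol=1e-9):
    """Relate collinear edge ei to ej, each given as (start, end) along the shared line.

    Returns (relation, overlap, remainders_of_ei, remainders_of_ej).
    """
    a0, a1 = sorted(ei)
    b0, b1 = sorted(ej)
    lo, hi = max(a0, b0), min(a1, b1)
    if hi < lo - tol:
        return "separated", None, [], []
    if hi - lo <= tol:
        return "connecting", None, [], []  # the edges only touch at an end point

    overlap = (lo, hi)  # plays the role of Eij
    # Pieces of each edge that lie outside the overlap (Ei', Ei'' and Ej', Ej'').
    rem_i = [(s, e) for s, e in ((a0, lo), (hi, a1)) if e - s > tol]
    rem_j = [(s, e) for s, e in ((b0, lo), (hi, b1)) if e - s > tol]

    # Name the relation by the number of leftover pieces on each side
    # (an interpretation of the Operations column, not a definition from the paper).
    names = {
        (0, 0): "fully-overlapping",
        (0, 1): "partially-overlapping 1",
        (1, 0): "partially-overlapping 2",
        (0, 2): "contained",
        (2, 0): "containing",
        (1, 1): "intersecting",
    }
    return names[(len(rem_i), len(rem_j))], overlap, rem_i, rem_j
```

In every overlapping case the returned `overlap` corresponds to the piece added to E2, and the remainder lists correspond to the pieces that go back into E1.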

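| Normal Direction (angle to z axis) | Normal Direction (orientation) | Elevation Information | Surface Type |
|---|---|---|---|
| $\lvert \theta(n', n_z) \rvert \geq \pi/2 - \varepsilon$ | - | - | WallSurface |
| $\varepsilon < \lvert \theta(n', n_z) \rvert < \pi/2 - \varepsilon$ | $n' \cdot n_z > 0$ | - | RoofSurface |
| $\varepsilon < \lvert \theta(n', n_z) \rvert < \pi/2 - \varepsilon$ | $n' \cdot n_z < 0$ | - | WallSurface |
| $\lvert \theta(n', n_z) \rvert \leq \varepsilon$ | $n' \cdot n_z > 0$ | $< z_{min} + (z_{max} - z_{min})/3$ and $< 10$ m | OuterFloorSurface |
| $\lvert \theta(n', n_z) \rvert \leq \varepsilon$ | $n' \cdot n_z > 0$ | otherwise | RoofSurface |
| $\lvert \theta(n', n_z) \rvert \leq \varepsilon$ | $n' \cdot n_z < 0$ | $< z_{min} + d$ | GroundSurface |
| $\lvert \theta(n', n_z) \rvert \leq \varepsilon$ | $n' \cdot n_z < 0$ | otherwise | OuterCeilingSurface |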
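The classification rules in the table above can be sketched as a small decision function. This is an illustration under several assumptions. θ is taken as the acute angle between the surface normal and the z axis. The condition written as "n’, nz > 0" is read as the sign of the dot product, that is, whether the normal points upward. The 10 m bound is applied literally to the surface elevation `z`. The default values of `eps` and `d` are placeholders, not values from the paper.

```python
import math


def classify_surface(normal, z, z_min, z_max, eps=math.radians(5.0), d=0.5):
    """Assign a CityGML surface type from a surface normal and elevation z.

    z_min and z_max are the lowest and highest elevations of the building.
    eps (angle tolerance) and d (ground tolerance) are hypothetical defaults.
    """
    nx, ny, nz = normal
    cos_up = nz / math.sqrt(nx * nx + ny * ny + nz * nz)  # n' . n_z for a unit normal
    theta = math.acos(min(1.0, abs(cos_up)))              # acute angle to the z axis

    if theta >= math.pi / 2 - eps:      # near-horizontal normal: vertical surface
        return "WallSurface"

    upward = cos_up > 0
    if theta > eps:                     # slanted surface
        return "RoofSurface" if upward else "WallSurface"

    # Near-vertical normal: horizontal surface, decided by elevation.
    if upward:
        low = z < z_min + (z_max - z_min) / 3 and z < 10.0
        return "OuterFloorSurface" if low else "RoofSurface"
    return "GroundSurface" if z < z_min + d else "OuterCeilingSurface"
```

With these rules, a flat upward-facing surface at the top of a 30 m building is labelled as a roof, while the same surface 3 m above the base is labelled as an outer floor.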

| Predicted (rows) / GT (columns) | GroundSurface | WallSurface | RoofSurface | OuterFloorSurface | OuterCeilingSurface | Precision |
|---|---|---|---|---|---|---|
| GroundSurface | 105 | 0 | 0 | 0 | 0 | 100% |
| WallSurface | 0 | 4170 | 242 | 0 | 0 | 95% |
| RoofSurface | 0 | 104 | 2735 | 0 | 0 | 96% |
| OuterFloorSurface | 0 | 0 | 0 | 24 | 0 | 100% |
| OuterCeilingSurface | 0 | 0 | 0 | 0 | 241 | 100% |
| Recall | 100% | 98% | 92% | 100% | 100% |  |
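The Precision column and Recall row can be re-derived from the counts in the table above. The sketch below does so with NumPy, dividing each diagonal count by its row sum for precision and by its column sum for recall, which is the convention that reproduces the reported percentages. The variable names are illustrative.

```python
import numpy as np

labels = ["GroundSurface", "WallSurface", "RoofSurface",
          "OuterFloorSurface", "OuterCeilingSurface"]

# Counts copied from the table, in the same row and column order.
cm = np.array([
    [105,    0,    0,  0,   0],
    [  0, 4170,  242,  0,   0],
    [  0,  104, 2735,  0,   0],
    [  0,    0,    0, 24,   0],
    [  0,    0,    0,  0, 241],
])

correct = np.diag(cm)
precision = correct / cm.sum(axis=1)  # diagonal over row sums
recall = correct / cm.sum(axis=0)     # diagonal over column sums

for name, p, r in zip(labels, precision, recall):
    print(f"{name:20s} precision={p:.0%} recall={r:.0%}")
```

The only confusions are between walls and roofs: 242 surfaces in the WallSurface row fall under the RoofSurface column, and 104 in the RoofSurface row fall under the WallSurface column.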

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Li, Y.; Wu, B. Relation-Constrained 3D Reconstruction of Buildings in Metropolitan Areas from Photogrammetric Point Clouds. Remote Sens. 2021, 13, 129. https://doi.org/10.3390/rs13010129
