Vision-Based Decision Support for Rover Path Planning in the Chang’e-4 Mission

Abstract

1. Introduction

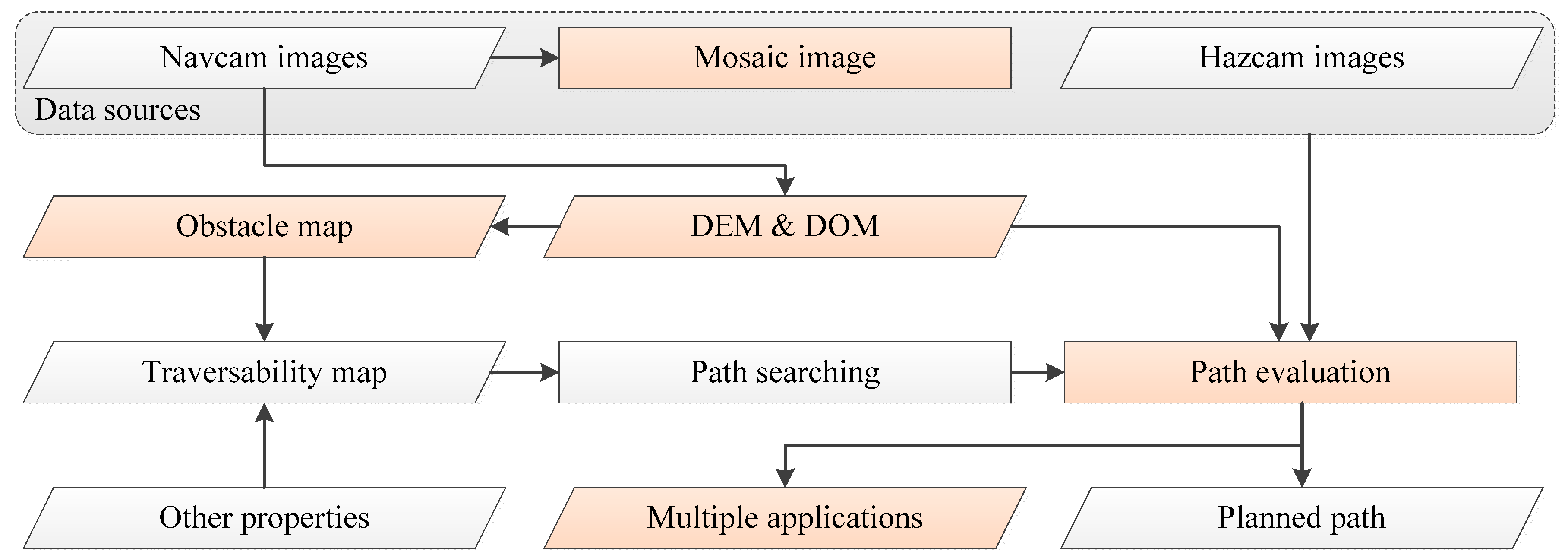

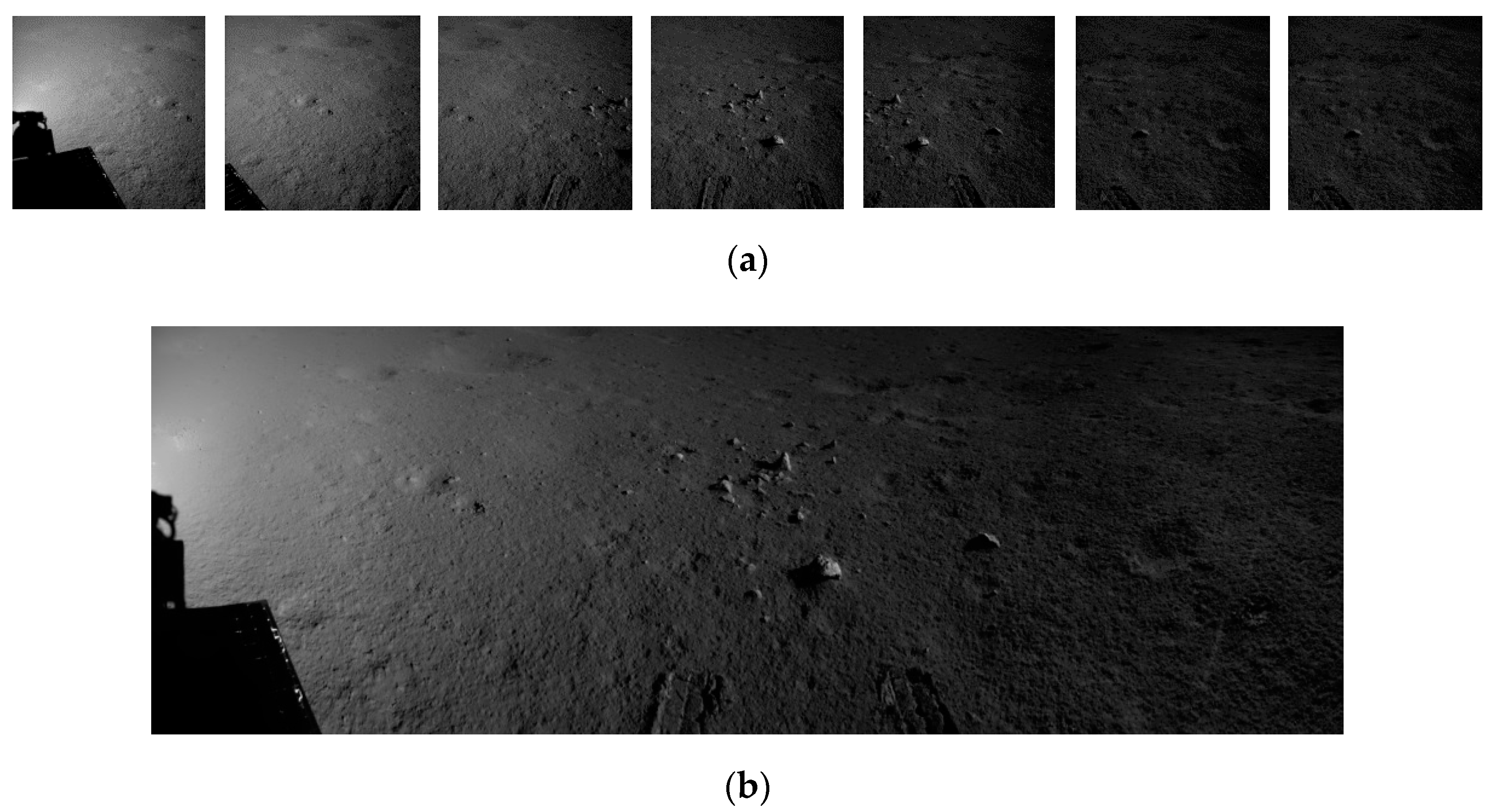

2. Data

3. Methodology

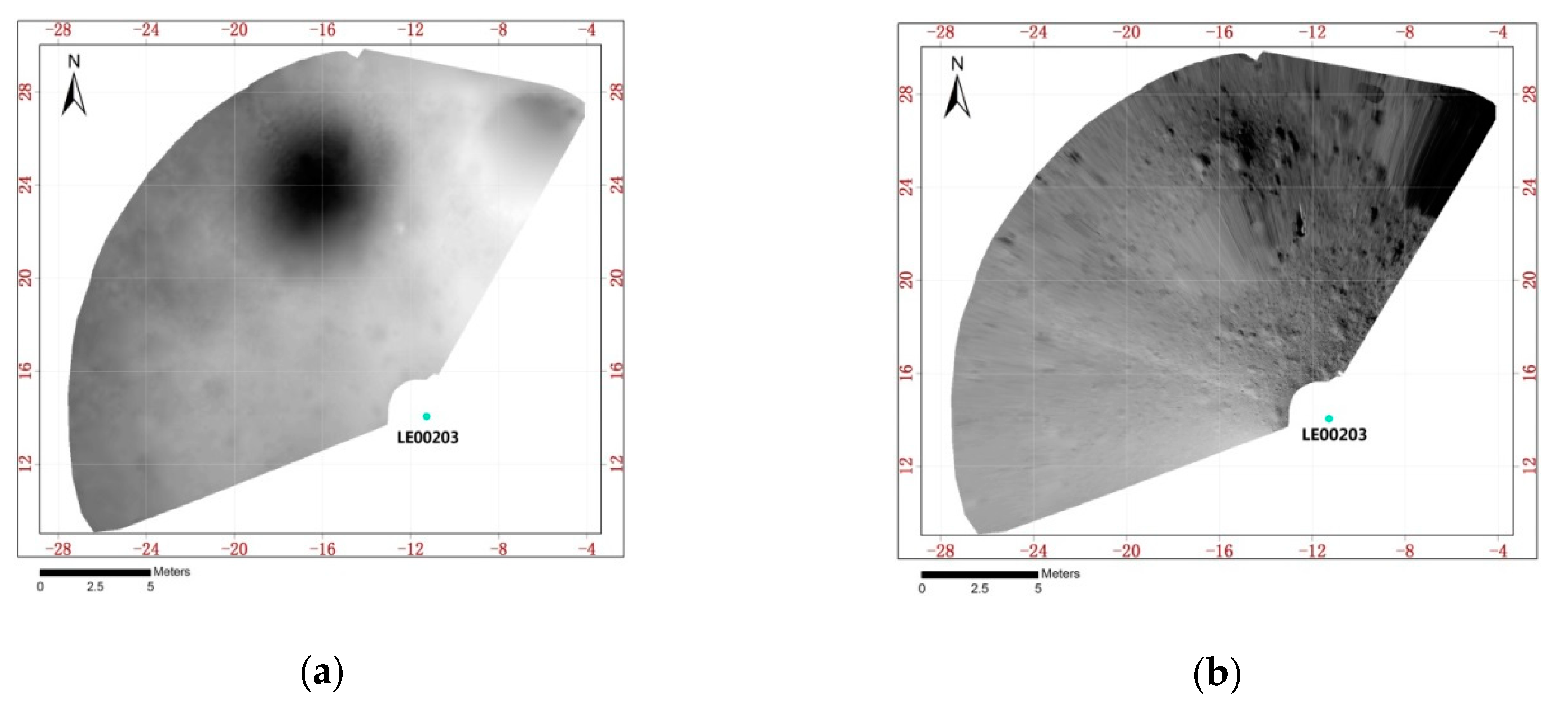

3.1. DEM and Obstacle Map Generation

3.2. Path Searching

3.3. Path Evaluation

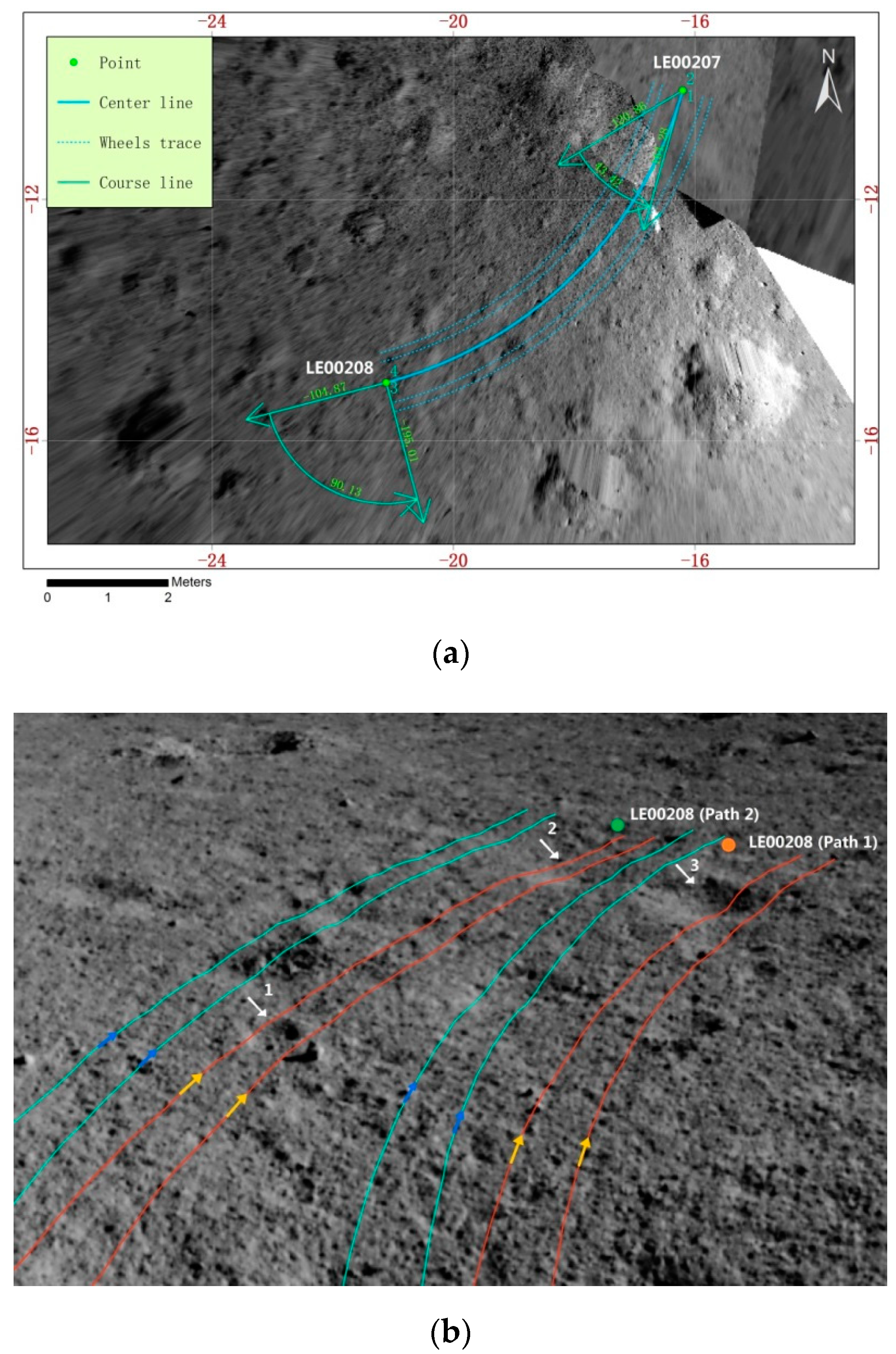

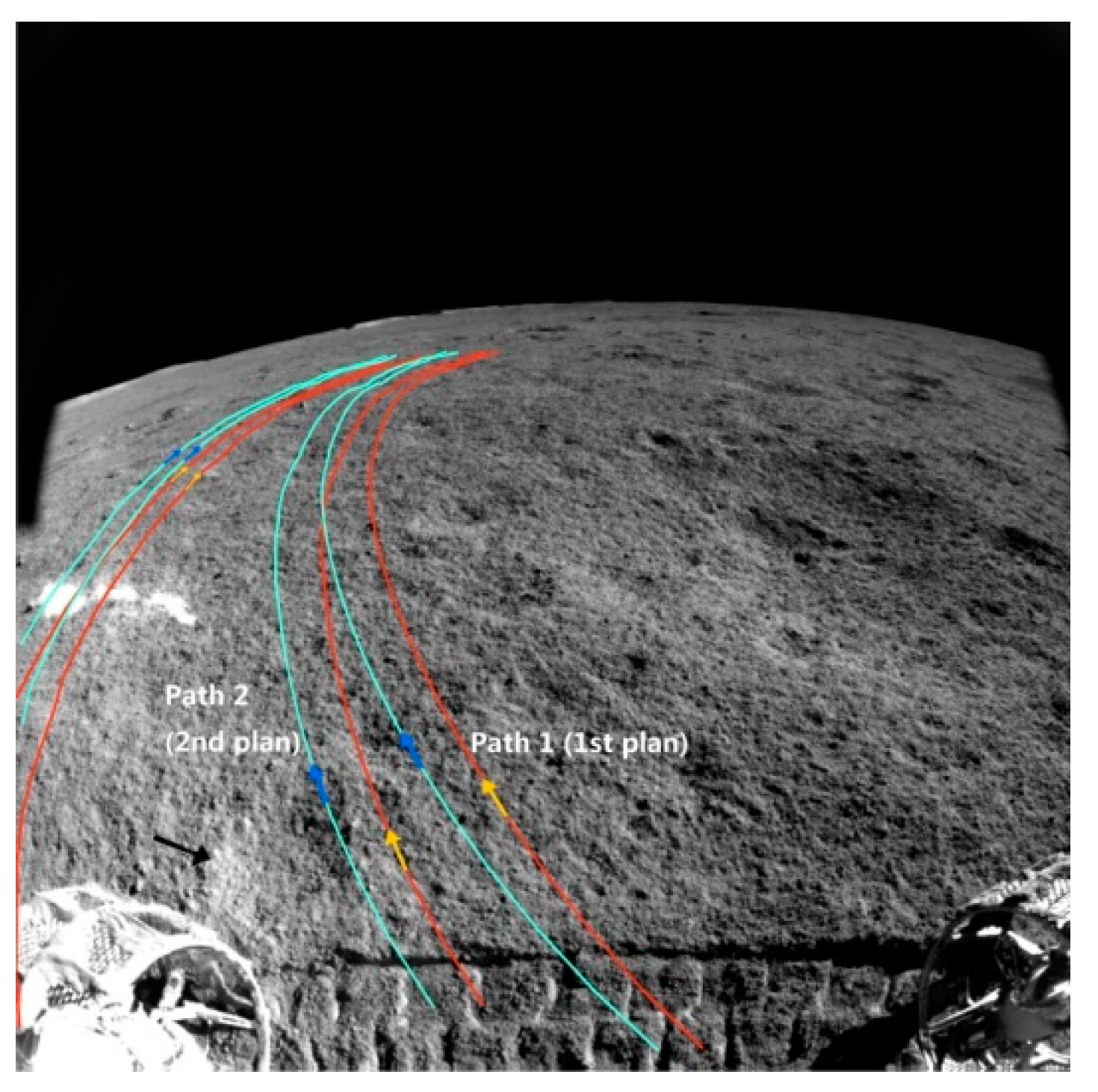

3.3.1. Traditional Path Evaluation in Overhead View

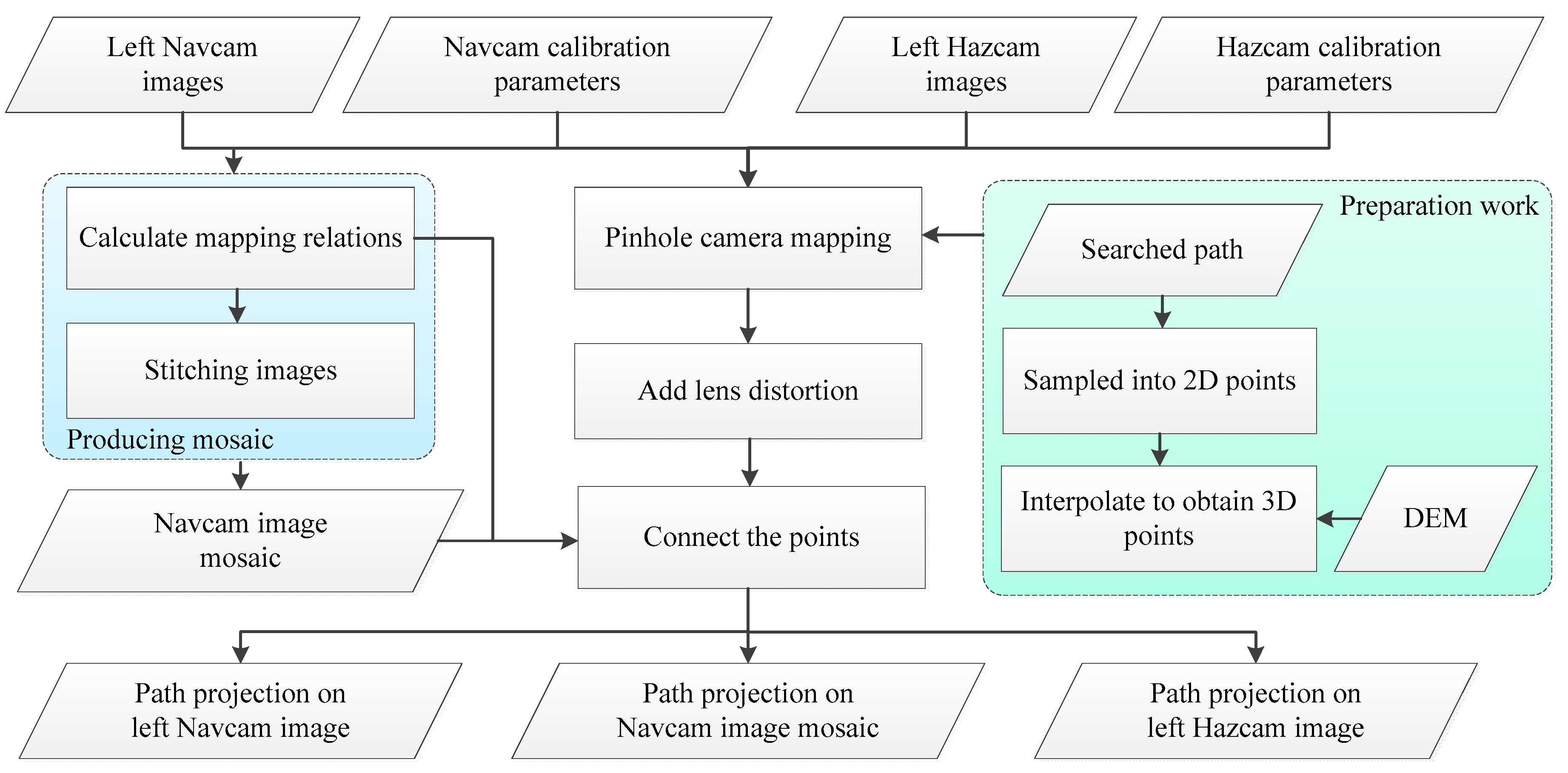

3.3.2. Path Projection into Perspective Views

1. Project on DOM in 3D perspective view

2. Project back on Hazcam and Navcam images

   (1) Original left Navcam image

   (2) Mosaic image stitched by a sequence of original left Navcam images

   (3) Original left Hazcam image
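The back-projection step listed above maps 3D path points into image pixel coordinates. A minimal sketch of that idea follows, assuming an ideal pinhole camera with no lens distortion. The function names, the camera-frame convention (x right, y down, z forward), and all parameters are illustrative assumptions rather than the mission software. A wide-angle camera such as the Hazcam would additionally need a distortion model.

```python
def project_point(point, rotation, translation, f_px, cx, cy):
    """Project one 3D point into pixel coordinates with a pinhole model.

    rotation is a 3x3 nested list and translation a length-3 list; together
    they map site coordinates into the camera frame (x right, y down,
    z forward). Returns None when the point lies behind the camera.
    All names here are hypothetical and chosen for illustration only.
    """
    cam = [
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    ]
    if cam[2] <= 0.0:
        return None
    return (f_px * cam[0] / cam[2] + cx, f_px * cam[1] / cam[2] + cy)


def project_path(path, rotation, translation, f_px, cx, cy, width, height):
    """Keep only the path points that land inside the image frame."""
    pixels = []
    for point in path:
        uv = project_point(point, rotation, translation, f_px, cx, cy)
        if uv is not None and 0 <= uv[0] < width and 0 <= uv[1] < height:
            pixels.append(uv)
    return pixels


# Example with an identity pose and made-up intrinsics (assumed values).
IDENTITY = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
path_points = [[0, 0, 5], [1, 0, 5], [0, 0, -1]]
print(project_path(path_points, IDENTITY, [0, 0, 0], 1000.0, 512.0, 512.0, 1024, 1024))
```

Points behind the camera or outside the frame are dropped instead of being clamped, so a path overlay drawn from the result shows only the visible portion of the planned route.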

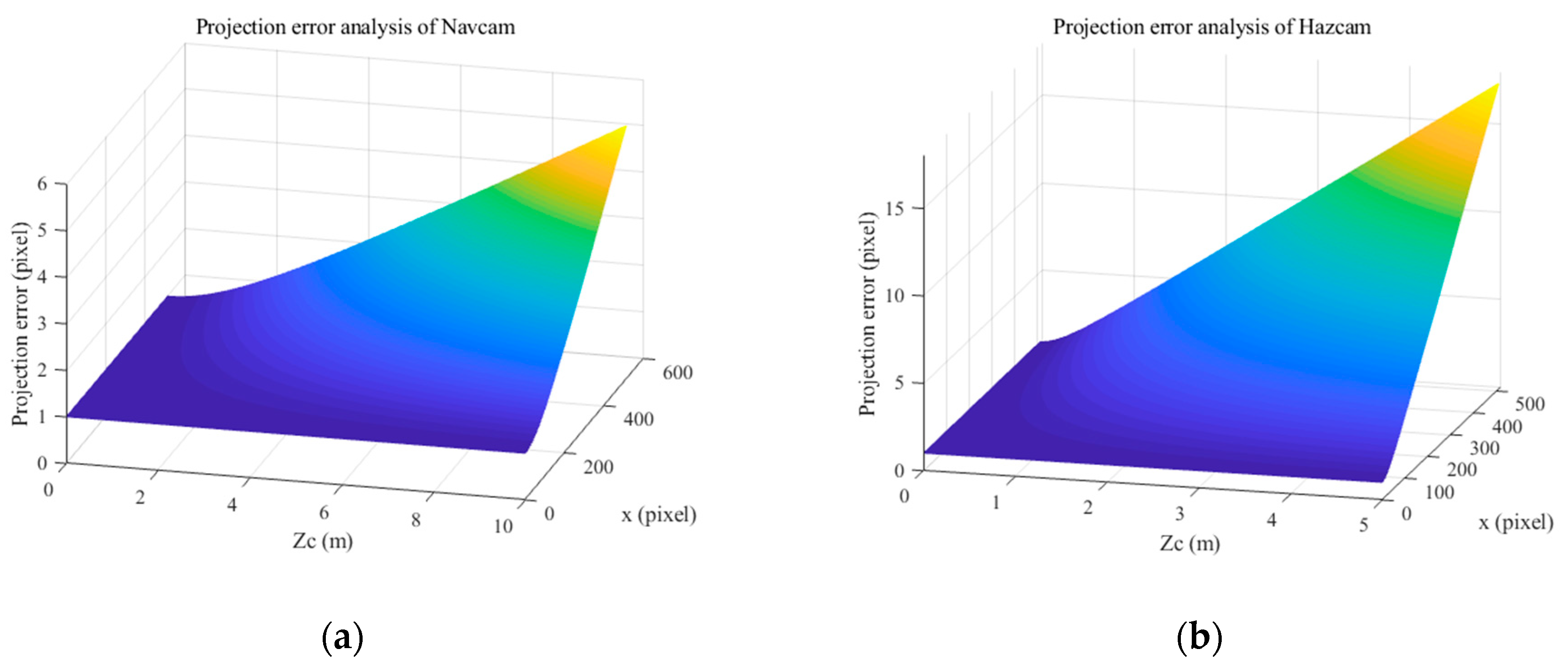

3.3.3. Error Analysis

4. Results

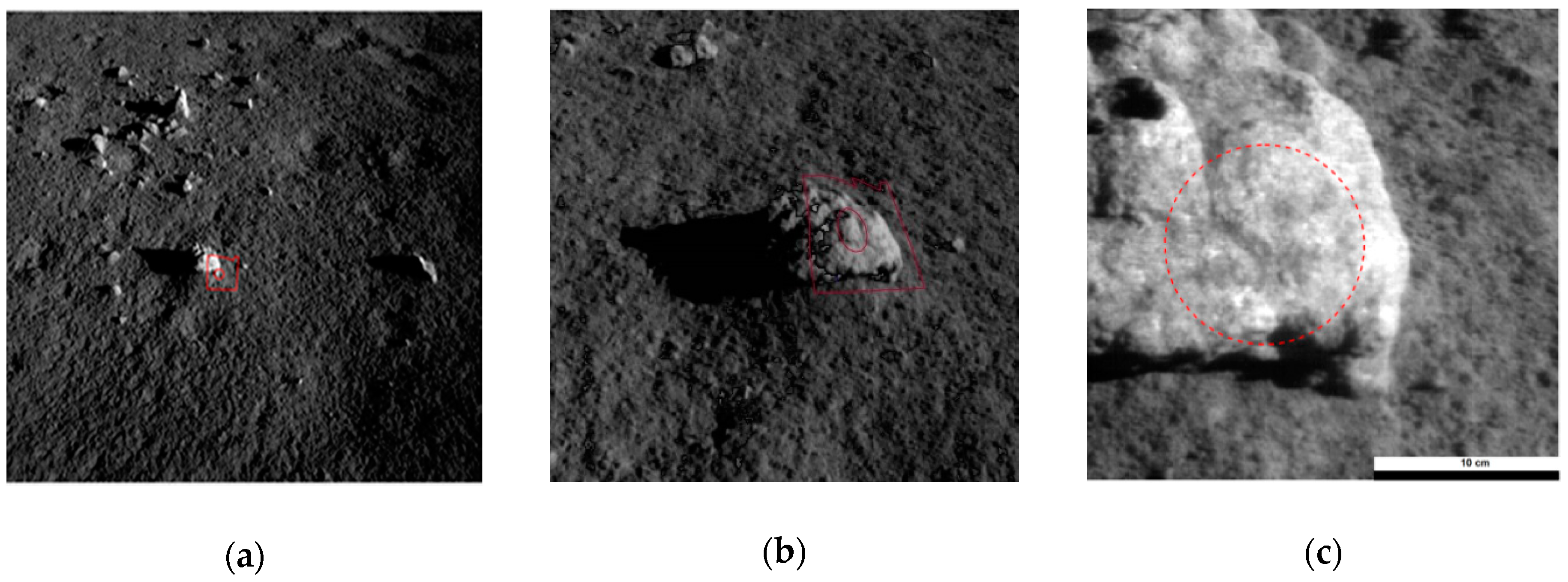

4.1. Obstacle Map Generation and Support of Path Searching

4.2. Evaluation of the Path

4.3. Error Analysis Results

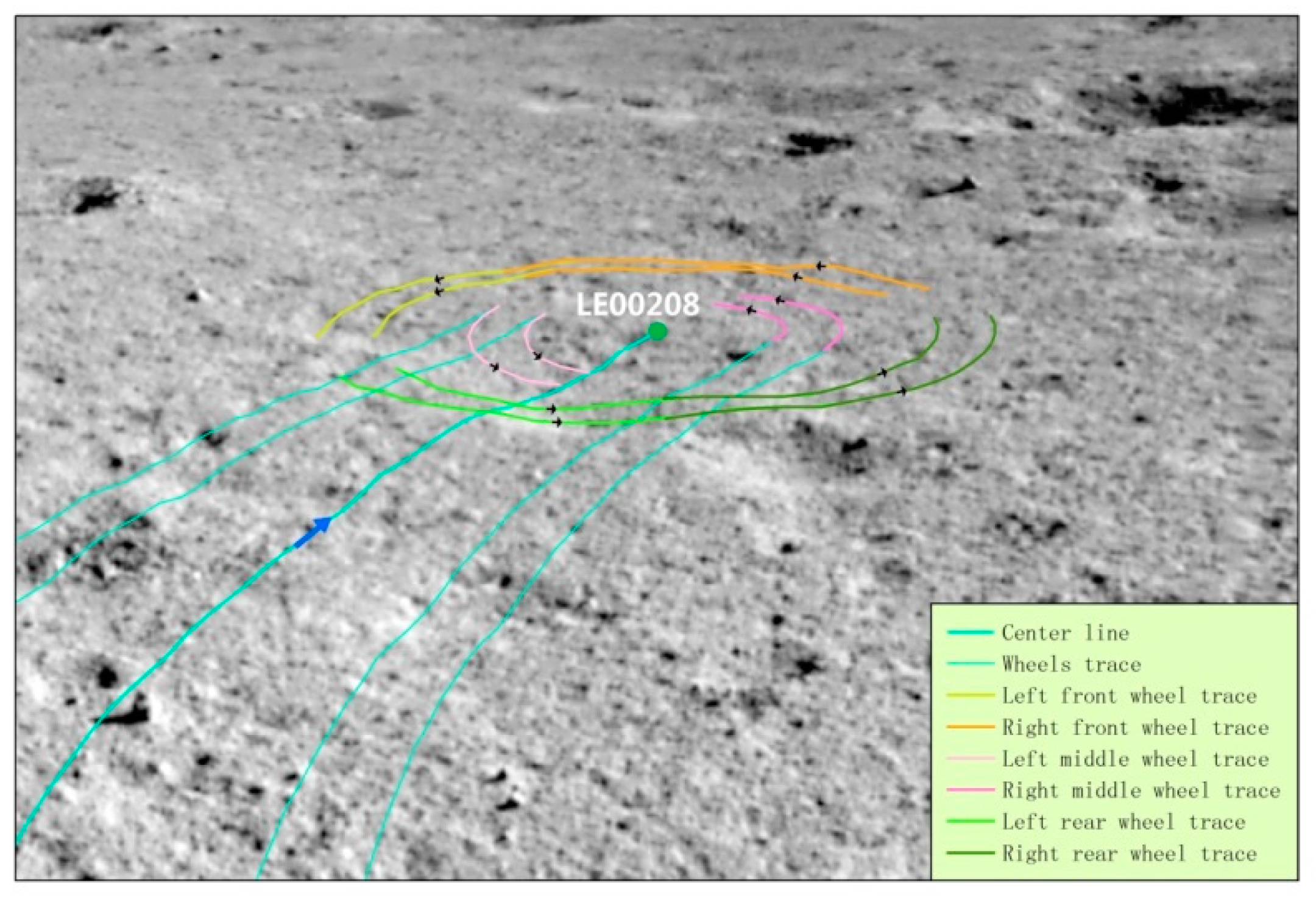

5. Discussion

5.1. Support for Other Applications

1. Wheels trace prediction of rover spin

2. Imaging area prediction of other sensors

5.2. Error Source Analysis

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Payload | FOV (°) | Focal Length (mm) | Baseline (mm) | Image Size (pixel × pixel) | Working Mode |
|---|---|---|---|---|---|
| Navcam | 46.6 | 17.7 | 270 | 1024 × 1024 | Fixed roll angle and changeable pitch and yaw angles, imaging along yaw angle at an interval of 20 degrees. Power on every waypoint. |
| Hazcam | 120 | 7.3 | 100 | 1024 × 1024 | Fixed on the front panel of the rover. Power on at certain waypoints. |
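As a rough illustration of how the Navcam values in the table relate to stereo measurement geometry, the sketch below derives a focal length in pixels, the angular overlap between adjacent frames, and a first-order range error. It rests on several assumptions that are not stated in the table: the FOV is treated as the full angle across the 1024-pixel image width, the camera is an ideal pinhole, and the disparity precision of 0.3 pixel is a placeholder value. The function names are hypothetical.

```python
import math

# Navcam values as listed in the payload table.
FOV_DEG = 46.6
IMAGE_WIDTH_PX = 1024
BASELINE_M = 0.270  # 270 mm
YAW_STEP_DEG = 20.0


def focal_length_px(fov_deg, width_px):
    """Focal length in pixels for an ideal pinhole camera (assumed model)."""
    return (width_px / 2.0) / math.tan(math.radians(fov_deg) / 2.0)


def range_error_m(range_m, baseline_m, f_px, disparity_sigma_px):
    """First-order stereo range error: sigma_Z = Z^2 / (B * f) * sigma_d."""
    return range_m ** 2 / (baseline_m * f_px) * disparity_sigma_px


f_px = focal_length_px(FOV_DEG, IMAGE_WIDTH_PX)
overlap_deg = FOV_DEG - YAW_STEP_DEG  # angular overlap of adjacent frames

print(f"focal length: {f_px:.1f} px, overlap: {overlap_deg:.1f} deg")
for z in (5.0, 10.0, 20.0):
    # 0.3 px matching precision is an assumed value, not taken from the paper.
    sigma = range_error_m(z, BASELINE_M, f_px, 0.3)
    print(f"Z = {z:4.1f} m -> sigma_Z = {sigma:.3f} m")
```

Because the error term grows with the square of the range, doubling the distance to a terrain point quadruples the expected range error under this model.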

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Wang, Y.; Wan, W.; Gou, S.; Peng, M.; Liu, Z.; Di, K.; Li, L.; Yu, T.; Wang, J.; Cheng, X. Vision-Based Decision Support for Rover Path Planning in the Chang’e-4 Mission. Remote Sens. 2020, 12, 624. https://doi.org/10.3390/rs12040624
