Building Extraction Using Orthophotos and Dense Point Cloud Derived from Visual Band Aerial Imagery Based on Machine Learning and Segmentation

Abstract

1. Introduction

2. Materials and Methods

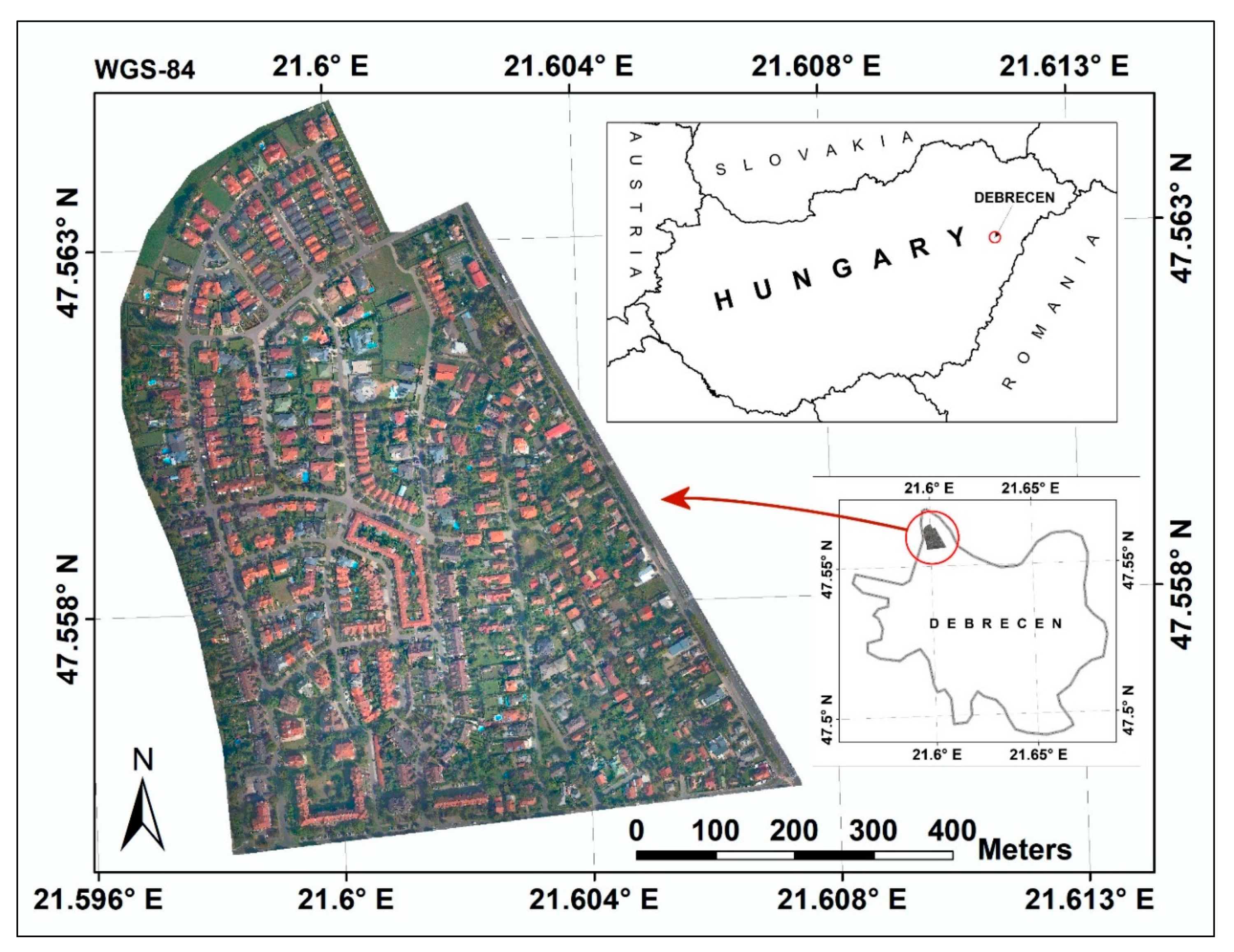

2.1. Study Area

2.2. Data

2.3. Point Cloud Classification and Derivation of Morphometric Variables

2.4. RGB Indices

2.5. Texture Information

2.6. OBIA-Based Segmentation

2.7. Variable Data Sets and Data Preparation

2.8. Classification Models

2.8.1. Random Forest

2.8.2. Support Vector Machine

2.8.3. K-Nearest Neighbor

2.8.4. Multiple Adaptive Regression Splines

2.8.5. Partial Least Squares

2.8.6. Accuracy Assessment

3. Results

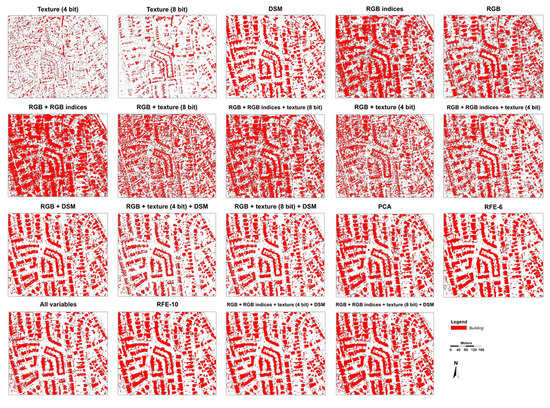

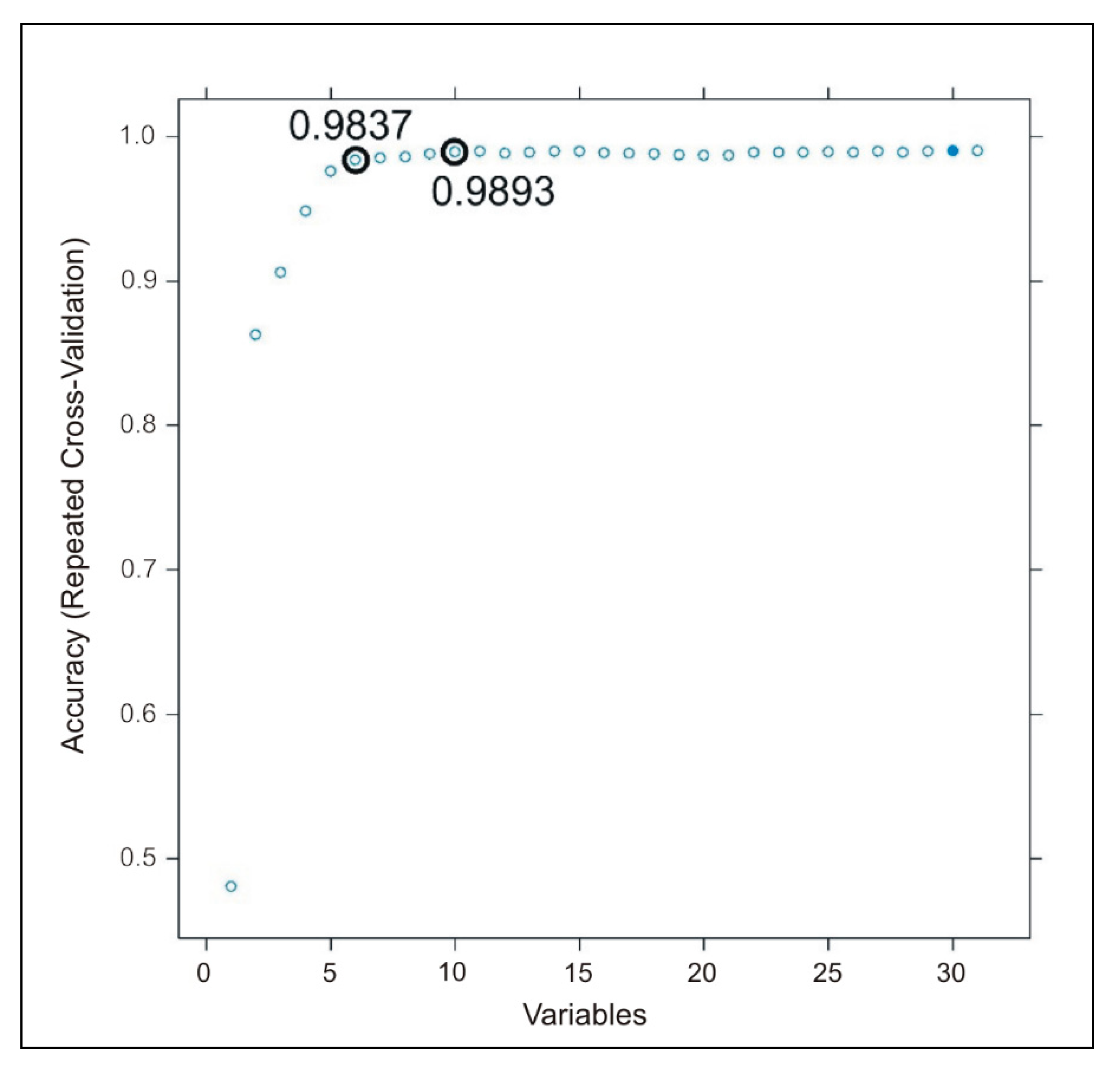

3.1. Results of Data Preparation

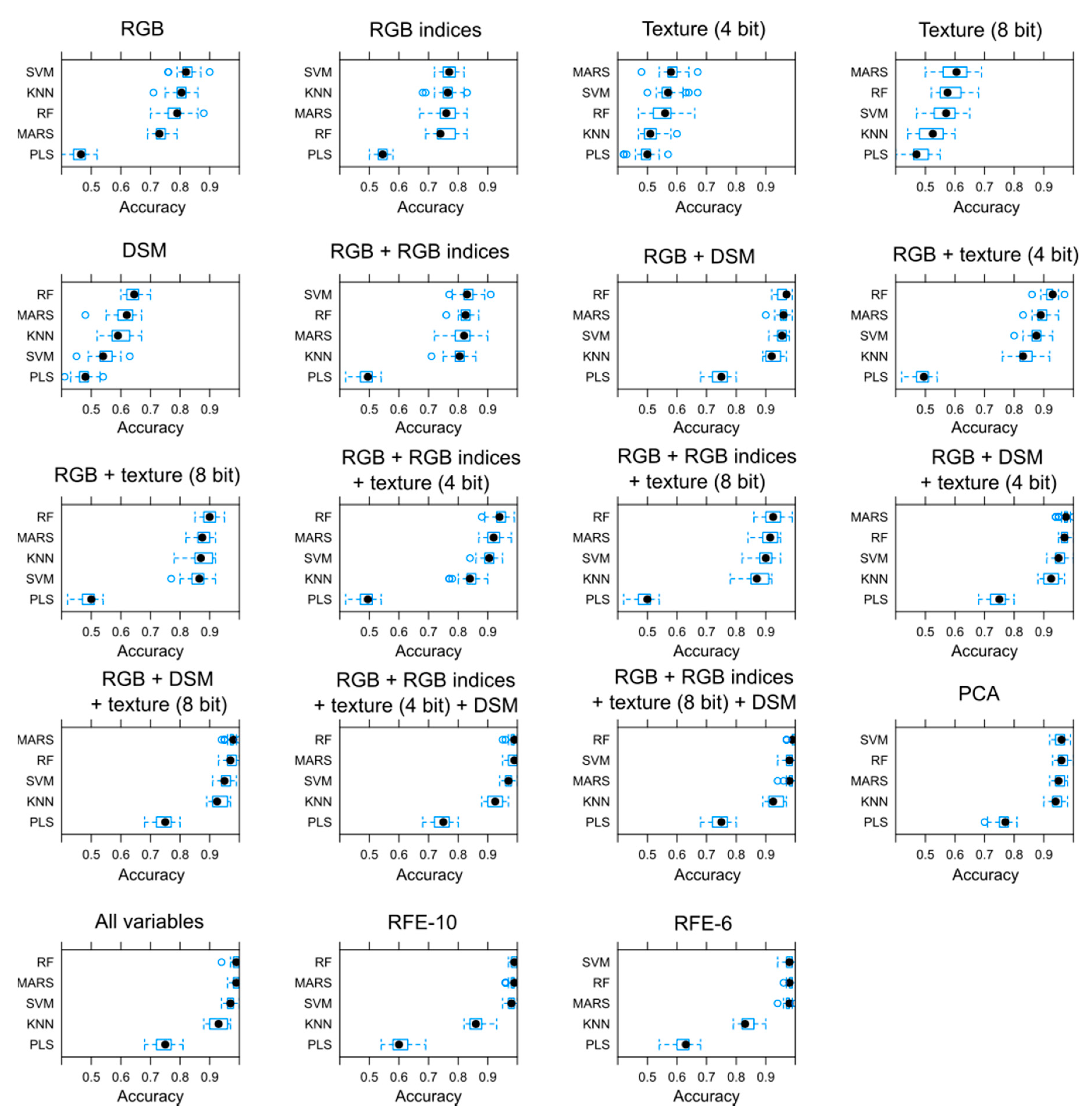

3.2. Classification Accuracies Using Different Sets of Input Variables and Classifiers

3.3. Accuracy Assessment on Category Level

3.4. Accuracy Assessment with the ISPRS Benchmark Dataset

4. Discussion

5. Conclusions

- -

- Classification performance using only one group of indices (i.e., RGB bands, texture, RGB indices or morphometric indices) varied in a wide range. Texture information was the weakest, worse when only RGB bands were used. Morphometric indices performed better on class level than on overall because DSM and its derivatives added valuable information especially in case of buildings. RGB indices had a relevant contribution in the improvement but on class level it was worse than the overall accuracy.

- -

- Combination of different group of indices ensured higher accuracy both on overall and class level. Best option is to use the morphometric indices with the RGB bands, it had >90% OA, PA, and UA.

- -

- Combining three types of indices provided the most efficient models, having >95% OA, PA, and UA. The RGB bands, RGB indices, morphometric indices and the 4 bit texture information had the largest (100% UA and 98% PA). In addition, 4 bit and 8 bit texture information had small differences in these combinations, and the most important to avoid their common application (both versions decrease the accuracy).

- -

- Model evaluation should contain the UA and PA values, and having several model solutions, visualization of these metrics helps to find the trade-offs between omission and commission errors. In addition, F1 an IoU can express it with a single value which helps to create ranks of accuracy.

- -

- RFE as variable selection method provided an importance rank, and both the six and ten variable sets were efficient, providing the same accuracy as including all variables (100% UA and 98% PA). We suggest using the fewest number of variables to avoid overfitting. However, our most important variables (nDSM, RGBVI, GLI, blue band from RGB, slope, VARI) can be different in other study areas, so the methodology and the careful and customized variable selection is more important.

- -

- Efficiency of this approach can be limited in areas where high buildings have large shadows and building roofs are flat. While shadows bias the spectral profiles, flat roofs will be identical with roads, pavements, and parking lots; thus, slope and aspect cannot discriminate buildings.

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Bramhe, V.S.; Ghosh, S.K.; Garg, P.K. Extraction of built-up area by combining textural features and spectral indices from landsat-8 multispectral image. ISPRS Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2018, XLII–5, 727–733. [Google Scholar] [CrossRef]

- Urban Development | Data. Available online: https://data.worldbank.org/topic/urban-development (accessed on 12 May 2020).

- Urbanisation Worldwide | Knowledge for Policy. Available online: https://ec.europa.eu/knowledge4policy/foresight/topic/continuing-urbanisation/urbanisation-worldwide_en (accessed on 12 May 2020).

- Orsini, H.F. Belgrade’s urban transformation during the 19th century: A space syntax approach. Geogr. Pannonica 2018, 22, 219–229. [Google Scholar] [CrossRef]

- Maktav, D.; Erbek, F.S.; Jürgens, C. Remote sensing of urban areas. Int. J. Remote Sens. 2005, 26, 655–659. [Google Scholar] [CrossRef]

- Müller, S.; Zaum, D.W. Robust building detection in aerial images. In Proceedings of the ISPRS Working Group III/4–5 and IV/3: “CMRT 2005”, Vienna, Austria, 29–30 August 2005; Volume XXXVI, pp. 143–148. [Google Scholar]

- Lai, X.; Yang, J.; Li, Y.; Wang, M. A building extraction approach based on the fusion of LiDAR point cloud and elevation map texture features. Remote Sens. 2019, 11, 1636. [Google Scholar] [CrossRef]

- Cilia, C.; Panigada, C.; Rossini, M.; Candiani, G.; Pepe, M.; Colombo, R. Mapping of asbestos cement roofs and their weathering status using hyperspectral aerial images. ISPRS Int. J. Geo Inf. 2015, 4, 928–941. [Google Scholar] [CrossRef]

- Wilk, E.; Krówczyńska, M.; Pabjanek, P. Determinants influencing the amount of asbestos-cement roofing in Poland. Misc. Geogr. 2015, 19, 82–86. [Google Scholar] [CrossRef]

- Szabó, S.; Burai, P.; Kovács, Z.; Szabó, G.; Kerényi, A.; Fazekas, I.; Paládi, M.; Buday, T.; Szabó, G. Testing algorithms for the identification of asbestos roofing based on hyperspectral data. Environ. Eng. Manag. J. 2014, 13, 2875–2880. [Google Scholar] [CrossRef]

- Dian, C.; Pongrácz, R.; Incze, D.; Bartholy, J.; Talamon, A. Analysis of the urban heat island intensity based on air temperature measurements in a renovated part of Budapest (Hungary). Geogr. Pannonica 2019, 23, 277–288. [Google Scholar] [CrossRef]

- Savic, S.; Unger, J.; Gál, T.; Milosevic, D.; Popov, Z. Urban heat island research of Novi Sad (Serbia): A review. Geogr. Pannonica 2013, 17, 32–36. [Google Scholar] [CrossRef]

- Hayakawa, K.; Tang, N.; Nagato, E.; Toriba, A.; Lin, J.M.; Zhao, L.; Zhou, Z.; Qing, W.; Yang, X.; Mishukov, V.; et al. Long-term trends in urban atmospheric polycyclic aromatic hydrocarbons and nitropolycyclic aromatic hydrocarbons: China, Russia, and Korea from 1999 to 2014. Int. J. Environ. Res. Public Health 2020, 17, 431. [Google Scholar] [CrossRef]

- Diao, B.; Ding, L.; Zhang, Q.; Na, J.; Cheng, J. Impact of urbanization on PM2.5-related health and economic loss in China 338 cities. Int. J. Environ. Res. Public Health 2020, 17, 990. [Google Scholar] [CrossRef] [PubMed]

- Molnár, V.É.; Simon, E.; Tóthmérész, B.; Ninsawat, S.; Szabó, S. Air pollution induced vegetation stress—The air pollution tolerance index as a quick tool for city health evaluation. Ecol. Indic. 2020, 113, 106234. [Google Scholar] [CrossRef]

- Xu, C.; Rahman, M.; Haase, D.; Wu, Y.; Su, M.; Pauleit, S. Surface runoff in urban areas: The role of residential cover and urban growth form. J. Clean. Prod. 2020, 262, 121421. [Google Scholar] [CrossRef]

- Łopucki, R.; Klich, D.; Kitowski, I.; Kiersztyn, A. Urban size effect on biodiversity: The need for a conceptual framework for the implementation of urban policy for small cities. Cities 2020, 98. [Google Scholar] [CrossRef]

- Chen, R.; Li, X.; Li, J. Object-based features for house detection from RGB high-resolution images. Remote Sens. 2018, 10, 451. [Google Scholar] [CrossRef]

- Awrangjeb, M.; Ravanbakhsh, M.; Fraser, C.S. Automatic building detection using LIDAR data and multispectral imagery. In Proceedings of the International Conference on Digital Image Computing: Techniques and Applications (DICTA), Sydney, NSW, Australia, 1–3 December 2010; pp. 45–51. [Google Scholar] [CrossRef]

- Vosselman, G. Fusion of laser scanning data, maps, and aerial photographs for building reconstruction. Int. Geosci. Remote Sens. Symp. 2002, 1, 85–88. [Google Scholar] [CrossRef]

- Feng, Q.; Liu, J.; Gong, J. UAV Remote sensing for urban vegetation mapping using Random Forest and texture analysis. Remote Sens. 2015, 7, 1074–1094. [Google Scholar] [CrossRef]

- Gavankar, N.L.; Ghosh, S.K. Automatic building footprint extraction from high-resolution satellite image using mathematical morphology. Eur. J. Remote Sens. 2018, 51, 182–193. [Google Scholar] [CrossRef]

- San, D.K.; Turker, M. Building extraction from high resolution satellite images using Hough transform. ISPRS Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2010, XXXVIII, 1063–1068. [Google Scholar]

- Sohn, G.; Dowman, I. Data fusion of high-resolution satellite imagery and LiDAR data for automatic building extraction. ISPRS J. Photogramm. Remote Sens. 2007, 62, 43–63. [Google Scholar] [CrossRef]

- Zhang, K.; Yan, J.; Chen, S.C. Automatic construction of building footprints from airborne LIDAR data. IEEE Trans. Geosci. Remote Sens. 2006, 44, 2523–2533. [Google Scholar] [CrossRef]

- Quang, N.T.; Sang, D.V.; Thuy, N.T.; Binh, H.T.T. An efficient framework for pixel-wise building segmentation from aerial images. In Proceedings of the 6th International Symposium on Information and Communication Technology ACM, Hue City, Vietnam, 3–4 December 2015; pp. 282–287. [Google Scholar] [CrossRef]

- Jaynes, C.; Riseman, E.; Hanson, A. Recognition and reconstruction of buildings from multiple aerial images. Comput. Vis. Image Underst. 2003, 90, 68–98. [Google Scholar] [CrossRef]

- Fischer, A.; Kolbe, T.H.; Lang, F.; Cremers, A.B.; Förstner, W.; Plümer, L.; Steinhage, V. Extracting buildings from aerial images using hierarchical aggregation in 2D and 3D. Comput. Vis. Image Underst. 1998, 72, 185–203. [Google Scholar] [CrossRef]

- Sirmaçek, B.; Ünsalan, C. Building detection from aerial images using invariant color features and shadow information. In Proceedings of the 23rd International Symposium on Computer and Information Sciences, ISCIS 2008, Istanbul, Turkey, 27–29 October 2008; pp. 105–110. [Google Scholar] [CrossRef]

- Vogtle, T.; Steinle, E. 3D modelling of buildings using laser scanning and spectral information. Int. Arch. Photogramm. Remote Sens. 2000, 33, 927–934. [Google Scholar]

- Li, Y.; Wu, H. Adaptive building edge detection by combining LiDAR data and aerial images. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2008, 37, 197–202. [Google Scholar]

- Li, E.; Femiani, J.; Xu, S.; Zhang, X.; Wonka, P. Band images using higher order CRF. Iee Trans. Geosci. Remote Sens. 2015, 53, 4483–4495. [Google Scholar] [CrossRef]

- Szabó, Z.; Tóth, C.A.; Holb, I.; Szabó, S. Aerial laser scanning data as a source of terrain modeling in a fluvial environment: Biasing factors of terrain height accuracy. Sensors 2020, 20, 63. [Google Scholar] [CrossRef]

- Rottensteiner, F.; Trinder, J.; Clode, S.; Kubik, K. Building detection by fusion of airborne laser scanner data and multi-spectral images: Performance evaluation and sensitivity analysis. ISPRS J. Photogramm. Remote Sens. 2007, 62, 135–149. [Google Scholar] [CrossRef]

- Tomljenovic, I.; Tiede, D.; Blaschke, T. A building extraction approach for airborne laser scanner data utilizing the object based image analysis paradigm. Int. J. Appl. Earth Obs. Geoinf. 2016, 52, 137–148. [Google Scholar] [CrossRef]

- Burai, P.; Bekő, L.; Lénárt, C.; Tomor, T.; Kovács, Z. Individual tree species classification using airborne hyperspectral imagery and lidar data. In Proceedings of the 2019 10th Workshop on Hyperspectral Imaging and Signal Processing: Evolution in Remote Sensing (WHISPERS), Amsterdam, The Netherlands, 24–26 September 2019; pp. 1–4. [Google Scholar] [CrossRef]

- Ambrus, A.; Burai, P.; Bekő, L.; Jolánkai, M. Precíziós növénytermesztési technológiák és nagy felbontású légi távérzékelt adatok alkalmazhatósága az őszi búza termesztésében. Acta Agron. Óváriensis 2017, 58, 85–104. [Google Scholar]

- Moussa, A.; El-Sheimy, N. A new object based method for automated extraction of urban objects from airborne sensors data. ISPRS Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2012, XXXIX-B3, 309–314. [Google Scholar] [CrossRef]

- Szabó, S.; Enyedi, P.; Horváth, M.; Kovács, Z.; Burai, P.; Csoknyai, T.; Szabó, G. Automated registration of potential locations for solar energy production with Light Detection and Ranging (LiDAR) and small format photogrammetry. J. Clean. Prod. 2016, 112, 3820–3829. [Google Scholar] [CrossRef]

- Abe, J.; Marzolff, I.; Ries, J. Small-Format Aerial Photography; Elsevier Inc.: Amsterdam, The Netherlands, 2010; ISBN 9780444532602. [Google Scholar]

- Szabó, G.; Bertalan, L.; Barkóczi, N.; Kovács, Z.; Burai, P.; Lénárt, C. Zooming on Aerial Survey. In Small Flying Drones: Applications for Geographic Observation; Casagrande, G., Sik, A., Szabó, G., Eds.; Springer International Publishing: Cham, Switzerland, 2018; pp. 91–126. ISBN 978-3-319-66577-1. [Google Scholar]

- Shen, X.; Cao, L.; Yang, B.; Xu, Z.; Wang, G. Estimation of forest structural attributes using spectral indices and point clouds from UAS-based multispectral and RGB imageries. Remote Sens. 2019, 11, 800. [Google Scholar] [CrossRef]

- Luo, N.; Wan, T.; Hao, H.; Lu, Q. Fusing high-spatial-resolution remotely sensed imagery and OpenStreetMap data for land cover classification over urban areas. Remote Sens. 2019, 11, 88. [Google Scholar] [CrossRef]

- Pessoa, G.G.; Amorim, A.; Galo, M.; Galo, M.d.L.B.T. Photogrammetric point cloud classification based on geometric and radiometric data integration. Bol. Cienc. Geod. 2019, 25, 1–17. [Google Scholar] [CrossRef]

- Schwind, M.; Starek, M. Structure-from-motion photogrammetry. Gim Int. 2017, 31, 36–39. [Google Scholar]

- Jiang, S.; Jiang, W. Efficient SfM for oblique UAV images: From match pair selection to geometrical verification. Remote Sens. 2018, 10, 1246. [Google Scholar] [CrossRef]

- Grigillo, D.; Kanjir, U. Urban object extraction from digital surface model and digital aerial images. ISPRS Ann. Photogramm. Remote Sens. Spat. Inf. Sci. 2012, 1, 215–220. [Google Scholar] [CrossRef]

- Rau, J.Y.; Jhan, J.P.; Hsu, Y.C. Analysis of oblique aerial images for land cover and point cloud classification in an Urban environment. IEEE Trans. Geosci. Remote Sens. 2015, 53, 1304–1319. [Google Scholar] [CrossRef]

- Haala, N.; Brenner, C. Extraction of buildings and trees in urban environments. ISPRS J. Photogramm. Remote Sens. 1999, 54, 130–137. [Google Scholar] [CrossRef]

- Yan, G.; Mas, J.F.; Maathuis, B.H.P.; Xiangmin, Z.; van Dijk, P.M. Comparison of pixel-based and object-oriented image classification approaches—A case study in a coal fire area, Wuda, Inner Mongolia, China. Int. J. Remote Sens. 2006, 27, 4039–4055. [Google Scholar] [CrossRef]

- Liu, D.; Xia, F. Assessing object-based classification: Advantages and limitations. Remote Sens. Lett. 2010, 1, 187–194. [Google Scholar] [CrossRef]

- Möller, M.; Lymburner, L.; Volk, M. The comparison index: A tool for assessing the accuracy of image segmentation. Int. J. Appl. Earth Obs. Geoinf. 2007, 9, 311–321. [Google Scholar] [CrossRef]

- Blaschke, T.; Lang, S.; Lorup, E.; Strobl, J.; Zeil, P. Object-oriented image processing in an integrated GIS/remote sensing environment and perspectives for environmental applications. Environmental information for planning, politics and the public. Environ. Inf. Plan. Polit. Public 2000, 2, 555–570. [Google Scholar]

- Adams, R.; Bischof, L. Seeded region growing. IEEE Trans. Pattern Anal. Mach. Intell. 1994, 16, 641–647. [Google Scholar] [CrossRef]

- Hossain, M.D.; Chen, D. Segmentation for Object-Based Image Analysis (OBIA): A review of algorithms and challenges from remote sensing perspective. ISPRS J. Photogramm. Remote Sens. 2019, 150, 115–134. [Google Scholar] [CrossRef]

- Tarsha-Kurdi, F.; Landes, T.; Grussenmeyer, P. Joint combination of point cloud and DSM for 3D building reconstruction using airborne laser scanner data. In Proceedings of the 2007 Urban Remote Sensing Joint Event (URBAN/URS), Paris, France, 11–13 April 2007; pp. 3–10. [Google Scholar] [CrossRef]

- Awrangjeb, M.; Zhang, C.; Fraser, C.S. Automatic extraction of building roofs using LIDAR data and multispectral imagery. ISPRS J. Photogramm. Remote Sens. 2013, 83, 1–18. [Google Scholar] [CrossRef]

- Maltezos, E.; Ioannidis, C. Automatic detection of building points from LiDAR and dense image matching point clouds. ISPRS Ann. Photogramm. Remote Sens. Spat. Inf. Sci. 2015, 2, 33–40. [Google Scholar] [CrossRef]

- Mahphood, A.; Arefi, H. Virtual first and last pulse method for building detection from dense LiDAR point clouds. Int. J. Remote Sens. 2020, 41, 1067–1092. [Google Scholar] [CrossRef]

- Shorter, N.; Kasparis, T. Automatic vegetation identification and building detection from a single nadir aerial image. Remote Sens. 2009, 1, 731–757. [Google Scholar] [CrossRef]

- Shi, F.; Xi, Y.; Li, X.; Duan, Y. An automation system of rooftop detection and 3D building modeling from aerial images. J. Intell. Robot. Syst. Theory Appl. 2011, 62, 383–396. [Google Scholar] [CrossRef]

- Agisoft LLC. Agisoft Metashape User Manual: Professional Edition, Version 1.5. 2019. Available online: https://www.agisoft.com/pdf/metashape-pro_1_5_en.pdf (accessed on 27 May 2020).

- Liu, H.; Wu, C. Developing a scene-based triangulated irregular network (TIN) technique for individual tree crown reconstruction with LiDAR data. Forests 2020, 11, 28. [Google Scholar] [CrossRef]

- Park, S.W.; Linsen, L.; Kreylos, O.; Owens, J.D.; Hamann, B. Discrete sibson interpolation. IEEE Trans. Vis. Comput. Graph. 2006, 12, 243–252. [Google Scholar] [CrossRef] [PubMed]

- Zhang, W.; Qi, J.; Wan, P.; Wang, H.; Xie, D.; Wang, X.; Yan, G. An easy-to-use airborne LiDAR data filtering method based on cloth simulation. Remote Sens. 2016, 8, 501. [Google Scholar] [CrossRef]

- Yan, L.; Li, Z.; Xie, H. Segmentation of unorganized point cloud from terrestrial laser scanner in urban region. In Proceedings of the 18th International Conference on Geoinformatics, Beijing, China, 18–20 June 2010; pp. 1–5. [Google Scholar] [CrossRef]

- Jochem, A.; Höfle, B.; Rutzinger, M.; Pfeifer, N. Automatic roof plane detection and analysis in airborne LiDAR point clouds for solar potential assessment. Sensors 2009, 9, 5241–5262. [Google Scholar] [CrossRef]

- Hunt, E.R.; Dean Hively, W.; Fujikawa, S.J.; Linden, D.S.; Daughtry, C.S.T.; McCarty, G.W. Acquisition of NIR-green-blue digital photographs from unmanned aircraft for crop monitoring. Remote Sens. 2010, 2, 290–305. [Google Scholar] [CrossRef]

- Zhang, X.; Zhang, F.; Qi, Y.; Deng, L.; Wang, X.; Yang, S. New research methods for vegetation information extraction based on visible light remote sensing images from an unmanned aerial vehicle (UAV). Int. J. Appl. Earth Obs. Geoinf. 2019, 78, 215–226. [Google Scholar] [CrossRef]

- Yuan, H.; Liu, Z.; Cai, Y.; Zhao, B. Research on vegetation information extraction from visible UAV remote sensing images. In Proceedings of the 5th International Workshop on Earth Observation and Remote Sensing Applications (EORSA), Xi’an, China, 18–20 June 2018; pp. 1–5. [Google Scholar] [CrossRef]

- Zhu, Y.; Yang, K.; Pan, E.; Yin, X.; Zhao, J. Extraction and analysis of urban vegetation information based on remote sensing image. In Proceedings of the 26th International Conference on Geoinformatics, Kunming, China, 28–30 June 2018; pp. 1–10. [Google Scholar] [CrossRef]

- Motohka, T.; Nasahara, K.N.; Oguma, H.; Tsuchida, S. Applicability of green-red vegetation index for remote sensing of vegetation phenology. Remote Sens. 2010, 2, 2369–2387. [Google Scholar] [CrossRef]

- Louhaichi, M.; Borman, M.M.; Johnson, D.E. Spatially located platform and aerial photography for documentation of grazing impacts on wheat. Geocarto Int. 2001, 16, 65–70. [Google Scholar] [CrossRef]

- Gitelson, A.A.; Kaufman, Y.J.; Stark, R.; Rundquist, D. Novel algorithms for remote estimation of vegetation fraction. Remote Sens. Environ. 2002, 80, 76–87. [Google Scholar] [CrossRef]

- Lussem, U.; Bolten, A.; Gnyp, M.L.; Jasper, J.; Bareth, G. Evaluation of RGB-based vegetation indices from UAV imagery to estimate forage yield in Grassland. ISPRS Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2018, 42, 1215–1219. [Google Scholar] [CrossRef]

- Hunt, E.R.; Cavigelli, M.; Daughtry, C.S.T.; McMurtrey, J.E.; Walthall, C.L. Evaluation of digital photography from model aircraft for remote sensing of crop biomass and nitrogen status. Precis. Agric. 2005, 6, 359–378. [Google Scholar] [CrossRef]

- Tucker, C.J. Red and photographic infrared linear combinations for monitoring vegetation. Remote Sens. Environ. 1979, 8, 127–150. [Google Scholar] [CrossRef]

- Bendig, J.; Yu, K.; Aasen, H.; Bolten, A.; Bennertz, S.; Broscheit, J.; Gnyp, M.L.; Bareth, G. Combining UAV-based plant height from crop surface models, visible, and near infrared vegetation indices for biomass monitoring in barley. Int. J. Appl. Earth Obs. Geoinf. 2015, 39, 79–87. [Google Scholar] [CrossRef]

- Bareth, G.; Bolten, A.; Gnyp, M.L.; Reusch, S.; Jasper, J. Comparison of uncalibrated RGBVI with spectrometer-based NDVI derived from UAV sensing systems on field scale. ISPRS Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2016, 41, 837–843. [Google Scholar] [CrossRef]

- Kupidura, P. The comparison of different methods of texture analysis for their efficacy for land use classification in satellite imagery. Remote Sens. 2019, 11, 1233. [Google Scholar] [CrossRef]

- Chaurasia, K.; Garg, P.K. The role of texture information and data fusion in topographic objects extraction from satellite data. Geod. Cartogr. 2014, 40, 116–121. [Google Scholar] [CrossRef]

- Zhang, X.; Cui, J.; Wang, W.; Lin, C. A study for texture feature extraction of high-resolution satellite images based on a direction measure and gray level co-occurrence matrix fusion algorithm. Sensors 2017, 17, 1474. [Google Scholar] [CrossRef]

- Weszka, J.S.; Dyer, C.R.; Rosenfeld, A. A comparative study of texture measures for terrain classification. IEEE Trans. Syst. Man Cybern. 1976, SMC-6, 269–285. [Google Scholar] [CrossRef]

- Zhang, Y. Optimisation of building detection in satellite images by combining multispectral classification and texture filtering. ISPRS J. Photogramm. Remote Sens. 1999, 54, 50–60. [Google Scholar] [CrossRef]

- Clausi, D.A.; Zhao, Y. Rapid extraction of image texture by co-occurrence using a hybrid data structure. Comput. Geosci. 2002, 28, 763–774. [Google Scholar] [CrossRef]

- Haralick, R.; Shanmugam, K.; Dinstein, I. Textural features for image classification. IEEE Trans. Syst. Man Cybern. 1973, SMC-3, 610–621. [Google Scholar] [CrossRef]

- Kabir, S.; He, D.C.; Sanusi, M.A.; Wan Hussina, W.M.A. Texture analysis of IKONOS satellite imagery for urban land use and land cover classification. Imaging Sci. J. 2010, 58, 163–170. [Google Scholar] [CrossRef]

- Tang, X. Texture information in run-length matrices. IEEE Trans. Image Process. 1998, 7, 1602–1609. [Google Scholar] [CrossRef] [PubMed]

- Galloway, M.M. Texture analysis using gray level run lengths. Comput. Graph. Image Process. 1975, 4, 172–179. [Google Scholar] [CrossRef]

- Bechtel, B.; Ringeler, A.; Böhner, J. Segmentation for object extraction of treed using MATLAB and SAGA. Hamburg. Beiträge Zur Phys. Geogr. Und Landsch. 2008, 19, 1–12. [Google Scholar]

- R: The R Project for Statistical Computing. Available online: https://www.r-project.org/ (accessed on 13 May 2020).

- Revelle, W. Psych: Procedures for Personality and Psychological Research, Northwerstern University, Evanston, Illinois, USA, R Package Version 1.9.12. Available online: https://cran.r-project.org/web/packages/psych/psych.pdf (accessed on 13 May 2020).

- Bernaards, C.A.; Jennrich, R.I. Gradient projection algorithms and software for arbitrary rotation criteria in factor analysis. Educ. Psychol. Meas. 2005, 65, 770–790. [Google Scholar] [CrossRef]

- Chen, Q.; Meng, Z.; Liu, X.; Jin, Q.; Su, R. Decision variants for the automatic determination of optimal feature subset in RF-RFE. Genes 2018, 9, 301. [Google Scholar] [CrossRef]

- Kuhn, M.; Wing, J.; Weston, S.; Williams, A.; Keefer, C.; Engelhardt, A.; Cooper, T.; Mayer, Z.; Kenkel, B.; R Core Team; et al. Package ‘Caret’: Classification and Regression Training. R Package Version 6.0–86. 2020. Available online: https://cran.r-project.org/web/packages/caret/caret.pdf (accessed on 13 May 2020).

- Breiman, L. Random Forests. Mach. Learn. 2001, 45, 5–32. [Google Scholar] [CrossRef]

- Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297. [Google Scholar] [CrossRef]

- Crammer, K. On the algorithmic implementation of multiclass kernel-based vector machines. J. Mach. Learn. Res. JMLR 2002, 2, 265–292. [Google Scholar]

- Lee, Y.; Lin, Y.; Wahba, G. Multicategory support vector machines: Theory and application to the classification of microarray data and satellite radiance data. J. Am. Stat. Assoc. 2004, 99, 67–81. [Google Scholar] [CrossRef]

- Tong, J.C. Cross-Validation. Encycl. Syst. Biol. 2013, 508. [Google Scholar] [CrossRef]

- Friedman, J.H. Multivariate Adaptive Regression Splines. Ann. Stat. 1991, 19, 1–67. [Google Scholar] [CrossRef]

- Rotigliano, E.; Martinello, C.; Agnesi, V.; Conoscenti, C. Evaluation of debris flow susceptibility in El Salvador (CA): A comparisobetween multivariate adaptive regression splines (MARS) and binary logistic regression (BLR). Hung. Geogr. Bull. 2018, 67, 361–373. [Google Scholar] [CrossRef]

- Golub, G.H.; Heath, M.; Wahba, G. Generalized cross-validation as a method for choosing a good ridge parameter. Technometrics 1979, 21, 215–223. [Google Scholar] [CrossRef]

- Barker, M.; Rayens, W. Partial least squares for discrimination. J. Chemom. 2003, 17, 166–173. [Google Scholar] [CrossRef]

- Yeh, C.H.; Spiegelman, C.H. Partial least squares and classification and regression trees. Chemom. Intell. Lab. Syst. 1994, 22, 17–23. [Google Scholar] [CrossRef]

- Liaw, A.; Wiener, M. Classification and Regression by randomForest. R News 2002, 2, 18–22. [Google Scholar]

- Therneau, T.; Atkinson, B.; Ripley, B. rpart: Recursive Partitioning for Classification, Regression and Survival Trees. R Package Version 4.1-15. 2019. Available online: https://cran.r-project.org/web/packages/rpart/rpart.pdf (accessed on 22 May 2020).

- Mevik, B.-H.; Wehrens, R.; Liland, H. pls: Partial Least Squares and Principal Component Regression, R Package Version 2.7–2. 2019. Available online: https://cran.r-project.org/web/packages/pls/pls.pdf (accessed on 22 May 2020).

- Meyer, D.; Dimitriadou, E.; Hornik, K.; Weingessel, A.; Leisch, F. e1071: Misc Functions of the Department of Statistics, Probability Theory Group (Formerly: E1071), TU Wien, R Package Version 1.7–3. 2019. Available online: https://cran.r-project.org/web/packages/e1071/e1071.pdf (accessed on 22 May 2020).

- Kim, J.H. Estimating classification error rate: Repeated cross-validation, repeated hold-out and bootstrap. Comput. Stat. Data Anal. 2009, 53, 3735–3745. [Google Scholar] [CrossRef]

- Molinaro, A.M.; Simon, R.; Pfeiffer, R.M. Prediction error estimation: A comparison of resampling methods. Bioinformatics 2005, 21, 3301–3307. [Google Scholar] [CrossRef] [PubMed]

- Pontius, R.G.; Millones, M. Death to Kappa: Birth of quantity disagreement and allocation disagreement for accuracy assessment. Int. J. Remote Sens. 2011, 32, 4407–4429. [Google Scholar] [CrossRef]

- Powers, D.M.W. Evaluation: From precision, recall and F-Factor to ROC, informedness, markedness & correlation. J. Mach. Learn. Technol. 2007, 2, 37–63. [Google Scholar]

- Tan, P.-N.; Steinbach, M.; Karpatne, A.; Kumar, V. Introduction to Data Mining; Pearson Publisher, 2005; 792p, Available online: https://www-users.cs.umn.edu/~kumar001/dmbook/index.php (accessed on 8 July 2020).

- Congalton, R.G. A review of assessing the accuracy of classifications of remotely sensed data. Remote Sens. Environ. 1991, 37, 35–46. [Google Scholar] [CrossRef]

- ISPRS Test Project on Urban Classification and 3D Building Reconstruction. Available online: http://www2.isprs.org/commissions/comm3/wg4/detection-and-reconstruction.html (accessed on 8 July 2020).

- Varga, K.; Szabó, S.; Szabó, G.; Dévai, G.; Tóthmérész, B. Improved land cover mapping using aerial photographs and satellite images. Open Geosci. 2015, 7, 15–26. [Google Scholar] [CrossRef]

- Cots-Folch, R.; Aitkenhead, M.J.; Martínez-Casasnovas, J.A. Mapping land cover from detailed aerial photography data using textural and neural network analysis. Int. J. Remote Sens. 2007, 28, 1625–1642. [Google Scholar] [CrossRef]

- Al-Kofahi, S.; Steele, C.; VanLeeuwen, D.; Hilaire, R.S. Mapping land cover in urban residential landscapes using very high spatial resolution aerial photographs. Urban For. Urban Green. 2012, 11, 291–301. [Google Scholar] [CrossRef]

- Li, X.; Myint, S.W.; Zhang, Y.; Galletti, C.; Zhang, X.; Turner, B.L. Object-based land-cover classification for metropolitan Phoenix, Arizona, using aerial photography. Int. J. Appl. Earth Obs. Geoinf. 2014, 33, 321–330. [Google Scholar] [CrossRef]

- Deng, J.S.; Wang, K.; Li, J.; Deng, Y.H. Urban land use change detection using multisensor satellite images project supported by the national Natural Science Foundation of China (NSFC) (No. 30571112). Pedosphere 2009, 19, 96–103. [Google Scholar] [CrossRef]

- Szabó, L.; Burai, P.; Deák, B.; Dyke, G.J.; Szabó, S. Assessing the efficiency of multispectral satellite and airborne hyperspectral images for land cover mapping in an aquatic environment with emphasis on the water caltrop (Trapa natans). Int. J. Remote Sens. 2019, 40, 5192–5215. [Google Scholar] [CrossRef]

- Bakuła, K.; Kupidura, P.; Jełowicki, Ł. Testing of land cover classification from multispectral airborne laser scanning data. ISPRS Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2016, 41, 161–169. [Google Scholar] [CrossRef]

- Albregtsen, F. Statistical texture measures computed from GLCM–Matrices. Image Processing Lab. Dep. Inform. Oslo Univ. Oslo 2008, 5, 1–14. [Google Scholar]

- Pawade, P.W. Texture image classification using Support Vector Machine. Int. J. Comput. Technol. Appl. 2012, 03, 71–75. [Google Scholar]

- Bofana, J.; Zhang, M.; Nabil, M.; Wu, B.; Tian, F.; Liu, W.; Zeng, H.; Zhang, N.; Nangombe, S.S.; Cipriano, S.A.; et al. Comparison of different cropland classification methods under diversified agroecological conditions in the zambezi river basin. Remote Sens. 2020, 12, 2096. [Google Scholar] [CrossRef]

- Xu, F.; Li, Z.; Zhang, S.; Huang, N.; Quan, Z.; Zhang, W.; Liu, X.; Jiang, X.; Pan, J.; Prishchepov, A.V. Mapping winter wheat with combinations of temporally aggregated Sentinel-2 and Landsat-8 data in Shandong Province, China. Remote Sens. 2020, 12, 2065. [Google Scholar] [CrossRef]

- Van Leeuwen, B.; Mezosi, G.; Tobak, Z.; Szatmári, J.; Barta, K. Identification of inland excess water floodings using an artificial neural network. Carpathian J. Earth Environ. Sci. 2012, 7, 173–180. [Google Scholar]

- Hang, R.; Li, Z.; Ghamisi, P.; Hong, D.; Xia, G.; Liu, Q. Classification of hyperspectral and LiDAR data using coupled CNNs. IEEE Trans. Geosci. Remote Sens. 2020, 58, 4939–4950. [Google Scholar] [CrossRef]

- Hang, R.; Liu, Q.; Hong, D.; Ghamisi, P. Cascaded recurrent neural networks for hyperspectral image classification. IEEE Trans. Geosci. Remote Sens. 2019, 57, 5384–5394. [Google Scholar] [CrossRef]

- Hasan, H.; Shafri, H.Z.M.; Habshi, M. A Comparison between Support Vector Machine (SVM) and Convolutional Neural Network (CNN) models for hyperspectral image classification. IOP Conf. Ser. Earth Environ. Sci. 2019, 357. [Google Scholar] [CrossRef]

- Huang, L.; An, R.; Zhao, S.; Jiang, T.; Hu, H. A deep learning-based robust change detection approach for very high resolution remotely sensed images with multiple features. Remote Sens. 2020, 12, 1441. [Google Scholar] [CrossRef]

- Zhang, P.; Ke, Y.; Zhang, Z.; Wang, M.; Li, P.; Zhang, S. Urban land use and land cover classification using novel deep learning models based on high spatial resolution satellite imagery. Sensors 2018, 18, 3717. [Google Scholar] [CrossRef] [PubMed]

- Zhang, L.; Xia, G.S.; Wu, T.; Lin, L.; Tai, X.C. Deep Learning for remote sensing image understanding. J. Sens. 2016, 2016. [Google Scholar] [CrossRef]

- Chen, G.; Zhang, X.; Wang, Q.; Dai, F.; Gong, Y.; Zhu, K. Symmetrical dense-shortcut deep fully convolutional networks for semantic segmentation of very-high-resolution remote sensing images. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2018, 11, 1633–1644. [Google Scholar] [CrossRef]

- Ma, L.; Liu, Y.; Zhang, X.; Ye, Y.; Yin, G.; Johnson, B.A. Deep learning in remote sensing applications: A meta-analysis and review. ISPRS J. Photogramm. Remote Sens. 2019, 152, 166–177. [Google Scholar] [CrossRef]

- Kuhn, M.; Johnson, K. Over-fitting and model tuning. In Applied Predictive Modeling; Springer: New York, NY, USA, 2013; pp. 61–92. [Google Scholar]

- Hawkins, D.M. The Problem of Overfitting. J. Chem. Inf. Comput. Sci. 2004, 44, 1–12. [Google Scholar] [CrossRef] [PubMed]

- Deng, B.C.; Yun, Y.H.; Liang, Y.Z.; Cao, D.S.; Xu, Q.S.; Yi, L.Z.; Huang, X. A new strategy to prevent over-fitting in partial least squares models based on model population analysis. Anal. Chim. Acta 2015, 880, 32–41. [Google Scholar] [CrossRef] [PubMed]

- Reunanen, J. Overfitting in making comparisons between variable selection methods. J. Mach. Learn. Res. 2003, 3, 1371–1382. [Google Scholar]

- Viswanathan, V.; Viswanathan, S. R Data Analysis Cookbook; Packt Publishing: Birmingham, UK, 2015. [Google Scholar]

- Han, H.; Jiang, X. Overcome support vector machine diagnosis overfitting. Cancer Inform. 2014, 13s1, CIN.S13875. [Google Scholar] [CrossRef]

- Khuntia, S.; Mujtaba, H.; Patra, C.; Farooq, K.; Sivakugan, N.; Das, B.M. Prediction of compaction parameters of coarse grained soil using multivariate adaptive regression splines (MARS). Int. J. Geotech. Eng. 2015, 9, 79–88. [Google Scholar] [CrossRef]

- Tharwat, A. Classification assessment methods. Appl. Comput. Inform. 2018. [Google Scholar] [CrossRef]

- Dong, Y.; Zhang, L.; Cui, X.; Ai, H.; Xu, B. Extraction of buildings from multiple-view aerial images using a feature-level-fusion strategy. Remote Sens. 2018, 10, 1947. [Google Scholar] [CrossRef]

| RGB Index | Equation | Literature |

|---|---|---|

| Visible Atmospherically Resistant Index (VARI) | (G − R)/(G + R − B) | [74,75] |

| Green Leaf Index (GLI) | (2 × G – R − B)/(2 × G + R + B) | [69,73,75] |

| Normalized Green-Red Difference Index (NGRDI) | (G − R)/(G + R) | [70,75,76,77] |

| Red-Green-Blue Vegetation Index (RGBVI) | (G2 − (R × B))/(G2 + (R × B)) | [69,70,75,78,79] |

| Textural Features | Equation | Literature |

|---|---|---|

| Energy | [1,21,82,83,84,85,86,87] | |

| Entropy | [1,21,82,83,84,85,86,87] | |

| Correlation | [1,82,83,85,86,87] | |

| Inverse Difference Moment | [1,21,84,86,87] | |

| Inertia | [1,82,83,84,85,86,87] | |

| Mean | [1,21,87] | |

| Variance | [1,87] | |

| Difference Entropy | [86] | |

| Run Percentage | [88,89] | |

| Grey-Level Non-Uniformity | [88,89] |

| Statistic | PC1 | PC2 | PC3 | PC4 | PC5 |

|---|---|---|---|---|---|

| SS loadings | 6.58 | 6.41 | 4.84 | 4.26 | 2.6 |

| Proportion variance | 0.23 | 0.23 | 0.17 | 0.15 | 0.09 |

| Cumulative variance | 0.23 | 0.46 | 0.64 | 0.79 | 0.88 |

| Variable | Mean Decrease Accuracy | Mean Decrease Gini |

|---|---|---|

| nDSM | 409.5 | 211.7 |

| Blue band | 155.9 | 160.9 |

| VARI | 108.9 | 83.8 |

| Slope | 98.8 | 27.4 |

| GLI | 75.3 | 73.0 |

| RGBVI | 54.5 | 42.4 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Schlosser, A.D.; Szabó, G.; Bertalan, L.; Varga, Z.; Enyedi, P.; Szabó, S. Building Extraction Using Orthophotos and Dense Point Cloud Derived from Visual Band Aerial Imagery Based on Machine Learning and Segmentation. Remote Sens. 2020, 12, 2397. https://doi.org/10.3390/rs12152397

Schlosser AD, Szabó G, Bertalan L, Varga Z, Enyedi P, Szabó S. Building Extraction Using Orthophotos and Dense Point Cloud Derived from Visual Band Aerial Imagery Based on Machine Learning and Segmentation. Remote Sensing. 2020; 12(15):2397. https://doi.org/10.3390/rs12152397

Chicago/Turabian StyleSchlosser, Aletta Dóra, Gergely Szabó, László Bertalan, Zsolt Varga, Péter Enyedi, and Szilárd Szabó. 2020. "Building Extraction Using Orthophotos and Dense Point Cloud Derived from Visual Band Aerial Imagery Based on Machine Learning and Segmentation" Remote Sensing 12, no. 15: 2397. https://doi.org/10.3390/rs12152397

APA StyleSchlosser, A. D., Szabó, G., Bertalan, L., Varga, Z., Enyedi, P., & Szabó, S. (2020). Building Extraction Using Orthophotos and Dense Point Cloud Derived from Visual Band Aerial Imagery Based on Machine Learning and Segmentation. Remote Sensing, 12(15), 2397. https://doi.org/10.3390/rs12152397