Ship Detection Using Deep Convolutional Neural Networks for PolSAR Images

Abstract

1. Introduction

2. Theory and Methodology

2.1. Preprocessing

2.2. Sea-Coast-Ship Segmentation

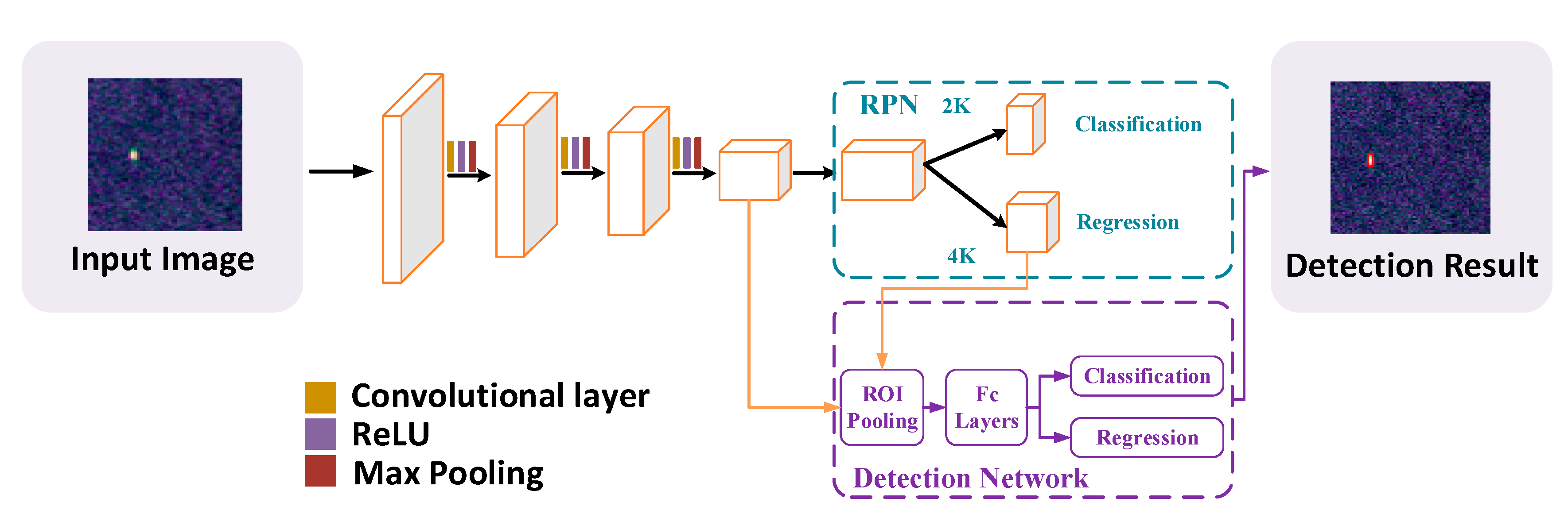

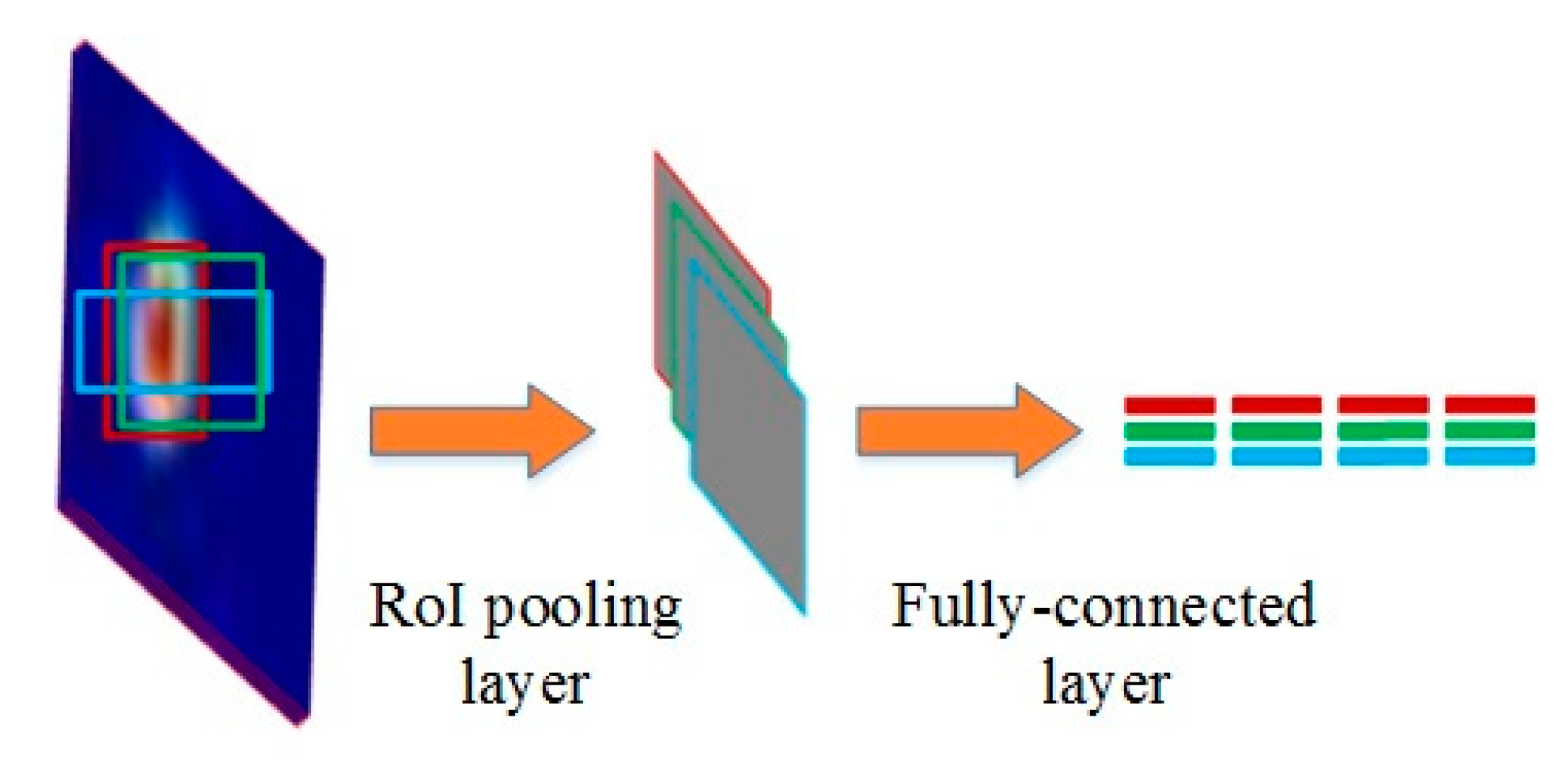

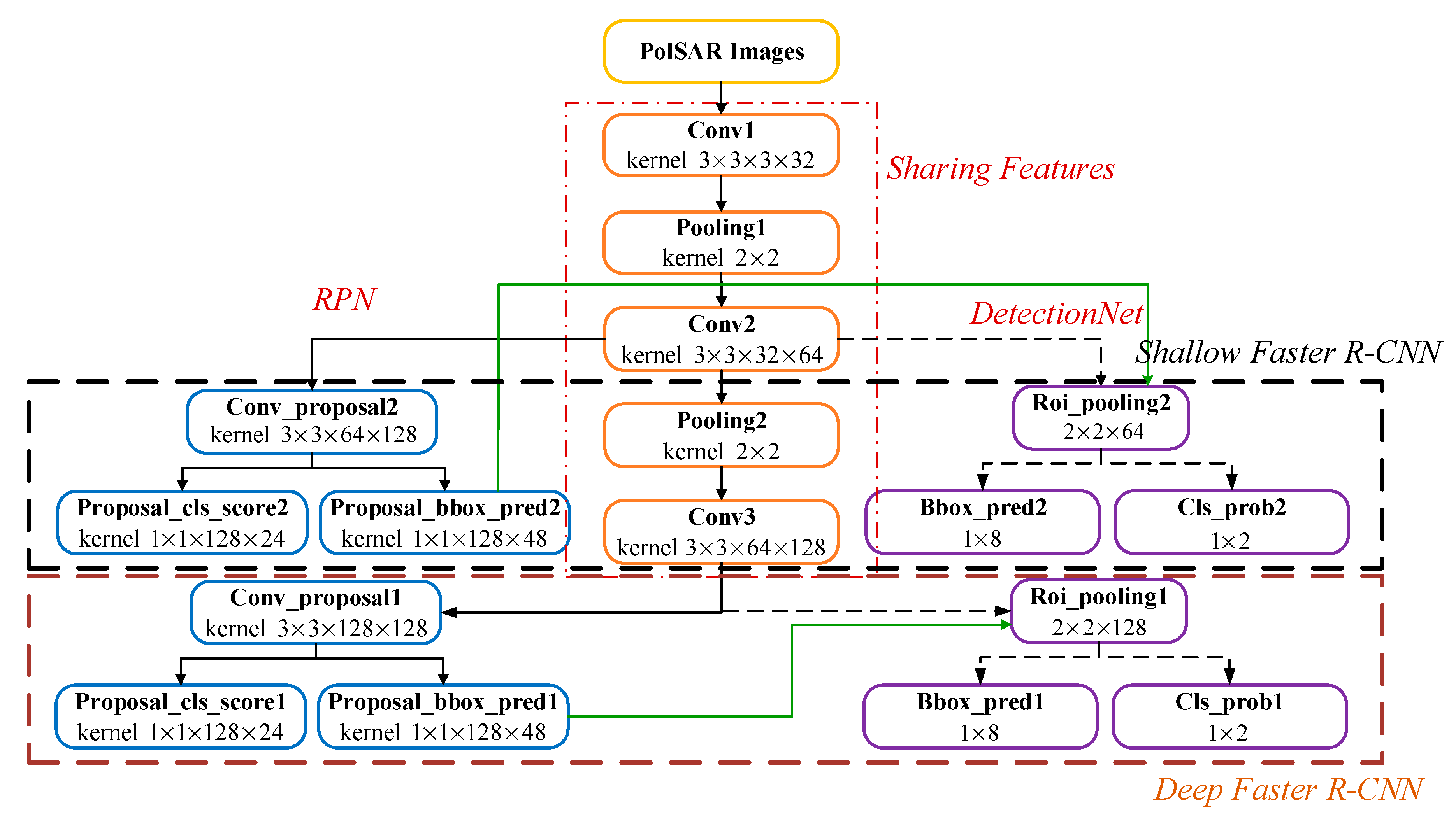

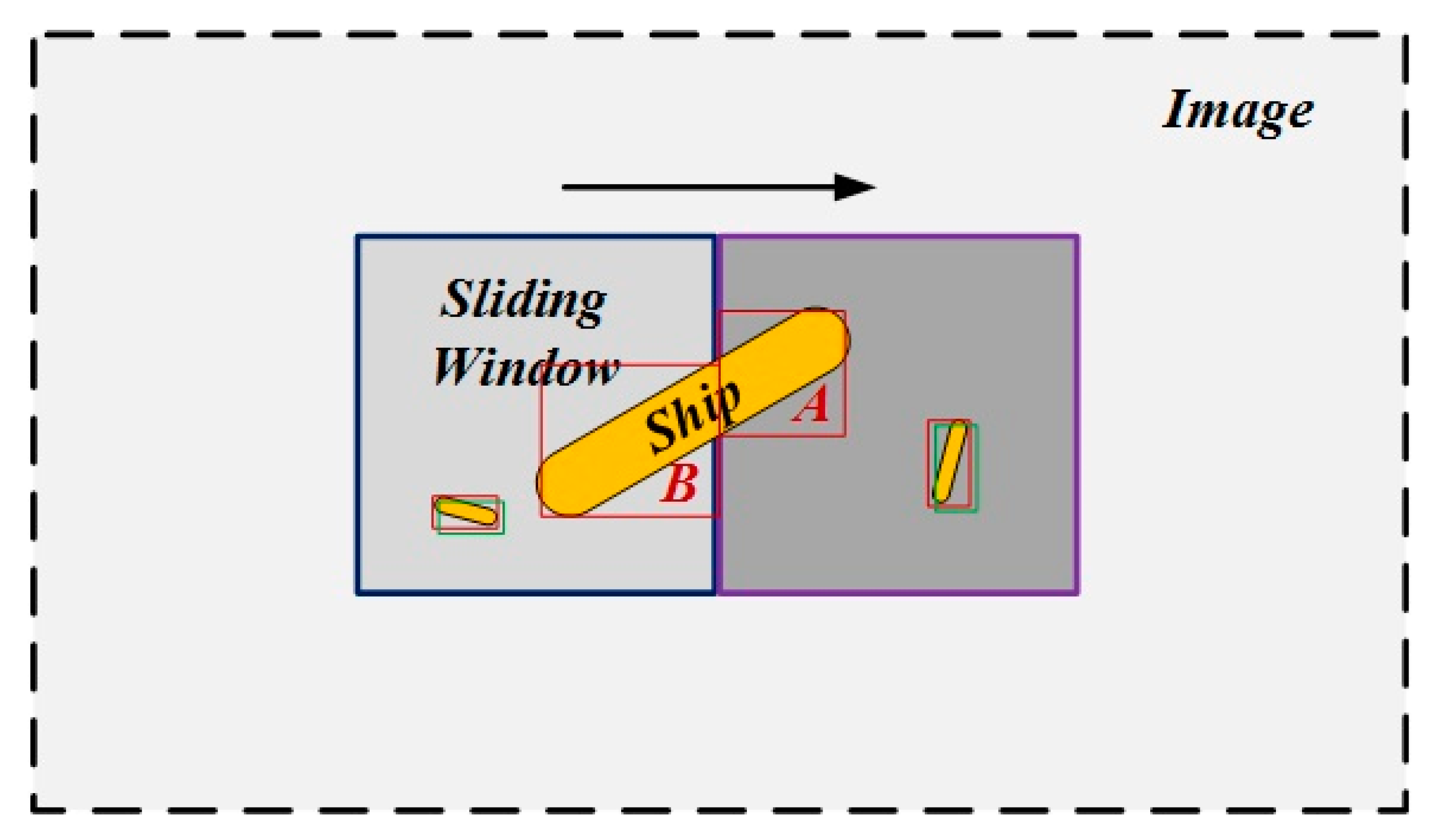

2.3. Modified Faster R-CNN

2.4. Target Fusion and Localization

3. Experimental Results

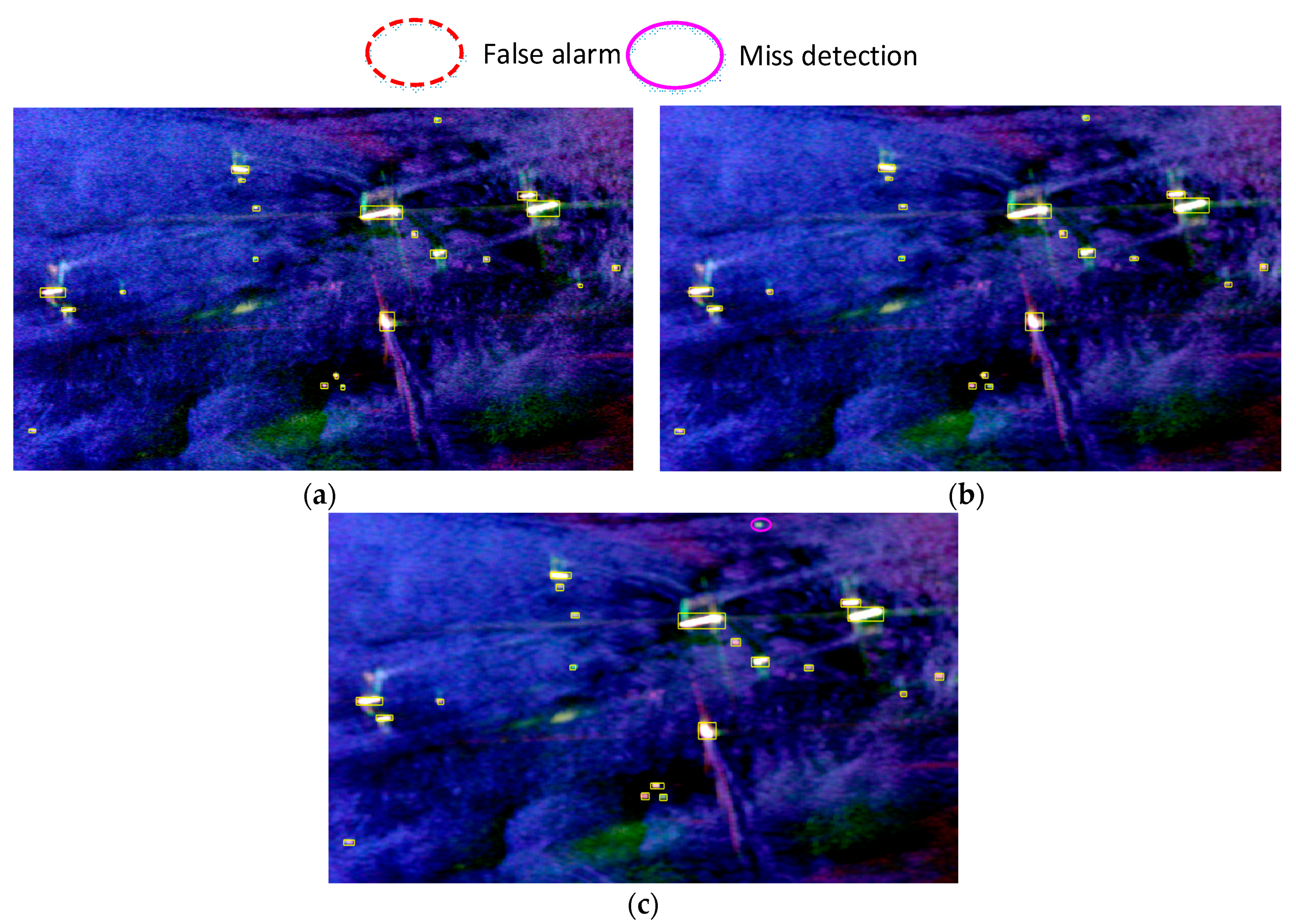

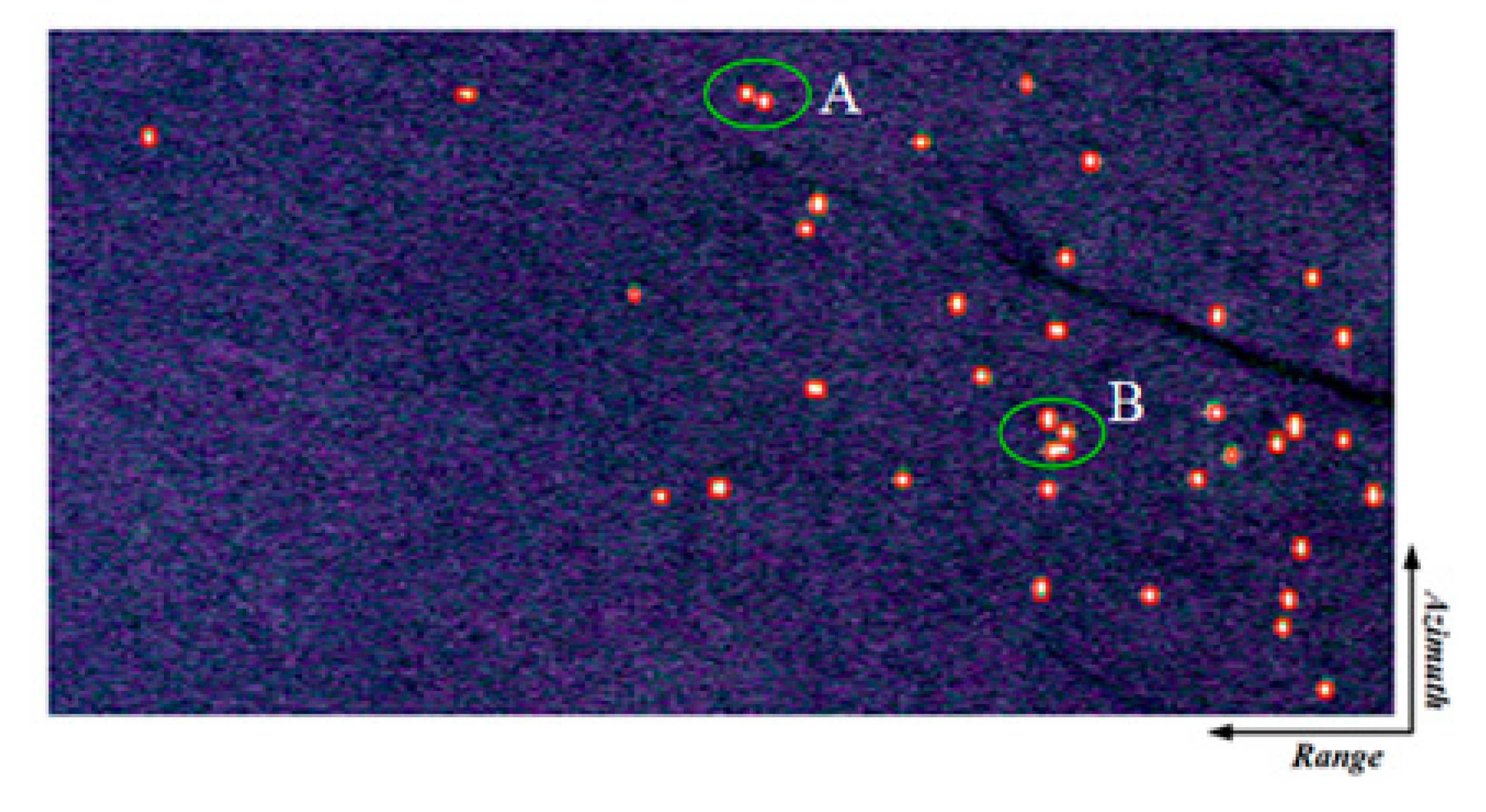

3.1. Results of AIRSAR Japan Dataset

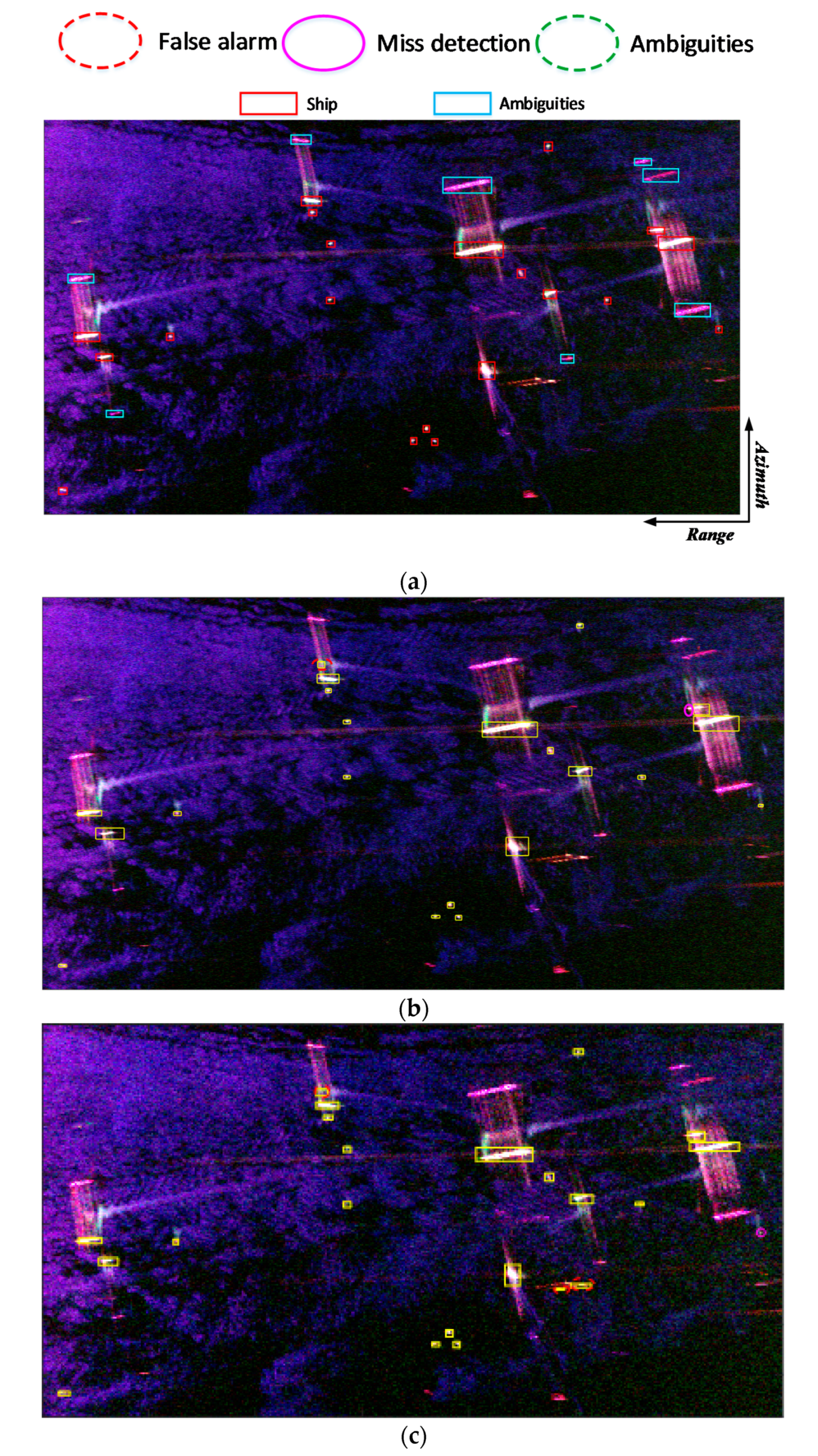

3.2. Results of UAVSAR Gulfco Area A Dataset

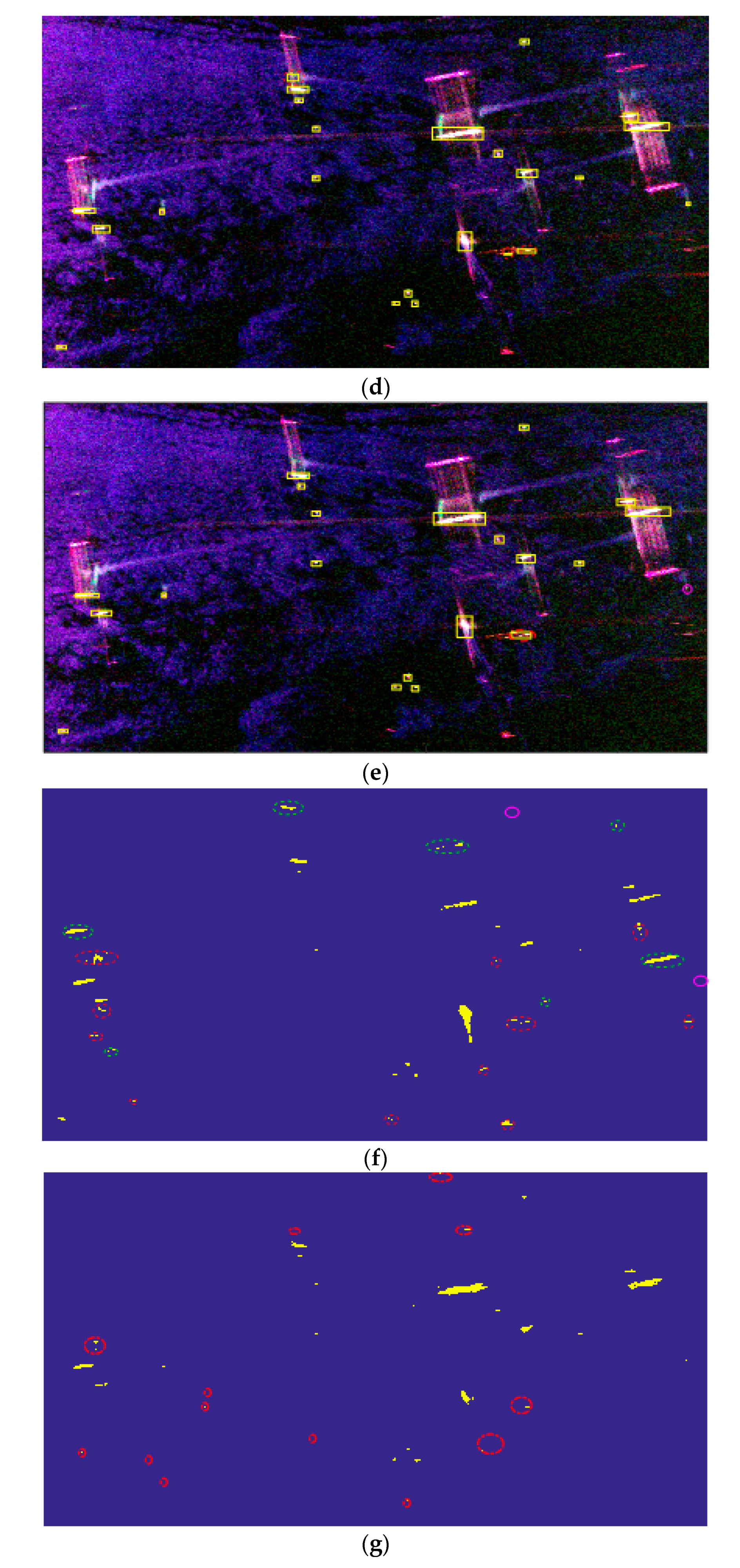

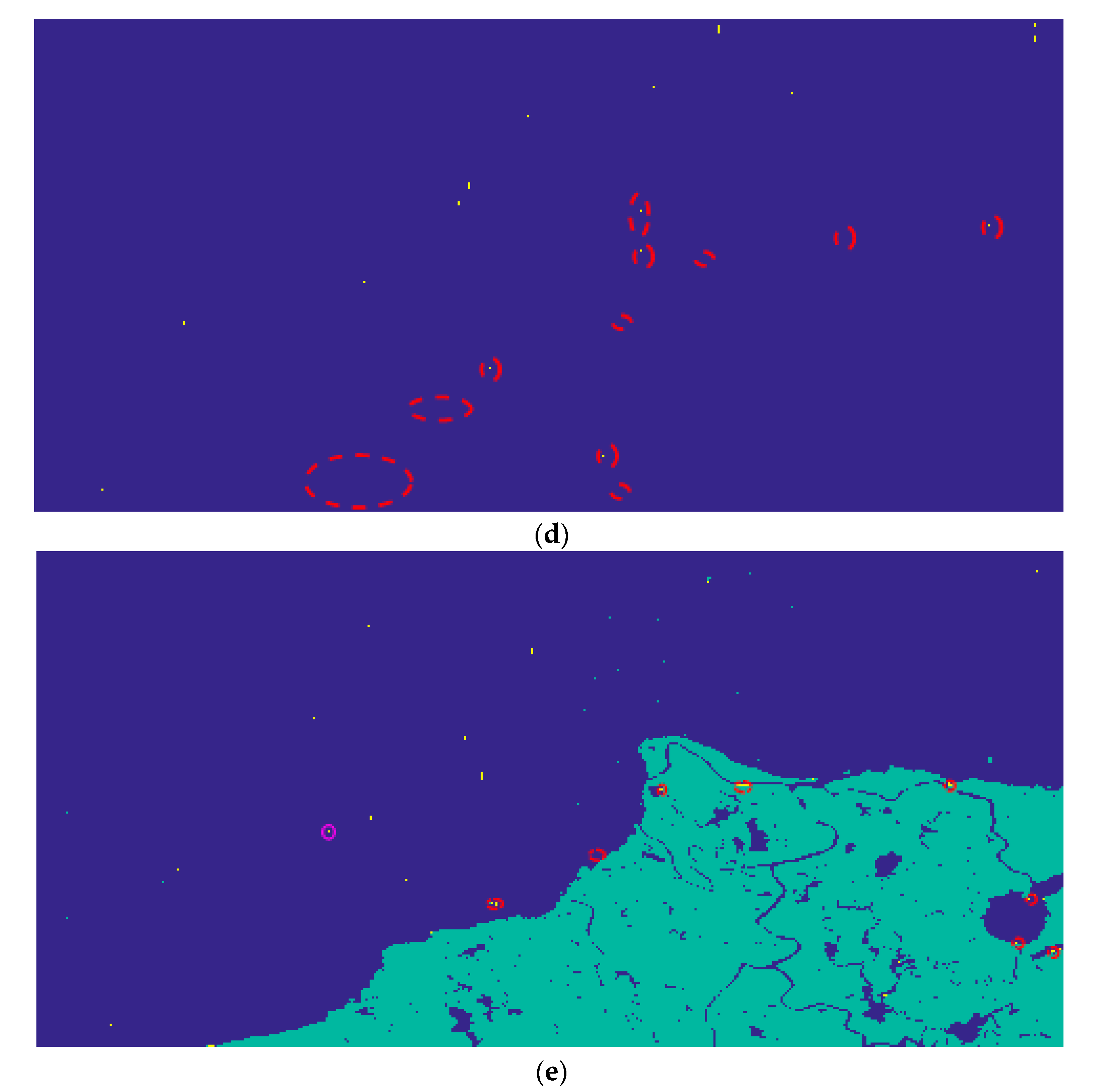

3.3. Results of UAVSAR Gulfco Area B Dataset

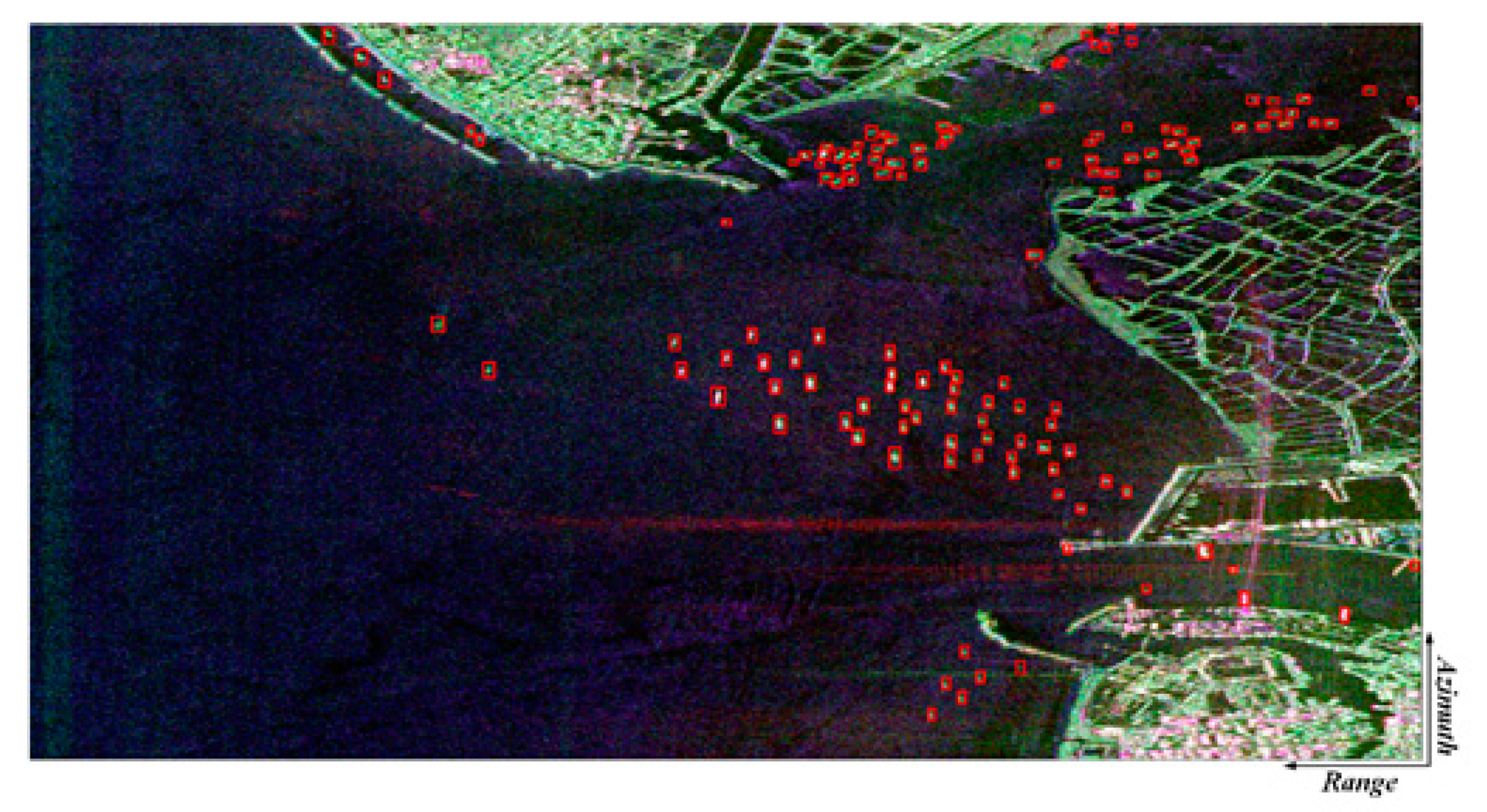

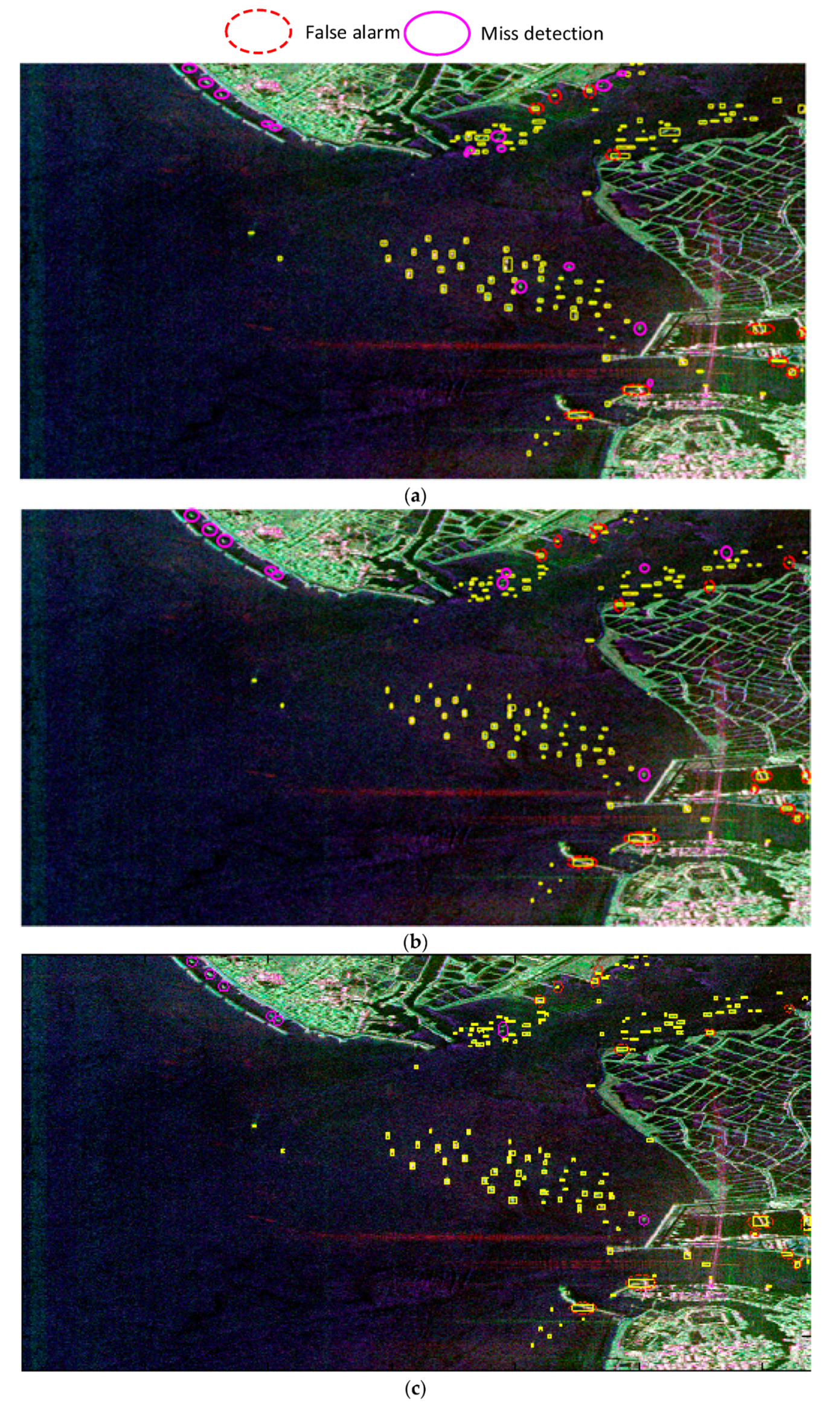

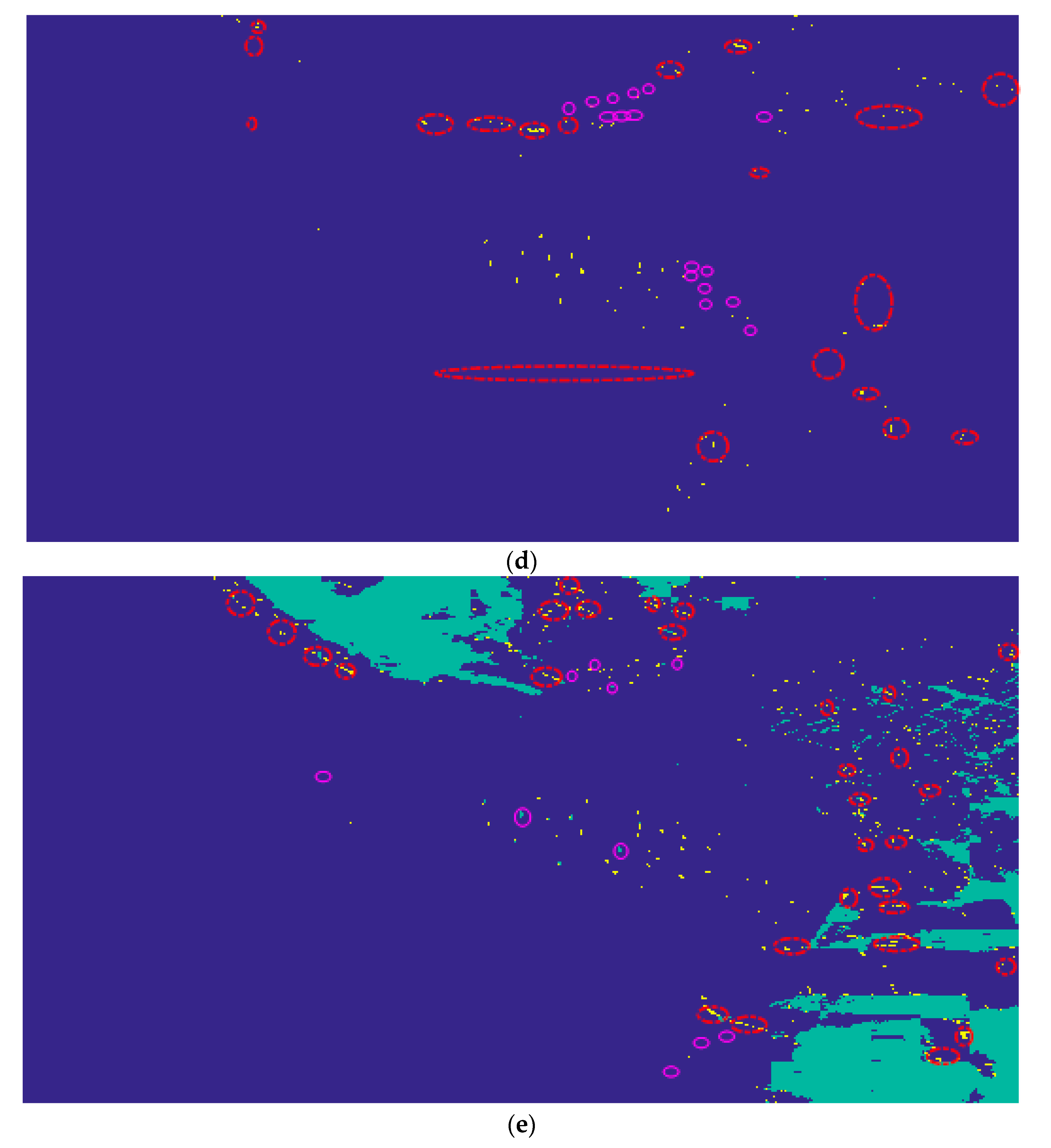

3.4. Results of AIRSAR Taiwan Area Dataset

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Marino, A. A notch filter for ship detection with polarimetric SAR data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2013, 6, 1219–1232.

2. Wang, Y.; Liu, H. PolSAR ship detection based on superpixel-level scattering mechanism distribution features. IEEE Geosci. Remote Sens. Lett. 2015, 12, 1780–1784.

3. Lin, H.; Chen, H.; Wang, H.; Yin, J.; Yang, J. Ship detection for PolSAR images via task-driven discriminative dictionary learning. Remote Sens. 2019, 11, 769.

4. An, W.; Xie, C.; Yuan, X. An improved iterative censoring scheme for CFAR ship detection with SAR imagery. IEEE Trans. Geosci. Remote Sens. 2014, 52, 4585–4595.

5. Pelich, R.; Longepe, N.; Mercier, G. AIS-based evaluation of target detectors and SAR sensors characteristics for maritime surveillance. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2015, 8, 3892–3901.

6. Song, S.; Xu, B.; Li, Z.; Yang, J. Ship detection in SAR imagery via variational Bayesian inference. IEEE Geosci. Remote Sens. Lett. 2015, 13, 319–323.

7. Smith, M.; Varshney, P. Intelligent CFAR processor based on data variability. IEEE Trans. Aerosp. Electron. Syst. 2000, 36, 837–847.

8. Tao, D.; Doulgeris, A.; Brekke, C. A segmentation-based CFAR detection algorithm using truncated statistics. IEEE Trans. Geosci. Remote Sens. 2016, 54, 2887–2898.

9. Tao, D.; Anfinsen, S.; Brekke, C. Robust CFAR detector based on truncated statistics in multiple-target situations. IEEE Trans. Geosci. Remote Sens. 2016, 54, 117–134.

10. Touzi, R. Calibrated polarimetric SAR data for ship detection. In Proceedings of the International Geoscience and Remote Sensing Symposium (IGARSS), Honolulu, HI, USA, 24–28 July 2000; pp. 144–146.

11. Touzi, R.; Charbonneau, F. On the use of permanent symmetric scatterers for ship characterization. IEEE Trans. Geosci. Remote Sens. 2004, 42, 2039–2045.

12. Wei, J.; Li, P.; Yang, J.; Zhang, J.; Lang, F. A new automatic ship detection method using L-band polarimetric SAR imagery. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2014, 7, 1383–1393.

13. Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. In Proceedings of the 2015 International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015; pp. 1–14.

14. Girshick, R.; Donahue, J.; Darrell, T.; Malik, J. Rich feature hierarchies for accurate object detection and semantic segmentation. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Columbus, OH, USA, 23–28 June 2014; pp. 580–587.

15. Girshick, R. Fast R-CNN. In Proceedings of the 2015 IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 11–18 December 2015; pp. 1440–1448.

16. Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards real-time object detection with region proposal networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149.

17. Maggiori, E.; Tarabalka, Y.; Charpiat, G.; Alliez, P. Convolutional neural networks for large-scale remote-sensing image classification. IEEE Trans. Geosci. Remote Sens. 2017, 55, 645–657.

18. Kang, M.; Leng, X.; Lin, Z.; Ji, K. A modified Faster R-CNN based on CFAR algorithm for SAR ship detection. In Proceedings of the 2017 International Workshop on Remote Sensing with Intelligent Processing (RSIP), Shanghai, China, 19–21 May 2017; pp. 1–4.

19. Lin, Z.; Ji, K.; Leng, X.; Kuang, G. Squeeze and excitation rank Faster R-CNN for ship detection in SAR images. IEEE Geosci. Remote Sens. Lett. 2019, 16, 751–755.

20. Chen, S.; Tao, C.; Wang, X.; Xiao, S. Polarimetric SAR targets detection and classification with deep convolutional neural network. In Proceedings of the 2018 Progress in Electromagnetics Research Symposium (PIERS-Toyama), Toyama, Japan, 1–4 August 2018; pp. 2227–2234.

21. Zhang, S.; Wu, R.; Xu, K.; Wang, J.; Sun, W. R-CNN-based ship detection from high resolution remote sensing imagery. Remote Sens. 2019, 11, 631.

22. Fan, Q.; Chen, F.; Cheng, M.; Lou, S.; Xiao, R.; Zhang, B.; Wang, C.; Li, J. Ship detection using a fully convolutional network with compact polarimetric SAR images. Remote Sens. 2019, 11, 2171.

23. Cao, C.; Zhang, J.; Meng, J.; Zhang, X.; Mao, X. Analysis of ship detection performance with full-, compact- and dual-polarimetric SAR. Remote Sens. 2019, 11, 2160.

24. Szegedy, C.; Vanhoucke, V.; Ioffe, S.; Shlens, J.; Wojna, Z. Rethinking the inception architecture for computer vision. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016; pp. 2818–2826.

25. Chatfield, K.; Simonyan, K.; Vedaldi, A.; Zisserman, A. Return of the devil in the details: Delving deep into convolutional nets. In Proceedings of the British Machine Vision Conference (BMVC), Nottingham, UK, 1–5 September 2014; pp. 1–11.

26. Jia, Y.; Shelhamer, E.; Donahue, J.; Karayev, S.; Long, J.; Girshick, R.; Guadarrama, S.; Darrell, T. Caffe: Convolutional architecture for fast feature embedding. In Proceedings of the 2014 ACM Conference on Multimedia, Orlando, FL, USA, 3–7 November 2014; pp. 675–678.

27. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016; pp. 770–778.

28. Huang, G.; Liu, Z.; van der Maaten, L.; Weinberger, K.Q. Densely connected convolutional networks. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 2261–2269.

29. Lee, J.-S.; Pottier, E. Polarimetric Radar Imaging: From Basics to Applications; CRC Press: Boca Raton, FL, USA, 2009.

30. Paes, R.; Lorenzzetti, J.; Gherardi, D. Ship detection using TerraSAR-X images in the Campos Basin (Brazil). IEEE Geosci. Remote Sens. Lett. 2010, 7, 545–548.

| Method | Detected Ships | False Alarms | Missed Ships | Detection Rate | Figure of Merit | Consumed Time |
|---|---|---|---|---|---|---|
| Shallow Faster R-CNN [16] | 17 | 0 | 4 | 81.0% | 81.0% | 2.53 s |
| Deep Faster R-CNN [16] | 20 | 2 | 1 | 95.2% | 87.0% | 2.71 s |
| Proposed ship detector | 21 | 2 | 0 | 100% | 91.3% | 3.03 s |
| Modified CFAR [8] | 19 | 3 | 2 | 90.5% | 79.2% | 201.90 s |
| Fully convolutional network based ship detector [22] | 21 | 5 | 0 | 100% | 80.7% | 3.48 s |

| Method | Detected Ships | False Alarms | Missed Ships | Detection Rate | Figure of Merit | Consumed Time |
|---|---|---|---|---|---|---|
| Shallow Faster R-CNN [16] | 19 | 1 | 1 | 95.0% | 90.5% | 4.20 s |
| Deep Faster R-CNN [16] | 19 | 3 | 1 | 95.0% | 82.6% | 4.50 s |
| Proposed ship detector | 20 | 3 | 0 | 100% | 86.9% | 5.30 s |
| Modified CFAR [8] | 18 | 17 | 2 | 90.0% | 48.6% | 108.10 s |
| Fully convolutional network based ship detector [22] | 20 | 13 | 0 | 100% | 60.6% | 3.37 s |

| Method | Detected Ships | False Alarms | Missed Ships | Detection Rate | Figure of Merit | Consumed Time |
|---|---|---|---|---|---|---|
| Shallow Faster R-CNN [16] | 39 | 0 | 0 | 100% | 100% | 1.80 s |
| Deep Faster R-CNN [16] | 36 | 1 | 3 | 92.3% | 90.0% | 2.10 s |
| Proposed ship detector | 39 | 0 | 0 | 100% | 100% | 2.40 s |
| Modified CFAR [8] | 39 | 0 | 0 | 100% | 100% | 6.18 s |
| Fully convolutional network based ship detector [22] | 39 | 0 | 0 | 100% | 100% | 2.07 s |

| Method | Detected Ships | False Alarms | Missed Ships | Detection Rate | Figure of Merit | Consumed Time |
|---|---|---|---|---|---|---|
| Shallow Faster R-CNN [16] | 21 | 2 | 1 | 95.5% | 87.5% | 4.48 s |
| Deep Faster R-CNN [16] | 22 | 2 | 0 | 100% | 91.7% | 5.17 s |
| Proposed ship detector | 22 | 2 | 0 | 100% | 91.7% | 8.42 s |
| Modified CFAR [8] | 22 | 11 | 0 | 100% | 66.7% | 98.6 s |
| Fully convolutional network based ship detector [22] | 21 | 8 | 1 | 95.5% | 70.0% | 6.94 s |

| Method | Detected Ships | False Alarms | Missed Ships | Detection Rate | Figure of Merit | Consumed Time |
|---|---|---|---|---|---|---|
| Shallow Faster R-CNN [16] | 118 | 10 | 14 | 89.4% | 83.1% | 17.84 s |
| Deep Faster R-CNN [16] | 122 | 14 | 10 | 92.4% | 83.6% | 18.54 s |
| Proposed ship detector | 125 | 14 | 7 | 94.7% | 85.6% | 19.83 s |
| Modified CFAR [8] | 116 | 19 | 16 | 87.9% | 78.4% | 564.48 s |
| Fully convolutional network based ship detector [22] | 122 | 30 | 10 | 92.4% | 75.3% | 7.27 s |
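The two percentage columns in these tables appear to be derived from the three count columns. Reading the numbers back suggests a detection rate of detected / (detected + missed) and a figure of merit of detected / (detected + false alarms + missed); this interpretation is inferred from the arithmetic rather than stated in the tables, and it agrees with most rows to within the last displayed digit. The sketch below recomputes both ratios under that assumption; the class and method names (`DetectionCounts`, `detection_rate`, `figure_of_merit`) are hypothetical and not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class DetectionCounts:
    """Per-method counts as listed in the comparison tables (assumed meaning)."""
    detected: int      # ships correctly detected (true positives)
    false_alarms: int  # detections that do not correspond to a real ship
    missed: int        # real ships that the detector did not find

    @property
    def ground_truth(self) -> int:
        # Assumption: every real ship is either detected or missed.
        return self.detected + self.missed

    def detection_rate(self) -> float:
        """Fraction of ground-truth ships that were detected."""
        return self.detected / self.ground_truth

    def figure_of_merit(self) -> float:
        """Detected ships divided by (ground-truth ships + false alarms)."""
        return self.detected / (self.ground_truth + self.false_alarms)


if __name__ == "__main__":
    # Counts copied from the "Proposed ship detector" row of the first table.
    proposed = DetectionCounts(detected=21, false_alarms=2, missed=0)
    print(f"{proposed.detection_rate():.1%}")   # 100.0%
    print(f"{proposed.figure_of_merit():.1%}")  # 91.3%
```

Because the figure of merit places false alarms in the denominator, it penalises detectors such as the modified CFAR, whose detection rates stay high while false alarms accumulate.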

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Fan, W.; Zhou, F.; Bai, X.; Tao, M.; Tian, T. Ship Detection Using Deep Convolutional Neural Networks for PolSAR Images. Remote Sens. 2019, 11, 2862. https://doi.org/10.3390/rs11232862
