Validation of Earth Observation Time-Series: A Review for Large-Area and Temporally Dense Land Surface Products

Abstract

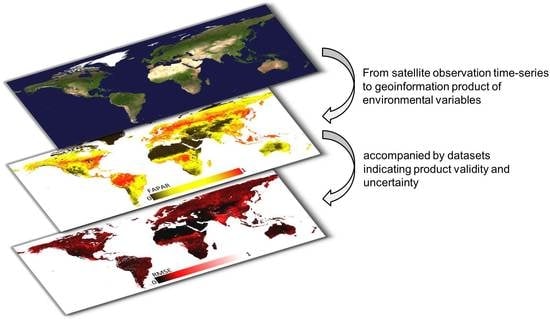

1. Introduction

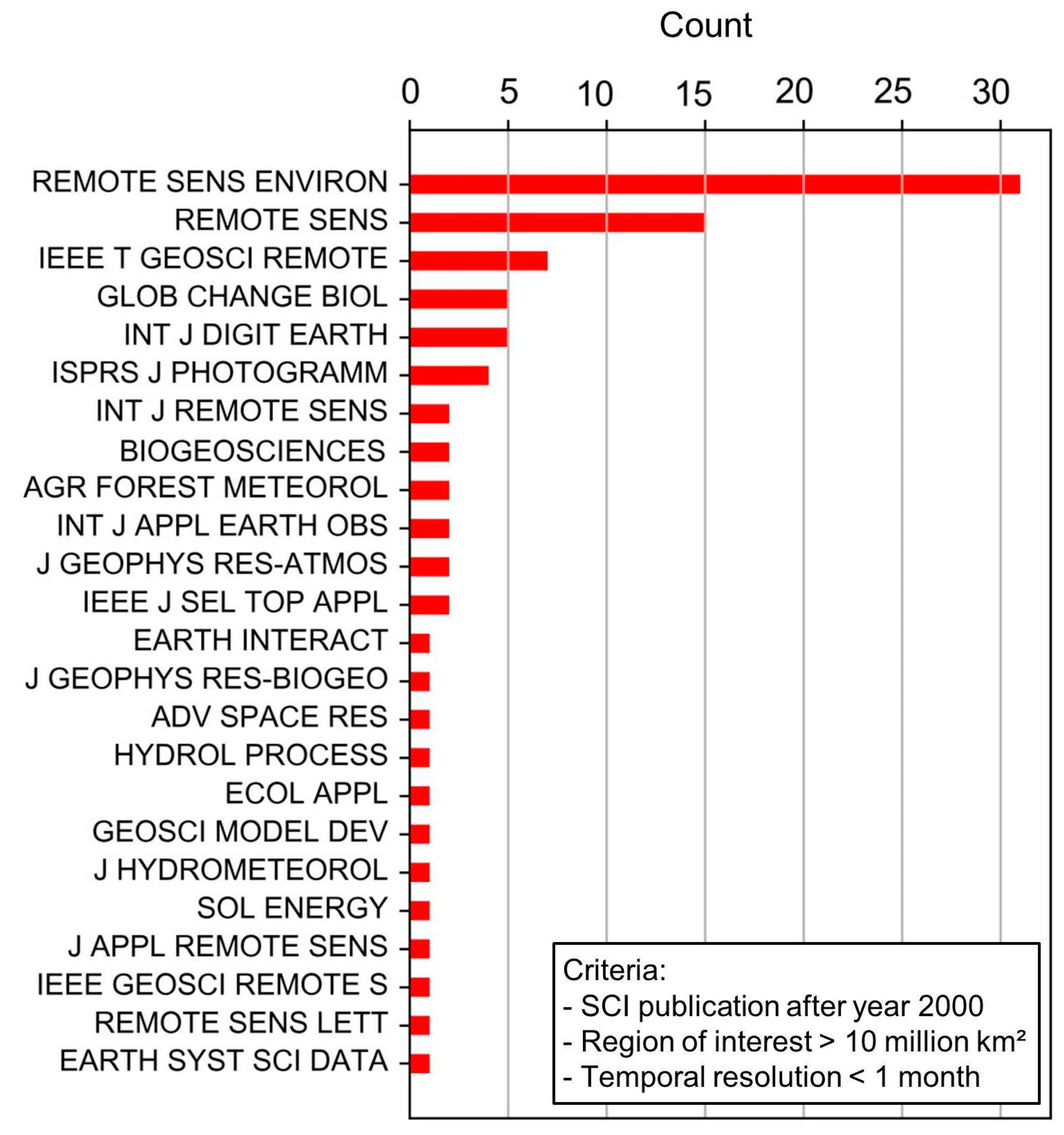

2. Theoretical Background

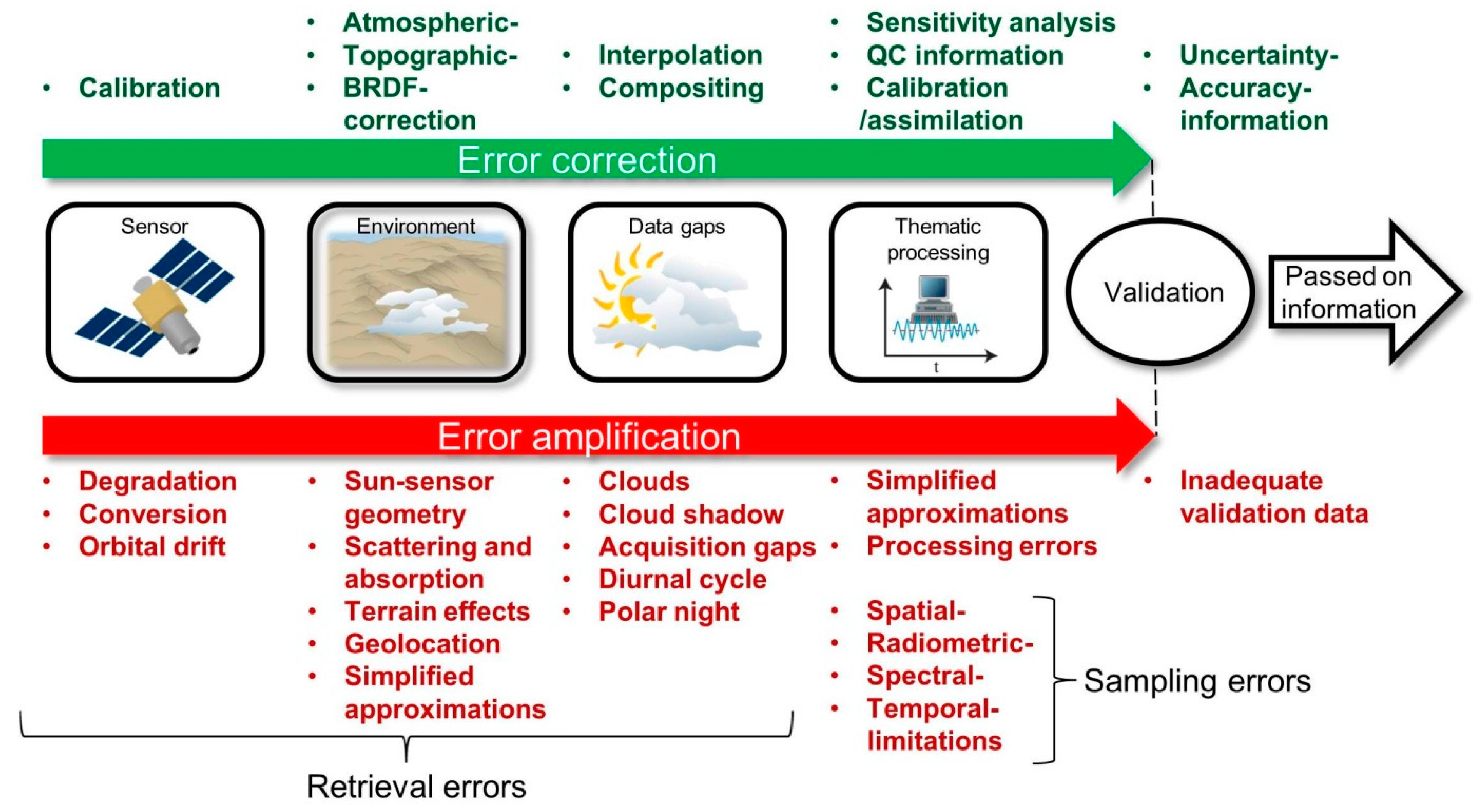

2.1. Main Error Sources of Remote Sensing Time-Series Products

2.2. General Considerations in Time-Series Validation

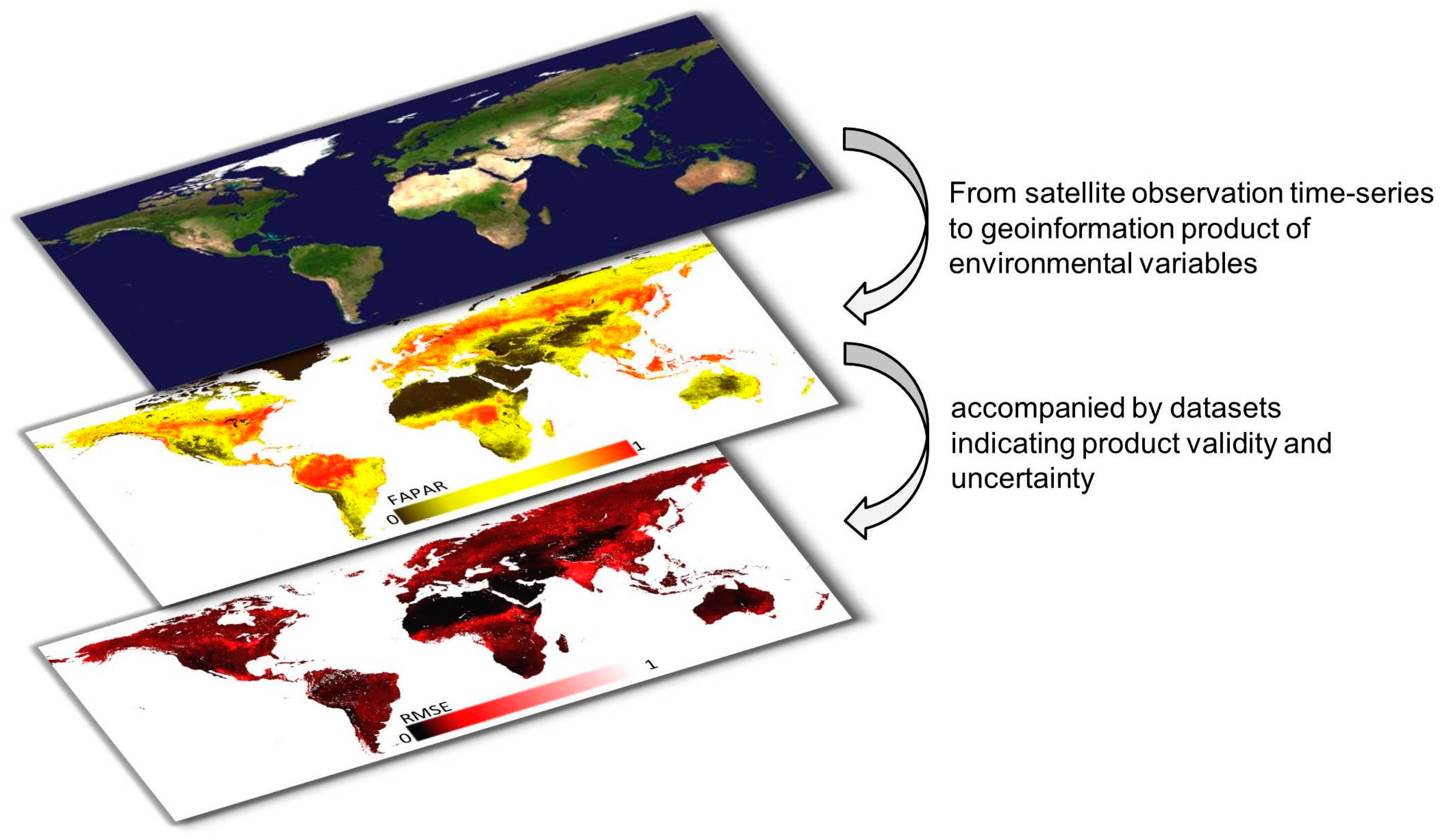

3. Characterization and Categorization of Reviewed Studies

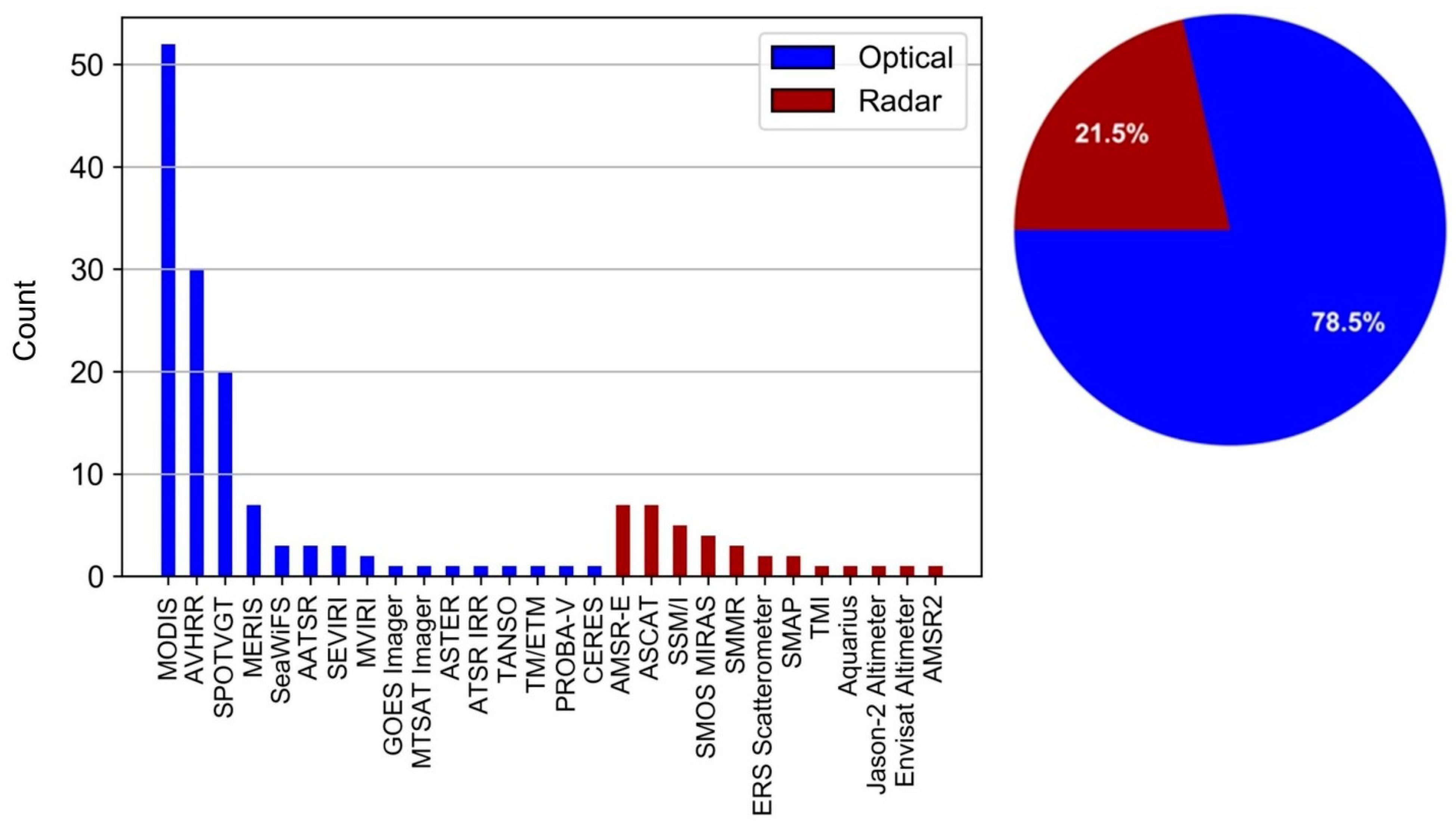

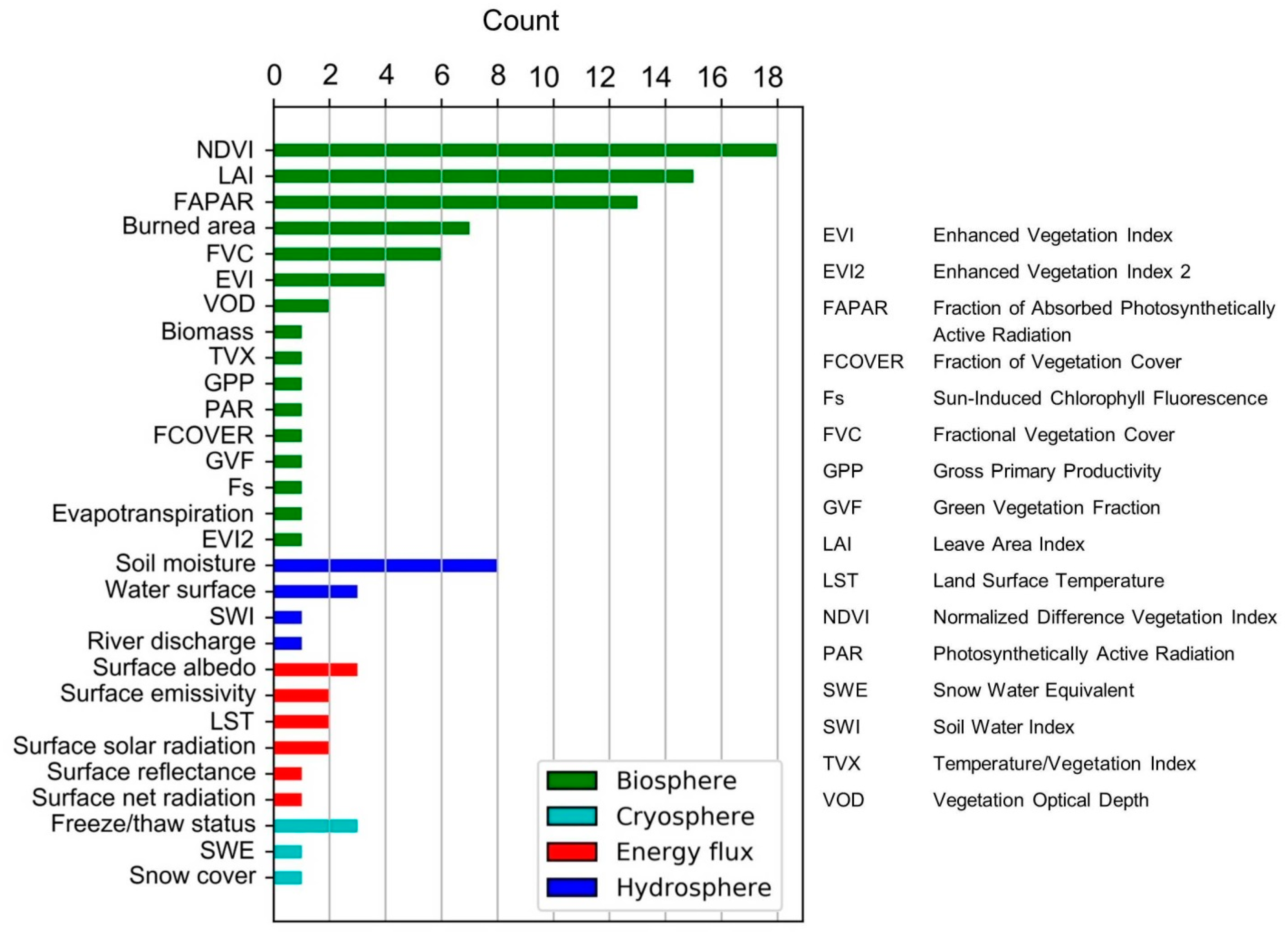

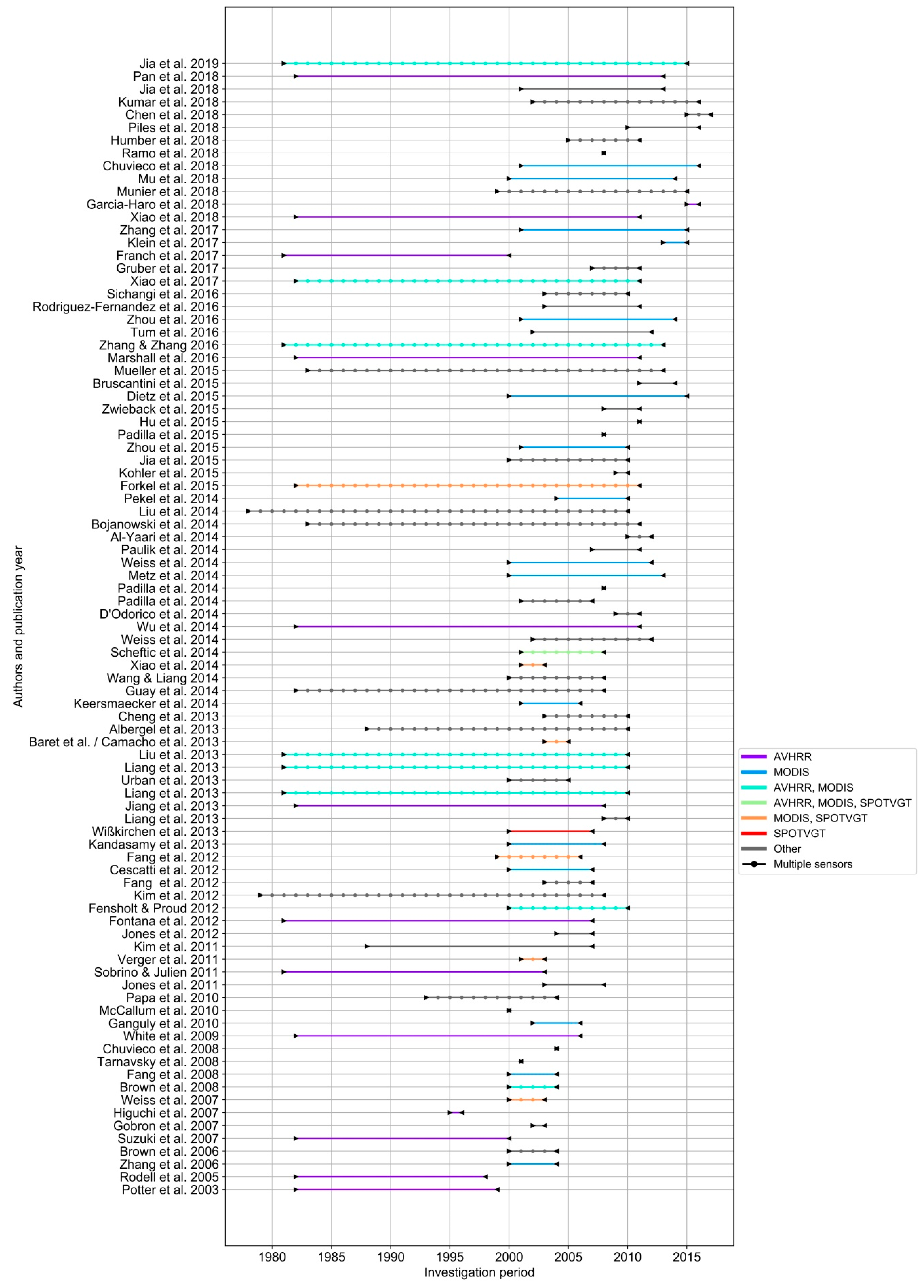

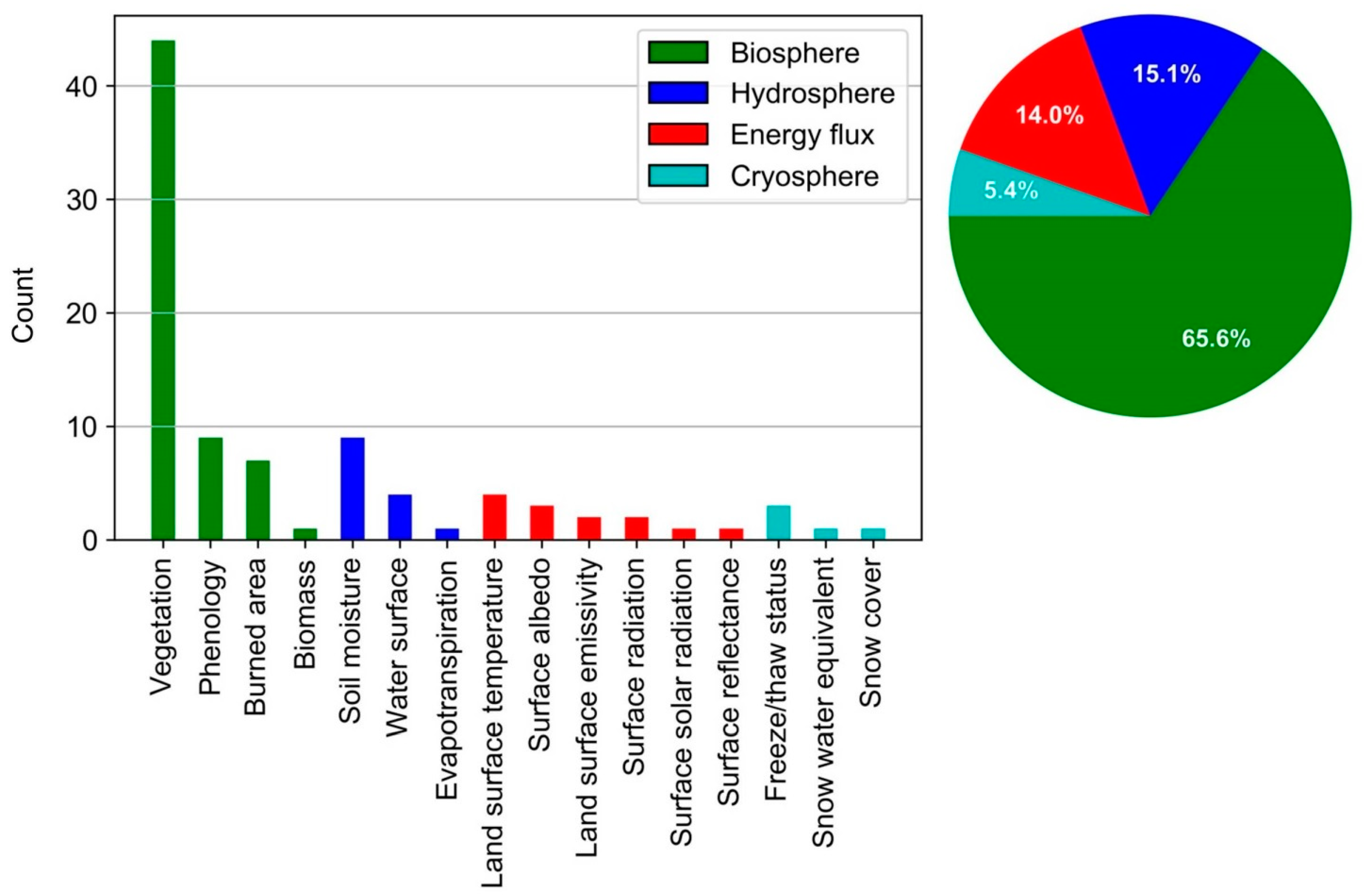

3.1. Preferred Sensors and Time-Series Variables

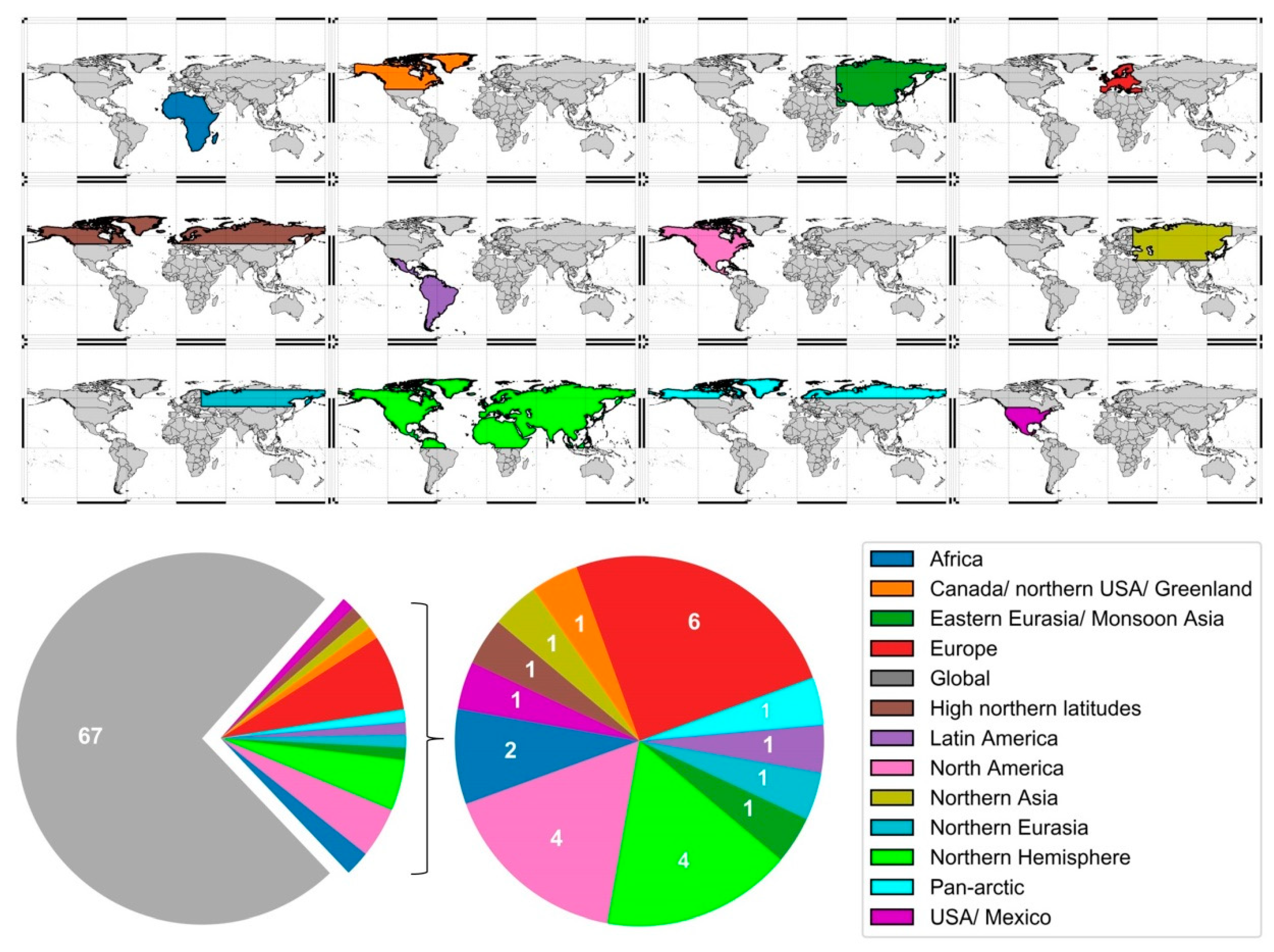

3.2. Thematic Foci and Spatial Distribution of Studies

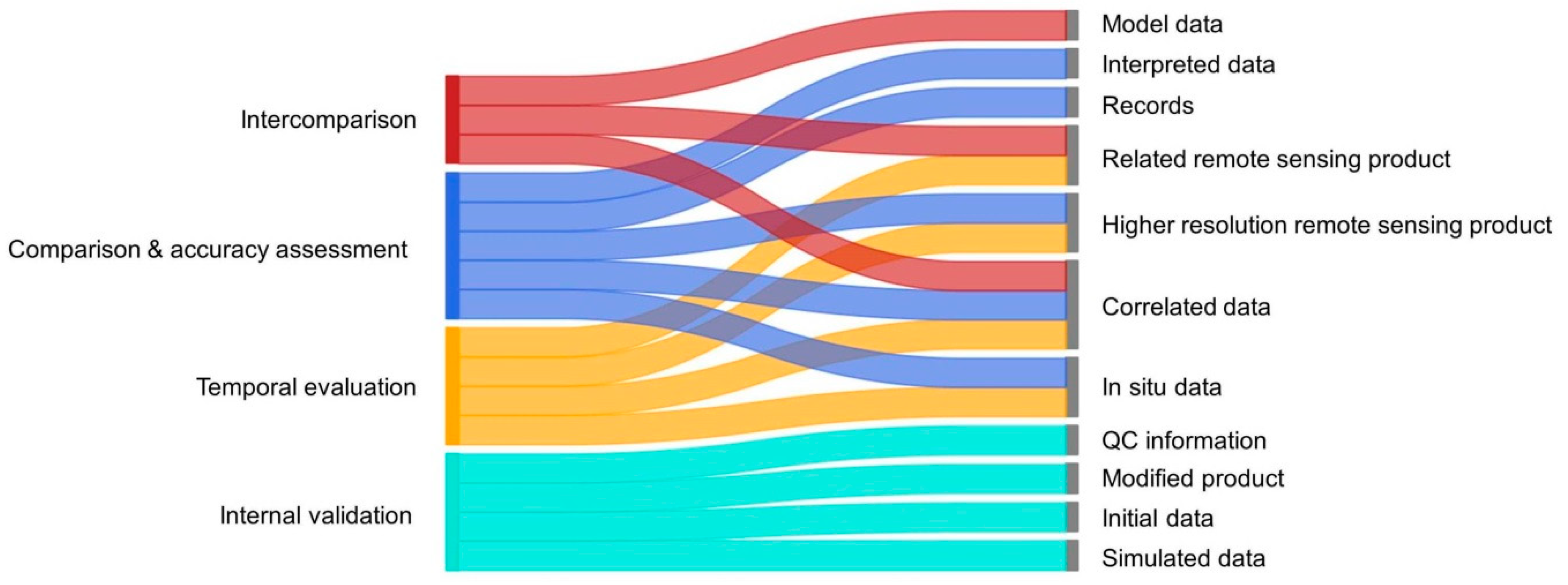

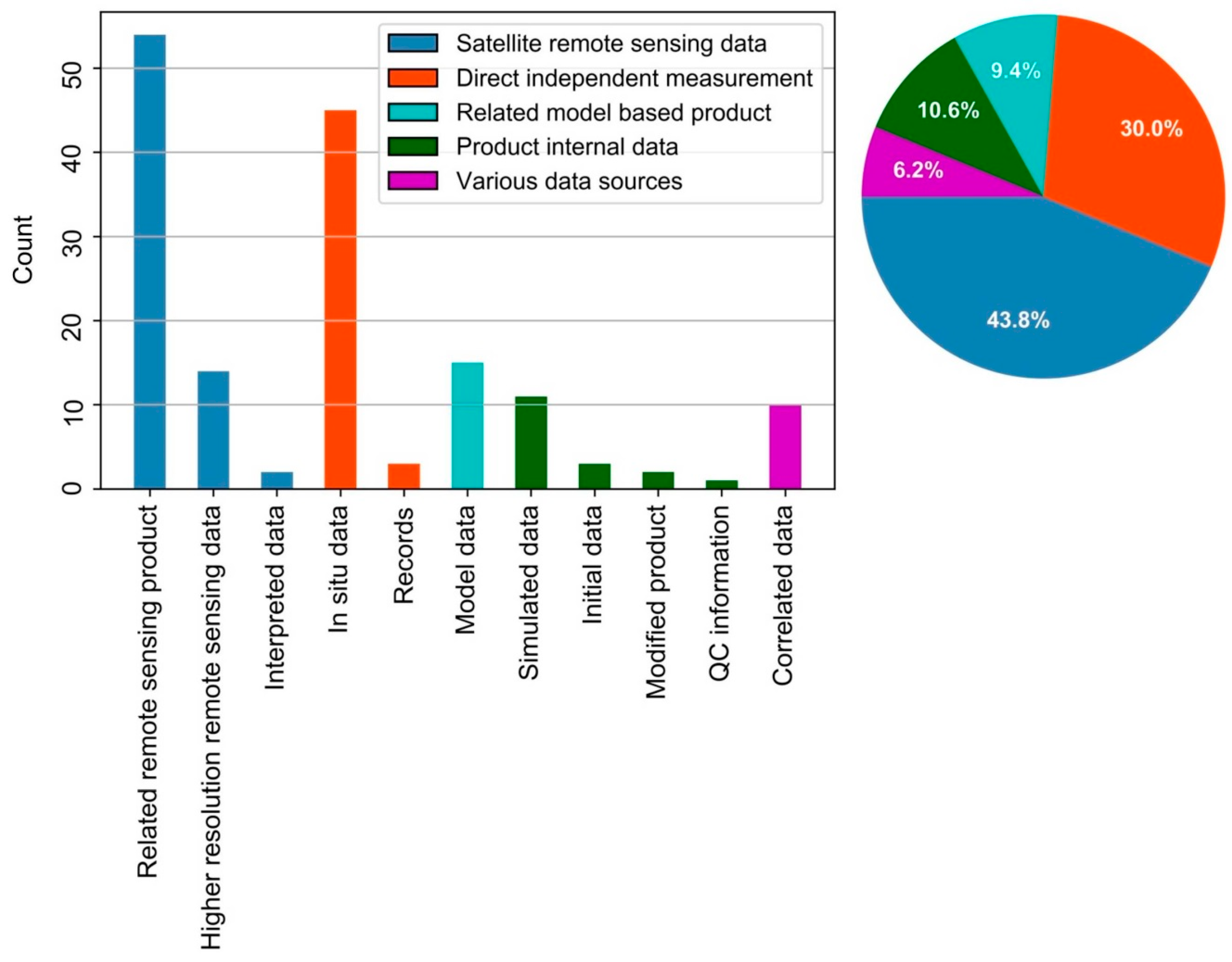

3.3. Categorization of Validation Approaches and Validation Data

4. Review of Validation Methods

4.1. Validation by Intercomparison of Related Products

4.2. Validation by Comparison to Reference Data

4.3. Accuracy Assessment

4.4. Temporal Evaluation

4.5. Internal Validation

4.6. Combination of Methods

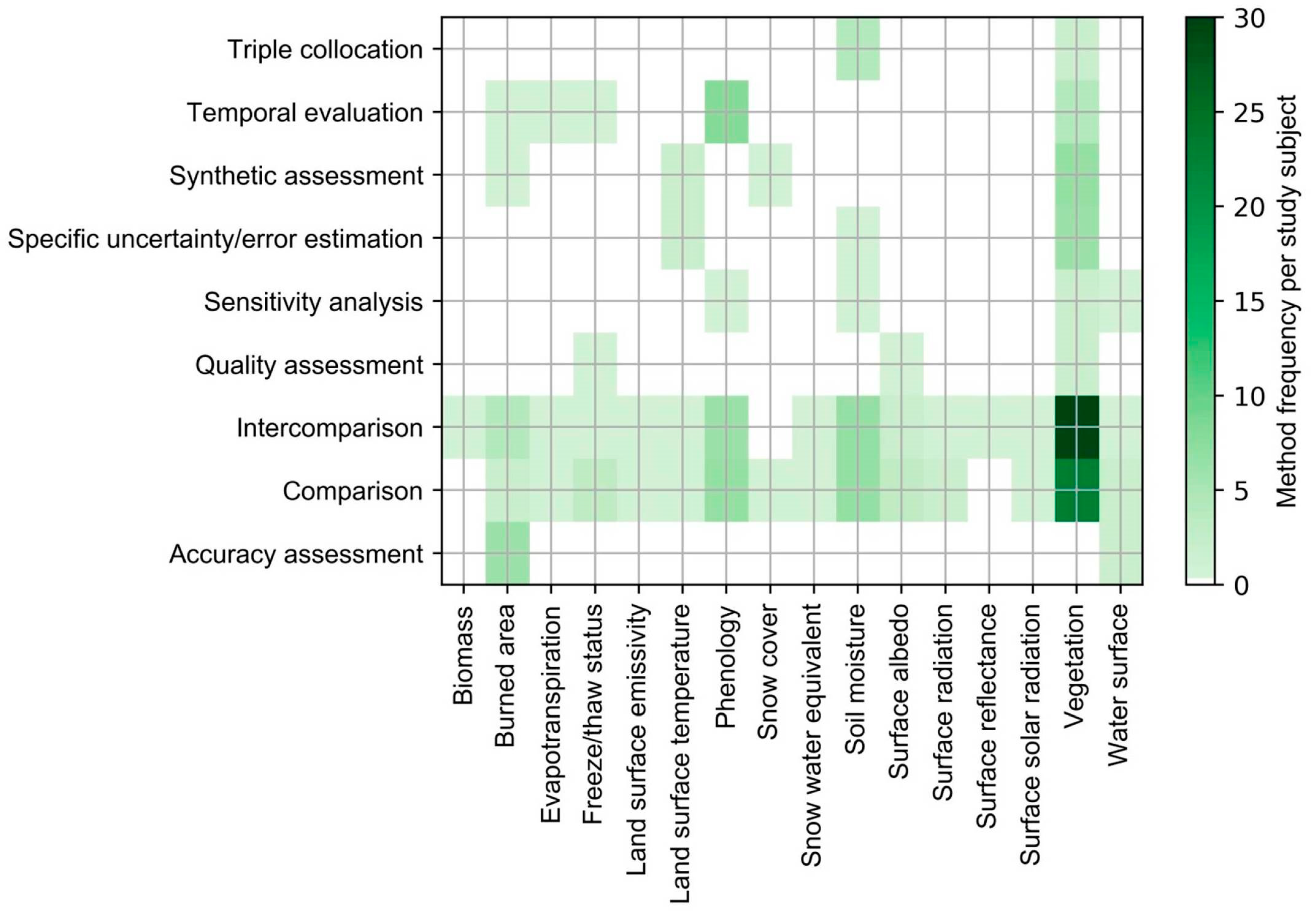

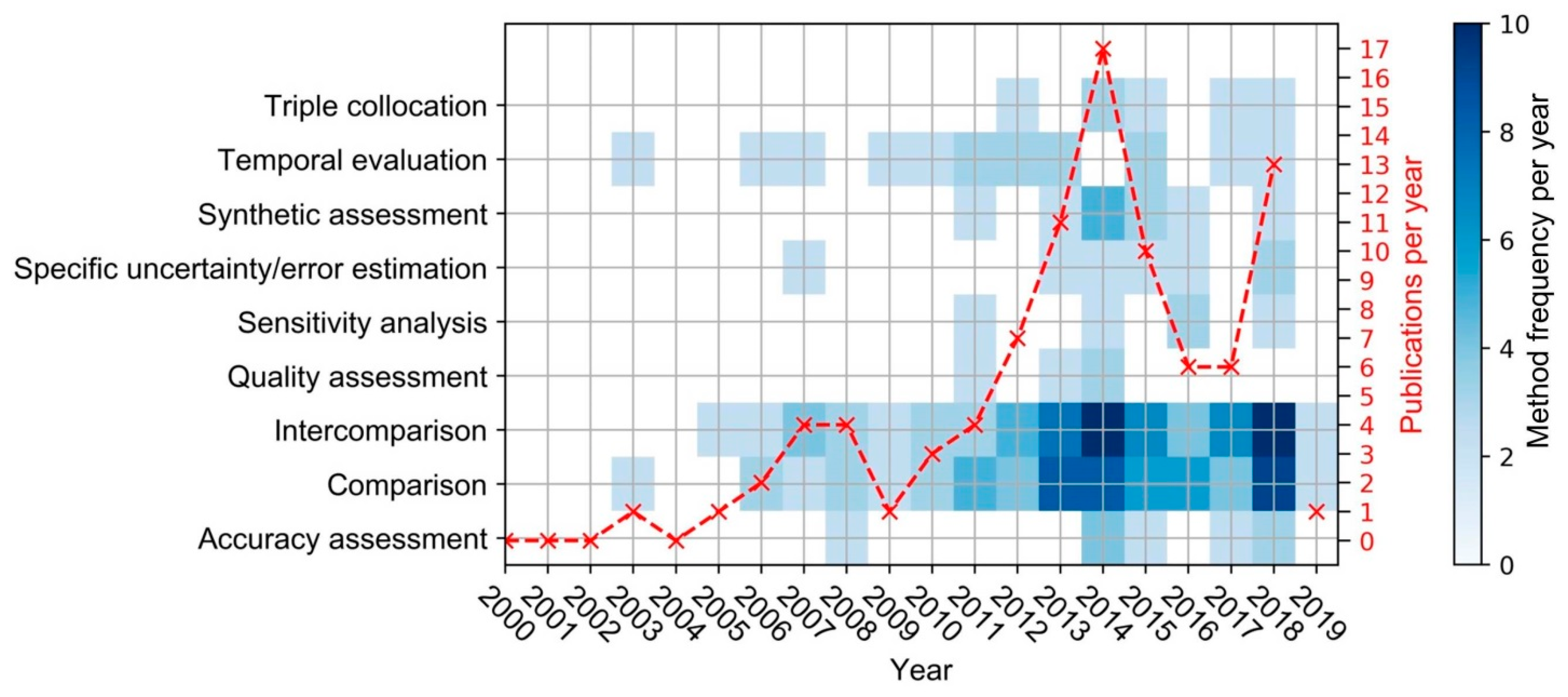

5. Summary of Validation Methods in the Reviewed Literature

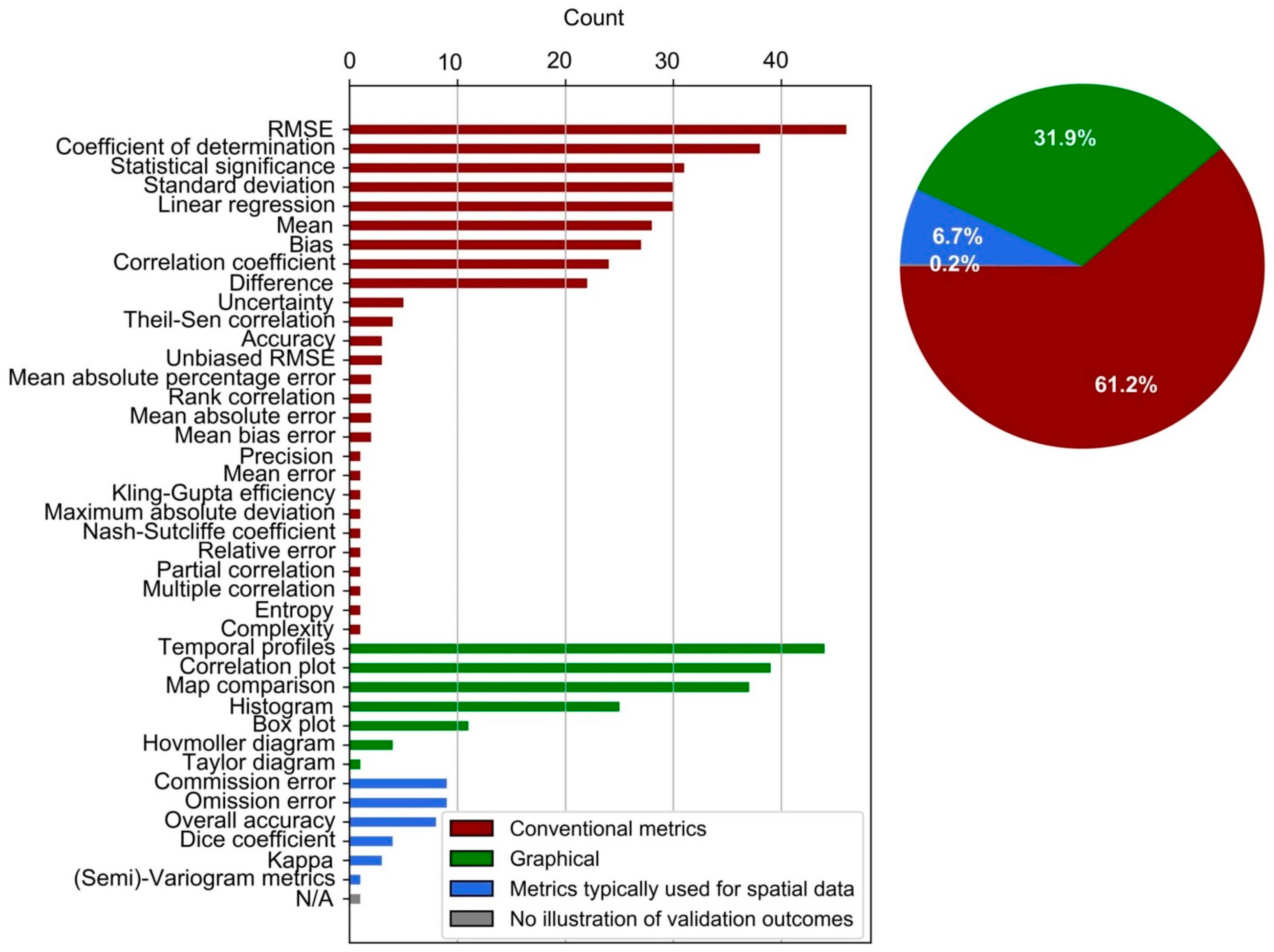

5.1. Expression of Validation Results

5.2. General Trends

6. Discussion on the Challenges and Implications of Validation

7. Conclusions and Outlook

- The main data sources of the reviewed studies are optical sensors (78.5%), with MODIS, AVHRR, and SPOTVGT as the major contributors.

- The dominant thematic focus is on vegetation-oriented variables (71.8%), mainly represented by time-series of NDVI, LAI, and FAPAR.

- Most studies have global coverage (73.6%).

- The main sources of validation data are related remote sensing products (33.7%) and in situ data (28.1%).

- Validation outcomes are mostly expressed with conventional or correlation-based metrics (e.g., RMSE, R²), frequently accompanied by graphical illustrations such as temporal profiles, correlation plots, and map comparisons.
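The metrics named above can be computed in a few lines. The sketch below is a minimal illustration, not the procedure of any reviewed study: it assumes the product and reference series are already paired on the same dates, skips pairs containing NaN, and reports bias, RMSE, bias-corrected (unbiased) RMSE, and R² computed as the squared Pearson correlation. The function name `validation_metrics` and the NDVI values are hypothetical.

```python
import math
from typing import Sequence


def validation_metrics(product: Sequence[float], reference: Sequence[float]) -> dict:
    """Hypothetical helper: agreement statistics for two date-aligned series.

    Pairs where either value is NaN (e.g., a cloud-masked composite or a
    missing in situ record) are skipped.
    """
    pairs = [(p, r) for p, r in zip(product, reference)
             if not (math.isnan(p) or math.isnan(r))]
    n = len(pairs)
    if n < 2:
        raise ValueError("need at least two valid product/reference pairs")

    diffs = [p - r for p, r in pairs]
    bias = sum(diffs) / n                                # mean difference
    rmse = math.sqrt(sum(d * d for d in diffs) / n)      # root-mean-square error
    ubrmse = math.sqrt(max(rmse ** 2 - bias ** 2, 0.0))  # RMSE with the bias removed

    mean_p = sum(p for p, _ in pairs) / n
    mean_r = sum(r for _, r in pairs) / n
    cov = sum((p - mean_p) * (r - mean_r) for p, r in pairs)
    var_p = sum((p - mean_p) ** 2 for p, _ in pairs)
    var_r = sum((r - mean_r) ** 2 for _, r in pairs)
    if var_p == 0 or var_r == 0:
        raise ValueError("R² is undefined for a constant series")
    r2 = cov ** 2 / (var_p * var_r)                      # squared Pearson correlation

    return {"n": n, "bias": bias, "rmse": rmse, "ubrmse": ubrmse, "r2": r2}


# Illustrative (made-up) NDVI values; the NaN marks a gap in the product.
product = [0.31, 0.42, float("nan"), 0.58, 0.47]
reference = [0.29, 0.45, 0.50, 0.55, 0.44]
print(validation_metrics(product, reference))
```

Reporting bias and ubRMSE next to RMSE helps separate a systematic offset from random scatter, which a single RMSE value mixes together.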

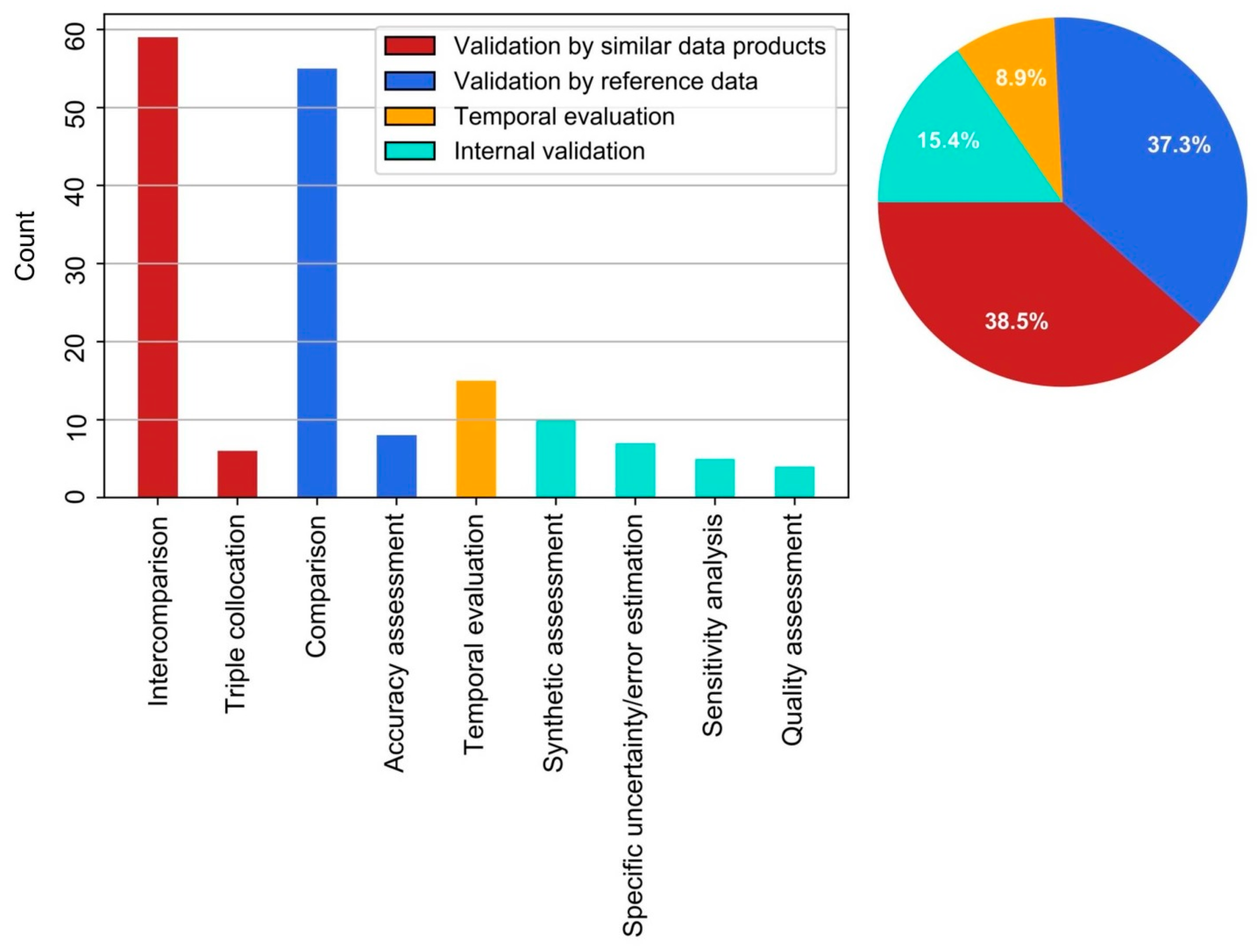

- The most commonly used validation method is the intercomparison of products (indirect validation, 38.5%), followed by comparison to reference data (direct validation, 37.3%). Most studies used more than one validation method (65.9%).

- The number of relevant studies published per year generally increased, accompanied by a slight diversification of the validation methods used.

- A key challenge is the lack of adequate reference data, which in turn promotes the use of other validation methods.

- Matching product and validation data in a reasonable spatiotemporal manner is a recurring issue in studies that use external sources for validation.

- Validation methods that do not depend on external validation data are rarely used (15.4%), as is temporal evaluation based on points in time derived from the time series (8.9%).

- Accuracy assessment is rarely used in the studies within the scope of this review (4.7%), which excludes land use/land cover products.

- Although major EO-related organizations call for the assessment of physical uncertainty with respect to the true state of the measured variable (direct validation), indirect validation is frequently implemented.
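When adequate reference data are missing, one option used in the soil moisture and LAI literature is triple collocation, which estimates the random error variance of each of three collocated datasets without treating any of them as the truth. The sketch below uses the covariance formulation and assumes that all three datasets are linearly related to the same unknown true signal, that their errors are zero-mean, mutually independent, and independent of that signal, and that the series are already matched in space and time. It is an illustration on synthetic data, not a reproduction of any reviewed study; `triple_collocation` is a hypothetical name.

```python
import random
import statistics
from typing import Sequence, Tuple


def _cov(a: Sequence[float], b: Sequence[float]) -> float:
    """Sample covariance of two equally long series."""
    ma, mb = statistics.fmean(a), statistics.fmean(b)
    return sum((x - ma) * (y - mb) for x, y in zip(a, b)) / (len(a) - 1)


def triple_collocation(x: Sequence[float], y: Sequence[float],
                       z: Sequence[float]) -> Tuple[float, float, float]:
    """Error variances of x, y and z, each expressed in its own data units."""
    qxx, qyy, qzz = _cov(x, x), _cov(y, y), _cov(z, z)
    qxy, qxz, qyz = _cov(x, y), _cov(x, z), _cov(y, z)
    return (qxx - qxy * qxz / qyz,
            qyy - qxy * qyz / qxz,
            qzz - qxz * qyz / qxy)


# Synthetic check: three noisy, differently scaled views of one signal.
random.seed(42)
truth = [random.gauss(0.0, 1.0) for _ in range(20000)]
x = [t + random.gauss(0.0, 0.1) for t in truth]              # error variance 0.01
y = [0.8 * t + random.gauss(0.0, 0.2) for t in truth]        # error variance 0.04
z = [1.2 * t + 0.5 + random.gauss(0.0, 0.3) for t in truth]  # error variance 0.09
print(triple_collocation(x, y, z))
```

The estimates are only as trustworthy as the independence assumption, which is difficult to justify for products derived from the same sensor or the same retrieval approach.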
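The spatiotemporal matching issue mentioned above can be made concrete with a minimal sketch, under stated assumptions: product observations are composites identified by a single date, each in situ measurement is paired with the nearest composite within a tolerance window (±4 days here, an arbitrary choice), and a point site is represented by the mean of the valid pixels in a small window around it. The function names, window size, and dates are illustrative and not taken from the reviewed studies.

```python
import math
from bisect import bisect_left
from datetime import date
from typing import Dict, List, Optional


def match_nearest(product_dates: List[date], reference_dates: List[date],
                  max_days: int) -> Dict[date, Optional[date]]:
    """Pair each reference date with the closest product date within
    +/- max_days, or None if no product date is close enough.

    product_dates must be sorted in ascending order; on a tie the earlier
    product date is kept.
    """
    matches: Dict[date, Optional[date]] = {}
    for ref in reference_dates:
        i = bisect_left(product_dates, ref)
        # Only the neighbours on either side of the insertion point can be closest.
        candidates = [product_dates[j] for j in (i - 1, i)
                      if 0 <= j < len(product_dates)]
        best = min(candidates, key=lambda d: abs((d - ref).days), default=None)
        ok = best is not None and abs((best - ref).days) <= max_days
        matches[ref] = best if ok else None
    return matches


def window_mean(grid: List[List[float]], row: int, col: int, half: int = 1) -> float:
    """Mean of the valid (non-NaN) pixels in a (2*half+1)^2 window around a site."""
    values = [grid[r][c]
              for r in range(row - half, row + half + 1)
              for c in range(col - half, col + half + 1)
              if 0 <= r < len(grid) and 0 <= c < len(grid[0])
              and not math.isnan(grid[r][c])]
    return sum(values) / len(values) if values else float("nan")


composites = [date(2019, 6, 2), date(2019, 6, 10), date(2019, 6, 18), date(2019, 6, 26)]
field_visits = [date(2019, 6, 5), date(2019, 6, 14), date(2019, 7, 20)]
print(match_nearest(composites, field_visits, max_days=4))
```

Unmatched reference dates are returned as `None` rather than silently paired with a distant composite, so the number of usable pairs stays visible.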
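As one example of a check that needs no external validation data, valid observations can be withheld at random from a series, reconstructed with the gap-filling method under test, and compared with the values that were removed. In the sketch below, linear interpolation stands in for that reconstruction method purely for illustration; the helper names, the 20% withholding fraction, the fixed seed, and the LAI values are assumptions.

```python
import math
import random
from typing import List, Optional, Sequence


def linear_fill(series: Sequence[Optional[float]]) -> List[Optional[float]]:
    """Fill None gaps by linear interpolation between the nearest valid
    neighbours; leading and trailing gaps are left as None."""
    out = list(series)
    valid = [i for i, v in enumerate(out) if v is not None]
    for a, b in zip(valid, valid[1:]):
        for i in range(a + 1, b):
            w = (i - a) / (b - a)
            out[i] = out[a] * (1 - w) + out[b] * w
    return out


def withheld_rmse(series: Sequence[Optional[float]], fraction: float = 0.2,
                  seed: int = 0) -> float:
    """RMSE between withheld observations and their reconstructions."""
    valid = [i for i, v in enumerate(series) if v is not None]
    interior = valid[1:-1]  # keep the end points so every gap has two neighbours
    if not interior:
        raise ValueError("series has too few valid observations")
    k = max(1, int(len(interior) * fraction))
    held = set(random.Random(seed).sample(interior, k))
    gapped = [None if i in held else v for i, v in enumerate(series)]
    filled = linear_fill(gapped)
    errors = [filled[i] - series[i] for i in held]
    return math.sqrt(sum(e * e for e in errors) / len(errors))


# Made-up 8-day LAI series with one existing (e.g., cloud) gap.
lai = [0.8, 0.9, 1.3, None, 2.6, 3.1, 3.3, 3.0, 2.4, 1.6, 1.0, 0.9]
print(withheld_rmse(lai))
```

Repeating the procedure with several seeds gives a spread of errors rather than a single value, which is a more robust basis for comparing reconstruction methods.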
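Temporal evaluation based on points in time derived from a time series can be illustrated with the start of season (SOS). The sketch assumes a single growing season per series and defines SOS as the first date at which the series rises through a fixed fraction of its seasonal amplitude (50% here), interpolating linearly between samples. This amplitude-threshold rule is only one of several SOS definitions, and the function name, NDVI values, and observed date are hypothetical.

```python
from typing import Optional, Sequence


def start_of_season(doys: Sequence[float], values: Sequence[float],
                    threshold: float = 0.5) -> Optional[float]:
    """Day of year at which the series first rises through
    min + threshold * (max - min); None if it never does."""
    vmin, vmax = min(values), max(values)
    level = vmin + threshold * (vmax - vmin)
    for d0, d1, v0, v1 in zip(doys, doys[1:], values, values[1:]):
        if v0 < level <= v1:
            # Linear interpolation between the two samples that bracket the level.
            return d0 + (level - v0) / (v1 - v0) * (d1 - d0)
    return None


# Made-up NDVI composites (day of year, value) and a hypothetical observed SOS.
doys = [10, 20, 30, 40, 50]
ndvi = [0.2, 0.3, 0.5, 0.6, 0.6]
observed_sos = 27
sos = start_of_season(doys, ndvi)
print(f"satellite SOS: {sos:.1f}, difference to observation: {sos - observed_sos:+.1f} days")
```

The difference in days between the derived and the observed date is the quantity that such temporal evaluations typically summarize across sites and years.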
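For categorical products such as burned area, accuracy assessment is usually based on a confusion matrix built from reference samples. The sketch below computes overall, user's, and producer's accuracy from raw sample counts; it assumes simple random sampling and the row/column convention stated in the code, whereas stratified sampling designs require area-weighted estimators instead. The function name and counts are invented for illustration.

```python
from typing import Dict, List


def accuracy_from_confusion(matrix: List[List[int]]) -> Dict[str, object]:
    """Accuracy measures from a square confusion matrix of sample counts.

    Convention assumed here: rows are map (product) classes and columns are
    reference classes, in the same class order.
    """
    k = len(matrix)
    total = sum(sum(row) for row in matrix)
    diagonal = sum(matrix[i][i] for i in range(k))
    row_totals = [sum(matrix[i]) for i in range(k)]
    col_totals = [sum(matrix[i][j] for i in range(k)) for j in range(k)]
    nan = float("nan")
    return {
        "overall": diagonal / total,
        # User's accuracy = 1 - commission error for each map class.
        "users": [matrix[i][i] / row_totals[i] if row_totals[i] else nan for i in range(k)],
        # Producer's accuracy = 1 - omission error for each reference class.
        "producers": [matrix[j][j] / col_totals[j] if col_totals[j] else nan for j in range(k)],
    }


# Classes: 0 = burned, 1 = unburned (invented sample counts).
counts = [[40, 10],
          [5, 145]]
print(accuracy_from_confusion(counts))
```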

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

| Abbreviation | Full Description |
|---|---|
| AATSR | Advanced Along Track Scanning Radiometer |
| AMSR2 | Advanced Microwave Scanning Radiometer 2 |
| AMSR-E | Advanced Microwave Scanning Radiometer for the Earth Observing System |
| ASCAT | Advanced SCATterometer |
| ATSR IRR | Along Track Scanning Radiometers Infra-Red Radiometer |
| AVHRR | Advanced Very High Resolution Radiometer |
| CERES | Clouds and the Earth’s Radiant Energy System |
| ERS Scatterometer | European Remote Sensing Satellite Scatterometer |
| GOES Imager | Geostationary Operational Environmental Satellite Imager |
| MERIS | MEdium Resolution Imaging Spectrometer |
| MODIS | Moderate-resolution Imaging Spectroradiometer |
| MTSAT Imager | Multifunctional Transport Satellites Imager |
| MVIRI | Meteosat Visible and Infrared Imager |
| PROBA-V | Project for On-Board Autonomy Vegetation |
| SeaWiFS | Sea-viewing Wide Field-of-view Sensor |
| SEVIRI | Spinning Enhanced Visible and InfraRed Imager |
| SMAP | Soil Moisture Active Passive |
| SMMR | Scanning Multichannel Microwave Radiometer |
| SMOS MIRAS | Soil Moisture and Ocean Salinity Microwave Imaging Radiometer with Aperture Synthesis |
| SPOTVGT | Satellite Pour l’Observation de la Terre Vegetation |
| SSM/I | Special Sensor Microwave/Imager |
| TANSO | Thermal and Near infrared Sensor for Carbon Observation |
| TM/ETM | Thematic Mapper/Enhanced Thematic Mapper |
| TMI | Tropical Rainfall Measuring Mission (TRMM) Microwave Imager |

| Abbreviation | Full Description |
|---|---|
| ADV SPACE RES | Advances in Space Research |
| AGR FOREST METEOROL | Agricultural and Forest Meteorology |
| BIOGEOSCIENCES | Biogeosciences |
| EARTH INTERACT | Earth Interactions |
| EARTH SYST SCI DATA | Earth System Science Data |
| ECOL APPL | Ecological Applications |
| GEOSCI MODEL DEV | Geoscientific Model Development |
| GLOB CHANGE BIOL | Global Change Biology |
| HYDROL PROCESS | Hydrological Processes |
| IEEE GEOSCI REMOTE S | IEEE Geoscience and Remote Sensing Letters |
| IEEE J SEL TOP APPL | IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing |
| IEEE T GEOSCI REMOTE | IEEE Transactions on Geoscience and Remote Sensing |
| INT J APPL EARTH OBS | International Journal of Applied Earth Observation and Geoinformation |
| INT J DIGIT EARTH | International Journal of Digital Earth |
| INT J REMOTE SENS | International Journal of Remote Sensing |
| ISPRS J PHOTOGRAMM | ISPRS Journal of Photogrammetry and Remote Sensing |
| J APPL REMOTE SENS | Journal of Applied Remote Sensing |
| J GEOPHYS RES-ATMOS | Journal of Geophysical Research: Atmospheres |
| J GEOPHYS RES-BIOGEO | Journal of Geophysical Research: Biogeosciences |
| J HYDROMETEOROL | Journal of Hydrometeorology |
| REMOTE SENS | Remote Sensing |
| REMOTE SENS ENVIRON | Remote Sensing of Environment |
| REMOTE SENS LETT | Remote Sensing Letters |
| SOL ENERGY | Solar Energy |

| Search Query |
|---|
| TS = (global OR "large scale" OR "large-scale" OR continental OR "large area") |
| AND TS = ("time series" OR "time-series") |
| AND TS = ("remote sensing" OR "earth observation") |
| AND TS = (validation OR uncertainty OR error OR assessment OR accuracy) |
| NOT TI = atmosphere NOT TI = atmospheric NOT TI = ocean NOT TI = land-cover NOT TI = "land cover" NOT TI = "land use" |

References

- Steffen, W.; Sanderson, R.A.; Tyson, P.D.; Jäger, J.; Matson, P.A.; Moore III, B.; Oldfield, F.; Richardson, K.; Schellnhuber, H.-J.; Turner, B.L.; et al. Global Change and the Earth System; Global Change—The IGBP Series; 2004; Springer: Berlin/Heidelberg, Germany, 2005; ISBN 91-631-5380-7. [Google Scholar]

- GCOS (Global Climate Observing System). Essential Climate Variables, Monitoring Principles and Observation Requirements for Essential Land Climate Variables 2019. Available online: https://gcos.wmo.int/en/essential-climate-variables/gcos-monitoring-principles (accessed on 22 June 2019).

- Metz, M.; Rocchini, D.; Neteler, M. Surface Temperatures at the Continental Scale. Remote Sens. 2014, 6, 3822–3840. [Google Scholar] [CrossRef]

- Pereira, H.M.; Ferrier, S.; Walters, M.; Geller, G.N.; Jongman, R.H.G.; Scholes, R.J.; Bruford, M.W.; Brummitt, N.; Butchart, S.H.M.; Cardoso, A.C.; et al. Essential Biodiversity Variables. Science 2013, 339, 277–278. [Google Scholar] [CrossRef]

- Yang, J.; Gong, P.; Fu, R.; Zhang, M.; Chen, J.; Liang, S.; Xu, B.; Shi, J.; Dickinson, R. The role of satellite remote sensing in climate change studies. Nat. Clim. Chang. 2013, 3, 875–883. [Google Scholar] [CrossRef]

- Liang, S.; Zhao, X.; Liu, S.; Yuan, W.; Cheng, X.; Xiao, Z.; Zhang, X.; Liu, Q.; Cheng, J.; Tang, H.; et al. A long-term Global LAnd Surface Satellite (GLASS) data-set for environmental studies. Int. J. Digit. Earth 2013, 6, 5–33. [Google Scholar] [CrossRef]

- Hay, S.I.; Tatem, A.J.; Graham, A.J.; Goetz, S.J.; Rogers, D.J. Global Environmental Data for Mapping Infectious Disease Distribution. In Global Mapping of Infectious Diseases: Methods, Examples and Emerging Applications; Advances in Parasitology; Elsevier: Amsterdam, The Netherlands, 2006; Volume 62, pp. 37–77. ISBN 978-0-12-031762-2. [Google Scholar]

- Justice, C.; Belward, A.; Morisette, J.; Lewis, P.; Privette, J.; Baret, F. Developments in the “validation” of satellite sensor products for the study of the land surface. Int. J. Remote Sens. 2000, 21, 3383–3390. [Google Scholar] [CrossRef]

- Weiss, M.; Baret, F.; Block, T.; Koetz, B.; Burini, A.; Scholze, B.; Lecharpentier, P.; Brockmann, C.; Fernandes, R.; Plummer, S.; et al. On Line Validation Exercise (OLIVE). Remote Sens. 2014, 6, 4190–4216. [Google Scholar] [CrossRef]

- Kuenzer, C.; Dech, S.; Wagner, W. Remote Sensing Time Series; Springer International Publishing: Cham, Switzerland, 2015; Volume 22. [Google Scholar]

- National Research Council. Climate Data Records from Environmental Satellites: Interim Report; The National Academies Press: Washington, DC, USA, 2004; ISBN 978-0-309-09168-8. [Google Scholar]

- Kuenzer, C.; Ottinger, M.; Wegmann, M.; Guo, H.; Wang, C.; Zhang, J.; Dech, S.; Wikelski, M. Earth observation satellite sensors for biodiversity monitoring. Int. J. Remote Sens. 2014, 35, 6599–6647. [Google Scholar] [CrossRef]

- MODIS Science Team 2019. Available online: https://modis.gsfc.nasa.gov/sci_team/ (accessed on 11 July 2019).

- CGLS Copernicus Global Land Service. Available online: https://land.copernicus.eu/global/ (accessed on 2 September 2019).

- Klein, C.; Bliefernicht, J.; Heinzeller, D.; Gessner, U.; Klein, I.; Kunstmann, H. Feedback of observed interannual vegetation change. Clim. Dyn. 2017, 48, 2837–2858. [Google Scholar] [CrossRef]

- Machwitz, M.; Gessner, U.; Conrad, C.; Falk, U.; Richters, J.; Dech, S. Modelling the Gross Primary Productivity of West Africa with the Regional Biomass Model RBM+, using optimized 250 m MODIS FPAR and fractional vegetation cover information. Int. J. Appl. Earth Obs. Geoinf. 2015, 43, 177–194. [Google Scholar] [CrossRef]

- Merkuryeva, G.; Merkuryev, Y.; Sokolov, B.V.; Potryasaev, S.; Zelentsov, V.A.; Lektauers, A. Advanced river flood monitoring, modelling and forecasting. J. Comput. Sci. 2015, 10, 77–85. [Google Scholar] [CrossRef]

- Wißkirchen, K.; Tum, M.; Günther, K.P.; Niklaus, M.; Eisfelder, C.; Knorr, W. Quantifying the carbon uptake by vegetation for Europe on a 1 km2 resolution using a remote sensing driven vegetation model. Geosci. Model Dev. 2013, 6, 1623–1640. [Google Scholar] [CrossRef]

- Döll, P.; Douville, H.; Güntner, A.; Müller Schmied, H.; Wada, Y. Modelling Freshwater Resources at the Global Scale. Surv. Geophys. 2016, 37, 195–221. [Google Scholar] [CrossRef]

- Glenn, E.P.; Nagler, P.L.; Huete, A.R. Vegetation Index Methods for Estimating Evapotranspiration by Remote Sensing. Surv. Geophys. 2010, 31, 531–555. [Google Scholar] [CrossRef]

- D’Odorico, P.; Gonsamo, A.; Pinty, B.; Gobron, N.; Coops, N.; Mendez, E.; Schaepman, M.E. Intercomparison of fraction of absorbed photosynthetically active radiation products derived from satellite data over Europe. Remote Sens. Environ. 2014, 142, 141–154. [Google Scholar] [CrossRef]

- Fensholt, R.; Proud, S.R. Evaluation of Earth Observation based global long term vegetation trends—Comparing GIMMS and MODIS global NDVI time series. Remote Sens. Environ. 2012, 119, 131–147. [Google Scholar] [CrossRef]

- Guay, K.C.; Beck, P.S.A.; Berner, L.T.; Goetz, S.J.; Baccini, A.; Buermann, W. Vegetation productivity patterns at high northern latitudes. Glob. Chang. Biol. 2014, 20, 3147–3158. [Google Scholar] [CrossRef]

- Jiang, N.; Zhu, W.; Zheng, Z.; Chen, G.; Fan, D. A Comparative Analysis between GIMSS NDVIg and NDVI3g for Monitoring Vegetation Activity Change in the Northern Hemisphere during 1982–2008. Remote Sens. 2013, 5, 4031–4044. [Google Scholar] [CrossRef]

- McCallum, I.; Wagner, W.; Schmullius, C.; Shvidenko, A.; Obersteiner, M.; Fritz, S.; Nilsson, S. Comparison of four global FAPAR datasets over Northern Eurasia for the year 2000. Remote Sens. Environ. 2010, 114, 941–949. [Google Scholar] [CrossRef]

- Scheftic, W.; Zeng, X.; Broxton, P.; Brunke, M. Intercomparison of Seven NDVI Products over the United States and Mexico. Remote Sens. 2014, 6, 1057–1084. [Google Scholar] [CrossRef]

- Wang, D.; Morton, D.; Masek, J.; Wu, A.; Nagol, J.; Xiong, X.; Levy, R.; Vermote, E.; Wolfe, R. Impact of sensor degradation on the MODIS NDVI time series. Remote Sens. Environ. 2012, 119, 55–61. [Google Scholar] [CrossRef]

- Weiss, D.J.; Atkinson, P.M.; Bhatt, S.; Mappin, B.; Hay, S.I.; Gething, P.W. An effective approach for gap-filling continental scale remotely sensed time-series. ISPRS J. Photogramm. Remote Sens. Off. Publ. Int. Soc. Photogramm. Remote Sens. (ISPRS) 2014, 98, 106–118. [Google Scholar] [CrossRef] [PubMed]

- Zhang, Y.; Song, C.; Band, L.E.; Sun, G.; Li, J. Reanalysis of global terrestrial vegetation trends from MODIS products. Remote Sens. Environ. 2017, 191, 145–155. [Google Scholar] [CrossRef]

- Crosetto, M.; Tarantola, S. Uncertainty and sensitivity analysis: Tools for GIS-based model implementation. Int. J. Geogr. Inf. Sci. 2001, 15, 415–437. [Google Scholar] [CrossRef]

- WMO (World Meteorological Organization). User Requirements for Observation 2019. Available online: http://www.wmo-sat.info/oscar/observingrequirements (accessed on 16 July 2019).

- LPV (Land Product Validation). Subgroup CEOS Validation Hierarchy 2019. Available online: https://lpvs.gsfc.nasa.gov/ (accessed on 5 May 2019).

- Salminen, M.; Pulliainen, J.; Metsämäki, S.; Ikonen, J.; Heinilä, K.; Luojus, K. Determination of uncertainty characteristics for the satellite data-based estimation of fractional snow cover. Remote Sens. Environ. 2018, 212, 103–113. [Google Scholar] [CrossRef]

- Baret, F.; Weiss, M.; Lacaze, R.; Camacho, F.; Makhmara, H.; Pacholcyzk, P.; Smets, B. GEOV1: LAI and FAPAR essential climate variables and FCOVER global time series capitalizing over existing products. Part1: Principles of development and production. Remote Sens. Environ. 2013, 137, 299–309. [Google Scholar] [CrossRef]

- Camacho, F.; Cernicharo, J.; Lacaze, R.; Baret, F.; Weiss, M. GEOV1: LAI, FAPAR essential climate variables and FCOVER global time series capitalizing over existing products. Part 2: Validation and intercomparison with reference products. Remote Sens. Environ. 2013, 137, 310–329. [Google Scholar] [CrossRef]

- Klotz, M.; Kemper, T.; Geiß, C.; Esch, T.; Taubenböck, H. How good is the map? Remote Sens. Environ. 2016, 178, 191–212. [Google Scholar] [CrossRef]

- Estes, L.; Chen, P.; Debats, S.; Evans, T.; Ferreira, S.; Kuemmerle, T.; Ragazzo, G.; Sheffield, J.; Wolf, A.; Wood, E.; et al. A large-area, spatially continuous assessment of land cover map error and its impact on downstream analyses. Glob. Chang. Biol. 2018, 24, 322–337. [Google Scholar] [CrossRef]

- Congalton, R.G. A review of assessing the accuracy of classifications of remotely sensed data. Remote Sens. Environ. 1991, 37, 35–46. [Google Scholar] [CrossRef]

- García-Haro, F.J.; Campos-Taberner, M.; Muñoz-Marí, J.; Laparra, V.; Camacho, F.; Sánchez-Zapero, J.; Camps-Valls, G. Derivation of global vegetation biophysical parameters from EUMETSAT Polar System. ISPRS J. Photogramm. Remote Sens. 2018, 139, 57–74. [Google Scholar] [CrossRef]

- Olofsson, P.; Foody, G.M.; Herold, M.; Stehman, S.V.; Woodcock, C.E.; Wulder, M.A. Good practices for estimating area and assessing accuracy of land change. Remote Sens. Environ. 2014, 148, 42–57. [Google Scholar] [CrossRef]

- Jansen, L.; Di Gregorio, A. Land Cover Classification System (LCCS); 2000; Food and Agriculture Organization of the United Nations: Rome, Italy, 1998. [Google Scholar]

- Congalton, R.; Gu, J.; Yadav, K.; Thenkabail, P.; Ozdogan, M. Global Land Cover Mapping. Remote Sens. 2014, 6, 12070–12093. [Google Scholar] [CrossRef]

- Strahler, A.H.; Boschetti, L.; Foody, G.M.; Friedl, M.A.; Hansen, M.C.; Herold, M.; Mayaux, P.; Morisette, J.; Stehman, S.; Woodcock, C. Global Land Cover Validation: Recommendations for Evaluation and Accuracy Assessment of Global Land Cover Maps; Office for Official Publications of the European Communities: Luxemburg, 2006; Volume 25. [Google Scholar]

- Stehman, S.V.; Foody, G.M. Key issues in rigorous accuracy assessment of land cover products. Remote Sens. Environ. 2019, 231, 111199. [Google Scholar] [CrossRef]

- Congalton, R.G.; Green, K. Assessing the Accuracy of Remotely Sensed Data: Principles and Practices, 2nd ed.; CRC Press/Taylor & Francis: Boca Raton, FL, USA, 2009; ISBN 978-1-4200-5512-2. [Google Scholar]

- CEOS WGCV (Committee on Earth Observation Satellites Working Group on Calibration & Validation) 2019. Available online: http://ceos.org/ourwork/workinggroups/wgcv/ (accessed on 15 July 2019).

- ISO (International Organization for Standardization). Accuracy (Trueness and Precision) of Measurement Methods and Results 1994. Available online: https://www.iso.org/obp/ui/#iso:std:iso:5725:-1:ed-1:v1:en (accessed on 15 July 2019).

- Verger, A.; Baret, F.; Weiss, M. A multisensor fusion approach to improve LAI time series. Remote Sens. Environ. 2011, 115, 2460–2470. [Google Scholar] [CrossRef]

- AIAA G-077. Guide for the Verification and Validation of Computational Fluid Dynamics Simulations; American Institute of Aeronautics and Astronautics: Reston, VA, USA, 1998; ISBN 978-1-56347-285-5. [Google Scholar]

- Fisher, P.F. Models of uncertainty in spatial data. Geogr. Inf. Syst. 1999, 1, 191–205. [Google Scholar]

- Demaria, E.M.C.; Serrat-Capdevila, A. Challenges of Remote Sensing Validation. In Earth Observation for Water Resources Management: Current Use and Future Opportunities for the Water Sector; The World Bank: Washington, DC, USA, 2016; pp. 167–171. ISBN 978-1-4648-0475-5. [Google Scholar]

- Cao, C.; Xiong, X.; Wu, A.; Wu, X. Assessing the consistency of AVHRR and MODIS L1B reflectance for generating Fundamental Climate Data Records. J. Geophys. Res. 2008, 113, 33463. [Google Scholar] [CrossRef]

- Weiss, M.; Baret, F.; Garrigues, S.; Lacaze, R. LAI and fAPAR CYCLOPES global products derived from VEGETATION. Part 2: Validation and comparison with MODIS collection 4 products. Remote Sens. Environ. 2007, 110, 317–331. [Google Scholar] [CrossRef]

- Urban, M.; Eberle, J.; Hüttich, C.; Schmullius, C.; Herold, M. Comparison of Satellite-Derived Land Surface Temperature and Air Temperature from Meteorological Stations on the Pan-Arctic Scale. Remote Sens. 2013, 5, 2348–2367. [Google Scholar] [CrossRef]

- Lei, F.; Crow, W.T.; Shen, H.; Su, C.-H.; Holmes, T.R.H.; Parinussa, R.M.; Wang, G. Assessment of the impact of spatial heterogeneity on microwave satellite soil moisture periodic error. Remote Sens. Environ. 2018, 205, 85–99. [Google Scholar] [CrossRef]

- Widlowski, J.-L.; Taberner, M.; Pinty, B.; Bruniquel-Pinel, V.; Disney, M.; Fernandes, R.; Gastellu-Etchegorry, J.-P.; Gobron, N.; Kuusk, A.; Lavergne, T.; et al. Third Radiation Transfer Model Intercomparison (RAMI) exercise. J. Geophys. Res. 2007, 112, 1512. [Google Scholar] [CrossRef]

- Wang, D.; Liang, S. Improving LAI Mapping by Integrating MODIS and CYCLOPES LAI Products Using Optimal Interpolation. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2014, 7, 445–457. [Google Scholar] [CrossRef]

- Zhou, J.; Jia, L.; Menenti, M.; Gorte, B. On the performance of remote sensing time series reconstruction methods—A spatial comparison. Remote Sens. Environ. 2016, 187, 367–384. [Google Scholar] [CrossRef]

- Brown, M.E.; Pinzon, J.E.; Didan, K.; Morisette, J.T.; Tucker, C.J. Evaluation of the consistency of long-term NDVI time series derived from AVHRR, SPOT-vegetation, SeaWiFS, MODIS, and Landsat ETM+ sensors. IEEE Trans. Geosci. Remote Sens. 2006, 44, 1787–1793. [Google Scholar] [CrossRef]

- Dorigo, W.A.; Xaver, A.; Vreugdenhil, M.; Gruber, A.; Hegyiová, A.; Sanchis-Dufau, A.D.; Zamojski, D.; Cordes, C.; Wagner, W.; Drusch, M. Global Automated Quality Control of In Situ Soil Moisture Data from the International Soil Moisture Network. Vadose Zone J. 2013, 12. [Google Scholar] [CrossRef]

- Campbell, J.B. Introduction to Remote Sensing, 3rd ed.; Guilford Press: New York, NY, USA, 2002; ISBN 1-57230-640-8. [Google Scholar]

- Myneni, R.B.; Ramakrishna, R.; Nemani, R.; Running, S.W. Estimation of global leaf area index and absorbed par using radiative transfer models. IEEE Trans. Geosci. Remote Sens. 1997, 35, 1380–1393. [Google Scholar] [CrossRef]

- Padilla, M.; Stehman, S.; Litago, J.; Chuvieco, E. Assessing the Temporal Stability of the Accuracy of a Time Series of Burned Area Products. Remote Sens. 2014, 6, 2050–2068. [Google Scholar] [CrossRef]

- Fang, H.; Wei, S.; Jiang, C.; Scipal, K. Theoretical uncertainty analysis of global MODIS, CYCLOPES, and GLOBCARBON LAI products using a triple collocation method. Remote Sens. Environ. 2012, 124, 610–621. [Google Scholar] [CrossRef]

- Morisette, J.T.; Baret, F.; Privette, J.L.; Myneni, R.B.; Nickeson, J.E.; Garrigues, S.; Shabanov, N.V.; Weiss, M.; Fernandes, R.A.; Leblanc, S.G.; et al. Validation of global moderate-resolution LAI products. IEEE Trans. Geosci. Remote Sens. 2006, 44, 1804–1817. [Google Scholar] [CrossRef]

- Ge, Y.; Li, X.; Hu, M.G.; Wang, J.H.; Jin, R.; Wang, J.F.; Zhang, R.H. Technical Specifications for the Validation of Remote Sensing Products. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2013, XL-2/W1, 13–17. [Google Scholar] [CrossRef]

- McColl, K.A.; Vogelzang, J.; Konings, A.G.; Entekhabi, D.; Piles, M.; Stoffelen, A. Extended triple collocation: Estimating errors and correlation coefficients with respect to an unknown target: EXTENDED TRIPLE COLLOCATION. Geophys. Res. Lett. 2014, 41, 6229–6236. [Google Scholar] [CrossRef]

- Soto-Berelov, M.; Jones, S.; Farmer, E.; Woodgate, W. Review of Validation Standards of Earth Observation Derived Biophysical Products, 11th ed.; Held, A., Phinn, S., Soto-Berelov, M., Jones, S., Eds.; TERN AusCover: Canberra, Australia, 2015. [Google Scholar]

- Derksen, C.; Walker, A.; Goodison, B. A comparison of 18 winter seasons of in situ and passive microwave-derived snow water equivalent estimates in Western Canada. Remote Sens. Environ. 2003, 88, 271–282. [Google Scholar] [CrossRef]

- Liang, S.; Fang, H.; Chen, M.; Shuey, C.J.; Walthall, C.; Daughtry, C.; Morisette, J.; Schaaf, C.; Strahler, A. Validating MODIS land surface reflectance and albedo products: Methods and preliminary results. Remote Sens. Environ. 2002, 83, 149–162. [Google Scholar] [CrossRef]

- Xiao, Z.; Liang, S.; Wang, J.; Chen, P.; Yin, X.; Zhang, L.; Song, J. Use of General Regression Neural Networks for Generating the GLASS Leaf Area Index Product From Time-Series MODIS Surface Reflectance. IEEE Trans. Geosci. Remote Sens. 2014, 52, 209–223. [Google Scholar] [CrossRef]

- Weiss, M.; Baret, F.; Smith, G.J.; Jonckheere, I.; Coppin, P. Review of methods for in situ leaf area index (LAI) determination. Agric. For. Meteorol. 2004, 121, 37–53. [Google Scholar] [CrossRef]

- Djamai, N.; Fernandes, R.; Weiss, M.; McNairn, H.; Goïta, K. Validation of the Sentinel Simplified Level 2 Product Prototype Processor (SL2P) for mapping cropland biophysical variables using Sentinel-2/MSI and Landsat-8/OLI data. Remote Sens. Environ. 2019, 225, 416–430. [Google Scholar] [CrossRef]

- CEOS (Committee on Earth Observation Satellites). Cal/Val Portal Cal/Val Sites 2019. Available online: http://calvalportal.ceos.org/ (accessed on 16 July 2019).

- Gerstl, S.A.W. Physics concepts of optical and radar reflectance signatures A summary review. Int. J. Remote Sens. 1990, 11, 1109–1117. [Google Scholar] [CrossRef]

- Chuvieco, E.; Lizundia-Loiola, J.; Pettinari, M.L.; Ramo, R.; Padilla, M.; Tansey, K.; Mouillot, F.; Laurent, P.; Storm, T.; Heil, A.; et al. Generation and analysis of a new global burned area product based on MODIS 250 m reflectance bands and thermal anomalies. Earth Syst. Sci. Data 2018, 10, 2015–2031. [Google Scholar] [CrossRef]

- Higuchi, A.; Hiyama, T.; Fukuta, Y.; Suzuki, R.; Fukushima, Y. The behaviour of a surface temperature/vegetation index (TVX) matrix derived from 10-day composite AVHRR images over monsoon Asia. Hydrol. Process. 2007, 21, 1157–1166. [Google Scholar] [CrossRef]

- Jones, M.O.; Jones, L.A.; Kimball, J.S.; McDonald, K.C. Satellite passive microwave remote sensing for monitoring global land surface phenology. Remote Sens. Environ. 2011, 115, 1102–1114. [Google Scholar] [CrossRef]

- Rodell, M.; Chao, B.F.; Au, A.Y.; Kimball, J.S.; McDonald, K.C. Global Biomass Variation and Its Geodynamic Effects. Earth Interact. 2005, 9, 1–19. [Google Scholar] [CrossRef]

- Huete, A.; Didan, K.; Miura, T.; Rodriguez, E.P.; Gao, X.; Ferreira, L.G. Overview of the radiometric and biophysical performance of the MODIS vegetation indices. Remote Sens. Environ. 2002, 83, 195–213. [Google Scholar] [CrossRef]

- Cescatti, A.; Marcolla, B.; Santhana Vannan, S.K.; Pan, J.Y.; Román, M.O.; Yang, X.; Ciais, P.; Cook, R.B.; Law, B.E.; Matteucci, G.; et al. Intercomparison of MODIS albedo retrievals and in situ measurements across the global FLUXNET network. Remote Sens. Environ. 2012, 121, 323–334. [Google Scholar] [CrossRef]

- Humber, M.L.; Boschetti, L.; Giglio, L.; Justice, C.O. Spatial and temporal intercomparison of four global burned area products. Int. J. Digit. Earth 2019, 12, 460–484. [Google Scholar] [CrossRef]

- White, M.A.; Beurs, K.M.; Didan, K.; Inouye, D.W.; Richardson, A.D.; Jensen, O.P.; O’Keefe, J.; Zhang, G.; Nemani, R.R.; van Leeuwen, W.J.D.; et al. Intercomparison, interpretation, and assessment of spring phenology in North America estimated from remote sensing for 1982–2006. Glob. Chang. Biol. 2009, 15, 2335–2359. [Google Scholar] [CrossRef]

- Jia, K.; Yang, L.; Liang, S.; Xiao, Z.; Zhao, X.; Yao, Y.; Zhang, X.; Jiang, B.; Liu, D. Long-Term Global Land Surface Satellite (GLASS) Fractional Vegetation Cover Product Derived From MODIS and AVHRR Data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2019, 12, 508–518. [Google Scholar] [CrossRef]

- Ganguly, S.; Friedl, M.A.; Tan, B.; Zhang, X.; Verma, M. Land surface phenology from MODIS. Remote Sens. Environ. 2010, 114, 1805–1816. [Google Scholar] [CrossRef]

- Pekel, J.-F.; Vancutsem, C.; Bastin, L.; Clerici, M.; Vanbogaert, E.; Bartholomé, E.; Defourny, P. A near real-time water surface detection method based on HSV transformation of MODIS multi-spectral time series data. Remote Sens. Environ. 2014, 140, 704–716. [Google Scholar] [CrossRef]

- Gobron, N.; Pinty, B.; Mélin, F.; Taberner, M.; Verstraete, M.M.; Robustelli, M.; Widlowski, J.-L. Evaluation of the MERIS/ENVISAT FAPAR product. Adv. Space Res. 2007, 39, 105–115. [Google Scholar] [CrossRef]

- Padilla, M.; Stehman, S.V.; Ramo, R.; Corti, D.; Hantson, S.; Oliva, P.; Alonso-Canas, I.; Bradley, A.V.; Tansey, K.; Mota, B.; et al. Comparing the accuracies of remote sensing global burned area products using stratified random sampling and estimation. Remote Sens. Environ. 2015, 160, 114–121. [Google Scholar] [CrossRef]

- Potter, C.; Tan, P.-N.; Steinbach, M.; Klooster, S.; Kumar, V.; Myneni, R.; Genovese, V. Major disturbance events in terrestrial ecosystems detected using global satellite data sets. Glob. Chang. Biol. 2003, 9, 1005–1021. [Google Scholar] [CrossRef]

- Ramo, R.; García, M.; Rodríguez, D.; Chuvieco, E. A data mining approach for global burned area mapping. Int. J. Appl. Earth Obs. Geoinf. 2018, 73, 39–51. [Google Scholar] [CrossRef]

- Zhang, X.; Zhang, Q. Monitoring interannual variation in global crop yield using long-term AVHRR and MODIS observations. ISPRS J. Photogramm. Remote Sens. 2016, 114, 191–205. [Google Scholar] [CrossRef]

- Chen, F.; Crow, W.T.; Colliander, A.; Cosh, M.H.; Jackson, T.J.; Bindlish, R.; Reichle, R.H.; Chan, S.K.; Bosch, D.D.; Starks, P.J.; et al. Application of Triple Collocation in Ground-Based Validation of Soil Moisture Active/Passive (SMAP) Level 2 Data Products. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2017, 10, 489–502. [Google Scholar] [CrossRef]

- Chen, F.; Crow, W.T.; Bindlish, R.; Colliander, A.; Burgin, M.S.; Asanuma, J.; Aida, K. Global-scale evaluation of SMAP, SMOS and ASCAT soil moisture products using triple collocation. Remote Sens. Environ. 2018, 214, 1–13. [Google Scholar] [CrossRef]

- Fang, H.; Liang, S.; Townshend, J.; Dickinson, R. Spatially and temporally continuous LAI data sets based on an integrated filtering method. Remote Sens. Environ. 2008, 112, 75–93. [Google Scholar] [CrossRef]

- Marshall, M.; Okuto, E.; Kang, Y.; Opiyo, E.; Ahmed, M. Global assessment of Vegetation Index and Phenology Lab (VIP) and Global Inventory Modeling and Mapping Studies (GIMMS) version 3 products. Biogeosciences 2016, 13, 625–639. [Google Scholar] [CrossRef]

- Xiao, Z.; Liang, S.; Sun, R. Evaluation of Three Long Time Series for Global Fraction of Absorbed Photosynthetically Active Radiation (FAPAR) Products. IEEE Trans. Geosci. Remote Sens. 2018, 56, 5509–5524. [Google Scholar] [CrossRef]

- Xiao, Z.; Liang, S.; Jiang, B. Evaluation of four long time-series global leaf area index products. Agric. For. Meteorol. 2017, 246, 218–230. [Google Scholar] [CrossRef]

- Chuvieco, E.; Opazo, S.; Sione, W.; del Valle, H.; Anaya, J.; Di Bella, C.; Cruz, I.; Manzo, L.; López, G.; Mari, N.; et al. Global Burned Area Estimation in Latin America Using MODIS Composite Data. Ecol. Appl. 2008, 18, 64–79. [Google Scholar] [CrossRef]

- Sobrino, J.A.; Julien, Y. Global trends in NDVI-derived parameters obtained from GIMMS data. Int. J. Remote Sens. 2011, 32, 4267–4279. [Google Scholar] [CrossRef]

- Tarnavsky, E.; Garrigues, S.; Brown, M.E. Multiscale geostatistical analysis of AVHRR, SPOT-VGT, and MODIS global NDVI products. Remote Sens. Environ. 2008, 112, 535–549. [Google Scholar] [CrossRef]

- Kandasamy, S.; Baret, F.; Verger, A.; Neveux, P.; Weiss, M. A comparison of methods for smoothing and gap filling time series of remote sensing observations—Application to MODIS LAI products. Biogeosciences 2013, 10, 4055–4071. [Google Scholar] [CrossRef]

- Schwartz, M.D.; Hanes, J.M. Intercomparing multiple measures of the onset of spring in eastern North America. Int. J. Climatol. 2010, 30, 1614–1626. [Google Scholar] [CrossRef]

- Wang, Q.; Tenhunen, J.; Dinh, N.; Reichstein, M.; Otieno, D.; Granier, A.; Pilegarrd, K. Evaluation of seasonal variation of MODIS derived leaf area index at two European deciduous broadleaf forest sites. Remote Sens. Environ. 2005, 96, 475–484. [Google Scholar] [CrossRef]

- Forkel, M.; Migliavacca, M.; Thonicke, K.; Reichstein, M.; Schaphoff, S.; Weber, U.; Carvalhais, N. Codominant water control on global interannual variability and trends in land surface phenology and greenness. Glob. Chang. Biol. 2015, 21, 3414–3435. [Google Scholar] [CrossRef]

- Wu, C.; Peng, D.; Soudani, K.; Siebicke, L.; Gough, C.M.; Arain, M.A.; Bohrer, G.; Lafleur, P.M.; Peichl, M.; Gonsamo, A.; et al. Land surface phenology derived from normalized difference vegetation index (NDVI) at global FLUXNET sites. Agric. For. Meteorol. 2017, 233, 171–182. [Google Scholar] [CrossRef]

- Gruber, A.; Su, C.-H.; Zwieback, S.; Crow, W.; Dorigo, W.; Wagner, W. Recent advances in (soil moisture) triple collocation analysis. Int. J. Appl. Earth Obs. Geoinf. 2016, 45, 200–211. [Google Scholar] [CrossRef]

- Jones, M.O.; Kimball, J.S.; Jones, L.A.; McDonald, K.C. Satellite passive microwave detection of North America start of season. Remote Sens. Environ. 2012, 123, 324–333. [Google Scholar] [CrossRef]

- Kim, Y.; Kimball, J.S.; McDonald, K.C.; Glassy, J. Developing a Global Data Record of Daily Landscape Freeze/Thaw Status Using Satellite Passive Microwave Remote Sensing. IEEE Trans. Geosci. Remote Sens. 2011, 49, 949–960. [Google Scholar] [CrossRef]

- Papa, F.; Prigent, C.; Aires, F.; Jimenez, C.; Rossow, W.B.; Matthews, E. Interannual variability of surface water extent at the global scale, 1993–2004. J. Geophys. Res. 2010, 115, 1147.

- Zwieback, S.; Paulik, C.; Wagner, W. Frozen Soil Detection Based on Advanced Scatterometer Observations and Air Temperature Data as Part of Soil Moisture Retrieval. Remote Sens. 2015, 7, 3206–3231.

- De Keersmaecker, W.; Lhermitte, S.; Honnay, O.; Farifteh, J.; Somers, B.; Coppin, P. How to measure ecosystem stability? An evaluation of the reliability of stability metrics based on remote sensing time series across the major global ecosystems. Glob. Chang. Biol. 2014, 20, 2149–2161.

- Al-Yaari, A.; Wigneron, J.-P.; Ducharne, A.; Kerr, Y.H.; Wagner, W.; De Lannoy, G.; Reichle, R.; Al Bitar, A.; Dorigo, W.; Richaume, P.; et al. Global-scale comparison of passive (SMOS) and active (ASCAT) satellite based microwave soil moisture retrievals with soil moisture simulations (MERRA-Land). Remote Sens. Environ. 2014, 152, 614–626.

- Brown, M.E.; Lary, D.J.; Vrieling, A.; Stathakis, D.; Mussa, H. Neural networks as a tool for constructing continuous NDVI time series from AVHRR and MODIS. Int. J. Remote Sens. 2008, 29, 7141–7158.

- Bruscantini, C.A.; Konings, A.G.; Narvekar, P.S.; McColl, K.A.; Entekhabi, D.; Grings, F.M.; Karszenbaum, H. L-Band Radar Soil Moisture Retrieval Without Ancillary Information. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2015, 8, 5526–5540.

- Cheng, J.; Liang, S.; Yao, Y.; Ren, B.; Shi, L.; Liu, H. A Comparative Study of Three Land Surface Broadband Emissivity Datasets from Satellite Data. Remote Sens. 2013, 6, 111–134.

- Fontana, F.M.A.; Coops, N.C.; Khlopenkov, K.V.; Trishchenko, A.P.; Riffler, M.; Wulder, M.A. Generation of a novel 1km NDVI data set over Canada, the northern United States, and Greenland based on historical AVHRR data. Remote Sens. Environ. 2012, 121, 171–185.

- Franch, B.; Vermote, E.; Roger, J.-C.; Murphy, E.; Becker-Reshef, I.; Justice, C.; Claverie, M.; Nagol, J.; Csiszar, I.; Meyer, D.; et al. A 30+ Year AVHRR Land Surface Reflectance Climate Data Record and Its Application to Wheat Yield Monitoring. Remote Sens. 2017, 9, 296.

- Köhler, P.; Guanter, L.; Frankenberg, C. Simplified physically based retrieval of sun-induced chlorophyll fluorescence from GOSAT data. IEEE Geosci. Remote Sens. Lett. 2015, 12, 1446–1450.

- Pan, N.; Feng, X.; Fu, B.; Wang, S.; Ji, F.; Pan, S. Increasing global vegetation browning hidden in overall vegetation greening: Insights from time-varying trends. Remote Sens. Environ. 2018, 214, 59–72.

- Bojanowski, J.S.; Vrieling, A.; Skidmore, A.K. A comparison of data sources for creating a long-term time series of daily gridded solar radiation for Europe. Sol. Energy 2014, 99, 152–171.

- Jia, A.; Liang, S.; Jiang, B.; Zhang, X.; Wang, G. Comprehensive Assessment of Global Surface Net Radiation Products and Uncertainty Analysis. J. Geophys. Res. Atmos. 2018, 123, 1970–1989.

- Piles, M.; Ballabrera-Poy, J.; Muñoz-Sabater, J. Dominant Features of Global Surface Soil Moisture Variability Observed by the SMOS Satellite. Remote Sens. 2019, 11, 95.

- Albergel, C.; Dorigo, W.; Reichle, R.H.; Balsamo, G.; de Rosnay, P.; Muñoz-Sabater, J.; Isaksen, L.; de Jeu, R.; Wagner, W. Skill and Global Trend Analysis of Soil Moisture from Reanalyses and Microwave Remote Sensing. J. Hydrometeorol. 2013, 14, 1259–1277.

- Gruber, A.; Dorigo, W.A.; Crow, W.; Wagner, W. Triple Collocation-Based Merging of Satellite Soil Moisture Retrievals. IEEE Trans. Geosci. Remote Sens. 2017, 55, 6780–6792.

- Suzuki, R.; Masuda, K.; Dye, D.G. Interannual covariability between actual evapotranspiration and PAL and GIMMS NDVIs of northern Asia. Remote Sens. Environ. 2007, 106, 387–398.

- Müller, R.; Pfeifroth, U.; Träger-Chatterjee, C.; Trentmann, J.; Cremer, R. Digging the METEOSAT Treasure—3 Decades of Solar Surface Radiation. Remote Sens. 2015, 7, 8067–8101.

- Munier, S.; Carrer, D.; Planque, C.; Camacho, F.; Albergel, C.; Calvet, J.-C. Satellite Leaf Area Index: Global Scale Analysis of the Tendencies Per Vegetation Type Over the Last 17 Years. Remote Sens. 2018, 10, 424.

- Paulik, C.; Dorigo, W.; Wagner, W.; Kidd, R. Validation of the ASCAT Soil Water Index using in situ data from the International Soil Moisture Network. Int. J. Appl. Earth Obs. Geoinf. 2014, 30, 1–8.

- Jia, K.; Liang, S.; Liu, S.; Li, Y.; Xiao, Z.; Yao, Y.; Jiang, B.; Zhao, X.; Wang, X.; Xu, S.; et al. Global Land Surface Fractional Vegetation Cover Estimation Using General Regression Neural Networks From MODIS Surface Reflectance. IEEE Trans. Geosci. Remote Sens. 2015, 53, 4787–4796.

- Padilla, M.; Stehman, S.V.; Chuvieco, E. Validation of the 2008 MODIS-MCD45 global burned area product using stratified random sampling. Remote Sens. Environ. 2014, 144, 187–196.

- Klein, I.; Gessner, U.; Dietz, A.J.; Kuenzer, C. Global WaterPack—A 250 m resolution dataset revealing the daily dynamics of global inland water bodies. Remote Sens. Environ. 2017, 198, 345–362.

- Dietz, A.J.; Kuenzer, C.; Dech, S. Global SnowPack. Remote Sens. Lett. 2015, 6, 844–853.

- Kim, Y.; Kimball, J.S.; Zhang, K.; McDonald, K.C. Satellite detection of increasing Northern Hemisphere non-frozen seasons from 1979 to 2008. Remote Sens. Environ. 2012, 121, 472–487.

- Zhang, X.; Friedl, M.A.; Schaaf, C.B. Global vegetation phenology from Moderate Resolution Imaging Spectroradiometer (MODIS): Evaluation of global patterns and comparison with in situ measurements. J. Geophys. Res. 2006, 111, G04017.

- Hu, G.; Jia, L.; Menenti, M. Comparison of MOD16 and LSA-SAF MSG evapotranspiration products over Europe for 2011. Remote Sens. Environ. 2015, 156, 510–526.

- Mu, X.; Song, W.; Gao, Z.; McVicar, T.R.; Donohue, R.J.; Yan, G. Fractional vegetation cover estimation by using multi-angle vegetation index. Remote Sens. Environ. 2018, 216, 44–56.

- Zhou, J.; Jia, L.; Menenti, M. Reconstruction of global MODIS NDVI time series. Remote Sens. Environ. 2015, 163, 217–228.

- Kumar, S.V.; Dirmeyer, P.A.; Peters-Lidard, C.D.; Bindlish, R.; Bolten, J. Information theoretic evaluation of satellite soil moisture retrievals. Remote Sens. Environ. 2018, 204, 392–400.

- Rodríguez-Fernández, N.; Kerr, Y.; van der Schalie, R.; Al-Yaari, A.; Wigneron, J.-P.; de Jeu, R.; Richaume, P.; Dutra, E.; Mialon, A.; Drusch, M. Long Term Global Surface Soil Moisture Fields Using an SMOS-Trained Neural Network Applied to AMSR-E Data. Remote Sens. 2016, 8, 959.

- Sichangi, A.W.; Wang, L.; Yang, K.; Chen, D.; Wang, Z.; Li, X.; Zhou, J.; Liu, W.; Kuria, D. Estimating continental river basin discharges using multiple remote sensing data sets. Remote Sens. Environ. 2016, 179, 36–53.

- Fang, H.; Wei, S.; Liang, S. Validation of MODIS and CYCLOPES LAI products using global field measurement data. Remote Sens. Environ. 2012, 119, 43–54.

- Liu, J.; Li, Z.; Huang, L.; Tian, B. Hemispheric-scale comparison of monthly passive microwave snow water equivalent products. J. Appl. Remote Sens. 2014, 8, 084688.

- Liu, Q.; Wang, L.; Qu, Y.; Liu, N.; Liu, S.; Tang, H.; Liang, S. Preliminary evaluation of the long-term GLASS albedo product. Int. J. Digit. Earth 2013, 6, 69–95.

- Tum, M.; Günther, K.; Böttcher, M.; Baret, F.; Bittner, M.; Brockmann, C.; Weiss, M. Global Gap-Free MERIS LAI Time Series (2002–2012). Remote Sens. 2016, 8, 69.

- Wu, D.; Wu, H.; Zhao, X.; Zhou, T.; Tang, B.; Zhao, W.; Jia, K. Evaluation of Spatiotemporal Variations of Global Fractional Vegetation Cover Based on GIMMS NDVI Data from 1982 to 2011. Remote Sens. 2014, 6, 4217–4239.

- Entekhabi, D.; Reichle, R.H.; Koster, R.D.; Crow, W.T. Performance Metrics for Soil Moisture Retrievals and Application Requirements. J. Hydrometeorol. 2010, 11, 832–840.

- Stanski, H.R.; Wilson, L.; Burrows, W. Survey of Common Verification Methods in Meteorology; World Meteorological Organisation: Geneva, Switzerland, 1990; Volume 8.

- Janssen, P.A.E.M.; Abdalla, S.; Hersbach, H.; Bidlot, J.-R. Error Estimation of Buoy, Satellite, and Model Wave Height Data. J. Atmos. Ocean. Technol. 2007, 24, 1665–1677.

- Boschetti, L.; Roy, D.P.; Justice, C.O. International Global Burned Area Satellite Product Validation Protocol Part I—Production and Standardization of Validation Reference Data (to be Followed by Part II—Accuracy Reporting); Committee on Earth Observation Satellites: Maryland, MD, USA, 2009; pp. 1–11.

- Baret, F.; Morisette, J.T.; Fernandes, R.A.; Champeaux, J.L.; Myneni, R.B.; Chen, J.; Plummer, S.; Weiss, M.; Bacour, C.; Garrigues, S.; et al. Evaluation of the representativeness of networks of sites for the global validation and intercomparison of land biophysical products: Proposition of the CEOS-BELMANIP. IEEE Trans. Geosci. Remote Sens. 2006, 44, 1794–1803.

- Garrigues, S.; Lacaze, R.; Baret, F.; Morisette, J.T.; Weiss, M.; Nickeson, J.E.; Fernandes, R.; Plummer, S.; Shabanov, N.V.; Myneni, R.B.; et al. Validation and intercomparison of global Leaf Area Index products derived from remote sensing data. J. Geophys. Res. 2008, 113.

- Tan, B.; Woodcock, C.E.; Hu, J.; Zhang, P.; Ozdogan, M.; Huang, D.; Yang, W.; Knyazikhin, Y.; Myneni, R.B. The impact of gridding artifacts on the local spatial properties of MODIS data: Implications for validation, compositing, and band-to-band registration across resolutions. Remote Sens. Environ. 2006, 105, 98–114.

- Hufkens, K.; Friedl, M.; Sonnentag, O.; Braswell, B.H.; Milliman, T.; Richardson, A.D. Linking near-surface and satellite remote sensing measurements of deciduous broadleaf forest phenology. Remote Sens. Environ. 2012, 117, 307–321.

- Verbesselt, J.; Hyndman, R.; Newnham, G.; Culvenor, D. Detecting trend and seasonal changes in satellite image time series. Remote Sens. Environ. 2010, 114, 106–115.

- See, L.; Mooney, P.; Foody, G.; Bastin, L.; Comber, A.; Estima, J.; Fritz, S.; Kerle, N.; Jiang, B.; Laakso, M.; et al. Crowdsourcing, Citizen Science or Volunteered Geographic Information? The Current State of Crowdsourced Geographic Information. ISPRS Int. J. Geo-Inf. 2016, 5, 55.

- Wulder, M.A.; Coops, N.C.; Roy, D.P.; White, J.C.; Hermosilla, T. Land cover 2.0. Int. J. Remote Sens. 2018, 39, 4254–4284.

- Houborg, R.; McCabe, M.F. A Cubesat enabled Spatio-Temporal Enhancement Method (CESTEM) utilizing Planet, Landsat and MODIS data. Remote Sens. Environ. 2018, 209, 211–226.

- Peter, B.G.; Messina, J.P. Errors in Time-Series Remote Sensing and an Open Access Application for Detecting and Visualizing Spatial Data Outliers Using Google Earth Engine. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2019, 12, 1165–1174.

| Validation Method | Advantages | Disadvantages |
|---|---|---|
| Comparison | Direct validation; physical uncertainties; assessment of quantitative errors | Need for independent acquisitions; complex spatiotemporal matching of validation data |
| Accuracy assessment | Adapted for spatial data; thematic map comparison with corresponding metrics; well established in remote sensing | Precise definition of classes necessary¹ |
| Intercomparison | Simplified spatiotemporal approximation of other data products | Indirect validation; only relative error metrics |
| Internal validation | Spatiotemporal match of validation data; directly accessible validation data; synthetic assessments well suited for interpolation methods | Theoretical uncertainties; validation outcomes dependent on product properties |
| Temporal evaluation | Straightforward comparison of extensive datasets | Vulnerability to continuity distortions, weak seasonality, and insufficient temporal sampling; validity only in terms of timing |
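The "Comparison" and "Accuracy assessment" rows rely on a small set of conventional metrics: bias, RMSE, and R² for continuous variables, and overall, user's, and producer's accuracy for thematic maps. The following is a minimal Python sketch of these metrics, not the procedure of any reviewed study. It assumes that product and reference values have already been matched in space and time, and the function names `continuous_metrics` and `accuracy_metrics` are hypothetical. R² is taken as the squared Pearson correlation, which is one common convention. The unbiased RMSE follows the bias-removed form discussed for soil moisture by Entekhabi et al. (2010). The accuracy function is unweighted, so it only suits simple random samples; stratified designs such as the one used by Padilla et al. (2014) require area-weighted estimators.

```python
import math
from collections import Counter
from typing import Dict, Sequence


def continuous_metrics(product: Sequence[float], reference: Sequence[float]) -> Dict[str, float]:
    """Bias, RMSE, unbiased RMSE, Pearson r and R^2 for matched value pairs.

    Assumption: both series are already matched in space and time
    (same length and order) and contain no missing values.
    """
    n = len(product)
    if n != len(reference) or n < 2:
        raise ValueError("expected two matched series of equal length >= 2")
    diffs = [p - r for p, r in zip(product, reference)]
    bias = sum(diffs) / n                            # mean error (product minus reference)
    rmse = math.sqrt(sum(d * d for d in diffs) / n)  # root-mean-square error
    # Unbiased RMSE removes the mean bias: ubRMSE^2 = RMSE^2 - bias^2
    ubrmse = math.sqrt(max(rmse ** 2 - bias ** 2, 0.0))
    mean_p = sum(product) / n
    mean_r = sum(reference) / n
    cov = sum((p - mean_p) * (r - mean_r) for p, r in zip(product, reference))
    ss_p = sum((p - mean_p) ** 2 for p in product)
    ss_r = sum((r - mean_r) ** 2 for r in reference)
    # Pearson correlation is undefined if either series is constant
    r = cov / math.sqrt(ss_p * ss_r) if ss_p > 0 and ss_r > 0 else float("nan")
    return {"bias": bias, "rmse": rmse, "ubrmse": ubrmse, "r": r, "r2": r * r}


def accuracy_metrics(mapped: Sequence[str], reference: Sequence[str]) -> dict:
    """Overall, user's and producer's accuracy from paired class labels.

    Unweighted estimates: only appropriate for simple random sampling.
    """
    n = len(mapped)
    if n == 0 or n != len(reference):
        raise ValueError("expected two non-empty label sequences of equal length")
    correct = Counter(m for m, r in zip(mapped, reference) if m == r)
    mapped_totals = Counter(mapped)        # samples per class in the product
    reference_totals = Counter(reference)  # samples per class in the reference
    return {
        "overall": sum(correct.values()) / n,
        # user's accuracy: share of samples mapped as class c that are truly c
        "users": {c: correct[c] / k for c, k in mapped_totals.items()},
        # producer's accuracy: share of reference samples of class c mapped as c
        "producers": {c: correct[c] / k for c, k in reference_totals.items()},
    }
```

For example, with mapped labels `["water", "water", "land", "land"]` and reference labels `["water", "land", "land", "land"]`, the overall accuracy is 3/4 = 0.75, the user's accuracy for water is 0.5, and the producer's accuracy for land is 2/3. A product that is offset from the reference by a constant 0.1 yields a bias and RMSE of about 0.1, an unbiased RMSE near zero, and r close to 1. This illustrates why RMSE and R² alone do not separate systematic from random error.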

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Mayr, S.; Kuenzer, C.; Gessner, U.; Klein, I.; Rutzinger, M. Validation of Earth Observation Time-Series: A Review for Large-Area and Temporally Dense Land Surface Products. Remote Sens. 2019, 11, 2616. https://doi.org/10.3390/rs11222616
