Life Signs Detector Using a Drone in Disaster Zones

Abstract

1. Introduction

2. Methods and Materials

2.1. Human Ethics Considerations

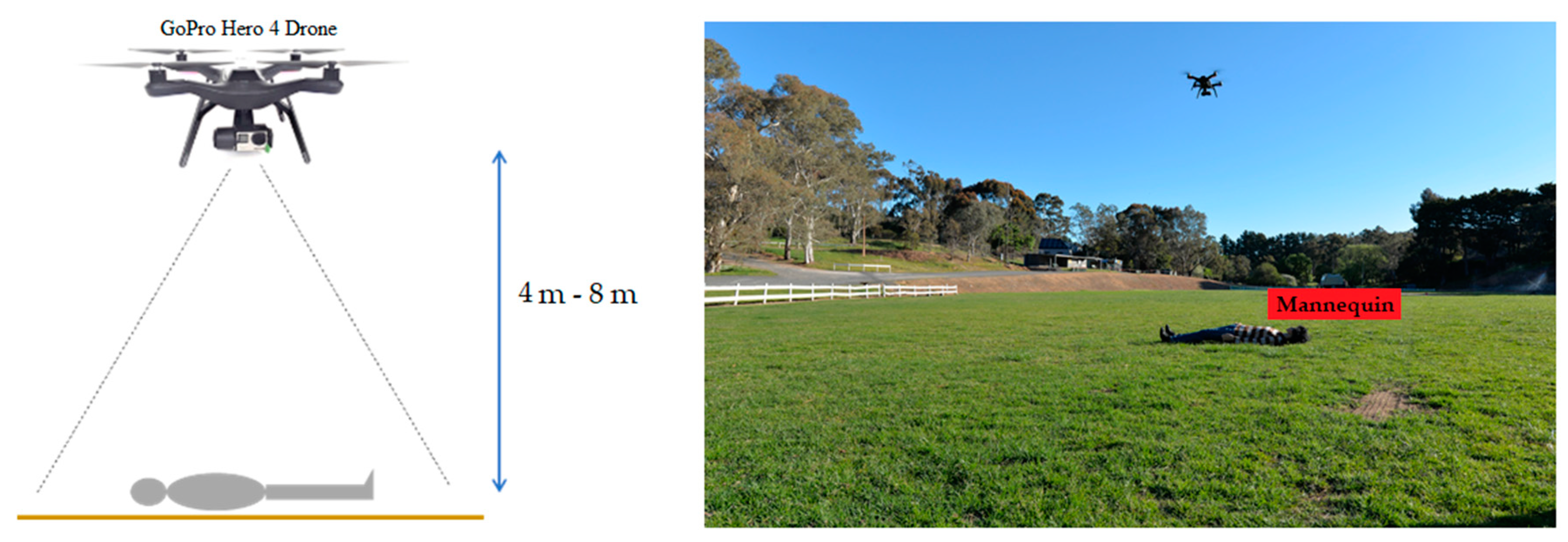

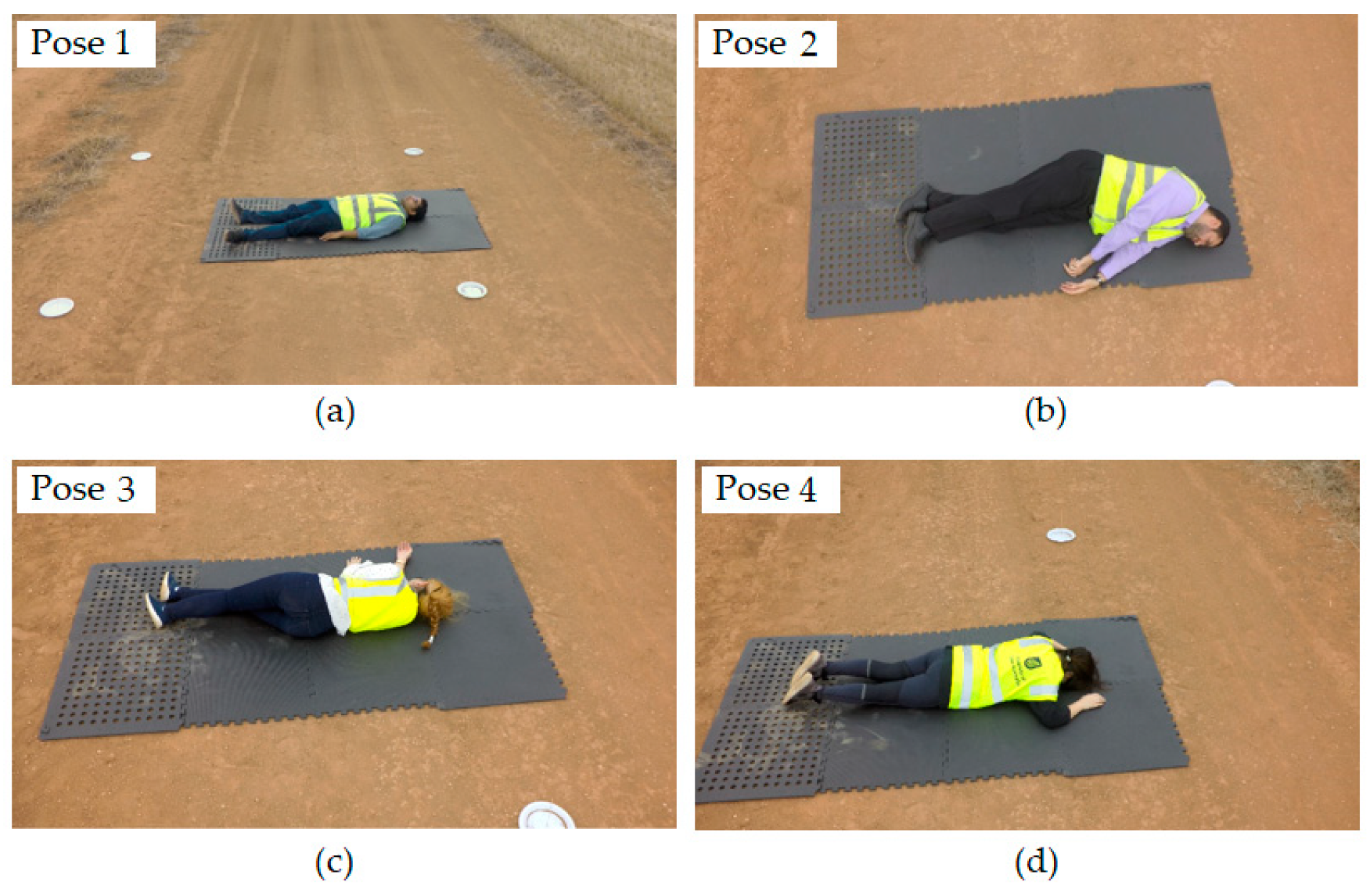

2.2. Experimental Setup and Data Acquisition

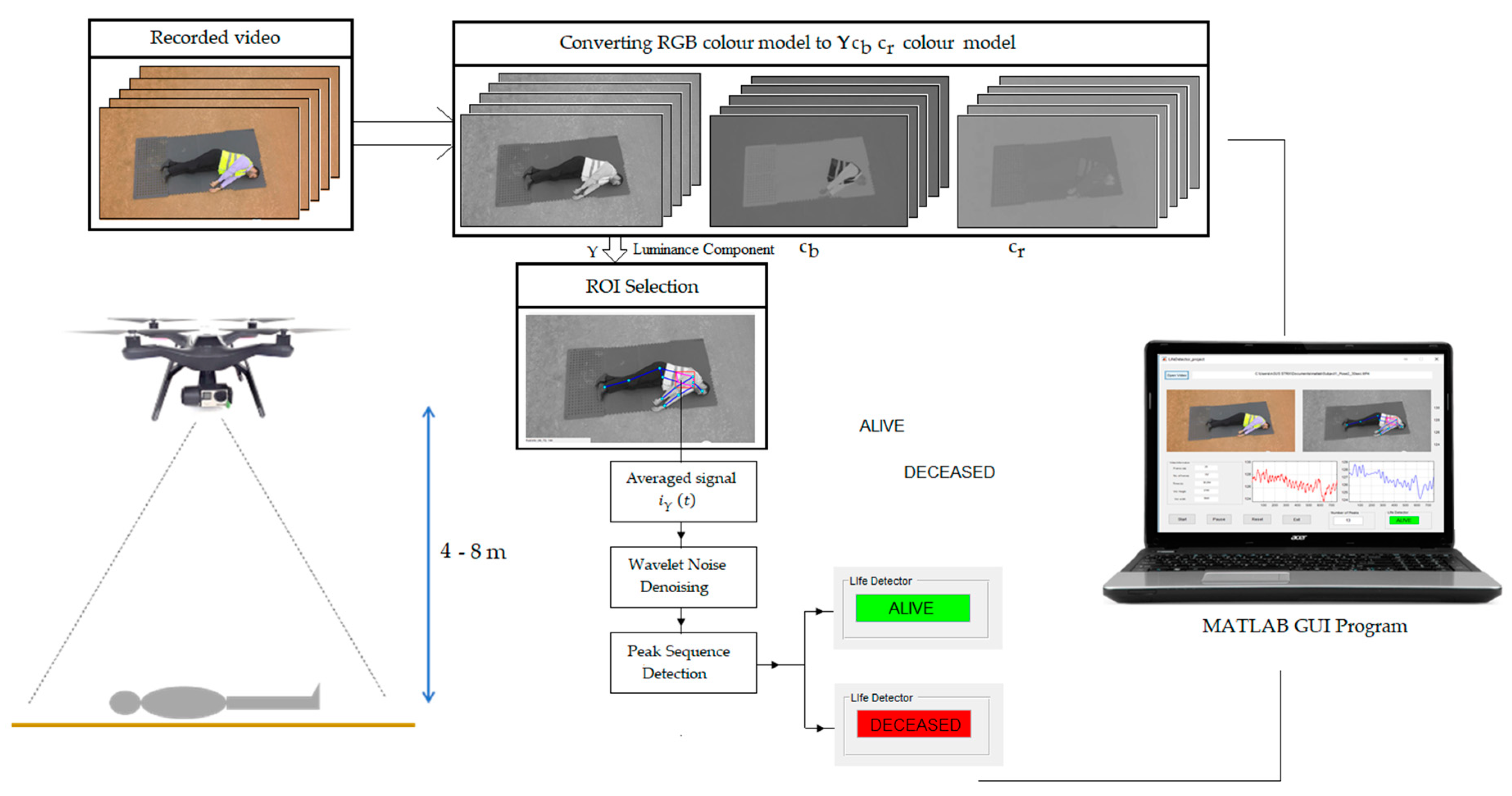

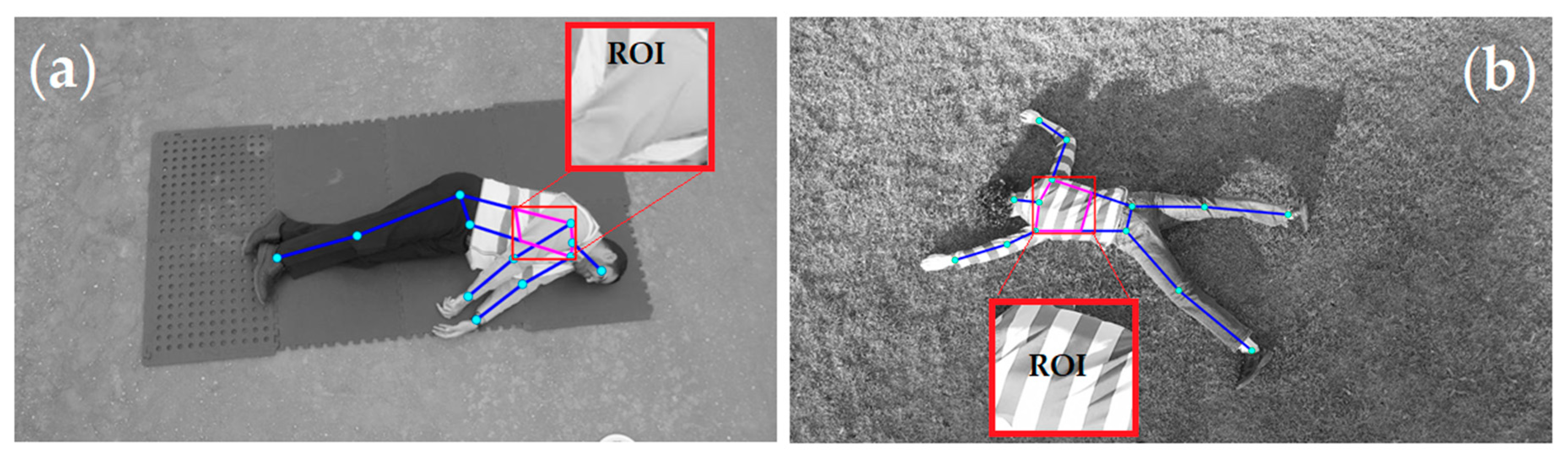

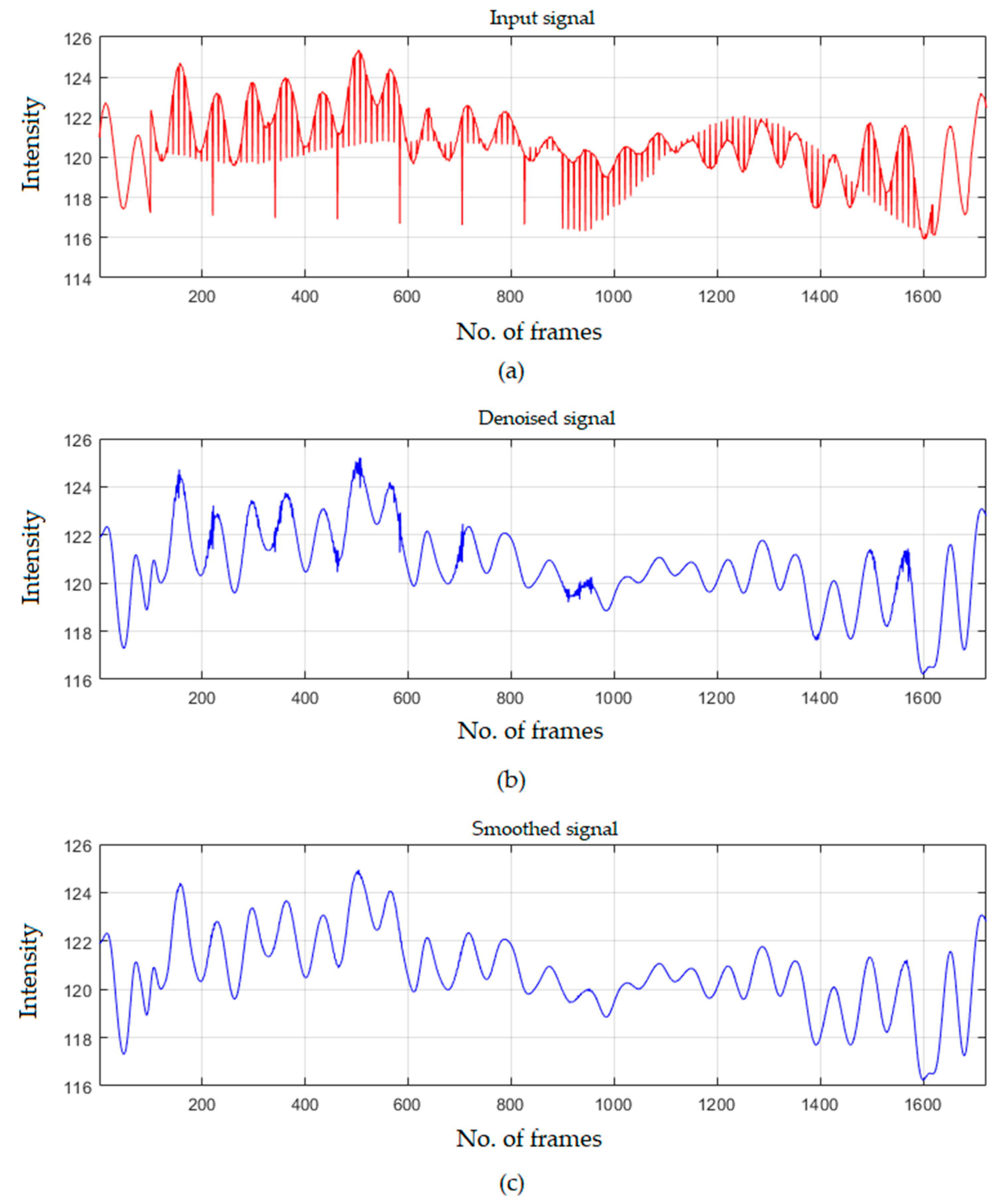

2.3. System Framework and Data Analysis

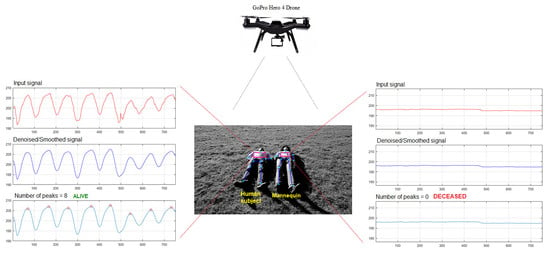

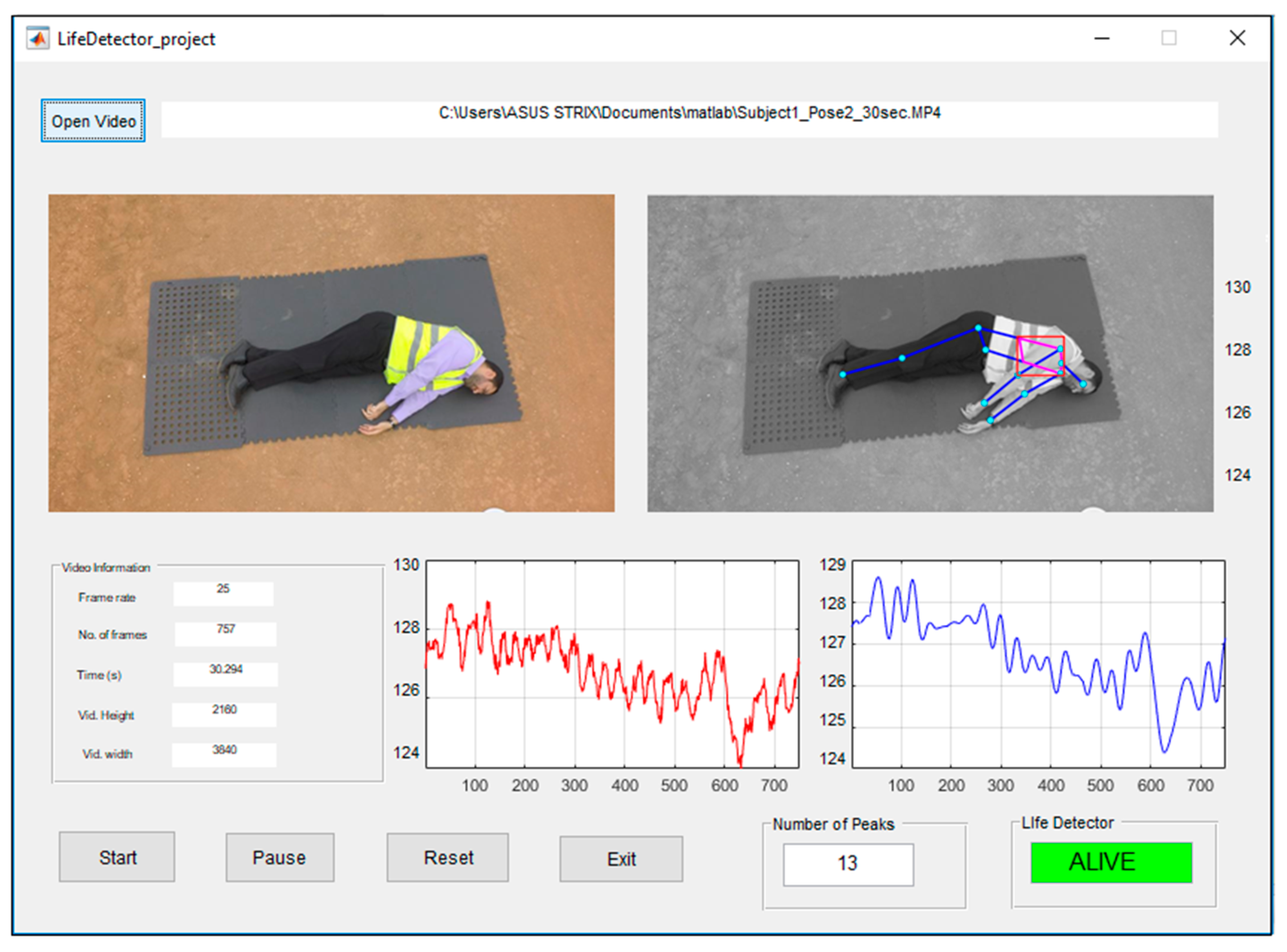

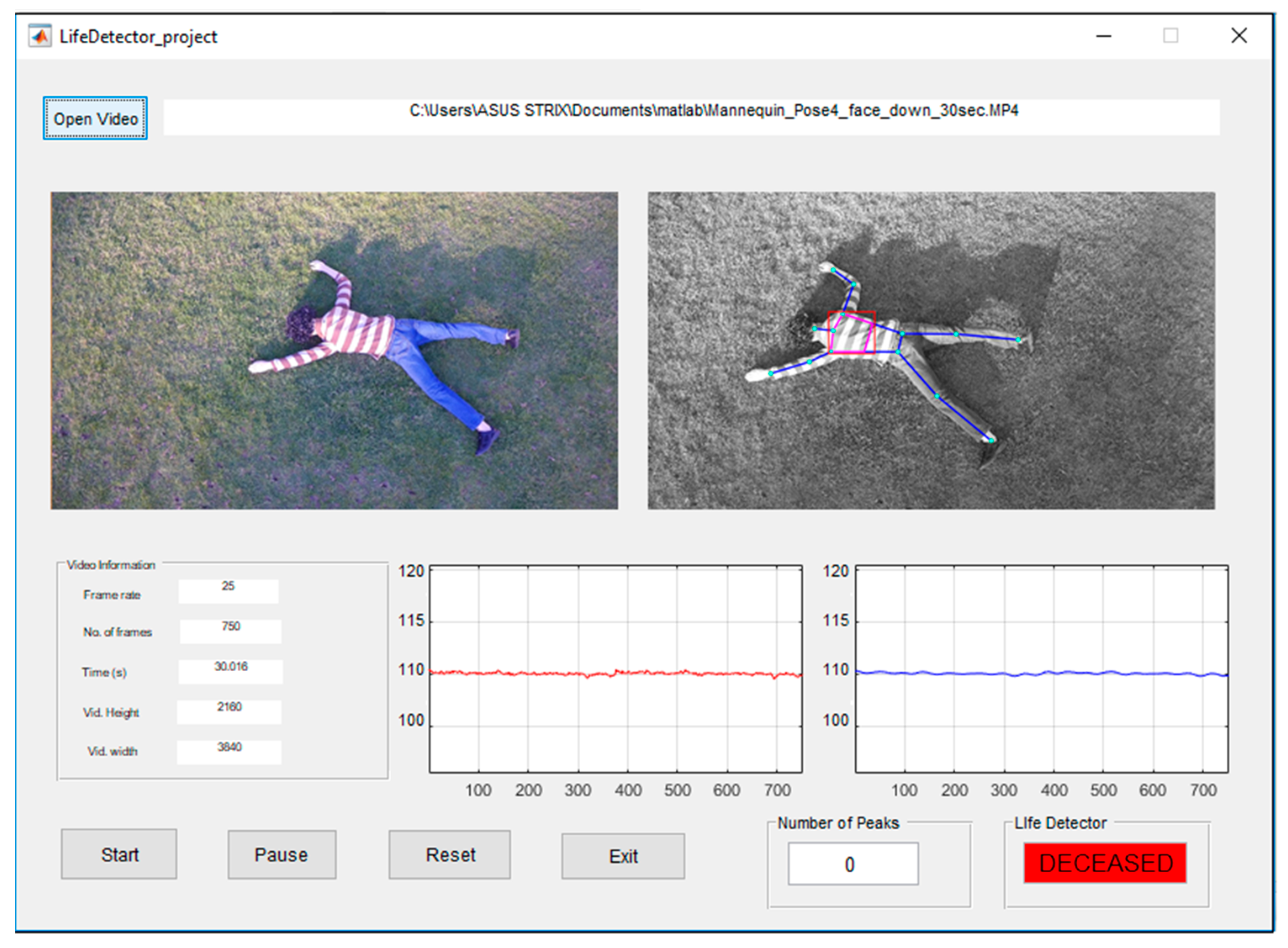

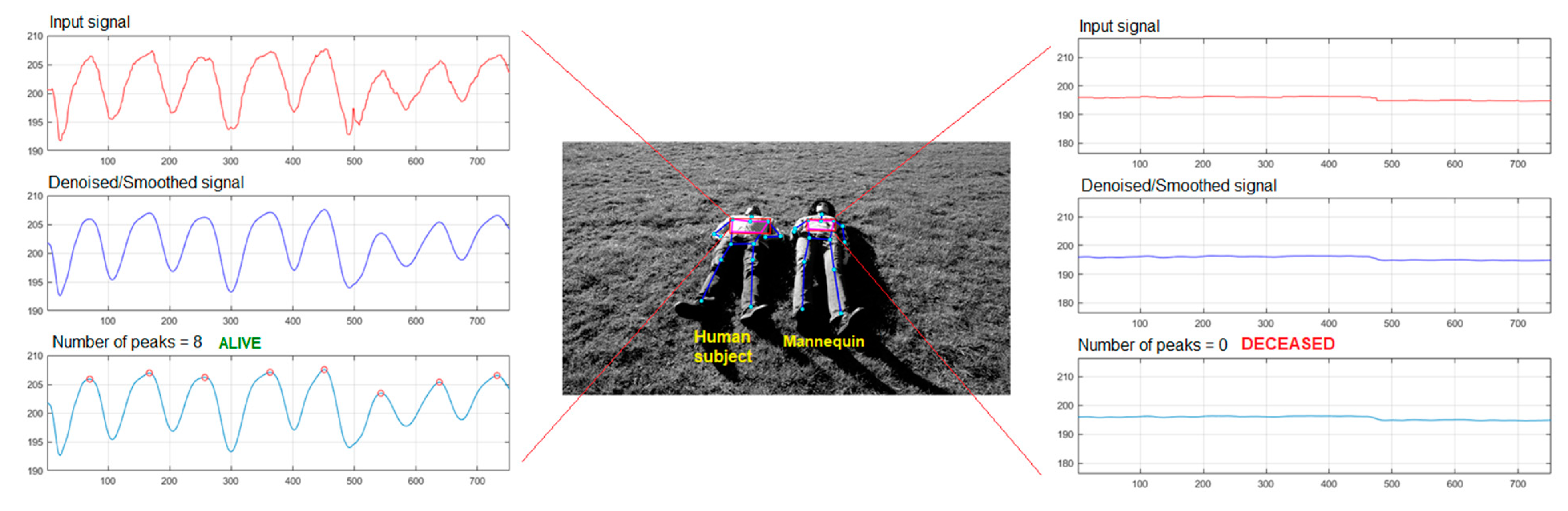

3. Experimentation and Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Subject | Pose | No. of Peaks | Life Signs Detector: Alive | Life Signs Detector: Deceased |
|---|---|---|---|---|
| Subject 1 | Pose 1 | 13 | ✓ | |
| | Pose 2 | 13 | ✓ | |
| | Pose 3 | 12 | ✓ | |
| | Pose 4 | 12 | ✓ | |
| Subject 2 | Pose 1 | 9 | ✓ | |
| | Pose 2 | 9 | ✓ | |
| | Pose 3 | 9 | ✓ | |
| | Pose 4 | 9 | ✓ | |
| Subject 3 | Pose 1 | 11 | ✓ | |
| | Pose 2 | 11 | ✓ | |
| | Pose 3 | 11 | ✓ | |
| | Pose 4 | 12 | ✓ | |
| Subject 4 | Pose 1 | 11 | ✓ | |
| | Pose 2 | 10 | ✓ | |
| | Pose 3 | 11 | ✓ | |
| | Pose 4 | 11 | ✓ | |
| Subject 5 | Pose 1 | 8 | ✓ | |
| | Pose 2 | 8 | ✓ | |
| | Pose 3 | 8 | ✓ | |
| | Pose 4 | 8 | ✓ | |
| Subject 6 | Pose 1 | 7 | ✓ | |
| | Pose 2 | 8 | ✓ | |
| | Pose 3 | 8 | ✓ | |
| | Pose 4 | 8 | ✓ | |
| Subject 7 | Pose 1 | 11 | ✓ | |
| | Pose 2 | 10 | ✓ | |
| | Pose 3 | 10 | ✓ | |
| | Pose 4 | 11 | ✓ | |
| Subject 8 | Pose 1 | 10 | ✓ | |
| | Pose 2 | 10 | ✓ | |
| | Pose 3 | 10 | ✓ | |
| | Pose 4 | 9 | ✓ | |
| Mannequin | Pose 1 | 1 | | ✓ |
| | Pose 2 | 1 | | ✓ |
| | Pose 3 | 0 | | ✓ |
| | Pose 4 | 0 | | ✓ |
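
The table above reports, for each subject and pose, the number of peaks counted and whether the system labelled the subject alive or deceased. This section does not state how the peaks are detected or which decision threshold is applied, so what follows is only a minimal sketch under stated assumptions: the input is assumed to be a one-dimensional, periodic chest-motion (breathing) signal sampled from video; peaks are counted as simple local maxima above a height threshold; and `count_peaks`, `classify`, `min_height`, and `min_peaks` are hypothetical names, not the authors' code. The default `min_peaks=4` is an arbitrary assumption that happens to separate the counts in the table (7 to 13 peaks for the human subjects, 0 or 1 for the mannequin).

```python
import math
from typing import Sequence


def count_peaks(signal: Sequence[float], min_height: float) -> int:
    """Count local maxima of the mean-removed signal that reach min_height.

    A sample counts as a peak when it is strictly higher than the previous
    sample and at least as high as the next one, so a flat-topped peak is
    counted only once.
    """
    if len(signal) < 3:
        return 0
    mean = sum(signal) / len(signal)
    x = [v - mean for v in signal]  # remove the DC offset
    peaks = 0
    for i in range(1, len(x) - 1):
        if x[i - 1] < x[i] >= x[i + 1] and x[i] >= min_height:
            peaks += 1
    return peaks


def classify(peak_count: int, min_peaks: int = 4) -> str:
    """Map a peak count to an ALIVE/DECEASED label.

    ASSUMPTION: min_peaks=4 is a hypothetical threshold, not the paper's value.
    """
    return "ALIVE" if peak_count >= min_peaks else "DECEASED"


if __name__ == "__main__":
    fps, seconds, breaths_per_second = 30, 40, 0.25
    n_samples = fps * seconds
    # Synthetic stand-in for a breathing subject: 10 full cycles in 40 s.
    breathing = [math.sin(2 * math.pi * breaths_per_second * n / fps)
                 for n in range(n_samples)]
    # Synthetic stand-in for a motionless body: low-amplitude jitter only.
    still = [0.02 * math.sin(n) for n in range(n_samples)]

    for name, sig in (("breathing", breathing), ("still", still)):
        peaks = count_peaks(sig, min_height=0.5)
        print(name, peaks, classify(peaks))
    # breathing 10 ALIVE
    # still 0 DECEASED
```

The synthetic 0.25 Hz sine sampled at 30 frames/s stands in for a breathing subject only to exercise the code; a real system would likely need filtering of the video-derived signal and a threshold tuned on data, which this sketch does not attempt.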

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Al-Naji, A.; Perera, A.G.; Mohammed, S.L.; Chahl, J. Life Signs Detector Using a Drone in Disaster Zones. Remote Sens. 2019, 11, 2441. https://doi.org/10.3390/rs11202441
