Radiometric Calibration of ‘Commercial off the Shelf’ Cameras for UAV-Based High-Resolution Temporal Crop Phenotyping of Reflectance and NDVI

Abstract

1. Introduction

- Develop a method for full radiometric calibration of COTS camera imagery, including new methods for exposure normalisation and for adjusting each individual image for incoming solar irradiance.

- Quantitatively assess the influence of the radiometric calibration steps and the final quality of the derived reflectance and NDVI datasets.

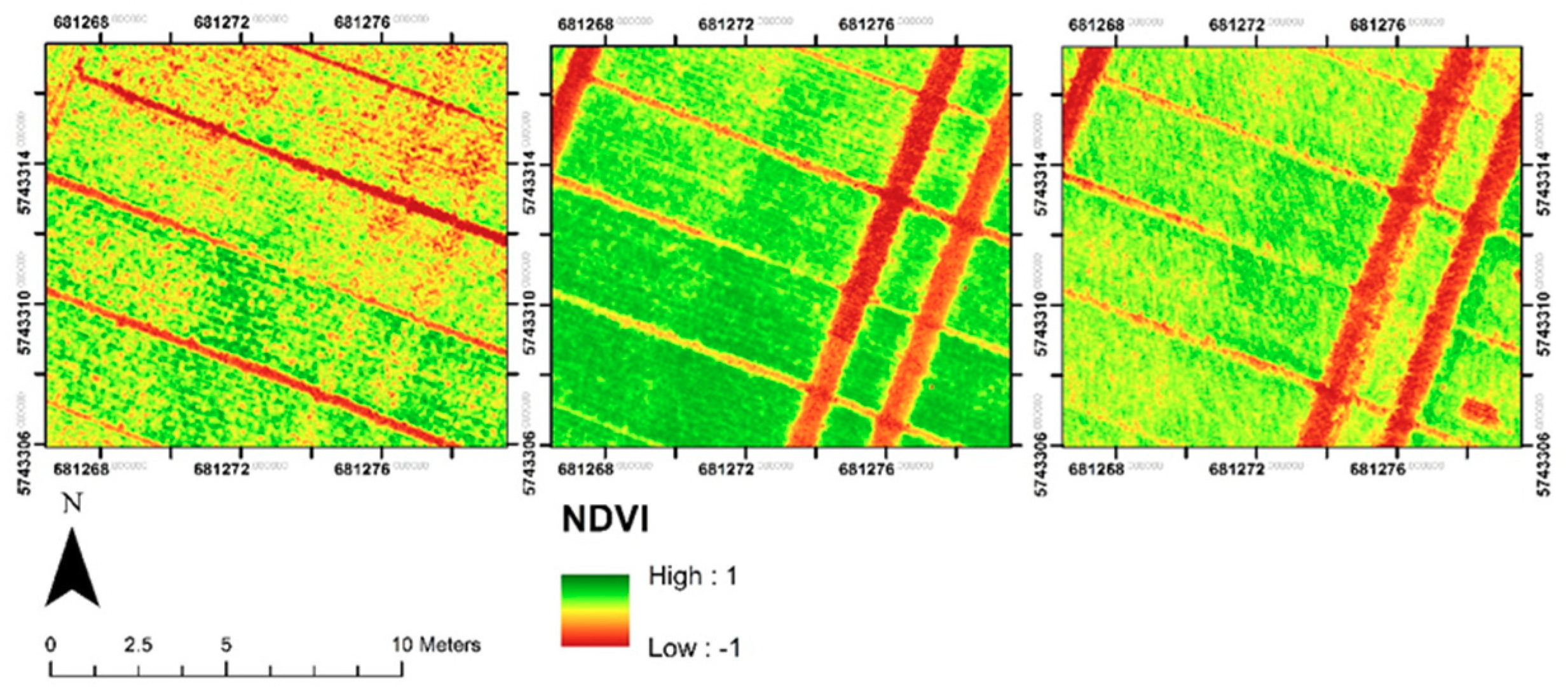

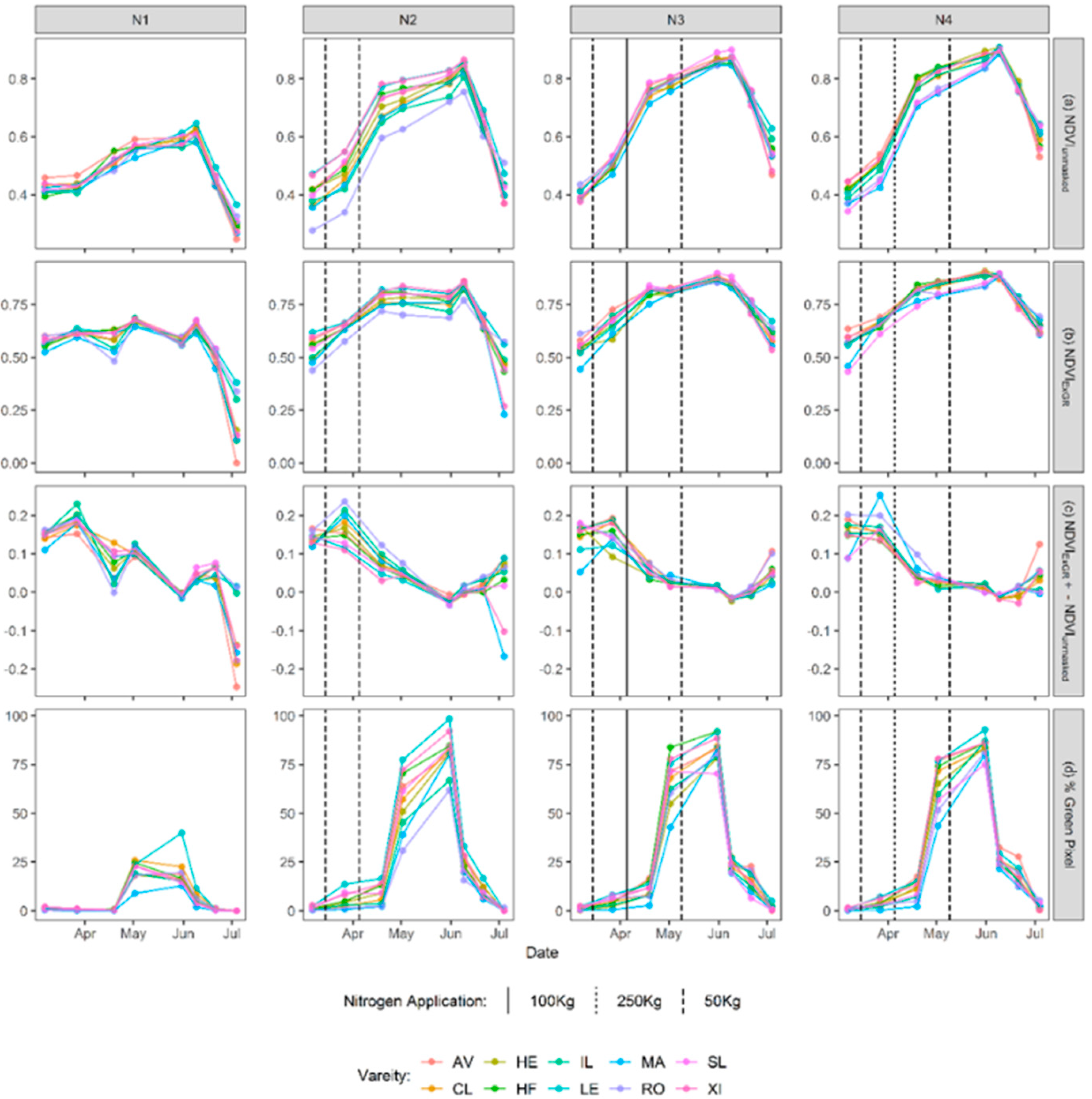

- Utilise the very high-resolution maps derived from the UAV imagery to analyse the influence of canopy cover on NDVI trends for a field-based wheat crop trial.

2. Materials and Methods

2.1. Field Site

2.2. UAV Imagery

2.3. Validation Data

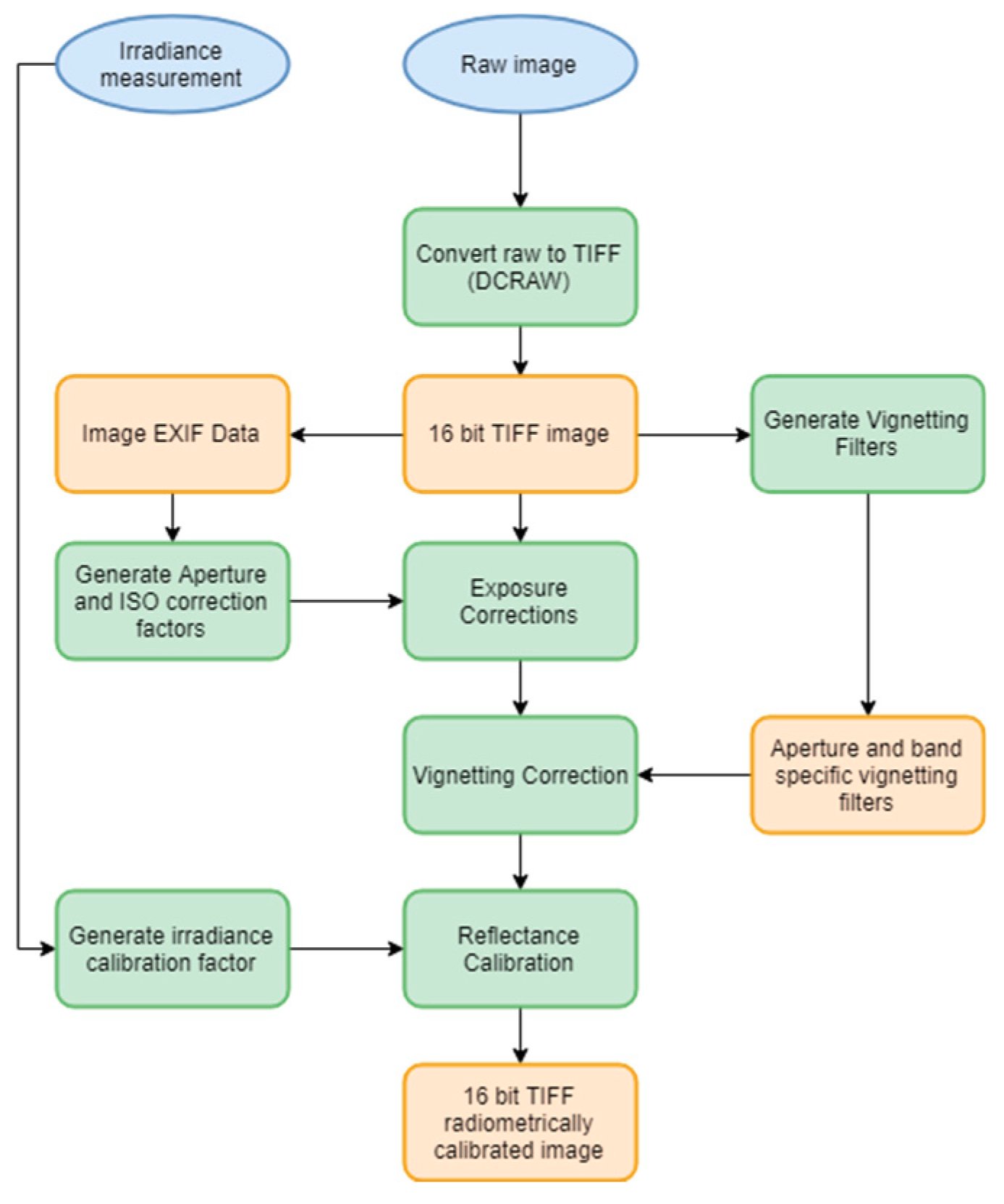

2.4. Post-Processing of Captured Imagery

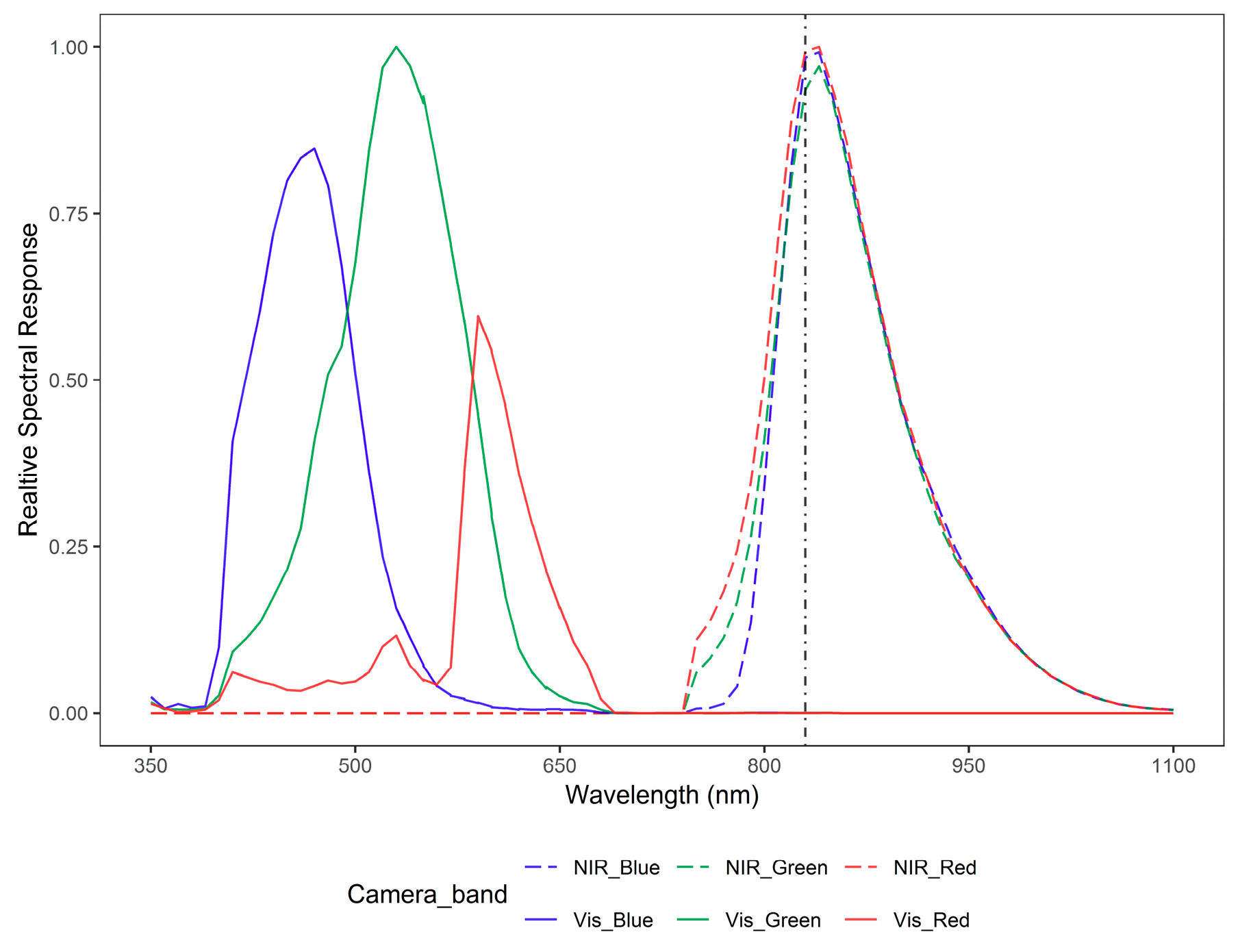

2.4.1. Relative Spectral Response

2.4.2. RAW Conversion

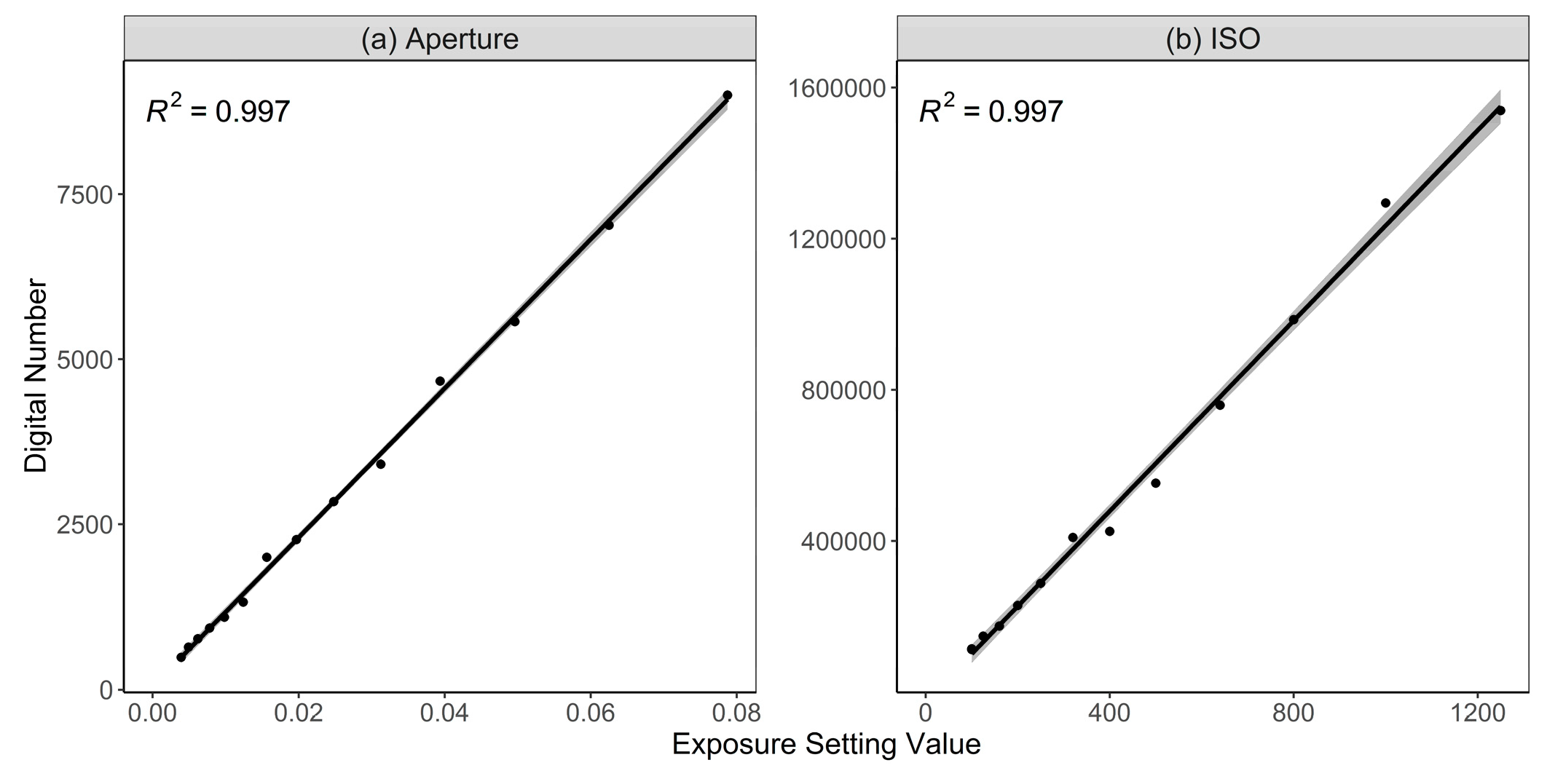

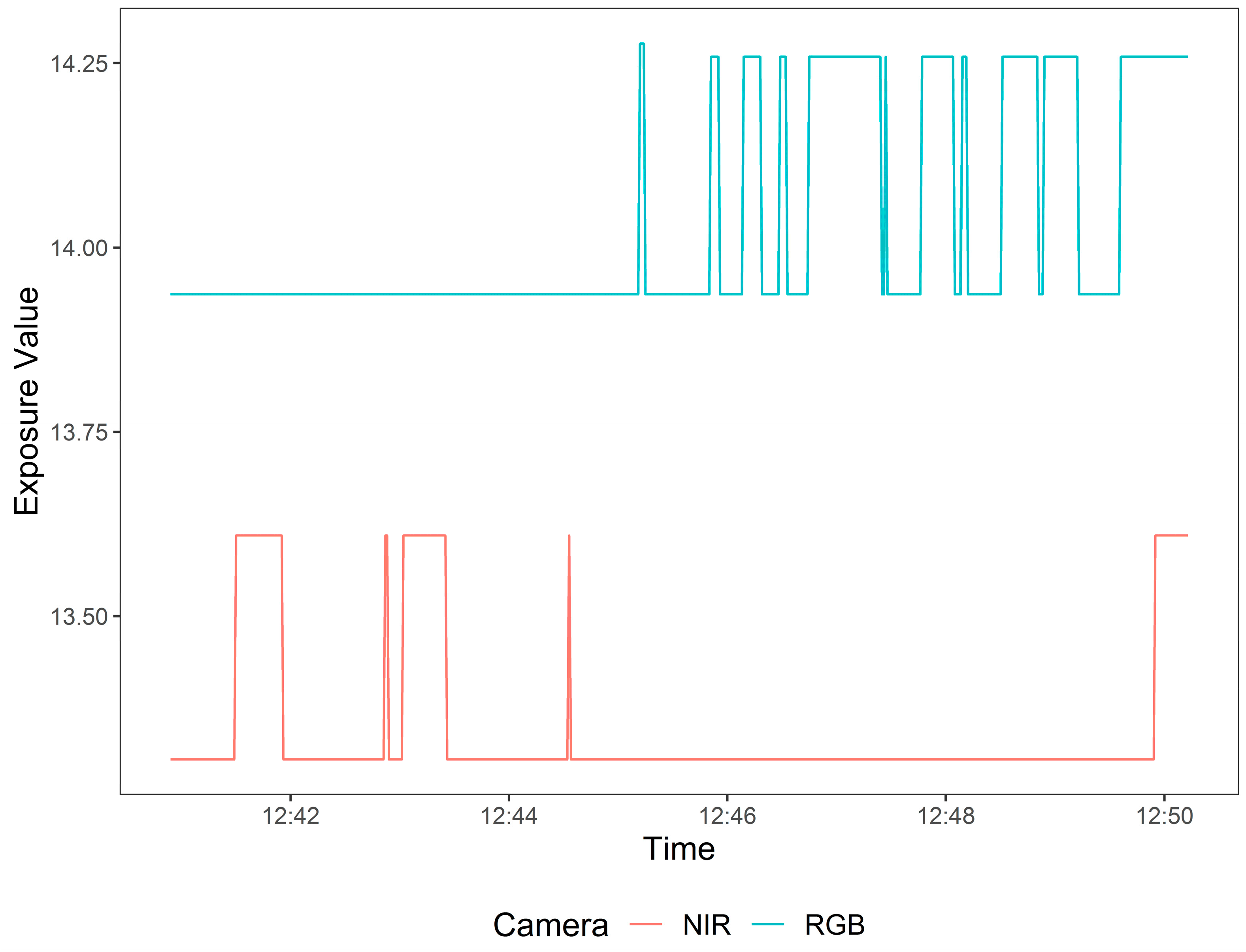

2.4.3. Exposure Corrections

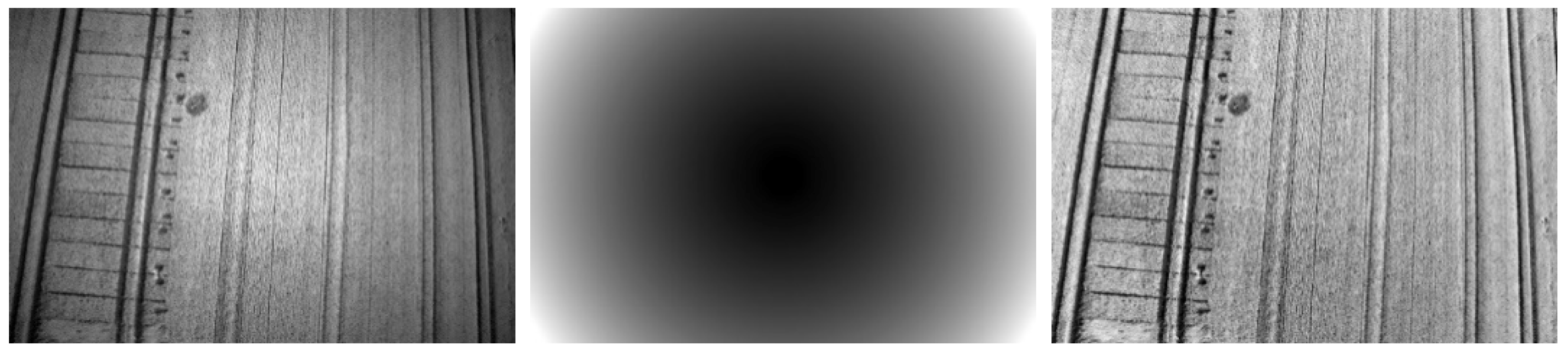

2.4.4. Vignetting Correction

- Images with matching camera, band and aperture settings were averaged.

- The radial vignetting profile of the averaged image was modelled using the median of evenly spaced concentric rings.

- A second-degree polynomial was fitted to the ring medians to interpolate the vignetting profile.

- The interpolated values were then divided by the minimum value to produce a multiplicative correction factor which brightened the corners.

- Each concentric ring was assigned the correction factor corresponding to its distance from the image centre to produce the final vignetting filter (Figure 4, middle).
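The steps listed above can be sketched in NumPy as follows. This is a minimal illustration rather than the authors' implementation: the function name `vignetting_filter`, the ring count `n_rings`, and the use of `np.polyfit` are assumptions. In particular, the sketch normalises the fitted profile to its maximum and inverts it so that the factor is 1 at the brightest ring and greater than 1 towards the corners; the exact normalisation used in the study may differ.

```python
import numpy as np


def vignetting_filter(images, n_rings=50, degree=2):
    """Build a multiplicative vignetting filter from same-sized 2D images.

    `images` is assumed to hold single-band frames taken with the same
    camera, band and aperture settings (hypothetical input format).
    """
    # Average the matching images.
    mean_img = np.mean(np.stack(images).astype(np.float64), axis=0)

    # Distance of every pixel from the image centre.
    h, w = mean_img.shape
    yy, xx = np.indices((h, w))
    r = np.hypot(yy - (h - 1) / 2.0, xx - (w - 1) / 2.0)

    # Assign each pixel to one of n_rings evenly spaced concentric rings.
    edges = np.linspace(0.0, r.max(), n_rings + 1)
    ring_idx = np.clip(np.digitize(r, edges) - 1, 0, n_rings - 1)
    centres = 0.5 * (edges[:-1] + edges[1:])

    # Median brightness of each ring (rings without pixels are skipped).
    occupied = np.array([np.any(ring_idx == i) for i in range(n_rings)])
    medians = np.array(
        [np.median(mean_img[ring_idx == i]) for i in np.flatnonzero(occupied)]
    )

    # Second-degree polynomial fitted to the ring medians.
    coeffs = np.polyfit(centres[occupied], medians, degree)
    fitted = np.polyval(coeffs, centres)

    # ASSUMPTION: normalise so the brightest ring has a factor of 1 and
    # darker (outer) rings receive a factor above 1.
    gain = fitted.max() / fitted

    # Give every pixel the factor of the ring it belongs to.
    return gain[ring_idx]
```

Multiplying an image by the returned array brightens the corners relative to the centre. Because every pixel in a ring shares one factor, the filter is stepped rather than smooth; more rings give finer steps at the cost of noisier medians.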

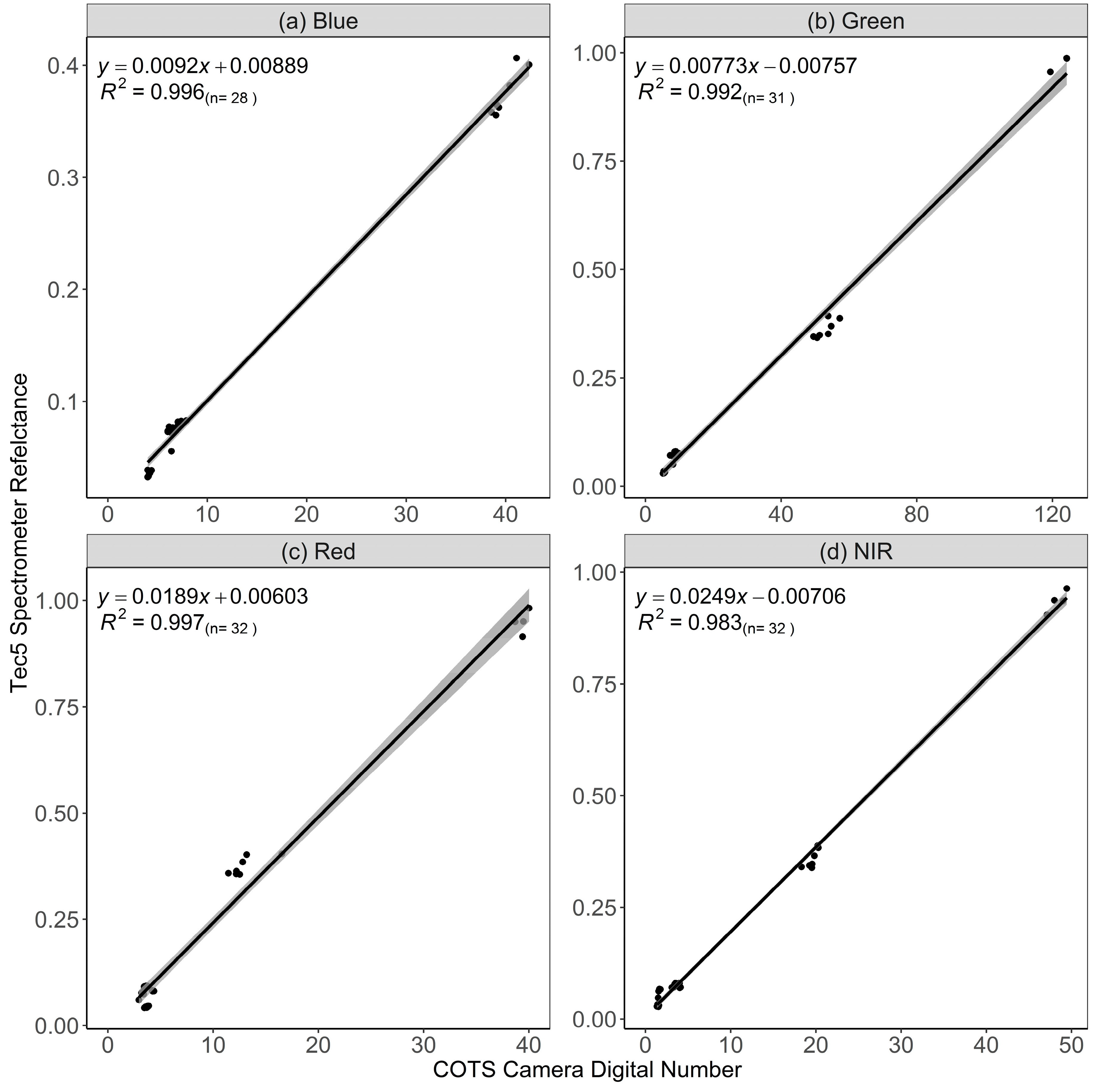

2.4.5. Cross Calibration Factor and Reflectance Calibration

2.4.6. Orthomosaic Generation

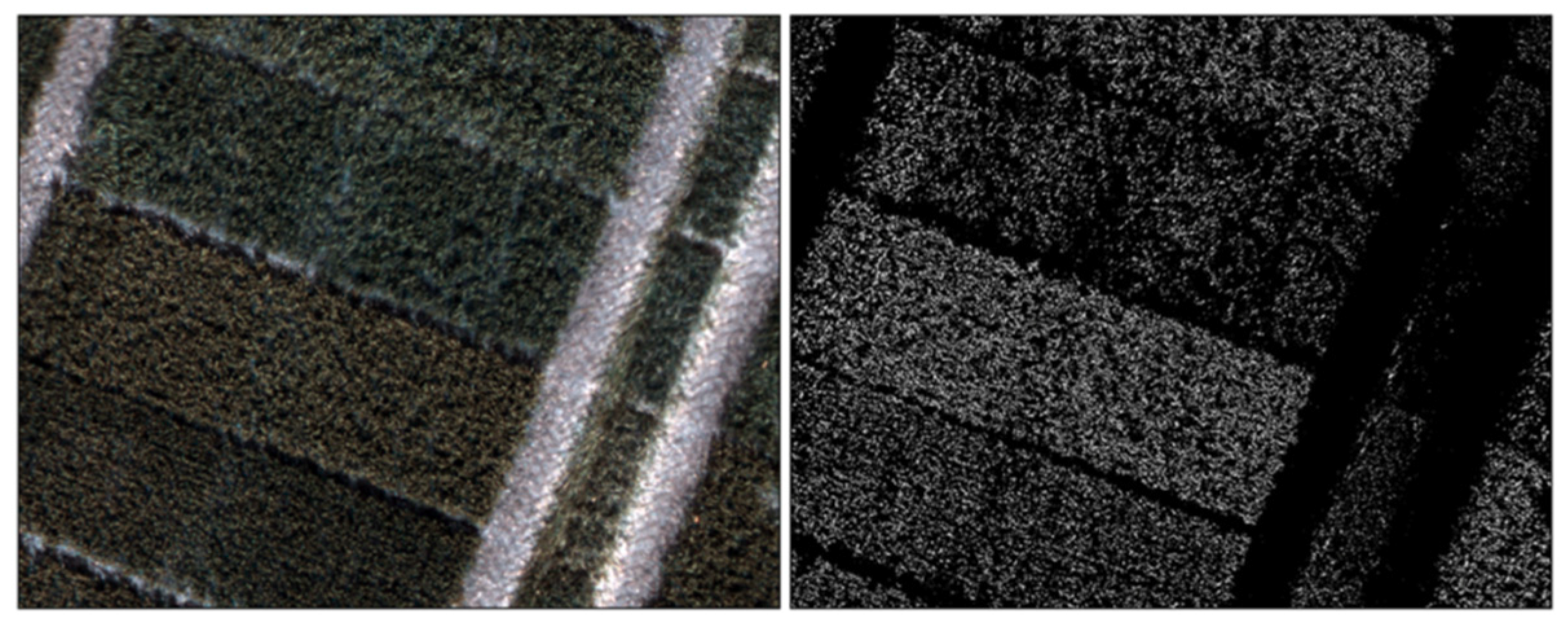

2.5. Canopy Masking

3. Results

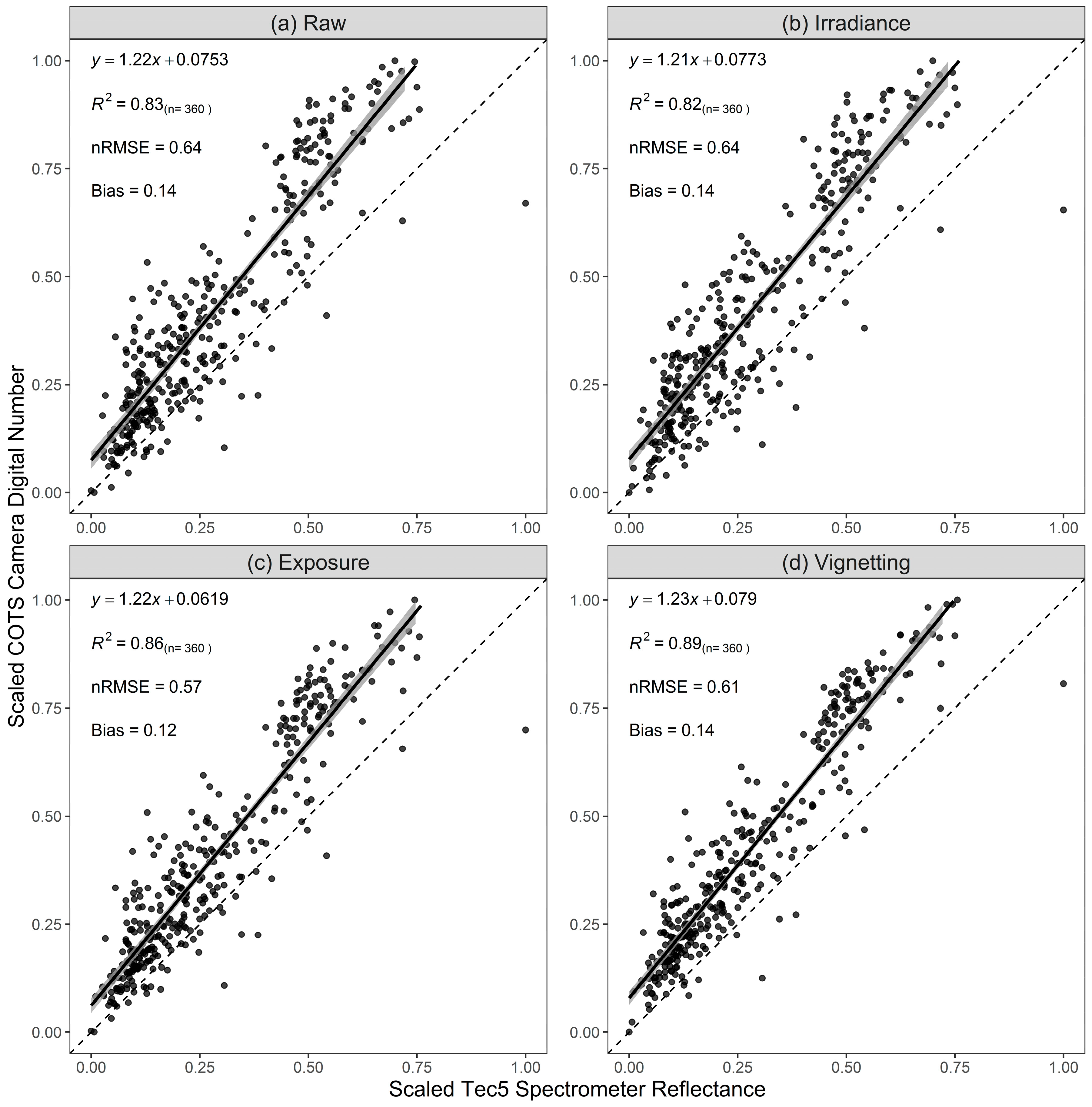

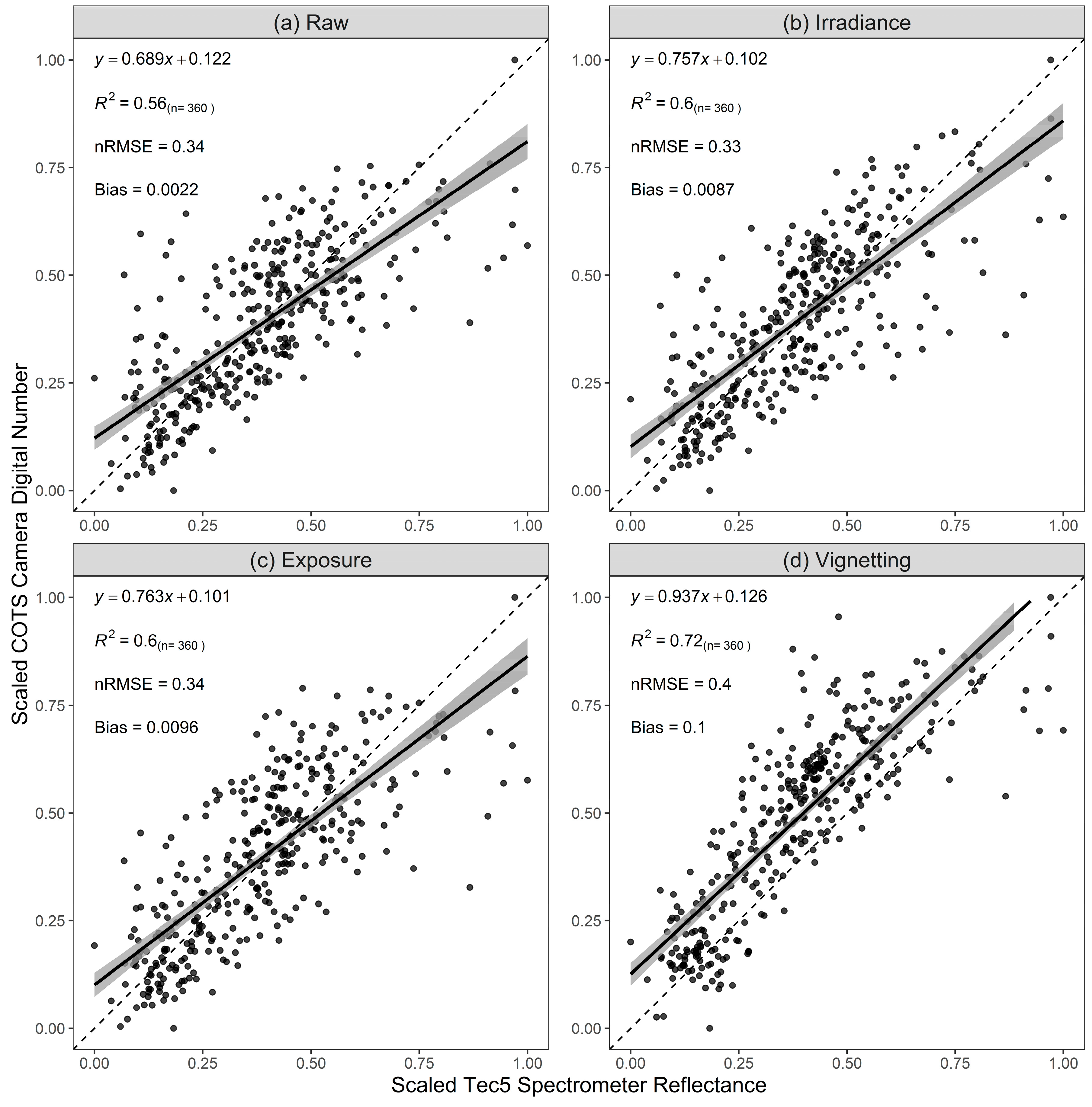

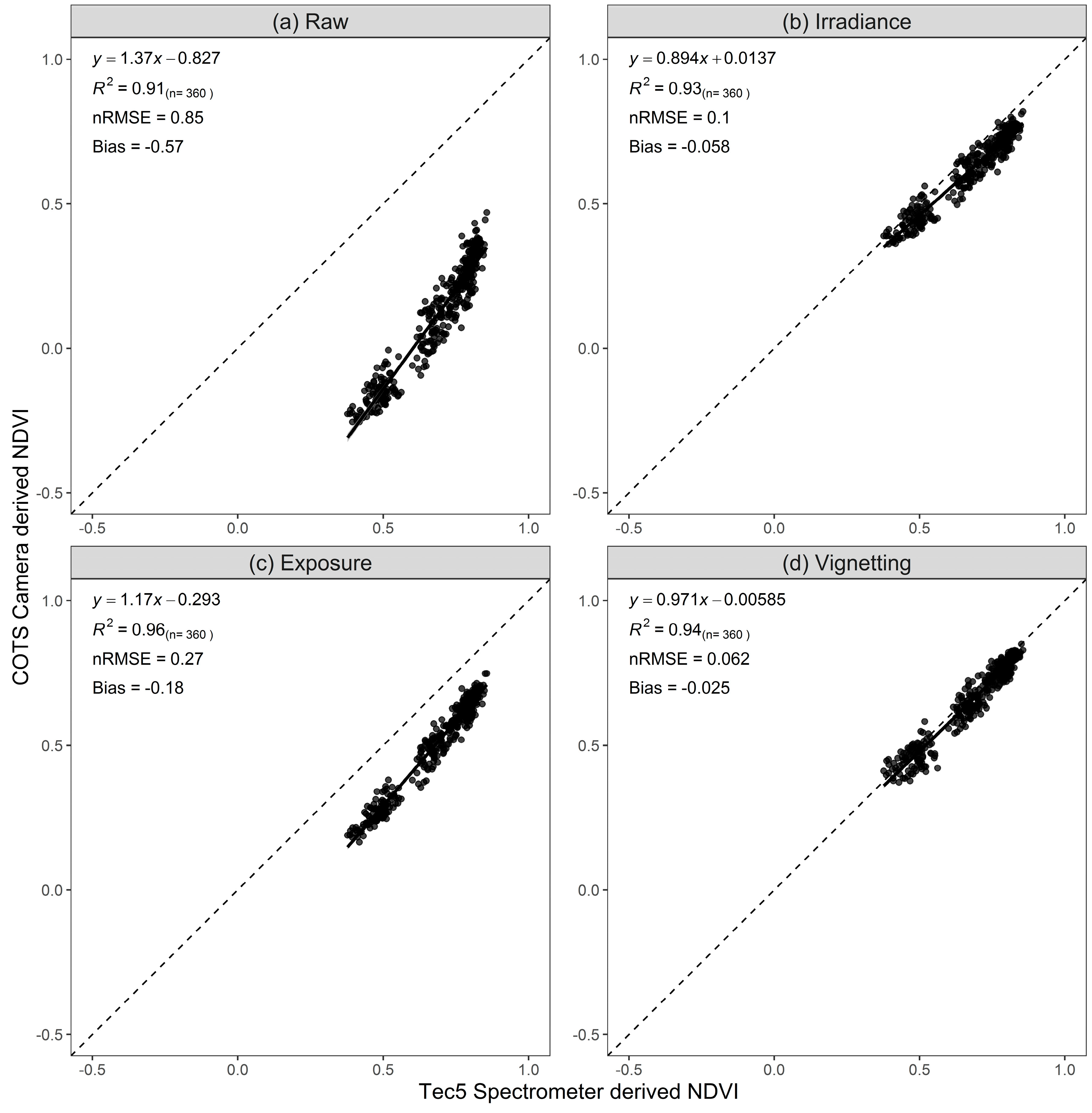

3.1. Validation of Calibrations

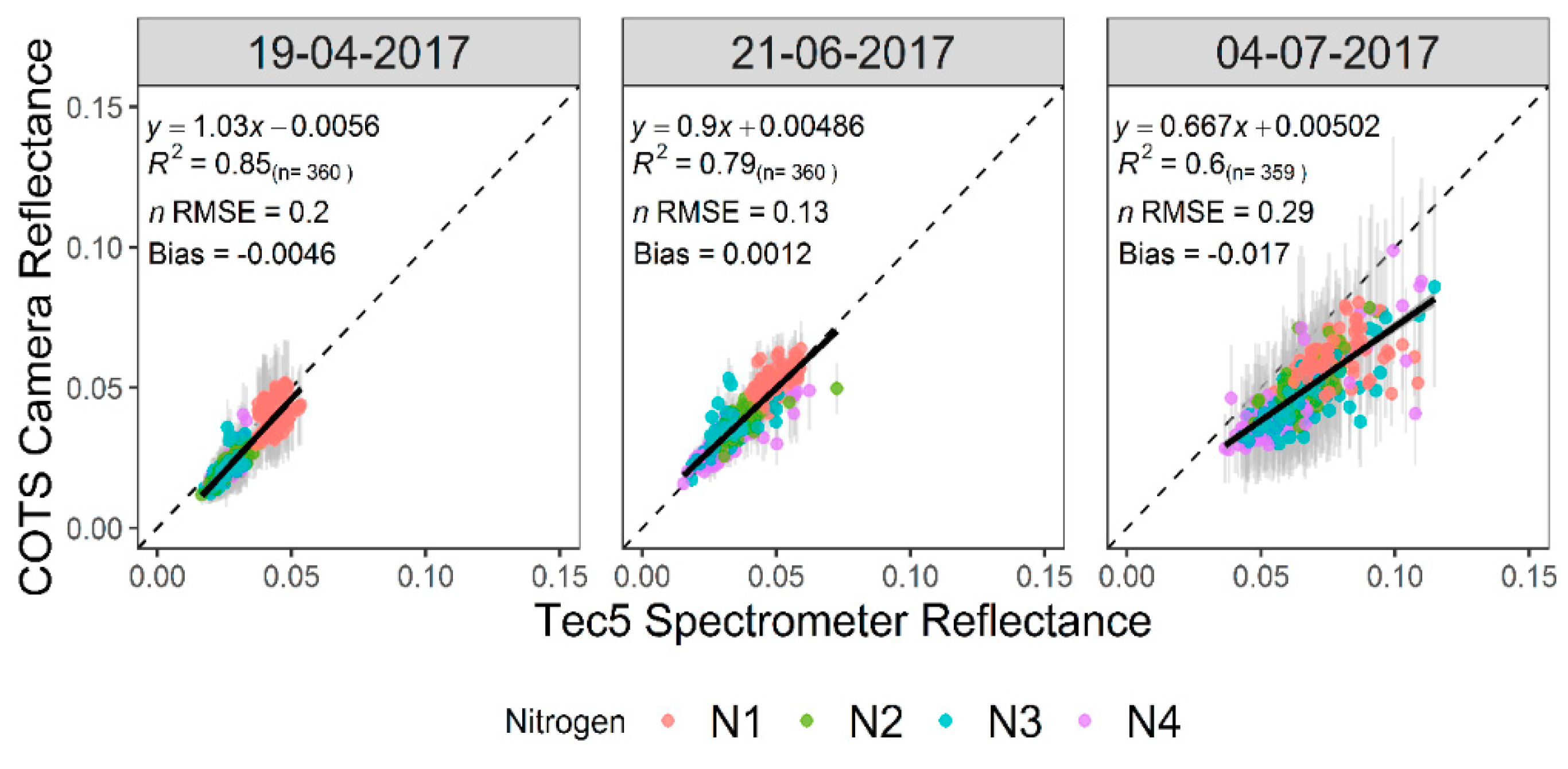

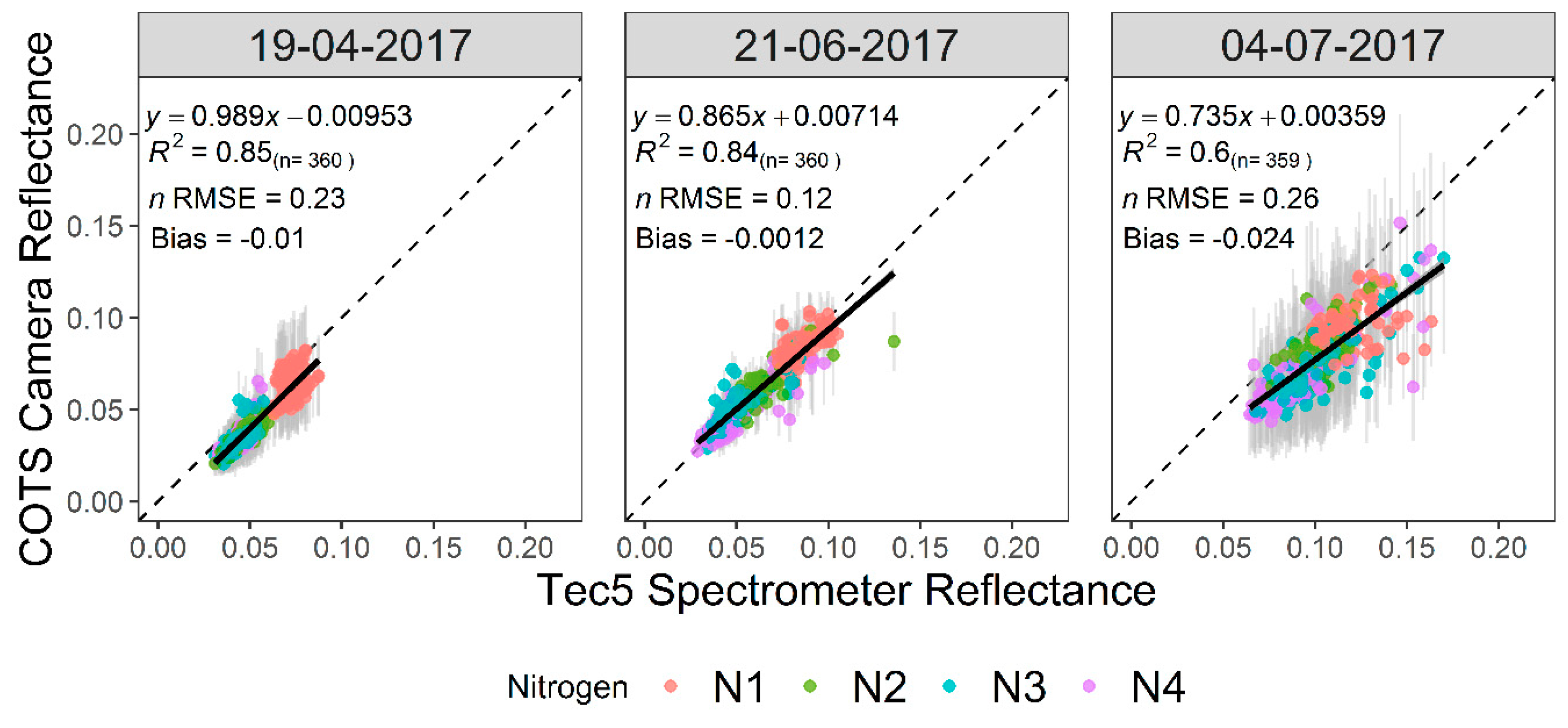

3.2. Accuracy Assessment of COTS Camera Reflectance

3.3. Influence of Canopy on NDVI

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Treatment Code | Total Nitrogen Application (kg N ha−1) | Application Date | Nitrogen Applied (kg N ha−1) |
|---|---|---|---|
| N1 | 0 | - | 0 |
| | | - | 0 |
| | | - | 0 |
| N2 | 100 | 15/03/2017 | 50 |
| | | 05/04/2017 | 50 |
| | | 09/05/2017 | 0 |
| N3 | 200 | 15/03/2017 | 50 |
| | | 05/04/2017 | 100 |
| | | 09/05/2017 | 50 |
| N4 | 350 | 15/03/2017 | 50 |
| | | 05/04/2017 | 250 |
| | | 09/05/2017 | 50 |

| Camera Channel | Wavelength Range (nm) |
|---|---|
| Green | 530–570 |
| Red | 640–680 |
| Red Edge | 730–740 |
| NIR | 770–810 |

| Model | Channel | Wavelength Range (nm) |
|---|---|---|
| “RGB” Camera | Red | 580–660 |
| | Green | 420–610 |
| | Blue | 410–540 |
| “NIR” Camera | NIR (blue channel) | 800–900 |

| DCRAW Command | Action |
|---|---|
| -v | Print verbose messages |
| -6 | Write 16-bit |
| -W | No automatic image brightening |
| -g 1 1 | Apply unadjusted gamma curve |
| -T | Write TIFF format |
| -r 1 1 1 1 | Set unadjusted white balance |
| -t 0 | Do not rotate image |
| -q 0 | Apply linear demosaicing |
| -o 0 | Raw output colour space |
| -K darkimage.pgm | Apply dark image correction using file specified |
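
As an illustration of how the options in this table combine into a single call, the sketch below assembles them into a dcraw command line from Python. The helper names `dcraw_args` and `convert_raw` and the example file name `DSC00001.ARW` are hypothetical; the option order is arbitrary, and the sketch assumes `dcraw` is installed and on the `PATH`.

```python
import subprocess


def dcraw_args(raw_path, dark_frame="darkimage.pgm"):
    """Return the dcraw argument list for a linear, unadjusted conversion."""
    return [
        "dcraw",
        "-v",                         # print verbose messages
        "-6",                         # write 16-bit output
        "-W",                         # no automatic image brightening
        "-g", "1", "1",               # unadjusted (linear) gamma curve
        "-T",                         # write TIFF format
        "-r", "1", "1", "1", "1",     # unadjusted white balance multipliers
        "-t", "0",                    # do not rotate the image
        "-q", "0",                    # linear demosaicing
        "-o", "0",                    # raw output colour space
        "-K", str(dark_frame),        # dark image correction from this file
        str(raw_path),
    ]


def convert_raw(raw_path, dark_frame="darkimage.pgm"):
    """Run dcraw on one RAW file; raises CalledProcessError on failure."""
    subprocess.run(dcraw_args(raw_path, dark_frame), check=True)
```

For example, `convert_raw("DSC00001.ARW")` would run the conversion on a single (hypothetical) RAW file, using `darkimage.pgm` from the working directory as the dark frame.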

| Camera and Band | Calibration Equation |
|---|---|
| RGB-Blue | |
| RGB-Green | |
| RGB-Red | |
| NIR-Blue | |

| Processing Step | Setting |
|---|---|
| Align photos | High |
| Generate dense point cloud | Medium |
| Generate mesh | High |
| Generate orthomosaic | Disabled |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Holman, F.H.; Riche, A.B.; Castle, M.; Wooster, M.J.; Hawkesford, M.J. Radiometric Calibration of ‘Commercial off the Shelf’ Cameras for UAV-Based High-Resolution Temporal Crop Phenotyping of Reflectance and NDVI. Remote Sens. 2019, 11, 1657. https://doi.org/10.3390/rs11141657